Abstract

The availability of structured data has increased significantly over the past decade and several approaches to learn from structured data have been proposed. These logic-based, inductive learning methods are often conceptually similar, which would allow a comparison among them even if they stem from different research communities. However, so far no efforts were made to define an environment for running learning tasks on a variety of tools, covering multiple knowledge representation languages. With SML-Bench, we propose a benchmarking framework to run inductive learning tools from the ILP and semantic web communities on a selection of learning problems. In this paper, we present the foundations of SML-Bench, discuss the systematic selection of benchmarking datasets and learning problems, and showcase an actual benchmark run on the currently supported tools.

Introduction

With the growth of the number and size of data sources over the last years, there is an increasing demand for algorithms and tools to perform accurate analysis of these datasets. History in computer science has shown that the main driver to scientific advances, and in fact a core element of the scientific method as a whole, is the provision of benchmarks to make progress measurable. A famous example from database benchmarking (specifically TPC-A1

One area, which is not extensively covered by benchmarks yet is symbolic supervised machine learning from structured data. In this task, background knowledge is modelled using RDF, OWL, Prolog, or other knowledge representation languages. Within this background knowledge, entities are selected as positive and negative examples (supervised learning). Based on those examples, logical formulas e.g. Horn rules or OWL class expressions are learned, using some algorithms, which separate positive and negative examples. These formulas or rules are later used to predict further unseen entities. Hence, this supervised machine learning discipline considers classification methods that generate symbolic (as opposed to numeric) classifiers which describe distinctive structures of the underlying knowledge base w.r.t. the given positive (and negative) examples. For instance, given a dataset describing chemical compounds in which positive examples are compounds known to cause cancer and negative examples are compounds which do not cause cancer, the algorithms would induce formulas describing what causes cancer. An example result of an OWL class expression describing carcinogenic compounds would be4

See

While a large body of research work has been devoted to this area, the evaluation scenarios are scattered and no generally accepted reference benchmarking platform exists. There are at least two major reasons for this: 1) The first problem is that the use of different knowledge representation languages makes results very difficult to compare. For instance, logic programs are incomparable in terms of expressivity to the description logics underlying OWL. In practice, the knowledge modelling is different to such an extent, that automatic conversion methods do not output satisfactory results. 2) The second reason is that the effort required to model the background knowledge using semantic technologies can be considered significantly high. Besides the necessary domain expertise, a basic understanding of ontology engineering principles and knowledge of basic vocabularies and existing complementary background knowledge bases is required. Applying this to compile knowledge bases for many learning problems from different domains and setting up a repository is a major effort. Overall, this has led to benchmarks being scattered across different publications and scientific communities.

To overcome this problem, we have performed a systematic scientific literature analysis in order to collect relevant benchmarks. Those were then translated into different knowledge representation languages if needed. For the execution of benchmarks, a framework has been implemented, which allows the execution of different learning systems over a given set of learning tasks and measure performance metrics. Moreover, wrappers for the systems Progol,5

A systemic survey of articles published in the last 10 years in relevant scientific conferences and journals collecting benchmarks for structured machine learning.

The preparation of nine learning tasks, which constitute our current benchmark suite, including translations of the background knowledge in OWL and different logic programming dialects.

The creation of a framework (called SML-Bench), which allows comparison of systems which differ in the knowledge representation languages they support, and the programming languages they are written in.

The creation of wrappers for eight learning systems for their inclusion in SML-Bench.

The paper is structured as follows: In Section 2 we give an overview of related work, followed by sections introducing the challenges (Section 3) and benchmarking strategies (Section 4) of structured machine learning. We further describe our dataset review process in Section 5. In Section 6 we introduce our benchmark framework and describe our evaluation setup and results in Section 7. After a discussion in Section 8 we give an outlook and conclude our paper in Section 9.

Machine learning is a vast field with a variety of application domains and learning problems. Existing benchmark initiatives can be categorised based on data sizes, learning problems, and data types (associated with a particular set of algorithms). However not all of those categories have to apply. For instance, popular dataset repositories like UCI [4], StatLog [10] and StatLib [26] – though they provide datasets with evaluation results of individual algorithms – have the major aim of providing data, and do not provide comprehensive comparisons of different algorithms.

A noticeable effort for benchmarking, both datasets and algorithms, can be seen in LIBSVM11

RLBench,12

A benchmark focusing on neural networks is Shirley’s Next-Generation Benchmark Suite.13

Recently, benchmarking and data collection attempts have started to cover big data and scalability tests. Bench-ML14

Comparison of different benchmarking efforts

One of the noticeable benchmarks in the semantic web community for query performance is proposed by the Linked Data Benchmark Council (LDBC)16

As we focus on symbolic machine learning from examples and semantically structured background knowledge, the benchmarking projects introduced here are not suitable. None of them deals with semantically structured data and/or algorithms, that explicitly present a supervised structured machine learning problem, making our benchmarking effort considerably different and unique.

In this section, we describe some of the challenges of machine learning on structured data grouped by category:

Background language expressivity A powerful property of machine learning algorithms operating on structured data is their ability to reason about the background knowledge. Generally, more expressive languages for the background knowledge, e.g. an expressive description logic such as

Size Larger datasets affect the efficiency of structured machine learning tools. There are three main aspects of size: (1) The size of the schema, (2) the size of the instance data and (3) the number of examples. The first aspect, schema size, affects mainly the hypothesis space as the learned concepts are constructed from the available schema. The schema size is the number of predicates in the background knowledge (where OWL classes are unary and OWL properties are treated as binary predicates). In the absence of a particular language bias, e.g. restrictions on the length of learned concepts, nested concepts etc., the size of the hypothesis space can become extremely large for big schemata. The second and third aspect, i.e. the instance data size and number of examples, affect mainly the time for hypothesis checking, i.e. validating whether a concept fits the given examples well.

Target concept language Given the same background knowledge, the performance of a structured machine learning algorithm will still heavily depend on the target concept language which is not necessarily the same as the background knowledge language. For instance, some algorithms can learn arbitrarily nested predicate structures (e.g. “parents having studied in Germany” could be represented via nesting of the predicates

Within our evaluation, we will discuss how the tools perform on the selected datasets with respect to the above challenges (often also referred to as choke points in the benchmarking literature).

Benchmarking structured machine learning algorithms

As introduced in Section 1 the general task of an inductive learning algorithm is to learn a classifier discriminating two classes (generally referred to as positive and negative) based on distinguished examples and some background knowledge. In case of the symbolic learning approaches considered here those classifiers are, for example, Horn rules or description logic class expressions. For benchmarking, the respective implementations of the considered algorithms are taken as ‘black boxes’, i.e. no evaluations on algorithmic or implementation details are performed. To judge the quality of a binary classifier there are several measures that are all derived from the confusion matrix [25] which provides the numbers of examples correctly classified as positive, incorrectly classified as positive, correctly classified as negative and incorrectly classified as negative. Derived quality metrics are, e.g. accuracy, precision, recall, F-score, specificity, and AUC [25]. In Section 7 we focus on average accuracy and average F1-score for brevity.

Considering more general performance indicators of software systems [27], measures that might apply in the structured machine learning context are memory usage and the overall runtime. Taking the overall runtime into consideration does not allow a complete comparison across all learning systems since some of them implement anytime algorithms (which terminate after a pre-set execution time). Thus, even though the overall runtime values are provided with the benchmark results, in Section 7 we only report when systems could not finish within the maximum execution time configured in the overall benchmark settings.

In terms of memory usage, measuring the space complexity of a certain algorithm would be a valuable metric for comparison. However, since the respective algorithms are implemented using different programming languages and execution environments, determining the actual memory usage is not an easy task. First of all, to run a set of such heterogeneous implementations we cannot invoke them inside one single benchmarking system but have to execute the implementations as own processes in their native environments, like, for example, a Prolog interpreter, a Java Virtual Machine etc. This means that specialized memory analysis tools like Valgrind18

Assessing the scalability of a structured machine learning algorithm along the dimensions reported in Section 3 would require a learning problem generator which can create synthetic training data which may differ in terms of its knowledge representation language expressivity, number of schema axioms/rules, number of assertions/facts, number of examples, and the expressivity of the target concept language. We consider the problem of designing such a learning problem generator, which provides freely scalable, sound, meaningful and non-biased learning problems a non-trivial task which was not investigated in depth, yet, and thus requires further research. Hence, we leave this as future work.

To review and collect datasets already proposed or used in other works, we performed an extensive literature review. The five co-authors investigated publications that appeared in major conferences and journals related to structured machine learning in the past 10 years. An overview of the conferences and journals covered is given in Table 2.

Conferences and journals with number of surveyed papers (#P) and candidate datasets (#C)

Conferences and journals with number of surveyed papers (#P) and candidate datasets (#C)

The actual review was performed by either reviewing the accepted papers as linked in the conference schedule or in the corresponding proceedings and journal issues. The papers were scanned for relevant learning tasks involving datasets that are suitable for our benchmarking approach. The following selection criteria were used in order to determine whether a learning task is relevant:

Paper is available One first requirement was to be able to access an electronic version of the paper on the Web. This included PDF versions of the accepted submissions that were made available on the conference website as well as papers provided by the publishers of the corresponding conference proceedings or journals. All considered publications met this criterion.

Availability of the dataset A major requirement regarding the actual data was its accessibility on the Web to be able to investigate its suitability. In the easiest case downloads and further information were provided on dedicated Web pages. However, frequently datasets were just referred to by name. In such cases we considered a dataset available if we could find an entry via a search engine or in one of the major public machine learning dataset repositories with an unambiguously matching name, file name or description. If datasets were only indirectly referenced by pointing to other publications introducing or using them, we considered them available if we could find a corresponding Web site after (transitively) following and reviewing the referenced papers. Although the portion of datasets actually available varies among the considered literature sources and time, we observed that only a small fraction of approximately 40% of the datasets were accessible, whereas the majority of them stems from benchmark dataset repositories.

Structure of the dataset Since the main aim of our framework is to provide benchmark scenarios for inductive learning tools working on structured logical representations, we focused on datasets that contain logical relations between single data entries or attributes. Hence, flat datasets mainly describing data with numeric attributes were not considered. However, data that does not comply with this requirement, but could easily be enriched with other structured data, was also further investigated in our review. An example of such a case would be data from clinical trials that could be linked to the Gene Ontology19

Dataset size A further requirement was that a candidate dataset should be sufficiently complex in terms of its size. The main aim behind this requirement was to not just provide small toy examples that will show only negligible differences between the benchmarked tools. Ideally, datasets should cover non-synthetic, real world problems to prove the practical applicability of tools obtaining high SML-Bench scores.

Derivable inductive learning problems The last requirement was that the described dataset represents an inductive learning scenario, or that such a scenario could be derived trivially. This means that a supervised machine learning task with positive and (optionally) negative examples is provided or can easily be constructed. In the latter case the dataset must describe certain entities that can be distinguished through relations or data values contained or added to the dataset by means of external data sources. If no example data is given entities from one of those distinguishable sets of entities need to be sampled to serve as positive examples. Negative examples can then be drawn from the remaining distinguished sets. A simple illustration for this procedure is given in case of the Premier League dataset (described below) which provides statistics of soccer players. Here to form one particular learning scenario the positive examples were taken from the set of goal keepers whereas the negative examples were constructed randomly drawing from the set of non-goal keepers, i.e. outfield players. Of course the respective distinctive feature, i.e. a soccer player’s field position, need to be removed from the dataset afterwards to avoid trivial solutions.

The review was performed in two rounds: In the literature review phase candidate datasets are selected based on their description in the corresponding paper or after briefly checking the actual data. The publications were then marked as either not, maybe, or likely containing suitable datasets. In the candidate review round all papers maybe or likely containing suitable datasets were examined in depth. Here we tried to download the datasets and assessed them manually exploring the raw data (e.g. in a text editor or via SQL queries after loading it into a database).

Overall 6890 publications were reviewed and 160 candidate datasets selected. From the datasets that were found and could be used for our framework, data conversions and adaptions were performed to work with all tools, if necessary. Besides common and simple formats like CSV or relational databases, data was also provided in special file formats like the Chemical Table file (CTfile).21

As of March 2018, 9 out of 78 datasets were converted and integrated in SML-Bench. Due to the high effort to prepare benchmarks of good quality, including the configuration and verification in the participating inductive learning programs, this is an ongoing task for which we also acknowledge support from the community. The datasets were then labelled with the initial version tag v0.1 and added to our learning task repository. After internal reviews and discussions or external user feedback this version number can be increased to allow a unique reference to the dataset and represent its maturity.

Apart from conference and journal publications, we also investigated public machine learning dataset repositories mentioned in the reviewed literature. The investigated repositories are summarised in Table 3. If possible, we pre-filtered the repository to classification datasets, ignoring regression or clustering use cases. However, we also examined all datasets that were not grouped into any of those categories or could not be pre-filtered. Most of the repositories provide data that is mainly numeric and lacks a sufficiently deep structure. More deeply structured graph or network datasets, however, usually only provide one kind of edge which would translate into having, e.g. just one Prolog predicate or OWL property in the whole knowledge base, and thus would render those use cases unrealistic for structured machine learning approaches. Another criterion that was rarely met was to have a dataset that is sufficiently large to serve as a benchmark task. For example, even though the DL-Learner repository comes with a lot of learning scenarios that are perfectly tailored for the inductive learning tasks on structured data, most of them would be too simple to distinguish a field of state-of-the-art structured machine learning algorithms. Thus, overall only a very small portion of datasets were considered suitable for our benchmarking purposes.

Surveyed benchmark dataset repositories with their number of datasets (#D) as of March 2018, and the number of candidates (#C) that could be used in our framework

An overview of datasets that are part of the SML-Bench framework is given in Tables 4 and 5.

Overview of the datasets that are part of the SML-Bench framework

Overview of the OWL versions of the datasets that are part of SML-Bench with their number of axioms (#A), classes (#C), object properties (#O), datatype properties (#D) and expressivity (Expr)

The datasets Lymphography, Mammographic, Pyrimidine and Suramin are rather small in terms of their instance and schema data. They have a very low expressivity and mainly differ in their number of examples with Suramin having the fewest (16), followed by Pyrimidine (40), Lymphography (148), and Mammographic (961).

Overview of the SML-Bench framework. Learning problems (

Mutagenesis, Hepatitis and Carcinogenesis can be considered as ‘medium size’ datasets w.r.t our benchmarking repository. While the OWL representation of the Mutagenesis dataset also shares the very simple DL family

The most complex dataset w.r.t. the expressivity of its OWL representation is NCTRER. In size, however, it can also be considered medium, be it in terms of schema and instance data, or w.r.t. the number of examples.

The biggest dataset we provide is Premier League. It provides a lot of different statistics which are expressed through an extensive (though still simple) schema and comprehensive instance data.

Further information about the datasets (like their origin, size, etc.) can be found in the dataset description files in the SML-Bench GitHub repository. The dataset descriptions are provided as RDF data and can be found in the respective knowledge representation language directory for each learning task.22

E.g.

With SML-Bench our aim is to provide a framework which is open and extensible but already comes with predefined benchmark scenarios and presets for relevant tools, thus being ready for use. The core framework is developed in the Java programming language and is intended to be used via a command line interface. However, the system can easily be extended to support graphical user interfaces. SML-Bench is provided as free software and accessible on the Web.23

Architecture The overall architecture of SML-Bench is shown in Fig. 1. The framework’s main building blocks are the tools to execute during a benchmark run and the benchmark scenarios. SML-Bench provides means to connect a set of inductive learning tools with such scenarios to run the evaluation on. This overall setting is held in a benchmark configuration and the framework will take care of providing the tools with the required data, performing the benchmark and collecting the results.

To support a wide range of tools and the introduction of own inductive learning implementations the SML-Bench framework follows a lightweight extensibility approach. Based on the relations between benchmark scenarios, their background knowledge, utilised KR languages, and benchmarked inductive learning systems we define some conventions which allow the extension of the framework with new use cases and tools without any changes in the code base or further wiring.

Benchmark scenarios To better structure scenarios and allow different benchmark variations based on the same data we distinguish between learning tasks and learning problems. Learning tasks define the actual background knowledge the benchmark is run on for learning problems. Learning problems are thus learning task-specific and comprise a set of positive and (optionally) negative examples, as well as optional tool settings dedicated to the given example declarations (cf. Fig. 1). Accordingly, varying example constellations or tool configurations are realised as separate learning problems.

In our ‘convention over configuration’ approach the files containing the background knowledge for a learning task A, given in a knowledge representation language L, are expected to reside in the directory path learningtasks/

An individual learning problem P can be defined adding a file containing positive examples and an optional file containing negative examples to a directory named learningtasks/A/L/lp/

Benchmarked tools A similar approach is followed to integrate inductive learning tools into SML-Bench. To make a tool X available to the benchmark framework, a corresponding directory has to be created at learningsystems/

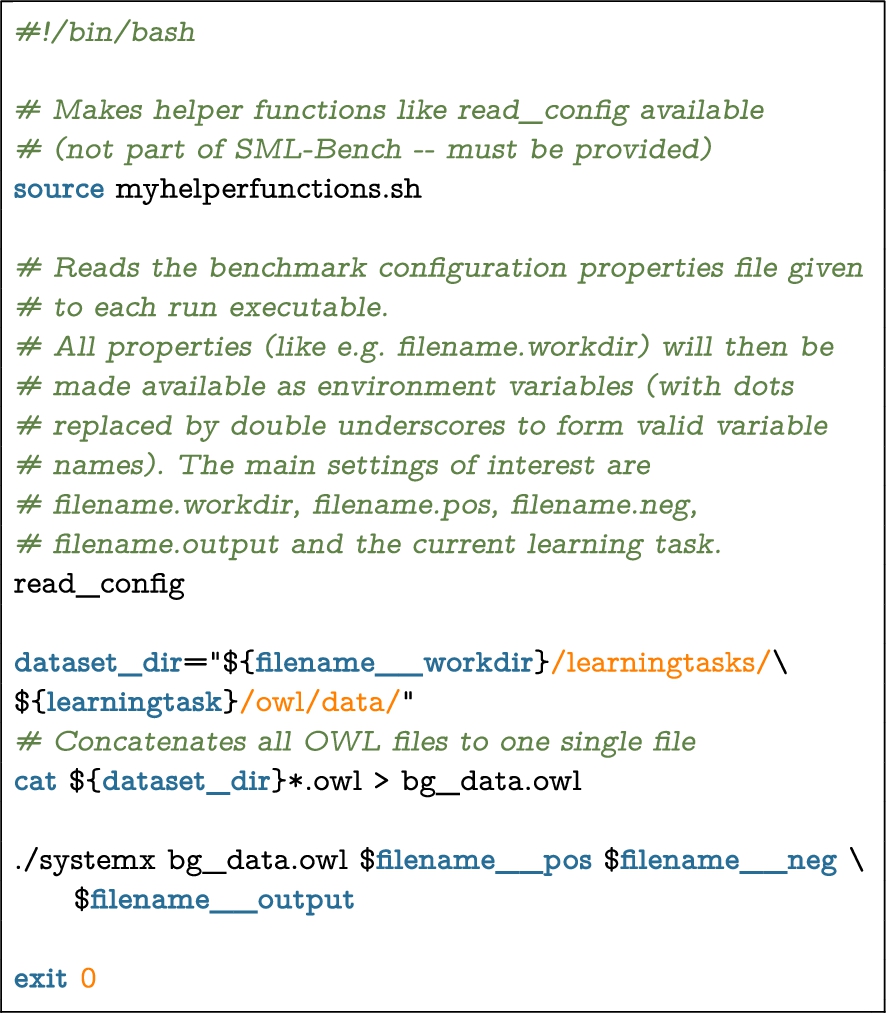

To illustrate the extensibility we consider an imaginary learning system with the main executable systemx which takes the file paths to the OWL background knowledge, positive and negative examples as input and generates an OWL class expression written to an output file specified as last argument. A possible run shell script adapting this system to SML-Bench is sketched in Listing 1. In this example the result written to the output file is a (tool-specific) class expression string like e.g. hasStructure some (not (Amine)). In the next step the validate script will be invoked which parses this string, again reads files containing positive and negative examples, and feeds the background knowledge files into an OWL reasoner. This OWL reasoner will then be used to assess the result class expression in terms of provided positive examples being an instance of it (true positives, tp), positive examples not being an instance of it (false negatives, fn), negative examples being an instance of it (false positives, fp), and negative examples not being an instance of it (true negatives, tn). The expected output of this script will be a file with the counts for the respective classification category as shown in Listing 2. Based on those numbers measures like accuracy, F-score etc. are computed.

Example run script.

Example validation result file.

Benchmark settings To generate a custom benchmark on a selection of tools and learning problems, a global configuration has to be provided defining which tools to run, which learning problems to tackle, and optionally, additional benchmark-specific tool configurations. The framework then executes the run and validate executables with the corresponding configurations of all selected tools. SML-Bench supports arbitrary train-test splits, n-fold cross validation, as well as running the training and validation on the whole set of examples.24

Though uncommon in machine learning, this was a requirement in a 3rd party use case for a separate feature extraction phase over the example set.

Example configuration of the SML-Bench benchmark runner.

SML-Bench supports the generation of semantic descriptions of a benchmark setup based on the MEX vocabulary [6]. Such descriptions do not only comprise general configuration issues as shown in Listing 3 but cover all the details to comprehend the benchmark settings in detail. This includes detailed specifications of the tools executed together with their runtime configurations, details about the data used in the benchmark and the actual evaluation results.

Available learning systems In its current state SML-Bench supports eight inductive learning tools, collected during our literature review. A tool was introduced into SML-Bench as learning system if it implements a published inductive learning algorithm, if it is freely available and sufficiently documented.

The oldest of the available learning systems is the classic ILP tool Golem [21] which was published in the year 1990, implementing a Relative Least General Generalisations-based induction approach. Golem supports a Prolog-based knowledge representation language. Another, slightly more recent ILP tool called Progol [20] uses inverse entailment to derive covering clauses based on examples and background knowledge given as Prolog-like logic programs. An ILP tool completely implemented in Prolog is Aleph which supports a number of ILP algorithms. Similarly, the General Inductive Logic Programming System (GILPS) comprises several Prolog programs realising different inductive learning approaches. FuncLog [24], one tool of the GILPS collection, is specialised in learning on Head Output Connected learning problems. Besides this, the tools TopLog [23] and ProGolem [22] which are based on Top Directed Hypothesis Derivation and Asymmetric Relative Minimal Generalisations, respectively, are also part of GILPS and supported in SML-Bench.

On the description logics-based knowledge representation field, several algorithms are integrated in one tool: DL-Learner [15] is a framework for inductive learning on RDF and OWL-based background knowledge. It supports a wide range of algorithms, including refinement operator based algorithms and evolutionary inspired approaches, as well as different OWL profiles.

In terms of Statistical Relational Learning (SRL) tools, we considered RapidMiner25

See

Evaluation results of an SML-Bench benchmark run. All tools were run with a maximum execution time of 5 minutes. Reported are the

To give a general impression of our framework, we ran SML-Bench with all provided learning systems on all available learning problems, excluding Suramin due to its small number of examples. We applied 10-fold cross validation and executed all learning systems in their default settings except the DL-Learner running OCEL which requires a noise value to be set to allow a certain number of misclassifications. Thus, we set the noisePercentage parameter to 30. Leaving the learning systems unconfigured will not show their optimal performance, but will rather reflect whether a tool performs well ‘out-of-the-box’ and whether the executed algorithms fit the particular learning scenarios. This also means that highly specialized algorithms might not work well on all of the learning scenarios, or even, that our learning problems do not have certain, expected characteristics an algorithm requires to function well. Following this approach our main aim is to assess whether SML-Bench can provide a meaningful benchmarking environment. To consider the provided set of benchmarking tasks meaningful we would expect to see that 1) not all learning problems can be ‘solved’ easily in default settings 2) the learning problems are able to distinguish the field of competitors, i.e. that they point out certain strengths and weaknesses of the learning systems under assessment 3) the learning problems are diverse enough to address different strengths and weaknesses of the learning systems under assessment.

In the case of DL-Learner and TreeLiker we executed a set of available algorithms introduced in the following. The OWL Class Expression Learner (OCEL) algorithm, which is part of the DL-Learner, is a refinement operator-based learning algorithm using heuristics guiding the search. An evolution of OCEL which is more biased towards short and human readable concepts is the Class Expression Learning for Ontology Engineering (CELOE) algorithm [16]. A third implementation provided by the DL-Learner framework is the EL Tree Learner (ELTL) algorithm which is restricted to OWL EL as target concept language.

The TreeLiker tool can be configured to utilize a block-wise construction of tree-like relational features (RelF) [14], a hierarchical feature construction (HiFi) [13], or a Gaussian Logic-based algorithm (Poly) [11] for classification. These three algorithms can also be run in a grounding-counting setting (GC) considering the number of examples covered by a generated feature during learning. Since the TreeLiker works on a Prolog-like knowledge representation language that does not support certain Prolog expressions, we could not assess it on the Mutagenesis and NCTRER datasets.

We set an overall maximum execution time of 300 seconds and executed all tools sequentially. The benchmark was performed on a machine with 2 Intel Xeon ‘Broadwell’ CPUs with 8 cores running at 2.1 GHz with 128 GB of RAM. A benchmark description based on the MEX RDF vocabulary can be found at

Evaluation results of an SML-Bench benchmark. All tools were run with a max. execution time of 5 minutes. Reported are the average F

1

-score and its standard deviation of 10-fold cross validation

Evaluation results of an SML-Bench benchmark. All tools were run with a max. execution time of 5 minutes. Reported are the

One first observation we made is that we seem not to have a suitable learning scenario which would benefit from FuncLog’s specialization in learning with head output connected predicates. Since it did not return learned rules on any of the learning problems we did not list FuncLog in Tables 6 and 7.

Another observation is that Aleph, CELOE and OCEL already provide default settings which work well on the learning problems and lead to very good results on mutagenesis/42, premierleague/1 and pyrimidine/1.

Taking into consideration that Golem was implemented in the 1990s one possible explanation for its lower performance could be that the default settings reflect hardware expectations in terms of available memory and computing power that are now superseded. This might also apply to constants defined in Golem’s source code. Hence, adjusting settings to current hardware capabilities might make a considerable difference here. A similar argumentation might apply for Progol. Besides this Golem’s mode declarations do not provide means to express explicitly which predicates should appear in the head of a learned rule. This might be an explanation for some of the results that do not provide a description for the given examples, at all, as in the case of nctrer/1 where we observed learned rules like bound_atom(A,B) :- first_bound_atom(A,atom_232_2) that should actually characterize molecules, i.e. positive examples like molecule(molecule13).

Progol and ProGolem appear to be overly curtailed by the restricted execution time. In those cases, the algorithm itself may be very suitable for the learning problems but increasing the time limit further would lead to prohibitive runtimes of the evaluation scenario. Currently, the maximum runtime is approximately 100 hours (10 folds × 8 learning problems × 15 configured learning systems × 5 minutes).

In their default settings, the GILPS tools ProGolem and TopLog often returned identical results. Even though we can only report a low performance on all the learning problems, the authors of ProGolem and TopLog published experiments showing much better results on Carcinogenesis, Pyrimidine and Mutagenesis [22,23]. This might emphasize that a proper configuration substantially impacts the tool’s performance, or suggest that differing versions of the respective datasets were in use.

For the TreeLiker algorithms we can also observe that different settings might give identical results. The low performance can be attributed to the fact that TreeLiker is a collection of feature construction algorithms and to the way we use it in our benchmark framework. Since the TreeLiker algorithms usually produce a high number of features we currently only consider the best one for our evaluation. This might not fully exploit TreeLiker’s capabilities and we are in contact with one of the tool authors to improve this.

Even though the premierleague/1 learning problem is large in terms of background knowledge with more than 200 thousand axioms, Aleph was able to learn almost perfect results. For the TreeLiker we gradually increased the maximum available JVM heap size up to 10 GB. Increasing it even further might also give results for its algorithms. However, since other Java implementations could generate results with 2 GB of available maximum heap size, we stopped there.

Overall, the evaluation supports our initial expectations. However, we also have to admit that some of the learning systems need to be adjusted properly to provide competitive performances. This will be discussed in the following.

With SML-Bench we built a benchmarking framework that is extensible and comes with a set of initial scenarios to evaluate arbitrary inductive learning tools. As shown in the previous section, the provided learning problems are able to discriminate the performance of different learning systems but are not complete in the sense that we are lacking some datasets that are tailored for particular capabilities of certain tools (in particular FuncLog). We also believe – and verified this in some cases manually – that most of the results can be improved by spending more effort in configuring the learning systems, hence generating more competitive results. We already tried to get in contact with the tool authors to support this, but only got a reply from one of the TreeLiker developers. In the future, we may allow an explicit parameter tuning phase, e.g. via nested cross validation or explicit tuning examples, in our benchmark for systems which are capable of this functionality. Apart from the issues revolving around system configuration, the literature survey and the actually converted datasets have shown that datatype properties are widely used in many learning scenarios. However, the tools currently do not fully exploit this part and focus more on the structured components. In this sense, the tools would benefit more from deeper structures in the datasets. To this end, we will work on further enriching datasets in this direction if possible. Of course doing so requires considerable effort and extensive domain knowledge (e.g. in chemistry or genetics). Through the community feedback we obtain, we will continuously extend and refine the learning problem library. SML-Bench is part of a funded research programme and benchmarking challenges will be presented in a series of (yet to be finally determined) venues. We will use those as a feedback channel.

A further issue that needs to be discussed is the representation of knowledge in different KR languages. Most of the design decisions for the dataset conversions were made individually, since an automatic conversion would not yield a satisfactory modelling result exploiting the strengths of Prolog or different OWL profiles even in cases when it is theoretically possible. This can potentially lead to a bias since particular modelling choices may lead to different solutions provided by the tools. While we acknowledge this problem, we do not see a straightforward solution and also believe that to some extent this could spur some competition in terms of finding appropriate KR languages or dialects to support in inductive learning.

In our opinion, the availability of SML-Bench will improve the state of the art for symbolic machine learning from expressive background knowledge in the next years. While many efforts in this field date back to the early 90s, only in the past years the availability of data has increased significantly. However, it was still challenging for individual researchers or small groups to perform a comprehensive benchmark. We believe to have closed this gap, which could in turn lead to similar improvements as we have seen for question answering, link discovery and query performance for RDF.

Conclusion and outlook

In this paper, we have presented SML-Bench – a benchmarking framework for structured machine learning. We performed a systematic literature survey to obtain relevant benchmark datasets. Overall, we analysed 6890 papers, which led to 160 candidate learning problems. For 9 of those, we converted them across the used KR languages and set them up for all learning systems. Currently, 8 learning systems are integrated with two further inclusion requests in the works. A first analysis presented here has identified some shortcomings of individual tools. Generally, we believe that a mature research area requires a benchmark to evolve further. In particular, we want to contribute to bringing the Semantic Web and machine learning areas close together. We also aim to reduce the boundaries of knowledge representation languages as well as the research communities behind them. We further envision that SML-Bench could in the future evolve into a central hub for comparing suggested tool settings, learning problems, and performances.

We will perform further analysis and regular benchmarking runs in the scope of the HOBBIT project28

Another direction for future work is the integration of means to import MEX machine learning experiment descriptions to generate benchmark configurations. In combination with our MEX export function this would allow to load experiments from other researchers or share own benchmark settings.

Footnotes

Acknowledgements

This work was supported by grants from the EU FP7 Programme for the projects QROWD (GA no. 732194), SLIPO (GA no. 731581), HOBBIT (GA no. 688227) as well the German BMWi project SAKE.