Abstract

The ability to compare systems from the same domain is of central importance for their introduction into complex applications. In the domains of named entity recognition and entity linking, the large number of systems and their orthogonal evaluation w.r.t. measures and datasets has led to an unclear landscape regarding the abilities and weaknesses of the different approaches. We present

Keywords

Introduction

Named Entity Recognition (NER) and Named Entity Linking/Disambiguation (NEL/D) as well as other natural language processing (NLP) tasks play a key role in annotating RDF knowledge from unstructured data. While manifold annotation tools have been developed over recent years to address (some of) the subtasks related to the extraction of structured data from unstructured data [20,28,38,40,42,48,52,59,62], the provision of comparable results for these tools remains a tedious problem. This lack of comparability is not intrinsic to the annotation task. Indeed, it is now well established that scientists spend between 60 and 80% of their time preparing data for experiments [23,30,47]. In the annotation domain, data preparation is tedious mostly because gold standards come in different formats and reference datasets use different data representations. These restrictions have led to authors evaluating their approaches on datasets (1) that are available to them and (2) for which writing a parser and an evaluation tool can be carried out with reasonable effort. In addition, many different quality measures have been developed and are actively used across the annotation research community to evaluate the same task, which makes it difficult to compare results across publications on the same topic. For example, while some authors publish macro-F-measures and simply call them F-measures, others publish micro-F-measures for the same purpose, leading to significant discrepancies across the scores. The same holds for the evaluation of how well entities match: both partial matches and complete matches have been used in previous evaluations of annotation tools [11,57]. This heterogeneous landscape of tools, datasets and measures leads to poor repeatability of experiments, which makes it rather difficult to evaluate the real performance of novel approaches against the state of the art.
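To illustrate how far micro- and macro-F-measures can diverge on the same output, the following minimal sketch computes both from per-document counts. The `Counts` class, the function names and the example numbers are hypothetical and chosen only for illustration. The macro variant shown here averages per-document F1 scores, which is one common definition and is assumed here; other publications may instead compute F1 from averaged precision and recall.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Counts:
    """True positives, false positives and false negatives of one document."""
    tp: int
    fp: int
    fn: int


def f1(tp: int, fp: int, fn: int) -> float:
    """F1 from raw counts; returns 0.0 when precision or recall is undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def micro_f1(docs: List[Counts]) -> float:
    """Sum the counts over all documents first, then compute a single F1."""
    return f1(sum(d.tp for d in docs),
              sum(d.fp for d in docs),
              sum(d.fn for d in docs))


def macro_f1(docs: List[Counts]) -> float:
    """Compute F1 per document, then average (assumed macro definition)."""
    return sum(f1(d.tp, d.fp, d.fn) for d in docs) / len(docs)


# Hypothetical corpus: one short, perfectly annotated document and one
# long document with many errors.
docs = [Counts(tp=1, fp=0, fn=0), Counts(tp=1, fp=9, fn=9)]
print(f"micro-F1 = {micro_f1(docs):.3f}")
print(f"macro-F1 = {macro_f1(docs):.3f}")
```

In this constructed example the long, error-heavy document dominates the micro score, while the macro score weights both documents equally, so reporting either one simply as "F-measure" hides a large difference.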

Thus, we present This paper is a significant extension of [64] including the progress of the

In the rest of this paper, we explain the core principles which we followed to create GERBIL and detail our new contributions. Thereafter, we present the state-of-the-art in benchmarking Named Entity Recognition, Typing and Linking. In Section 4, we present the

Insights into the difficulties of current evaluation setups have led to a movement towards the creation of frameworks to ease the evaluation of solutions that address the same annotation problem, see Section 3.

FAIR principles and how GERBIL addresses each of them

To ensure that the Available at

After the release of

Finally,

Named Entity Recognition and Entity Linking have gained significant momentum with the growth of Linked Data and structured knowledge bases. Over the past few years, the problem of result comparability has thus led to the development of a handful of frameworks.

The BAT-framework [11] is designed to facilitate the benchmarking of NER, NEL/D and concept tagging approaches. BAT compares seven existing entity annotation approaches using Wikipedia as reference. Moreover, it defines six different task types, five different matchings and six evaluation measures, and provides five datasets. Rizzo et al. [52] present a state-of-the-art study of NER and NEL systems for annotating newswire and micropost documents using well-known benchmark datasets, namely CoNLL2003 and Microposts 2013 for NER as well as AIDA/CoNLL and Microposts2014 [4] for NED. The authors propose a common schema, named the NERD ontology,

Over the course of the last 25 years, several challenges, workshops and conferences have dedicated themselves to the comparable evaluation of information extraction (IE) systems. Starting in 1993, the Message Understanding Conference (MUC) introduced a first systematic comparison of information extraction approaches [60]. Ten years later, the Conference on Computational Natural Language Learning (CoNLL) offered the beginnings of a shared task on named entity recognition and published the CoNLL corpus [61]. In addition, the Automatic Content Extraction (ACE) challenge [17], organized by NIST, evaluated several approaches but was discontinued in 2008. Since 2009, the Text Analysis Conference has hosted the workshop on knowledge base population (TAC-KBP) [37], where mainly linguistic-based approaches are published. The Senseval challenge, originally concerned with classical NLP disciplines, widened its focus in 2007 and changed its name to SemEval to account for the recently recognized impact of semantic technologies [31]. The Making Sense of Microposts workshop series (#Microposts) established an entity recognition challenge in 2013 and an entity linking challenge in 2014, both focusing on tweets and microposts [55]. In 2014, Carmel et al. [6] introduced one of the first Web-based evaluation systems for NER and NED, which was the centerpiece of the entity recognition and disambiguation (ERD) challenge. Here, all frameworks are evaluated against the same unseen dataset and provided with corresponding results.

Architecture overview

Overview of

Experiments run in our framework can be configured in several manners. In the following, we present some of the most important parameters of experiments available in

Experiment types

An experiment type defines the problem that has to be solved by the benchmarked system. Cornolti et al.’s [11] BAT-framework offers six different experiment types, namely (scored) annotation (S/A2KB), disambiguation (D2KB) – also known as linking – and (scored or ranked) concept annotation (S/R/C2KB) of texts. In [52], the authors propose two types of experiments, highlighting the strengths and weaknesses of the analyzed systems. Thereby, performing

We implement 8 types of experiments:

With this extension, our framework can now deal with gold standard datasets and annotators that link to any knowledge base, e.g., DBpedia or BabelNet [45], as long as the necessary identifiers are URIs. We were thus able to implement 37 new gold standard datasets, cf. Section 4.4, and 15 new annotators linking entities to any knowledge base instead of solely to Wikipedia, as in previous works, cf. Section 4.3.1. With this extensible interface,

Matching

A matching defines which conditions the result of an annotator has to fulfill to be a correct result, i.e., to match an annotation of the gold standard. An annotation has either a position, a meaning (i.e., a linked entity or a type) or both. Therefore, we can define an annotation

The first matching type

For the D2KB experiments, matching is expanded to

The strong annotation matching can also be used for A2KB and Sa2KB experiments. However, in practice this exact matching can be misleading. A document can contain a gold standard named entity, such as,

However, the evaluation of whether two given meanings are matching each other is more challenging than the expression

The key insight behind the solution to this problem in

Schema of the four components of the entity matching process.

Second, the URI set retrieval as well as the URI checking cause considerable communication overhead. Since our implementation of this communication is considerate of the KB endpoints and inserts delays between individual requests, these steps slow down the evaluation. However, our future developments will attempt to reduce this drawback.
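The delay-based communication described above can be sketched as follows. This is only an illustration under stated assumptions and not the actual implementation: the class name `PoliteFetcher`, its parameters and the two-second delay are hypothetical, and the function that performs the real request is passed in by the caller.

```python
import time
from typing import Callable, Optional


class PoliteFetcher:
    """Wraps a request function and enforces a minimum delay between calls.

    Hypothetical helper for illustration only. `fetch` stands in for
    whatever performs the real request against a KB endpoint.
    """

    def __init__(self,
                 fetch: Callable[[str], object],
                 min_delay_s: float = 1.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self._fetch = fetch
        self._min_delay_s = min_delay_s
        self._clock = clock
        self._sleep = sleep
        self._last_request: Optional[float] = None

    def get(self, uri: str) -> object:
        # Wait until at least min_delay_s has passed since the last request.
        if self._last_request is not None:
            remaining = self._min_delay_s - (self._clock() - self._last_request)
            if remaining > 0:
                self._sleep(remaining)
        result = self._fetch(uri)
        self._last_request = self._clock()
        return result


if __name__ == "__main__":
    # Dummy request function so that the sketch runs without network access.
    fetcher = PoliteFetcher(lambda uri: {uri}, min_delay_s=0.1)
    for uri in ("http://example.org/a", "http://example.org/b"):
        print(fetcher.get(uri))
```

The clock and sleep functions are injectable so that the throttling logic can be checked without real waiting. The cost of this politeness grows linearly with the number of requests, which is the slowdown mentioned above.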

While all cases are taken into account for the normal measures, the

The different classification cases that can occur during the evaluation. A dash means that there is no URI set that could be used for the matching. A tick shows that this case is taken into account while calculating the measure

To support the development of new approaches, we implemented additional diagnostic capabilities such as the calculation of correlations of dataset features and annotator performance [63]. In particular, we calculate the Spearman correlation between document attributes (e.g., number of persons) and performance (i.e., F-measure) to quantify how the first variable affects the second. Figure 3 shows the correlation between the performance of systems and selected features of the datasets. This can help determine strengths and weaknesses of the different approaches.
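As a rough illustration of this diagnostic, the sketch below computes a Spearman rank correlation between a document feature and an F-measure using only the standard library. The function names and the example values are hypothetical, and ties are handled with average ranks, which is an assumption about the variant used.

```python
from math import sqrt
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Return 1-based ranks; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation; returns NaN if one input has zero variance."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    norm_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    norm_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if norm_x == 0 or norm_y == 0:
        return float("nan")
    return cov / (norm_x * norm_y)


def spearman(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Spearman correlation computed as the Pearson correlation of the ranks."""
    return pearson(average_ranks(xs), average_ranks(ys))


# Hypothetical data: number of persons per document and the F-measure an
# annotator achieved on that document.
persons_per_doc = [0, 1, 2, 5, 8]
f_measure = [0.90, 0.80, 0.70, 0.40, 0.35]
rho = spearman(persons_per_doc, f_measure)
print(f"Spearman rho = {rho:.2f}, absolute value = {abs(rho):.2f}")
```

Because Spearman works on ranks, it captures any monotone relationship between a feature and the performance, not only a linear one. A strongly negative value, as in this constructed example, would indicate that the annotator tends to perform worse on documents with more persons.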

Absolute correlation values of the annotators’ Micro F1-scores and the dataset features for the A2KB experiment and weak annotation match (

We describe the exact requirements for the structure of the NIF document on our project website’s wiki, as NIF offers several ways to build a NIF-based document or corpus.

Currently,

entityclassifier.eu: Dojchinovski and Kliegr [18] present their approach based on hypernyms and a Wikipedia-based entity classification system which identifies salient words. The input is transformed to a lower dimensional representation keeping the same quality of output for all sizes of input text.

Overview of implemented annotator systems. Brackets indicate that an adapter has been implemented but cannot be used in the live system

Table 3 compares the implemented annotation systems of

Datasets, their formats and features. Groups of datasets, e.g., for a single challenge, have been grouped together. A ⋆ indicates various inline or keyfile annotation formats. The experiments follow their definition in Section 4.2

BAT enables the evaluation of different approaches using the AQUAINT, MSNBC, IITB and the four AIDA/CoNLL datasets (Train A, Train B, Test and Complete). With

We capitalize upon the uptake of publicly available, NIF-based corpora in recent years [53,58].

The extensibility of datasets in

The licenses and instructions can be found at

To describe annotators in a similar fashion, we extended DataID for services. The class

Offering such detailed and structured experimental results opens new research avenues in terms of tool and dataset diagnostics to increase decision makers’ ability to choose the right settings for the right use case. Next to individual configurable experiments,

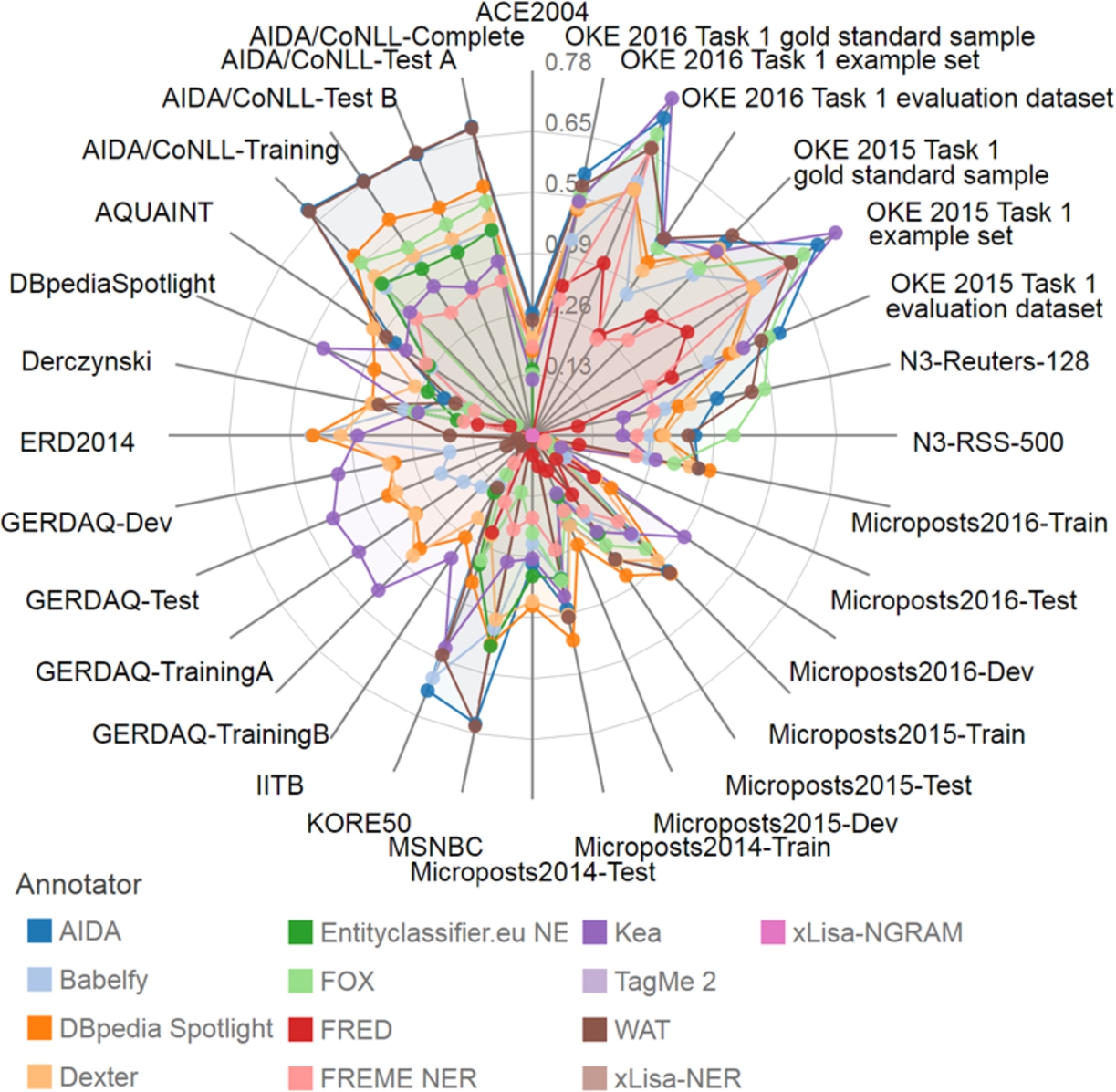

Example spider diagram of recent A2KB experiments with weak annotation matching derived from our online interface (

One of

Number of tasks executed per annotator. By caching results we did not need to execute 12,466 tasks but only 9,906. Data taken from 15th February 2015

Furthermore, we evaluated the amount of time that 5 experienced developers needed to write an evaluation script for their framework and how long they needed to evaluate their framework using GERBIL. The comparative times of writing an evaluation script for a system and a single dataset compared to the time needed to write an adapter for

Comparison of effort needed to implement an adapter for an annotation system with and without

In this paper, we presented and evaluated

In the future,

Another planned development is to further support developers with direct feedback, i.e., by showing the annotations that have been marked as incorrect in the documents. This feature has not been implemented because of licensing issues. However, we think that it would be possible to implement it without license violations for datasets that are publicly available.

Acknowledgements

This work was supported by the Eurostars projects DIESEL (E!9367) and QAMEL (E!9725) as well as the European Union’s H2020 research and innovation action HOBBIT under the Grant Agreement number 688227.