Abstract

Remote and objective assessment of the motor symptoms of Parkinson’s disease is an area of great interest particularly since the COVID-19 crisis emerged. In this paper, we focus on a) the challenges of assessing motor severity via videos and b) the use of emerging video-based Artificial Intelligence (AI)/Machine Learning techniques to quantitate human movement and its potential utility in assessing motor severity in patients with Parkinson’s disease. While we conclude that video-based assessment may be an accessible and useful way of monitoring motor severity of Parkinson’s disease, the potential of video-based AI to diagnose and quantify disease severity in the clinical context is dependent on research with large, diverse samples, and further validation using carefully considered performance standards.

INTRODUCTION

The objective assessment of motor severity in Parkinson’s disease (PD) is a major priority not only for the clinical follow up of individual patients and their objective response to drug changes, but also in the evaluation of experimental approaches in clinical trials. Since the COVID-19 crisis emerged, routine in-person assessment of PD severity has become impractical or undesirable in many cases; many older patients diagnosed with PD are considered part of the ‘at risk group’ and have been formally advised to shield [1]. This has resulted in remote/video approaches being encouraged to support patients suffering from chronic illnesses such as PD [2–4] as well as in current clinical trials of PD [5].

Video assessments can also facilitate the evaluation of patients in the absence of their regular dopaminergic medication, which can be useful in the assessment process for treatments such as deep brain stimulation (DBS) surgery, where an “off” and “on” medication assessment is required as standard practice. Avoiding the need to travel to a clinic or hospital in the “off medication” state can be far more comfortable for patients, may reduce the duration of the time they spend in a suboptimal state, and reduce expenses due to travel costs and parking fees.

Alongside this, there have been a number of attempts to develop automated rating of PD severity using video recordings and Artificial Intelligence (AI)/Machine Learning techniques. This may provide support to clinicians when identifying and diagnosing disease. Given this growing interest in using a) video-based/remote assessments to assess PD severity, and b) AI rating of PD, this review aims to discuss the major issues that must be recognised as part of the potential role of video assessment and analysis in the future management of PD patients, in the context of the growing use of Digital Health Technologies in PD.

CLINICAL TOOLS

The most widely used tool for the assessment of PD is the Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS). Part 3 of the MDS-UPDRS is the gold standard assessment tool for measuring motor signs of PD [6, 7]. Other validated scales exist for the evaluation of dyskinesia and tremor, but for the purpose of this review, discussion will be limited to the MDS-UPDRS part 3, although the same principles may apply to the other scales.

LITERATURE SEARCH

We searched PUBMED, Web of Science and SCOPUS i) from 2000 to 2020 using “Video assessment”, “Parkinson’s disease”, “Telemedicine”, “Telehealth” and “MDS-UPDRS” and ii) from 2016 to 2020 using “Video assessment”, “Artificial Intelligence”, “Machine Learning”, “Automated”, “Parkinson’s disease” and “Motor symptoms” as key words. Reference lists from the identified articles were cross-checked to identify any other potentially eligible studies.

STUDY SELECTION

We included observational and experimental studies conducted in PD patients in which i) items of MDS-UPDRS part 3, measured by a clinician through videos, were utilised as at least one of the outcome measures, or ii) motor symptoms of PD were measured by a machine learning algorithm relying on video assessments. We excluded reviews and studies written in languages other than English. All retrieved abstracts were independently screened. The full texts of potentially relevant articles were retrieved for further assessment and were included if they met the above criteria.

CAN PD MOTOR DISABILITY BE ADEQUATELY CAPTURED ON VIDEO?

Direct comparisons of video-based and live evaluations of the MDS-UPDRS

Before considering whether computer vision or machine learning techniques can improve upon traditional human rating of PD patients, it is important to consider the initial impact of using video rather than live face-to-face examination. Video-based administration of the MDS-UPDRS motor section has been successfully explored as a way to measure motor function of patients with PD [8–14], even among older individuals with PD who have substantial disability [15]. Studies directly comparing video-based and face-to-face scores of the MDS-UPDRS have shown moderate to good agreement, with intraclass correlation coefficients (ICC) ranging from 0.53 to 0.78 [9, 16].
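To illustrate how agreement figures of this kind can be obtained, the following is a minimal sketch of one common ICC form, ICC(2,1) (two-way random effects, absolute agreement, single rating). The studies cited above do not necessarily use this form; the function name `icc_2_1` and the example scores are hypothetical and serve only to show the calculation.

```python
def icc_2_1(scores):
    """ICC(2,1) for a table of scores: one row per patient, one column per
    rating condition (e.g. in-person and video). Assumes a complete table."""
    n = len(scores)        # number of patients
    k = len(scores[0])     # number of rating conditions
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(scores[i][j] for i in range(n)) / n for j in range(k)]

    # Two-way ANOVA sums of squares
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


if __name__ == "__main__":
    # Hypothetical motor scores: [in-person, video] for five patients
    example = [[22, 24], [35, 33], [18, 18], [41, 44], [29, 27]]
    print(f"ICC(2,1) = {icc_2_1(example):.2f}")
```

Because this form measures absolute agreement, a systematic offset between video and in-person scores lowers the coefficient even when the two sets of scores are perfectly correlated.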

However, closer scrutiny of the studies comparing video-based and face-to-face analyses of PD symptoms reveals poor agreement for specific elements of the MDS-UPDRS. Whilst studies have shown good agreement between live and video evaluations for scores of postural stability and gait [17], the same investigators have shown poor agreement for items of bradykinesia [10, 17] and tremor [17]. It is thought that because the tasks measuring bradykinesia involve rhythmic and continuous movements, technical difficulties such as poor internet connection, time lags and motion blur could affect accurate scoring [13]. In addition, multiple elements are included in rating bradykinesia, which may make these tasks more complex to assess than more uniform manifestations such as gait and balance [18, 19]. There are also reports of difficulty rating rest tremor via video because tremor amplitude is rated in centimetres, which may not be easily discerned on screen [13].

However, a recent study comparing video and in-person assessments of upper limb function, which utilised a series of standardized measures including motor speed and tremor in 21 patients with PD, found good agreement across all measures, with ICC ranging from 0.75 to 0.99 [20]. It should be noted that participants had access to high-speed Internet and measures were completed in real time via Skype. Data from the PREDICT-PD study corroborate this view, demonstrating that subtle subclinical signs of PD can still be discerned from video assessments [21]. Much of the research discussed above demonstrates that video assessment can serve the clinical management of PD [8–14]. In addition, a recent analysis of the STEADY-PD trial has shown that virtual visits involving video-based assessments of motor symptoms are both feasible and comparable to in-person assessments [15], which suggests that video assessments may also be of use in clinical trials. Current research has shown that video-based assessments of PD motor symptoms can be carried out in patients’ own homes [12, 22] as well as in clinic [9, 17]. Methodological issues with conducting video assessments in these respective environments are described in Table 1, which also outlines some of the other challenges associated with video assessment of PD motor severity.

Table 1. Identified challenges and potential solutions associated with rating motor symptoms of Parkinson’s disease via video

It should be noted here that the majority of studies were video telehealth consultations, where video-based MDS-UPDRS part 3 assessments were carried out as a secondary interest [8–16]. Studies conducting formal video-based MDS-UPDRS motor assessments measured only some items of the MDS-UPDRS [20] or did not compare scores with in-person assessments [21]. No study to the best of our knowledge has validated video-assessment against in-person assessment of the MDS-UPDRS.

Incomplete assessment

During video administration of the MDS-UPDRS, parts of the motor exam such as the assessment of rigidity and postural stability cannot be performed, because they require a hands-on assessment. Asking an untrained carer or family member to perform a pull test to assess postural stability may lead to falling and injury. Many studies investigating video versus live administration of the MDS-UPDRS therefore omit these items from the assessment [9, 15].

The inability to assess patient rigidity may represent a major limitation for video assessment, especially among individuals in whom this is a major feature. More importantly, the restricted examination of patients (when limited to the MDS-UPDRS) may not detect the presence of co-morbid signs contributing to a patient’s disability. An example may be a patient with progressively worsening balance due to cervical myelopathy or sensory neuropathy, which may only be evident following examination of tendon reflexes or distal sensory examination. While the MDS-UPDRS score is designed to be used without direct interpretation of whether a change in score is due to PD progression or not, day-to-day clinical evaluation of patients needs to consider whether other explanations may exist for a change in PD severity suggested by MDS-UPDRS part 3 scoring.

Despite these concerns, there are data to show that a modified MDS-UPDRS, in which elements that require a physical exam, including postural stability and rigidity, are excluded from rating, can remain a reliable and valid assessment of motor function. A secondary analysis of the CALM-PD clinical trial, which compared a modified motor UPDRS with the standard motor UPDRS (including all items), found that the modified version was reliable and valid relative to the standard version both cross-sectionally (ICC≥0.92) and longitudinally (ICC≥0.92) [25].

Nonetheless, objective measures such as wearable sensors may be used in conjunction with video assessment to partially compensate for the data missing from the MDS-UPDRS scores for these items. A recent study with 32 patients with PD found that patient-worn sensors combined with machine learning techniques were able to accurately predict clinician-assigned MDS-UPDRS scores for rigidity in 85.4% of cases [26]. Likewise, wireless accelerometers have been shown to successfully detect postural instability in patients with PD, produce scores that correlate with scores from gold standard assessments, and detect slight postural abnormalities in early PD [26–32]. Consequently, video-based analyses supported by wearable sensors may give us the means to create an ecologically valid clinical picture remotely. Whilst a discussion of wearable sensors is beyond the scope of this article, wearable sensors have a large role in Digital Health Technologies and will be covered in a separate article in this issue.

COMPUTER VISION VIDEO ANALYSIS

Despite the challenges outlined, the recording of movement using video opens the possibility of using AI/Machine Learning techniques to quantitate human movement, which may be useful in the diagnosis of movement disorders such as PD and in their longitudinal assessment. This has the theoretical advantage of improved objectivity and access, and therefore an improved signal-to-noise ratio, in comparison to clinician ratings, which are inevitably subject to fatigue and to intra- and inter-rater variability.

Computer vision defines humans as articulated objects whose parts move about articulation points. Detecting human poses from a single viewpoint presents many challenges given the complexity of human structure. Put simplistically, computer vision uses low-level features such as edges, shapes, colour and texture, and combines these with higher-level features such as context and motion, prior models of human body parts and enhanced deep learning algorithms to assemble a human body model from a two-dimensional (2D) image [33].
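As a minimal sketch of what treating the body as an articulated object means in practice, the example below computes the angle at one articulation point from three 2D keypoints. It assumes that a pose estimator has already produced pixel coordinates for each joint; the function name `joint_angle` and the keypoint values are hypothetical and are not taken from any of the systems discussed in this review.

```python
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the segments b->a and b->c.
    Each point is an (x, y) pair in image (pixel) coordinates."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(v1[0], v1[1])
    n2 = math.hypot(v2[0], v2[1])
    if n1 == 0 or n2 == 0:
        raise ValueError("keypoints must not coincide with the joint")
    cos_angle = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to guard against rounding slightly outside [-1, 1]
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))


if __name__ == "__main__":
    # Hypothetical keypoints for one video frame (pixels)
    shoulder, elbow, wrist = (320, 180), (340, 260), (410, 300)
    print(f"Elbow angle: {joint_angle(shoulder, elbow, wrist):.1f} degrees")
```

Tracking such an angle frame by frame yields a time series from which movement speed and range can be derived. An angle measured on a 2D image is a projection, however, and will change with camera viewpoint even when the true joint angle does not.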

Several companies and academic research labs are attempting to develop machine-learning algorithms to aid in the measurement of PD severity. Their strategies differ: some aim to provide an AI estimate of the modified MDS-UPDRS, others an AI estimate of sub-items of the MDS-UPDRS, and others an AI estimate of movement fluidity independent of the items incorporated in the MDS-UPDRS. All of these ventures have challenges to overcome. The success of the pose estimation depends on many factors, such as whether the whole body is captured in the image, the lighting level, the background and the possible presence of other people, all of which represent major challenges for computer vision in recognising the human pose in a 2D image. Additionally, participants who are unable to follow the instructions appropriately may produce incomplete or inconsistent data.

In a recent study combining video-based analyses with machine learning techniques, severe motion blur and fluency issues with videos made it difficult for the AI system to score aspects of bradykinesia in 60 patients with PD [34]. Rating items of bradykinesia using the MDS-UPDRS requires scoring the fluency and quality of movement, and likewise characterising small-amplitude tremor relies on fine discernment. Work utilising computer vision-based methods should therefore consider that accurate scoring of these items via video relies on the quality of the video. Table 2 summarises the progress made by different commercial approaches to video analysis of PD. Discussion of the machine-learning techniques utilised in the examples presented in Table 2 is beyond the scope of this review; please see [35] for further reading.
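To make concrete why video quality matters for bradykinesia items, the sketch below derives two simple features from a finger-tapping recording: tapping rate and the change in amplitude between the first and last tap. It assumes that a per-frame thumb-to-index distance has already been extracted from the video; the function names, the simple peak picker and the synthetic signal are illustrative assumptions and not the method of [34] or of any system in Table 2.

```python
import math


def find_peaks(signal, min_height):
    """Indices of local maxima whose value is at least min_height."""
    return [
        i
        for i in range(1, len(signal) - 1)
        if signal[i - 1] < signal[i] >= signal[i + 1] and signal[i] >= min_height
    ]


def tapping_features(distance, fps, min_height):
    """Tap count, tap rate (Hz) and last-to-first peak amplitude ratio.
    `distance` holds one thumb-to-index distance per video frame."""
    peaks = find_peaks(distance, min_height)
    if len(peaks) < 2:
        raise ValueError("at least two taps are needed")
    duration_s = (peaks[-1] - peaks[0]) / fps
    amplitudes = [distance[i] for i in peaks]
    return {
        "taps": len(peaks),
        "rate_hz": (len(peaks) - 1) / duration_s,
        # A ratio below 1 indicates that the taps became smaller over time
        "amplitude_ratio": amplitudes[-1] / amplitudes[0],
    }


if __name__ == "__main__":
    # Synthetic 5 s recording at an assumed 30 frames per second:
    # 2 Hz tapping whose amplitude shrinks linearly by half
    fps = 30
    signal = [
        (1 - 0.1 * (i / fps)) * (1 - math.cos(2 * math.pi * 2 * i / fps)) / 2
        for i in range(5 * fps)
    ]
    print(tapping_features(signal, fps, min_height=0.1))
```

Dropped frames or motion blur distort the distance signal directly, so peaks may be missed or misplaced. This is one way in which poor video quality can propagate into the derived features.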

Table 2. Commercial approaches to video-analyses of Parkinson’s disease

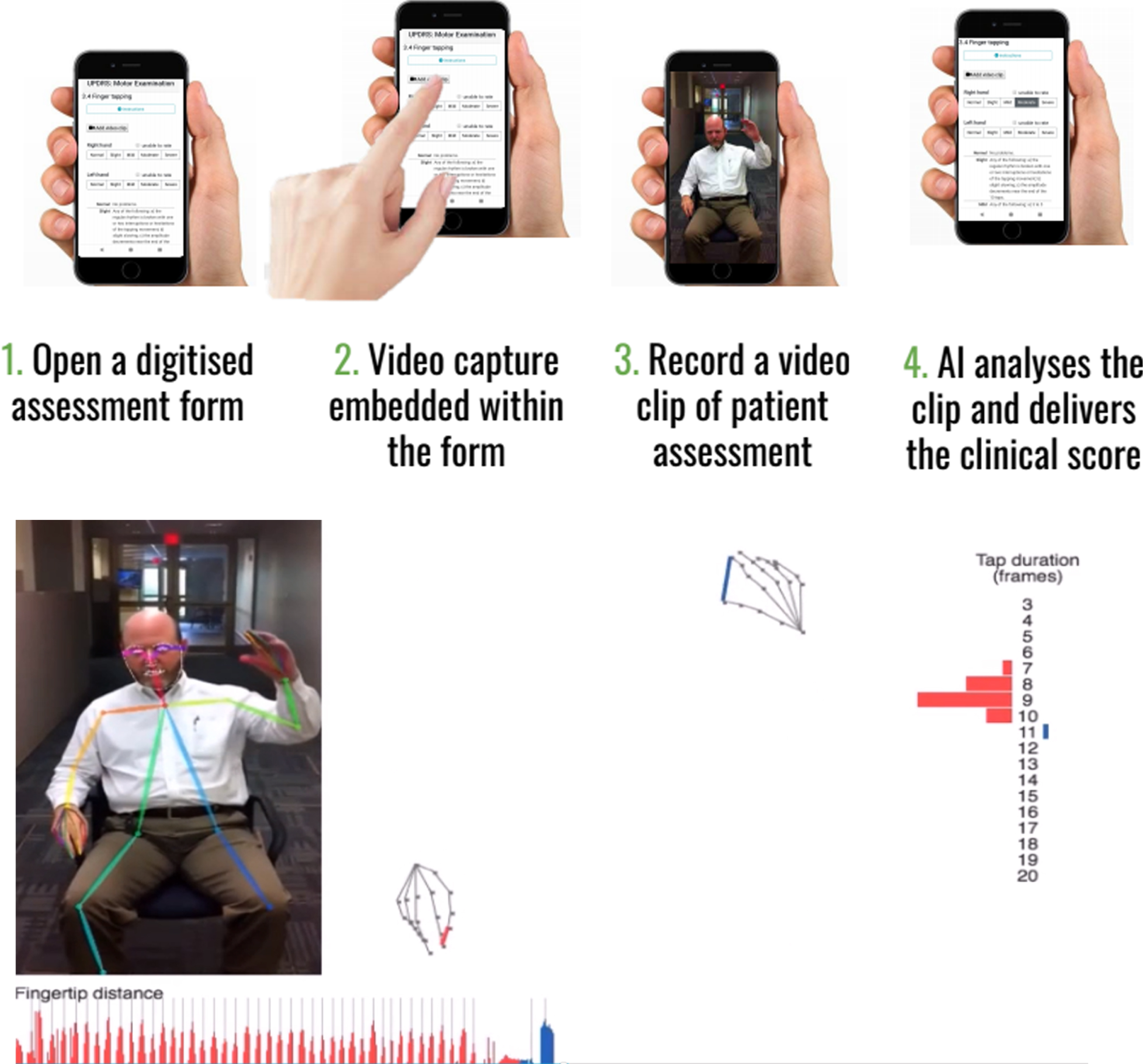

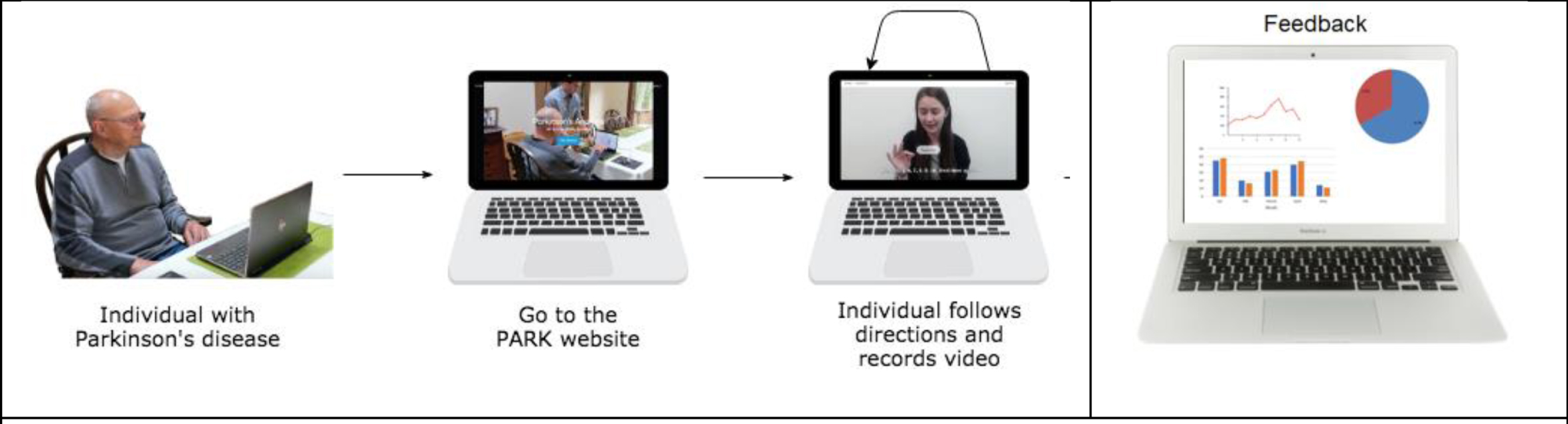

Beyond the demonstration that technical difficulties can be overcome, the interface has to be acceptable to the users (both patients and clinicians) and the data need to be safely and securely stored (with informed consent) while meeting data protection requirements. Ultimately, the analysis pipeline should be as automated as possible, while retaining quality control checks to ensure that a patient has engaged properly with the appropriate movement item. Figures 1a and 1b depict two example processes of using automated video assessment tools.

Fig. 1a. Example of Kelvin, a platform that allows the user to record 2D videos of patients with PD on any accessible device; an inbuilt AI system then analyses the clip and assigns scores according to items of the UPDRS. Reproduced with permission.

Fig. 1b. Example of Park, a platform that allows the user to perform the UPDRS assessment at home. Reproduced with permission.

The development of such tools and their application to an illness such as PD requires extensive model optimisation using data from large numbers of individuals, followed by complex validation with careful consideration of the gold standard against which the tool should be validated, given that human clinical skills are intrinsically flawed and patient performance varies according to fatigue, medication and time of day. In addition, most machine learning techniques such as those described above are supervised; for example, an AI model dedicated to scoring a video according to the MDS-UPDRS is trained using clinicians’ scores of the MDS-UPDRS [34]. Therefore, at best, the accuracy of machine learning techniques will be as good as the clinician assessment of the MDS-UPDRS, which already presents issues with inter- and intra-rater variability. There is thus a need to demonstrate greater inter- and intra-assessment reliability using AI tools, which would further the argument that AI can provide truly objective ratings. Furthermore, a deep learning method (utilised by multiple companies in Table 2) works by training a model on example input data so that it can subsequently identify PD from novel data. This relies heavily on large amounts of high-quality labelled data to ensure that the model achieves state-of-the-art accuracy. One caveat with the current research into automated video-based assessments is that the models are often trained using small samples of cognitively intact, predominantly white participants who are relatively young and present with milder symptoms of disease (Hoehn and Yahr stage 2) [34, 41]. This may bias the automated assessment framework, and thus findings cannot necessarily be generalised to the wider population of people living with PD. One study has demonstrated that it is possible to apply automated video-based assessment to an older population with lower cognitive status [42], but this is yet to be demonstrated in the older population of people with PD who have a more severe disease status.

So far, recent research developing computer vision-based methods for AI analysis has shown success in scoring bradykinesia, gait and facial expressions, with AI scores showing good correlation with clinician ratings in these studies [34, 38–44]. At present, AI is likely to be applied to the diagnosis of movement disorder patients only in conjunction with expert movement disorders clinicians, since rigorous validation of AI technology and subsequent regulatory approval would be required. Nevertheless, with computer vision-based analysis of PD there appears to be potential for repeated, longitudinal data collection with internal consistency.
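Since MDS-UPDRS items are scored on an ordinal 0 to 4 scale, agreement between AI and clinician ratings can be summarised in ways that penalise large disagreements more than small ones. The following is a minimal sketch of two such summaries, within-one-point agreement and quadratic weighted kappa. The choice of metrics, the function names and the example ratings are assumptions made for illustration; the studies cited above report their own measures.

```python
def within_one_agreement(a, b):
    """Fraction of paired ratings that differ by at most one point."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    return sum(abs(x - y) <= 1 for x, y in zip(a, b)) / len(a)


def quadratic_weighted_kappa(a, b, n_categories=5):
    """Cohen's kappa with quadratic weights for integer ratings
    in the range 0 .. n_categories - 1."""
    if len(a) != len(b) or not a:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(a)
    # Observed joint proportions of (rating in a, rating in b)
    observed = [[0.0] * n_categories for _ in range(n_categories)]
    for x, y in zip(a, b):
        observed[x][y] += 1.0 / n
    marg_a = [sum(row) for row in observed]
    marg_b = [
        sum(observed[i][j] for i in range(n_categories))
        for j in range(n_categories)
    ]
    num = 0.0  # weighted observed disagreement
    den = 0.0  # weighted disagreement expected by chance
    for i in range(n_categories):
        for j in range(n_categories):
            weight = (i - j) ** 2 / (n_categories - 1) ** 2
            num += weight * observed[i][j]
            den += weight * marg_a[i] * marg_b[j]
    if den == 0:
        raise ValueError("kappa is undefined when all ratings are identical")
    return 1.0 - num / den


if __name__ == "__main__":
    # Hypothetical item scores for ten videos
    clinician = [0, 1, 1, 2, 2, 2, 3, 3, 4, 1]
    model = [0, 1, 2, 2, 1, 2, 3, 4, 4, 1]
    print(f"Within one point: {within_one_agreement(clinician, model):.2f}")
    print(f"Weighted kappa:   {quadratic_weighted_kappa(clinician, model):.2f}")
```

Neither summary addresses whether the clinician rating is itself correct; both only quantify how closely the automated score tracks the human reference.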

FUTURE DIRECTIONS

In the future, in addition to video-based assessment to measure MDS-UPDRS part 3 scores, we may see more applications of passive sensing towards early diagnosis and possible referral for PD. For example, with users’ permission, any video feed, even from videoconferencing sessions, could be analysed for subtle variations in micro-expressions over time as an early detector of PD. The ethical implications of this are of course potentially myriad. While uncertainty around video quality and noise in the data diminish human performance in measuring MDS-UPDRS scores, recent advances in AI [45] make it possible to reduce video bandwidth usage to one-tenth, yielding high-quality video despite low bandwidth. While many irregularities in the data may appear as noise to humans, for an AI these are patterns. With enough data, the noise can be modelled and successfully decoupled. In addition, future work could compare machine-generated scores with clinician assessments of motor symptoms in blinded OFF and ON medication conditions to assess the ability of AI to detect different clinical states in the same patient. This would provide detail on the sensitivity of AI as well as further validate machine-learning techniques for clinical purposes. Furthermore, large datasets representing a wider population across race, gender, geography and socio-economic boundaries would be key to facilitating an equitable machine-learning outcome.

CONCLUSION

In summary, the move towards remote video measurement of PD severity has been greatly accelerated by the COVID-19 pandemic. As such, it is vital that clinicians and researchers devise a valid and safe way to continue supporting and monitoring patients with PD without exposure to infectious risks and without losing important details currently captured in face-to-face assessments. Whilst video-based assessment of the MDS-UPDRS presents some challenges, it is likely that remote video capture is an accessible means for neurologists to continually monitor and support patients living with PD. However, this process must also consider the circumstances in which a face-to-face consultation should be triggered, for example, to evaluate the emergence of atypical features of parkinsonism or other causes of deterioration in the clinical signs. At present, whilst the growing use of Digital Health Technologies is full of promise for supporting chronic neurological conditions such as Parkinson’s disease, the use of automated video assessments for diagnostic purposes and for accurately quantifying disease severity depends on research with large, diverse samples and on further validation, in order to best represent, and thus be useful for, the 10 million people worldwide currently living with Parkinson’s disease.

ACKNOWLEDGMENTS

This work was partially funded by the National Institute of Neurological Disorders and Stroke of the National Institutes of Health under Award Number P50NS108676.

CONFLICT OF INTEREST

TF has received grant funding from Cure Parkinson’s Trust, National Institute for Health Research, John Black Charitable Foundation, Michael J Fox Foundation, Van Andel Research Institute, Defeat MSA. TF has received funding from Innovate UK to collaborate in the assessment of Kelvin-UPDRS with Machine Medicine Technologies. He has received honoraria for talks sponsored by Bial, Profile Pharma, Boston Scientific and has served on Advisory Boards for Peptron pharmaceuticals, Handl therapeutics, Living Cell technologies and Voyager Therapeutics.