Abstract

Background:

There is rising interest in remote clinical trial assessments, particularly in the setting of the COVID-19 pandemic.

Objective:

To demonstrate the feasibility, reliability, and value of remote visits in a phase III clinical trial of individuals with Parkinson’s disease.

Methods:

We invited individuals with Parkinson’s disease enrolled in a phase III clinical trial (STEADY-PD III) to enroll in a sub-study of remote video-based visits. Participants completed three remote visits over one year, each within four weeks of an in-person visit, and completed the assessments performed at the corresponding in-person visit. We evaluated the ability to complete scheduled assessments remotely, agreement between remote and in-person outcome measures, and participant opinions of remote visits.

Results:

We enrolled 40 participants (mean (SD) age 64.3 (10.4) years, 29% women), and 38 (95%) completed all remote visits. There was excellent agreement (ICC 0.81–0.87) between remote and in-person patient-reported outcomes and moderate agreement (ICC 0.43–0.51) between remote and in-person motor assessments. On average, remote visits took around one quarter of the time of in-person visits (54 vs 190 minutes). Nearly all participants liked remote visits, and three-quarters said they would be more likely to participate in future trials if some visits could be conducted remotely.

Conclusion:

Remote visits are feasible and reliable in a phase III clinical trial of individuals with early, untreated Parkinson’s disease. These visits are shorter, reduce participant burden, and enable safe conduct of research visits, which is especially important in the COVID-19 pandemic.

INTRODUCTION

Our current model of conducting clinical trials is expensive and inefficient [1]. Contributing factors include slow recruitment, poor participant retention, and rising infrastructure costs [2, 3]. Additionally, in-person visits have largely been halted during the COVID-19 pandemic [4]. Investigators, regulatory agencies, and sponsors are rapidly considering methods to continue trial activities while limiting in-person interactions [5]. To address these concerns, there is increasing interest in the use of remote video-based research visits in the conduct of clinical trials [6–8]. Video-based visits use HIPAA-compliant video conferencing software to connect research participants with remote investigators, and outcome measures completed during in-person visits can be adapted for remote administration.

Prior work has demonstrated the utility of remote research visits in multiple diseases, from acne to migraine to neurodegenerative conditions [9–12]. There is substantial interest in remote visits in Parkinson’s disease (PD), and previous studies have demonstrated the feasibility and value of remote research visits in observational studies in PD and other neurodegenerative conditions [10, 13–16]. However, a systematic assessment of such visits within a clinical trial is lacking. Here, we present data from a sub-study of a phase III clinical trial in PD, STEADY-PD III (ClinicalTrials.gov identifier: NCT02168842), with the aim of evaluating the feasibility, reliability, and value of video-based research visits.

METHODS

STEADY-PD III, a randomized, double-blind, placebo-controlled trial, evaluated isradipine as a disease-modifying agent in individuals with newly diagnosed PD. We aimed to recruit 40 individuals concurrently enrolled in STEADY-PD III into a 1-year sub-study evaluating remote research visits. The sub-study was designed to evaluate the feasibility and validity of having a remote study team at a single site conduct nearly all assessments completed during in-person visits. Enrollment criteria for STEADY-PD III have been published previously [17, 18]. An additional enrollment criterion for this sub-study was access to high-speed internet at home. Recruitment was limited to participants from ten of the top-enrolling US STEADY-PD III sites.

Participants

Local study staff at enrolling sites briefly discussed the sub-study with participants presenting for a routine study visit. If a participant was interested, a coordinator at the University of Rochester contacted them by phone to discuss the study and obtain informed consent. Enrolled participants were provided with a Samsung Note 5 tablet (Seoul, South Korea) and a Bluetooth-enabled automated blood pressure monitor (A&D Medical; California, USA). Software created by AMC Health (New York, USA) was preloaded onto the tablets to support two-way video teleconferencing and the collection and transmission of vital sign readings.

Enrolled participants completed three remote visits with a study coordinator and investigator at the University of Rochester over 12 months (baseline, 6 months, and 12 months), each occurring within approximately 4 weeks of a corresponding in-person visit. During each remote visit, participants completed the outcome measures administered at the corresponding in-person visit. Outcome measures included: Unified Parkinson’s Disease Rating Scale (UPDRS) Parts I-IV [19], Movement Disorder Society Unified Parkinson’s Disease Rating Scale (MDS-UPDRS) Parts I-IV [20], Modified Hoehn and Yahr stage [21], Schwab and England Activities of Daily Living [22], Montreal Cognitive Assessment (MoCA) [23], Parkinson’s Disease Questionnaire-39 (PDQ-39) [24], Modified Rankin Scale [25], Columbia Suicide Severity Rating Scale [26], and orthostatic vital signs (blood pressure and pulse). At remote visits corresponding to “annual” in-person visits, participants completed a more comprehensive battery of assessments (Supplementary Material). Rigidity and postural instability, which cannot be examined remotely, were neither assessed nor rated; prior work has demonstrated the validity of this modified UPDRS assessment [14]. After the visit with the investigator, participants completed all patient-reported outcome measures using the preloaded software on the tablet.

Data analysis

We assessed the feasibility of remote visits in a phase III clinical trial in PD by evaluating the proportion of assessments completed in full during the final remote visit, with 80% completion defined as the threshold for acceptability. A secondary measure of feasibility was the proportion of remote visits occurring within 4 weeks of the associated in-person visit.
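As a minimal sketch of how these two feasibility metrics could be computed, the Python below assumes a hypothetical per-visit record, a list of scheduled assessment names plus the set actually completed. It also assumes that "within 4 weeks" means an absolute gap of at most 28 days. Neither detail is specified in the protocol description above, and the function names are illustrative only.

```python
from datetime import date


def completion_proportion(scheduled: list[str], completed: set[str]) -> float:
    """Fraction of scheduled assessments completed in full at one visit (hypothetical layout)."""
    if not scheduled:
        raise ValueError("no assessments scheduled")
    done = sum(1 for name in scheduled if name in completed)
    return done / len(scheduled)


def meets_feasibility_cutoff(proportion: float, cutoff: float = 0.80) -> bool:
    """Primary criterion: at least 80% of scheduled assessments completed."""
    return proportion >= cutoff


def within_window(remote: date, in_person: date, window_days: int = 28) -> bool:
    """Secondary criterion, assuming '4 weeks' means an absolute gap of <= 28 days."""
    return abs((remote - in_person).days) <= window_days


# Illustrative (not study) data: one of five scheduled measures is missing.
scheduled = ["MDS-UPDRS II", "MDS-UPDRS III", "MoCA", "PDQ-39", "Schwab and England"]
completed = {"MDS-UPDRS II", "MDS-UPDRS III", "MoCA", "Schwab and England"}
p = completion_proportion(scheduled, completed)
print(p, meets_feasibility_cutoff(p))                      # 0.8 True
print(within_window(date(2018, 3, 1), date(2018, 2, 5)))   # 24-day gap -> True
```

In a real analysis, both proportions would be aggregated across participants, for example the share of all scheduled assessments completed at the final visit and the share of visits inside the window.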

We evaluated the reliability of remote visits by assessing agreement between in-person and remote assessments. For measures performed at multiple time points, we used the final remote assessment and the corresponding in-person assessment. Agreement for continuous and essentially continuous variables (e.g., MoCA) was assessed using intra-class correlation coefficients (ICCs). Agreement for ordinal outcomes (Hoehn and Yahr stage, Schwab and England Activities of Daily Living, modified Rankin Scale) was assessed using weighted kappa statistics and the observed agreement between in-person and remote assessments.
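The text does not state which ICC form or kappa weighting scheme was used. Purely as an illustration, the sketch below makes two assumptions. First, it uses a two-way random-effects, absolute-agreement, single-measure ICC (Shrout and Fleiss ICC(2,1)), with in-person and remote treated as the two "raters". Second, it uses linearly weighted kappa for ordinal scales, with quadratic weights as an option. The function names are hypothetical.

```python
from statistics import mean


def icc_2_1(pairs: list[tuple[float, float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single measure.

    Each pair is (in-person score, remote score) for one participant.
    """
    n, k = len(pairs), 2
    scores = [x for pair in pairs for x in pair]
    grand = mean(scores)
    row_means = [mean(pair) for pair in pairs]                        # per participant
    col_means = [mean(pair[j] for pair in pairs) for j in range(k)]   # per method
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for x in scores)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)               # between-participant mean square
    msc = ss_cols / (k - 1)               # between-method mean square
    mse = ss_err / ((n - 1) * (k - 1))    # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


def weighted_kappa(r1, r2, categories, weights="linear"):
    """Cohen's weighted kappa for two ordinal ratings of the same participants."""
    k = len(categories)
    pos = {c: i for i, c in enumerate(categories)}
    n = len(r1)
    obs = [[0.0] * k for _ in range(k)]
    for a, b in zip(r1, r2):
        obs[pos[a]][pos[b]] += 1 / n
    row = [sum(obs[i]) for i in range(k)]
    col = [sum(obs[i][j] for i in range(k)) for j in range(k)]

    def w(i, j):
        # Disagreement weight: 0 on the diagonal, 1 for the most distant categories.
        d = abs(i - j) / (k - 1)
        return d if weights == "linear" else d ** 2

    observed = sum(w(i, j) * obs[i][j] for i in range(k) for j in range(k))
    expected = sum(w(i, j) * row[i] * col[j] for i in range(k) for j in range(k))
    return 1 - observed / expected  # undefined if both methods use a single category


def observed_agreement(r1, r2):
    """Proportion of participants given the identical category by both methods."""
    return sum(a == b for a, b in zip(r1, r2)) / len(r1)
```

Under absolute agreement, a method that scores every participant exactly one point higher yields `icc_2_1([(1, 2), (2, 3), (3, 4)])` of about 0.67 rather than 1.0. This illustrates why a systematic rater-severity difference, such as the one described in the Discussion, lowers this form of ICC.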

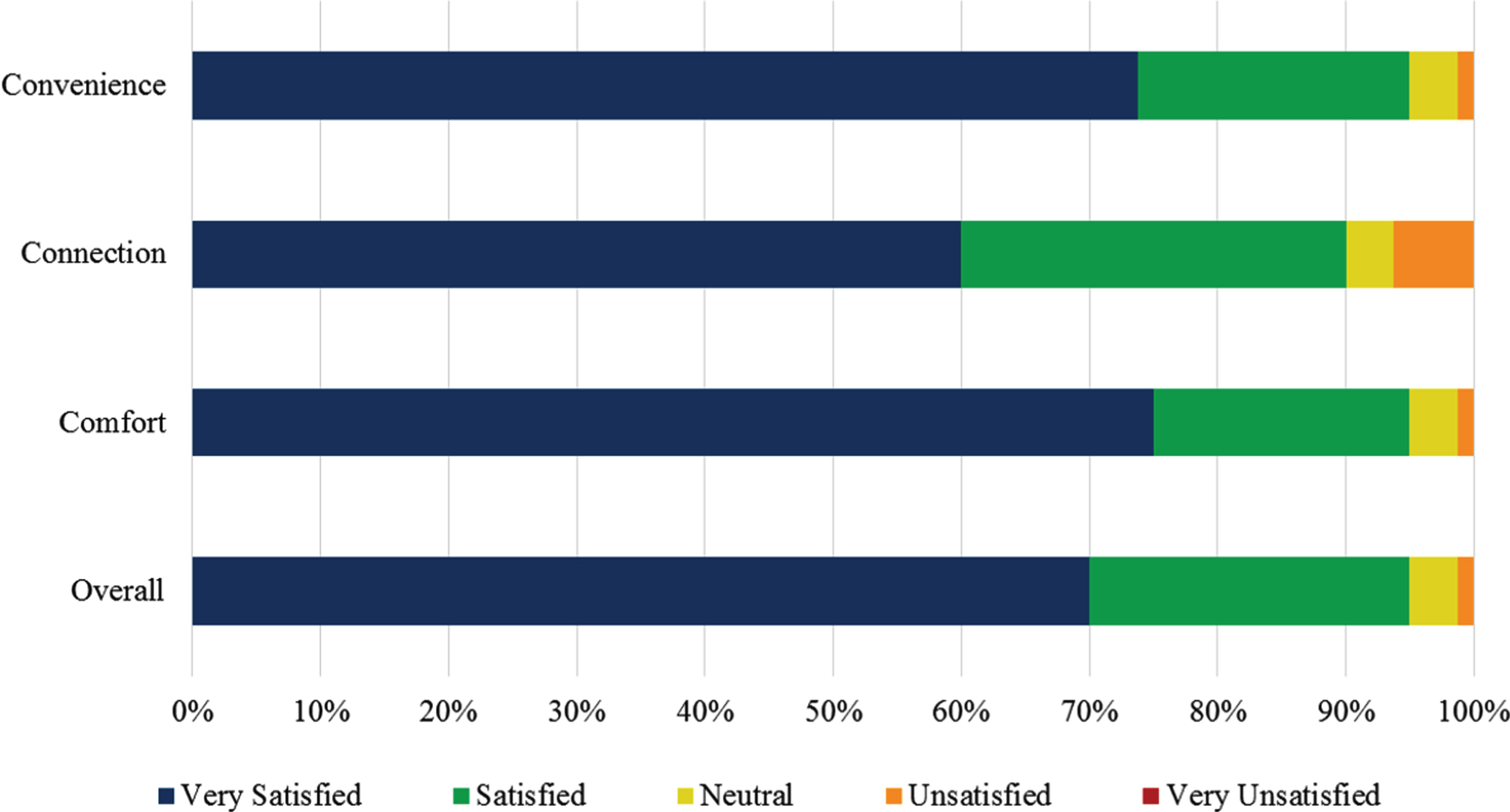

We assessed the value of remote visits with a survey (Supplementary Material) covering participant opinions on the comfort, convenience, and connection of the remote visit and overall satisfaction, each rated on a 5-point Likert scale (very satisfied to very unsatisfied). The survey also included space for free-text responses, from which we identified and report overall themes. We additionally assessed participant preference for remote vs in-person visits, travel time and distance to in-person visits, and the duration of the baseline in-person vs remote visit. We tested for a statistically significant preference for remote vs in-person visits using a one-sample chi-square test (α = 0.05) and for mean differences in visit duration using paired t-tests (α = 0.05).
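The two tests named above can be sketched with the Python standard library. This is an illustration under stated assumptions, not the analysis code used in the study. The one-sample chi-square is written as a goodness-of-fit test of equal preference between two options, assuming neutral responses are excluded. For one degree of freedom, its p-value follows exactly from the complementary error function. The paired t-test is reduced to its statistic and degrees of freedom; converting that to a p-value requires the t distribution, for example via SciPy's `scipy.stats.ttest_rel`. The function names are hypothetical.

```python
import math
from statistics import mean, stdev


def one_sample_chi_square(count_a: int, count_b: int) -> tuple[float, float]:
    """Goodness-of-fit test of equal preference between two options (df = 1)."""
    expected = (count_a + count_b) / 2
    stat = ((count_a - expected) ** 2 + (count_b - expected) ** 2) / expected
    # For 1 degree of freedom, P(chi-square > stat) = erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


def paired_t(in_person: list[float], remote: list[float]) -> tuple[float, int]:
    """Paired t statistic and degrees of freedom for per-participant differences."""
    diffs = [a - b for a, b in zip(in_person, remote)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))  # requires non-constant differences
    return t, n - 1


# Illustrative inputs only (not study data).
print(one_sample_chi_square(30, 10))            # statistic 10.0, p of about 0.0016
print(paired_t([3, 4, 5, 6], [2, 2, 2, 2]))      # t = sqrt(15) ~ 3.87 with 3 df
```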

RESULTS

We screened and enrolled 40 individuals in the study. Among enrolled participants, 38 (95%) completed all three remote visits. The two participants who withdrew did so between the screening and baseline visits; both remained enrolled in the parent study. Demographics and baseline characteristics are presented in Table 1. There were no substantial differences in demographics between participants in this study vs the larger cohort enrolled in STEADY-PD III.

Table 1. Baseline characteristics as measured during the virtual visit. Values are mean (standard deviation) unless otherwise noted. All motor assessments were performed without rating of rigidity or postural instability.

*Patient-reported outcome measure completed on smartphone. MDS-UPDRS, Movement Disorder Society-Unified Parkinson’s Disease Rating Scale; UPDRS, Unified Parkinson’s Disease Rating Scale.

Feasibility

Approximately 92% of scheduled assessments were completed at the final remote visit; most incomplete assessments (80.6%) were patient-reported outcomes meant to be completed on the tablet after the visit with the investigator. The mean (standard deviation) interval between virtual and corresponding in-person visits was 24.5 (15.5) days (range 11–151 days); 84.2% of virtual visits occurred within 4 weeks of an in-person visit.

Reliability

Agreement between in-person and remote assessments was highest for the MDS-UPDRS Part II (ICC = 0.87), Part IA (ICC = 0.82), and Part IB (ICC = 0.81). Observed agreement was also high between remote and in-person ratings on the modified Rankin Scale (94%) and the Schwab and England Activities of Daily Living (92%). Agreement between in-person and remote-performed motor assessments was moderate for both the UPDRS Part III (ICC = 0.51) and the MDS-UPDRS Part III (ICC = 0.43). Additional reliability assessments are shown in Table 2.

Table 2. Intra-class correlation coefficients* and weighted kappa statistics (percent agreement)† between in-person and remote-performed outcome measures. All motor assessments were performed without rating of rigidity or postural instability.

Value

Satisfaction with the remote visits was high, with more than 90% of participants indicating satisfaction with the connection, convenience, comfort, and overall experience (Fig. 1). Participants specifically cited the ease of scheduling, the convenience of a visit in their home, comfort with meeting a new investigator, and the improved safety of not having to drive without taking symptomatic therapy. Although dissatisfaction rates were low, 8 (21%) participants reported frustration with the software or connection, and 3 (7.9%) participants each reported frustration with the size of the tablet (too small) and with a sense that the remote assessment was incomplete. There was no significant difference in preference for in-person vs remote visits (7 vs 11 participants, p = 0.27), and 18 (52.9%) participants were neutral about the method of assessment. However, 76% of participants indicated that including remote visits would increase their likelihood of participating in future trials. On average, participants traveled around 88 miles round trip, with over 2 hours of total travel time, to their in-person visit. Remote visits were significantly shorter than in-person visits (mean visit duration 54.3 vs 190.2 minutes, p < 0.001), even when travel time was excluded (54.3 vs 73.8 minutes, p = 0.003).

Fig. 1. Participant satisfaction with remote research visits.

DISCUSSION

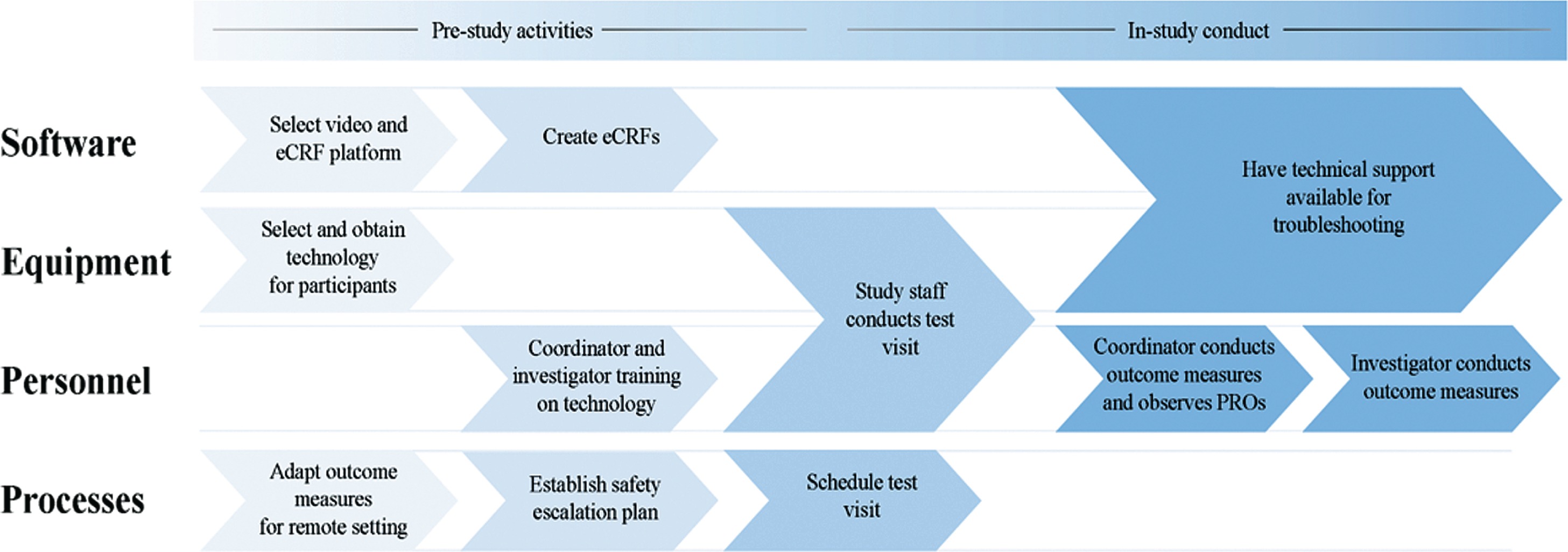

In the setting of the COVID-19 pandemic, the US Food and Drug Administration (FDA) and the European Medicines Agency (EMA) have issued guidance calling for the use of remote, video-based assessments. This small study demonstrates the successful implementation of remote visits in a phase III clinical trial of individuals with early, untreated PD. The visits are feasible, reasonably reliable, and valuable, particularly while COVID-19 limits in-person interactions. Moreover, participants expressed high satisfaction with the visits. Although the outcome measures assessed are somewhat specific to PD trials, the feasibility and value of remotely administered assessments are likely translatable to numerous other diseases, and the process we used to implement the remote study is broadly applicable today. We present a framework to develop and implement a remote study in Fig. 2.

Fig. 2. Framework for implementation of a remote clinical trial (eCRF = electronic case report form; PRO = patient-reported outcomes).

Agreement between in-person and remote motor assessments was lower than expected, likely because of multiple factors. First, in-person and remote visits were conducted at different time points. We attempted to reduce variability by standardizing motor status (OFF vs ON) between remote and in-person visits and establishing a visit window, but around 15% of remote visits were conducted more than 4 weeks from the corresponding in-person visit. Second, differences in the method of assessment likely introduced variability. Although exam features that could not be performed remotely (e.g., rigidity, postural instability) were excluded from the correlation analyses, the ability to assess these features in person may have influenced investigator ratings of other items. Third, kappa statistics were low, particularly for the modified Rankin Scale and Hoehn and Yahr stage, even though observed agreement was high. This known phenomenon, often called the kappa paradox, occurs when a high proportion of ratings fall in a single category, which inflates the agreement expected by chance [27]. In this case, over 85% of participants were rated “No significant disability despite symptoms” on the modified Rankin Scale, and over 80% were rated modified Hoehn and Yahr Stage 2. For this reason, the observed agreement (94% and 76%, respectively) should be considered alongside the kappa statistic. Finally, different investigators performed the in-person and remote assessments. Although inter-rater reliability is high among trained raters of the motor UPDRS (ICC = 0.82) [19], a subset of in-person investigators tended to rate participants more severely, lowering the correlation values. When both inter-rater and inter-method (remote vs in-person) reliability of motor assessments are accounted for, our results are reasonably close to the expected correlation [19, 29].
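To illustrate the kappa paradox described above, the short Python sketch below compares two hypothetical 2×2 agreement tables. Both are made-up counts for 50 participants, not study data. Each table has the same 90% observed agreement. In one, ratings are split roughly evenly between two categories; in the other, nearly all ratings fall in a single category. For simplicity the sketch uses unweighted Cohen's kappa with two categories, rather than the weighted kappa applied to the multi-category scales in this study.

```python
def cohen_kappa(pairs):
    """Unweighted Cohen's kappa and observed agreement from (rater1, rater2) pairs."""
    n = len(pairs)
    cats = sorted({c for pair in pairs for c in pair})
    p_obs = sum(a == b for a, b in pairs) / n
    # Chance agreement: product of each rater's marginal proportion, summed over categories.
    p_exp = sum(
        (sum(a == c for a, _ in pairs) / n) * (sum(b == c for _, b in pairs) / n)
        for c in cats
    )
    return (p_obs - p_exp) / (1 - p_exp), p_obs  # undefined if p_exp == 1


def make_pairs(both_a, both_b, a_then_b, b_then_a):
    """Build rating pairs from the four cells of a hypothetical 2x2 agreement table."""
    return ([("A", "A")] * both_a + [("B", "B")] * both_b
            + [("A", "B")] * a_then_b + [("B", "A")] * b_then_a)


balanced = make_pairs(22, 23, 3, 2)   # roughly half the ratings in each category
skewed = make_pairs(45, 0, 3, 2)      # nearly all ratings in category A

for label, pairs in [("balanced", balanced), ("skewed", skewed)]:
    kappa, agreement = cohen_kappa(pairs)
    print(f"{label}: observed agreement = {agreement:.2f}, kappa = {kappa:.2f}")
# balanced: observed agreement = 0.90, kappa = 0.80
# skewed: observed agreement = 0.90, kappa = -0.05
```

The same five disagreements produce a kappa of 0.80 in the balanced table but a slightly negative kappa in the skewed one. This happens because chance-expected agreement rises to about 0.90 when almost every rating is "A", leaving little room above chance.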

This study has some additional limitations. First, the sample is small and relatively homogeneous, and those who elected to enroll likely had more experience with the use of technology than the broader PD population. However, the recent rise in telemedicine use suggests that more research participants and investigators are now familiar with this technology. Second, investigators in our study were located at a single, remote site and differed from the participant’s in-person investigator, which introduced additional variability in the reliability assessments. In future trials, remote assessments could be conducted by investigators at enrolling sites to maximize consistency in assessments, or conducted entirely by remote investigators for participants not linked to a site. While the use of site-based investigators may increase regulatory requirements and site startup needs, with adequate training, the additional burden for sites would likely wane and be outweighed by the benefit for participants and investigators.

Concerns remain about the digital divide and access to technology in the broader implementation of remote visits in research [30]. Our study addressed these concerns by providing the necessary study technology to participants, which allowed standardization of outcome measures on a single device and likely expanded access. However, these benefits need to be balanced against the potential burden of introducing an unfamiliar device, which we identified as a common complaint, particularly related to software stability and device size. Studies implemented today will likely benefit from advances in technology and software made since this study was conducted. Consideration of disease-specific technological needs (e.g., the need for larger devices given problems with dexterity in PD) is also essential.

This study represents an important step toward broadening implementation of remote visits in PD clinical trials. Future work should continue to assess these methods in broader PD populations, including those with more advanced disease. Our results are particularly relevant in the setting of the COVID-19 pandemic, as we aim to promote the safety of research participants while continuing to evaluate and advance new therapies. Implementation in future trials will require buy-in from sponsors and regulatory agencies, though the FDA and EMA have already recommended, “[c]onversion of physical visits into phone or video visits, postponement or complete cancellation of visits to ensure that only strictly necessary visits are performed at sites” [31, 32]. We are likely in a unique window to advocate for broader use of these methods given the need for rapid transition of workflows today. Remote visits offer benefits for streamlining trial conduct, lowering trial costs, enhancing participant satisfaction, and most importantly, ensuring the safety and welfare of study staff and research participants.

CONFLICT OF INTEREST

The authors have no relevant conflict of interest to report.