Abstract

Background:

Clinical rating of bradykinesia in Parkinson disease (PD) is challenging as it must combine several movement features into a single score. Additionally, in-clinic assessment cannot capture fluctuations throughout the day.

Objective:

To evaluate the reliability and responsiveness of a motion sensor-based tablet app for objective bradykinesia assessment in clinic and at home as compared to clinical ratings.

Methods:

Thirty-two PD patients treated with subthalamic deep brain stimulation (DBS) were outfitted with a motion sensor on the index finger of the more affected hand to perform two repetitions of finger-tapping, hand opening-closing, and arm pronation-supination tasks with DBS on and 10, 20, and 30 minutes after turning DBS off. Tasks were videotaped for blinded clinician rating using the Modified Bradykinesia Rating Scale (MBRS). Participants were then sent home with an app-based system to perform two repetitions of the same tasks six times per day spaced two hours apart, three days per week, for two weeks. Intraclass correlation (ICC) and minimal detectable change (MDC) were calculated.

Results:

As the effects of DBS wore off, motion sensors detected worsening of amplitude sooner than did clinician-rated MBRS for all three tasks. ICCs were significantly higher and MDCs were significantly lower for motion sensors in the clinic and at home than for clinician ratings (p < 0.01).

Conclusions:

The tablet-based app demonstrated higher reliability and responsiveness in capturing bradykinesia-related tasks in the clinic and at home than did clinician ratings. This tool may enhance the assessment of novel therapies.

INTRODUCTION

Upper extremity bradykinesia in Parkinson’s disease (PD) is typically evaluated per the Unified Parkinson’s Disease Rating Scale (UPDRS) as a patient performs repetitive finger-tapping, hand opening and closing, and arm pronation-supination [1]. However, this evaluation is challenging as it combines several features (speed impairment, amplitude reduction, fatiguing, hesitations, arrests in movement) into a single score [2]. To overcome these challenges, the Modified Bradykinesia Rating Scale (MBRS) was developed to independently evaluate speed, amplitude, and rhythm during the standard UPDRS bradykinesia-assessment tasks [2, 3]. While the MBRS is more sensitive than the UPDRS in identifying how different aspects of bradykinesia respond to dopaminergic medication [4, 5], it has similar inter- and intra-rater reliability to the UPDRS [2].

Moreover, clinical assessment, regardless of sensitivity, does not capture symptom fluctuations throughout the day in response to treatment. A home-based assessment using wearable sensors may improve temporal resolution and aid in the evaluation and optimization of therapies [6–11]. The objective of this study was to expand upon our previous work [5, 12] by evaluating the reliability and responsiveness of a motion sensor- and tablet app-based system for objective bradykinesia assessment using the three classic UPDRS tasks in the clinic and at home. Objective measures were compared to clinician and patient-reported ratings and analyzed to determine whether speed, amplitude, and rhythm respond differently to deep brain stimulation (DBS).

METHODS

Subject recruitment

Adults with idiopathic Parkinson’s disease, diagnosed by a movement disorders neurologist, and treated with subthalamic (STN) DBS were recruited at the Cleveland Clinic and the University of Cincinnati. Participants were required to have a previous rating of 2 or greater on at least one of the UPDRS finger-tapping, hand-movement, or pronation-supination tasks with DBS off, as documented in their historical record, and a score greater than 24 on the Montreal Cognitive Assessment (MoCA) [13]. No restrictions were placed on age, Hoehn and Yahr stage, disease duration, or DBS duration. All clinical testing was completed at the Cleveland Clinic and the University of Cincinnati under the purview of their respective institutional review boards and in accordance with the Declaration of Helsinki (2008). All study participants provided signed informed consent prior to their participation.

In-clinic protocol

Subjects arrived at the clinic with DBS on and on their regularly prescribed PD-related medication. They were outfitted with a motion sensor (Kinesia™, Great Lakes NeuroTechnologies Inc., Cleveland, OH) on the index finger of the more affected hand to perform UPDRS-directed finger-tapping, hand movements (opening-closing), and arm pronation-supination tasks for 15 seconds each. The sequence of tasks was then repeated so test-retest reliability and responsiveness could be examined under the assumption that symptom severities would not change substantially within the few minutes it took to complete the two task sequences. DBS was then turned off and two repetitions of tasks were performed 10, 20, and 30 minutes after turning DBS off to examine sensitivity in capturing DBS washout. No medication was taken during the 30-minute washout period. Each task performed was videotaped for subsequent blinded rating. The subjects’ DBS systems were turned back on before they left the clinic.

Video rating

Videos were edited, randomized, and presented to six movement disorder neurologists for rating of speed, amplitude, and rhythm using the MBRS [2, 3]. Prior to rating any videos, all six raters participated in MBRS training sessions to form a group consensus using 45 videos from a separate dataset as described previously [2]. To minimize clinician burden while maintaining data integrity, each clinician rated one-third of the videos and each video was rated independently by two raters. Randomization was performed such that all DBS conditions and repetitions of a given task were rated entirely by the same rater. The clinicians were blinded to the subject identity as much as possible by obscuring everything other than the subject’s hand.
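The paper does not specify how the rater-to-video randomization was implemented. One scheme that satisfies the stated constraints (six raters, two independent raters per video, each rater scoring one-third of the material, and all DBS conditions and repetitions of a given subject's task kept with the same raters) is sketched below. The block structure, the round-robin pairing, and the function names are illustrative assumptions, not the authors' procedure.

```python
import random
from collections import Counter


def round_robin_pairs(raters):
    """Circle-method round robin over an even number of raters.

    Each round is a perfect matching (every rater appears exactly once), and the
    rounds together contain every possible pair exactly once.
    """
    raters = list(raters)
    if len(raters) % 2:
        raise ValueError("need an even number of raters")
    n = len(raters)
    rotating = raters[1:]
    pairs = []
    for _ in range(n - 1):
        lineup = [raters[0]] + rotating
        pairs.extend((lineup[i], lineup[n - 1 - i]) for i in range(n // 2))
        rotating = rotating[-1:] + rotating[:-1]  # keep raters[0] fixed, rotate the rest
    return pairs


def assign_blocks(subjects, tasks, raters, seed=0):
    """Map each (subject, task) block to a pair of raters (hypothetical scheme).

    A block holds every DBS condition and repetition of one task for one
    subject, so the same two raters score that task's entire washout.
    """
    blocks = [(s, t) for s in subjects for t in tasks]
    random.Random(seed).shuffle(blocks)  # randomize which block meets which pair
    pairs = round_robin_pairs(raters)
    return {block: pairs[i % len(pairs)] for i, block in enumerate(blocks)}


if __name__ == "__main__":
    assignment = assign_blocks(range(32), ["FT", "HM", "PS"], "ABCDEF")
    load = Counter(r for pair in assignment.values() for r in pair)
    print(len(assignment), dict(sorted(load.items())))
```

Because each round of the round robin is a perfect matching of the six raters, every rater receives exactly one-third of the blocks whenever the number of blocks is a multiple of three (here 32 subjects × 3 tasks = 96 blocks).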

Home protocol

The participants were then sent home with a motion sensor- and tablet app-based system (Kinesia ONE™, Great Lakes NeuroTechnologies Inc., Cleveland, OH) to perform the same tasks six times per day spaced two hours apart, three days per week, for two weeks. The tablet alerted the participant when it was time to perform an assessment. As in the clinic, the tasks were performed twice. All motion data were streamed from the finger-worn motion sensor to the tablet via Bluetooth Low Energy and uploaded via mobile broadband to a secure cloud server for automated, real-time processing into speed, amplitude, and rhythm scores. Immediately following each assessment, the participant rated their perceived slowness of movement on a five-point scale. After the two-week home monitoring period, participants completed a questionnaire on the usability of the system and mailed the device back in a prepaid shipping box.
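For reference, the home schedule described above amounts to 36 prompted sessions per participant (6 per day × 3 days per week × 2 weeks), each containing two repetitions of the three tasks. The sketch below generates such a schedule and computes compliance as the fraction of prompts that were completed. The 8:00 first prompt, the Monday/Wednesday/Friday assessment days, the logging assumption, and the function names are hypothetical; the paper does not state them or describe how compliance was computed.

```python
from datetime import date, datetime, time, timedelta


def home_schedule(start, first_prompt=time(8, 0), prompts_per_day=6,
                  spacing_hours=2, weekdays=(0, 2, 4), weeks=2):
    """Datetimes at which the tablet would prompt an assessment.

    first_prompt (08:00) and weekdays (Mon/Wed/Fri) are hypothetical defaults;
    the protocol only fixes 6 prompts/day, 2 h apart, 3 days/week, 2 weeks.
    """
    prompts = []
    for offset in range(7 * weeks):
        day = start + timedelta(days=offset)
        if day.weekday() not in weekdays:
            continue
        first = datetime.combine(day, first_prompt)
        prompts.extend(first + timedelta(hours=spacing_hours * k)
                       for k in range(prompts_per_day))
    return prompts


def compliance(completed, scheduled):
    """Fraction of scheduled prompts that have a completed assessment.

    Assumes (hypothetically) that each completed session is logged under the
    timestamp of the prompt it answered.
    """
    return len(set(completed) & set(scheduled)) / len(scheduled)


if __name__ == "__main__":
    schedule = home_schedule(date(2024, 1, 1))
    print(len(schedule), schedule[0], schedule[-1])
    print(f"{compliance(schedule[:-1], schedule):.3f}")
```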

Analysis

Kinematic data collected from the Kinesia motion sensor were processed into 0–4 scores with 0.1 resolution for speed, amplitude, and rhythm using previously validated algorithms shown to produce outputs highly correlated with clinician MBRS ratings [2] and responsive to changes in medication and DBS [5, 12]. For all clinician rating analyses, the ratings given by the two clinicians were averaged (separately for each task and for each of speed, amplitude, and rhythm). To examine the sensitivity of Kinesia outputs and clinician ratings to DBS washout, time points were compared using Tukey’s honest significant difference procedure with an alpha of 0.01. Intraclass correlation (ICC), a measure of consistency, and minimal detectable change (MDC), a measure of responsiveness defined as the smallest change in score that can be distinguished from measurement error, were calculated between task repetitions for average clinician ratings and for Kinesia outputs in the clinic and at home using the procedures described in [12].
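The exact ICC form and MDC formula follow [12] and are not restated here. As a hedged illustration, the sketch below assumes ICC(3,1) (two-way mixed effects, consistency, single measurement), in keeping with describing the ICC as a measure of consistency, together with the common definitions SEM = SD·√(1 − ICC) and MDC95 = 1.96·√2·SEM, where SD is the standard deviation of all scores. Rows are subjects and columns are the two task repetitions.

```python
import math
from statistics import mean, stdev


def icc_3_1(scores):
    """ICC(3,1): two-way mixed effects, consistency, single measurement.

    scores[i][j] is subject i's score on repetition j (here j = 0, 1).
    """
    n, k = len(scores), len(scores[0])
    values = [x for row in scores for x in row]
    grand = mean(values)
    row_means = [mean(row) for row in scores]
    col_means = [mean([row[j] for row in scores]) for j in range(k)]
    ss_total = sum((x - grand) ** 2 for x in values)
    ss_subjects = k * sum((m - grand) ** 2 for m in row_means)
    ss_reps = n * sum((m - grand) ** 2 for m in col_means)
    ss_error = ss_total - ss_subjects - ss_reps  # residual after removing both effects
    ms_subjects = ss_subjects / (n - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_subjects - ms_error) / (ms_subjects + (k - 1) * ms_error)


def mdc95(scores):
    """MDC at 95% confidence: 1.96 * sqrt(2) * SEM, with SEM = SD * sqrt(1 - ICC)."""
    icc = icc_3_1(scores)
    sd = stdev([x for row in scores for x in row])
    sem = sd * math.sqrt(max(0.0, 1.0 - icc))  # guard against tiny negative round-off
    return 1.96 * math.sqrt(2) * sem
```

Because ICC(3,1) measures consistency, a constant offset between repetitions (e.g., every subject scoring exactly 1 point higher on the second repetition) still yields an ICC of 1 and an MDC of 0; an absolute-agreement form such as ICC(2,1) would penalize that offset.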

To examine the consistency of measures in the home, ICCs for Kinesia outputs and subject-reported slowness were computed between consecutive assessments performed two hours apart, between averages of consecutive days, and between averages of consecutive weeks. Because all participants were on chronic DBS treatment, we assumed symptom severities would not change substantially during the two-week monitoring period. To determine whether there were training effects during the two weeks of home assessments, average Kinesia outputs during the first week were compared to those during the second week using a Wilcoxon signed-rank test.
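The paper does not state whether an exact or approximate Wilcoxon signed-rank test was used. A minimal standard-library sketch of the large-sample normal approximation is shown below; in practice a library routine such as `scipy.stats.wilcoxon` would normally be used instead. Inputs are assumed to be each participant's week-1 and week-2 average for one task and score type.

```python
import math


def wilcoxon_signed_rank(week1, week2):
    """Two-sided Wilcoxon signed-rank test on paired per-subject averages.

    Uses the large-sample normal approximation without continuity or tie
    corrections. Zero differences are dropped; tied |differences| share their
    average rank. Positive differences mean a higher (worse) score in week 2.
    """
    diffs = [b - a for a, b in zip(week1, week2) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # 1-based average rank for the tied run i..j
        for m in range(i, j + 1):
            ranks[order[m]] = avg_rank
        i = j + 1
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided: 2 * (1 - Phi(|z|))
    return w_plus, p
```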

RESULTS

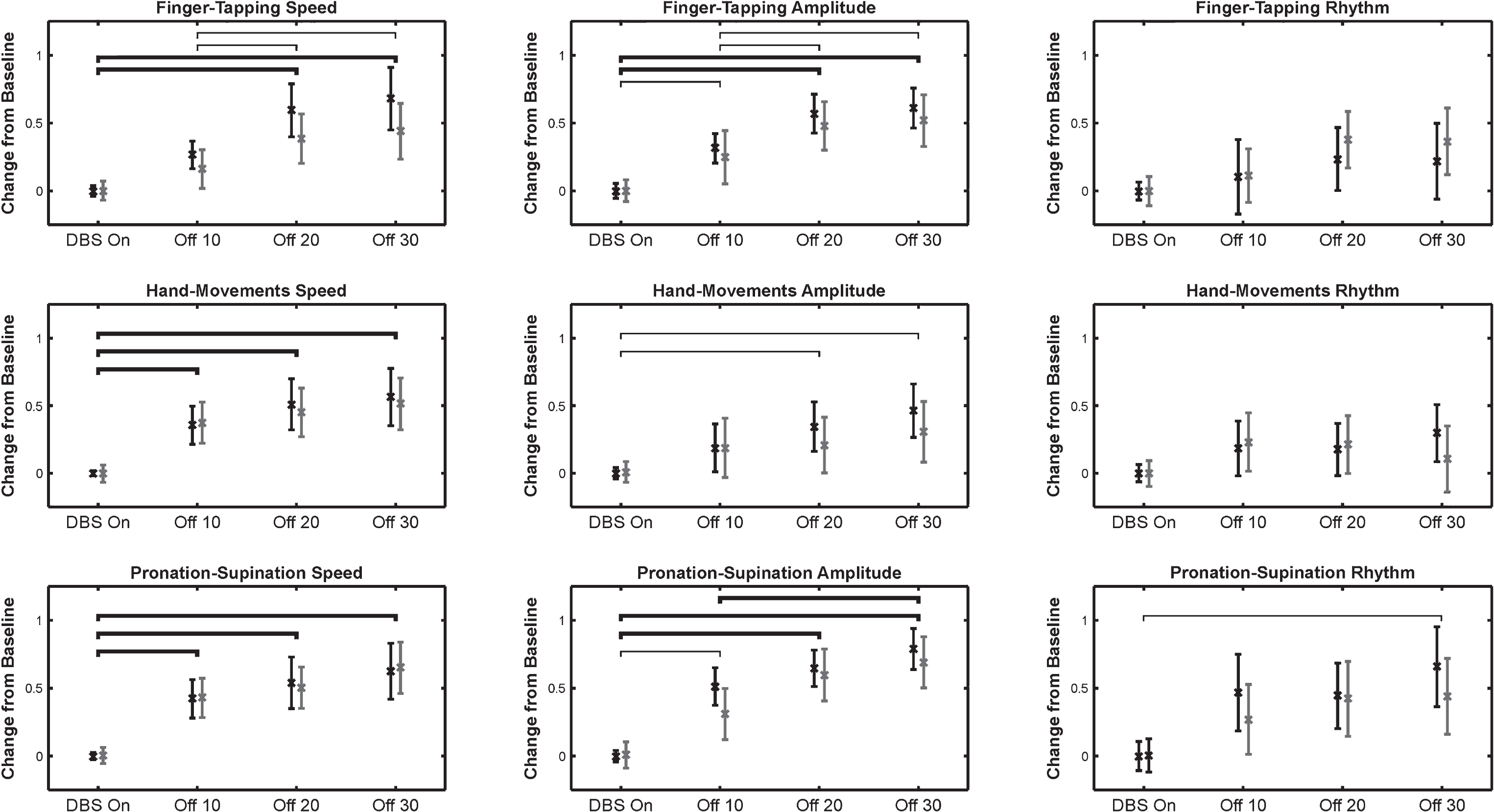

Thirty-two adults with idiopathic PD (26 male, 6 female; age 62.6±6.4 years, disease duration 13.6±4.9 years) treated with STN DBS were enrolled. Kinesia outputs and clinician ratings for speed, amplitude, and rhythm as the effects of DBS wore off are shown in Fig. 1. Kinesia and clinician ratings both detected significant worsening of speed at similar time points for all three tasks. However, Kinesia detected significant worsening of amplitude sooner than clinician ratings for all three tasks. Kinesia also detected additional significant worsening of finger-tapping speed and amplitude after the 10-minute DBS-off time point, while clinician ratings did not.

The changes from baseline (mean±95% confidence interval) are shown for Kinesia outputs (black) and clinician ratings (gray) for all three tasks and score types. Thin brackets indicate intervals over which changes were significant as measured by Kinesia, and thicker lines indicate intervals over which changes were significant as measured by both Kinesia and clinician ratings (p < 0.01). There were no intervals in which clinician ratings detected significant differences but Kinesia did not.

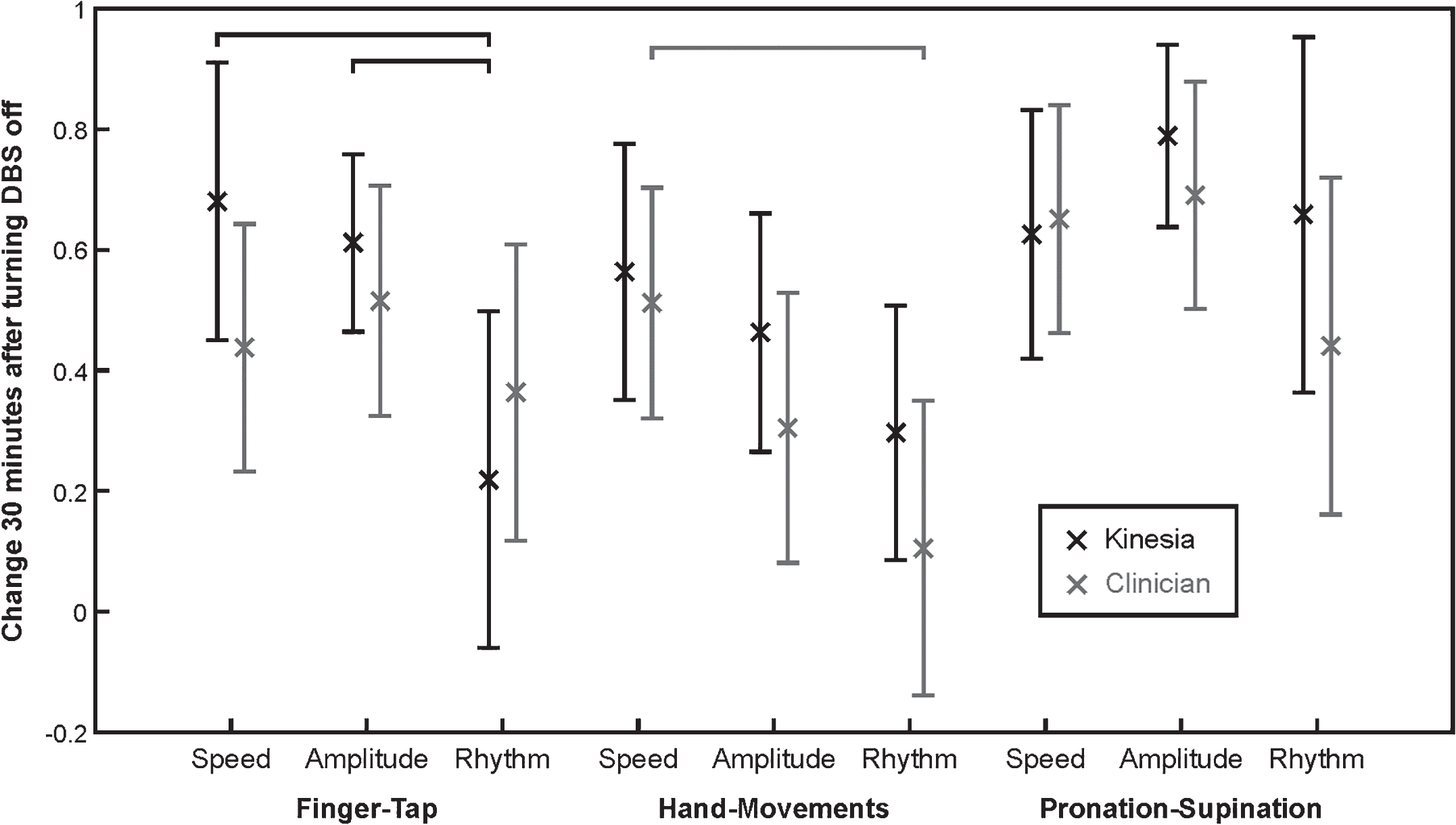

Thirty minutes after DBS was turned off, finger-tapping speed and amplitude had worsened more than rhythm as measured by Kinesia (p < 0.01), but there was no significant difference between speed and amplitude (Fig. 2). Clinician ratings showed significantly greater worsening of hand opening-closing speed than of hand opening-closing rhythm (Fig. 2). There were no other significant differences in worsening among speed, amplitude, and rhythm as measured by Kinesia or clinician ratings.

The changes from baseline (mean±95% confidence interval) 30 minutes after turning DBS off are shown for Kinesia outputs (black) and clinician ratings (gray) for all tasks and score types. Black brackets indicate significant differences in Kinesia outputs and gray brackets indicate significant differences in clinician ratings (p < 0.01).

ICCs and MDCs for the clinician and Kinesia ratings both in the clinic and at home are shown in Table 1. ICCs were significantly higher and MDCs were significantly lower for Kinesia in the clinic and at home than for clinician ratings (p < 0.01, Wilcoxon signed-rank test).

ICCs and MDCs for clinician ratings and Kinesia both in the clinic and at home

ICC: Intraclass correlation, MDC: Minimal detectable change, FT: Finger-taps, HM: Hand-movements, PS: Pronation-supination.

Thirty of the thirty-two individuals who participated in the in-clinic protocol also participated in the home protocol; technical difficulties with the Kinesia ONE systems, through no fault of the participants, prevented the other two from doing so. At home, the 30 participants performed the motor tasks as requested (six times per day, two hours apart, three days per week, for two weeks) 99.1% of the time. ICCs for Kinesia outputs and subject-reported slowness, computed between consecutive assessments two hours apart, between averages of consecutive days, and between averages of consecutive weeks, are shown in Table 2. Consistency improved as more assessments were included in the average. For all time ranges, Kinesia measures were more consistent than subject-reported slowness.

ICCs for home-based Kinesia outputs and subject-reported slowness for every consecutive two hours, average of consecutive days, and average of consecutive weeks

FT: Finger-taps, HM: Hand-movements, PS: Pronation-supination, SR: Subject-reported, Q2H: Consecutive two hours.

When examining training effects, only hand opening-closing amplitude and pronation-supination amplitude changed significantly (p < 0.01) from week 1 to week 2. Although statistically significant, these changes were small: median worsening of 0.13 and 0.16, respectively, on the 0–4 scale.

At the conclusion of the study, all 30 individuals who participated in the home protocol completed a questionnaire on the usability of the Kinesia ONE system. Questions were answered on a 1–5 Likert scale (1 = strongly disagree, 5 = strongly agree). Responses to questions regarding overall system use are summarized in Table 3. Each question was rated 4 or 5 by at least 90% of participants.

Summary of Kinesia ONE usability questionnaire responses

Questions were answered on a 1–5 Likert scale (1 = strongly disagree, 5 = strongly agree). The percentage of responses in each category is shown.

DISCUSSION

The Kinesia outputs demonstrated higher reliability and responsiveness in capturing bradykinesia in both the clinic and the home than did clinician MBRS ratings for all three tasks, similar to what we demonstrated previously in the clinic for finger-tapping [12]. Kinesia detected significant worsening of amplitude sooner than did clinician ratings for all three tasks (see Fig. 1). Only Kinesia detected significant additional worsening of finger-tapping speed and amplitude 20 minutes after DBS was turned off, further demonstrating its greater responsiveness compared to clinician ratings. There were no intervals in which clinician ratings detected significant differences and Kinesia did not. Kinesia detected worsening of speed at the same time point as clinician ratings for all three tasks; it is possible that the first assessment, 10 minutes after turning DBS off, came too late and that a shorter interval (e.g., 5 minutes) would have better captured differences. It is also important to note that the clinician ratings used in this study are the average of two independent raters, which may reduce variability but is not typical of clinical trials or patient care. Detecting very small changes might not be useful in routine clinical practice, but could be quite important for determining the efficacy of novel therapies in clinical trials [12, 14].

These results have important implications for evaluating therapeutics in clinical trials and for routine therapy optimization. As we discussed previously [12], increasing the reliability of ratings decreases the number of subjects necessary to detect significant changes in clinical trials. Likewise, detecting changes sooner could shorten study durations and help new treatments reach the market faster. Reducing the required number of subjects and the duration of studies would dramatically reduce the costs of clinical trials.
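To make the link between reliability and sample size concrete, the sketch below uses a textbook two-sample formula under classical test theory, in which the observed score variance equals the true-score variance divided by the ICC. This is an illustrative assumption, not the calculation in [12]: under it, required sample size scales with 1/ICC, so halving reliability roughly doubles the number of subjects needed to detect the same true effect.

```python
from statistics import NormalDist


def n_per_group(delta, sd_true, icc, alpha=0.05, power=0.80):
    """Approximate subjects per arm for a two-sided, two-sample comparison of means.

    Assumes classical test theory: ICC = true variance / observed variance,
    so measurement error inflates the observed variance to sd_true**2 / icc.
    """
    z = NormalDist().inv_cdf
    observed_var = sd_true ** 2 / icc
    return 2 * (z(1 - alpha / 2) + z(power)) ** 2 * observed_var / delta ** 2


if __name__ == "__main__":
    for icc in (1.0, 0.8, 0.5):
        print(icc, round(n_per_group(delta=0.5, sd_true=1.0, icc=icc), 1))
```

For example, with a true effect of half a true-score standard deviation, alpha = 0.05, and 80% power, this gives about 63 subjects per arm at an ICC of 1.0 and about 126 at an ICC of 0.5 (after rounding up).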

In this study, finger-tapping speed and amplitude (as measured by Kinesia) and hand opening-closing speed (as measured by clinician ratings) worsened significantly more than rhythm 30 minutes after DBS was turned off, but there were no statistically significant differences between the speed and amplitude responses for any task. This contrasts with our previous work showing that dopaminergic medication affects speed more than amplitude or rhythm [4, 5]. It suggests that medication and STN DBS improve bradykinesia through different mechanisms and reiterates the need for independent assessment of speed, amplitude, and rhythm in clinical trials.

The high reliability and sensitivity of the objective Kinesia ratings demonstrated in the clinic extended into the home environment. Average Kinesia ONE outputs were either unchanged or slightly worse in the second week than in the first, indicating the absence of a learning effect from two weeks of home assessments. These results, coupled with high compliance and positive usability feedback from participants, suggest that the system can be used unsupervised in the home to evaluate upper extremity bradykinesia with minimal reduction in data quality. A common pitfall in home monitoring is assuming that slowness of movement is a proxy for bradykinesia when patient context and effort are unknown [9]. A key aspect of the home-based Kinesia assessment used in this study, however, is that it required the subject to perform specific standardized tasks at specific times. Knowing what a patient is attempting enables highly reliable and sensitive assessment. Home assessment also permits more frequent assessment, which can improve temporal resolution and consistency (e.g., Table 2), as averaging multiple assessments during a day reduces noise. The home-based Kinesia assessment was more consistent than subject-reported slowness, which is particularly important for clinical trials because patient-reported diaries are commonly used as outcome measures. Home monitoring can also improve the efficiency of clinical trials by reducing required travel to the clinic for assessment and enabling real-time access to data for analysis.
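The observation that consistency improves as more assessments are averaged is the behavior one would expect from the Spearman-Brown prophecy formula, which predicts the reliability of the mean of k assessments from the reliability of a single assessment. The sketch below is an idealized illustration only, not a re-analysis of Table 2: it assumes the assessments are parallel measurements with independent errors, whereas real within-day symptom fluctuations violate this, and the single-assessment ICC of 0.5 in the usage lines is a hypothetical value.

```python
def spearman_brown(icc_single, k):
    """Predicted reliability of the mean of k parallel assessments.

    Spearman-Brown prophecy formula; assumes equal true-score variance and
    independent measurement errors across the k assessments.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    return k * icc_single / (1 + (k - 1) * icc_single)


if __name__ == "__main__":
    # Hypothetical single-assessment ICC of 0.5, averaged over up to six daily sessions
    for k in (1, 2, 6):
        print(k, round(spearman_brown(0.5, k), 3))
```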

In addition to benefiting clinical trials, the ability to reliably detect responses to therapy changes in the home could also assist with routine PD patient care. Determining an appropriate medication regimen (e.g., type, dose, frequency) often requires multiple clinical visits, as patients receiving dopaminergic medication are typically advanced slowly to minimize side effects and to use the smallest dose that provides adequate symptomatic control. As we and others have suggested previously, home assessment could potentially improve the efficiency of this titration process by enabling clinicians to see remotely how their patients are doing and then adjust treatment sooner [8, 15–17]. Future investigations are planned to further examine how symptoms fluctuate throughout the day during daily life for patients with and without DBS. As patients become increasingly engaged in their own disease management, home monitoring using wearable sensors will likely play an important role in routine medical care.

CONFLICT OF INTEREST

Related to the research presented herein:

Dr. Heldman and Dr. Giuffrida have received compensation from Great Lakes NeuroTechnologies for employment. Dr. Urrea-Mendoza, Dr. Espay, and Dr. Fernandez received support from Great Lakes NeuroTechnologies through sub-awards from NIH/NINDS grant number 5R44NS065554-05.

Additional disclosures:

Dr. Espay has received grant support from the Michael J. Fox Foundation; personal compensation as a consultant/scientific advisory board member for AbbVie, Chelsea Therapeutics, TEVA, Impax, Merz, Pfizer, Acadia, Cynapsus, Solstice Neurosciences, Eli Lilly, Lundbeck, and USWorldMeds; royalties from Lippincott Williams & Wilkins and Cambridge University Press; and honoraria from UCB, TEVA, the American Academy of Neurology, and the Movement Disorders Society. Dr. Fernandez is a paid consultant for General Electric Healthcare, Merz Pharma, Medscape, and Pfizer, Inc.


ACKNOWLEDGMENTS

This work was supported by the National Institute of Neurological Disorders and Stroke of the National Institutes of Health under grant 5R44NS065554-05. The content is the sole responsibility of the authors and does not necessarily reflect the views of the National Institutes of Health. The authors would like to thank Jonathan Parks and Barry Goldberg for assisting with data collection and analysis.