Abstract

Fatigue driving is one of the primary causes of road accidents. Detecting and identifying driver fatigue and warning drivers in time are of great significance for improving traffic safety and reducing traffic accidents. This paper presents a systematic review of fatigue driving behavior recognition methods and analyzes their research status and development trends. First, the data related to fatigue driving and their application scenarios are detailed. Three driving behavior recognition methods based on different types of signal data (physiological signal characteristics, visual signal characteristics and vehicle sensor data characteristics) are then summarized and analyzed, together with multi-data information fusion. By summarizing and comparing the recognition performance of existing fatigue driving recognition methods, including those combined with deep learning technology, the paper concludes that recognition methods based on a single data source have shortcomings such as low accuracy and susceptibility to external factors, whereas recognition methods based on multi-feature information fusion can achieve better recognition results. Finally, some prospects are given on the future development trend of fatigue driving behavior recognition.

Introduction

With the rapid increase in the number of cars, the number of road accidents has also risen. Every year, countless lives are threatened by traffic accidents, which cause heavy casualties and economic losses. Finding the causes of traffic accidents and exploring effective ways to reduce their incidence is an important task for researchers. According to the National Highway Traffic Safety Administration, fatigued drivers are responsible for about 100,000 crashes each year, killing more than 1,500 people and injuring more than 71,000. According to the Global Roads Safety Report, annual road-related deaths have risen to 1.35 million, or almost 3,700 people per day around the world [1]. In the United States and Europe, drowsy driving is responsible for 21% of serious traffic crashes [2]. Statistically, they cause over 40% of serious road crashes and 83% of road deaths [3]. According to the National Bureau of Statistics of China, the economic loss caused by traffic accidents in the past five years has reached 5.294 billion yuan [4]. While cars bring convenience to people, they also increase the incidence of traffic accidents. Studies have shown that if the driver receives a warning one second before the danger and responds in time, the accident may be avoided [5]. Therefore, accurately detecting driver fatigue and providing early warning is very important in the field of driver safety and for reducing the occurrence of traffic accidents.

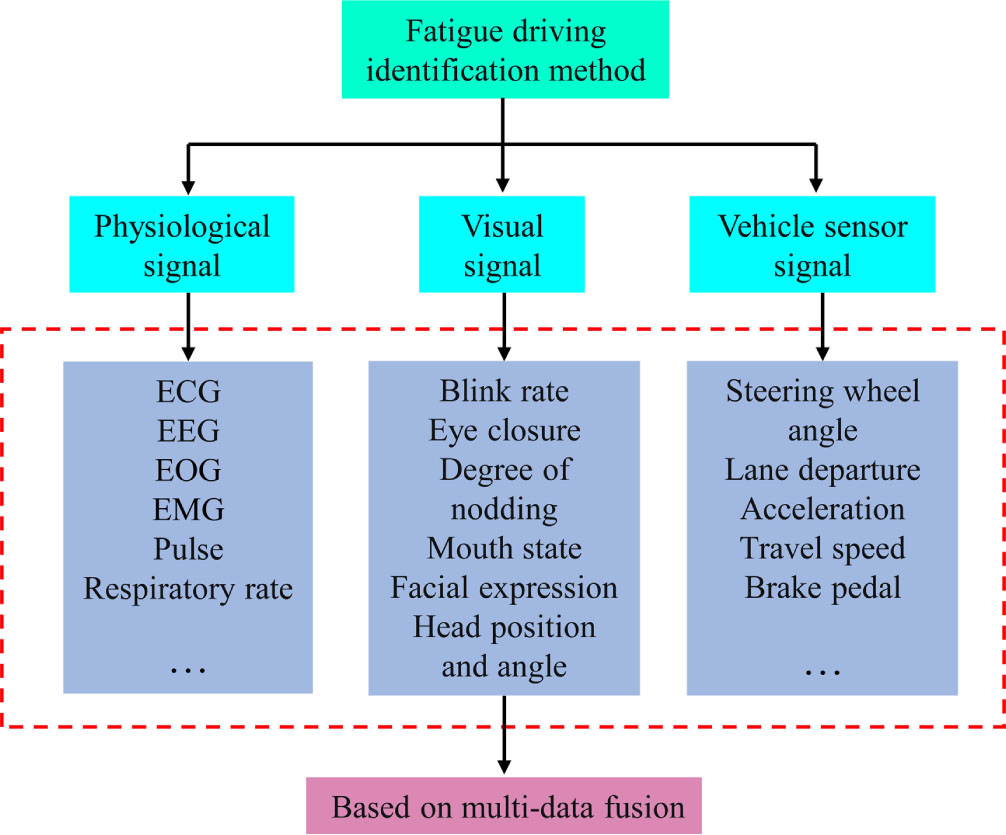

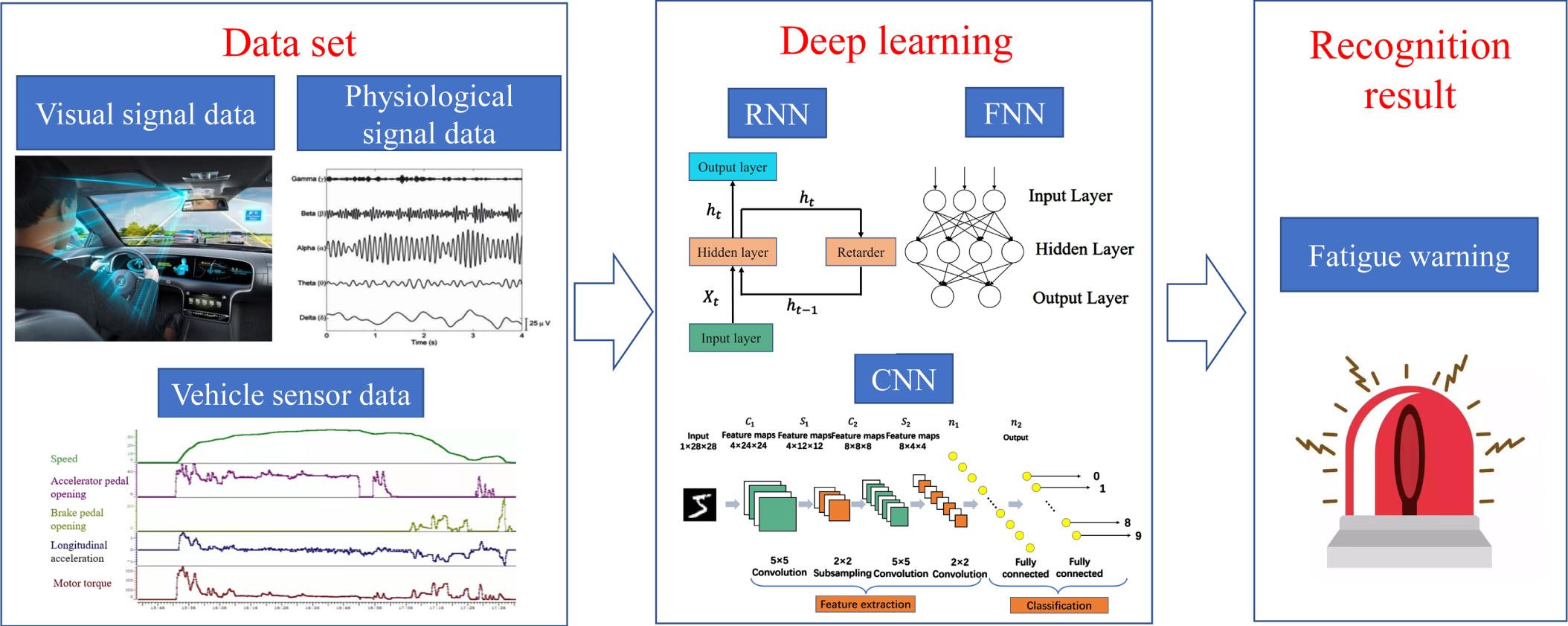

Fatigue driving refers to the physical and mental dysfunction caused by the excessive use of mental and physical energy during long periods of driving. It causes a decline in physiological function, cognition and control. When drivers are fatigued, their responses slow down rapidly, their hearing declines, they cannot concentrate, and their sensory functions weaken, which makes them unable to judge the road situation and respond to unexpected situations in time, and seriously affects safe driving [6]. Fatigue is divided into active fatigue, passive fatigue and sleep-related fatigue. Active fatigue refers to mental fatigue that is attributable to active participation in a particular task, and it occurs in people who work at high intensity for a long period of time. Passive fatigue is caused by monotonous work or lack of concentration, and fatigue driving is mostly caused by passive fatigue. At present, there are three primary methods used to identify driver fatigue: (1) recognition based on physiological state; (2) recognition based on video images; (3) recognition based on vehicle sensor data. In addition, because the accuracy of any single fatigue driving recognition method is limited, the above three methods are combined to form multi-data fusion. The classification of these fatigue driving recognition methods is shown in Fig. 1.

For the first method, driver fatigue is closely associated with brain activity, eye movements, skin conductance and other physiological responses. Fatigue levels can be described by EEG (electroencephalogram), EOG (electrooculogram) and EMG (electromyogram) signals, which are used in combination to detect the transition from fatigue to the sleep stages. In addition, the heart rate variability derived from ECG (electrocardiogram) signals also contains information about fatigue and sleep stages [7]. Based on several ECG characteristics (low-frequency power, high-frequency power and the low/high frequency ratio), sleep can be divided into periods of wakefulness, rapid eye movement and frequent eye closure. Physiological information is mainly collected by wearable devices, which offer high detection accuracy. Kar et al. established a functional brain network and validated three blood parameters (glucose, urea and creatinine) that can be used for detecting and quantifying fatigue and sleepiness [8]. Li [9], Zhao [10], Wang [11] and others used the phase lag index, the coherence coefficient and the Pearson correlation coefficient to construct functional brain networks before and after simulated driving tasks. The results showed significant increases in functional connectivity, intensity, clustering coefficient and mean path length in the prefrontal, central and temporal regions. Zhao and colleagues [12] measured the degree of mental fatigue during simulated driving using electroencephalographic and electrocardiographic measures, and found that these two measures were good predictors of mental fatigue. However, this recognition method also has shortcomings: the relevant sensor equipment is costly, and the sensors are in direct contact with the driver, which is uncomfortable and can sometimes even interfere with driving.

Classification structure of fatigue driving identification method.

For the second method, a tired driver shows obvious features such as left-right eye drift, longer eye closure time, yawning, frequent nodding and changes in facial muscle expression. In-vehicle cameras capture images of the driver’s face in real time, and the images of the eyes, the mouth or the entire face are processed. The characteristics of the relevant parts are extracted and analyzed to judge the driver’s driving state. At present, the features used in this method mainly include eye features, mouth features, head posture features and facial expression features [13]. Singh et al. [14] used various techniques such as support vector machine (SVM), random forest (RF), convolutional neural network (CNN) and artificial neural network (ANN) to analyze driver drowsiness using the front-facing camera of a mobile phone. Wu et al. [15] used an AdaBoost classifier to identify the driver’s eye state, and determined the driver’s fatigue level by calculating the percentage of time per unit time that the eyes were closed (PERCLOS). Vision-based drowsy driving detection requires no direct contact with the driver’s body and can automatically identify drowsy driving characteristics, significantly improving detection accuracy.

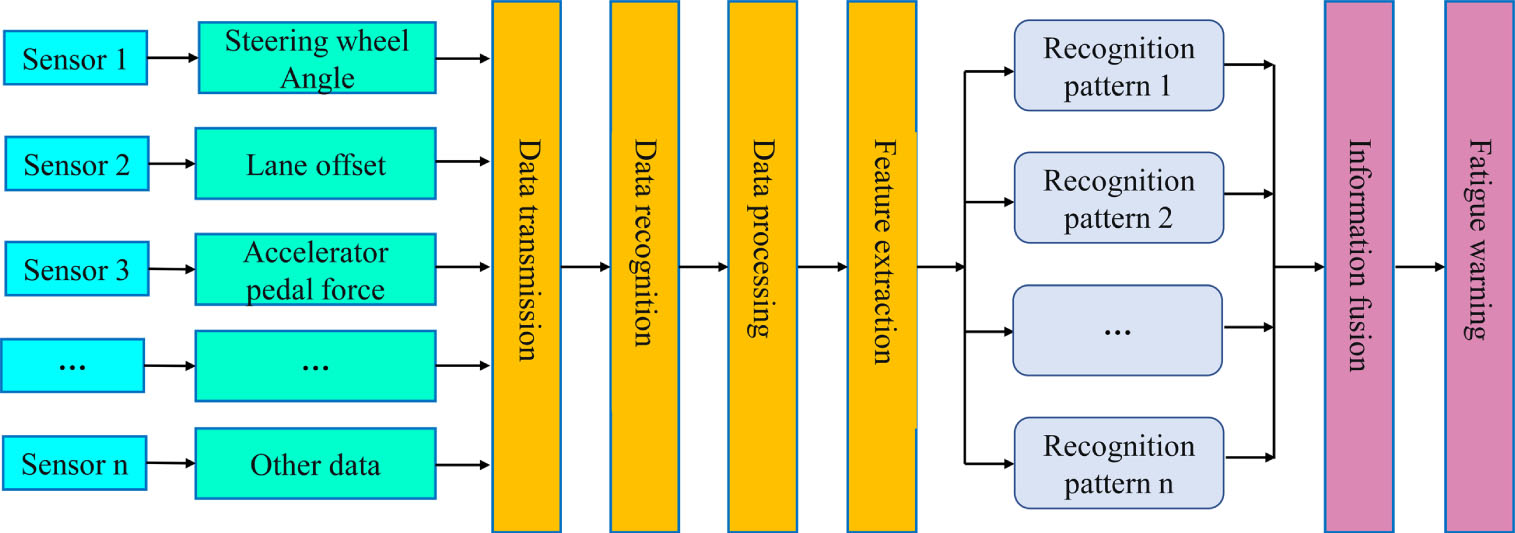

The third method is based on vehicle sensor data characteristics. The road traffic system consists of three elements: the driver, the vehicle and the road (including the traffic environment). In this system, the driver uses vision to obtain real-time information about the road environment, including the car’s position in the lane, the alignment of the road ahead, the speed and distance of other vehicles, and the distance to obstacles. Drawing on experience, the driver makes driving decisions, forms driving operation instructions and actions, and controls the vehicle on the road. Sensors collect the changes in data such as lane deviation, steering wheel reversal angle, and the number and strength of brake pedal presses, and a multivariate correlation analysis is conducted between these driving data and the driving data of the vehicle during normal driving [16]. The rationality of the driving is assessed by the error between the two, which completes the assessment of the fatigue state. Takei et al. [17] proposed a method to identify fatigued driving by collecting vehicle steering angle signals. Alharbey et al. [18] developed two different methods: one based on machine learning, using SVM to process EEG signals, with recognition accuracy up to 98%; the other based on a deep learning model that uses CNN to process video signals, with a detection accuracy of up to 99%. However, due to differences in the driving habits and characteristics of individual drivers, the accuracy will also vary.
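As a rough illustration of the vehicle-based indicators described above, the following minimal sketch computes the standard deviation of lane position and a steering reversal count from logged signals, and compares an indicator with its normal-driving value. The function names, the 2-degree reversal gap and the 1.5 ratio are illustrative assumptions and are not taken from [16] or [17].

```python
import statistics


def lane_position_std(lateral_offsets_m):
    """Standard deviation of the lateral offset from the lane centre (metres)."""
    return statistics.pstdev(lateral_offsets_m)


def count_steering_reversals(angles_deg, gap_deg=2.0):
    """Count steering direction changes of at least gap_deg (assumed threshold)."""
    reversals = 0
    direction = 0            # 0 = not yet known, +1 = angle rising, -1 = falling
    extreme = angles_deg[0]  # most recent turning-point candidate
    for angle in angles_deg[1:]:
        if direction == 0:
            if abs(angle - extreme) >= gap_deg:
                direction = 1 if angle > extreme else -1
                extreme = angle
        elif direction == 1:
            if angle > extreme:
                extreme = angle              # still turning the same way
            elif extreme - angle >= gap_deg:
                reversals += 1               # turned back by at least gap_deg
                direction = -1
                extreme = angle
        else:
            if angle < extreme:
                extreme = angle
            elif angle - extreme >= gap_deg:
                reversals += 1
                direction = 1
                extreme = angle
    return reversals


def exceeds_baseline(current, baseline, ratio=1.5):
    """Flag an indicator that is at least `ratio` times its normal-driving value."""
    return current >= ratio * baseline
```

Because driving habits differ between drivers, the baseline in such a scheme would presumably be measured per driver rather than fixed globally.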

In summary, inferring fatigue from a single physiological signal is not entirely reliable, although research into detecting fatigue using multiple physiological characteristics has made great strides. Likewise, using a single visual signal to detect fatigued driving may be affected by the driver’s own conditions. Therefore, fatigue driving identification may be accurate only when a variety of characteristic signals are combined with information about the driver’s working environment, specific circumstances and personal habits. The accuracy of any single fatigued driving detection technology is limited. To improve the accuracy of fatigue detection, driver fatigue detection based on multi-information fusion [19] integrates the above three methods. In practice, these methods use a variety of characteristic parameters or detection methods to judge the fatigue state. Establishing a reasonable and effective information fusion decision-making model according to the degree of influence and reliability of the various kinds of information is the focus of current research. As deep learning is widely used in different domains, fatigue detection methods based on multi-feature information fusion and deep learning are developing rapidly. For example, Cheng and others [20] proposed an approach that integrates driver and vehicle attributes. Eye blinking speed, maximum closing time, the standard deviation of the steering wheel angle and the standard deviation of the track position were selected to detect drowsiness. The findings showed that the accuracy based on the vehicle-related features was 81.9%, the accuracy based on the driver-related features was 86.9%, and the accuracy based on the combined driver and vehicle features was 90.7%. In reference [21], a decision module is used to fuse three decisions with different characteristics made by the neural network to detect fatigue. The system utilizes the features of eye state, lateral position, steering wheel angle (SWA), ECG, EEG and surface electromyography, and the detection accuracy is 94.63%.
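One simple way to picture decision-level fusion weighted by reliability is a weighted average of the fatigue probabilities produced by each source. The sketch below is only an assumed illustration of that idea; it is not the fusion model of [19], [20] or [21], and the source names and weights are hypothetical.

```python
def fuse_decisions(probabilities, reliabilities):
    """Reliability-weighted average of per-source fatigue probabilities.

    Both arguments are dicts keyed by source name. The reliabilities are
    assumed, non-negative weights; they do not need to sum to one.
    """
    if probabilities.keys() != reliabilities.keys():
        raise ValueError("both dicts must describe the same sources")
    total = sum(reliabilities.values())
    if total <= 0:
        raise ValueError("at least one reliability must be positive")
    weighted = sum(probabilities[name] * reliabilities[name] for name in probabilities)
    return weighted / total


# Hypothetical per-source outputs and weights, for illustration only.
example_probabilities = {"eye": 0.9, "vehicle": 0.4, "ecg": 0.7}
example_reliabilities = {"eye": 0.5, "vehicle": 0.2, "ecg": 0.3}
fused = fuse_decisions(example_probabilities, example_reliabilities)
is_fatigued = fused >= 0.5  # 0.5 is an assumed decision threshold
```

A weighted average is the simplest choice; the learned decision modules described in the literature replace these fixed weights with trained parameters.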

This paper summarizes and analyzes the methods used to identify fatigued driving. First, the collection and processing of data for identifying fatigue driving behavior are described. Then the main methods of fatigue driving behavior recognition are summarized and analyzed, which mainly involve the detection of driver fatigue on the basis of physiological indices, vehicle characteristics and video image processing. Fatigue detection methods based on physiological indicators include electroencephalogram (EEG), electrocardiogram (ECG), electrooculogram (EOG), electromyogram (EMG), respiratory rate and blood pressure analysis. Fatigue detection methods based on vehicle sensor data use speed, acceleration, steering angle, lane keeping error and other measurements. Fatigue detection methods based on machine vision use eye opening status, head movement posture, facial expression and mouth features. The last category integrates two or more of the above three recognition methods to form multi-feature information fusion, and then uses deep learning methods for fatigue driving recognition. Finally, by comparing the advantages and disadvantages of the various detection methods, the paper concludes that high-precision fatigue detection based on multi-feature information fusion will be the future development trend and research goal, and offers some prospects.

The structure of this paper is as follows. The second part introduces the collection of fatigue driving data. The third part introduces the fatigue driving behavior recognition methods, and summarizes and analyzes the single recognition methods and deep learning technology respectively. The fourth part presents fatigue driving detection based on multi-data fusion. The paper ends with conclusions and perspectives.

At present, driver status information collection technology is relatively mature, and mainly relies on external sensors for contact and non-contact detection of the driver’s status. According to the classification of fatigue recognition methods in the previous section, fatigue recognition based on physiological signals requires the acquisition of physiological information about the driver, such as electromyographic signals, ECG signals, EEG signals and pulse signals. EEG is the gold standard for measuring brain activity and is considered an accurate measure of the transition from wakefulness to sleep. EEG signals can be obtained with flat electrodes attached to the scalp. Surface EMG signals are obtained from the driver in a simulated environment using electrodes placed on the neck, back, shoulder and wrist. Surface EMG sensors are highly intrusive, which limits their use for detecting driver fatigue in real time. In addition, by monitoring body temperature and heart rate, combined with facial expressions, it is possible to determine the driver’s state, such as anger or fatigue. With the development of non-invasive sensors, sensors can now be mounted on the steering wheel or seat belt to capture physiological signals from the driver. Heart rate (HR) and heart rate variability (HRV) are two important parameters for ECG-based identification of fatigue driving; the use of ECG signals for driver fatigue recognition therefore has great potential [22].

To detect driver drowsiness based on visual signals, information about the driver’s facial behavior, e.g., blink frequency, yawn frequency and degree of head nodding, needs to be collected. With the help of image processing, the driver’s state of fatigue is determined from the difference in the degree of mouth opening between normal speech and yawning. A visual sensor (camera) mounted on the dashboard is used to acquire the driver’s face and eye image features. The PERCLOS method is based on visual sensors that detect the driver’s average eyelid closure frequency, blink frequency and changes in facial expression to determine whether fatigue is present. Such systems mainly use an external camera such as the Logitech C310-720P together with pattern recognition to monitor driver status in real time. GuardVant has developed a driver fatigue detection system [23] that uses infrared cameras to monitor the driver’s behavior, such as eyelid closure, head and facial movements and the direction of gaze. Another detection method measures the change in capacitance between the electrodes of a sensor array above the driver’s seat, determines the position of the driver’s head in three-dimensional space, and determines the fatigue state based on the change in head position over time [24].

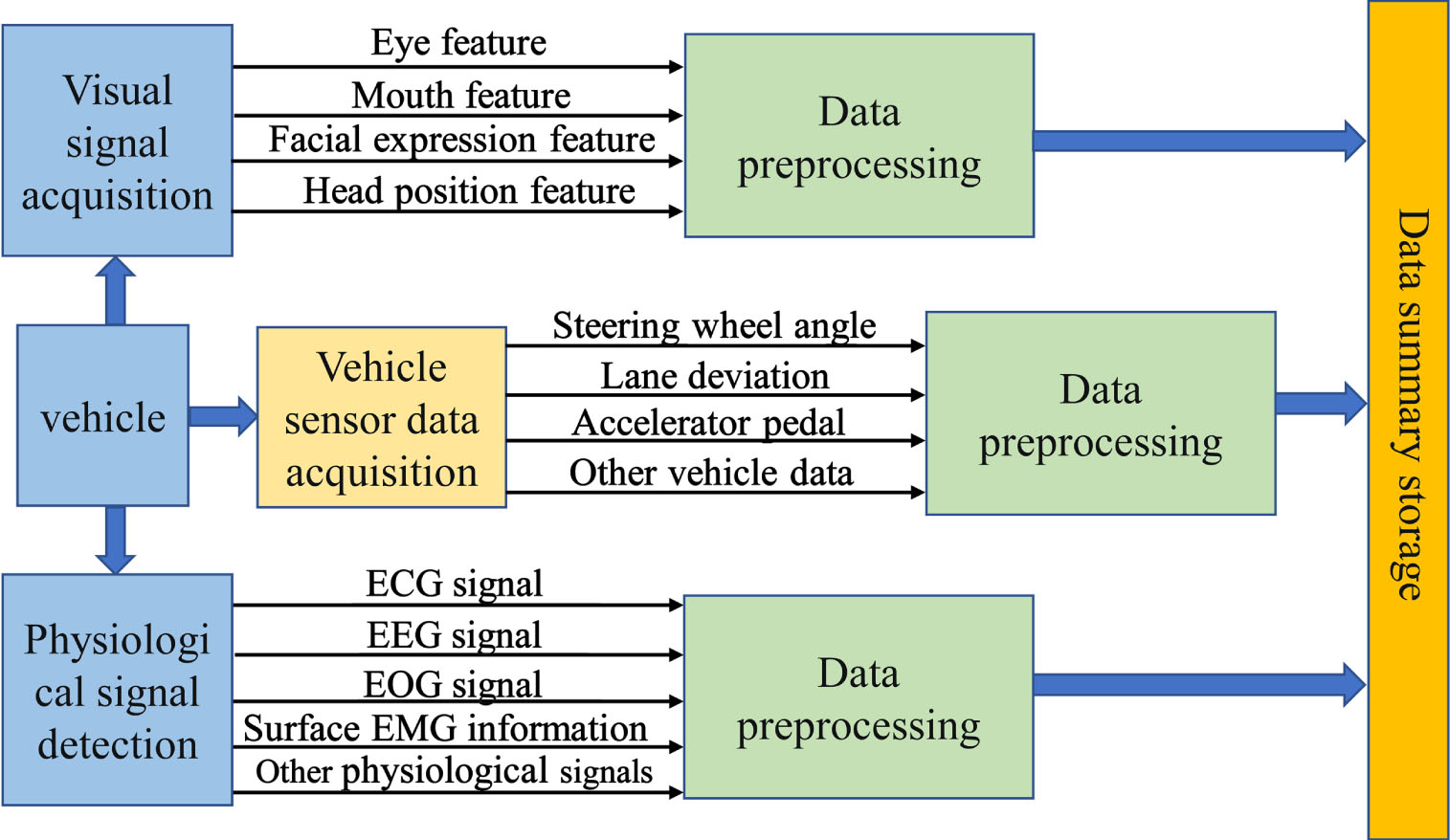

Fatigue detection methods based on vehicle sensors require recording the steering wheel angle, acceleration, steering and lateral movement, and the forces applied to the brake and accelerator pedals. When a driver becomes fatigued, he or she becomes mentally distracted and the hand pressure on the steering wheel decreases. The pressure changes are statistically analyzed to find the significant change points that reflect the driver’s fatigue. Using a steering wheel sensor, the steering wheel rotation angle is taken as an input signal and the driver’s mental state is analyzed with the help of chaos theory. Using visual sensors, speed sensors and steering wheel rotation angle sensors, the vehicle’s path is monitored and analyzed to determine whether the driver is distracted, drowsy or in another mental state. The driver’s voice is also recorded, and mathematical methods are used to analyze its degree of disorder to detect states such as nervousness, fatigue and lack of concentration [25]. The information commonly acquired for fatigue driving identification is shown in Table 1, and the data acquisition structure diagram is shown in Fig. 2.

Common driver status detection collection information

Structure of experimental data collection based on multiple data fusion.

Fatigue driving recognition based on physiological signals

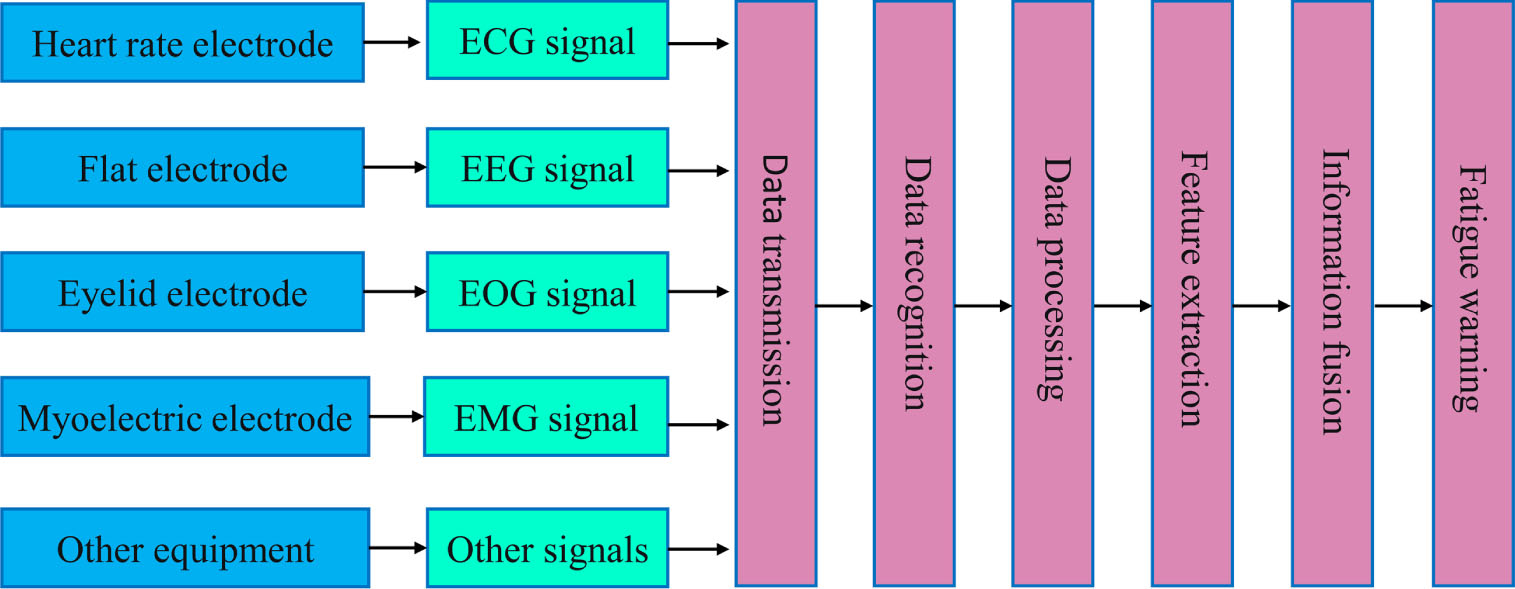

Physiological signal-based detection determines the driver’s fatigue status and fatigue level by collecting, recording and analyzing various physiological signals that reflect driver fatigue. Research has shown that the driver’s physiological parameters during fatigue differ from those in the normal state, so changes in the driver’s physiological signals can be used to assess the level of fatigue. The main approach is to obtain physiological information from the driver’s heart, brain and skin, as well as respiratory rate, skin resistance and skin temperature. An accurate way to do this is to track changes in bio-signals such as EEG, ECG, EOG and surface electromyography (EMG). Among them, EEG has been regarded as the “gold standard” for fatigue identification, and it has been shown that the dominant rhythm of EEG waves can indicate the fatigue status of a subject. For example, as the fatigue level increases, the δ and θ waves in the EEG increase and the α and β waves decrease [26, 27].

Flowchart for fatigued driving recognition based on physiological signal detection.

The information acquisition module acquires physiological signals of the human body through various sensors, mainly including heart rate signals from heart rate electrodes, electroencephalography signals from flat electrodes, electrooculography signals from eyelid electrodes, electromyography signals from electromyography electrodes, pulse signals from pulse oximeters, and pressure changes in the driver’s back from pressure sensors. Researchers usually extract feature indicators that reflect fatigue from the collected signals and use them as input features for fatigue detection models [28, 29]. Figure 3 shows the flowchart for detecting driver drowsiness using physiological signals.

An electrocardiogram (ECG) is useful in determining driver fatigue, providing both heart rate (HR) and heart rate variability (HRV) parameters. Because fatigue is often accompanied by changes in the driver’s ECG, HR and HRV are widely used as physiological characteristics to identify fatigued driving. It has been concluded that mental fatigue decreases the HRV signal parameter and leaves the HR signal parameter largely unchanged; however, as physical fatigue increases, the HRV signal parameter decreases and the HR signal increases significantly.
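For readers unfamiliar with how HR and HRV parameters are obtained, the sketch below derives the mean heart rate and two common time-domain HRV measures (SDNN and RMSSD) from a list of RR intervals. It assumes the R peaks have already been detected and the intervals are given in milliseconds; the function names are illustrative, and the choice of SDNN and RMSSD is an assumption rather than the specific parameters used in the studies cited here.

```python
import math
import statistics


def heart_rate_bpm(rr_ms):
    """Mean heart rate in beats per minute from RR intervals in milliseconds."""
    return 60000.0 / statistics.fmean(rr_ms)


def sdnn_ms(rr_ms):
    """SDNN: sample standard deviation of the RR intervals (overall variability)."""
    return statistics.stdev(rr_ms)


def rmssd_ms(rr_ms):
    """RMSSD: root mean square of successive RR differences (beat-to-beat variability)."""
    squared_diffs = [(b - a) ** 2 for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(statistics.fmean(squared_diffs))
```

With these helpers, a drop in `sdnn_ms` or `rmssd_ms` between an alert window and a later window, with `heart_rate_bpm` roughly unchanged, would correspond to the mental-fatigue pattern described above.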

In their study of the relationship between ECG signals and fatigue driving, Gromer et al. [30] used an inexpensive ECG sensor to derive heart rate variability data from the driver to identify driver drowsiness. Early non-invasive ECG sensors were fitted in the driver’s seat or the seat belt, but these were prone to error. As a solution to this problem, Jung et al. [31] suggested embedding electrodes in the steering wheel. An approach to detecting driver fatigue using photoplethysmography (PPG) has been proposed by Li and Chung [32], in which PPG sensors are attached to the steering wheel and HRV is extracted from the raw PPG signal.

Fatigue driving recognition based on EEG signals

The electroencephalogram (EEG) is considered the most frequently used physiological signal for measuring fatigue driving. Fatigue is measured by evaluating the average spectral power of various frequency bands of the driver’s brainwaves when awake and when drowsy. EEG signals can be categorized into four frequency bands: sleep-related delta waves (0.5–4 Hz), fatigue-related theta waves (4–8 Hz), creativity-related alpha waves (8–13 Hz) and alertness-related beta waves (13–25 Hz).
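The band-power idea can be sketched as follows for a single EEG channel. The band limits are the ones listed above; everything else is an assumption made for illustration: the function names, the use of a naive discrete Fourier transform (chosen only to keep the example dependency-free, where a real pipeline would use an FFT library), and the slow-to-fast ratio, which simply reflects the reported trend that δ and θ power rise and α and β power fall with fatigue.

```python
import cmath
import math

# Band limits in Hz, as listed in the text.
BANDS_HZ = {
    "delta": (0.5, 4.0),
    "theta": (4.0, 8.0),
    "alpha": (8.0, 13.0),
    "beta": (13.0, 25.0),
}


def band_powers(samples, fs_hz):
    """Sum the one-sided spectral power of one EEG channel inside each band."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [x - mean for x in samples]  # remove the DC offset
    powers = {name: 0.0 for name in BANDS_HZ}
    for k in range(1, n // 2):
        freq_hz = k * fs_hz / n
        # Naive DFT coefficient for bin k, O(n) per bin.
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(centered))
        power = 2.0 * abs(coeff) ** 2 / n ** 2  # a unit-amplitude sine gives 0.5
        for name, (low, high) in BANDS_HZ.items():
            if low <= freq_hz < high:
                powers[name] += power
    return powers


def slow_to_fast_ratio(powers):
    """(delta + theta) / (alpha + beta); larger values suggest more drowsiness."""
    fast = powers["alpha"] + powers["beta"]
    slow = powers["delta"] + powers["theta"]
    return slow / fast if fast > 0 else math.inf
```

Any threshold on this ratio would have to be calibrated per subject and per recording setup; none is implied by the source.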

In examining the association between EEG signals and fatigued driving, Lin et al. [33] pre-processed the EEG signal data, extracted features from the pre-processed data using the Fast Fourier Transform (FFT), and then trained a fuzzy neural network to build a model. Morales et al. [34] proposed a wearable single-channel EEG device as a fatigue driving detection tool that can help drivers assess their fatigue level. Luo et al. [35] collected EEG signal data and used a scale factor acquisition algorithm and a multi-scale entropy feature extraction algorithm, and then classified the extracted entropy features to detect sleepiness.

Fatigue driving recognition based on EOG signals

The voltage difference between the human cornea and retina creates an electric field, which is measured as the electrooculogram (EOG) signal and reflects the direction of gaze of the driver’s eye. Horizontal eye movements have been studied by placing electrodes at the outer corners of the eyes and at the center of the forehead, and rapid eye movement (REM) and slow eye movement (SEM) parameters have been extracted in both non-fatigued and fatigued states of the driver. Some researchers have used EOG signals to measure eye movement and thereby driver fatigue. However, because the electrodes are installed very close to the eyes, they can limit the driver’s field of view and interfere with normal driving.

In the investigation of the relationship between EOG signals and fatigue driving, Zhang et al. [36] proposed a method to measure EOG with electrodes placed on the forehead, but fatigue recognition methods based on EOG sensors have limited application in real-time driver fatigue detection. Kurt et al. [37] studied three different physiological parameters, combining EEG, EOG and ECG, and applied wavelet transform algorithms and neural network techniques to analyze the changes in physiological parameters from non-fatigue to fatigue. Khushaba et al. [38] proposed a fuzzy information method for feature extraction based on the wavelet transform, which combines three physiological parameters of the driver: ECG, EEG and EOG signals. They also compared the effectiveness of Linear Discriminant Analysis (LDA), k-nearest neighbor (KNN) and Support Vector Machine algorithms for driver fatigue recognition. Artanto et al. [39] invented a fatigue detection device that detects eyelid closure without harming the eye by attaching an electro-ocular signal pick-up device to the skin around the eyelid, and designed an ESP8266-based fatigue detection system on this basis.

Fatigue driving recognition based on EMG signals

The surface electromyographic (EMG) signal is the potential generated by human muscles at rest or during contraction. The potentials generated by the muscle cells are recorded by an EMG sensor through electrodes placed on the surface of the skin. To predict fatigue, characteristics are extracted from the time domain and the frequency domain of the EMG signal. Fatigue characteristics include variations in the mean and median frequencies and in the effective amplitude.
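The time-domain and frequency-domain characteristics mentioned above can be written down compactly. The sketch below assumes that the EMG samples and a one-sided power spectrum (frequencies in ascending order with matching powers) are already available; the function names are illustrative, and "effective amplitude" is interpreted here as the root mean square, which is an assumption.

```python
import math


def rms(samples):
    """Root mean square amplitude of the EMG samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))


def zero_crossings(samples):
    """Number of sign changes between consecutive samples."""
    return sum(1 for a, b in zip(samples, samples[1:]) if a * b < 0)


def mean_frequency(freqs_hz, powers):
    """Power-weighted average frequency of the spectrum."""
    return sum(f * p for f, p in zip(freqs_hz, powers)) / sum(powers)


def median_frequency(freqs_hz, powers):
    """Lowest frequency at which the cumulative power reaches half the total."""
    half = sum(powers) / 2.0
    running = 0.0
    for f, p in zip(freqs_hz, powers):
        running += p
        if running >= half:
            return f
    return freqs_hz[-1]
```

A downward drift of `mean_frequency` and `median_frequency` together with a rising `rms` over successive windows is the kind of pattern these features are meant to expose.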

Hostees et al. [40] investigated the frequency and amplitude patterns of EMG in long-distance drivers during driving and found that amplitude increased with driving duration while frequency showed the opposite trend. Bueno et al. [41] investigated fatigue-related EMG signal metrics such as the mean frequency, median frequency, root mean square and zero-crossing count of surface EMG signals. Researchers have developed fatigue recognition models based on the Gaussian Mixture Model (GMM) technique for fatigue testing. Shi et al. [42] used surface EMG signals to detect the fatigue state by classification. In this study, five relevant features were extracted: the range, the variance, the relative spectral power, the kurtosis and the shape factor. The k-nearest-neighbor classifier was found to perform best, with 90% accuracy. Wang et al. [43] proposed a detection method using electromyography with electrical muscle stimulation (EMS), in which an acquisition system records the EMG of the biceps femoris of the driver’s thigh, and the EMG peak factor is used as a feature to detect when the driver starts to feel tired. When this EMG peak factor exceeds a predetermined threshold, the driver’s hand extensor and thumb flexor muscles are stimulated to relieve fatigue.
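To show how window-level EMG features can feed a k-nearest-neighbor classifier, here is a minimal sketch. It computes four of the time-domain features named above (range, variance, kurtosis and shape factor; relative spectral power is omitted for brevity) and classifies by majority vote among the nearest training windows. This is an assumed illustration, not the implementation of [42]; the feature definitions, the function names and k = 3 are choices made here.

```python
import math
from collections import Counter


def emg_features(samples):
    """Return (range, variance, kurtosis, shape factor) for one EMG window."""
    n = len(samples)
    mean = sum(samples) / n
    variance = sum((x - mean) ** 2 for x in samples) / n
    fourth_moment = sum((x - mean) ** 4 for x in samples) / n
    rms = math.sqrt(sum(x * x for x in samples) / n)
    mean_abs = sum(abs(x) for x in samples) / n
    return (
        max(samples) - min(samples),   # range (peak to peak)
        variance,
        fourth_moment / variance ** 2,  # kurtosis (non-excess)
        rms / mean_abs,                 # shape factor
    )


def knn_predict(training, query, k=3):
    """Majority label among the k training feature vectors closest to query.

    training is a list of (feature_vector, label) pairs. In practice the
    features should be standardized first so that no single one dominates
    the Euclidean distance.
    """
    nearest = sorted(training, key=lambda item: math.dist(item[0], query))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]
```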

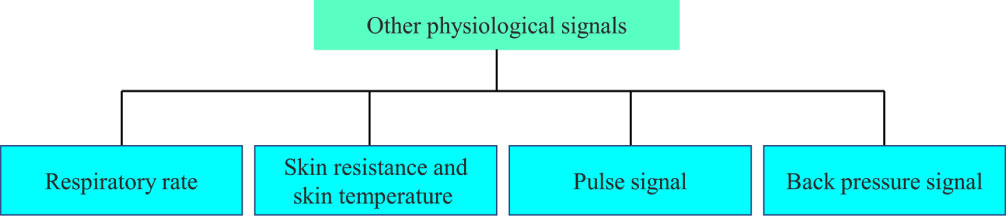

Fatigue driving recognition based on other physiological signals

In addition to the above four physiological signals, a variety of other physiological signals used to identify fatigued driving are listed in Fig. 4.

Other physiological signals.

Studies have shown that a person’s breathing rate and lung capacity are related to the degree of fatigue: from the awake state to the fatigued and sleep states, lung capacity and breathing rate decrease. Human skin temperature is related to emotional state and fatigue, but it is easily affected by the environment, so it is mainly used in laboratory research and is difficult to apply in practice. The back pressure sensor is mounted on the driver’s seat and records the driver’s back pressure distribution while driving. The pulse signal is recorded by a pulse oximeter [44].

Deep learning recognition schematic.

Compared with traditional physiological signal-based driving fatigue detection, deep learning-based detection and recognition is more accurate and robust, and a wealth of research results has been achieved in identifying fatigued drivers. Deep learning is a research direction in the field of machine learning; a multilayer perceptron with several hidden layers is one example of a deep learning structure. Deep learning discovers distributed feature representations of data by combining low-level features into more abstract high-level attribute classes or features. Researchers working on deep learning are motivated to build neural networks that mimic the way the human brain analyzes, learns and interprets images, sounds and text. Deep learning differs from traditional shallow learning in two respects: (1) It emphasizes the depth of the model structure, typically using 5, 6 or even 10 layers of hidden nodes. Samples are transformed layer by layer, mapping the feature representation in the original space into a new feature space to facilitate prediction or classification. (2) Features learned from big data characterize the rich intrinsic information of the data better than features constructed with hand-crafted rules. Although methods based on a single feature type have produced satisfactory research results, information fusion techniques based on deep learning have become a major field of exploration in recent years for detecting drowsy driving. Deep learning recognition techniques include convolutional neural networks, recurrent neural networks, fuzzy neural networks and other fusion techniques [45–49].
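The layer-by-layer transformation described in point (1) can be illustrated with the forward pass of a small multilayer perceptron. The sketch below is an assumption-laden toy: the layer sizes and the hand-set weights are hypothetical, the ReLU and sigmoid activations are choices made here, and no training is shown.

```python
import math


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Element-wise rectified linear activation."""
    return [max(0.0, v) for v in values]


def sigmoid(value):
    """Squash a real number into the interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-value))


def mlp_forward(features, layers):
    """Map input features to a fatigue probability.

    layers is a list of (weights, biases) pairs. Every layer except the last
    is a hidden layer with ReLU; the last layer has one output unit followed
    by a sigmoid.
    """
    activations = features
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return sigmoid(dense(activations, weights, biases)[0])


# Hypothetical, hand-set parameters: two hidden layers and one output layer.
example_layers = [
    ([[1.0, 0.0], [0.0, 1.0]], [0.0, 0.0]),
    ([[1.0, 1.0]], [-2.0]),
    ([[3.0]], [0.0]),
]
```

Each pass through `dense` followed by `relu` re-expresses the sample in a new feature space, which is the sense in which depth is said to help prediction or classification.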

Figure 5 illustrates how deep learning can be used to detect driver fatigue. Deep learning has been extensively researched in the field of fatigue detection, has achieved excellent results and has broad application prospects. For example, Liu et al. [50] proposed a recursive self-evolving fuzzy neural network (RSEFNN) to solve the EEG regression problem in driving fatigue brain dynamics, with the aim of improving the adaptability of EEG for practical applications; the results showed that the RSEFNN has good discrimination performance. Devi et al. [51] proposed a real-time wireless EEG-based sleepiness detection system using an RSEFNN. Du et al. [52] proposed a TSK-type convolutional recurrent fuzzy network (TCRFN) framework using log-space transmitter layer functions. In this framework, convolution blocks were introduced to extract the spatial dependency of the EEG signals, and the local feedback method of the fuzzy neural network was used to process the EEG signals and extract the temporal dependency. The experimental results show that the model is more robust to noise and better at predicting the degree of sleepiness. Lin et al. [53] proposed an adaptive EEG sleepiness estimation technique combining Independent Component Analysis (ICA), power spectrum analysis, AFSM and an Independent Component Analysis Fuzzy Neural Network (ICAFNN). The algorithm automatically selects effective features by analyzing the correlation between the power spectra of fatigue-related components and driving errors, with experimental results showing an accuracy of 91.3%. Viswanathan et al. [54] proposed a novel fatigue detection algorithm that analyzes heart rate variability using six features and a time-frequency domain (TFD) fuzzy neural network.

Analysis of physiological signals to identify fatigue driving characteristics

In summary, fatigue recognition based on physiological signals uses the ECG, EEG, EOG and surface EMG signals, as well as respiratory rate, pulse and other indicators. These methods offer high identification accuracy and good reliability, but they generally require contact with the driver, are intrusive and may interfere with normal driving. A summary of the research status, advantages and disadvantages is shown in Table 2.

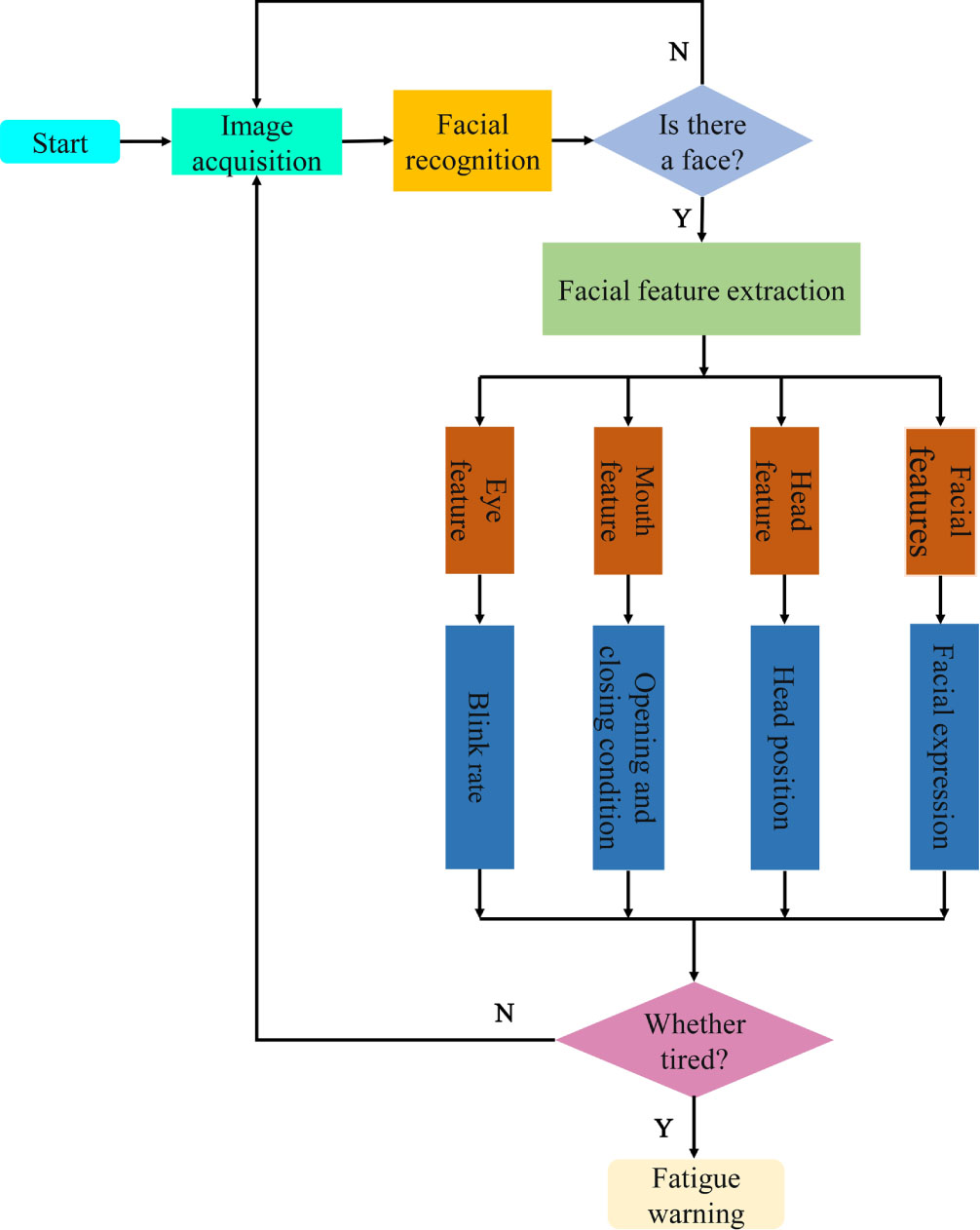

The visual signal-based detection method uses the facial video acquired by cameras and other image sensors, and applies machine vision techniques such as face recognition and facial feature point localization to extract features of the driver’s face, such as gaze direction, eye opening (eye aspect ratio, EAR), blink frequency, eye closure per unit time (PERCLOS), mouth opening and closing (mouth aspect ratio, MAR), head movement and head rotation angle. The extracted features are then used to identify fatigued drivers. When the driver is tired, there are obvious characteristics such as left and right eye drift, decreasing eye opening time, increasing blink frequency, and frequent yawning and nodding [55]. Fatigue recognition based on the driver’s behavioral characteristics collects image information of the driver through a camera installed in front of and above the driver, then extracts and analyzes the features of the relevant parts of the face to determine whether the driver is in a fatigued state. Currently, the features available for this type of method mainly include eye features, mouth features, head posture features and facial expression features [56]. The technology roadmap is shown in Fig. 6.
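As an illustration of how eye and mouth opening can be quantified from facial feature points, the sketch below computes the eye aspect ratio from six eye landmarks using the commonly used formulation EAR = (|p2 − p6| + |p3 − p5|) / (2 |p1 − p4|), together with a simple mouth aspect ratio. The landmark ordering, the four-point MAR definition and the function names are assumptions made here; the landmark detector itself is outside the scope of the example.

```python
import math


def eye_aspect_ratio(p1, p2, p3, p4, p5, p6):
    """EAR from six (x, y) eye landmarks.

    p1 and p4 are the eye corners, p2 and p3 lie on the upper eyelid,
    p6 and p5 lie on the lower eyelid directly below p2 and p3.
    The value falls towards 0 as the eye closes.
    """
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def mouth_aspect_ratio(left, right, top, bottom):
    """Assumed MAR: mouth opening height divided by mouth width."""
    return math.dist(top, bottom) / math.dist(left, right)
```

Because both measures are ratios of distances, they are largely insensitive to how far the driver sits from the camera, which is one reason ratio features of this kind are popular.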

Roadmap for fatigued driving recognition based on visual features.

In vision-based driver fatigue recognition systems, the driver’s eye state is an important feature reflecting the fatigue state. It has been noted that when drivers enter a state of fatigue, their blinking frequency decreases, their eye closure time increases significantly compared to the normal state, and their eye-opening time decreases [57]. If the driver enters a state of deep fatigue, the eyes may remain closed for a long time [58]. Fatigue is usually determined from the percentage of eyelid closure (PERCLOS), the blink rate, and the maximum closure time. There are many detection methods for eye state. For example, a template matching method was used to judge eye state in [59], and the gray projection curve of the iris region was used in [60]. Zhuang and colleagues [61] developed an optimized network model comprising a split network and a decision network, with 96.72% accuracy for fatigue detection. Tao et al. [62] proposed a method for assessing the fatigue state based on a face localization algorithm. The method uses six key points of each eye to locate the driver’s eyes, which allows it to detect eye fatigue quickly and issue real-time alerts. Bakheet et al. [63] used an adaptive contrast-limited histogram equalization algorithm to pre-process the collected facial images. The driver’s eye region was localized using an enhanced Active Shape Model (ASM) algorithm with over 95% accuracy.
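To make the eye-based indicators above concrete, the following is a minimal sketch of how an eye aspect ratio can be computed from six eye key points and how PERCLOS and the maximum closure time can be derived from a sequence of per-frame values. It is not the implementation of any of the cited studies. The landmark ordering, the function names and the closed-eye threshold of 0.2 are assumptions made for illustration; the EAR expression is the commonly used ratio of the two vertical lid distances to twice the horizontal eye width.

```python
import math


def eye_aspect_ratio(eye):
    """EAR from six (x, y) eye landmarks ordered p1..p6.

    Assumed ordering: p1 and p4 are the eye corners, p2 and p3 lie on
    the upper lid, p6 and p5 on the lower lid directly below them.
    """
    p1, p2, p3, p4, p5, p6 = eye
    vertical = math.dist(p2, p6) + math.dist(p3, p5)
    horizontal = math.dist(p1, p4)
    return vertical / (2.0 * horizontal)


def perclos(ear_series, closed_threshold=0.2):
    """Fraction of frames in which the eye counts as closed.

    The threshold is an illustrative assumption and would need to be
    calibrated per driver and camera.
    """
    if not ear_series:
        raise ValueError("ear_series must not be empty")
    closed = sum(1 for ear in ear_series if ear < closed_threshold)
    return closed / len(ear_series)


def longest_closure(ear_series, closed_threshold=0.2):
    """Length, in frames, of the longest run of consecutive closed frames."""
    longest = current = 0
    for ear in ear_series:
        current = current + 1 if ear < closed_threshold else 0
        longest = max(longest, current)
    return longest


if __name__ == "__main__":
    open_eye = [(0, 0), (1, 1), (2, 1), (3, 0), (2, -1), (1, -1)]
    print(eye_aspect_ratio(open_eye))
    print(perclos([0.30, 0.10, 0.10, 0.30]))
```

Dividing the frame count returned by `longest_closure` by the camera frame rate gives the maximum closure time in seconds.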

Fatigue driving recognition based on mouth features

When a driver becomes fatigued, the state of the mouth may also reflect the fatigue to some degree, as it differs from the state of the mouth during normal speech or driving. The driver’s mouth characteristics are therefore an indicator of whether the driver is fatigued. Studies often use the shape of the mouth, the position of the corners of the mouth and the degree of mouth opening as indicators of drowsiness and yawning. Akrout et al. [64] proposed a method to identify the yawning state of a driver based on mouth edge detection followed by temporal and spatial descriptions. Knapik et al. [65] studied the relationship between mouth characteristics and fatigue and proposed a method to identify fatigued drivers based on thermography. Because it relies on thermal imaging, the method is not limited by changes in time and space and does not interfere with the normal operation of the driver; fatigue is detected by recognizing yawning with the proposed thermal model. Adhinata et al. [66] proposed a method for detecting fatigued driving that combines the FaceNet algorithm for the extraction of facial features with a K-NN or multi-class SVM classifier. The detection accuracy was 94.68%.

Fatigue driving recognition based on head features

Drivers often nod frequently and sharply when they are tired, resulting in sudden changes in head position. It is therefore possible to determine whether the driver is in a state of fatigue by detecting the position of the driver’s head. The traditional method of calculating the head position is relatively complicated: it involves estimating key points on the face and solving the 2D-to-3D correspondence problem using an average head model. A convenient and stable head posture estimation method was proposed in [67], which predicts the attitude angle of the driver’s head from pixel intensity through a multi-loss deep network in order to judge the fatigue state. Yang et al. [68] proposed a driver drowsiness detection system using RFID technology. The system evaluates the driver’s nodding movement by measuring the phase swing of two RFID tags attached to the back of the driver’s hat. Zhang et al. [69] proposed a method for detecting driver fatigue by estimating the head position from key points of the face and showed that it can accurately determine driver fatigue.

Fatigue driving recognition based on facial expression features

Facial expressions involve the eyes, eyebrows, cheeks, and mouth. Generally, some facial muscles become stiff when the driver is fatigued, which may affect slight movements. Zhao et al. [70] proposed a technique to classify driver fatigue using dynamic face fusion and deep belief nets, with an average accuracy of 96.7%. Liu et al. [71] proposed a multi-feature algorithm for detecting fatigue in human faces by feeding several facial features (such as the duration of eye closure, head nods and yawns) into a dual-stream convolutional network. The algorithm was validated on the NTHU-DDD dataset with an accuracy of 97.06%. Ji et al. [72] proposed a fatigue detection algorithm based on multiple metric fusion and state recognition, using MTCNN (multi-task cascaded convolutional networks) for face key point detection, with 98.42% accuracy for eyes and 97.93% accuracy for open mouths. You et al. [73] proposed a real-time algorithm for recognizing driver drowsiness based on the entropy of facial motion information, which achieved 94.32% accuracy.

Visual signal recognition fatigue driving based on deep learning

Deep learning has a wide range of applications in fatigue detection from visual signals; a schematic is shown in Fig. 5 [74, 75]. Many researchers have studied this subject extensively and achieved notable results. For example, Feng et al. [76] used a Deep Cascade Convolutional Neural Network (DCCNN) to detect the face region in video in real time and fed the eye aspect ratio into a fatigue classifier based on a support vector machine, achieving a fatigue detection accuracy of 94.8%. Ansari et al. [77] proposed a new method to measure fatigue based on ReLU-BiLSTM, a deep learning network with an improved Adam optimization algorithm. Liu et al. [78] proposed a real-time fatigue detection method based on a convolutional neural network and long short-term memory (CNN-LSTM) using driver facial image information, with 99.78% detection accuracy. Ali et al. [79] used a CNN to extract deep features from the driver’s face in states such as yawning, eye closure and wearing glasses in order to predict the driver’s drowsiness. Xing et al. [80] applied a CNN model to the ORL face database and achieved a face recognition rate of 85% and a fatigue recognition rate of 87.5%. Kushwah et al. [81] proposed a YOLOv5-based neural network for driver drowsiness recognition, using a webcam to acquire live images, and demonstrated drowsiness detection as well as recognition of persistently obscured driving behavior. Ahmed et al. [82] proposed an integrated deep learning architecture dedicated to feature extraction from eye and mouth samples extracted from face images by MTCNN; evaluated on the benchmark NTHU-DDD video dataset, the model reached an accuracy of 99.65%. Ma et al. [83] proposed a CNN-based driver fatigue detection system for depth video sequences, with a dual-stream CNN architecture fusing the spatial information of the current depth frame and the temporal information of adjacent depth frames; its identification accuracy was 91.57%. Eddoughmi et al. [84] proposed a method that uses recurrent neural networks on sequences of driver facial images to analyze and predict drowsiness, and implemented a 3D convolutional network based on a multilayer model architecture that detects driver drowsiness with an accuracy of almost 92%. Magán et al. [85] presented an advanced driver assistance system (ADAS) with driver drowsiness detection at its core, using RNN techniques to extract numerical features from 60-second image sequences, which were then fed into a fuzzy logic system; the accuracy was about 65% on the training data and 60% on the test data.

In summary, vision-based detection identifies fatigue-related driving behavior through indicators such as frequent closing of the eyes, yawning, frequent nodding of the head and facial expressions. The method has high recognition accuracy, stable performance and good robustness, and can meet a broad range of recognition needs. A summary of its research status, advantages and disadvantages is shown in Table 3.

Analysis of fatigue driving characteristics based on visual signal recognition

To estimate the driver’s level of tiredness, the vehicle-sensor-based method monitors the driver’s driving behavior and the variation patterns of vehicle sensor data. Powerful indicators of driver fatigue include changes in steering wheel angle, changes in brake and accelerator pedal pressure, lane drift, seat load distribution and speed. Steering wheel movement (SWM), measured by an angle sensor mounted on the steering wheel that detects the driver’s turning movements, is currently one of the main vehicle-based measures of driver tiredness. Under fatigue and sleepiness, the steering wheel is turned less frequently and the vehicle turns more sharply. The standard deviation of the lane position (SDLP) and the force on the accelerator pedal are also commonly used to assess driver fatigue; their determination is shown in Fig. 7.

Fatigue driving identification based on vehicle sensor parameters.
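The two vehicle-based indicators described above can be sketched in a few lines. SDLP is simply the standard deviation of the measured lateral position over a time window. For steering wheel movement, the sketch counts steering reversals, which is one possible way to quantify how often the wheel changes direction; this particular counting rule, the function names and the 2-degree gap are assumptions for illustration and are not taken from the cited studies.

```python
import statistics


def sdlp(lateral_positions):
    """Standard deviation of lane position, in the unit of the input."""
    return statistics.pstdev(lateral_positions)


def steering_reversals(angles, min_gap=2.0):
    """Count steering direction changes of at least `min_gap` degrees.

    A reversal is counted when the angle moves back from the last
    extreme by min_gap or more (an assumed, simplified rule).
    """
    if len(angles) < 2:
        return 0
    reversals = 0
    direction = 0       # +1 rising, -1 falling, 0 not yet known
    reference = angles[0]
    for angle in angles[1:]:
        delta = angle - reference
        if direction == 0:
            if abs(delta) >= min_gap:
                direction = 1 if delta > 0 else -1
                reference = angle
        elif delta * direction > 0:
            reference = angle          # same direction: extend the extreme
        elif abs(delta) >= min_gap:
            reversals += 1             # moved back far enough: one reversal
            direction = -direction
            reference = angle
    return reversals


if __name__ == "__main__":
    print(sdlp([0.1, -0.1, 0.1, -0.1]))
    print(steering_reversals([0, 3, 6, 2, -1, 4]))
```

A fatigue model would typically compute such values over successive time windows and compare them with the driver's own baseline rather than with a fixed limit.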

Some scholars have used steering wheel rotation data for fatigue driving recognition. For example, McDonald et al. [86] used steering angle and random forest algorithms for lane departure detection. The approach is more accurate and predicts fatigue-related lane departures up to 6 s earlier than PERCLOS. Wang et al. [87] designed a system that uses digital photoelectric angle sensors to detect steering wheel rotation signals and reflects driver fatigue through changes in signals such as pedal force, steering wheel angle and gear shifts. Takei et al. [88] applied Fourier and wavelet transforms to the steering angle signal and analyzed the transformed signal by means of chaos theory to monitor the mental state of the driver. Deng et al. [89] presented an identification method based on HMM with an accuracy of 85%. Siegmund et al. [90] developed a fatigue state detection model based on multiple regression by experimentally collecting driving behavior data such as steering wheel angle and accelerator pedal force and selecting 46 discriminative indicators. You et al. [91] selected eight parameters reflecting the vehicle motion state and the driver’s physiological and psychological state and established a fatigue driving recognition model using SVM, with recognition accuracy reaching 80.83%. Takei et al. [92] applied Takens’ embedding theorem from chaos theory to identify changes in steering wheel motion, and the recognition rate reached over 95%. Li et al. [93] proposed an online drowsiness detection system based on steering wheel angle data collected by a transducer mounted on the steering wheel. The system uses a binary decision classifier to assess the fatigue of the driver and operated online with an average accuracy of 78.01%. Gao et al. [94] proposed an approach to detect driver drowsiness based on time series analysis of the steering wheel angle, which improves the accuracy of fatigue classification. Using the Stanford Sleepiness Scale (SSS), Li et al. [95] proposed a method to detect driver drowsiness from the driver’s grip on the steering wheel, with the results analyzed by means of ANOVA. The overall success rates were 86.6% and 88.3%, respectively.

Fatigue driving recognition based on lane departure

Automatic lane departure detection has received much attention in recent years, as many traffic fatalities are associated with unintentional lane departures. For example, Lee et al. [96] proposed a feature-based machine vision system that uses edge information to define an edge distribution function (EDF) and uses it to detect lane departures of vehicles travelling on the road. Berglund et al. [97] developed a fatigue state detection model based on multiple regression that extracts features from vehicle lateral position parameters. Image-processing-based lane departure recognition techniques have been extensively investigated, including video feature-based methods [98, 99], neural network-based methods [100–104], and probabilistic methods [105]. Most researchers share and combine the principles of these methods, and their common feature is that they provide a reliable algorithm for extracting lane-related information. Measuring the relative position between lane markings and the vehicle has become a well-known approach in lane departure detection design because of its conceptual simplicity [98, 107]. To obtain the offset between the lane center and the center axis of the vehicle body, the method relies on precise positioning of the lane markings. Gaikwad et al. [108] proposed a technique that uses a Partial Linear Stretch Function (PLSF) to detect unwanted lane departures of vehicles on the road. The system was shown to detect lane boundaries in the presence of various artefacts such as changing light conditions, poor lane markings and obstructing vehicles, while maintaining lane recognition rates of over 97%. Lee et al. [109] introduced a lane departure identification (LDI) system that senses lane departure through artificial vision. The system enhances the robustness of machine vision through special methods such as a boundary pixel extractor (BPE). Jung et al. [110] proposed a lane departure detection algorithm with Haar-like features for systems with low computational power, which achieved a detection rate of 90.16%. Yu et al. [111] introduced a lane departure detection method consisting of image preprocessing, binarization, dynamic threshold selection and linear-parabolic model fitting. Experimental results demonstrated the method’s effectiveness and feasibility. He et al. [112] proposed a method that uses the Canny algorithm for edge detection and the Hough transform to detect departures from straight lanes as the basis for warning. The experimental findings demonstrate that it is effective and accurate in retrieving road information from the acquired images.
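The idea of measuring the relative position between the lane markings and the vehicle can be illustrated with a short sketch. It assumes that an upstream vision stage has already located the left and right markings and the vehicle center axis on a common lateral coordinate in metres; the function names, the sign convention and the 0.2 m warning margin are hypothetical and are not taken from the cited systems.

```python
def lateral_offset(left_marking_x, right_marking_x, vehicle_center_x):
    """Signed offset of the vehicle axis from the lane center.

    Positive values mean the vehicle sits to the right of the center
    (assumed convention: x increases to the right).
    """
    lane_center = (left_marking_x + right_marking_x) / 2.0
    return vehicle_center_x - lane_center


def departure_warning(left_marking_x, right_marking_x, vehicle_center_x,
                      vehicle_width, margin=0.2):
    """True when either side of the vehicle is closer than `margin`
    to a lane marking (or has already crossed it)."""
    half_width = vehicle_width / 2.0
    left_gap = (vehicle_center_x - half_width) - left_marking_x
    right_gap = right_marking_x - (vehicle_center_x + half_width)
    return min(left_gap, right_gap) < margin


if __name__ == "__main__":
    # 3.5 m lane, 1.8 m wide vehicle drifting towards the right marking
    print(lateral_offset(0.0, 3.5, 2.5))
    print(departure_warning(0.0, 3.5, 2.5, 1.8))
```

Because the result depends entirely on where the markings are located, errors in marking detection translate directly into offset errors, which is why the methods above put so much effort into robust lane extraction.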

Fatigue driving recognition based on accelerator pedal

Miyajima et al. [113] used a Gaussian mixture model (GMM) for modelling and performed spectral analysis of accelerator pedal operation signals to extract driver features for recognition during acceleration or deceleration; the experiments showed a recognition rate of 76.8%. He et al. [114] proposed a two-layer hidden Markov model (HMM) structure for pedal-based identification. Wen et al. [115] proposed a single-pedal regenerative braking control (RBC) system based on a learning algorithm to reduce driver workload. By recognizing the driver’s intention from pedal operation, the strategy always meets the driver’s braking requirements.

Fatigue driving recognition based on other vehicle sensor data

In addition to identifying fatigue driving behavior through the vehicle driving data described above, some scholars have extracted statistical features [116, 117] and time series features [118, 119] from other data collected by vehicle sensors. The extracted features are then fed to traditional machine learning techniques, such as random forest [120] and SVM [121], to detect changes in the movement of the vehicle. Other vehicle parameters have also been used to identify fatigued driving behavior. For example, Wakita et al. [122] used vehicle speed, braking, acceleration and distance from the preceding vehicle as indicators of tiredness. Their comparative study, in which the features were fed into a Gaussian mixture model (GMM) and the Helly model, shows that the Gaussian model is more effective as a speed model than the Helly model, with an accuracy of 73%. Some researchers use different types of sensors to collect data on vehicle speed, fuel consumption, engine speed, steering wheel grip, brake pedal force and so on, in order to detect drowsy driving [123–125].
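As a rough illustration of the feature-extraction step mentioned above, the sketch below slides a fixed-size window over a single sensor signal and computes a few statistical and time series descriptors per window. The choice of descriptors, the function name and the window parameters are assumptions for illustration only; the cited studies use their own feature sets. The resulting feature dictionaries are the kind of input that a classifier such as a random forest or an SVM would then be trained on.

```python
import statistics


def window_features(signal, window, step):
    """Return one feature dict per sliding window over `signal`.

    `window` is the number of samples per window (at least 2) and
    `step` the number of samples between window starts.
    """
    if window < 2 or step < 1:
        raise ValueError("window must be >= 2 and step >= 1")
    features = []
    for start in range(0, len(signal) - window + 1, step):
        chunk = signal[start:start + window]
        # First differences capture how quickly the signal changes.
        diffs = [b - a for a, b in zip(chunk, chunk[1:])]
        features.append({
            "mean": statistics.fmean(chunk),
            "std": statistics.pstdev(chunk),
            "range": max(chunk) - min(chunk),
            "mean_abs_change": statistics.fmean([abs(d) for d in diffs]),
        })
    return features


if __name__ == "__main__":
    speed = [0, 2, 4, 6, 8, 10]
    for row in window_features(speed, window=4, step=2):
        print(row)
```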

Sensor data recognition of driving fatigue based on deep learning

Beyond traditional analysis of vehicle sensor data, deep learning applied to vehicle sensor parameters shows superior performance in identifying tired driving behavior; its principle is shown in Fig. 5. Scholars have explored the use of deep learning to build fatigue driving recognition models extensively and achieved excellent results. For example, Sandberg et al. [126] proposed a fatigue detection model for steering angle based on a feedforward neural network, and experiments showed that the detection rate of this method was stable. Sayed et al. [127] extracted the frequency of the steering wheel angle as a discriminant index and developed a neural network-based detection model. Hailin et al. [128] proposed a K-means fuzzy clustering method for fatigue driving behavior recognition, with a reported detection rate of 97.4% for fatigued driving. Li et al. [129] proposed a learning model of the characteristics of the fatigued driver based on a recurrent neural network structure, and the average recognition rate of this model was 87.30%. Chai et al. [130] developed a Multilevel Ordered Logit (MOL) model, an SVM model and a BP neural network model, and found that the MOL model outperformed the other models in detecting driving conditions using steering wheel parameters while accounting for individual differences. Jeon et al. [131] proposed an integrated convolutional neural network model to detect driver fatigue from pedal pressure sensor data, with up to 94.2% accuracy. Based on steering wheel angle time series, Li et al. [132] constructed a fuzzy recurrent neural network model. After the input layer is fuzzified, the hidden layer weights are distributed so as to memorize fatigue features and improve the depth of the features extracted from the steering wheel angle time series. The average recognition rate of the model is 87.30%. Sun et al. [133] proposed an adaptive fatigue detection model based on Takagi-Sugeno Fuzzy Neural Network (T-SFNN) feature fusion. By fusing visual measurements of the driver with the vehicle’s behavior as fatigue features, the model achieved an accuracy of 92.1%.

In summary, fatigue driving identification based on vehicle sensor data relies on steering wheel angle, lateral offset, acceleration, and other indicators. This method does not intrude on the driver and makes data collection convenient. Table 4 summarizes the state of research and the advantages and disadvantages.

Analysis of fatigue driving characteristics detected by vehicle sensor parameters

With the development of artificial intelligence and the big data revolution, multi-data fusion approaches to fatigue detection have become more accurate and robust than single-data approaches. Researchers have carried out a large number of studies on the use of multi-data fusion for the detection of driver fatigue, with good results. For example, Singh et al. [134] proposed a framework for efficiently detecting drivers’ drowsy states and automatically alerting them using deep learning CNN techniques. The researchers also compared the accuracy of the model under different activation functions, including ReLU, SELU, Sigmoid, Tanh, and SoftPlus, and found that ReLU performed best, with an accuracy of 98.21%. Abbas et al. [135] adopted a multi-layer transfer learning method based on CNN and DBN to identify fatigue driving behaviors from various mixed features, with an accuracy of 94.5%. Sa et al. [136] proposed a detection method that combines a CNN with an RNN composed of an LSTM layer and a gated recurrent unit (GRU) layer. The accuracy of this method is 96.0%. Griesbach et al. [137] built a 2D-RNN LSTM model that extracts features from inertial sensor data to recognize fatigue driving behavior, with better performance than existing mainstream models. Yarlagadda et al. [138] used LSTM to predict driver fatigue status, with a detection rate of 97.25%. Liu et al. [139] proposed an approach based on convolutional neural networks and long short-term memory to detect driver fatigue in real time. The driver image is segmented into superpixels using linear clustering, and the continuous time series of facial features and steering wheel angle (SA) feature parameters is used as input to the LSTM, with the fatigue level as output; the real-time detection accuracy was 99.78%. Zhao et al. [140] proposed a method that relies on multiple feature fusion and uses InceptionV3 (a CNN) for the initial feature extraction. An LSTM is then used to process the video data sequence for recognition, and finally the blood volume pulse (PBV) is used to make the final judgement, with a recognition accuracy of over 95%. Zhu et al. [141] proposed a multi-feature fusion method based on Dempster-Shafer theory (DST) and FNN methods, in which the recognition result is obtained by combining the modified evidence with Dempster’s rule. According to the experimental results, the recognition accuracy reached more than 90%.
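As an illustration of evidence-level fusion, the following sketch implements the standard Dempster rule of combination for two sources, for example a vision-based and a vehicle-based detector. It shows the plain, unmodified rule only; the evidence modification used in [141] is not described here and is not reproduced. The mass values, the variable names and the two-hypothesis frame {fatigued, alert} are made up for the example.

```python
from itertools import product


def dempster_combine(m1, m2):
    """Combine two mass functions with Dempster's rule.

    Each mass function maps a frozenset of hypotheses to its mass,
    and the masses of each function are assumed to sum to 1.
    """
    combined = {}
    conflict = 0.0
    for (a, mass_a), (b, mass_b) in product(m1.items(), m2.items()):
        intersection = a & b
        if intersection:
            combined[intersection] = (
                combined.get(intersection, 0.0) + mass_a * mass_b
            )
        else:
            # Mass assigned to contradictory hypotheses.
            conflict += mass_a * mass_b
    if conflict >= 1.0:
        raise ValueError("total conflict: Dempster's rule is undefined")
    # Renormalize so that the remaining masses sum to 1.
    return {h: mass / (1.0 - conflict) for h, mass in combined.items()}


if __name__ == "__main__":
    FATIGUED = frozenset({"fatigued"})
    ALERT = frozenset({"alert"})
    UNKNOWN = FATIGUED | ALERT   # mass left uncommitted by a source

    vision = {FATIGUED: 0.6, ALERT: 0.1, UNKNOWN: 0.3}
    vehicle = {FATIGUED: 0.5, ALERT: 0.2, UNKNOWN: 0.3}
    for hypothesis, mass in dempster_combine(vision, vehicle).items():
        print(sorted(hypothesis), round(mass, 3))
```

When two sources both lean towards the same hypothesis, the combined belief in it exceeds either individual belief, which is the complementarity that fusion methods exploit. When the sources conflict strongly, the normalization step can produce counterintuitive results, which is one reason modified combination schemes are used in practice.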

In conclusion, compared with the three single-source detection methods, drowsy driving detection based on multi-data fusion can eliminate biases in the results caused by environmental factors such as lighting and overcome errors caused by delays in different detection devices. The enhanced complementarity between the feature information involved in multi-data fusion can improve the reliability of fatigue detection and its adaptability to different environments.

Summary and prospects

This paper classifies the commonly used fatigue detection methods and reviews the present state of research in fatigue detection, both nationally and internationally. The methods fall into four groups: detection based on the driver’s physiological signals, detection based on the driver’s visual signals, detection based on vehicle sensor parameters, and detection based on multi-information fusion. We discuss the visual and non-visual characteristics of driver behavior, as well as driver performance in relation to vehicle characteristics. We discuss in detail the detection of visual features, such as eye-related features, mouth features and facial expressions. Face-based recognition is relatively practical: it is only necessary to install an on-board camera to capture the driver’s facial features, analyze the data with relevant algorithms, and issue an early warning to avoid traffic accidents. Visual detection holds great promise as an approach to driver fatigue detection and will become an important driver safety tool. In terms of non-visual features, we discuss different physiological signals and possible ways to use these signals to detect driver fatigue. The equipment for this kind of detection is difficult to install, interferes with normal driving, and is expensive, which makes it difficult to promote. For features based on vehicle sensor data, we describe steering wheel motion, accelerator pedal force, and lateral position offset. Finally, the development potential and trends of driver fatigue detection models based on convolutional, recurrent and fuzzy neural networks are analyzed. The characteristics of the detection methods reviewed in this paper are described below.

Detection based on the driver’s physiology has the advantages of being highly reliable, highly sensitive and highly resistant to interference. However, it requires professional and relatively expensive testing equipment. Using such complex equipment while driving also causes the driver a certain amount of inconvenience, interfering with the normal operation of the vehicle and reducing the driver’s field of view. Therefore, the practicability of this method is poor, and it is difficult to promote its use in the actual driving environment [142].

Fatigue detection methods based on visual signal characteristics usually identify the degree of driver fatigue from the behavioral characteristics of the face (eyes, mouth, etc.) when the driver is tired. The disadvantage of this method is that it is easily influenced by factors such as lighting, whether the driver is wearing glasses, and the seating position. Its advantage is that it does not interfere with the driver, and feature data acquisition is more convenient and direct. In addition to high detection accuracy, it benefits from good real-time performance, low cost and robustness. In the past few years, with the rapid progress of image recognition and computer vision technology, convolutional neural network-based fatigue driving detection methods have made it possible to analyze image features quickly and accurately, and fatigue detection algorithms based on visual signal features have become increasingly mature. Thus, this method is more applicable than the other two and is likely to become the dominant method for driver fatigue detection going forward.

Driver fatigue detection based on vehicle sensor data looks for anomalies in vehicle speed, steering angle, and vehicle roll. It assesses the driver’s state of fatigue from the driver’s operational control of the vehicle, in combination with information on road conditions and vehicle performance data. The method is easy to implement and the algorithm is simple. However, road and driving conditions, vehicle type, individual driver differences and driving style can all make it less reliable [143]. With this method, abnormal vehicle data can only be detected when an accident is already imminent, which is extremely dangerous for the driver. In comparison with visual signal detection, its detection performance is somewhat worse. Thus, the result of this method is more suitable as a secondary recognition indicator than as a primary one.

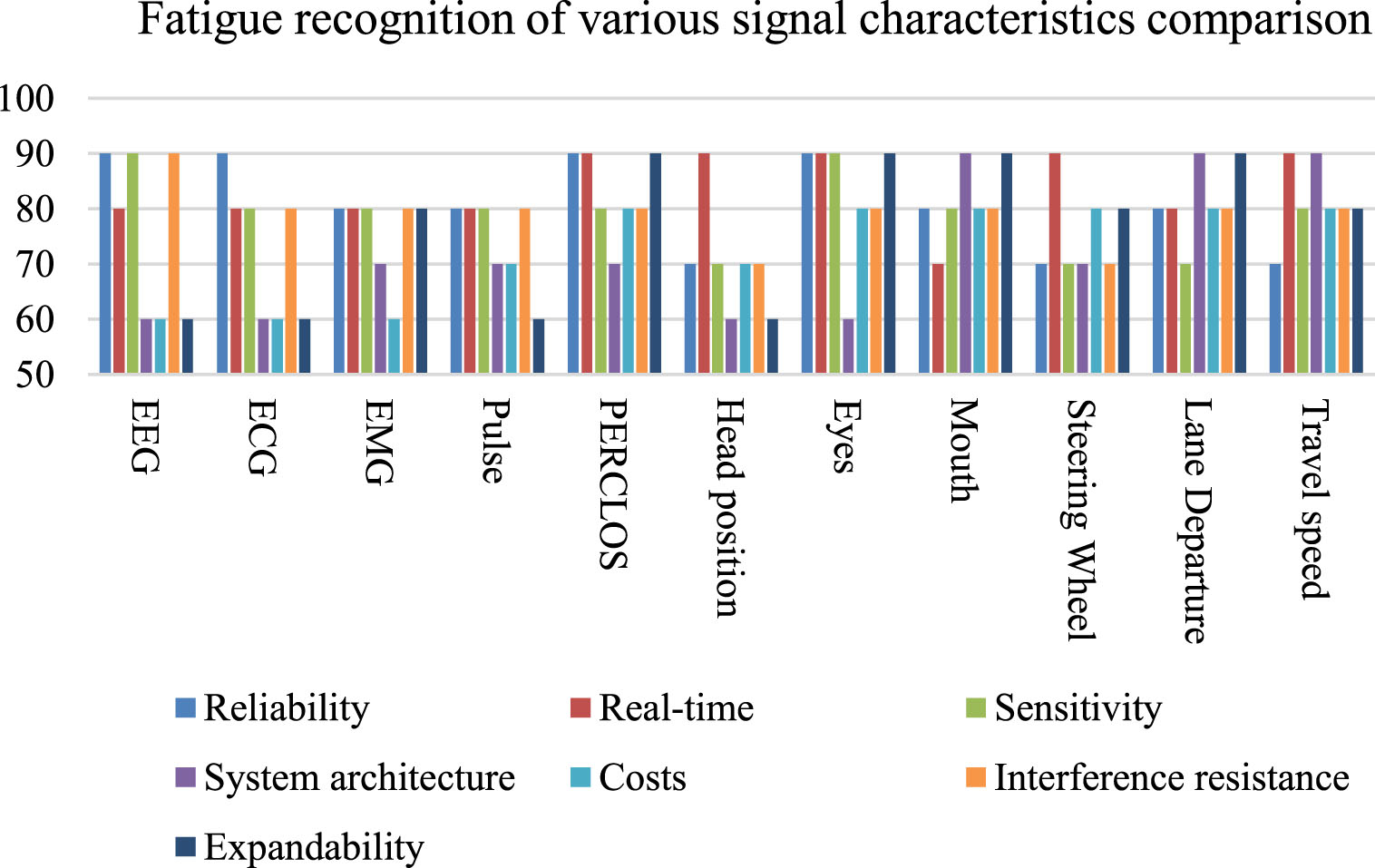

Comparison of the characteristics of several fatigue driving recognition signals

Comparison of the characteristics of the various signals for fatigue driving recognition.

Multi-feature fatigue detection combines fatigue characteristics such as driver behavior, facial information, vehicle operating parameters and physiological parameters to analyze fatigue status. The key difficulty in research on fatigue driving recognition based on multi-information fusion is to establish an effective information fusion decision model that reflects the reliability and degree of influence of the various data sources. As deep learning and neural networks are popularized and applied in various fields, research on multi-feature fusion deep learning for driver fatigue recognition is advancing rapidly, and LSTM, CNN, RNN and FNN classifiers are favored by researchers in sleepiness detection systems. These methods have great advantages in the accuracy of fatigue detection, and the results are relatively reliable. However, collecting more data requires more sensors and other devices, which increases hardware cost. Moreover, as more information is processed, computational cost increases, real-time performance becomes harder to guarantee, and large-scale sample training is challenging.

In general, fatigue driving is a major problem in road traffic safety and a major cause of traffic accidents. Studying effective detection methods for fatigue driving is an urgent task. It is important to warn drivers in good time when they start to feel tired in order to reduce the number of accidents.

The characteristics of the various fatigue recognition signal data summarized in this paper are shown in Table 5. The evaluation indicators of the various characteristics are expressed on a percentage scale: “good, high reliability, low cost, strong function, simple system” is scored 90; “better, higher, stronger, more complex” is scored 80; “general” is scored 70; and “poor, complex system, high cost” is scored 60. The resulting bar graph is shown in Fig. 8.

The number of road accidents increases as the number of cars on the road increases, and driving while drowsy is one of the major causes of these accidents. For this reason, it is very important to investigate how drowsy driving can be detected with a high degree of accuracy, good real-time performance and high resistance to interference. The field of fatigue detection is still evolving, as no single parameter is sufficient to accurately assess a driver’s fatigue state. A technology that can detect fatigue under all conditions with good robustness, real-time performance and high accuracy has been proposed as a goal by some researchers, but there is still a lot of work to be done to reach it. An important research direction for future fatigue driving detection will therefore be the combination of deep learning and multi-data fusion techniques to identify fatigued driving behavior. In addition, “car connectivity” is the development trend of intelligent transportation. Combining “car connectivity” with driver fatigue detection is a promising line of research, since its powerful data transmission and analysis capability can greatly contribute to the accuracy and real-time performance of driver fatigue recognition. Based on this review of domestic and foreign fatigue driving recognition technology, future research should focus on the following aspects.

Firstly, visual signal monitoring systems should become more stable, with shorter transmission delays, more effective and comfortable human-machine interfaces, and more accurate and efficient fatigue detection methods. This will be the direction of development for this technology in the future.

Secondly, driver fatigue can be detected by combining active and passive detection methods. In the application of active detection method, we should avoid the interference of subjective factors as much as possible, and carry out reasonable weight allocation for different objective descriptions of participants.

Thirdly, the testing and verification of fatigue detection algorithms should be moved to actual roads as far as possible, which will greatly improve the practicability of the research results. Current detection algorithms cannot be tested independently and must be implemented with the help of a third-party platform. In the future, a module could be developed to store the algorithm and test it independently. At the same time, the data sets used to test the algorithms are relatively homogeneous, and the requirements on the environment are relatively strict. In the future, multiple data sets should be used to verify the algorithms to make them more stable in complex environments.

Fourthly, an alert system could be created in the future that reminds the driver to adjust the sitting position when the driver’s head or body is twisted at an excessive angle.

The authors declare that there are no conflicts of interest regarding the publication of this paper.

Acknowledgments

This work was supported by the Key Scientific and Technological Project of Henan Province (222102220106), partly supported by Key Research and Development Projects of Henan Province in 2022 (221111240200), and partly supported by Major Science and Technology Projects of Henan Province in 2022 (221100220200).