Abstract

Pedestrians are the most critical and vulnerable moving objects on roads and in public areas, and learning how they move in these areas can improve their safety. Recognizing a pedestrian's direction of motion plays an important role in pedestrian safety and in advanced driver assistance systems (ADAS). Pedestrian movement direction recognition in real-world monitoring and ADAS is challenging because large annotated datasets are unavailable. Even when labeled data is available, partial occlusion, body pose, illumination and the untrimmed nature of videos pose further problems. In this paper, we propose a framework, named origin-end-point incremental clustering (OEIC), that considers the origin and end point of the pedestrian trajectory. The framework searches for strong spatial linkage by finding neighboring lines for every origin-end (OE) line within a circular area around its end points. It uses entropy and the Q measure to select the clustering parameters, namely the radius and the minimum number of lines. To obtain the origin and end point coordinates, we detect pedestrians using the deep learning model YOLOv5 and then track each detected pedestrian across frames using our proposed pedestrian tracking algorithm. We test our framework on the publicly available pedestrian movement direction recognition dataset and compare it with DBSCAN and a trajectory clustering model. The results show that the OEIC framework provides efficient clusters with the optimal radius and Minlines.

Keywords

Introduction

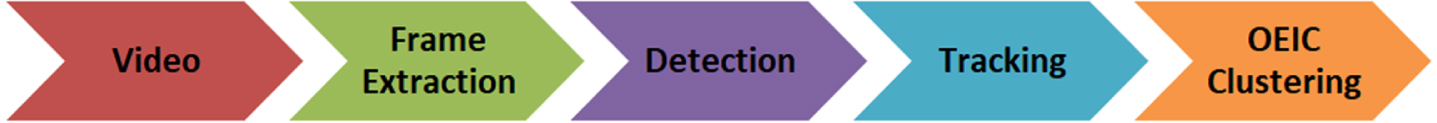

Pedestrian movement direction recognition is important in advanced driver assistance systems (ADAS) and security surveillance systems. Real-time pedestrian detection and behavior prediction have been extensively explored in the field of computer vision [1]. In recent years, various deep learning and machine learning techniques have been developed to learn pedestrians' dynamic motion based on trajectory, motion, gait, etc. [2, 3]. However, these techniques need large amounts of labeled data and incur a high computational cost. The main factors that affect pedestrian movement detection are occlusion, illumination and body pose [4–6]. To address these challenges, we propose a simple and intuitive origin-end point incremental clustering (OEIC) framework for pedestrian movement direction recognition that extracts spatial linkage information from OE line data. The proposed framework learns pedestrian movement dynamics in road scenes. Frames are extracted from the input videos and processed for pedestrian detection and tracking. Similar pedestrian tracks are then clustered using OEIC, so that the clusters capture pedestrians' motion activities in the surrounding environment. Fig. 1 shows the basic pipeline of our work.

Motivation

From the related work discussed in Section 2, we observe that pedestrian direction of motion recognition has the following three research gaps. First, authors have used machine learning or deep learning classifiers based on spatial data, i.e., body pose, head orientation, etc., to classify the direction of motion. These methods may fail because they cannot properly handle and adapt to changes in pedestrians' movements [7]. In addition, only limited pedestrian datasets are available for training supervised classifiers. Second, pedestrian trajectory analysis focuses on the visual representation of temporal movements, and these methods incur high computational costs. Third, some work recognizes pedestrian motion using sensor data. These approaches are very intrusive from the pedestrian's viewpoint and need to provide more information to distinguish between different pedestrian actions. These research gaps motivate us to develop a computationally efficient framework for pedestrian direction of motion recognition that requires no labeled data and has applications in finding the mobility patterns of pedestrians in critical areas such as shopping malls, squares, zebra crossings, stations and public gatherings.

Basic flowchart of our proposed work.

The OEIC framework starts from the idea that the origin-end (OE) lines of pedestrians with similar flows or trajectories lie in the same region, within a certain radius ($D_r$). These OE flows allow us to identify the pedestrian direction of motion, such as front, right and left movements. To accomplish this, we first detect pedestrians using the YOLOv5 algorithm, then track multiple pedestrians across frames using the centroid coordinates of the detected bounding boxes, and finally apply the OEIC framework. We evaluate our proposed work using the Q measure and entropy to extract dominant mobility patterns from a large amount of flow data.

We summarize our contributions as follows: We use the state-of-the-art YOLOv5 deep learning object detection model for pedestrian detection. After detecting the pedestrians, we estimate their trajectories across the video frames. We propose a novel clustering method, named Origin-End point Incremental Clustering (OEIC), that clusters origin-end point trajectories for pedestrian movement direction recognition. The cluster quality is estimated using entropy (H) and the quality measure ($Q_{measure}$). The proposed method is evaluated on a publicly available dataset named Pedestrian movement direction recognition. The OEIC framework performed better than other state-of-the-art methods.

The remainder of the paper is organized as follows: The overview of previous work is presented in Section 2. Section 3 discusses pedestrian detection, tracking and Origin-End incremental Clustering (OEIC) framework. The experimental results are presented in Section 4. Finally, in Section 5, we conclude the paper.

Pedestrian detection and tracking are the basis for pedestrian safety in ADAS and monitoring systems. Various techniques for pedestrian detection and tracking have been discussed in recent years [8–12]. However, occlusion, low resolution, illumination, weather conditions and time of day make pedestrian detection in video challenging.

In recent years, classical as well as machine learning (ML) techniques have been used for pedestrian detection [13–17]. The authors of [13, 17] discussed hybrid techniques for pedestrian detection; the major concern with these techniques is their high computational complexity. The authors of [18] evaluate sixteen pedestrian detectors on several public datasets. The results show that these detectors do not handle pedestrian occlusion and high dimensional variations.

A hybrid machine learning approach that combines a Support Vector Machine (SVM) with AdaBoost has been applied to complex video scenes covering roads and surrounding objects [19]. The authors of [20, 21] discussed person detection methods using the Kalman Filter (KF) on publicly available video datasets captured with dash cams and wearable cameras. Optical flow features have been used to detect humans and vehicles in traffic scenes [22]; this method addresses illumination and occlusion problems.

Another study [1] uses a Restricted Boltzmann Machine (RBM) to handle occlusion and visibility issues for pedestrian detection in videos. In [23], the authors used Deep Convolutional Neural Networks (DCNN) for detection. However, these detection techniques fail on low-illumination data. A FlowNet2 CNN with optical flow is used to detect abnormal person behavior in crowded scenes [22]; it overcomes various challenges in person detection. YOLOv5 [24] is a state-of-the-art object detection model trained on the Common Objects in Context (COCO) dataset, which covers 80 object classes. It is a reliable and efficient method for pedestrian detection.

Pedestrian tracking is the next crucial step for understanding the intent of pedestrians. The authors of [25] discussed a heuristic-based technique for tracking human gait. Traditionally, KF, Histogram of Oriented Gradients (HOG), intensity, optical flow, Scale-Invariant Feature Transform (SIFT), Local Binary Pattern (LBP), etc. have been used for estimating the pedestrian trajectory [26, 27]. The approaches discussed above focus on detection but cannot handle pedestrian movement, which plays a vital role in pedestrian safety.

In recent years, machine learning and deep learning have been applied to pedestrian tracking. ANN-based tracking is used in [28] for pedestrian tracking and intent prediction; the authors used the person's head coordinates to track the person across the video frames. In [3], the person's neck position has been used for tracking on traffic roads. In [29], dense optical flow features have been discussed for pedestrian tracking. In [30], the authors combined CNN-based pedestrian detection, tracking and pose estimation to predict the crossing action from dash-cam videos. Paper [31] discusses an IMU-based pedestrian positioning and tracking technique that assists bedridden patients by tracking the patient in an indoor environment. A generic deep learning and color intensity-based technique for person tracking is discussed in [32]; it overcomes challenges such as failures at night and in low-illumination weather conditions. A multi-object real-time tracking technique for computer vision applications is discussed in [33]. The authors used MobileNet-based custom CNN techniques for feature extraction and a set of algorithms to generate associations between frames, and tested them on the publicly available Towncentre dataset.

Pedestrian dynamic motion recognition is an important task in ADAS and pedestrian safety. The author of [5] discussed three CNN-based models (AlexNet, GoogleNet and ResNet) for pedestrian direction recognition; the results show that ResNet gives 79% accuracy in comparison to the other models. The authors of [34] discussed a Capsule Network for classifying the pedestrian walking direction in order to alert the driver. Pedestrian body pose features were used to predict pedestrian motion in road scenes in [35]. In [36], an LSTM encoder-decoder is discussed for learning abnormal events in a complex environment; this hybrid method failed to differentiate between two different actions. Incremental semi-supervised learning has been discussed for abnormal behavior detection in video surveillance [4]; using trajectory features, the classifier distinguishes between normal and abnormal behavior patterns. A recurrent neural network based technique has been discussed in [37] for embedding pedestrian dynamics among multiple moving agents. It captures the pedestrian's motion using Long Short-Term Memory networks, and the results were evaluated on the publicly available Stanford Drone and SAIVT Multi-Spectral Trajectory datasets. The authors of [38] discussed a Micro-Doppler (MD)-based classification technique for accurate direction of motion estimation, using support vector regression and multilayer perceptron-based regression algorithms.

The primary challenge of supervised learning techniques is that they need labeled data for training the model. Therefore, we study unsupervised learning techniques, which group similar activities into one cluster based on the similarity of their features. In [39], the authors discussed an unsupervised learning algorithm that forms clusters of human motion for road surveillance data. The authors of [40] discussed an extended version of the Variational Gaussian Mixture Model (VGMM) for probabilistic trajectory prediction. It sub-classifies pedestrian trajectories in an unsupervised manner based on their estimated sources and destinations and trains a separate VGMM for each sub-category. The K-means algorithm has been discussed in [41] for activity recognition using static frame features.

In [42], the authors discussed a hierarchical clustering technique to detect small groups of people traveling together, which can be used in evacuation planning and in handling real-time public disturbances. An unsupervised approach has been developed for a mobile robot to learn human motion patterns in a real-world environment and predict future behaviors [43]. In [44], the authors discussed the group sparse topical coding (GSTC) technique to study motion patterns. The authors of [45] used trajectory clustering based on low-level trajectory patterns and high-level discrete transitions for detecting anomalous pedestrians. All the methods discussed above predict pedestrian motion based on trajectory data or on pedestrians captured from a top view.

To the best of our knowledge, vision-based unsupervised learning for pedestrian direction of motion recognition has not been studied before. We propose an unsupervised learning framework that clusters the direction of pedestrian motion, given the initial and end points of the pedestrian's movement, so that it can be used in ADAS to alert the driver and raise a risk alarm.

Methodology

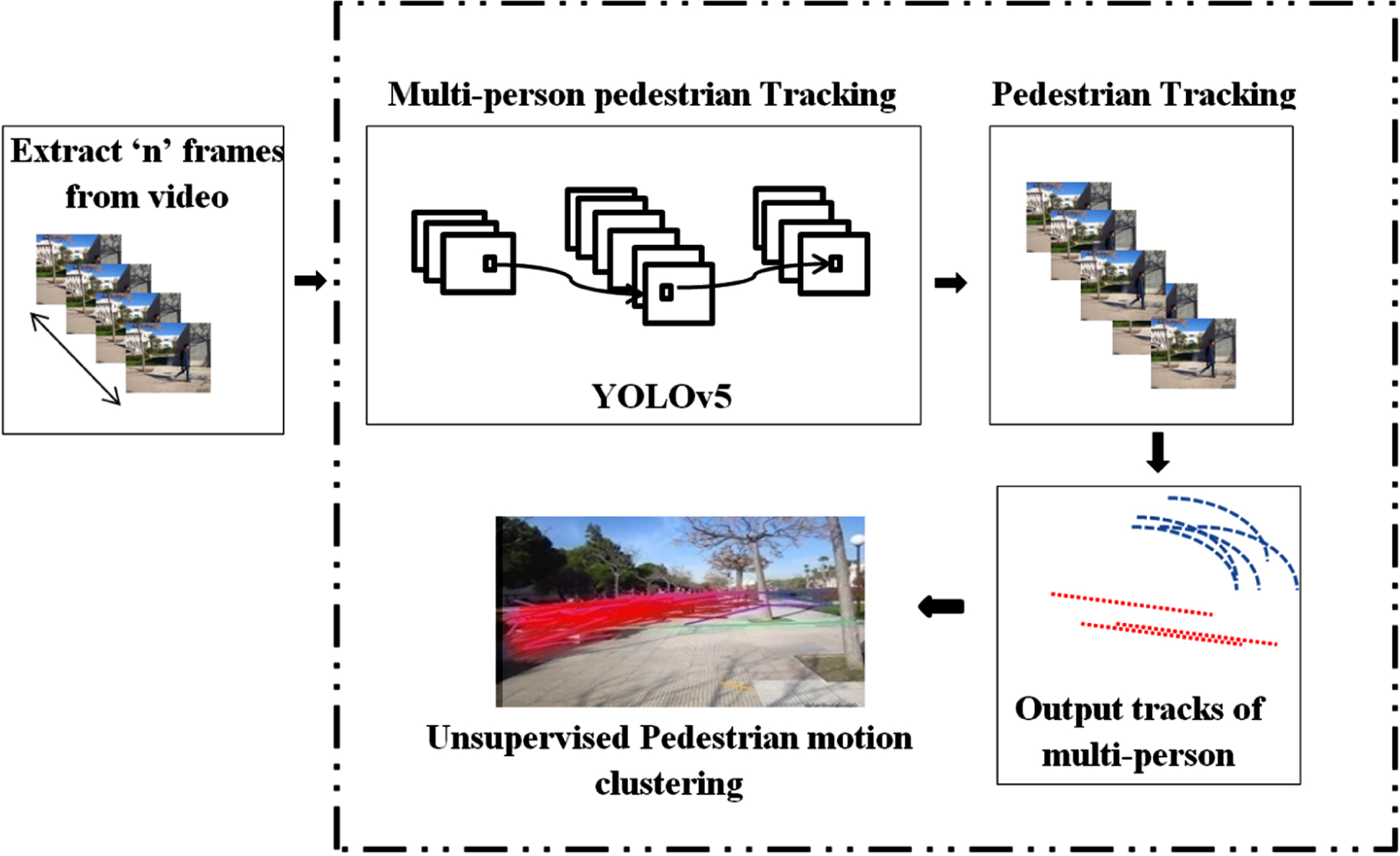

The objective of our work is to develop a fast and efficient unsupervised framework for pedestrian direction of motion recognition. We first detect a pedestrian in the scene using the YOLOv5 model. Then we extract the origin and end point coordinates by tracking the detected pedestrian across the frames. Further, we apply unsupervised incremental clustering to capture possible pedestrian dynamics in the given scene. In Fig. 2, we explain the architecture of our proposed framework in detail.

Proposed framework for pedestrian direction of motion recognition. In step 1, we take a video as input and apply the deep learning model YOLOv5 for pedestrian detection. In step 2, we track pedestrians across the frames. In step 3, we perform unsupervised incremental clustering for pedestrian direction of motion recognition.

YOLO is the first object recognition model that integrates object detection and bounding box prediction into a single end-to-end differentiable network. The earlier YOLO variants were developed and maintained in the Darknet framework, whereas YOLOv5 is implemented in PyTorch; all of them are based on CNNs. The YOLO model divides the image into regions, predicts bounding boxes and a probability for each region, and weights the bounding boxes by the predicted probabilities. We "only look once" at the image, since the technique requires only one forward pass through the neural network to produce predictions. After non-max suppression, which ensures that each object is detected only once, the model outputs the recognized objects along with their bounding boxes. Because it recognizes pedestrians and surrounding road objects adequately in real time, we used the recently released YOLOv5 architecture.

YOLOv5 is an object detection model pre-trained on the Common Objects in Context (COCO) dataset. The COCO dataset is primarily used for tasks such as object recognition, object segmentation and labeling. It contains approximately 200,000 images and has 80 different classes. In our study, we run the YOLOv5 algorithm for the person class only. The output of YOLOv5 [46, 47] is a file of bounding box coordinates for each object detected in each video frame. These coordinates are then used to track the person class.

Pedestrian tracking

Tracking objects across a video sequence is an essential task for pedestrian direction of motion recognition. We use the spatial information of the frames across the video. Our approach is to track each detected person across the frames using the output obtained from YOLOv5. This output consists of bounding box coordinates $(x_i, y_i, h_i, w_i)$ and a class id ($c_i$), where $(x_i, y_i)$ is the top-left coordinate of the detected bounding box and $h_i$, $w_i$ are its height and width. For the person class ($c_i$), we compute the centroid of each bounding box from $(x_i, y_i, h_i, w_i)$ and track each person by its nearest centroid position across the frames, which forms a sequence of paths. If a point is far from the sequence, it is not considered part of that path. We use the Euclidean distance given in equation (1) below to find the nearest centroid position. This yields the set of paths $T = \{t_1, t_2, \ldots, t_n\}$, where each $t_i = \{(x_1, y_1), (x_2, y_2), \ldots, (x_p, y_p)\}$ is the path of one person at the end of the video. Our approach is summarized in Algorithm 1 below:

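Algorithm 1: Pedestrian tracking

for each frame $f_t$ of the video and each person centroid $(x_t, y_t)$ detected in it do

if t == 1 then

assign unique_id to $t_k$ and add centroid $(x_t, y_t)$ to $t_k$

add $t_k$ to T

else

calculate euclidean distance (dist) between centroids $(x_t, y_t)$ detected in frame $f_{t-1}$ and $f_t$ using equation (1)

if dist is small enough for $(x_t, y_t)$ to belong to the path of its nearest centroid $(x_{t-1}, y_{t-1})$ then

add $(x_t, y_t)$ to $t_k$ of $(x_{t-1}, y_{t-1})$

else

assign new unique_id of $t_{k+1}$

add $(x_t, y_t)$ to $t_{k+1}$

end if

add $t_k$ to T

end if

end for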

To calculate the Euclidean distance (dist) between two points $(x_i, y_i)$ and $(x_j, y_j)$, the following equation is used:

$$dist = \sqrt{(x_i - x_j)^2 + (y_i - y_j)^2} \qquad (1)$$
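The tracking procedure of Algorithm 1 and equation (1) can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' implementation: the function names, the greedy nearest-centroid matching, and the `max_dist` cut-off (standing in for "far from the sequence") are assumptions, and boxes are assumed to be given as top-left corner plus height and width, as described above.

```python
import math

def centroid(x, y, h, w):
    """Centre of a box given its top-left corner (x, y), height h and width w."""
    return (x + w / 2.0, y + h / 2.0)

def euclidean(p, q):
    """Equation (1): Euclidean distance between two 2D points."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def track(frames, max_dist=50.0):
    """frames: list of per-frame lists of (x, y, h, w) person boxes.
    Returns T, a list of tracks; each track is a list of centroids.
    max_dist is a hypothetical threshold, not a value from the paper."""
    tracks = []   # T: every track created so far
    active = []   # indices of tracks extended in the previous frame
    for boxes in frames:
        free = list(active)       # tracks still unmatched in this frame
        next_active = []
        for c in (centroid(*b) for b in boxes):
            # nearest track end-point among the unmatched active tracks
            best = min(free, key=lambda k: euclidean(tracks[k][-1], c), default=None)
            if best is not None and euclidean(tracks[best][-1], c) <= max_dist:
                tracks[best].append(c)        # continue an existing path
                free.remove(best)
                next_active.append(best)
            else:
                tracks.append([c])            # start a path with a new id
                next_active.append(len(tracks) - 1)
        active = next_active
    return tracks
```

In this sketch a track that is not matched in one frame is never resumed, which mirrors the frame-to-frame comparison between $f_{t-1}$ and $f_t$ in the listing; a production tracker would usually tolerate a few missed detections.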

The main goal of our study is to cluster similar pedestrian motion trajectories in one cluster based on pedestrian paths. The problem is formulated as:

Let $L = \{L_1, L_2, \ldots, L_n\}$ be a set of lines, where each line is a directed line connecting the origin and end point coordinates, i.e., $(x_o, y_o)$ and $(x_e, y_e)$, and $n = |L|$ is the number of lines. We cluster the set of lines L using the proposed OEIC framework to form clusters of similar flows.
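As a small illustration of this formulation, the sketch below turns tracked paths into directed OE lines by keeping only the first and last point of each path. The function name `oe_lines` and the `min_points` filter are assumptions made for the example and are not part of the original framework.

```python
def oe_lines(tracks, min_points=2):
    """Build the set L of directed OE lines from a set of tracks T.

    Each track is a list of (x, y) centroids; each returned line is a pair
    ((x_o, y_o), (x_e, y_e)). Tracks with fewer than min_points points are
    skipped because they do not define a direction (an assumed rule).
    """
    lines = []
    for t in tracks:
        if len(t) >= min_points:
            lines.append((t[0], t[-1]))   # origin = first point, end = last point
    return lines
```

For example, a track `[(5, 5), (10, 5), (15, 5)]` yields the single OE line `((5, 5), (15, 5))`, which points to the right along the x-axis.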

Origin-end point incremental clustering (OEIC) framework for pedestrian movement direction recognition

Origin-End (OE) data are a special case of trajectory data that focuses on the origin and end coordinates of the trajectory. In pedestrian trajectory and intent prediction, the actual trajectory information is important, so it is essential to actively monitor the location of each movement. For the pedestrian direction of motion, in contrast, the origin and end points are enough to analyze the connections between locations and their spatial characteristics and thereby detect the dynamic motion. The key idea is to cluster similar OE lines that have sufficient neighboring lines. Our approach is given in Algorithm 2.

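Algorithm 2: Origin-end point incremental clustering (OEIC)

for each line $L_j$ in L do

if j == 1 then

add $L_j$ to C

add $L_j$ to $Nls(L_i)$

else

calculate length of $L_j$ using the Euclidean distance formula from equation (1)

if the length of $L_j$ satisfies the length constraint then

if $L_j$ lies within the search radius $D_r$ of a centerline in C then

select $L_i$ which is nearest to $L_j$ and assign $L_j$ to $Nls(L_i)$

else

add $L_j$ to C

end if

else

ignore the line $L_j$

end if

end if

end for

for each centerline $L_i$ in C do

if $Nls(L_i)$ contains at least Minlines lines then

$L_i$ is valid cluster

end if

end for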
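The incremental clustering idea of Algorithm 2 can be sketched as follows. This is an illustrative reading under stated assumptions, not the authors' code: a line is treated as a neighbor of a centerline when both its origin and its end fall within $D_r$ of the centerline's origin and end, "nearest" is taken as the smallest sum of those two distances, and the length constraint uses the 2.83 $D_r$ figure quoted in the text through the hypothetical `len_factor` parameter.

```python
import math

def dist(p, q):
    """Euclidean distance between two points, as in equation (1)."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def oeic(lines, d_r, min_lines, len_factor=2.83):
    """Cluster directed OE lines ((x_o, y_o), (x_e, y_e)) incrementally.

    Returns a list of (centerline, neighbours) pairs for the valid clusters.
    """
    centers = []      # C: centerlines found so far
    neighbours = []   # Nls(L_i): lines assigned to each centerline
    for j, line in enumerate(lines):
        origin, end = line
        if j == 0:
            # the first line seeds the first cluster, as in the listing
            centers.append(line)
            neighbours.append([line])
            continue
        if dist(origin, end) <= len_factor * d_r:
            continue  # too short to carry a reliable direction: ignore it
        # centerlines whose origin and end are both within d_r of this line
        near = [i for i, c in enumerate(centers)
                if dist(c[0], origin) <= d_r and dist(c[1], end) <= d_r]
        if near:
            best = min(near, key=lambda i: dist(centers[i][0], origin)
                                           + dist(centers[i][1], end))
            neighbours[best].append(line)
        else:
            centers.append(line)          # start a new candidate cluster
            neighbours.append([line])
    # keep only centerlines supported by at least min_lines lines
    return [(c, n) for c, n in zip(centers, neighbours) if len(n) >= min_lines]
```

Because each line is visited once and compared only with the current centerlines, the cost grows with the number of lines times the number of clusters, which fits the incremental character of the framework. Note that the result depends on the order in which lines arrive.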

We now discuss the parameters and their definitions used in our framework:

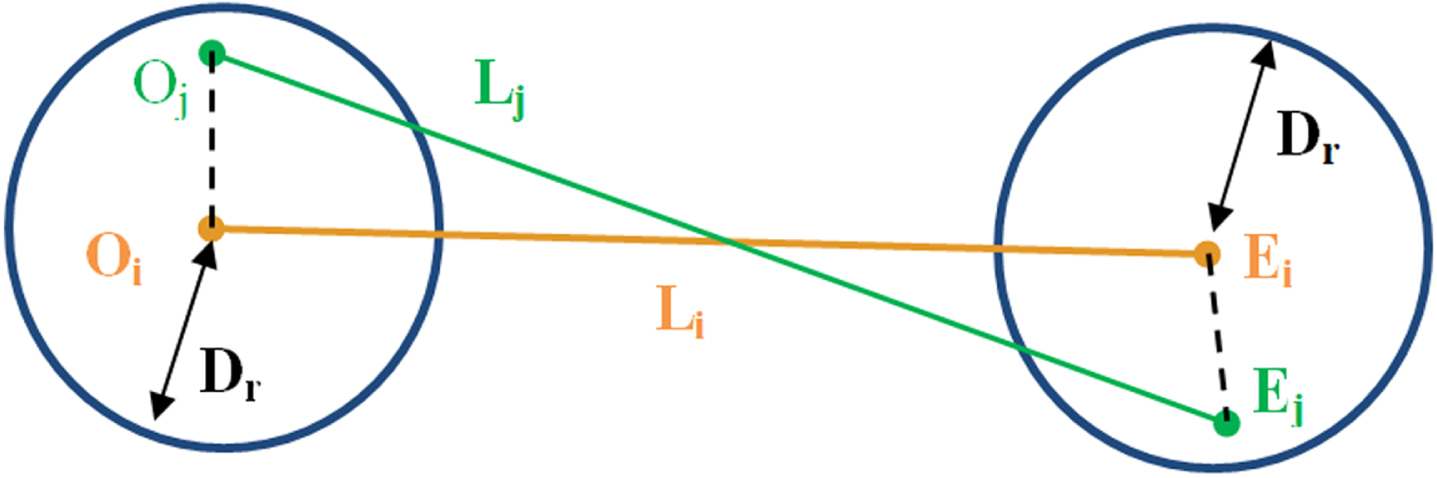

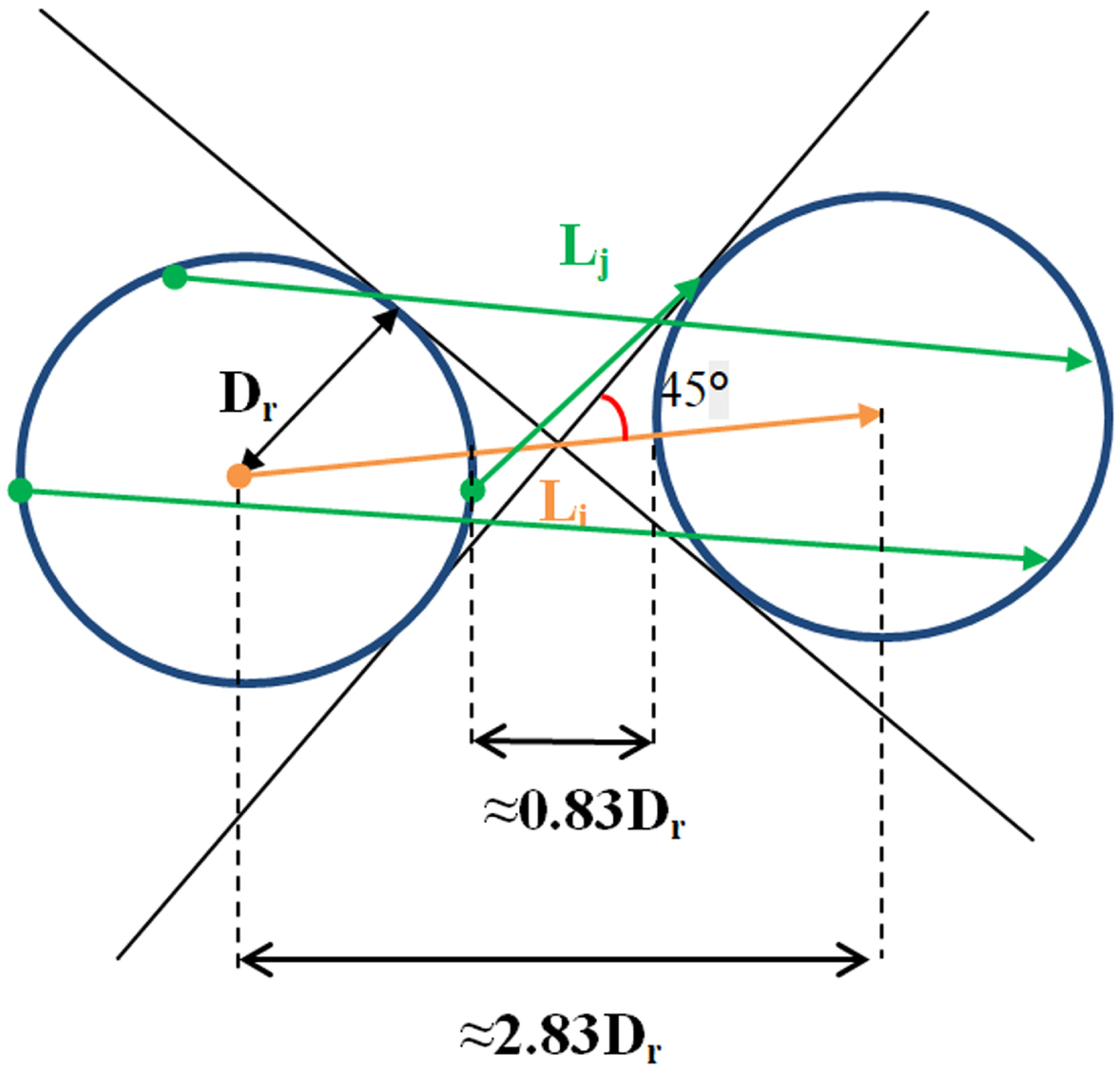

Let O and E be the sets of origin and end points, and let $L_j$ be the line joining the $j$-th points of O and E. If line $L_j$ lies within the search radius of $O_i$ and $E_i$, then $L_j$ is defined as a neighboring line of the centerline $L_i$, as shown in Fig. 3. A centerline may have many neighboring lines; the number of these neighboring lines, $Nls(L_i)$, is represented as:

Illustrating the neighboring line of a centerline.

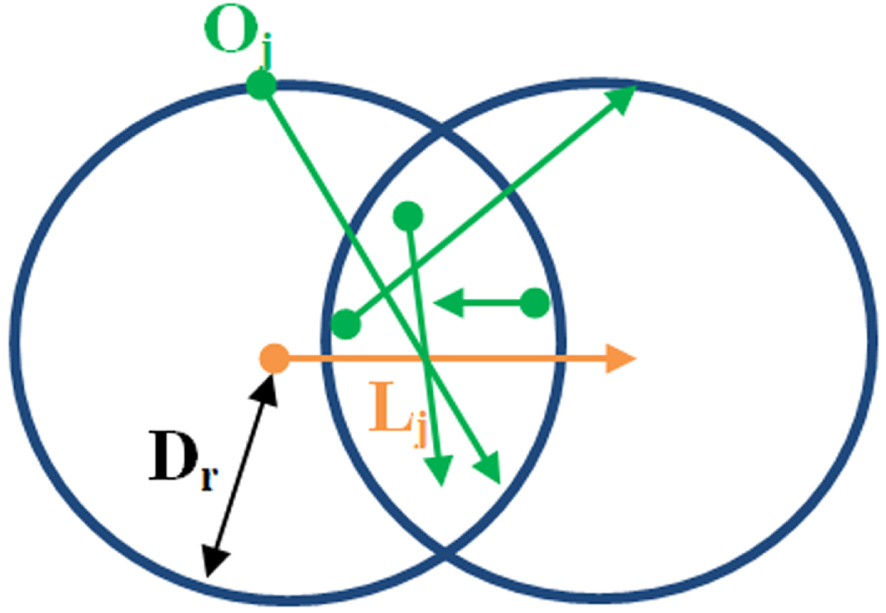

Particular cases of search radius ($D_r$) (origin and end points are represented as '.' and an arrow).

Our framework should cluster all lines that are geographically close to each other and point in the same direction. We therefore consider only those lines $L_i$ whose length is at least $2.83\,D_r$ (as explained in Fig. 5, i.e., $L_i \geq 2D_r/\sin 45^\circ \approx 2.83\,D_r$). Hence, in our framework, only OE lines longer than $2.83\,D_r$ can participate in the clustering. This threshold ensures that the angle between the centerline and its neighboring line is always less than 45°, and that their lengths differ by no more than twice $D_r$.
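One way to see where the 2.83 $D_r$ figure comes from is the short derivation below. It is a sketch under the assumption that a neighboring line has its origin within $D_r$ of the centerline's origin and its end within $D_r$ of the centerline's end; the vectors $\mathbf{u}$, $\mathbf{v}$ and the angle $\theta$ are notation introduced here for illustration.

```latex
% u: centerline vector, v: neighbouring line vector (notation assumed here)
\begin{aligned}
\mathbf{u} &= E_i - O_i, \qquad \mathbf{v} = E_j - O_j, \\
\lVert \mathbf{v} - \mathbf{u} \rVert
  &= \lVert (E_j - E_i) - (O_j - O_i) \rVert
  \le \lVert E_j - E_i \rVert + \lVert O_j - O_i \rVert
  \le 2 D_r, \\
\sin\theta &\le \frac{\lVert \mathbf{v} - \mathbf{u} \rVert}{\lVert \mathbf{u} \rVert}
  \le \frac{2 D_r}{\lVert \mathbf{u} \rVert}, \\
\frac{2 D_r}{\lVert \mathbf{u} \rVert} \le \sin 45^\circ
  &\iff \lVert \mathbf{u} \rVert \ge \frac{2 D_r}{\sin 45^\circ}
  = 2\sqrt{2}\, D_r \approx 2.83\, D_r, \\
\bigl|\, \lVert \mathbf{v} \rVert - \lVert \mathbf{u} \rVert \,\bigr|
  &\le \lVert \mathbf{v} - \mathbf{u} \rVert \le 2 D_r .
\end{aligned}
```

Under this reading, a centerline of length at least $2\sqrt{2}\,D_r$ guarantees that no neighboring line deviates from it by more than 45°, and the triangle inequality gives the stated bound of $2D_r$ on the difference in length.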

The length constraint of $L_i$ (origin and end points are represented as '.' and an arrow).

To determine the values of the two parameters discussed above, we propose the following strategy.

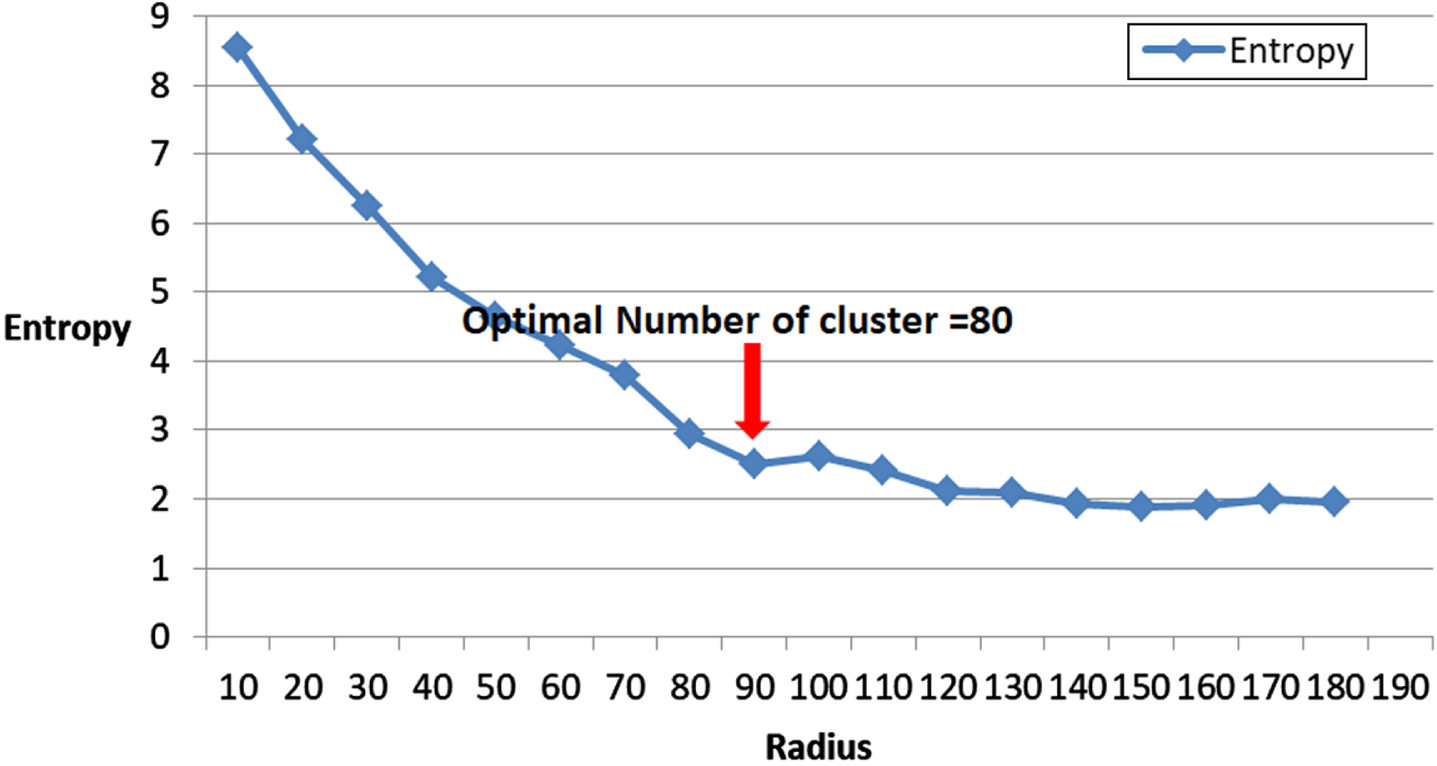

We initialize $D_r$ with a small value and increase it gradually. At each $D_r$, we also calculate the entropy over all Nls values. This heuristic provides a reasonable range in which the optimal value is likely to lie, and it gives a good estimate of the number of clusters and of the search radius $D_r$ [48].
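The radius sweep described above can be sketched as follows. The exact entropy formula is not reproduced in the text, so this example assumes the Shannon entropy of the normalized neighbor counts, a common choice in trajectory clustering heuristics; the function names and the neighbor test (origin and end both within $D_r$) are likewise assumptions.

```python
import math

def dist(p, q):
    """Euclidean distance between two points, as in equation (1)."""
    return math.hypot(p[0] - q[0], p[1] - q[1])

def neighbour_counts(lines, d_r):
    """Nls value of every line: how many lines (itself included) have both
    their origin and their end within d_r of this line's origin and end."""
    return [sum(1 for b in lines
                if dist(a[0], b[0]) <= d_r and dist(a[1], b[1]) <= d_r)
            for a in lines]

def entropy(counts):
    """Shannon entropy (base 2) of the counts normalised to probabilities."""
    total = sum(counts)
    return -sum((c / total) * math.log2(c / total) for c in counts if c > 0)

def entropy_sweep(lines, radii):
    """Entropy of the Nls values for each candidate search radius."""
    return {r: entropy(neighbour_counts(lines, r)) for r in radii}
```

A sweep such as `entropy_sweep(lines, range(10, 181, 10))` produces one entropy value per radius, which can then be plotted to look for the range where the curve flattens.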

As trajectories are closely related to time series, the Euclidean distance is also adopted for measuring trajectory distance [50]. For two lines $L_i$ and $L_j$ of the same size n, the Euclidean distance $dist(L_i, L_j)$ is defined as follows:

Major notations used in OEIC framework

Dataset

To test the performance of our proposed framework, we need a dataset of pedestrians moving in different directions that covers various road scenarios such as zebra crossings, signal areas and sidewalks. We use the publicly available Pedestrian direction recognition dataset [5]. It is the only dataset that captures the pedestrian movement direction. This dataset consists of several hours of videos with 10800 video frames recorded at five different locations, captured at 30 fps with a resolution of 640 x 480. A hand-held tripod is used to capture pedestrian movement statically and dynamically.

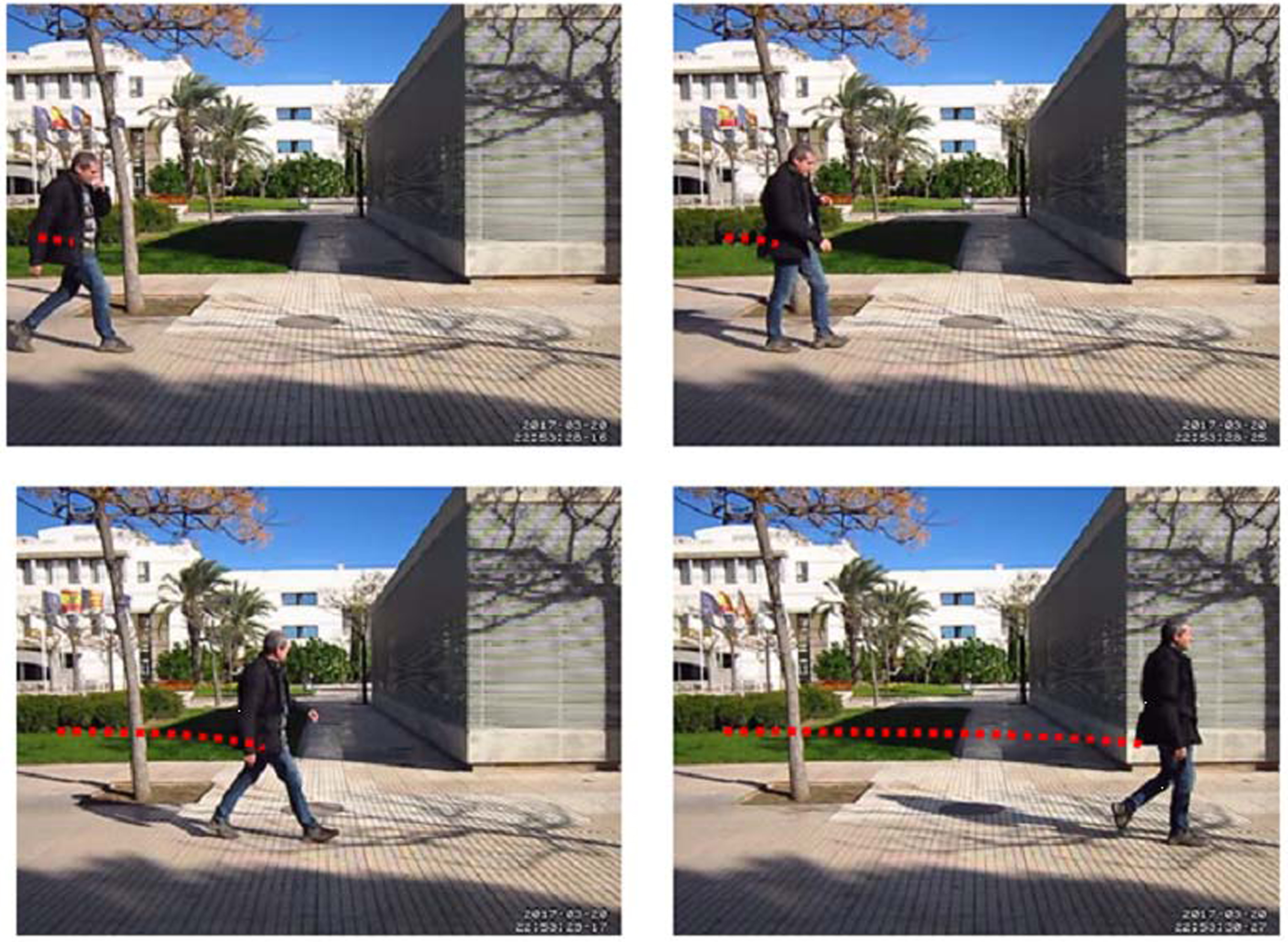

Other pedestrian datasets, such as PEI, INRIA, KITTI, CityPersons, TUD and EuroCity, have been discussed in the literature [9]. These datasets focus on detection and on pedestrian actions or activities, but provide no information about direction and intention. The Daimler dataset is the only dataset that provides ground truth information about both detection and intention. It has short video clips, each labeled with one of four classes, i.e., crossing, walking, standing and bending. We therefore used the pedestrian direction recognition dataset, which was developed specifically for direction of motion recognition. Fig. 6 shows sample frames from the pedestrian direction recognition dataset.

Dataset images: Left, front and right moving frames from videos.

The experimental result of the proposed framework is discussed in this section.

Detection and tracking

We prefer the YOLOv5 deep learning method over other methods due to its efficient and fast pedestrian detection response in road scenarios [46, 47]. The results are shown in Fig. 7.

Results of pedestrian detection using YOLOv5 on three frames taken from the video dataset.

After detection, we get a bounding box of the pedestrian detected in each video frame. Corresponding to the pedestrian class, we perform pedestrian tracking across frames to get the tracks of each pedestrian. Fig. 8 shows the result of tracking pedestrians in red color.

Result of single-pedestrian tracking on a video frame.

To understand the spatial variability of the pedestrian direction of motion, we use OE data lines. To obtain the optimal values of $D_r$ and Minlines, we initialize $D_r$ with a small value of 10 and increase it at equal intervals. The analysis and results are presented below.

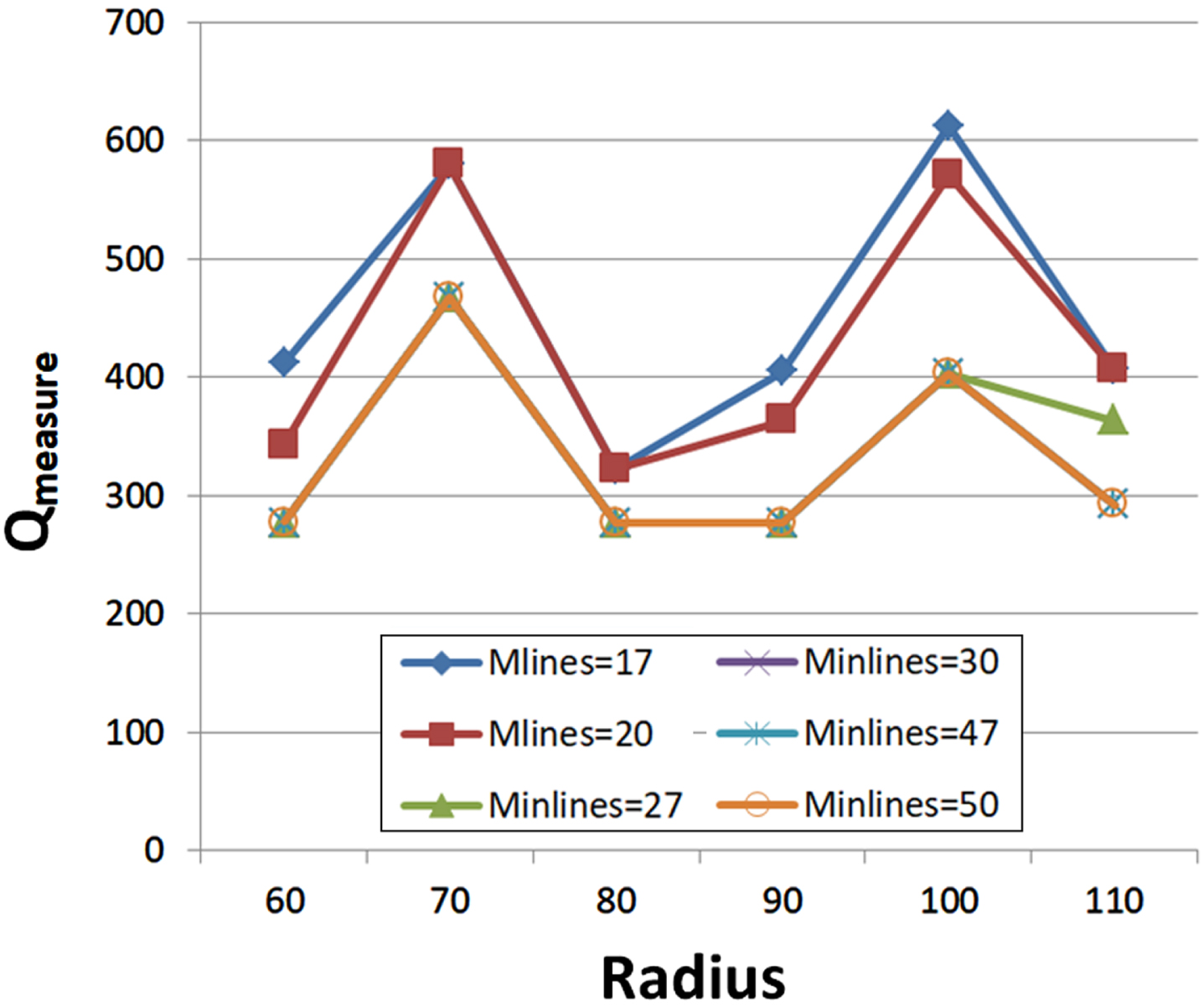

With various $D_r$ values, we determined the entropy of the Nls values. To determine the optimal number of clusters, we select the value of $D_r$ at the "elbow", i.e., the point after which the distortion becomes steady. Fig. 9 shows that the entropy of Nls decreases as $D_r$ increases and becomes steady after $D_r = 80$. Together with entropy, we calculated the Q measure to determine the minimum number of OE lines required to form a valid cluster. Fig. 10 shows the Q measure as $D_r$ and Minlines are varied. We observe that the Q measure becomes nearly minimal when the optimal parameter values are used. The minimum H(L) is achieved at $D_r = 80$, and the Q measure attains its minimum error value at Minlines ≥ 27.
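The "elbow" rule can be made concrete with a small helper. The sketch below is only one possible formalization, assuming that "steady" means every later step changes the curve by less than a tolerance `tol`; the function name, the tolerance and the sample values in the usage note are hypothetical and are not taken from the experiments.

```python
def elbow(radii, values, tol=0.1):
    """Return the first radius after which the curve stays steady.

    radii and values are parallel sequences (for example D_r and entropy).
    A point counts as the elbow when every later step changes the value
    by less than tol. Falls back to the last radius if no such point exists.
    """
    steps = [abs(values[m + 1] - values[m]) for m in range(len(values) - 1)]
    for k in range(len(steps)):
        if all(s < tol for s in steps[k:]):
            return radii[k]
    return radii[-1]
```

With made-up values such as `elbow([10, 20, 40, 80, 120, 160], [5.0, 4.0, 3.0, 2.1, 2.05, 2.04])`, the helper returns 80, the first radius after which the curve no longer changes noticeably.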

Entropy of all Nls values for radius values from 10 to 180.

Quality measure ($Q_{measure}$) with different values of radius ($D_r$) and Minlines.

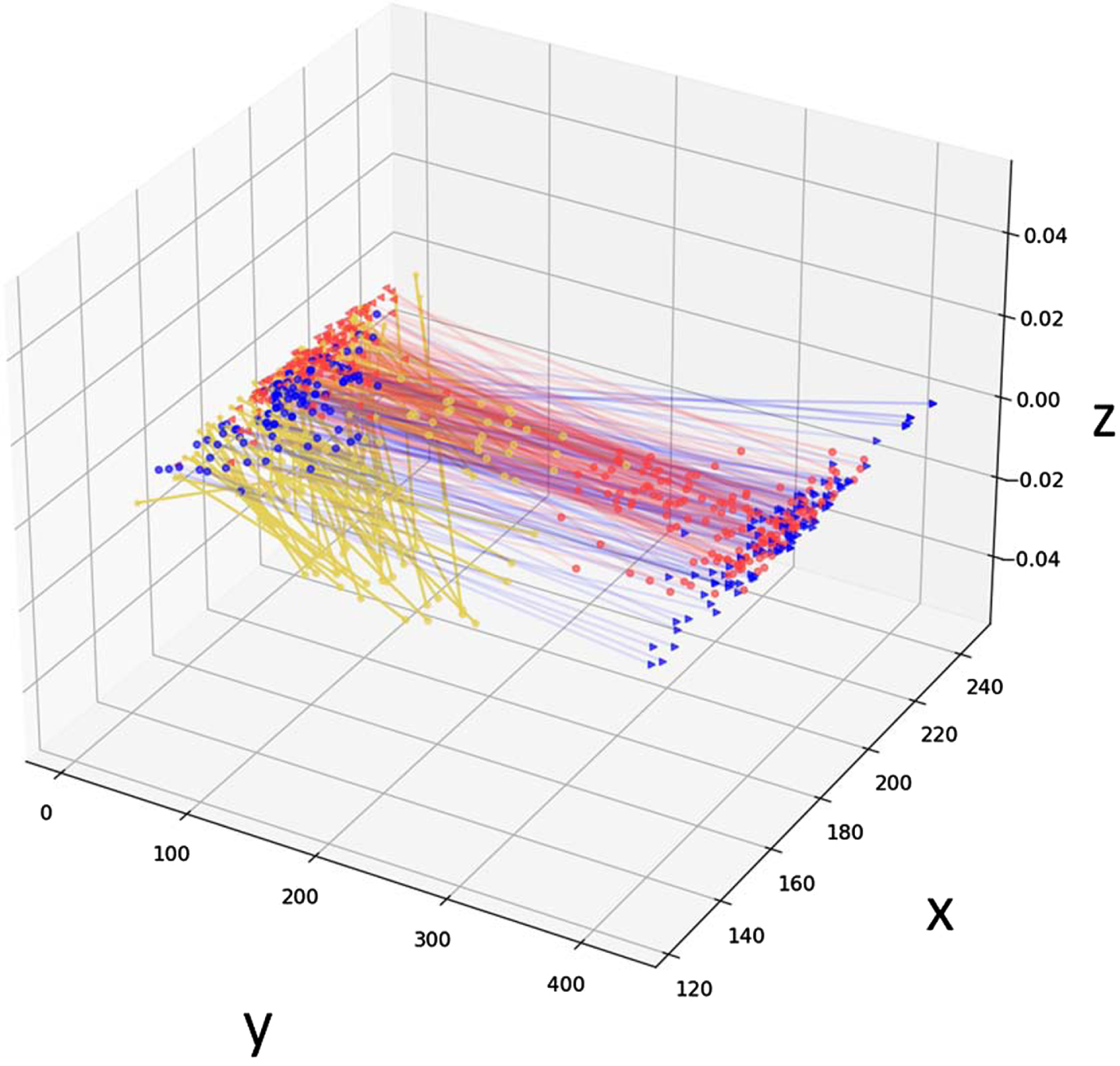

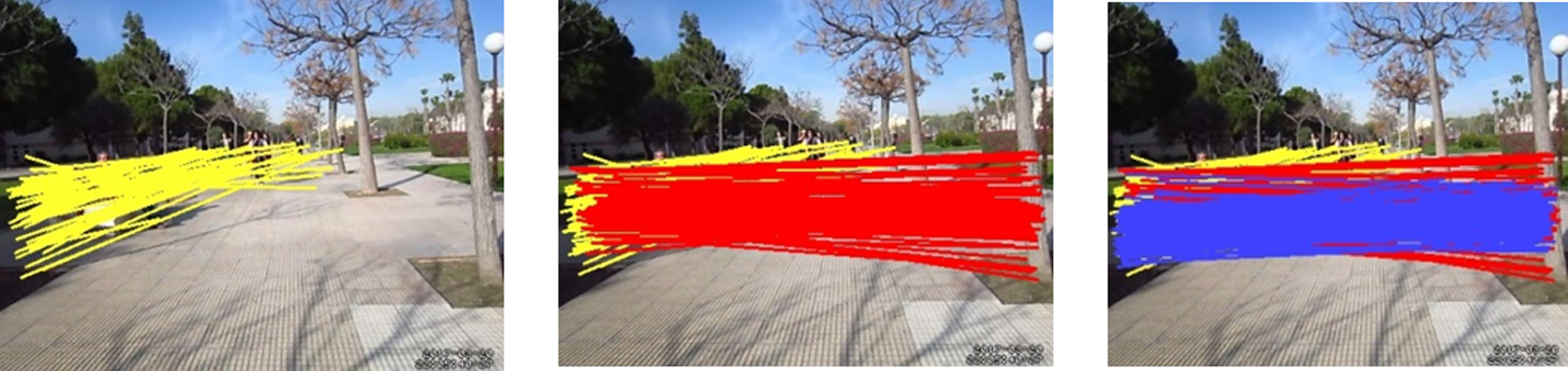

Table 2 outlines the OEIC clustering result. Fig. 11 and 12 show the clustering results in 3D and in the image frame, respectively, obtained with the optimal values of $D_r$ and Minlines. We observe that three clusters are discovered in most of the dense regions. A dot (.) in the graph represents the origin point and an arrow or star (<, >, *) represents the end point of a pedestrian's movement. The three clusters were verified against the pedestrian direction recognition dataset.

Features of the clusters with different fixed radius

We are also interested in the computational efficiency of the proposed framework. Therefore, experiments were carried out to measure the execution time of each stage of the pipeline, from pedestrian detection to clustering, as shown in Table 3. We also ran two other well-known clustering algorithms, DBSCAN and Trajectory Clustering, for performance comparison. The results show that the proposed method gives a better Q measure value than the other two clustering methods. Our model takes less computational time than Trajectory Clustering but slightly more than DBSCAN, as shown in Table 4.

3D representation of clustering result. Yellow, red and blue colored lines represent OE lines cluster results. Here three clusters are formed using the OEIC framework.

Clustering results of OEIC framework on pedestrian direction recognition dataset.

Runtime of each stage of the framework

Comparison w.r.t runtime of our framework with other clustering algorithms on pedestrian direction recognition dataset

To the best of our knowledge, this is the first work that uses unsupervised learning with origin-end clustering for pedestrian direction of motion recognition. The authors of the dataset used in our experiments applied a CNN model for pedestrian direction of motion classification [5]. Since we perform clustering on this data, we validate the OEIC framework against the label vector: pedestrians walking left, right and straight on the road are differentiated with an accuracy of 85%. We also found studies in the literature that apply supervised and unsupervised methods related to our work on different datasets. The comparative results are shown in Table 6.
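The text does not spell out how the clustering output is compared with the label vector. One common way to score a clustering against ground-truth labels, shown here purely as an assumed illustration, is to map each cluster to its majority label and count the matching lines; the function name and the sample labels are hypothetical.

```python
from collections import Counter

def cluster_accuracy(cluster_ids, labels):
    """Fraction of items whose label equals the majority label of their cluster.

    cluster_ids and labels are parallel sequences, one entry per OE line.
    """
    by_cluster = {}
    for c, y in zip(cluster_ids, labels):
        by_cluster.setdefault(c, []).append(y)
    # for each cluster, count the items that carry its most frequent label
    correct = sum(Counter(ys).most_common(1)[0][1] for ys in by_cluster.values())
    return correct / len(labels)
```

For instance, cluster ids `[0, 0, 0, 1, 1, 2]` with labels `["left", "left", "right", "right", "right", "front"]` give 5 matching lines out of 6.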

We have tested the effects of the $D_r$ and Minlines values on the clustering result. If we use a smaller $D_r$ or a larger Minlines than the optimal ones, our algorithm discovers a larger number of smaller clusters (i.e., clusters with fewer line segments). In contrast, if we use a larger $D_r$ or a smaller Minlines, our algorithm discovers a smaller number of larger clusters. For example, when $D_r = 10$, 467 clusters are found and each cluster contains 1-2 line segments on average; in contrast, when $D_r = 80$, three clusters are discovered and each cluster includes 30 lines on average.

Error analysis

Our proposed framework performs well for learning pedestrian dynamics. To better understand the errors made by the OEIC framework, we investigate them below:

The same cluster is predicted as two clusters due to overlapping regions. This may be caused by partial differences in spatial movement.

Conclusion and future work

This paper discusses a simple and intuitive Origin-End point Incremental Clustering (OEIC) framework for pedestrian movement direction recognition that extracts spatial linkage information from origin-end (OE) line data. It identifies neighboring lines by searching for the endpoints of OE lines within a circular region ($D_r$) around the centerline ($L_i$). Entropy and the Q measure are used to determine the optimal parameter values, which helps to select quality clusters. To demonstrate the effectiveness of the proposed framework, we performed experiments on the publicly available pedestrian movement direction recognition dataset, which contains videos capturing pedestrian movement in different directions in road scenes. The results show that the proposed framework performs better than the DBSCAN and Trajectory Clustering algorithms. The advantage of our framework is that it is simple and backed by extensive experimentation. It can be used in various applications, especially in pedestrian safety, in detecting anomalous trajectories, and in understanding pedestrian movement behavior in monitoring and ADAS systems.

Result comparison of our framework with other clustering methods in terms of Q measure on pedestrian direction recognition dataset

This work provides a basis for analyzing pedestrian motion direction recognition. In future work, we plan to develop a hybrid clustering technique to obtain new insights into pedestrian dynamics using other spatio-temporal features.

Declaration of competing interest

The authors have no competing interest.

Acknowledgment

The authors thank the anonymous reviewers for their insightful comments and suggestions.