Abstract

Meta-heuristic optimization algorithms are versatile and efficient techniques for solving complex optimization problems. When applied to clustering algorithms, these algorithms offer numerous advantages over traditional optimization methods, including global search capabilities, iterative refinement processes, robustness to initial conditions, and flexibility in handling diverse clustering objectives and constraints. Employing meta-heuristic optimization in clustering algorithms leads to improved accuracy, scalability, robustness, and flexibility in finding optimal or near-optimal clustering solutions. These algorithms generate new individuals iteratively using nature-inspired operations to obtain high-quality results. However, they often suffer from slower convergence and lack guarantees of finding the best solution for every problem, posing ongoing challenges in algorithm development. This study focuses on addressing the issue of premature convergence in metaheuristic algorithms by introducing an automatic cuckoo search (AuCS) algorithm. The AuCS algorithm aims to strike a balance between exploration and exploitation by dynamically updating the step size in each generation, thereby avoiding premature convergence. To evaluate the effectiveness of the proposed algorithm, experiments were conducted on 13 standard benchmark functions and 14 CEC 2005 benchmark functions. In overall performance, AuCS has the best optimum value in 72.22% of cases. This demonstrates the efficacy of the proposed algorithm in achieving improved clustering accuracy and minimizing intra-cluster distance. The proposed AuCS algorithm was applied to data clustering and compared with four swarm optimization algorithms. Here, AuCS outperforms these well-known algorithms in 5 out of 7 datasets. The experimental evaluations in both benchmark functions and clustering problems confirm the promising results of the proposed algorithm, suggesting that AuCS could be considered as a potential improvement over the cuckoo search algorithm.

Introduction

Meta-heuristic optimization algorithms offer versatile solutions for efficiently solving complex optimization problems, transcending the limitations of traditional methods. In the realm of clustering algorithms, leveraging meta-heuristic optimization techniques presents numerous advantages. These include the ability to perform global searches, enabling comprehensive exploration of the solution space, iterative refinement for improved results, robustness in handling large and intricate datasets, and adaptability to diverse clustering objectives and constraints. Employing meta-heuristic optimization in clustering algorithms can lead to enhanced accuracy, scalability, robustness, and flexibility in obtaining optimal or near-optimal clustering solutions.

Metaheuristics can be categorized into two types, such as evolutionary algorithm and swarm optimization algorithm. Algorithms that are evolved by getting inspired by biological evolution are coming under the evolutionary algorithm category. However, algorithms that simulate the social behaviour of living creatures are coming under the second category. Genetic algorithm (GA) [1] and differential evolution (DE) [2] are two good examples of evolutionary algorithms, whereas particle swarm optimization (PSO) [3, 4], artificial bee colony (ABC) [5, 6], cuckoo search (CS) algorithm [7] and ant colony optimization (ACO) [8] are some of the popularly used swarm optimization algorithms. These algorithms generally follow specific nature-inspired operations to produce new individuals, and a continuous iteration may lead to a quality result. However, not very well-written metaheuristics sometimes suffer from premature convergence due to a lack of balance between exploration and exploitation of the search space.

This has always been a challenge among researchers to find the global optimum value. The metaheuristic performs way better than the traditional optimization approaches in dealing with the local minima trap issue. However, the lack of balance between exploration and exploitation always results in premature convergence. This study focuses on addressing the challenge of premature convergence, a common limitation in meta-heuristic algorithms. To overcome this issue, an Automatic Cuckoo Search (AuCS) algorithm is proposed, aiming to strike a balance between exploration and exploitation. By dynamically updating the step size in each generation, the AuCS algorithm effectively avoids premature convergence and achieves superior optimization performance. The contributions of this manuscript can be summarized as follows: (a) introduction of the AuCS algorithm, designed to achieve a balance between exploration and exploitation; (b) mitigation of premature convergence in Cuckoo Search (CS) through the adaptive updating of the step size; (c) comparative analysis of the proposed AuCS algorithm with CS and adaptive cuckoo search (ACS) [12] using 27 benchmark functions in 30 and 50 dimensions; and (d) evaluation of the AuCS algorithm’s efficacy in clustering, with a focus on minimizing intra-cluster distance and improving clustering accuracy. The experimental results demonstrate the effectiveness of the proposed AuCS algorithm, highlighting its superiority in addressing premature convergence and its potential for enhancing optimization performance in various problem domains. This manuscript serves as a valuable contribution to the field of meta-heuristic optimization, offering insights into adaptive optimization techniques and their applicability in clustering algorithms.

The rest of the manuscript has been outlined as follows. Related works, such as CS and its different variations have been briefed in Section 2. Section 3 provides a brief overview and a method-wise comparative analysis of CS, ACS, and AuCS. Section 4 presents the experimental outcomes and analysis. Finally, Section 5 concludes the work.

Related works

As metaheuristics can efficiently explore the search space without necessarily knowing about the problem and its derivatives, these have become more popular among researchers and have been associated with handling challenging optimization problems. The CS proposed by Yang et.al. [7] is a highly efficient optimization algorithm and is mainly known for its capability to deal with diversified optimization problems. With the benefits of the simple concept, high efficiency, ease of implementation, and few parameters to adjust, CS has been applied in various fields. The efficiency of CS had been proven by Yang and Deb compared with other metaheuristics such as GA and PSO.

Moreover, researchers have devoted their time and knowledge to the direction of improving the performance of CS. Specifically, they have contributed in three specific directions. People have hybridized the CS with some of the existing optimization algorithms to increase convergence by overcoming the weakness of the CS. Secondly, people try to modify the mechanism or strategy to improve search capability. Lastly, researchers have contributed to developing methods that control the associated parameters of CS differently for better convergence and to improve the versatility and robustness to handle different optimization problems.

Dhabal et al. developed a gbest-guided CS algorithm [9], proposing a replacement strategy based on gbest. Instead of considering a fixed, they thought of an adaptive

To find a better solution than the existing one, many variants of different metaheuristic algorithms have been developed in the recent past. However, the convergence of metaheuristics algorithms is slow as compared to traditional optimization techniques, and yet there is not a single one that can guarantee to find the best value for any problem. Hence, it remains an open challenge for all to explore an efficient algorithm.

Methodologies

This section provides a comprehensive analysis of the CS and ACS algorithms, focusing on implementation details, advantages, and limitations. Emphasis is placed on evaluating the exploration and exploitation capabilities and the impact of algorithm parameters. These observations justify the need for the proposed research by identifying areas for improvement. By examining the intricacies of CS and ACS, this section uncovers opportunities to enhance their performance, particularly in achieving a better balance between exploration and exploitation.

Comparative analysis: Advantages and limitations of cuckoo search (CS) and adaptive cuckoo search (ACS)

The CS optimization algorithm, inspired by the behaviour of cuckoo birds, offers a simple yet effective approach to solving optimization problems proposed by Yang and Deb in 2009. CS combines global random search and local search, achieving a balance between exploration and exploitation. Its scalability and efficiency make it suitable for large-scale problems. However, CS has limitations. It is sensitive to initial settings and struggles with complex multimodal functions. Slower convergence speed in high-dimensional problems is another challenge. CS relies on a biased random walk for exploration. Customizing parameters is vital to address these limitations and optimize CS’s performance for specific problem characteristics. In CS, to generate a uniformly randomized population, key parameters like population size (NP) and solution dimension (

The Levy flight probability approach is utilized for searching new solutions. Levy flight involves a random walk where the step length follows a Levy distribution. This approach is preferred in the CS algorithm due to its fast convergence and efficient exploration of large search spaces [20]. To generate a new solution, the following steps are taken starting from Eq. (2).

In the context described,

Here,

A crucial step in CS, similar to other swarm optimization algorithms, is the selection of solutions for the next generation. The fitness of the newly generated

In the given context,

The ACS algorithm, an extension of CS proposed by Naik et al. in 2016, introduces adaptive strategies to improve its performance. Adaptive step size and levy flight parameters allow the algorithm to dynamically adjust during optimization. This adaptation improves the exploration-exploitation balance, convergence speed, and solution quality. ACS excels in exploring complex multimodal problems with narrow peaks. Its adaptive nature enables it to dynamically adapt to problem characteristics, leading to better performance. However, implementing and tuning ACS is more complex than the original CS algorithm. Optimal parameter configuration requires expertise and time. Additionally, the adaptive nature may introduce randomness, potentially increasing computational time or yielding suboptimal solutions. ACS offers advantages like improved balance, faster convergence, and adaptability. Yet, challenges include complexity, tuning difficulty, potential increased computational time, and the risk of suboptimal solutions.

While most steps in Algorithm 1 remain unchanged, there are notable differences in the way the algorithm handles solution generation. Specifically, ACS incorporates an adaptive approach where the step size is determined based on the fitness of each individual nest in the current iteration. Interestingly, this algorithm deviates from the conventional use of Levy distribution and completely disregards the variable

Here,

Here,

In ACS, though the algorithm adaptively considers the step size, it has not considered the concept of levy flight. Hence, the value of

This work aims to address the issue of local minima by achieving a delicate balance between exploration and exploitation in the search space. An analysis of the CS algorithm reveals that the parameters

In this context, the symbol NoG denotes the total number of generations, while

It becomes evident that

When considering Eq. (2) involving

In contrast to exploiting the entire population as described in Eq. (12),

In this work, the traditional greedy selection approach has been replaced by adopting the elitism principle inspired by NSGA II [21]. This methodology involves merging the old and new populations and sorting them based on their fitness values. From this combined population, the top NP solutions (where NP represents the population size) are selected from 2NP for the subsequent generation. Unlike the greedy approach, this selection method ensures that every high-performing solution from the current generation is retained, without the risk of losing any promising individuals. By incorporating elitism, the algorithm preserves the best solutions, promoting their continued influence and enhancing the potential for improved performance in subsequent generations.

To summarize, these strategies, including the adaptation of

The proposed AuCS algorithm shows promise in addressing clustering problems and has been successfully applied in this domain. Clustering involves grouping data points based on similarity, and the AuCS algorithm offers a unique approach by optimizing the clustering process. Implementation of AuCS in clustering entails data preprocessing, algorithm application, and parameter tuning. Preprocessing ensures data suitability, while the algorithm’s steps are tailored for clustering, including generating solutions, adjusting step sizes, and maintaining exploration-exploitation balance. Parameters like

Result analysis: Benchmark functions and data clustering

This section provides a comprehensive examination of the results and a discussion of the newly introduced approach. The discussion encompasses two aspects. Firstly, an analysis is conducted using 13 fundamental benchmark test functions [12], as well as 14 additional test functions from CEC 2005 [22]. Secondly, the performance is assessed based on data clustering.

Parameter settings

Parameter settings

In this section, we analyse and discuss the performance of the proposed algorithm using 27 benchmark test functions. These include 13 standard test functions and 14 test functions from CEC 2005. By comparing the obtained results with the global optimum values of these functions, we evaluate the effectiveness of the proposed algorithm. Specifically, we compare the results of the proposed AuCS algorithm with those of the CS and ACS algorithms. The total number of function evaluations for all 27 benchmark functions is fixed at

Details of basic unimodal functions

Details of basic unimodal functions

Details of basic multimodal functions

Among the 13 test functions considered, the first 7 functions listed in Table 2 are unimodal functions, while the remaining functions are multimodal functions with multiple local minima, as described in Table 3. Furthermore, these functions have been evaluated using dimensions of 30 and 50.

Details of CEC 2005 unimodal functions

Here,

Details of CEC 2005 multimodal functions

Here,

Details of CEC 2005 expanded multimodal functions

Here,

Likewise, among the 14 CEC 2005 test functions, f1–f5 are unimodal functions with a single optimal value, f6–f12 are basic multimodal functions, and f13–f14 are categorized as expanded multimodal functions. Detailed descriptions of these functions can be found in Tables 4 to 6, sourced from [22]. It is worth noting that as the solution space dimension increases, the complexity of finding the optimal value also increases. To assess the scalability of the proposed model, all 14 benchmark test functions have been evaluated using solution spaces of dimensions 30 and 50. To ensure a fair comparison, the number of solutions considered for all algorithms is fixed at 50, regardless of the specific benchmark function.

Performance evaluation of basic uni-modal and multi-modal benchmark test functions with dimension 30D

Performance evaluation of basic unimodal and multi-modal benchmark test functions with dimension 50D

Performance evaluation of CEC 2005 unimodal and multimodal benchmark test functions with dimensions 30D

To compare the proposed approach with existing methods, a standardized evaluation method is utilized. This method assigns ranks to algorithms based on their performance across specific test functions. The algorithm with the best result receives a rank of one, the second-best receives a rank of two, and so on. Averaging these ranks determines the average rank for each algorithm. Lower rank values indicate superior performance. Table 7 showcases the performance of the evaluated algorithms on 13 basic unimodal and multimodal test functions with 30D, highlighting their relative efficiency.

The proposed AuCS algorithm demonstrates superior performance compared to CS and ACS for various unimodal and multimodal functions. In the case of unimodal functions, AuCS outperforms CS and ACS for f1, f2, f3, and f4, consistently finding the global minimum for f4. For f6 and f7, AuCS performs similarly or slightly better than CS and ACS. Among the multimodal functions (f8 to f13), AuCS significantly outperforms both CS and ACS, except for f13 where ACS achieves a better mean value. It can be observed that, the AuCS outperforms the other algorithms in 10 out of 13 cases, showcasing its effectiveness in solving both unimodal and multimodal optimization problems.

To ensure transparency in the comparison, a rank-based approach is employed. Each algorithm is ranked based on its performance for each test function. Average ranks are calculated for each algorithm across all functions. Based on this approach, AuCS outperforms ACS and CS, with average rank values of 1.30 and 1.46 for mean and standard deviation, respectively. AuCS consistently achieves the best optimum values among the algorithms. ACS performs better than CS in terms of average rank for mean and standard deviation, but CS exhibits less result fluctuation. AuCS demonstrates robustness with the minimum standard deviation, indicating stability. Table 8 presents the performance of the algorithms on the same 13 test functions with an updated dimension of 50 in the solution space.

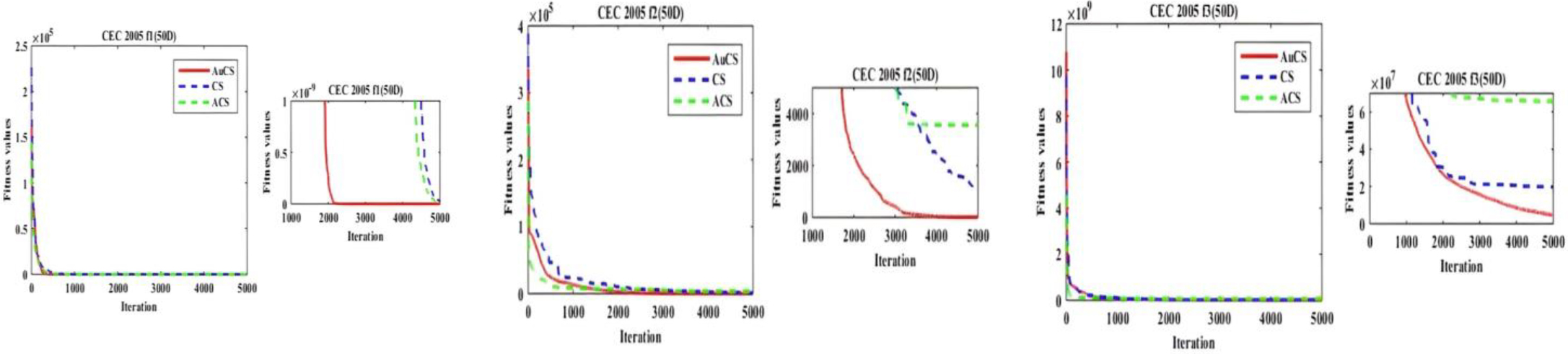

As the dimension increases, the difficulty of finding the optimum value also rises. To accommodate the higher complexity, the number of function evaluations is increased to 500,000. Analyzing Table 9, it is evident that the proposed AuCS algorithm maintains consistent performance as complexity increases. For the first 7 unimodal cases, AuCS significantly outperforms the other algorithms, consistently achieving the target optimum value for f4 and f6. However, neither CS nor ACS reach the global optimum for f6, indicating performance degradation for this test function. Among the 7 benchmark functions, AuCS outperforms CS and ACS in 6 cases, while ACS outperforms CS in 4 cases. For the 6 multimodal test functions, AuCS demonstrates considerably better performance. Overall, AuCS dominates CS and ACS in 11 out of 13 benchmark functions, highlighting its superior performance as complexity increases. Table 9 provides a comparative study of the three algorithms using CEC 2005 test functions in a 30D solution space.

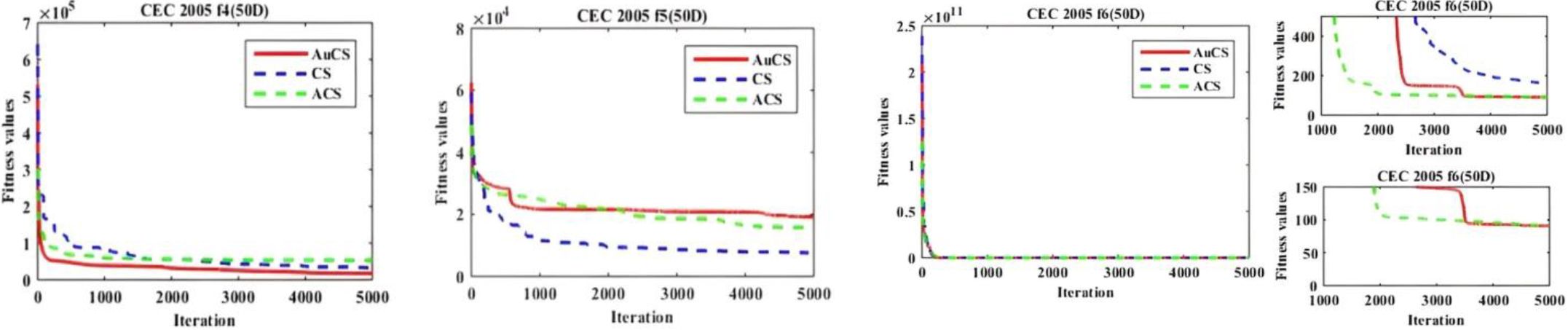

Performance evaluation of CEC 2005 uni-modal and multi-modal benchmark test functions with dimensions 50D

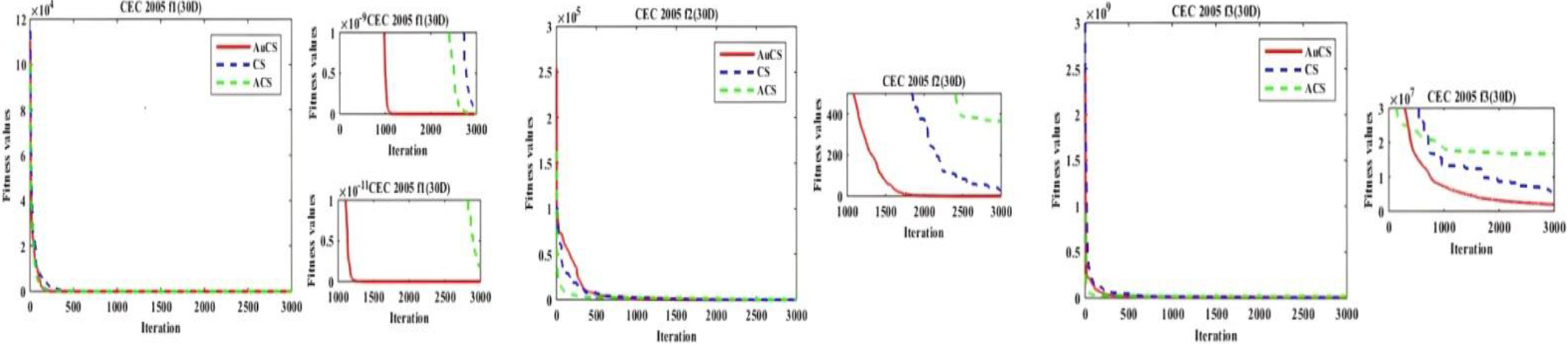

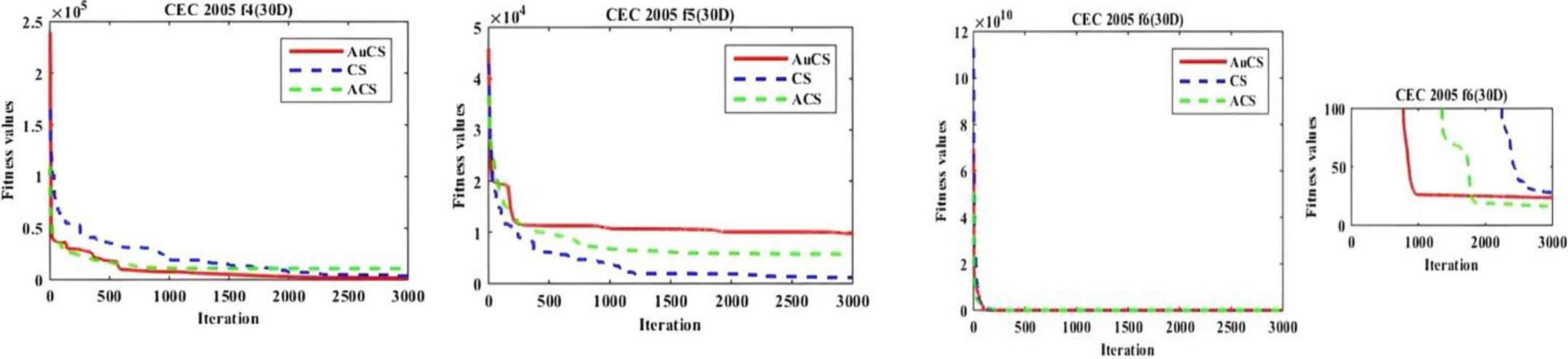

Among the first 5 unimodal benchmark functions, AuCS outperforms the other algorithms in 4 cases, while CS performs best only for f5. CS provides better results than ACS in 4 out of 5 cases. AuCS achieves the best optimal values for f1, f2, f3, and f4. For the 7 basic multimodal functions, AuCS outperforms CS and ACS in 4 cases, while CS performs best in 2 cases. Among the expanded multimodal functions, AuCS significantly outperforms CS and ACS for f13. Overall, AuCS dominates with the best results in 9 out of 14 benchmarks. Based on average rank, AuCS outperforms CS and ACS, with a mean rank value of 1.35. In terms of fluctuation, CS performs better than AuCS and ACS. Increasing the dimension to 50, AuCS continues to dominate the other algorithms (see Table 10).

Among the 5 unimodal functions, AuCS outperforms CS and ACS in both mean and standard deviation values in 4 cases. For the multimodal functions, AuCS achieves the best mean value in 3 cases, while ACS performs best in 2 cases and CS performs best in 1 case. AuCS dominates in terms of standard deviation. In the two expanded multimodal functions, AuCS outperforms CS and ACS in the mean value for f13 and achieves the highest optimal value for f14. Using the average rank method, AuCS easily dominates with average rank values of 1.571 for mean, 1.426 for reaching the best optimal value, and 1.642 for standard deviation. This indicates AuCS’s superiority in all aspects, with reduced result fluctuation and increased robustness compared to CS and ACS.

Evaluation of performance gain of AuCS over CS and ACS

Moreover, in order to find the overall performance gain of AuCS, root mean square error (RMSE) have been considered as performance measurement tool in this work. Here, the average optimized result along with each global optimum value for all 27 test functions are considered to calculate the RMSE. These 27 test functions have been evaluated for both 30D and 50D. So, for each set of functions, the evaluated RMSE score have been provided in Table 11. Considering the RMSE, one can easily see the overwhelming performance of AUCS over others. Considering the performance gain, AuCS performs 2.38 and 2.62 times better than CS algorithm for 30D and 50D respectively for first set of basic 13 test functions. Similarly, the AuCS also outperforms CS in case of 14 CEC 2005 benchmark functions. For 30D, AuCS performs 2.92 times better and for 50D, it is 5.12 times better. Also, for 1

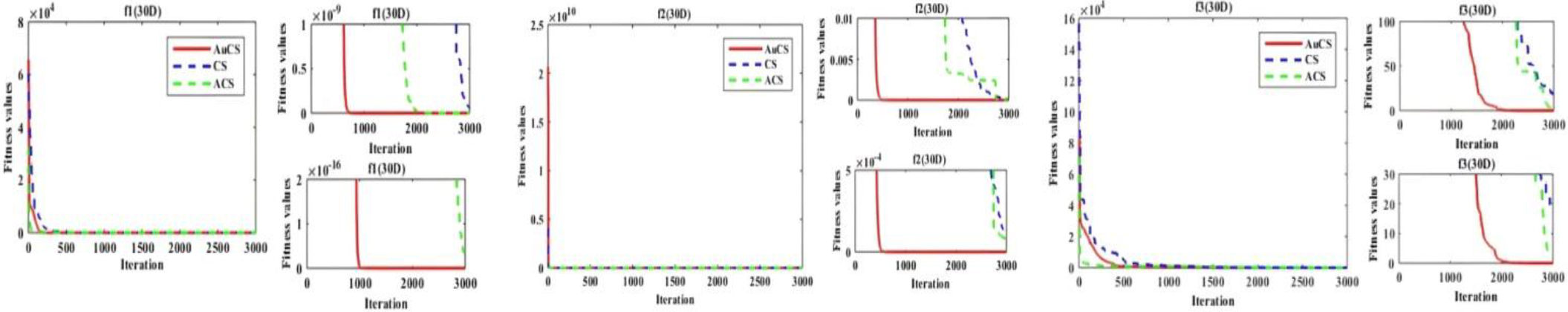

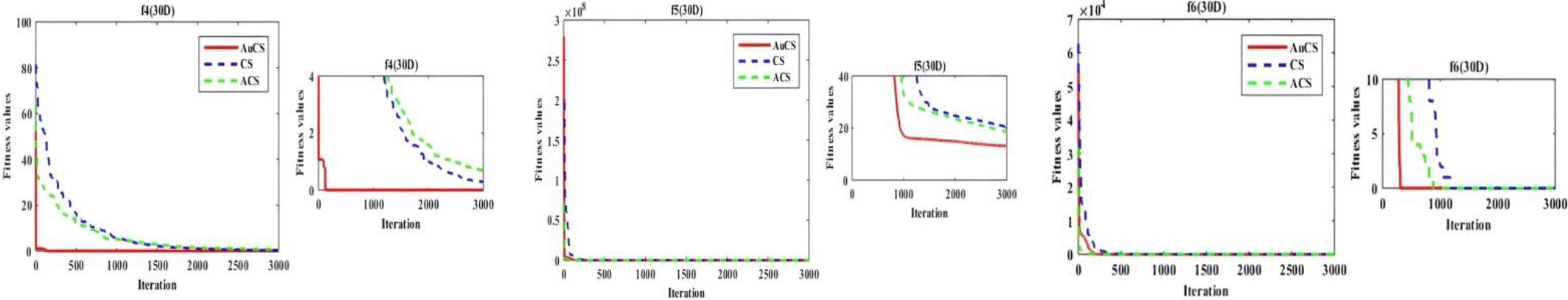

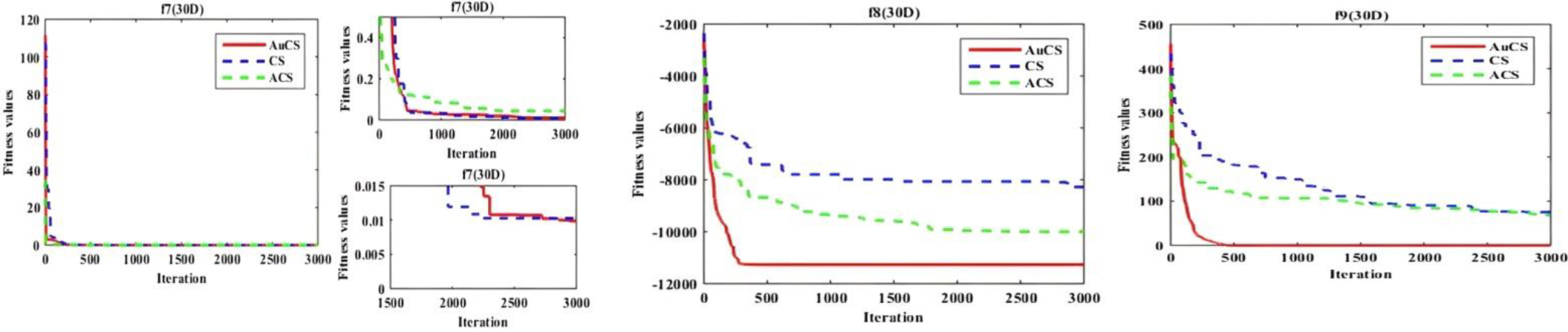

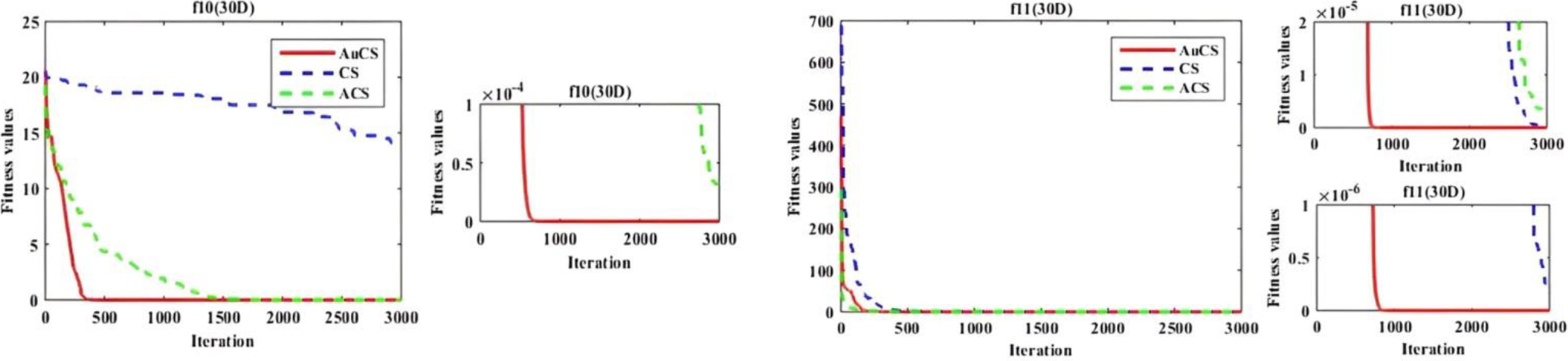

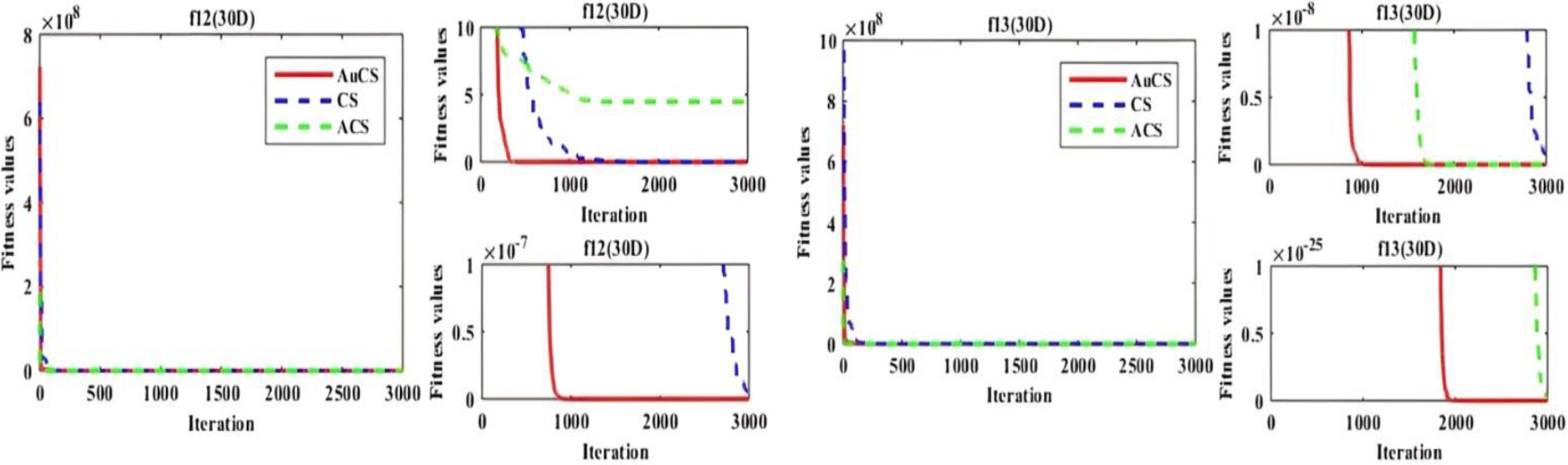

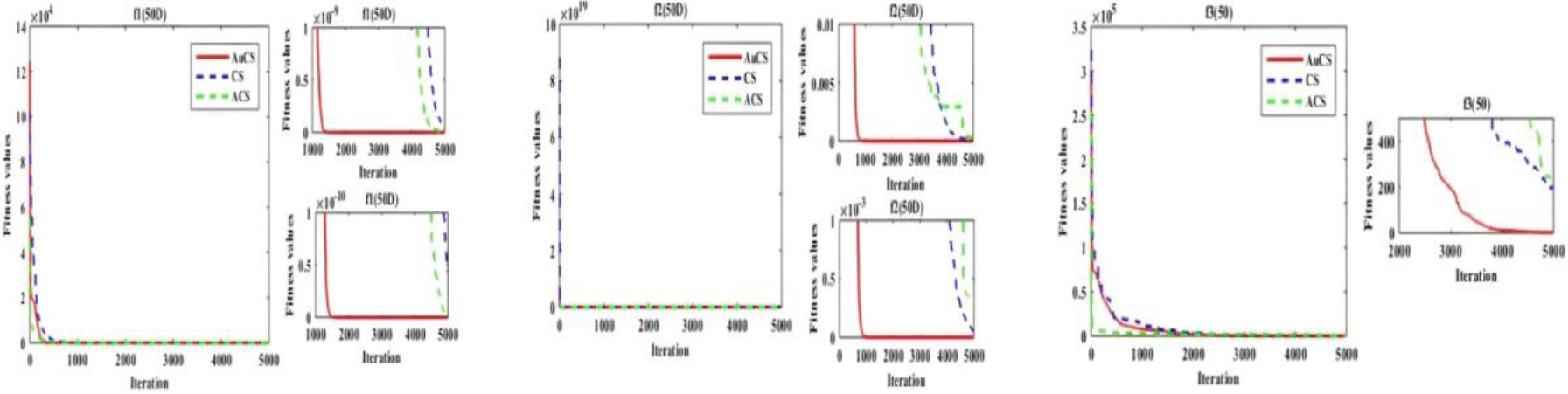

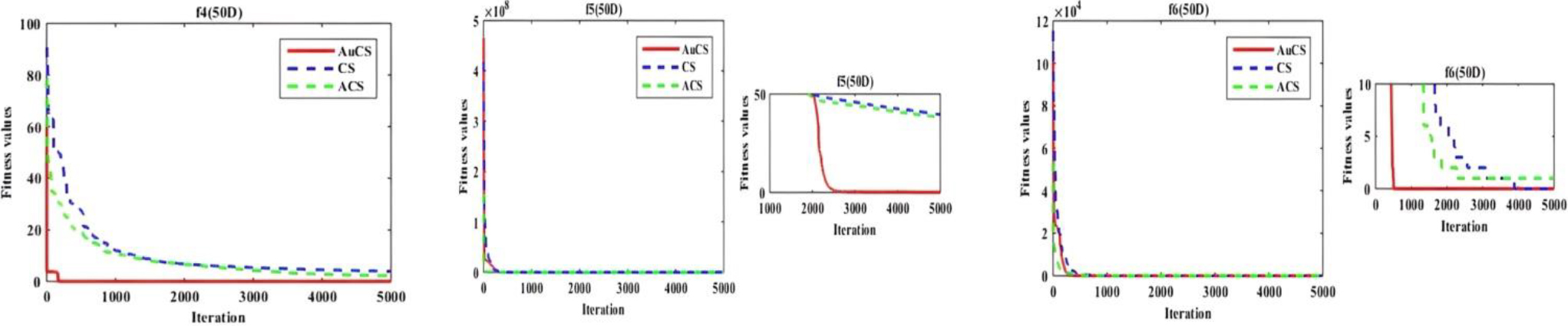

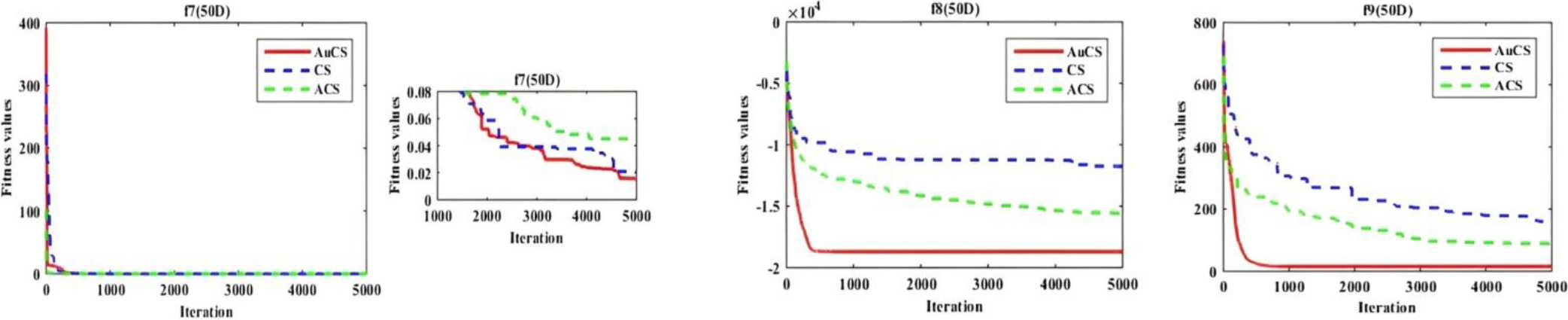

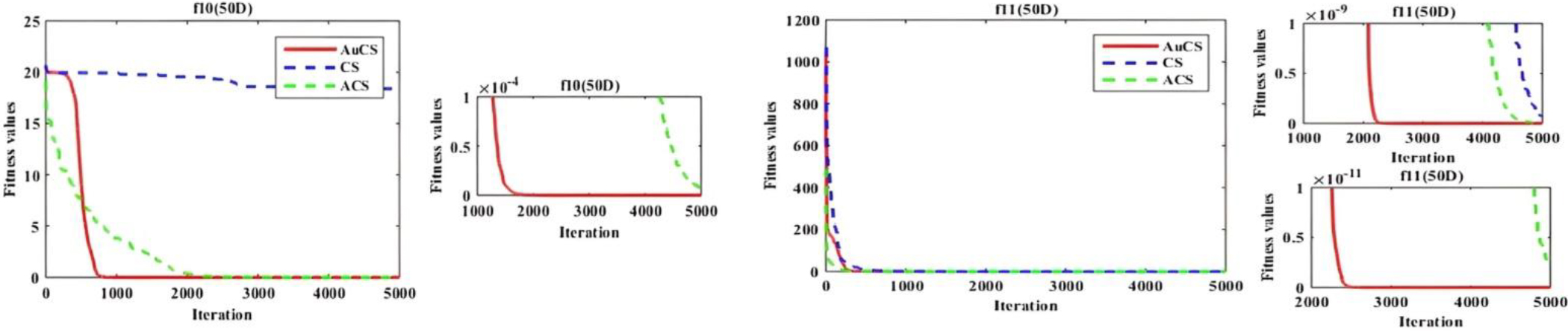

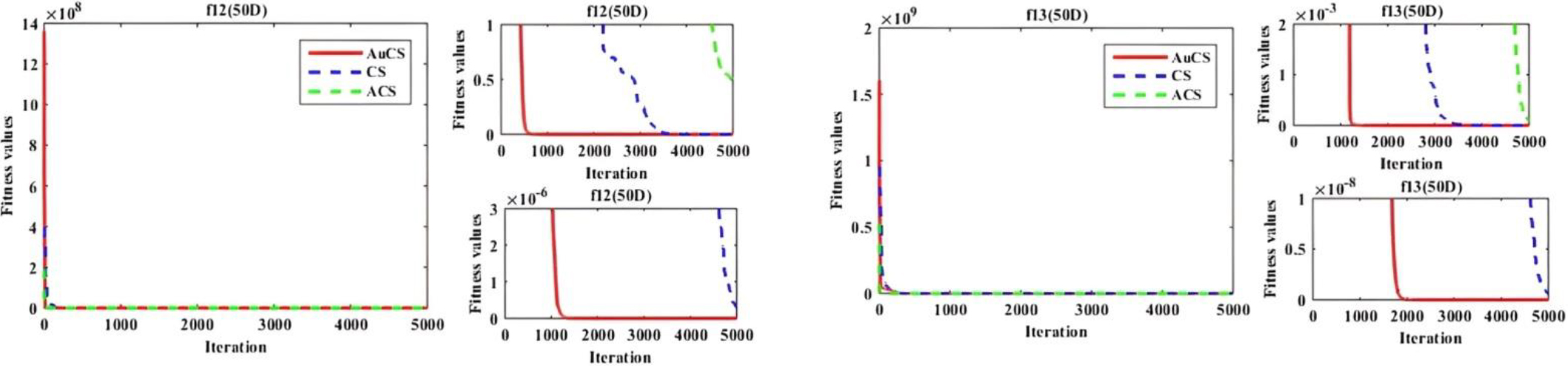

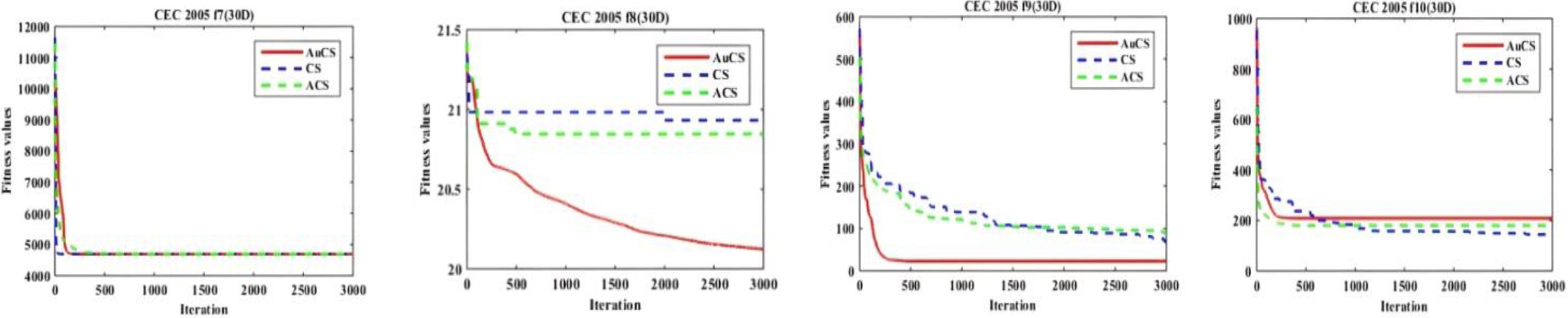

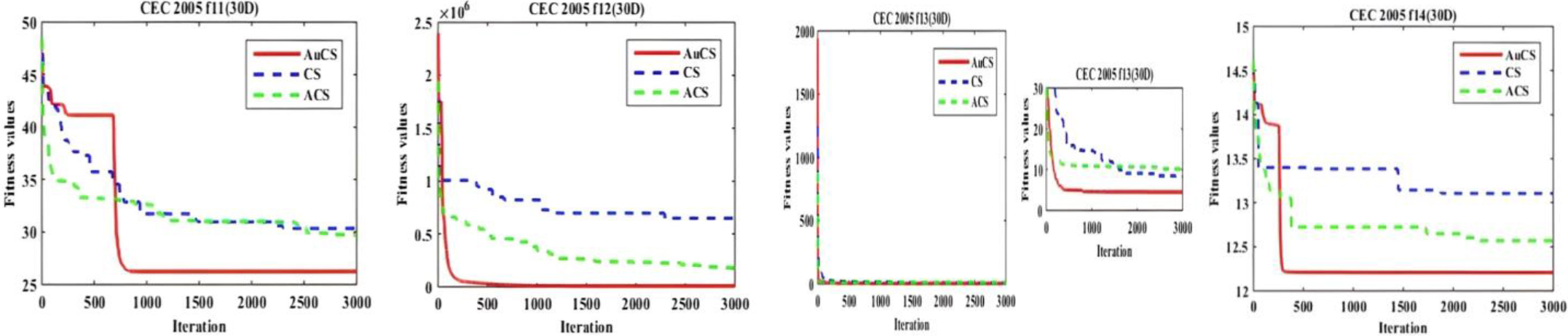

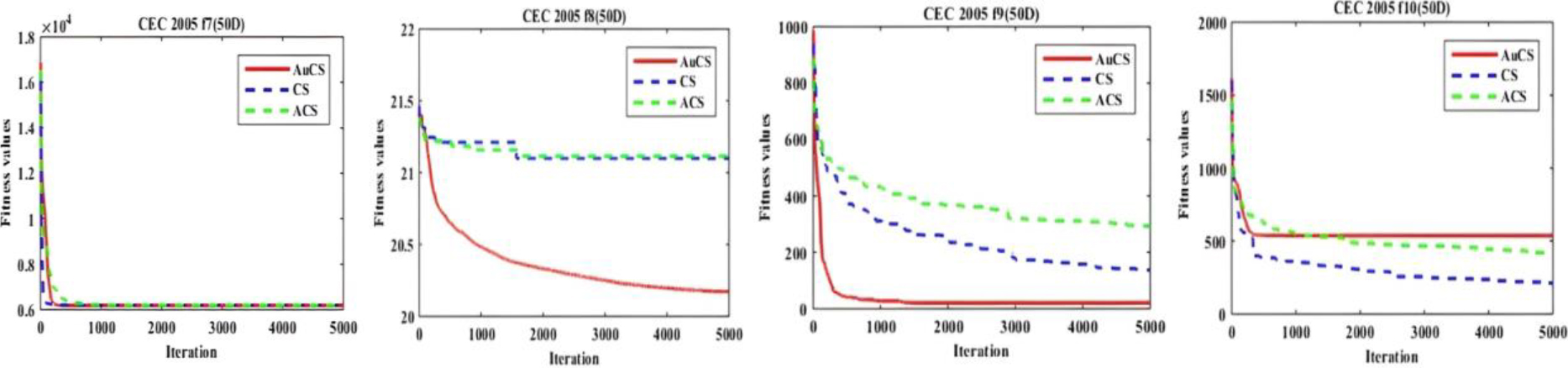

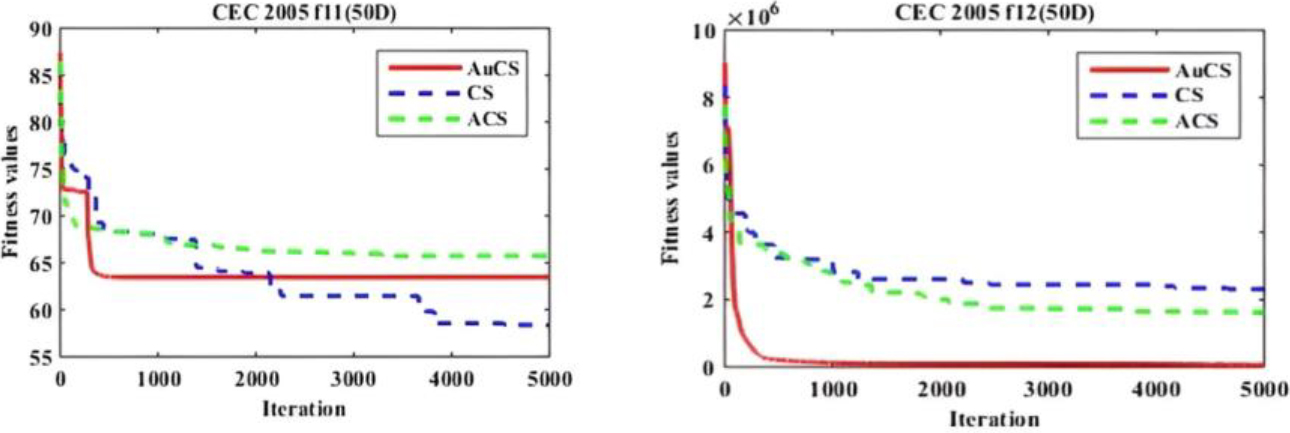

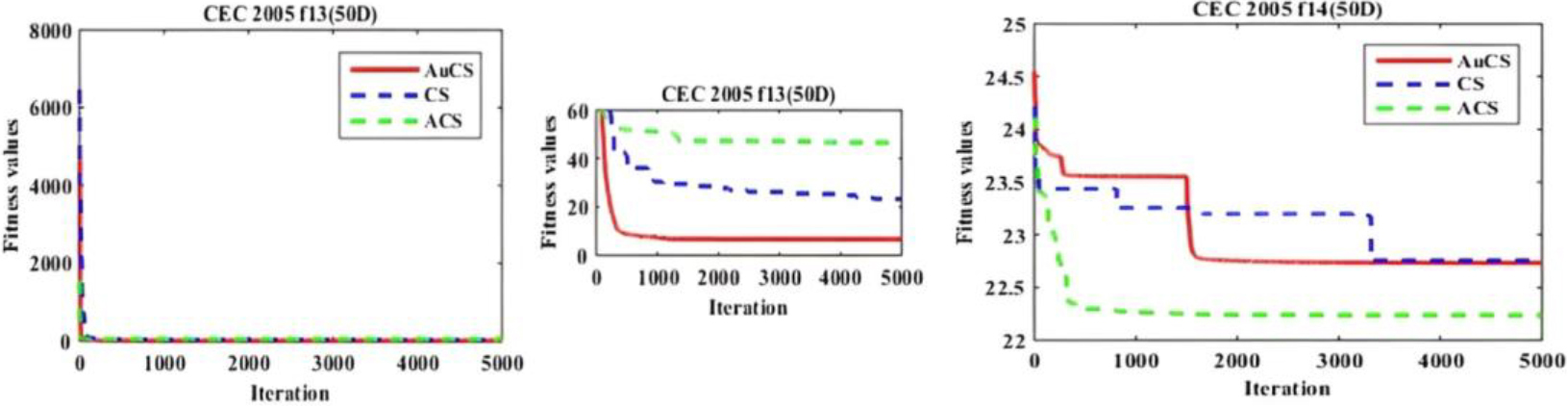

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Summary of the datasets

Convergence performance comparison based on

Convergence performance comparison based on and

Convergence performance comparison based on

Figures 1–19 display the convergence graphs for all 13 standard and 14 CEC 2005 benchmark functions in both 30D and 50D cases. Graphs are magnified to showcase differences in convergence. AuCS demonstrates faster convergence, overcoming local minima issues. These graphs illustrate the efficiency of AuCS in solving unimodal and multimodal problems. For the remaining 28 CEC 2005 cases, AuCS exhibits faster and more accurate convergence in most instances. The strategy of increasing the step size at a later stage aid in avoiding local optima.

Analyzing all these tables and figures, AuCS completely outperforms CS and ACS in 39 out of 54 cases that include both 30D and 50D solution space for performance comparison. So in overall performance, AuCS results in the best optimum value in 72.22% cases. These cases not only include unimodal problems but also consist of highly complex multimodal problems. Hence, this reflects the proposed one is quite scalable and robust in dealing with different challenges in different environment.

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on with 50D.

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

Convergence performance comparison based on

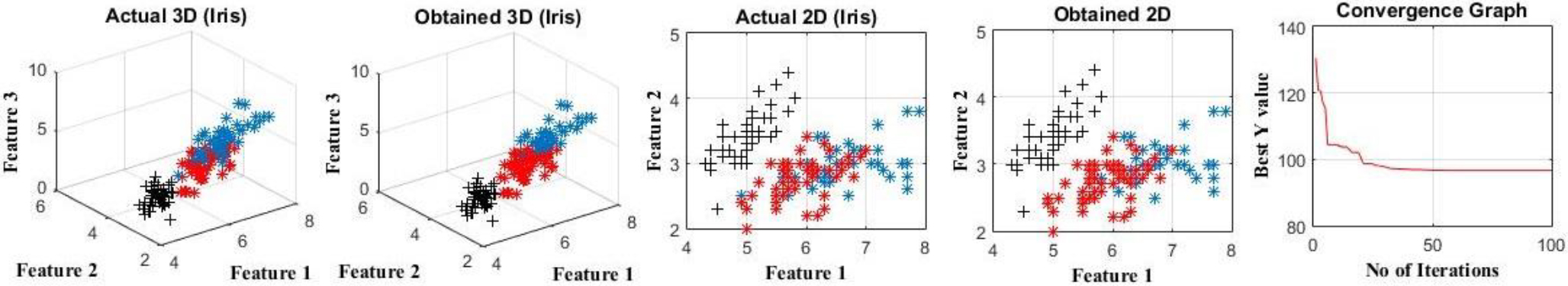

This section presents an analysis of clustering using five swarm optimization algorithms. Seven benchmark datasets are used to assess robustness. Results for compared methods (excluding AuCS) are referred from [23], ensuring a fair comparison. Experimental conditions remain unchanged, with fixed 50D search space dimension and maximum 10,000 function evaluations as suggested in [23]. To assess stability, AuCS is tested over 30 independent runs for each dataset instead of 20. Results consider both optimized intra-cluster distance and accuracy. Table 12 provides a summary of each dataset, while complete details can be found in [24].

Comparative analysis based on minimization of the intra-cluster distance

Comparative analysis based on minimization of the intra-cluster distance

Comparative analysis based on accuracy percentage

Convergence performance comparison based on and

Table 13 presents the analysis based on intra-cluster distance, whereas Table 14 demonstrates the comparison based on accuracy achieved by each of the meta-heuristics namely ACO, ABC, PSO and particle swarm optimization with age-group topology (PSOAG) [25] (a variation of PSO) along with the proposed AuCS.

Table 13 compares algorithms based on intra-cluster distance for seven real datasets. AuCS outperforms other algorithms in 5 out of 7 datasets. It also exhibits significantly better results in terms of standard deviation. AuCS excels in Iris, Wine, Cancer, and CMC datasets for both average intra-cluster distance and standard deviation. PSOAG performs better than AuCS in Thyroid and Vowel datasets, but AuCS still outperforms PSOAG by a significant margin in terms of standard deviation. This highlights the robustness of the proposed methodology. To ensure fairness, the average rank comparison is employed. The algorithm with the minimum intra-cluster distance receives rank one, while the algorithm with the maximum value receives rank five. The same ranking process is applied to standard deviation. AuCS outperforms others with average ranks of 1.28 for average intra-cluster distance and 1.42 for standard deviation. This validates the effectiveness of AuCS in achieving optimized results and demonstrates its robustness across multiple runs.

Convergence performance of AuCS for Iris dataset.

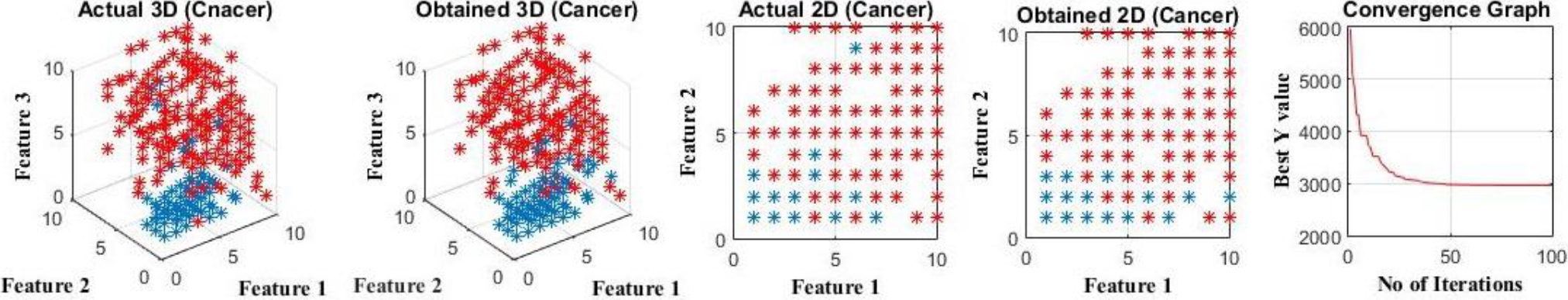

Convergence performance of AuCS for Cancer dataset.

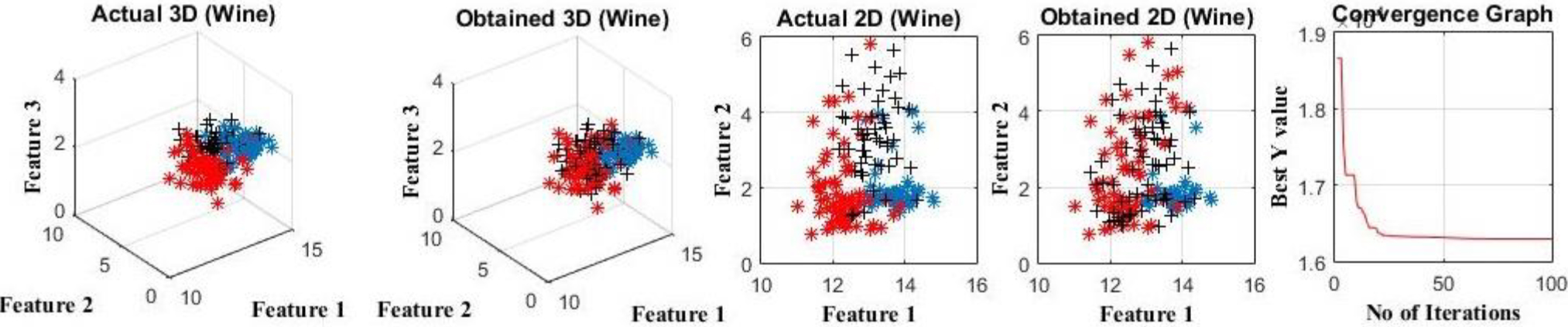

Convergence performance of AuCS for Wine dataset.

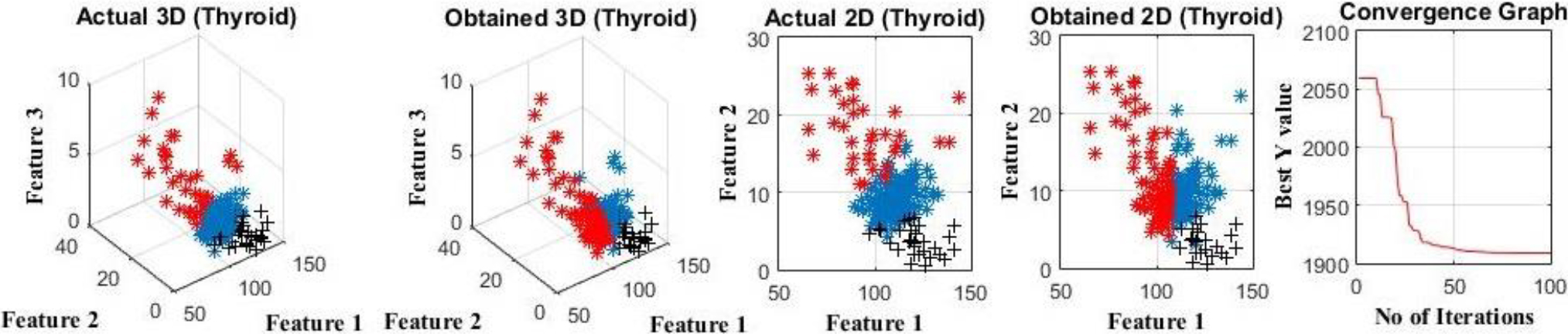

Convergence performance of AuCS for Thyroid dataset.

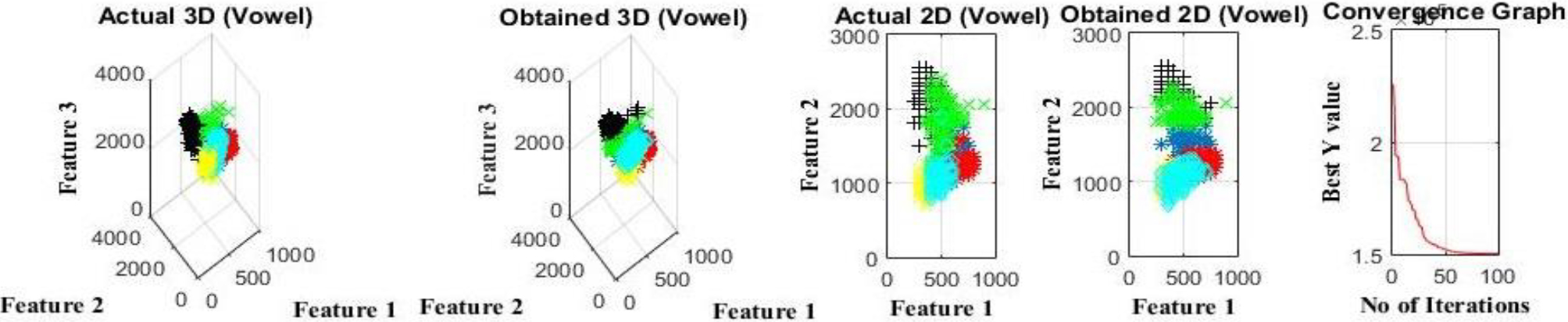

Convergence performance of AuCS for Vowel dataset.

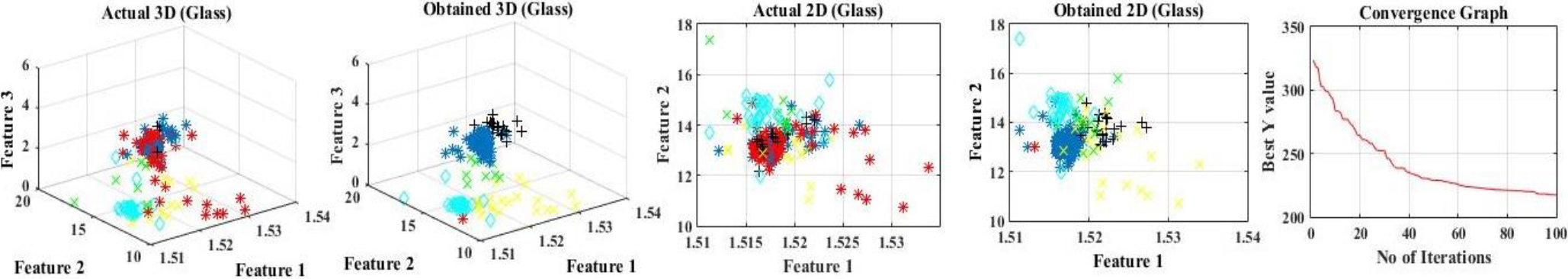

Convergence performance of AuCS for Glass dataset.

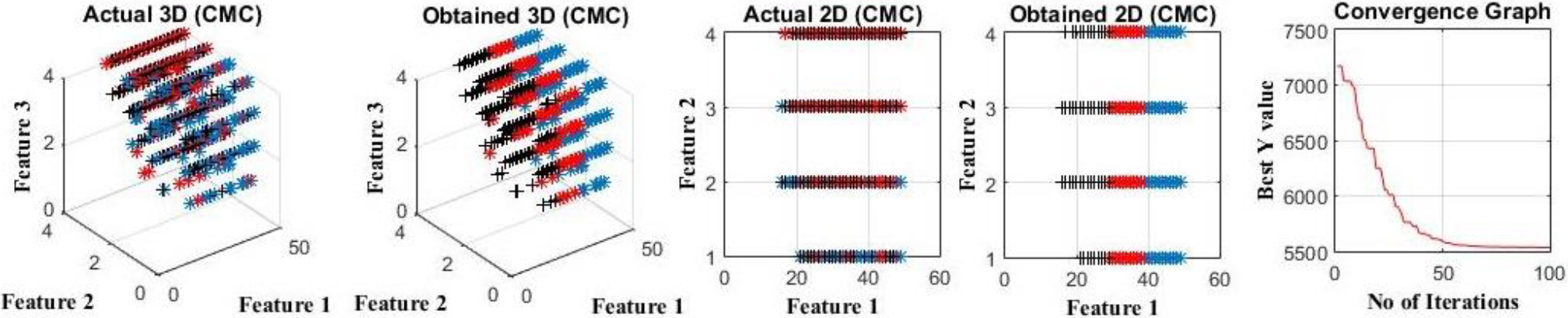

Convergence performance of AuCS for CMC dataset.

Table 14 displays the evaluation of algorithms based on average accuracy. Note that the algorithms were not specifically designed for accuracy optimization; centroid calculation determined accuracy. AuCS outperforms others in four out of seven datasets, while PSOAG excels in the remaining datasets. Notably, AuCS performs exceptionally well in Wine, Cancer, and CMC datasets. Using the average rank strategy, AuCS achieves the highest average rank of 1.85. It also demonstrates comparable performance to PSOAG in terms of standard deviation. Figures 20–26 provide visual representation of clustering outcomes obtained from AuCS algorithm and depict each dataset’s clustering outcomes using AuCS. Each dataset is represented by five sub-figures, showcasing the disparities between actual clustering and AuCS clustering. The visualizations include both 2D and 3D perspectives, providing a comprehensive understanding of the results. Additionally, the convergence performance of AuCS for each dataset is graphically presented. These convergence graphs offer insights into how effectively AuCS identifies optimal points. Analyzing these graphs provides valuable information about the rate at which AuCS achieves optimal solutions for each dataset.

This work introduces the AuCS algorithm, which addresses the challenge of exploration-exploitation balance in the cuckoo search algorithm. Through extensive analysis, AuCS demonstrates superior performance on benchmark functions and clustering tasks. It outperforms the cuckoo search algorithm and ACS swarm intelligence algorithm, showcasing scalability and effectiveness. It can be noticed the AuCS excels in 72.22% of all test cases. Also, it stands top against all swarm optimization algorithms in 5 out of 7 datasets. Future research directions include evaluating AuCS on additional benchmarks, optimizing its parameters, comparing it with other algorithms, exploring hybridization approaches, and validating its practical applicability. Also, can further be implemented as a multi-objective algorithm to solve many multi-objective optimization problems. AuCS emerges as a powerful and promising optimization algorithm with wide-ranging potential in solving complex problems across various domains.