Abstract

Parliamentary and legislative debate transcripts provide informative insight into elected politicians’ opinions, positions, and policy preferences. They are interesting for political and social sciences as well as linguistics and natural language processing (NLP) research. While existing research studied individual parliaments, we apply advanced NLP methods to a joint and comparative analysis of six national parliaments (Bulgarian, Czech, French, Slovene, Spanish, and United Kingdom) between 2017 and 2020. We analyze emotions and sentiment in the transcripts from the ParlaMint dataset collection, and assess whether the age, gender, and political orientation of speakers can be detected from their speeches. The results show some commonalities and many surprising differences among the analyzed countries.

Keywords

Introduction

The development of natural language resources and technologies opens new possibilities for social and political sciences [48]. After decades of analyzing individual political speeches and transcripts, natural language processing (NLP) allows studies that are orders of magnitude larger. Parliamentary corpora are available for many parliamentary democracies and include draft bills, amendments to bills, adopted legislation, committee reports, and transcripts of floor debates. Processing these heterogeneous records is challenging. However, the recent ParlaMint project has produced unified corpora of parliamentary debates in 17 European parliaments, making them widely accessible [20]. This makes it possible to broaden the scope of analyses from individual countries to joint issues and differences. Modern monolingual and cross-lingual NLP techniques applied to the collected data can provide new insight into the language used, the expression of speakers, as well as similarities and differences in emotions and sentiment in different parliaments.

National parliaments have developed their own codes of behavior and speech [38], which makes comparison between them difficult. We propose a novel technological approach, combining monolingual and cross-lingual prediction models with machine translation to analyze political, sociological, and linguistic phenomena. Recent research has shown that such approaches are possible for the analysis of social media, but they have not yet been applied to parliamentary speech corpora. We analyze parliamentary debates from six national parliaments: Bulgarian, Czech, French, Slovene, Spanish, and United Kingdom (UK) in the period from 2017 to 2020.

The main contributions of our work can be summarized as follows:

- A methodological framework for a comprehensive comparison of parliamentary speeches in a cross-lingual setting using cross-domain transfer learning.
- Comparison of linguistic effects based on age, gender, and political position of speakers for six parliaments.
- Comparison of sentiment and emotions in six parliaments extracted with prediction models.
- A novel approach to analyze differences in sentiment based on speakers’ age using Bayesian statistical models.

The paper is structured into five further sections. In Section 2, we present background and related work on analyzing parliamentary speech, split into age, gender, sentiment, and emotions. Section 3 describes the ParlaMint datasets used. In Section 4, we present our methodology, split into prediction of metadata (age, gender, and political wing), sentiment, and emotions. The results are covered in Section 5, while we draw the conclusions and present ideas for further work in Section 6.

In this section, we first present the necessary background information, discussing also aspects not strictly related to the parliamentary context. Sections 2.1, 2.2, 2.3, and 2.4 cover related works referring to age, gender, sentiment, and emotions in the political discourse. Finally, in Section 2.5, we outline the use of Bayesian methods in parliamentary contexts.

Language and age

Age as a factor of language variation is one of the most salient and productive objects of research in the field of sociolinguistics [44]. The description of differences between young and older generations focuses on (in)formality [34, 56]. In the research of group membership through speech [23], sociolinguists describe two types of prestige, overt and covert prestige. Overt prestige is related to standard and more formal linguistic features, which are normally associated with those who hold more power and status. Covert prestige, on the other hand, is the non-standard variety employed in a scenario that encourages cooperation, communality, communication ease, and engagement [57]. Based upon these considerations, some linguistic differences age may explain are adults’ preference for syntactic complexity [22], swearing [31], lexical conservativism [32], usage of positive politeness strategies [19], teenagers’ tendency towards language change [40], the use of slang [50] or abruptness [13].

Several authors covered the prediction of age and other personal traits such as gender or political affiliation, e.g., Dahllöf [12], who analyzed the wording of political speeches in Swedish. The results show that it is possible to classify politicians according to their age, ideology, and gender to some degree. We analyze six parliaments at once, which opens a broader perspective and gives more general conclusions.

Language and gender

Since 1922, a number of studies have addressed the role of gender in language expression; for an overview, see Hidalgo-Tenorio [27]. For example, the debate ranges over whether gender is a social construct, whether there exist different genderlects with different characteristics, and whether a so-called “women’s language” is the result of culture or power relations [10, 35]. The linguistic features claimed to characterize females range from articulatory phonetics and grammar to pure pragmatics, e.g., the tendency for hypercorrection, conservativism, self-disclosure and attentiveness; abundance of intensifiers and restricted vocabulary associated with domesticity; preference for simple syntax, minimal responses, emotion(al) language, expressive speech acts, diminutives and terms of endearment; usage of rising intonation, questions and epistemic modality to mark their lack of confidence; and, finally, neither swearing nor turn-taking control, interruption or topic selection in conversation.

In our work, we predict the gender of speakers, which is available as metadata. In this way, we establish the level of difference between speeches given by members of parliament (MPs) of different genders. In gender detection, several studies successfully apply machine learning and/or sentiment analysis [4, 33, 39, 47]. An important consideration in the prediction of speakers’ gender is grammatical gender. In four of the six languages we cover, Bulgarian, Czech, Slovenian, and Spanish, there are three grammatical genders (masculine, feminine, and neuter); in French there are two genders (masculine and feminine), while English has no grammatical gender. Grammatical gender can generally be inferred from the ending of nouns, adjectives, determiners or past participles. In some cases (not all), this means that the gender of a speaker can be determined. Next, we give some examples of these phenomena for the analyzed languages.

- Bulgarian: Az sam sigurna (I am sure: feminine), Az sam siguren (I am sure: masculine). In Bulgarian, there are synthetic and analytic tenses/moods. The former contain no indication of gender. Here is an example of a synthetic form: Az kazax (I said: no gender marking, thus it might apply to either feminine or masculine). However, when an analytic form with a participle is used, the gender is indicated by this participle: Az bix predlozhila (I would suggest: feminine) vs. Az bih predlozhil (I would suggest: masculine).
- Czech: Já bych řekl (I would say: masculine), Já bych řekla (I would say: feminine). Again, if a synthetic form is used, then there is no indication of a specific gender: Já si myslím (I think: feminine and masculine).
- Spanish: Estoy harta del populismo (I’m sick of populism: feminine); Estoy harto del populismo (I’m sick of populism: masculine).
- French: Je suis prête (I am ready: feminine), Je suis prêt (I am ready: masculine). Again, there are many cases where the gender is not revealed, e.g., Comme j’ai dit (As I said: feminine and masculine).
- Slovene: The gender is revealed when using the first person singular in the past and future tense, e.g., Rekla sem (I said: feminine), Rekel sem (I said: masculine). The gender is not revealed in the present tense, e.g., Mislim (I think: both masculine and feminine).

In English, gender is not a grammatical category but a lexico-semantic feature that can be inferred from the personal and relative pronouns used (the person who arrived; he is nice) and a few morphemes (actor vs actress; policeman vs policewoman); adjectives, determiners or past participles do not show it (this happy man vs this happy woman; she was kissed vs he was kissed). These features do not reveal the speaker’s gender.

The problem of computational sentiment analysis for parliament discourses has been tackled extensively but with relatively little cross-country comparison. In most cases, sentiment analysis involves document, sentence, and aspect-level analysis.

Dzieciatko [17] and Rheault et al. [48] applied sentiment analysis to entire corpora at the coarsest granularity, i.e., their analyses of the Polish and UK parliaments aggregate the sentiment scores of all speeches. Honkela et al. [28] explore the overall sentiment of EU Parliament transcripts on the dataset level, whereas Sakamoto and Takikawa [53] consider the polarity of US and Japanese datasets.

While in NLP sentiment analysis is often fine-grained (such as at the level of speech, speech segment, paragraph, sentence, or phrase), in political science, the unit of analysis is primarily an actor (an individual politician whose contributions are pooled together). This is the focus of most works on position scaling, a task very much associated with that field. To some extent, this confirms Hopkins and King’s [29] assertion that while computer scientists are interested in finding the needle in the haystack, social scientists are more interested in characterizing the haystack. The exceptions come from works in the social and political sciences [29, 30] that propose ways to optimize speech-level classification for social science purposes and from computer science [24], which also considers the position scaling issue.

Sentiment detection has advanced considerably in the last few years with the advent of large pretrained language models such as BERT [16]. This has allowed applications to social media, stock market predictions, user stance detection in reviews, hate-speech detection, etc. However, parliamentary discourse is hard to analyze with established techniques because its specific formal speech differs linguistically from existing training datasets [48]. Rudkowsky et al. [51] study several machine learning approaches based on word embeddings for Austrian parliamentary speeches. Similarly, Abercrombie and Batista-Navarro [1] and Elkink and Farrell [18] investigate predicting votes based on parliamentary speeches.

In this paper, we follow political sciences and predict the sentiments of speakers based on their speeches. The results are cross-lingual for six different parliaments, which, to our knowledge, has not been done before.

Emotions in politics

Alba-Juez [2] states that whatever we say, write, hear, and read is produced and processed through the filter of affect. Cognition and emotion are, therefore, two mutually interconnected systems [6]. In this regard, Van Dijk [58] argues that one of the most distinguishing features of manipulation lies in shaping and framing messages in such a way that they accord with their recipients’ negative emotions, usually deriving from feelings of powerlessness and injustice. In the current political landscape, which is imbued with populism, this idea is of utmost importance, especially at a moment when the emotional is preferred to the intellectual.

Research shows how resentment, anxiety, panic, anger, and disgust can help populist politicians seduce their voters [7]. They may use the same discursive strategies to attack and bring their rivals into disrepute. For instance, they can spread unreliable news about their opponents and other sensationalist information with bombastic but simple expressions; plentiful negative ethical and aesthetic evaluative terms; swear words and colloquialisms; and adversarial vocabulary echoing 20th-century propaganda.

Our analysis is unique in detecting and comparing emotions in six national parliaments at once. This reveals some similarities but also surprising differences.

Bayesian methods allow for the estimation of the posterior probability of a model given data, which provides a measure of both the quality of the model and the uncertainty of the estimates. This is particularly useful when dealing with small or noisy datasets. In our previous work, we applied Monte Carlo Dropout to deal with prediction uncertainty [41, 42] in natural language processing. Within the parliamentary context, Hansen [26] estimated the positions of the Irish parliamentary parties using the Bayesian ideal point estimation framework and showed that it is possible to distinguish only two blocs of parties in each period, one bloc supporting the government and one forming the opposition. Han [25] explored the performance of spatial voting models applied to the roll calls of the EU parliament. Montalvo et al. [43] proposed a new methodology for predicting electoral results that combines a fundamental model and national polls within an evidence synthesis framework.

In this work, we propose to use Bayesian AB testing to determine the age threshold with the largest difference between positive and negative sentiment. In this way, we avoid setting a fixed threshold for all parliaments and obtain interesting differences between the tested parliaments.
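The threshold search described above can be illustrated with a minimal sketch. It assumes a Beta-Binomial model with a uniform Beta(1, 1) prior on each group's share of positive speeches and scores each candidate threshold by the posterior mean difference between the younger and the older group. The function names, the prior, the candidate thresholds, and the toy data are illustrative assumptions, not the exact procedure used in this work.

```python
import random


def compare_groups(pos_a, n_a, pos_b, n_b, draws=5000, seed=0):
    """Sample both Beta posteriors; return (mean rate difference, P(rate_a > rate_b))."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(draws):
        # Posterior of a positive-speech rate under a Beta(1, 1) prior (assumed).
        rate_a = rng.betavariate(1 + pos_a, 1 + n_a - pos_a)
        rate_b = rng.betavariate(1 + pos_b, 1 + n_b - pos_b)
        diffs.append(rate_a - rate_b)
    mean_diff = sum(diffs) / draws
    prob_a_higher = sum(d > 0 for d in diffs) / draws
    return mean_diff, prob_a_higher


def best_age_threshold(speeches, candidates):
    """speeches: list of (age, is_positive) pairs; returns (threshold, mean_diff, prob)."""
    best = None
    for threshold in candidates:
        younger = [pos for age, pos in speeches if age < threshold]
        older = [pos for age, pos in speeches if age >= threshold]
        if not younger or not older:
            continue  # a threshold must leave speeches on both sides
        mean_diff, prob = compare_groups(
            sum(younger), len(younger), sum(older), len(older)
        )
        if best is None or abs(mean_diff) > abs(best[1]):
            best = (threshold, mean_diff, prob)
    return best


# Toy data (hypothetical): MPs under 50 are mostly positive, older MPs mostly negative.
toy = []
for age in (30, 35, 40, 45):
    toy += [(age, 1)] * 8 + [(age, 0)] * 2
for age in (50, 55, 60, 65):
    toy += [(age, 1)] * 3 + [(age, 0)] * 7

best = best_age_threshold(toy, candidates=[40, 50, 60])
print(best[0])  # threshold with the largest posterior difference
```

Because the search runs separately on each parliament's speeches, each country can end up with its own threshold, which is the property the paragraph above relies on.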

The data

In this section, we describe the datasets used in our analysis. Section 3.1 describes the ParlaMint project, which collected and preprocessed the data, while in Section 3.2 we provide information on the datasets actually used and graphically present the distributions of MPs’ age and gender across parliaments.

ParlaMint project background

ParlaMint2

We use ParlaMint 2.1, a collection of multilingual comparable corpora of parliamentary debates, mostly starting in 2015 and extending to mid-2020. The national corpora vary in size from 800 000 words (Hungarian) to 109 000 000 words (UK). The sessions in the corpora are marked as belonging to the COVID-19 period (after November 1st 2019) or as “reference” (before that date). The data is freely available through the CLARIN.SI repository.

The corpora contain extensive metadata, including many aspects of the speakers (name, gender, MP status, party affiliation, party coalition/opposition). The data are structured into time-stamped terms, sessions, and meetings. Speeches are marked by the speakers and their roles (e.g., the chair or regular speaker). The speeches also contain marked-up transcriber comments, such as gaps in the transcription, interruptions, applause, etc. More information about the creation of the corpora, the common standard, and specifics of each national corpus can be found in Erjavec et al. [20].

At the time of writing this paper, the ParlaMint project released data for 16 languages: Bulgarian, Croatian, Czech, Danish, Dutch, English, French, Hungarian, Icelandic, Italian, Latvian, Lithuanian, Polish, Slovenian, Spanish, and Turkish. In total there are 3 774 204 utterances and 494 949 904 words. The quality of the textual corpora and metadata varies across the languages. In our experiments, we studied parliaments in six countries: Bulgaria (BG), Czech Republic (CZ), France (FR), Slovenia (SI), Spain (ES), and the United Kingdom (UK). The criteria for this selection were mainly the quality of the provided corpora and that we, as authors, understand the languages and the political situation in these countries. The available data for the specific year varies across the parliaments, as shown in Table 1. We decided to analyze data from 2017 to 2020 expecting this selection to be the most informative and provide the most interesting insights.

The data per parliament and year are organized as parliament session documents. Every text document with the talks is paired with a document containing the session metadata such as title, time, term, and number of session and meeting. This supplementary document also includes the speaker information (speaker type, speaker party, party parliament status, speaker name, speaker gender, and speaker birth). The numbers of parliament session documents per country and year are presented in Table 2. The number of sessions varies per parliament. For the Czech parliament, the number is the largest, while the Slovene and Spanish parliaments exhibit lower numbers of sessions.

Number of words per parliament and per year in the analyzed part of the ParlaMint corpus

Number of sessions per parliament and per year in the analyzed part of the ParlaMint corpus

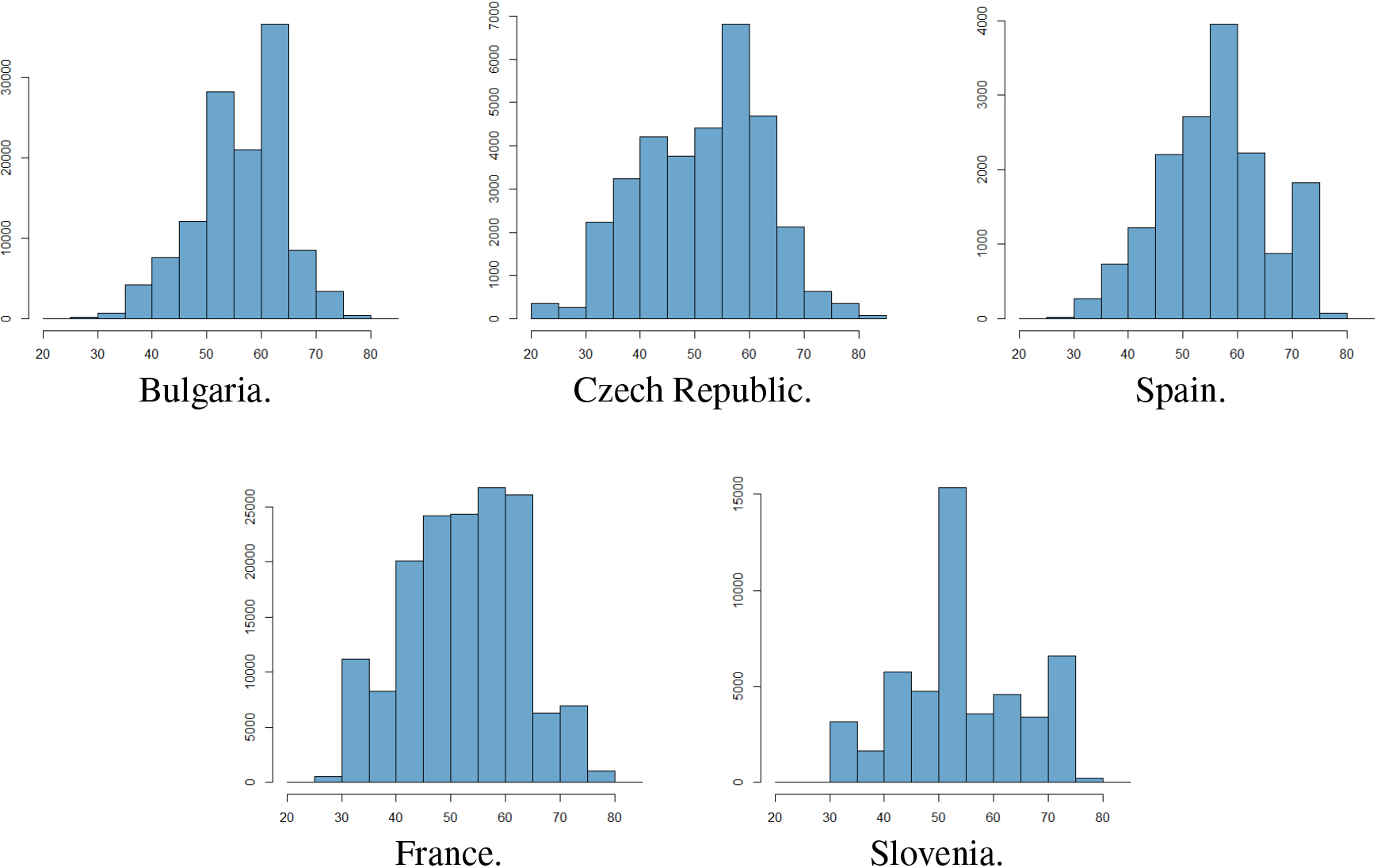

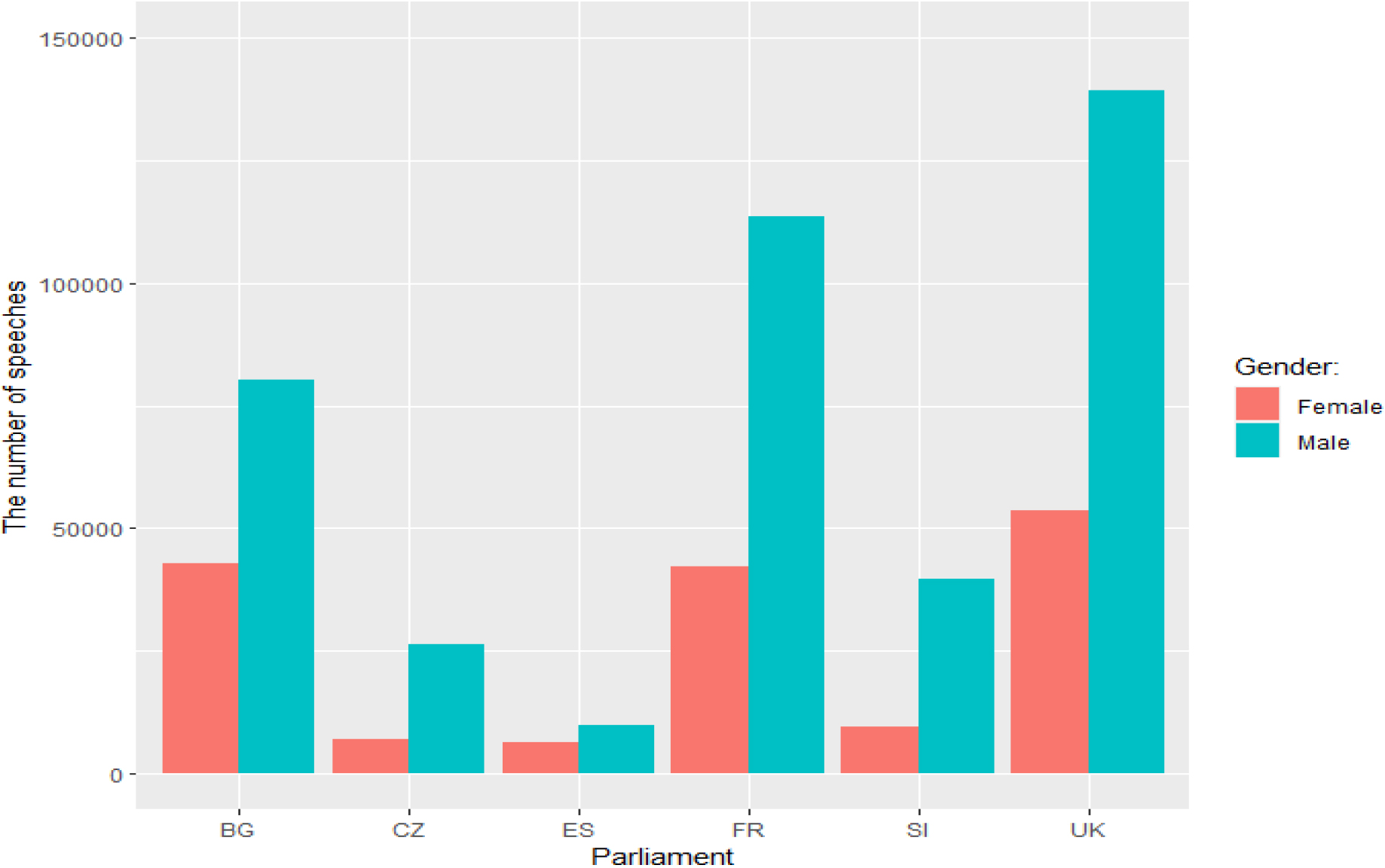

We show the distribution of the number of speeches based on MPs’ age and gender across different parliaments in Figs 1 and 2, respectively. To get representative age cohorts, we merge MPs into five-year intervals for Fig. 1. The distributions based on speakers’ age tend to peak between 50 and 60, except for France, where there are no peaks. Concerning gender, there are considerably more speeches by male MPs in all parliaments, with the smallest gender difference in Spain and the largest in Slovenia and Czechia.
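As an illustration of the cohort merging, the following sketch counts speeches per five-year age interval. It assumes that intervals are aligned to multiples of five (30-34, 35-39, and so on); the exact interval boundaries behind Fig. 1 are not stated, and the helper names and toy ages are hypothetical.

```python
from collections import Counter


def age_cohort(age, width=5):
    """Map an age to its interval label, e.g. 52 -> '50-54' (alignment is assumed)."""
    start = (age // width) * width
    return f"{start}-{start + width - 1}"


def speeches_per_cohort(speaker_ages, width=5):
    """Count speeches per cohort; speaker_ages holds one age entry per speech."""
    return Counter(age_cohort(age, width) for age in speaker_ages)


# Toy input (hypothetical): the speaker's age attached to each of seven speeches.
ages = [34, 36, 52, 53, 54, 58, 61]
counts = speeches_per_cohort(ages)
for cohort in sorted(counts):
    print(cohort, counts[cohort])
```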

The distribution of the number of speeches relative to the MP’s age across the parliaments. The age information is not available for the UK.

The distribution of the number of speeches relative to the MP’s gender across the parliaments.

We selected several modern NLP approaches to analyze the speeches in the chosen languages. We use supervised text classification for sentiment and emotion analysis, as well as to predict the age, gender, and political wing of a speaker. Since 2019, a standard approach to text classification has been fine-tuning one of the large pretrained language models such as BERT [16] to the specific task. We followed this approach and used multilingual BERT (pretrained on 104 languages) to predict the desired variables in a uniform way across the tackled languages. The trained models were used to predict speakers’ meta-information (gender, age, political wing), as described in Section 4.1, as well as their sentiments and emotions based on the language and contextual information in speeches, as outlined in Sections 4.2 and 4.3, respectively. The details are presented below.

Metadata prediction

Datasets from the ParlaMint project contain information about the speakers’ age, gender, and political party. Thus, we fine-tune the multilingual BERT model to predict each of the three metadata variables from individual speeches of parliament members. The prediction accuracy of the models reveals the amount of information about the metadata stored in the parliament speeches. The variables that we predict are:

- Age of the speaker. The original corpora contained the birth year of the speaker, from which we computed the speaker’s age. We dichotomized the age into two groups, separately for each country, using Bayesian AB testing to maximize the difference in sentiment between the younger and older group of MPs. The details of this process are contained in Section 5.3.
- Gender of the speaker. The original datasets contain a metadata variable with the value ‘F’ for female and ‘M’ for male speakers, which we converted into 0 for females and 1 for males. We randomly selected 2 500 speeches by male and 2 500 speeches by female MPs.
- Political wing of the speaker. We assumed that both left and right political parties can be split into moderate (center-leaning) and extreme (far-left and far-right). We separately predicted the differences between the moderate parties (i.e. center-left and center-right) and extreme parties (extreme-left and extreme-right). For both center-leaning and extreme party comparisons, we selected 2 500 speeches from the left and 2 500 speeches from the right wing of the political spectrum.

Each of the created datasets was split into training and testing parts (80% for training and 20% for testing). The training set was used to fine-tune the multilingual BERT (mBERT) model.
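The dataset construction for the gender task can be sketched as follows: speeches are sampled in equal numbers per class, the ‘F’/‘M’ codes are mapped to 0/1, and the result is split 80/20. This is a minimal illustration only. The function name, the random seed, the plain (non-stratified) shuffle, and the toy corpus are assumptions, and the sketch uses 50 speeches per class instead of 2 500 to stay small.

```python
import random

LABELS = {"F": 0, "M": 1}  # label encoding described in the text


def balanced_split(speeches, per_class=2500, train_share=0.8, seed=42):
    """speeches: list of (text, gender_code); returns (train, test) lists of (text, label)."""
    rng = random.Random(seed)
    sample = []
    for code, label in LABELS.items():
        pool = [text for text, gender in speeches if gender == code]
        # Draw the same number of speeches for each class (without replacement).
        chosen = rng.sample(pool, min(per_class, len(pool)))
        sample.extend((text, label) for text in chosen)
    rng.shuffle(sample)  # mix the classes before cutting the split
    cut = int(len(sample) * train_share)
    return sample[:cut], sample[cut:]


# Toy corpus (hypothetical): 100 speeches by female and 200 by male MPs.
corpus = [(f"speech {i}", "F" if i % 3 == 0 else "M") for i in range(300)]
train, test = balanced_split(corpus, per_class=50)
print(len(train), len(test))
```

The same pattern would apply to the left/right datasets by swapping the label mapping.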

For automatic classification of sentiment in text data, various approaches have been developed, the most successful being machine learning classifiers trained on human-annotated corpora. The main challenge of these approaches is that they tend to be domain-specific: they work best when trained with labeled data from the target domain and are less effective in other domains. However, as producing labeled datasets is expensive, researchers often apply the trained models across domains and languages. Cross-lingual transfer is possible either by machine translation from a language without a suitable dataset to a language where such a dataset exists or by using a pretrained multilingual language model such as mBERT.

As there are no specific parliamentary language sentiment datasets, our cross-lingual and cross-domain approach relies on a collection of sentiment datasets from different languages in the domain of news and media. The reason to choose the news sentiment datasets is that the language and the context used are relatively similar to the parliament discourse. We use two-class sentiment prediction with the negative sentiment labelled 0 and the positive labelled 1. As our previous work has shown that sentiment classifiers can be successfully transferred between languages (especially similar ones) with mBERT [49], we combined datasets from three languages as follows.

- The financial news headlines dataset [37] was labelled with the sentiment from the perspective of a retail investor and constructed based on the human-annotated finance phrase bank. The data contained 604 negative and 1 363 positive headlines.
- The SEN dataset [5] is a recent human-labelled dataset for entity-level sentiment analysis of political news headlines. The dataset consists of 3 819 human-labelled political news headlines from several major online media outlets in English and Polish. Each record contains a news headline, a named entity mentioned in the headline, and a human-annotated label (positive, neutral, or negative). The original SEN dataset package consists of two parts: SEN-en (English headlines, split into SEN-en-R and SEN-en-AMT) and SEN-pl (Polish headlines). The names of the English datasets come from the way the annotation was done. For SEN-en-R, each headline-entity pair was annotated via the open-source annotation tool doccano.

By combining all the above datasets, we obtained our final training dataset with 12 636 labeled instances, of which there are 6 357 negative and 6 279 positive.

Besides informative content, texts also communicate attitudinal information, including emotional states [3]. As there exist many emotional states and feelings, emotion detection is much more challenging than sentiment analysis, where typically three attitudes (positive, negative or neutral) are predicted from the given user input. In contrast, the emotion detection task deals with both primary emotions (e.g., happiness, sadness, anger, disgust, fear and surprise) and more complex emotion models involving different dimensions of emotions and psychological theories [52]. Apart from this, emotion analysis from texts suffers from relatively small and homogeneous annotated corpora [11]. While there exist several English language datasets, emotion analysis for less-resourced European languages is much more problematic [45]. Most languages have very few, if any, well-annotated datasets that can be used to train text classification models. To overcome this issue, we use multilingual and cross-lingual approaches and restrict the covered emotions to positive and negative to get better statistical coverage and also reduce the error due to machine translation and/or cross-lingual transfer.

Similarly to the sentiment analysis, we use the mBERT model to detect emotions in the parliamentary speech. Our preliminary investigation showed that precise detection of many emotions is not possible in the multilingual setting, so we only categorized emotions into positive and negative. Again, the emotion detection datasets for parliamentary domain and our languages (except English) do not exist, so we use several English language datasets from various domains to enable better generalization across domains. We fine-tune the mBERT model with the following four emotion-labelled datasets.

The Kaggle Twitter dataset

The HuggingFace

GoEmotions

XE10

Our final emotion detection dataset contains 23 282 instances, of which 8 918 are labeled as containing negative and 14 364 as expressing positive emotions.

In this section, we report and interpret the obtained results. We present results related to prediction of metadata (age, gender, and political wing) in Section 5.1, and sentiment and emotions analysis in Section 5.2.

Metadata prediction: Age, gender, and political wing

As we described in Section 4.1, we fine-tuned the multilingual BERT language model to predict speakers’ metadata such as age, gender, and political position. The mBERT model was fine-tuned for each of the metadata variables and six countries separately, and we present the predictive performance measured on the testing datasets. Being able to predict any of these three variables indicates considerable differences in the language used by specific groups of parliamentary speakers. The differences and similarities between different countries are discussed below.
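For reference, the four scores reported in the result tables (classification accuracy, precision, recall, and F1-score) can be computed from test-set predictions as in the sketch below. It assumes that precision, recall, and F1 are reported for the class encoded as 1; the text does not state whether per-class or averaged scores are used, and the function name and toy labels are illustrative.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for one class of a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Toy example (hypothetical labels): 3 true positives, 1 false positive, 1 false negative.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
scores = binary_metrics(y_true, y_pred)
print(scores)
```

On balanced two-class datasets such as those built here, an accuracy of 50% corresponds to chance, which is the baseline against which the reported scores can be read.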

Predicting the age of speakers

For each of the analysed parliaments, we split the speeches into three groups according to the first and the third age quartile. For each country, we created two prediction models, one trying to distinguish between the speeches of MPs younger than the first quartile and aged between the first and third quartile (Table 3), and the second distinguishing between the speakers aged between the first and third quartile and speakers older than the third quartile (Table 4). In this way, we investigate language differences between three generations of MPs, checking if their language is age-specific.
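The construction of the two age tasks can be sketched as follows. The sketch assumes that quartiles are computed over the ages attached to individual speeches and that speakers exactly at a quartile boundary fall into the middle group; neither detail is specified in the text, and the function name and toy data are hypothetical.

```python
import statistics


def quartile_tasks(speeches):
    """speeches: list of (text, age). Returns ((q1, q3), young_vs_middle, middle_vs_old)."""
    ages = [age for _, age in speeches]
    q1, _, q3 = statistics.quantiles(ages, n=4)  # first, second and third quartile
    young_vs_middle, middle_vs_old = [], []
    for text, age in speeches:
        if age < q1:
            young_vs_middle.append((text, 0))   # youngest group
        elif age <= q3:
            young_vs_middle.append((text, 1))   # middle group, label 1 in the first task
            middle_vs_old.append((text, 0))     # middle group, label 0 in the second task
        else:
            middle_vs_old.append((text, 1))     # oldest group
    return (q1, q3), young_vs_middle, middle_vs_old


# Toy data (hypothetical): one speech per age from 30 to 69.
speeches = [(f"speech by MP aged {age}", age) for age in range(30, 70)]
(q1, q3), task_young, task_old = quartile_tasks(speeches)
print(q1, q3, len(task_young), len(task_old))
```

Note that the middle group is twice the size of each outer group under this split, so the two tasks are imbalanced unless the classes are subsampled afterwards.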

Tables 3 and 4 show that age is a relatively well-predicted characteristic of speakers in Spain, Bulgaria, Slovenia and Czech Republic, while in France, there are very few language differences between speakers of different ages. The higher the prediction performance, the easier it is to distinguish between speakers’ age groups, and the language generation gap is more significant.

The only notable difference between Tables 3 and 4 is in the scores for the Czech Republic. Here the gap between the youngest and middle-aged MPs is considerably larger than between the middle-aged and older MPs. We hypothesize that this might be the result of the transition from communist rule to parliamentary democracy, where the language of younger generations is less affected by the previous social system.

Classification accuracy, precision, recall, and F1-score for predicting speakers’ age (younger than the first quartile or between the first and third quartile) in different parliaments. For the UK parliament, the age of speakers is not available.

Below we try to explain the two extreme cases, Spain with the largest gap between age groups and France with the smallest.

Flaherty [21] presents a historical development of French political discourse, which is directed toward uniformity in discursive strategies and may explain their similarities. A similar conclusion was drawn by Lehti and Laippala [36] who show that the language used in French politicians’ blogs is relatively standard.

The Spanish case, with the largest differences between younger and older parliamentary speakers, may be explained by the fact that after the end of the two-party system, new parties with younger leaders wanted to contrast with more senior and more socially privileged individuals [9].

As discussed in Section 2.2, the information about speakers’ gender may be detected from the grammatical structures used in their speech for all the analyzed languages but English if speakers use phrases related to their personal beliefs and feelings. Another possibility to detect gender is if speakers of different gender indeed use different language. We test differences in the speech between genders in two ways: first, we predict the gender in the original language, and second, we translate all speeches to English, and predict the gender of the translated speeches, thereby avoiding possible leakage from grammatical structures in the original languages.

As Table 5 shows, gender in the original texts is detectable to some degree in all analyzed countries. Slavic language (Slovenian, Czech, and Bulgarian) speakers express their gender the most explicitly, followed by Spanish, English and French speakers. The last two (English and French) are surprising for different reasons. In French, where gender may be expressed with the language, there is little evidence that speakers express it. Similarly to the age, we hypothesize that the case of French could be explained by the tendency toward language uniformity in French political discourse. Contrary to that, in English, where gender expression is not part of the grammar, the speakers’ gender can be detected nevertheless, indicating differences in expression between male and female MPs.

Predictive performance of speakers’ gender prediction for six original parliamentary datasets

Predictive performance of speakers’ gender prediction for five parliamentary datasets

In Table 6 we see that gender differences are detectable also if the speeches are first translated to English (as gender neutral concerning grammar), indicating differences in the expression between male and female MPs. For Bulgarian, Spanish, and French, we can observe small differences in prediction scores compared to the original languages (in Table 5), while Czech and Slovene show substantially lower prediction performance for the translated speeches. Nevertheless, in Slovene the gender differences are still the most pronounced.

Based on both original and translated speeches, we can conclude that gender differences exist in all analyzed countries.

This section investigates the speech differences between parliament members with different political orientations. Our approach is again based on prediction models that predict the metadata (party membership) available for speakers. A successful prediction would testify that speakers of different political orientations use different language, while low success in prediction would indicate that the compared parties use similar discourse. We investigate two scenarios of different difficulty:

- Predicting the left/right positioning of speakers from extreme left and extreme right political parties. This problem should not be very difficult, as we expect significant differences in the political stance between these parties, which we assume will be expressed in different content and possibly other linguistic features. The results are presented in Table 7.
- Predicting the left/right positioning of speakers from the center-left and center-right political parties. This should be a more complex problem, as we try to distinguish between speakers from relatively similar parties. The results are presented in Table 8.

Classification accuracy, precision, recall and

Classification accuracy, precision, recall and

As expected, the differences in speech between extreme left- and right-wing parties are relatively well predictable for all countries, indicating big differences in the discourse of these parties. The classification accuracy between countries ranges from 88% (Czech Republic) to 74% (France).

Surprisingly, the differences are still large between center-left and center-right parties, with classification accuracy ranging from 87% (Slovenia) to 54% (France). France is an exception: its predictability is low (again, likely due to the tendency for uniform political discourse), whereas it is much higher in the other countries. For two countries, Slovenia and Bulgaria, the differences between central parties are larger than between extreme parties, which may indicate strong political competition between the central parties.
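To make the reported scores concrete, the following minimal sketch shows how accuracy, precision, and recall are computed for a two-class left/right problem. The function name `binary_metrics`, the choice of "right" as the positive class, and the inclusion of F1 are assumptions made for illustration; the toy labels are invented and are not taken from Tables 7 or 8.

```python
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[str], y_pred: Sequence[str],
                   positive: str = "right") -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 for a two-class problem.

    `positive` names the class treated as positive (an assumption here).
    """
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and equally long")

    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = len(pairs) - tp - fp - fn

    accuracy = (tp + tn) / len(pairs)
    # Guard against empty denominators when a class is never predicted.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Invented toy labels for five speeches.
gold = ["left", "right", "right", "left", "right"]
pred = ["left", "right", "left", "left", "right"]
scores = binary_metrics(gold, pred)
```

An accuracy near 50% on a balanced two-class problem, as in the French center-left versus center-right case, means the classifier does little better than guessing.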

This section presents the results of the sentiment and emotion detection experiments. For these experiments, we fine-tuned multilingual BERT (mBERT) on the training datasets described in Section 4.2 (sentiment) and Section 4.3 (emotions). First, we try to establish the quality of the trained models. For that purpose, during fine-tuning, a small part of the training data (10% of all training instances) was used for validation after each training epoch. The resulting classification accuracy is shown in Table 9 for both the sentiment and emotion detection tasks. The results show that sentiment and emotions can be predicted relatively well, which is a positive indication of the reliability of the reported results. Slightly better results in predicting positive and negative emotions are expected, as the emotion datasets are all in English, all collected from social media, and therefore relatively homogeneous. The sentiment detection datasets are multilingual and collected in different domains; thus, the validation accuracy reported in Table 9 is expected to be lower. However, this does not mean that the obtained sentiment model generalizes worse to our out-of-domain parliamentary data.

Classification performance on the validation sets during fine-tuning the mBERT model on sentiment and emotion prediction tasks


To further assess the quality of the produced sentiment prediction model, we selected the 20 talks with the highest probability of negative sentiment for each of the parliaments and manually validated whether the predictions are correct. The results are presented in Table 10. Based on the results, we consider the model’s accuracy good enough (and comparable to other sentiment prediction models in the literature) to provide a reliable picture of the sentiment in our study. The fine-tuned models were used on our six parliamentary speech datasets. To obtain reliable and comparable statistics, for each of the six parliaments we randomly selected 10 000 speeches longer than 30 characters that were given by regular parliament members in 2020. For each speech, the trained mBERT model returned a sentiment score between 0 and 1 (0 indicating negative and 1 indicating positive sentiment).
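The selection steps above can be sketched as follows. This is only an illustration under stated assumptions: the helper names `sample_speeches` and `most_negative` are hypothetical, the filter for regular members and the year 2020 is assumed to have been applied beforehand, and "highest probability of negative sentiment" is interpreted as the lowest score on the 0 to 1 scale.

```python
import random
from typing import List, Tuple


def sample_speeches(speeches: List[str], n: int = 10_000,
                    min_chars: int = 30, seed: int = 0) -> List[str]:
    """Randomly pick up to n speeches longer than min_chars characters."""
    eligible = [s for s in speeches if len(s) > min_chars]
    rng = random.Random(seed)  # fixed seed for a reproducible sample
    return rng.sample(eligible, min(n, len(eligible)))


def most_negative(scored: List[Tuple[str, float]],
                  k: int = 20) -> List[Tuple[str, float]]:
    """Return the k (speech, score) pairs with the lowest sentiment scores.

    Scores follow the convention 0 = negative, 1 = positive.
    """
    return sorted(scored, key=lambda pair: pair[1])[:k]


# Invented toy data: only two speeches exceed 30 characters.
toy = ["short", "x" * 31, "y" * 40, "z" * 30]
picked = sample_speeches(toy, n=10)
worst = most_negative([("a", 0.9), ("b", 0.1), ("c", 0.5)], k=2)
```

The speeches returned by `most_negative` would then be read by a human annotator, as in the manual validation reported in Table 10.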

Manually determined percentage of instances with correctly predicted negative sentiment in parliamentary speeches for compared parliaments

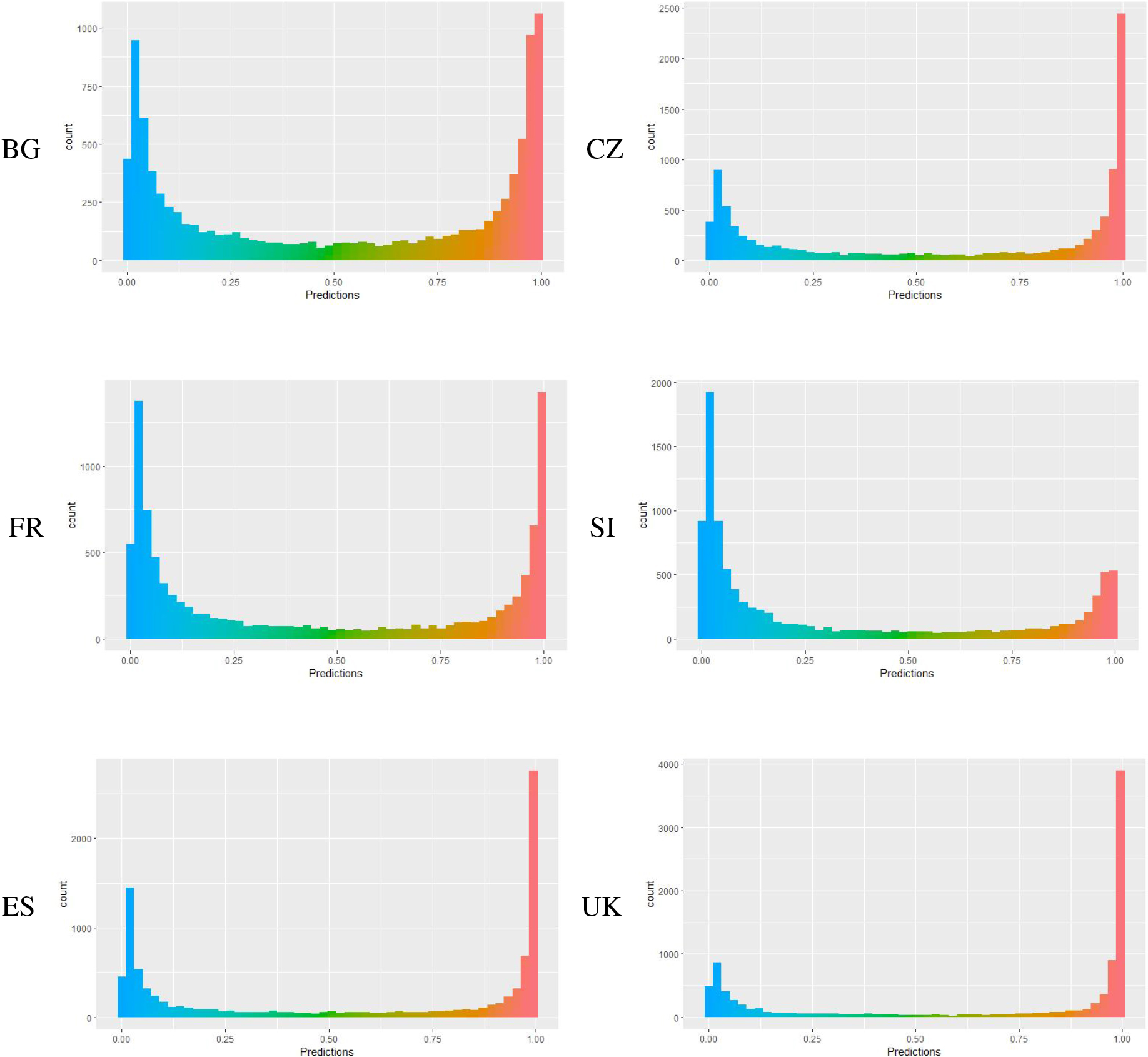

Predictions are summarized in Fig. 3 and Table 11, from which we can compare the parliamentary sentiment across the countries. Figure 3 shows the histogram of the sentiment distribution in each country. The Czech, Spanish, and United Kingdom parliaments seem to express less negative sentiment than positive; in the Bulgarian and French parliaments, the situation seems relatively balanced, while the Slovenian parliament shows the least positive sentiment. We attribute the results for Slovenia to the poisonous exchanges between the pro-government and opposition parties at the observed time, when the previous opposition took over the government in the middle of the mandate. To get a numeric overview of the sentiment, we set the decision threshold for negative sentiment at 0.2 and for positive sentiment at 0.8 and counted the number of negative and positive speeches. The results are presented in Table 11. As before, we conclude that the parliament with the highest percentage of negative sentiment is the Slovenian one, while the UK parliament speeches contain the highest rate of positive sentiment.
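The thresholding step can be illustrated with a short sketch. The function name `sentiment_shares` is hypothetical, and the use of strict inequalities at the thresholds is an assumption, since the text does not say how scores exactly equal to 0.2 or 0.8 are handled. The scores below are invented.

```python
from typing import Sequence, Tuple


def sentiment_shares(scores: Sequence[float],
                     neg_threshold: float = 0.2,
                     pos_threshold: float = 0.8) -> Tuple[float, float]:
    """Percentage of speeches counted as negative and as positive.

    Scores are in [0, 1]; speeches between the thresholds count as neither.
    """
    if len(scores) == 0:
        raise ValueError("scores must not be empty")
    negative = sum(s < neg_threshold for s in scores)
    positive = sum(s > pos_threshold for s in scores)
    return 100 * negative / len(scores), 100 * positive / len(scores)


# Ten invented scores: three below 0.2 and four above 0.8.
toy_scores = [0.05, 0.1, 0.5, 0.85, 0.9, 0.95, 0.3, 0.7, 0.99, 0.15]
neg_pct, pos_pct = sentiment_shares(toy_scores)
```

Because speeches scoring between the two thresholds are counted in neither group, the two percentages need not sum to 100.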

The histograms showing the distribution of sentiment predictions for six parliaments. The score of 0 indicates completely negative and the score of 1 completely positive sentiment.

Percentage of negative and positive sentiment in parliamentary speeches for observed countries

We process emotions in the same way as sentiment. To validate the quality of the emotion detection models, we selected the 20 speeches predicted to be the most negative for each parliament and manually checked whether the predictions were correct. The results are presented in Table 12. We observe significantly lower accuracy in all countries compared to sentiment (shown in Table 10). This is not surprising, as emotion prediction is considered harder than sentiment prediction, but it makes the results and interpretations presented below less reliable than those for sentiment.

Manually determined percentage of instances with correctly predicted negative emotions in the parliamentary speeches of compared parliaments

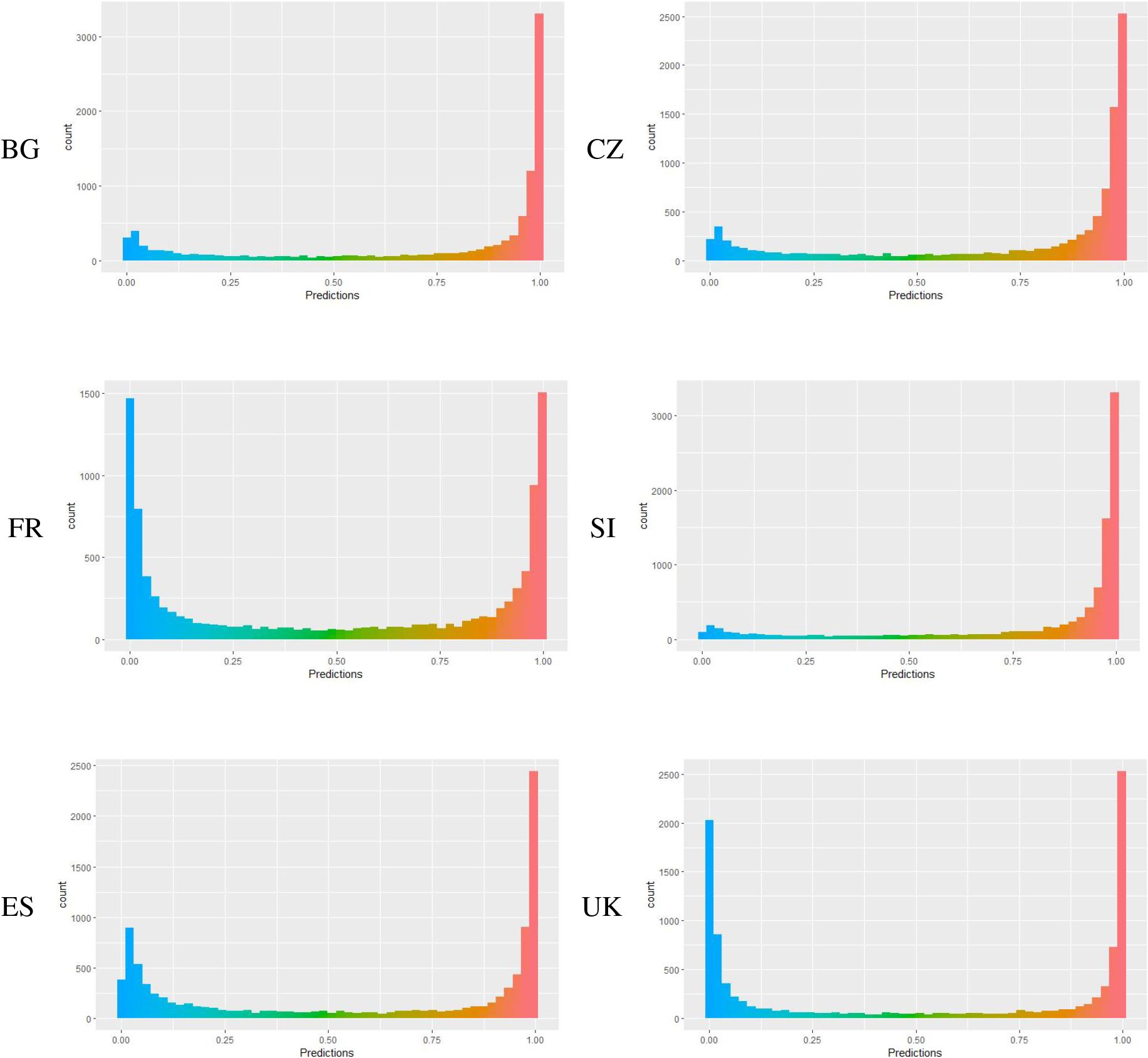

We show the results of the emotion detection in Fig. 4 (distribution of emotion predictions) and Table 13 (the percentage of positive and negative emotions, taking 0.2 and 0.8 as the decision threshold values). As the results show, positive emotions are strongly dominant in all countries except France and the UK, where positive and negative emotions are almost balanced.

Percentage of negative and positive emotions in parliamentary speeches for observed countries

The distributions of emotion predictions for six parliaments. The score of 0 indicates completely negative emotions and the score of 1 completely positive emotions.

In this section, we investigate the impact of age on the sentiment. We apply Bayesian statistics to find the age which best distinguishes between the positive and negative sentiments of the speakers.

We start with the hypothesis that older speakers more openly express the negative sentiments compared to younger speakers (the reverse hypothesis would be equally suitable for our approach), and form two hypotheses to use in the Bayesian hypothesis test:

To determine the age which best separates the younger from the older MPs in terms of positive sentiments, we estimated the posterior distribution for multiple age cutoff points as shown in Table 14. The resulting numbers were constructed as follows:

We separated the speakers into younger and older groups based on the age cutoff. For both the younger and the older population, we dichotomized the sentiment scores to 1 (scores higher than 0.5) or 0 (scores lower than 0.5) and assumed that they are drawn from the binomial distribution. Assuming the beta prior and the binomial likelihood, the posterior distributions can be estimated in closed form as beta distributions. Using the

The results show certain differences between the countries. In Bulgaria, Spain, and France, the MPs between 50 and 65 express the most positive sentiment, i.e. the thresholds of 55, 60, and 65 show high probabilities that younger MPs express positive sentiment, while for the threshold of 50 this probability is lower. In the Czech Republic, positive sentiment is prominent in the age group below 55. In Slovenia, the MPs between 50 and 55 are the most negative, while other age groups are predominantly positive.
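The cutoff comparison can be sketched as a Bayesian A/B test. This is a minimal illustration under stated assumptions, not the authors' implementation: it uses a uniform Beta(1, 1) prior, treats each (age, score) pair as one observation, assigns speakers with age below the cutoff to the younger group, counts a score of exactly 0.5 as non-positive, and estimates the probability by Monte Carlo sampling from the two beta posteriors. The function names are hypothetical. With a Beta(a, b) prior and k positive scores out of n, the posterior is Beta(a + k, b + n - k).

```python
import random
from typing import Dict, Iterable, List, Sequence, Tuple


def prob_younger_more_positive(young: Sequence[float], old: Sequence[float],
                               prior_a: float = 1.0, prior_b: float = 1.0,
                               draws: int = 20_000, seed: int = 0) -> float:
    """Estimate P(p_young > p_old) from two beta posteriors."""
    def posterior(scores: Sequence[float]) -> Tuple[float, float]:
        positives = sum(s > 0.5 for s in scores)  # dichotomize at 0.5
        return prior_a + positives, prior_b + len(scores) - positives

    a_y, b_y = posterior(young)
    a_o, b_o = posterior(old)
    rng = random.Random(seed)
    wins = sum(rng.betavariate(a_y, b_y) > rng.betavariate(a_o, b_o)
               for _ in range(draws))
    return wins / draws


def cutoff_table(data: List[Tuple[int, float]],
                 cutoffs: Iterable[int] = (50, 55, 60, 65)) -> Dict[int, float]:
    """Probability that the younger group is more positive, per age cutoff."""
    table = {}
    for cutoff in cutoffs:
        young = [score for age, score in data if age < cutoff]
        old = [score for age, score in data if age >= cutoff]
        table[cutoff] = prob_younger_more_positive(young, old)
    return table


# Invented data: 80% positive among the young, 50% among the old.
young_scores = [0.9] * 80 + [0.1] * 20
old_scores = [0.9] * 50 + [0.1] * 50
p = prob_younger_more_positive(young_scores, old_scores)
```

A value close to 1 supports the claim that the younger group is more positive at that cutoff, a value close to 0 supports the reverse, and values near 0.5 indicate no clear separation.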

The probabilities that MPs younger than the Age Cutoff express more positive sentiments compared to older ones, as calculated with the Bayesian AB test. For UK parliament the age of MPs is not available

In this section, we further discuss some interesting questions related to the language of extreme left and right-wing politicians, i.e. we try to discover the language differences in political orientation in relation to age and gender. As explained in Section 4.1, we separated political parties according to their political orientation (extreme left or extreme right), based on the publicly available information and parties’ self-declarations.

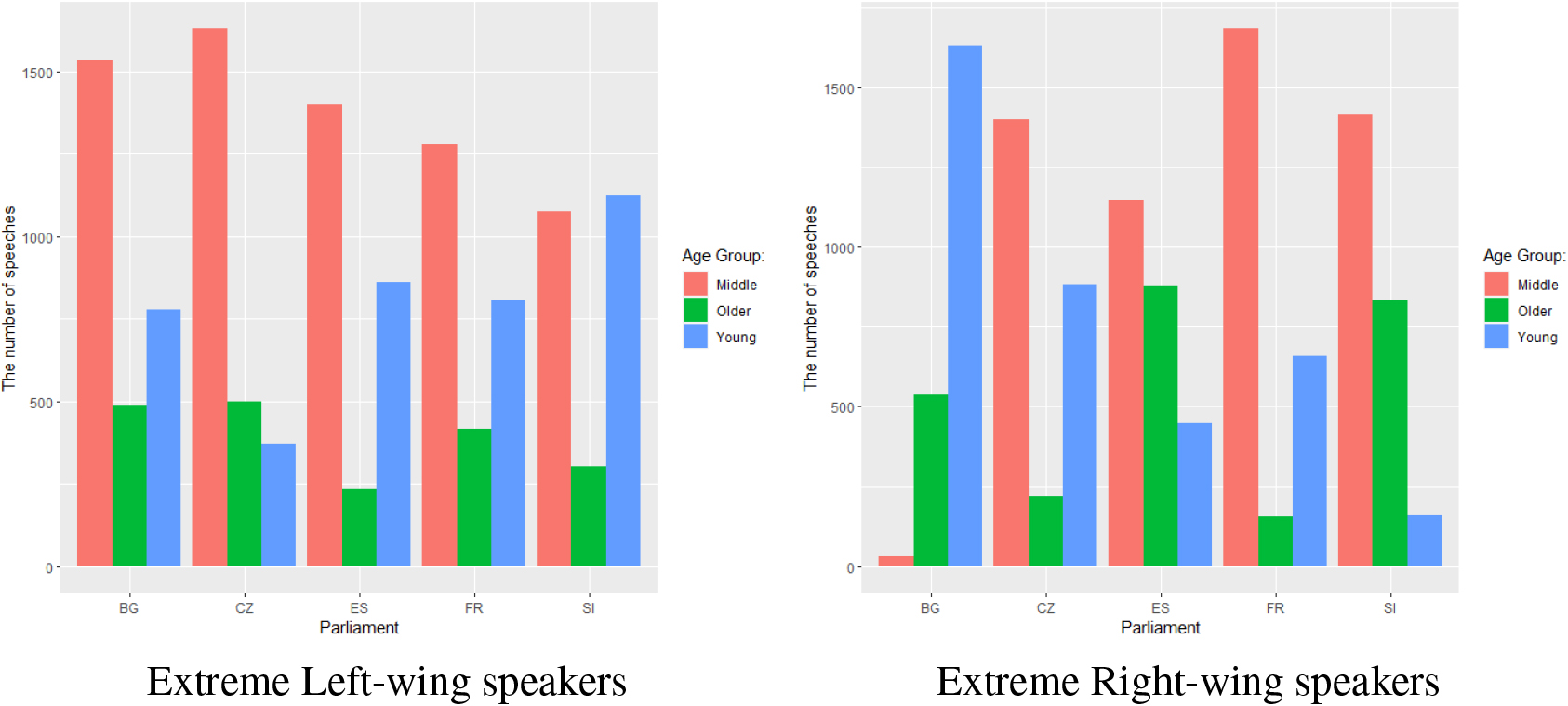

The distribution of the number of speeches relative to the MP’s age group across the parliaments for extreme left- and right-wing MPs.

The distribution of the number of speeches relative to the MP’s age group across the parliaments for left- and right-wing MPs.

We first plot the differences in political orientation based on the age of speakers. Similarly to Section 5.1.1, we split the age span into three intervals, using the first and third quartile as the thresholds: younger MPs (less than the first quartile), middle-aged MPs (between the first and third quartile), and older MPs (more than the third quartile). The distribution plots for left- and right-wing speeches are presented in Fig. 5. We can observe that for most of the parliaments, the extreme-left speakers are predominantly middle-aged. The exception is Slovenia, where both younger and middle-aged speakers form the majority of extreme left-wing speakers. The extreme-right politicians in most parliaments are also predominantly middle-aged, except in Bulgaria, where the younger MPs form the majority of this group.
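The quartile-based split can be illustrated as follows. The function name `age_groups` is hypothetical, and two details are assumptions: quartiles are computed with the default (exclusive) method of Python's `statistics.quantiles`, and ages exactly equal to a quartile fall into the middle-aged group. The source does not state which quartile estimator was used or whether quartiles were computed over MPs or over speeches.

```python
import statistics
from typing import List, Sequence


def age_groups(ages: Sequence[float]) -> List[str]:
    """Label each age as younger, middle-aged, or older using Q1 and Q3."""
    q1, _median, q3 = statistics.quantiles(ages, n=4)

    def label(age: float) -> str:
        if age < q1:
            return "younger"
        if age > q3:
            return "older"
        return "middle-aged"

    return [label(age) for age in ages]


# Invented ages 30..69, one speaker per year of age.
toy_ages = list(range(30, 70))
labels = age_groups(toy_ages)
```

By construction, roughly a quarter of the observations fall into each of the younger and older groups and about half into the middle-aged group, which should be kept in mind when reading the group sizes in Fig. 5.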

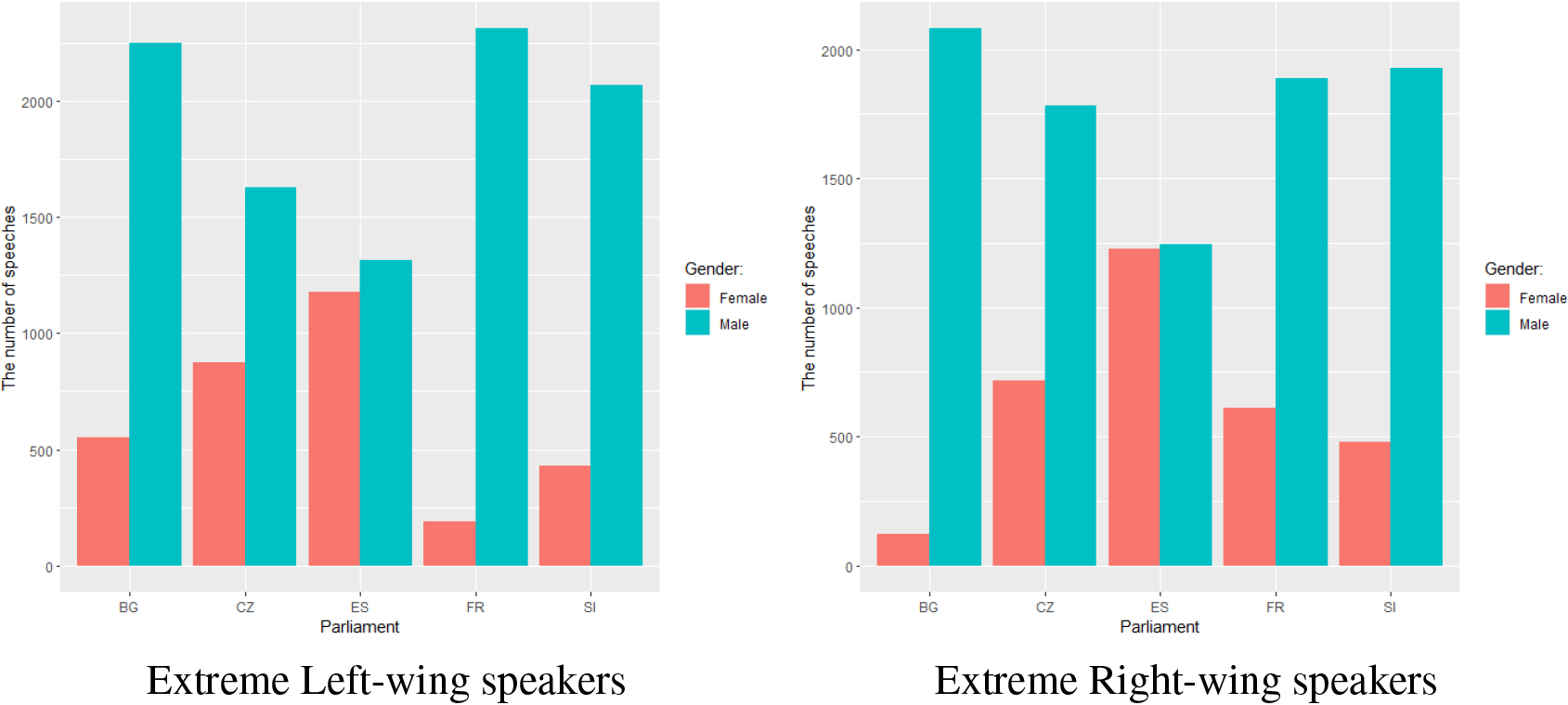

Similarly, we compared gender differences for both extreme-left and extreme-right positioned speakers in Fig. 6. The differences in gender distributions are the most pronounced in Bulgaria and France. While for Bulgaria the number of female speakers drops going from extreme-left to extreme-right, for France this number increases.

We presented a mono- and cross-lingual methodology based on cross-domain transfer learning and Bayesian statistical testing for the analysis of parliamentary speeches. The proposed methodology and constructed models can be applied in a uniform way to the parliamentary speeches in the ParlaMint corpora collection (and other parliamentary datasets with similar information). Our methodology covers the analysis of sentiment and emotions, as well as the prediction of metadata such as age, gender, and political orientation of the speakers. The source code of the developed methods and evaluation scenarios is publicly available.12

We discovered that in all countries except France, the age and gender of speakers are strong factors in the political discourse. Further, we found a big difference in the discourse between extreme left- and right-wing parties in all analyzed countries. Surprisingly, there is also a considerable difference between center-left and center-right parties in all countries except France. The sentiment analysis shows considerable differences between parliaments. The Czech, Spanish, and United Kingdom parliaments express less negative than positive sentiment, the Bulgarian and French parliaments have a balanced distribution, and in the Slovenian parliament, negative sentiment dominates. The sentiment also differs significantly between age groups. The situation is different for emotions: positive emotions are strongly dominant in all countries except France and the UK, where positive and negative emotions are almost balanced.

There are many open avenues for further work. A larger analysis of all 16 parliaments in the ParlaMint collection would produce a very interesting comparison between the parliaments, but it would require a much larger research team able to interpret the results. The proposed methodology could be extended with better training datasets for sentiment and emotions when they become available. We could also analyze a broader spectrum of emotions, but the currently existing datasets are inadequate for our purpose due to differences in the covered domains.

Footnotes

Acknowledgments

This work is based upon the collaboration in the COST Action CA18209 – NexusLinguarum “European network for Web-centred linguistic data science”, supported by COST (European Cooperation in Science and Technology). Marko Robnik-Šikonja received financial support from the Slovenian Research Agency through core research programme P6-0411 and projects J6-2581, J7-3159, and V5-2297. Encarnación Hidalgo Tenorio was financially supported by the European Social Fund, the Andalusian Government, and the University of Granada (Project References: A-HUM-250-UGR18 & P18-FR-5020). Petya Osenova was partially supported by CLaDA-BG, the Bulgarian National Interdisciplinary Research e-Infrastructure for Resources and Technologies in favor of the Bulgarian Language and Cultural Heritage, Grant number DO01-377/18.12.2020.