Abstract

This paper presents an improved extension of the previous algorithm of the authors called KAdam that was proposed as a combination of a first-order gradient-based optimizer of stochastic functions, known as the Adam algorithm and the Kalman filter. In the extension presented here, it is proposed to filter each parameter of the objective function using a 1-D Kalman filter; this allows us to switch from matrix and vector calculations to scalar operations. Moreover, it is reduced the impact of the measurement noise factor from the Kalman filter by using an exponential decay in function of the number of epochs for the training. Therefore in this paper, is introduced our proposed method

Introduction

In a previous work of the authors [2], the KAdam algorithm was introduced as a first-order method for stochastic function optimization. For the design of the KAdam algorithm, it was assumed that for each layer in a neural network there is an associated true state vector from a linear dynamic system, where, the predicted state estimated vector has the same dimension as the sum of the dimensions from the stacked weights and biases of its layer.

With the presentation of KAdam, it is confirmed that by using the Kalman filter [5] with Adam [6], the filter can add significant and relevant enough variations to the gradient (similar to the additive white noise randomly included to the gradient [7]) in order to find better solutions in the loss function. Furthermore, this version shows that it could be used as an initializer in on-line settings compared with: Momentum [9], RMSProp [11], and Adam [6].

However, even that KAdam presents a great performance in the carried out experiments, the time that takes to train a neural network is out of comparison with other gradient-based optimizers. The problem is that the size of the matrices from the Kalman filter depends on the number of neurons used per layer in the neural network architecture. Hence, if the number of neurons is increased, the dimension of the matrices directly affects the time that takes to compute the matrix inverse. Furthermore, the authors realized that when the loss is near to the neighborhood of the optimal minimum, KAdam starts to present a noisy behavior due to the fixed measurement noise considered for the Kalman filters. Thus, if the number of epochs needed to converge is increased, the algorithm present problems to reach lower values in the loss function.

In order to solve the identified problems, firstly, in the design of the new algorithm, it is proposed to use 1-D Kalman filters for each parameter from the loss function instead of using one Kalman filter per layer on the neural network. On the other hand, it was used an exponential decay over the measurement noise considered in the Kalman filters.

With these changes to the original algorithm, it is presented

This paper is organized as follows, in the next section a quick description of the gradient descent algorithm is made, their variants and some of their most popular state-of-the-art optimizations. Then, in Section 3 a brief introduction to the Kalman filter and its implementation for a 1-D problem is provided. After that, in Section 4 the

Gradient descent

Gradient descent is a first-order method to perform stochastic functions optimization.

Let

The gradient descent algorithm in neural networks is one of the most popular techniques used to update the parameters (weights and biases) of the model, using the loss function as the objective function to optimize.

Variants

The gradient descent algorithm has three main variants: Stochastic gradient descent (SGD), Batch gradient descent (Batch GD a.k.a. Vanilla GD) and Mini-batch gradient descent (Mini-batch GD); which differs in the amount of data used to compute the gradient.

Usually this variant is more accurate while it is updating the parameters, but it takes longer to finish the optimization because of the number of updates that the algorithm performs.

Compared to SGD this variant is faster when it is updating the parameters. However, this speed improvement directly affects the accuracy of the parameter updates.

This variant has the best from both worlds; it combines part of the accuracy from SGD and the speed improvement from Batch GD. Theoretically, there is an optimal mini-batch size where the performance and the computational complexity are balanced.

There are different methods designed in order to accelerate and improve the gradient descent algorithm and its variants. Each state-of-the-art method present its own strengths and weaknesses, see [10] for further details with a deeper overview.

From here, let

The momentum term drives the parameters into a relevant direction, accelerating the learning process and dampening oscillations on the parameters update. However, the same term could present a blind-rolling behaviour on the algorithm, because it keeps accumulating momentum from the previous update vectors.

In Eq. (6),

One of the best features from AdaGrad is that it reduce the importance of tuning the learning rate manually. Nevertheless, this main advantage is also a problem at a certain point of the optimization because the learning rate start to shrink and eventually it will be infinitesimal, hence the algorithm will be no longer able to keep learning.

where, RMS stands for Root Mean Square. In Eq. (7), the numerator is used as an acceleration term by accumulating previous gradients similar to the momentum term, but with a fixed size window. The denominator is related to AdaGrad by saving the squared past gradient information, but with a fixed size window to ensure progress is made later in training.

Similar to AdaGrad the RMSProp’s algorithm is storing the past squared gradients, but instead of keep accumulating them over the training, it is using an exponential moving average

Curiously, in the Adadelta’s method derivation in [13], it can be observed that before the second idea of its authors the update parameters rule is just like the update rule from RMSProp; but the authors of Adadelta considered an extra term to make a units correction over the parameters and not the gradient.

Adam has the best from both worlds, the algorithm is using exponential moving averages over the past gradient (

The Kalman filter [5] is a recursive state estimator for linear systems. First lets assume a dynamic linear system in the state space format:

where given the time-step

The true state vector

where,

Therefore, the Kalman filter provides estimates of some unknown variables given observed measurements over time. The estimation can be divided into a two-step process: prediction and update.

First, a predicted (a

Then, for the update phase, the optimal kalman gain matrix

In Eqs (16) to (17) the true state, dynamical model, and measurements are described with vectors and matrices. On the other hand, if the problem described lies in 1-D vector space, then it is represented in terms of scalars and constants. Thus, the equations for the Kalman filter can be rewritten as follows:

where, the matrix operations are replaced with scalar operations and the matrix inverse is replaced with the scalar multiplicative inverse (reciprocal value).

In the design of the KAdam’s algorithm one Kalman filter for each layer of the neural network was used, i.e. assuming a neural network with

As can be seen in Eq. 20 there is an inverse matrix computed for the Kalman filter, then the algorithmic complexity is in function of the architecture for the neural network. Moreover, if this first version is used in on-line training settings the algorithm takes longer to finish the optimization compared with other gradient-based optimizers.

For sKAdam design, it is proposed to use one Kalman filter for each parameter of the loss function. Hence, the Eqs (23) to (27) can be used because now the problem lies in 1-D.

Kalman filter parameters

Similar to the first version of KAdam, the variables

where

With these considerations, the Eqs (23) to (27) of the scalar Kalman filter for this particular implementation can be rewritten as follows:

The equations for sKAdam use a

Then the equations for the first Eq. (11) and second Eq. (12) moments estimated from Adam now use the estimated gradient:

and the equations for the bias-corrected first moment estimate Eq. (13), the bias-corrected second moment estimate Eq. (14), and the parameters update rule Eq. (15), they remain the same as in the Adam algorithm.

In Algorithm 1 is shown the pseudocode for sKAdam.

To empirically evaluate the performance of our method, different experiments werew carried out with popular benchmark classification and regression problems. In the experiments there is a comparison over the loss reduction in mini-batch and full-batch settings against other gradient-based optimizers, using feed-forward neural networks with fixed architectures (experimentally selected according each experiment) and the mean squared error (MSE) as the loss function.

In every experiment, all the models start the optimization with the same parameters (weights), which are randomly initialized. The settings for the hyper-parameters of the optimizers are the recommended by the authors of each algorithm, except for the learning-rate which is fixed here to the value of

Each experimental result and comparison is presented in two figures in order to the reader can clearly appreciate the dynamic of each algorithm because the lines that represent each algorithm behaviour overlap very often and they can occlude each other.

MNIST database classification experiment

The popular classification problem for the hand written numbers. Originally the dataset consists of images of 28

MNIST 2-D visualization using the t-SNE implementation from scikit-learn.

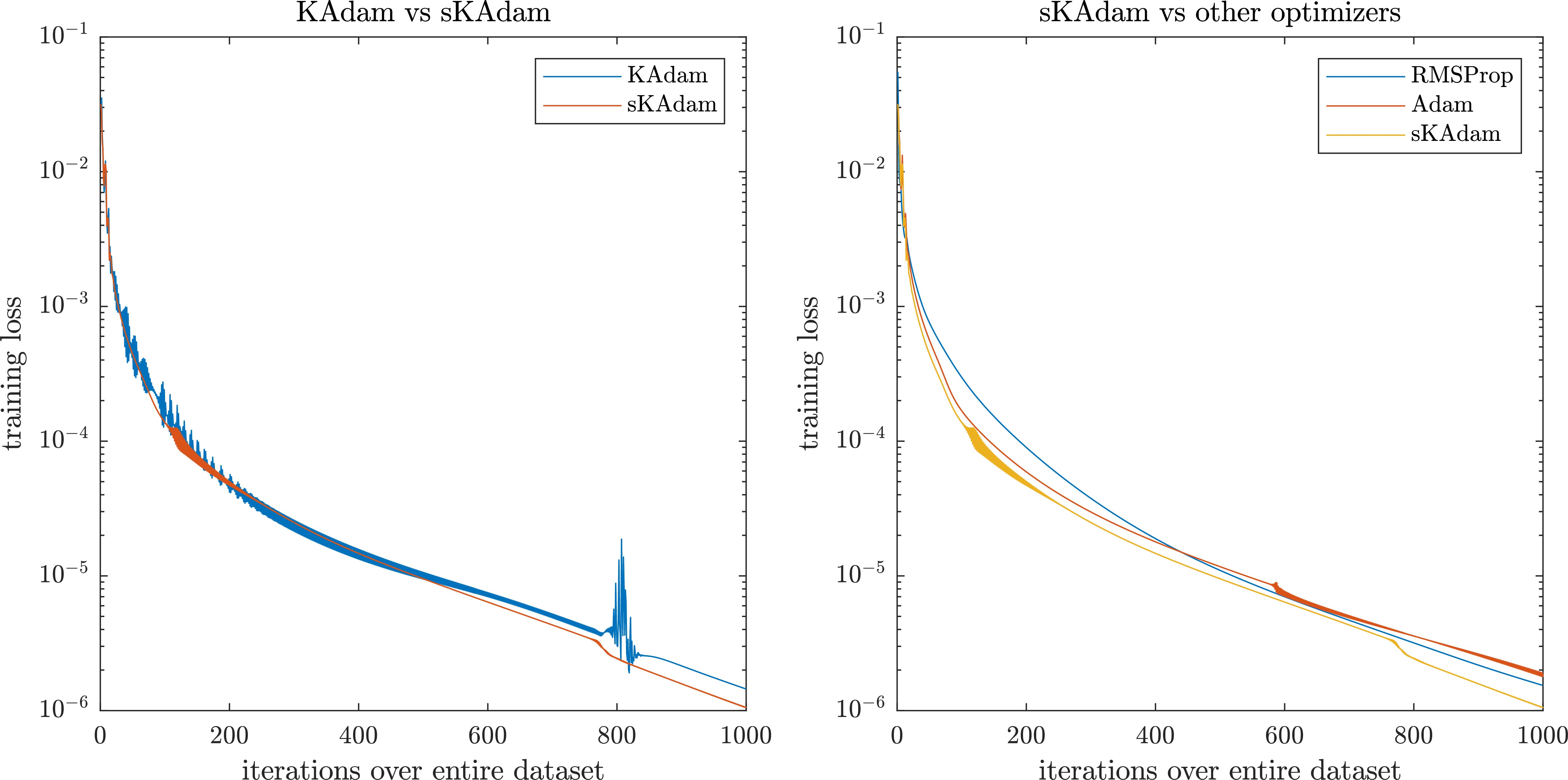

MNIST experiment in mini-batch settings – comparison of the loss optimization over the trainings.

For the architectures of the neural networks were set to (10, 10) neurons, hyperbolic tangent and sigmoid as the activation functions respectively. All the algorithms performs the optimization with 60,000 patterns from the transformed dataset over 1,000 epochs.

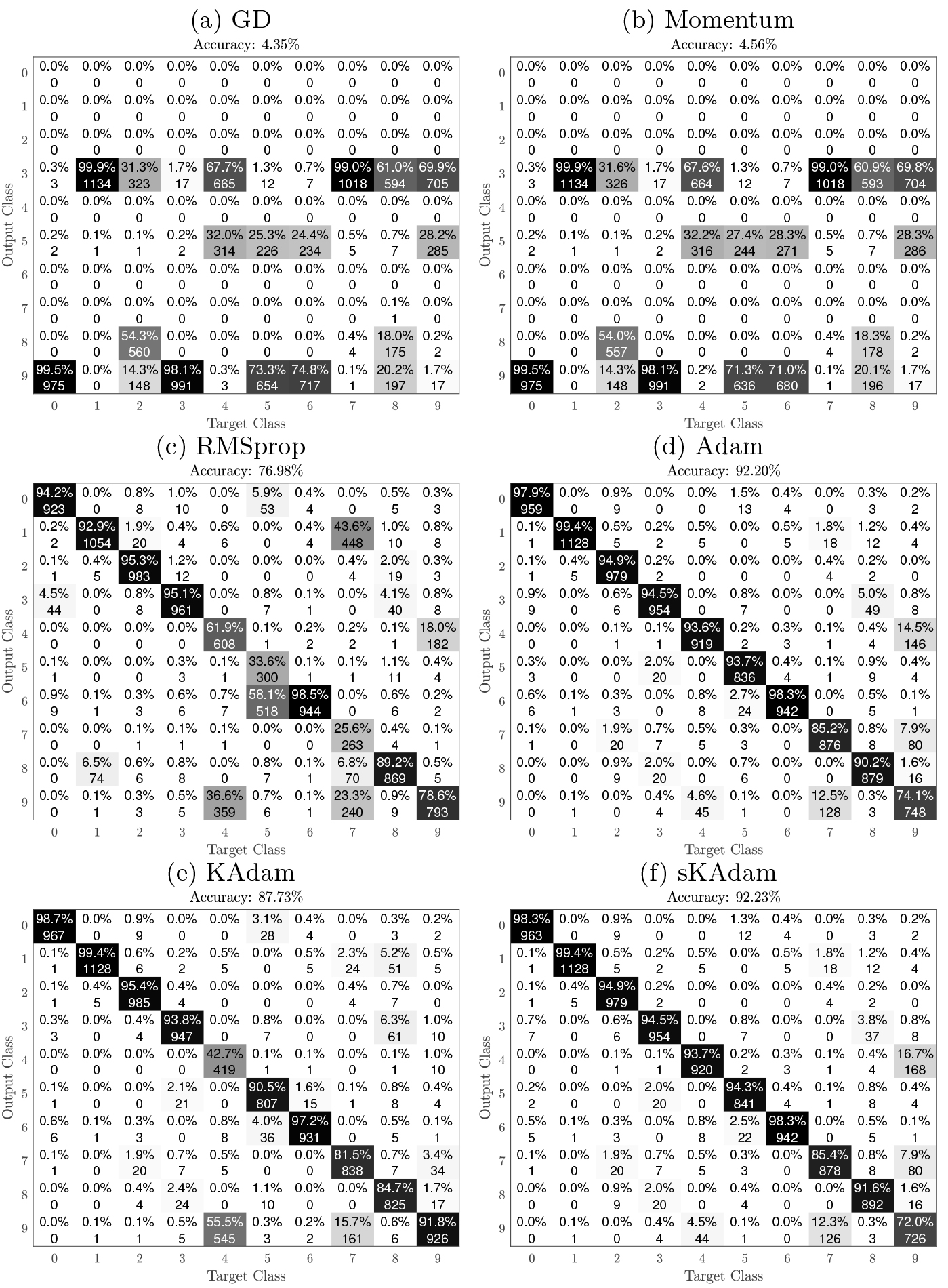

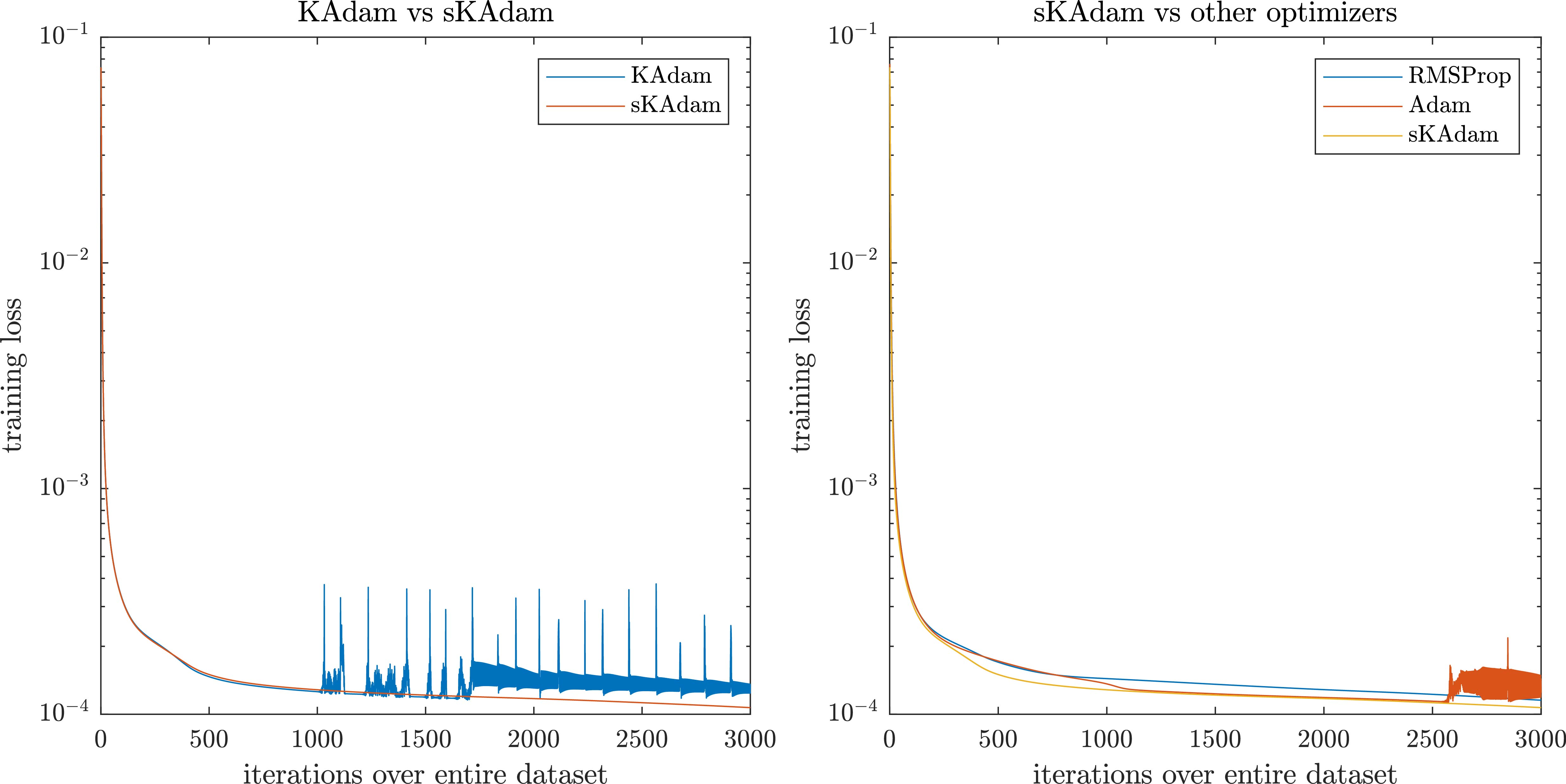

As a first comparison, the training was run in mini-batch settings to test the improvement in the execution time that sKAdam reaches. Also, the performance in the optimization was tested and the loss reduction of our method was compared against GD, Momentum, RMSProp, Adam, and KAdam.

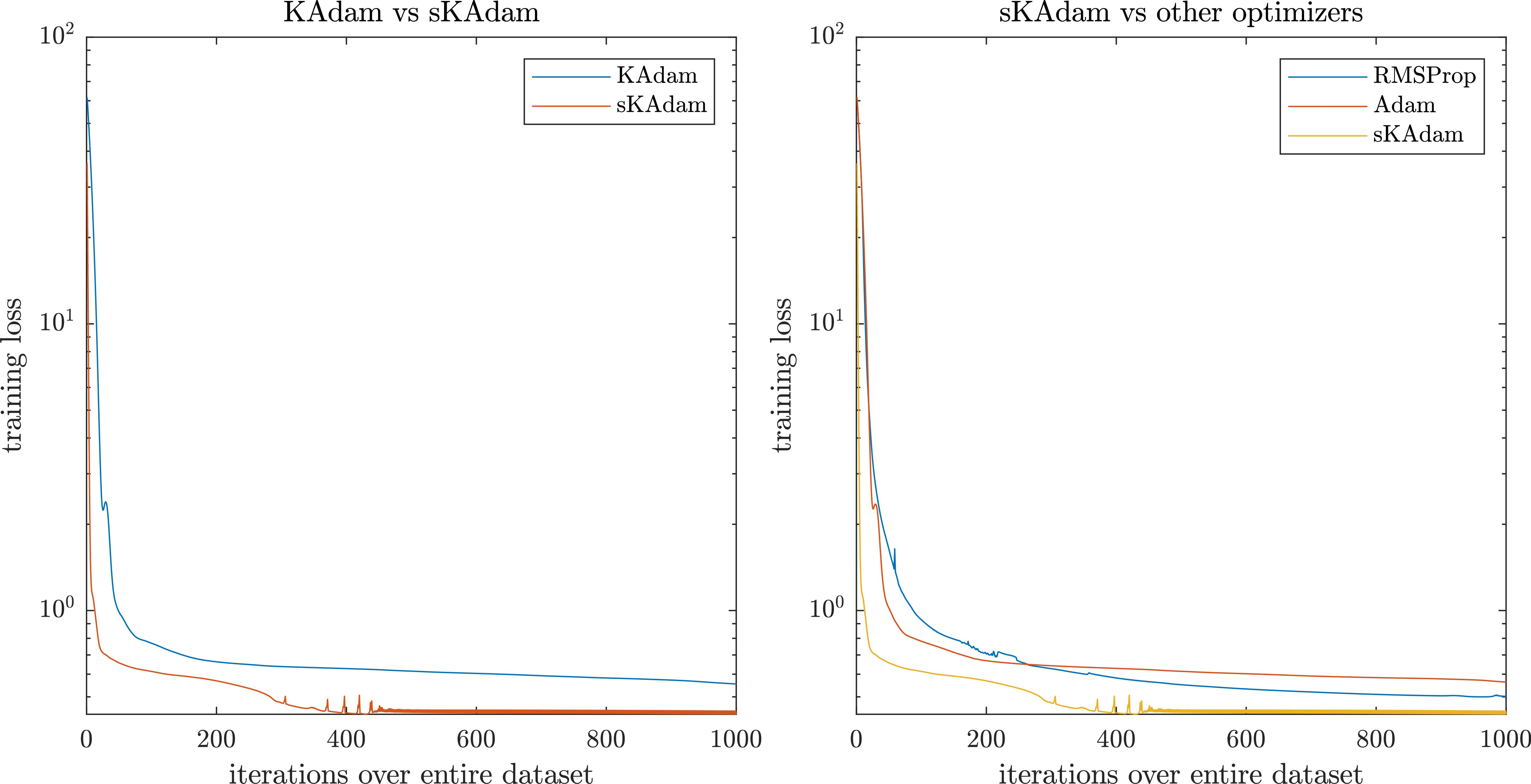

In Fig. 2 it can be observed the experiment in mini-batch settings. Here, on the left side, it can be seen that

In a second comparison, the training was run in full-batch settings, where the authors already know that the execution time may not present a considerable difference with the original KAdam’s algorithm and the other optimizers.

MNIST experiment in full-batch settings – comparison of the loss optimization over the trainings.

MNIST experiment – last 100 epoch of the trainings.

This time, at the left side of Fig. 3 it can be seen that again sKAdam presents a smoother behavior compared with KAdam. However, on the right side, it can be observed that the adaptive learning-rate methods present similar results, and only RMSprop presents noisy behavior.

In Fig. 4 there is a close up to the dynamics of KAdam and

Nevertheless, as it can be observed the

Table 1 presents a summary of the results obtained over the optimization in both settings. Where it can be seen how even that KAdam and

MNIST – Comparison table

As it can be seen either in the comparisons and at the Table 1 of the experiment, the adaptive learning-rate methods as RMSProp, Adam, KAdam, and sKAdam present the best performance over the optimization. However, for the other methods, only the execution time is a remarkable feature in the comparison. Therefore in the following experiments, the gradient descent and momentum methods were removed from the comparison results.

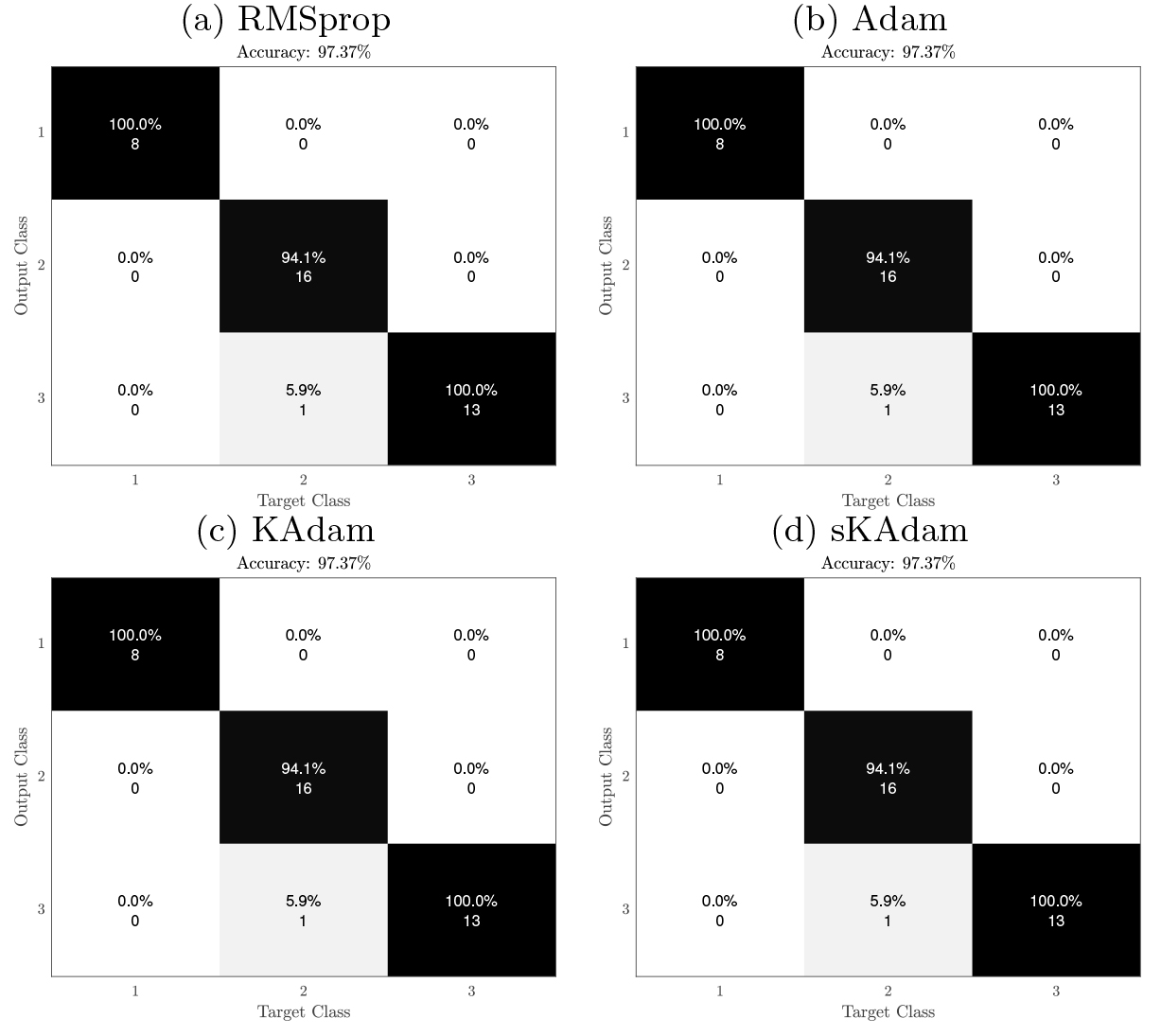

In Fig. 5 it can be observed the confusion matrices from all the algorithms in the comparison. These matrices were calculated from the generalization performed with the trained neural networks in full-batch settings and using the test dataset, which contains 10,000 patterns.

Confusion matrix for MNIST Generalization.

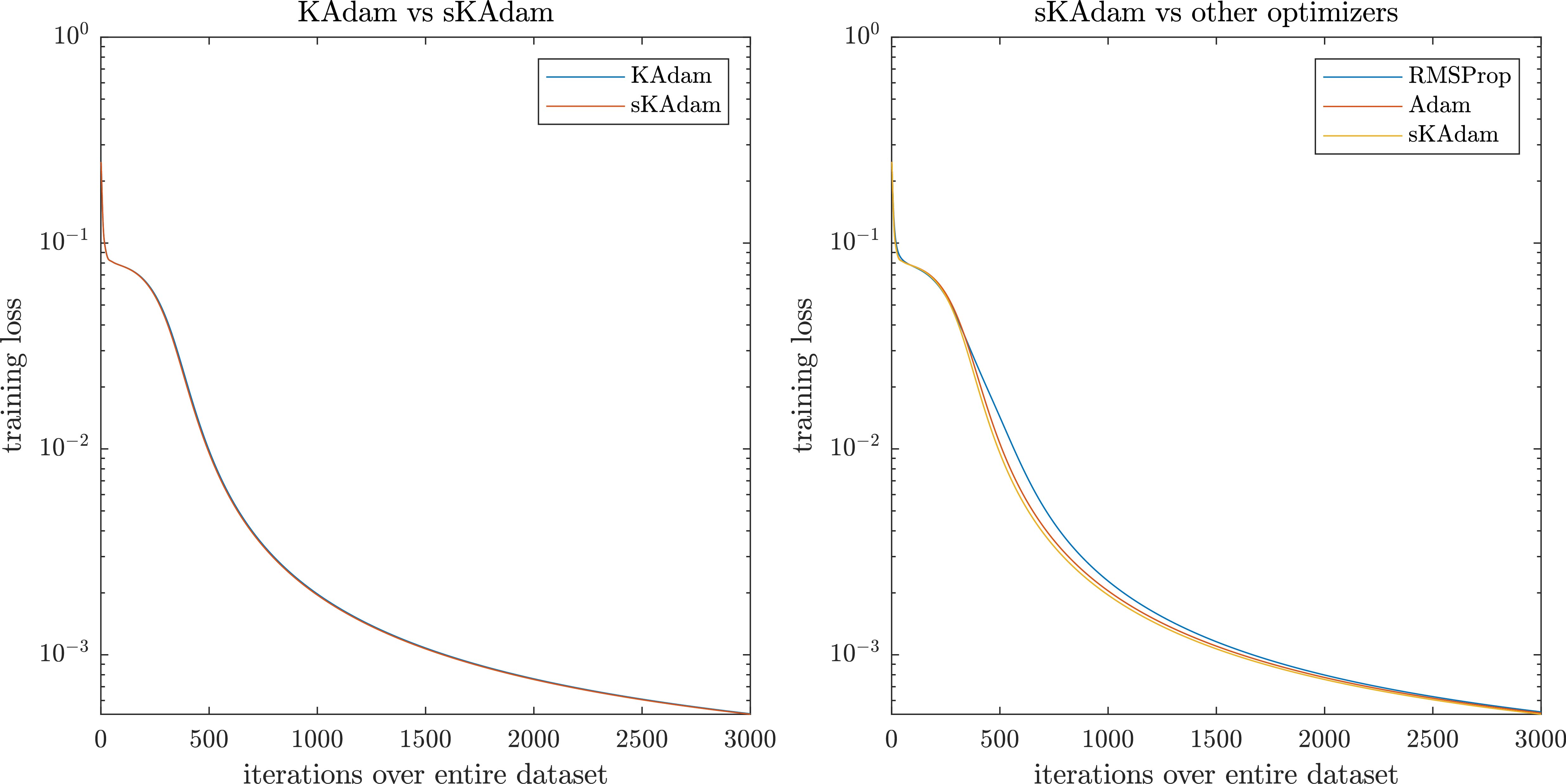

In order to test the behavior of sKAdam with datasets that present a low noise factor, it was selected this experiment that deals with the classification problem of two interleaving half circles in two dimensions. The dataset contains 10,000 patterns, generated by a function2

The loss reduction comparison was run over 3,000 epochs and using a mini-batch size of 256. For the architecture of the neural networks, two layers were used with (10, 1) neurons, hyperbolic tangent and sigmoid as the activation functions respectively.

In Fig. 6, it can be seen again that using mini-batch settings

Moons optimization in mini-batch settings – comparison of the loss reduction over the trainings.

On the other hand, Fig. 7 shows that using full-batch settings there is not a remarkable difference in the performance from all the algorithms.

Moons optimization in full-batch settings – comparison of the loss reduction over the trainings.

Nevertheless, in the summarize presented on Table 2 it is confirmed that KAdam and

Moons – Comparisons table

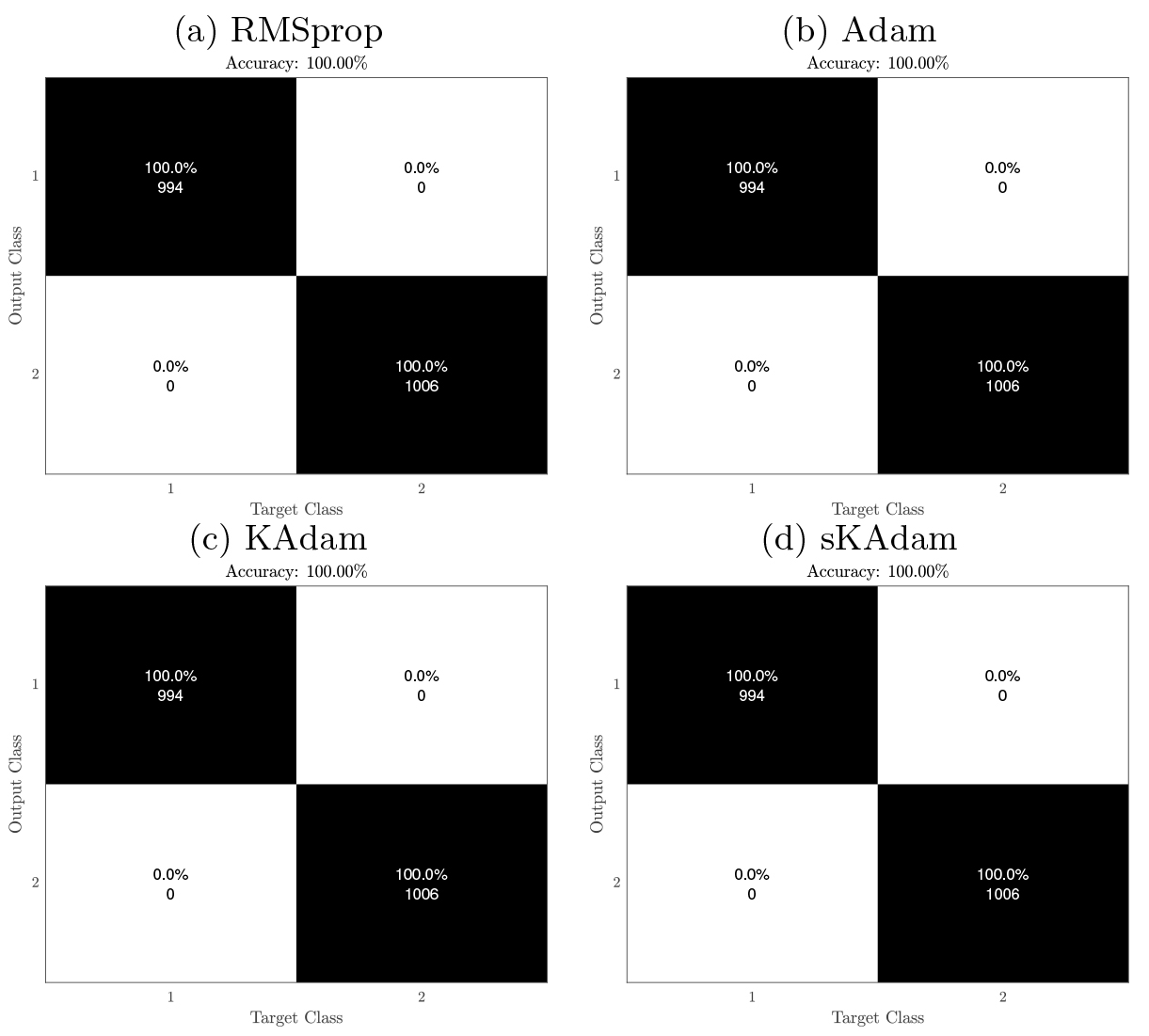

In Fig. 8 it can be observed the confusion matrices from all the algorithms in the comparison. These matrices were calculated from the generalization performed with the trained neural networks in full-batch settings and using the test dataset, which contains 2,000 patterns.

Confusion matrix for Moon Generalization.

One of the best-known dataset for pattern recognition. The problem (obtained from [4]) has four features (sepal length/width and petal length/width) to classify different iris plant species. In the classification, there are three different kinds of iris plants, but only one class is linearly separable from the others and also the latter are not linearly separable from each other. The dataset consists of 150 patterns (112 used for training) with 50 instances per class.

The loss reduction comparison was run over 1,000 epochs and using a mini-batch size of 32. For the architecture of the neural networks, two layers were used with (10, 3) neurons, hyperbolic tangent and sigmoid as the activation functions respectively.

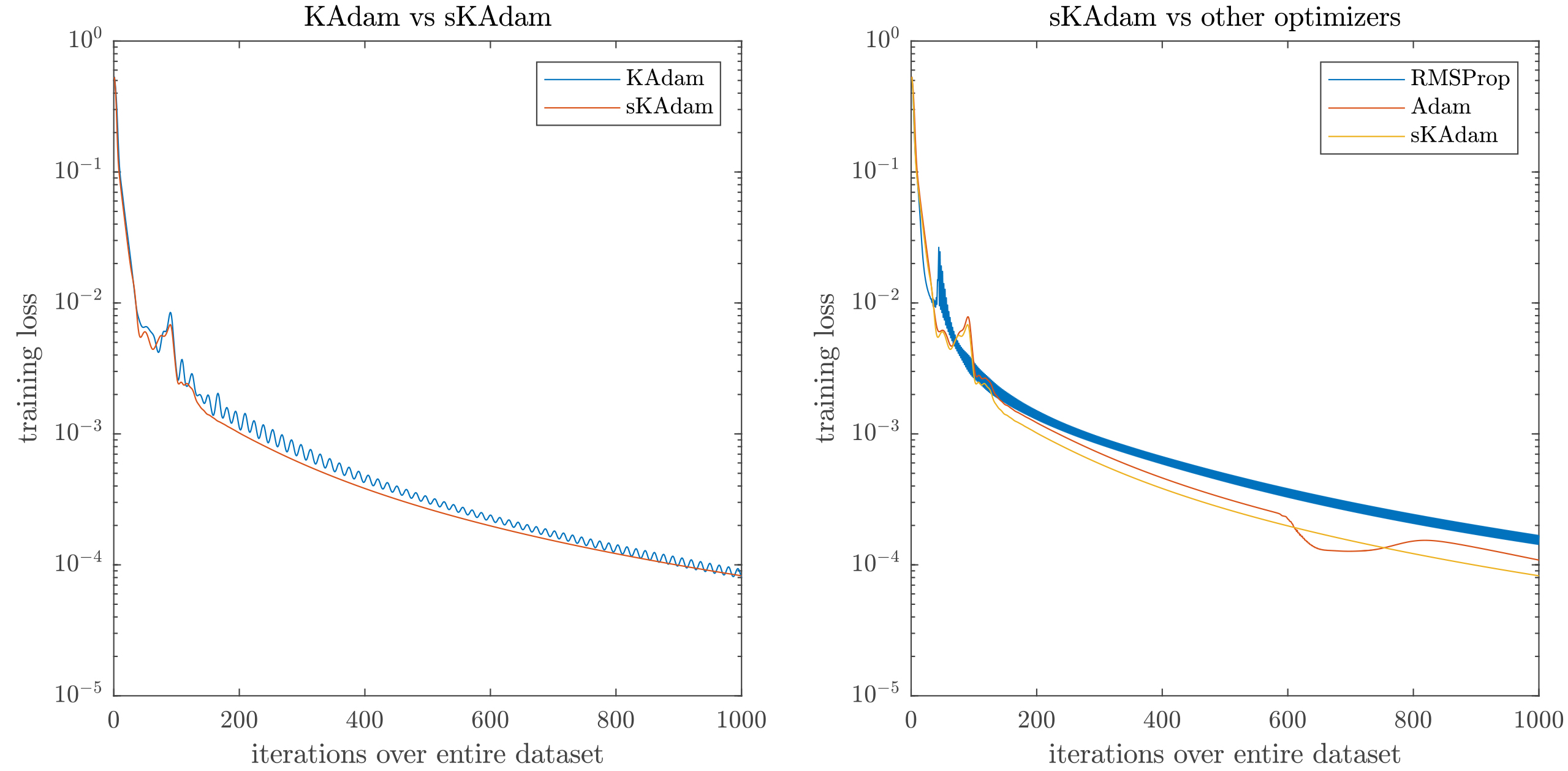

As it can be observed in Fig. 9, this time in the mini-batch settings is where all the algorithms present close result in the loss function.

Iris optimization in mini-batch settings – comparison of the loss reduction over the trainings.

On the other hand, Fig. 10 shows that in full-batch settings

Iris optimization in full-batch settings – comparison of the loss reduction over the trainings.

In Table 3, this time KAdam presents closer achievements in comparison with

Therefore, the execution time in KAdam increases as the the mini-batch size decrease. Thus, if its algorithm is used in a problem with a large numbers of patters and the optimization use mini-batch settings or online-settings, the problem of the execution time becomes serious.

Iris classification – Comparisons table

Confusion matrix for Iris Generalization.

In Fig. 11 it can be observed the confusion matrices from all the algorithms in the comparison. These matrices were calculated from the generalization performed with the trained neural networks in full-batch settings and using the test dataset, which contains 38 patterns.

The regression/classification problem [3] for the quality of different wines. As an evaluation for the quality, there are 11 features related to physicochemical tests for each wine sample. The authors of the database shared two datasets related to the quality of different red and white wines. For this experiment, it was used the red wine dataset, which contains 1,599 samples (1,119 used for training).

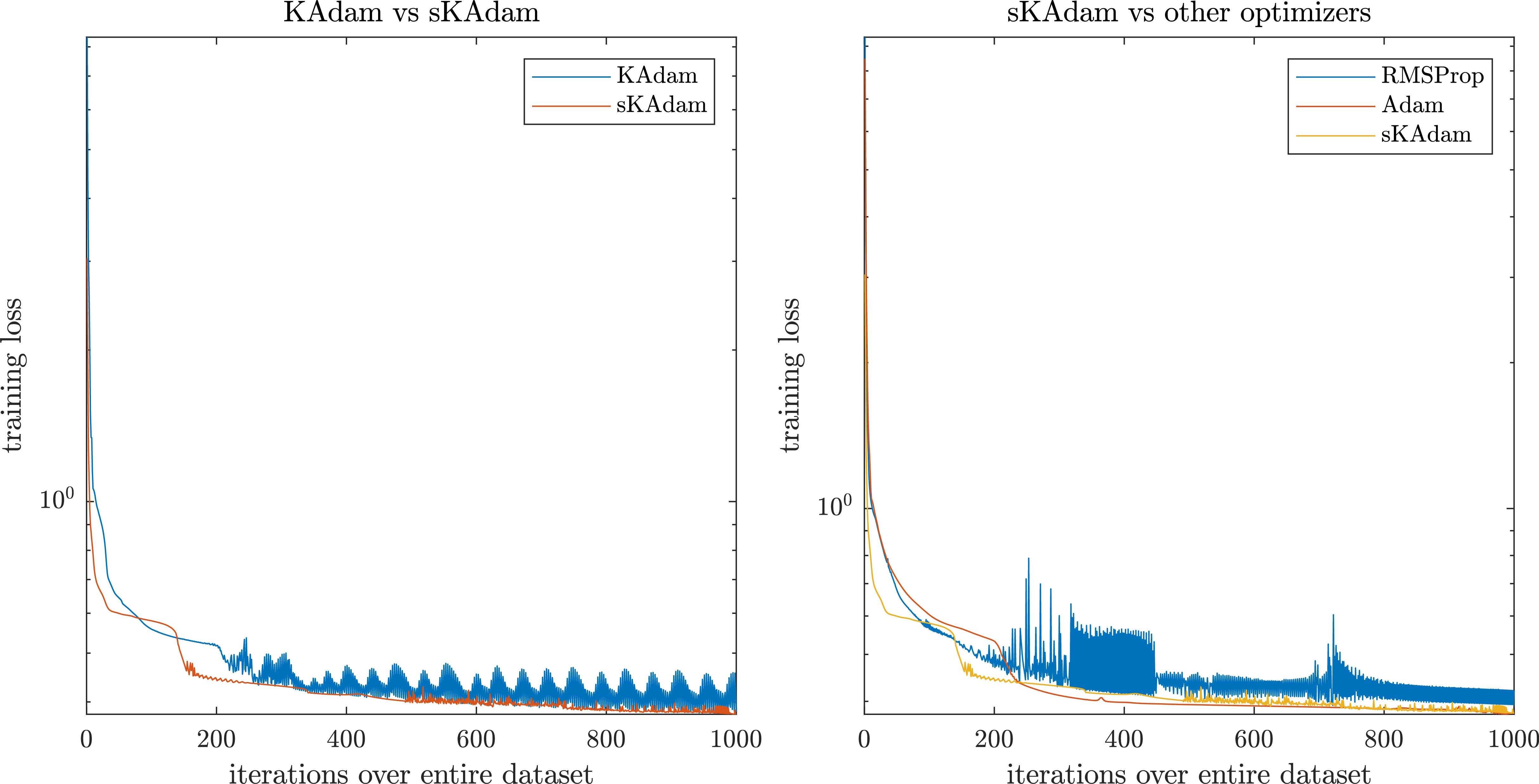

The loss reduction comparison was run over 1,000 epochs and using a mini-batch size of 256. In the architectures of the neural networks, two layers were used with (20, 1) neurons, hyperbolic tangent and linear as the activation functions respectively. This experiment uses more hidden units with the objective of test the impact in the execution time.

Red wine regression/classification – Comparisons table

Red wine regression/classification – Comparisons table

Red wine optimization in mini-batch settings – comparison of the loss reduction over the trainings.

As Fig. 12 shows, in the mini-batch settings

On the other hand, it can be seen in Fig. 13 that in full-batch settings

Red wine optimization in full-batch settings – comparison of the loss reduction over the trainings.

It can be seen in Table 4 that the architecture used in this experiment increase dramatically the execution time for the KAdam’s algorithm in both mini-batch and full-batch settings due to the matrix inverse computed in KAdam vs the simpler calculation of the scalar multiplicative inverse that

Furthermore, it can be observed that

In Table 4, the columns min. loss present the minimum value for the loss function found for each algorithm, the columns min

In this work, it was presented a new version of our state-of-the-art gradient-based optimizer. An extension of KAdam improved with the scalar Kalman filter and an exponential decay over the noise considered in the measurement process. This allows the algorithm to explore in a fraction of the optimization as well as keep following the original gradients later in training, aiming to find new and potentially better solutions.

As it has been shown with our new proposed method, it was overpassed the problem of the inverse matrix from the KAdam algorithm by using scalar operations with 1-D Kalman filters. This allows

For future work, the authors want to extend this work using the proposal in deep neural networks and with famous architectures like the ResNet.

Footnotes

Acknowledgments

The authors thank the support of CONACYT Mexico, through Projects CB256769 and 443 CB258068 (“Project supported by Fondo Sectorial de Investigación para la Educación”) and PN-4107 (“Problemas Nacionales”).