Abstract

The need to map the evolution of trends in a field of activity arises when so much data is available on that activity that manual exploration can no longer reveal how the field is evolving. Topic and trend mapping is a mature field with hundreds of publications on approaches, methods, tools and algorithms for data collection, analysis, feature extraction and reduction, clustering and visualisation. Our study aims to map the evolution of topics published by the journal

Keywords

Introduction

The need to map the evolution of trends in a field of activity arises when so much data is available on that activity that manual exploration can no longer reveal how the field is evolving. As the digital traces of a field accumulate, domain specialists increasingly have to rely on software to track the evolution of their activity and to plan better for the future. Topic and trend mapping is a mature field with hundreds of publications on approaches, methods, tools and algorithms for data collection, analysis, feature extraction and reduction, clustering and visualisation. The availability of many powerful and user-friendly data analysis and visualisation packages has made topic mapping increasingly popular, not only in the fields in which the methods originated, such as bibliometrics and scientometrics, but in any field or activity for which a significant amount of data is available in digital format (see Ibekwe-SanJuan, 2007 for a review).

Our study aims to map the evolution of topics published by the journal

While many of the previous studies in these areas analysed data from several journals (Janssens et al., 2006) or authors, it is not uncommon to find studies focusing on mapping the intellectual structures of a single author or of a single journal provided the available data is sufficient for clustering and mapping schemes. For instance, Li and Xu (2019) studied the evolution of titles published in the

Our goal differs from that of Li and Xu (2019) in that they examined the informativeness of titles, whereas we study the evolution of thematic trends (i.e., the topics on which the journal has been publishing, not the stylistics and informativeness of its titles). More fundamentally, Li and Xu (2019) derived a typology of titles according to semantic features, and their feature extraction and analysis of the linguistic properties of constructs found in the titles was performed manually. They gave hardly any detail on how this was done in practice. Was it done by one person or by several? According to what rules and parameters? Previous research has shown that manual semantic analysis of content raises serious methodological issues and biases, which should be laid bare in the methodology section. The article gave no details about the difficulties encountered in deciding which semantic category a title should be assigned to.

In contrast to Li and Xu (2019), our study of the evolution of topics published in EFI relies on automatic processes and tools developed over several decades, which reduce the biases linked to human interpretation. This is not to say that automatic methods have no biases and limitations of their own, but these are known and documented in the literature. We will return to this point in the discussion section.

Analysis methodology

We conducted an analysis of the titles of the articles published in the journal

Corpus splitting

Our corpus covers more than 36 years, from 1983 when this journal was created to the first quarter of 2019 when the data collection was done. Thus 2019 data is incomplete as only the first issue of the journal had been published and entered into the database at the time of corpus collection. In such diachronic studies, it is customary to split the corpus into manageable time spans in order to obtain intelligible maps and better follow the trends. We tried three approaches to corpus splitting:

i- by Editor in Chief of the journal (EIC). The journal has had three EICs to date. This yielded a very unequal partition of the corpus (see Table 1), as the first EIC ran the journal for 30 years, from 1983 to 2013. The second EIC ran the journal for three years (2014–2017), although 2014 was a blank year in which no issues were published, hence there are no titles for that year. The third and current EIC had run the journal for only one year at the time of data collection (2018 to the first quarter of 2019).

Number of papers published during each Editor-in-chief’s term of office

Bearing this skewness in mind, we believe that mapping the titles by EIC can still tell us something about the topics prevalent during each EIC's mandate and about how the journal’s centre of gravity has evolved over time.

A more traditional way of splitting a corpus that spans a long period is to partition it into equal intervals of years. Previous studies have tended to split the corpus into periods of five or six years, as this time span was judged sufficient to perceive trends (Chen et al., 2010). In this study, we tested both intervals, of 5 years and of 6 years.

The distribution of titles in the two intervals is given in Table 2 above. P1

Distribution of titles published in the journal by intervals of 5 and 6 years

As shown in Table 2, there is no significant difference between splitting the corpus by 5 years and by 6 years. In the following, we will analyse the evolution of titles by EIC and by six-year interval.
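Splitting a corpus into equal intervals can be sketched in a few lines. This is a minimal illustration and not the procedure actually used for the study: the function names are hypothetical, and folding a short trailing period into the preceding one is an assumption, made so that the single 2019 issue joins the last six-year period (2013–2019).

```python
def period_bounds(start_year, end_year, width):
    """Return inclusive (first, last) year pairs covering start_year..end_year."""
    bounds = []
    first = start_year
    while first <= end_year:
        last = min(first + width - 1, end_year)
        bounds.append((first, last))
        first = last + 1
    # Assumption: a trailing period shorter than `width` is merged
    # into the period before it instead of standing alone.
    if len(bounds) > 1 and (bounds[-1][1] - bounds[-1][0] + 1) < width:
        short = bounds.pop()
        bounds[-1] = (bounds[-1][0], short[1])
    return bounds


def assign_period(year, bounds):
    """Return the 1-based index of the period that contains `year`."""
    for index, (first, last) in enumerate(bounds, start=1):
        if first <= year <= last:
            return index
    raise ValueError(f"year {year} is outside the corpus span")


six_year = period_bounds(1983, 2019, 6)
five_year = period_bounds(1983, 2019, 5)
print(six_year)
print(assign_period(2001, six_year))
```

Each title would then be assigned to a period from its publication year before terms are extracted period by period.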

As the focus of our analysis is the titles published by the journal, we used the VOSviewer package (Van Eck & Waltman, 2010; 2020). VOSviewer constructs bibliometric maps taking as input several types of bibliographic units such as authors, journals, keywords or text data from the titles and abstracts of documents. In the latter case, significant text units have to be extracted first. For this, VOSviewer uses the sentence detection algorithm provided by the Apache OpenNLP library to detect sentences and perform part-of-speech (POS) tagging. It then identifies noun phrases (NPs) using surface morphological rules. VOSviewer seeks to extract compound NPs, i.e., the longest possible NPs found in a sentence. This ensures that more meaningful text units are preferred over uniterms (one-word terms), which tend to be vaguer. The software then performs some normalisation (stemming) on the NPs in order to regroup identical terms, i.e., it converts plural forms to singular, lower-cases all letters, and removes accents and non-alphanumeric characters. This has the effect of increasing the occurrence counts of some NPs (Van Eck & Waltman, 2010; pp. 34-35).
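The effect of this kind of normalisation can be illustrated with a short sketch. It is not VOSviewer's implementation: the function names are hypothetical, the singularisation rule is deliberately naive, and only the last word of a noun phrase (assumed to be its head noun) is singularised.

```python
import re
import unicodedata


def strip_accents(text):
    """Remove diacritics by decomposing characters and dropping combining marks."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch))


def singularise(word):
    """Very naive plural-to-singular rule, for illustration only."""
    if len(word) > 4 and word.endswith("ies"):
        return word[:-3] + "y"
    if len(word) > 3 and word.endswith("s") and not word.endswith(("ss", "us", "is")):
        return word[:-1]
    return word


def normalise_term(term):
    """Lower-case, strip accents and non-alphanumerics, singularise the last word."""
    term = strip_accents(term).lower()
    term = re.sub(r"[^a-z0-9\s]", " ", term)
    words = term.split()
    if words:
        words[-1] = singularise(words[-1])
    return " ".join(words)


print(normalise_term("Library Schools"))   # library school
print(normalise_term("Bibliographies"))    # bibliography
```

Because "Library Schools" and "library school" collapse to the same string, their occurrences are pooled, which is why normalisation raises the counts of some NPs.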

After NP extraction, the analyst has to decide which terms should be kept for the later stages of analysis. To this end, the software allows the analyst to set an occurrence threshold below which terms will not be considered for input into the clustering scheme. Typically, terms that occur only once (hapaxes) are excluded. Depending on the corpus characteristics, a higher threshold can be set empirically.

In VOSviewer, term occurrences can be counted in two ways: full or binary counting. In the first case, the total number of occurrences of a term in all the documents is calculated (full counting). In the second case, only the number of documents in which a term occurs is recorded, irrespective of how many times the term appears in each document (binary counting). Note that for our corpus of titles the impact of this distinction is minimal, since a term is likely to occur only once in a title; we cannot rule out the possibility that some common terms occur twice in a title, but this is unlikely.

We opted for binary counting since our objective is to identify trends in the publications over time. It was therefore more relevant to highlight topical terms that appeared in many documents, which can signal recurring topics. We also set a minimum of 2 occurrences for a term to be considered in the further stages of analysis.
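The difference between the two counting modes, and the effect of the occurrence threshold, can be shown on a toy example. This is a sketch under stated assumptions: the three "titles" and the function names are invented for illustration and are not taken from the corpus or from VOSviewer.

```python
from collections import Counter


def count_terms(docs, binary=True):
    """Count term occurrences over documents.

    binary=True  -> number of documents containing the term (binary counting)
    binary=False -> total number of occurrences (full counting)
    """
    counts = Counter()
    for terms in docs:
        counts.update(set(terms) if binary else terms)
    return counts


def apply_threshold(counts, min_occurrences=2):
    """Keep only the terms that reach the minimum occurrence count."""
    return {term: n for term, n in counts.items() if n >= min_occurrences}


# Invented toy data: each inner list holds the terms extracted from one title.
docs = [
    ["library education", "library education", "india"],
    ["library education", "information literacy"],
    ["information literacy"],
]

full = count_terms(docs, binary=False)
binary = count_terms(docs, binary=True)
print(full["library education"], binary["library education"])  # 3 2
print(sorted(apply_threshold(binary)))
```

Here "india" occurs in a single title and is dropped by the threshold of 2, while the repeated term in the first title counts once under binary counting.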

The next step is term filtering. To do this, VOSviewer calculates a relevance score for each term which is similar to the well known

Given the small size of our corpus, we decided not to apply the relevance score calculated by VOSviewer, so as to keep all the terms that met the minimum occurrence of 2 and avoid a massive elimination of terms from the analysis.

Clustering process

The terms selected from the titles with an occurrence of

Although the software recommends against the ‘no normalisation’ option, the maps produced with any of the other three options did not show significant differences in content (see below), so we opted for fractionalization (option 3), which normalises the strength of the connections between the nodes.

VOSviewer builds non-overlapping clusters, also known as “hard clustering” in the literature, and some terms may not be included in a cluster. Clusters are labeled using cluster numbers and are chosen automatically by the program based on the number of occurrences of the term and the relevance score (Van Eck & Waltman, 2020).

With the processes described in Sections 2.1–2.3 above, we obtained the following characteristics for the three methods of corpus splitting that we tried.

Clustering parameters for corpus split by Editor in Chief (EIC)

When looking at the corpus characteristics by intervals of 5 or 6 years (Tables 4 and 5), the first observable trend is the sharp decline in the productivity of the journal after the first 18 years (1983–2000). The journal published 232 titles in the first five-year period (1983–87) and 280 in the first six-year period (1983–88). By 1998–2002 (Table 4) and 2001–2006 (Table 5), these numbers had dropped to 89 (a 62% drop) and 81 (a 71% drop) respectively.
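The size of these drops can be checked directly from the title counts quoted above. The sketch below is only a worked calculation; the function name is hypothetical.

```python
def percent_drop(before, after):
    """Relative decrease from `before` to `after`, in percent."""
    return 100 * (before - after) / before


# Title counts quoted in the text for the first and fourth periods.
five_year_drop = percent_drop(232, 89)   # 1983-87 vs 1998-2002
six_year_drop = percent_drop(280, 81)    # 1983-88 vs 2001-2006

print(f"{five_year_drop:.1f}%")
print(f"{six_year_drop:.1f}%")
```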

Clustering parameters for corpus split by interval of 5 years

Clustering parameters for corpus split by interval of 6 years

Also, applying a threshold of 2 for minimum occurrence has the effect of reducing the number of terms considered for each period by approximately 80%, which is the standard observation in data analysis studies: about 80% of the initial items are filtered out in one way or another using occurrence or co-occurrence thresholds and do not appear on the final visualisations produced. Only about 20% of the data or less end up on the maps. For instance, in Table 3 (corpus analysed by EICs), we went from a total of 1658 extracted terms for EIC 1 to 341 terms which met the 2 occurrence threshold and furthermore co-occurred with other terms in the period covered by that EIC. We will return to the impact of this feature reduction in the discussion section.
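The two-stage reduction described here (an occurrence threshold, then a requirement that a term co-occurs with at least one other retained term) can be sketched as follows. The toy titles and all function names are invented for illustration, and the connectivity rule is a simplified assumption rather than a description of VOSviewer's internals.

```python
from collections import Counter
from itertools import combinations


def document_frequency(docs):
    """Binary counting: number of documents in which each term appears."""
    counts = Counter()
    for terms in docs:
        counts.update(set(terms))
    return counts


def cooccurrence_links(docs, kept_terms):
    """Count how often each pair of kept terms appears in the same document."""
    links = Counter()
    for terms in docs:
        present = sorted(set(terms) & kept_terms)
        for pair in combinations(present, 2):
            links[pair] += 1
    return links


def connected_terms(links):
    """Terms that take part in at least one co-occurrence link."""
    return {term for pair in links for term in pair}


# Invented toy data: terms extracted from five titles.
docs = [
    ["education", "library", "report"],
    ["education", "library"],
    ["report", "india"],
    ["big data"],
    ["big data"],
]

counts = document_frequency(docs)
kept = {term for term, n in counts.items() if n >= 2}
links = cooccurrence_links(docs, kept)
mapped = connected_terms(links)

print(sorted(kept))
print(sorted(mapped))
print(f"{100 * 341 / 1658:.1f}% of EIC 1 terms retained")
```

In this toy example "india" fails the occurrence threshold, while "big data" passes it but is dropped because it never co-occurs with another retained term.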

VOSviewer offers different types of maps for viewing links in a co-occurrence matrix:

VOSviewer offers further parameters for displaying the maps, of which we mention attraction and repulsion since they influence the way in which items are positioned on a map by the VOS layout technique. We selected an attraction value of 6 and a repulsion value of 1 to obtain the best visualisation of the terms and their connections. For more details on these options, see Van Eck and Waltman (2020: 21).

Results

In the following sections, using the cluster density view, we will analyse the maps of titles obtained by Editor-in-chief (EICs) and by six-year interval.

Evolution of topics by Editor-in-Chief (EIC)

We recall that the journal has had three EICs since its creation and that we split the corpus along these lines (see Tables 1 and 3 above for details of the corpus parameters per EIC). The first EIC’s mandate covered the period from 1983–2013 (30 years), EIC2 covered the period 2014–2017 (3 years) while EIC 3 only had 1 year at the time of corpus collection (2018–2019).

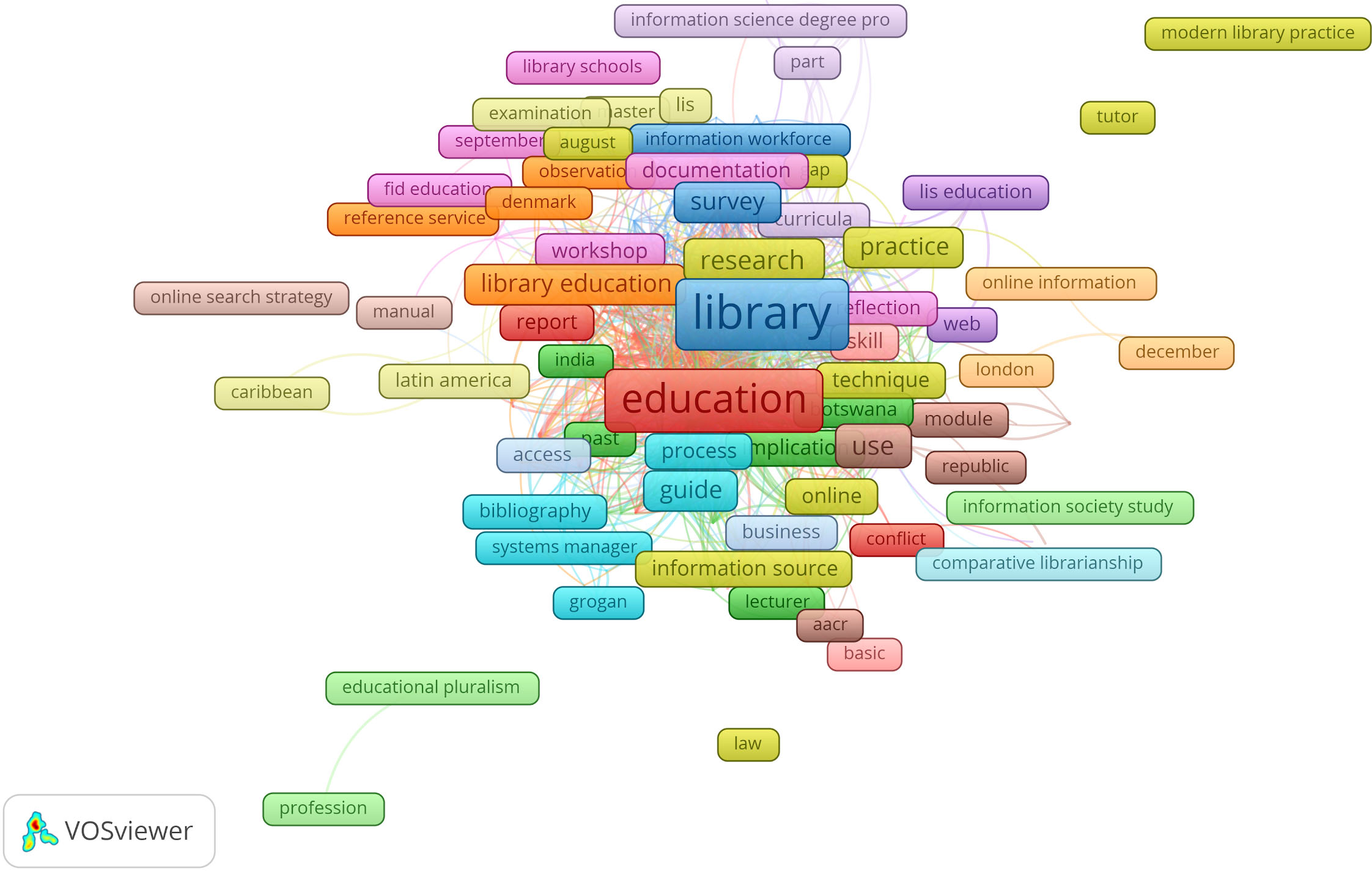

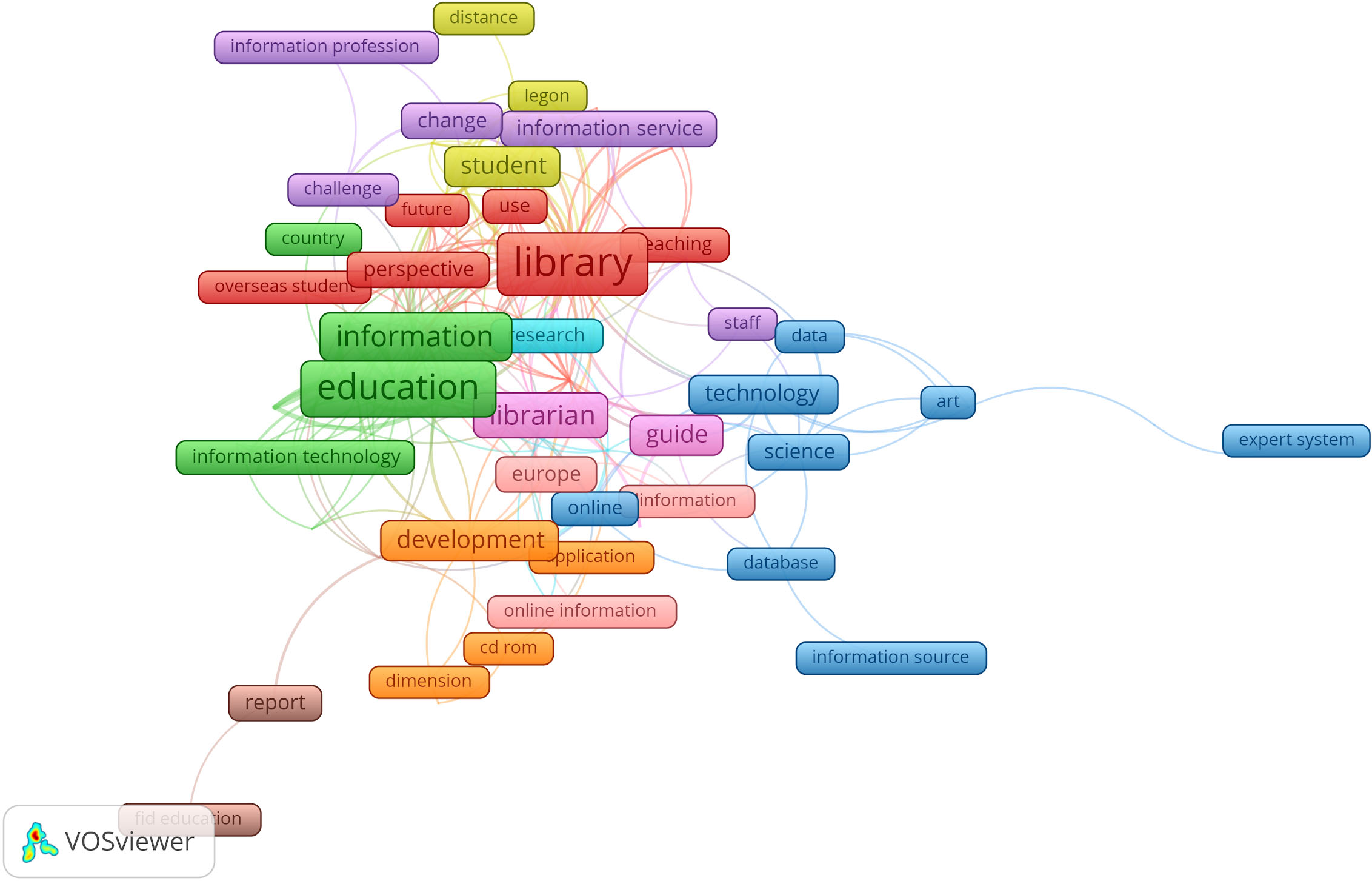

Figures 1–3 respectively display the prominent terms from the titles published by the three EICs.

EIC 1 published 799 titles yielding 1658 terms out of which only 341 (20%) met the occurrence threshold of 2 and were connected and thus went on to the mapping stage. In the map, terms belonging to the same cluster bear the same colour. Hence in the above map, terms such as “education, conflict and report” are part of the largest cluster with 52 terms. The terms such as “india, botswana, implication, lecturer, past” belong to the second biggest cluster with 39 terms.

The top 10 terms by order of frequency in titles published by EIC 1 (1983–2013)

Map of terms in titles published during EIC1 (1983–2013).

The titles published during the 30 years of EIC 1’s mandate showed a focus on traditional core topics in LIS, as evidenced by the size of the terms “education” and “library” and the recurrence of terms evoking library education and institutions, such as “library education, bibliography, library schools, reference service, aacr, information source, documentation, information science degree programme, online information, lis education, fid education, research, practice, comparative librarianship”. We also observe the presence of terms such as “latin america, caribbean, botswana, india, denmark, london”, which seem to point to the geographic locations concerned by some of the publications.

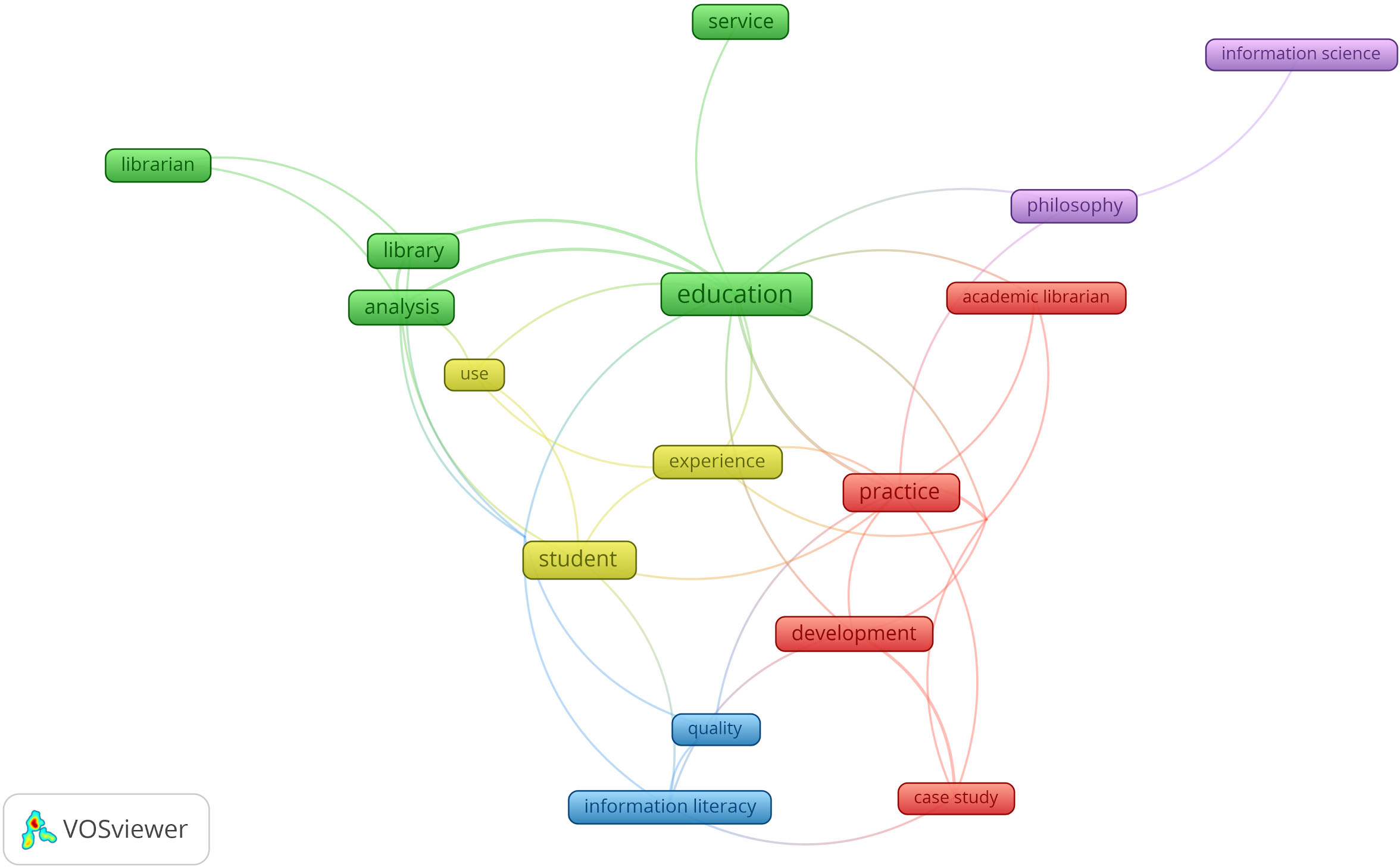

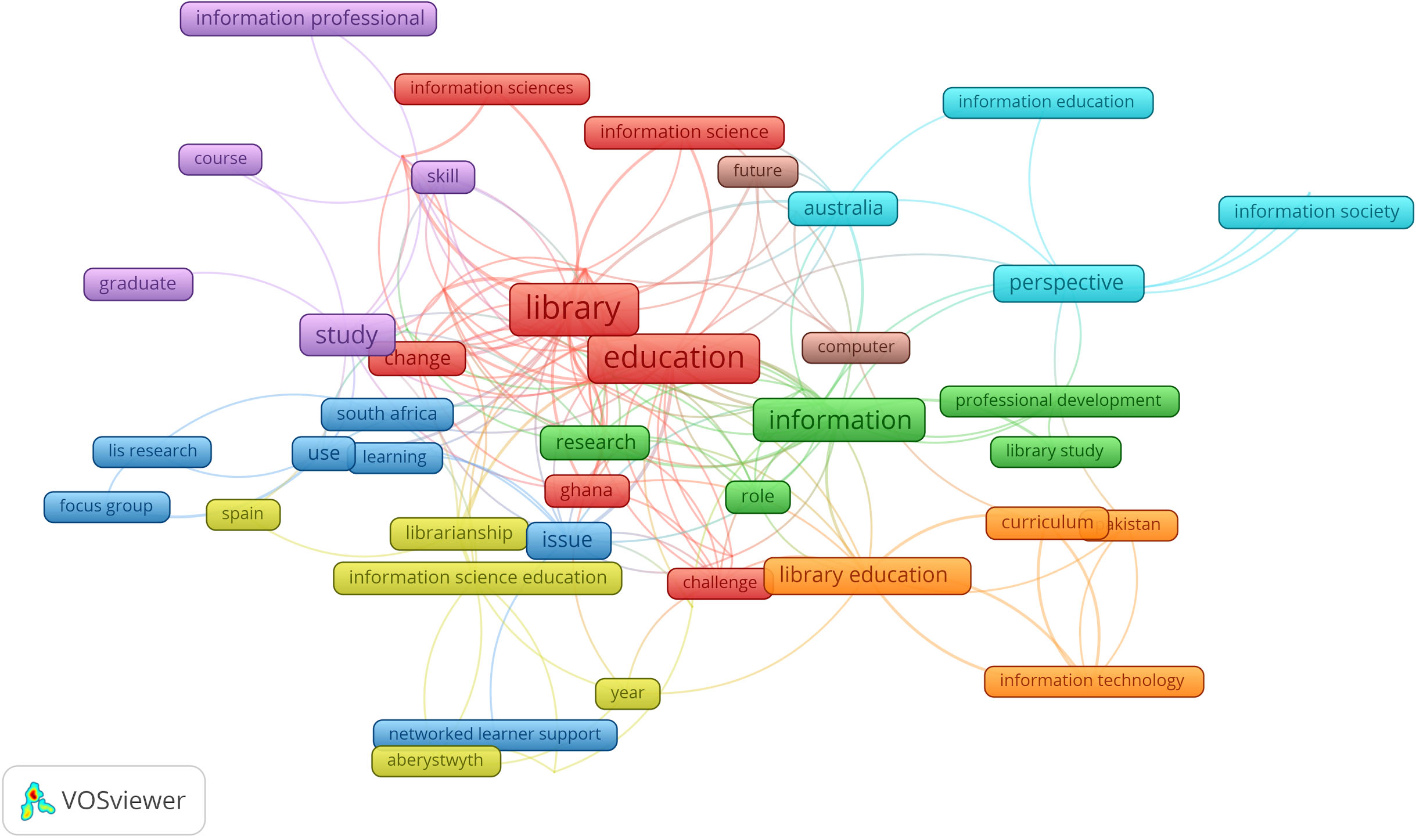

The maps obtained for EICs 2 and 3 are understandably much sparser as their periods of mandate were much shorter. The occurrence threshold of 2 resulted in the elimination of 90% of the terms contained in the 51 titles published by EIC 2, hence only 18 out of the 177 terms extracted from titles were used for mapping.

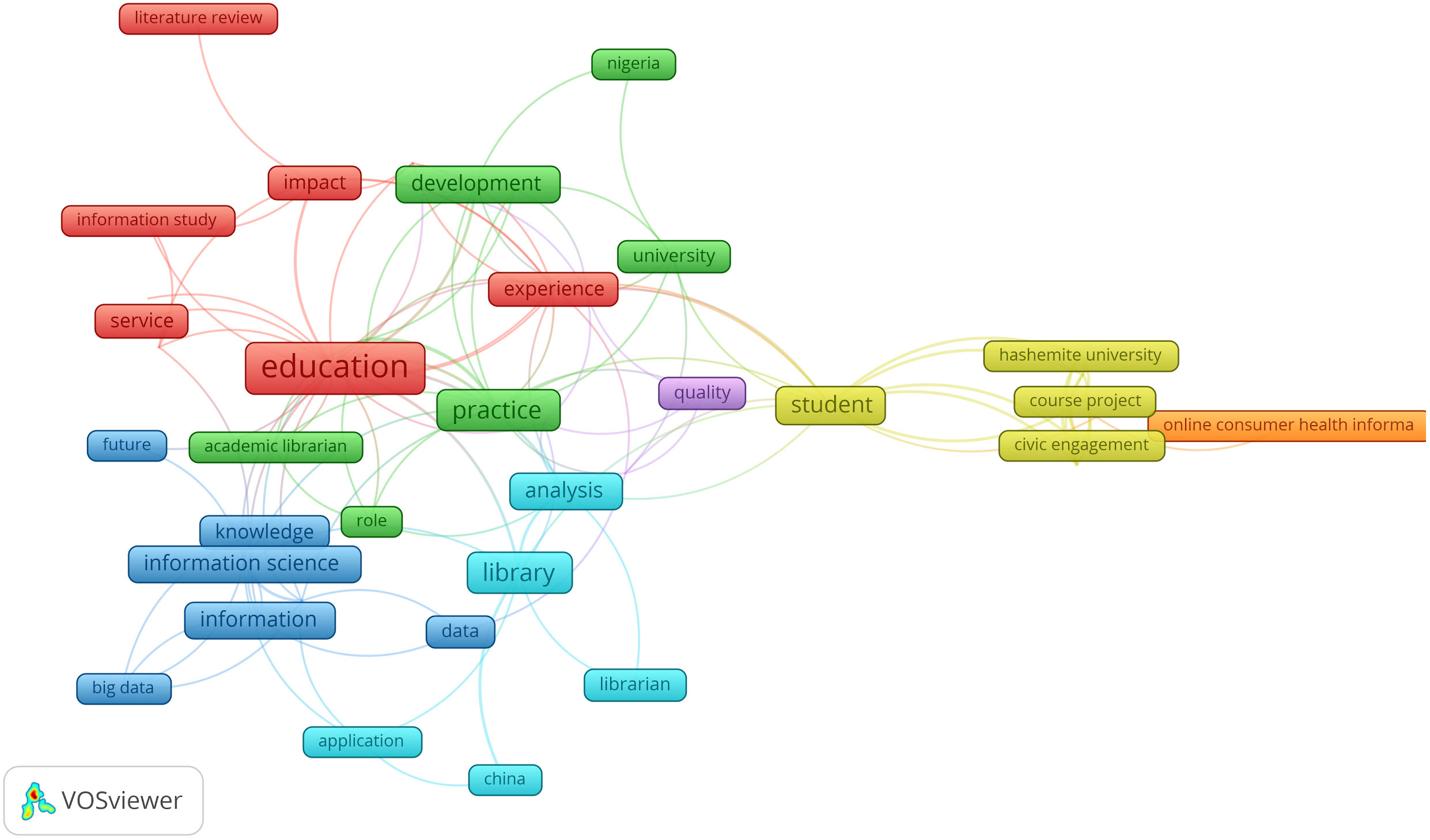

Map of terms in titles published during EIC2’s mandate (2014–2017).

In the period covered by EIC 2, the focus of publications seems to remain on the core issues of education in LIS, as evidenced by the terms “education, library, librarian, analysis, service”, which belong to the same cluster, and the terms “academic librarian, practice, case study, development” in a second cluster. Other terms seem focused on issues of “quality, assessment, information literacy” and on student and user experience. The presence of the term “philosophy” is explained by a special issue of the journal on “philosophy of information” published in 2017 (issue 33, number 1); unfortunately, this phrase was not extracted as a multiword term by VOSviewer. Table 7 below shows the top 10 terms by order of frequency in this period, all of which appeared on the map.

The top 10 terms by order of frequency in titles published by EIC 2 (2014–17)

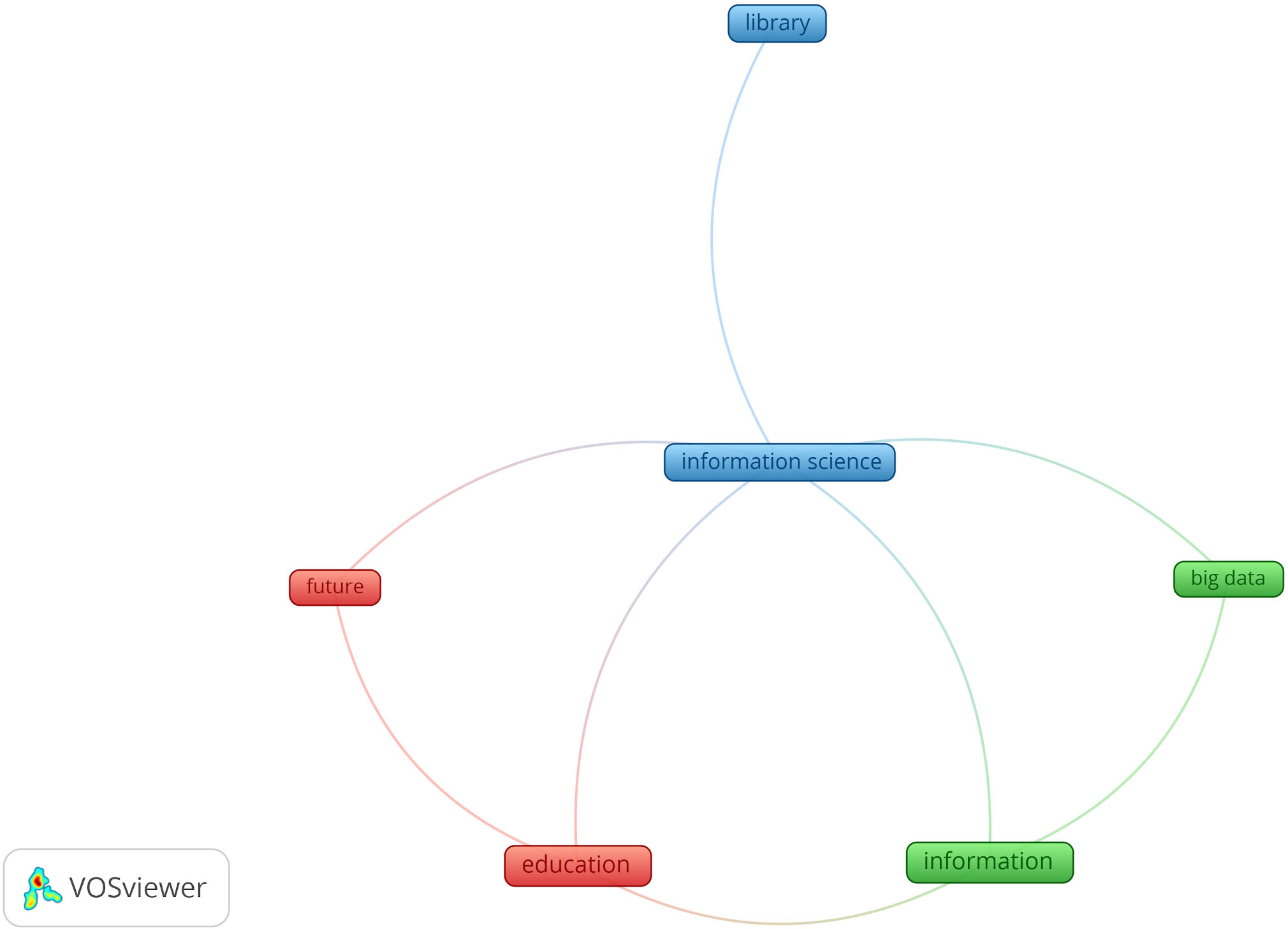

Map of terms in titles published during EIC3 (2018–2019).

Top 10 terms by frequency extracted from titles published by EIC 3 (2018–2019)

As for EIC 2, most of the terms (95%) extracted from the 35 titles published by EIC 3 were eliminated by the occurrence threshold of 2, so that only 6 out of 119 terms were left for the clustering process. While this map is not meaningful because of the sparse data, it is notable that terms such as “big data” appear on it. This could signal a shift of focus to more technologically current topics.

In the next section, we will analyse the maps obtained by splitting the corpus of titles by interval of six years which should yield a less skewed distribution of terms and thus a more balanced representation of the evolution of topics published by this journal.

Table 9 below shows the top 10 terms in each period, ranked by decreasing order of frequency.

The top 10 terms by frequency in the six periods

The prevalence of terms such as “

Below, we will analyse the six maps that were produced, one for each period, each showing the prominent terms that occurred at least twice in titles published during that period and co-occurred with other terms.

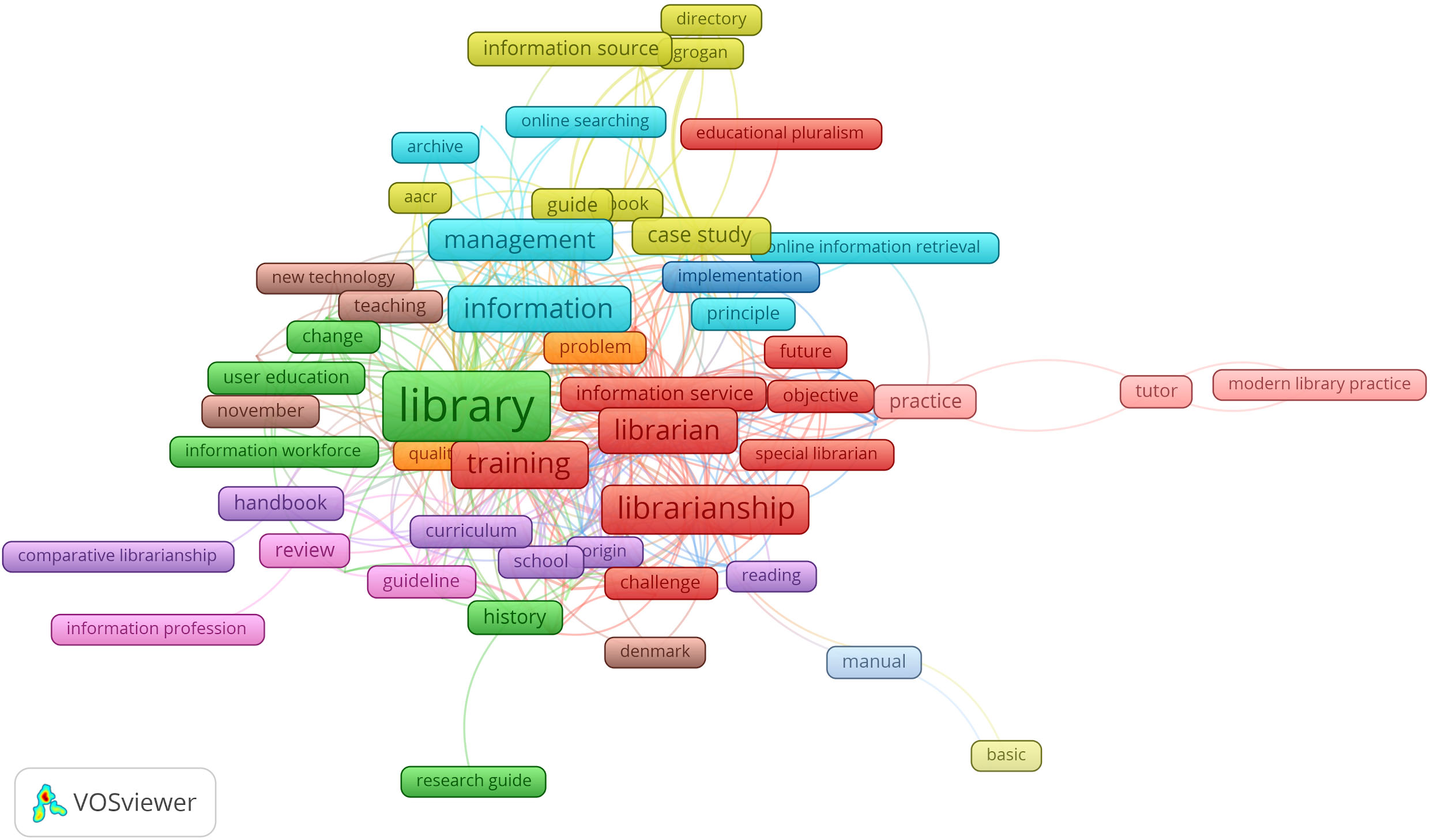

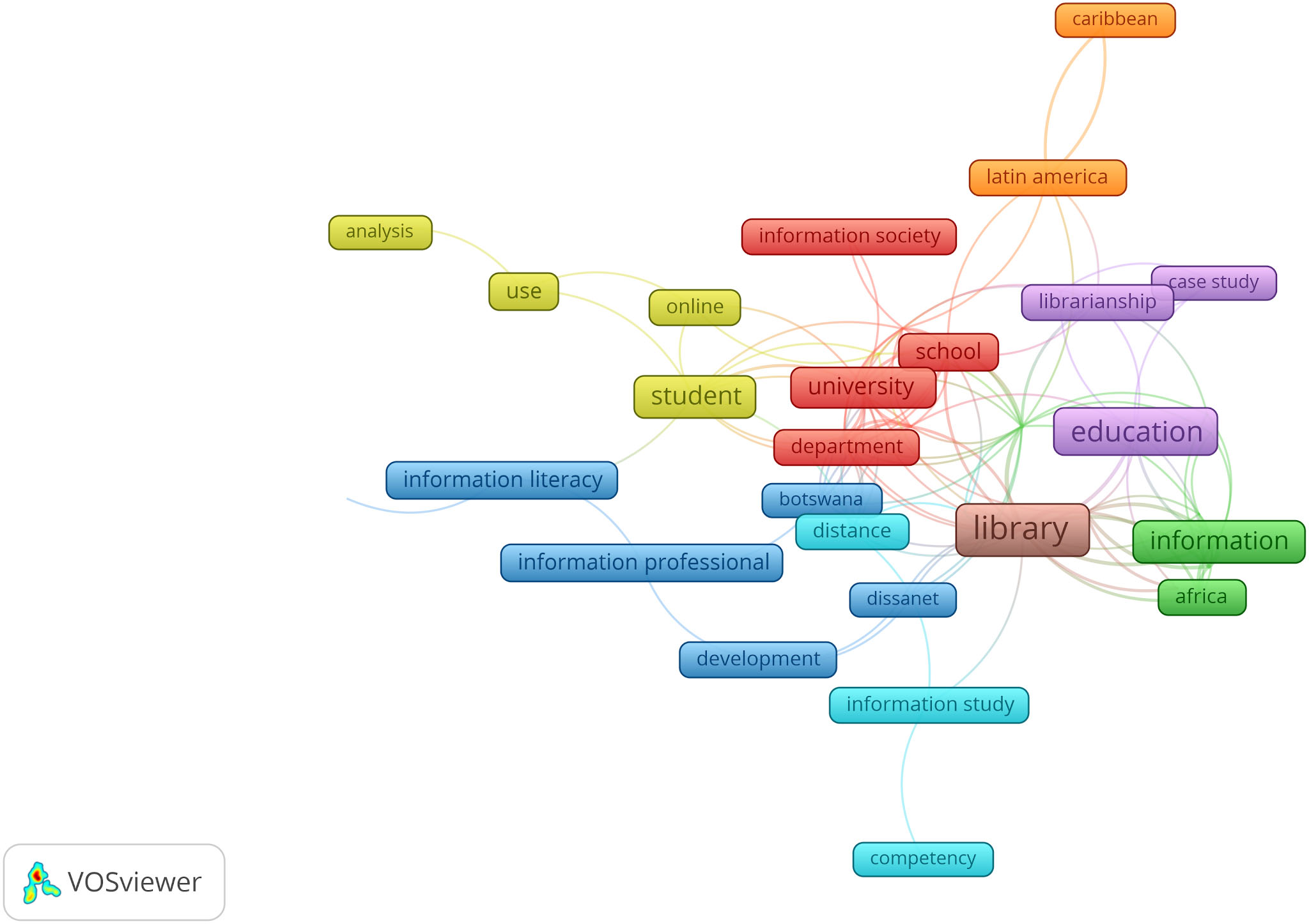

Figure 4 below displays the prominent terms in clusters. The topics in this first period are solidly centred on the core themes of the journal, i.e. library training as evidenced by the prominence of terms in the cluster “

Another group of clusters appear to reflect publications on library services to users as evidenced by the presence of the terms like “

Map of prominent topics in 1983–1988.

Figure 5 below displays the prominent terms appearing in clusters which show continued focus on the core topics of the journal such as “

Map of prominent topics in 1989–1994.

Map of prominent topics in 1995–2000.

While the traditional topics of library education identified in periods 1 and 2 continue to be prominent here, more publications appeared on “

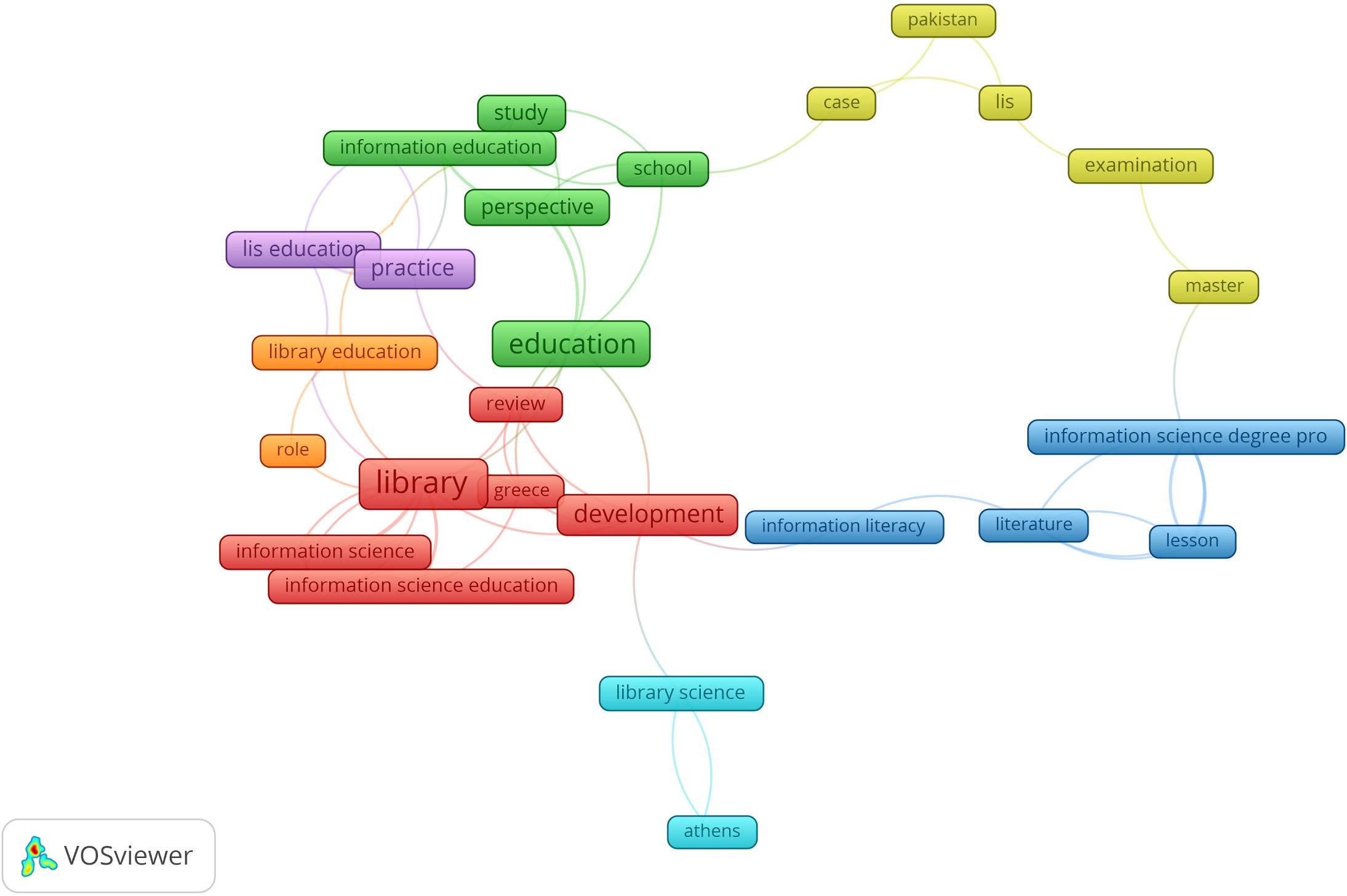

Period 4: 2001–06

The first noticeable difference from the maps of the previous three periods is the sharp drop in the number of published titles for the same interval of years. The journal went from 280 titles in period 1 to 81 titles in period 4 (a 71% fall). Hence it is unsurprising that from this date the maps become more and more sparse. The topics observed for this period continue some of the trends already observed in the preceding periods (see Fig. 7 hereafter). The terms “

Map of prominent topics in 2001–2006.

Map of prominent topics in 2007–2012.

The topics in this period continue to reflect the core topics of the journal also perceptible in EIC1’s map as evidenced by the terms “

Period 6: 2013–2019

This last period covers titles published in the last year of EIC 1’s mandate (2013), those published during EIC 2’s mandate (2014–2017) and those from the first year of EIC 3’s mandate (2018–2019). Hence, the map obtained shows continuity with the trends observed above.

Figure 9 hereafter shows the traditional topics on Library education and services with terms such as “

The presence of the terms

Map of prominent topics in 2013–19.

The chronological analysis of titles published by

The maps we have produced show only one facet of the information contained in the corpus of publications. The feature reduction mechanism inherent in all data analysis methods often results in about 80% of the input data being eliminated from the analysis. Even with a minimum occurrence threshold of 2, which is quite low, more than 80% of the terms extracted from the titles were eliminated from further analysis and therefore did not appear on the maps. Previous studies in information retrieval and term weighting (Sparck Jones 1972) have demonstrated the relevance of highly or moderately frequent items because they represent the main topics in the corpus. The dilemma has always been to find the right balance between retaining these highly or moderately frequent items and retaining some low-frequency items which could signal novel topics. To date, no theoretical or methodological solution exists for this hard problem. Hence, the visual artifacts resulting from data analysis methods and tools should be viewed with circumspection, because they are the result of multiple rounds of parameter fine-tuning by the analysts and thus reflect their world views and assumptions about what items should be “seen”. Thus, if decision making is to be based on such machine-generated visualisations, the viewer would do well to remember the famous quote of the Polish-American scientist and philosopher Alfred Korzybski that “

Finally, we should also bear in mind that the maps and data do not speak in and of themselves. They need interpretation, a time-consuming and highly cognitive enterprise that requires a high level of expertise in the domain of the corpus as well as analytical skills. The interpretation stage is therefore highly hermeneutic and riddled with subjectivity (see Ibekwe-SanJuan 2006; Ekbia et al. 2015 for a discussion of the epistemological, theoretical and methodological issues inherent in data analysis processes).

In the future, we aim to extend this analysis to other journals in the information science fields in order to get a sense of how research in this interdisciplinary field is evolving.