Abstract

Designing an ontology that meets the needs of end-users, e.g., a medical team, is critical to support reasoning over data. Therefore, ontology design should be driven by constant and efficient validation of end-users' needs. However, there is no standard knowledge engineering process that guides ontology design with the required quality. Several ontology design processes exist, ranging from iterative to sequential, but they fail to ensure the practical application of an ontology and to quantitatively validate end-user requirements as the ontology evolves. In this paper, we propose an ontology design process driven by end-user requirements, defined as Competency Questions (CQs). The process, called CQ-Driven Ontology DEsign Process (CODEP), includes activities that validate and verify the incremental design of an ontology through metrics based on the defined CQs. CODEP has also been applied in the design and development of an ontology in the context of a Mexican hospital to support neurologists. During the application of CODEP, the specialists were involved in collecting quality measurements and validating the ontology increments. This application illustrates the feasibility of CODEP for delivering ontologies with similar requirements in other contexts.

Keywords

Introduction

Ontologies have been used to support the representation and management of data in several domains. For example, FIRO (Espinoza, Abi-Lahoud, & Butler, 2014) is an ontology used for reasoning over data in the financial domain to support anti-money laundering. Ontologies also exist in the medical domain, such as OpenGalen (OpenGalen Foundation, 2012) and SNOMED-CT (SNOMED International, 2015): OpenGalen is highly expressive, while SNOMED-CT represents taxonomies that standardize medical concepts. One of the most powerful applications of ontologies is building a knowledge base that can be populated and queried. In medicine, for instance, a knowledge base can support the identification of medical diagnostic records, medical dissections, and surgical procedures (Napel, Rogers, & Zanstra, 1999). An ontology-based knowledge base can be queried by users as well as by information systems, which can use it for inference, more commonly known as reasoning over data. This feature makes ontologies a powerful means to support intelligence and automation in information systems, a combination that has been described as a marriage (Pisanelli, Gangemi, & Steve, 2003). Application-oriented ontologies need to answer queries representing functional requirements, and they also need to satisfy several quality attributes (e.g., accuracy, efficiency, availability). This means that such ontologies should balance expressiveness (the types of axioms they implement, such as inheritance, symmetry, or functional relationships) against the ability to answer queries according to end-user requirements. Building application-oriented ontologies is the main motivation for our work, and we argue that this kind of ontology needs a dedicated process that includes activities which constantly evaluate the satisfaction of end-user requirements and verify the ontology quality.
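To make the notion of expressiveness more concrete, here is a minimal sketch of what reasoning over two of the axiom types mentioned above (inheritance and symmetry) derives from a few asserted facts. It is a hand-rolled forward-chaining loop over (subject, predicate, object) triples, not an OWL reasoner; the helper `infer`, the class names, and the `siblingOf` property are hypothetical and do not come from OpenGalen, SNOMED-CT, or CERPRO.

```python
from typing import Set, Tuple

Triple = Tuple[str, str, str]
TYPE, SUBCLASS = "type", "subClassOf"


def infer(triples: Set[Triple], symmetric: Set[str]) -> Set[Triple]:
    """Forward-chain two axiom kinds until no new triple appears:
    inheritance: (x type C), (C subClassOf D) => (x type D), and subClassOf is transitive;
    symmetry:    (x p y) with p symmetric     => (y p x).
    """
    closure = set(triples)
    while True:
        new = set()
        for s, p, o in closure:
            if p in symmetric:
                new.add((o, p, s))
            if p in (TYPE, SUBCLASS):
                for s2, p2, o2 in closure:
                    if p2 == SUBCLASS and s2 == o:
                        new.add((s, p, o2))
        if new <= closure:
            return closure
        closure |= new


# Hypothetical facts, for illustration only.
facts = {
    ("TBI", SUBCLASS, "BrainInjury"),
    ("BrainInjury", SUBCLASS, "Injury"),
    ("p1", TYPE, "TBI"),
    ("p1", "siblingOf", "p2"),
}
kb = infer(facts, symmetric={"siblingOf"})
print(("p1", TYPE, "Injury") in kb)     # True: inherited via TBI -> BrainInjury -> Injury
print(("p2", "siblingOf", "p1") in kb)  # True: derived from the symmetric property
```

None of the derived triples are asserted by hand, which is what makes such a knowledge base useful for answering queries; a real ontology would delegate this work to a Description Logic reasoner.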

Creating an ontology is usually time-consuming and error-prone, and it requires extensive training and experience (Pazienza & Armando, 2012). In addition, creating a very expressive ontology does not guarantee that it can be implemented in a knowledge base that can be queried effectively. End users and/or other software systems need to perform queries to support practical tasks. Hence the importance of continuously validating and verifying the ontology design, to evaluate whether the ontology truly delivers the required support. This checking should not be postponed until the ontology is complete.

In the process of designing an ontology, end-user requirements are captured through Competency Questions (CQs; Grüninger & Fox, 1995, p. 3). CQs are natural language questions that need to be answered by querying an ontology in order to solve end-users' practical tasks. Several methodologies and processes do not use CQs to drive the ontology design; consequently, the resulting knowledge bases are not guaranteed to answer the CQs. Those that do use CQs rarely use the results of answering them (by querying) to drive improvements in the ontology design. Examples are NeOn (Suárez-Figueroa, 2010), which uses the Ontology Requirements Specification Document (including a list of CQs) to guide development, and Methontology, which defines CQs in the Specification phase (Fernández, Gómez-Pérez, & Juristo, 1997). If CQs are not used to drive the design process, it is difficult to validate whether an ontology truly supports end-user needs. Therefore, in this paper, we propose an approach that uses CQs as drivers of ontology design, by translating them into queries executed on an implemented Knowledge Base (KB), and by including the CQs in the Validation & Verification activities (through the definition and application of metrics).
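The idea of treating a CQ as a query whose results show whether the KB answers it can be sketched as follows. In CODEP the CQs are implemented in SPARQL and run against the KB; to keep the example self-contained, this sketch instead evaluates a SPARQL-like basic graph pattern over an in-memory set of triples in plain Python. The helpers `match` and `run_query`, the `hasDiagnosis` property, and the example CQ are hypothetical illustrations, not part of CERPRO.

```python
from typing import Dict, List, Optional, Set, Tuple

Triple = Tuple[str, str, str]
Binding = Dict[str, str]


def match(pattern: Triple, triple: Triple, binding: Binding) -> Optional[Binding]:
    """Unify one triple pattern (terms starting with '?' are variables) with one triple."""
    extended = dict(binding)
    for term, value in zip(pattern, triple):
        if term.startswith("?"):
            if extended.get(term, value) != value:
                return None  # variable already bound to a different value
            extended[term] = value
        elif term != value:
            return None  # constant term does not match
    return extended


def run_query(patterns: List[Triple], kb: Set[Triple]) -> List[Binding]:
    """Return all bindings that satisfy every pattern (a basic graph pattern)."""
    bindings: List[Binding] = [{}]
    for pattern in patterns:
        next_bindings = []
        for binding in bindings:
            for triple in kb:
                extended = match(pattern, triple, binding)
                if extended is not None:
                    next_bindings.append(extended)
        bindings = next_bindings
    return bindings


# Hypothetical KB and CQ, for illustration only.
KB = {
    ("CP_P1", "type", "Patient"), ("CP_P1", "hasDiagnosis", "TBI"),
    ("CP_P2", "type", "Patient"), ("CP_P2", "hasDiagnosis", "Stroke"),
}
CQ = {
    "text": "Which patients have a TBI diagnosis?",
    "query": [("?p", "type", "Patient"), ("?p", "hasDiagnosis", "TBI")],
}

answers = run_query(CQ["query"], KB)
print(answers)                        # [{'?p': 'CP_P1'}]
print("CQ answered:", bool(answers))  # CQ answered: True
```

An empty result list is a simple signal that a CQ is not yet supported by the current ontology increment.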

We have also found that there are many knowledge engineering processes and methodologies for creating ontologies (as reviewed in the Related Work section), but Validation and Verification (V&V) activities that ensure the satisfaction of users and their requirements are rarely included as a backbone for driving improvements across ontology iterations. In addition, none of the existing approaches proposes a well-structured process to be followed, with clear activities and roles. In this paper, we tackle these gaps by proposing the CQ-Driven Ontology DEsign Process (CODEP), an iterative and incremental process for designing ontologies and developing knowledge bases that truly satisfy end-user needs, defined as CQs. The contributions of CODEP are as follows: 1) it defines a well-structured process to be followed, with clear activities and roles; 2) it includes V&V activities based on quantitative metrics, which (a) support knowledge engineers (KEs) in improving the ontology and (b) allow users to quantitatively indicate, from different perspectives, their satisfaction with an increment of the ontology and its KB; 3) it supports an incremental and iterative life cycle in which ontology versions are produced until users are satisfied and an applied KB is produced. The process produces an ontology that is validated against end-users' expected quality metrics, which ensures effective support for practical purposes, such as implementing the ontology in a KB that can also be mined by information systems.

Additionally, this paper shows how each CODEP activity has been applied. The application was performed in collaboration with a medical team at a Mexican hospital, to create a medical ontology that supports the identification of patients with diagnostic features after traumatic head injuries, in order to enroll them in a rehabilitation program. Through the application of CODEP, we show how the ontology and its implemented KB respond to the CQs, and how several metrics are used to validate the satisfaction of the medical team's requirements. This validation is used to improve the ontology iteratively and incrementally, based on feedback from the medical team, who evaluate the accuracy and comprehensibility of the query responses and knowledge rules, and the coverage and completeness of the CQs.

The paper is organized as follows: Section 2 presents an overview of CODEP. Section 3 illustrates in detail how CODEP has been applied to create a medical ontology and develop a knowledge base. Section 4 presents the protocol conducted to validate that the medical ontology satisfies the CQs. Section 5 analyses related work, and finally Section 6 presents the conclusions.

The CQ-driven ontology design process

This section presents the CQ-Driven Ontology DEsign Process (CODEP), which is driven by end-users' CQs. CODEP draws on proven practices from our ontology design experience in three previous projects (Espinoza, Abi-Lahoud, & Butler, 2014; Nieves, Espinoza, Penya, Ortega De Mues, & Peña, 2013; Espinoza et al., 2013). The process is defined for creating ontologies that will be implemented in Knowledge Bases (KBs) that can be mined; it is therefore an application-oriented ontology design process.

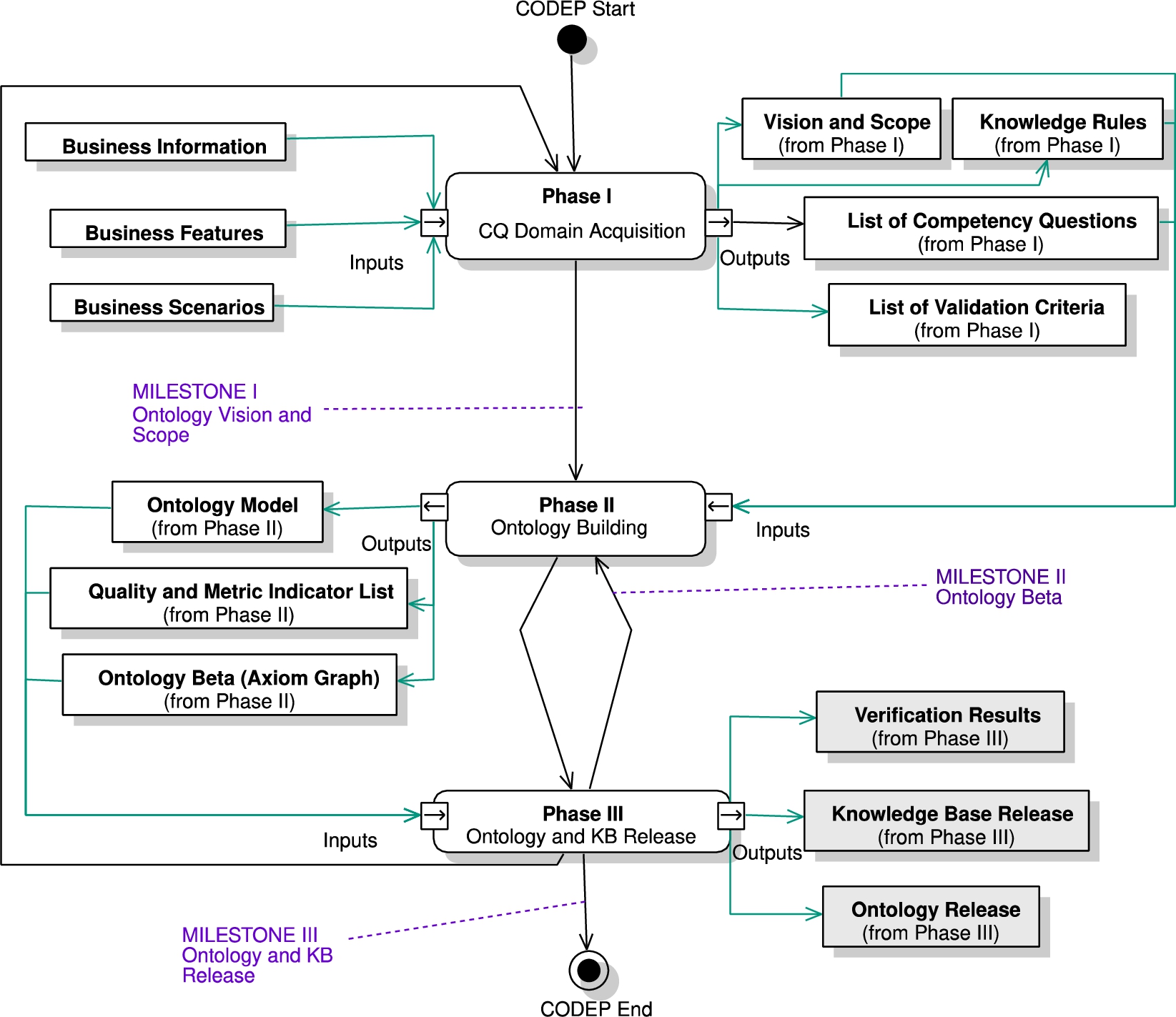

CODEP is divided into three main phases:

CODEP is a process that stems from V&V activities, for obtaining feedback from

Life cycle of CODEP.

CODEP activities

CODEP defines a life cycle model based on the Incremental-Build Model (IEEE-SWEBOK, 2014), which covers activities from modeling to V&V. It can be observed from Fig. 1 that CODEP defines an

In the following CODEP specification, each activity is described together with its application in the case study, to make CODEP easier to understand. Each activity is described for general application, while the case study shows how it can be performed in a practical setting.

Case study description

The ontology supports a medical team of neurologists who specialize in rehabilitating patients with head injuries at the National Rehabilitation Institute (Instituto Nacional de Rehabilitación, INR, http://www.inr.gob.mx/r08.html), a Mexican hospital and a leader in providing rehabilitation services. INR also promotes research projects in several medical areas related to rehabilitation. The medical team conducts one of the INR's research protocols, the Cerebrolysin Research Protocol (CRP; INR, 2013). CRP investigates the functional, cognitive, psychological, and physical effects of the drug Cerebrolysin® as an adjuvant treatment for several sequelae of Traumatic Brain Injury (TBI). The CRP team constantly seeks patients with specific diagnostic conditions who meet the CRP's requirements, and analyzes whether they are candidates for neurological rehabilitation in a one-year in-hospital program. The medical team, which specializes in neurology at INR and manages the CRP, needs to perform a specialized evaluation of patients who are referred from other hospitals with a preliminary diagnosis of TBI. However, these hospitals are the first point of medical contact after the patient's accident, and the general physicians in charge are commonly not specialized in making the specific diagnoses required for the criteria evaluation. Currently, two situations can occur: 1) INR's medical staff need to travel to the first-contact hospitals to perform the diagnostic analysis, which is impractical given the high number of hospitals with A&E services in Mexico City and the travel time involved; 2) the first-contact hospitals' general physicians perform a preliminary diagnosis to refer potential patients to the INR for Cerebrolysin treatment, but since they are not specialists in medical rehabilitation, this preliminary diagnosis could be incomplete or wrong.
Both situations cause the loss of candidate patients who could be enrolled in the CRP, which affects the medical research and the rehabilitation of current and future patients.

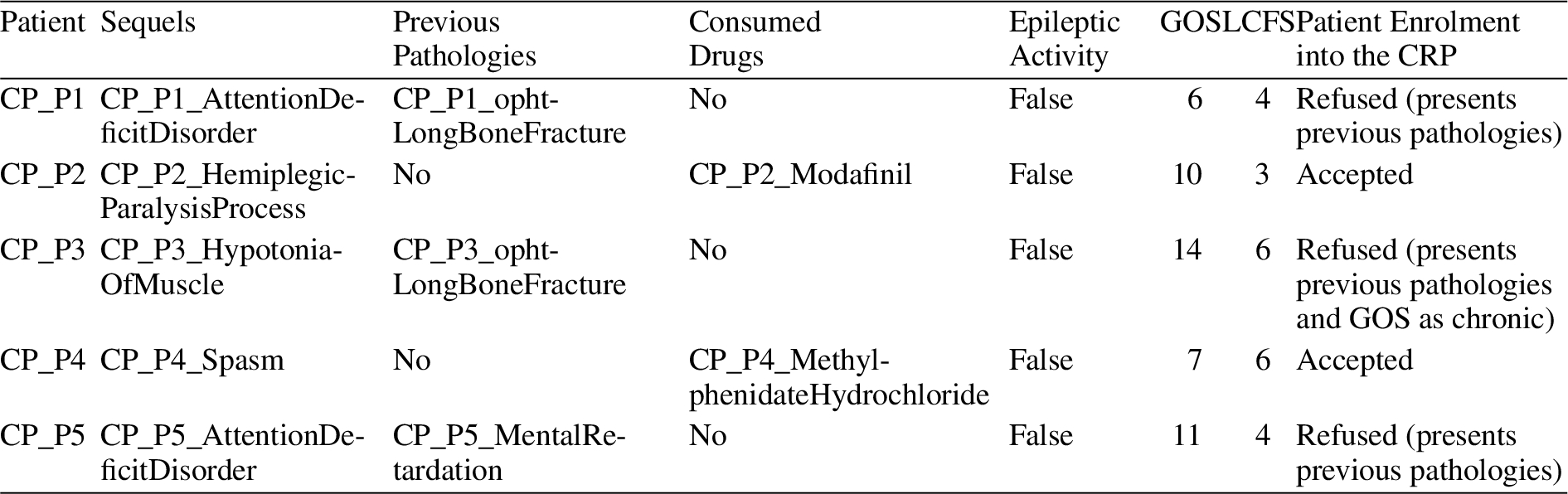

Thus, in the Case Study, it is important to analyze whether the patient fulfills the set of criteria that the INR's medical team follows to determine whether the patient can be treated under the CRP (INR, 2013). Table 2 shows these criteria as a set of Inclusion Criteria and a set of Exclusion Criteria. The inclusion/exclusion criteria are applied in an order determined by the INR's medical team. In this Case Study, some of these criteria might be

The Inclusion/Exclusion Criteria, as Business Policies (BP) and Business Constraints (BC)

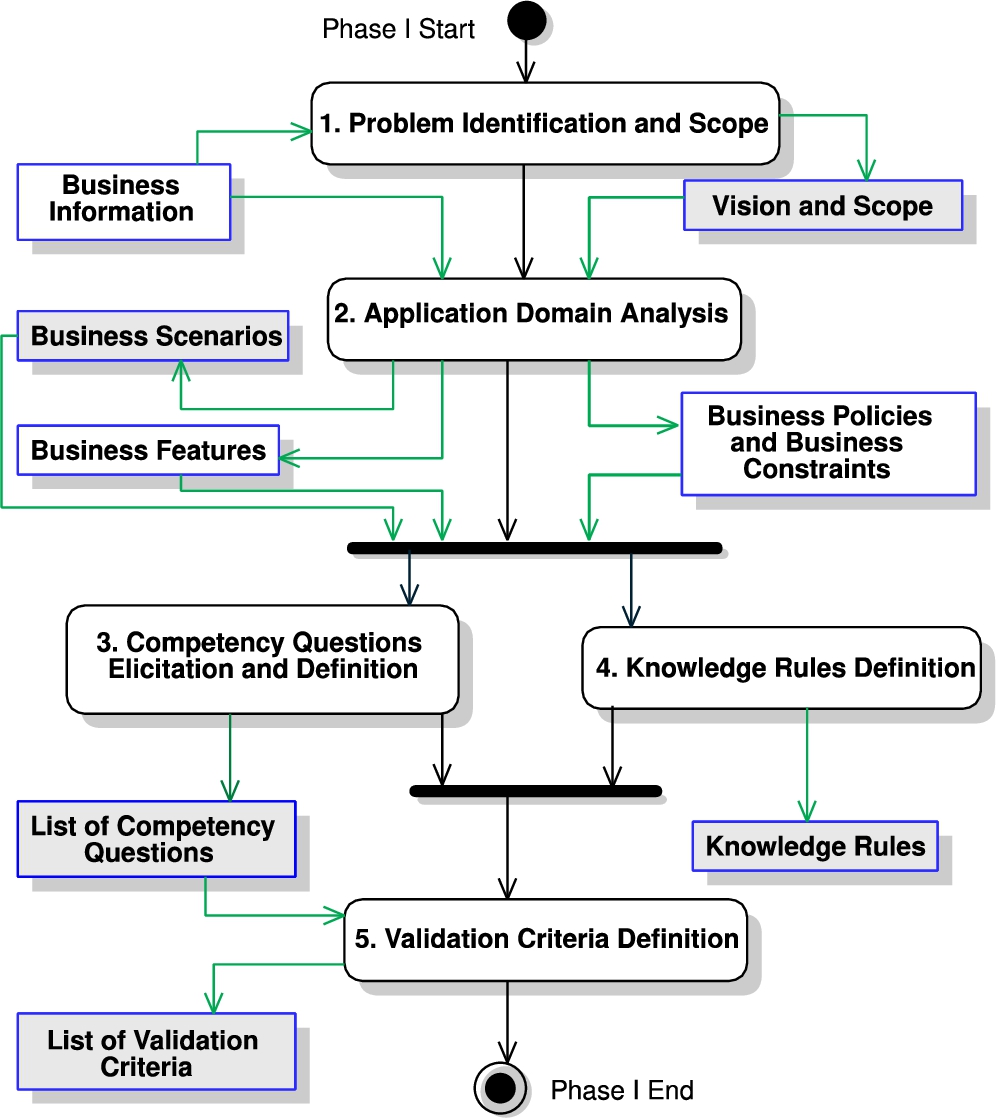

Phase I flow.

In this scenario, the ontology is the means to organize and model the medical rationale required to assess patients for treatment under the CRP. The KB (based on the ontology) will support the first-contact general physicians (GPs) at the hospital in performing the specialized diagnostic analysis, through the implementation of the medical criteria as knowledge rules. It is worth mentioning that, before starting the ontology design, we searched for processes oriented to developing application-oriented ontologies. As discussed in the Introduction and Related Work sections, although there are several well-known processes, we could not find one that (i) focuses on developing ontologies to be used in an application setting, (ii) includes frequent quantitative validation activities (measuring whether the ontology and KB truly answer the CQs from the perspective of the subject matter experts, SMEs), (iii) provides guidance for applying the process, and (iv) yields an ontology that can be used to set up a knowledge base able to execute queries from a software application. CODEP is therefore inspired by many of these processes, but fills the gap by proposing a new process that is structured, includes V&V, and is driven by the CQs to create an application-oriented KB.

Section 3.2 describes each CODEP phase and how it was applied.

The objective of Phase I is to understand the domain to obtain the list of Competency Questions and Knowledge Rules that will be used to drive the ontology (see Fig. 2).

Identifying the problem that an ontology addresses is key to avoiding the modeling of irrelevant aspects of the application domain, and the scope states the expectations with which the ontology must comply. The problem and scope are defined through several meetings between the knowledge engineer (KE) and the SMEs to identify the needs the ontology must address. The SMEs are the domain experts, and they can also be end-users of the ontology. From this step, the KE identifies the actors, end-users, and stakeholders of the ontology. The Input is the

The objective is for the KE to gain deep knowledge of the concepts that need to be included in the ontology and of the required level of expressiveness. Specifically, 1) the application domain context (the institution's features, the company's business policies, constraints from relevant standards, and a glossary of terms) is studied, and any doubts are resolved with the people involved in the domain (staff, customers, or technicians); 2) the set of scenarios in which the ontology is applied (including the tasks performed by the scenarios' actors) is identified; each actor can have its own set of scenarios, or several actors can share them. The Input is the

Scenarios for ontology usage

This activity focuses on capturing the set of CQs. The CQs are the questions that SMEs need to answer to support their daily work. They usually correspond to the queries the ontology must support, and they focus on obtaining knowledge (explicit or implicit) from the KB. CQs are usually tacit knowledge held by the SMEs, and the KE works with the SMEs to make them explicit. To define the CQs explicitly, the KE analyses as Inputs: The

(Excerpt) Competency Questions (CQs)

This activity aims to identify constraints or restrictions in the knowledge domain, using sources such as regulations, the vision and scope document (from Activity 1), and relevant domain documentation provided by the SMEs. Constraints are defined as

Before the creation of the ontology, we recommend defining the

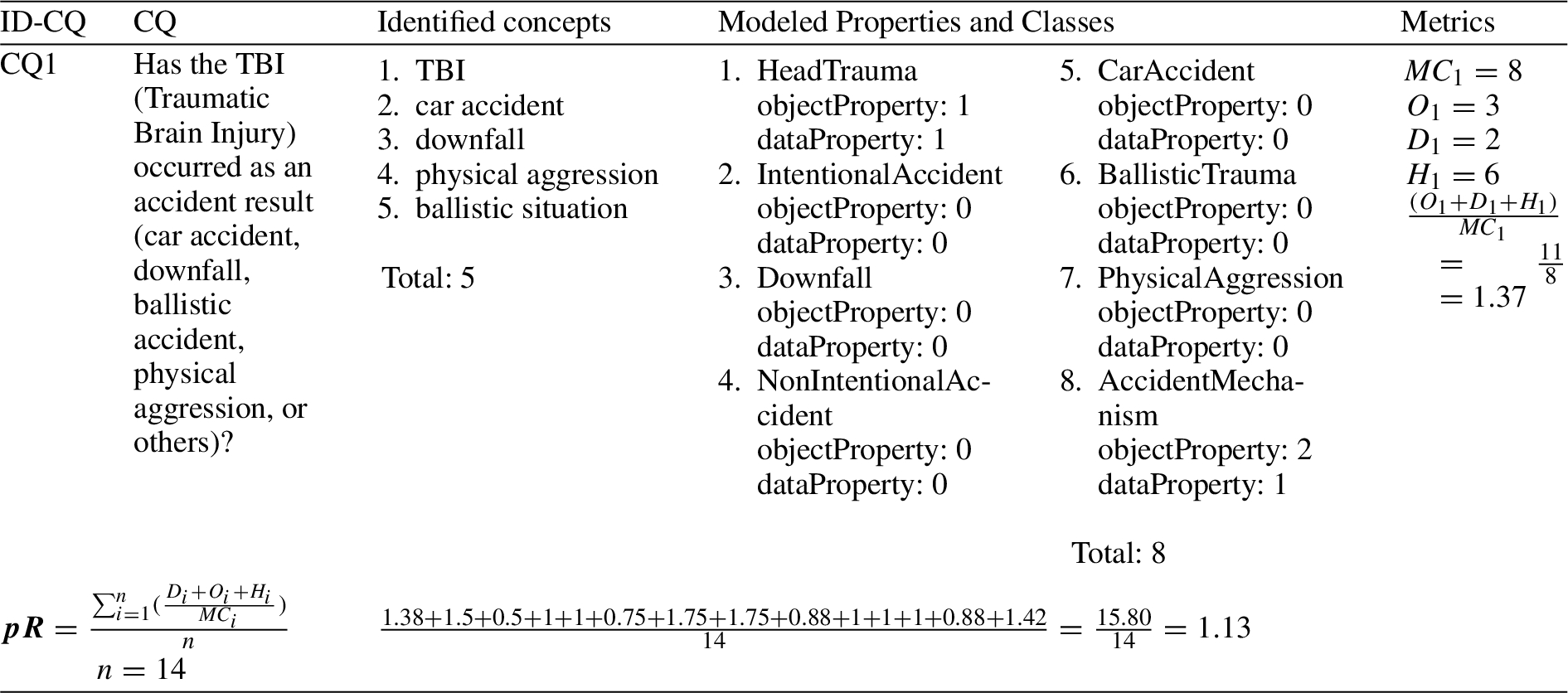

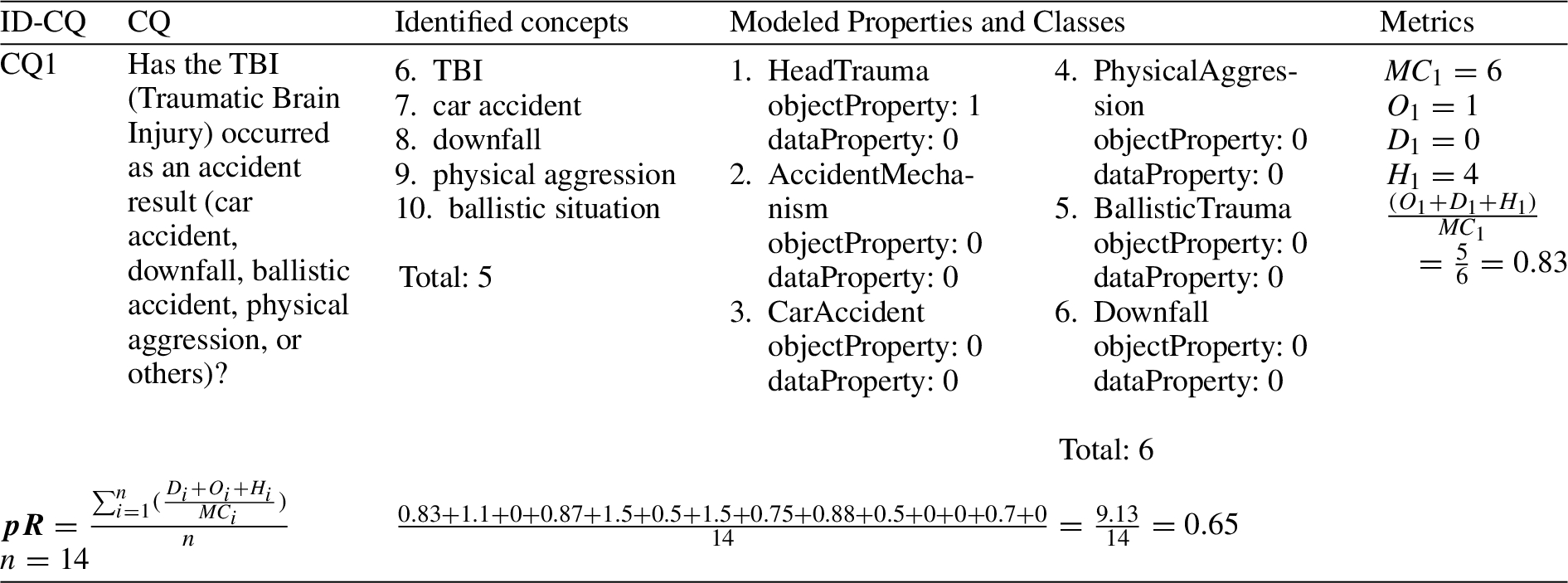

The

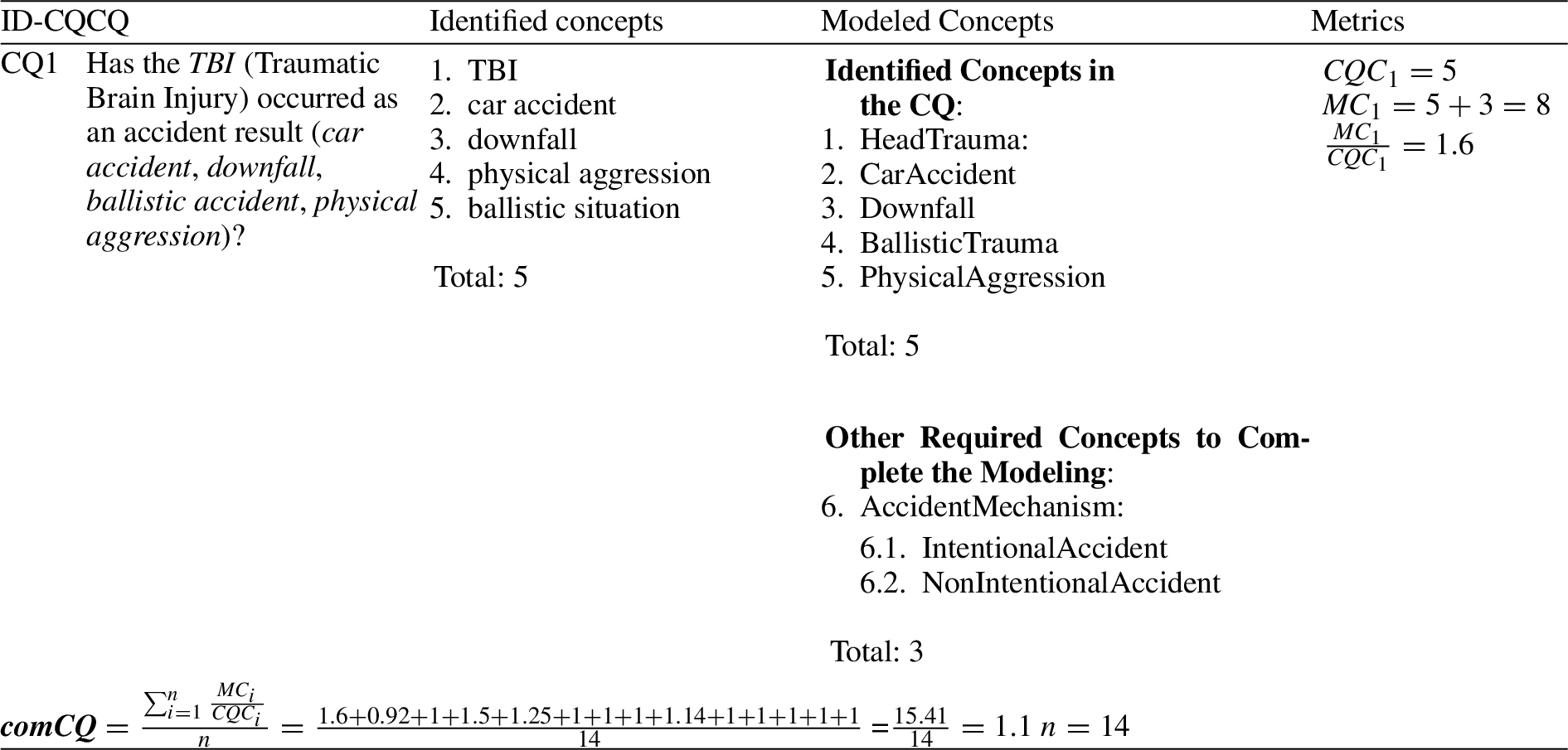

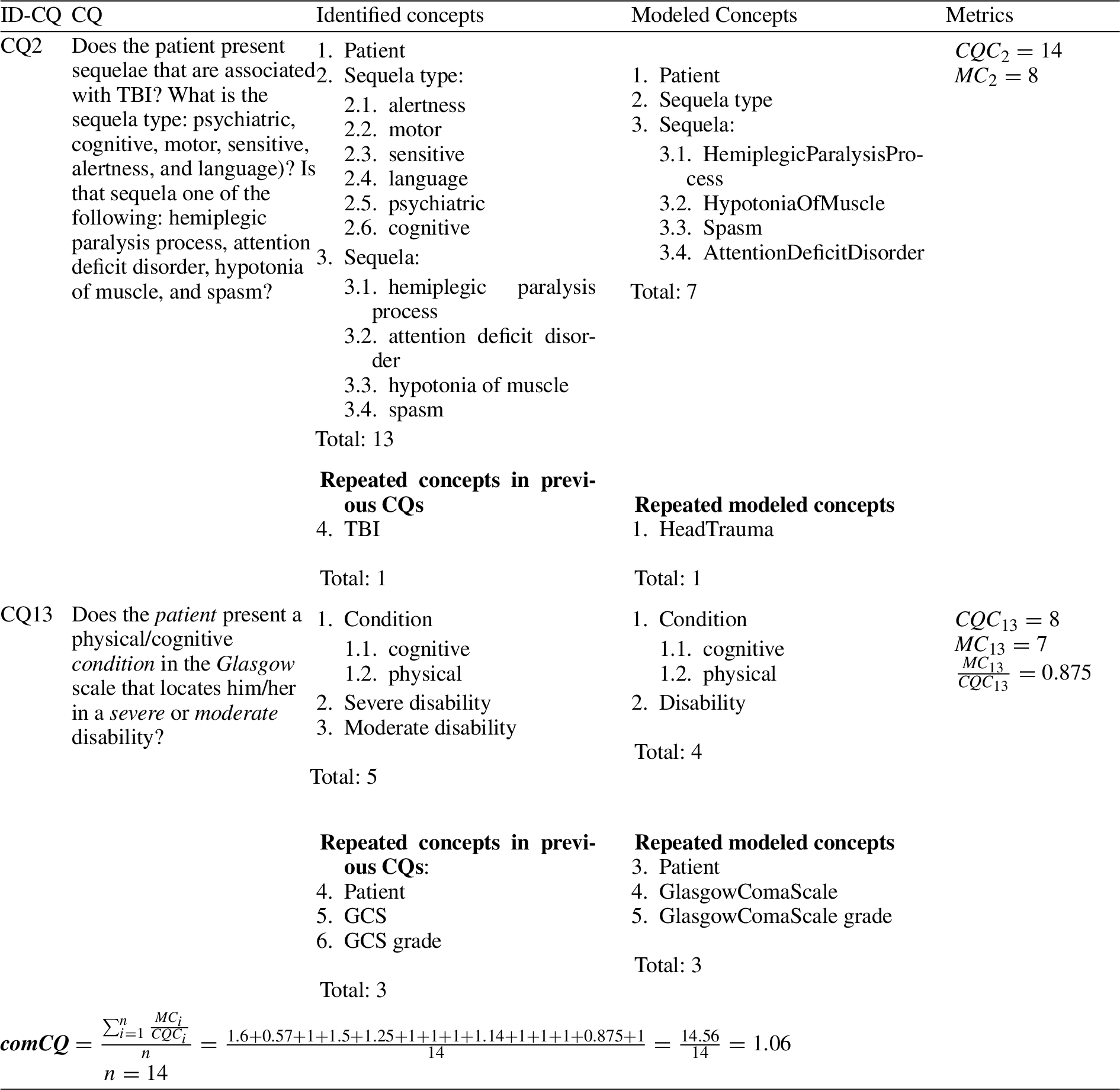

TCQs: The total number of CQs, which were provided by the medical team.

Thus, in Activity 15, these metrics are applied to obtain feedback about CERPRO's quality from the SME's perspective, and to identify and correct deviations, until

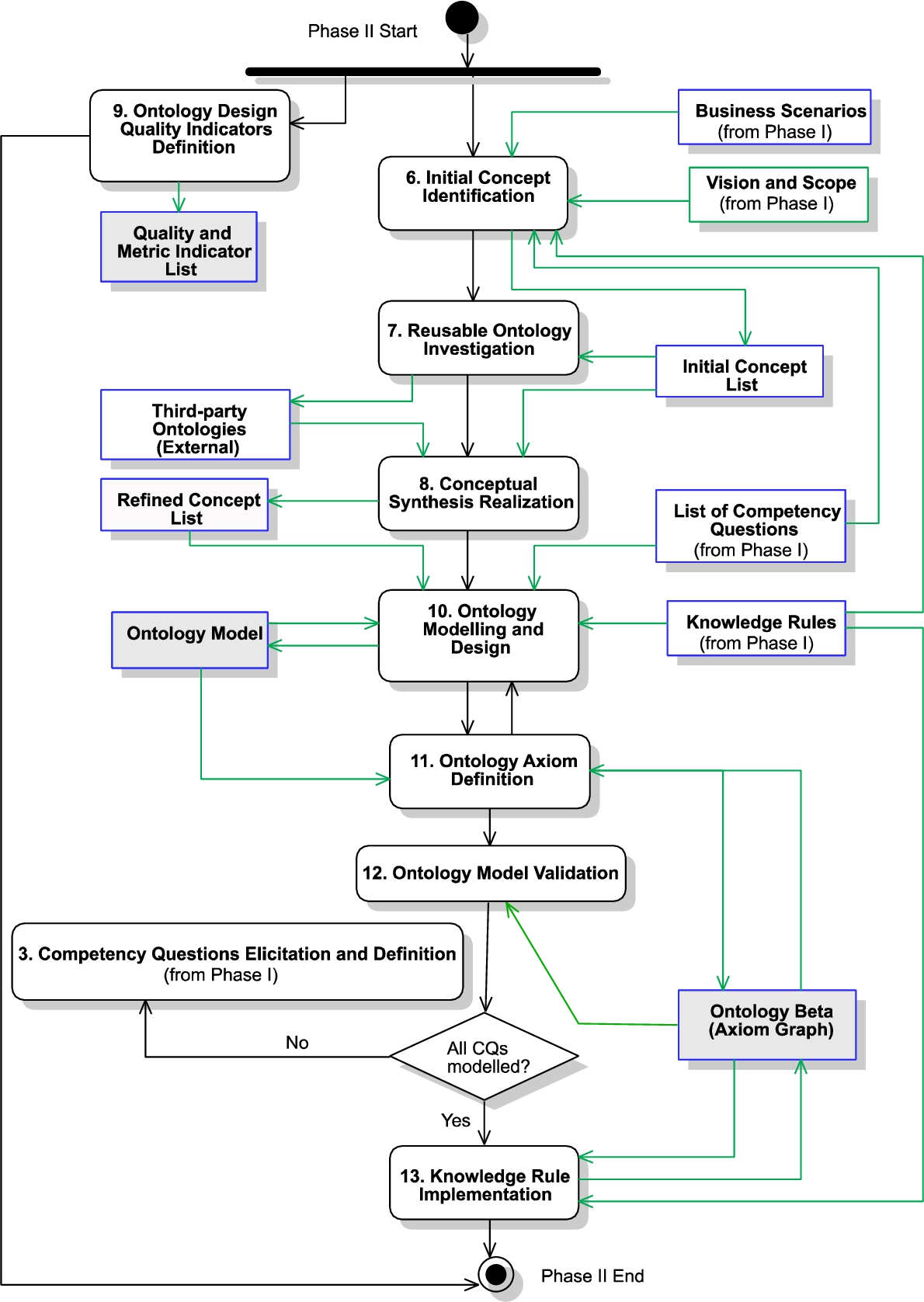

The objective of this phase is to build an ontology model and its axioms (see Fig. 3). The activities for this phase are as follows:

Phase II flow.

This activity aims to extract the relevant domain nouns and business actions from the outputs of previous activities. The Input to this activity is the

“

From this statement, the following nouns can be identified as concepts: Patient, Diagnostic, Sequela, and TBI. Then, more information can be discovered about the features which describe

As a result, new relationships and concepts not identified in Activity 6 can be discovered and added to the

This activity aims to synthesize the concepts obtained from the

(Excerpt) refined concept list
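In the case study, candidate concepts (Patient, Diagnostic, Sequela, and TBI) are identified manually by the KE from a domain statement and then consolidated into a refined concept list. As a rough, hypothetical aid for that step, the sketch below matches a glossary of surface forms against a statement and merges synonyms into canonical concept names. The glossary entries, the example statement, and `extract_concepts` are assumptions made for illustration; they are not the statement or glossary used for CERPRO.

```python
import re
from typing import Dict, List

# Hypothetical glossary: lower-case surface forms mapped to canonical concept names.
GLOSSARY: Dict[str, str] = {
    "patient": "Patient",
    "patients": "Patient",
    "diagnostic": "Diagnostic",
    "diagnosis": "Diagnostic",
    "sequela": "Sequela",
    "sequelae": "Sequela",
    "tbi": "TBI",
    "traumatic brain injury": "TBI",
}


def extract_concepts(statement: str, glossary: Dict[str, str]) -> List[str]:
    """Return the sorted canonical concepts whose surface forms occur in the statement."""
    text = statement.lower()
    found = set()
    # Longer forms first, so a multi-word term is matched before its parts.
    for term in sorted(glossary, key=len, reverse=True):
        pattern = r"\b" + re.escape(term) + r"\b"
        if re.search(pattern, text):
            found.add(glossary[term])
            text = re.sub(pattern, " ", text)  # consume the match so it is not reused
    return sorted(found)


# Hypothetical statement, written for this example.
STATEMENT = "Patients with a diagnosis of sequelae after a traumatic brain injury (TBI)"
print(extract_concepts(STATEMENT, GLOSSARY))
# ['Diagnostic', 'Patient', 'Sequela', 'TBI']
```

A glossary lookup only proposes candidates; deciding which nouns become concepts, attributes, or relationships remains the KE's judgement, validated with the SMEs.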

This activity allows the KE to set quality indicators for the ontology design, which are verified in later activities. In this way, the ontology design is driven by these indicators and checked after the modeling stage. Quality indicators ensure that the ontology follows quality standards: they allow the KE to verify that the ontology has been designed and implemented accordingly and, if not, to refine it. Quality indicators differ from the validation metrics defined in Activity 5: validation metrics are end-user-oriented and are checked with users to ensure that end-user expectations are met. Quality indicator metrics can be adopted from those already defined in the literature, such as by Gangemi, Catenacci, Ciaramita, & Lehmann (2005) and by Tartir, Budak Arpinar, Moore, Sheth, & Aleman-Meza (2005), although they may need to be adapted so that they are defined in terms of CQs. The Output of this activity is the

2 the worst (concepts are modelled in both ontologies).

The metrics will be used in Activity 13 to measure CERPRO's quality and to improve the design through the iterations, until the three metrics are close to 1. For example, if

As this activity is part of an iterative process, the KE selects a set of CQs that will create an increment of the ontology model. Therefore, the input of this activity is the

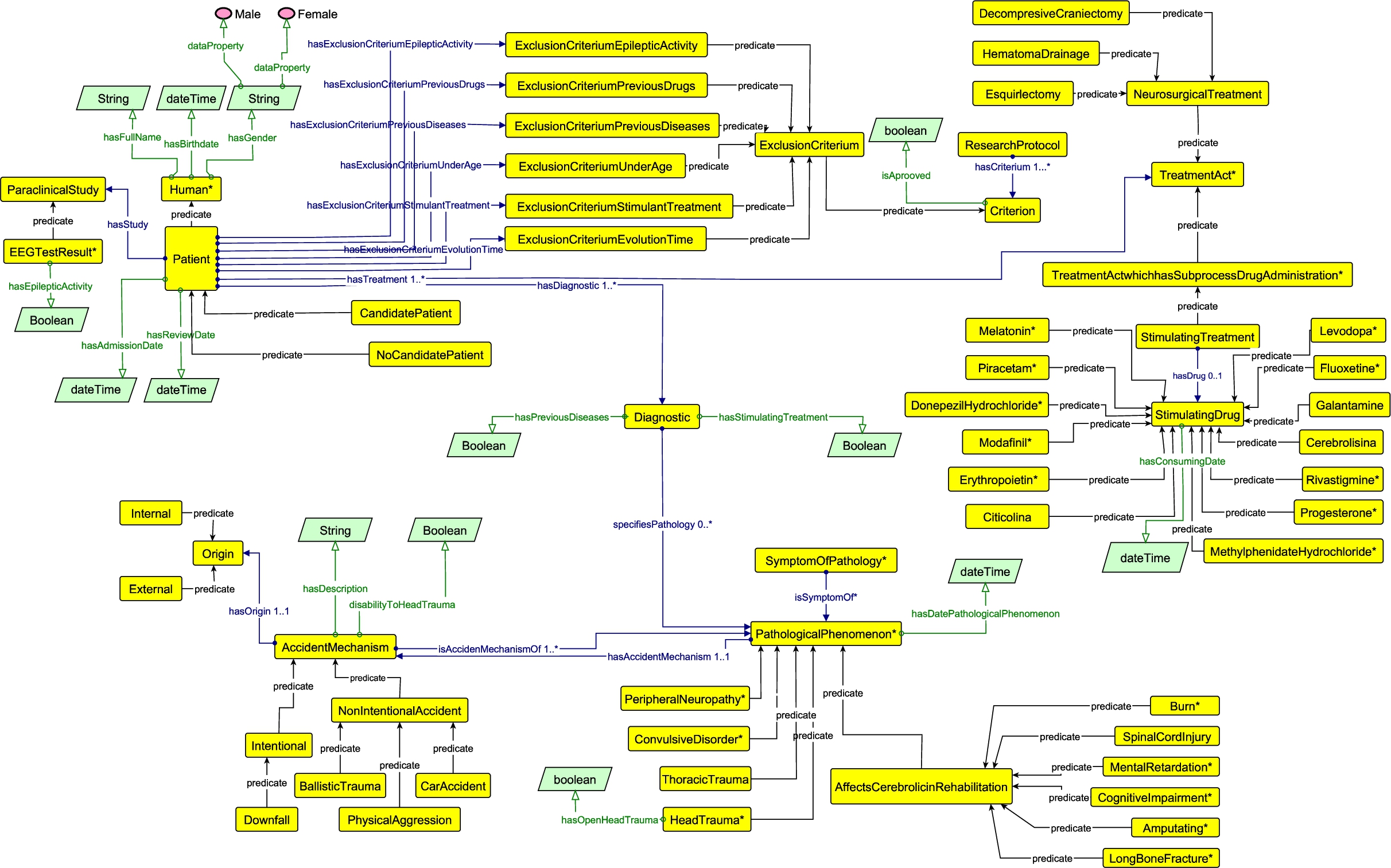

During the activity, concepts and their relationships are represented in a graphical model before the ontology is designed, to build consensus among the participants about the ontology axioms, knowledge rules, and level of expressiveness. The graphical ontology model is also a means to visually verify whether all the concepts and their relationships from the

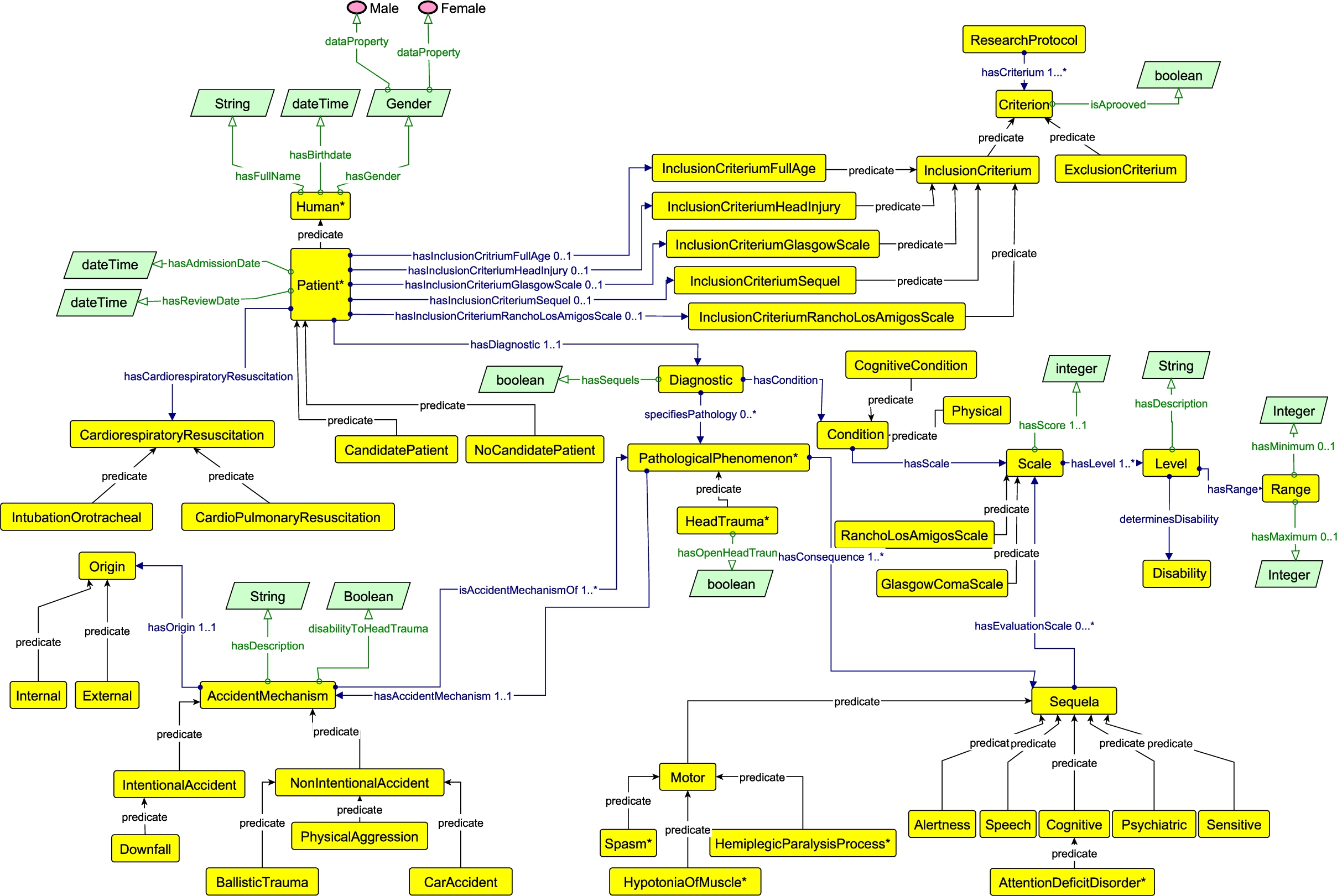

CERPRO model in yEd, showing the INR Inclusion Criteria.

CERPRO model in yEd, showing the INR Exclusion Criteria.

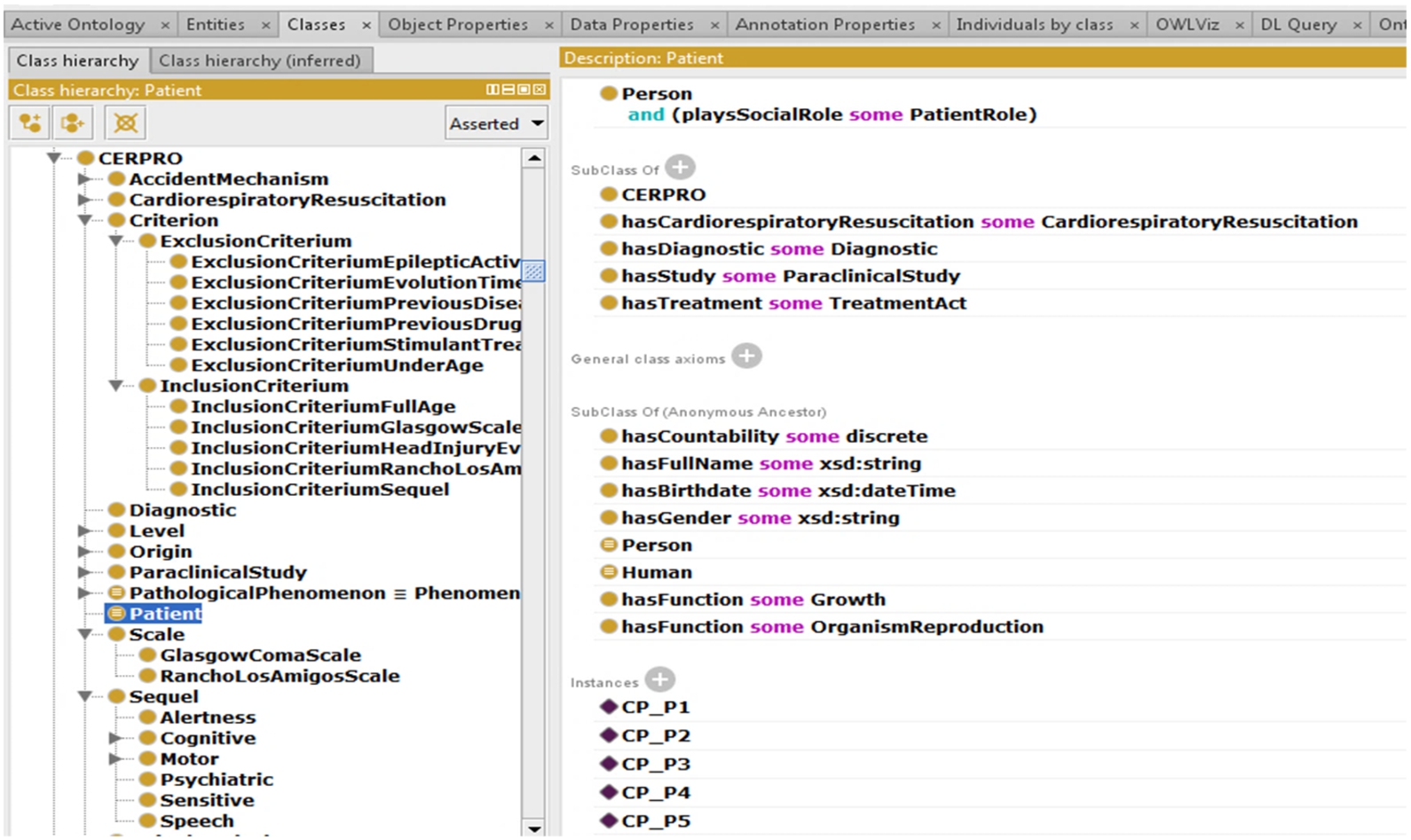

In this activity, the axioms already specified in the model from Activity 10 are implemented. The Input for this activity is the

In this step, the ontology consistency check must be repeated as long as the reasoner reports inconsistencies, until no more errors are reported. The Output of this activity will be an

CERPRO in Protégé, displaying the OWL axioms for the patient class, and patients as instances (CP_P1, CP_P2, etc.).
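The repeated consistency check described for this activity can be pictured as a loop that runs the check, lets the KE correct the reported problems, and runs it again until nothing is reported. In CODEP this is done with a reasoner on the OWL ontology; the sketch below replaces the reasoner with a deliberately tiny check that only detects individuals asserted in two disjoint classes, and replaces the KE's manual correction with a hypothetical `drop_first_class` function. All class names and helpers are illustrative assumptions.

```python
from typing import Callable, FrozenSet, List, Set, Tuple

Assertion = Tuple[str, str]     # (individual, class)
Problem = Tuple[str, str, str]  # (individual, class_a, class_b)


def find_inconsistencies(assertions: Set[Assertion],
                         disjoint: Set[FrozenSet[str]]) -> List[Problem]:
    """Report every individual asserted to belong to two classes declared disjoint."""
    classes_of = {}
    for individual, cls in assertions:
        classes_of.setdefault(individual, set()).add(cls)
    problems = []
    for individual, classes in sorted(classes_of.items()):
        for pair in disjoint:
            if pair <= classes:
                class_a, class_b = sorted(pair)
                problems.append((individual, class_a, class_b))
    return problems


def check_until_consistent(assertions: Set[Assertion],
                           disjoint: Set[FrozenSet[str]],
                           fix: Callable[[Set[Assertion], List[Problem]], Set[Assertion]],
                           max_rounds: int = 10) -> Tuple[Set[Assertion], int]:
    """Re-run the check after each fix; return the consistent assertions and the checks run."""
    for check in range(1, max_rounds + 1):
        problems = find_inconsistencies(assertions, disjoint)
        if not problems:
            return assertions, check
        assertions = fix(assertions, problems)
    raise RuntimeError(f"still inconsistent after {max_rounds} checks")


# Hypothetical classes and individuals, for illustration only.
DISJOINT = {frozenset({"IncludedPatient", "ExcludedPatient"})}
ASSERTIONS = {("CP_P1", "IncludedPatient"), ("CP_P1", "ExcludedPatient"),
              ("CP_P2", "IncludedPatient")}


# Stand-in for the KE's manual correction: drop the first conflicting assertion.
def drop_first_class(assertions: Set[Assertion], problems: List[Problem]) -> Set[Assertion]:
    return assertions - {(individual, class_a) for individual, class_a, _ in problems}


fixed, checks = check_until_consistent(ASSERTIONS, DISJOINT, drop_first_class)
print(sorted(fixed), checks)
# [('CP_P1', 'IncludedPatient'), ('CP_P2', 'IncludedPatient')] 2
```

The `max_rounds` guard is a design choice for the sketch: it stops the loop instead of cycling forever if a correction does not actually remove the reported problem.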

This activity aims to obtain feedback on the ontology design, since it is easier and less costly to make changes or updates at this stage than in later activities of the process. In this activity, the KE shows the ontology model and axioms to the SME; a graphical representation of the ontology is recommended to help the SME understand it. This is therefore a qualitative validation, as it captures the SME's understanding of missing or misrepresented concepts, attributes, and relationships.

After performing this activity, a decision is made on whether to iterate again over other activities in this phase, specifically Activities 6, 10, and 11. The input of this activity is the

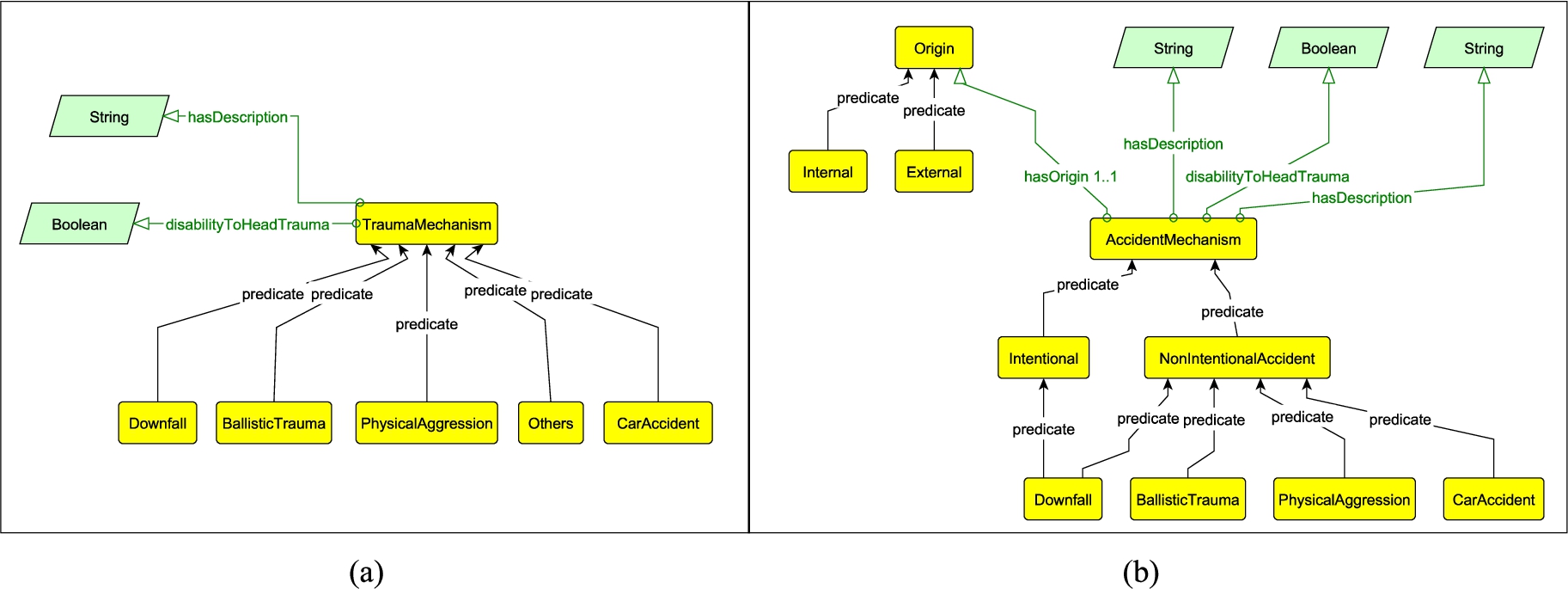

Fig. 7 shows how the ontology was changed after this validation. The trauma accident concept had not been detected by the KE from the CQs and the domain analysis. This can happen because SMEs may not realize early on that a concept is needed in the model until the KE walks them through it.

Panel (a) shows the previous version of the

In this Activity, the KE implements the

(Excerpt) Inclusion and Exclusion Criteria (IC and EC, respectively), which in combination comprise the Knowledge Rules (KR). In each KR, the IC and EC listed in the corresponding row of this table must be executed simultaneously; for example, KR1 requires IC-1 and EC-1 to be executed simultaneously.
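A knowledge rule that pairs an inclusion criterion with an exclusion criterion can be sketched as a predicate over patient data. The sketch assumes that executing an IC and an EC simultaneously means the IC must be met and the EC must not be met. The two criteria shown are placeholders, not the CRP criteria of Table 2, and the functions and patient fields are hypothetical; in CERPRO itself, rules such as R-3 are implemented in SPARQL.

```python
from typing import Callable, Dict, List, Tuple

Patient = Dict[str, object]
Criterion = Callable[[Patient], bool]


def rule_holds(patient: Patient, inclusion: Criterion, exclusion: Criterion) -> bool:
    """Assumed semantics: the inclusion criterion is met and the paired exclusion one is not."""
    return inclusion(patient) and not exclusion(patient)


def evaluate(patients: List[Patient],
             rules: Dict[str, Tuple[Criterion, Criterion]]) -> Dict[str, List[str]]:
    """Map each patient id to the names of the knowledge rules that hold for that patient."""
    return {
        str(p["id"]): [name for name, (ic, ec) in rules.items() if rule_holds(p, ic, ec)]
        for p in patients
    }


# Placeholder criteria (hypothetical, not the CRP criteria).
def ic_1(patient: Patient) -> bool:
    return patient["diagnosis"] == "TBI"


def ec_1(patient: Patient) -> bool:
    return bool(patient["has_exclusion_condition"])


RULES = {"KR1": (ic_1, ec_1)}
PATIENTS = [
    {"id": "CP_P1", "diagnosis": "TBI", "has_exclusion_condition": False},
    {"id": "CP_P2", "diagnosis": "TBI", "has_exclusion_condition": True},
    {"id": "CP_P3", "diagnosis": "Stroke", "has_exclusion_condition": False},
]
print(evaluate(PATIENTS, RULES))
# {'CP_P1': ['KR1'], 'CP_P2': [], 'CP_P3': []}
```

Keeping each IC/EC pair as a named rule mirrors the table's row-per-KR layout and makes it easy to report which rule admitted or rejected a patient.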

Rule R-3 implementation in SPARQL

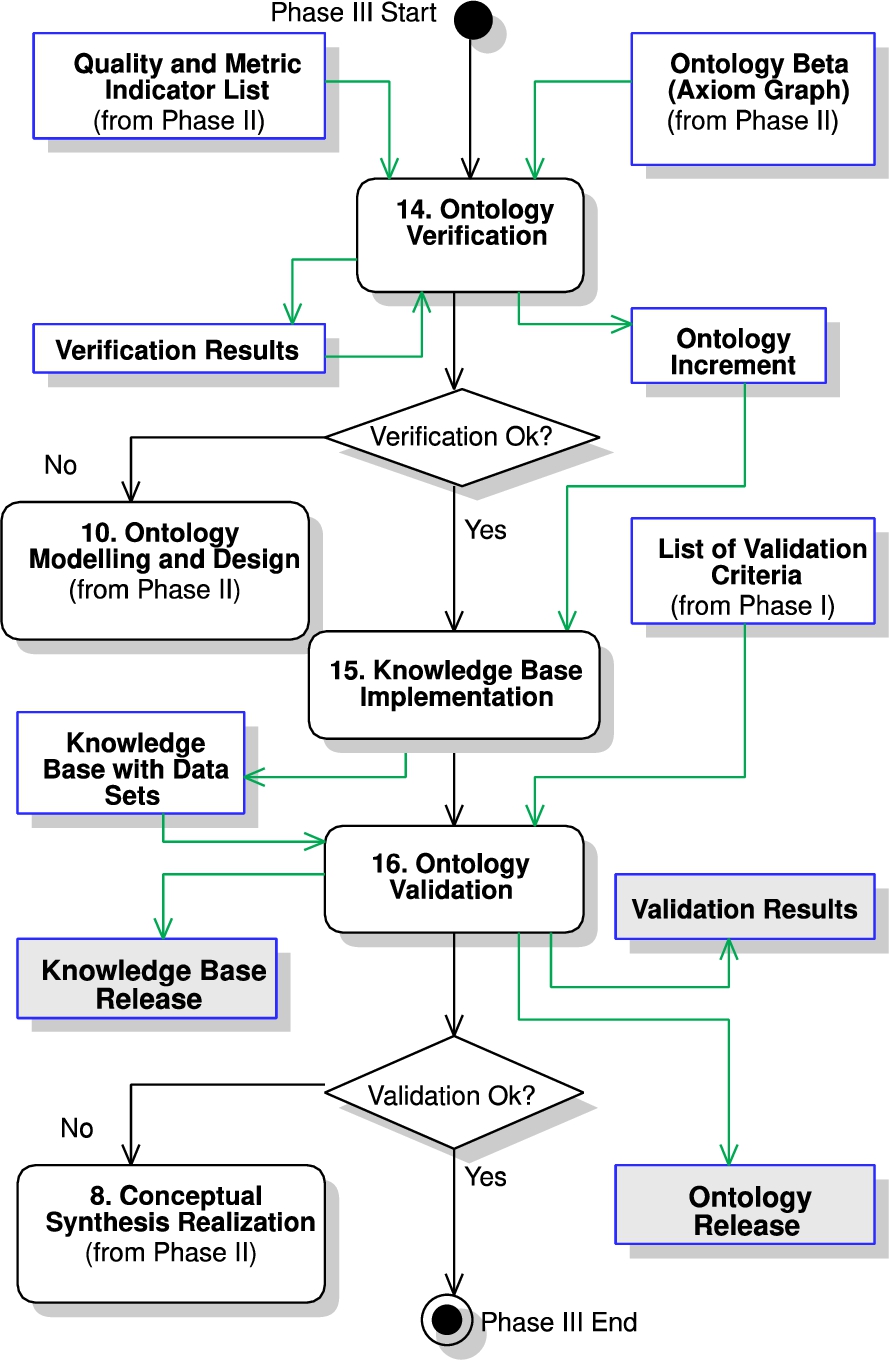

The objective of Phase III is to implement a Knowledge Base from the ontology that is verified and validated (see Fig. 8). The activities for this phase are as follows:

Phase III flowchart.

This activity aims at verifying the ontology design by measuring its quality, according to the defined

The verification is performed by applying the metrics associated with the indicators; the resulting measurements give insights into the ontology design and are used to correct deviations when results fall below expectations. This guides the KE in reviewing and improving the ontology design and conducting further iterations if needed. Examples of improvements include adding missing concepts, axioms, and properties, and changing hierarchies. Metrics that can be measured include model expressiveness, model completeness, and semantic consistency between the target ontology and reused ontologies. The Output will be either: The

(Excerpt) metrics application for measuring the model completeness indicator

The advantages of using the

Check the traceability of the concepts from the CQs to the ontology model. As can be seen in Table 8, calculating the Model Completeness indicator allowed us to document and track the traceability of the Ontology Model concepts: those identified directly from the CQs and those that are not.

Add missing concepts and axioms in the Ontology. For example, in iteration 1 the

Update hierarchies. For example, in iteration 1,

(Excerpt) previous model completeness metric calculation, where CQ2 and CQ13 metrics had lower figures
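The per-CQ view of model completeness, including the traceability of concepts that were or were not identified directly from the CQs, can be sketched with set operations. This is an assumed formulation for illustration (the share of the concepts a CQ needs that are present in the model), not necessarily the exact calculation behind Table 8. The CQ identifiers and concept names are hypothetical; `TraumaAccident` echoes the trauma accident concept that was added after the SME validation.

```python
from typing import Dict, Set, Tuple


def model_completeness(cq_concepts: Dict[str, Set[str]],
                       model_concepts: Set[str]) -> Dict[str, Tuple[float, Set[str]]]:
    """Per CQ: share of the concepts the CQ needs that exist in the model, and the missing ones."""
    report = {}
    for cq, needed in cq_concepts.items():
        ratio = len(needed & model_concepts) / len(needed) if needed else 1.0
        report[cq] = (ratio, needed - model_concepts)
    return report


def untraced(cq_concepts: Dict[str, Set[str]], model_concepts: Set[str]) -> Set[str]:
    """Model concepts not identified directly from any CQ (e.g. found during domain analysis)."""
    traced = set().union(*cq_concepts.values())
    return model_concepts - traced


# Hypothetical CQs and model, for illustration only.
CQ_CONCEPTS = {
    "CQ-a": {"Patient", "TBI", "Sequela"},
    "CQ-b": {"Patient", "TraumaAccident"},
}
MODEL_CONCEPTS = {"Patient", "TBI", "Sequela", "Diagnostic"}

print(model_completeness(CQ_CONCEPTS, MODEL_CONCEPTS))
# {'CQ-a': (1.0, set()), 'CQ-b': (0.5, {'TraumaAccident'})}
print(untraced(CQ_CONCEPTS, MODEL_CONCEPTS))
# {'Diagnostic'}
```

A low ratio for a CQ points directly at the concepts to add in the next iteration, which is the kind of improvement described above.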

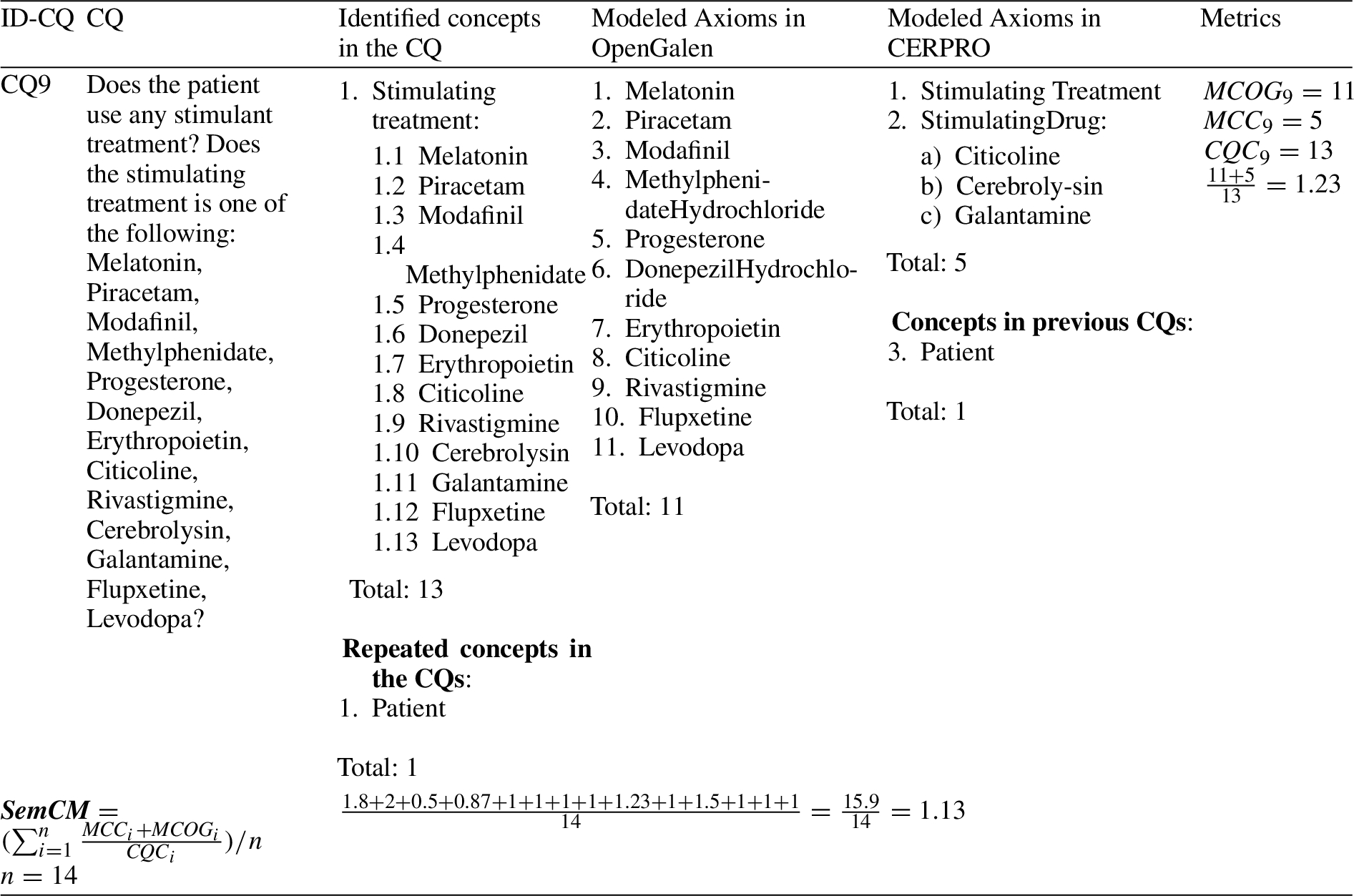

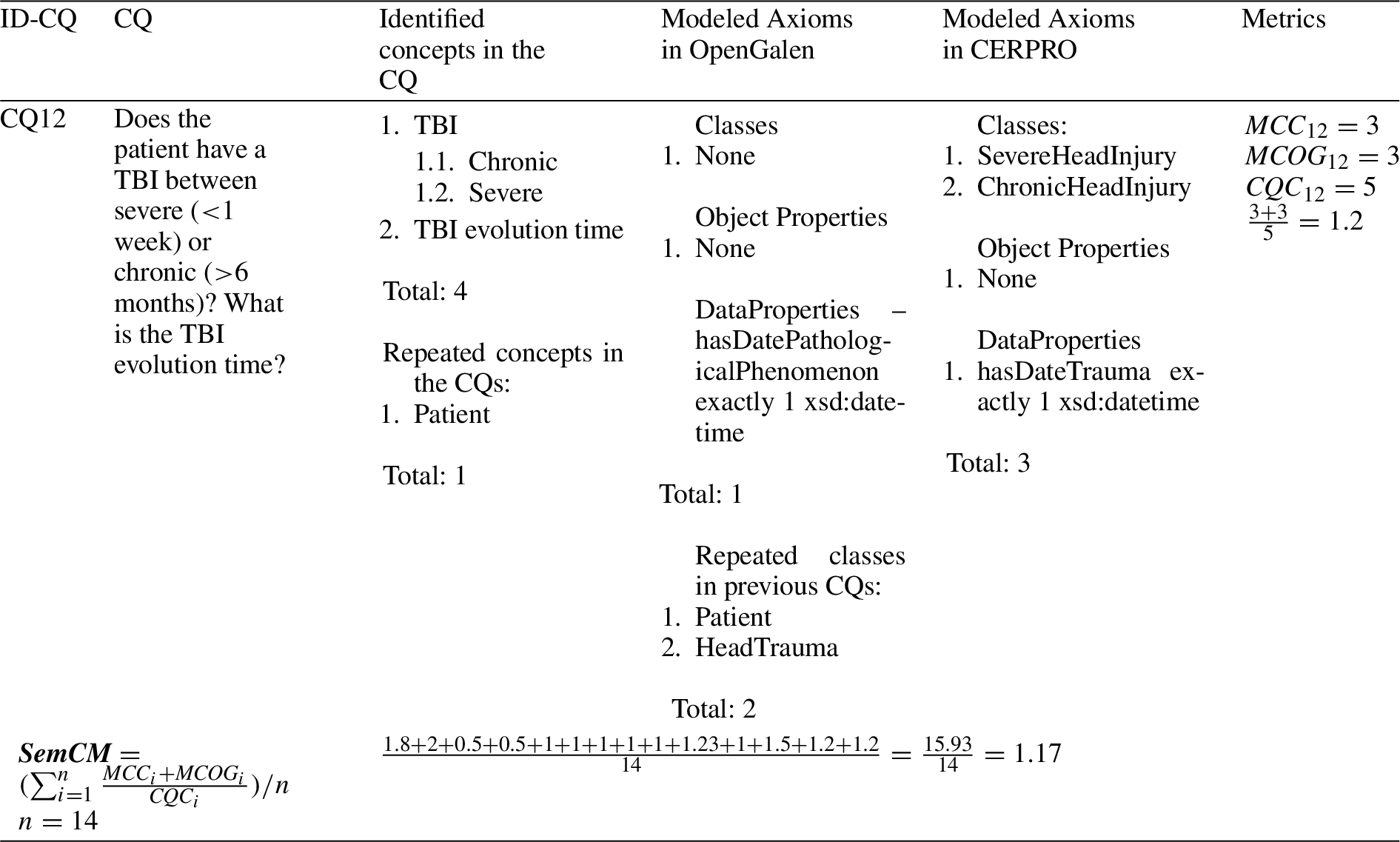

Table 10 presents the results for S

(Excerpt) metrics application for measuring the indicator: semantic consistency between models

(Excerpt) previous semantic consistency between models metrics calculation, where CQ12 has repeated axioms
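Repeated axioms between the target ontology and a reused ontology, the issue reported for CQ12 in this table, can be detected by comparing normalized axioms per CQ. The sketch below is a hypothetical illustration: axioms are represented as tuples, normalization is simple lower-casing, and the CQ identifiers and axioms are invented; a real comparison would work on OWL axioms and would also need to handle renamed entities.

```python
from typing import Dict, Set, Tuple

Axiom = Tuple[str, ...]


def normalize(axiom: Axiom) -> Axiom:
    """Crude normalization: trim and lower-case every part of the axiom."""
    return tuple(part.strip().lower() for part in axiom)


def repeated_axioms(cq_axioms: Dict[str, Set[Axiom]],
                    reused_axioms: Set[Axiom]) -> Dict[str, Set[Axiom]]:
    """Per CQ, the target-ontology axioms that are also modelled in the reused ontology."""
    reused = {normalize(a) for a in reused_axioms}
    report = {}
    for cq, axioms in cq_axioms.items():
        duplicates = {a for a in axioms if normalize(a) in reused}
        if duplicates:
            report[cq] = duplicates
    return report


# Hypothetical axioms, for illustration only.
CQ_AXIOMS = {
    "CQ-a": {("SubClassOf", "TBI", "BrainInjury"), ("SubClassOf", "Patient", "Person")},
    "CQ-b": {("ObjectProperty", "hasDiagnosis")},
}
REUSED_AXIOMS = {("subclassof", "Patient", "person")}

print(repeated_axioms(CQ_AXIOMS, REUSED_AXIOMS))
# {'CQ-a': {('SubClassOf', 'Patient', 'Person')}}
```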

The advantages of using the S

Table 12 presents the results for the

(Excerpt) metrics application for measuring the model expressiveness indicator

The advantage of using the

(Excerpt) previous model expressiveness calculation, where CQ1 has a low ratio

Therefore, after iteration 11, CERPRO met the verification expectations for these three metrics.
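The stopping condition for these verification iterations (continue until the three metrics are near 1) can be expressed as a small check over the measurements recorded in each iteration. The tolerance of 0.05, the indicator keys, and the measurement history below are invented for illustration and do not reproduce CERPRO's actual results.

```python
from typing import Dict, List, Optional


def meets_expectations(measurements: Dict[str, float], tolerance: float = 0.05) -> bool:
    """True when every indicator is within `tolerance` of the ideal value 1.0."""
    return all(abs(1.0 - value) <= tolerance for value in measurements.values())


def first_passing_iteration(history: List[Dict[str, float]],
                            tolerance: float = 0.05) -> Optional[int]:
    """1-based number of the first iteration whose indicators all meet expectations, else None."""
    for number, measurements in enumerate(history, start=1):
        if meets_expectations(measurements, tolerance):
            return number
    return None


# Invented measurements, one dict per iteration.
HISTORY = [
    {"model_completeness": 0.70, "semantic_consistency": 0.90, "model_expressiveness": 0.60},
    {"model_completeness": 0.90, "semantic_consistency": 1.00, "model_expressiveness": 0.85},
    {"model_completeness": 0.98, "semantic_consistency": 1.00, "model_expressiveness": 0.96},
]
print(first_passing_iteration(HISTORY))  # 3
```

Making the tolerance explicit turns "near 1" into a criterion the KE and SMEs can agree on before the iterations start.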

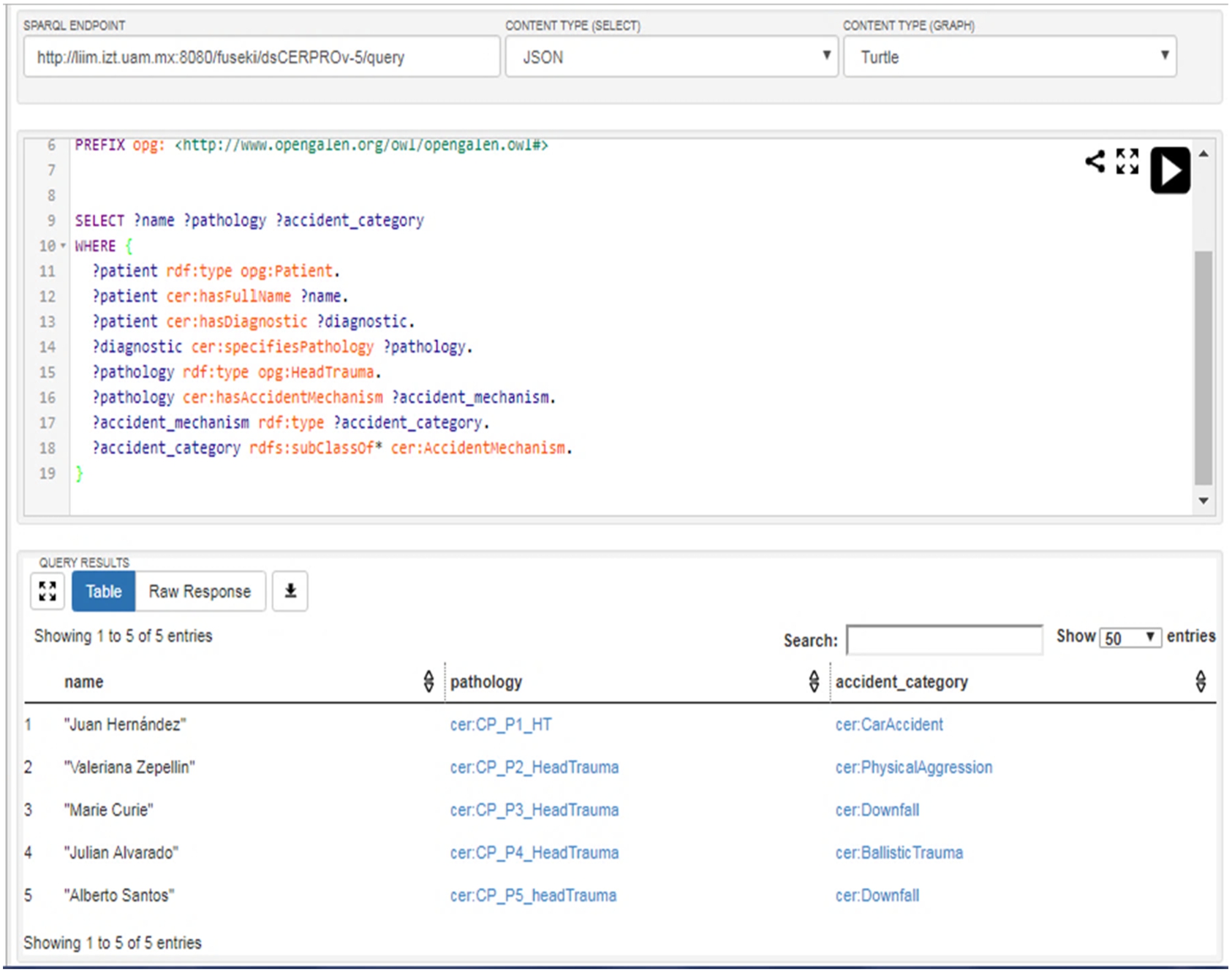

The aim is to create a KB to test whether the ontology properly answers the CQs. This is purely a software installation task. Thus, the

The SPARQL implementation of CQ-1 and the CERPRO response.

(Excerpt) trial data with patients data

This activity aims to validate whether the

The Input is the

The Output of this activity is either: 1) the

This Validation Protocol was followed in Activity 16 of CODEP to validate whether the CERPRO-KB truly responds to the medical team’s needs (stated in the CQs in Activity 3).

The validation research question pursued is as follows:

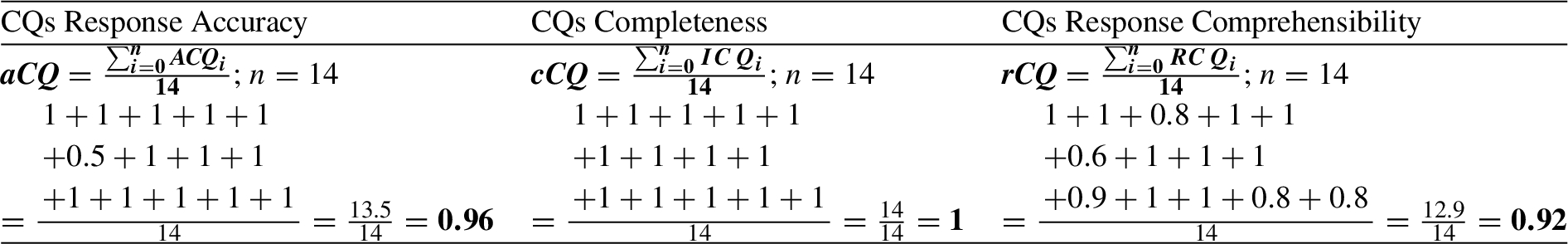

These three validation criteria follow the definitions from Activity 5 (set according to the medical team's needs) and are measured through Equations 1–3, respectively.
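As an illustration of how per-CQ judgements by the medical team could be turned into quantitative validation results, the sketch below computes three proportions over TCQs, the total number of CQs provided by the medical team. The `CQJudgement` fields, the formulas, and the example judgements are hypothetical stand-ins and should not be read as Equations 1–3 themselves.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CQJudgement:
    cq_id: str
    answered: bool        # the KB returned a response for the CQ's query
    accurate: bool        # the medical team judged the response correct
    comprehensible: bool  # the medical team judged the response understandable


def validation_metrics(judgements: List[CQJudgement]) -> Dict[str, float]:
    """Assumed stand-ins for the three validation criteria, each a proportion of TCQs."""
    tcqs = len(judgements)  # TCQs: total number of CQs provided by the medical team
    if tcqs == 0:
        raise ValueError("no CQs to validate")
    return {
        "response_accuracy": sum(j.accurate for j in judgements) / tcqs,
        "completeness": sum(j.answered for j in judgements) / tcqs,
        "comprehensibility": sum(j.comprehensible for j in judgements) / tcqs,
    }


# Invented judgements for four hypothetical CQs.
JUDGEMENTS = [
    CQJudgement("CQ-a", answered=True, accurate=True, comprehensible=True),
    CQJudgement("CQ-b", answered=True, accurate=False, comprehensible=True),
    CQJudgement("CQ-c", answered=True, accurate=True, comprehensible=False),
    CQJudgement("CQ-d", answered=False, accurate=False, comprehensible=False),
]
print(validation_metrics(JUDGEMENTS))
# {'response_accuracy': 0.5, 'completeness': 0.75, 'comprehensibility': 0.5}
```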

Validation of CERPRO: response accuracy calculation, completeness, and comprehensibility results

Another example for new iterations based on validation metrics is regarding the

There have been approaches in the literature to support the design and development of ontologies. In this section, we review them and compare them to CODEP.

On-To-Knowledge (Staab, Studer, Schnurr, & Sure-Vetter, 2001) proposes to design an ontology with four main sub-processes:

DILIGENT (Pinto, Staab, & Tempich, 2004) is one of the earlier ontology development methods, and it focuses on managing ontology design with geographically distributed teams; its activities are

Methontology (Fernández, Gómez-Pérez, & Juristo, 1997) considers CQs in the initial Specification phase, but they are never used in later phases of the life cycle to validate the ontology release. Methontology supports verification in the Implementation phase, but the verification results are not used to formally improve the ontology (for example, through metrics). It is neither an iterative nor an incremental approach.

In the approach by Katsumi & Grüninger (2010), a life cycle is proposed for ontology development that is strongly centered on integrating theorem provers to ensure that ontology models are semantically correct. CQs are used, but end-users or SMEs are not involved, since there is no specific validation activity, which would be necessary to determine whether the ontology truly meets end-user needs. The cycle is neither iterative nor incremental.

In the approach by Garcia Castro et al. (2006), an ontology development process is proposed that iterates between the modeling and evaluation activities and involves end-users in the early stages. The approach starts by defining and eliciting CQs using Conceptual Maps (CMs), which are considered an informal ontology model. The CMs are used to obtain users' feedback and to improve the CMs. An iterative evaluation phase is proposed to formalize the ontology; however, the approach does not apply metrics or measurements in this evaluation, which means that ontology improvements are not quantitatively guided and no formal increments can be produced.

In the approach by Lasierra, Alesanco, Guillén, & García (2013), a three-stage solution is presented: ontology development, ontology deployment in the application domain, and software implementation incorporating the ontology into the KB. A waterfall life cycle is proposed, and users are not involved in the process. The approach is not formally structured (it does not describe the involved roles, the inputs/outputs, the usage of metrics, etc.) and does not include V&V activities.

Other approaches use CQs as the backbone for building the ontology design. In the approach by Malheiros & Freitas (2013), the authors present a system based on an algorithm that iteratively builds the ontology from CQs. The approach is promising, as it attempts to generate the ontology automatically; however, the system does not include a deep analysis of the domain and does not use metrics to quantitatively validate whether each ontology iteration fulfills the CQs. In the approach by Ren et al. (2014), the authors present a way of testing an ontology against CQs formulated by stakeholders, automatically determining whether the ontology can answer these CQs by analyzing its Description Logic (DL) axioms. In the approach by Sousa, Soares, Pereira, & Moniz (2014), the authors suggest a template for writing CQs for building lightweight ontologies (defined as ontologies requiring little computational processing and having no inferable constructs) that are mainly used by domain experts with no knowledge of OWL-DL. They propose a structure for specifying CQs, which can later be represented in conceptual maps for ontology building.

In the approach by Grüninger & Fox (1995) and Uschold & Grüninger (1996), a methodology for ontology design is proposed that focuses on defining informal CQs as the means to capture the ontology requirements; these CQs are later used to test whether the ontology answers them. Formal CQs are used as the driver to implement the ontology's expressiveness (axioms in a description logic), and as the means to formally evaluate whether the CQs have been answered by the ontology and its instances. This approach is in line with ours in that it also grounds CQs as a driver for ontology design, and the concept of formal CQs is a way to explore more automatic evaluation. However, the methodology does not include an activity that uses metrics to evaluate the ontology quantitatively, nor is there guidance on how to handle the CQs when iterating the ontology. Moreover, since the process does not specify inputs/outputs or roles for its activities, it would be difficult to follow.

In the approach by Blomqvist & Öhgren (2006), the authors propose a methodology for manually constructing an ontology, with the phases requirements analysis, building, implementation, evaluation, and maintenance. The evaluation phase is used to compare the consistency of a manually created ontology against a semi-automatically created one. However, the methodology does not evaluate whether an ontology satisfies its goals or whether it is applicable for answering queries and CQs, and the evaluation feedback (ontology verification) is not used for redesigning the ontology in further increments. In the approach by Noy & McGuinness (2001), the authors present a guide to developing ontologies based on the waterfall model (no iterations). The guide considers a deep analysis of domain knowledge. However, it considers neither V&V nor the configuration of the KB platform, and the ontology development is not driven by CQs.

Some approaches provide verification and validation techniques, such as OntoQA (Tartir, Budak Arpinar, Moore, Sheth, & Aleman-Meza, 2005) and OOPS! (Poveda-Villalón, Suárez-Figueroa, García-Delgado, & Gómez-Pérez, 2009). OntoQA proposes a method to measure an ontology's quality from three perspectives: 1) the quality of the ontology schema, 2) the populated ontology, and 3) the KB's compliance with the ontology schema. In further work, the third perspective could be incorporated into CODEP to enhance the evaluation of the ontology schema (Activity 14) and of the populated ontology (Activity 16), while the first and second perspectives could serve as additional sources for defining metrics, as required in Activities 14 and 16. OOPS! provides a method (and an online tool) for evaluating ontology quality from the verification perspective, through a catalog of 40 good practices for ontology development and metrics to evaluate the ontology. OntoQA, however, does not provide guidance or a process for applying its method to ontology quality evaluation, but it can be complemented by CODEP to formally update the ontology based on the suggested validation metrics, as part of CODEP's Activity 16.

This shows that CODEP provides a framework for building ontologies that can be enriched and tailored with third-party approaches and techniques in specific activities. In fact, as future work, the verification method of Ren et al. (2014) and the CQ structure proposed by Sousa, Soares, Pereira, & Moniz (2014) could be used to support the automation of the CODEP process.

CODEP evaluation and further discussion

Comparing CODEP’s features to other ontology development processes

This section discusses CODEP's features in comparison with other approaches to ontology design in the literature. To compare these approaches, we used the features described in Table 16.

Features used for the comparison

Ontology process models benchmarking

From Table 17, it can be observed that many approaches do not mention involving users or domain experts in the process. Those that do involve users mainly in activities for acquiring knowledge about the domain. In addition to CODEP, two approaches involve the user in validating the ontology or knowledge base. This ensures that the user is satisfied, collects the user's feedback for updating the ontology, and helps define new CQs. On-To-Knowledge and Diligent allow the user to propose changes to the ontology; therefore, they can be viewed as involving the user in ontology building and validation.

In terms of support for validation and verification activities, only three approaches include both, while the rest include one or the other. CODEP is the only process that validates the ontology quantitatively using metrics. Validation in CODEP is performed in two phases: first the ontology model is validated, and then the knowledge base is validated with the users/domain experts.
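As an illustration of how the two validation phases could be quantified, the following Python sketch computes, for each phase, the fraction of CQs whose answers the domain experts accepted, and flags whether another increment seems to be needed. It is a hypothetical sketch, not CODEP's published metric definitions: the `CQResult` record, the `validation_ratio` and `needs_new_increment` functions, and the 0.9 acceptance threshold are all assumptions made for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CQResult:
    """Hypothetical record of one CQ evaluated in a validation phase."""
    cq_id: str
    answered: bool  # the ontology model / KB returned an answer for the CQ
    accepted: bool  # the domain experts judged that answer correct


def validation_ratio(results: list[CQResult]) -> float:
    """Fraction of CQs that were answered and whose answers were accepted."""
    if not results:
        return 0.0
    return sum(r.answered and r.accepted for r in results) / len(results)


def needs_new_increment(model_results: list[CQResult],
                        kb_results: list[CQResult],
                        threshold: float = 0.9) -> bool:
    """Assumed stop rule: iterate again if either phase falls below the threshold.

    Phase 1 validates the ontology model; phase 2 validates the knowledge base.
    """
    return (validation_ratio(model_results) < threshold
            or validation_ratio(kb_results) < threshold)


if __name__ == "__main__":
    results = [
        CQResult("CQ1", answered=True, accepted=True),
        CQResult("CQ2", answered=True, accepted=False),
        CQResult("CQ3", answered=False, accepted=False),
        CQResult("CQ4", answered=True, accepted=True),
    ]
    print(validation_ratio(results))               # 0.5
    print(needs_new_increment(results, results))   # True
```

With the sample results, only two of the four CQs are accepted, so the ratio is 0.5 and another increment would be flagged. In practice, the threshold would correspond to the quality level agreed with the end-users at the beginning of the process rather than a fixed constant.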

Few approaches drive ontology design through CQs (three approaches besides CODEP), and of these three, only two support the development of a knowledge base. It can also be observed that only CQ-driven approaches support the development of knowledge bases. This highlights the importance of these criteria when proposing processes for creating ontologies intended for practical application.

The evaluation in Table 17 also shows that none of the approaches proposes a well-structured process with clear activities and roles. This is one of the factors that motivated us to define CODEP, as we could not identify any approach in the literature that offers such a process to follow.

CODEP includes three main phases, each with several activities, which together cover the life cycle of ontology development. In addition, the end-user is highly involved in most of the activities. This was appropriate for developing the CEPRO ontology, where the use of the ontology's results is critical to human health and medical doctors (users) must be able to trust the quality of the diagnoses produced by the ontology. The effort of following the CODEP activities can be high for both the knowledge engineer and the users (domain experts). In certain kinds of projects, a trade-off should be considered between applying all CODEP activities and the effort they require, without compromising the quality of the produced artifacts (ontology, knowledge base, etc.). In some cases, not all activities have to be applied. In further work, to provide better guidance on applying and adapting CODEP, we plan to design experiments that evaluate which activities can be skipped and which must be kept for certain domains or end-user characteristics.

We also plan to conduct further studies to evaluate other aspects of the performance of the CODEP process and the quality of the ontologies it produces. One aspect of particular interest is bottlenecks: identifying possible bottlenecks, analyzing them, and suggesting improvements to CODEP activities to handle them. One approach we could follow is the process improvement concept (CMMI Institute, 2020), in which we would design measurements and adjust CODEP's activities to solve the identified process issues. Process improvement can be based on quality attributes of the process such as comprehension (whether the process is well defined from the knowledge engineer's perspective), visibility (whether the activities produce clear results), acceptance (whether the process is understood by end-users, knowledge engineers, etc.), support (which activities are performed with tool support), reliability (whether the process allows identifying failures that can affect the resulting ontology), maintainability (whether the process can easily evolve), and velocity (the speed at which activities are performed to complete the knowledge base).

Further studies comparing CODEP with existing ontology development approaches would also be of interest. For this purpose, we would have to define metrics that measure aspects of CODEP and compare them with the results of the other approaches. Making such comparisons meaningful is challenging: we would have to apply the same metrics used and published by the other approaches and develop ontologies of similar domains and complexity.

Conclusions

CODEP drives ontology design through competency questions, aiming to produce an ontology that accurately complies with end-user requirements. Metrics are used to quantitatively validate the compliance of the ontology with the CQs. An innovative aspect of CODEP is that it implements incremental and iterative cycles for designing the ontology, in which the V&V tasks are the backbone that indicates whether more increments and iterations are needed. Specifically, the significance of designing an ontology by adhering to CODEP is as follows:

Some limitations are:

The CODEP application in the case study illustrates an exhaustive definition and collection of metrics, since elements are counted from the ontology (for verification measurements) and from the KB responses (for validation measurements), which is time-consuming. However, this distinguishes our work from others, as it guides the KE in verifying and validating whether the ontology fulfills the quality standards stated at the beginning of the process. It also supports the delivery of a high-quality ontology from the end-user's perspective, since the end-users' feedback on whether their expectations are met determines whether to stop the modeling and design. Thus, it can be argued that the time consumed is justified by the benefits gained.

If CODEP is used to design ontologies that are not intended for a specific practical application, some activities do not have to be followed. For example, Activities 13–16 are oriented to validating the ontology for practical usage. Activities 1–5 must also be revised, since the CQs must be adjusted to a more general scope instead of supporting the specific purposes of specific end-users. An example of this situation is ontologies developed to standardize concepts in an application domain, such as FIBO (EDM Council, 2020) for finance, SNOMED (SNOMED International, 2015) for medicine, and the FRO-Solvency Ontology (Jayzed Data Models Inc., 2019). These ontologies commonly define the body of knowledge in their domain in order to avoid ambiguities in concepts, and they provide a baseline for further operational ontologies (specific to case studies in organizations). However, even in this case, CQs remain the driver for defining the ontology's objectives and goals, and for its development and verification.
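The verification measurements mentioned in the first limitation (counting elements in the ontology) could be partly automated to reduce the time they consume. The following is a hypothetical Python sketch, not the metrics used in the case study: the ontology is reduced to sets of declared class and property names, each CQ is mapped by hand to the terms it needs, and the coverage of a CQ is the fraction of its terms that the ontology already declares. All names (`element_counts`, `cq_coverage`, `missing_terms`, and the sample medical terms) are illustrative assumptions.

```python
# Hypothetical ontology snapshot: names of declared classes and object properties.
ONTOLOGY = {
    "classes": {"Patient", "Symptom", "Diagnosis"},
    "object_properties": {"hasSymptom", "hasDiagnosis"},
}

# Hypothetical hand-made mapping from each CQ to the ontology terms it needs.
CQ_TERMS = {
    "CQ1": {"Patient", "hasSymptom", "Symptom"},
    "CQ2": {"Patient", "hasDiagnosis", "Diagnosis"},
    "CQ3": {"Patient", "hasTreatment", "Treatment"},
}


def element_counts(onto):
    """Verification count: number of declared elements of each kind."""
    return {kind: len(names) for kind, names in onto.items()}


def cq_coverage(onto, cq_terms):
    """Fraction of the terms needed by each CQ that the ontology declares."""
    declared = set().union(*onto.values())
    return {
        cq: (len(terms & declared) / len(terms)) if terms else 1.0
        for cq, terms in cq_terms.items()
    }


def missing_terms(onto, cq_terms):
    """Terms still to be modeled, listed only for CQs that are not fully covered."""
    declared = set().union(*onto.values())
    return {
        cq: sorted(terms - declared)
        for cq, terms in cq_terms.items()
        if terms - declared
    }


if __name__ == "__main__":
    print(element_counts(ONTOLOGY))  # {'classes': 3, 'object_properties': 2}
    print(cq_coverage(ONTOLOGY, CQ_TERMS))
    print(missing_terms(ONTOLOGY, CQ_TERMS))  # {'CQ3': ['Treatment', 'hasTreatment']}
```

A CQ with coverage below 1.0 points to terms that the next increment would have to model. Such counts only cover the verification side; whether the KB's answers are correct still has to be judged by the domain experts.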

Acknowledgements

The authors thank Sc. Dr. Paul Carrillo-Mora and his team from Instituto Nacional de Rehabilitación, México, for their support in the case study and their advice on the medical background for designing CERPRO. This work was partially funded by Enterprise Ireland (project MF20180009) through the Career FIT program, which has received funding from the European Union's Horizon 2020 research and innovation program under the Marie Skłodowska-Curie grant agreement No. 713654; The Royal Academy of Engineering (NRCP1516/1/39) and The Royal Society (NI150203) through the Newton Fund scheme.