Abstract

There are many benefits of using argumentation-based techniques in multi-agent systems, as clearly shown in the literature. Such benefits come not only from the expressiveness that argumentation-based techniques bring to agent communication but also from the reasoning and decision-making capabilities under conditions of conflicting and uncertain information that argumentation enables for autonomous agents. When developing multi-agent applications in which argumentation will be used to improve agent communication and reasoning, argumentation schemes (reasoning patterns for argumentation) are useful in addressing the requirements of the application domain with regard to argumentation (e.g., defining the scope in which argumentation will be used by agents in that particular application). In this work, we propose an argumentation framework that takes into account the particular structure of argumentation schemes at its core. This paper formally defines such a framework and experimentally evaluates its implementation for both argumentation-based reasoning and dialogues.

Introduction

Argumentation schemes are patterns for arguments (or for inferences), representing the structure of common types of arguments used both in everyday discourse and in special contexts such as legal and scientific reasoning [89]. Argumentation schemes have become a well-known concept from Douglas Walton’s book “

Considering that argumentation is a complex and important ability that demonstrates cognitive capacity [5], the Artificial Intelligence (AI) community sees argumentation schemes as an important component for modelling and implementing intelligent-agent technologies that embed such cognitive capacity, enabling argument mining [28, 35, 36] as well as agent decision-making, reasoning, and communication [32, 33, 77, 82]. Such technologies have been applied in a variety of domains, such as legal reasoning, medical argumentation, e-government, debating technologies, and many others [5, 69, 86].

Particularly in Multi-Agent Systems (MAS), argumentation plays an important role in both agent reasoning and communication [37], providing an expressive communication method [33], as well as a powerful reasoning mechanism under conditions of conflicting and uncertain information [5]. Consequently, interesting multi-agent system applications using argumentation-based techniques have been developed in the last few years, for example [32, 46, 52, 78]. Most of those applications define argumentation schemes (often only one) that agents use to instantiate arguments for reasoning and/or communication, fulfilling the application needs regarding argumentation. However, the link between argumentation schemes and the (few) practical approaches and frameworks for argumentation, particularly in multi-agent systems, has not been fully investigated in the literature. We believe that it is necessary to address the particular structure of argumentation schemes, keeping the essence of the argumentation schemes as devised by Walton [89] and reflecting natural (human-like) argumentation, in such practical frameworks and in particular in agent-oriented programming languages, which will allow us to implement multi-agent systems empowered by argumentation techniques in a more principled way. This is so because, firstly, Walton’s approach provides an elegant model for knowledge engineering in multi-agent systems based on argumentation schemes, which seems an essential step towards developing multi-agent system applications that take advantage of argumentation schemes. Secondly, Walton’s approach provides a refined and modular mechanism for agents to reason and communicate using argumentation schemes, reflecting the essence of how humans argue. Also, our approach allows implicit information in argumentation schemes to be kept implicit at the implementation level, whereas other practical approaches explicitly represent it as additional premises or need to break an argumentation scheme into separate arguments.

In this work, we propose a novel argumentation framework, formally defined and implemented, that takes into consideration the general structure of argumentation schemes and agent-oriented programming languages as its core. Thus, after modelling (or importing) argumentation schemes into our framework, agents are directly able to construct, communicate, and reason with arguments instantiated from those argumentation schemes, also addressing important properties when using argumentation schemes, e.g., scheme awareness [91]. This work extends our previous paper [50], in which we took the initial steps towards the development of this framework. In this paper, we not only present the framework, but we also describe in detail how it can be used to implement argumentation-based reasoning and argumentation-based dialogues using argumentation schemes, presenting various examples of their use.

The main contributions of this work are: (i) we propose an argumentation framework that has the general structure of argumentation schemes as its core. Using that general structure, we can represent argumentation schemes of different levels of specificity, including those in which there is implicit information pointed out by critical questions; our framework maintains the essence of argumentation schemes as defined by Walton [89], in which it is the matching between the argument and its reasoning pattern (argumentation scheme) that brings to light the relevant implicit information necessary to judge the validity of an argument; (ii) we show how our framework can be used to implement argumentation-based reasoning mechanisms based on argumentation schemes, in which agents first instantiate and evaluate each argument individually using its respective argumentation scheme and then, considering the

This paper is organised as follows. In Section 2, we present the background of this work, giving an overview of how argumentation techniques have been used in artificial intelligence and multi-agent systems, and introducing the notion of argumentation schemes. In Section 3, we introduce our argumentation-scheme-centred framework and formally define it. In Section 4, we describe our approach to argumentation-based reasoning using argumentation schemes, presenting examples and an empirical evaluation of our implementation. In Section 5, we describe our approach to argumentation-based dialogues using argumentation schemes, introducing a dialogue protocol based on argumentation schemes and showing various examples of dialogues generated according to the implementation of that protocol. In Section 6, we discuss the main properties of our framework. In Section 7, we describe some work related to our approach. In Section 8, we conclude the paper and point out future directions for our research.

Background

Argumentation in artificial intelligence and multi-agent systems

Computational argumentation has been largely studied as an abstract formalism [19], which provides important insights into the nature of argumentation. In abstract argumentation, arguments are represented as atomic entities and they have no internal structure. Thus, arguments and attacks between arguments are left unspecified. This abstract perspective provides some advantages in studying argumentation [10], in which it is possible to consider only the semantic level, i.e., given a set of arguments and an unspecified attack relation it is possible to analyse which arguments are acceptable in that set/context/perspective.
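To make the semantic level concrete, the following minimal sketch computes the grounded extension of an abstract argumentation framework, i.e., the least set of arguments that defends all of its members. Grounded semantics is used here only as one well-known example of an acceptability semantics; the function name and the pair-based encoding of the attack relation are assumptions made for illustration and are not part of the framework proposed in this paper.

```python
from typing import Set, Tuple


def grounded_extension(arguments: Set[str],
                       attacks: Set[Tuple[str, str]]) -> Set[str]:
    """Return the grounded extension of an abstract argumentation framework.

    `attacks` holds pairs (x, y) meaning "x attacks y" (illustrative encoding).
    """
    # Pre-compute the attackers of every argument.
    attackers = {a: {x for (x, y) in attacks if y == a} for a in arguments}

    accepted: Set[str] = set()
    while True:
        # An argument is defended when each of its attackers is itself
        # attacked by some argument that is already accepted.
        defended = {
            a for a in arguments
            if all(any((d, b) in attacks for d in accepted)
                   for b in attackers[a])
        }
        if defended == accepted:  # fixed point reached
            return accepted
        accepted = defended


# a attacks b, b attacks c: a is unattacked and defends c against b.
chain = grounded_extension({"a", "b", "c"}, {("a", "b"), ("b", "c")})

# a and b attack each other: neither can be accepted sceptically.
mutual = grounded_extension({"a", "b"}, {("a", "b"), ("b", "a")})
```

Note that the arguments are plain strings with no internal structure, which is exactly the abstraction discussed above: acceptability is decided from the attack relation alone.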

When we need a more detailed formalisation than abstract argumentation, regarding the structure of arguments and the nature of attack relations, we need to adopt a formalism based on

Studies in computational models of argument can be classified into two classes:

In artificial intelligence, particularly in multi-agent systems,

The history of development, from abstract argumentation to structured argumentation, has made the field of computational argumentation mature in theory, and a few approaches to applying argumentation-based techniques in multi-agent systems have started to appear in practice. Most of this practical work focuses on using an argumentation scheme (reasoning pattern) as the core component of the argumentation mechanism. Normally, the argumentation scheme used is specified for particular applications, modelling the needs of that application domain. For example, in [81] argumentation schemes have been specified for analysing the provenance of information, in [57] argumentation schemes have been specified for reasoning about trust, in [77] argumentation schemes have been specified for arguing about transplantation of human organs, in [46] argumentation schemes have been specified for implementing data access control between smart applications, and so forth. Thus, we believe that argumentation schemes can play an important role in the development of applications that take advantage of argumentation-based techniques, as the literature also suggests.

However, even though some authors (e.g., [48, 63, 70]) have suggested that argumentation schemes could be represented in such structured argumentation frameworks, for example using defeasible inference rules, there is no formal and general framework that takes into account the particular structure of argumentation schemes in multi-agent systems, e.g., capturing the role of the implicit critical questions associated with each argumentation scheme. Similar claims that reinforce the missing computational representation for argumentation schemes can be found in papers by others, for example in [7]: “Douglas Walton’s influential argumentation schemes remain an important insight, one which needs to be embraced by computational modelling of argument. His work comes from informal logic and was designed for manual analysis of argumentation. For computational purposes much detailed work remains to be done before the use of argumentation schemes can be as standard and well understood as logical deduction”.

In this work, we propose a formal argumentation framework in multi-agent systems in which argumentation schemes are the core of the framework. Our approach considers a general structure for representing argumentation schemes (with or without implicit critical questions).

Argumentation schemes

Argumentation schemes are patterns for arguments (or inferences) representing the structure of common types of arguments used both in everyday discourse and in special contexts such as legal and scientific argumentation [87, 89]. Besides the familiar deductive and inductive forms of arguments, argumentation schemes represent forms of arguments that are sometimes called

Many arguments include non-explicit (implicit) conditional premises or warrants, linking the explicit premises to the conclusion. Critical questions pointed out by the argumentation scheme used to instantiate an argument are used to reveal such implicit information, playing an important role in shifting the dialogue (or reasoning) to the revealed information [87]. Thus, the acceptance of a conclusion from an instantiation of an argumentation scheme is directly associated with so-called

Arguments instantiated from argumentation schemes and properly evaluated using their critical questions can be used by agents in their reasoning and communication processes. In both situations, other arguments, probably instantiated from other argumentation schemes, are compared in order to arrive at an acceptable conclusion. After an argument is instantiated from an argumentation scheme and evaluated by its set of critical questions, the process follows the same principle as any argumentation-based approach, where arguments for and against a point of view (or just for an agent’s internal decision making) are compared until eventually arriving at a set of acceptable arguments.

To exemplify our approach, we adapted the argumentation schemes

“Agent

The associated critical questions are:

The argumentation scheme from role to know (and the argumentation scheme from position to know as well) exemplify some aspects of argumentation schemes pointed out by Walton in [87]: “

Some approaches in the argumentation literature have suggested that argumentation schemes [87, 89] could be translated into defeasible inferences [48, 49, 63], and the acceptability of arguments, instantiated using these rules, could be used to extend frameworks such as ASPIC+ [43], DeLP [27], and others [47, 53]. However, those approaches do not take into consideration that most of the critical questions related to the argumentation schemes are not explicitly represented in the argument, as clearly argued by Walton [87]. Thus, those approaches would require making explicit, in the representation of an argument, the critical questions related to the scheme used to instantiate it; that is, it would be necessary to include an explicit representation of all critical questions (usually representing presumptions and/or exceptions for the application of that scheme [66]) as premises of the argument, or even to model those critical questions using other arguments undercutting the main argument (i.e., an argument saying that the defeasible inference rule does not apply in that particular case) [64]. Otherwise, when such critical questions are not represented as part of the argument, and an agent is not able to infer which argumentation scheme that argument comes from, all that information is lost.

Although approaches that explicitly represent critical questions in arguments do not face the problem of losing information, we believe they do not embrace the essence of argumentation schemes. (Footnote 2: Extensively used in other areas, for example teaching critical thinking skills.)

In this work, we present an argumentation framework in which argumentation schemes are the core of our framework. We propose a computational representation for argumentation schemes, and of arguments instantiated from those reasoning patterns, that overcomes the problem of making explicit information that should be implicit in an argument. Moreover, we describe how agents may reason and communicate using our proposed structure of arguments.
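The idea of keeping critical questions implicit can be illustrated with a small sketch in which an argument records only the name of its scheme, its premises, and its conclusion, while the critical questions stay with the scheme and are checked when the argument is instantiated. Everything below is an assumption made for illustration: the tuple encoding of formulas, the convention that capitalised strings are variables, and the predicates `role`, `role_to_know`, `asserts`, `about`, `reliable`, and `holds` are hypothetical and are not the formal language or the exact scheme defined in this paper.

```python
from dataclasses import dataclass


def is_var(term):
    # Illustrative convention: capitalised strings are variables.
    return isinstance(term, str) and term[:1].isupper()


def match(pattern, fact, subst):
    """Extend `subst` so that `pattern` equals the ground `fact`, else None."""
    if len(pattern) != len(fact):
        return None
    subst = dict(subst)
    for p, f in zip(pattern, fact):
        if is_var(p):
            if subst.setdefault(p, f) != f:
                return None
        elif p != f:
            return None
    return subst


def solve(patterns, beliefs, subst):
    """Yield every substitution under which all patterns are believed."""
    if not patterns:
        yield subst
        return
    for fact in beliefs:
        extended = match(patterns[0], fact, subst)
        if extended is not None:
            yield from solve(patterns[1:], beliefs, extended)


def ground(pattern, subst):
    return tuple(subst.get(term, term) for term in pattern)


@dataclass(frozen=True)
class Scheme:
    name: str
    premises: tuple
    conclusion: tuple
    critical_questions: tuple  # kept with the scheme, not with arguments


@dataclass(frozen=True)
class Argument:
    scheme: str       # label linking the argument back to its scheme
    premises: tuple
    conclusion: tuple


def acceptable_instances(scheme, beliefs):
    """Instances whose premises and critical questions all hold in `beliefs`."""
    goals = scheme.premises + scheme.critical_questions
    return {
        Argument(scheme.name,
                 tuple(ground(p, s) for p in scheme.premises),
                 ground(scheme.conclusion, s))
        for s in solve(goals, beliefs, {})
    }


# Hypothetical encoding of a "role to know" style scheme.
ROLE_TO_KNOW = Scheme(
    name="role_to_know",
    premises=(("role", "Ag", "R"), ("role_to_know", "R", "Domain"),
              ("asserts", "Ag", "C"), ("about", "C", "Domain")),
    conclusion=("holds", "C"),
    critical_questions=(("reliable", "Ag"),),
)

beliefs = {
    ("role", "john", "doctor"),
    ("role_to_know", "doctor", "cancer"),
    ("asserts", "john", "smoking_causes_cancer"),
    ("about", "smoking_causes_cancer", "cancer"),
    ("reliable", "john"),
}

with_reliability = acceptable_instances(ROLE_TO_KNOW, beliefs)
without_reliability = acceptable_instances(
    ROLE_TO_KNOW, beliefs - {("reliable", "john")})
```

In this sketch the resulting `Argument` never mentions reliability, yet the argument cannot be built when the corresponding critical question fails; a receiver that knows the scheme named in the `scheme` field can recover which implicit information was relied upon.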

In order to make explicit the representation of arguments, we introduce the language

Further, all knowledge available to an agent

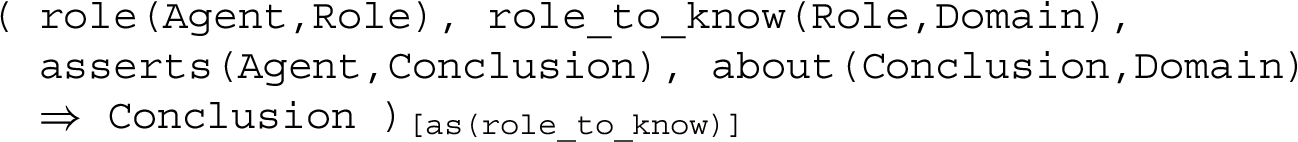

We introduce the formal definition of argumentation schemes used in this paper as follows:

Formally, an argumentation scheme is a tuple

Considering the logic language We took inspiration from Labelled Deductive Systems (LBS) [24, 25] to model annotated formulæ.

For example, the argumentation scheme Recall that an argumentation scheme is a non grounded reasoning pattern. with the argumentation scheme name

with the argumentation scheme name

The associated critical questions

An argument is a tuple

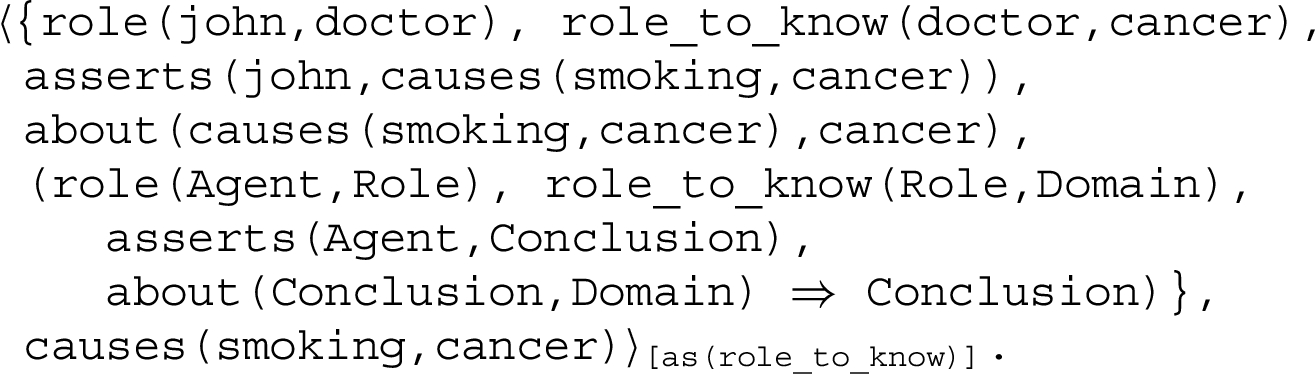

For example, considering the argumentation scheme

Note that an agent can instantiate different arguments, say (Footnote: Different agent profiles can be explored, for example, those presented in [58, 60]; they will be considered in our future work when applying our framework in argumentation-based dialogue.) When using our framework for argumentation-based reasoning, it makes sense for an agent to evaluate such arguments, being able to answer all critical questions related to them.

An argument

Note that instantiating an argumentation scheme requires an agent to check the acceptability of the premises used in that argument; this automatically involves the agent checking for attacks against the premises of that argument instance. Footnote 6: In ASPIC+, for example, attacks against the premises are described as

After instantiating arguments from the argumentation schemes available to it, an agent needs to check if those arguments result in conflicts. Considering a dialectical point of view, two types of attack (conflict) between arguments can be considered, according to [89]: (i) a strong kind of conflict, where one party has a thesis to be proved, and the other party has a thesis that is the opposite of the first thesis, and (ii) a weaker kind of conflict, where one party has a thesis to be proved, and the other party doubts that thesis, but has no opposing thesis of their own. In the strong kind of conflict, each party must try to refute the thesis of the other in order to win. In the weaker form, one side can refute the other by showing that their thesis is doubtful. When considering the computational model of arguments, this difference between conflicts is inherent in the structure of arguments and can be found in the work of others (e.g., [63]). Both types of conflicts are also considered in monological argumentation frameworks. On the one hand, the stronger kind of conflict refers to arguments supporting conflicting conclusions, in which each argument has its own set of evidence (i.e., its support). On the other hand, the weaker kind of conflict refers to an argument attacking (being in conflict with) part of the support of another argument; that is, the argument does not imply that the conclusion of the other argument is false, but it implies that some piece of information used as support for that conclusion is not true.
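The distinction between the two kinds of conflict can be sketched by inspecting the structure of two arguments. In the sketch below, conflict between pieces of information is approximated by classical negation written with a `~` prefix, and the labels `"strong"` and `"weak"` simply follow the wording above; both choices, as well as the `Arg` type and the example literals, are assumptions for illustration rather than the conflict operator adopted in this paper.

```python
from typing import FrozenSet, NamedTuple, Optional


class Arg(NamedTuple):
    support: FrozenSet[str]   # information used to support the conclusion
    conclusion: str


def negate(literal: str) -> str:
    return literal[1:] if literal.startswith("~") else "~" + literal


def in_conflict(x: str, y: str) -> bool:
    # Assumed conflict relation: a literal conflicts with its negation.
    return x == negate(y)


def attack_kind(attacker: Arg, target: Arg) -> Optional[str]:
    """Classify how `attacker` conflicts with `target`, if at all."""
    if in_conflict(attacker.conclusion, target.conclusion):
        return "strong"   # opposing conclusions, each with its own support
    if any(in_conflict(attacker.conclusion, s) for s in target.support):
        return "weak"     # only part of the target's support is contested
    return None


a1 = Arg(frozenset({"doctor(john)", "asserts(john,p)"}), "p")
a2 = Arg(frozenset({"doctor(pietro)", "asserts(pietro,~p)"}), "~p")
a3 = Arg(frozenset({"registry_says(~doctor(john))"}), "~doctor(john)")

kinds = (attack_kind(a2, a1), attack_kind(a3, a1), attack_kind(a1, a3))
```

Here `a2` opposes the conclusion of `a1`, whereas `a3` says nothing about `p` itself and only casts doubt on one piece of information that `a1` relies on.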

Two pieces of information

For both cases we adopt a general operator for conflict

Attacks between arguments are identified by looking at their structures and finding conflicting information, as formalised below.

(Attacks between arguments).

An argument

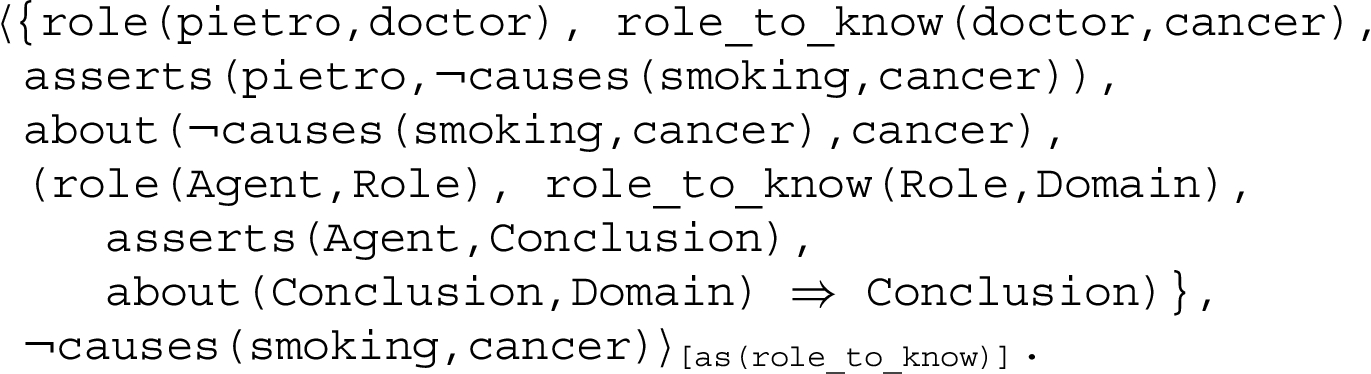

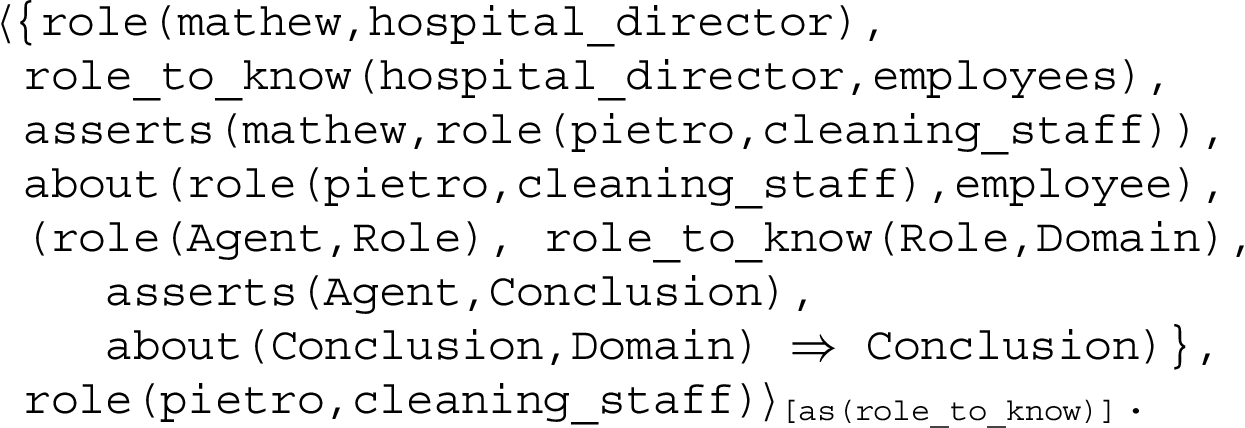

For example, considering an agent

Note that both arguments above are acceptable instances of the argumentation scheme

Given the knowledge that

Thus, the argument concluding

(Acceptable arguments).

An argument

Considering the three arguments above, the argument concluding that

Argumentation-based reasoning using argumentation schemes

One characteristic we desire in our framework is

Representing and reasoning with argumentation schemes

It is important to mention that in our approach we are able to specify argumentation schemes for agents with different levels of bounded rationality, depending on the application needs. This is an important characteristic of our approach, given that different applications might require different levels of specificity for argumentation schemes [84]. The most common approach when applying argumentation schemes in multi-agent systems is to consider only one argumentation scheme without chained arguments. Examples of this kind of argumentation scheme are found in [28, 34, 57, 79, 81]. In all those cases, during reasoning, agents check if they are able to construct acceptable instances of arguments, according to Definition 3, from the argumentation schemes used in a particular application domain. When the application domain does not require chained arguments, premises and critical questions related to an argument are directly asserted in (or omitted from) agents’ belief bases. Thus, after constructing acceptable instances of arguments, an agent will check for conflicts between the conclusions of arguments, (Footnote 7: We assume that argumentation schemes are consistently modelled and that they do not allow circular arguments [90].)

Consider the

Imagine a scenario in which an agent

However, initially

Considering the assertions made by Ana, Jana, and Carlo (the reliability advisers), and also considering that

Using that information,

We have implemented our approach8 The implementation is available in

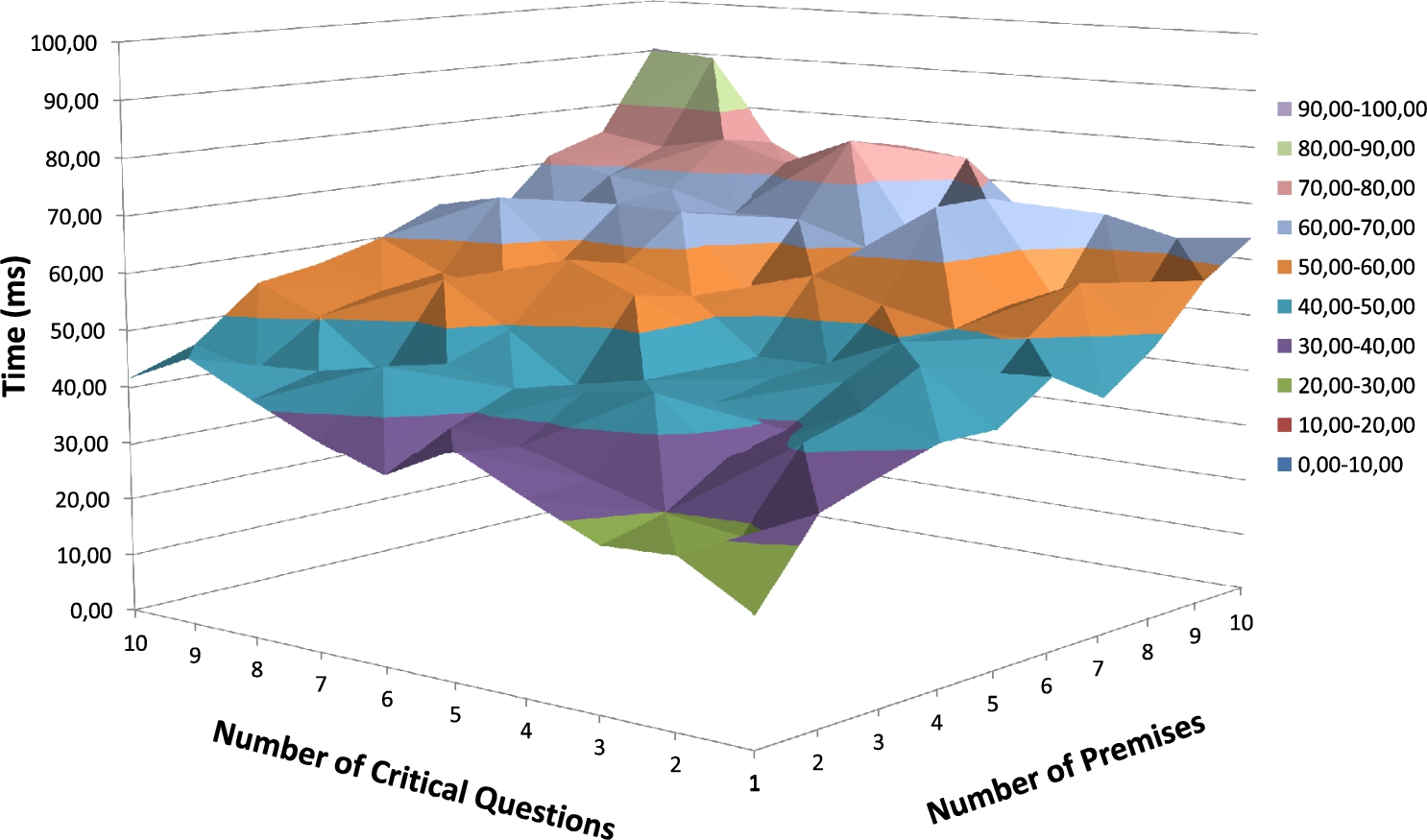

Fig. 1. Time (ms) for reaching a conclusion considering one level of inference.

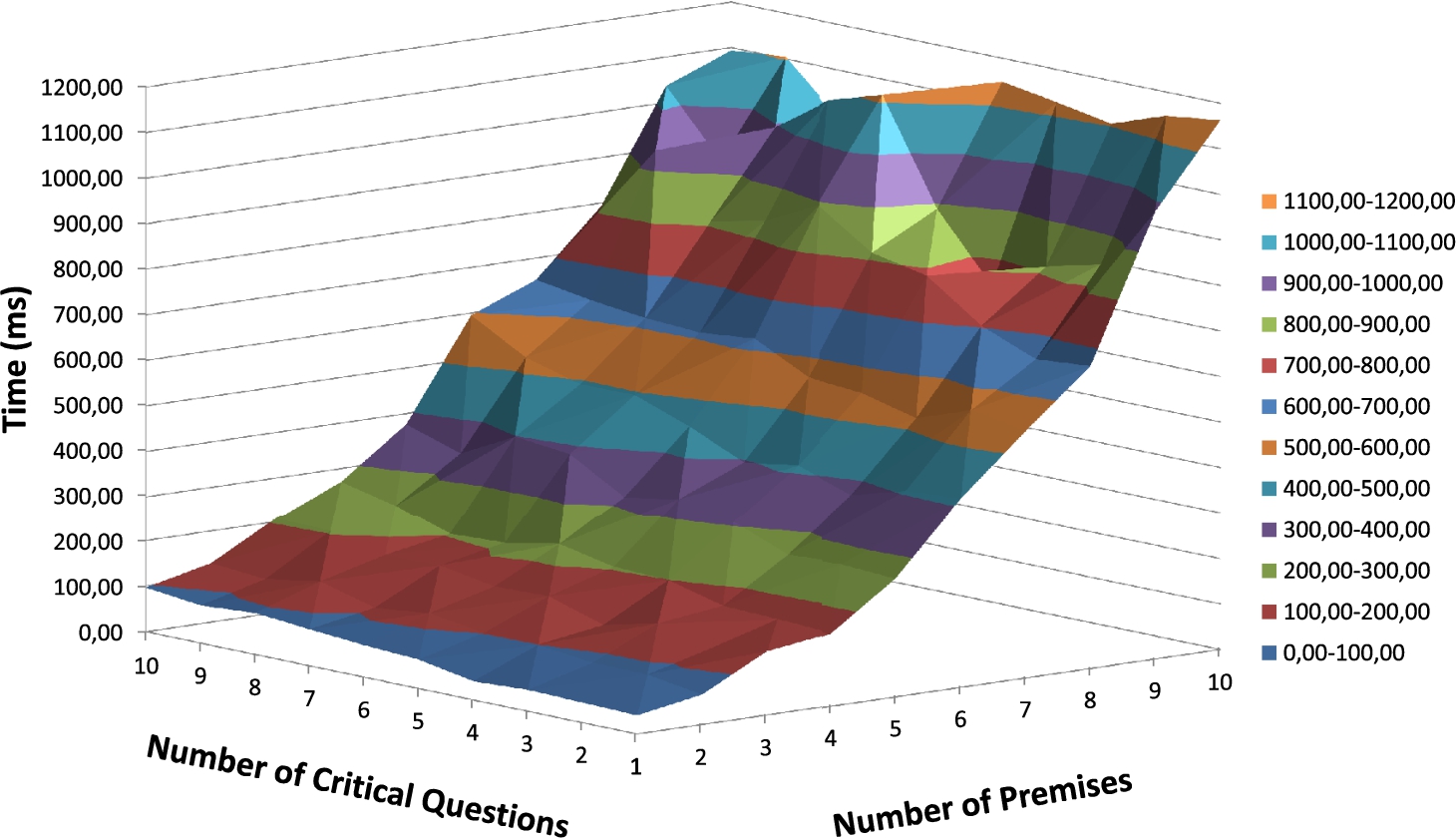

Fig. 2. Time (ms) for reaching a conclusion considering two levels of inference.

First, we evaluate our implementation when modelling argumentation schemes without chained arguments, in which premises and critical questions are asserted into agents’ belief base. Figure 1 shows our results, varying the number of premises and critical questions from 1 to 10. It can be noted that there is a similar influence of the number of premises and critical questions in the final time for an agent to construct an acceptable instance of an argumentation scheme.

We then evaluate our implementation considering chained arguments, in which the premises of the main argument are conclusions of other arguments constructed from different argumentation schemes. Thus, an agent first constructs acceptable instances of arguments from their respective argumentation schemes for each premise of the main argument, and using those premises it constructs the main argument as an acceptable instance of its respective argumentation scheme. Figure 2 shows our results, varying the number of premises (and respective argumentation schemes, given that each premise is the conclusion of an acceptable instance from a different argumentation scheme) and the number of critical questions. It can be noted that the number of premises has a greater influence on the time to construct the main argument than the number of critical questions. This results from the fact that premises of the main argument are conclusions of other arguments, which are constructed and evaluated from their respective argumentation schemes, (Footnote 9: For example, when considering chained argumentation schemes with 2 premises and 2 critical questions, besides answering the 2 critical questions of the main argumentation scheme, the premises of the main argument are the conclusions of 2 different arguments constructed from different argumentation schemes, which also have 2 premises and 2 critical questions to be positively answered.)
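A rough count of the checks involved helps to see why premises weigh more than critical questions in the chained setting. The sketch below assumes, as in the 2-premise, 2-question example of the footnote, that every sub-argument comes from a scheme of the same shape as the main one and that each premise or critical question at the lowest level costs one belief-base lookup. It is a back-of-the-envelope model with hypothetical function names, not a description of the measured running times.

```python
def checks_single(premises: int, questions: int) -> int:
    """Lookups for one scheme whose premises and questions are asserted."""
    return premises + questions


def checks_chained(premises: int, questions: int) -> int:
    """Lookups for two levels: each premise is itself the conclusion of a
    sub-argument with the same number of premises and critical questions."""
    sub_arguments = premises * checks_single(premises, questions)
    return sub_arguments + questions  # plus the main scheme's own questions


footnote_case = checks_chained(2, 2)     # 2 * (2 + 2) + 2
one_more_premise = checks_chained(5, 4) - checks_chained(4, 4)
one_more_question = checks_chained(4, 5) - checks_chained(4, 4)
```

Under these assumptions the count is p(p + q) + q for p premises and q critical questions, so it grows quadratically in the number of premises but only linearly in the number of critical questions, which is in line with the trend reported above.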

Our results provide a guide for the process of knowledge engineering of argumentation schemes in multi-agent systems (especially useful when using our implementation), showing how those reasoning patterns could be modelled depending on the multi-agent application requirements. On the one hand, when defining chained argumentation schemes (generating chained arguments), which allow agents to execute a more detailed and refined reasoning process based on argumentation, as in [46], more time is required for agents to analyse and evaluate a particular conclusion from those argumentation schemes. On the other hand, when defining a single argumentation scheme, such reasoning is simplified but results are reached faster.

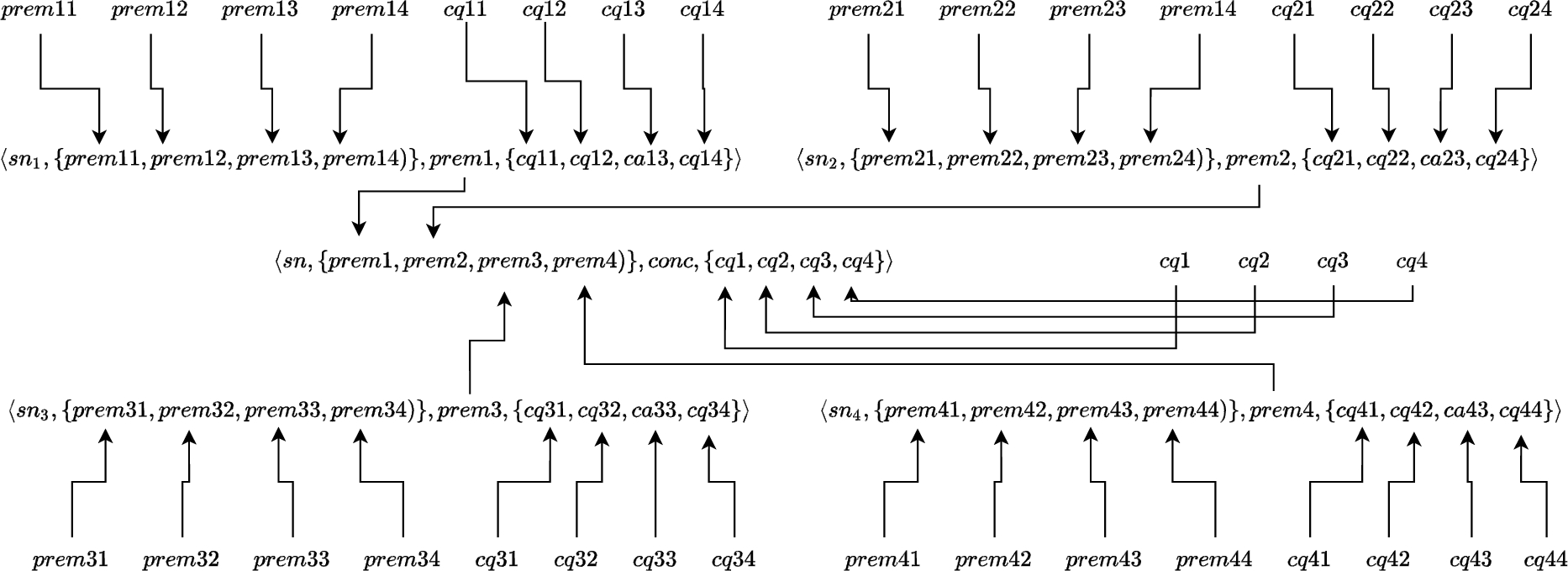

Fig. 3. Abstract inference tree generated for chained argumentation schemes with 4 premises and 4 critical questions.

Figure 3 shows an abstract example for an inference tree generated by an agent reaching a conclusion

When participating in argumentation-based dialogues using argumentation schemes, agents must be aware of such reasoning patterns in order to have a full understanding of what is being communicated, which has been referred to as “scheme awareness” by Wells [91]. Being aware of the argumentation schemes used by others allows an agent, when receiving an argument, to understand which argumentation scheme has been used to instantiate that argument, to identify the critical questions related to it, and thus to understand what implicit information was used by the speaker when building that argument.
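The role of scheme awareness when an argument is received can be sketched as a simple lookup: if the receiver knows the scheme that labels the argument, it recovers the associated critical questions and can evaluate the argument; otherwise it has to ask for the scheme first. The function names, the reply labels `evaluate` and `question_scheme`, and the dictionary from scheme names to critical questions are hypothetical and serve only to illustrate the idea.

```python
from typing import Dict, Tuple


def on_argument(known_schemes: Dict[str, Tuple[str, ...]],
                scheme_name: str) -> Tuple[str, object]:
    """Decide what a receiver does with an argument labelled `scheme_name`."""
    if scheme_name in known_schemes:
        # Scheme-aware: the implicit critical questions can be recovered.
        return ("evaluate", known_schemes[scheme_name])
    # Not aware of the scheme: ask the speaker to provide it.
    return ("question_scheme", scheme_name)


def learn_scheme(known_schemes: Dict[str, Tuple[str, ...]],
                 scheme_name: str,
                 critical_questions) -> Dict[str, Tuple[str, ...]]:
    """Return a copy of the agent's schemes extended with a received one."""
    updated = dict(known_schemes)
    updated[scheme_name] = tuple(critical_questions)
    return updated


known = {"role_to_know": ("is the asserting agent reliable?",)}

first_reaction = on_argument(known, "position_to_know")
known_after = learn_scheme(known, "position_to_know",
                           ["is the agent really in that position?"])
second_reaction = on_argument(known_after, "position_to_know")
```

Only after the scheme has been communicated can the receiver bring the implicit critical questions to light, which is why the scheme name travels with the argument while the critical questions do not.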

(Scheme awareness).

An agent

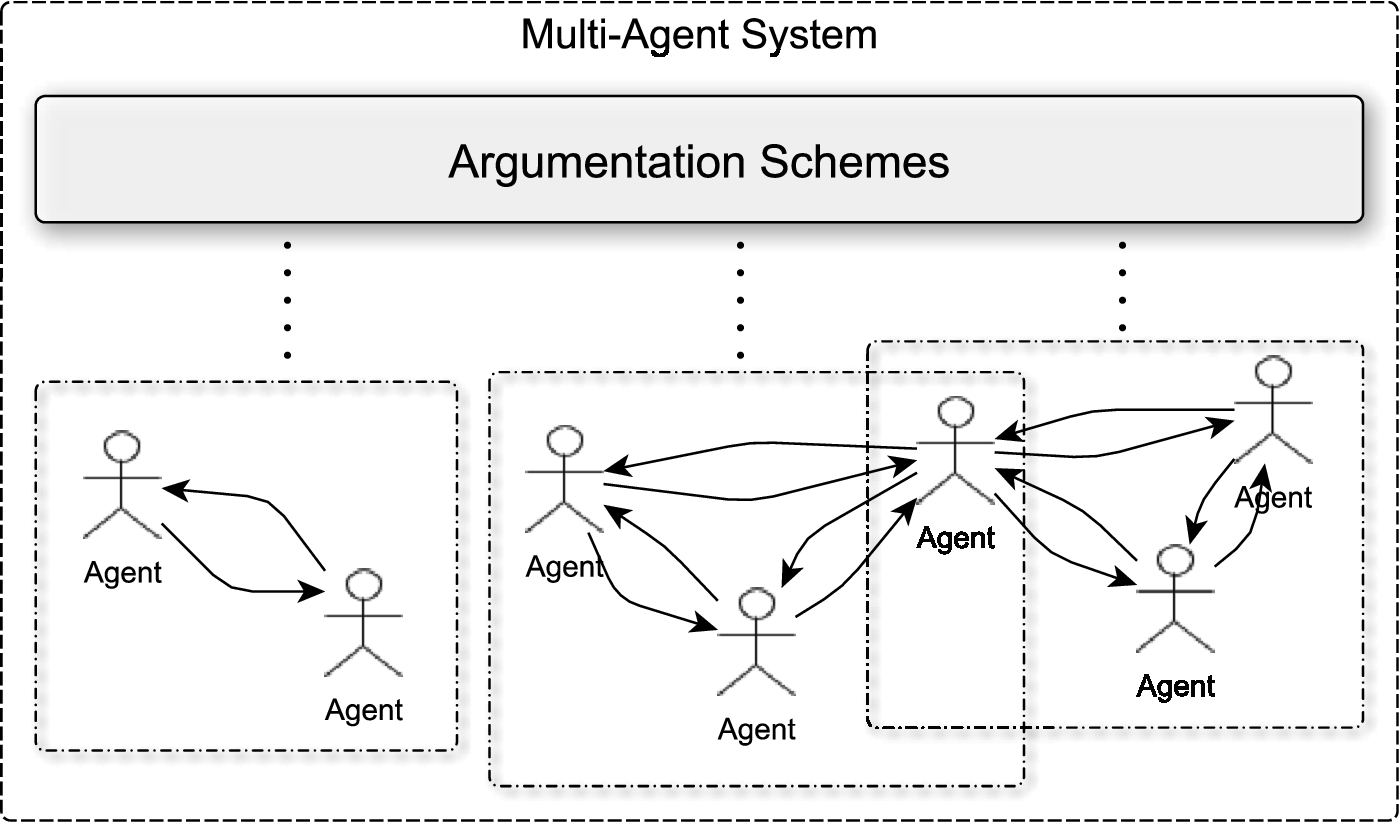

A few approaches in the literature, for example [48, 49], propose that agents should openly share such argumentation schemes in order to deal with the inherent problem of scheme awareness. An overview of those approaches to argumentation schemes in MAS is shown in Fig. 4, where all agents are able to instantiate arguments from shared argumentation schemes at runtime. Whilst those approaches solve the problem of scheme awareness as defined by Wells [91], and are adequate for many multi-agent architectures using shared domain-specific knowledge [23, 52, 74], we propose a more general approach, allowing agents to have knowledge of specific argumentation schemes and to be able to communicate them when necessary.

Fig. 4. Argumentation schemes shared by all agents.

In communication, the acceptability of arguments received from other agents is directly associated with the agents’ rationality, i.e., when an agent

We use

In the multi-agent paradigm, communication is often based on the speech-act theory [75]. In such approaches, messages have the following format

Recall that

In the course of argumentation-based dialogues, agents make commitments based on which speech act they use. These commitments are stored in the commitment store (CS) that consists of one or more structures, accessible to all agents in a dialogue.11 Other names are used for CS, such as

In this section, we describe a framework for the specification of dialogue games. In previous work [54], we used this framework to model argumentation-based dialogues based on a particular protocol. Here, we use the same framework to specify an argumentation-based protocol based on our approach for argumentation schemes in multi-agent systems. The framework for dialogue games is based on work by McBurney et al. [38, 39], in which the elements that correspond to the dialogue game specification are:

In particular,

(Dialogue game protocol [54]).

A dialogue game protocol is formally represented as a tuple

(Dialogue move [54]).

We denote a move in

The dialogue rules in

(Dialogue rules [54]).

Dialogue rules can assume one of two forms:

we have dialogue rules that specify which moves are allowed given the previous move and conditions (corresponding to the combination rules of the dialogue game).

we have the initial conditions (corresponding to the commencement rules of the dialogue game), which do not require any moves to have been previously executed.
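The two forms of dialogue rule can be sketched as entries in a table: a rule either names the previous move it responds to, or has no previous move and therefore acts as a commencement rule. The move names (`assert`, `question`, `justify`, `accept`, `question_scheme`), the state keys, and the particular conditions below are assumptions chosen for illustration; they are not the formal rules of the protocol defined in this paper.

```python
from typing import Callable, List, NamedTuple, Optional

State = dict  # illustrative view of what the responding agent believes


class DialogueRule(NamedTuple):
    previous: Optional[str]             # None marks a commencement rule
    condition: Callable[[State], bool]  # must hold for the reply to be legal
    reply: str


RULES = [
    # Commencement: an agent may open only if it can support its claim.
    DialogueRule(None, lambda s: s.get("has_argument", False), "assert"),
    # Combination rules: allowed replies given the previous move.
    DialogueRule("assert", lambda s: s.get("agrees", False), "accept"),
    DialogueRule("assert", lambda s: not s.get("agrees", False), "question"),
    DialogueRule("question", lambda s: s.get("has_argument", False), "justify"),
    DialogueRule("justify", lambda s: not s.get("scheme_known", False),
                 "question_scheme"),
    DialogueRule("justify",
                 lambda s: s.get("scheme_known", False)
                 and s.get("agrees", False),
                 "accept"),
]


def legal_replies(rules: List[DialogueRule],
                  previous: Optional[str],
                  state: State) -> List[str]:
    """Moves permitted after `previous` for an agent in the given state."""
    return [r.reply for r in rules
            if r.previous == previous and r.condition(state)]


opening = legal_replies(RULES, None, {"has_argument": True})
after_justify = legal_replies(RULES, "justify", {"scheme_known": False})
```

Keeping one table entry per rule mirrors the observation made later in the paper that the number of plans handling a received message matches the number of dialogue rules restricting the next move.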

Using this framework for dialogue games, we will define a protocol that takes argumentation schemes into consideration. We then show how we implement that protocol (and the respective dialogue rules) for Jason agents [12].

In order to define the dialogue rules we use the following notation:

We write We write We write We write

A protocol using argumentation schemes

Our protocol combines persuasion and information-seeking dialogues. A persuasion dialogue is used when an initiator agent believes something that it wants another agent to be convinced to believe as well [76]. In this type of dialogue, an agent starts the dialogue with an

In the previous sections, we have used examples of arguments typically used in persuasion dialogues, instantiated from the argumentation scheme from role to know. For example, in a hospital, nurses usually use arguments based on doctors’ assertions in order to convince patients to accept a treatment, a diagnosis, etc.

In order to evaluate our framework, we have implemented the following protocol, which is an extended version of [54], making reference to components of our framework for argumentation schemes in multi-agent systems, as well as allowing agents to question the argumentation scheme used by other agents during the dialogue:

an agent an agent an agent an agent Note that an agent an agent an agent

The dialogue starts when an agent wants to argue about a given subject. The initial rule states that an agent must have an argument that supports its claim in order to start an argumentation-based dialogue (as the agent will be committed to defending the initial assertion). Considering our framework for argumentation schemes in multi-agent systems, this requires the agent to be able to instantiate an acceptable instance of an argument from an argumentation scheme in

The options of the agent are: (i) to accept the previous claim, where condition

Considering that the agent has asserted

When the agent is not aware of the argumentation scheme used by the other agent, it questions the other agent about that scheme, so that it will be able to properly evaluate the argument when it receives the information about the argumentation scheme used to instantiate it. Otherwise, the agent will accept the subject of the dialogue, (Footnote: The argument is new because, as defined in the protocol, the agent cannot repeat a move with the same content.)

When the agent receives an accept move it will close the dialogue. Only the proponent will receive an accept move when the opponent accepts the subject of the dialogue.

An agent is supposed to be aware of the argumentation scheme previously used to instantiate an argument used in that particular dialogue move, thus it will provide such an argumentation scheme when questioned.

The dialogue then follows as when the agent receives a justify move being aware of the argumentation scheme used by (Footnote: The argument is new because, as defined in the protocol, the agent cannot repeat a move with the same content.)

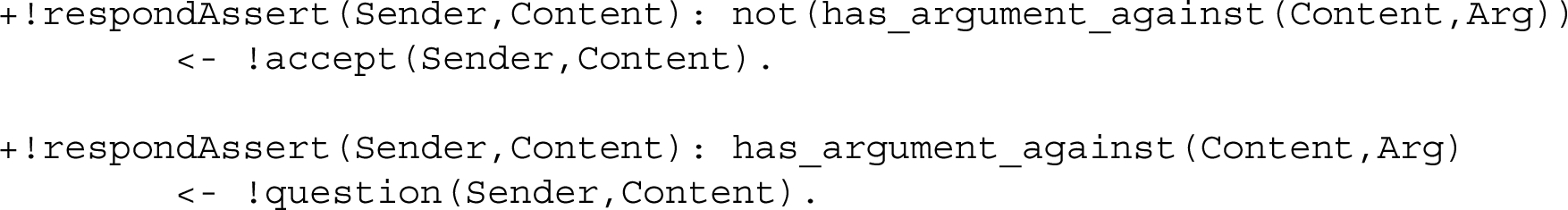

The dialogue rules presented in the previous section can be easily implemented in multi-agent platforms in which agent practical reasoning is inspired by reactive planning systems, such as the Jason platform [12]. In Jason, when an agent receives a message, an event of the form

The number of plans to handle the event of receiving a particular message will be equivalent to the number of dialogue rules that restrict the next possible move with which the agent can respond. For example, the

Similarly, other conditions used in dialogue rules can be easily implemented using our framework through a code library that we implemented and made publicly available [47, 55].

Experiments

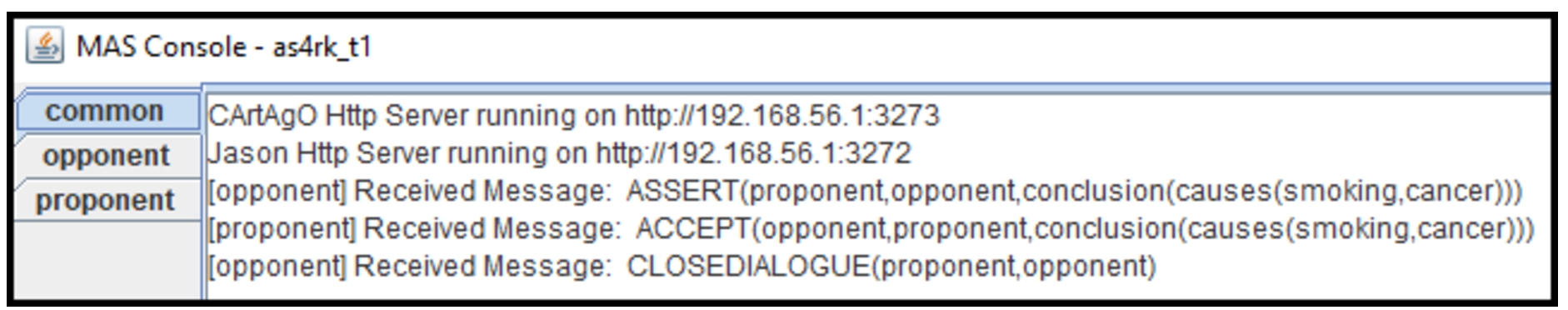

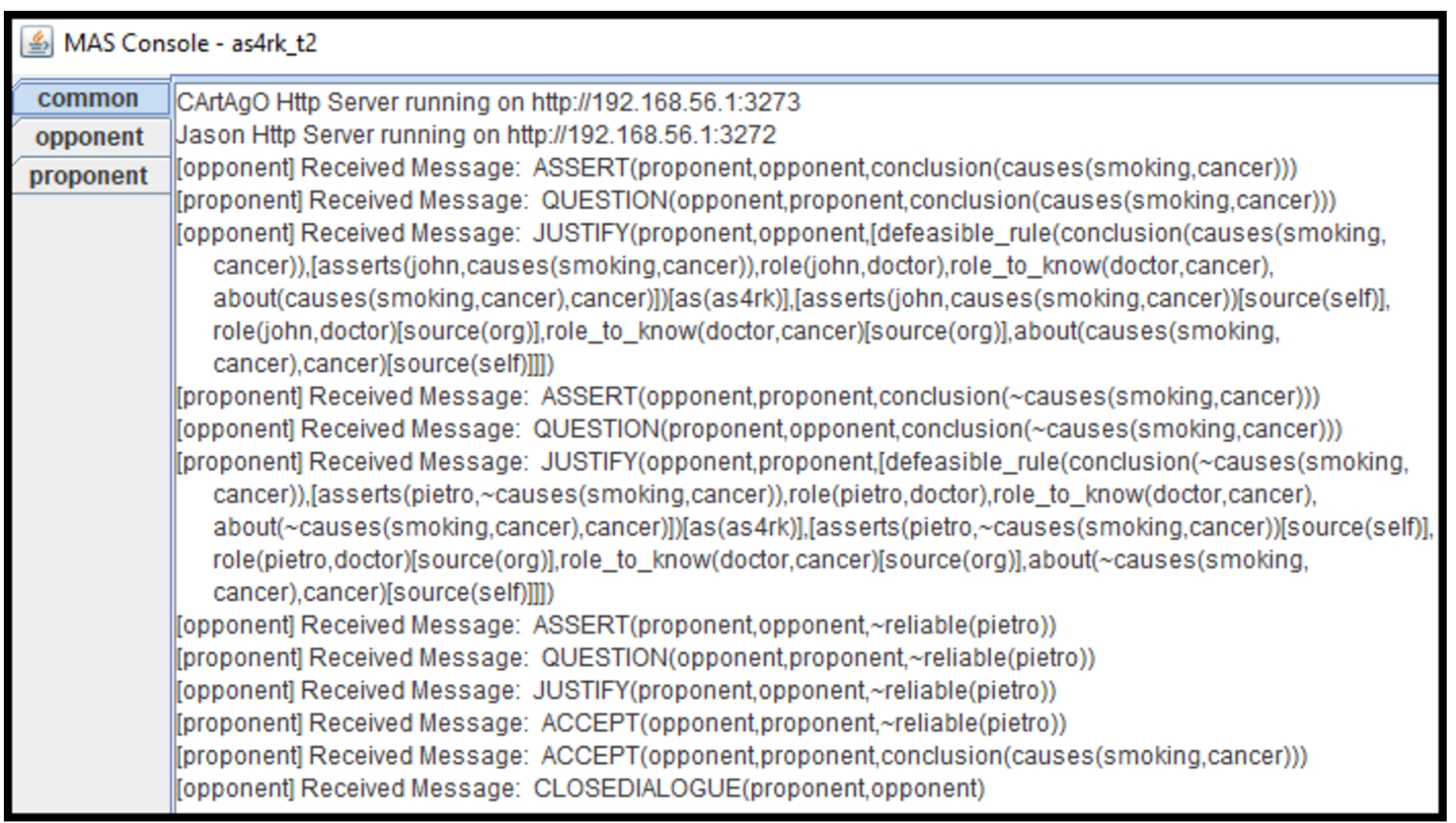

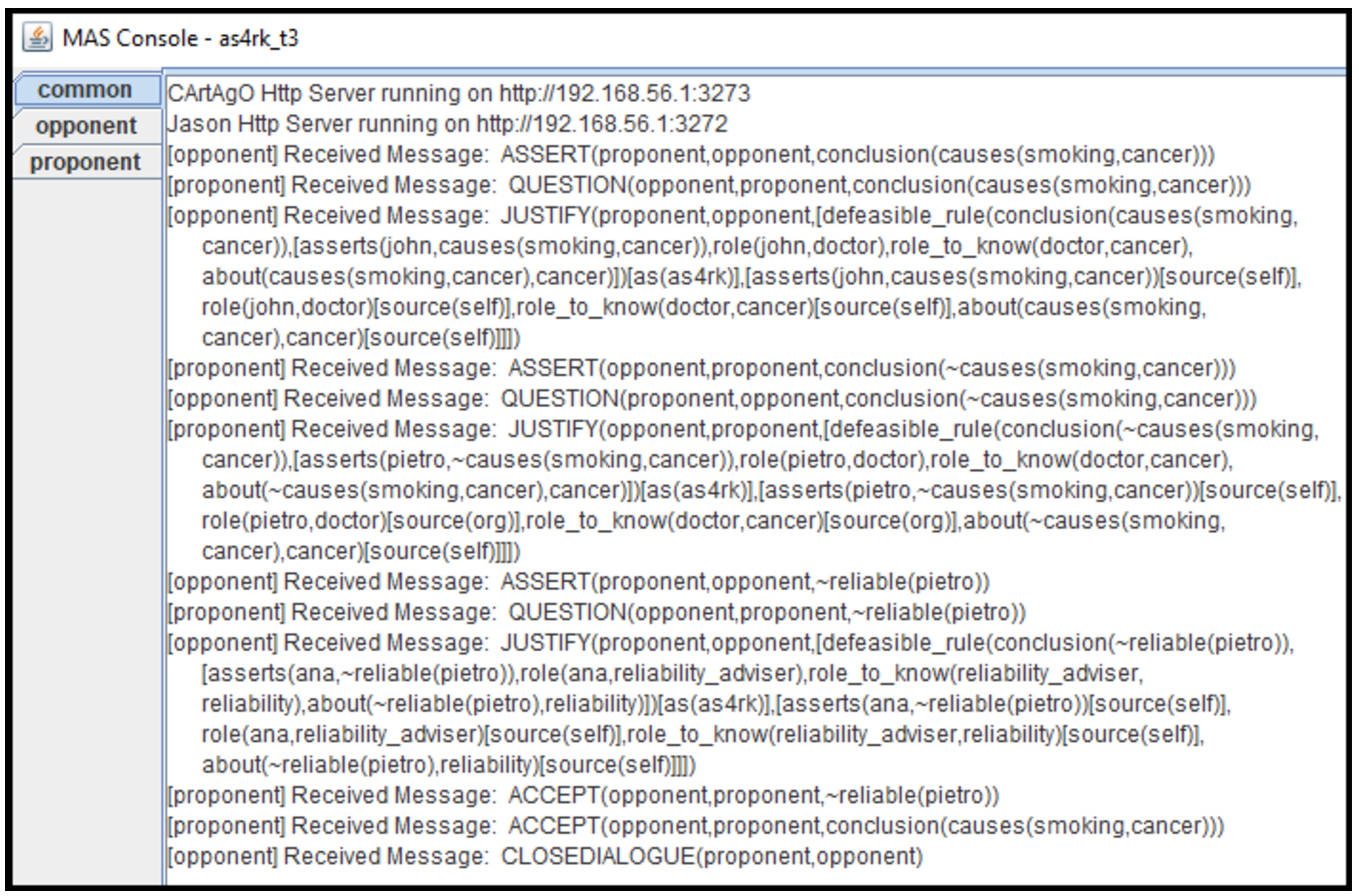

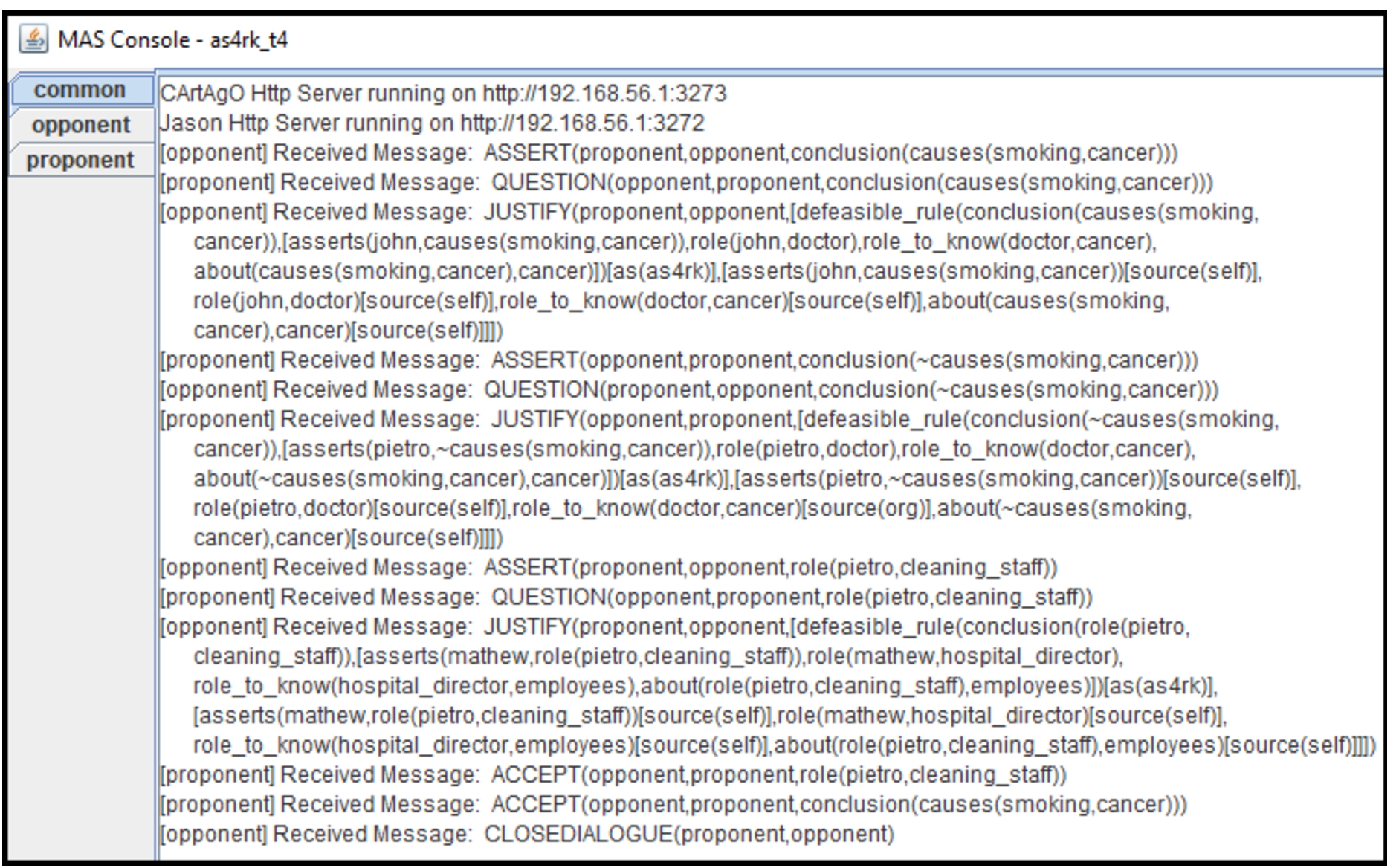

We ran some experiments to show different dialogues resulting from the protocol presented in Section 5.3 and implemented in our framework. In our experiments, two agents, named

In the case where agent

First example.

In the case where agent

Considering the

Second example.

These first two examples demonstrate dialogues that occur when we do not have chained arguments. Using chained arguments, agents may have more interesting dialogues. For example, continuing our example from Section 4.1, imagine that the

Third example.

Fourth example.

The dialogue shown in Fig. 7 demonstrates an example in which agents are able to argue about the critical questions pointed out by the argumentation scheme used to instantiate the arguments used during the dialogues. Besides, agents are also able to argue about the premises of arguments. For example, imagine that the

There are some interesting properties of our approach that are important for the development of multi-agent systems when applying our argumentation framework. One important property is that our approach is

Our approach keeps the essence of argumentation schemes discussed by Walton [89] into the knowledge representation, reasoning, and dialogues, while also keeping the knowledge engineering of argumentation schemes as straightforward as possible. To model an argumentation scheme into our approach, engineers only need to think about the premises, conclusion, and critical question that will implement that reasoning pattern, representing such information using first-order formulas and linking them by a defeasible inference rule (premises and conclusion) and labels (inference rule and associated critical questions). After that, in case there are critical questions or premises resulting from other argumentation schemes (if the application requires nested argumentation schemes), they only need to model those argumentation schemes in the same way, matching the conclusion of that argumentation scheme with the corresponding premise or critical question. When reasoning, agents are going to evaluate arguments that are instances of those argumentation schemes, and being aware of the reasoning pattern being used to instantiate a particular argument, agents are able to link the critical questions associated with it, bringing to light that implicit information used to construct the argument, so properly evaluating its validity. Most importantly, during dialogues, agents will communicate arguments similar to how humans do. Also, by identifying the reasoning pattern used by others when instantiating an argument, agents will have a complete understanding of all information used by the speaker to evaluate the validity of the argument, including the implicit information pointed by the critical questions. It is very different from including those critical questions as additional premises into the arguments: in our approach they need not be communicated by agents, which reflects rationality and intelligence as observed in human dialogues, also reflecting some of Grice’s maxims [29]. 
Also, it is fundamentally different from modelling critical questions as undercutting arguments, in which the receiver of an argument will only be able to evaluate those critical questions when aware of such arguments (which would typically not be provided by a speaker). Providing the argumentation scheme used to instantiate an argument would be natural for many types of dialogues and in various scenarios such as legal argumentation, for example.
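The modelling steps described above (premises, conclusion, critical questions, and a label that ties the inference rule to its critical questions) can be illustrated with a minimal Python sketch. It is not the Jason implementation discussed in this paper: the class `Scheme`, the function `individually_acceptable`, the scheme `ROLE_TO_KNOW`, and all predicate names are hypothetical, and the acceptance test assumes, for illustration only, that an instance is acceptable when every premise and every critical question holds in the agent's beliefs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scheme:
    """An argumentation scheme: a labelled defeasible rule plus critical questions."""
    label: str                  # links the inference rule to its critical questions
    premises: tuple             # formula templates with {variables}
    conclusion: str             # formula template for the conclusion
    critical_questions: tuple   # templates kept implicit, i.e. not communicated

    def instantiate(self, **bindings):
        """Ground every template with the given variable bindings."""
        def fill(template):
            return template.format(**bindings)
        return {
            "scheme": self.label,
            "premises": [fill(p) for p in self.premises],
            "conclusion": fill(self.conclusion),
            "critical_questions": [fill(q) for q in self.critical_questions],
        }


# Hypothetical scheme used only for illustration.
ROLE_TO_KNOW = Scheme(
    label="role_to_know",
    premises=(
        "role({agent},{role})",
        "role_to_know({role},{domain})",
        "asserts({agent},{claim})",
        "about({claim},{domain})",
    ),
    conclusion="{claim}",
    critical_questions=("reliable({agent})",),
)


def individually_acceptable(argument, beliefs):
    """Assumed test: all premises and all critical questions hold in `beliefs`."""
    premises_hold = all(p in beliefs for p in argument["premises"])
    questions_hold = all(q in beliefs for q in argument["critical_questions"])
    return premises_hold and questions_hold


if __name__ == "__main__":
    arg = ROLE_TO_KNOW.instantiate(
        agent="john", role="doctor", domain="cancer",
        claim="causes(smoking,cancer)",
    )
    beliefs = set(arg["premises"])
    print(individually_acceptable(arg, beliefs))                       # question unanswered
    print(individually_acceptable(arg, beliefs | {"reliable(john)"}))  # question answered
```

Because the label travels with the argument, a receiver that knows the scheme can recover the critical questions on its own, so they never need to appear among the communicated premises.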

Our approach takes into consideration the role of critical questions in argumentation schemes, covering all the possible roles of critical questions pointed out in [84], i.e., critical questions that: (i) criticise premises, as shown in our examples when criticising the role of an agent; (ii) point out exceptional situations in which the scheme could not be used, as shown in our examples when criticising the reliability of an agent; and (iii) represent conditions for the scheme's use, given that we are able to model context-dependent argumentation schemes including critical questions of the type: “Is C (the current context) the right context?”, representing it as

Using this computational representation for argumentation schemes combined with the consideration of all roles of the critical questions, we provide a refined and modular argumentation-based reasoning mechanism, in which agents first instantiate and evaluate arguments individually, according to Definition 3, and then use those acceptable instances of argumentation schemes to check which ones are collectively acceptable given a particular argumentation semantics, according to Definition 6. To the best of our knowledge, no other work has proposed argumentation-based reasoning using a general structure for argumentation schemes with implicit critical questions. Also, we provided an empirical evaluation, showing how our (open source) implementation performs according to different levels of specificity for argumentation schemes (single and chained argumentation schemes, with varying numbers of critical questions and premises). Those results might guide the knowledge engineering process of modelling argumentation schemes according to the application needs.
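The two-stage reasoning just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the individual test is passed in as an arbitrary predicate (`individually_ok`, hypothetical), attacks are given as explicit pairs, and grounded semantics is chosen merely as one example of "a particular argumentation semantics"; the function names are not taken from our implementation.

```python
def grounded_extension(arguments, attacks):
    """Least fixed point of the characteristic function (grounded semantics)."""
    attackers = {a: {x for (x, y) in attacks if y == a} for a in arguments}
    extension = set()
    while True:
        # An argument is defended if each of its attackers is itself
        # attacked by some argument already in the extension.
        defended = {
            a for a in arguments
            if all(any((d, b) in attacks for d in extension) for b in attackers[a])
        }
        if defended == extension:
            return extension
        extension = defended


def collectively_acceptable(candidates, individually_ok, attacks):
    """Stage 1: keep individually acceptable arguments; stage 2: apply the semantics."""
    kept = {a for a in candidates if individually_ok(a)}
    kept_attacks = {(x, y) for (x, y) in attacks if x in kept and y in kept}
    return grounded_extension(kept, kept_attacks)


if __name__ == "__main__":
    args = {"a", "b", "c"}
    atts = {("a", "b"), ("b", "c")}
    # Every argument passes the individual test: a defends c against b.
    print(sorted(collectively_acceptable(args, lambda x: True, atts)))
    # Argument a fails its individual test, so b is no longer attacked.
    print(sorted(collectively_acceptable(args, lambda x: x != "a", atts)))
```

Separating the stages keeps the design modular: an argument that fails its own critical questions is discarded before conflicts are resolved, so it cannot defeat otherwise acceptable arguments.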

Further, our framework has important properties regarding argumentation-based dialogues: (i) first, we allow agents to communicate argumentation schemes when necessary, solving the problem of scheme awareness as discussed by Wells [91]. Thus, when an agent receives an argument and is not able to identify which argumentation scheme has been used to instantiate that argument (and consequently is not able to evaluate that argument individually), the agent can ask other agents to inform which argumentation schemes have been used; (ii) second, when engineering multi-agent systems, our approach may guide the modelling of argumentation-based protocols, in regards to the purpose of such dialogues and supporting agents with different levels of bounded rationality; (iii) third, when considering (deep) disagreements among the participants, our approach allows agents to engage in subdialogues concerning the critical questions related to the argumentation schemes used to instantiate arguments, in the fashion of a dialogue-subdialogue structure. Although this is part of our ongoing research efforts, note that it means agents possibly entering into a subdialogue about some implicit information used in that argument, as shown in the dialogue in Fig. 7, which is a feature that may be regarded as intelligent behaviour for autonomous agents.

Finally, our work provides interesting directions for the development of Explainable AI [30, 31] and the interaction between humans and agents in the context of Hybrid Intelligence [1]. When modelling argumentation schemes to this end (explainability), agents are directly able to create, evaluate, and communicate arguments providing explanations for one another or for humans [41]. Templates of the argumentation schemes can be used to provide a translation between the natural language and the computational representation of arguments, allowing for more sophisticated human-agent interactions [51], or even using chatbot technologies [20–22]. These research directions are part of our ongoing work.

Related work

Related work exists using argumentation schemes in multi-agent systems [28, 34, 46, 57, 79, 81], all of which concerns the modelling and use of one (two in [46]) argumentation scheme that covers the application needs. However, none of those approaches proposes a general representation of argumentation schemes in agent-oriented programming languages.

In [71], the authors described a formal account of argumentation schemes; however, such a formal representation does not directly give agents a mechanism either for reasoning with schemes or for constructing arguments using schemes. That work focused on the integration of the ARAUCARIA [70] tool (used to specify argumentation schemes) and agent platforms. Although we took inspiration from [71], we proposed a general structure for argumentation schemes in agent-oriented programming languages, which directly enables agents to use such a representation for reasoning and communication.

In [84], the authors described a method to investigate argumentation schemes, which consists of: (i) determining the relevant types of sentences; (ii) determining the argumentation schemes; (iii) determining the exceptions blocking the use of the argumentation schemes; (iv) determining the conditions for the use of the argumentation scheme. We took inspiration from [84], using the desiderata for computational models of argumentation schemes, and [89], as a guide to propose a general structure for argumentation schemes, also considering all roles that critical questions could play.

In [15], the authors introduce a proposal to represent and share arguments, called the Argument Interchange Format (AIF), which represents a standard “abstract model” established by researchers across the fields of argumentation, artificial intelligence, and multi-agent systems. As described in [15], one of the major barriers to the development and practical deployment of an argumentation system is the lack of shared, agreed notation for argumentation and arguments. AIF aims to establish the following principles: (i) a machine-readable syntax for argumentation schemes; (ii) an explicit and machine-processable semantics for argumentation schemes; (iii) a unified abstract model with multiple reifications; (iv) core concepts within multiple extensions. AIF has at its core arguments and argument networks, communication (locutions and protocols), and context (the environment in which argumentation takes place). The AIF has been extended to capture dialogic argumentation in [72]. Furthermore, in [66], the authors also extend AIF to incorporate the representation of argumentation schemes by Walton [89]. Thus, critical questions are considered through a particular ontological relation named

In [93], the authors classify different levels of representation for argumentation schemes found in the literature, namely: (i) atomic level; (ii) functional roles and typed propositional functions; (iii) functional roles and instantiated predicates; (iv) labelled roles and strings; and (v) canonical sentences. Thus, the authors in [93] propose a functional language for computational analysis of argumentation schemes, so that different argumentation schemes can be compared. Although our aim is not to investigate the relationship between different argumentation schemes but to represent argumentation schemes in agent-oriented programming languages in order for the agents to be directly able to manipulate them, we took some inspiration from [93], inheriting the most refined and detailed representation for argumentation schemes given by (ii).

In [91], the authors describe how argumentation schemes could be exploited in dialogue games, introducing the idea of “scheme awareness”, providing not only a guideline for the development of new dialogue games using argumentation schemes but also a mechanism to extend dialogue-game frameworks to account for scheme awareness. They claim that current approaches to dialogue games can be categorised into three levels: (i) games unable to utilise argumentation schemes; (ii) games able to utilise a single scheme; and (iii) games able to utilise multiple/arbitrary schemes. Also, they claim that currently there are no approaches to games at level (iii). Whilst our work focuses on how agents represent, manipulate, and instantiate arguments from an internal representation of argumentation schemes to carry out argumentation-based reasoning, our approach forms the basis for argumentation-based dialogues using multiple argumentation schemes, i.e., as in (iii), given that agents can reason over any number of argumentation schemes, communicate those arguments, and communicate the argumentation schemes used to instantiate those arguments when necessary.

In [33], the authors present an approach for structured argumentation in multi-agent system dialogues. The authors claim that the use of argumentation in inter-agent dialogues may be beneficial to the agents. Also, they state that existing work on the experimental evaluation of the benefits of argumentation in agent dialogues makes use of very simple models of argumentation, in which arguments have little or no structure. We follow some directions pointed out in [33], defining a complete structure for arguments based on argumentation schemes, and experimentally evaluating our approach for argumentation-based dialogues.

The idea of labelling arguments with meta-information used in this paper comes from Gabbay’s work [24], further developed by other authors in [13, 14, 16, 45, 56]. While all that work focuses on modelling an argumentation framework in which the labels play an important role in the inference mechanism (mostly propagating strength of premises to the conclusions of arguments), here we propose a more modest use in which labels are used to make reference to argumentation schemes used to instantiate those arguments, pointing out the critical questions related to those instances of arguments.

In [41], the authors explore two important contributions of Walton’s work to AI (particularly in the design of autonomous software agents able to reason and argue with one another), namely argumentation schemes and dialogue protocols, describing how they may apply to current research on Explainable AI by automated decision-making systems. The authors also mention an example of how argumentation schemes could be chained, not only regarding their premises and conclusion but also regarding the critical questions, in which the answer of a critical question could be the conclusion of an argumentation scheme. The work presented by [41] inspired us to develop our argumentation-scheme-centred framework, keeping the essence of argumentation schemes presented by Walton’s work. Our approach moves towards the directions pointed out in [41] for using argumentation schemes in multi-agent systems, which also can be used to support explainability. Some initial steps towards this direction are found in [51].

In [6, 64], the authors present a formal account of legal reasoning, which can be seen as moving from evidence to facts, from facts to factors, and from factors to legal consequences. Their previous work, the CATO system presented in [2] and [8], implemented a single step of argumentation, concerning the last phase for legal reasoning, i.e., moving from factors to legal cases. Thus, they extend the CATO system to bring the assignment of factors within the scope of the system, i.e., moving from facts to factors, and so open this aspect to explicit argumentation [6]. The authors propose CATO-style argumentation schemes, in which schemes and undercutting attacks associated with them are formalised as defeasible inference rules within the ASPIC+ framework [42]. Unlike our approach, they use CATO-style argumentation schemes. Argumentation schemes correspond to defeasible inference rules in ASPIC+, in which premises can be axioms (indisputable facts) or the conclusion of another argumentation scheme. Attacks are captured by defining undercutting CATO-style argumentation schemes, which allow instantiating arguments against the application of another CATO-style argumentation scheme. Undercutting arguments may also have undercutting arguments against their application. The work in [6, 64] moves in a similar direction, which considers first using argumentation schemes to instantiate arguments (for and against deciding for a plaintiff, in their case), rebutting each other, and later solving those conflicts using argument graphs (with preference, in their case). Their formalism allows using nested argumentation schemes, similar to our approach. However, while undercutting schemes enable capturing the critical questions related to an argumentation scheme, their approach requires using nested argumentation schemes to model critical questions.
That is, undercutting schemes allow agents to look for arguments concluding that the previous scheme does not apply in that particular case, implementing one particular role for critical questions [84]. Also, the approach in [64] may require more effort from the knowledge engineering point of view, in which modelling critical questions requires additional argumentation schemes.

Conclusion

In this paper, we have presented an argumentation framework developed on top of an agent-oriented programming language, in which argumentation schemes are at the core of the framework.

We first proposed a general structure for argumentation schemes represented in agent-oriented programming languages, in which argumentation schemes of different levels of specificity can be modelled, allowing the development of applications that use argumentation schemes with and without implicit critical questions, as well as using single or chained argumentation schemes.

We then presented an argumentation-based reasoning mechanism, in which agents consider those modelled argumentation schemes in order to construct and define the acceptability of arguments. In order to evaluate our framework in regards to argumentation-based reasoning, we implemented our framework and ran some experiments to show the performance of agents that follow our approach, considering different levels of specificity of argumentation schemes, i.e., using single and chained argumentation schemes. Our results confirm our initial hypothesis that using argumentation schemes without chained arguments is computationally more efficient than using chained arguments. These results reflect on the development of intelligent agents with different levels of bounded rationality, and also might guide knowledge engineers in the process of modelling argumentation schemes. While using a single argumentation scheme has been sufficient for the development of many multi-agent applications using argumentation [28, 34, 57, 79, 81], there are application domains in which a more complex reasoning process is required, for example, [46, 64], and our implementation supports both approaches.

We also presented an approach for argumentation-based dialogues using argumentation schemes, defining a protocol for argumentation-based dialogues that combines deliberation and information-seeking dialogues. We implemented that protocol for Jason agents [12], and showed different lines of argumentation using that protocol. Not only did we validate our framework through those examples, but we also provided a solution for the problem of scheme awareness described in [91].

Finally, we discussed how our work contributes to the development of the area of argumentation in multi-agent systems. We also discussed how our work moves towards the practical use of argumentation as a technique for explainable AI [30, 31]. In future work, we intend to implement applications with human-agent interaction using argumentation schemes. We intend to use argumentation schemes represented in both natural language and the formal representation presented in this work, as in [51], so that agents can use the appropriate representation depending on whether they are communicating with software agents or with human beings.

Acknowledgements

The authors dedicate this work to the memory of Professor Douglas Walton. Alison had the pleasure to meet Professor Douglas Walton in person during COMMA 2018. During one of Alison’s presentations during that conference, Professor Walton asked a question, concluding his speech with a compliment, saying that our approach to knowledge representation for argumentation schemes was

Semantics for speech-acts using argumentation schemes

In this appendix, we formalise the operational semantics for the speech acts described above. We define the semantics of speech acts for argumentation-based dialogues in AgentSpeak [68] (and in particular the Jason dialect [12]) using a widely-known method for giving operational semantics to programming languages [61]. Although we use AgentSpeak, the formal semantics makes reference to general components of the BDI architecture; therefore, any language based on concepts such as beliefs, intentions, etc., could also benefit from our formalisation.

The operational semantics is given by a set of inference rules that define a transition relation between agent configurations. We use here only the components that are needed to define our semantics.

In the interest of readability, we adopt the following notational conventions in our semantic rules:

If We write: (i) We use a function