Abstract

Argumentation schemes have played a key role in our research projects on computational models of natural argument over the last decade. The catalogue of schemes in Walton, Reed and Macagno’s 2008 book,

Keywords

Introduction

Argumentation (or argument) schemes [60] have played a key role in our research projects on computational models of natural argument over the last decade. Argumentation schemes have been described as patterns of acceptable, presumptive arguments in law, science and everyday conversation. The Walton et al. catalogue of schemes served as our starting point for analysis of the naturally occurring [...] (Footnote 1: “Naturally occurring”, as opposed to arguments created by research participants or by students in response to a school assignment.)

Research projects involving argument schemes

Among computational researchers, the main interest in argumentation schemes has been for use in argument mining by machine learning (ML) methods [41, 56]. Feng and Hirst [13] proposed that after an argument’s premises and conclusion had been identified automatically, recognition of its argumentation scheme could be used to infer implicit elements of the arguments (enthymemes). They created ML classifiers to recognize several argument schemes of the Walton et al. catalogue in the Araucaria corpus, which contains annotated premises and conclusions of arguments from newspaper articles and court cases [52]. Lawrence and Reed [40] experimented with a corpus of arguments extracted from a 19th century philosophy text, in which proposition types had been identified by ML. Then groups of propositions that could belong to the same argumentation scheme (several schemes from the Walton et al. catalogue) were recognized as arguments, where missing elements of a scheme were assumed to indicate enthymemes.

In contrast, a primary goal of our research has been to learn more about written arguments themselves in various contemporary fields. Our approach has been to manually analyze semantics, discourse structure, argumentation, and rhetoric [...] (Footnote 2: However, we did not analyze rhetoric systematically until we read the 2017 8(3) special issue of Argument and Computation on rhetoric.)

This paper is organized as follows. First, the various projects are described, more or less chronologically, in sufficient detail to describe the methods, the argument schemes that were identified, and how they were used. Then a synthesis of the results is given with a discussion of open issues.

GenIE (Genetics Information Expression) assistant

The GenIE Assistant [32] was a prototype system for generation of the first draft of genetic counseling letters. It was implemented as a testbed for research on natural language generation of transparent biomedical argumentation. The system architecture included (1) a knowledge base (KB), a causal network representation of domain knowledge about genetic disorders and of information about a patient’s case; (2) a discourse grammar with genre-specific rules for creating an abstract representation (DPlan) of a letter to a client; (3) an argument generator which generated arguments in propositional form for claims in the DPlan; (4) an argument presenter which made changes in the DPlan for the sake of text coherence and transparency; and finally, (5) a linguistic realization component to render the DPlan as English text. The DPlan contained propositions about the patient’s case from the KB and was structured by text coherence relations of Rhetorical Structure Theory (RST) [43].

GenIE’s argument generator created arguments for claims in the DPlan using its argument schemes and information in the KB. The argument schemes and the format of the KB were informed by a corpus study of genetic counseling letters. (Footnote 3: Nine letters ranging in length from 446 to 1537 words on seven genetic conditions, representing the three main types of single-gene inheritance (recessive, dominant, new mutation), written by four genetics counselors from different institutions.) Although small in comparison to corpora used for machine learning, the corpus was representative of the genre and contained a variety of argument patterns. We also analyzed the different uses of probability statements in arguments in the corpus [15, 16], although this analysis was not incorporated into the final GenIE system.

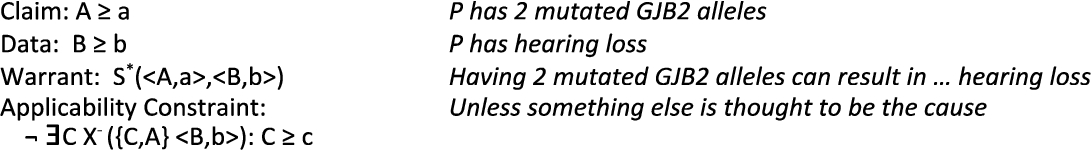

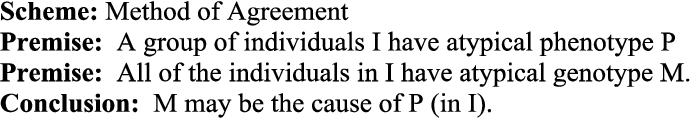

Rather than refer to domain content, the argument schemes were formulated at a higher level of abstraction in terms of qualitative network node variables and relations. For example, to paraphrase one of GenIE’s Effect to Cause schemes shown in Fig. 1, the claim that a node A in the KB was at or above a certain threshold is supported by the Data (premise) that a node B in the KB is at or above a certain threshold, and by the Warrant (premise) that there is a positive influence relation from A to B. Such a scheme could be used to generate an argument that a certain patient has a genotype of two mutated alleles of a GJB2 gene based on the data that he has hearing loss, and the warrant that this genotype can result in hearing loss. Note that, unlike the schemes in the Walton et al. catalogue [60], the GenIE schemes distinguished premises as Data or Warrant [57]. The Data premise represented patient-case-specific information (stored in nodes of the KB), while the Warrant represented general biomedical principles (represented as qualitative constraints in the KB). The Data/Warrant distinction played a role in the organization of the text (DPlan) and in decisions of the argument presenter. In the DPlan, the Warrant was represented as the satellite of an RST Background relation, whose nucleus was an RST Evidence relation; the satellite of the Evidence relation was the Data, and the nucleus was the Claim [17]. The argument presenter applied heuristics to the DPlan to make a letter more concise. One heuristic was if two adjacent arguments in the DPlan included the same warrant, the warrant in the second argument was replaced by an adverb such as ‘similarly’.
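The RST nesting and the presenter heuristic described above can be illustrated with a minimal sketch. The `Argument` class, the dictionary layout of the DPlan fragment, and the function names are assumptions made for illustration; they are not GenIE's actual data structures.

```python
from dataclasses import dataclass


@dataclass
class Argument:
    claim: str
    data: str
    warrant: str


def to_rst(arg):
    """Nest one argument as Background(nucleus=Evidence(...), satellite=Warrant)."""
    # Evidence relation: the Claim is the nucleus, the Data is the satellite.
    evidence = {"relation": "Evidence", "nucleus": arg.claim, "satellite": arg.data}
    # Background relation: the Evidence relation is the nucleus, the Warrant the satellite.
    return {"relation": "Background", "nucleus": evidence, "satellite": arg.warrant}


def abbreviate_repeated_warrants(args, marker="similarly"):
    """If two adjacent arguments share a warrant, replace the second warrant by an adverb."""
    result = []
    previous_warrant = None
    for arg in args:
        if previous_warrant is not None and arg.warrant == previous_warrant:
            result.append(Argument(arg.claim, arg.data, marker))
        else:
            result.append(arg)
        # Compare against the original warrant so that runs longer than two are also shortened.
        previous_warrant = arg.warrant
    return result
```

In this sketch the heuristic runs on a flat list of arguments before nesting; applying it directly to the nested plan would be an equally plausible design.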

Fig. 1. Simple effect to cause scheme in GenIE assistant (example in italics).

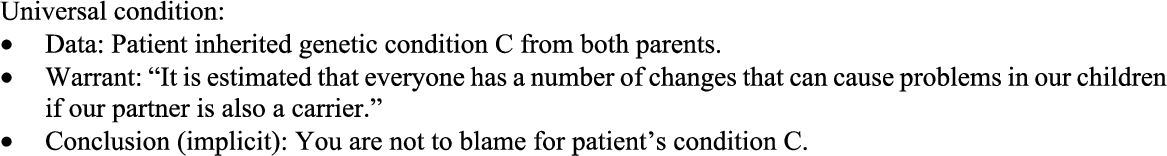

Fig. 2. Universal condition affective argument scheme.

GenIE’s schemes also included “applicability constraints”, which were used to determine the applicability of a scheme during the argument generation process. In Fig. 1, the Effect to Cause scheme included an applicability constraint that can be paraphrased as “there is no other node in the KB which has positive influence on B.” The applicability constraints were used as critical questions in an interactive version of GenIE.
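As a rough illustration of how such a scheme and its applicability constraint might be checked against a causal network, consider the following sketch. The representation of the KB as a dictionary of observed node values plus a set of positive-influence edges, the node names, and the thresholds are all assumptions; GenIE's actual KB format and scheme language are not reproduced here.

```python
from typing import Optional


def effect_to_cause(observed, influences, effect, effect_threshold, cause_threshold) -> Optional[dict]:
    """Instantiate a simplified Effect to Cause scheme, or return None if it does not apply."""
    # Data premise: the effect node B is observed at or above its threshold.
    if observed.get(effect, 0) < effect_threshold:
        return None
    # Candidate causes: nodes A with a positive influence relation A -> B.
    causes = sorted({a for (a, b) in influences if b == effect})
    # Applicability constraint: no other node in the KB has positive influence on B.
    if len(causes) != 1:
        return None
    cause = causes[0]
    return {
        "claim": f"{cause} >= {cause_threshold}",
        "data": f"{effect} >= {effect_threshold}",
        "warrant": f"{cause} positively influences {effect}",
    }


# Hypothetical KB fragment for the hearing loss example.
influences = {("GJB2_mutated_alleles", "hearing_loss")}
observed = {"hearing_loss": 1}
argument = effect_to_cause(observed, influences, "hearing_loss", 1, 2)
print(argument)
```

If a second node with positive influence on the effect were added to `influences`, the function would return `None`, which corresponds to the constraint blocking the scheme (or, in an interactive setting, to a critical question being raised).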

In subsequent work we identified several affective argument schemes in the corpus for mitigating the client’s possible negative reaction to information presented in the letters [33]. For example, see Fig. 2. If the partially generated letter contained the information in Data, then the Warrant would be inserted into the letter. The phrasing of the sentence expressing the Warrant was derived from the corpus. The Conclusion of the scheme was not explicitly stated in the generated letter, consistent with use of this scheme in the corpus.

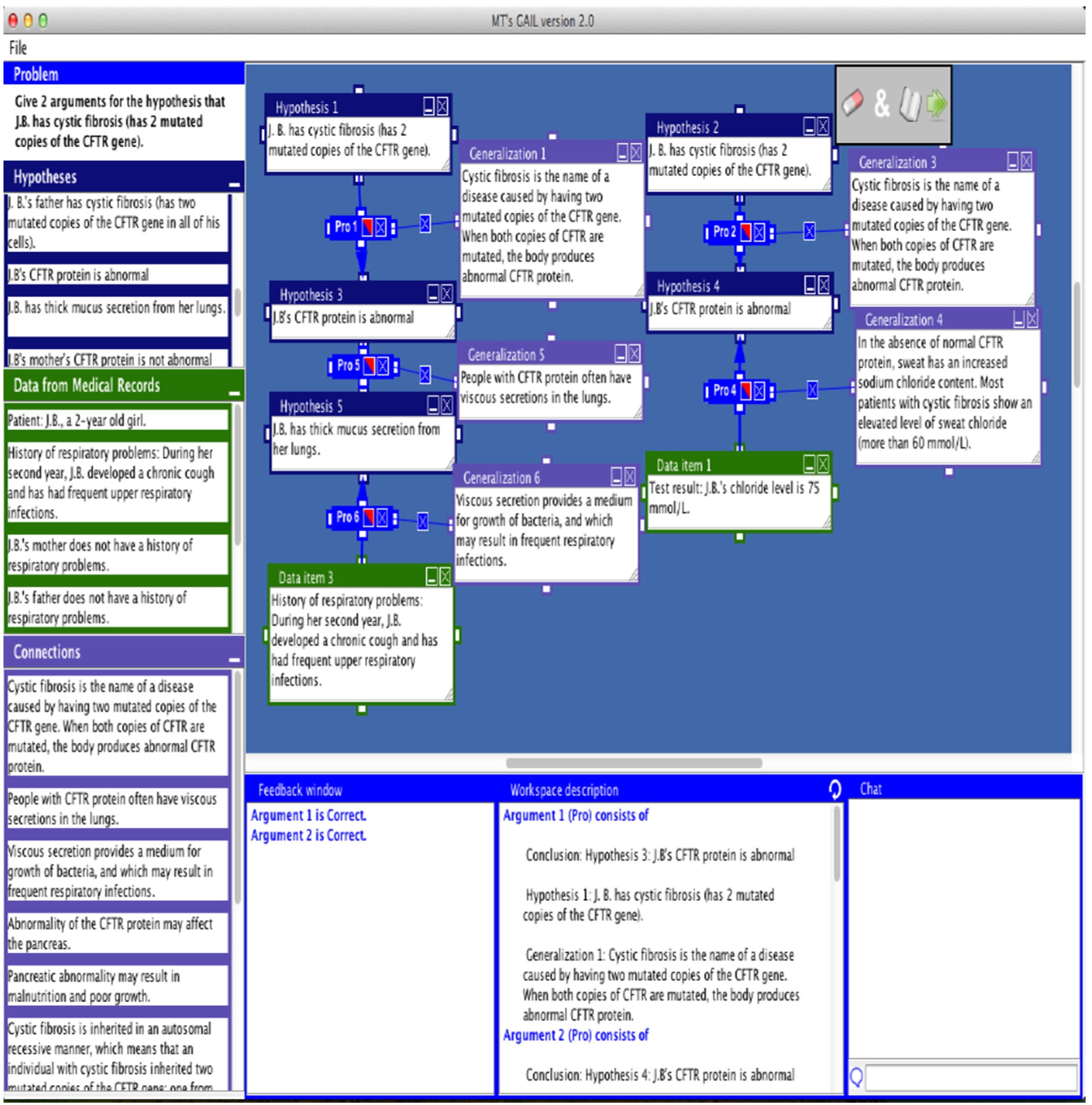

The argument generator and schemes developed for GenIE were repurposed as the core of an educational argument modeling system for biology students, GAIL [22, 34]. GAIL’s user interface presented the student with information to use in constructing graphical arguments: the Problem (to give an argument for a certain claim), the Data (information from a fictitious patient’s medical record), several possible Hypotheses, and Connections (possibly relevant principles of genetics, i.e. warrants). See a screenshot in Fig. 3. For example, a Problem was “Give two arguments for the hypothesis that the patient, J. B., has cystic fibrosis (has two mutated copies of the CFTR gene).” The Data about the patient included items such as “History of respiratory problems. During her second year, J.B. developed a chronic cough and has frequent upper respiratory infections”, and “J.B.’s mother does not have a history of respiratory infections”. The Connections included items such as “When both copies of CFTR are mutated, the body produces abnormal CFTR protein”, “Abnormality of the CFTR protein may affect the pancreas”, “People with CFTR protein often have viscous secretions in the lungs”, etc. To construct an argument, the learner could select appropriate items from the Data and Connections lists, drag them into the argument diagramming area, and connect them into a Toulmin-style (Claim-Data-Warrant) diagram of an argument (or chain of arguments as shown in Fig. 3).

Fig. 3. GAIL screenshot showing two arguments. (Main claim at top of each chain; warrants at right-angles to chain.)

An authoring tool enabled instructors to create a knowledge base (KB) in the same format as used in GenIE to represent domain knowledge and information about a patient’s case. The authoring tool also created a mapping from the English text to be presented to the student on the screen to the underlying KB. After a student constructed graphical arguments for a claim, GAIL mapped the student’s arguments to their internal representations. Then GAIL used GenIE’s argument generator to create expert arguments for the problem. By comparing the student’s arguments to GenIE’s arguments, GAIL was able to generate feedback on the structure and content of the student’s arguments. Previous educational argument modeling systems required manual authoring of expert arguments in order to enable generation of feedback on content. Also, although not implemented in GAIL, it was noted that use of the critical questions of the argument schemes could support generation of additional feedback, such as “Can you make an argument for a diagnosis other than cystic fibrosis that explains the patient’s malnutrition as well as his frequent respiratory infections?”.
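The comparison step can be pictured with a small sketch. The source does not spell out GAIL's feedback algorithm, so the dictionary representation of an argument, the overlap-based choice of the closest expert argument, and the message wording below are assumptions for illustration only.

```python
def feedback(student, experts):
    """Compare a student's argument with generated expert arguments for the same claim."""
    same_claim = [e for e in experts if e["claim"] == student["claim"]]
    if not same_claim:
        return ["claim does not match any expert argument"]

    def overlap(expert):
        # Number of Data and Warrant items shared with the student's argument.
        return len(student["data"] & expert["data"]) + len(student["warrants"] & expert["warrants"])

    best = max(same_claim, key=overlap)  # closest expert argument
    messages = []
    for kind in ("data", "warrants"):
        for item in sorted(best[kind] - student[kind]):
            messages.append(f"missing {kind}: {item}")
        for item in sorted(student[kind] - best[kind]):
            messages.append(f"extra {kind}: {item}")
    return messages or ["matches an expert argument"]
```

Because the expert arguments are generated from the KB rather than authored by hand, the same comparison would apply unchanged to any new problem an instructor creates with the authoring tool.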

In this project we analyzed written arguments and persuasive visual devices in a five-page patient brochure published by a genetic testing company [18]. The goal of the brochure was to motivate patients to seek genetic testing from that company. We identified variants of causal and practical reasoning arguments and a specialization of fear appeal arguments based upon Protection Motivation Theory [49]. (Footnote 5: When not otherwise noted, in this paper scheme names with a lower-case initial refer to schemes in the Walton et al. catalogue [60].)

After considering arguments addressed to the lay reader in the GenIE project, we investigated how argumentation is used in genetics research articles. Such articles are written by and for other scientists. Whereas the genetic counseling letters conveyed a simplified, accepted model of genetics to warrant the claims of the letters, the goal of scientific research is to discover new knowledge by rejecting or refining current models or proposing a new model. Thus, the argument schemes used in GenIE were not sufficient to model scientific research.

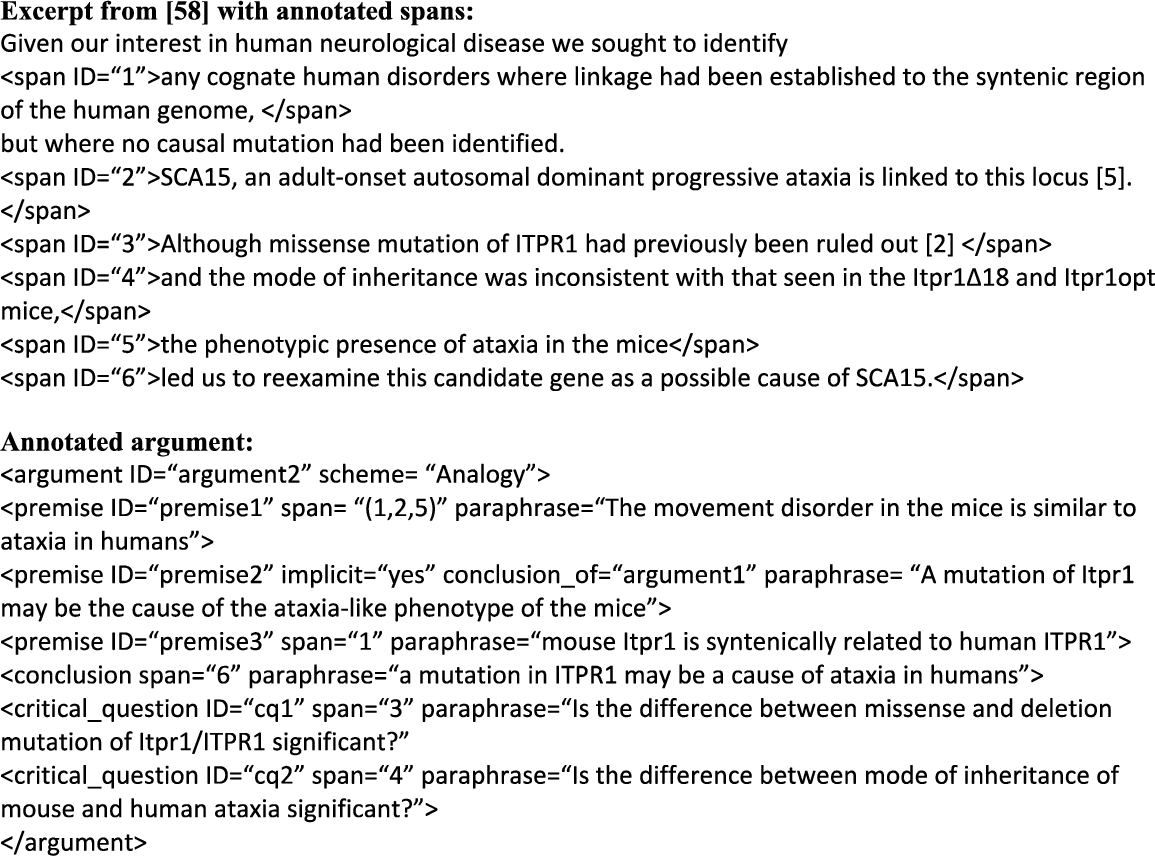

As a step towards creating an argument-annotated corpus of genetics research articles [19], we analyzed some of the arguments in a representative article [55]. The analysis distinguished premises as Data and Warrant. It found that some missing (implicit) Warrants were necessary for argument acceptability. (Footnote 6: Our analysis of the arguments was later confirmed by a domain expert.) [...] Also, in these definitions the premises did not distinguish Data and Warrant.

A pilot study [21] on using our proposed scheme definitions to analyze arguments in genetics research articles found that undergraduate biology students had considerable difficulty applying the schemes correctly. (Footnote 8: Factors that may have contributed to this difficulty include lack of motivation (the students did not volunteer for the task, which was given to them during their regular laboratory meeting) and lack of experience reading research articles.)

Next, in order to create a freely available argument-annotated corpus, we selected a genetics research article [58] from the CRAFT corpus (CRAFT 17590087) to be annotated. (Footnote 9: Articles in the CRAFT corpus [5, 59] may be redistributed. The corpus includes annotations of concepts and linguistic structure.)

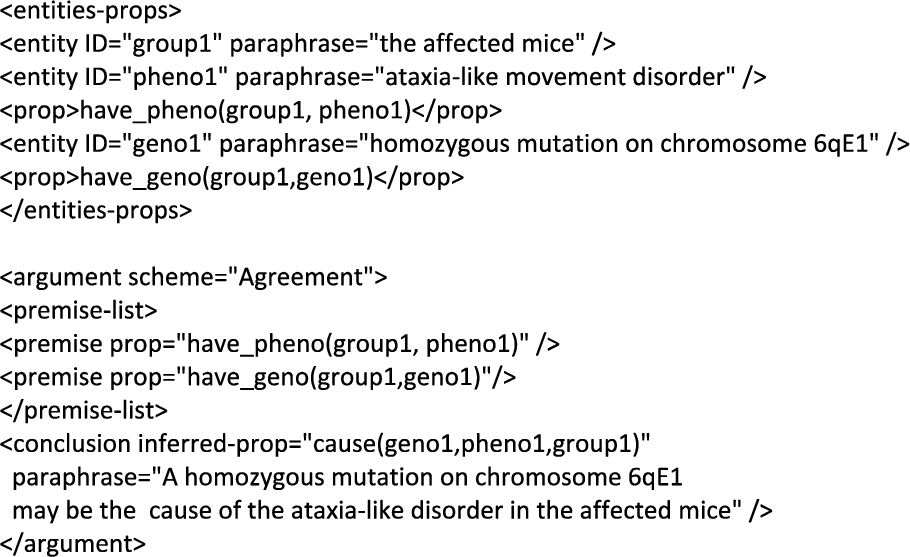

Fig. 4. Example of proposed annotation of article in [20].

Fig. 5. Genetics-specific method of agreement argument scheme.

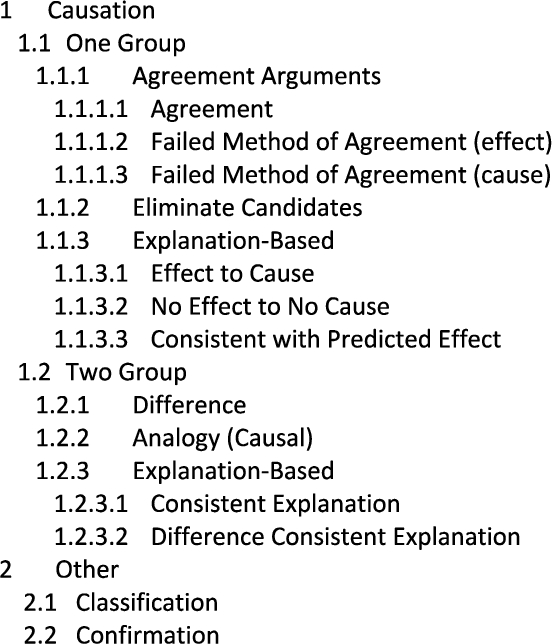

Fig. 6. Taxonomy of argument schemes in genetics research articles from annotation guide.

Fig. 7. Prolog rule for extracting argument based on method of agreement.

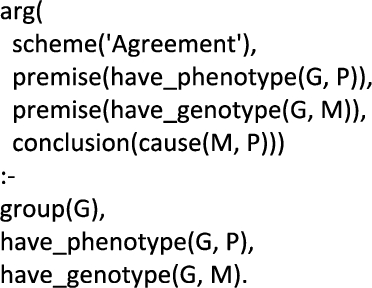

Note that the definitions of argument schemes in [21] did not refer to genetics concepts. However, given the results of the pilot study with undergraduates, we wanted to make the task easier for future annotators. Thus we redefined the argument schemes in terms of a small set of domain concepts such as genotype and phenotype, e.g. as shown in Fig. 5. The revised annotation guidelines [...] (Footnote 10: Available at [...])

After revising the scheme definitions in terms of genetics concepts and relations, [...] (Footnote 11: Unfortunately we never received extramural funding to support creation of an argument-annotated corpus of genetics research articles. Thus, we refocused on the argument mining research described in the rest of this section.) Although the rule can be said to extract the arguments in the sense of finding arguments in a text, in another sense, it is generating arguments like the argument generator did in GenIE. This is different from the sense in which arguments are extracted by current machine learning approaches to argument mining. In fact, due to the flexibility of Prolog, the rules could be used to restrict the arguments that are returned by partially instantiating the rule, e.g., to find all arguments such that some genotype causes a certain phenotype.
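The idea of partially instantiating a rule can be approximated outside Prolog with optional arguments that act as unbound variables when omitted. The sketch below is not the rule from the paper: the predicate names `have_genotype`, `have_phenotype` and `cause`, the triple format of the extracted relations, and the simplified reading of the method of agreement (a group sharing a genotype also shares a phenotype) are assumptions made for illustration.

```python
def method_of_agreement(facts, genotype=None, phenotype=None):
    """Return arguments concluding that a genotype may cause a phenotype.

    `facts` holds (predicate, group, value) triples. Leaving `genotype` or
    `phenotype` as None plays the role of an unbound variable; supplying a
    value restricts the arguments that are returned.
    """
    arguments = []
    for pred_g, group_g, g in facts:
        if pred_g != "have_genotype":
            continue
        if genotype is not None and g != genotype:
            continue
        for pred_p, group_p, p in facts:
            # Both premises must be about the same group of individuals.
            if pred_p != "have_phenotype" or group_p != group_g:
                continue
            if phenotype is not None and p != phenotype:
                continue
            arguments.append({
                "premises": [(pred_g, group_g, g), (pred_p, group_p, p)],
                "conclusion": ("cause", g, p),
            })
    return arguments


# Hypothetical relations, as a relation extraction step might produce them.
facts = [
    ("have_genotype", "family_1", "mutation_in_gene_X"),
    ("have_phenotype", "family_1", "disease_Y"),
    ("have_phenotype", "family_2", "disease_Z"),
]
for argument in method_of_agreement(facts, phenotype="disease_Y"):
    print(argument["conclusion"])
```

Calling the function with no keyword arguments returns every argument licensed by the facts, which mirrors running the rule with all variables unbound.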

As described in [23], to make use of the rules to extract arguments, first, BioNLP tools [51] would be used to extract relations from a text for the predicates in the argument schemes, such as [...] (Footnote: The annotated article is available at [...])

Fig. 8. Annotation of some entities and relations, and an argument.

In [23] we suggested that in the future argument schemes might be acquired by inductive logic programming [48] from articles whose entities, relations, and arguments had been similarly annotated. We are currently experimenting with use of inductive logic programming to derive the argument schemes from the annotated article [27].

In our next project, we approached a very different domain from the biology-related domains of our previous work. We found that international relations arguments involve the beliefs, goals, appraisals, actions and plans of social actors such as countries, governments, and politicians. The goal of this project was to develop a prototype argument modeling tool, AVIZE (Argument Visualization and Evaluation), to assist in the construction and (human) evaluation of arguments in this domain, based upon evidence of varying plausibility collected from sources of varying reliability. In addition to diagramming tools, AVIZE came with a set of argument schemes that we identified by analysis of several articles written by international relations experts. (Footnote 15: The schemes were based on an in-depth analysis of a 33-paragraph article [62] and a reading of several others.)

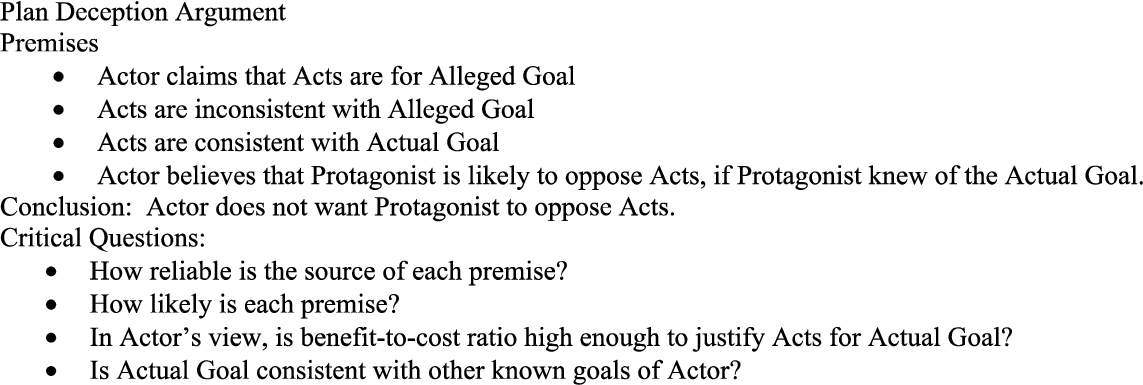

Fig. 9. Plan deception scheme in AVIZE.

We identified the following other schemes used to argue about an Actor’s intentions: Plan Distraction, Inferred Plan, Coercion, Increasing Boldness, Behavior Pattern, Inferred Positive Appraisal, Inferred Negative Appraisal. Like the Plan Deception scheme, these schemes are domain-specific but are related to AI plan recognition heuristics. In addition, we identified the following schemes for arguing in favor of a planned action of the protagonist: Practical Reasoning, Resist Coercion, and Avoid Negative Consequences. (Footnote 16: A kind of summary can be provided by listing the names of the schemes that were used from beginning to end of the Weinberger [62] article: Plan Distraction, Coercion, critical question of Coercion, Resist Coercion, Plan Deception, Inferred Plan, Coercion, Increasing Boldness, Coercion, Inferred Plan, Inferred Plan, Resist Coercion, Practical Reasoning, Avoid Negative Consequences, Avoid Negative Consequences, Practical Reasoning.)

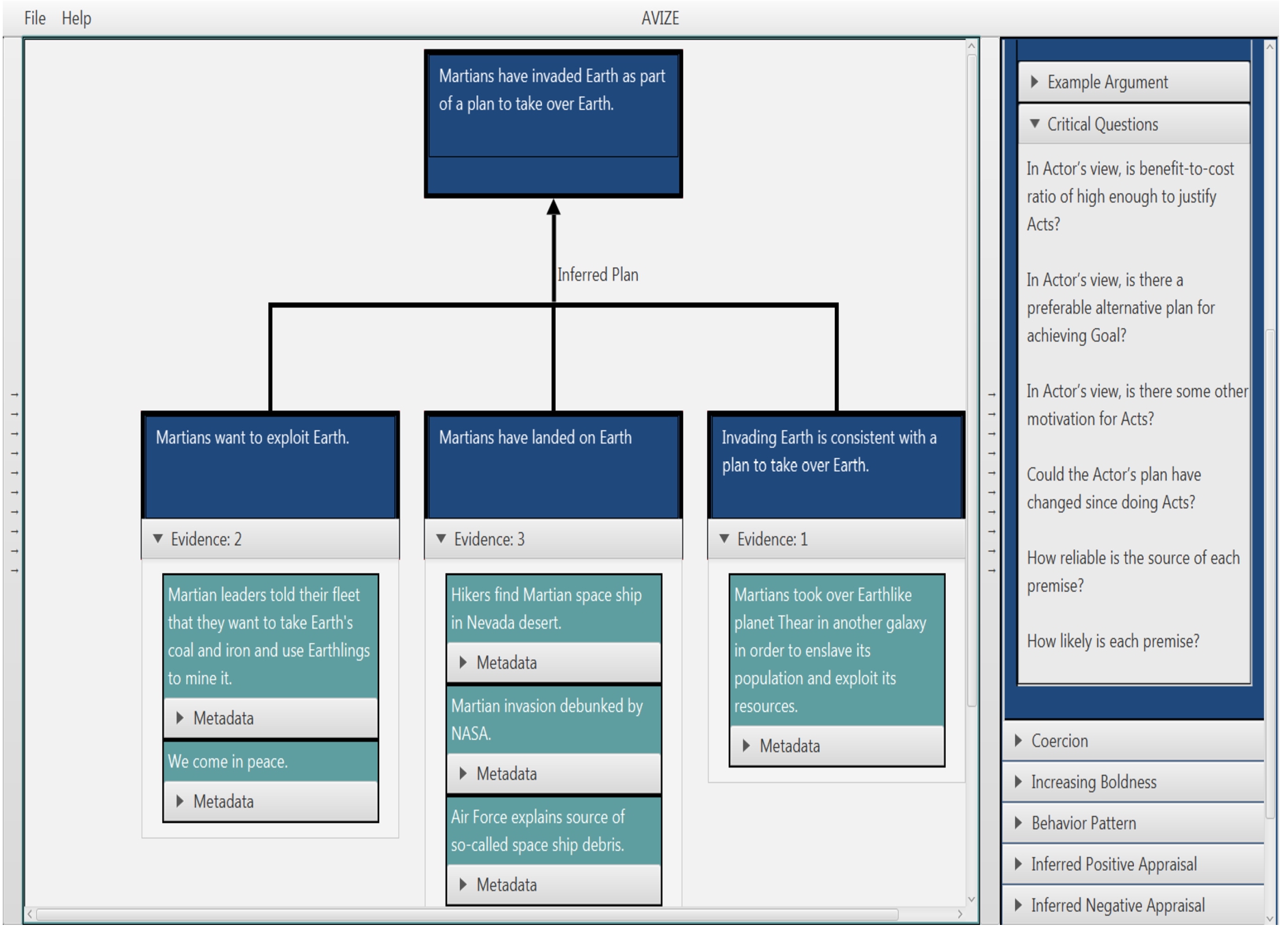

Fig. 10. AVIZE screenshot of inferred plan argument with evidence for each premise.

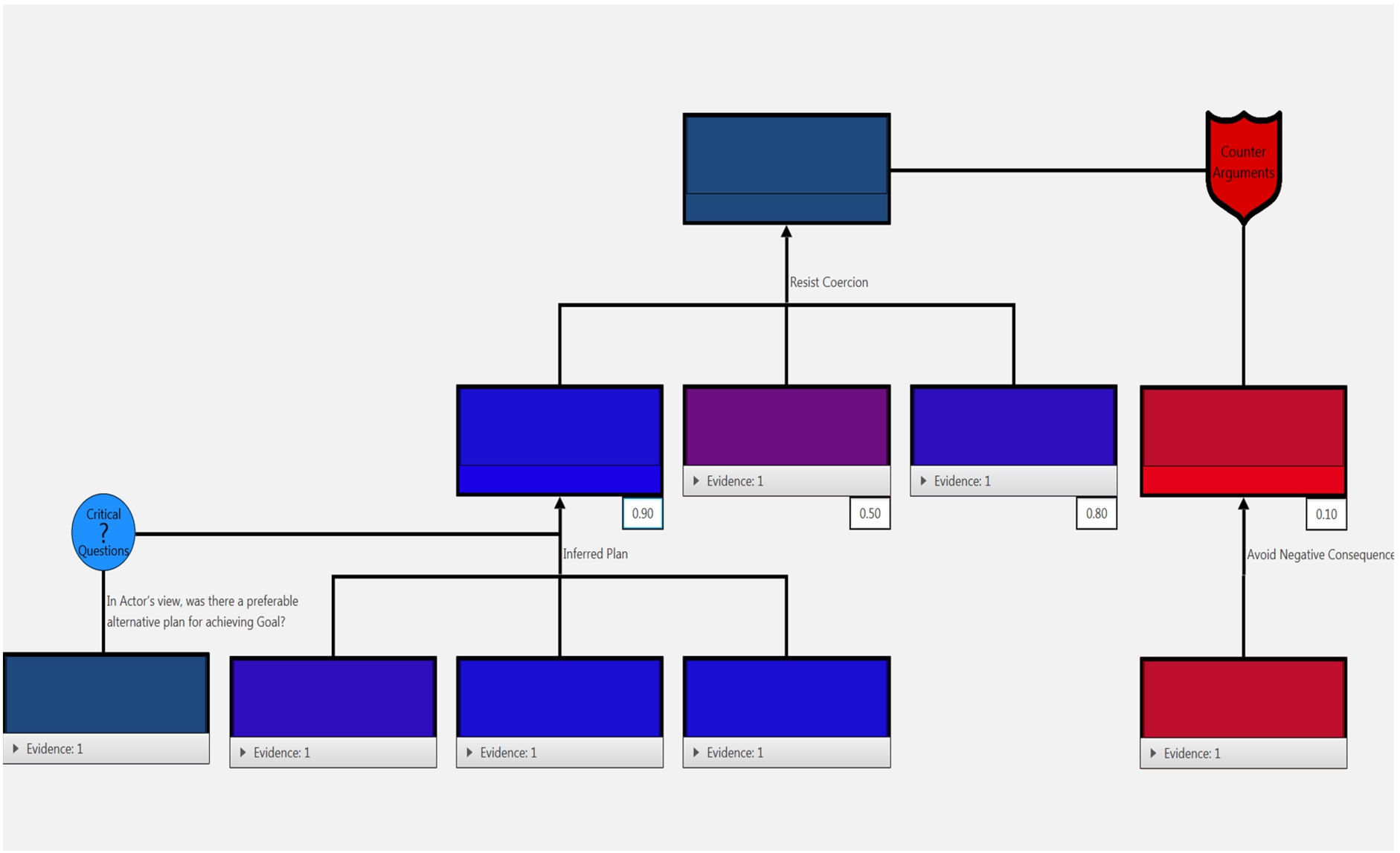

Fig. 11. AVIZE screenshot of arguments supporting and attacking a claim.

Challenges to arguments were represented in several ways in AVIZE. First, the user could attach both supporting and opposing pieces of data to the premise of an argument and decide which to credit. In this application, it was possible for sources to conflict and to have varying credibility. As shown in Fig. 10, for example, the premise that Martians have landed on Earth was supported by a news item that hikers had found a Martian space ship in the Nevada desert but challenged by two other items attached to that premise. Second, AVIZE enabled the user to attach counterarguments and provide answers to critical questions challenging a claim (Fig. 11). Also, Fig. 11 shows that users could color-code claims and premises to show their confidence in a premise or conclusion.

In our next project, we analyzed uses of rhetoric in a genre combining scientific and policy arguments in two journal articles in the domain of environmental science [38, 39]. The long-term goal was to build a rhetorically-annotated digital corpus for research on rhetorical persuasion in argumentation. The short-term goal was to inform the design of tools to help students analyze use of rhetoric in science policy arguments. The overarching arguments in the articles were instances of value-based practical reasoning (VBPR), including a variant (VBPR-Knowledge Precondition) which argues for the need to acquire information as a precondition for action. In addition to the main VBPR arguments, in one article we noticed use of enthymematic VBPR arguments in the introduction to signal the authors’ values and position on climate change. The articles also used two “rhetorical” argument strategies [45]: procatalepsis (“the rebuttal or refutation of anticipated arguments” (p. 241)) and prolepsis as presage (warning of environmental catastrophe as motivation for action).

The analysis of rhetorical figures in the two articles was based on descriptions of figures in [11, 35, 36]. We found instances of a variety of types in each class of rhetorical figure as defined in [35]: schemes (Footnote 17: Not to be confused with argumentation schemes.) [...]

In an AI Ethics [...] (Footnote 18: Winfield et al. [63, p. 510] define AI ethics or robot ethics as concerned with “how human developers, manufacturers and operators should behave in order to minimize the ethical harms that can arise from robots or AIs in society, either because of poor design, inappropriate application, or misuse”. They define machine ethics as concerned with “the question of how robots and AIs can themselves behave ethically.” Despite its name, our course addresses the latter question, especially “the (significant) technical problem of how to build an ethical machine.”)

Arkin [4] has done extensive research on design and implementation of explicit ethical agents for autonomous machines capable of lethal force, e.g., autonomous tanks and robot soldiers. In this highly codified domain, it is possible to implement military principles such as the Laws of War and Rules of Engagement, which are based on Just War Theory [61], rather than more abstract ethical approaches such as utilitarianism or deontological theories. These principles are encoded in Arkin’s system as constraints in propositional logic. After Arkin’s agent has proposed an action, the action is evaluated in accordance with these constraints by an “ethical governor”. Note that the action of an autonomous agent in Arkin’s system is limited to the action of firing (or not firing) on a target, with the only variation being in terms of choice of armament. Arkin states that the problem of accurate target identification, which involves subsymbolic reasoning, is outside of the scope of his research. (Footnote 19: We address this problem shortly in discussion of AIED’s critical questions.)

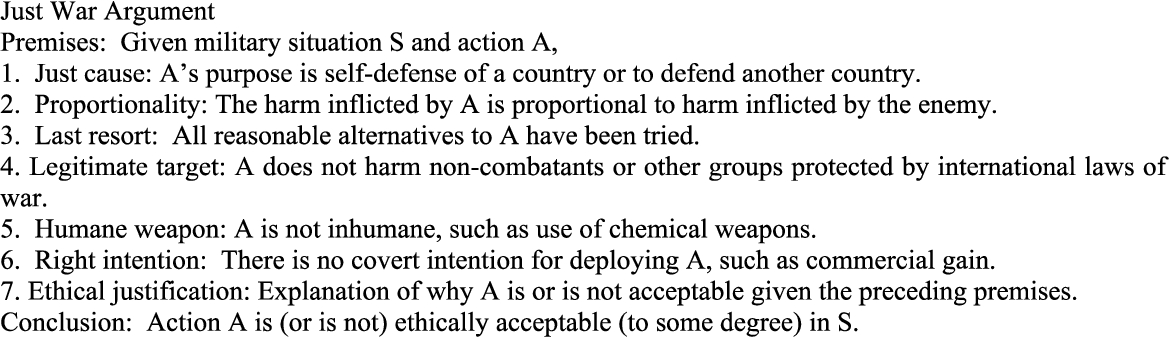

We defined an argument scheme (Fig. 12) which is more abstract than the specific military rules encoded in Arkin’s agents, yet which summarizes many of the key principles of international law and norms on just warfare [50]. To illustrate an application of this argument scheme to a fictitious case study, consider use of lethal force by Homelandia’s AI-controlled drone against a missile base in the country of Malevolentia that has been firing missiles across the border into Homelandia. The missiles have caused damage to several apartment buildings in Homelandia and killed several occupants. The Just cause premise is satisfied since the purpose of the action is defense of Homelandia. Suppose that all reasonable alternatives, such as proposing a cease fire to negotiate a truce, etc., have been exhausted, so the Last resort premise is satisfied as well. According to international norms, drone-fired missiles are not considered inhumane weapons, and Homelandia has no covert reason for attacking the Malevolentian missile base, respectively satisfying the Humane weapon and Right intention premises. However, suppose that it is not possible for Homelandia’s counterattack against the Malevolentian missile base to succeed without inflicting a very high number of civilian casualties since the missile base is located on the grounds of a hospital. In this case, the Proportionality and Legitimate target premises are not satisfied. Thus, there is conflicting support and opposition from the premises. In the Ethical justification, one may provide additional explanation as to why the action is ethically acceptable to some degree (or not).
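The Homelandia example can be restated as a small data sketch in which each premise either supports or opposes the conclusion. The six premise names are those mentioned in the example; the boolean encoding and the `summarize` helper are assumptions for illustration. No verdict is computed, since the scheme leaves the weighing of premises to the Ethical justification.

```python
def summarize(assessment):
    """Split premises into those that support and those that oppose the action."""
    support = [name for name, satisfied in assessment.items() if satisfied]
    oppose = [name for name, satisfied in assessment.items() if not satisfied]
    return {
        "support": support,
        "oppose": oppose,
        # Conflict: some premises support the conclusion while others oppose it.
        "conflict": bool(support) and bool(oppose),
    }


# Assessment of the fictitious drone strike described in the text.
drone_strike = {
    "Just cause": True,          # defense of Homelandia
    "Last resort": True,         # reasonable alternatives exhausted
    "Humane weapon": True,       # drone-fired missiles are not considered inhumane
    "Right intention": True,     # no covert reason for the attack
    "Proportionality": False,    # very high number of civilian casualties
    "Legitimate target": False,  # base located on the grounds of a hospital
}
print(summarize(drone_strike))
```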

Fig. 12. Just war argument scheme.

To give a related example, Arkin’s agent is forbidden from doing an action if that would violate certain constraints relating to Proportionality and Legitimate target. (Deployment of Arkin’s agent assumes that the others of the first six premises have been satisfied.) However, in Arkin’s system a human operator can override the agent’s prohibition after providing a justification for purposes of later oversight of the operator’s override. Similarly, the Ethical justification of the Just War scheme can be used to explain a justifiable exception to the requirement to satisfy a certain premise. (See also the related discussion of the Ethical justification premise of the Healthcare Argument scheme.)

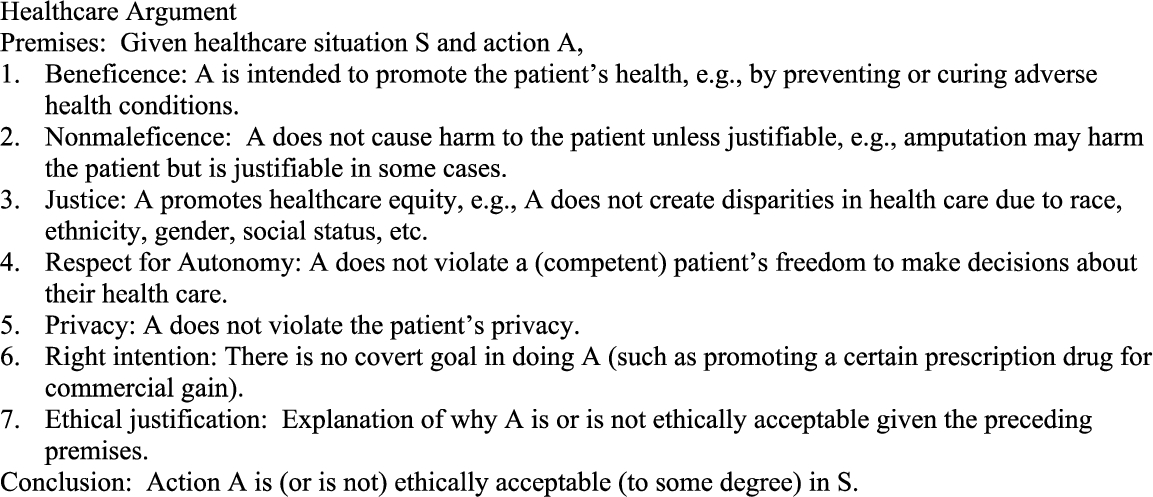

The field of biomedical ethics has provided ethical principles for the design of healthcare applications. Anderson and Anderson [2, 3] have done extensive research on design of explicit ethical agents in this domain. Adapting Ross’ prima facie duty approach to ethics [53], they implemented several duties of biomedical ethics [7]: beneficence (e.g. promoting a patient’s welfare), nonmaleficence (e.g. intentionally avoiding causing harm), justice (e.g. healthcare equity), and respect for the patient’s autonomy (e.g. freedom from interference by others). In addition to the above principles, the healthcare literature also cites the need to respect the patient’s privacy. Under the virtue of fidelity, Beauchamp and Childress [7] discuss the need for the professional to give priority to the patient’s interests, e.g. that the agent has no covert goal such as to endorse a particular medical device or prescription drug. The following Healthcare Argument scheme encodes these principles as premises (Fig. 13).

Fig. 13. AI ethics healthcare argument schemes.

However, as Ross noted, prima facie duties may conflict. To handle such situations, Anderson and Anderson created a process for training their system using inductive logic programming to derive rules that generalize the decisions of medical ethicists on training cases. The ethicists first assigned numerical ratings to represent how strongly each duty was satisfied or violated in a particular training case. An example rule that was induced was “A healthcare worker should challenge a patient’s decision [e.g. to reject the healthcare professional’s recommended treatment option] if it isn’t fully autonomous and there’s either any violation of nonmaleficence or a severe violation of beneficence” [2, p. 71]. Such a rule, or a student’s justification for favoring certain principles in S, could be used to provide the Ethical justification.
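The quoted rule can be written as a short predicate over duty ratings. The numeric scale used below (integers from -2 to +2, where negative values denote violation, -2 a severe violation, and an autonomy rating of +2 a fully autonomous decision) is an assumption adopted for illustration; the text states only that the ethicists assigned numerical ratings.

```python
def should_challenge(autonomy, nonmaleficence, beneficence):
    """Return True if the induced rule recommends challenging the patient's decision.

    Ratings are assumed to lie on a -2..+2 scale (hypothetical encoding).
    """
    not_fully_autonomous = autonomy < 2
    any_nonmaleficence_violation = nonmaleficence < 0
    severe_beneficence_violation = beneficence <= -2
    return not_fully_autonomous and (
        any_nonmaleficence_violation or severe_beneficence_violation
    )


# A decision that is not fully autonomous and mildly violates nonmaleficence.
print(should_challenge(autonomy=1, nonmaleficence=-1, beneficence=0))
```

A student's own justification for favoring certain principles could be recorded in the same form, as a condition over the ratings of the duties involved.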

To illustrate, this scheme can be used to analyze an argument as to whether it is ethically acceptable for a robot, Halbot, to steal insulin from Carla (a human) to give to Hal (a human) to save Hal’s life. This is a variant of an account given in [8] in which Hal stole the insulin. In both accounts, Hal and Carla are diabetics who need insulin to live. Hal will die unless he gets some insulin right away. In our version, Halbot breaks into Carla’s house and takes her insulin without her knowledge or permission. Presumably, Carla does not need the insulin right away. Applying the above Healthcare Argument scheme, the Beneficence premise is that the action A will prevent Hal’s death. However, the Nonmaleficence premise opposes A since A might cause harm to Carla if she is unable to obtain a replacement dose of insulin in time. The Justice premise is that Hal deserves equal access to insulin. However, Halbot’s action of taking away Carla’s insulin without her knowledge or permission is a violation of Respect for (Carla’s) Autonomy. An Ethical justification premise in the style of [2] could say that the positive contribution to Beneficence and Equity due to A in S outweighs A’s negative contribution to Nonmaleficence and Autonomy in S.

Fig. 14. Critical questions of AI ethics schemes.

Figure 14 shows a number of critical questions for challenging the acceptability of an action A of an artificial ethical agent shared by the preceding schemes. (To save space, they are listed here rather than with each scheme.) The Data question is especially significant for explicit ethical agents that must rely in part on subsymbolic processing such as facial recognition. (Footnote 20: For many other data-related issues that could be used for critical questions, see [42].)

The purpose of these argument schemes is to help the student to analyze whether an artificial agent’s action is or is not ethically acceptable to some degree. (As the state-of-the-art advances, it might be possible for an artificial explicit ethical agent to use such a scheme to explain its actions.) Unlike the argumentation schemes described in [60], the premises are not considered to be jointly necessary conditions. Also, the hedge ‘to some degree’ in the conclusion is intended to reflect the intuition that some actions are more ethically acceptable than others, e.g., that telling a lie with the goal of cheering someone up is more acceptable than lying to secure investors in a pyramid scheme. In some cases some of the premises may support the conclusion that the action is ethically acceptable while other premises may oppose that conclusion, weakening its degree of ethical acceptability.

These schemes are related to value-based practical reasoning (VBPR) schemes proposed by argumentation researchers to model an agent’s argument for why the agent should do some action in consideration of the agent’s goals, values, and available means of achieving those goals [60] and in the current context or circumstances [12]. In addition to its use to describe what a

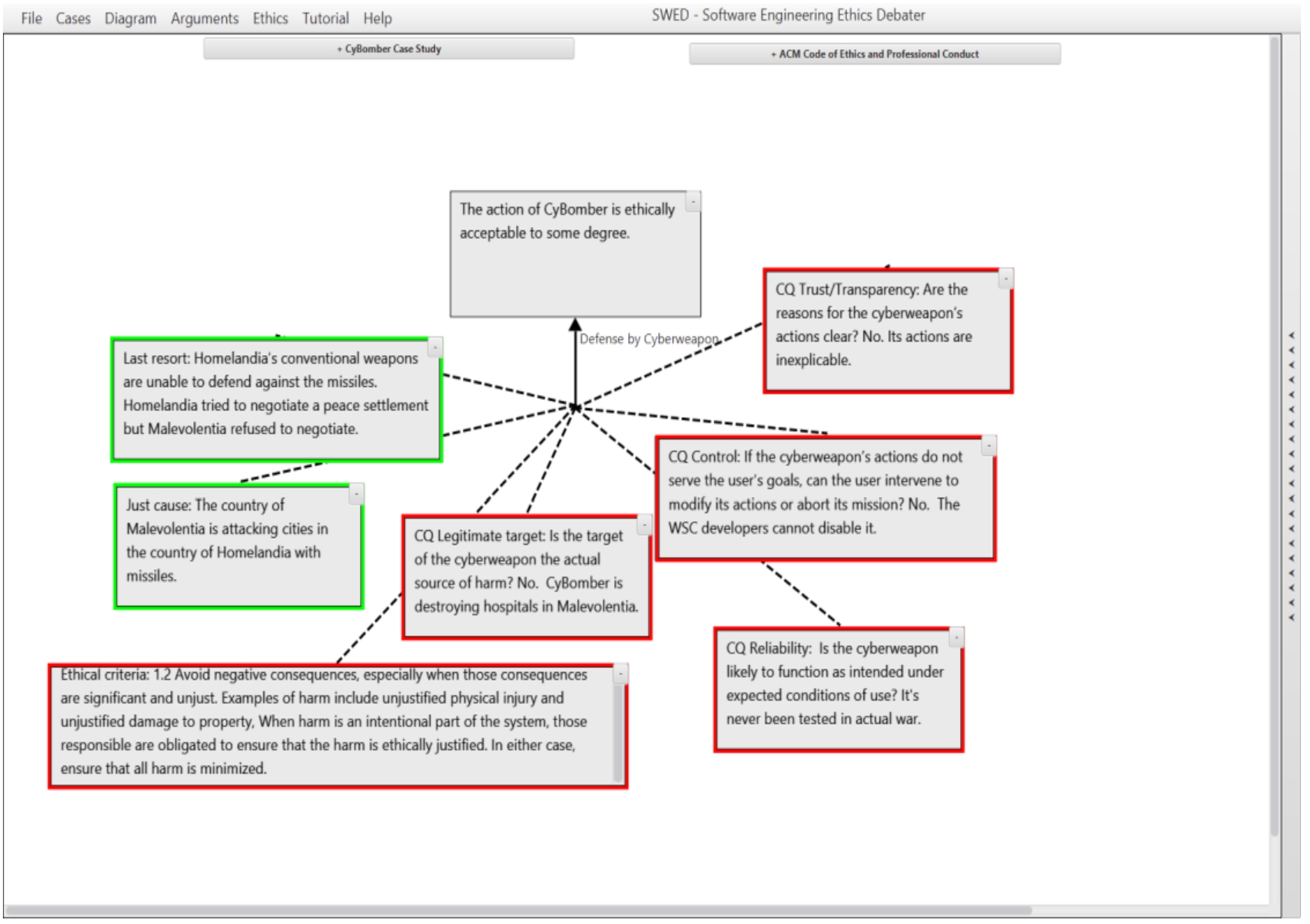

AIED (AI Ethics Debate) was designed to support creation and graphical realization of AI ethics arguments using argument schemes such as those described above as templates for constructing arguments. AIED provides the student with drop-down menus for selecting case studies and ethics materials, which when selected appear in windows on the screen. Argument scheme definitions are listed in a panel on the right-hand side of the screen. Course instructors may provide case studies and ethics materials of their choosing. If desired, they can author their own argument schemes too. (Footnote 21: A formative evaluation of AIED is described in [31]. The version of AIED used in the formative evaluation is freely available for non-commercial use from [...])

Fig. 15. Screenshot of AIED with case study and ethics windows and argument scheme panel minimized. The argument scheme has been dragged into the argument diagram construction workspace, creating a box-and-arrow template for the user’s argument, as shown. Boxes have been marked red (con) or green (pro) by the user. The user left the conclusion uncolored since there are both pro and con issues regarding its ethical acceptability.

The center of the AIED screen is a drag-and-drop style argument diagram construction workspace. When the student selects an argument scheme from the right-hand side panel, a box-and-arrow style template is rendered in the center of the screen (Fig. 15). The student may cut-and-paste text from the case study and ethics windows, and enter their own words into the diagram. Critical questions can be selected from a menu and are rendered as text boxes attached to the argument. Premise and critical question boxes can be colored green or red to indicate support or opposition, respectively, to the conclusion. It was decided that premises and critical questions would be rendered in the same way in the argument diagram (i.e. as text boxes attached to the conclusion) since they both could be used to support or weaken the conclusion. The factors that we considered most important for the student to consider were listed as premises, while other factors were listed as critical questions.

Looking back over our past projects, the main focus was on “naturally occurring” arguments witnessed in monological text, i.e., genetic counseling letters and information on genetic testing, and journal articles from the fields of genetics research, international relations, and environmental science policy. (Footnote 22: In the AIED project, rather than analyzing examples of “naturally occurring” arguments, we read analyses of case studies by ethics experts.) The characterization of argument patterns in schemes resembles the characterization of discourse patterns in discourse plan operators (or “recipes”), e.g., for the interpretation and generation of certain conversational implicatures [30].

For the goal of corpus annotation, our schemes were redefined several times (in English) at different levels of specificity. The motivation for greater specificity was to help future annotators of genetics research articles, but the non-genetics-specific formulation of schemes was applied successfully by independent researchers analyzing biochemistry research articles. In our computer implementations, the schemes were defined in the language of formal logic. For argument generation in GenIE/GAIL, the Claim, Data, Warrant and Applicability Constraint (a kind of critical question) of argument schemes were represented as logical expressions specifying abstract (non-domain-specific) properties of the qualitative probabilistic networks used as knowledge bases. For argument mining from genetics research articles, argument schemes were encoded as logic programming rules specifying certain genetics relations.

Argument schemes played a key pedagogical role in the argument diagramming systems that we designed. In AVIZE, argument schemes were presented to the user for constructing arguments to make sense of evidence of varying plausibility from sources of varying reliability. The schemes were specialized for international relations to bridge a possible conceptual gap between generic descriptions of schemes and the domain of international relations. (Footnote 24: Based upon the undergraduate students’ difficulties in applying generic schemes to genetics, our assumption was that field-specific international relations schemes would be easier for AVIZE users to apply.)

The modeling of argument strength is an open issue which is relevant to the other areas we covered. In genetic counseling, often arguments are based upon probabilistic information. Arguments in genetic testing advertising may be challenged by critical questions, reframing, qualification and rebuttals. In genetics research articles, multiple arguments for the same conclusion may be given, as well as arguments for the premises of arguments. Some types of data are considered stronger than other types, e.g. data from human studies vs. mouse studies, or data obtained from one experimental method vs. another. Also some types of argument, such as argument from analogy, are considered weaker than others. In international relations, the user’s argument may rest upon data of varying plausibility from sources of varying reliability. Also, the user may construct networks of challenges and counter-challenges.

Another open issue is the taxonomic organization of and the “proper” level of specificity of argumentation schemes. For practical reasons, in some cases we provided human analysts and students with field-specific descriptions of schemes. For argument mining, we defined domain-specific schemes to make use of semantic information that could be provided by relation extraction tools. On the other hand, argumentation scholars (or cognitive scientists, perhaps) may wish to relate the definitions to more abstract descriptions of schemes. Also, schemes specified at a higher level of abstraction may be more widely applicable, as was the case when our genetics schemes were adopted by other researchers for analysis of biochemistry research articles. Other open questions involve how best to organize the taxonomy: whether it should be a strict hierarchy or allow a scheme to have more than one “parent”, and whether schemes could inherit critical questions from schemes higher in the taxonomy.

Finally, in all the domains except genetics research, the analyses revealed a significant role for affect: coping strategies in genetic counseling, fear appeals in promotion of genetic testing, inference of hostile intentions in international relations, rhetorical figures in environmental science policy, and ethical issues in biomedicine and warfare. While some affective strategies can be characterized via argumentation schemes, others operate at the level of linguistic expression. How is it possible to calculate their combined effect on the audience?

Acknowledgements

We thank the numerous students who have helped us on these projects. We thank the computer science departments at the University of Pennsylvania and the University of Delaware for offering courses on linguistic pragmatics and discourse and dialogue. The GenIE project was supported by the National Science Foundation under CAREER Award No. 0132821. The AVIZE project material is based upon work supported in whole or in part with funding from the Laboratory for Analytic Sciences (LAS). Any opinions, findings, conclusions, or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the LAS and/or any agency or entity of the United States Government. Finally, we thank the Argument and Computation reviewers for their helpful feedback.