Abstract

We present a high-level declarative programming language for representing argumentation schemes, where schemes represented in this language can be easily validated by domain experts, including developers of argumentation schemes in informal logic and philosophy, and serve as

Keywords

Introduction

Argumentation schemes [25] serve at least two functions:

They provide normative standards for critically evaluating arguments, by matching arguments to schemes to see if they fit acceptable patterns of argumentation, to identify missing premises, and to facilitate the asking of critical questions.

They provide guidance for making (constructing, inventing, generating) good arguments in the first place, i.e. arguments that will satisfy the normative standards specified by the schemes.

Computational models of argument can model either or both of these functions of argumentation schemes. In this paper, we focus on the second function. Whereas some prior work on computational models of argumentation schemes consists of procedural programs for generating arguments for specific schemes, e.g. [3], our aim is to develop a high-level declarative programming language for representing, ideally,

Argumentation schemes [25] are defeasible inference rules. Most, like argument from expert opinion, are to some extent domain-dependent, because they include predicates intended to be interpreted in a particular, domain-dependent way. Some, like defeasible modus ponens, are more generic. Let’s take a closer look at these two schemes.

First, here is a simplified version of the scheme for argument from expert witness testimony, which is the prototypical argumentation scheme most often used to introduce the concept of argumentation schemes.

Argument from expert witness testimony

The expert witness scheme makes use of two domain-dependent predicates:

is-an-expert/1

asserts/2

The numbers indicate the arity of each predicate. In the presentation of the scheme, the predicates are shown using an infix notation close to natural language.

In the scheme,

The second argumentation scheme we want to discuss is defeasible modus ponens.

Defeasible modus ponens

if

(Presumably)

Defeasible modus ponens has the same form as modus ponens, except that the conclusion is only presumably true, rather than necessarily true. (The modality, “presumably”, is made explicit in the example only for emphasis. The conclusions of all argumentation schemes are presumably true.) An argument constructed by instantiating this scheme can be attacked in the usual ways, for example by a rebuttal, an argument for

Notice that the major premise of defeasible modus ponens, “if

Argumentation schemes like these, with second-order variables, are quite common. It is particularly common for the conclusion of schemes to be a second-order variable, as in both of these examples. Other examples include schemes for argument from abduction, analogy, established rule, ethos, ignorance, position to know, and precedent.

We are aware of no The TOAST implementation of ASPIC+ [19] is propositional. Its rules are fully instantiated axioms, with no variables. These earlier versions of Carneades allowed argumentation schemes with second-order variables to be represented and used to manually construct arguments, by filling in forms, and to check whether arguments correctly instantiate schemes, but not to automatically construct arguments from a set of assumptions.

To understand more clearly the difficulties in representing argumentation schemes using Horn clause logic, the subset of first-order logic used by logic programming languages such as Prolog, let us see how far we can get in representing the scheme for arguments from expert witness testimony in Prolog. Let us first represent, as Prolog “facts”, the following assumptions about a case:

Given these facts, the challenge is to represent the expert witness scheme as a single Prolog rule (Horn clause), in such a way that the following query can be proven by Prolog, answering

The above representation of the assumptions already shows how one hurdle can be overcome. Although Horn clause logic is a subset of first-order logic, it is possible to represent second-order propositions about atomic formulas, such as global warming being caused by humans here, by reifying such atomic formulas as terms. So far, so good.
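The reification step can be illustrated outside Prolog. The following is a minimal Python sketch, not the Prolog facts of the paper: the constant `joe` and the predicate `caused_by` are hypothetical names chosen for illustration, while `is_an_expert` and `asserts` follow the arities given above. It shows only that a reified proposition is ordinary data, and that nothing yet lifts it to a fact of its own, which is the gap the scheme has to close.

```python
# Hypothetical names: `joe` and `caused_by` are illustrative assumptions.
# A proposition is reified as a nested tuple: (predicate, arg1, arg2, ...).
human_caused = ("caused_by", "global_warming", "humans")

facts = {
    ("is_an_expert", "joe"),             # is-an-expert/1
    ("asserts", "joe", human_caused),    # asserts/2, second argument is a reified proposition
}

def asserted_propositions(facts):
    """Return every proposition that occurs as the second argument of asserts/2."""
    return [f[2] for f in facts if f[0] == "asserts" and len(f) == 3]

def holds(proposition, facts):
    """Plain first-order lookup: a reified term is not a fact unless stated as one."""
    return proposition in facts

asserted = asserted_propositions(facts)
```

The asserted proposition can be retrieved as a term, but `holds(human_caused, facts)` is false until some rule derives it.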

But how can the expert witness scheme be represented? Here is one approach, suggested to me by Trevor Bench-Capon but also used by ABA [8, 200–201]:

The idea here is to represent the second-order conclusion,

While axioms can be viewed as a basic form of inference rule, the attempt to represent more general inference rules using a

From a knowledge-representation point of view, this seems rather verbose and cumbersome, but presumably these additional rules could be generated automatically, using some kind of preprocessor. However, this approach suffers from a more serious problem: such a rule would need to be generated for

With these clauses, the following query causes an endless loop and runs out of stack space:

It is clear why this happens: The query causes an endless loop between the

...

Since this encoding of argumentation schemes requires the definition of every predicate to have an additional

The event calculus [14] uses a

There is one final and fatal problem with representing argumentation schemes directly in Prolog this way that is important to mention: no arguments are constructed! Thus there is no way to resolve conflicts among arguments, to balance arguments or to use the arguments to help understand or explain the results, for example using argument diagrams.

All of these problems might be overcome by writing a meta-interpreter for argumentation schemes in Prolog, but this would be using Prolog in its capacity as a general-purpose programming language, rather than as an inference engine for Horn clause logic. Some expert system shells, in particular APES [13], were implemented as meta-interpreters in Prolog. APES was able to generate explanations which can be viewed as arguments from the traces of rule applications [4]. However, rules in APES were Horn clauses and could not represent argumentation schemes with second-order variables, for the reasons discussed above. APES also did not generate counterarguments or use a structured model of argument to resolve attack relations among arguments. The alternative approach we investigate in this paper, using Constraint Handling Rules to represent argumentation schemes, can also use Prolog as an implementation language. Indeed, several implementations of Constraint Handling Rules in Prolog exist, and we make use of the one provided by SWI Prolog.

As suggested in the previous paragraph, this paper explores the idea of representing argumentation schemes using another kind of rule-based programming, Constraint Handling Rules, introduced by Thom Frühwirth in 1991 [9], to overcome all of the problems identified above by meeting the following requirements:

Allow second-order variables in the premises and conclusions of schemes

Not require additional rules for bringing second-order propositions down to the object-level.

Generate arguments as output

Guarantee termination

The rest of this article is organized as follows. Section 2 introduces Constraint Handling Rules, including examples. Section 3 shows one way to represent argumentation schemes using Constraint Handling Rules, in such a way as to generate arguments and overcome the other problems identified in this introduction. The section also briefly describes two implementations of this approach, one using the Constraint Handling Rules interpreter provided as a library by SWI Prolog and the second based on our custom implementation of Constraint Handling Rules in the Go programming language. Section 4 validates the rule language by demonstrating how to use it to represent twenty argumentation schemes, selected by Douglas Walton as being representative and widely used. Finally, Section 5 presents our conclusions and summarizes the main results.

Constraint Handling Rules (CHR) is a See also the CHR homepage at

While production rule systems have been widely and successfully used for expert systems and implementing so-called “business rules”, they do not have a

One of the achievements of CHR is to realize a forwards-chaining rule language, similar to production rule languages, but with a declarative semantics. CHR is so-named, because the language was initially intended to be used to implement constraint solvers. A constraint solver takes as input a set of relationships among variables, called constraints, and derives further information about these variables. Early constraint solvers were for particular domains, for example propositional constraints over Boolean variables, or equations and inequalities over integers. CHR is more general purpose. It enables constraint solvers for a variety of domains to be specified, using rules.

To make this clearer, let us take a look at the standard example used to illustrate CHR, which defines rules for partial orderings:

The predicate

There are three kinds of rules in CHR: simplification, propagation and “simpagation” rules, where simpagation rules are a hybrid kind of rule combining the features of simplification and propagation rules. All three kinds of rules are illustrated in the example. The reflexivity and antisymmetry rules are simplification rules; the transitivity rule is a propagation rule; and the idempotence rule is a simpagation rule.

Operationally, CHR rules are applied to a multiset of constraints (similar to Prolog facts) in a data structure called the

When simplification rules, such as the reflexivity and antisymmetry rules, are applied (“fired”), the constraints matching the patterns on the left-hand side of the

Simplification and simpagation rules also

It might seem counterintuitive at first that a declarative language is allowed to delete constraints from the store. But in CHR this is done in a principled way, one which does not change the meaning of the constraints in the store. Simplification rules and simpagation rules are used to simplify constraints, as their names suggest, by replacing constraints matching the head with fewer constraints having the same meaning. Consider the idempotence rule, for example. Since the constraint store is a multiset, it may contain duplicate, redundant constraints. The idempotence rule simplifies the constraint store by removing duplicate constraints of the form

In addition to heads and bodies, CHR rules may also include, in so-called “guards”, further

To get an idea of how the CHR inference engine works, let us see what CHR derives when applying the rules defining partial orderings above to the following “query”, i.e. giving the initial state of the constraint store:

First, the transitivity propagation rule is fired and adds
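The operational reading of the four partial-order rules can be sketched in a few lines of Python. This is a toy simulation written for illustration, not the CHR engine used in this paper, and it rests on several assumptions: the constraint is called `leq` as in the textbook version of the example, the query is the textbook cycle `leq(a,b), leq(b,c), leq(c,a)`, the equation produced by antisymmetry is recorded in a union-find table, and rules are tried in a fixed order (real CHR engines have their own scheduling).

```python
from itertools import count, permutations

def solve_leq(pairs):
    """Apply reflexivity, antisymmetry, idempotence and transitivity until none fires."""
    parent = {}                                   # union-find table standing in for X = Y

    def find(v):
        parent.setdefault(v, v)
        while parent[v] != v:
            v = parent[v]
        return v

    ids = count()
    store = {next(ids): p for p in pairs}         # multiset of constraints: id -> (x, y)
    history = set()                               # id pairs already used by transitivity
    trace = []

    def fire():
        view = {i: (find(x), find(y)) for i, (x, y) in store.items()}
        for i, (x, y) in view.items():
            if x == y:                            # reflexivity (simplification)
                del store[i]
                return "reflexivity"
        for i, j in permutations(view, 2):
            if view[i] == view[j][::-1]:          # antisymmetry (simplification)
                del store[i], store[j]
                parent[view[i][0]] = view[i][1]   # record X = Y
                return "antisymmetry"
        for i, j in permutations(view, 2):
            if view[i] == view[j]:                # idempotence (simpagation): keep i, drop j
                del store[j]
                return "idempotence"
        for i, j in permutations(view, 2):
            if view[i][1] == view[j][0] and (i, j) not in history:
                history.add((i, j))               # transitivity (propagation): heads are kept
                store[next(ids)] = (view[i][0], view[j][1])
                return "transitivity"
        return None

    while (rule := fire()) is not None:
        trace.append(rule)
    remaining = [(find(x), find(y)) for x, y in store.values()]
    classes = {v: find(v) for v in parent}
    return remaining, classes, trace

remaining, classes, trace = solve_leq([("a", "b"), ("b", "c"), ("c", "a")])
```

On this query the sketch fires transitivity first and then antisymmetry twice, leaving an empty store with all three symbols in one equivalence class. The `history` set plays the role of a propagation history, which keeps the transitivity rule from firing repeatedly on the same pair of constraints.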

In addition to supporting forwards-chaining, CHR has some other properties which may be desirable, depending on the application:

Turing completeness: Any computable function can be represented using CHR rules.

Every algorithm can be implemented in CHR with the best known time and space complexity [20].

CHR rules can be executed concurrently [15].

The execution of CHR rules can be interrupted and restarted at any time, with intermediate results approximating the final solution.

Constraints can be input incrementally as they become known, during rule execution, without requiring recomputation.

Inference rules, rewrite rules, sequents, proof rules, and logical axioms can be directly written in CHR [1].

The last three properties, in particular, appear attractive for representing and implementing argumentation schemes. Argumentation typically takes place in dialogs, with evidence and arguments brought forward and asserted by the participants incrementally, during the course of the dialog. It would be useful if CHR could be used to incrementally and efficiently construct arguments from evidence during dialogs. Moreover, since argumentation schemes are (defeasible) inference rules, the ability of CHR to represent inference rules directly would appear to be quite useful.

In this section we show how to represent argumentation schemes using CHR rules, and give an overview of the component that generates arguments from schemes represented in this way, as provided by Version 4 of the Carneades argumentation system.

First we need a notation for argumentation schemes. Let us use the syntax for schemes we have developed for Carneades 4, which is based on the YAML markup language,

This representation of argumentation schemes is, we claim, very high level and quite close to the usual way schemes are represented in informal logic. In our experience, informal logicians are able to read, understand and validate schemes represented in this form.

There are a few things to notice about this syntax. First, the schema variables are declared explicitly. This may seem burdensome, but is useful for checking for misspelled variables in schemes, among other purposes. Second, as proposed in [11], the two types of critical questions are represented by exceptions and assumptions. Third, argumentation schemes may now have more than one conclusion, though there is only one in this example. This change was motivated by the desire to support the full CHR rule language, in which the body of a rule can contain multiple conclusions. It has the further advantage of reducing the number of schemes required when several conclusions can be derived from the same premises. Fourth, note that this rule is an example of a scheme having a second-order variable as its conclusion,

We now show, by way of this example, how argumentation schemes are translated into CHR rules. The expert opinion scheme is translated into the following rule:

As illustrated here, each argumentation scheme is translated into a single CHR rule, in this example a propagation rule, with the same name (identifier). The premises of the scheme are translated into constraints in the head of the rule. The conclusions of the scheme are translated into constraints in the body of the rule. Moreover, each of the assumptions of the scheme is also added to the body of the CHR rule, allowing it to be used to derive further information by applying other rules. (The assumptions can be questioned and retracted later, when evaluating the arguments constructed.) Finally, an additional constraint is added to the end of the body of the rule, of the form
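The translation described in this paragraph can be sketched as a small Python function that prints a scheme as a CHR propagation rule. This is an illustration under stated assumptions, not the Carneades translator: the `Scheme` record, the predicates `credible` and `biased`, and in particular the shape of the final `argument(...)` constraint (scheme identifier plus the list of schema variables) are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Scheme:
    id: str
    variables: list
    premises: list
    conclusions: list
    assumptions: list = field(default_factory=list)
    exceptions: list = field(default_factory=list)   # not translated into the rule

def to_chr_rule(s):
    """Render a scheme as the text of one CHR propagation rule."""
    head = ", ".join(s.premises)
    # Hypothetical form of the extra bookkeeping constraint recording the rule application.
    argument = f"argument({s.id}, [{', '.join(s.variables)}])"
    body = ", ".join(s.conclusions + s.assumptions + [argument])
    return f"{s.id} @ {head} ==> {body}."

scheme = Scheme(
    id="expert_witness",
    variables=["W", "P"],
    premises=["is_an_expert(W)", "asserts(W, P)"],
    conclusions=["P"],                    # second-order variable as conclusion
    assumptions=["credible(W)"],          # hypothetical assumption
    exceptions=["biased(W)"],             # hypothetical exception
)
rule = to_chr_rule(scheme)
```

The exception is deliberately absent from the generated rule text; it is kept on the scheme record so that it can be looked up later by the scheme identifier.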

Notice that the exceptions of a scheme are not translated and do not appear in the resulting CHR rule. To understand how exceptions are handled, we first need to explain the steps in the process for generating and evaluating arguments:

The argumentation schemes are translated into CHR rules, as illustrated above.

A set of assumptions, represented as ground atomic formulas, are translated into CHR constraints and added to the initial state of the constraint store.

The CHR inference engine is run, repeatedly applying the rules to the constraint store until no rules match or until the

The argument constraints in the store, i.e. the constraints of the form

Assumptions of arguments are added to the assumptions of the argument graph.

For each argument constructed by translating an argument constraint, undercutting arguments are constructed and also added to the argument graph for any exceptions of the applied scheme. The appropriate scheme is retrieved using the identifier of the scheme in the argument constraint.

Finally, the arguments are evaluated, using the formal model of structured argument in [12], to weigh and balance the arguments, resolve attack relations among arguments and label the statements in the argument graph
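The steps above, up to but not including the final evaluation, can be sketched as follows. This is a toy forward chainer in Python written for illustration, not the Carneades implementation: schemes are plain dictionaries, variables are capitalised strings, propositions are nested tuples, and the names `joe`, `caused_by` and `biased` are hypothetical. Propagation rules only are covered, and the evaluation of the resulting argument graph is omitted.

```python
def is_var(t):
    # Convention assumed in this sketch: variables are capitalised strings.
    return isinstance(t, str) and t[:1].isupper()

def match(pattern, term, env):
    """Match a pattern against a ground term; return extended bindings or None."""
    if is_var(pattern):
        if pattern in env:
            return env if env[pattern] == term else None
        return {**env, pattern: term}
    if isinstance(pattern, tuple) and isinstance(term, tuple) and len(pattern) == len(term):
        for p, t in zip(pattern, term):
            env = match(p, t, env)
            if env is None:
                return None
        return env
    return env if pattern == term else None

def subst(pattern, env):
    if is_var(pattern):
        return env[pattern]
    if isinstance(pattern, tuple):
        return tuple(subst(p, env) for p in pattern)
    return pattern

def match_all(patterns, store, env):
    if not patterns:
        yield env
        return
    for fact in store:
        extended = match(patterns[0], fact, env)
        if extended is not None:
            yield from match_all(patterns[1:], store, extended)

def generate(schemes, assumptions, max_firings=100):
    store = list(assumptions)                      # initial constraint store
    arguments, fired = [], set()
    progress = True
    while progress:                                # run until no rule matches
        progress = False
        for s in schemes:
            for env in list(match_all(s["premises"], store, {})):
                key = (s["id"], tuple(sorted(env.items())))
                if key in fired:
                    continue
                if len(fired) >= max_firings:      # firing limit reached
                    return arguments
                fired.add(key)
                progress = True
                store.extend(subst(t, env) for t in s["conclusions"] + s["assumptions"])
                arguments.append({"scheme": s["id"], "bindings": env,
                                  "premises": [subst(p, env) for p in s["premises"]],
                                  "conclusions": [subst(c, env) for c in s["conclusions"]]})
    return arguments

def undercutters(arguments, schemes):
    """One undercutter per exception of the scheme applied in each argument."""
    by_id = {s["id"]: s for s in schemes}
    return [{"undercuts": i, "because": subst(x, a["bindings"])}
            for i, a in enumerate(arguments)
            for x in by_id[a["scheme"]]["exceptions"]]

schemes = [{"id": "expert_witness",
            "premises": [("is_an_expert", "W"), ("asserts", "W", "P")],
            "conclusions": ["P"], "assumptions": [],
            "exceptions": [("biased", "W")]}]
assumptions = [("is_an_expert", "joe"),
               ("asserts", "joe", ("caused_by", "global_warming", "humans"))]
arguments = generate(schemes, assumptions)
attacks = undercutters(arguments, schemes)
```

In this sketch the exceptions never enter the rule application itself. They are consulted only afterwards, using the scheme identifier stored with each argument, which mirrors the order of the steps listed above.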

In addition to supporting multiple conclusions in schemes, we have extended argumentation schemes in further ways, in order to support the full expressiveness of Constraint Handling Rules. To illustrate one of these extensions, supporting simplification, here is a reconstruction of the CHR rules for partial orders, represented as argumentation schemes:

All of the premises which are to be deleted from the constraint store when the scheme is applied are listed in a

One caveat is order: Although all CHR

Carneades can be configured to use one of two different implementations of CHR for generating arguments from argumentation schemes using the method presented above: the implementation of CHR which comes pre-installed with SWI Prolog,

Since CHR is Turing complete, termination of CHR programs cannot in general be guaranteed. Our implementation of CHR allows the user to set a maximum number of rule firings, to assure termination within roughly predictable time limits, and returns the arguments constructed before the limit was reached. The engine can be restarted to generate further arguments. This is very much in line with the purpose and spirit of argumentation, as a rational method for problem solving and decision-making when information is inconsistent or incomplete.
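The idea of a firing limit with restart can be sketched independently of CHR. The following Python class is a hypothetical illustration, not the interface of our implementation: rules are single-headed functions from a fact to new facts, every rule attempt counts as one firing, and the agenda of pending work is kept between calls so that `run` can be called again to continue.

```python
from collections import deque

class BudgetedEngine:
    """Toy forward chainer that stops after a given number of rule firings."""

    def __init__(self, rules, facts):
        self.rules = rules
        self.store = set(facts)
        # Pending (rule, fact) pairs survive between calls to run().
        self.agenda = deque((r, f) for f in facts for r in rules)

    def run(self, max_firings):
        derived = []
        firings = 0
        while self.agenda and firings < max_firings:
            rule, fact = self.agenda.popleft()
            firings += 1                      # every attempt counts towards the limit
            for new in rule(fact):
                if new not in self.store:
                    self.store.add(new)
                    derived.append(new)
                    self.agenda.extend((r, new) for r in self.rules)
        return derived

def count_up(fact):
    # A deliberately non-terminating rule: step(N) ==> step(N + 1).
    return [("step", fact[1] + 1)] if fact[0] == "step" else []

engine = BudgetedEngine([count_up], [("step", 0)])
first = engine.run(3)     # results available when the limit is reached
second = engine.run(2)    # restart: continues where the first run stopped
```

The rule set here never terminates on its own, yet each call returns after a bounded amount of work with the facts derived so far.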

The second example argumentation scheme in the introduction, for defeasible modus ponens, cannot be implemented using the SWI Prolog version of CHR. While it allows second-order variables in the body (conclusion) of rules, it does not allow them in the head (premises). We are not sure whether this is a limitation of the SWI Prolog implementation of CHR, or the CHR specification. Either way, our implementation of CHR removes this restriction and allows second-order variables in both the head and body of rules, enabling defeasible modus ponens to be represented.
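What a second-order variable in the head amounts to can be sketched in Python. This is an illustration only, not our Go implementation: conditionals are encoded as hypothetical `("if", P, Q)` tuples, and the premise `P` is matched against any constraint in the store, whatever its predicate.

```python
def apply_dmp(assumptions):
    """Forward-chain defeasible modus ponens over reified propositions."""
    store = set(assumptions)
    arguments = []
    fired = set()                      # conditionals already applied
    changed = True
    while changed:
        changed = False
        for fact in list(store):
            is_conditional = isinstance(fact, tuple) and len(fact) == 3 and fact[0] == "if"
            if not is_conditional or fact in fired:
                continue
            _, p, q = fact
            if p in store:             # P is a head variable: any stored constraint may match
                fired.add(fact)
                store.add(q)           # Q is a body variable: the conclusion is whatever Q is bound to
                arguments.append({"scheme": "dmp", "premises": [fact, p], "conclusion": q})
                changed = True
    return arguments

dmp_arguments = apply_dmp([
    ("if", ("rains",), ("wet",)),
    ("if", ("wet",), ("slippery",)),
    ("rains",),
])
conclusions = {a["conclusion"] for a in dmp_arguments}
```

The membership test `p in store` is the step that a first-order head cannot express, since it requires matching a variable against a whole constraint rather than against an argument of a fixed predicate.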

In this section we attempt to validate the rule language for argumentation schemes and demonstrate its expressiveness by using it to represent twenty argumentation schemes, mostly from [25], selected on the basis of their representativeness and wide use in practice. To allow the reader to evaluate for him- or herself the adequacy of the representations, we present the original formulation alongside our representation for each scheme. When the original source of a scheme is not [25], the source text will be referenced.

The schemes are presented in alphabetical order. To save space, the declarations of the predicates of the language are not presented. The complete source code, including the missing declarations, is available on GitHub.

Each one of a set of accounts

Therefore,

Argument from analogy

Generally case

Are there differences between Is A true (false) in Is there some other case

In our reconstruction of the scheme from analogy, an implicit current case is compared to the precedent case,

Argument from appearance

This object looks like it could be classified under verbal category

Therefore this object can be classified under verbal category

Could the appearance of its looking like it could be classified under Although it may look like it can be classified under

Argument from cause to effect

Generally, if In this case,

Therefore, in this case,

How strong is the causal generalization (if it is true at all)?

Is the evidence cited (if there is any) strong enough to warrant the generalization as stated?

Are there other factors that would or will interfere with or counteract the production of the effect in this case?

The first two critical questions are not explicitly included in the reconstruction, because they both attack the first premise, rather than articulating exceptions (undercutters) or assumptions. Premise attacks and rebuttals are modeled with separate argumentation schemes, rather than by exceptions and assumptions of a scheme.

Argument from correlation to cause

There is a positive correlation between events

Argument from defeasible modus ponens

In the original version, the ⇒ symbol denotes a defeasible conditional, not a strict (material) conditional. Similarly, in the reconstruction the

Argument from definition to verbal classification

For all

What evidence is there that Is the verbal classification in the classification premise based merely on a stipulative or biased definition that is subject to doubt?

Argument from established rule

The version of the argument from established rule we use here is from [22]. No critical questions were formulated for this version of the scheme.

If rule Rule

In case

In our reconstruction of the scheme for argument from established rule, the facts and case have been abstracted away, using an

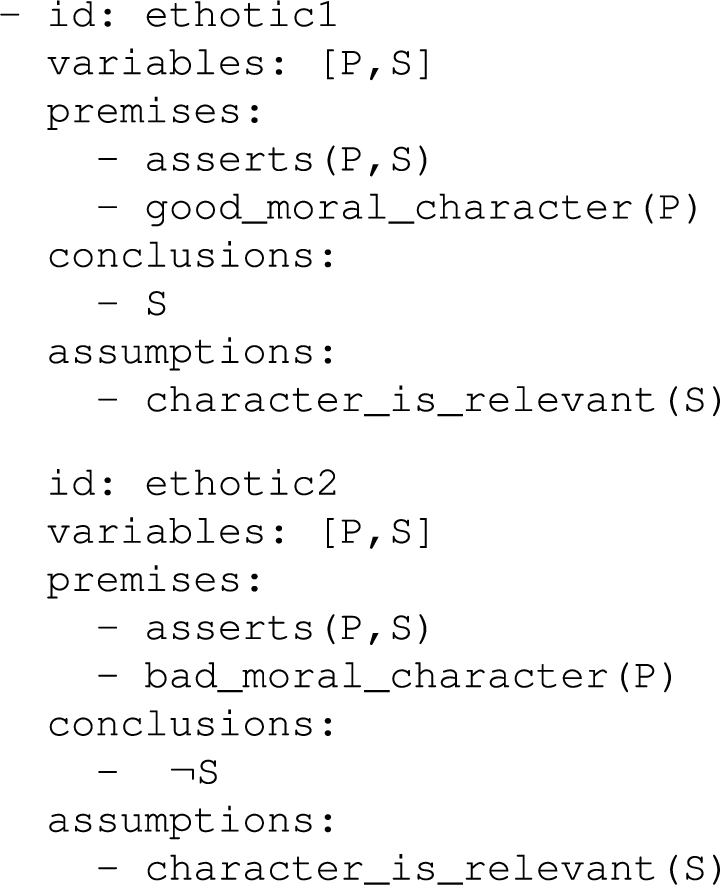

Ethotic argument

If

Therefore, what

Is Is character relevant in the dialogue? Is the weight of presumption claimed strongly enough warranted by the evidence given?

The first scheme for ethotic arguments,

Argument from expert opinion

Source

How credible is Is What did Is Is Is

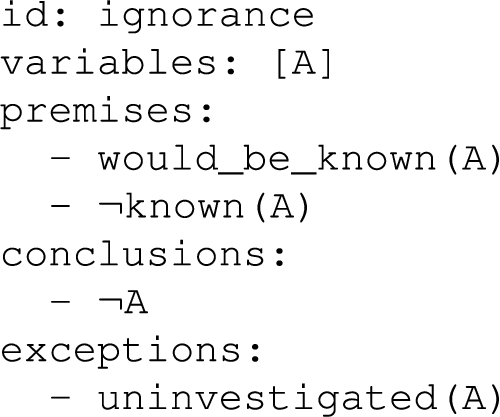

Argument from ignorance

This version of the scheme from ignorance is in the 2008 compendium [25], but the two critical questions of the scheme were added later, in [24].

If It is not the case that

Therefore,

How deep has the search been?

How deep does the search need to be in order to prove the conclusion that A is false to the required standard of proof in the investigation?

In the reconstruction, the two critical questions are modeled by a single exception, where the

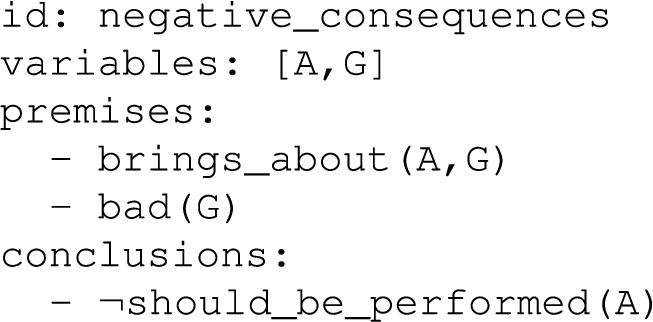

Argument from negative consequences

If

Therefore,

How strong is the likelihood that the cited consequences will (may, must) occur?

What evidence supports the claim that the cited consequences will (may, must) occur, and is it sufficient to support the strength of the claim adequately?

Are there other opposite consequences (bad as opposed to good, for example) that should be taken into account?

The critical questions are not modeled as exceptions or assumptions in the reconstruction. The first critical question asks about the weight of the argument. Weighing arguments is built in to the model of structured argument we are applying [12] and applies to all schemes, without the need for explicit exceptions or assumptions. We have interpreted the second critical question as merely challenging the first premise. Every premise can be questioned in our model of structured argument, without needing to explicitly enumerate critical questions for each premise. Alternatively, this critical question could have been interpreted as meaning that the premise is actually an assumption, not requiring proof until the question is asked. The final critical question asks whether there are any rebuttals. Rebuttals too are handled directly by the model of structured argument, without needing to add an explicit critical question asking for possible rebuttals to each scheme.

Argument from position to know

Source

Is Is Did

In the reconstruction, the source is denoted by

Only one of the critical questions is explicitly modeled in the reconstruction, with an exception asking whether

Argument from positive consequences

If

Therefore,

How strong is the likelihood that the cited consequences will (may, must) occur?

What evidence supports the claim that the cited consequences will (may, must) occur, and is it sufficient to support the strength of the claim adequately?

Are there other opposite consequences (bad as opposed to good, for example) that should be taken into account?

The critical questions of the scheme for argument from positive consequences are the same as for the scheme for arguments from negative consequences. The reasons for not including exceptions or assumptions for these critical questions in the reconstruction are the same.

Argument from practical reasoning

Here we present two schemes for practical reasoning, from Atkinson and Bench-Capon [2] and Walton et al. [25]. We show how to represent both, simply because they have both been influential in the literature.

Atkinson and Bench-Capon’s value-based version is:

In the current circumstances R

We should perform action A

Which will result in new circumstances S

Which will realise goal G

Which will promote some value V.

Notice that Atkinson and Bench-Capon do not distinguish premises and conclusions of the scheme. They do however list critical questions:

Are the believed circumstances true?

Assuming the circumstances, does the action have the stated consequences?

Assuming the circumstances and that the action has the stated consequences, will the action bring about the desired goal?

Does the goal realise the value stated?

Are there alternative ways of realising the same consequences?

Are there alternative ways of realising the same goal?

Are there alternative ways of promoting the same value?

Does doing the action have a side effect which demotes the value?

Does doing the action have a side effect which demotes some other value?

Does doing the action promote some other value?

Does doing the action preclude some other action which would promote some other value?

Are the circumstances as described possible?

Is the action possible?

Are the consequences as described possible?

Can the desired goal be realised?

Is the value indeed a legitimate value?

Here is our reconstruction of Atkinson and Bench-Capon’s scheme for practical reasoning:

Now, here is Walton’s scheme for instrumental practical reasoning, called “practical inference” in [25]:

I have a goal Carrying out this action

Therefore, I ought (practically speaking) to carry out this action

What other goals that I have that might conflict with What alternative actions to my bringing about Among bringing about What grounds are there for arguing that it is practically possible for me to bring about What consequences of my bringing about

Argument from precedent

The version of argument from precedent we have chosen is from [23]. The critical questions included here are new. They were not explicated in [23].

In case

Rule

There relevant differences between Rule

In our reconstruction of the argument from precedent, the second case,

Slippery slope arguments

Bringing up

What intervening propositions in the sequence linking up What other steps are required to fill in the sequence of events, to make it plausible? What are the weakest links in the sequence, where specific critical questions should be asked on whether one event will really lead to another?

Notice that our reconstruction of the slippery slope scheme splits the scheme into two, where the second scheme represents an inductive step in a recursive argument for proving that events which cause events with horrible costs also have, indirectly, horrible costs. The base case in such a recursive argument would be covered by facts or assumptions stating that some particular events have horrible costs.
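The recursive structure described here can be sketched as a fixed-point computation in Python. This is an illustration of the inductive step only, not the scheme itself: the function name, the pair encoding of the causal relation, and the event names are hypothetical.

```python
def events_with_horrible_costs(causes, horrible):
    """Close the base case under the inductive step.

    causes:   iterable of (a, b) pairs, read as "a causes b"
    horrible: events assumed outright to have horrible costs (base case)
    """
    result = set(horrible)
    changed = True
    while changed:
        changed = False
        for a, b in causes:
            # Inductive step: an event that causes a horribly costly event
            # is itself, indirectly, horribly costly.
            if b in result and a not in result:
                result.add(a)
                changed = True
    return result

chain = [("a0", "a1"), ("a1", "a2"), ("x", "y")]
costly = events_with_horrible_costs(chain, {"a2"})
```

Each pass of the loop corresponds to one application of the second scheme, so a sequence of causal links yields a chain of arguments, one per link.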

The reconstruction does not explicitly model the critical questions, using exceptions and assumptions. The first two critical questions are handled by the recursive (second) scheme, which chains together a sequence of events. The third critical question merely attacks the causality premise of the second scheme. Premise attacks are built into the model of structured argument and do not require critical questions to be expressed or represented.

Argument from sunk costs

The version of the scheme for argument from sunk costs below is from [25], except for the critical questions, which are new. No critical questions for the scheme were formulated in [25].

In the following scheme, let

There is a choice at At

Therefore, I should choose

Is there some hope of completion of the course of action?

Should the projected future losses of continuing this course of action outweigh the value of my commitment to continuing with it?

Are my prior commitments important enough to warrant continuing this course of action, even though continuing might not lead to success in the future?

Are cost benefit calculations applicable?

In the reconstruction, we have not modeled the first premise, which states there is a choice to be made between

The first critical question, about there being some hope to complete the course of action, has been modeled in the reconstruction as an assumption,

Argument from verbal classification

For all

What evidence is there that a definitely has property Is the verbal classification in the classification premise based merely on an assumption about word usage that is subject to doubt?

Argument from witness testimony

Witness Witness Witness

Is what the witness said internally consistent? Is what the witness said consistent with the known facts of the case (based on evidence apart from what the witness testified to)? Is what the witness said consistent with what other witnesses have (independently) testified to? Is there some kind of bias that can be attributed to the account given by the witness? How plausible is the statement

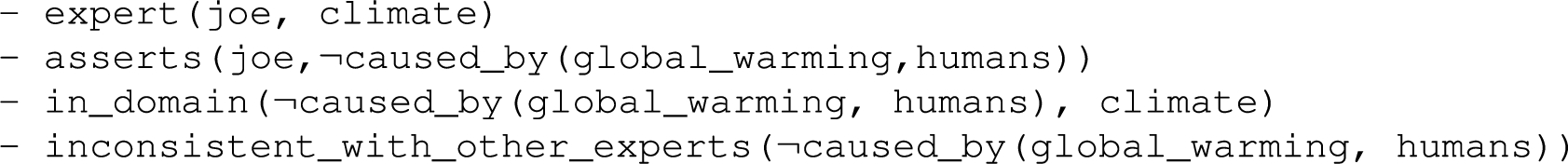

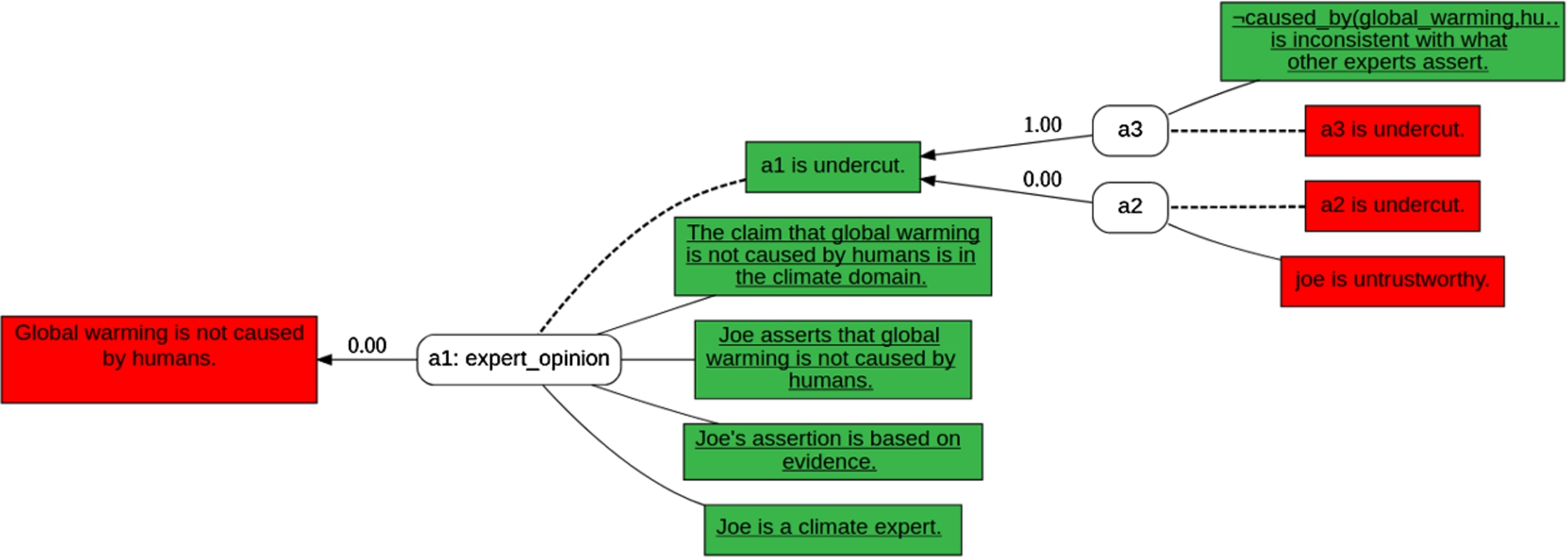

Figure 1 shows a simple example of how these schemes can be used by Carneades 4 to automatically generate, evaluate and visualize an argument graph, given the following assumptions:

Global warming example.

Our experiments with using Constraint Handling Rules (CHR) to represent argumentation schemes for the purpose of generating arguments have been encouraging.

We have successfully implemented twenty representative argumentation schemes [25], including their critical questions.

Using CHR as a foundation for implementing argumentation schemes provided us with an opportunity to extend the concept of an argumentation scheme in various ways, to make it possible to represent any CHR rule as an argumentation scheme. This method for representing and implementing argumentation schemes inherits all of the attractive features of CHR, including Turing completeness, the possibility of concurrent execution, support for stopping and restarting computation at any time, with intermediate results available for use, and support for inputting further information incrementally during dialogues and other argumentation processes.

Conversely, the synthesis of CHR and argumentation provided by Carneades provides additional benefits not provided by CHR alone. CHR has no concept of negation. Carneades issues can model negation or, more generally, a set of conflicting positions of issues. Moreover, CHR provides no built-in support for defeasible reasoning. We use CHR to generate pro and con arguments, which are then evaluated in a post-process, using a model of structured argument, to support defeasible reasoning by weighing and balancing arguments and resolving attack relations among arguments. Most importantly, our system produces arguments which can be used to explain and understand CHR inferences, for example by visualizing the arguments in argument maps.

While the method presented here for generating arguments using Constraint Handling Rules was developed for the latest version of the Carneades model of structured argument [12], it can be adapted for use in any model of argument in which arguments are constructed by instantiating argumentation schemes. We leave it for future research by others to adapt the method to other models of structured argument.


Acknowledgements

This work was partially funded by the Social Sciences and Humanities Research Council of Canada (SSHRC), in the Carneades Argumentation System project (Insight Grant 435-2012-0104), and by Microsoft, in the DUCK project.