Abstract

In many domains of public discourse such as arguments about public policy, there is an abundance of knowledge to store, query, and reason with. To use this knowledge, we must address two key general problems: first, the problem of the knowledge acquisition bottleneck between forms in which the knowledge is usually expressed, e.g., natural language, and forms which can be automatically processed; second, reasoning with the uncertainties and inconsistencies of the knowledge. Given such complexities, it is labour and knowledge intensive to conduct policy consultations, where participants contribute statements to the policy discourse. Yet, from such a consultation, we want to derive policy positions, where each position is a set of consistent statements, but where positions may be mutually inconsistent. To address these problems and support policy-making consultations, we consider recent automated techniques in natural language processing, instantiating arguments, and reasoning with the arguments in argumentation frameworks. We discuss application and “bridge” issues between these techniques, outlining a pipeline of technologies whereby: expressions in a controlled natural language are parsed and translated into a logic (a literals and rules knowledge base), from which we generate instantiated arguments and their relationships using a logic-based formalism (an argument knowledge base), which is then input to an implemented argumentation framework that calculates extensions of arguments (an argument extensions knowledge base), and finally, we extract consistent sets of expressions (policy positions). The paper reports progress towards reasoning with web-based, distributed, collaborative, incomplete, and inconsistent knowledge bases expressed in natural language.

Introduction

In many domains of public discourse, participants have the opportunity to present their views. (Footnote 1: A version of this paper was previously presented at the ECAI 2010 workshop on Computational Models of Natural Argument, 16 August 2010, Lisbon, Portugal.)

Standard, current tools which are used to gather knowledge of policy are face-to-face meetings and rounds of consultation/commenting on written position reports. Online forums are also used to broaden participation and make policy-making more efficient [31]. Broadly speaking, the meetings and online forums serve to record the uncertain and potentially inconsistent points of view of the participants. Analysts subsequently try to identify and reason with the inconsistency in order to formulate and clarify the policy, a task which is done “manually”. Moreover, the tools do not help participants submit their contributions in a way that facilitates further processing.

None of the current tools for policy-making address the knowledge acquisition bottleneck, nor knowledge representation and reasoning (KR) as understood in the subfield of artificial intelligence. The knowledge acquisition bottleneck is the problem of translating between the form in which participants know or express their knowledge and the formal representation of knowledge which a machine can process; the bottleneck has limited the advance of artificial intelligence technologies [24]. KR is understood as a structured, logical representation of the knowledge for automated reasoning, querying, and processing. Currently, knowledge engineers (or legislative assistants) manually structure and formalise unstructured linguistic information into a knowledge base (or legislative act) which supports information extraction, identification of relationships among statements, inference, query, development of ontologies, redundancy, identification of contradictions, reasoning with uncertain and inconsistent knowledge, or visualisations. Along the way, knowledge engineers might interpret sentences relative to context as well as fill in the semantic representation with pragmatic background knowledge. Yet, in doing so, the knowledge engineers rely on their own fine-grained, unstructured, implicit linguistic knowledge, which itself is problematic.

In order to support policy-making, we address the knowledge acquisition bottleneck.

However, we decompose the overall problem in order to focus on the specific aspects –

processing of statements with automated techniques in natural language processing,

instantiated arguments, and reasoning with the inconsistent arguments in argumentation

frameworks. We discuss application and The term policy position is intended to be related to the

While each of these components is developed independently, little consideration has been

focused on

Many questions arise. Where we rely on input from public participants without training in building well-formed rules, ill-formed arguments could be entered. What prompts can be introduced to make KB construction systematic and meaningful? What is the appropriate semantic formalism and granularity for natural language (Propositional, First-order, Modal)? What limitations are imposed by using a controlled language? How can context and dynamics be addressed? In the face of these questions, our approach is pragmatic and incremental, using available technologies while acknowledging limitations. For example, we do not address the problem of ill-formed arguments; First-order logic is known to have a range of limitations; controlled languages are inherently less expressive than ‘full’ natural languages; and we consider only

In the paper, we discuss each of the processing modules in turn, including considerations

and related work. In Section 2, we provide a sample

policy-making discussion, a graphical representation of the relationships among the

statements, and our translation methodology using the ACE tool. In this section, we develop

a literals and rules knowledge base (LRKB), which represents the domain knowledge. In

Section 3, we take LRKB and instantiate arguments for and

against statements, forming an argument knowledge base (AKB). In Section 4, we take the arguments and relations defined in AKB as input to an

argumentation framework

In this section, we discuss our source material and working example, the methodology of using the ACE tool to parse and semantically translate the sentences, and considerations which arise in developing a set of sentences for input to an argumentation system.

Sources and examples

[61] present a policy-making discussion adapted

and abstracted from a BBC See

The comments as they are present a knowledge engineer with a wealth of unstructured textual data since the discussion list is unthreaded and has very few substantive constraints on what participants can contribute or how. Among the many issues such a discussion raises for the analyst, we see the following. Participants may use any vocabulary or syntactic structure they wish (so long as it does not lead to the rejection of their comment); they may use novel words or expressions, metaphors, misspellings, and ungrammatical sentences. There is no check that the meaning as interpreted is the meaning as intended. Implicit or pragmatic information in the comments must be inferred by the reader. There are no overt indications of inter-comment relationships (unless the commenter explicitly refers to one or more previous comments). The comments can represent uncertain or inconsistent information. The comments can be vague and ambiguous. The interpretation of comments is left to the analyst to infer.

Beyond these empirical observations, no current computational linguistic or text-mining systems accurately parse the large majority of sentences, yield an acceptable correlated semantic interpretation, and can be used to support the acquisition of the knowledge for argument representation. Text-mining can extract some useful information (e.g., sentiment analysis [33], some aspects of rules [56,57], and contradictions [47]), but is still limited, error prone, and does not capture the complex meaning of each comment, much less the complex web of meanings comprised of the semantic relationships among the comments. Large corpora can be queried for extraction of argument elements, though this is not parsing and semantic representation [39,58,59].

There are parsers with semantic interpretation that work on large scale [10], but require detailed examination and refinement

to produce appropriate output [55]. Other

parsers with semantic interpretation work on a fixed range of corpus-specific

constructions [38,46]. There is work on textual entailment [18], some aimed at argumentation at a coarse-grained level [13,14];

however, this is insufficient to represent the internal structure of arguments and

reconstruct relevant relationships. Uncertainties and inconsistencies (other than explicit

contradictions) are difficult to identify in current approaches. It remains a very

significant, manual task to take a list of comments from a blog and transform them into a

knowledge base that is suitable for a KR task. There has been intensive recent interest in

and progress on

Given the current state of the art in argument mining, an alternative approach to input of sentences and rules is to use a controlled language, e.g., ACE as used here (also see [52,60]). This allows us to input homogenised, structured information with respect to the lexicon and syntactic forms, allowing inferences and contrast identification. We can take controlled expressions as an intermediate form between source unstructured text and what is needed for formal argument evaluation; expressions mined from unstructured text might be mapped to the intermediate form so as to facilitate argument evaluation. Moreover, the focus of this paper is on the flow-through issues rather than NLP or sentence input

[61] scope the issues, which is a common practice in semantic studies in Linguistics. By scoping the issues, the data is filtered, normalised, and reduced in scale so as to define a problem space that is on the one hand close to or derived from the source data and is on the other hand amenable to yet challenging for the theoretical and implemented techniques. It allows us to address and solve particular problems, abstract issues, and make progress which otherwise is prohibited by an ill-defined scope. While the resulting example is relatively far from the source material, it is still a useful starting point. By addressing relatively artificial examples, we can highlight issues, then extend the approach incrementally to solve additional and more natural problems and examples.

With these programmatic points in mind, we adopt and adapt the approach and sentences in

[61], where 16 sentences are automatically

translated into First-order logic (with some modal operators), manually indicating where

there are rules and contradictions: users input sentences using the controlled language

editor, then indicate

In the current paper, we have 19 example sentences; in Section 2.3, we justify our revisions and discuss the sentences. For the purposes of

this paper, we only work with Propositional Logic because the theory of argument formation

for expressions in First-order logic is more complex than we need here and because the

implemented system that we use for generating arguments and relations only works for

Propositional Logic. A representation in First-order Logic is crucial in order to

One general issue that arises about the pipeline

concerns the representations which suit each application. This is discussed further

below.

p1: Every household should pay some tax for the household’s garbage.

p2: No household should pay some tax for the household’s garbage.

p3: Every household which pays some tax for the household’s garbage increases an amount of the household’s garbage which the household recycles.

p4: If a household increases an amount of the household’s garbage which the household recycles then the household benefits the household’s society.

p5: If a household pays a tax for the household’s garbage then the tax is unfair to the household.

p6: Every household should pay an equal portion of the sum of the tax for the household’s garbage.

p7: No household which receives a benefit which is paid by a council recycles the household’s garbage.

p8: Every household which does not receive a benefit which is paid by a council supports a household which receives a benefit which is paid by a council.

p9: Tom says that every household which recycles the household’s garbage reduces a need of a new dump which is for the garbage.

p10: Every household which reduces a need of a new dump benefits the household’s society.

p11: Tom is not an objective expert about recycling.

p12: Tom owns a company that recycles some garbage.

p13: Every person who owns a company that recycles some garbage earns some money from the garbage which is recycled.

p14: Every supermarket creates some garbage.

p15: Every supermarket should pay a tax for the garbage that the supermarket creates.

p16: Every tax which is for some garbage which the supermarket creates is passed by the supermarket onto a household.

p17: No supermarket should pay a tax for the garbage that the supermarket creates.

p18: Tom is an objective expert about recycling.

p19: If an objective expert says every household which recycles the household’s garbage reduces a need of a new dump which is for the garbage, then every household which recycles the household’s garbage reduces a need of a new dump which is for the garbage.

As in [61], where a rule has more than one

premise, we take it that the premises conjunctively hold for the claim to hold. The rules

are

r1:

r2:

r3: p10 → p3.

r4:

r5:

r6:

r7: p14 → p15.

r8: p16 → p17.

In addition to rules, we have what we express here as several constraints (or meaning

postulates) on models which express contradiction between propositions. For example, p18

c1: ¬p1 ∨ ¬p2.

c2: ¬p11 ∨ ¬p18.

c3: ¬p15 ∨ ¬p17.
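As a minimal sketch of how such constraints might be checked mechanically, the following Python treats each constraint of the form ¬x ∨ ¬y as a pair of propositions that may not both be asserted. The names `CONSTRAINTS` and `violated` are illustrative assumptions and are not part of ACE, JArgue, or ASPARTIX.

```python
# Constraints c1-c3 from the text: each pair may not be asserted together.
CONSTRAINTS = {
    "c1": ("p1", "p2"),
    "c2": ("p11", "p18"),
    "c3": ("p15", "p17"),
}

def violated(position):
    """Return the names of the constraints broken by a set of asserted propositions."""
    asserted = set(position)
    return sorted(
        name for name, (x, y) in CONSTRAINTS.items()
        if x in asserted and y in asserted
    )

print(violated({"p1", "p14", "p15"}))        # no pair is asserted together
print(violated({"p1", "p2", "p11", "p18"}))  # breaks c1 and c2
```

A set of statements that violates no constraint is a candidate for a single policy position; a set that violates one must be split across positions.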

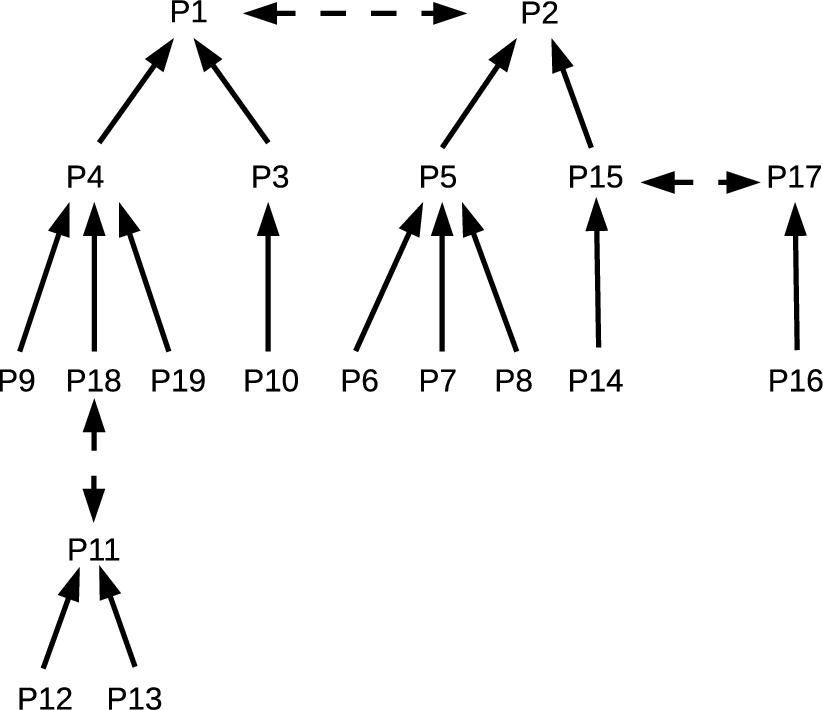

We can represent the structure of the literals and rules into a graphic where

Fig. 1. Relationships between statements: premises, claims, contradictions.

In the next section, we briefly outline issues bearing on the syntactic parsing and

semantic interpretation provided by

[61] provide a detailed discussion of the application of ACE to our candidate sentences. In the context of this paper, where the emphasis is on providing the relationship between the input statements, rules, and an argumentation system which works only on propositions, the importance of using ACE is reduced. Yet, it is relevant here to give a brief overview in that ACE controls the language of the policy discussion and also provides expressions in First-order Logic (with some modal expressions) that can be used in future implemented argument instantiation systems [5,21].

To facilitate the processing of sentences, we use a well-developed controlled natural language system – ACE (for an overview of the properties and prospects of controlled natural languages, see [52]). A controlled language has a specified vocabulary and a restricted range of grammatical constructions which are a subset of a natural language (e.g., English). Sentences written and read in the controlled language appear as normal sentences, but can be automatically translated into a formal representation.

ACE supports a large lexicon, a range of grammatical constructions, discourse anaphora, and correlated semantic interpretations: negation on nouns or verbs, conjunction, disjunction, conditionals, quantifiers, adjectives, relative clauses, modals (e.g.,

As discussed in [61], the syntactic form must

be ACE translates sentences such as

In developing the sentences for input to a generator of instantiated arguments, four issues arose: material development/user groups, making contradictoriness explicit, the introduction of implicit premises, and the construction of well-formed rules in the LRKB. We discuss these in turn.

As mentioned above, input to ACE must be crafted, which highlights the problem of engaging members of the public as knowledge engineers and using a natural language since this gives rise to a range of expectations which must be addressed. The user must accommodate to the restrictions of the language, which limits the spectrum of users of the system; however, research indicates the language is not unduly difficult to learn or use [29], so university students (among others) could learn to use it. On the other hand, the system allows users to communicate in a form that they are most familiar with, and in this aspect the approach improves over other policy-making tools. As a controlled language, we work with a (large) fragment of natural language that can be incrementally developed with further linguistic capabilities. Finally, it is our assumption that the system is used in “high value” contexts by participants who are willing and able to adapt to some of the constraints (topic, expressivity, explicit marking of statement relations) in order to gain the advantages of collaboratively building a clear, explicit knowledge base which represents a range of diverse, and possibly conflicting, statements. Related issues arise with wide-scale parsers [55].

To generate instances of arguments and their relations, we require explicit indicators of

contradictoriness. Consider statements such as

There are two approaches to addressing such contradictions. One strategy is for the user

to introduce an explicit

To overcome this, an alternative strategy is to explicitly express the rules that

represent the implicit knowledge which gives rise to the inconsistency. Such rules may be

encoded in auxiliary KBs, e.g., ontologies or lexical semantic structures. For instance,

A final issue relates to the construction of well-formed rules. Given a set of sentences, participants can, in principle, introduce any combination of literals to produce a rule. Yet, some of these appear to be well-formed and meaningful, while others do not. For example, the following argument is formally and syntactically well-formed as well as semantically coherent:

On the construction of well-formed rules, we have some of the following general questions. What are the conditions on semantic well-formedness for the rules provided to the knowledge base? What makes a set of statements semantically cohere as premises of a claim? What makes a set of statements semantically imply a conclusion? While the mathematical or syntactical conceptions of a rule may be clear, and logicians or knowledge engineers may implicitly know how to write well-formed rules, the principles which underlie the linguistic semantic instantiations are not clear. If we allow non-logicians or knowledge engineers to build rules, what guidance can be provided to build meaningful rules? That is, how can we make explicit what logicians and knowledge engineers implicitly know?

These topics touch directly on complex issues relating to the interface of human

reasoning, language, and formal reasoning [20,44]; indeed, in addition to the

issues that arise about controlled languages, it is problematic to presume that

participants can, without training, contribute to a normative

While all of these issues are substantial, they are partially addressed in our approach

to collaborative, incremental, distributive knowledge base construction. For example,

suppose there are two sentences in the knowledge base which are mutually incompatible

(e.g., our

Finally, we should touch on one representational issue. At different stages of the pipeline, different forms of representation are useful to support distinct reasoning processes. There is a balance to be struck at every step between abstraction and expressiveness. For instance, while we have used lower case letters and numbers, we could have used the sentence strings themselves as propositional variables. While we have left resolution of these issues to future work, it is not particularly problematic so long as we are clear and consistent about the conversions at each stage.

Instantiating arguments and relationships from a knowledge base

In this section, we discuss instantiating arguments and their relationships from a

We work with a

[6,7] provide numerous examples of knowledge bases and instantiated arguments primarily in Propositional Logic. The novelty of our example is twofold: our example is an abstraction from input sentences which have been automatically translated to First-order Logic; we have related the arguments and relations to an argumentation framework, where, as we see, additional issues arise.

Instantiating arguments and their relationships is necessary for calculating extensions of arguments, which are sets of mutually consistent arguments. Abstract argumentation frameworks (

In a logic-based approach, statements are expressed as atoms (lower case roman letters),

while formulae (greek letters) are constructed using the logical connectives of conjunction,

disjunction, negation, and implication. The classical consequence relation is denoted by ⊢.

Given a knowledge base Δ comprised of formulae and a formula

The knowledge base Δ may be inconsistent, which here arises where Δ contains contradictory

propositions (and not necessarily just constraints); this bears on issues discussed in

Section 2.3. With contradictory propositions, we can

construct arguments in relations, where the propositional claim of an argument is

contradictory to the propositional claim of another argument or is contradictory to some

proposition in the support of another argument. These are There is an additional notion of

Given a large and complex LRKB, arguments will have structural relationships such as

subsumption of supports, where one support is a subset of another support, and implication

between claims, where one claim entails another. Moreover, there may be more than one

argument which undercuts or rebuts another argument. [6,7] define and discuss a range of these

relationships among arguments; however, additional definitions are not directly relevant to

our key points in this paper. For our purposes, given an LRKB, we can generate not only the

arguments, but also the counterarguments, the counterarguments to these arguments

(counter-counterarguments), and so on recursively; such a structure is an

Requests for JArgue should be addressed to Tony

Hunter e-mail:

From Section 2.1, we have the propositions p1–p19 and rules r1–r8. Several preliminary comments are needed before presenting the input LRKB. First, since incompatible literals are a significant issue, we did not presume to represent statements which were incompatible as logical contradictions, e.g., p1 and p2, p11 and p18, and p15 and p17, even though ACE translates these pairs as contradictory. In other words, we presumed the problematic examples of contradiction rather than the unproblematic.

p1: Every household should pay some tax for the household’s garbage.

p2: No household should pay some tax for the household’s garbage.

p11: Tom is not an objective expert about recycling.

p18: Tom is an objective expert about recycling.

p15: Every supermarket should pay a tax for the garbage that the supermarket creates.

p17: No supermarket should pay a tax for the garbage that the supermarket creates.

However, to determine attack relations, the logic-based approach requires the explicit expression of contradiction as in classical logic. We therefore make the following substitutions: p2 ≡ ¬p1, p11 ≡ ¬p18, and p17 ≡ ¬p15. This is an issue relevant to the illustration, not to the functionality of either the logic-based approach or JArgue since expressions of contradiction can be added to the knowledge base as it is built. However, the choice here is to represent such incompatibilities as logical contradiction rather than as constraints of the form ¬[p1 ∧ p2] (or in conjunctive normal form for JArgue !p1|!p2). We explore this choice in future research.
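The substitution step can be sketched in a few lines of Python. Rules are written as a pair of premises and conclusion, and negation uses the `!` prefix of the JArgue notation quoted in the text; the function name `substitute` and the tuple encoding are assumptions made for illustration only, and the sketch handles only unnegated occurrences of p2, p11, and p17.

```python
# Substitutions stated in the text: p2 = !p1, p11 = !p18, p17 = !p15.
SUBSTITUTIONS = {"p2": "!p1", "p11": "!p18", "p17": "!p15"}

def substitute(rule):
    """Rewrite a rule (premises, conclusion) using the equivalences above."""
    premises, conclusion = rule

    def rewrite(literal):
        return SUBSTITUTIONS.get(literal, literal)

    return [rewrite(p) for p in premises], rewrite(conclusion)

r7 = (["p14"], "p15")  # r7: p14 -> p15 (unchanged by substitution)
r8 = (["p16"], "p17")  # r8: p16 -> p17 (becomes p16 -> !p15)
print(substitute(r7))
print(substitute(r8))
```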

Given these equivalences, the related rules are adjusted by substituting in equivalent expressions:

r1:

r2:

r3: p10 → p3.

r4:

r5:

r6:

r7: p14 → p15.

r8: p16 → ¬p15.

Our LRKB for JArgue will be comprised of literals and (conjunctive normalised) rules.

However, there is a somewhat tangential issue of whether we need include

This may be a more substantive issue since what

arguments and relations are generated and used for input to the abstract framework may

depend on what is explicitly available in the knowledge base rather than the explicit

and inferred statements. We discuss this further below, and it may need further

examination.

With these points in mind, our input LRKB for JArgue is as follows: p6,

p7, p8, p9, p10, p12, p13, p14, p16, p18, p19, !p10|p3, !p9|!p18|!p19|p4,

!p12|!p13|!p18, !p3|!p4|p1, !p5|!p15|!p1, !p6|!p7|!p8|p5, !p14|p15,

!p16|!p15.
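To make the clause notation easier to read, the following sketch parses the LRKB string above and prints each clause as an implication. It assumes, as a reading convention only, that the last literal of each clause is the conclusion and the preceding negated literals are the premises; the function names are hypothetical and this is not JArgue's own parser.

```python
# The LRKB exactly as listed in the text ("!" is negation, "|" is disjunction).
LRKB = (
    "p6, p7, p8, p9, p10, p12, p13, p14, p16, p18, p19, "
    "!p10|p3, !p9|!p18|!p19|p4, !p12|!p13|!p18, !p3|!p4|p1, "
    "!p5|!p15|!p1, !p6|!p7|!p8|p5, !p14|p15, !p16|!p15"
)

def negate(literal):
    """Flip the '!' prefix of a literal."""
    return literal[1:] if literal.startswith("!") else "!" + literal

def parse_lrkb(text):
    """Split the KB into plain literals and (premises, conclusion) rules."""
    facts, rules = [], []
    for item in (part.strip() for part in text.split(",")):
        if "|" in item:
            *body, head = item.split("|")
            rules.append(([negate(lit) for lit in body], head))
        else:
            facts.append(item)
    return facts, rules

facts, rules = parse_lrkb(LRKB)
for premises, conclusion in rules:
    print(" & ".join(premises), "->", conclusion)
```

Under this convention the first clause is printed as `p10 -> p3` and the last as `p16 -> !p15`, which match r3 and the substituted r8 given earlier.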

To run JArgue, we add this LRKB, then query the KB to generate an argument. Querying the KB can only be done for one literal at a time. For our example, if we query for p1, we get the following argument, which we label a1 (Footnote 11: Labelling the arguments is not a functionality of logic-based argumentation or JArgue. We do so to provide elements for the AEKB.): a1:

JArgue then requests the user to select an argument with respect to which it should generate an argument tree. Selecting a1, JArgue generates an argument tree with two counterarguments a2 and a3 along with one counterargument a4 to a3; for the sake of discussion, we discuss each node and their relationship rather than present the graph. The first counterargument a2 is an undercutter of a1 since the claim of a2, !p18, is the negation of a literal which appears in the support of a1 (Footnote 12: Logic-based argumentation and JArgue represent the claim of a canonical undercutter with *; for the purposes of our illustration, we make it explicit.): a2: a3: a4:
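As a rough illustration of how a support can be assembled for a queried claim, the sketch below chains backwards through the rules of the LRKB. It is not JArgue's algorithm: it uses each rule only in its stated direction (no contraposition), does not test consistency or minimality, and assumes the rule set is acyclic. The supports it prints are therefore a reconstruction under those assumptions, not the authors' listings for a1 to a4.

```python
FACTS = {"p6", "p7", "p8", "p9", "p10", "p12", "p13", "p14", "p16", "p18", "p19"}
RULES = [  # (premises, conclusion), read off the clauses of the LRKB
    (("p10",), "p3"),
    (("p9", "p18", "p19"), "p4"),
    (("p12", "p13"), "!p18"),
    (("p3", "p4"), "p1"),
    (("p5", "p15"), "!p1"),
    (("p6", "p7", "p8"), "p5"),
    (("p14",), "p15"),
    (("p16",), "!p15"),
]

def support(claim):
    """Collect the facts and rules used to derive `claim`, or None if underivable."""
    if claim in FACTS:
        return {claim}
    for premises, conclusion in RULES:
        if conclusion != claim:
            continue
        used = {" & ".join(premises) + " -> " + conclusion}
        for premise in premises:
            sub = support(premise)
            if sub is None:
                break  # this rule cannot fire; try the next rule
            used |= sub
        else:
            return used
    return None

for claim in ("p1", "!p18", "!p1", "!p15"):
    print(claim, "<=", sorted(support(claim)))
```

On this reading, the support for p1 contains the literal p18, which is why an argument whose claim is !p18 counts as an undercutter of it.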

If we query the LRKB for !p1 and keep the argument labeling the same, we have a3. The

argument tree for a3 gives us a1 as a counterargument; this shows that where we have

contradictory

Querying just the argument trees for p1 and !p1, we can create an AKB, which is a

knowledge base of generated arguments in attack relations (indicated with

Arguments: a1, a2, a3,

a4. Attack Relations: att(a1,a3), att(a3,a1), att(a2,a1),

att(a4,a3).

Where we choose to argue for and against literals at the root of an argument tree such as about p1 and !p1, the arguments and relations among arguments appear relatively straightforward. It is important to note that we have used JArgue to generate argument trees with respect to a query on a proposition. However, we are using it as well to create AKBs for input to abstract argumentation frameworks, which is the reason to investigate the argument trees for a proposition

A relevant general question arises – how many arguments and attack relations among them

can be generated from the underlying LRKB? Given that we have classical logic,

a’: a”:

For our purposes, we only include those arguments and relations relative to a debate under investigation, here p1 and !p1; these are the arguments and relations as generated by JArgue. Other arguments and counterarguments such as for p3 are not clearly relevant. Indeed, perhaps all the other arguments and relations that arise via contraposition are problematic; indeed, it is questionable whether contraposition is a

The hedge, however, bears on how we use AKB as input to the abstract argumentation framework discussed in Section 4, where we find the AKB with four arguments and four attacks is not sufficient to get a correct result. This is so because while a2 attacks a1, and a4 attacks a3, nothing attacks a2 or a4; thus, these arguments attack and defeat a1 and a3. The extensions leave just a2 and a4, which is contrary to our desired goal, which is that a1 and a4 could hold together, while a3 and a2 could hold together. We need, then, to generate an attack on a2 where we query for the claim of a1, and to generate another attack on a4 when we query for the claim of a3.
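The effect described here can be reproduced with a small sketch that computes the grounded extension of the four-argument AKB by iterating the characteristic function of an abstract argumentation framework. This is a stand-in written for illustration, not ASPARTIX; for this particular graph the grounded extension and the single preferred extension are the same set.

```python
ARGS = {"a1", "a2", "a3", "a4"}
ATTACKS = {("a1", "a3"), ("a3", "a1"), ("a2", "a1"), ("a4", "a3")}

def grounded_extension(args, attacks):
    """Iterate the characteristic function from the empty set to its least fixed point."""
    attackers = {a: {x for (x, y) in attacks if y == a} for a in args}
    accepted = set()
    while True:
        # An argument is acceptable if each of its attackers is attacked
        # by some argument that is already accepted.
        defended = {
            a for a in args
            if all(any((d, b) in attacks for d in accepted) for b in attackers[a])
        }
        if defended == accepted:
            return accepted
        accepted = defended

print(sorted(grounded_extension(ARGS, ATTACKS)))
```

Since a2 and a4 are unattacked, they are accepted in the first round, and neither a1 nor a3 can then be defended.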

This would seem to require that where we query for a proposition and its negation, we

want to generate at least one additional argument to counterattack attackers, if we can.

In other words, we want to generate attacks (either rebuttals or undercutters) to a2 and

a4. For example, we could do so by finding a rebuttal which uses (in a sense to be

explained) the claim of the queried argument. In other words, we want to find one rebuttal

a5 where the claim of a5 is the negation of the claim of a2 and where the claim of a1,

namely p1, is used is found among the support for the claim of a5; similarly, we want to

find another rebuttal a6 where the claim of a6 is the negation of the claim of a4 and

where the claim of a3, namely ¬p2, is used. Such considerations yield the following

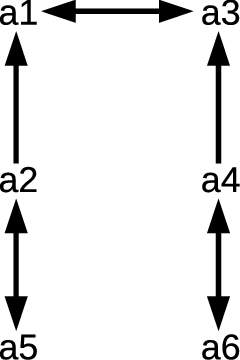

arguments: a5: a6: Arguments: a1, a2, a3, a4, a5, a6. Attack Relations:

att(a1,a3), att(a3,a1), att(a2,a1), att(a4,a3), att(a5,a2), att(a2,a5), att(a6,a4),

att(a4,a6).

Arguments and relationships.

The result is a graph as in Fig. 2. Note that arguments a1–a4 are clearly related to our earlier representation of the relationships among statements in Fig. 1, but that a5 and a6 are not.
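The six-argument AKB is small enough to check by brute force. The sketch below enumerates all subsets, keeps the admissible ones (conflict-free and self-defending), and returns those that are maximal under set inclusion, i.e., the preferred extensions. It is an illustrative stand-in and not the answer-set encoding that ASPARTIX uses; the function names are assumptions.

```python
from itertools import combinations

ARGS = ["a1", "a2", "a3", "a4", "a5", "a6"]
ATTACKS = {
    ("a1", "a3"), ("a3", "a1"), ("a2", "a1"), ("a4", "a3"),
    ("a5", "a2"), ("a2", "a5"), ("a6", "a4"), ("a4", "a6"),
}

def conflict_free(s):
    """No member of `s` attacks another member of `s`."""
    return not any((x, y) in ATTACKS for x in s for y in s)

def defends(s, a):
    """Every attacker of `a` is itself attacked by some member of `s`."""
    return all(
        any((d, b) in ATTACKS for d in s)
        for (b, target) in ATTACKS if target == a
    )

def admissible(s):
    return conflict_free(s) and all(defends(s, a) for a in s)

def preferred_extensions():
    adm = [
        frozenset(c)
        for n in range(len(ARGS) + 1)
        for c in combinations(ARGS, n)
        if admissible(frozenset(c))
    ]
    # Preferred extensions are the admissible sets that are maximal under inclusion.
    return [s for s in adm if not any(s < t for t in adm)]

for ext in preferred_extensions():
    print(sorted(ext))
```

With the mutual attacks between a5 and a2 and between a6 and a4 in place, a1 and a4 can appear together in one extension and a3 and a2 in another, while {a2, a4} remains an extension of its own.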

However, to find a5 and a6, we have some additional issues to consider, in particular the

argument trees of each of these arguments. We consider the issues by example. The argument

a2 has as claim ¬p18, thus the rebuttal a5 must have p18 as claim. In fact, we have an

argument by assertion for this claim:

While these are remarks about what is required to have an AKB suitable for reasoning with

Having generated an AKB of arguments and their relations, we input them to an abstract

argumentation framework

af s

For our purposes, we consider the

An argumentation framework

Some of the relevant auxiliary definitions are as follows, where

We say that

In the next section, we use the ASPARTIX system to generate argument extensions.

The ASPARTIX program computes extensions of argumentation frameworks [22];13 We thank the ASPARTIX team, and particularly

Sarah Gaggl, for making the ASPARTIX system available to us. See

See

To run ASPARTIX, we input to the program a text file for the AKB, containing the arguments and relations, as well as the desired sort of extension. We used our previously defined AKB, which has the arguments and attack relations:
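A text file of this kind could be produced as sketched below, assuming the `arg(...)` and `att(...,...)` fact notation that matches the `att(a1,a3)` relations quoted in this paper. The function name is hypothetical, and how the file and the choice of extension are passed to the solver is left to the ASPARTIX documentation.

```python
ARGS = ["a1", "a2", "a3", "a4", "a5", "a6"]
ATTACKS = [
    ("a1", "a3"), ("a3", "a1"), ("a2", "a1"), ("a4", "a3"),
    ("a5", "a2"), ("a2", "a5"), ("a6", "a4"), ("a4", "a6"),
]

def to_aspartix_facts(arguments, attacks):
    """Render an AKB as one logic-programming fact per line."""
    lines = [f"arg({a})." for a in arguments]
    lines += [f"att({x},{y})." for (x, y) in attacks]
    return "\n".join(lines) + "\n"

print(to_aspartix_facts(ARGS, ATTACKS), end="")
```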

ASPARTIX generates the following preferred extensions, where

Such extensions which are generated by ASPARTIX we refer to as the

This seems to be an attractive result. As we are only interested in extensions with p1

and ¬p1, we will not look at {in(a2), in(a4)}. Preferred extensions, which are maximal

sets of “consistent” arguments (and related propositions), correlate to the notion of

Our sets of arguments are an abstract representation of the contents of the arguments themselves, which are comprised of literals and rules. Thus, given the sets of arguments, we can extract the literals and rules that are consistent and which represent the correlated linguistic expressions of the policy positions. While we can reconstruct the content of arguments a1, a2, a3, and a4, the additional arguments for a5 and a6 are less clear. We will suppose that these are, as we suggested, generated as rebuttals.

Recall that our arguments are: a1: a2:

a3:

a4:

a5:

a6:

Let us informally define a policy position (PP) as the supports and claims of all the arguments in the extension (we could do without the claims, but in a sense, the objective is to make explicit what is otherwise implicit and inferred). Formally, we have two functions: F1 is a function on the extension which outputs the union of the results of applying F2 to every argument of the extension; F2 is a function on arguments which outputs the set comprised of the union of the support with the claim. We assume these functions. Furthermore, since we have sets, any redundancy is removed. PP1: PP2: PP3: PP4:
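The two functions can be sketched directly. In the Python below, an argument is a pair of a support set and a claim; the argument labels `x` and `y` and their contents are hypothetical placeholders chosen only to show that shared elements appear once in the resulting position.

```python
def f2(argument):
    """F2: the union of an argument's support with its claim."""
    support, claim = argument
    return set(support) | {claim}

def f1(extension, arguments):
    """F1: the union of F2 over every argument in the extension."""
    position = set()
    for label in extension:
        position |= f2(arguments[label])
    return position

# Hypothetical arguments: "s2" occurs in both supports.
arguments = {
    "x": ({"s1", "s2"}, "c1"),
    "y": ({"s2", "s3"}, "c2"),
}
print(sorted(f1({"x", "y"}, arguments)))
```

Because the result is a set, the shared element `s2` is listed once, which is the redundancy removal mentioned in the text.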

The propositions and rules here correlate directly with the propositions and rules introduced in Section 2.1, so we can substitute them here. We will put in the sentences and indicate the rules for PP2:

!p1: No household should pay some tax for the household’s garbage.

p5: If a household pays a tax for the household’s garbage then the tax is unfair to the household.

p6: Every household should pay an equal portion of the sum of the tax for the household’s garbage.

p7: No household which receives a benefit which is paid by a council recycles the household’s garbage.

p8: Every household which does not receive a benefit which is paid by a council supports a household which receives a benefit which is paid by a council.

p12: Tom owns a company that recycles some garbage.

p13: Every person who owns a company that recycles some garbage earns some money from the garbage which is recycled.

p14: Every supermarket creates some garbage.

p15: Every supermarket should pay a tax for the garbage that the supermarket creates.

!p18: Tom is not an objective expert about recycling.

r4:

r5:

r6:

r7: p14 → p15.

This seems an accurate representation of this policy position from the outset. PP2 and PP4 seem acceptable representations of policies.

Considerations

The policy positions of PP1 and PP3 seem to have some additional superfluous information.

For instance, PP1 contains the propositions p14 and p15, which are propositions in the

support of !p1, which itself is the rebuttal of the main point of PP1. Since other

portions of the support for !p1 do not hold, !p1 does not hold. Yet, it would seem, in an

intuitive argument, odd to have as part of one’s position statements in support of the

opposite point, even if these statements are not inconsistent with one’s position. We see,

then, that

Discussion

In this paper, we have shown how statements expressed in natural language can be represented as a formal knowledge base of literals and rules (LRKB), that the LRKB can be fed into a framework for generating arguments and relations to create a knowledge base of arguments (AKB), and then in turn, the AKB can be provided to an

The novelty of our approach is that formal, implemented approaches to the syntax and semantics of natural language have been used to develop the LRKB, that we have used implemented systems for generating AKB and AEKB, and that we have identified some important novel issues and problems for future research in the development of the argument pipeline. In these respects, our approach is distinct from similar work such as [6,7,34,36,45,53,54], where disputes expressed in natural language are translated manually into AKB and AEKB. While manual analysis can work, it does not address the problem of the knowledge acquisition bottleneck, nor does it make explicit, formal, or implemented the knowledge engineer’s knowledge in making the analysis. Our approach is also distinct from related work on the integration of various argumentation tools and technologies, such as the Argument Interchange Format, ArguBlogging, and OVA, which do not bear on the natural language issues, nor argument generation [41].

In addition to observations made in the