Abstract

The ‘Information Era’ is awash with data, so in any medical or scientific field, tools that help readers and researchers identify and rank ‘like’ journals are welcome. Historically, the journal impact factor (JIF), published by Thomson Reuters in Journal Citation Reports (JCR®), has been the ‘holy grail’ for ranking journals within pre-defined journal sets. As many readers will know, the JIF is defined as the number of citations in a given year to a journal's articles published in the previous 2 years, divided by the number of articles the journal published during those 2 years [1]. However, many journals are not given a JIF by Thomson Reuters and are thus at a disadvantage, as more and more authors submit papers only to journals that have a JIF from this source.
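Written out for a census year $y$, the definition above can be stated as follows. The symbols are illustrative labels of our own, not notation taken from JCR: $C_{y}(k)$ is the number of citations received in year $y$ by items the journal published in year $k$, and $N_{k}$ is the number of articles the journal published in year $k$.

```latex
\mathrm{JIF}_{y} = \frac{C_{y}(y-1) + C_{y}(y-2)}{N_{y-1} + N_{y-2}}
```

For the 2009 JIF, $y = 2009$, so the numerator counts citations made in 2009 to items from 2007 and 2008, and the denominator counts the articles published in those two years.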

We, and others, have argued that the JIF is an imperfect measure and that, despite common practice, the scientific quality of a paper cannot be judged simply on the basis of the impact factor of the journal in which it appears [2–5]. Moreover, for the purposes of comparison, the way journals are selected for a given set of ‘like’ journals is somewhat arbitrary: some journals appear within several sets, while others that should be included are, mysteriously, absent. For example, in the child and adolescent psychiatry field, two of the leading journals are listed in the ‘psychiatry’ journal set, but only one appears in the ‘pediatric’ set, and many influential journals dealing with child psychology and development are not listed by JCR at all and are thus invisible under this method of comparison.

Further to recent Editorials [6,7], here we demonstrate how to identify a core set of journals that frequently publish articles within a particular field, and then suggest a new method of ranking journals that is derived from several measures rather than from the JIF in isolation. The child and adolescent psychiatry field is used to illustrate our proposals.

Method

Journal sample

To identify journals that publish within the field of child and adolescent psychiatry/psychology/neurology, we first found journals related to two major journals in that specialism, the Journal of the American Academy of Child and Adolescent Psychiatry (JAACAP) and the Journal of Child Psychology and Psychiatry (JCPP). For this we used the Journal Citation Reports feature of the Web of Science (WoS), which lists the number of references to and from these journals in 2009. Because many influential journals dealing with psychological and developmental issues in children and adolescents are not listed in JCR, we also examined lists provided by SCImago (SCImago Journal and Country Rank (SJR), http://www.scimagojr.com), which uses the Scopus database to compare the impact and influence of journals [8,9] in much the same way that JCR uses the Web of Science database.

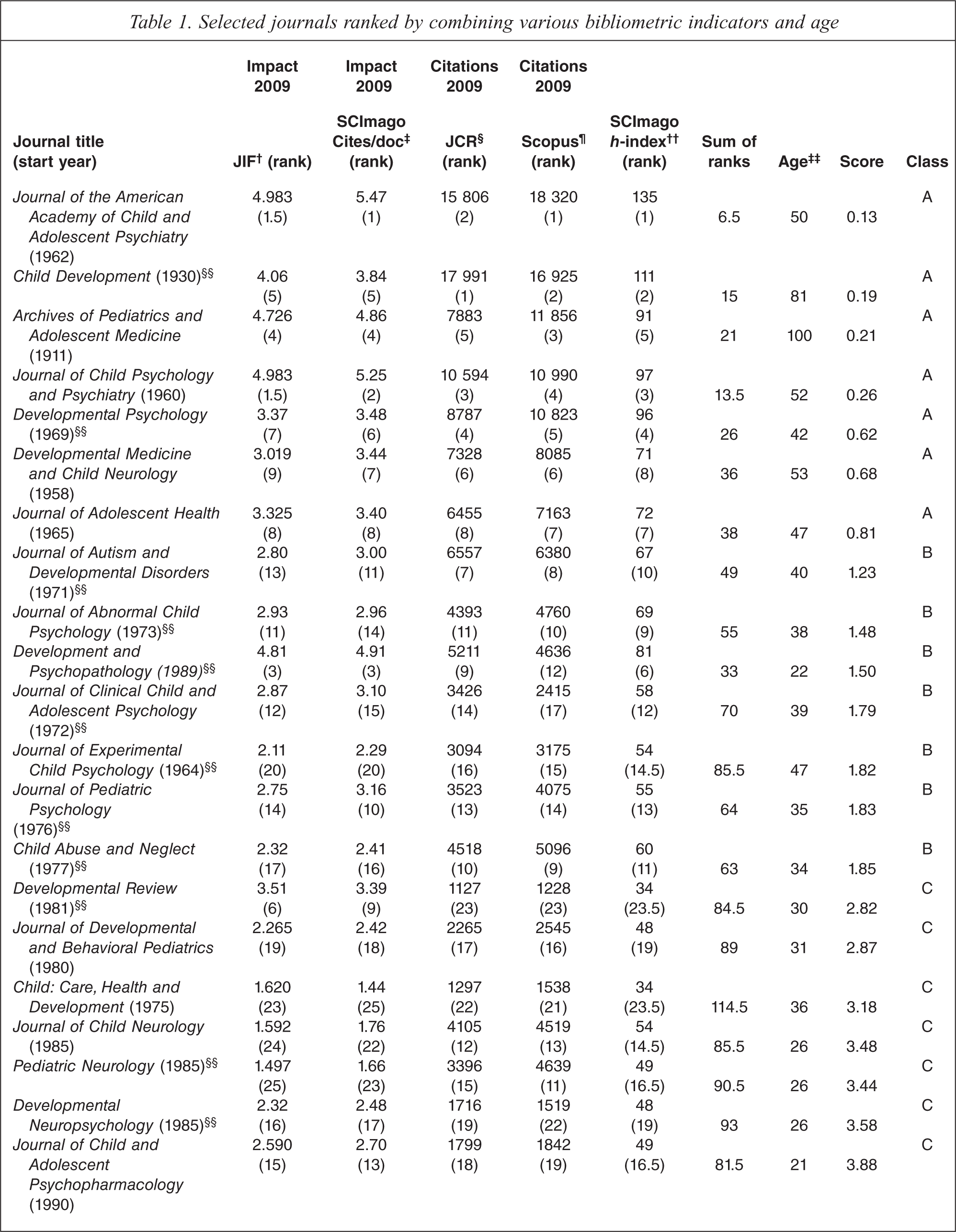

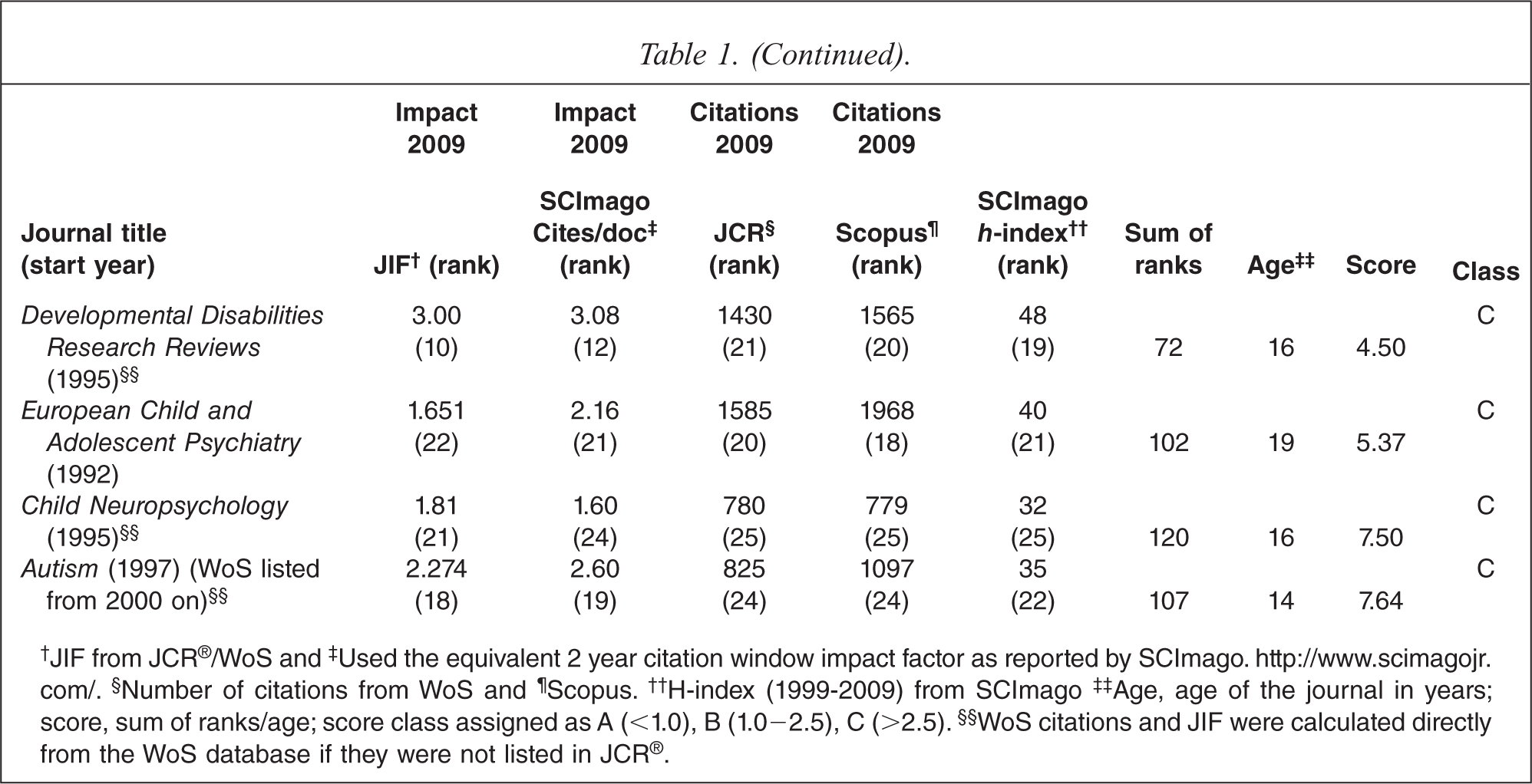

Table 1 lists the 25 journals chosen for analysis. Twelve of the 25 titles were selected from ‘relatedness’ tables in JCR; the other thirteen were not listed in JCR but were derived from two SCImago lists: (i) subject area medicine, category pediatrics, perinatology and child health; and (ii) subject area psychology, category developmental and educational psychology. General pediatric and psychiatry journals were not included in the analysis because they do not publish a large percentage of articles dealing with psychiatric issues in children. Other journals were excluded if they did not appear in both Scopus and WoS, had low impact factors (< 1.5), received fewer than 700 citations in 2009 or had short publication histories (less than 4 years). Ulrich's Periodicals Directory (https://ulrichsweb.serialssolutions.com) was used to find the first year of publication and any name changes of journals.
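The exclusion criteria above can be expressed as a simple filter. The following is a minimal sketch, not the procedure actually used to build Table 1: the `Candidate` record, its field names and the helper functions are hypothetical, and it assumes that a single impact factor and a single 2009 citation count are supplied for each journal, since the text does not say which database the thresholds were applied to.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """Hypothetical record for one candidate journal."""
    name: str
    in_wos: bool
    in_scopus: bool
    impact_factor: float
    citations_2009: int
    years_published: int


# Thresholds as stated in the text.
MIN_IMPACT_FACTOR = 1.5
MIN_CITATIONS_2009 = 700
MIN_YEARS_PUBLISHED = 4


def exclusion_reasons(c):
    """Return the list of stated criteria that the candidate fails."""
    reasons = []
    if not (c.in_wos and c.in_scopus):
        reasons.append("not indexed in both WoS and Scopus")
    if c.impact_factor < MIN_IMPACT_FACTOR:
        reasons.append("impact factor below 1.5")
    if c.citations_2009 < MIN_CITATIONS_2009:
        reasons.append("fewer than 700 citations in 2009")
    if c.years_published < MIN_YEARS_PUBLISHED:
        reasons.append("published for less than 4 years")
    return reasons


def is_eligible(c):
    """A journal is kept only if it fails none of the criteria."""
    return not exclusion_reasons(c)
```

Returning the reasons rather than a bare yes/no keeps the selection auditable, which matters when the aim is a transparent journal set.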

Table 1. Selected journals ranked by combining various bibliometric indicators and age

†JIF from JCR®/WoS. ‡Equivalent 2-year citation window impact factor as reported by SCImago (http://www.scimagojr.com/). §Number of citations from WoS. ¶Number of citations from Scopus. ††H-index (1999–2009) from SCImago. ‡‡Age, age of the journal in years; score, sum of ranks/age; score class assigned as A (<1.0), B (1.0–2.5), C (>2.5). §§WoS citations and JIF were calculated directly from the WoS database if they were not listed in JCR®.

Journal ranking

To rank the journals, we derived five bibliometric measures (Table 1). First, the 2009 impact factor was obtained from WoS (www.isiknowledge.com) and from Scopus (www.scopus.com); these are listed in columns 2 and 3 of Table 1. If a journal's JIF was not listed in JCR, it was calculated manually in WoS by selecting the publication name and limiting the search to 2007 and 2008. The number of citations received in 2009 from all sources, divided by the number of articles, reviews and other citable items (excluding editorials, corrections, abstracts, book reviews etc.), provides a proxy JIF. The corresponding indicator from SCImago uses Scopus to derive citations; we used the number of citations per document (2 years), as this is the Scopus equivalent of the JIF for 2009.

Second, the number of citations received in 2009 for all published articles listed in WoS and in Scopus was determined (columns 4 and 5). This indicator was included in the ranking formula to offset the potential bias in favour of journals that publish a low volume of articles and therefore acquire an ‘over-inflated’ JIF. If a journal was listed in JCR, the number of citations reported there for 2009 was used; otherwise, it was calculated manually from each database [8].

Third, the h-index listed for each journal by SCImago (column 6) was used as a bibliometric indicator of scientific output [10–12]. The main advantage of the h-index is that it combines quantity (number of publications) and impact (number of citations) and is relatively easy to calculate [11]. The h-index ignores the JIF, but this does not mean the two are unrelated; we previously showed a strong correlation between the JIF and the h-index for articles published in psychiatric journals [10].
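Two of the calculations described above, the proxy JIF and the h-index, are simple enough to sketch in a few lines. The function names and inputs below are illustrative assumptions; in the analysis itself the h-index values were taken from SCImago rather than recomputed, and the h-index function uses the standard definition (the largest h such that h articles each have at least h citations).

```python
def proxy_jif(citations_in_year, citable_items):
    """Citations received in the census year to items from the two
    preceding years, divided by the citable items from those years."""
    if citable_items <= 0:
        raise ValueError("citable_items must be positive")
    return citations_in_year / citable_items


def h_index(citation_counts):
    """Largest h such that h articles have at least h citations each."""
    ordered = sorted(citation_counts, reverse=True)
    h = 0
    for position, cites in enumerate(ordered, start=1):
        if cites >= position:
            h = position
        else:
            break
    return h
```

For instance, a hypothetical journal whose 120 citable items from 2007 and 2008 drew 300 citations in 2009 would have `proxy_jif(300, 120)`, which is 2.5.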

Data for each bibliometric measure were collected over one week (1–8 June 2011), and the journals were rank ordered from 1 to 25 on each measure. Ranks were then summed over the five indicators (column 7). The summed rank was subsequently divided by the journal's age (column 8) to factor in the long-standing role some journals have had in the dissemination of scientific knowledge. This latter concept has been used by others when ranking journals [13,14]. Table 1 lists the journals in rank order using this final score (column 9). The final scores were then assigned a classification (column 10) as follows: A level (< 1.0), B level (1.0 to 2.5) and C level (> 2.5).
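The scoring procedure above (rank on each indicator, sum the ranks, divide by age, assign a class) can be sketched as follows. This is an illustration under stated assumptions rather than the exact computation behind Table 1: the `Journal` record and all journal values are hypothetical, rank 1 is assumed to go to the highest value on each indicator, and tied journals are assumed to share the better rank, since the text does not say how ties were handled.

```python
from dataclasses import dataclass


@dataclass
class Journal:
    """Hypothetical record holding the five indicators and the age."""
    name: str
    jif_wos: float
    jif_scopus: float
    cites_wos: int
    cites_scopus: int
    h_index: int
    age_years: int


INDICATORS = ("jif_wos", "jif_scopus", "cites_wos", "cites_scopus", "h_index")


def rank_descending(values):
    """Rank 1 = largest value; ties share the better rank (assumption)."""
    ordered = sorted(values, reverse=True)
    return [ordered.index(v) + 1 for v in values]


def score_class(score):
    """Class boundaries as given in the text: A < 1.0, B 1.0-2.5, C > 2.5."""
    if score < 1.0:
        return "A"
    if score <= 2.5:
        return "B"
    return "C"


def rank_journals(journals):
    """Return (name, rank sum, score, class) rows, best score first."""
    rank_sums = [0] * len(journals)
    for field in INDICATORS:
        ranks = rank_descending([getattr(j, field) for j in journals])
        rank_sums = [s + r for s, r in zip(rank_sums, ranks)]
    rows = []
    for journal, rank_sum in zip(journals, rank_sums):
        score = rank_sum / journal.age_years
        rows.append((journal.name, rank_sum, score, score_class(score)))
    return sorted(rows, key=lambda row: row[2])


# Made-up values for three hypothetical journals, for illustration only.
example = [
    Journal("Journal X", 5.0, 4.8, 9000, 10000, 100, 48),
    Journal("Journal Y", 3.0, 3.1, 4000, 4500, 60, 8),
    Journal("Journal Z", 1.6, 1.7, 900, 1000, 30, 5),
]

for row in rank_journals(example):
    print(row)
```

Because the rank sum is divided by age, a lower score is better, and an older journal with the same rank sum as a younger one receives the better classification.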

The highest ranked of the 25 journals listed in Table 1 was the JAACAP. In total, eight journals were given a classification of A, eight were given a classification of B and the remaining nine were ranked C. Within this journal set, the common features of the A-ranked journals were high impact factors (3.0–5.47), high citation rates for 2009 (> 6000) and an h-index exceeding 71, even before journal age (> 40 years) was factored into the final score. The B-ranked journals in this set had JIFs ranging from 2.11 to 4.9, citations for 2009 of 2415 to 5211 and h-indexes of 54 to 69, with publication histories of more than 20 years (range 22 to 47 years). The C-ranked journals, in general, had lower impact factors and fewer citations for 2009, and most of them ranked 10th or lower on all five indicators. However, two journals with JIFs greater than 3.00 were ranked C rather than higher because they received fewer than 1600 citations in 2009, their h-indexes were 34 and 48 and, as quarterlies, they publish fewer articles.

Discussion

The method described here for identifying and comparing journals that focus on child and adolescent psychiatry may be used to identify and compare journals belonging to any general or specialist field within psychiatry, medicine or any other area of research. Differences exist between sub-disciplines and speciality areas within medicine, nursing and other fields, and these need to be considered when assessing journal impact between and within various journal sets [15,16]. There are other ranking methods [e.g. 17], but these are not as transparent as the one described here and elsewhere [14]. The method we have suggested, with a derived table, has practical utility. For example, this approach will be useful to researchers when considering to which journal their latest article might be submitted (and, if rejected by the first journal, the next preference etc.). Clinicians will be able to readily identify a more inclusive family of journals relevant to their particular field and interests. Inevitably, any method of ranking journals based on quantitative measures will be regarded as incomplete, simply because a journal's overall quality needs to be judged in the context of the whole journal, taking other factors into consideration, just as an individual article needs to be judged on its own merits. Therefore, we suggest that sourcing and then ranking journals using several measures from a variety of perspectives, as we have proposed, is preferable to, and perhaps more meaningful than, relying on a solitary measure from a single source. Just as Cantor and Joyce [18] recently asked psychiatry to integrate clinical practice into an evolutionary framework, we respectfully suggest that perhaps it is time for our journal appraisal measures to gradually evolve. The method outlined here is more inclusive, as it incorporates journals not listed in JCR, and is less prone to certain biases that give some journals an over-inflated impact factor.

Acknowledgements