Abstract

We introduce ClusterCirc, a new clustering method for circumplex instruments. ClusterCirc finds item clusters with optimal circumplex spacing of items and clusters. In our simulation study, ClusterCirc outperformed Ward and k-means cluster analysis in revealing circumplex clusters, especially for larger within-cluster distances, and it did so even when clusters were not equally spaced and in hierarchical models. In empirical data, ClusterCirc yielded subscales with good scale properties and greater circumplex fit than the original subscales and subscales based on Ward cluster analysis. We provide an R package for ClusterCirc (https://github.com/ancleo/ClusterCirc) and SPSS code (https://github.com/ancleo/ClusterCirc_SPSS) with three functions: ClusterCirc-Data performs ClusterCirc on empirical data, ClusterCirc-Simu performs a tailored simulation study to assess circumplex fit of the data, and ClusterCirc-Fix computes ClusterCirc indices for user-defined item clusters.

Introduction

Circumplex structure is used to describe many psychological traits, especially in the interpersonal domain, such as interpersonal behavior (Adams & Tracey, 2004; Alden et al., 1990; see Figure 1), interpersonal values (Locke, 2000), interpersonal strengths (Hatcher & Rogers, 2009), interpersonal problems (Horowitz et al., 1988), and social support (Trobst, 2000). It has also been applied to the Big Five and HEXACO model of personality (Barford et al., 2015; DeYoung et al., 2013) and used as a meta-model for personality (Strus et al., 2014). The circumplex model is thus a relevant conceptualization of human personality and behavior in both research and practical applications like diagnostics, coaching, and therapy.

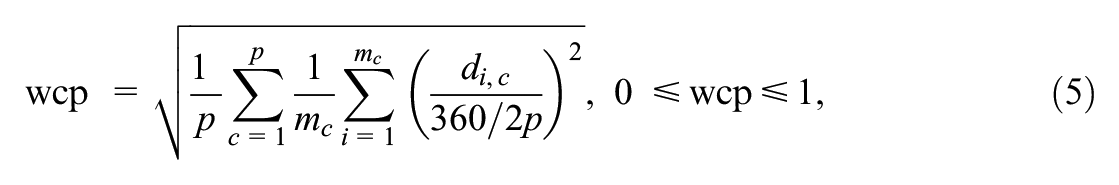

The interpersonal circumplex: structure of the Interpersonal Adjective Scales with eight subscales.

The Circumplex Model

When researchers wish to develop a psychometric instrument with circumplex structure, the intended circular arrangement imposes some overlap between the subscales and specific a priori constraints that must be met (Gurtman, 1993; Gurtman & Pincus, 2000; Guttman, 1957). Put simply, the variables of a circumplex instrument should be positioned on a circle. Traditionally, the circumplex is modeled by two orthogonal axes and several interstitial variables that span the circumference of the circle (Gurtman, 1995). Most circumplex models are concerned with the spacing between variables and their radius when they are projected onto a circular structure (Gurtman & Pincus, 2000). A perfect circumplex requires that variables are evenly distributed around the circle (equal spacing) and that they have the same radius within the circle (equal radius). For example, the interpersonal circumplex (Alden et al., 1990; Gurtman, 1993) is spanned by the two axes Dominance and Love and comprises eight facets (subscales) that are supposed to be positioned on the circumference of the circle (equal radius) and evenly distributed at 45° distances from neighboring facets (equal spacing; Figure 1).

Different circumplex models vary in the constraints they put on spacing between variables and/or their radius. The least restrictive spatial representation model (Shepard, 1974) requires that the structure of an instrument is projected onto a circle in Euclidean space without any further assumptions. The model can be evaluated by nonmetric multidimensional scaling/smallest space analysis (SSA) and thereby subjectively interpreted with respect to spacing and radius of the variables (Guttman & Levy, 1991; Schlesinger & Guttman, 1969). The circular order model (Tracey & Schneider, 1995) imposes ordinal constraints on spacing of the data by requiring that variables that are supposed to be closer on the circle should have larger correlations than the ones that are supposed to be further apart. The circular order model can be tested by specifically designed programs to test the order of correlations (RANDALL; Tracey, 1997). The most prominent model of circumplex structure is the circular stochastic process model for the circumplex (SPMC; Browne, 1992; Grassi et al., 2010; Nagy et al., 2019). The SPMC is a type of factor analytic model that allows for the separate specification of equal spacing (angular distribution of variables) and equal radius (communalities of the variables). Model fit of the SPMC can be evaluated by a circumplex-tailored implementation of structural equation modeling (CIRCUM; Browne, 1992; CircE in R; Grassi et al., 2010; Nagy et al., 2019).

Item Versus Subscale Level in Circumplex Instruments

A major concern in circumplex instruments with several subscales is whether circumplex constraints are investigated on the level of subscales or on the level of individual items. It should be noted that circumplexity of subscales can be reached even if items without circumplex structure are aggregated in a way that the subscales correspond to a circumplex structure. In contrast, a circumplex structure of items can only be reached if the items are worded in a way that they conceptually represent a circumplex. As the item-level circumplexity is theoretically more compelling, it is most convincing to find subscales or clusters for which the item circumplexity is at maximum. The subscale circumplexity then corresponds to the item circumplexity. However, if circumplexity is modeled only for items, equal spacing of items would imply that they are evenly distributed across the circle, making item-subscale assignment arbitrary or impossible because no clear-cut clusters could be found. Therefore, when circumplex subscales are intended, it would be most compelling to cluster items in a way that they are close to their angular cluster centroid and that their distances to neighboring clusters are optimal regarding circumplex spacing. This approach ensures that both the item and subscale levels are considered, enhancing the circumplexity of the instrument.

To our knowledge, there is no model or method which combines these two levels for circumplex instruments. Typically, circumplex constraints are defined for the subscales. For example, instruments that measure interpersonal behavior, like the Interpersonal Adjective Scales (IAS; Adams & Tracey, 2004), the Inventory of Interpersonal Strengths (IIS; Hatcher & Rogers, 2009), and the Inventory of Interpersonal Problems (IIP; Horowitz et al., 1988), define the circumplex structure on a subscale level, such that the eight subscales are supposed to be equally spaced across the circle (see Figure 1). Within this approach, the suitability of items for the respective subscale can be investigated by their angles or spacing. The radius of the items is less informative regarding the assignment of items to subscales because it only refers to the items’ communalities, which are typically allowed to vary between items and for each subscale. However, item communalities are related to item reliabilities and therefore relevant in item selection procedures, which is why they should not be completely ignored.

Current Practice and Related Methods

As is usual in the development of psychological instruments, circumplex scales undergo multiple loops of theorization and statistical analysis to find suitable items and sort them into meaningful subscales that adhere to the theoretical model. A typical, data-driven approach that is often applied in the development and evaluation of circumplex scales is performing principal component analysis (PCA) on items and projecting their loadings on two orthogonal (circumplex) axes with a circle drawn around them (Fabrigar et al., 1997; Hatcher & Rogers, 2009; Locke, 2000). Based on visual inspection and more or less theoretical consideration, the circle is divided into segments—usually of the same size—that should represent circumplex subscales (Adams & Tracey, 2004; Gurtman & Pincus, 2000; Horowitz et al., 1988, 2017; Locke, 2000; Trobst, 2000). Items are sorted into a subscale if they fall into the angular range of a subscale. For example, this procedure was performed in the development of the IIS (Hatcher & Rogers, 2009) and the Circumplex Scale of Interpersonal Values (Locke, 2000). The IIP was originally not designed as a circumplex instrument (Horowitz et al., 1988), but circumplex structure was superimposed later on the items, and new subscales were found by this approach of slicing PCA plots (Alden et al., 1990; Horowitz et al., 2017). Suitability of items for a subscale is often evaluated in a similar fashion at a later stage of scale development. For example, in the IAS (Wiggins et al., 1988) and in the IIP (Horowitz et al., 2017), the acceptable distance of an item from its subscale’s angle is limited to ±22.5°, corresponding to subscale segments of 45°. Thereby, a moderate degree of item heterogeneity is allowed, but distinction between subscales is supposed to be maintained. 
It should be noted that item heterogeneity in circumplex models does not need to be perfectly proportional to the item intercorrelations because it is based on the angular distances between the items. Items with identical angular positions in the circumplex and lower communalities may have lower intercorrelations than items with different angular positions and higher communalities.
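The last point can be checked with a small numerical sketch. It assumes that item intercorrelations are reproduced from loadings on two orthogonal components, so that each item behaves like a vector whose length is the square root of its communality and whose direction is its angle. The function name, communalities, and angles below are hypothetical and chosen only for illustration.

```python
import math

def reproduced_correlation(h_i, h_j, angle_i, angle_j):
    """Correlation implied by two orthogonal components for two items.

    h_i, h_j: item communalities (squared vector lengths).
    angle_i, angle_j: angular positions in degrees.
    The implied correlation is the dot product of the two item vectors.
    """
    delta = math.radians(angle_i - angle_j)
    return math.sqrt(h_i * h_j) * math.cos(delta)

# Two items at the same angle but with low communalities (hypothetical values).
same_angle_low = reproduced_correlation(0.25, 0.25, 30.0, 30.0)

# Two items 45 degrees apart but with higher communalities (hypothetical values).
apart_high = reproduced_correlation(0.64, 0.64, 30.0, 75.0)

print(round(same_angle_low, 3), round(apart_high, 3))
```

In this sketch the pair with identical angles has the lower implied correlation, because the correlation depends on communalities as well as on the angular distance.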

It is also possible to apply SSA (Schlesinger & Guttman, 1969) to find circumplex clusters and sort items into suitable subscales. SSA allows for a graphical inspection and topological sorting of items and can be used similarly as the PCA-plot slicing procedure described above (Fabrigar et al., 1997; Guttman & Levy, 1991). In the approach of slicing PCA plots and in SSA, there is a certain degree of arbitrariness in item-subscale assignment because it relies on visual inspection and subjective decisions regarding the segmentation of the circle. The question is: Where exactly should the segments be positioned, where should the lines be drawn? Theoretical aspects and item content are usually considered in assigning items to subscales, but it might be particularly challenging in the case of circumplex structure because of the intended conceptual overlap of neighboring subscales. For example, differentiating between Tradition and Conformity in human values (Schwartz & Boehnke, 2004) or Social Facilitating and Helping in vocational interests (Etzel et al., 2021) solely based on item wording and theoretical considerations can be difficult. To our knowledge, there is no circumplex-specific sorting method to cluster items as a basis for suitable subscales. Instead of being sorted into subscales, items are only selected or dismissed based on visual inspection of their angular positions, their content, and traditional item analysis. Thereby, items that could be useful if sorted into another subscale could be dismissed. Similarly, even if items fit within the angular range of an a priori assigned subscale, an alternative positioning of the subscales and thus different item-subscale assignment could lead to better overall circumplex properties of the instrument. Therefore, it would be helpful to use the angular information on the items to decide on overall sorting of items into subscales and thereby optimize circumplex spacing of the whole instrument.

Another approach to find item clusters is cluster analysis. Cluster analysis typically makes use of sorting algorithms based on the proximity of items (Bridges, 1966). This procedure could lead to ideal circumplex sorting if items are very homogeneous and clustered closely around their central angle with small within-cluster variance. However, some degree of item heterogeneity within clusters is often tolerated or even desired in circumplex models because it implies a broader conceptual scope. Moreover, when circumplex structure can be expected from theory, proximity of items as implied by conventional cluster analysis might not be the best criterion to optimize item-cluster assignment because it can reach an optimal clustering of items even without any circumplex structure of the clusters. For circumplex models, proximity of items to the cluster centroid is only of interest when the cluster centroids themselves are arranged according to the circumplex. If circumplexity can be expected, it could therefore be beneficial to have a sorting method that performs item-subscale-clustering by optimizing circumplex spacing of clusters and items simultaneously. Therefore, we introduce ClusterCirc, a new method that is specifically designed for circumplex instruments to help sort items into clusters based on the circumplex spacing criterion.

ClusterCirc: Intended Use and Practical Considerations

ClusterCirc primarily aims at finding item clusters with optimal circumplex spacing as a basis for circumplex subscales. Thus, if researchers have generated items for a circumplex instrument, but they are uncertain about the item clustering (i.e., which items should be sorted into which subscale, and where exactly should the subscales be positioned in the circle), ClusterCirc can help with this decision. If the number of clusters is not clear, researchers can try out different numbers of clusters and choose the one with the best results. If researchers already have preferences regarding item-subscale assignment, there are two options: First, if there is greater certainty for some items than for others, they can introduce weights in ClusterCirc. Items with larger weights (for which subscale-assignment might be clearer) will have a greater impact on overall clustering than items with smaller weights, which can help with (partial) item reallocation. Researchers can adjust the weights to their level of (un)certainty about the allocation of individual items. Second, it is possible to compute ClusterCirc indices for completely fixed, user-defined item clusters representing primary subscales and compare these results to the suggested ClusterCirc solution (with or without a priori weights) to decide whether items should be re-allocated or not. To this end, researchers can inspect the overall results as well as ClusterCirc indices for individual clusters and items. If ClusterCirc is used at a stage where item selection is relevant, ClusterCirc item indices can also be used to help decide which items should be retained and which ones could be dismissed or modified, both in the case of newly found or predefined, fixed subscales. However, this should be embedded in other procedures of psychometric evaluation, item selection, and development. 
Hence, ClusterCirc can be used to find new item clusters as a basis for circumplex subscales, evaluate existing subscales and re-allocate items from their intended subscales to enhance circumplexity, and select, dismiss, or improve items from a circumplex instrument. The procedure by which these goals should be attained will be elaborated in the following section.

Description of ClusterCirc

ClusterCirc is based on item angles. They can be obtained either by translating PCA loadings on two orthogonal components into angular positions as described above (Alden et al., 1990; Horowitz et al., 2017) or by performing Browne’s procedure CIRCUM (CircE in R, Grassi et al., 2010) on the items’ intercorrelation matrix. Many circumplex models comprise more than two factors. Often, a third general factor is considered alongside the two circumplex axes (Acton & Revelle, 2002; Hopwood & Good, 2019; Wilson et al., 2013). In this case, ClusterCirc should be performed on those two components for which circumplex structure can be expected from theoretical considerations. To counteract overfitting of ClusterCirc, the effect of sampling error on the PCA loadings should be minimized. It is therefore recommended to perform ClusterCirc only for loadings of components with eigenvalues that are greater than the mean eigenvalues computed from parallel analysis (Crawford et al., 2010). However, traditional PCA might not be the best fit to obtain circumplex item angles, especially when there are more than two components to be extracted (Fabrigar et al., 1997). In the case of hierarchical models with a third, general factor in addition to the two circumplex factors, CircE item angles can be advantageous because the procedure automatically takes into account a possible third factor (Browne, 1992; Grassi et al., 2010).
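As a minimal sketch of the first option, loadings on two orthogonal components can be translated into angular positions with the two-argument arctangent, and the communality in the two-dimensional model is the sum of the squared loadings. The function name, the example loadings, and the convention that the first component marks 0° with angles increasing counterclockwise are assumptions made for illustration; they are not taken from the ClusterCirc package.

```python
import math

def item_angle_and_communality(loading_1, loading_2):
    """Translate two component loadings into an angle (degrees) and a communality.

    Assumed convention: component 1 lies at 0 degrees, component 2 at 90 degrees.
    """
    angle = math.degrees(math.atan2(loading_2, loading_1)) % 360.0
    communality = loading_1 ** 2 + loading_2 ** 2
    return angle, communality

# Hypothetical loadings of three items on the two circumplex components.
loadings = {
    "item_a": (0.60, 0.00),
    "item_b": (0.00, 0.50),
    "item_c": (-0.40, -0.40),
}

angles = {name: item_angle_and_communality(*xy) for name, xy in loadings.items()}

for name, (angle, communality) in angles.items():
    print(name, round(angle, 1), round(communality, 2))
```

The communalities obtained this way correspond to the default item weights described for the spacing index.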

ClusterCirc finds an optimal sorting of items based on angular distances between items and clusters (spacing criterion). As in SSA and PCA-plot slicing, ClusterCirc uses topographical information to find suitable clusters within the circular structure. For example, an instrument with an a priori expected number of eight clusters would be divided into eight subsections with 45° angles (see Figure 1; Alden et al., 1990; Gurtman, 1993). However, item-cluster sorting is not based on subjective visual inspection as it is in SSA or PCA-plot slicing (Fabrigar et al., 1997). Instead, ClusterCirc objectifies the spatial information to find an ideal position of the clusters within the circle by iterating across different divisions of the circle.

Like many cluster analytic approaches, ClusterCirc maximizes proximity of items within clusters for an a priori defined number of clusters. However, it also optimizes spacing between clusters (on an item and cluster level) to approximate the equal spacing criterion of the circumplex model. Thereby, ClusterCirc allows for larger distances between items within each cluster, and thus greater item heterogeneity, than cluster analysis. ClusterCirc also differs from traditional cluster analysis in its input: unlike ClusterCirc, traditional cluster analysis does not use PCA or CircE results. Furthermore, hierarchical cluster analysis is based on sequential merging of clusters, whereas ClusterCirc is concerned with optimal partitioning of objects with a similarity structure of rank two. ClusterCirc thereby uses the angular distance of items on a circle to maximize the proximity of items within each cluster and optimize distances between items from different clusters.

A priori assumptions in ClusterCirc include the desired number of clusters, which can be varied manually to find a suitable solution, and the division of the circle into segments of the same size. Equal spacing of clusters and minimal within-cluster distances of items are not forced onto the data, but it is assumed that they are to be approximated by the ClusterCirc search. ClusterCirc allows users to manually vary the relative importance of equal spacing between clusters versus within-cluster proximity, and either of them can also be ignored completely. If, for example, researchers assume that equal spacing of clusters is violated in their data and do not wish to optimize between-cluster spacing, they can disregard it and optimize only within-cluster proximity in the ClusterCirc search.

The ClusterCirc Spacing Index

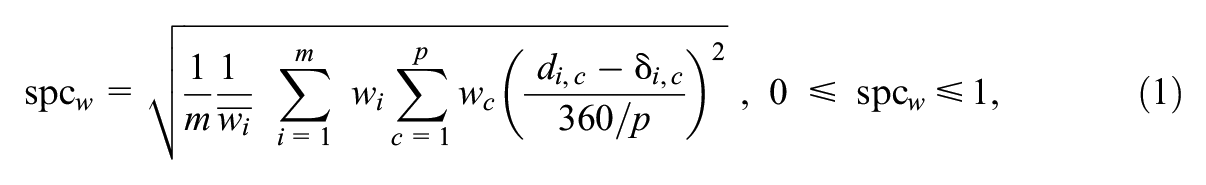

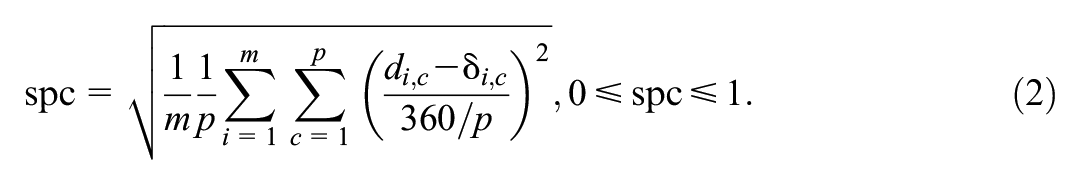

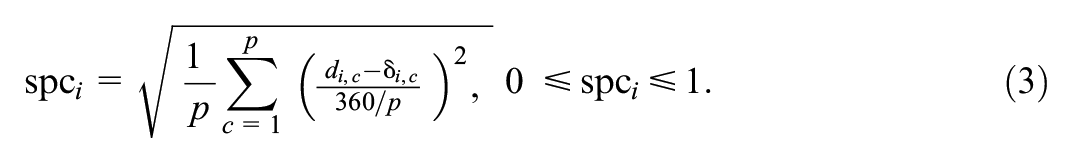

ClusterCirc aims at minimizing a spacing index that is defined as the deviation from perfect circumplex distances of items to clusters, weighted by communalities (default) or user-defined item weights. The relative importance of equal spacing between clusters versus proximity of items within their respective clusters can be adjusted and is included in the term. Thus, “spacing (weighted)” is an error term. For any given classification of items into clusters, the spacing index spc w is formalized as

where m is the number of items, p is the number of clusters, wi is the item weight (default = item communality), and

The parameter e specifies the relative importance of equal cluster spacing as opposed to within-cluster proximity (0 ≤ e ≤ 1). A value of e = 1 means that between-cluster distances are completely ignored (no equal spacing assumption) and only item homogeneity within clusters is maximized. In contrast, e = 0 means that only between-cluster distances are considered (maximum importance of equal spacing between clusters). A value of e = 0.5 means that between-cluster and within-cluster distances are considered to the same extent. The default value is e = 1/p, which means that distances between items and their own cluster centroid are considered to the same degree as distances between items and the other clusters (e.g., e = 1/8 for eight clusters, where each cluster is weighted by one-eighth in the analysis). If 0 < e < 1, the spacing index is 0 if clusters are equally spaced across the circumference of the circle and if the items within each cluster are perfectly aligned on their angular cluster centroid without any item heterogeneity. Otherwise, the spacing index gives the proportional deviation from this perfect circumplex spacing of items and clusters. Spacing indices of spc w > 0.90 indicate extreme departures from circumplexity that cannot be resolved by eliminating a few items. In such cases, a completely different theoretical approach or a substantial revision of the instrument would probably be most appropriate. For example, the spacing index would mathematically become 1 if all items were displaced such that they were perfectly aligned on the centroid of their neighboring cluster, with distances computed in the direction of displacement. To eliminate any effect of the number of clusters on the spacing index, the maximum angular within-cluster range (360°/p) given the number of clusters p is included in the term.

Furthermore, item weights are also included in spc w , such that some items can have a greater effect on overall clustering, for example, if their positioning and subscale-assignment is more important or more certain than it is for others. Default weights are communalities of the items (implicitly, their radius on the circle in a two-dimensional model). They can be used if there are no a-priori expectations regarding item-subscale assignment. This should counteract effects of measurement error and potential overfitting and ensure that items with small communalities affect the suggested item-cluster sorting to a lesser degree than items with larger communalities. Researchers can also adjust the degree of prior theorization versus empirical reality and combine both of them by choosing weights accordingly. For example, items for which cluster allocation is clearer could receive large weights, those for which cluster allocation is less clear could be weighted by their communalities, and items that should be classified but should not affect the overall solution may receive weights that are smaller than their communality. After summation across items, spc w is divided again by the mean weight across all items for scaling purposes, that is, to make results comparable across different analyses where item weights are not the same (communalities or user-defined).

Description of the ClusterCirc Procedure (ClusterCirc-Data)

ClusterCirc allows for different analyses. The typical first step in a circumplex analysis is to find item clusters with optimal circumplex spacing in a dataset. This is performed by ClusterCirc-Data, a brute force search algorithm for finding the angular position of an a priori given number of item clusters for which the spacing index is minimal.

To this end, the circle is divided into segments (clusters) of the same size with a maximum angular within-cluster range of 360°/p, for example, 45° for eight clusters or 120° for three clusters. The decision to divide the circle into segments of the same size is based on circumplex models of many psychological traits, where such same-sized segmentation is inherently part of the theory (Adams & Tracey, 2004; Gurtman & Pincus, 2000; Horowitz et al., 1988, 2017; Locke, 2000; Trobst, 2000).

ClusterCirc for p clusters begins with the cluster limits 0°, 360°/p, 2 × 360°/p, …, (p − 1) × 360°/p. For example, the procedure would begin with the cluster limits 0°, 120°, and 240° for three clusters. All items that fall into the maximum range of a cluster are automatically assigned to it. Then, ClusterCirc iterates across 360 × q/p divisions of clusters in 1/q° displacements of the cluster limits. Thereby, q is an integer to adjust precision of the solution. The default value in ClusterCirc is q = 10, which should ensure fast computation and simultaneously allow for enough precision of segment displacement (1/10°). For very fast devices, q > 10 can be feasible, although it will typically not substantially affect the solution.
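The enumeration of divisions described above can be sketched as follows. This is an illustration under stated assumptions, not the package's implementation: the function names are hypothetical, and because the spacing index is not reproduced here, the search uses a simple stand-in criterion (mean angular distance of items from the midpoint of their segment) in place of spc w.

```python
import math

def angular_distance(a, b):
    """Shortest distance between two angles on the circle, in degrees."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)

def cluster_divisions(item_angles, p, q=10):
    """Yield (shift, assignment) for every displacement of the cluster limits.

    The limits start at 0, 360/p, 2 * 360/p, ... and are moved in steps of
    1/q degrees until the first limit has travelled one full segment width.
    """
    width = 360.0 / p
    n_divisions = math.ceil(360 * q / p)
    for step in range(n_divisions):
        shift = step / q
        assignment = [
            # min(...) guards against floating-point rounding at the upper limit
            min(int(((angle - shift) % 360.0) // width), p - 1)
            for angle in item_angles
        ]
        yield shift, assignment

def stand_in_criterion(item_angles, assignment, shift, p):
    """Stand-in for the spacing index (NOT spc w): mean distance to segment midpoint."""
    width = 360.0 / p
    total = 0.0
    for angle, cluster in zip(item_angles, assignment):
        midpoint = (shift + (cluster + 0.5) * width) % 360.0
        total += angular_distance(angle, midpoint)
    return total / len(item_angles)

def brute_force_search(item_angles, p, q=10):
    """Keep the division with the smallest criterion value."""
    best = None
    for shift, assignment in cluster_divisions(item_angles, p, q):
        value = stand_in_criterion(item_angles, assignment, shift, p)
        if best is None or value < best[0]:
            best = (value, shift, assignment)
    return best

# Hypothetical item angles forming three groups; q = 1 shifts limits by 1 degree.
example_angles = [10.0, 20.0, 30.0, 130.0, 140.0, 150.0, 250.0, 260.0, 270.0]
best_value, best_shift, best_assignment = brute_force_search(example_angles, p=3, q=1)
print(best_shift, best_assignment)
```

With p = 3 and q = 10, the generator produces 360 × 10/3 = 1,200 divisions, matching the count given in the text. Any other criterion, including the weighted spacing index, could be substituted for the stand-in without changing the search loop.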

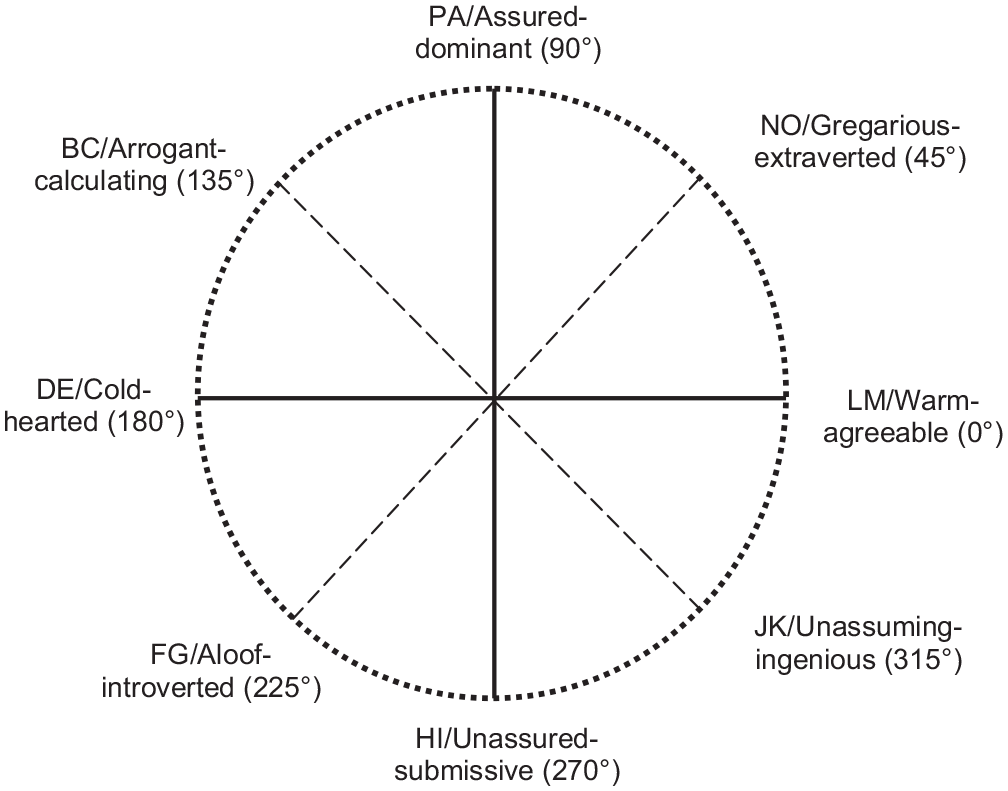

Figure 2 illustrates the ClusterCirc procedure for three clusters and q = 1. During the search, the stored spacing index spc w and the corresponding item clusters are replaced whenever a division yields a smaller spc w than all previous divisions. Finally, the item-cluster assignment with the smallest spacing index is retained. Because the assignment of items to clusters is based solely on the angular positions of the items, the number of items can differ between clusters.

Illustration of the ClusterCirc procedure for an example of three clusters.

Additional ClusterCirc Indices

In addition to spc w , ClusterCirc also computes other indices that could be of interest to the user because they allow for the evaluation of specific aspects of the circumplexity of items and clusters (see Supplement A in the online version of the journal). For example, spacing is also provided without the inclusion of item weights and relative importance of within- versus between-cluster distances to yield more easily interpretable information on the average deviation from perfect circumplexity. As such, unweighted spacing is given as proportional information, ranging from 0 (best) to 1 (worst possible circumplex spacing):

This unweighted spacing index can either be inspected as an overall index of circumplex spacing or for individual items, for example, if item spacing is supposed to be taken into account in item selection. Item spacing is given by

Like the weighted spacing index spc w, both spc and spc i would become 0 if items were perfectly aligned on their respective cluster centroid and 1 if, for example, all items were positioned on the cluster centroid of their neighboring cluster.

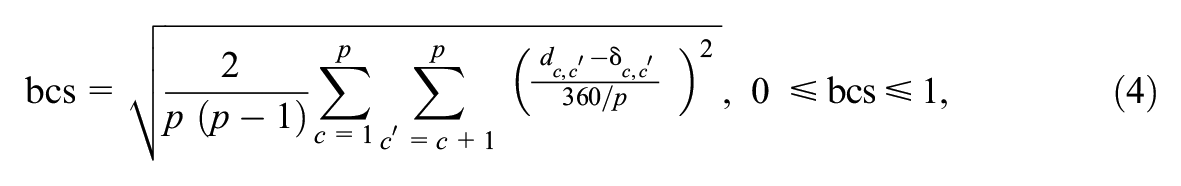

Spacing is also computed solely on the level of clusters (leaving the item-level apart) as between-cluster spacing. Between-cluster spacing is computed as the mean deviation from perfect (equal) spacing between clusters:

where only the relevant distances are included in the global term, that is, the upper triangle of the matrix of angular distances between clusters. Therefore, the overall term is divided by the number of relevant comparisons

Furthermore, ClusterCirc yields indices of item heterogeneity within clusters. To this end, within-cluster proximity, an index based on angular distances of items from their cluster centroids, is computed. Mean within-cluster proximity across all clusters is given by

where p is the number of clusters, mc is the number of items in cluster c, and di,c is the angular distance (in degrees) between item i and the central cluster position of its respective cluster c. The division by 360/2p ensures a proportional value between 0 and 1, and division by p and mc yields a mean value across clusters as an overall indicator of within-cluster proximity in the instrument. In contrast to spc and spc w, only distances between items and their own central cluster position are included in wcp.
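Read together, the verbal definitions above suggest the following form for mean within-cluster proximity. This is a reconstruction from the text rather than a reproduction of the published equation. It reads 360/2p as half the segment width, 360°/(2p), which matches the ±22.5° tolerance for eight clusters mentioned earlier.

```latex
\mathrm{wcp}
  = \frac{1}{p} \sum_{c=1}^{p} \frac{1}{m_c} \sum_{i=1}^{m_c}
    \frac{d_{i,c}}{360/(2p)}
```

For example, with p = 8 the denominator is 22.5°, so an item located 9° from its central cluster position would contribute 9/22.5 = 0.4 under this reading.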

For individual clusters, wcp c yields proximity of items within this particular cluster (see Supplement A in the online version of the journal). On the item level, ClusterCirc yields the item’s proportional distance to its cluster centroid di,c/(360/2p), which can also be embedded in item selection in addition to other item indices. All ClusterCirc indices are given as overall values across items and clusters (expressed in Equations 1, 2, 4, and 5) and for items and clusters individually (see Supplement A in the online version of the journal).

Model Fit of the ClusterCirc Solution (ClusterCirc-Simu)

As spc w is not expected to become exactly zero, it can be hard to decide whether a given value of spc w is acceptable. The combined effect of the number of items per cluster, the number of clusters, communalities of the items, the level of item heterogeneity within clusters, and the sample size on spc w can be complex. In addition to ClusterCirc-Data, which performs ClusterCirc on a given dataset, ClusterCirc therefore allows users to run a small simulation study, ClusterCirc-Simu. ClusterCirc-Simu defines perfect circumplex spacing of clusters (equal cluster spacing) in the population and uses specifications of the empirical dataset to obtain an ideal spcw-simu value for comparison with the empirical spcw-data. This comparison can be done with PCA or CircE angles as input and with any value for e, the relative importance of within-cluster proximity versus between-cluster spacing. ClusterCirc-Simu uses an ideal circumplex structure in the population as a reference model to test circumplexity in the empirical data. Using an ideal circumplex structure in the population is more reliable than, for example, the reverse approach with a random distribution of items, because even a random distribution can constitute a fairly decent circumplex. This would make it difficult for spcw-data to deviate significantly from values of such random distributions (i.e., be classified as circumplex and not random) even if circumplex structure is indeed suitable for the data.

ClusterCirc-Simu can be considered a parametric bootstrap method. It uses the sample size, number of items, number of clusters, observed mean communality, and empirical within-cluster range (indicating item heterogeneity) from the empirical dataset to define a population. For adequate comparison of ClusterCirc-Simu with the results of ClusterCirc-Data, the latter should be performed with communalities as item weights. In the population structure of the simulation, items are evenly distributed within their clusters, and cluster centroids have an equal distance of 360°/p to neighboring cluster centroids. The empirical spcw-data (weighted by communalities) is compared to the results from the simulation study with ideal circumplex specifications.

Circumplex fit of the data is assessed by a one-tailed significance test using the distribution of simulated spcw-simu values. Circumplex fit is regarded as acceptable if the empirical spcw-data is within the acceptance region of the spcw-simu distribution (translated from the standard normal distribution). In principle, the percentiles of the spcw-simu distribution may also be considered. For example, it is possible to test whether spcw-data exceeds the 95th percentile of the spcw-simu distribution, that is, whether it falls within the highest 5%. With a sufficiently large number of items and clusters, however, a normal distribution of spcw-simu is very likely because departures from the simulated optimal circumplex should only depend on random error. Users can choose between different Type-I error rates (α levels) to adjust the strictness of the significance test; α = 1% is the default value. The null hypothesis states that the empirical data fits the circumplex structure, which is likely to be the desired outcome. The alternative hypothesis states that the empirical data deviates from circumplex structure. Larger α levels result in greater power to detect deviations from perfect circumplexity: if researchers increase the α level (e.g., to 25%), they implicitly decrease the Type-II error rate (falsely assuming circumplexity) and thus make it easier to detect deviations from circumplexity. However, the assumption of perfect circumplexity as stated by the null hypothesis is very unlikely to be met in empirical data for several reasons. For example, unreliability of items and heterogeneity of samples might lead to deviations from perfect circumplexity even if the latent structure can be regarded as sufficiently circumplex. The test is therefore already rather strict, which is why the default α level is set to a rather small Type-I error rate of 1%, so that the test does not become overly strict.
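The decision rule can be sketched as a normal-approximation cutoff. This is a hedged illustration only: it assumes that the acceptance region is bounded by the mean of the simulated values plus the standard normal quantile for 1 − α times their standard deviation, which is one plausible reading of "translated from the standard normal distribution". The function name and the simulated values are hypothetical.

```python
from statistics import NormalDist, mean, stdev

def circumplex_fit_test(spc_data, spc_simu_values, alpha=0.01):
    """One-tailed test of an empirical spacing index against simulated values.

    Assumed rule: fit is acceptable if spc_data does not exceed
    mean + z(1 - alpha) * sd of the simulated distribution.
    """
    centre = mean(spc_simu_values)
    spread = stdev(spc_simu_values)
    cutoff = centre + NormalDist().inv_cdf(1.0 - alpha) * spread
    return {"cutoff": cutoff, "fit_acceptable": spc_data <= cutoff}

# Hypothetical simulated spacing indices (illustrative numbers, not real results).
simulated = [0.10, 0.12, 0.11, 0.09, 0.13, 0.10, 0.11, 0.12, 0.10, 0.12]

lenient = circumplex_fit_test(0.13, simulated, alpha=0.01)
strict = circumplex_fit_test(0.13, simulated, alpha=0.25)
print(lenient["fit_acceptable"], strict["fit_acceptable"])
```

Raising α lowers the cutoff, so the same empirical value is more likely to be flagged as deviating from circumplexity, which mirrors the power argument in the text.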

ClusterCirc Indices for User-Defined Clusters (ClusterCirc-Fix)

If researchers wish to obtain ClusterCirc indices for a-priori defined item clusters (e.g., preliminary subscales) without performing the ClusterCirc search for optimal circumplex clusters, this is possible by means of ClusterCirc-Fix. Results from ClusterCirc-Fix can be compared to results for ClusterCirc-Data. ClusterCirc indices can be compared on all levels (overall values like spcw, cluster indices, or item indices), and the suggested clustering by ClusterCirc-Data can be compared to the a-priori clusters. Such a comparison might help to decide whether preliminary subscales should remain the same or may be adjusted to optimize circumplexity, for example, by shifting some items to different subscales or by modifying or dismissing items from the instrument.

ClusterCirc R Package and SPSS Code

We provide an R package for ClusterCirc and corresponding SPSS codes. A detailed description of the use of the ClusterCirc R package (https://github.com/ancleo/ClusterCirc) as well as of the ClusterCirc SPSS (or alternative freeware PSPP) syntax (https://github.com/ancleo/ClusterCirc_SPSS) is given in Supplement B (available in the online version of this article).

Simulation Study: Comparison of ClusterCirc With Cluster Analysis

We performed a simulation study to investigate the effect of different conditions in the population and sample size on the spacing index spcw and on sorting accuracy of ClusterCirc. We also wanted to compare ClusterCirc to existing methods of finding item clusters. The procedure of PCA-plot slicing, which is often applied in circumplex settings, cannot be investigated in a large-scale simulation study because it relies on visual inspection and subjective decisions of researchers instead of an objective selection criterion. However, cluster analysis procedures are based on objective statistical criteria and can thus be investigated in simulation studies. Since ClusterCirc has goals similar to those of cluster analysis, we included traditional cluster analysis to examine to what extent ClusterCirc can be beneficial beyond conventional clustering techniques in the case of circumplex cluster structure. We included both hierarchical cluster analysis with Ward’s linkage method and non-hierarchical k-means clustering to examine whether possible differences between ClusterCirc and cluster analysis can be attributed to the hierarchical (Ward) versus non-hierarchical (ClusterCirc, k-means) nature of the performed procedures. Ward clustering is by default performed with squared Euclidean distances. However, as there is a maximum distance of items on the circle, and as the largest distances are very similar to the maximum, they have small variation and are therefore less informative than the smaller distances. In order to reduce the effect of large distances resulting from squaring item distances on Ward clustering results, we also performed Ward clustering with Minkowski (2) distances.
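The argument about bounded distances on the circle can be made concrete with a short Python sketch. It assumes that items lie on a circle of common radius, so that the Euclidean distance (equivalent to the Minkowski distance with exponent 2) between two items is the chord length 2r·sin(θ/2) for an angular separation θ. The function names are hypothetical.

```python
import math


def angular_distance(a_deg, b_deg):
    """Smallest angle between two positions on the circle (0 to 180 degrees)."""
    diff = abs(a_deg - b_deg) % 360.0
    return min(diff, 360.0 - diff)


def chord_distance(a_deg, b_deg, radius=1.0):
    """Euclidean distance between two points on a circle of given radius."""
    half = math.radians(angular_distance(a_deg, b_deg)) / 2.0
    return 2.0 * radius * math.sin(half)


# Angular separation, Euclidean distance, and squared Euclidean distance.
for separation in (30, 60, 90, 120, 150, 180):
    d = chord_distance(0, separation)
    print(separation, round(d, 3), round(d ** 2, 3))
```

The printed values show that the Euclidean distance grows more and more slowly as the separation approaches 180°, where it reaches its maximum of twice the radius, which is why the largest distances carry little information for separating items.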

To examine the effect of different inputs, we used both PCA results (angular positions of items and communalities) and item positions and communalities from CircE based on item intercorrelations (Grassi et al., 2010) to enter into ClusterCirc and all cluster analysis procedures. We expected that CircE angles could enhance sorting accuracy if there were more than two factors in the latent model (hierarchical models) because the procedure automatically accounts for a possible third factor (Browne, 1992; Grassi et al., 2010). All analyses were conducted with R version 4.4.1 (R Core Team, 2021) and SPSS/SPSS matrix version 29 (IBM Corp., 2023). In the simulation study, the precision index q was set to 10 as the default value in ClusterCirc.

Population Models and Conditions of the Simulation Study

For the simulation study, we created five non-hierarchical population models with two factors, which represented the two circumplex axes, and four hierarchical population models with three factors, where there was a general factor in addition to the two circumplex factors.

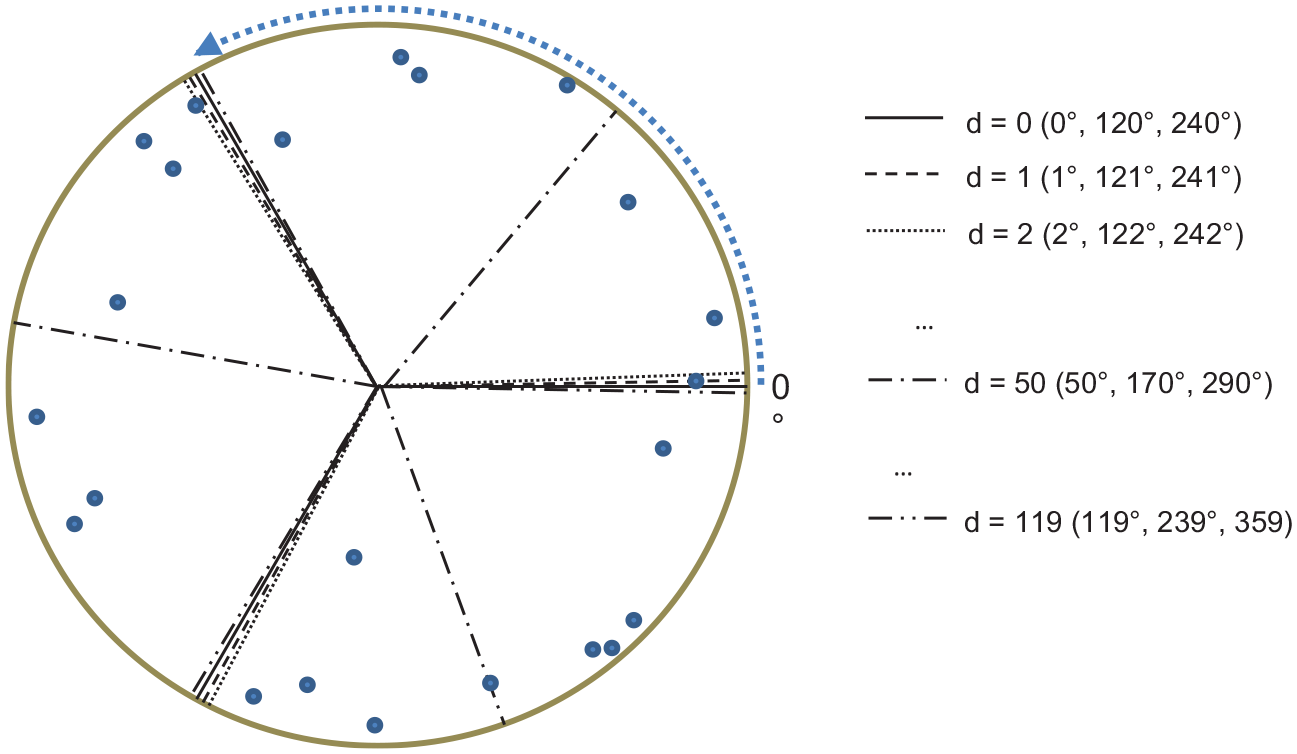

In non-hierarchical population models, there were four models with equal spacing between cluster centroids (e.g., perfect 120° distances between cluster centroids for three clusters) and one model with unequal spacing between clusters (e.g., 107°, 120°, and 133° distances between cluster centroids for three clusters; see Figure 3) to include conditions where assumptions of perfect circumplexity were violated. Unequal cluster spacing was simultaneously designed to result in a division of the circle into segments of different sizes. We modeled population clusters in a way that items within clusters were closer to each other than to items of neighboring clusters for all conditions. This should ensure fair chances of detecting population clusters for both ClusterCirc and cluster analysis, both of which favor clusters with small distances between items (maximum item homogeneity). In non-hierarchical population models with equal cluster spacing, we modeled four different levels of item heterogeneity within clusters: none, small, medium, and large. For the first level (none), items within each cluster were perfectly aligned on their cluster centroid (see Figure 3A, left). In the other three levels, items within each cluster were further apart. For the level with small item heterogeneity, the distance between neighboring items within each cluster was 25% of the distance between neighboring items of two different clusters (see Figure 3B, left). In the level with medium item heterogeneity, the distance between neighboring items within each cluster was 50% of the distance between neighboring items of two different clusters (see Figure 3C, left). In the last level with large item heterogeneity, the distance between neighboring items within each cluster was 75% of the distance between neighboring items of two adjacent clusters (see Figure 3D, left). In all conditions, within-cluster distances of items were equal for all clusters. For conditions with unequal cluster spacing, we used medium item heterogeneity within clusters (Figure 3E, left).
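For equal cluster spacing, the item distances implied by a given heterogeneity level can be worked out as follows. This is a derivation under the assumption that each cluster contributes $m_c - 1$ within-cluster gaps of size $d_w$ and one between-cluster gap of size $d_b$ to the full circle; the ratio symbol $r = d_w / d_b$ is introduced here for illustration only.

```latex
% One cluster segment spans (m_c - 1) within-cluster gaps plus one between-cluster gap:
(m_c - 1)\, d_w + d_b = \frac{360^\circ}{p}, \qquad d_w = r\, d_b
\;\;\Longrightarrow\;\;
d_b = \frac{360^\circ / p}{1 + r\,(m_c - 1)} .

% Example: p = 3 clusters, m_c = 6 items per cluster, medium heterogeneity (r = 0.50):
d_b = \frac{120^\circ}{1 + 0.50 \cdot 5} \approx 34.29^\circ, \qquad
d_w = 0.50 \cdot d_b \approx 17.14^\circ .
```

As a check, $5 \cdot 17.14^\circ + 34.29^\circ \approx 120^\circ$, which is the segment size for three equally spaced clusters.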

Circumplex population structure in the simulation study for an example of 18 items in three clusters and sorting by ClusterCirc versus cluster analysis.

Moreover, hierarchical population models were also created with medium item heterogeneity within circumplex clusters. Item loadings on the third, general factor were varied with a3 = 0.30 and a3 = 0.40 for all items in the population model. The circumplex clusters in the hierarchical models were modeled with equal spacing and with unequal spacing as described above, resulting in four population models with hierarchical structure.

For all nine population models (five non-hierarchical plus four hierarchical models, see Table 1), the radius of all items was 0.80 on the two population circumplex axes. Furthermore, the number of clusters was varied with p = 3, 4, 5, and 6, and the number of items per cluster was also varied with mc = 3, 4, 5, and 6. Sample sizes were n = 100, 300, 500, and 1,000. These specifications resulted in 9 (population model) × 4 (number of clusters) × 4 (number of items per cluster) × 4 (sample size) = 576 conditions in the simulation study. We drew 500 simulated samples for each condition.
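The fully crossed design can be enumerated directly, which also confirms the stated number of conditions. The model labels in this Python sketch are hypothetical shorthand for the nine population models described above.

```python
from itertools import product

# Hypothetical shorthand labels for the nine population models.
population_models = [
    "nonhier_equal_none", "nonhier_equal_small", "nonhier_equal_medium",
    "nonhier_equal_large", "nonhier_unequal_medium",
    "hier_a3_030_equal", "hier_a3_030_unequal",
    "hier_a3_040_equal", "hier_a3_040_unequal",
]
n_clusters = [3, 4, 5, 6]          # p
items_per_cluster = [3, 4, 5, 6]   # mc
sample_sizes = [100, 300, 500, 1000]
samples_per_condition = 500

conditions = list(product(population_models, n_clusters,
                          items_per_cluster, sample_sizes))
print(len(conditions))                          # number of conditions
print(len(conditions) * samples_per_condition)  # total simulated samples
```

The smallest instrument in this grid has 3 × 3 = 9 items and the largest has 6 × 6 = 36 items.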

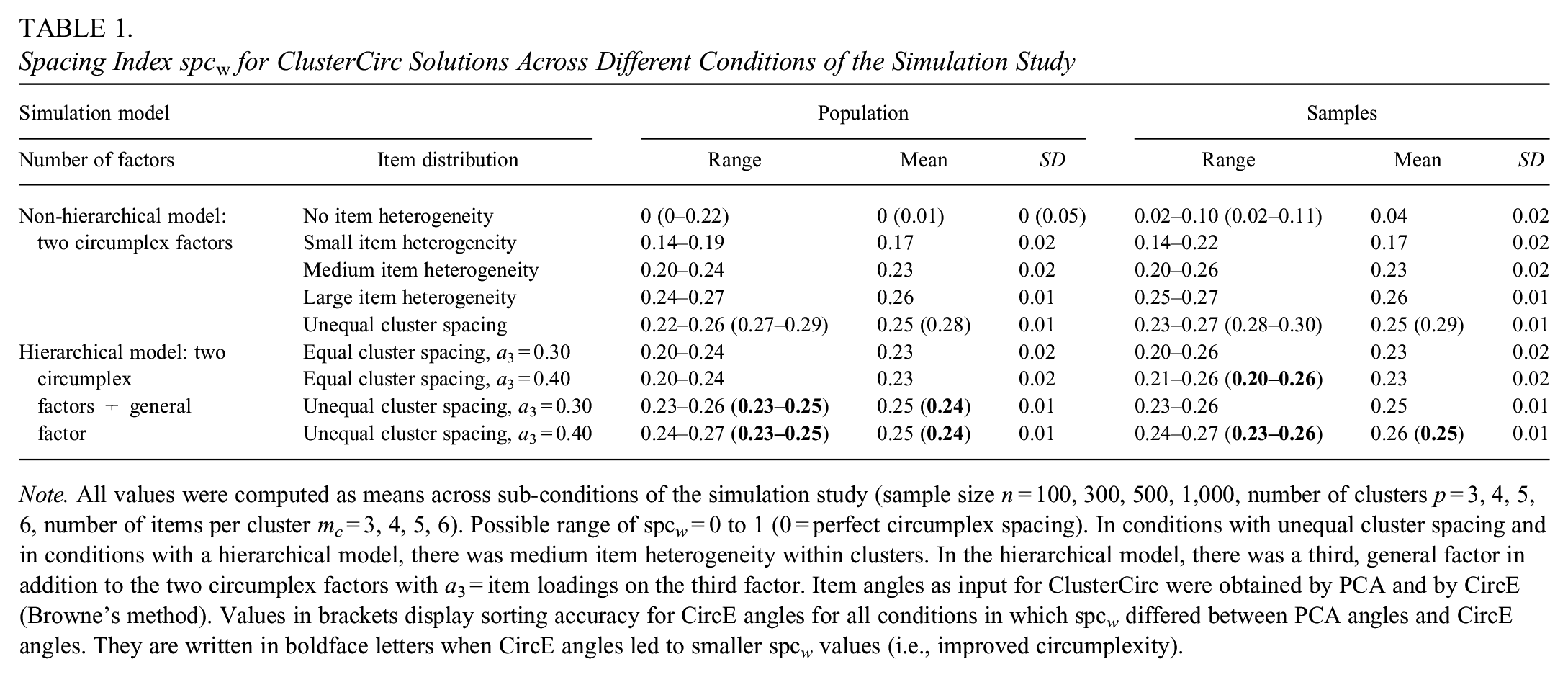

Spacing Index spcw for ClusterCirc Solutions Across Different Conditions of the Simulation Study

Note. All values were computed as means across sub-conditions of the simulation study (sample size n = 100, 300, 500, 1,000, number of clusters p = 3, 4, 5, 6, number of items per cluster mc = 3, 4, 5, 6). Possible range of spcw = 0 to 1 (0 = perfect circumplex spacing). In conditions with unequal cluster spacing and in conditions with a hierarchical model, there was medium item heterogeneity within clusters. In the hierarchical model, there was a third, general factor in addition to the two circumplex factors with a3 = item loadings on the third factor. Item angles as input for ClusterCirc were obtained by PCA and by CircE (Browne’s method). Values in brackets display spcw for CircE angles for all conditions in which spcw differed between PCA angles and CircE angles. They are written in boldface letters when CircE angles led to smaller spcw values (i.e., improved circumplexity).

For two of these conditions, we also modeled variability in item communalities between the clusters to investigate the effect of including communalities in the spacing index. The conditions were p = 3, mc = 6, n = 100 and p = 6, mc = 3, n = 100, both with equal cluster spacing, medium item heterogeneity, and a non-hierarchical population model. Item radius was 0.90 (h2 = 0.81) in two clusters and 0.70 (h2 = 0.49) in all other clusters. The details of data generation and SPSS syntax for two example conditions can be found in Supplement C (available in the online version of this article).
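The relation between item radius and communality used here (h2 equals the squared radius) can be illustrated with a small Python sketch. It assumes that an item's loadings on the two circumplex axes are radius·cos(θ) and radius·sin(θ) and that factors are orthogonal, so that the communality is the sum of squared loadings. The function names and the optional third loading are assumptions of this sketch, not the data generation syntax of Supplement C.

```python
import math


def circumplex_loadings(angle_deg, radius, general_loading=0.0):
    """Loadings of one item on two circumplex axes plus an optional general factor."""
    theta = math.radians(angle_deg)
    return (radius * math.cos(theta), radius * math.sin(theta), general_loading)


def communality(loadings):
    """Sum of squared loadings (assumes orthogonal factors)."""
    return sum(a * a for a in loadings)


print(round(communality(circumplex_loadings(40.0, 0.90)), 2))  # radius 0.90
print(round(communality(circumplex_loadings(40.0, 0.70)), 2))  # radius 0.70
# Hierarchical case under the orthogonality assumption of this sketch:
print(round(communality(circumplex_loadings(40.0, 0.80, general_loading=0.30)), 2))
```

The communality does not depend on the item angle, only on the radius and, in the hierarchical case, on the loading on the general factor.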

Results of the Simulation Study

Table 1 gives an overview of the size of the spacing index spcw for the different population models in simulated population and sample data. For the simulation study, item communalities were used as weights in spcw and e was set to 1/p (default in ClusterCirc). As can be seen in Table 1, spcw increased with greater item heterogeneity and was larger when clusters were not equally spaced but remained below 0.30 for all simulated conditions. In hierarchical models with a third, general factor in addition to the two circumplex factors, spcw was similar to conditions with a non-hierarchical model, when the level of item heterogeneity (medium) and equal vs. unequal spacing was taken into account. The depicted range in spcw within each model of item distribution was mainly driven by an increase in spcw when the number of items per cluster increased. The number of clusters or sample size had only minimal effects on spcw. PCA angles and CircE angles yielded similar spcw results for most conditions. However, in non-hierarchical conditions with no item heterogeneity within clusters and those with unequal cluster spacing, CircE angles resulted in slightly larger spcw values than angles based on PCA. In contrast, CircE angles yielded slightly smaller spcw values than PCA angles in some conditions with a hierarchical latent model (Table 1).

Table 2 displays sorting accuracy of ClusterCirc versus cluster analysis for nine latent models across the sub-conditions in the simulation study in the population data as well as in the simulated samples. Sorting was defined to be accurate if the modeled population clusters were discovered by the sorting method, that is, if items that were closest to one another in the population models were clustered together. Overall, ClusterCirc resulted in greater sorting accuracy than all cluster analysis procedures that were investigated in all conditions.
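The accuracy criterion can be sketched in Python as an all-or-nothing comparison of two partitions of the items, irrespective of how the clusters are labeled. The function names and the example labels are hypothetical, and the sketch assumes that accuracy in the samples is the proportion of samples in which the modeled partition was recovered exactly.

```python
def as_partition(labels):
    """Convert a list of cluster labels (one per item) into a set of item sets."""
    groups = {}
    for item, label in enumerate(labels):
        groups.setdefault(label, set()).add(item)
    return {frozenset(group) for group in groups.values()}


def sorting_is_accurate(found_labels, modeled_labels):
    """True if both labelings group the items identically (label names ignored)."""
    return as_partition(found_labels) == as_partition(modeled_labels)


def sorting_accuracy(found_per_sample, modeled_labels):
    """Proportion of samples in which the modeled clusters were recovered."""
    hits = sum(sorting_is_accurate(found, modeled_labels)
               for found in found_per_sample)
    return hits / len(found_per_sample)


modeled = [0, 0, 1, 1, 2, 2]
samples = [[2, 2, 0, 0, 1, 1],   # same grouping, different label names
           [0, 0, 0, 1, 2, 2]]   # one item in the wrong cluster
print(sorting_accuracy(samples, modeled))
```

Comparing partitions rather than raw labels matters because clustering methods number their clusters arbitrarily.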

Sorting Accuracy of ClusterCirc Versus Cluster Analysis in the Simulation Study

Note. All values were computed as means across sub-conditions of the simulation study (sample size n = 100, 300, 500, 1,000, number of clusters p = 3, 4, 5, 6, number of items per cluster mc = 3, 4, 5, 6). In conditions with unequal cluster spacing and in conditions with a hierarchical model, there was medium item heterogeneity within clusters. In the hierarchical model, there was a third, general factor in addition to the two circumplex factors with a3 = item loadings on the third factor. Ward cluster analysis was performed with squared Euclidean (E) distances (default) and Minkowski (2) distances (M). Item angles as input for all methods were obtained by PCA and by CircE (Browne’s method). Values in brackets display sorting accuracy for CircE angles for all conditions in which sorting accuracy differed between PCA angles and CircE angles. They are written in boldface letters when CircE angles improved sorting accuracy.

In population data, ClusterCirc (with PCA angles) found the intended clusters in all 576 conditions. The only conditions in which Ward cluster analysis yielded the same clusters for population data as ClusterCirc across all sub-conditions were non-hierarchical models with no and small item heterogeneity within clusters. In all conditions with medium and large item heterogeneity (non-hierarchical and hierarchical models, equal and unequal cluster spacing), Ward and k-means cluster analysis found different clusters than ClusterCirc and did not yield the modeled clusters in many sub-conditions for population data. Ward cluster analysis performed better in population data than k-means in conditions with equal cluster spacing, whereas k-means performed better than Ward cluster analysis in conditions with unequal cluster spacing (non-hierarchical and hierarchical models).

In hierarchical conditions and equal cluster spacing, sorting accuracy for population data of Ward cluster analysis was similar to sorting accuracy in the medium item heterogeneity condition without a third factor. However, adding a third factor improved sorting accuracy of Ward cluster analysis in hierarchical conditions with unequal cluster spacing as compared to non-hierarchical conditions with unequal cluster spacing in population data. This effect was more pronounced with a more prominent third factor and thus a stronger hierarchical model (a3 = 0.40). Differences between the distance measures inserted in Ward cluster analysis (squared Euclidean vs. Minkowski (2)) were strongest in population data in conditions with non-hierarchical data structure and medium item heterogeneity.

Regarding PCA loadings versus CircE angles as input for the analysis, we found that CircE input decreased sorting accuracy in population data for most conditions and sorting methods. However, in conditions with unequal cluster spacing and a non-hierarchical model as well as in conditions with unequal cluster spacing and a less prominent third factor (a3 = 0.30), CircE input improved sorting of Ward cluster analysis in population data.

Different sorting results for exemplary population conditions with a non-hierarchical model and p = 3, mc = 6 are depicted in Figure 3. It illustrates that Minkowski (2) distances in Ward cluster analysis yielded item clusters that were more similar to ClusterCirc clusters (and thereby the modeled clusters) than squared Euclidean distances. In particular, conditions with medium item heterogeneity (Figure 3C) were correctly classified by Ward with Minkowski (2) distances but incorrectly classified by Ward with squared Euclidean distances. Also, in conditions with large item heterogeneity, Minkowski (2) distances placed fewer items in clusters other than the intended ones than squared Euclidean distances did (see Figure 3D: one item for Minkowski (2), two items for squared Euclidean distances). Figure 3 also shows that k-means clusters differed to a greater extent from intended clusters (left column) than Ward clusters in these non-hierarchical conditions.

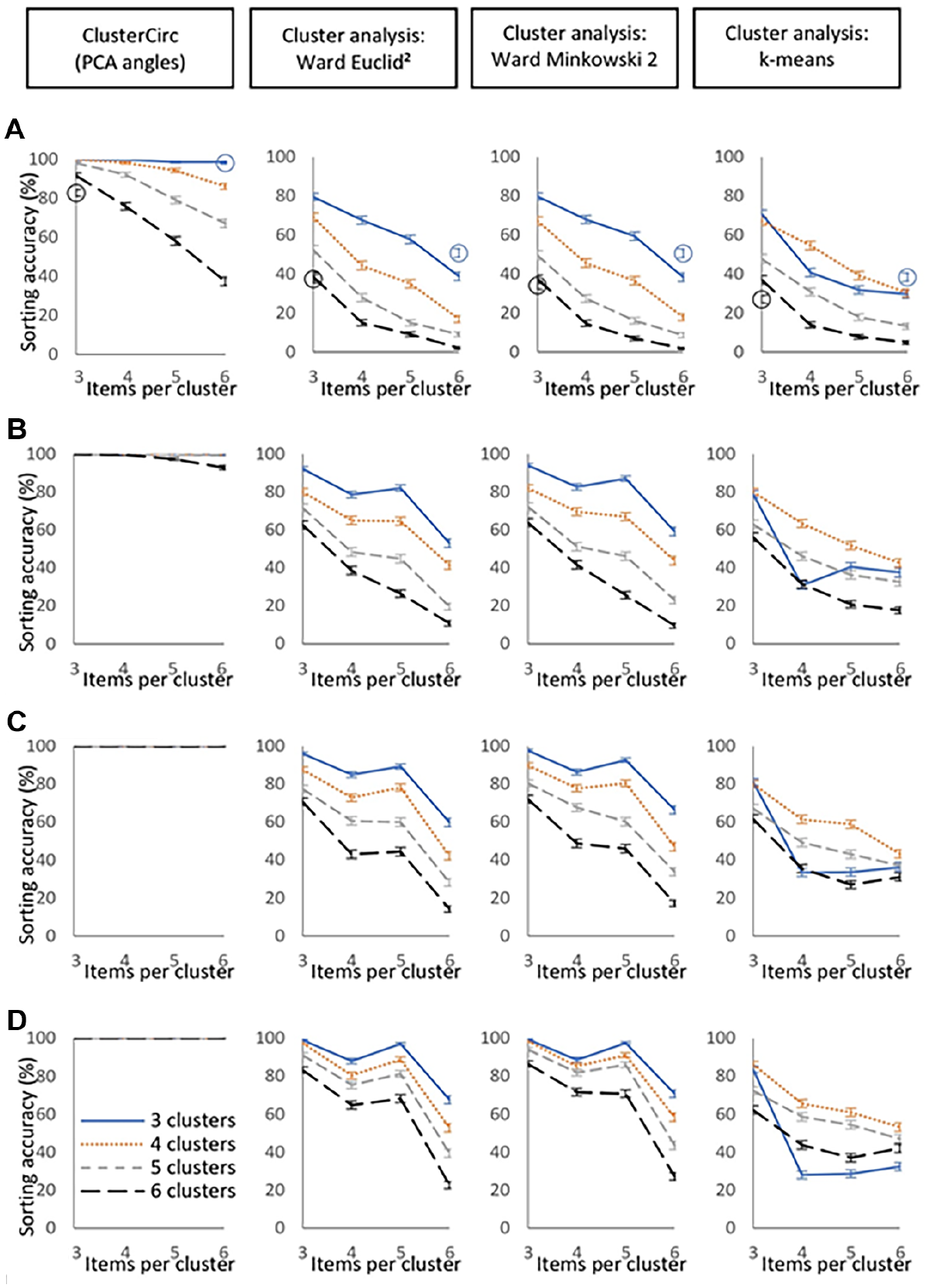

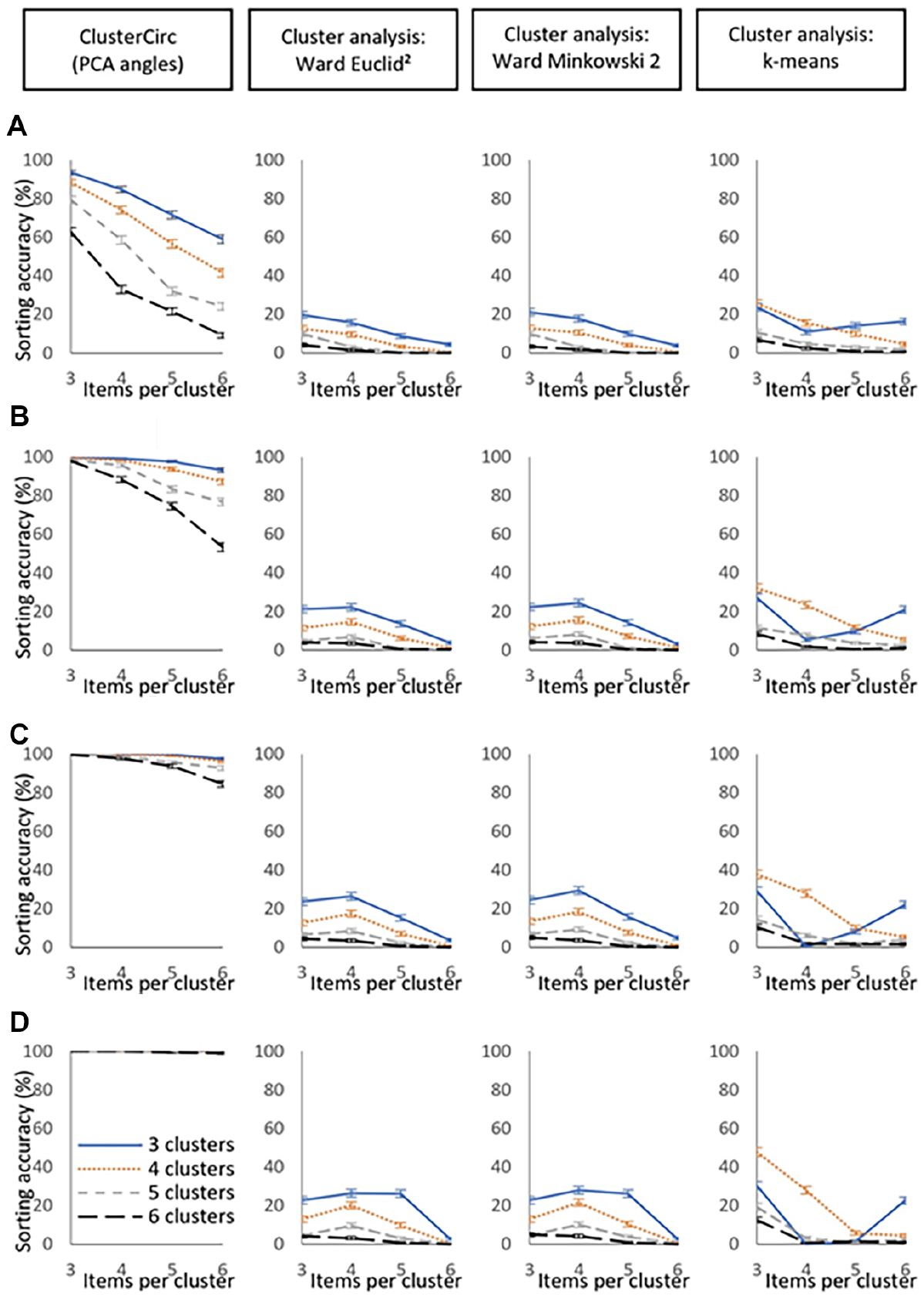

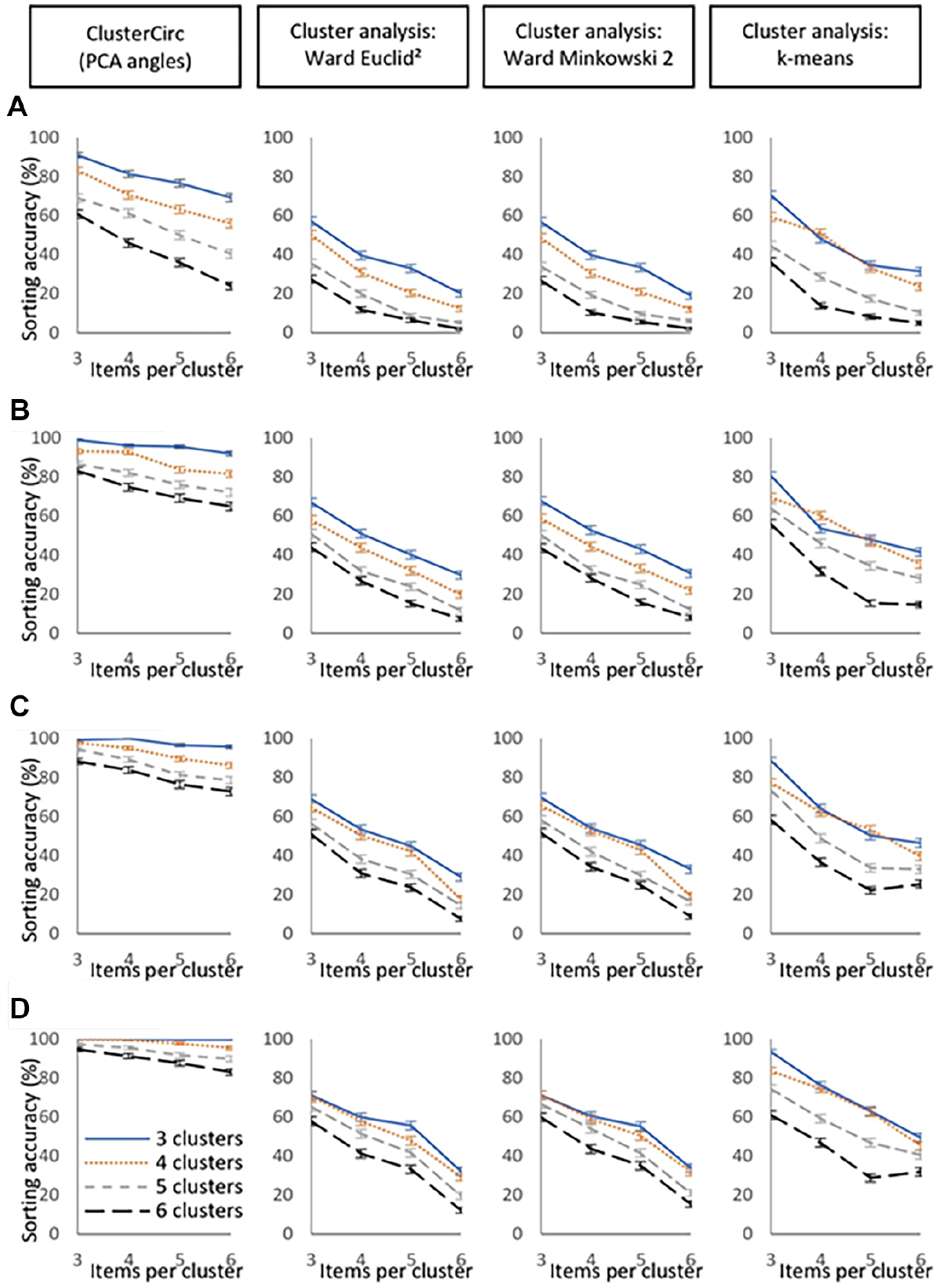

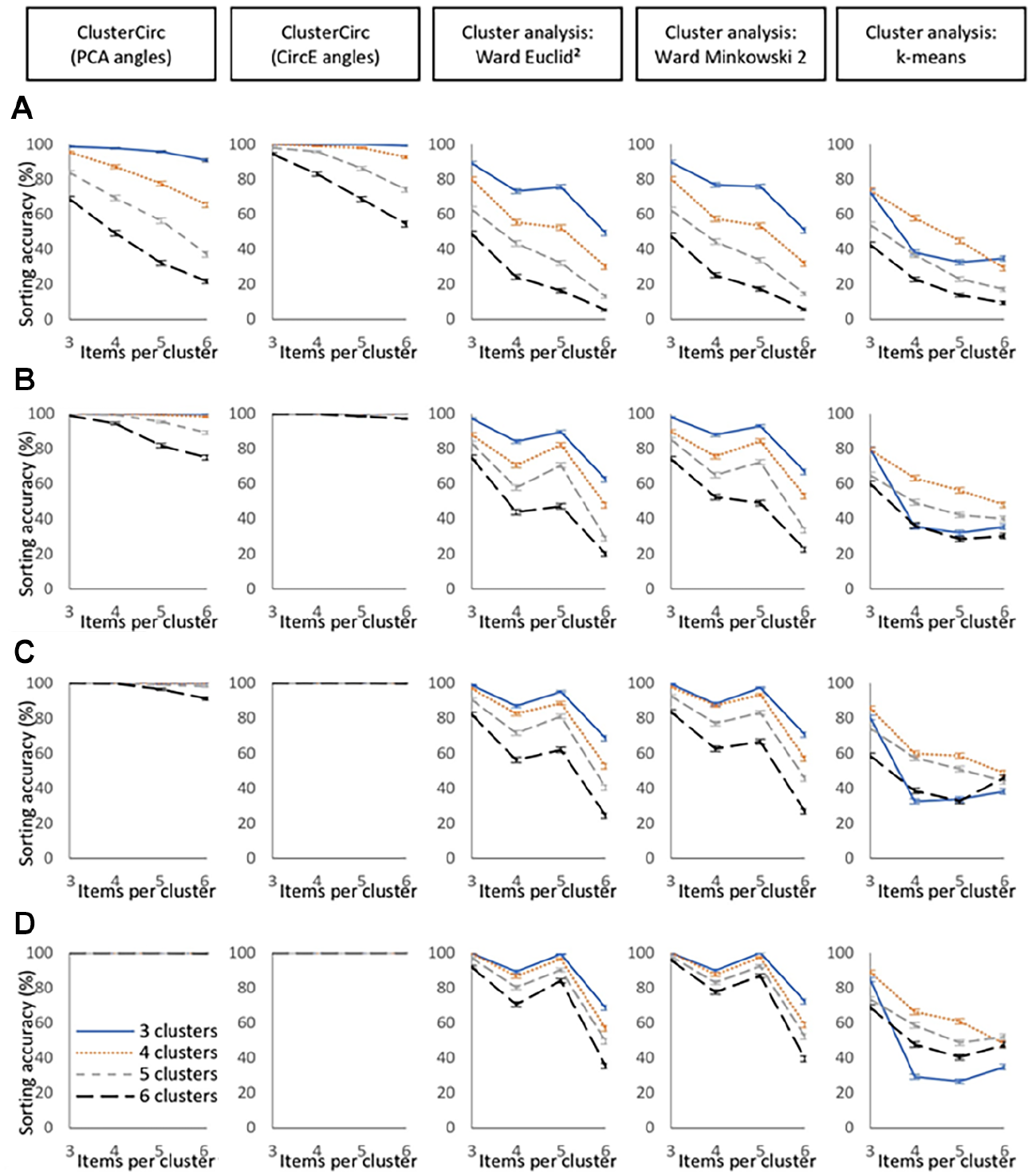

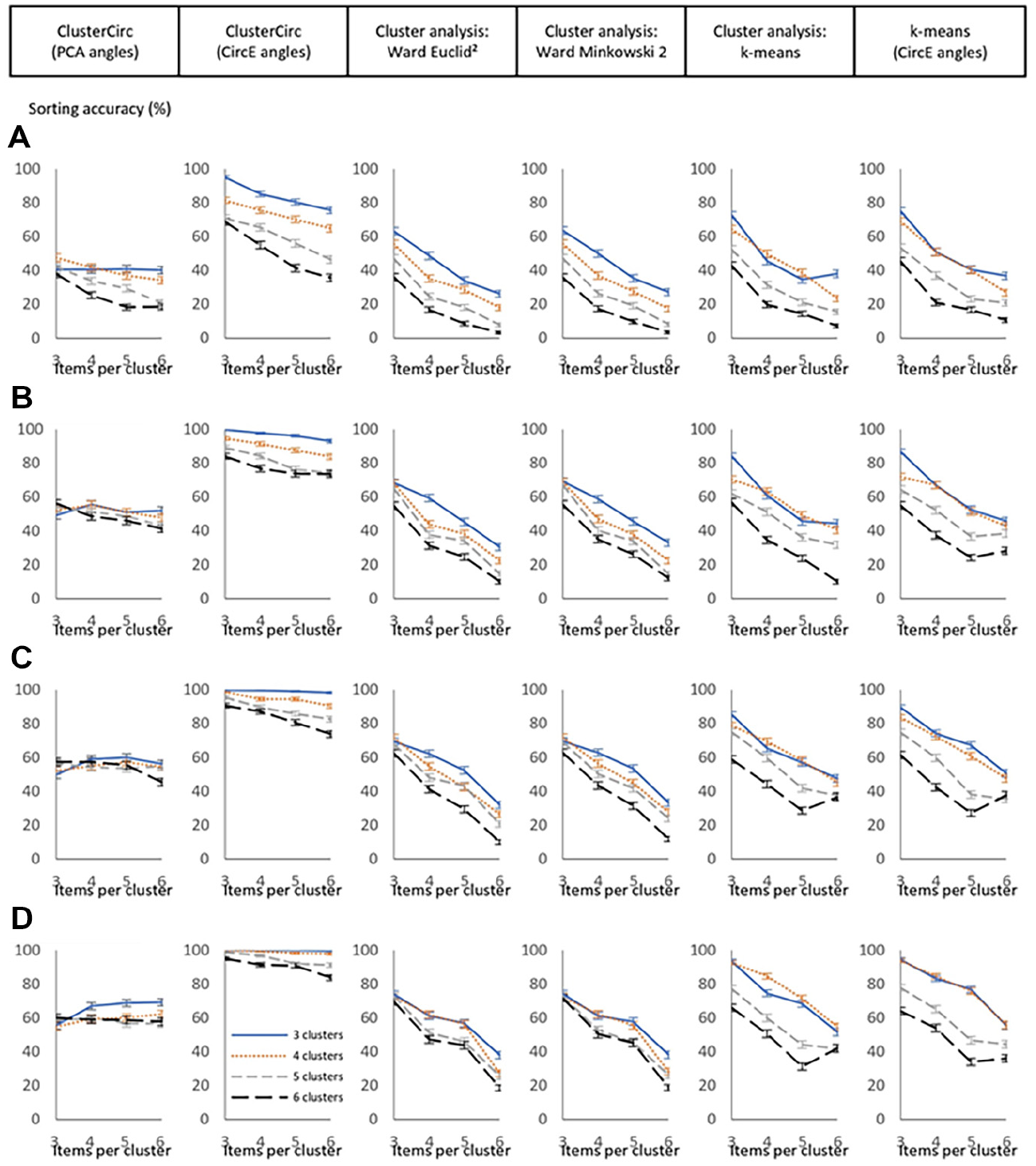

Table 2 also summarizes sorting accuracy in the samples, for which results of the sub-conditions can be inspected in Figures 4 to 8 and in Supplement D (available in the online version of this article). Results for CircE input are only displayed if overall differences between PCA and CircE input were 2% or more (see Table 2). When there was no third factor, equal cluster spacing, and no item heterogeneity, sorting accuracy in the samples was almost perfect (>99% correct) in all sub-conditions for both ClusterCirc and all cluster analysis methods. When item heterogeneity increased, sorting accuracy decreased. Across all conditions, modeled clusters were detected more often by ClusterCirc than by cluster analysis in the samples. Overall, sorting accuracy decreased with complexity of the data and increased with sample size. However, in many conditions, sample size did not improve sorting accuracy of Ward and k-means cluster analysis to the same extent as it did for ClusterCirc (see Figures 4–8).

Sorting accuracy of ClusterCirc versus cluster analysis in the simulation study for equal spacing of circumplex clusters and medium item heterogeneity within clusters. (A) n = 100. (B) n = 300. (C) n = 500. (D) n = 1,000.

Sorting accuracy of ClusterCirc versus cluster analysis in the simulation study for equal spacing of circumplex clusters and large item heterogeneity within clusters. (A) n = 100. (B) n = 300. (C) n = 500. (D) n = 1,000.

Sorting accuracy of ClusterCirc versus cluster analysis in the simulation study for conditions with unequal spacing of circumplex clusters. (A) n = 100. (B) n = 300. (C) n = 500. (D) n = 1,000.

Sorting accuracy of ClusterCirc versus cluster analysis in the simulation study for hierarchical data structure (a3 = 0.40) and equal spacing of circumplex clusters. (A) n = 100. (B) n = 300. (C) n = 500. (D) n = 1,000.

Sorting accuracy of ClusterCirc versus cluster analysis in the simulation study for hierarchical data structure (a3 = 0.40) and unequal spacing of circumplex clusters. (A) n = 100. (B) n = 300. (C) n = 500. (D) n = 1,000.

In non-hierarchical conditions with equal cluster spacing and small item heterogeneity, ClusterCirc reached an accuracy of >80% for n = 100 and >99% for all sub-conditions with n ≥ 300. Sorting of Ward cluster analysis greatly improved by increasing sample size from n = 100 (sorting accuracy of >56%) to n ≥ 300 (sorting accuracy of ≥95%) for both distance measures. Increasing sample size also improved k-means clustering, but to a lesser extent (see Supplement D, Figure S1 in the online version of the journal).

In non-hierarchical models with equal cluster spacing and medium item heterogeneity, ClusterCirc reached an accuracy of >92% for all conditions with n ≥ 300, whereas increasing sample size improved sorting accuracy of cluster analysis only slightly (Figure 4). In this condition, the effect of the distance measure in Ward cluster analysis was largest, that is, Minkowski (2) distances slightly increased detection rates of modeled circumplex clusters as compared to squared Euclidean distances. k-means clustering produced overall worse results than Ward cluster analysis in the samples.

In non-hierarchical models with equal spacing and large item heterogeneity, sorting accuracy of ClusterCirc was between 84% and 100% for all conditions when sample size was n ≥ 500, whereas it remained below 30% in all conditions for Ward cluster analysis and below 48% for k-means cluster analysis (Figure 5).

In non-hierarchical conditions with unequal cluster spacing, ClusterCirc found the intended clusters in the majority of cases (see Table 2). However, it did not reach the same sorting accuracy as in conditions with equal cluster spacing (compare Figures 4 and 6). In sub-conditions with greater complexity (many clusters and many items per cluster), sorting accuracy of ClusterCirc decreased. Results of ClusterCirc and cluster analysis were more similar in this condition than in conditions with equal cluster spacing. However, cluster analysis did not reach the same levels of sorting accuracy as ClusterCirc in this condition either. As can be seen in Table 2 and Figure 6, k-means clustering reached better sorting accuracy than Ward cluster analysis in all sub-conditions of unequal cluster spacing. Differences between distance measures in Ward cluster analysis were small in non-hierarchical conditions with unequal cluster spacing, with slightly higher overall sorting accuracy in the samples for Minkowski (2) distances (see also Table 2).

In conditions with hierarchical models, ClusterCirc sorting accuracy in the samples was generally lower than in conditions without hierarchical models. However, overall sorting accuracy for ClusterCirc was still much higher than for cluster analysis (see Table 2). The pattern of results for hierarchical models was similar for a3 = 0.30 and a3 = 0.40, which is why results for sub-conditions with a3 = 0.30 are presented in Supplement D (Figures S2 and S3 in the online version of the journal). In hierarchical models with equal cluster spacing, ClusterCirc yielded the best results for sorting accuracy in the samples, which was greatly enhanced by using CircE angles instead of PCA angles, especially in smaller samples (see Figure 7). For example, sorting accuracy in samples of n = 100 was between 22% and 99% for ClusterCirc with PCA angles as opposed to 54% to 100% for ClusterCirc with CircE angles. When sample size was n = 500 or larger, differences between item angles in ClusterCirc were only marginal. Sorting of Ward cluster analysis improved in hierarchical models as compared to non-hierarchical models with equal cluster spacing, especially for smaller samples (n = 100 and n = 300, see Figures 4 and 7).

In hierarchical conditions with unequal cluster spacing, the difference between PCA angles and CircE angles was even more pronounced for ClusterCirc (see Table 2 and Figure 8). While PCA angles produced results that were close to and even worse than cluster analysis for some conditions, CircE angles yielded much better sorting accuracy with 69% to 100% for all conditions with n ≥ 300. For Ward cluster analysis, the hierarchical conditions improved sorting accuracy in the samples as compared to the non-hierarchical conditions for unequal cluster spacing as well, with no significant differences between PCA and CircE input. Similar to conditions without a third factor, k-means reached higher rates of sorting accuracy than Ward cluster analysis in conditions with unequal cluster spacing. CircE angles also improved sorting accuracy of k-means clustering, however, only slightly in comparison to ClusterCirc (Table 2 and Figure 8). With respect to distance measures in Ward cluster analysis, Minkowski (2) distances improved sorting by 1% to 2% in the hierarchical model (Table 2).

Regarding the variability in item radius/communalities, we found that Ward cluster analysis yielded the intended structure for the condition with p = 6, mc = 3, but not for p = 3, mc = 6 (non-hierarchical model with equal cluster spacing and medium item heterogeneity, n = 100 for both) in the population. k-Means clustering did not find the modeled clusters in either condition. Results for the simulated samples are included in Figure 4, because these conditions were sub-conditions of medium item heterogeneity. Variability in item radius/communalities affected ClusterCirc and cluster analysis in a similar direction, whereby ClusterCirc still outperformed cluster analysis (Figure 4). For the condition with three clusters and six items in each cluster, variability of communalities slightly increased sorting accuracy. For the condition with six clusters and three items per cluster, variability of communalities lowered sorting accuracy of all methods, while ClusterCirc still had the highest sorting accuracy (Figure 4).

Empirical Example

To illustrate the practical use of ClusterCirc, we performed ClusterCirc on empirical data measuring the interpersonal circumplex with eight clusters (Figure 1; Alden et al., 1990).

Method (Sample, Measure)

We used data from an online-questionnaire study, from which a different part of the data has been published within another project (Weide et al., 2021). The study conformed with the Declaration of Helsinki and was approved by the ethics board of the University of Bonn. Participants were volunteers who were recruited by staff and students from the Institute of Psychology. Participation took between 45 and 60 min and was recognized with course credit for participation in psychological studies if needed for an undergraduate degree. We only used complete data without missing values, resulting in 823 participants (517 female, 306 male, no non-binary participants). Participants were between 16 and 89 years old (M = 32.68, SD = 14.79).

We performed ClusterCirc on the German version of the IAS (Jacobs & Scholl, 2005). The IAS consists of 64 items that are supposed to be divided into eight subscales with eight items per subscale (see Figure 1). Each item of the IAS is an adjective that describes an interpersonal attribute. For each item, participants self-rated on a five-point Likert scale to what degree the presented adjective was suited to describe their own interpersonal tendencies (not at all to very much). For example, items were “assertive” (PA/Assured-dominant), “cynical” (BC/Arrogant-calculating), “empathetic” (LM/Warm-agreeable), “shy” (HI/Unassured-submissive), and “obedient” (JK/Unassuming-ingenuous).

Results

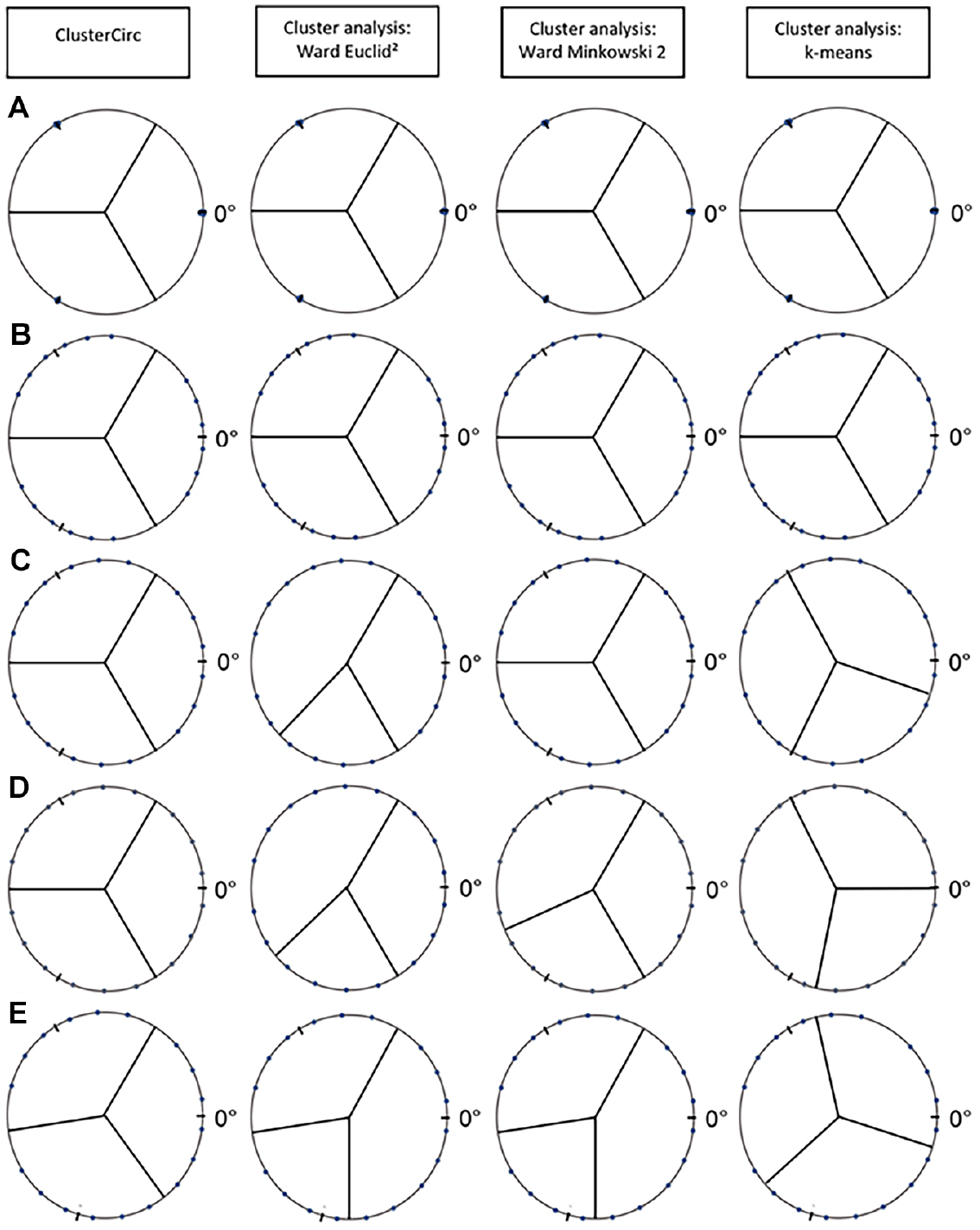

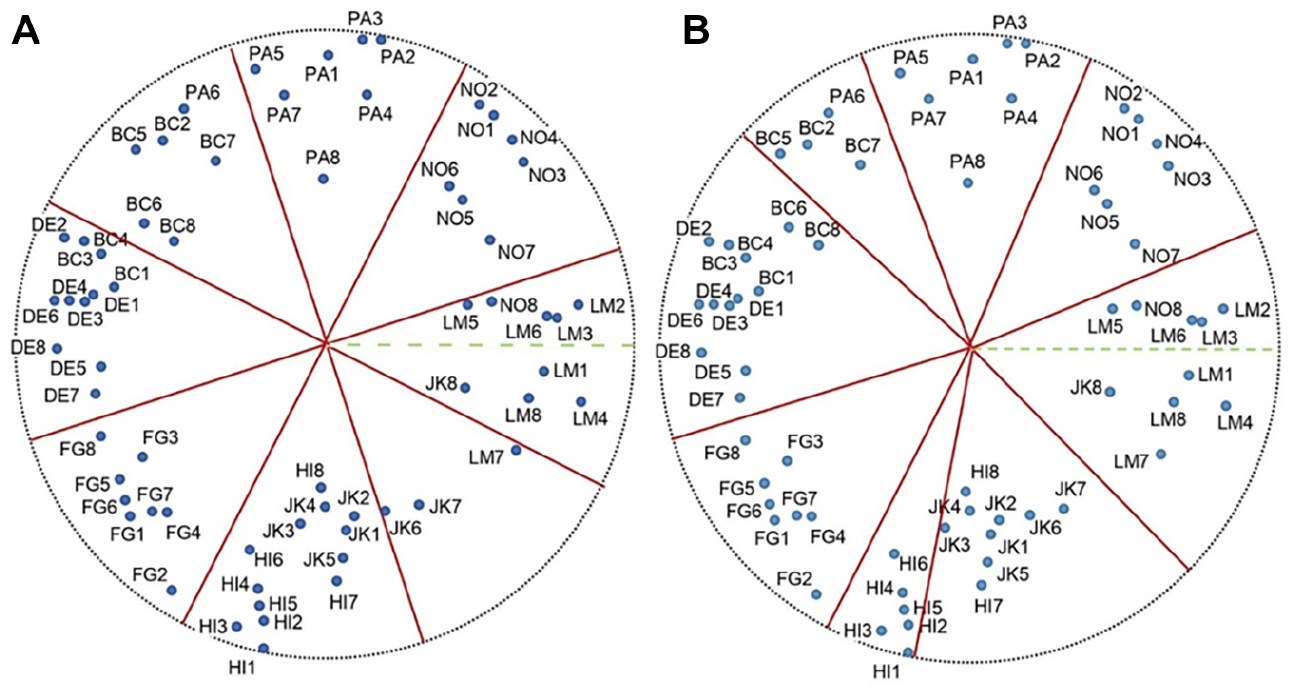

We performed ClusterCirc on the IAS data (ClusterCirc-Data with PCA input, communalities as item weights, and default e = 1/p for importance of within-cluster proximity vs. between-cluster spacing) and subsequently inserted the specifications of the data in the tailored simulation syntax for users (ClusterCirc-Simu, α = 1%) for better interpretation of results. The precision index of the ClusterCirc procedure was varied with q = 1 and q = 10, which yielded the same results. The suggested ClusterCirc sorting of the IAS items can be inspected in Figure 9A. Among all 64 items, 52 items were clustered together by ClusterCirc according to the intended structure of the inventory (e.g., LM items in one cluster, NO items in the next, etc.), whereas the remaining 12 items were sorted into different clusters. Sorting by conventional cluster analysis with Ward’s linkage method also differed from original and ClusterCirc sorting, whereby cluster limits did not conform to the equal spacing criterion (Figure 9B).

Item clusters found by ClusterCirc versus Ward cluster analysis for the Interpersonal Adjective Scales in the empirical example. (A) ClusterCirc. (B) Cluster analysis.

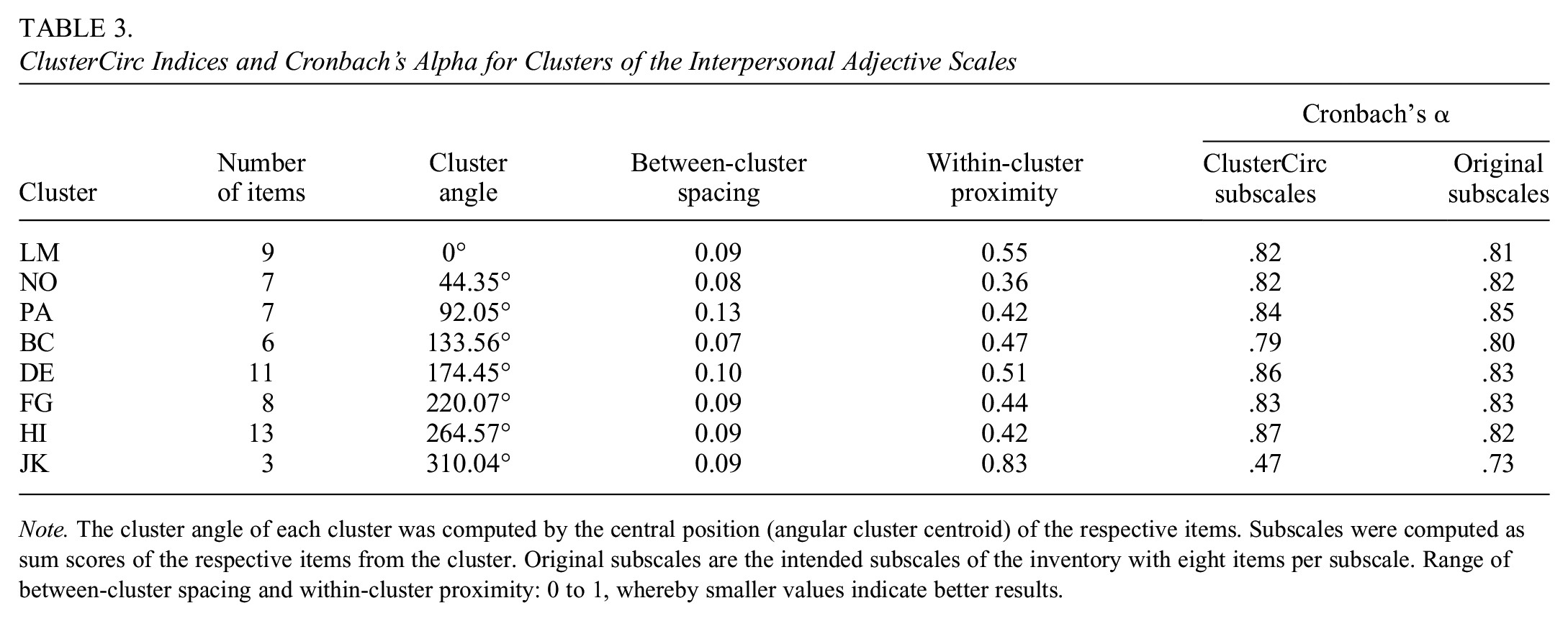

For the IAS data, ClusterCirc resulted in spcw = 0.26, spc = 0.26, bcs = 0.09, and wcp = 0.52. Table 3 shows the results for ClusterCirc indices on the level of clusters and Cronbach’s α for the suggested and the original subscales of the IAS. Between-cluster spacing was close to 0 for the clusters, indicating good circumplex spacing of clusters, where distances between cluster centroids were close to 45° (360°/p), thus close to equal cluster spacing across the circumference of the circle. Within-cluster proximity differed to a greater extent between clusters, indicating that items were more heterogeneous in some clusters than in others (Table 3). Comparing Cronbach’s α of the ClusterCirc subscales with that of the original subscales of the IAS revealed that Cronbach’s α was larger for ClusterCirc sorting in three subscales, equal in two subscales, and smaller in the remaining three subscales.
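For readers who wish to recompute the internal consistency of alternative subscales, Cronbach’s α can be obtained from item scores with the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the sum score). The following Python sketch uses hypothetical scores and a hypothetical function name; it is not part of the ClusterCirc package.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for one subscale.

    item_scores: list of items, each a list with one score per respondent.
    """
    k = len(item_scores)
    sum_scores = [sum(scores) for scores in zip(*item_scores)]
    item_variance_sum = sum(variance(scores) for scores in item_scores)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(sum_scores))


# Hypothetical ratings of five respondents on three items of one subscale.
subscale = [
    [1, 2, 3, 4, 5],
    [2, 3, 4, 5, 6],
    [1, 3, 3, 5, 5],
]
print(round(cronbach_alpha(subscale), 3))
```

Applying the function once to the items of an original subscale and once to the items of the corresponding ClusterCirc subscale yields the kind of comparison reported in Table 3.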

ClusterCirc Indices and Cronbach’s Alpha for Clusters of the Interpersonal Adjective Scales

Note. The cluster angle of each cluster was computed by the central position (angular cluster centroid) of the respective items. Subscales were computed as sum scores of the respective items from the cluster. Original subscales are the intended subscales of the inventory with eight items per subscale. Range of between-cluster spacing and within-cluster proximity: 0 to 1, whereby smaller values indicate better results.

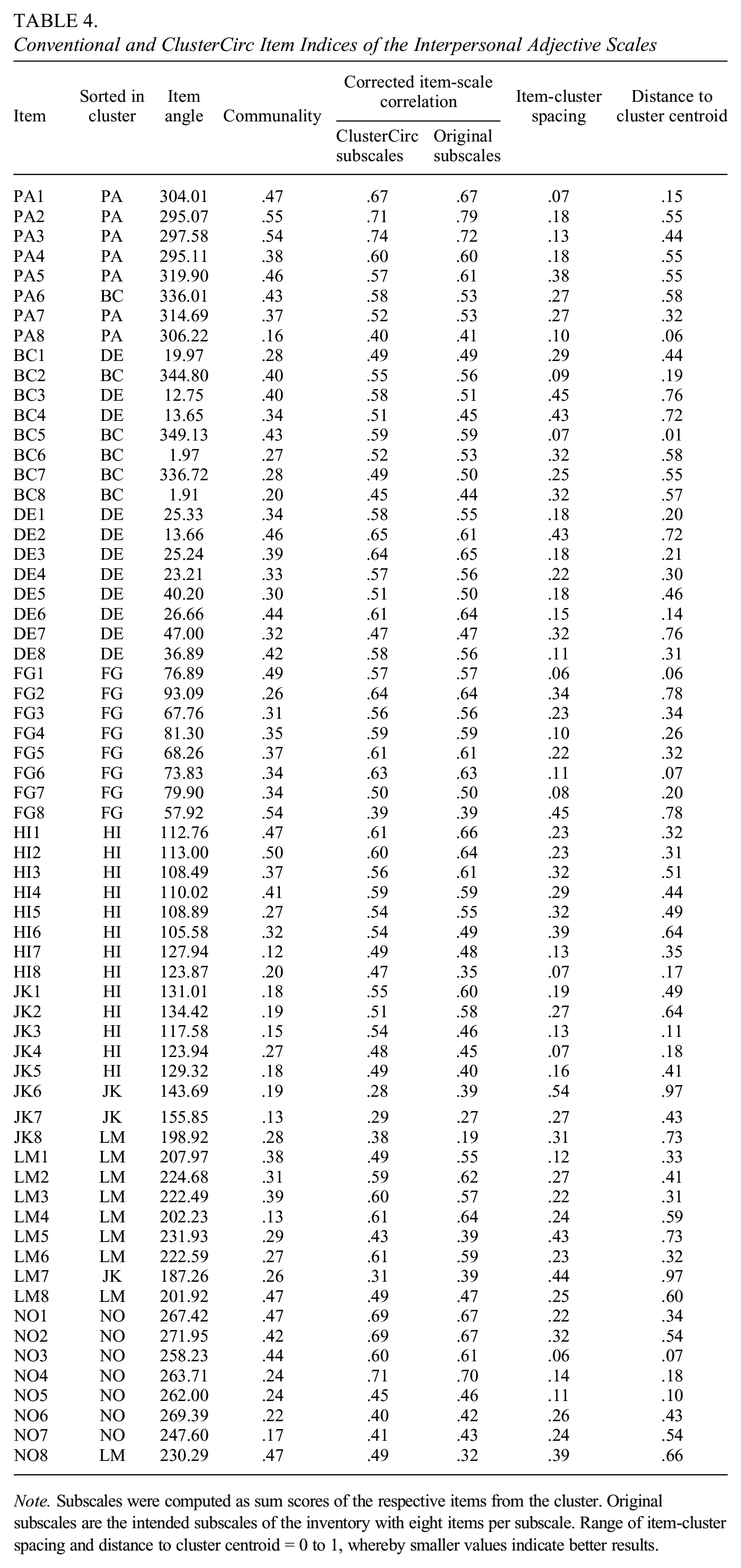

Table 4 shows results for the items of the IAS. Communalities ranged from 0.12 to 0.55 with a mean h2 of 0.33 (SD = 0.11). Corrected item-scale correlations ranged from rit = 0.28 to rit = 0.74 (M = 0.54, SD = 0.10) for items when sorted into ClusterCirc subscales and from rit = 0.19 to rit = 0.79 (M = 0.53, SD = 0.11) for items when sorted into the original subscales. Item-scale correlations below 0.40 were found for five items when sorted into ClusterCirc subscales and eight items when sorted into the original subscales. Item-cluster spacing, that is, proportional deviations from perfect circumplex spacing, ranged from 0.06 to 0.54 with a mean of 0.24 (SD = 0.12). Proportional item distances from cluster centroids (0–1) ranged from 0.01 to 0.97 with a mean of 0.43 (SD = 0.23).

Conventional and ClusterCirc Item Indices of the Interpersonal Adjective Scales

Note. Subscales were computed as sum scores of the respective items from the cluster. Original subscales are the intended subscales of the inventory with eight items per subscale. Range of item-cluster spacing and distance to cluster centroid = 0 to 1, whereby smaller values indicate better results.

We conducted ClusterCirc-Simu with the specifications of the dataset with m = 64, p = 8, n = 823, the empirical mean communality of M(h2) = 0.33, and an angular item range within each cluster of 31.24° (average distance of the outermost items of the same cluster in the IAS data). In the population model of ClusterCirc-Simu, items had equal communalities (h2 = 0.33) and were evenly distributed within each cluster. Population clusters fulfilled perfect equal circumplex spacing, that is, 45° distances between cluster centroids. ClusterCirc-Simu simulated 500 samples of n = 823 for the population model. It revealed that ClusterCirc found the population structure in 72% of all simulated samples, whereas conventional cluster analysis with Ward’s linkage method found the population structure in 5% of the simulated samples. However, when analyzing the population data (without any sampling error), Ward cluster analysis found the same (intended) circumplex structure as ClusterCirc. Overall ClusterCirc indices were spcw = 0.23, spc = 0.23, bcs = 0.00, and wcp = 0.46 for simulated population data. Mean ClusterCirc indices across the simulated samples were M(spcw) = 0.24 (SD = 0.01), M(spc) = 0.24 (SD = 0.01), M(bcs) = 0.06 (SD = 0.02), and M(wcp) = 0.47 (SD = 0.02). These values would be expected for perfect circumplexity of clusters in the population and the given sample size. As the empirical spcw-data did not exceed the cutoff value for deviation from circumplexity (mean spcw-simu + 2.33 standard deviations, α = 1%), circumplex model fit of the IAS would be considered acceptable by the cutoff introduced before.
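With the rounded values reported above, the cutoff can be reproduced approximately as follows. Because the reported mean, standard deviation, and empirical index are rounded to two decimals, this is only an approximate check; the exact cutoff computed from unrounded values may differ slightly.

```latex
\text{cutoff} \approx M(\mathit{spc}_{w\text{-simu}}) + 2.33 \cdot SD(\mathit{spc}_{w\text{-simu}})
             = 0.24 + 2.33 \cdot 0.01 = 0.2633,
\qquad
\mathit{spc}_{w\text{-data}} = 0.26 < 0.2633 .
```

The empirical value lies close to the cutoff, so the conclusion of acceptable fit at α = 1% would not necessarily hold at a larger α level.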

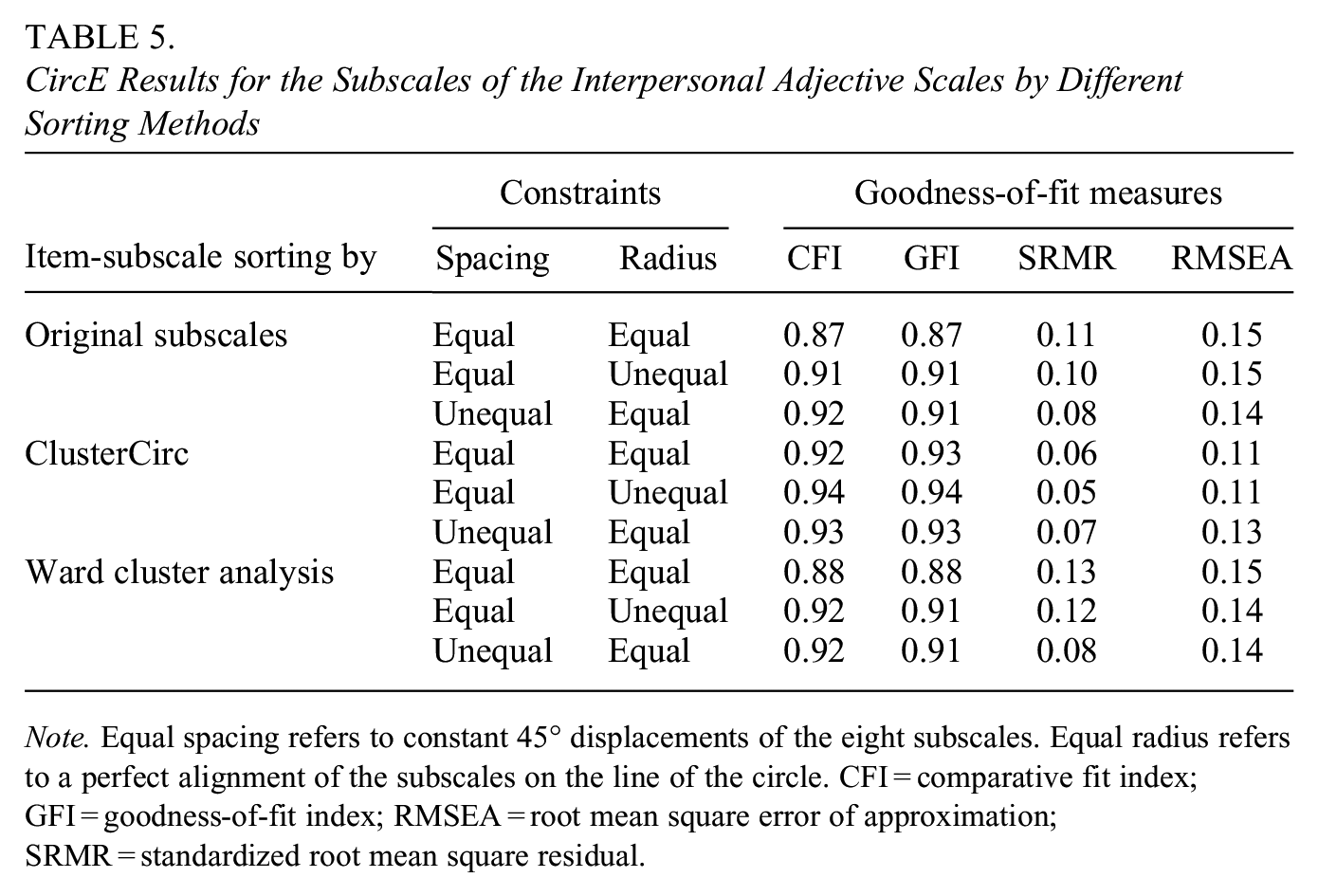

To further investigate the subscales suggested by ClusterCirc sorting, we performed model tests by CircE on the level of subscales, which allows for a confirmatory analysis to test the circumplex model, on the IAS data (Grassi et al., 2010; Nagy et al., 2019). To this end, we calculated sum scores of the items for the original subscales, the subscales according to ClusterCirc sorting, and those suggested by Ward cluster analysis with squared Euclidean distances as the default setting when using Ward’s linkage method. We entered the sum scores into CircE and put different combinations of equality constraints for spacing (equal 45° displacements) and radius (perfect alignment on the circle) on the subscales. As can be seen in Table 5, the circumplex fit of the sum scores based on ClusterCirc sorting was better than the circumplex fit of the sum scores according to the original subscales and cluster analysis for all combinations of constraints.

CircE Results for the Subscales of the Interpersonal Adjective Scales by Different Sorting Methods

Note. Equal spacing refers to constant 45° displacements of the eight subscales. Equal radius refers to a perfect alignment of the subscales on the line of the circle. CFI = comparative fit index; GFI = goodness-of-fit index; RMSEA = root mean square error of approximation; SRMR = standardized root mean square residual.

Discussion

In this research, we present ClusterCirc, a new method that sorts items into clusters based on optimal circumplex spacing of items and clusters. In contrast to other sorting and clustering procedures, it is specifically designed for the investigation and development of circumplex models and inventories. In contrast to existing methods to investigate circumplex structure, ClusterCirc analyzes circumplexity on the level of items and clusters simultaneously based on an objective circumplex criterion. ClusterCirc projects the items on a circular structure and divides the circle into segments of equal size to find item clusters for which the item-cluster spacing index spcw is optimal. In this process, ClusterCirc minimizes angular within-cluster variance of items and simultaneously optimizes the equal spacing criterion of circumplex structure. The relative importance of each of them can be adjusted (up to ignoring one of them completely) depending on the researcher’s preference.

Interpretation of Results: Simulation Study

The results of the simulation study support the use of ClusterCirc for revealing circumplex item clusters when circumplex structure can be expected in the population. With increasing item heterogeneity (as indicated by greater within-cluster variance), ClusterCirc seems to reveal circumplex clusters better than conventional cluster analysis. Differences in results between ClusterCirc and cluster analysis can be attributed to three aspects: the distance measure, the criterion to be optimized, and the clustering procedure. Item input was the same for all procedures in the simulation study (item positions from PCA or CircE) to counteract possible differences due to different inputs. However, ClusterCirc was based on angular distances of item positions, whereas Ward cluster analysis was performed with squared Euclidean and Minkowski (2) distances (Euclidean distances), and k-means clustering was also performed with Euclidean distances. Both ClusterCirc and all cluster analysis procedures minimize distances between items within clusters, while ClusterCirc also optimizes equal spacing between clusters. For the clustering procedure, ClusterCirc performs a brute-force search for the optimal division of the circle into same-sized segments, Ward cluster analysis pairs nearest items and successively enlarges clusters in a hierarchical fashion (Breckenridge, 2000; Morey et al., 1983), and k-means iterates across different cluster centroids and assigns items to the closest centroid (MacQueen, 1967).
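How the choice of distance measure can change which items appear closest is shown in the following minimal sketch. It assumes, purely for illustration, that each item is represented by an angle and a radius (vector length) in the two-dimensional plane; the three items and their values are hypothetical and not taken from the simulation study.

```python
import math


def angular_distance(a_deg, b_deg):
    """Smallest absolute difference between two angles in degrees (0 to 180)."""
    d = abs(a_deg - b_deg) % 360.0
    return min(d, 360.0 - d)


def euclidean_distance(a_deg, a_r, b_deg, b_r):
    """Euclidean distance between two items given as (angle, radius)."""
    ax, ay = a_r * math.cos(math.radians(a_deg)), a_r * math.sin(math.radians(a_deg))
    bx, by = b_r * math.cos(math.radians(b_deg)), b_r * math.sin(math.radians(b_deg))
    return math.hypot(ax - bx, ay - by)


# Hypothetical items as (angle in degrees, radius)
item_a = (10.0, 0.9)
item_b = (20.0, 0.4)  # close to A in angle, but with a much shorter vector
item_c = (40.0, 0.9)  # farther from A in angle, same vector length

# Angular distances ignore the radius: A is closer to B than to C.
print(angular_distance(item_a[0], item_b[0]), angular_distance(item_a[0], item_c[0]))

# Euclidean distances mix angle and radius: here A is closer to C than to B.
print(euclidean_distance(*item_a, *item_b), euclidean_distance(*item_a, *item_c))
```

In this constructed case the two measures disagree about the nearest neighbor of item A, which illustrates one way in which the distance measure alone could lead to different clusters.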

Results for ClusterCirc, Ward, and k-means cluster analysis were similar only in non-hierarchical conditions with equal cluster spacing in the population and zero within-cluster variance, where items were perfectly clustered on their cluster centroid. However, this scenario is rather unlikely in real-life data. In all conditions with greater-than-zero item heterogeneity, ClusterCirc outperformed cluster analysis in revealing the intended population circumplex structure.

In conditions with small item heterogeneity, differences between ClusterCirc and Ward cluster analysis were small: they were non-existent in population data and almost vanished in samples of n ≥ 300. However, performance of ClusterCirc was substantially better than Ward and k-means cluster analysis in all conditions with medium or large item heterogeneity within clusters, for both population and sample data. Apart from minimizing within-cluster distances, which is a goal of both cluster analysis and ClusterCirc, ClusterCirc also maximizes equal spacing between clusters (if not disabled by the user). Thus, results for modeled clusters with equal spacing can also be attributed to the fact that ClusterCirc includes cluster spacing in its search criterion spc w, whereas cluster analysis does not. Therefore, results for conditions where equal spacing of clusters was violated are of interest in evaluating ClusterCirc’s ability to find circumplex clusters. Results for conditions with unequal spacing show that ClusterCirc can also reveal these clusters in the majority of cases. However, the difference in sorting accuracy of ClusterCirc in the samples between equally spaced and non-equally spaced clusters (medium item heterogeneity within clusters) was approximately 14%. Hence, ClusterCirc is most effective if population clusters are close to equal spacing but is also helpful in detecting population clusters that are not equally spaced. Ward and k-means cluster analysis, however, are likely to find different clusters than ClusterCirc in the case of unequally spaced clusters and might not necessarily reveal population circumplex clusters with greatest within-cluster proximity of items. Even though k-means yielded better results than Ward cluster analysis in these conditions, our results suggest an advantage of ClusterCirc over cluster analysis in the case of non-equally spaced clusters as well.

In many circumplex measures, there is a third, overarching factor in addition to the two circumplex factors (Acton & Revelle, 2002; Hopwood & Good, 2019; Weide et al., 2021; Wilson et al., 2013). In such hierarchical models, hierarchical methods like Ward cluster analysis might have an advantage over ClusterCirc, a non-hierarchical procedure, in detecting population clusters. Contrary to this expectation, we found overall better performance of ClusterCirc in the hierarchical conditions as well. This suggests that ClusterCirc is suitable to detect circumplex clusters even in the presence of a third, overarching factor in addition to the circumplex factors. Nonetheless, sorting accuracy in the samples decreased for ClusterCirc in data with hierarchical models as opposed to equivalent conditions without a third factor. However, ClusterCirc detection rates were substantially improved by using CircE angles instead of PCA angles as input in the analysis, especially when population circumplex clusters were not equally spaced. This could be attributed to the fact that CircE automatically takes into account a possible third factor by including the latent correlation of items with a distance of 180° (Browne, 1992; Grassi et al., 2010), whereas PCA components might not be perfectly suited to distinguish between the circumplex axes and the general factor (Fabrigar et al., 1997). Similarly, the ClusterCirc spacing index spc w was also slightly smaller for CircE input when compared to PCA input in some conditions with hierarchical data structure, which indicates enhanced circumplexity of the results.

As could be expected based on the hierarchical approach of the method, Ward cluster analysis performed better in conditions with a hierarchical model than in the equivalent conditions without a hierarchical model. CircE angles did not improve sorting accuracy of Ward cluster analysis. k-Means cluster analysis also performed slightly better in hierarchical than in equivalent non-hierarchical conditions, and its sorting accuracy could be enhanced by using CircE angles as input, albeit to a much lesser extent than for ClusterCirc. Thus, ClusterCirc with CircE angles as input yielded the best results of all investigated procedures in conditions with a hierarchical model.

Interestingly, item distances within the intended population clusters were modeled to be smaller than distances between neighboring items of adjacent clusters in all conditions. Yet, Ward and k-means cluster analysis, which aim at minimizing such within-cluster distances, sorted items into different clusters than the intended ones in many conditions, even for population data (without any sampling error). In contrast, ClusterCirc found the clusters with smallest within-cluster distances in all conditions for population data and in the majority of cases for sample data. Regarding differences between cluster analysis techniques, we found an advantage of hierarchical Ward clustering in conditions with equal cluster spacing and an advantage of k-means clustering in conditions with unequal cluster spacing. Using Minkowski (2) distances in Ward cluster analysis slightly improved sorting accuracy of Ward cluster analysis (0–4% depending on the condition), showing that the distance measure in clustering techniques is of relevance when circumplex structure can be expected.

For all procedures, complexity of the data as modeled in the sub-conditions (e.g., larger item heterogeneity, many variables, many clusters, different item communalities) affected sorting accuracy in the samples. For ClusterCirc, this effect was compensated, and sorting accuracy was enhanced, by increasing sample size in all conditions, especially when the appropriate input (CircE angles in hierarchical models with unequal cluster spacing) was chosen. In contrast, increasing sample size reliably improved sorting accuracy of Ward and k-means cluster analysis only when circumplex clusters were equally spaced without the presence of a third factor and when item heterogeneity was small. In many conditions with medium or larger item heterogeneity, sample size hardly improved sorting accuracy of Ward and k-means cluster analysis. Hence, the adverse effects of data complexity on sorting accuracy can reliably be counteracted by increasing sample size in ClusterCirc, whereas such linear effects cannot be expected for Ward and k-means cluster analysis.

When using ClusterCirc on complex data, sample size should be large enough to ensure a high chance of detecting circumplex clusters in the population and sorting items accordingly. The detection or sorting accuracy can be estimated by ClusterCirc-Simu, the tailored simulation study for the conditions of a given dataset. ClusterCirc-Simu also gives ClusterCirc indices for the simulated population and samples (mean and standard deviation) to compare them with the empirical ClusterCirc results. As suggested by the present large simulation study, spc w can be expected to be below 0.30 in most cases with population circumplex structure.

Interpretation of Results: Empirical Example

The empirical findings for the circumplex inventory IAS (Alden et al., 1990; Jacobs & Scholl, 2005) illustrate the usefulness of ClusterCirc to find circumplex clusters as a basis for subscales in real data. For most IAS items, ClusterCirc suggested sorting them into the same subscales as the original IAS subscales, which validates circumplex spacing of the IAS. However, confirmatory CircE analysis of the resulting subscales revealed that the slightly modified ClusterCirc IAS subscales yielded better circumplex fit than the original IAS subscales and subscales suggested by Ward cluster analysis. Nonetheless, even though circumplex model fit of subscales was improved by ClusterCirc, none of the suggested subscales reached good model fit, with CFI < 0.95 for all sorting methods (Hu & Bentler, 1999). This could be due to the fact that ClusterCirc does not optimize circumplexity solely on the subscale level, but considers items and subscales simultaneously. Furthermore, model and measurement error of the IAS data might reduce circumplex fit of the subscales as well.

Interestingly, ClusterCirc sorting seems to improve not only circumplex spacing, but also the radial alignment of the subscales on the circle, as suggested by the CircE results for equality constraints on radius. Hence, ClusterCirc could be used to optimize both spacing and radius of subscales in accordance with the circumplex model (Browne, 1992; Gurtman & Pincus, 2000).

Regarding Cronbach’s α as a conventional scale measure, our findings suggest that ClusterCirc produces subscales with similar Cronbach’s α as the original IAS subscales. However, Cronbach’s α of the ClusterCirc subscale “JK/Unassuming-ingenuous” was substantially lower than for the original JK subscale. This is probably due to the small number of items in the ClusterCirc JK subscale: ClusterCirc sorted most of the original JK items into the neighboring subscale “HI/Unassured-submissive.” The JK items produced most of the deviations between ClusterCirc and original IAS sorting. This suggests that the original JK items might be too similar to the HI items. For example, “compliant” (JK1) and “obedient” (JK2) are very close to “submissive” (HI6) in meaning. Likewise, “susceptible” (JK3) can be used as a synonym for “influencable” (HI8), and being “cautious” (JK5) can be paralleled with being “hesitant” (HI4). And someone who is “conflict averse” (HI7) is likely to be “indulgent” (JK4) as well. This finding is in line with previous research on the interpersonal circumplex, where the two subscales HI and JK have been found to be closer than the expected 45° as well (Alden et al., 1990; Jacobs & Scholl, 2005; Weide et al., 2021). To better differentiate between the two subscales, it could be beneficial to generate new items that better capture the domain “JK/Unassuming-ingenuous” and disentangle it from its neighboring domain. Considering item indices of the IAS data, our results suggest that ClusterCirc sorting can improve conventional item indices in addition to improving overall circumplexity of the instrument, which further supports the use of ClusterCirc.
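The dependence of Cronbach’s α on the number of items can be made concrete with a short sketch. It uses the standard formulas for raw and standardized α; the function names, the mean inter-item correlation of .40, and the item counts of 8 and 3 are hypothetical illustrations and not values from the IAS data.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Raw Cronbach's alpha; rows are respondents, columns are items."""
    k = len(rows[0])
    item_vars = [variance(col) for col in zip(*rows)]  # variance of each item
    total_var = variance([sum(r) for r in rows])       # variance of sum scores
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def standardized_alpha(k, mean_r):
    """Standardized alpha for k items with mean inter-item correlation mean_r."""
    return k * mean_r / (1 + (k - 1) * mean_r)


# Hypothetical example: same mean inter-item correlation, different scale length
print(round(standardized_alpha(8, 0.4), 3))  # longer subscale
print(round(standardized_alpha(3, 0.4), 3))  # shorter subscale

# Tiny hypothetical data set with three perfectly consistent items
rows = [[1, 1, 1], [2, 2, 2], [3, 3, 3]]
print(cronbach_alpha(rows))
```

With the mean inter-item correlation held constant at the assumed .40, standardized α drops from about .84 for eight items to about .67 for three items, so a shortened subscale can show lower α even if its items are no less homogeneous.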

Limitations of the Study Design and Future Research

We examined ClusterCirc in an extensive simulation study and for an empirical example using the IAS. It is appropriate to recognize some limitations of the present research, which should be addressed in future research. First, ClusterCirc could be tested in more extensive simulation studies. It should be noted that we defined sorting accuracy as detection rate of modeled population clusters. In our simulation study, many modeled population clusters were evenly distributed across the circle (equal spacing), dividing the circle into segments of the same size. The reason for preferring symmetric segmentation in circumplex models is that asymmetric segments would not necessarily be interpreted as supporting a circumplex structure. If, for example, most items are on the right side of the circumplex, this would indicate that there is a rotational position of the axes where most items will have large loadings on the first component, and a few items will have large loadings on the second component (as in unrotated PCA). Therefore, only slight deviations from symmetric segmentation are compatible with a circumplex interpretation (unless the rotational position of the axes is fixed a priori).

However, violations of assumptions are of interest in simulation studies, which is why we included conditions with unequal cluster spacing or asymmetric segmentation of the circle. In future research, it would be possible to model more conditions in which equal cluster spacing or same-sized segmentation of the circle is violated, for example, by further shifting of cluster centroids or by uneven distribution of items within clusters. Such scenarios would probably lead to less favorable results for ClusterCirc and favor cluster analysis to a greater extent. It is also possible to model greater variation of communalities. It would also be interesting to investigate the effects of such scenarios on the size of the ClusterCirc indices to enhance interpretation of empirical ClusterCirc indices.

Moreover, we decided that a cluster would consist of items that were closer to one another than to items of adjacent clusters. While we found this definition of clusters suitable for the present research, it is of course possible to define intended clusters in a different way depending on the underlying model. Therefore, the present results are limited to the definition of population clusters with smallest within-cluster distances of items.