Abstract

Aggregated data meta-analyses indicate a correlation between instructional leadership and student achievement. However, it is unclear to what extent this relationship can be generalized across cultural contexts, as most primary studies stem from Anglophone regions. Drawing on international large-scale assessment data, this 3-level individual participant data (IPD) meta-analysis examines this relationship over a 6-year period using a sample of 1.5 million students in more than 50,000 schools from 75 countries. The findings show that the mean correlation is close to 0 and that the relationship between instructional leadership and student achievement varies significantly across contexts. This is mainly due to the level of human development and cultural factors. Implications for policy, practice, and education research are discussed.

Keywords

However, context plays an important role in how leadership is defined and practiced in schools, as well as whether, how, and in what ways it becomes effective (Luschei & Jeong, 2021; Shaked, 2020; Tan, 2018). While research on educational leadership has identified a set of generic leadership styles and behaviors school leaders in various contexts fall back on, the enactment, mechanisms and results of educational leadership remain context-dependent (Walker & Ko, 2011). Consequently, educational leadership, its modus operandi, and its effects are shaped by culture, as well as by other national, regional, and local circumstances (Hallinger, 2018). Nevertheless, until now, “the context in which leadership is enacted has not received much attention” (Antonakis et al., 2004, p. 60) in empirical leadership studies and furthermore is clearly undertheorized, particularly regarding IL (Gümüş et al., 2021; Hallinger, 2018).

This is surprising, as scholars have long indicated that differences in context can be so great that entirely different concepts can be required to answer the same research question about leadership in different contexts (Rousseau & Fried, 2001). In the management and organizational literature, some use the terms “emic” and “etic” to describe the tension that arises from this (Den Hartog et al., 1999; He & van de Vijver, 2015; Klein et al., 2022), with “emic” relating to context-dependent uniqueness and “etic” referring to cross-contextual generalizability (Berry, 1989; Helfrich, 1999). As van de Vijver and He (2017) and others (F. M. Cheung et al., 2011; Schaffer & Riordan, 2003) argue, this dichotomy is nevertheless problematic for cross-cultural research, as it implies that cultural phenomena are either culture-specific or universal, consequently rendering it impossible to study their combination. Following this critique, in cross-cultural leadership research, “a combined approach taking into consideration both the methodological rigor and cultural sensitivity in a study may be preferred” (He & van de Vijver, 2015, p. 190). Thus, He and van de Vijver (2015) suggest three different routes to bridge the emic–etic divide: (a) combining emic and etic approaches, (b) minimizing method bias to reduce nuisance and increasing equivalence between different contexts, and (c) employing multi-level analyses considering culture-level predictors.

Most studies to date empirically investigating IL (and its relation to student achievement) have originated from the Anglophone region (Wu & Shen, 2022). Quantitative cross-cultural comparative studies even with three or more countries are rare in educational leadership research, and there have been nearly no large-scale quantitative comparative studies on the topic of IL (Hallinger, 2018). However, a recent cross-cultural meta-analysis conducted by Karadag (2020) indicated that the majority of studies between 2008 and 2018 investigating the relationship between educational leadership and student achievement came from the Anglophone world. Approximately 95% of all research on the topic is from the “core Anglosphere” (Vucetic, 2020), a group of Anglophone countries representing about 5% of the global population in 2023. As a result, research on the IL–student achievement relationship is emic, as it examines leadership in schools connected to the Anglophone region (Schaffer & Riordan, 2003), which, as in other research areas (e.g., Arnett, 2008; Henrich et al., 2010; Thalmayer et al., 2021), raises the question of how far scholars can generalize such findings on a global scale when they come “from a small and unrepresentative portion of the global population” (Thalmayer et al., 2021, p. 116).

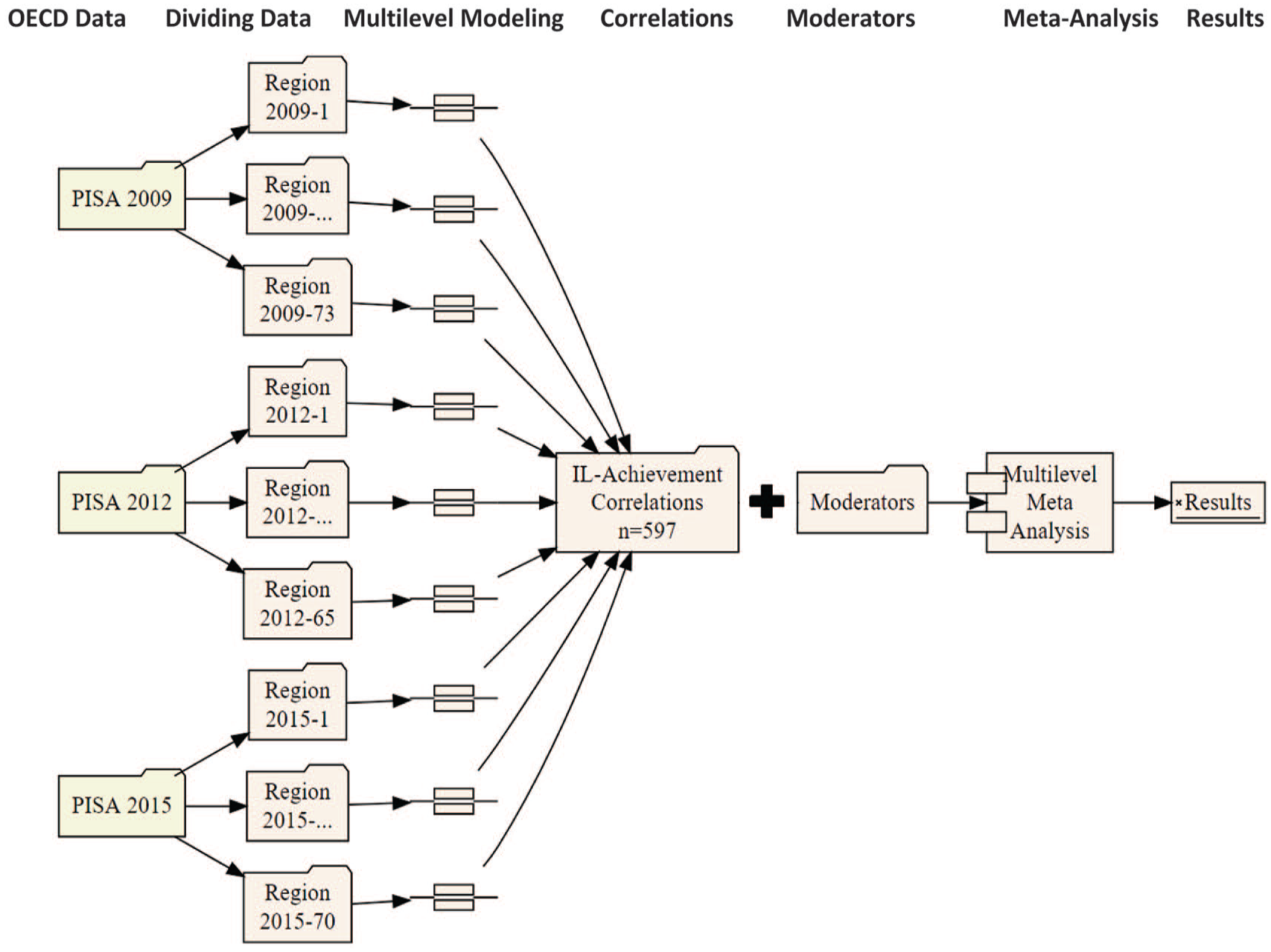

Following the works of van de Vijver (He & van de Vijver, 2015; van de Vijver et al., 2010; van de Vijver & Leung, 2021), we, therefore, bridge the emic/etic tension in cross-cultural research by adopting a novel methodological approach to large data sets called split/analyze/meta-analyze (SAM; M. W. L. Cheung & Jak, 2016). This approach is comparable with a two-step individual participant data (IPD) analysis (Brunner et al., 2022; Campos et al., 2023; Jak & Cheung, 2020) for performing meta-analytical integrative and multilevel data analyses that follow comparable analytical protocols (Brunner et al., 2022; L. Keller et al., 2021).

Using this approach, referred to by some as the “gold standard” of meta-analysis (Chalmers, 1993; Debray et al., 2015; Stewart & Tierney, 2002), we re-analyzed raw data from three cycles of the Organization for Economic Co-operation and Development’s (OECD’s) Program for International Student Assessment (PISA). Excluding countries with no leadership survey data, the sample included K1 = 73, K2 = 65, and K3 = 70 (for a total of K = 75) countries or regions; J1 = 18,162, J2 = 17,549, and J3 = 16,400 schools; and N1 = 515,958, N2 = 480,174, and N3 = 519,334 students across 2009, 2012, and 2015 cycles, respectively.

By this means, our study addresses the following two research questions:

Literature Review

Origins of the IL Concept and Relevant Research

The concept of IL emerged in the United States in the 1930s (Hallinger et al., 2020) and has been one of the most popular research topics in the field of educational administration since the 1980s. From the outset, IL’s focus was on optimizing student learning by actively influencing teaching and instruction in such a way that “develops boys and girls in their full of their capacities in accordance with the rights and responsibilities of the American way of life” (Spears, 1941, p. 31). Consequently, as Gray (1934, p. 417) explained in one of the first scholarly works on the subject: “The unique function of instructional leadership is the improvement of teaching.”

In the early years, “instructional leader” was correspondingly used as a general term to refer to school principals who frequently engage in practices focusing on teaching and learning activities (Bridges, 1967; Brieve, 1972; Edmonds, 1979). While a range of practices related to IL was identified in these years, it was not until the 1980s that comprehensive frameworks were developed to delineate the boundaries of IL and clarify what exactly it entails. The concept’s increased popularity after the 1980s can be attributed at least partly to the emergence of more concrete conceptual models of IL (Bossert et al., 1982; Hallinger & Murphy, 1985; Murphy, 1990). Among others, Hallinger’s model, including its related measurement tool, the Principal Instructional Management Rating Scale (PIMRS), has become predominant (Hallinger, 2011b). The model proposes three dimensions of IL: defining a school mission, managing the instructional program, and developing a positive school learning climate. While the first dimension includes framing and communicating the school goals, the second dimension covers a range of practices such as coordinating the curriculum, supervising and evaluating instruction, monitoring student progress, protecting instructional time, and providing incentives for teaching and learning. Finally, the last dimension involves promoting professional development among teachers and maintaining high visibility in the school.

Extant research on IL has paid great attention to its impact on various school characteristics and outcomes, such as teacher behaviors and development (e.g., efficacy, job satisfaction, commitment, and professional learning), school climate, school effectiveness, and instructional quality (Boyce & Bowers, 2018; Hallinger, 2005; Neumerski, 2013; Pietsch & Tulowitzki, 2017). This line of research has mostly confirmed the positive role of IL in the improvement of such characteristics and outcomes. As expected, the link between IL and student achievement has also received increasing attention in the literature, with encouraging positive findings from various contexts (Hallinger et al., 1996; Robinson et al., 2008; Shatzer et al., 2013). It should be mentioned, however, that most of these studies are based on a traditional conceptualization of IL which prioritizes the role of principals in the improvement of teaching and learning processes. Such studies are also largely based on single sources of data, typically principals or teachers, and cross-sectional designs (Hallinger, 2011b; Özdemir et al., 2022).

IL and Student Achievement

Both the actual conceptualization and the research on IL are deeply rooted in the 1970s effective schools movement (Sun & Leithwood, 2015), which centered on the assumption that improving a school’s quality has the power to increase student achievement (Teddlie & Reynolds, 2000). Seen from this angle, “an effective school is roughly the same as a ‘good’ school [and] performance of the school can be expressed as the output of the school, which in turn is measured in terms of the average achievement of the pupils” (Scheerens, 2000, p. 18). Hence, based on the initial finding that a school leader’s focus on teaching and instruction is strongly associated with improved learning outcomes for students in low-socioeconomic status (SES) schools (Edmonds, 1979), it is not surprising that the relationship between IL and student achievement has been central to the study of the determinants of effective schooling ever since (Hallinger, 2005; Neumerski, 2013).

While it was originally assumed that school leaders had a direct impact on student learning through hands-on leadership practices (Brookover et al., 1979; Rutter et al., 1979), it has become clear over time that such influences are more indirect and mediated by other within-school factors (Bossert et al., 1982; Heck et al., 1990; Scheerens, 2012). Existing research suggests that school leaders are most likely to affect student achievement through their influence on teacher beliefs, practices, and emotions, as well as on school structure and climate (Hallinger & Heck, 1998; Leithwood et al., 2019; Sebastian & Allensworth, 2012). In addition, the effects of leadership might be differentiated based on contextual factors, such as the socioeconomic background of students (Tan, 2018). However, only a few studies have utilized moderation or moderation–mediation analyses to investigate the conditional effects of IL on student achievement (Gümüş et al., 2022; Sebastian & Allensworth, 2019).

Although there are now various research approaches to explain how and to what extent leadership is related to student achievement (Leithwood et al., 2019; Seashore Louis et al., 2010; Witziers et al., 2003) and thus try to clarify the modus operandi, Hallinger’s (2011a, 2011b) reviews of decades of research in this area have concluded that the relationship between leadership and student achievement remains complex and ambiguous. It is clear that such a relationship cannot be fully explained by narrowly defined leadership approaches. Recent research, therefore, assumes that IL merges into leadership for learning (Boyce & Bowers, 2018), a blend of leadership practices that is not solely the responsibility of individuals in formal leadership roles (Daniëls et al., 2019), and that such blended leadership unfolds its impact as a school-wide practice of all those involved in the school (J. Ahn et al., 2021).

Although it is still largely unclear how IL and student achievement are related, various meta-analytical studies suggest that there is an observable and significant relationship between these two aspects. In this regard, it should be noted that all available meta-analyses are limited in various aspects. First, no study has yet incorporated integrative (Curran & Hussong, 2009) or IPD meta-analysis (Debray et al., 2015), which allows for direct meta-analysis of all raw data at the unit level (e.g., students, schools, and so on) that come from multiple studies, which is clearly superior to pooling (Bravata & Olkin, 2001). Second, all available aggregated data meta-analyses include an excessive number of primary studies from the Anglophone world, especially the United States (Wu & Shen, 2022). Third, as the extant studies apply different methodologies and varying research protocols, there is a great amount of methodological heterogeneity between these studies, which can be considered an important bias factor (L. Keller et al., 2021). Consequently, Debray et al. (2015, p. 249) argue: “Unfortunately, when synthesizing published AD [aggregated data], even rigorously conducted meta-analyses can be of limited value.”

Taking these limitations into account, the relationship between school leadership and student achievement is generally rather moderate and, according to the results of a recent meta-meta-analysis by Wu and Shen (2022), should be around r = .17. Grissom et al. (2021), considering only studies with panel data, found in another meta-analysis that school leadership was associated with r = .06 in mathematics achievement and r = .05 in reading achievement. Scheerens (2012) found similar effect sizes with regard to direct (r = .05) and indirect effect (i.e., mediation, r = .06) models in his meta-analysis. In this respect, the effects differ significantly depending on the study design and are significantly smaller in longitudinal than in cross-sectional analyses. However, there seems to be hardly any difference between analyses that take mediator variables into account and those that do not.

In a recent cross-cultural meta-analysis of the relationship between school leadership and student achievement based on 151 articles and dissertations across nations, Karadag (2020) showed that IL has the highest effect (r = .34) on student achievement compared with distributed (r = .30) and transformational (r = .27) leadership models, as well as leadership practices (r = .27). Moreover, in their well-known meta-analysis of studies from the 1970s to the 2000s, Robinson et al. (2008) demonstrated a mean correlation between IL and student achievement of r = .21. Hattie (2017) identified a similar value in his research synthesis on correlates of student achievement. However, Tan et al. (2020) showed in a recent second-order meta-analysis that the correlation of IL with various school outcomes is r = .27, while the correlation is relatively higher for distributed (r = .30) and transformational leadership (r = .34).

On the contrary, other recent meta-analyses suggest that this association might be too high. Tan et al. (2021) found that a focus by school leaders on enhancing teaching and learning was associated with student achievement with an effect size of r = .11 and that effects further varied by achievement domain, with the effects of leadership being strongest in languages and mathematics but undetectable in science. A similar result was reached by Liebowitz and Porter (2019), who found a correlation of r = .11 between IL practices and student achievement in a meta-analysis of 51 studies from 2001 to 2019; they thus concluded that “previous literature may overstate the unique student achievement effects of principals’ time spent on and skill in instructional leadership behaviors” (p. 814).

The Influence of Context on IL and Student Achievement

School leadership and educational performance are context-dependent (Jacobson, 2011; Luschei & Jeong, 2021; Miller, 2018). Nonetheless, the concept of IL as an indicator of successful or effective schooling has spread around the globe, gaining momentum since the turn of the millennium (Hallinger et al., 2020; Reynolds et al., 2014). This is not least due to the fact that ILSA programs conducted around the world since the late 1990s, such as the Progress in International Reading Literacy Study (PIRLS) or PISA, have served as catalysts for the global transfer of educational concepts and policy solutions (Heffernan, 2018; Lewis & Lingard, 2015; Lingard et al., 2015). Thus, as Smith (2016, p. 6) notes, on the one hand, “the reinforcing nature of the global testing culture leads to an environment where testing becomes synonymous with accountability which becomes synonymous with education quality.” On the other hand, ILSAs have led to a proliferation of evidence-based education that, in the tradition of school effectiveness research, focuses on the question of what works (Ydesen & Andreasen, 2020), and thus also to the global transfer of educational concepts, including IL (Bush, 2022; Hallinger & Wang, 2015).

Concepts developed mostly in the Anglophone countries, however, may not be transferable to other parts of the world or, at least, might be “incomplete” (Bowers, 2020, p. 53) in other contexts. Cultures matter on the subject of leadership in all regards (Dickson et al., 2012). Culturally endorsed implicit leadership theories include the cognitive schemata, prototypes, or societal or cultural leadership ideals people in different cultures hold about leaders and leadership, and shape what is understood by leadership, how and what people think about leadership, how leadership is enacted, and how leadership is examined in the context of scientific studies (Den Hartog et al., 1999; T. Keller, 1999; Shaw, 1990). Ultimately, this also affects the effectiveness of leadership, as with regard to cultural sensitivity “effective leadership is . . . in the eye of the beholder” (Judge et al., 2006, p. 203).

There are, however, recent efforts to turn around the concepts exported from the respective cultural and national contexts of the Anglophone world and adapt them to new educational traditions and circumstances (Fischman et al., 2019; Lewis & Lingard, 2015; Niemann et al., 2018). As for the concept of IL, a growing number of studies indicate that it is contextually adapted and shaped not only by culture but also by national, regional, and local circumstances (Bowers, 2020; Brauckmann et al., 2016; Gümüş et al., 2021; Shaked, 2020; Shaked et al., 2021). This contextual sensitivity is also observable in the relationship between IL and potential dependent variables, like student achievement. While studies from Anglophone countries suggest that there is a positive relationship between IL and student achievement, findings from other countries and cultures are sometimes contradictory. For instance, Mora-Ruano et al. (2021) re-analyzed PISA 2015 data from Germany and found negative associations between IL and student achievement. A meta-analysis by Witziers et al. (2003) confirmed that studies conducted in the Netherlands reported significantly lower effect sizes than studies conducted in the United States. Another recent meta-analysis highlighted the differentiated effects of school leadership in various cultures by showing “that the effect of educational leadership on students’ achievement is significantly higher in vertical-collectivist cultures (r = .52) than horizontal-individualistic cultures (r = .31), (Qb = 11.5, p < .05)” (Karadag, 2020, p. 59).

But what could be the reason for this? As Osborn et al. (2014) state, there are three strands of discussion on the relationship between leadership, leadership effects, and context: (a) leader-centric approaches, where context is seen as an influence on various criteria that can be influenced and changed by leadership; (b) situational approaches, which assume that leadership and its effects on dependent variables are contingent; and (c) embedded approaches, where “context is a dominant factor that establishes boundary conditions on the type of leadership displayed as well as the effectiveness of leadership” (Osborn et al., 2014, p. 593).

Cross-cultural research on IL suggests that the third approach may be instrumental in explaining variation in the relationship between IL and student achievement. For example, according to the OECD’s (2009a) Teaching and Learning International Survey (TALIS) findings, school leaders in countries such as Denmark, Iceland, Spain, and Belgium are much less likely to practice IL than their counterparts in Bulgaria, Turkey, Malaysia, or Mexico. Correlations with other school variables, in turn, varied greatly depending on the context. Associations with teaching quality were found only in very few countries (e.g., Malta and Iceland), but with teacher professionalization and innovative teaching practices in many countries. It is striking that the correlations are most evident in countries where IL is used frequently. The related research has also highlighted that emphasis on high-stakes tests at the national level is an important driver of school principals’ focus on improving student achievement (Ng et al., 2015; Walker et al., 2011). This indeed has the power to influence how and to what extent school principals enact various IL practices. In sum, both IL itself and its relationship with other variables, and thus eventually its effectiveness, depend on the context in which IL is enacted.

The reasons to which these differences might be attributed are numerous, although the subject is largely undertheorized (Clarke & O’Donoghue, 2017; Hallinger, 2018). One of the few exceptions is the model of Dimmock and Walker (1998, 2005), which is based on Hofstede et al.’s (2010) framework of cultural dimensions. Here, the authors assume that the cultural code—shared attitudes, values, goals, and practices, “from society, region and locality [and] organizational culture” (Dimmock & Walker, 2005, p. 24)—interacts with educational leadership, its modus operandi, and its effects. There is growing evidence showing that educational leadership and its effects may vary due to partially interacting local, regional, national, cultural, and economic factors (Bowers, 2020; Hallinger, 2018; Miller, 2018).

Furthermore, there is increasing empirical evidence showing that the interaction of educational leadership, student achievement, and the environment is closely interwoven in everyday life (Day et al., 2016; Forrester & Gunter, 2010). Hallinger (2018) posits that the economic, political, and socio-cultural context should be of general influence, while community, personal, and institutional context should have a direct influence on IL, its modus operandi, and its effectiveness. On the one hand, rather proximal factors, like the culture of a school (Sahin, 2011) and trust (Lubis et al., 2021), along with the socioeconomic environment of the school (Gümüş et al., 2022; Hallinger et al., 1996; Pietsch & Leist, 2019; Urick et al., 2022) play a major role in how IL is understood and enacted effectively. On the other hand, more distant factors, such as cultural values (Karadag, 2020) and educational policies (M. Lee & Hallinger, 2012), which are largely beyond the influence of local actors and require specific interpretation in concrete situations (Noman & Gurr, 2020), may also influence the effectiveness of IL.

This Study

With the literature in mind, the following desiderata can be identified: (a) It is well known that leadership and its effectiveness are context-dependent, with the cultural context, in particular, having a major influence; (b) a majority of the empirical studies on the relationship between IL and student achievement come from the Anglophone world, namely the “core Anglosphere”; (c) large-scale quantitative cross-cultural studies investigating the IL–student achievement relation are nearly absent; (d) the studies conducted so far show a high degree of methodological heterogeneity, which might itself affect the generalizability of findings; and (e) all previous best evidence studies (i.e., systematic reviews and aggregated data meta-analyses) are based on these data.

It is therefore unclear to what extent the previous results can be generalized at a larger level and are thus “etic” (Berry, 1969; Brett et al., 1997; Den Hartog et al., 1999), which “emic” contextual factors (can) modulate differences, and how robust the results reported in traditional meta-analyses are when both methodological heterogeneity is reduced and a variety of contexts are considered simultaneously. Hence, this study has two main goals. The first goal is to evaluate the “etic” generalizability of the IL–student achievement association across cultures and time (within cultures, or more precisely, countries). The second goal is to clarify which “emic” macro factors moderate this relationship and thus limit generalizability.

Methods

Data Source

Against this background, this study examines the association between IL and educational achievement in 75 countries and regions worldwide over the course of 6 years. In this regard, the study employs secondary data analysis of the OECD’s PISA 2009, 2012, and 2015. The main objective of PISA studies is to regularly collect and provide internationally comparable data on the performance of education systems, as measured by student achievement or literacy. The target group is 15-year-old secondary school students. The domains of mathematics, reading, and science are continually assessed with the help of competency tests. However, PISA also collects a wide range of other information on education-related topics. In 2009, 2012, and 2015, the international school questionnaires, completed by school leaders, also asked questions about leadership in schools.

Participating countries include OECD nations, as well as partner countries and regions. Typically, a minimum of 150 schools and 4,500 students per country are required; however, if a participating country had fewer than 150 schools, all schools participated. To ensure nationally representative samples for each participating country, PISA uses a two-stage sampling design, with students nested within schools. At stage 1, a random sample of schools is selected; at stage 2, 15-year-old students (35 students per school) are randomly selected within schools. A total of 18,162 schools with 515,958 students were included in the PISA 2009 data set, 17,549 schools with 480,174 students in PISA 2012, and 16,400 schools with 519,334 students in PISA 2015. Most nations participated in all 3 years, but some countries participated less frequently (e.g., Malta only in PISA 2009 and 2015, Panama only in PISA 2009, and Algeria only in PISA 2015). In addition, in rare cases, relevant variables were not collected; for example, in PISA 2009, the school questionnaire was not administered in France, so no data on leadership in French schools are available for that year. For an overview of which data from which countries were included in our analyses, see Supplemental Material in the online version of the journal.

Analytical Approach

Our study applies a novel meta-analytical big data approach suggested by M. W. L. Cheung and Jak (2016), called SAM. In this approach, a big data set is split into many smaller data sets, each of which is analyzed separately, and the results are then combined using meta-analysis. This approach is comparable to a two-step IPD meta-analysis (Brunner et al., 2022; Campos et al., 2023; Jak & Cheung, 2020) in that it explicitly reduces method heterogeneity (or bias) between primary studies (Debray et al., 2015). Recently, Zhang et al. (2018) extended this approach by integrating a multilevel framework. Furthermore, Brunner et al. (2022), Campos et al. (2023), L. Keller et al. (2021), and Blömeke et al. (2021) adapted the approach to ILSAs and expanded it to an integrative data analysis, applying the same data analysis procedure for each subset of data.

In this context, one of the major advantages of using ILSA data for IPD meta-analyses is that these studies are based on large, representative probability samples, thus reducing sample selection bias and determining very precise effect sizes (Brunner et al., 2022). Moreover, such an analysis can simultaneously overcome several limitations of classical single-stage meta-analyses with aggregated data, such as the non-consideration of differences in survey characteristics and aggregation bias, as well as the non-comparability of findings between studies (through the use of different instruments and methods; Campos et al., 2023).

We also followed this procedure, whereby we performed a content validation of the PISA leadership scales in advance to keep them as comparable as possible to the PIMRS. Figure 1 shows a simplified graphical scheme of our SAM approach.

Figure 1. Simplified scheme of the SAM procedure.

To address the concept of context, we followed the suggestions by S. Ahn et al. (2012) for evaluating effect heterogeneity in meta-analyses. We thus applied a systematic procedure to study the influence of different contextual characteristics on the variation of the identified correlations and therefore evaluate generalizability. Hence, in our understanding, context is anything that can threaten the generalizability of the IL–student achievement relation. In the final step, we evaluated the results of this theory-driven analysis by applying a fully data-driven machine-learning approach (Li et al., 2020), which enables identifying the interactions between moderators in meta-analyses by using a classification and regression tree (CART) mechanism.

Computation of Leadership and Achievement Association

To compute the association between leadership and the school-level component of student achievement, the first step involved splitting the available three cycles of PISA data sets for each country, for each achievement domain, and for each plausible value. For example, the 2009 data set was first split by country variable, resulting in 73 different data sets; then, each country’s data set was further split by 3 achievement domains, resulting in 73 × 3 = 219 data sets. Finally, these 219 subsets were further split by plausible values, given that there were 5 values for PISA 2009; this final step resulted in 219 × 5 = 1,095 subsets. In other words, the data setup process was automated to split the PISA 2009 data set into 1,095 pieces. This was required to compute the relationship, as explained in the next paragraph.
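The splitting arithmetic for the 2009 cycle can be sketched as follows. This is a minimal illustration, not the authors' R code: the country labels are hypothetical placeholders, and only the counts (73 countries, 3 domains, 5 plausible values) come from the text.

```python
from itertools import product

# Hypothetical placeholder labels; the real country codes come from the PISA files.
countries = [f"country_{i:02d}" for i in range(1, 74)]  # 73 countries/regions in 2009
domains = ["math", "reading", "science"]                # 3 achievement domains
plausible_values = range(1, 6)                          # 5 plausible values in PISA 2009


def enumerate_subsets(countries, domains, plausible_values):
    """Return one (country, domain, plausible value) key per subset to analyze."""
    return list(product(countries, domains, plausible_values))


subsets = enumerate_subsets(countries, domains, plausible_values)
print(len(subsets))  # 73 * 3 * 5 = 1095
```

Each key identifies one subset that would be passed to the multilevel model in the next step.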

The first two steps of SAM were completed using R code (see Supplemental Material in the online version of the journal) to automate the following: (a) separately for each cycle, the PISA data set (i.e., created by merging individual- and school-level PISA data) was split into subsets across countries, achievement domains, and further across each plausible value, resulting in a subset that had an achievement score, relevant sampling weights, and leadership scale item-level data; (b) for each subset, multilevel models using Mplus (Muthén & Muthén, 2021, see Supplemental Material in the online version of the journal) were employed to compute the association between the school-level component of the achievement and a school-level latent factor defined by leadership item scores; and finally, (c) the results were then collected and stored for the second stage.

The multilevel model mentioned in (b) utilized a multiple imputation approach to combine results obtained from the analysis of each plausible value (as recommended by Arıkan et al., 2020; OECD, 2009b), because PISA recorded 5 plausible values in the 2009 and 2012 cycles and 10 in 2015. In other words, following Campos et al. (2023), the imputation procedure at this point of analysis was not utilized to address missing data, but rather to combine results for different plausible values. Further in the multilevel model, both student and school weights (W_FSTUWT for students and W_FSCHWT or W_SCHGRNRABWT for schools) were taken into account; full information maximum likelihood was used to address missing data, as recommended by Hox et al. (2015); the student-level achievement variable was not declared as a within or between level variable, to activate the latent mean decomposition (Hamaker & Muthén, 2020); and finally, the standardized coefficients were computed to obtain the correlation between the school-level leadership latent factor and school-level latent component of the achievement variable, using the STDYX command (Liu et al., 2014). In the end, a total of 597 correlation coefficients were computed separately for 75 countries across 3 cycles and 3 achievement domains.
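To illustrate how results from several plausible values are typically combined, the sketch below applies the standard multiple-imputation pooling rules (often called Rubin's rules). It assumes these rules are what the imputation-based combination amounts to; the function name and the input numbers are hypothetical and are not taken from the study.

```python
from statistics import mean, variance


def pool_plausible_values(estimates, squared_ses):
    """Pool one parameter estimated once per plausible value.

    estimates   -- point estimates, one per plausible value
    squared_ses -- squared standard errors, one per plausible value
    Returns (pooled estimate, pooled standard error).
    """
    m = len(estimates)
    pooled = mean(estimates)               # average of the point estimates
    within = mean(squared_ses)             # average sampling variance
    between = variance(estimates)          # variance across plausible values (divisor m - 1)
    total = within + (1 + 1 / m) * between
    return pooled, total ** 0.5


# Hypothetical correlations from 5 plausible values, each with SE = 0.02
est, se = pool_plausible_values([0.10, 0.12, 0.08, 0.11, 0.09], [0.0004] * 5)
```

The pooled standard error is larger than any single-run standard error because it also reflects the variation between plausible values.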

Meta-Analysis

In the third step of SAM, we analyzed those 597 correlation coefficients by applying a meta-analysis. Treating the number of participating schools as the corresponding sample size, the correlation between IL and achievement was transformed using Fisher’s r-to-z (Fisher, 1921) formula implemented in the metafor package (Viechtbauer, 2010). These effect-size measures and their heterogeneity were investigated in detail, similarly to the meta-analysis steps followed by Williams et al. (2022). Conditional models included both cycle-specific and country-specific moderators, as explained in the “Measures” section. The mixed-effects meta-regression models were estimated via the rma.mv function in metafor using restricted maximum likelihood, in which effect sizes specific to achievement domains (i.e., math, reading, or science) were allowed to nest in each cycle, and cycles were allowed to nest in countries to account for the dependencies. Using Konstantopoulos’s (2011) notation, a three-level empty meta-analytic model reads as follows:

where

After each mixed-effects model, heterogeneity information was saved as follows: the estimate at the third level (i.e., country), hereafter τL3; the estimate at the second level (i.e., cycles in countries), hereafter τL2; and a combined estimate, τ = √(τL3² + τL2²).
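For orientation, a three-level empty model of this kind is often written in a form like the one below. This is a sketch under assumptions, not the authors' display equation: the indices $d$ (achievement domain), $c$ (cycle), and $j$ (country) and the symbols $\gamma_{0}$, $u_{j}$, $\eta_{cj}$, $e_{dcj}$, and $v_{dcj}$ are chosen here for illustration, and $1/(n_{dcj}-3)$ is the usual large-sample variance of Fisher's z with the number of schools $n_{dcj}$ as the sample size.

$$
\begin{aligned}
z_{dcj} &= \gamma_{0} + u_{j} + \eta_{cj} + e_{dcj},\\
u_{j} &\sim N\!\left(0, \tau_{L3}^{2}\right),\quad
\eta_{cj} \sim N\!\left(0, \tau_{L2}^{2}\right),\quad
e_{dcj} \sim N\!\left(0, v_{dcj}\right),\quad
v_{dcj} = \frac{1}{n_{dcj}-3},\\
\tau &= \sqrt{\tau_{L3}^{2} + \tau_{L2}^{2}}.
\end{aligned}
$$

With the unconditional estimates reported in the Results section (τL3 = 0.082 and τL2 = 0.116), the last line gives τ ≈ 0.142, which matches the reported combined value.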

Multiple Moderator Information Search

To identify interactions between the moderators, we utilized Li et al.’s (2020) multiple moderator meta-analytical CART method by employing the R package metacart, with the limitation that the nested data structure is ignored. This procedure searches for homogeneous subgroups within the given studies by combining moderators: “Unlike logistic and linear regression, CART does not develop a prediction equation. Instead, data are partitioned along the predictor axes into subsets with homogeneous values of the dependent variable—a process represented by a decision tree that can be used to make predictions from new observations” (Krzywinski & Altman, 2017, p. 757). As single regression trees are sensitive even to minor changes in the data, it is advisable to perform the corresponding analyses several times to achieve reliable results (Krzywinski & Altman, 2017). Accordingly, we ran Meta-CART analyses 100 times, once for each multiply imputed data set. A successful Meta-CART implementation is expected not only to reveal additional predictive information but also to serve as a sensitivity analysis.
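The partitioning idea can be sketched with a single binary split on one moderator, chosen to maximize a fixed-effect between-subgroups Q statistic. This is only an illustration of the general technique and not the metacart implementation; the function names, the inverse-variance weights, and the data (including the `hdi` values) are hypothetical.

```python
def weighted_mean(effects, weights):
    """Inverse-variance weighted mean effect size."""
    return sum(w * y for y, w in zip(effects, weights)) / sum(weights)


def q_between(effects, weights, groups):
    """Fixed-effect between-subgroups Q for a given partition."""
    grand = weighted_mean(effects, weights)
    q = 0.0
    for g in set(groups):
        ys = [y for y, gi in zip(effects, groups) if gi == g]
        ws = [w for w, gi in zip(weights, groups) if gi == g]
        q += sum(ws) * (weighted_mean(ys, ws) - grand) ** 2
    return q


def best_split(effects, weights, moderator):
    """Return (threshold, Q_between) for the best binary split on one moderator."""
    values = sorted(set(moderator))
    best = (None, 0.0)
    for lo, hi in zip(values, values[1:]):
        cut = (lo + hi) / 2                       # candidate threshold between neighbors
        groups = [m <= cut for m in moderator]    # True = left subgroup
        q = q_between(effects, weights, groups)
        if q > best[1]:
            best = (cut, q)
    return best


# Hypothetical effect sizes, inverse-variance weights, and moderator values
effects = [0.20, 0.18, 0.15, -0.02, 0.00, -0.05]
weights = [100, 120, 90, 110, 100, 95]
hdi = [0.55, 0.60, 0.65, 0.85, 0.90, 0.92]
cut, q = best_split(effects, weights, hdi)
```

A full tree repeats this search within each resulting subgroup and across all moderators, which is why small changes in the data can change the tree and why repeated runs are advisable.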

Measures

Student Achievement

PISA achievement scores are in the domains of reading, mathematics, and science. As each student only takes a subset of items from the bank of questions, the OECD reports multiple plausible values for each student in each domain, with the aim of approximating the true distribution of the latent achievement variable being measured. The data are available at the student level, and each has an international mean of 500 with a standard deviation of 100 test score points.

Instructional Leadership

School Leadership was introduced into PISA for the first time in 2009 and is measured at the school level through self-reports from school leaders. The scales used in PISA are based on scales developed in another OECD study, the TALIS 2008 (OECD, 2010). In TALIS, the concept of school leadership was mainly framed around the IL model. Therefore, the IL model has also dominated leadership items across different cycles of PISA, while other popular leadership models, such as transformational and distributed leadership, were not systematically represented (see Supplemental Material in the online version of the journal). However, rather than using items directly from any of the existing IL measurement tools (e.g., PIMRS: Hallinger & Murphy, 1985), the OECD developed its own questionnaires to measure school leadership, with some items that might go beyond the IL approach. At present, the OECD has created leadership scales based on data from three PISA cycles (2009, 2012, and 2015). The relevant scales included 14 items in 2009 and 13 in 2012 and 2015 (OECD, 2012, 2014, 2017). However, only five items with exactly the same wording were used at all three measurement points. Moreover, from PISA 2009 to PISA 2012, the measurement was changed from a 4-point to a 6-point Likert-type scale.

Establishing comparability of the measured variables is nevertheless critical for making reliable statements under the approach we have chosen. Because invariance tests are not applicable when different measured variables are used, the conceptual comparability of the measured variables must be established instead (Campos et al., 2023). With this in mind, we chose to reconsider the relevant items to measure IL and create new scales that come closer to the concept of the PIMRS across three PISA cycles. We intended to achieve content validity of the IL scales for different cycles by using expert judgment, following Lawshe’s (1975) method.

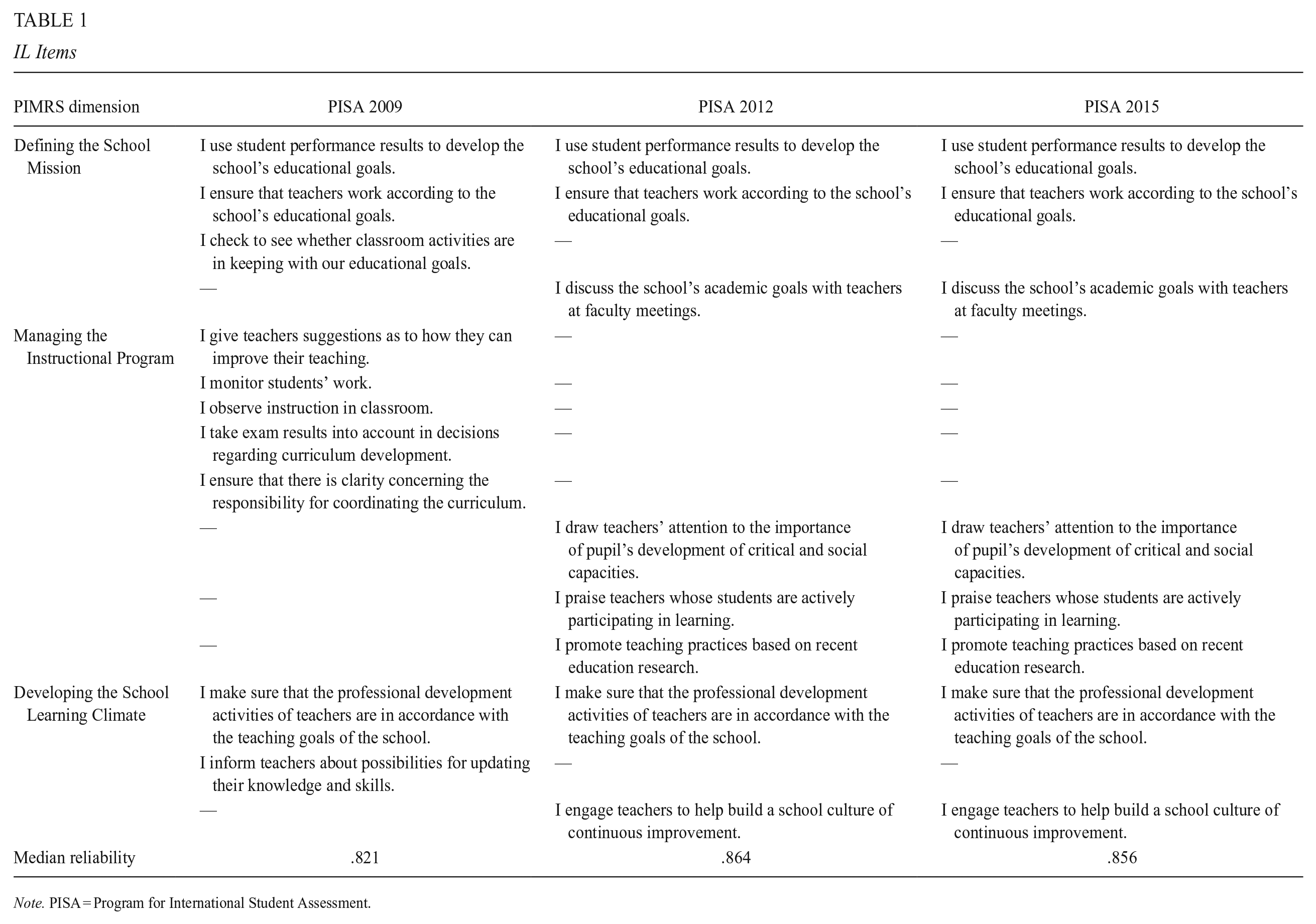

In the first step, we created an expert panel that included 10 international scholars from 5 different countries who had recently published on IL in the field’s core journals (e.g., Educational Administration Quarterly; Educational Management, Administration, and Leadership; Journal of Educational Administration; etc.). We sent all 21 items included in OECD’s leadership scales across three PISA cycles to the panelists and asked them to rate items as “essential,” “not essential but useful,” and “not necessary” against the PIMRS framework. After collecting the experts’ ratings, we kept 15 items, based on the assumption that items receiving the rating of “essential” from more than half of the panelists had some degree of content validity (Lawshe, 1975; see Supplemental Material in the online version of the journal). Next, we assigned each of the selected items to three main dimensions of PIMRS, again based on the views of the panelists, to ensure that all dimensions were represented in each cycle (see Table 1).
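The retention rule can be expressed through Lawshe's content validity ratio, CVR = (n_e − N/2) / (N/2), where n_e is the number of panelists rating an item "essential" and N is the panel size. An item rated essential by more than half of the panel has CVR > 0. The following is a minimal sketch; the item labels and counts are hypothetical and do not reproduce the panel's actual ratings.

```python
def content_validity_ratio(n_essential, n_panelists):
    """Lawshe's (1975) content validity ratio for one item."""
    half = n_panelists / 2
    return (n_essential - half) / half


# Hypothetical counts of "essential" ratings from a 10-person panel
essential_counts = {"item_a": 9, "item_b": 6, "item_c": 5, "item_d": 2}

# Keep items rated "essential" by more than half of the panel (CVR > 0)
kept = [
    item
    for item, n in essential_counts.items()
    if content_validity_ratio(n, 10) > 0
]
```

With 10 panelists, an item rated essential by exactly 5 has CVR = 0 and is therefore not retained under this rule.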

IL Items

Note. PISA = Program for International Student Assessment.

In the next step, we ran bifactor models to investigate the uni-dimensionality of the IL measure. The bifactor s−1 model (Eid et al., 2017; Pokropek et al., 2022), which treated IL as the general factor, with Defining the School Mission as the reference category (as it was the most conceptually stable dimension across all three PISA cycles) and Managing the Instructional Program and Developing the School Learning Climate as the two sub-dimensions, was tested for each country and each cycle to compute the explained common variance (ECV), hierarchical Omega (OmegaH), and percentage of uncontaminated correlations (PUC) to examine the uni-dimensionality, as suggested by Dueber and Toland (2023). If PUC ≥ 0.80, modeling uni-dimensionality is supported; if PUC < 0.80, then ECV should be ≥ 0.60 and OmegaH ≥ 0.70 for the overall score to support uni-dimensionality (Reise et al., 2013). The analyses were conducted with the R package BifactorIndicesCalculator (Dueber, 2021), and the model successfully converged (i.e., OmegaH < 1) in 76% of the cases, resulting in median values of 0.87, 0.83, and 0.89 for ECV, OmegaH, and PUC, respectively. These high bifactor indices, along with the presence of convergence issues (which in itself suggests that even modeling sub-scores is problematic), are considered evidence for a defensible use of the total IL score.
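The decision rule attributed to Reise et al. (2013) can be written as a small check. This is a sketch of the rule as stated in the text, not part of the BifactorIndicesCalculator package; the function name is hypothetical.

```python
def supports_unidimensionality(puc, ecv, omega_h):
    """Apply the PUC / ECV / OmegaH rule of thumb to one set of bifactor indices."""
    if puc >= 0.80:
        return True                            # high PUC alone supports a total score
    return ecv >= 0.60 and omega_h >= 0.70     # otherwise both indices must be high


# Median indices reported in the text: ECV = 0.87, OmegaH = 0.83, PUC = 0.89
median_case = supports_unidimensionality(puc=0.89, ecv=0.87, omega_h=0.83)
```

Applied to the reported medians, the rule supports treating IL as a single total score.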

Consequently, in the final step, we conducted confirmatory factor analyses (CFA) to assess the construct fit of the uni-dimensional latent construct, named IL, across each country and each cycle. The IL factor was defined by 10 items in 2009 and 8 items in 2012 and 2015. The CFA were conducted with the R packages lavaan (Rosseel, 2012) and semTools (Jorgensen et al., 2021) across countries and cycles. To address missing data in these analyses, full information maximum likelihood was utilized (see Supplemental Material in the online version of the journal). The items used, along with their assignment to the PIMRS dimensions, can be found in Table 1 (see Supplemental Material in the online version of the journal, for reliability and model fit statistics).

Moderators

As most studies on the associations between IL and student achievement have been conducted in the Anglophone world, and moreover, there are only a few studies that investigate several measurement points, we examined the generalizability of the findings in a further step of our analysis. Here we followed the suggestions by Cronbach (1982) and applied the UTOS (

Since the psychometric characteristics of the IL measures might be affected by context (Eryilmaz & Sandoval Hernandez, 2021) and further might also interact with other model variables in the sense of a method factor (Becker, 2017), we include the standardized versions

SRMR (

McDonald’s Omega (

Regarding the Unit component of MUTOS, we included the following characteristics of the samples (for details regarding both the rationales and analytical details, see Appendix).

Student–teacher ratio (U )

Reported in the available school-level PISA data, the student–teacher ratio (STRATIO) is aggregated for each country across cycles. In contrast to the other class size variable (CLSIZE), which is essentially a discrete variable collected as part of the school/principal questionnaire in PISA, the STRATIO variable is metric and based on direct information—that is, the number of students enrolled and the number of teachers on staff (Giambona & Porcu, 2018). The country average for this moderator ranged between 7.33 and 31.1, with a mean of 13.9 and distributed approximately normally with a median of 12.9.

Teacher shortage (U )

Computed similarly, the country average for teacher shortage (TCSHORT) was also distributed approximately normally, with mean and median values of 0 and ranging between −1.12 and 2.13.

Accountability pressure (U )

This variable is also available in the school-level PISA data (SC22Q01, SC19Q01, and SC036Q01TA) as a yes/no question. Originally, these variables were coded as 1 for yes and 2 for no. We subtracted 1, hence coding 0 for yes and 1 for no. The average accountability scores are computed for each country and each cycle. These scores were distributed with a slight negative skew and ranged between 0.07 and 0.99, with mean and median values of 0.6, in which a high score indicates that achievement data are less frequently made public.
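The recoding and aggregation can be sketched as follows. The sketch assumes an unweighted mean per country and cycle, which the text does not specify; the function name and records are hypothetical.

```python
from collections import defaultdict


def accountability_scores(records):
    """Average the recoded item per (country, cycle).

    records: iterable of (country, cycle, raw) with raw coded 1 = yes, 2 = no.
    Other raw values are treated as missing and skipped.
    """
    cells = defaultdict(lambda: [0, 0])           # (country, cycle) -> [sum, count]
    for country, cycle, raw in records:
        if raw not in (1, 2):
            continue
        cell = cells[(country, cycle)]
        cell[0] += raw - 1                        # recode: yes -> 0, no -> 1
        cell[1] += 1
    return {key: total / n for key, (total, n) in cells.items()}


# Hypothetical school-level responses
records = [
    ("A", 2009, 1), ("A", 2009, 2), ("A", 2009, 2),
    ("B", 2009, 1), ("B", 2009, None),
]
scores = accountability_scores(records)
```

Under this coding, a score near 1 means that most schools in the country do not make achievement data public.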

Student SES (U )

Available in the student-level PISA data, the country-level averages of the student SES (i.e., ESCS in PISA) showed an approximately normal distribution, with −1.84 and 0.78 as the minimum and maximum values and −0.26 and −0.15 as the mean and median values.

Student sample size (U )

The PISA technical reports include country-level overall student sample sizes across cycles, ranging between 293 and 38,250. The values showed a positively skewed distribution, with a mean of 7,049 and median of 5,366.

School size (U )

Similar to student sample size, the technical reports provide the average within-school sample size for each country. These values ranged between 16.8 and 132, with a mean of 32.4 and a median of 27.8.

Share of private schools (U )

The technical reports provided the share of private schools, which was also positively skewed, with scores ranging between 0 and 93.3, a mean value of 17.6, and a median of 9.36.

Several studies have shown that both student achievement and school leadership may depend on the level of human development (e.g., Crede et al., 2019; Eriksson et al., 2021; Ramírez, 2006); macroeconomic factors such as gross domestic income (e.g., Hanushek & Woessmann, 2017; He et al., 2017; M. Lee & Hallinger, 2012; Stoet & Geary, 2013), wealth inequality within countries (e.g., Condron, 2011; M. Lee & Hallinger, 2012; Stoet & Geary, 2013; Thorson & Gearhart, 2018; Urick et al., 2022; van Ewijk & Sleegers, 2010), and government expenditure on education (e.g., Agasisti, 2014; Gupta et al., 2002); as well as cultural factors (e.g., Fang et al., 2013; Hanushek et al., 2020; He et al., 2017; M. Lee & Hallinger, 2012). Therefore, we consider the following

Human development index (HDI) (

Gross domestic product (GDP) (

GINI index (

Government expenditure on education as a percentage of GDP (GEX) (

Hofstede’s cultural dimensions (

Since we did not examine an intervention in the sense of Cronbach, we did not consider

Results

Overall Relation and Heterogeneity Between IL and Student Achievement

To answer our first research question, we conducted SAM analysis. In the analysis phase, measurement models of IL were first estimated for each country and cycle (see Supplemental Material in the online version of the journal). The fit statistic SRMR ranged from 0.031 to 0.162. For example, particularly good fit values were found in Vietnam, Greece, and Germany, and particularly poor fit values were found in Malta, Jordan, and Montenegro. Regarding the internal consistency of the IL scale, the omega values ranged from 0.656 to 0.944. We found that this also varied between nations; for example, the values were particularly high at 0.944 and 0.920 in Romania and Thailand, and particularly low at 0.656 and 0.699 in Jordan and Malta.

Second, we estimated correlations between IL and student achievement at the school level for each achievement domain nested in each cycle for each country. The correlations between IL and student achievement were positive on average in the first PISA cycle in 2009 (r = .033) and negative on average in the two subsequent cycles (r = −.019 and −.012). Furthermore, large differences in correlation coefficients were observed both between and within countries. The largest positive correlation coefficient was calculated for Luxembourg in the 2015 data set between math and IL (r = .436), but this correlation was lower in the 2009 data set (r = .239). The largest negative correlation was computed for Liechtenstein in the 2012 data set between reading and IL (r = −.478); however, the correlation was nearly 0 (r = .031) for Liechtenstein’s relation between science and IL in 2009. Overall, relatively high positive correlations (r = .10–.30) were observed for a few countries (e.g., Luxembourg and Peru) across 3 cycles, while the correlations were always negative or very close to 0 for some others (e.g., Australia and Belgium).

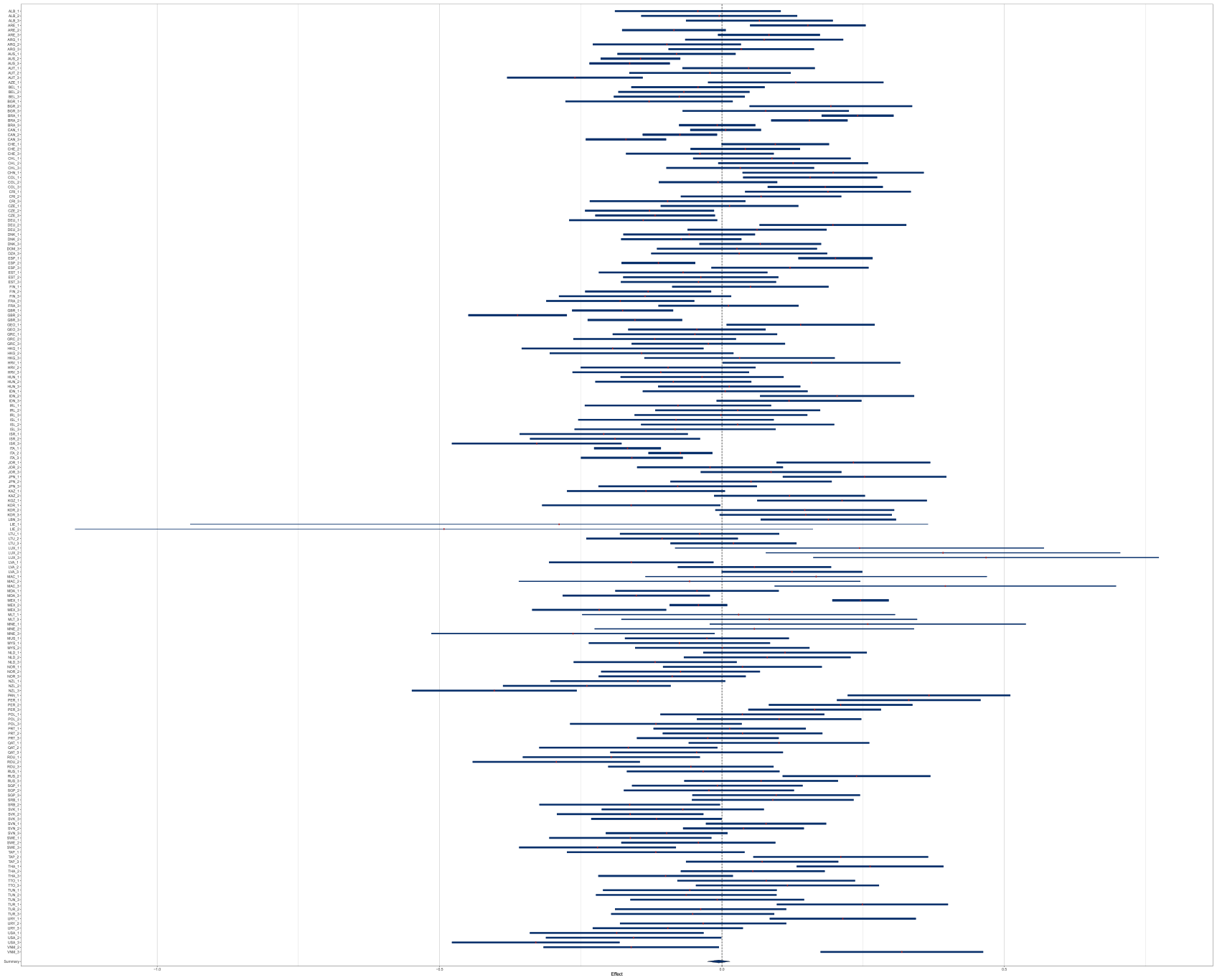

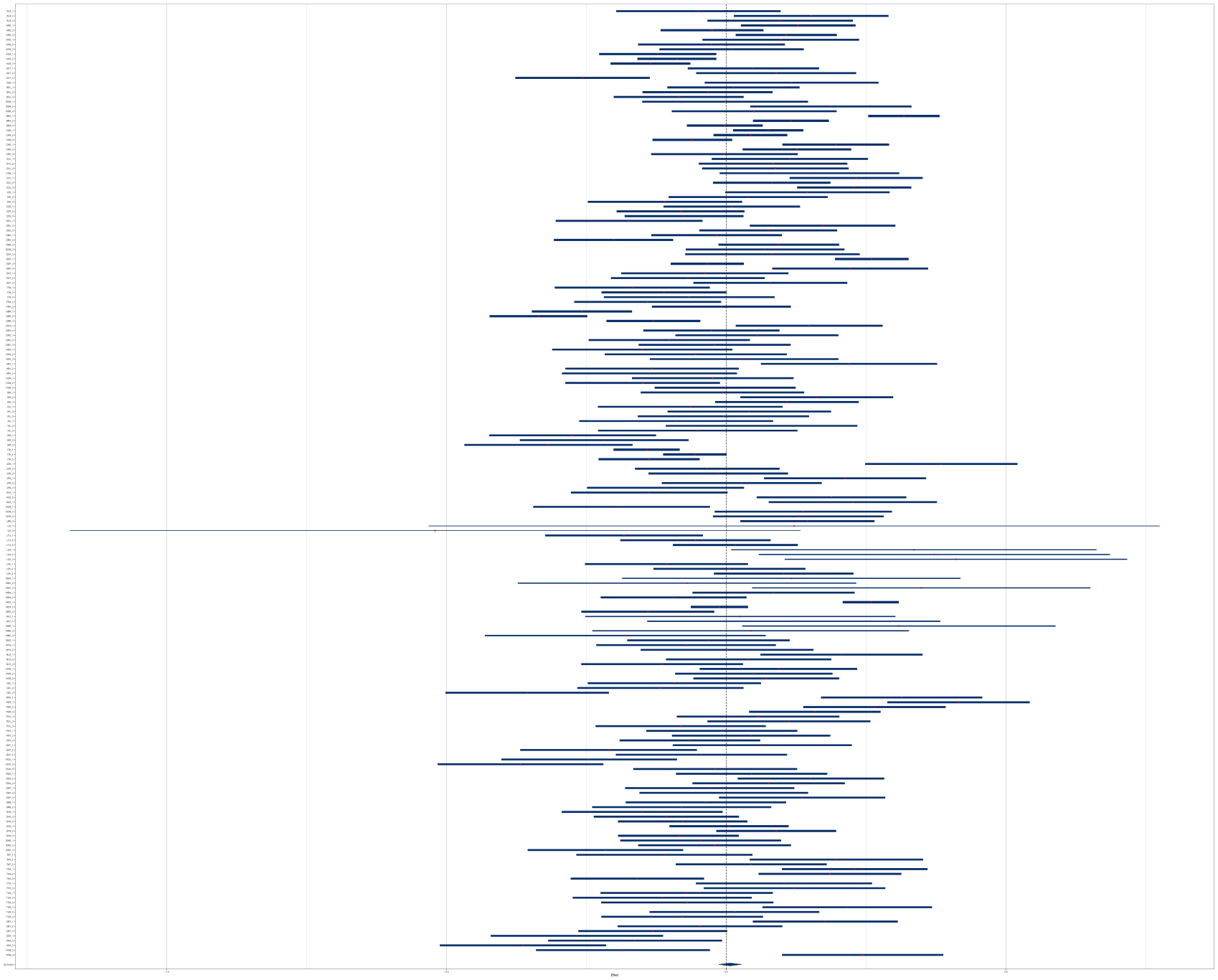

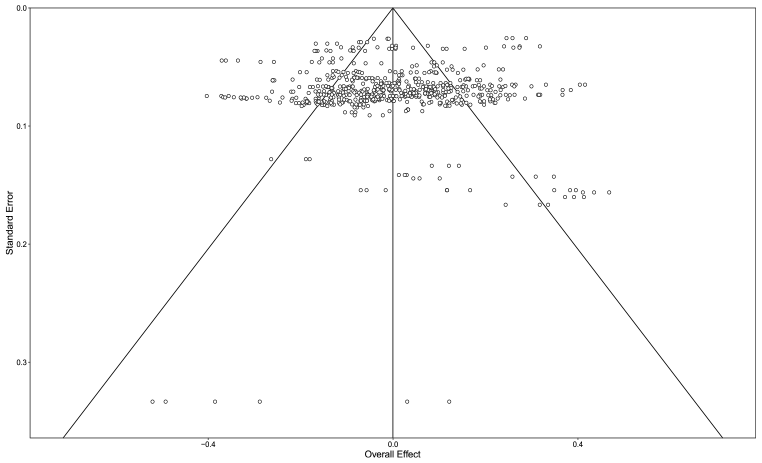

In the next phase, we conducted an integrative data analysis by applying a multilevel meta-analysis. Using schools as the corresponding sample size, the correlations were transformed using Fisher’s (1921) r-to-z formula. The unconditional 3-level model resulted in an average effect size of 0.005, with a lower boundary of −0.021 and an upper boundary of 0.030. The τL3 and τL2 were estimated as 0.082 and 0.116, respectively, hence τ = 0.142. The test for heterogeneity resulted in a Q value of 3,448.416 (df = 596, p < .001). To increase the readability of the forest plots (Kossmeier et al., 2020), domain-specific unconditional models were tested; for the association between IL and math achievement, the average effect size was −0.002 [−0.027, 0.023], for the reading achievement, it was 0.011 [−0.015, 0.036], and for the science achievement, it was 0.001 [−0.025, 0.027], as depicted in Figures 2 to 4. Overall, the heterogeneity was due to differences in effect sizes across cycles and countries but not due to achievement domains; in other words, the relation between IL and achievement was consistent across domains but varied from year to year and among countries. The heterogeneity can be seen in Figure 5, which depicts all 597 correlation coefficients and indicates no bias pattern.
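The transformation step can be sketched in a few lines. The sketch assumes the usual large-sample variance of Fisher's z, 1/(n − 3), with the number of schools as n, and it assumes that the combined heterogeneity estimate is the square root of the sum of the squared level-specific estimates, which reproduces the reported value. The function names and the school count are hypothetical.

```python
import math


def fisher_z(r, n_schools):
    """Fisher's r-to-z transform and its large-sample sampling variance."""
    z = math.atanh(r)
    variance = 1.0 / (n_schools - 3)
    return z, variance


def z_to_r(z):
    """Back-transform a Fisher z value to a correlation."""
    return math.tanh(z)


def combined_tau(tau_l3, tau_l2):
    """Combine level-3 and level-2 heterogeneity estimates (assumed formula)."""
    return math.sqrt(tau_l3 ** 2 + tau_l2 ** 2)


# Largest positive correlation reported in the text, with a hypothetical school count
z, v = fisher_z(0.436, n_schools=50)

# Unconditional estimates reported in the text
tau = combined_tau(0.082, 0.116)
```

For correlations close to 0, as most are here, z and r are nearly identical, so the pooled estimate of 0.005 can be read on either scale.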

Forest plot for the math and IL association.

Forest plot for the reading and IL association.

Forest plot for the science and IL association.

Funnel plot of the 597 studies included in the IPD meta-analysis.

Explanation of Heterogeneity Through Culture and Other Contextual Characteristics

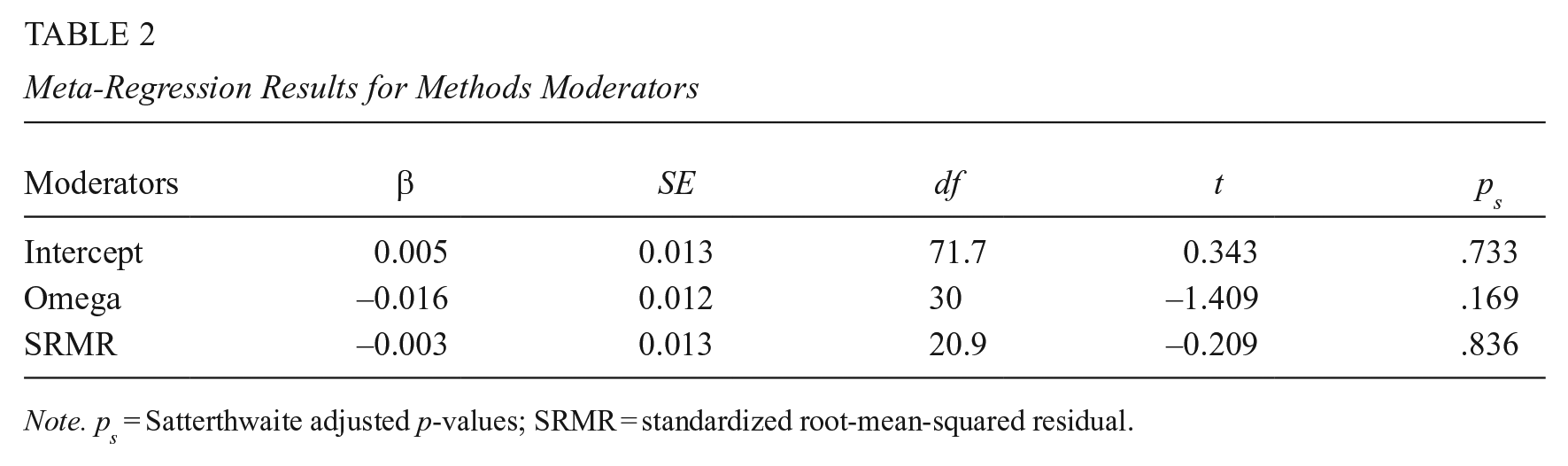

We addressed our second research question by integrating MUTOS moderators into our three-level meta-analysis model. The first conditional model included moderators from subset M: the SRMR and omega values from the CFA for the IL scale in each cycle were standardized and entered into the model. There were no missing data for the methods moderators. The overall effect-size estimate was 0.005 (SE = 0.013, df = 71.7, pS = .733). Compared with the unconditional model, the moderators explained less than 1% of the heterogeneity (τL3 = 0.085, τL2 = 0.114, and τ = 0.142). The test for residual heterogeneity resulted in a QE value of 3,423.027 (df = 594, p < .001). Neither of the moderators significantly explained the heterogeneity. As reported in Table 2, neither the coefficient for the scale reliability (βomega = −0.016, SE = 0.012, df = 30, pS = .169) nor the coefficient for the fit index (βSRMR = −0.003, SE = 0.013, df = 20.9, pS = .836) was significantly different from 0.

Meta-Regression Results for Methods Moderators

Note. ps = Satterthwaite adjusted p-values; SRMR = standardized root-mean-squared residual.

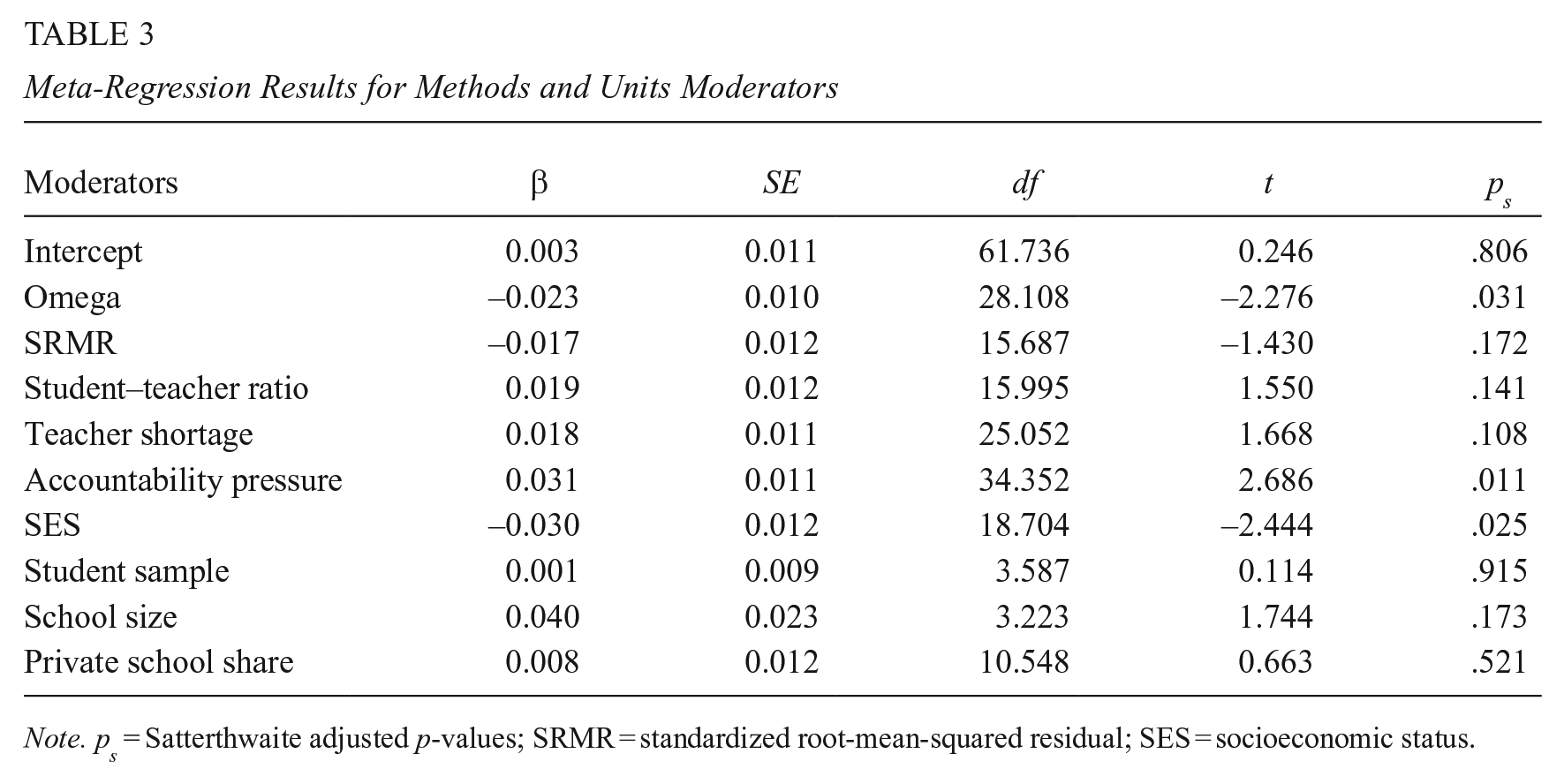

The second conditional model included moderators from subsets M and U; in addition to omega and SRMR, student–teacher ratio, teacher shortage, accountability pressure, student SES, student sample size, school size, and share of private schools were standardized and entered into the model. Although the missing data rate for the U moderators was negligible, multiple imputation was performed to prevent loss of information. The combined results indicated that, when all moderators were held constant at their average (i.e., a value of 0 after standardization), the overall effect-size estimate was 0.003 (SE = 0.011, df = 61.7, pS = .806). Compared with the unconditional model, the moderators explained 22% of the heterogeneity (τL3 = 0.046, τL2 = 0.116, and τ = 0.125). The average QE was 2,433.787. Table 3 reports the meta-regression results for the M and U moderators, of which only three were statistically significant at the .05 level: scale reliability (βomega = −0.023, SE = 0.010, df = 28.11, pS = .031), accountability pressure (βaccountability = 0.031, SE = 0.011, df = 34.35, pS = .011), and SES (βSES = −0.030, SE = 0.012, df = 18.70, pS = .025).
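The share of heterogeneity explained can be approximated from the reported τ values. The sketch assumes that the share is the proportional reduction in total heterogeneity variance relative to the unconditional model; because it uses the rounded τ values from the text, it only approximates the reported percentages. The function name is hypothetical.

```python
def explained_heterogeneity(tau_unconditional, tau_conditional):
    """Proportional reduction in total heterogeneity variance (tau squared)."""
    return 1 - (tau_conditional ** 2) / (tau_unconditional ** 2)


# First conditional model (M moderators): tau unchanged at 0.142
share_m = explained_heterogeneity(0.142, 0.142)

# Second conditional model (M and U moderators): tau = 0.125
share_mu = explained_heterogeneity(0.142, 0.125)
```

With the rounded inputs, the second model yields a share of roughly 0.22 to 0.23, in line with the reported 22%, and the first model yields 0, in line with the reported value of less than 1%.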

Meta-Regression Results for Methods and Units Moderators

Note. ps = Satterthwaite adjusted p-values; SRMR = standardized root-mean-squared residual; SES = socioeconomic status.

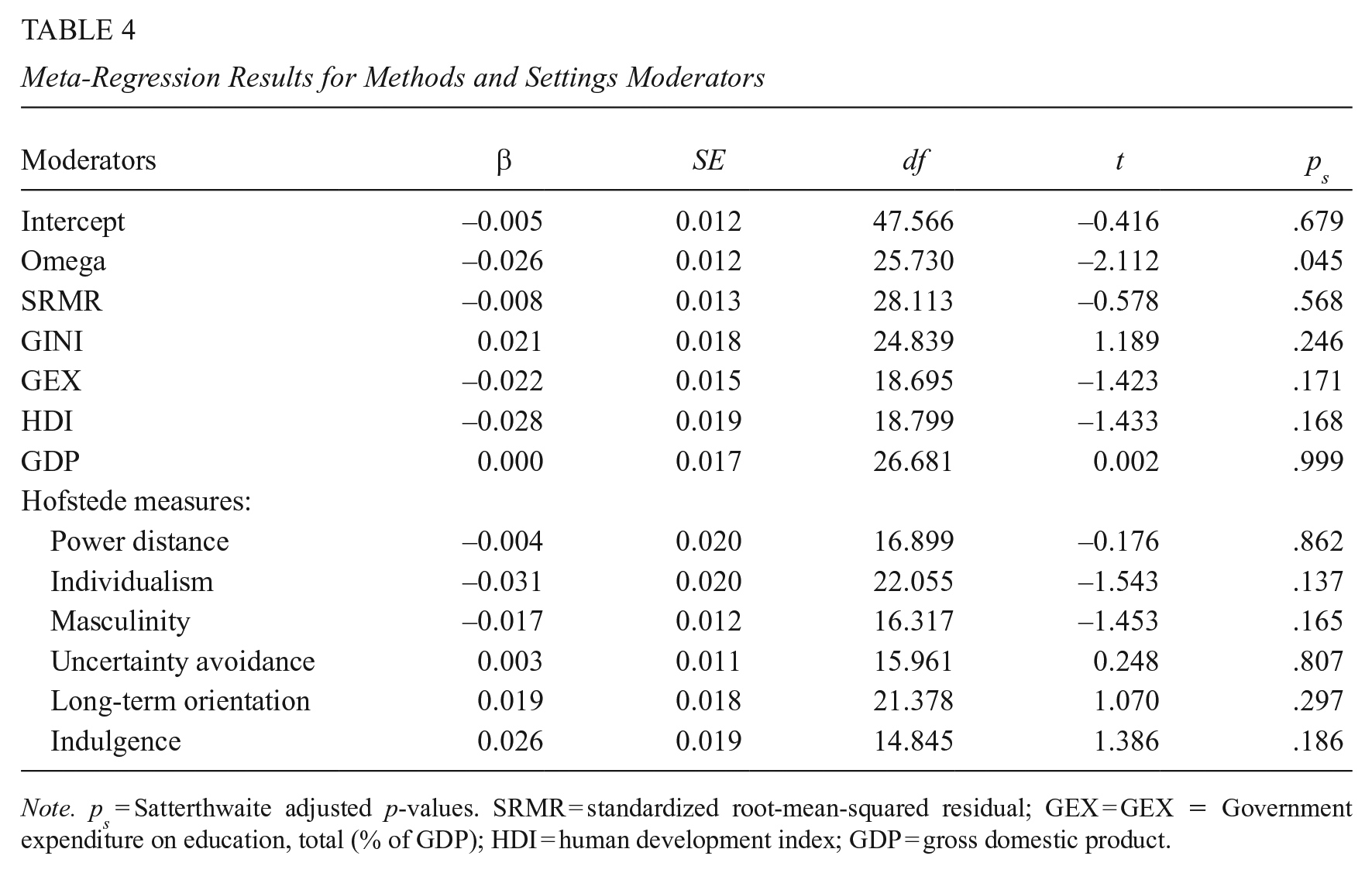

The third conditional model included moderators from subsets M and S; in addition to omega and SRMR, GINI, GEX, HDI, GDP, and Hofstede measures were standardized and entered into the model. The missing data rate for the S moderators reached 25%. The combined multiple imputation results indicated that, when all moderators were held constant at their average, the overall effect-size estimate was −0.005 (SE = 0.012, df = 47.57, pS = .679). Compared with the unconditional model, the moderators explained 20% of the heterogeneity (τL3 = 0.061, τL2 = 0.111, and τ = 0.127). The average QE was 2,339.796. As reported in Table 4, none of the moderators was flagged as significant except the scale reliability (βomega = −0.026, SE = 0.012, df = 25.73, pS = .045).

Meta-Regression Results for Methods and Settings Moderators

Note. ps = Satterthwaite adjusted p-values; SRMR = standardized root-mean-squared residual; GEX = Government expenditure on education, total (% of GDP); HDI = human development index; GDP = gross domestic product.

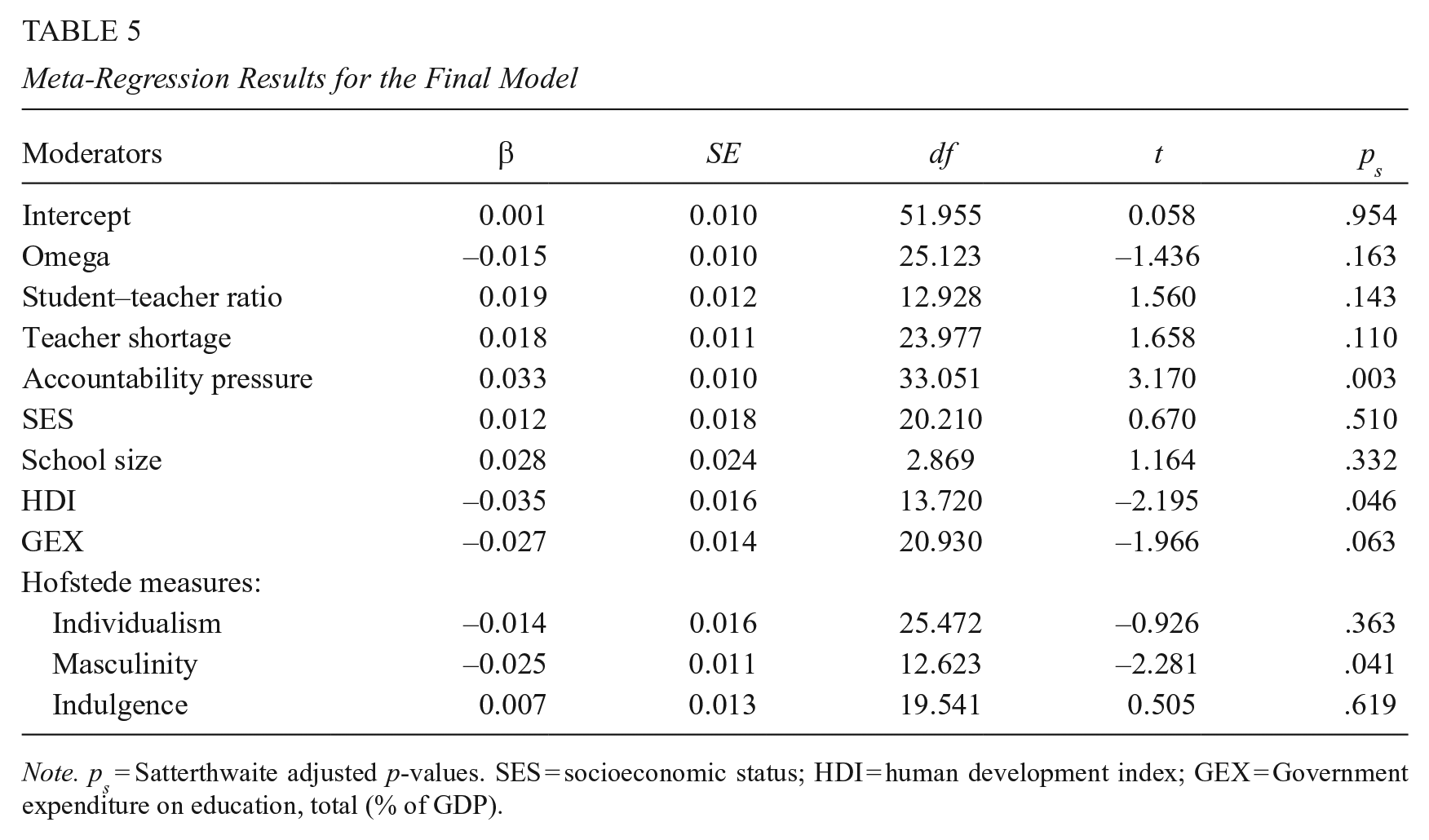

The final conditional model for the MUTOS organizing process included important moderators from prior models. We used relatively liberal selection criteria; thus, the following moderators with an adjusted p-value smaller than .20 were included in the final model: omega; student–teacher ratio; teacher shortage; accountability pressure; SES; school size; HDI; GEX; and Hofstede measures of individualism, masculinity, and indulgence. Compared with the unconditional model, the final model explained 31.3% of the heterogeneity, τL3 = 0.028, τL2 = 0.114, and τ = 0.118. The average QE was 2,144.674. Table 5 reports results from the final model; accountability pressure (βaccountability = 0.033, SE = 0.010, df = 33.05, pS = .003), HDI (βHDI = −0.035, SE = 0.016, df = 13.72, pS = .046), and masculinity (βHof_mas = −0.025, SE = 0.011, df = 12.62, pS = .041) were significant at p < .05.

Meta-Regression Results for the Final Model

Note. ps = Satterthwaite adjusted p-values. SES = socioeconomic status; HDI = human development index; GEX = Government expenditure on education, total (% of GDP).

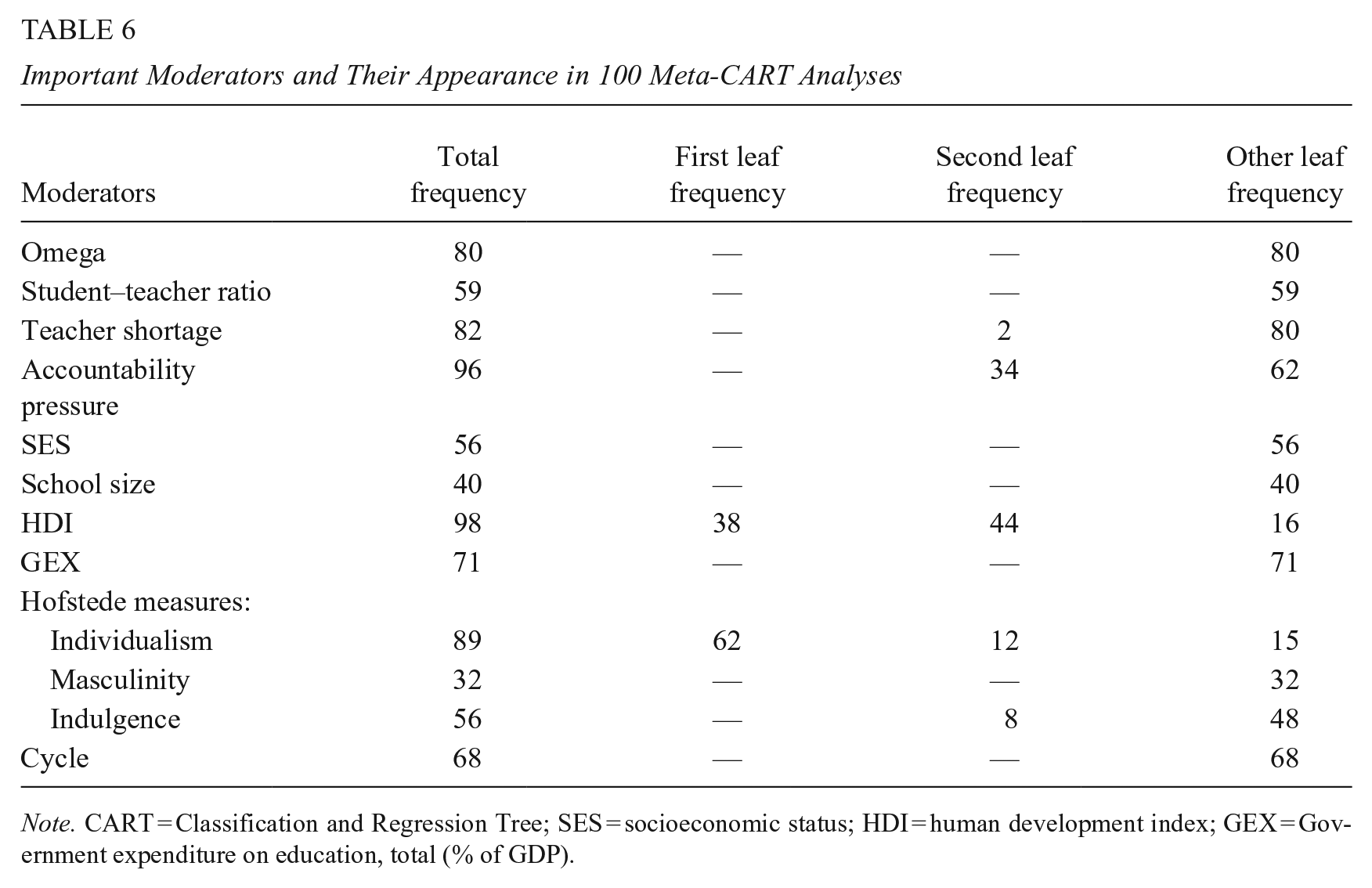

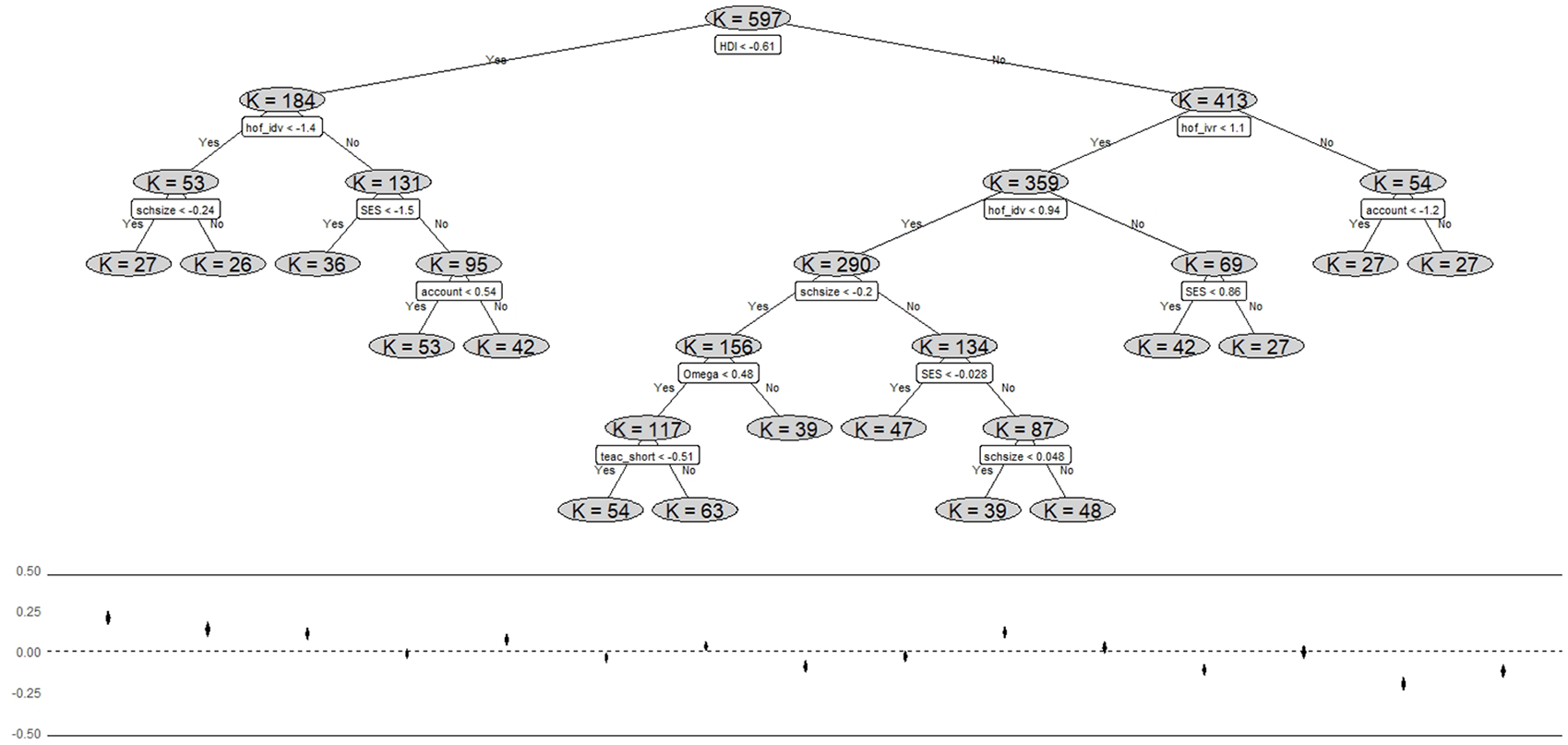

Robustness Check With Meta-CART

Finally, we compared the theory-driven MUTOS approach with a data-driven regression-tree analysis by using Meta-CART. A total of 12 predictors entered the random-effects model, with 11 predictors from the final model and the year as the cycle indicator. For the 100 imputed data sets, 100 Meta-CART analyses were completed, and each predictor was listed at least 32 times as an important moderator (see Table 6). Among the moderators that were listed as important, HDI, accountability pressure, individualism, and teacher shortage appeared in 98, 96, 86, and 82 of the 100 analyses, respectively. Specifically, either HDI or individualism was listed as the first parent leaf in all 100 analyses, indicating that human development and cultural aspects are the most relevant moderators with regard to the IL–student achievement relation. Even though individualism was not listed as a significant moderator in the MUTOS process, it appeared 74 times as the first or second parent leaf in the Meta-CART analyses, indicating that it formed interactions with other variables. For example, as depicted in Figure 6, the Meta-CART analysis of one imputed data set lists HDI as the first parent leaf; as it interacted with individualism, lower individualism values along with lower HDI values resulted in two different subgroups, both with an average effect size larger than 0 (g = 0.203 and g = 0.133), with Hedges’s g values around 0.20 indicating small effects, values around 0.50 indicating moderate effects, and values about 0.80 indicating large effects (Cohen, 2013).

Important Moderators and Their Appearance in 100 Meta-CART Analyses

Note. CART = Classification and Regression Tree; SES = socioeconomic status; HDI = human development index; GEX = Government expenditure on education, total (% of GDP).

An example plot and Hedges’ g values for one of the Meta-CART analyses.

Discussion

Through this study, we investigated the relationship between IL and student achievement in 75 countries across different cultures and a 6-year time span. Based upon data from more than 1.5 million students nested in more than 50,000 schools across the globe, we demonstrated with the aid of a novel meta-analytical big data analysis approach that the relation between IL and student achievement in the domains of reading, mathematics, and science, with a mean value of 0.005, is significantly lower than the effect sizes estimated in traditional meta-analyses. Furthermore, our analyses demonstrate that the relation between IL and student achievement is context-dependent, as the effect sizes ranged from −0.48 to 0.44 for different countries in different cycles. Accordingly, the findings on the investigated relationship cannot be generalized in principle, as they are not etic. It is nonetheless striking that the heterogeneity in the data can only be attributed to a very small extent to achievement domains. On the one hand, this means that our study did not confirm findings from other studies that reported varying correlations in this regard (Tan et al., 2021). On the other hand, the time of measurement and/or the time of data collection, along with the location of data collection, have a major influence on the correlation between IL and student achievement, indicating that, even within countries, the findings on the relationship between these two variables are hardly stable over time.

The most important finding from the first part of the analysis is a relatively weak relationship between IL and student achievement on average compared with the previous meta-analyses. One reason could be that previous aggregated data meta-analyses were mostly limited to studies from the core Anglosphere, while our analysis covers a large number of countries with various educational and socioeconomic contexts. As a result, conflicting results from different contexts may largely have canceled each other out. Another reason could be the understanding of student achievement in most of the existing studies and in PISA. While student achievement is often measured based on school grades or national exams based on school curricula, “PISA examines how well students are prepared to meet the challenges of the future, rather than how well they master particular curricula” (OECD, 2014, p. 3). Therefore, school principals’ IL practices may not be directly transferable to students’ PISA performance. Future research, therefore, might investigate the nature of IL and its relation to student achievement based on various orientations.

By applying the MUTOS (an extended form of UTOS for meta-analyses) approach, meta-regressions revealed which country-specific contextual factors threaten the generalizability of the IL–student achievement relation. The key findings here include that (a) considerable variability stems from the level of human development in a country; (b) cultural factors have a significant influence; and (c) accountability pressure is inversely related to the coupling of IL and student achievement, meaning that, in school systems with a strong emphasis on publicly reporting the outcomes of schooling, associations become looser. A fully data-driven machine-learning regression-tree analysis (CART) supported these findings and demonstrated that the level of human development and cultural factors are the main factors limiting the possibility of generalization. This means that all other potentially influencing variables are subordinate to, or interact with, these two factors; that is, their influence on the relationship between IL and student achievement depends on them. However, the main motives and mechanisms behind the roles of human development and cultural factors deserve specific attention and more in-depth analyses. Therefore, we suggest that scholars pay greater attention to these aspects in future research.

However, it is also striking that the results within countries were hardly stable over time, resulting in an overall heterogeneity formed dominantly by within-country differences, rather than between-country variation. Furthermore, the within- and between-country variance values were significantly higher than those reported in other ILSA-based IPD meta-analyses that examine individual-level effects—that is, the relationship between individual students’ SES and student achievement (Brunner et al., 2022). This suggests that the relationship between IL and student achievement at the school level is context-sensitive, rather than a universal phenomenon, and that the results are also dependent on the samples, instruments, and measures used. This result is even more surprising given that (a) the sampling design of PISA should reduce sample selection bias, (b) the large sample size allows for very precise effect-size estimates, and (c) we controlled for possible unreliability of the measures and contextual factors in the analyses. Therefore, in line with Campos et al. (2023), we argue that future studies should investigate in more detail the effects (or biases) in IPD meta-analyses arising from heterogeneity between samples, the types and characteristics of the measures, and the sampling procedures involved.

Limitations and Areas for Future Research

Many of the key limitations of our study are due to the data we used. First, PISA data are cross-sectional, thus preventing us from drawing causal conclusions. Second, PISA has a focus on the OECD and associated countries. Therefore, the data are far from allowing generalizable statements to be made on a global level, as large parts of Africa, for example, do not participate in the study (van de Vijver et al., 2010). Third, the school sampling in PISA means that, in small countries, data were collected repeatedly in the same schools during all three cycles, whereas in nations with many schools, data were rarely collected within the same schools over time. Thus, in some countries, near-longitudinal data exist at the school level, while in others they do not, which may affect the stability of effects over time. Unfortunately, we cannot control for this based on the available data. Fourth, although we made great efforts to validate the items and scales for IL, we were only able to handle method heterogeneity to a limited extent because the OECD changed the items and scales between cycles. Therefore, the use of raters was the only effective way to ensure conceptual comparability of the scales between countries and cycles. However, whenever possible, statistical comparability should be tested (Campos et al., 2023). This also applies to PISA’s (original) school leadership scale, as it is already evident that a high level of invariance between countries is usually not achieved within individual PISA cycles, and consequently “comparisons made across countries using this scale should be made with caution” (Eryilmaz & Sandoval Hernandez, 2021, p. 17). Fifth, the information on IL comes from school leaders’ self-reports, which may limit the validity of the data. Sixth, this study focuses on only one aspect of school leadership, IL, since there were not enough items in the existing PISA cycles to form scales for other common approaches to school leadership.

In addition, there were other methodological challenges that are important to note. The first methodological challenge was missing data at the country level, with the missing data rate for the S moderators reaching 25%. We used joint modeling and 100 imputations to address the missing data, as Leite et al. (2021) showed that joint modeling performs satisfactorily with 10 predictors, a 30% missing data rate, and only 10 multiple imputations to detect a treatment effect. The second challenge was implementing Meta-CART for a nested data structure, which we could only partially address by introducing cycle as a moderator. The Meta-CART analyses produced findings consistent with the MUTOS results, as HDI and accountability pressure were listed as relevant moderators. However, Meta-CART revealed that Hofstede’s cultural measures might form interactions that were not evident in the MUTOS results. We are sharing the relevant code, data sets, and additional graphs (see Supplemental Material in the online version of the journal) to help others further explore the heterogeneity in the relation between IL and school-level components of student achievement.

Given these limitations and considering the results of our study, we recommend that differences between countries, as well as between measurement occasions within countries, should be considered when surveying and analyzing the relationship between IL and student achievement. We also recommend paying more attention to method heterogeneity in the context of traditional meta-analyses and following, for example, the approach of Schmidt and Hunter (2015). This is because, according to our results, findings from individual studies do not seem to be generalizable either within a country or between countries, not even when comparable analysis protocols and methods are used. In light of this, the question arises as to how to strengthen the significance and usability of ILSAs for cross-cultural research through the stronger inclusion of emic factors. In this context, one may heed the suggestions of F. M. Cheung et al. (2011) to consider emic and etic aspects already when developing IL measures. This is also the first route mentioned by He and van de Vijver (2015), and the only one we were unable to take with the available data. For instance, by using cross-cultural multi-matrix designs (e.g., Gonzalez & Rutkowski, 2010; Kaplan & Su, 2016), etic items could be used to measure the construct in a generalizable way, while, depending on the context, emic items could be used that take the respective cultural uniqueness into account.

Considering that, according to our data, the level of human development is an important determinant of the relationship between IL and student achievement, it is important to investigate how this plays out in countries that do not fall within the OECD framework (van de Vijver et al., 2010)—that is, countries that are less developed and have a low HDI. In this regard, studies show that aspects of human development and culture are partially interconnected and interdependent (Gamlath, 2017; Pereira Sartori Falguera et al., 2021), a finding that our Meta-CART analyses demonstrate too in terms of moderating joint effects in school settings. As in other meta-analyses (Crede et al., 2019), leadership effectiveness was also inversely related to human development in our study: the higher the HDI, the weaker the relationship between IL and student achievement. Since the concept of human development focuses on two simultaneous processes, namely, (a) the building of human capabilities and (b) the ways people use these capabilities to function in society and freely choose between different options (Dasic et al., 2020), this suggests that IL may be particularly effective in contexts where people have fewer “real freedoms” (Sen, 2001, p. 36) to achieve actual functioning, such as being and doing (Sen, 1995). However, since learning in itself can lead to an increase in such freedoms (Nussbaum, 2006), that is, by promoting “reason, personal autonomy, and independence” (Flores-Crespo, 2007, p. 54), one might assume that IL could be a tool for enhancing human capabilities and that its effectiveness diminishes with increasing human development. Clarifying whether this is the case could be another important research topic.

Since we only considered correlations in this study, but IL can be assumed to have an indirect effect on student achievement, our finding of the overall correlation being close to zero indicates no direct linear relation. Thus, it would be worthwhile to conduct further comparable studies using more complex meta-analytical structural equation models (Jak & Cheung, 2020). The challenge will be to systematically include theoretically well-justified mediator variables in such a model. Scheerens (2012) shows that the variables included in such models so far have been very heterogeneous. Consequently, the effect sizes in direct and indirect models hardly differ from each other in his meta-analysis, which makes him argue: “A key question that indirect effect models address is the question whether a stable set of intermediary school conditions can be identified” (Scheerens, 2012, p. 133). In our view, it is particularly useful to incorporate variables on classroom instruction/teaching, as this is by definition the main object of IL, and data on the subject have been collected in almost all PISA cycles (OECD, 2014); surprisingly, though, this variable has rarely been used in indirect effect models so far (Scheerens, 2012).

In addition, it should be noted that the PISA sample includes only secondary schools, whereas the available aggregated data meta-analyses on this topic are mostly based on primary studies conducted in elementary schools (see, for example, Robinson et al., 2008). This may also explain the differences. As the results of our ILSA-based IPD meta-analysis differ from those of traditional aggregated data meta-analyses, we further propose to integrate both types of data in a two-stage meta-analysis, with random effects varying between effect sizes, to explore the sensitivity of the data and measurement between IPD and aggregated data meta-analysis approaches (Campos et al., 2023; Scherer et al., 2021).
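
The second stage of such a two-stage approach can be sketched as follows. This is a minimal illustration only: it assumes that each study (IPD-based or aggregated) contributes one correlation and its sample size, and it uses the DerSimonian-Laird estimator as one common choice for the between-study variance. The cited works may specify the model differently, and all function names and numbers below are hypothetical.

```python
import math


def fisher_z(r, n):
    """Return Fisher's z transform of r and its sampling variance 1/(n - 3)."""
    return math.atanh(r), 1.0 / (n - 3)


def random_effects_pool(effects, variances):
    """DerSimonian-Laird random-effects pooling; returns (pooled mean, tau2)."""
    k = len(effects)
    w = [1.0 / v for v in variances]                     # fixed-effect weights
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, effects)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, effects))
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0  # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]        # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_star, effects)) / sum(w_star)
    return pooled, tau2


# Hypothetical (correlation, sample size) pairs; not data from the study.
studies = [(0.20, 103), (-0.05, 203), (0.10, 153), (0.00, 303)]
zs, vs = zip(*(fisher_z(r, n) for r, n in studies))
pooled_z, tau2 = random_effects_pool(zs, vs)
pooled_r = math.tanh(pooled_z)  # back-transform to the correlation metric
```

Adding an indicator for data type (IPD versus aggregated) as a moderator would be one way to probe the sensitivity described above, but that extension is not shown here.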

Finally, because of the findings presented, it seems important to also consider measurement occasions, especially time, as a context variable in further studies.

Conclusion and Implications

In conclusion, our study makes it clear that previously reported findings on the relationship between IL and student achievement should be taken with caution, as it is likely that the results are not generalizable at the global scale. IL and student achievement are not positively correlated per se, and their relationship varies greatly depending on the context of whether a school leader’s focus on teaching and instruction resonates with students. Consequently, the implications for policy and practice are manifold. On the one hand, policy borrowing is not advisable; after all, what works in one context does not necessarily work in another. Since we found negative correlations in some cases, we cannot rule out the possibility that IL may even have counterintuitive effects in certain contexts (although PISA data do not allow us to make such statements due to cross-sectionality). On the other hand, in practice, our findings do not mean that it is useless for school leaders to focus on the core business of school: instruction. At the same time, we should not expect a magic bullet here when it comes to student achievement. Moreover, one should constantly evaluate whether IL actually achieves the intended goals under the respective contextual conditions and adjust leadership practices accordingly.

Our study is one of the few large-scale quantitative cross-cultural studies of educational leadership to date. Accordingly, we present findings on the relationship between IL and student achievement for the first time for many countries and regions; this applies particularly to countries in Central Asia, South America, and Southeast Europe. The results of our study are therefore highly significant and original, providing empirical results where previously there were only frameworks for action or small-scale studies (e.g., Sindhvad et al., 2020, 2022). In doing so, our article also addresses another issue, namely, that systematic knowledge production in educational leadership is often rooted in and connected to a select few influential knowledge producers (Hallinger & Kovačević, 2019), and that existing best evidence reviews and analyses are often products of this relationship (McGinty et al., 2022). The analysis of the freely available PISA data makes it possible to generate findings independently of this dominance. Our findings are therefore timely, as issues of effective school leadership are coming to the fore in many countries (Gümüş et al., 2018; Hallinger et al., 2020), and highlight the need to consider IL in its specific context.

In addition, our study has implications regarding the interpretation and implementation of PISA and other ILSAs for policy, practice, and education research, particularly when providing information for educational practice and research (Oh, 2020) and evidence-based decision-making in education (Slavin, 2008). First, using various items for the same construct (e.g., school leadership) over cycles without any clear conceptual foundation makes it hard to interpret changes and make meaningful comparisons. In addition, given the increasing research and policy attention to other leadership models, such as distributed leadership and transformational leadership (Leithwood & Sun, 2012; Spillane, 2012), as well as integrated views (Printy et al., 2009), the school leadership questionnaires used by the OECD seem quite narrowly designed, as they are mostly based on a single leadership approach, IL.

We, therefore, suggest that the OECD should use more concrete frameworks of various leadership models and strive for consistency in the application of questionnaires across different cycles and studies (e.g., TALIS and PISA). This is particularly important to empirically test the measurement invariance between cycles and studies, which in turn contributes to the robustness of results in IPD meta-analyses. Furthermore, moving beyond cross-sectional data sets and designing longitudinal ones is advisable for both governments and international organizations, since cross-sectional data do not always provide insights into the complex relationships between various organizational variables (which usually require a longer time to create influence) and student achievement. The self-reporting nature of leadership scales could also be an issue because of the tendency for self-presentation or extreme responses, as discussed in the literature (Paulhus & Vazire, 2007). This becomes even more problematic for cross-national studies since such tendencies might also be sensitive to cultural differences (Guo & Lu, 2018; McNabb, 1990). We suggest collecting data from multiple sources (e.g., principals and teachers), so scholars could use various designs, including the self-other agreements approach (Atwater & Yammarino, 1992; Ham et al., 2015).

Supplemental Material

Supplemental material, sj-pdf-1-epa-10.3102_01623737231197434 for Putting the Instructional Leadership–Student Achievement Relation in Context: A Meta-Analytical Big Data Study Across Cultures and Time by Marcus Pietsch, Burak Aydin and Sedat Gümüş in Educational Evaluation and Policy Analysis

Supplemental material, sj-csv-2-epa-10.3102_01623737231197434 for Putting the Instructional Leadership–Student Achievement Relation in Context: A Meta-Analytical Big Data Study Across Cultures and Time by Marcus Pietsch, Burak Aydin and Sedat Gümüş in Educational Evaluation and Policy Analysis

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Marcus Pietsch is supported by a Heisenberg professorship of the German Research Association (DFG; Project ID: 451458391, PI 618/4-1).

Authors

MARCUS PIETSCH, PhD, is a DFG Heisenberg professor at the Leuphana University of Lüneburg, Germany. His research uses quantitative methods to explore innovation and change in schools and education systems, and how school leaders can contribute to creating better schools for all.

BURAK AYDIN, PhD, is a faculty member at the Ege University, Türkiye, and a researcher at the Leuphana University of Lüneburg, Germany. He is a quantitative data analyst, and his research mainly focuses on the theory and application of multilevel modeling, structural equation modeling, and propensity score analyses.

SEDAT GÜMÜŞ, PhD, is an associate professor in the Department of Educational Policy and Leadership at The Education University of Hong Kong. His current research focuses on the relationship between school leadership and various teacher and student outcomes as well as the contextualization of leadership models and practices.
