Abstract

Problem-solving has been identified as a critical 21st-century skill. This review integrated evidence on the effectiveness of existing approaches to teaching and assessing problem-solving in an interpersonal context (IPS) for children from birth to age six, in early childhood education and care (ECEC) settings. A comprehensive search (i.e., PsycINFO, ERIC, Education Source, Child Development and Adolescent Studies) yielded 13,030 abstracts, 38 eligible papers representing 7,627 children, and 26 papers for the meta-analysis. Multilevel meta-analyses for the pooled effect revealed strong relationships between IPS interventions and children’s IPS outcomes, Hedges’ g = 0.76 (SE = .10, p < .001, 95% CI [0.57, 0.95], τ = .26). The evidence suggests that skills training specific to IPS (e.g., brainstorming) and using assessment formats that allow children to generate novel solutions may best capture the learning process. More research is needed to expand investigations to infant and toddler samples.

Core psychosocial skills such as interpersonal problem-solving, creativity, collaboration, and communication are considered important building blocks for positive relationships, academic achievement, and sustained overall well-being from the early years throughout the lifespan (Merrill et al., 2017; National Research Council, 2012; Singh Dubey, 2022). Interpersonal problem-solving (IPS) has been identified as an essential skill for children’s positive developmental trajectories (Araujo et al., 2019; Mahoney et al., 2021). IPS involves a complex, interconnected set of social, emotional, and cognitive abilities and refers to “one’s skill in determining [how] to achieve a specific end or overcome a specific problem” in an interpersonal context (Blissett & McGrath, 1996, p. 173). Given its importance, we must identify ways of supporting the development of IPS skills in young children.

Recent meta-analyses have investigated the effectiveness of interventions promoting IPS skills in young children with a focus on improving children’s social-emotional learning (SEL; Barnes et al., 2018; Murano et al., 2020; Yang et al., 2019). SEL skills include constructs such as empathy, cooperation, and prosocial behavior. The purpose of the present study was to conduct a comprehensive review of instructional approaches promoting IPS skills, focusing on improving “problem-solving” skills in an interpersonal context. Effective assessment of IPS skills comprises not only the development of SEL skills but also children’s successful mastery of interpersonal dilemmas (Joseph & Strain, 2010). Mastering interpersonal dilemmas is a stepwise process that draws on social, emotional, and cognitive elements. Thus, in addition to SEL skills, children require skill-building in areas such as planning, brainstorming, consequential thinking, and critical evaluation.

We conducted a meta-analysis integrating evidence on the effectiveness of existing curricula and instructional methods in enhancing IPS skills. We provide a comprehensive qualitative summary and description of the published data on this topic. The meta-analyses provide a quantitative synthesis of a smaller subset of homogeneous papers that met specific criteria. We focused on children from birth to age six in early childhood education and care (ECEC) settings. The overarching goal was to identify evidence-based ways of improving young children’s IPS by examining success in problem-solving rather than SEL constructs as our outcome. We focused on studies that targeted the IPS process (i.e., the steps involved in IPS) and IPS success (i.e., successful achievement of a specific end or resolution). In the following sections, we present primary theories underlying the development of IPS skills in young children, define IPS, and summarize the literature on how it can be taught. We then identify potential moderators of the effectiveness of different approaches to teaching IPS (e.g., program length). Finally, we justify our focus on ECEC as an important context for teaching IPS.

Theoretical Framework

The development of IPS skills in early childhood can be understood through four primary theories: ecological systems theory, constructivist learning theory, social cognitive theory, and experiential learning theory. Ecological systems theory emphasizes the role of environmental contexts, including early learning settings, in shaping developmental trajectories (Bronfenbrenner, 1979). Given the high rate of participation in ECEC in children’s early years (OECD, 2019), such settings are particularly influential in supporting development through social interaction and access to rich learning materials.

From a constructivist perspective, Piaget’s (1971) theory of cognitive development highlights children as active learners who build knowledge through interaction with their environment. Vygotsky’s sociocultural theory (Vygotsky & Cole, 1978) further underscores the importance of adult support/scaffolding, especially within the child’s zone of proximal development. Within this zone, educators can scaffold skills by helping children transfer knowledge from familiar to novel contexts. Interactions with developmentally appropriate materials also support this process (Lockman, 2000).

Social cognitive theory posits that learning occurs through observation and imitation (Bandura, 1986). Educators serve as social models, demonstrating problem-solving behaviors and reinforcing children’s efforts. These experiences contribute to the development of self-efficacy, that is, children’s beliefs in their own ability to solve problems (Budzyna & Buckley, 2024). Finally, children learn to problem-solve through the concrete experience of actively applying their solutions to social dilemmas and learning from their experiences by reflecting on and evaluating their choices (Kolb, 1984). Together, these learning theories inform how IPS skills are taught, as discussed later. Next, we provide an overview of the steps involved in the IPS process and the important skills children require for success in resolving interpersonal dilemmas.

Definition of IPS and Related Skills

Young children encounter a myriad of interpersonal problem situations, such as sharing of resources; disagreements about rules, opinions, and facts; exclusion and rejection; peer aggression; and competing roles when collaborating on tasks (Halmatov, 2018; Kinoshita et al., 1993). Children maneuver through these interpersonal problem situations by engaging in multiple interconnected skills in an iterative sequential process (Merrill et al., 2017; Murano et al., 2020). Each attempt at solving the problem ideally involves improved approximations toward appropriate solutions to address the identified goal or situation. Researchers (Barnes et al., 2018; Joseph & Strain, 2010) have identified the following basic steps in the IPS process: (1) identify the problem; (2) generate various alternative solutions; (3) think about the consequences of your actions; (4) try one of the solutions you thought of; (5) check to see if that solution worked; (6) if not, try the whole process of generating and executing solutions again. See Supplementary Materials Table S1 (online only) for the definitions of IPS provided in the papers included in the current review.

Navigating the steps involved in IPS requires a blend of complex thinking skills (O’Reilly et al., 2022) and social competencies (Grover et al., 2020; Milligan et al., 2017). Executive functioning abilities are needed to underpin the whole process (Bierman & Motamedi, 2015; Mattera et al., 2021). Comprehensive reviews have identified several specific skills to target when teaching IPS to young children (Jones et al., 2021; Lawson et al., 2019; White et al., 2017). These are briefly described in the following sections.

Social-Emotional Competencies

Social-emotional competencies have been explored extensively (Engler et al., 2023; Grover et al., 2020; Lawson et al., 2019; Milligan et al., 2017) and include social skills (e.g., communication, cooperation, empathy), emotional intelligence (e.g., self/social-awareness, diversity sensitivity, emotion recognition), emotional regulation (e.g., adaptive control, appropriate expression of feelings and needs, support seeking), and perspective taking. These abilities are important for consequential thinking and for generating possible solutions during social disputes. For example, children with a good knowledge of emotion and social situations know what is expected of them and are more likely to choose effective tactics to resolve their issues (Djambazova-Popordanoska, 2016; Milligan et al., 2017). Children with strong perspective-taking skills at ages 3 to 5 also showed more prosocial behavior (Cigala et al., 2015) and better appraisals of the cause of events in peer interactions (Cesur & Yarali, 2020; Emen & Aslan, 2019).

Cognitive Competencies

As executive functioning skills (e.g., working memory, inhibitory control, persistence, attention, goal-directed behavior, transfer skills) improve with age, children learn to anticipate and plan their actions (e.g., setting goals, developing plans, and implementing solutions; Corbetta & Fagard, 2017; Palmer & Wehmeyer, 2003) in response to social interactions (e.g., making reasoned/informed judgments; Engler et al., 2023). They can retain, recall, and manipulate multiple facets of information in their minds (i.e., working memory), as well as suppress distractors (i.e., attention, inhibitory control) to manage their environments (Pascual et al., 2019). Children begin to recognize cause-and-effect patterns as toddlers (ELECT, 2014) and show cognitive flexibility as early as preschool (e.g., shifting attention, consequential thinking, and generating alternate strategies and solutions to problems; Ardila, 2016; Deák & Wiseheart, 2015). With these skills, children can learn to assess their own and others’ behavior, test and assess choices, and make causal inferences (Baird & Fugelsang, 2004; Shure & Spivack, 1980).

Other important cognitive skills that play a role in the IPS process are critical thinking (Papadopoulos & Bisiri, 2020), divergent or innovative thinking (e.g., curiosity, open-mindedness, and ability to generate novel solutions to problems), and metacognition (Marić & Sakać, 2018; Pawlina & Stanford, 2011). When solving social problems, children need to be able to analyze, test hypotheses, interpret, make causal inferences, and evaluate outcomes (O’Reilly et al., 2022; Papadopoulos & Bisiri, 2020). Thinking about thinking involves metacognition, the awareness of what is known and unknown, and the ability to control one’s own reflections regarding a situation (Meichenbaum, 1985). Research examining cognitive problem-solving tasks in children ages 3 to 6 found that children with stronger metacognitive abilities were more successful and efficient in switching strategies, transferring learning to novel situations (McMahon et al., 2000), and resolving problem situations (Marić & Sakać, 2018).

Developmental Considerations for Teaching IPS

Teaching IPS skills to children in the early years requires knowledge and understanding of the intricately interwoven and sequential nature of the development of social, emotional, and cognitive skills (Babik et al., 2019). Early learning begins with a wide range of exploratory behaviors that become more refined and goal-directed with maturation and learning (Babik et al., 2019; Solby et al., 2021). Raz and Saxe (2020) assert that infant learning is active, endogenously motivated, and dependent on the frontal cortices. Toddlers recognize the difference between themselves and others, feel empathy, and learn prosocial behaviors (Babik et al., 2019; ELECT, 2014). At around 30 months, many children can identify problems, plan and set goals, develop strategies and make decisions, act to achieve goals, generate solutions, connect consequences to actions, evaluate the outcomes of their IPS, and transfer their learning from one situation to another (Babik et al., 2019). Due to the evolving nature of children’s skills, educators have an essential role to play in maximizing their developmental trajectories (Almond et al., 2018; Gradovski et al., 2019). Research shows that IPS skill development can be enhanced by training; early identification of delays is needed to help children reach age-expected outcomes (Walker et al., 2023).

Approaches to Teaching IPS Skills

Reviews of approaches to teaching IPS skills have shown that most programs combine several approaches, with some variation in underlying theoretical models, skills targeted, and components included (Bierman & Motamedi, 2015; Jones et al., 2021; Lawson et al., 2019; White et al., 2017). Research highlights scaffolding, modeling, and rehearsal techniques as effective in promoting cognitive flexibility and critical thinking in young children, skills important in resolving interpersonal dilemmas (Beyer, 2008). Several of the IPS programs have incorporated these procedures (White et al., 2017). As noted previously, scaffolding and the “zone of proximal development” (Vygotsky & Cole, 1978) refer to optimal learning taking place when learners interact with experts at a level that is just beyond what they can do on their own. Thus, optimal teaching of IPS skills involves guided social interactions with an expert who knows the child well (Beyer, 2008; Saracho, 2023) and can model/coach, encourage questions, and offer cues (e.g., using the skill name, stating the first steps in the IPS procedure). Children also benefit when given feedback on both the accuracy of their work and their performance (Hattie, 2009; Saracho, 2023). Exposure to such exchanges with experts helps children identify missteps in their thinking and generate new solutions. Repeated practice and rehearsal strengthen recall of the skills and steps involved (O’Reilly et al., 2022).

The Tools of the Mind program is rooted in Vygotskian theory, emphasizing that children learn problem-solving through social interaction (Bodrova & Leong, 2007). The program’s aim is to teach children emotion regulation skills and cognitive skills. For example, educators scaffold children’s learning by providing guided practice in solving social issues that arise during play and other activities. The Preschool Promoting Alternative Thinking Strategies (PATHS) Curriculum (Domitrovich et al., 2007) focuses on emotional skills (e.g., emotional knowledge and expression) and promoting effective use of language and communication to solve interpersonal conflicts. Informed by social learning theory, the curriculum has children learn by observing prosocial behaviors modelled by educators and peers and then imitating them. PATHS emphasizes the interconnectedness of systems and the interplay between cognitive, behavioral, and environmental influences on learning. The Incredible Years (IY) Classroom Dinosaur Curriculum (Webster-Stratton & Reid, 2008) emphasizes social competence. New skills are taught through video-based modeling and responsive positive interactions with educators. Shure developed the I Can Problem Solve (ICPS) program, which emphasizes constructivism, cognitive learning theory, and problem-based learning (Shure, 2001). Educators focus on teaching children “how to think,” an essential skill in the IPS steps (Joseph & Strain, 2010).

Recent meta-analyses investigating programs developed to promote IPS skills have revealed significant effect sizes for increasing social-emotional outcomes among preschool-aged children (ages three to six) for both typical (Barnes et al., 2018; Murano et al., 2020) and at-risk samples (Yang et al., 2019). For example, Murano et al. (2020) reported significant effects for the overall development of social and emotional skills (Hedges’ g = 0.34). In the current study, we examined whether the instructional approaches reported in the reviewed papers involved specific training in IPS skills (Csapó & Funke, 2017) and whether such training had differential effects.

Assessment of IPS Skills

A few reviews (e.g., Campbell et al., 2016; Halle & Darling-Churchill, 2016) have been conducted on the various instruments developed to assess IPS skills in preschool-aged children, with an SEL focus. The primary method of evaluating IPS skills has been to present children with a group of social dilemmas using picture cards (e.g., the Preschool Interpersonal Problem-Solving Test [PIPS]; Shure et al., 1972) or by using a peer confederate in a live “enacted” play situation (Behavioral Interpersonal Problem Solving [BIPS]; Ridley & Vaughn, 1982). Children are then asked to generate as many solutions as they can to the dilemmas (this is only one of the multiple steps in the IPS process). In the play situation with the peer, the child’s behavioral responses to the “setup” dilemmas are also assessed (Ridley & Vaughn, 1982). The verbal and behavioral responses are then categorized, such as appropriate (prosocial) or inappropriate (aggressive).

Shure (2001) extended this format to include thinking styles involved in the IPS process (i.e., alternative thinking, causal thinking, and consequential thinking). Further work is needed to examine children’s growth and development of these thinking styles, as well as attainment of the skills involved in the steps in IPS (see examples in science and engineering; Anggoro et al., 2021; Bahar & Aksut, 2020). These steps include behaviors such as recognizing and articulating the problem; exploring, obtaining information, and asking questions; generating multiple possible solutions; predicting potential outcomes, advantages, and disadvantages; describing the solution and its steps; carrying out the solution; evaluating the effectiveness of the solution; revising the plan; and sharing the plan with others.

Most instruments assessing IPS have been developed by United States researchers, but a few have been adapted for use in other countries. For example, the PIPS has demonstrated good inter-rater reliability (IRR = .94–.95) and good 1-week test-retest reliability (r = .72) for preschoolers in the United States (4-year-olds; Shure & Spivack, 1979) and in Turkey (5- and 6-year-olds; IRR = .82–.99; r = .85; Anlıak & Dinçer, 2005). The Wally Social Problem-Solving Test (Wally; Webster-Stratton, 1990) also demonstrates good inter-rater and good test-retest reliability for both United States (IRR = .85, Odom et al., 2019; r = .67–.92, Johnson, 2000) and Turkish preschool samples (Kuder-Richardson = .79–.81; r = .95; Bayrak & Akman, 2018).

No reviews were found examining the effectiveness of IPS programs in relation to improvements in (a) each of the behavioral steps involved in the IPS process or (b) the child’s overall success in overcoming interpersonal problems or achieving the specific end goals in an interpersonal context. These IPS skills are the outcomes we examined in the current integration of evidence.

Potential Moderators of Approaches in Promoting IPS Skills

In keeping with past research, we expected some variability in the findings from the research literature examining IPS skill development. First, study designs that account for more variance between groups (e.g., random assignment at the individual level, controlling for covariates) were expected to show smaller effect sizes than quasi-experimental or correlational studies (Evans, 2003; Murphy et al., 2014). Murano et al. (2020) conducted a meta-analysis summarizing the effects of universal and targeted SEL interventions and found significantly smaller effect sizes associated with RCT study designs. However, they noted that they had only a small number of studies using quasi-experimental designs. We also expected age effects for IPS success due to child maturation (e.g., Babik et al., 2019; Keen, 2011). For example, maturation may enhance effects if better baseline skills enable students to benefit more from interventions. However, maturation may diminish the effects of interventions if older students have already mastered the skills being taught.

Finally, interventions for IPS skill development vary on several dimensions such as duration of the intervention, group size for instruction, persons delivering the curriculum, and assessment type. In a meta-analytic review of childhood curricula that enhance social-emotional competence in low-income children, Yang et al. (2019) reported significant moderator effects for duration, with shorter interventions (less than one school year) showing stronger effects than those lasting longer than one school year. They also found that content-specific, skill-based curricula showed stronger effects than skills training that was not SEL focused. As Yang et al. (2019) have suggested, curriculum focus may be more influential than the duration of the intervention. Other studies have also found that small group instruction (compared to individual, whole class, or mixed) was associated with greater skill gains in cognitive and social domains (Camilli et al., 2010). Murano et al. (2020) reported smaller effect sizes when educators, as opposed to researchers, delivered the SEL interventions to students. Additionally, they found larger effect sizes for task measures and observer reports compared to parent- and teacher-reported measures (Murano et al., 2020).

Rationale for Focusing on ECEC

We concentrated on ECEC settings because ECEC is a critical context for child development (Britto & Pérez-Escamilla, 2013) during a period of incredible growth and learning (Gradovski et al., 2019). Early childhood education (ECE) is important in preparing children for tomorrow’s classrooms, workplaces, and communities (Araujo et al., 2019). Investments in the early years yield high returns in determining children’s developmental trajectories (Almond et al., 2018; Gradovski et al., 2019). Numerous studies demonstrate that children with access to high-quality early learning environments are more prepared for formal schooling (Bastos et al., 2017), post-secondary education, and the labor force (Fessler & Schneebaum, 2019). While these findings have been most consistent for children from low socioeconomic backgrounds (Elango et al., 2016; Shaw et al., 2021), it is important to note that even within low socioeconomic backgrounds, findings have been mixed. For example, Durkin et al. (2022) found that exposure to state-run prekindergarten programs had negative outcomes for primary school-aged children from low-income families.

Research indicates that high-quality ECEC settings positively affect children’s cognitive, academic, social, and emotional outcomes (Almond et al., 2018); they may also level the playing field for disadvantaged children (Shaw et al., 2021). In a review of IPS interventions, Merrill et al. (2017) report that universal instruction was effective, especially for students at risk of behavioral problems. Similarly, in another meta-analytic review of preschool SEL interventions, Murano et al. (2020) found larger effects for at-risk students receiving care in the home and center settings than for children not at risk. Therefore, we also examine whether some methods for teaching IPS skills may be particularly effective for at-risk samples to reduce the gap for disadvantaged children (Shaw et al., 2021).

Study Objectives

Our primary goal was to integrate evidence on the effectiveness of instructional approaches in promoting IPS skills in children from birth to age six delivered in ECEC settings. Given findings from recent meta-analyses investigating SEL (Barnes et al., 2018; Murano et al., 2020; Yang et al., 2019), we expected significant effects. This review provides data on effect sizes and moderators that explain variability across studies. It highlights what features make programs more/less effective in teaching IPS to young children. Such guidance can have important implications for both policy decision-making and research planning (Pigott & Polanin, 2020). As Hattie (2015, p. 79) points out in his visible learning research and synthesis of 1,200 meta-analyses relating to influences on achievement, the question is not so much “What works?” but “What works best?” and “What works best for whom?” In keeping with Hattie’s thinking, our second objective was to explore moderating variables to account for the expected range of effect sizes within and across studies based on the abovementioned research (Murano et al., 2020; Yang et al., 2019).

Methods

Inclusion Criteria

An initial literature review was conducted to inform the definition of IPS skills, develop relevant search terms for the research topic, and establish project inclusion and exclusion criteria (Bramer et al., 2018). The goal was to gather a comprehensive range of instructional approaches used to promote IPS skills in young children. The search was part of a larger project examining problem-solving across subject areas in the ECEC context. This paper focuses only on studies that examined methods for fostering children’s IPS skill development in ECEC settings.

Sample

ECEC for this study was defined as “regulated arrangements that provide education and care for children from birth to compulsory primary school age in integrated systems, or from birth to pre-primary education in split systems” (OECD, 2017). ECEC settings encompassed nurseries, daycares, childcare centers, preschools, prekindergarten, and kindergarten programs. Compulsory school ages range from age three in Hungary to age eight in some U.S. states (“Compulsory Education,” 2021; Mobbs, 2021). To capture the most common age range for ECEC settings globally, we focused on children from birth to age six.

Country, Language, and Publication Type

Studies in English from all geographic regions were considered for inclusion. Publication types included scholarly journal articles, reports, books, and book chapters.

Instructional Approaches

We considered all aspects of ECEC settings as potential contributors to IPS skill improvements. These aspects encompassed program characteristics and practices, classroom materials and equipment, educator characteristics, and various types of instruction, as well as curricula and activities implemented to promote IPS skill development. These instructional approaches were categorized into two groups: interventions with IPS content-specific, skills-focused curricula (e.g., a comprehensive IPS program) and more general interventions that did not have an IPS content-specific focus but were still identified by study authors as promoting IPS skills (e.g., educator and program characteristics). We refer to the former as having potential direct effects while the latter has potential indirect effects on IPS.

Outcomes

Only studies in which IPS skills were measured as an outcome were considered; SEL outcomes such as social skills, social competence, and aggressive behavior were not included. IPS skills outcomes included measurements of success in an IPS task (e.g., number of correct responses) as well as measures of the IPS steps/process (e.g., use of causal statements). IPS skills could be assessed by parents, educators, researchers, or with computer assistance. Measures of IPS skills could be based on qualitative coding of child behaviors or quantitative testing.

Study Design

For studies to be included, the researchers had to report a statistical link between the ECEC setting characteristics or instructional approach and the IPS skill outcomes. Single-group pre-test-post-test, randomized controlled trial, quasi-experimental, and observational designs were all included, whether retrospective, cross-sectional, or longitudinal.

Exclusion Criteria

Sample

All samples of children ages seven and older and all samples of children in home care were excluded. Studies that solely focused on children with specific developmental challenges such as Down syndrome, Williams syndrome, or autism were excluded because of their unique neurocognitive and neurobehavioral developmental profiles. Studies examining skill development in young children have shown that effect sizes in these populations tend to be significantly lower than those in typically developing children (e.g., Bernard-Opitz et al., 2001; Camp et al., 2016; Grieco et al., 2015).

Country, Language, and Publication Type

Studies not available in English, as well as dissertations, theses, and conference papers, were all excluded due to the high resource demands of reviewing the large number of abstracts identified in our broad preliminary search.

Instructional Approaches and Outcomes

We excluded all intervention studies that did not assess IPS outcomes as defined previously.

Study Design

Qualitative research with no statistical findings was excluded.

Search Strategy

We consulted four librarians with expertise in electronic databases to guide the selection of search terms and databases. We conducted extensive searches of four electronic databases: PsycINFO and ERIC in ProQuest, as well as Education Source and Child Development and Adolescent Studies in EBSCO. These searches were performed for studies published before April 1, 2020. The detailed syntax used in these searches can be found in Table S2 (online only). Additionally, we employed a reference-chasing strategy by manually searching the reference lists of studies that met our inclusion criteria to identify additional relevant papers.

Study Selection and Data Extraction

The current study employed a systematic review of the literature, using explicit methods to identify, select, extract, and critically evaluate all relevant research (Doria et al., 2018). The process of study selection and data extraction consisted of three phases. Each phase was conducted by pairs of independent coders following a clearly outlined criteria checklist (Pigott & Polanin, 2020). Initially, titles and abstracts of papers were screened for relevance. Next, a pair of coders performed a full-text review of each relevant paper to determine whether it met all inclusion criteria. Finally, two coders using a predetermined template independently extracted the following information from each study: general study descriptors (e.g., country, sample size), educational factors (e.g., curriculum, program length), child outcomes (i.e., assessment of IPS skills), descriptive statistics (e.g., means, standard deviations), and inferential statistics (e.g., statistical tests, effect sizes). Any discrepancies between data coders/extractors at each phase were resolved through discussion until consensus was reached.

Risk of Bias and Quality Assessment of Included Studies

We assessed the risk of bias for the selected papers using the Quality Assessment Tool for Studies with Diverse Designs (QATSDD; Sirriyeh et al., 2012). The QATSDD was chosen because it is a flexible instrument that can accommodate various study designs using the same criteria. It consists of 14 items measured on a 4-point Likert scale (0 = not at all to 3 = complete). Item examples include explicit reporting of the theoretical framework, objectives, target population, data collection procedure, and fit between the stated research question and method of analysis (Sirriyeh et al., 2012). Total scores on this instrument range from 0 to 42, with higher scores indicating better completeness in reporting and a better fit of the research design. For example, random assignment at the individual level and control for family factors would be considered better quality than correlations or quasi-experimental designs where children are not randomly assigned. Based on Sirriyeh et al.’s (2012) recommendation, we used a cutoff score of 25 to distinguish between low-risk and high-risk-of-bias studies.
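The scoring rule described above can be illustrated with a minimal sketch. The function names and the example ratings are hypothetical, and the sketch assumes that a total at or above the cutoff of 25 counts as low risk of bias, because the text does not state on which side the boundary value falls.

```python
from typing import Sequence

N_ITEMS = 14         # number of QATSDD items rated per study, as described above
MAX_ITEM_SCORE = 3   # each item is rated 0 (not at all) to 3 (complete)
CUTOFF = 25          # cutoff reported in the text


def qatsdd_total(item_scores: Sequence[int]) -> int:
    """Validate the 14 item ratings and return their sum (0 to 42)."""
    if len(item_scores) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} item scores, got {len(item_scores)}")
    if any(s < 0 or s > MAX_ITEM_SCORE for s in item_scores):
        raise ValueError("each item score must be between 0 and 3")
    return sum(item_scores)


def risk_of_bias(total: int, cutoff: int = CUTOFF) -> str:
    """Classify a study from its total score.

    ASSUMPTION: totals at or above the cutoff are treated as low risk;
    the source does not specify how a total exactly equal to 25 is handled.
    """
    return "low" if total >= cutoff else "high"


# Hypothetical ratings for one paper (not taken from the review).
example_ratings = [3, 2, 2, 3, 1, 2, 2, 3, 2, 1, 2, 2, 1, 2]
example_total = qatsdd_total(example_ratings)
print(example_total, risk_of_bias(example_total))
```

Keeping the validation separate from the classification makes it easy to change the boundary convention if the intended rule differs from the one assumed here.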

Before rating the studies in this review, research assistants were trained to use the instrument until they reached 80% agreement with the first author. The risk of bias of each paper was then assessed by pairs of independent coders. Reliability was calculated using the intraclass correlation coefficient (ICC). Interrater reliability for the six pairs of independent coders for these quality ratings was good, ICC = .91 (95% CI [.83, .95]). The items that research assistants found most challenging to code asked whether the study authors provided (a) a good justification for their analytic method, (b) evidence for their involvement in the design, and (c) a discussion of their strengths and limitations.
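For readers unfamiliar with the ICC, the following sketch shows one common way to compute it from a table of ratings. The text does not state which ICC form was used, so the choice of ICC(2,1) (two-way random effects, absolute agreement, single rater) is an assumption, and the function name and example scores are hypothetical.

```python
from typing import List


def icc_2_1(ratings: List[List[float]]) -> float:
    """ICC(2,1): two-way random effects, absolute agreement, single rater.

    ratings[i][j] is the score given to paper i by coder j.
    ASSUMPTION: this ICC form is illustrative; the source does not name one.
    """
    n = len(ratings)      # number of rated papers
    k = len(ratings[0])   # number of coders per paper
    if n < 2 or k < 2 or any(len(row) != k for row in ratings):
        raise ValueError("need a complete n x k table with n >= 2 and k >= 2")

    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA sums of squares: papers (rows), coders (columns), residual.
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Hypothetical QATSDD totals from two coders for five papers.
paired_totals = [[30, 31], [22, 24], [35, 34], [18, 20], [27, 27]]
print(round(icc_2_1(paired_totals), 2))
```

Because this form penalizes systematic differences between coders as well as random disagreement, it is a conservative choice when the ratings themselves, and not only their rank order, are used to classify studies.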

Meta-Analytic Procedure

We found that several papers reported multiple models of analysis. To address the resulting dependence among effect sizes from the same studies, we conducted a multilevel meta-analysis with robust variance estimation (Pustejovsky & Tipton, 2022), a methodology that accounts for data dependencies. The “metafor” package in R (Harrer et al., 2021; R Core Team, 2020) was used for the analyses. Hedges’ g, a standardized effect size commonly used to measure the difference between group means, served as the effect size metric. Hedges’ g was computed using different formulas depending on the information available in the eligible studies that compared results in two (or more) groups (see Table S3 for the manual, online only). Random-effects multilevel meta-analysis models were employed, with the Q statistic used as the overall measure of heterogeneity. However, considering the multilevel nature of the meta-analyses in this study, I² was examined at each level to assess the proportion of heterogeneity in effect sizes attributable to within-study and between-study variance. Low I² values indicate statistical homogeneity in findings, whereas high I² values prompted further investigation of heterogeneity using within-study and between-study characteristics as moderators (Higgins et al., 2003). Because both the Q statistic and I² depend on sample size, we report tau (τ) alongside these traditional heterogeneity statistics. Tau is the standard deviation of the true effect sizes across studies and provides a direct and interpretable measure of the dispersion in underlying effects. As recommended by Borenstein (2019), τ captures the extent of true heterogeneity in a way that is not confounded by sample size or measurement error, unlike Q or I². We used multilevel meta-regression analyses to test hypothesized continuous and categorical moderator variables (Pigott & Polanin, 2020).
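For the simplest case, in which a study reports means, standard deviations, and sample sizes for two independent groups, the computation of Hedges’ g can be sketched as below. This is an illustrative Python sketch of the standard textbook formulas, not the R code or the full set of conversion formulas applied in this review (those are documented in Table S3); the function name and the example values are hypothetical.

```python
import math
from typing import Tuple


def hedges_g(m1: float, sd1: float, n1: int,
             m2: float, sd2: float, n2: int) -> Tuple[float, float]:
    """Return (g, sampling variance of g) for two independent groups."""
    df = n1 + n2 - 2
    # Pooled standard deviation across the two groups.
    sd_pooled = math.sqrt(((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df)
    d = (m1 - m2) / sd_pooled
    # Small-sample correction factor (the usual approximation).
    j = 1 - 3 / (4 * df - 1)
    g = j * d
    # Approximate sampling variance of d, then rescaled for g.
    var_d = (n1 + n2) / (n1 * n2) + d ** 2 / (2 * (n1 + n2))
    return g, j ** 2 * var_d


# Hypothetical post-test scores: intervention vs. control group.
g, var_g = hedges_g(m1=12.0, sd1=4.0, n1=30, m2=10.0, sd2=4.0, n2=30)
print(f"g = {g:.3f}, SE = {math.sqrt(var_g):.3f}")
```

Studies that report only test statistics (e.g., t or F values) require different conversions, which this sketch does not cover.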

Moderator variables included child’s age (median score); child’s risk status (>50% of the sample yes/no); curriculum type (curriculum used in multiple studies); delivery - location (center pull-out method, classroom); delivery - implementor (researcher, educator); dosage (number of sessions of skills training); measure (outcome used in multiple studies); and research design (children randomized to group yes/no). Comparison type included pre-post models of analyses for the intervention alone (referred to as single group), pre-post analyses for the intervention plus a comparison group (referred to as pre-post two-group models), and two-group analyses at post-treatment. This moderator accounted for differences in the effects with and without control groups. Adding a comparable control group accounts for some of the threats to internal validity, as both groups encounter the same threats, such as maturation and testing. Due to insufficient data, we were unable to assess the child’s sex, gender, analyses with covariates, follow-up results, or fidelity as moderators.

Only studies with direct interventions targeting IPS skills training were included in the meta-analyses. Indirect interventions (such as a music program believed to enhance IPS skills) were excluded. To be eligible for meta-analysis, the intervention studies had to meet the following criteria: (a) a direct link between the intervention and the IPS skill outcome was reported; (b) sufficient statistical data were provided to compute an effect size for this relationship; and (c) the study included both an intervention group and a no-intervention control (or comparison control) group. Observational studies and single-group designs were therefore not included in the meta-analysis.

Results

Descriptive Statistics

Description of Studies and Samples

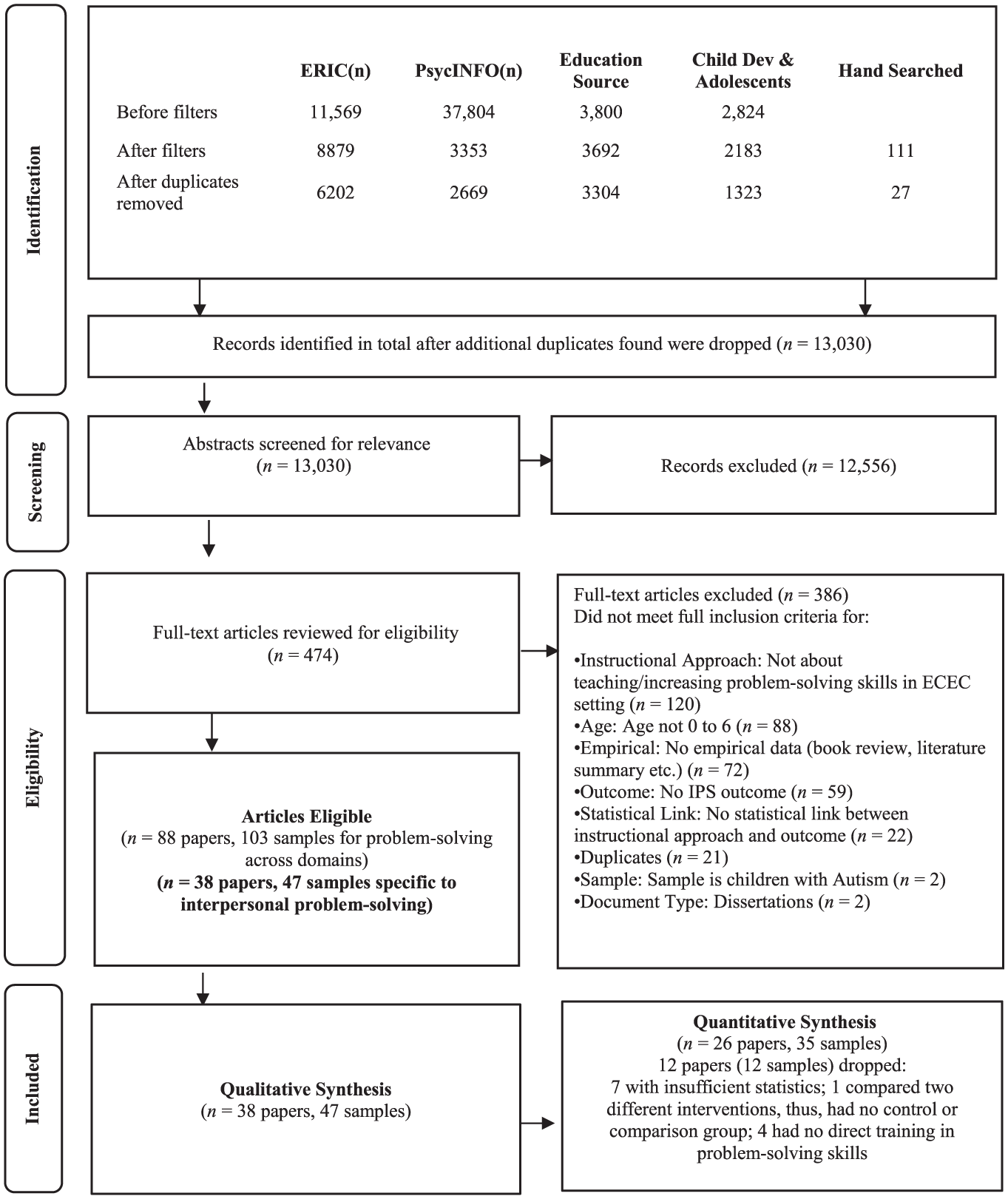

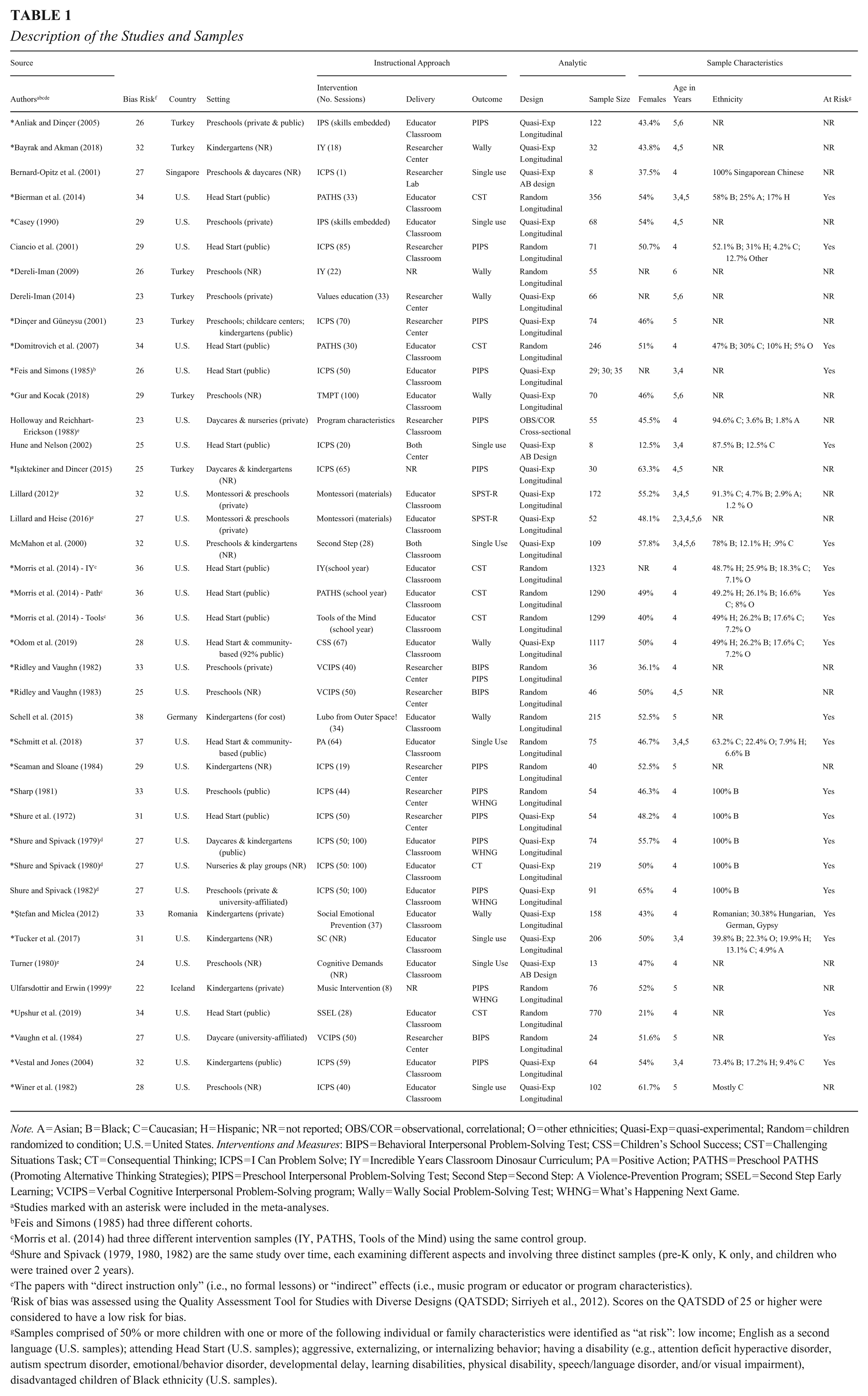

Our comprehensive literature search yielded 13,030 abstracts for review. Refer to Figure 1 for the PRISMA flow diagram (Page et al., 2021). Of these abstracts, 474 papers underwent the full-review process, resulting in the inclusion of 38 papers that encompassed 47 different samples of children in our review. For a description of the studies and samples, see Table 1. The publication types included 37 journal articles and one report. These papers were published between 1972 and 2019, with a median publication year of 2001. The total sample across all 38 papers consisted of 7,627 children from three continents, with a mean sample size of 222 and a median of 68 (range: 8 to 1,323). Among the included studies, 71% (n = 27) were conducted in North America, 21% (n = 8) in Asia, and 8% (n = 3) in Europe. The highest number of studies were conducted in the United States (n = 27), followed by Turkey (n = 7). Refer to Table 1 for information on the ethnic composition of the samples and the definition for “at risk” status.

Figure 1. PRISMA flow diagram of study selection.

Table 1. Description of the Studies and Samples

Note. A = Asian; B = Black; C = Caucasian; H = Hispanic; NR = not reported; OBS/COR = observational, correlational; O = other ethnicities; Quasi-Exp = quasi-experimental; Random = children randomized to condition; U.S. = United States. Interventions and Measures: BIPS = Behavioral Interpersonal Problem-Solving Test; CSS = Children’s School Success; CST = Challenging Situations Task; CT = Consequential Thinking; ICPS = I Can Problem Solve; IY = Incredible Years Classroom Dinosaur Curriculum; PA = Positive Action; PATHS = Preschool PATHS (Promoting Alternative Thinking Strategies); PIPS = Preschool Interpersonal Problem-Solving Test; Second Step = Second Step: A Violence-Prevention Program; SSEL = Second Step Early Learning; VCIPS = Verbal Cognitive Interpersonal Problem-Solving program; Wally = Wally Social Problem-Solving Test; WHNG = What’s Happening Next Game.

Studies marked with an asterisk were included in the meta-analyses.

Feis and Simons (1985) had three different cohorts.

Morris et al. (2014) had three different intervention samples (IY, PATHS, Tools of the Mind) using the same control group.

Shure and Spivack (1979, 1980, 1982) are the same study over time, each examining different aspects and involving three distinct samples (pre-K only, K only, and children who were trained over 2 years).

The papers with “direct instruction only” (i.e., no formal lessons) or “indirect” effects (i.e., music program or educator or program characteristics).

Risk of bias was assessed using the Quality Assessment Tool for Studies with Diverse Designs (QATSDD; Sirriyeh et al., 2012). Scores on the QATSDD of 25 or higher were considered to have a low risk for bias.

Samples comprised of 50% or more children with one or more of the following individual or family characteristics were identified as “at risk”: low income; English as a second language (U.S. samples); attending Head Start (U.S. samples); aggressive, externalizing, or internalizing behavior; having a disability (e.g., attention deficit hyperactive disorder, autism spectrum disorder, emotional/behavior disorder, developmental delay, learning disabilities, physical disability, speech/language disorder, and/or visual impairment), disadvantaged children of Black ethnicity (U.S. samples).

The children in the studies ranged in age from 2 to 6 years, with a mode of 4 years. Only one study included 2-year-old children (Lillard & Heise, 2016). Fifty-three percent (n = 20) of the papers reported samples identified as “at risk.” The authors of the remaining papers (47%; n = 18) did not mention the at-risk characteristics of their samples. Among the papers, 42% (n = 16) had samples from low-income families, 16% (n = 6) from low-to-middle or middle-income households, 3% (n = 1) had samples from middle-to-upper or upper-income households, and 39% (n = 15) did not report socioeconomic status information. Out of the 38 papers meeting the eligibility criteria, 37% (n = 14) reported that children were randomly assigned to intervention and comparison/control conditions, 61% (n = 23) had quasi-experimental designs, and 2% (n = 1) were correlational studies. Furthermore, 91% (n = 34) of the studies were longitudinal, 7% (n = 3) used AB designs, and one study (2%) was cross-sectional.

Programs and Outcomes

Detailed information about the instructional approaches and outcomes used in the current studies can be found in Tables S4 and S5, respectively (online only). Various interventions were employed to teach IPS skills to children. These included the classic Montessori program (e.g., Lillard & Heise, 2016; 2 studies), the Preschool PATHS curriculum (Greenberg & Kusche, 2006; 3 studies), the Verbal Cognitive Interpersonal Problem-Solving program (VCIPS; Ridley & Vaughn, 1983; 3 studies), and the IY curriculum (Webster-Stratton & Reid, 2008; 3 studies). Fourteen studies (16 samples) used a version of the ICPS program, while the remaining 15 studies utilized different interventions.

Most studies (n = 34, 89%) utilized training interventions specifically focused on IPS skills. However, there was substantial variation in the content of teaching modules (e.g., some combination of emotion regulation, empathy, perspective taking, friendships, anger, and thinking skills) and the activities used to scaffold learning (e.g., puppetry, pretend play, role play, cooperative games, books, and stories). One study involved direct instructions without lessons (Turner, 1980). Four others involved indirect effects. These studies addressed very different approaches to improving IPS, including a music program (Ulfarsdottir & Erwin, 1999), program characteristics and practices (Holloway & Reichhart-Erickson, 1988), educator characteristics (Lillard, 2012), and classroom materials and equipment (Lillard, 2012; Lillard & Heise, 2016). Given the very small and heterogeneous nature of these studies, we did not attempt to interpret their effects on IPS.

The review included various outcome measures used to assess IPS skills. Two studies employed the Social Problem-Solving Test-Revised (SPST-R; Rubin & Mills, 1988), which quantified the number of sharing, fairness, or justice strategies generated by children in problem situations. Three studies utilized the What’s Happening Next Game (WHNG; Shure & Spivack, 1980), three studies employed the BIPS (Ridley & Vaughn, 1982), four studies (six samples) utilized the Challenging Situations Task (CST; Denham et al., 1994), and seven studies (12 samples) utilized a version of the Wally (Webster-Stratton, 1990). Additionally, 13 studies (17 samples) utilized the PIPS (Shure & Spivack, 1980). The remaining nine studies employed unique measures to assess skill development.

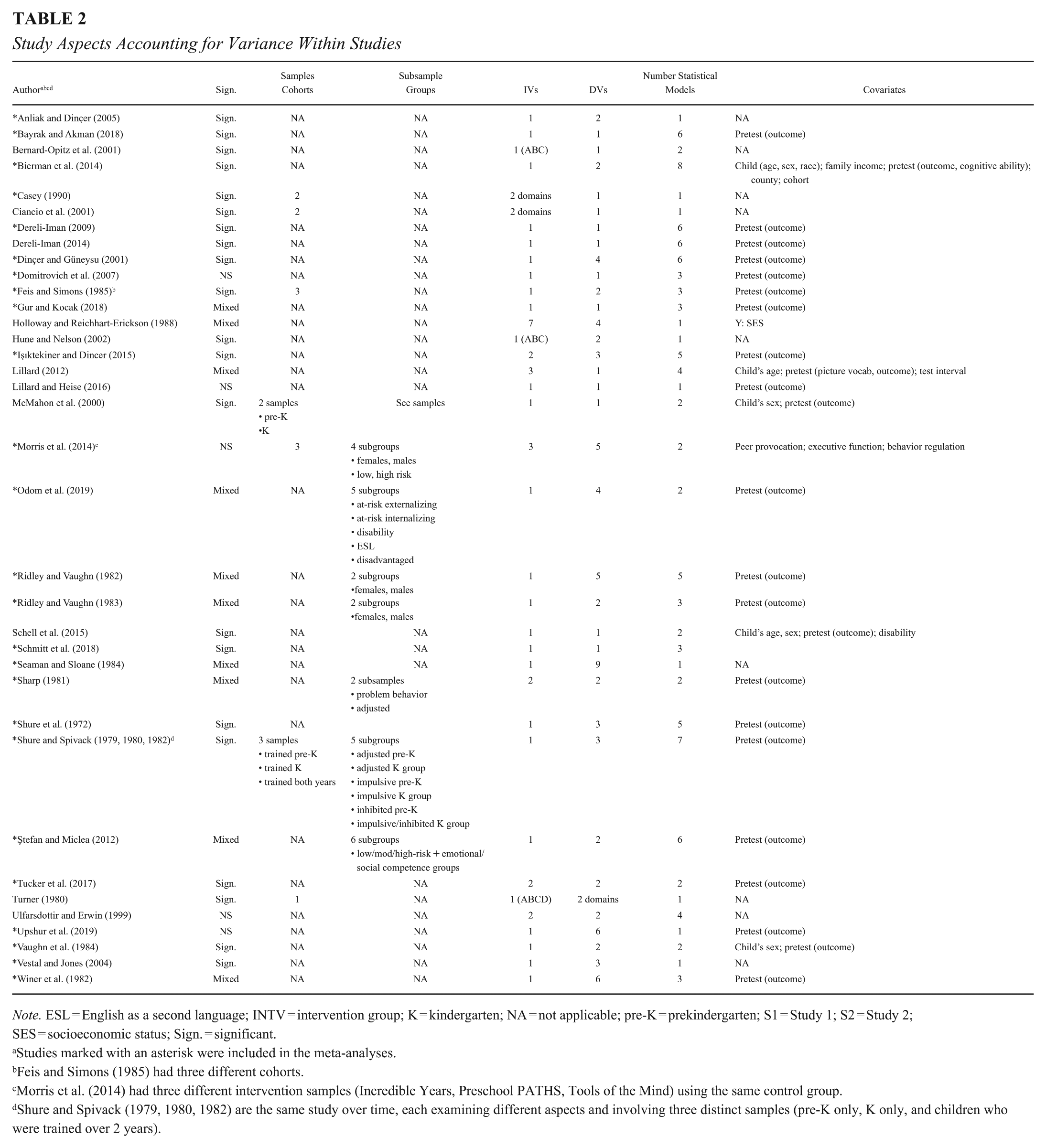

Within- and Between-Study Variances

Within-study variance was attributed to several factors, including the authors’ use of multiple subsamples (n = 7), instructional approaches (n = 6), outcomes (n = 23), and/or models (n = 17; see Table 2). Most interventions were delivered by educators (55%, n = 21) or researchers (32%, n = 12), with a few involving both educators and researchers (5%, n = 2) or not reporting the information (8%, n = 3). Most skill-training sessions took place in classrooms (63%, n = 24) or in separate rooms within the center (26%, n = 10). One study (3%) was conducted in a lab, and three studies (8%) did not report the location.

Table 2. Study Aspects Accounting for Variance Within Studies

Note. ESL = English as a second language; INTV = intervention group; K = kindergarten; NA = not applicable; pre-K = prekindergarten; S1 = Study 1; S2 = Study 2; SES = socioeconomic status; Sign. = significant.

Studies marked with an asterisk were included in the meta-analyses.

Feis and Simons (1985) had three different cohorts.

Morris et al. (2014) had three different intervention samples (Incredible Years, Preschool PATHS, Tools of the Mind) using the same control group.

Shure and Spivack (1979, 1980, 1982) are the same study over time, each examining different aspects and involving three distinct samples (pre-K only, K only, and children who were trained over 2 years).

Risk of Bias and Quality Assessment of Included Studies

The average quality rating score on the QATSDD (Sirriyeh et al., 2012) for the 38 eligible papers in the current review was 29.05 (range: 23 to 38) out of a total possible score of 42. Eighty-seven percent (n = 33) of the papers had scores indicating a low risk of bias (i.e., scores of 25 or higher; Sirriyeh et al., 2012). The items showing the highest risk of bias concerned authors’ failure to report evidence for (a) a priori sample size consideration, (b) reliability and validity of instruments, (c) involvement in study design, and (d) discussion of the strengths and limitations of their study. Across studies, some data were missing for sample characteristics (e.g., age, sex, gender) and/or program delivery (i.e., implementor, location, number of sessions).

When examining the risk of bias in studies, it is important to consider factors that can lower this risk, such as: (a) using published and unpublished studies; (b) employing larger sample sizes; (c) accounting for baseline equivalence; (d) ensuring a minimum duration of 12 weeks for interventions; (e) having a minimum of two teachers per group to control for teacher effects; (f) ensuring fidelity of the intervention delivery; (g) excluding researcher-developed measures due to potential researcher effects; and (h) using outcome measures with sufficient reliability and validity (Cheung & Slavin, 2016; Kim et al., 2021; Gilbert & Soland, 2024; Wolf & Harbatkin, 2023; What Works Clearinghouse [WWC], 2014, protocol for ECE interventions). Regarding sample size, 16% (n = 6) of the papers included data samples of greater than 250 children. Among the 22 nonrandomized quasi-experimental studies, 59% (n = 13) of the authors reported assessing baseline differences, using change scores, or using pretest scores as a covariate to account for baseline equivalence. Twenty-seven percent (n = 6) of authors provided no information, and 14% (n = 3) did not employ a control sample.

For papers in which the intervention was delivered by teachers (60% of the data), only 57% (n = 13) of them reported the number of teachers in each group. Only 21% (n = 8) of the papers in the current study reported some information about the fidelity of the intervention, and 16% (n = 6) used researcher-developed programs and measures (Ridley & Vaughn, 1982, 1983; Shure & Spivack, 1979, 1980, 1982; Shure et al., 1972). Regarding the quality of the outcome measures used to assess IPS skills, 39% (n = 15) of the studies reported some psychometric information on their outcome measure prior to the study, and 61% (n = 23) provided some psychometric information on their outcome measure for their current study (psychometric information provided for the measures used by studies in this review can be found in Table S5, online only).

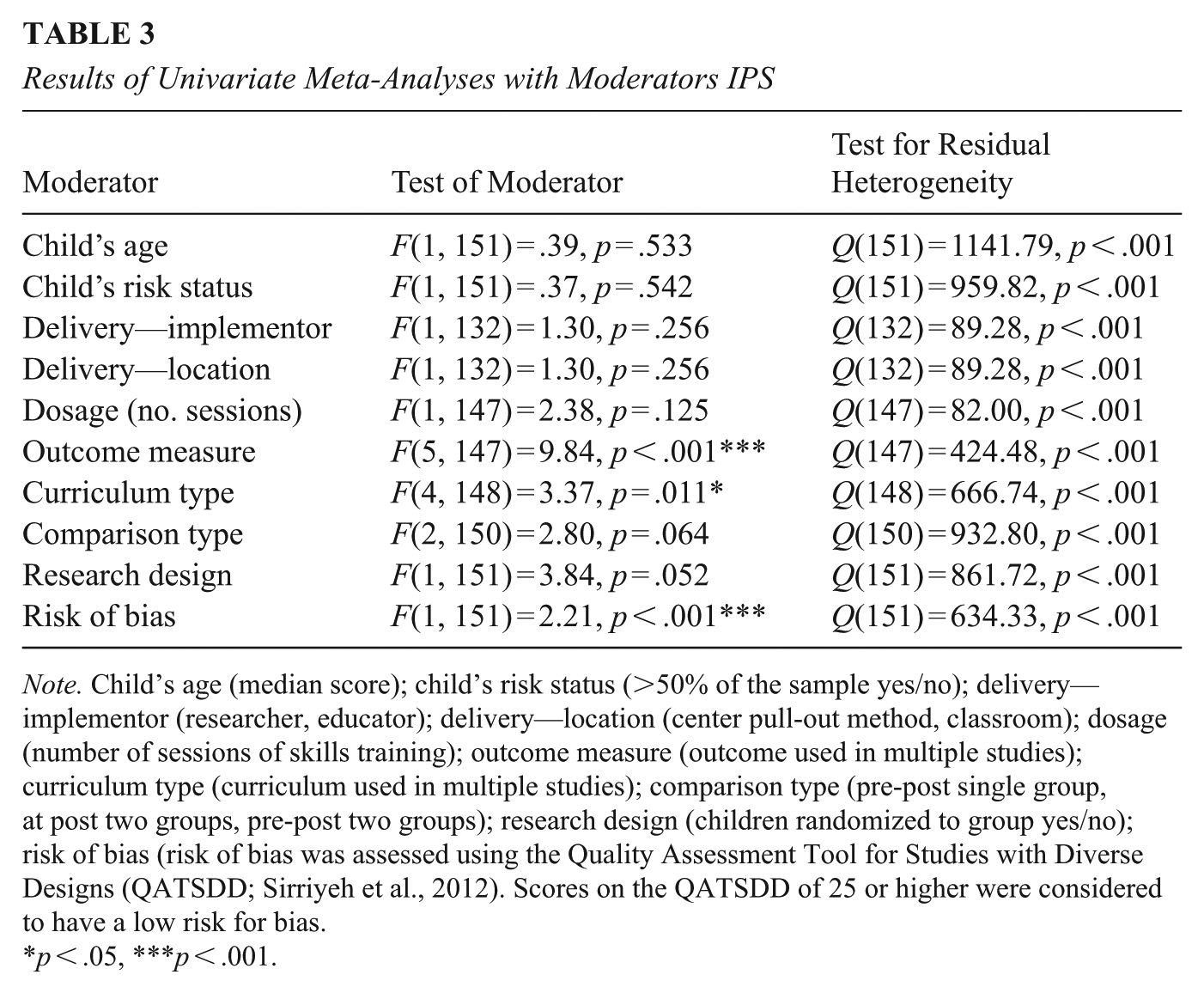

Meta-Analyses Results

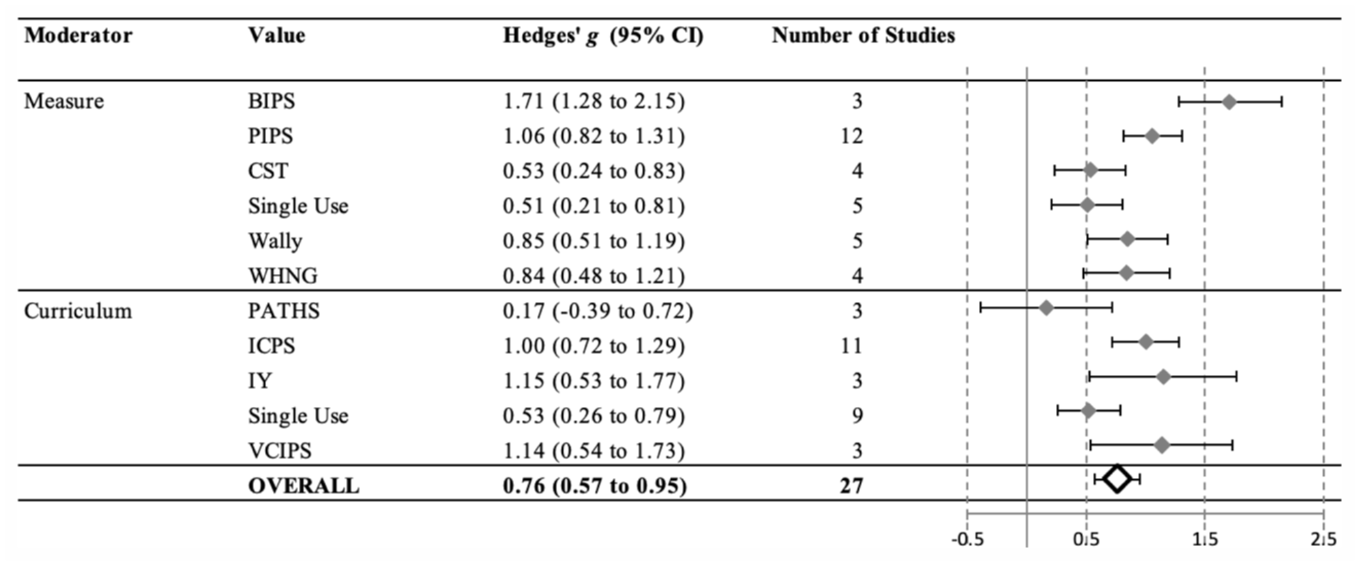

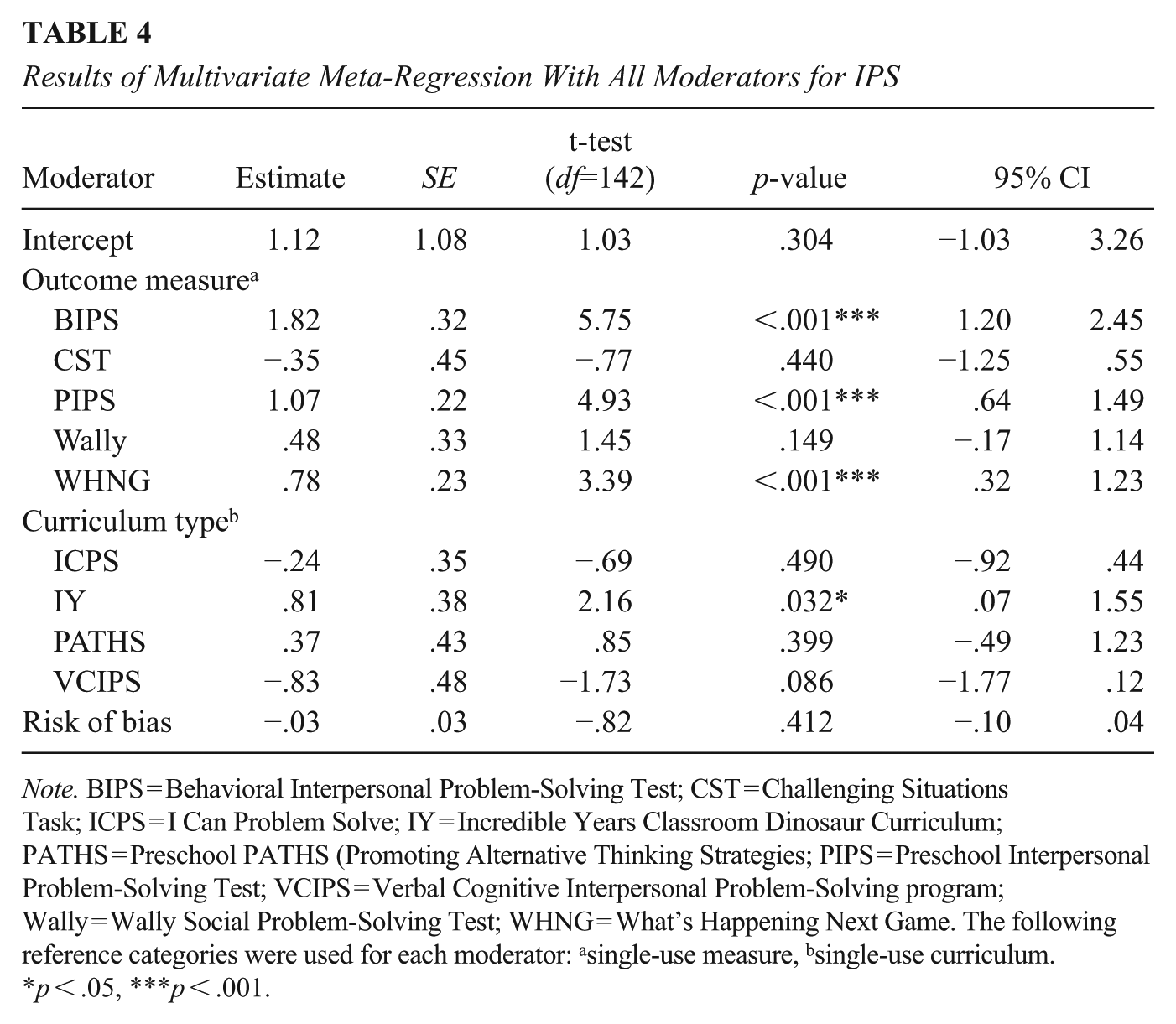

To investigate the extent to which IPS skills can be taught to young children, meta-analyses were conducted on studies with experimental and quasi-experimental designs (n = 26). The report begins with a multilevel meta-analysis to determine the pooled effect size and the amount of heterogeneity among the effect sizes. Next, the results of meta-analyses with individual moderator variables are presented. Refer to Table 3 and Figure 2 for detailed information on moderators that had a significant impact. Finally, the report includes the results of a meta-analysis incorporating all significant moderators (Table 4).

Table 3. Results of Univariate Meta-Analyses With Moderators for IPS

Note. Child’s age (median score); child’s risk status (>50% of the sample yes/no); delivery - implementor (researcher, educator); delivery - location (center pull-out method, classroom); dosage (number of sessions of skills training); outcome measure (outcome used in multiple studies); curriculum type (curriculum used in multiple studies); comparison type (pre-post single group, at post two groups, pre-post two groups); research design (children randomized to group yes/no); risk of bias (assessed using the Quality Assessment Tool for Studies with Diverse Designs [QATSDD; Sirriyeh et al., 2012]; scores on the QATSDD of 25 or higher were considered to have a low risk for bias).

*p < .05. ***p < .001.

Figure 2. The results of univariate meta-analyses for significant moderators.

Table 4. Results of Multivariate Meta-Regression With All Moderators for IPS

Note. BIPS = Behavioral Interpersonal Problem-Solving Test; CST = Challenging Situations Task; ICPS = I Can Problem Solve; IY = Incredible Years Classroom Dinosaur Curriculum; PATHS = Preschool PATHS (Promoting Alternative Thinking Strategies); PIPS = Preschool Interpersonal Problem-Solving Test; VCIPS = Verbal Cognitive Interpersonal Problem-Solving program; Wally = Wally Social Problem-Solving Test; WHNG = What’s Happening Next Game. The following reference categories were used for each moderator: a = single-use measure; b = single-use curriculum.

*p < .05. ***p < .001.

The dataset for the meta-analyses consisted of 153 effect sizes extracted from 26 studies (36 samples). Each study contributed between 1 and 18 effect sizes. The outcome variables were categorized into six types of instruments: single-use measures (5 studies, 16 effect sizes), BIPS (3 studies, 10 effect sizes), PIPS (10 studies, 14 samples, 57 effect sizes), Wally (5 studies, 10 samples, 34 effect sizes), CST (4 studies, 6 samples, 29 effect sizes), and WHNG (2 studies, 4 samples, 7 effect sizes). The effect sizes were normally distributed.

Unconditional Multilevel Meta-Analysis

The multilevel meta-analysis produced a pooled effect size of Hedges’ g = 0.76 (SE = .10, p < .001, 95% CI [0.57, 0.95]). The heterogeneity index was significant, Q(152) = 1211.13, p < .001. The log-likelihood-ratio test showed significance at the between-study level but not at the within-study level, indicating that study characteristics likely contribute to the heterogeneity among effect sizes. Specifically, approximately 95% of the variability in effect sizes is attributable to true differences between the studies’ effects rather than random sampling error (I² between = 95.22% and I² within = .00%). The standard deviation of true effect sizes at the study level (τ) was .26, indicating a moderate degree of heterogeneity in effects across studies. In contrast, the estimated standard deviation at the effect size level (within studies) was near zero (τ = .00), suggesting minimal variation among multiple effect sizes reported within individual studies. Both the I² and τ statistics indicate that most of the heterogeneity is attributable to differences between studies rather than within them.
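One common way to write the three-level structure behind these statistics is sketched below. The notation is illustrative and is an assumption about the general model form, not the exact specification fitted in this review: the i-th effect size in study j is decomposed into the pooled effect, a between-study deviation, a within-study deviation, and sampling error, and each I² expresses one variance component as a share of the total.

$$
\begin{aligned}
g_{ij} &= \mu + u_j + v_{ij} + e_{ij}, \\
u_j &\sim N\!\left(0, \tau^2_{\text{between}}\right), \quad
v_{ij} \sim N\!\left(0, \tau^2_{\text{within}}\right), \quad
e_{ij} \sim N\!\left(0, s^2_{ij}\right), \\
I^2_{\text{between}} &= \frac{\tau^2_{\text{between}}}{\tau^2_{\text{between}} + \tau^2_{\text{within}} + \tilde{s}^2}, \qquad
I^2_{\text{within}} = \frac{\tau^2_{\text{within}}}{\tau^2_{\text{between}} + \tau^2_{\text{within}} + \tilde{s}^2}.
\end{aligned}
$$

Here $s^2_{ij}$ is the known sampling variance of each effect size and $\tilde{s}^2$ denotes a typical sampling variance across effect sizes. Under this form, a within-study τ near zero implies an I² within near zero, which matches the pattern reported above.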

Multilevel Univariate Meta-Analyses for Individual Moderators

Further analyses were conducted to investigate potential sources of heterogeneity based on study characteristics. The univariate moderator analyses revealed that curriculum type, outcome type, and risk of bias significantly contributed to the heterogeneity of effect sizes (refer to Figure 2 and Table 3). However, the heterogeneity index (Q) remained significant in all univariate analyses, indicating that none of the examined moderators fully explained the heterogeneity of effect sizes. Moderating effects were nonsignificant for children’s characteristics (age and at-risk status), intervention delivery characteristics (implementor, location, number of sessions), and study design features.

Multilevel Meta-Analysis With All Significant Moderators

The multivariate analysis incorporating all significant moderators simultaneously showed that they did not completely account for the heterogeneity among effect sizes, as the test for heterogeneity remained significant, Q(151) = 634, p < .001. Although between-study variance remained significant, it was reduced by 40.5%. The estimated between-study standard deviation (τ) was .21, a 19% reduction compared to the model without moderators (τ = .26 to .21), still indicating a modest degree of residual heterogeneity in true effect sizes across studies. In the multivariate model, the curriculum type and outcome type moderators remained significant, while the risk-of-bias moderator became nonsignificant (Table 4).
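The reduction in τ can be checked with simple arithmetic, sketched here in Python using only the two rounded τ values reported in the text. The helper name is hypothetical. Because τ is rounded to two decimals, the variance reduction recomputed from these values is only approximate and is not expected to reproduce the reported 40.5% exactly; rounding alone can shift that figure by several percentage points.

```python
def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from `before` to `after`."""
    return 100 * (before - after) / before


tau_unconditional = 0.26  # reported tau, model without moderators
tau_conditional = 0.21    # reported tau, model with all significant moderators

# Reduction on the standard deviation scale (tau).
sd_drop = percent_reduction(tau_unconditional, tau_conditional)
# Reduction on the variance scale (tau squared), from the rounded values.
var_drop = percent_reduction(tau_unconditional ** 2, tau_conditional ** 2)

print(f"tau reduced by about {sd_drop:.0f}%")
print(f"tau^2 reduced by about {var_drop:.0f}% (from rounded tau values)")
```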

For easier interpretation, we discuss the effect sizes from the univariate analyses. Risk of bias scores were negatively related to the magnitude of the effect size (B = −.09, SE = .02, 95% CI [−.13, −.05]); that is, studies with higher QATSDD scores (i.e., lower risk of bias) tended to have smaller effects. Figure 2 shows the effect of the two significant categorical moderators on the magnitude of effect size. As can be seen from this figure, the confidence interval for the effect sizes of studies that used the PATHS curriculum crosses zero, indicating no significant effect of the intervention on IPS skills. However, larger effect sizes were observed for other types of curriculum. The interventions had a significant effect on the improvement of IPS skills regardless of the way the outcomes were measured. However, the effect sizes were largest for the studies that used the BIPS and PIPS measures.

Discussion

Decades of research highlight the importance of IPS skill acquisition for positive developmental trajectories in young children (Araujo et al., 2019; Elango et al., 2016; Mahoney et al., 2021; Merrill et al., 2017; Murano et al., 2020). Early childhood educators are in a unique position to enhance young children’s IPS abilities during a period of tremendous growth (Gradovski et al., 2019). Thus, the primary objective of the current review was to integrate global evidence for instructional approaches designed to improve IPS skills in ECEC settings in children from birth to age six. Our goal was to highlight the features that make programs more or less effective in teaching IPS to young children. Our comprehensive search of the literature resulted in 38 papers outlining several intervention programs promoting IPS skills using a variety of instruments. Most papers (60%) reported significant findings, suggesting that IPS skills can be taught to very young children. Multilevel meta-analyses summarizing the effects of 26 papers (153 effects) produced a significant pooled effect size, Hedges’ g = .76. These findings are consistent with recent meta-analyses examining IPS interventions in relation to SEL skills (Barnes et al., 2018; Murano et al., 2020; Yang et al., 2019).

Moderating Variables and Between-Study Variances

The multilevel meta-analyses revealed significant variance in effect sizes across studies. Child’s age, risk status, program length, implementor, location, and study design features were not significant moderators in the multilevel analysis. The type of curriculum, assessment instrument used, and study scores reflecting lower risk of bias accounted for much of this variance. Next, we discuss those moderators that seem most promising in explaining what works best in teaching IPS skills to young children in ECEC settings. We then discuss the limitations found in this field of research, as well as the limitations of the current study.

Children at Risk

We expected that scaffolding of IPS would be especially effective for children at risk (Merrill et al., 2017; Murano et al., 2020; Shaw et al., 2021). To be considered “at-risk” in this review, researchers had to report that 50% or more of the children in their samples were identified as low-income, majority language learners, having behavioral challenges, or having a disability. Twenty of the 38 studies met this definition of “at-risk.” However, the remaining 18 studies did not report the “at-risk” status. The lack of clarity of the “at-risk” status in these 18 studies meant that we could not compare the effectiveness of IPS interventions for “at-risk” versus not-at-risk samples.

Some research suggests that children growing up in environments that provide fewer learning opportunities benefit most from interventions in ECEC (Burchinal et al., 2016). It is possible that children from all backgrounds can benefit from exposure to IPS-enriching ECEC environments. One explanation is that curriculum type interacts with children’s at-risk status when it comes to the effectiveness of promoting children’s IPS skills. For example, Murano et al. (2020) found that students identified as at-risk benefited more from targeted than from universal SEL programs when compared with children who were not considered at-risk. The high rate of missing information about children’s “at-risk” status in the literature reveals a need for more comprehensive reporting of sample characteristics.

Program Length (Number of Sessions of IPS Training)

The number of training sessions was not a significant moderator. In an integration of evidence examining IPS interventions and SEL outcomes, Yang et al. (2019) draw attention to the fact that in their study, many of the shorter interventions had a strong skill-focused content, suggesting that program content may, in fact, be driving their findings about lower doses being more effective. In the current study, short and long interventions both had strong content-specific, skills-focused training, which may explain why program length was not a strong driver of effects in this review. Dosage, however, may also be important. Burchinal et al. (2016) found greater gains on school readiness measures for 2- versus 1-year intervention programs. More research is needed to parse out whether lower, as compared to higher, dosage programs can be just as effective, since shorter interventions would be more scalable and easier to implement.

Intervention Delivery (Implementor and Setting)

The moderating effects of delivery of the interventions (i.e., educators implementing training in the classroom or researchers offering small group training outside of the classroom) were not significant. This finding is contrary to other meta-analyses examining setting and implementor for SEL interventions (Blewitt et al., 2018; Murano et al., 2020), where stronger effects were found for researchers (or specialists) than for educators. Educators may have some advantage as they know their individual students’ needs, know the school environment best, and provide a consistent presence in the classroom. On the other hand, trained researchers have pedagogical expertise and can implement the intervention with higher fidelity (Han et al., 2005; Peltier & Vannest, 2017). These results may reflect principles of social cognitive theory (Bandura, 1986), where consistent modeling of IPS strategies by trusted educators enhances observational learning and self-efficacy in children. More research is needed to test whether there are consistent patterns of advantage for certain models of intervention delivery.

Brunsek et al. (2020) reviewed associations between professional development (PD) of early childhood educators and children’s outcomes and found positive associations when child outcomes aligned with the content of PD programs. In-depth training that promotes personal development, provides performance feedback or coaching, and supports the implementation of the curricula may be an important factor supporting educators in the delivery of interventions with high fidelity (Han et al., 2005). Although there were insufficient data to explore fidelity as a moderator in the current review, all the interventions delivered in classrooms included training for the educators. These results demonstrate the capacity of classroom-based interventions to be effective without added pull-out sessions and/or the controlled environment of labs (e.g., Ştefan & Miclea, 2012). As ECEC policymakers revamp curricula to incorporate more inquiry-based learning and IPS-focused education, it will be essential to use curricula that can be easily taught to educators and easily incorporated into the classroom (Barnes et al., 2018).

Curriculum Focus

To shed light on the important question of “what works best,” we examined whether the instructional approaches reported in the reviewed papers involved specific training in IPS skills (Csapó & Funke, 2017). Across meta-analyses looking at different aspects of SEL instruction that included preschool-aged children, findings reveal that more focused curricula show stronger relationships to children’s SEL outcomes (e.g., Murano et al., 2020; Yang et al., 2019). This finding supports constructivist perspectives (Piaget, 1971), which suggest children build IPS capabilities through active engagement with cognitively structured experiences. Further support for content-specific curricula focused on IPS skills as a critical component of IPS training was found in the univariate meta-analyses. Specifically, studies using more developed programs (ICPS, IY, VCIPS) had stronger effects compared to single-use interventions. The more developed programs tended to focus on the steps involved in IPS behaviors (e.g., recognizing/articulating the problem, asking questions, generating possible solutions, evaluating the effectiveness of the solution, revising the plan, and so on). Many single-use interventions did not involve a focus on the cognitive steps involved in IPS. For example, Schmitt et al. (2018) implemented the Positive Action program (Schmitt et al., 2014), which focused on skills such as self-respect, honesty, and self-improvement; Tucker et al. (2017) used the Sunshine Circles group theraplay (Booth & Jernberg, 2010), encouraging empathy, positive relationships, and nurturing. Thus, the IPS process-oriented interventions were more effective in improving children’s IPS skills. One exception was the PATHS program, which was also used in multiple studies but did not have a significant effect. The PATHS program has a strong focus on emotional skills and promoting effective communication. While this focus is an essential part of IPS and problem behavior change, these components may not align with the outcomes of interest in the current integration of evidence (i.e., IPS steps and outcomes).

Measurement of IPS Skills

Univariate moderator analysis for measurement type also revealed some differences among the assessment instruments. Effect sizes for the better-developed instruments (i.e., the Wally, WHNG, and PIPS) were strong (g = .84 to 1.06). These measures use verbal scenarios or picture vignettes to assess children’s performance. The BIPS, which involves presenting children with interpersonal problems using a peer (i.e., confederate child) in real-life situations in a testing room, had the largest effect (g = 1.71). Both types of instruments assess the child’s ability to generate good solutions to the dilemmas. Assessment formats that allow children to generate novel solutions (rather than select from limited options) may better capture the learning process described in constructivist theory. Furthermore, BIPS assessments reflect observable, context-based behaviors central to social cognitive learning (Bandura, 1986). More current research is needed to explore the BIPS and the use of this form of data collection.

Of the studies that used the CST, only one (Bierman et al., 2014) reported a significant effect, and its effect size was smaller than those found with other instruments. The CST also involves asking children to provide solutions to interpersonal dilemmas. However, children are presented with a specific set of response options and pick the best response from the pictures shown (Denham et al., 1994). The pictures include prosocial, avoidance, crying, or aggression choices. Thus, compared to other measures (e.g., the Wally and PIPS), the CST offers children fewer opportunities to generate their own alternative solutions. While the CST is a well-used, valid instrument, the constrained response format may not capture IPS skill development as well as other measures.

Risk of Bias, Comparison Type, and Study Design

Research designs accounting for more variance (e.g., controlling for pretest scores, children randomly assigned to groups, lower risk of bias) are considered stronger designs (Evans, 2003; Murphy et al., 2014). Researchers examining early childhood interventions in relation to child outcomes have found that studies using stronger designs tend to yield smaller effect sizes (e.g., Murano et al., 2020). Having a comparison group can control for threats to internal validity such as maturation and testing. In this review, the effects for research design (i.e., children randomized to groups, yes or no) and for comparison type (i.e., pre-post versus at-post analyses) were not significant. We may not have been able to detect differences because many of the study authors using quasi-experimental designs reported assessing their sample at baseline for pretest scores and reported no significant differences.

Univariate meta-analyses revealed smaller effects for the studies with a lower risk of bias (i.e., better designs and completeness of reporting). However, in the multivariate moderator analysis, when all significant moderators from the univariate analyses were entered together into the model, the moderating effect of risk of bias did not hold. It is worth noting that papers using the CST as an outcome had the higher scores and lower risk of bias. Results of our study may be skewed by the null findings of this one instrument.

Limitations of the Present Review

This review provides a comprehensive summary and integration of the literature to date on IPS skill development in young children in ECEC settings. However, it also has several limitations. We included studies of all design types and both direct and indirect educational approaches to promoting IPS skills (although too few studies were found to address the latter). This comprehensive strategy may have increased heterogeneity among the included studies, making it harder to integrate across them. We also focused this review on published papers and excluded gray literature. This decision may have introduced bias and inflated the effect sizes (Paez, 2017). In addition, although we attempted to capture diverse cultural contexts globally, several papers were not accessible to us because they were not available in English.

Our findings do not suggest that age was a prominent driver of the effects; however, our sample was restricted to children of preschool age. To reduce heterogeneity, we excluded samples made up entirely of children with disabilities, although many of the included studies had such children as part of their samples. Our sample size of only 26 papers for the meta-analysis posed a potential issue of statistical power, especially when examining moderating variables. In addition, sample sizes within studies were low, with only 7% (six papers) having sample sizes greater than 250 children. The number of studies we found and the sample sizes within them limited our ability to examine important child/family (e.g., at-risk status, child age, and gender) and program (e.g., measure informants) characteristics. The absence of research on IPS in infants and toddlers is one example. The limitations of the literature in this area are discussed next, but it is important to recognize that these research limitations also constitute limitations to the current study.

Limitations of the Research Literature

There were several limitations to the existing literature (Cheung & Slavin, 2016; Pigott & Polanin, 2020; WWC, 2014). First, while we set out to integrate evidence for children from zero to six, our search led to no papers on infants and toddlers. Second, there was substantial within-study heterogeneity as authors explored different analyses, models, and outcomes, suggesting more research is needed to clarify which outcomes are most useful in assessing gains in skills. Finally, as the risk of bias assessment revealed, several key aspects of the studies, such as sample characteristics (e.g., gender, risk status), methodology (e.g., recruitment method, instrument reliability, blind observers, and hours/weeks of IPS training), and the fidelity of the intervention were either not reported or were unclear (Frick et al., 2023). This lack of information in eligible papers highlights a need for better consistency and completeness in reporting (Michie et al., 2009). These potential moderators may have accounted for some of the variance within studies. For dosage, we were able to calculate the number of sessions (but not hours of IPS skills training), which may or may not be the best indicator of exposure to the IPS skills intervention (Burchinal et al., 2016; Frick et al., 2023). Despite the limitations of our study and the literature in this area, the current integration and summary of the IPS literature has important implications for policy decision-making and research-planning in the future (Pigott & Polanin, 2020).

Directions for Future Research

Primarily, researchers conducting interventions examining IPS skills need to clearly report their methodology and findings; use targeted, well-developed interventions; select outcome measures with good psychometric properties that are aligned with skills developed in the programs; and use high-quality designs with adequate sample sizes (Cheung & Slavin, 2016; WWC, 2014). These practices would include accounting for baseline differences in children’s skill level (Lin et al., 2021). Skills associated with IPS, such as EF and cognitive-processing abilities, may also need to be controlled for (Mattera et al., 2021). More research is needed that covers all age groups, including infants and toddlers, about whom little is known in this area (Lally, 2010). Subgroups such as children at risk or children identified as neurodivergent also require further exploration where enough literature exists (Shaw et al., 2021).

Research on which intervention components work best, and for whom, in promoting which IPS skills in the early years is also critical (Hattie, 2015). Optimal assessment of IPS cognitive skills requires further development and clarification, as generating alternative solutions, while important, does not capture all the skills involved in the IPS steps. Tools developed for STEM studies of this nature exemplify the benefit of exploring these observable IPS behavioral steps specifically (Anggoro et al., 2021; Bahar & Aksut, 2020). Finally, progress monitoring tools (e.g., Walker et al., 2023) may be helpful in identifying children in greater need of specific skill development, not only in situations where resources are limited, but also as feedback for educators (LeBuffe & Shapiro, 2004). Our findings map out important gaps in the literature that need to be addressed. While more research is needed in this area, existing studies allow us to draw some important conclusions.

Conclusion: Implications for Research, Policy, and Practice

The current review provides strong evidence that very young children can be taught IPS skills. Consistent with prior research (Barnes et al., 2018; Murano et al., 2020; Yang et al., 2019), we found that content-specific, skills-focused training (e.g., training on the steps involved in IPS, including cognitive aspects such as planning, brainstorming, and consequential thinking) was particularly effective in supporting the development of children’s problem-solving skills in an interpersonal context. Program length, implementor, and location of the intervention were not significant moderators in the multilevel analysis. These results suggest that there may be substantial flexibility in how and by whom these programs can be delivered. Finally, this review provides evidence that ECEC settings are a natural and effective environment for teaching IPS. Given the growing number of children who are exposed to ECEC, the current study provides an important context for supporting the development of their IPS skills.

Supplemental Material

Supplemental material, sj-docx-1-rer-10.3102_00346543251399668 for Teaching Interpersonal Problem-Solving Skills to Young Children in Early Childhood Education and Care (ECEC) Settings: An Integration of Evidence by Evelyn McMullen, Michal Perlman, Samantha Burns, Olesya Falenchuk, Linda White and Elizabeth Dhuey in Review of Educational Research

Footnotes

Acknowledgements

A special thanks to all the lead research assistants, Sabrina (Sin Wah) Chan, Dalia Elramly, Anika Ganness, Zhangjing (Ryan) Luo, Atul Manmohan, Tommy Paparizos, Sumayya Saleem, Nadhiena Shankar, Chelsea (Ziyi) Song, Brendan Toles, and Nadina Mahadeo-Villacis, for their work and supervisory help. Thank you also to the research assistants, Alessandra Badia, Kavita Balsara, Anara Burambayeva, Henry Duong, Aljandra Fandino, Michael Joe, Darrell Jose, Doris Lin, Indira Quintasi Orosco, Ryan (Jinseong) Park, Linh Que Pham, Lifei Qian, Affaf Rahman, Nithila Sivakumar, Bilijana Vujicic, and Kexin Wang, for their help working on this project. A special thank you to Sabrina (Sin Wah) Chan, Zhangjing (Ryan) Luo, and Chelsea (Ziyi) Song for their work on the teaching manuals.

Author’s Note

Samantha Burns is now affiliated with the Department of Family Relations and Applied Nutrition, University of Guelph.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Special thanks to the Social Sciences and Humanities Research Council (SSHRC) for providing the grant (#890180020) that supported this project. We thank OISE/UT’s Public Services librarian Jenaya Webb for their invaluable assistance throughout this project.

Authors

EVELYN MCMULLEN is a postdoctoral researcher in the Department of Human Development and Applied Psychology at the Ontario Institute for Studies in Education, University of Toronto.

MICHAL PERLMAN is a professor at the Department of Human Development and Applied Psychology at the University of Toronto.

SAMANTHA BURNS is an assistant professor in the Department of Family Relations and Applied Nutrition at the University of Guelph.

OLESYA FALENCHUK is a research systems analyst at the Ontario Institute for Studies in Education, University of Toronto.

LINDA WHITE is a professor of political science at the University of Toronto.

ELIZABETH DHUEY is a professor of economics at the University of Toronto in the Department of Management and in the Department of Leadership, Higher, and Adult Education.

References
