Abstract

When beginning readers read aloud, the teacher’s feedback affects their reader identities. Teachers’ feedback may also imprint a strong model of what reading is and what proficient readers do. This systematic review investigates the characteristics of teachers’ feedback on elementary students’ reading and examines its potential to support students’ agency in learning to read. A total of 52 empirical studies in K–5 settings were identified and analyzed. Findings suggest clear associations between how feedback was presented and what aspects of reading were targeted: typically, either explicit feedback on decoding or implicit feedback on meaning. Further, support for student agency was more strongly associated with implicit feedback practices. Finally, two groups of students, struggling readers and L2 learners, tended to receive feedback that does not promote agency. The review concludes by discussing the potential of feedback practices to support students in becoming proficient and independent readers.


Feedback is essential for learning (e.g., Hattie & Timperley, 2007; Kluger & DeNisi, 1996) and can be defined as “any information related to a performance that learners can use to improve their performance or learning” (Lipnevich & Smith, 2022, p. 2). In primary-school reading instruction, teachers listen to students reading in class, in small groups, or in one-on-one interaction to promote their reading development by effectively addressing their individual needs (Duke, 2020). Research supports the practice of teachers providing feedback during reading sessions to enhance student learning outcomes (Dietrichson et al., 2017; Hall & Burns, 2018; Toste et al., 2020). In this context, teachers provide immediate feedback that students can use to improve their ability to decode and comprehend text. Additionally, teachers’ feedback plays a pivotal role in shaping students’ emergent reading experiences and influences their identities as readers and their engagement with reading materials, thereby facilitating the development of reading skills (Johnston, 2004; Lipnevich & Smith, 2022).

Within the modern student-centered feedback paradigm (Nieminen et al., 2022; van der Kleij et al., 2019), feedback is expected to motivate students to use the information they receive to enhance their learning outcomes (Lipnevich & Smith, 2022; Molloy et al., 2020). In the context of early reading instruction, this implies that, when listening to students’ reading, teachers communicate feedback through dialogue about texts and provide opportunities for students to actively use feedback to engage in the reading process. However, in practice, teachers’ real-time monitoring and assessments of young students learning to read, and the subsequent feedback, may motivate or demotivate the student (Johnston, 2004). This disparity of outcomes is likely related to self-efficacy, defined as an individual’s belief in their capacity to execute actions (Bandura, 1997), which plays a critical role in motivation for reading and learning (Toste et al., 2020; Unrau et al., 2018).

Feedback is an important source of self-efficacy and should ideally aim to strengthen students’ confidence in their reading abilities. Hence, teacher feedback during reading should not only support skills and strategies but also ignite a lasting interest and engagement in reading among students. This highlights the importance of tailoring feedback to reading skills and strategies while providing young readers with opportunities to see themselves as active readers and experience reading as a valuable and enjoyable activity. There is thus a need to investigate the characteristics of teacher feedback during oral reading with regard to how well it meets the complex needs of students to become active, motivated, and confident readers. Despite extensive research on feedback (e.g., Hattie & Timperley, 2007; Shute, 2008; van der Kleij et al., 2019), updated systematic knowledge on feedback in the context of early reading instruction is lacking. The present study reviews the characteristics of teacher feedback in early reading instruction, specifically in kindergarten through fifth grade (K–5), with particular emphasis on how the feedback practices support student agency. We explore potential factors related to how feedback influences students’ agency in reading instruction by examining students’ opportunities to actively use feedback to develop as readers in various practices. Furthermore, we examine whether feedback practices differ across diverse groups of students.

The theoretical framework guiding our study rests on three main pillars: (a) theories and models of feedback, (b) theories of the reading process, and (c) theories explaining the concept of student agency in learning processes. These three pillars also mirror the authors’ scholarly views on the object of study: Reading is complex and can be viewed in different ways. Theory matters and has consequences for how studies are evaluated and for what inferences are made from them. As authors of this review, we declare that we view reading in line with recent positions that emphasize its complexity and acknowledge the need for multiple sciences to inform its scientific foundation (Afflerbach, 2018; Duke & Cartwright, 2021; Tønnessen & Uppstad, 2015). One characteristic of these views is that the performance of reading involves both cognitive and affective dimensions.

Feedback in Reading Instruction

Historically, feedback research focused on the teacher’s role in delivering feedback. Essential works by Hattie and Timperley (2007), Shute (2008), and Kluger and DeNisi (1996) have offered comprehensive insights into how feedback influences learning processes. However, over the past 20–30 years, there has been a paradigm shift toward a more student-centered approach (Nieminen et al., 2022; van der Kleij et al., 2019). This shift involves moving from viewing students as passive recipients of feedback to exploring how they interpret, engage with, and act upon feedback (Lipnevich & Smith, 2022; Molloy et al., 2020; Nieminen et al., 2022; van der Kleij et al., 2019). There is a growing consensus that feedback is less about the information given and more about how students process and use that information. In the new feedback paradigm, the importance of examining feedback within its specific context is emphasized. Factors such as the learning environment and student age affect feedback effectiveness (e.g., Tunstall & Gipps, 1996; van der Kleij et al., 2015). In this study, our focus is on oral reading in early reading instruction. Based on Lipnevich and Smith (2022), we define teacher feedback in this context as any information that students can use to develop their reading skills, strategies, and motivation.

Given that we study feedback in the specific domain of reading, it is also of importance how we define reading. Prevailing theories consider reading to be a complex activity involving both cognitive and motivational dimensions, where the reader finds meaning in text through an evolving process (Afflerbach, 2018; Duke & Cartwright, 2021; Tønnessen & Uppstad, 2015). Afflerbach (2018) and Duke and Cartwright (2021) describe reading as a complicated task requiring skills, strategies, and prior knowledge to comprehend text, with the development of these skills and strategies being influenced by the reader’s self-efficacy, self-regulation, and metacognition. Tønnessen and Uppstad (2015) emphasize this multilayered process of reading as an interpretive skill where readers, to construct meaning, navigate back and forth between details such as letters, sounds, and words and the text as a whole. When reading is viewed as a dynamic, interpretative skill, readers’ motivation and interest are seen as driving forces in their interpretation, thereby facilitating the process of learning to read (Walgermo et al., 2018).

Teachers’ feedback in the context of reading instruction may support the dynamic reading process by addressing details such as phonics and decoding strategies, activating comprehension, and providing motivational support to build self-efficacy through various content areas. Feedback can take diverse forms, including correcting errors, offering comments, providing guidance, or giving praise. To support students in developing as readers, feedback should reflect the complexity of reading by providing information and opportunities to act upon all aspects of reading, including motivation, metacognition, comprehension, and decoding skills (Afflerbach, 2016).

It is important to keep in mind that reading skill cannot be directly observed, and the fleeting nature of oral reading makes it challenging for teachers to assess students’ reading skill and provide adequate feedback to them. The only thing that can be observed is the realization of the skill. However, the student’s reading may be affected by mood, fatigue, and other factors, and skill remains inside the student as a potential even after reading ends (Tønnessen & Uppstad, 2015, p. 52). When students read aloud, the teacher observes the realization of their reading skill in real time in order to provide instant feedback and take action based on observations made.

Teachers’ feedback practices may vary across different groups of students, including low and high achievers as well as students from diverse social backgrounds (Griffiths et al., 2023). One source of this variation is teachers adapting their instruction and tailoring their feedback to meet students’ needs (Reynolds, 2017). On the less conscious side, teachers’ feedback often tends to reflect their expectations of students (Gentrup et al., 2020; Rubie-Davies, 2007), and differing expectations can systematically affect the learning opportunities provided to various groups of students (Winstone et al., 2017).

Student Agency

Given the importance of students actively using feedback (Griffiths et al., 2023; Lipnevich & Smith, 2022), the concept of student agency becomes particularly relevant when examining feedback practices (Adie et al., 2018; Nieminen et al., 2022). Student agency is a relatively new dimension of literacy motivation (Guthrie & Wigfield, 2023) and was defined by Johnmarshall Reeve as students being proactive agents of their own social and academic growth within the learning environment (Reeve & Tseng, 2011, p. 258). Reeve and Tseng (2011) found that agency, combined with emotional and cognitive engagement, enhanced academic achievement. In line with this, Guthrie and Wigfield (2023) concluded that students’ belief in their proactive role (agency) significantly contributes to their achievements and learning environment, and Vaughn et al. (2020a) emphasized students’ participation by defining agency as students’ opportunities to influence their learning through their intentions, decisions, and actions. Engagement is often considered the realization of motivational factors, including agency.

From a psychological and cognitive perspective, student agency focuses on individuals’ ability to make choices and engage in independent, intentional actions (Winstone et al., 2017). This perspective highlights students’ capacity to self-regulate and actively participate in their learning processes, emphasizing their active role in feedback processes. An ecological perspective on student agency examines how structures within the learning environment influence students’ actions (e.g., Nieminen et al., 2022). This view underscores how students’ opportunities for agency are embedded within the temporal patterns of thought and action in the learning environment, resulting from the interplay between individuals and their learning environments. Students bring their experiences and beliefs from previous learning situations, which influence their perceptions of future achievements. This ecological notion of agency explains why a person may achieve agency in one situation but not in another. In our context—the feedback process in early reading instruction—student agency is also studied through positioning theory, which considers how cultural norms and pedagogical practices shape students’ self-conceptions as readers (Moses & Kelly, 2017; Walgermo & Uppstad, 2023). This perspective highlights the role of social interactions in specific cultural environments and their impact on the development of reader identities.

The approach of Vaughn et al. (2020a) combines both individual and social or environmental aspects to illuminate how agency is interconnected with the individual and social environments, using five inter-related constructs to illustrate how student agency is realized within the context of learning to read: self-perception, which concerns how students view themselves as readers; intentionality, referring to students’ beliefs, ideas, and thoughts about their reading; choice-making, involving students’ willingness and opportunities to make choices and decisions; persistence, encompassing students’ strategies to solve problems and their resistance to difficulties; and interactiveness, relating to how students interact with and influence their learning environment, including teacher responses to their reading, mistakes, or ideas shared during oral reading.

It is crucial to understand how teacher feedback may impact students’ agency both by providing opportunities and by setting limitations. Johnston (2023) illustrates this by comparing two feedback messages to a young student: “Good job!” and “How did you do that?” The first message evaluates the student’s reading as “good,” possibly suggesting that the task is completed. In contrast, the second message invites the student to reflect on the process, fostering a sense of problem-solving and agency. This perspective helps us understand how feedback can support or hinder student agency, influencing motivation and engagement in reading.

The Present Study

Previous feedback research has been criticized for not paying enough attention to contextual features or to students’ perspectives on the use of feedback (Lipnevich & Panadero, 2021). By focusing on early reading instruction, this study aims to explore the characteristics of feedback in the context of students reading aloud to their teacher, where the teacher’s comments, discussions, and instructions are pivotal. This niche is crucial for identifying how feedback supports young students in learning to read during their first years of formal schooling (K–5).

Summaries of research on feedback during oral reading from the 1980s and 1990s (Heubusch & Lloyd, 1998; McCoy & Pany, 1986) took a teacher-centered approach, focusing on error correction and repetition of the correct response. Since the 1990s, teachers’ practices for feedback on students’ oral reading have been studied with reference to concepts such as scaffolding (e.g., Ankrum et al., 2014; Rodgers et al., 2016; Rojas Rojas et al., 2019), feedback in the form of questions (e.g., Blything et al., 2020), learning dialogues (e.g., Skidmore et al., 2003), and guided reading (e.g., Olszewski, 2019), where teachers provide support and guidance as students use reading strategies. These practices emphasize instructional support that gradually shifts from the teacher to the student, promoting independent reading skills through techniques such as modeling. While these studies give more prominence to student perspectives, they still lack a comprehensive explanation of how feedback impacts student learning, and there is no more recent summary. In fact, recent reviews on feedback and reading have primarily focused on reading comprehension (Swart et al., 2019) and feedback characteristics in teachers’ manuals for reading programs, decontextualized from classroom settings (Schrauben & Witmer, 2020). These studies have not utilized frameworks from the new feedback-research paradigm, and students’ perspectives have been given limited attention. Despite the importance of a student-centered approach in new feedback theories, these perspectives have been minimally explored in research on feedback and early reading instruction (Grønli et al., 2024). Given the widespread practice of teacher feedback during oral reading, there is a pressing need for an updated systematic literature review on this topic.

In this study, we aim to address these gaps by conducting a qualitative thematic synthesis that adds contextual understanding to the knowledge generated by previous research on feedback and reading. The overall aim is to map out the landscape and describe the topography of practices for giving feedback on students’ oral reading. Because of the fleeting nature of oral reading, quantitative data have certain inherent limitations. For this reason, researchers have suggested that quantitative research on feedback should be complemented with research analyzing qualitative data (e.g., Rasinski & Hoffman, 2003). To gain a comprehensive understanding of the phenomenon investigated, our thematic review includes both qualitative and quantitative studies (Thomas et al., 2017). We also applied a sophisticated quality-screening scale for the papers included, developed from a review of existing quality scales. This ensures a thorough assessment of quality and strengthens the reliability of our results. Additionally, we seek to explore the role of student agency in feedback practices and to contribute new insights into how feedback on oral reading can develop active, proficient readers. By focusing on student perspectives and agency, we aim to identify feedback practices that improve reading skills and also enhance students’ motivation and engagement.

To address the gap identified, our systematic review is guided by the following research questions:

1: What is the topography of feedback practices in terms of what the feedback contains and how it is provided?

A) How is feedback on students’ oral reading provided to them?

B) What is the content of teachers’ feedback on students’ oral reading?

2: How is student agency in various dimensions supported by the characteristics of feedback practices?

3: To what extent do different groups of students receive different forms of feedback?

Method

This systematic review was conducted following the methodology of a thematic systematic review as described by D. Gough et al. (2017), Thomas et al. (2017), and Thomas and Harden (2008). The work on the review consisted of five phases: framing the research question; searching for and screening relevant literature; quality assessment; synthesizing the evidence from the studies included; and presenting and discussing the findings. The systematic review was conducted by a team of four researchers who were all involved in all phases of the process. The authors’ backgrounds included extensive experience both as literacy teachers and as researchers, including previous work on review studies.

Searching and Screening

The wording of the research questions and the development of a relevant search string were discussed in several rounds. The first author conducted several pilot searches, whereupon the team analyzed the results and adjusted the search string accordingly. The final search was conducted in January 2023. The first author initially screened titles and abstracts. Approximately 10 percent of the studies required clarification on one or more aspects and were discussed with coauthors in this phase. Similarly, the first author made an initial full-text screening of articles and identified those for which it was unclear whether they should be included (n = 96).

To find relevant studies to answer our research questions, we conducted a systematic search in five databases: ERIC, Academic Search Ultimate, Web of Science, Scopus, and PsycINFO. These were searched for papers and dissertations published between 1995 and 2022 that addressed teachers’ feedback on students’ oral reading. There were two reasons for choosing 1995 as the starting point. First and foremost, this review builds on the results of Heubusch and Lloyd (1998), who ended their search in 1995, and our search begins where theirs concluded. Their review represents the most recent comprehensive summary available at the time and serves as a logical starting point for our investigation. Second, as described in the previous section, the conceptualization of feedback in research has changed over time, from a teacher-directed approach to a deeper interest in learners’ perspectives on feedback (van der Kleij et al., 2019), and it is likely that studies from 1995 onward may bring new perspectives. We initially designed a search string consisting of synonyms for “feedback” and “reading.” This, however, yielded over 30,000 hits. To restrict the search to a manageable number of studies for screening, we included additional keywords related to “K–5.” While this restriction might exclude some relevant articles, it also allowed us to focus specifically on the K–5 age group, emphasizing the importance of early reading instruction and the need to investigate feedback practices in this specific context. The final search string used in our study included synonyms for “feedback,” “oral reading,” and “K–5” (see Appendix A). The search conducted in January 2023 yielded 3,772 hits after the removal of duplicates.

The studies were exported to the EPPI-Reviewer software (Thomas et al., 2010) and were screened manually for title and abstract. To select relevant studies, the following inclusion criteria were applied: (a) the study includes qualitative or quantitative data on teachers’ practices regarding feedback on students’ oral reading of words or text; (b) the study is related to K–5 students (age 5–11 years); (c) the primary focus of the study is on initial reading instruction; (d) the study is a peer-reviewed article or a dissertation; (e) the study is written in English; and (f) the study was published between January 1, 1995, and December 31, 2022. Hence, the inclusion criteria included no limitations with regard to student characteristics. In this review, the term “struggling readers” refers to students who have demonstrated specific impairments in learning to read as well as students whose standardized test scores indicate reading skills below grade level. Terms such as “reading difficulties,” “at risk,” “low-achieving,” and “poor readers” are all pragmatically considered synonymous. Further, “L2 learners” refers to students who obtain their first reading instruction in a language different from their home language. Finally, to maintain a focus on reading instruction in school, we excluded studies that investigated feedback in after-school or summer courses, as these settings can differ significantly from regular school environments in terms of culture, learning opportunities, and teacher practices.
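The mechanically checkable parts of these criteria, namely (b), (d), (e), and (f), can be pictured as a simple filter. The following is a minimal sketch only: the `StudyRecord` fields and the `meets_mechanical_criteria` helper are hypothetical names introduced for illustration and are not part of the EPPI-Reviewer workflow used in the review. Criteria (a) and (c) require human judgment and are not modeled, and treating criterion (b) as "any overlap with grades K–5" is an assumption.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical record structure; the review screened studies manually.
@dataclass(frozen=True)
class StudyRecord:
    publication_type: str  # "peer_reviewed_article" or "dissertation"
    language: str
    published: date
    min_grade: int  # 0 = kindergarten
    max_grade: int

ALLOWED_TYPES = {"peer_reviewed_article", "dissertation"}
WINDOW_START = date(1995, 1, 1)
WINDOW_END = date(2022, 12, 31)

def meets_mechanical_criteria(study: StudyRecord) -> bool:
    """Check criteria (b), (d), (e), and (f); (a) and (c) need a human reader."""
    # (b) assumed to mean: the grade span overlaps kindergarten through grade 5
    overlaps_k5 = study.min_grade <= 5 and study.max_grade >= 0
    is_allowed_type = study.publication_type in ALLOWED_TYPES       # (d)
    is_english = study.language.lower() == "english"                # (e)
    in_window = WINDOW_START <= study.published <= WINDOW_END       # (f)
    return overlaps_k5 and is_allowed_type and is_english and in_window
```

A record passing this filter would still need manual screening against criteria (a) and (c) and the exclusion of after-school and summer settings.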

After screening for title and abstract, 256 studies met the inclusion criteria and were reviewed based on their full text. As a result, 38 studies were selected for inclusion. Finally, we conducted a backward and forward snowball search from the references in those studies. This yielded another 14 relevant studies, meaning that a total of 52 studies were included. (See Appendices B and C for details on the selection process.)
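The selection flow can be checked with simple arithmetic. The sketch below uses only the counts reported in the text; the variable names are illustrative, and the two exclusion counts are derived by subtraction rather than reported in the source.

```python
# Counts reported in the text
records_after_deduplication = 3772
full_text_reviewed = 256
included_from_database_search = 38
included_from_snowball_search = 14

# Derived by subtraction (not stated explicitly in the source)
excluded_at_title_abstract = records_after_deduplication - full_text_reviewed
excluded_at_full_text = full_text_reviewed - included_from_database_search

total_included = included_from_database_search + included_from_snowball_search

print(f"Excluded at title/abstract screening: {excluded_at_title_abstract}")
print(f"Excluded at full-text screening: {excluded_at_full_text}")
print(f"Total studies included: {total_included}")
```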

Coding, Data Extraction, and Analysis

Thematic analysis (and synthesis) is particularly suitable for analyzing multidisciplinary datasets deriving from both quantitative and qualitative paradigms (Thomas et al., 2017, p. 190). The first stage of a thematic synthesis involves identifying themes across studies. In the second stage, the initial themes are organized into a framework relating them to one another. In the third stage, the aim is to answer the research questions based on a synthesis of the themes that emerged.

The studies included were coded in several stages to extract relevant descriptions and identify key themes. The following information was coded: (a) study characteristics (author, title, publication type, year, country), (b) study-sample details (participant characteristics, grade, sample size, unit of analysis), (c) research design (method, research question(s), design), (d) data type, (e) feedback characteristics (feedback construct, content), (f) reading-instruction characteristics (context, reading activity, reading construct in focus), (g) main findings, (h) recommendations for practice and further research, and (i) study-quality indicators (consistent and sound use of theory, analysis, discussion of results, research methods presented and outlined, and evidence for validity/reliability presented).

In the first round of coding, we identified and coded descriptions of activities, specifically what teachers and students said and did during feedback and reading activities. This ensured feedback practices were evaluated comparably across all studies, regardless of size or unit of analysis. As a result of the first round of coding, two main themes were identified in relation to RQ1: (i) mode of feedback: explicit or implicit; and (ii) content of feedback: decoding or meaning characteristics. This initial coding for RQ1 was informed by categories (e), (f), (g), and (h). Additionally, coding of sample characteristics (category b) was conducted to address RQ3, examining the extent to which different groups of students received different forms of feedback.

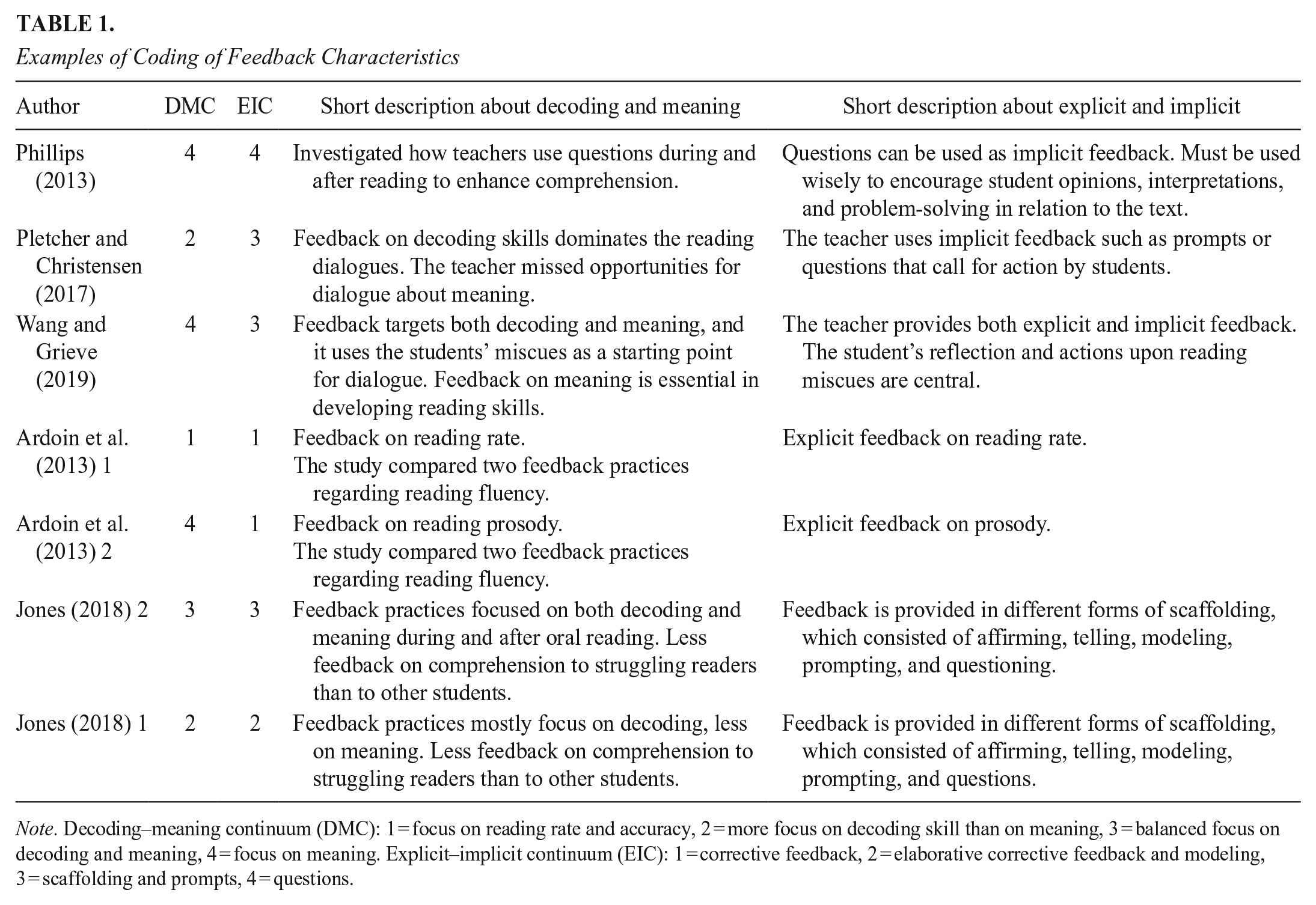

The analytic process involved reading and rereading each study, while continuously revisiting the descriptive and analytic coding, and developing themes that emerged after mapping and comparing the studies. During this process, it became clear that our main concepts, as reflected by our themes, were continuous rather than dichotomous. This yielded two descriptive continua, each consisting of four overarching characteristics identified as central to understanding feedback practice. Table 1 provides examples of coding within these themes. The continua describe the characteristics of feedback with respect to, first, “how it is said” (explicit–implicit), and second, “what is addressed” (decoding–meaning). The explicit–implicit continuum ranges from detailed, specific feedback at one end to hints or questions at the other. The second continuum, labeled “decoding–meaning,” reflects the extent to which feedback targets decoding skills or emphasizes strategies for understanding new words or searching for meaning in the text. In a second coding round, data describing these themes were extracted from the procedure sections of the studies. Together with the studies’ theoretical frameworks, these data allowed us to position each study on the continua developed from the first-round themes, further elaborating on RQ1.

Examples of Coding of Feedback Characteristics

Note. Decoding–meaning continuum (DMC): 1 = focus on reading rate and accuracy, 2 = more focus on decoding skill than on meaning, 3 = balanced focus on decoding and meaning, 4 = focus on meaning. Explicit–implicit continuum (EIC): 1 = corrective feedback, 2 = elaborative corrective feedback and modeling, 3 = scaffolding and prompts, 4 = questions.

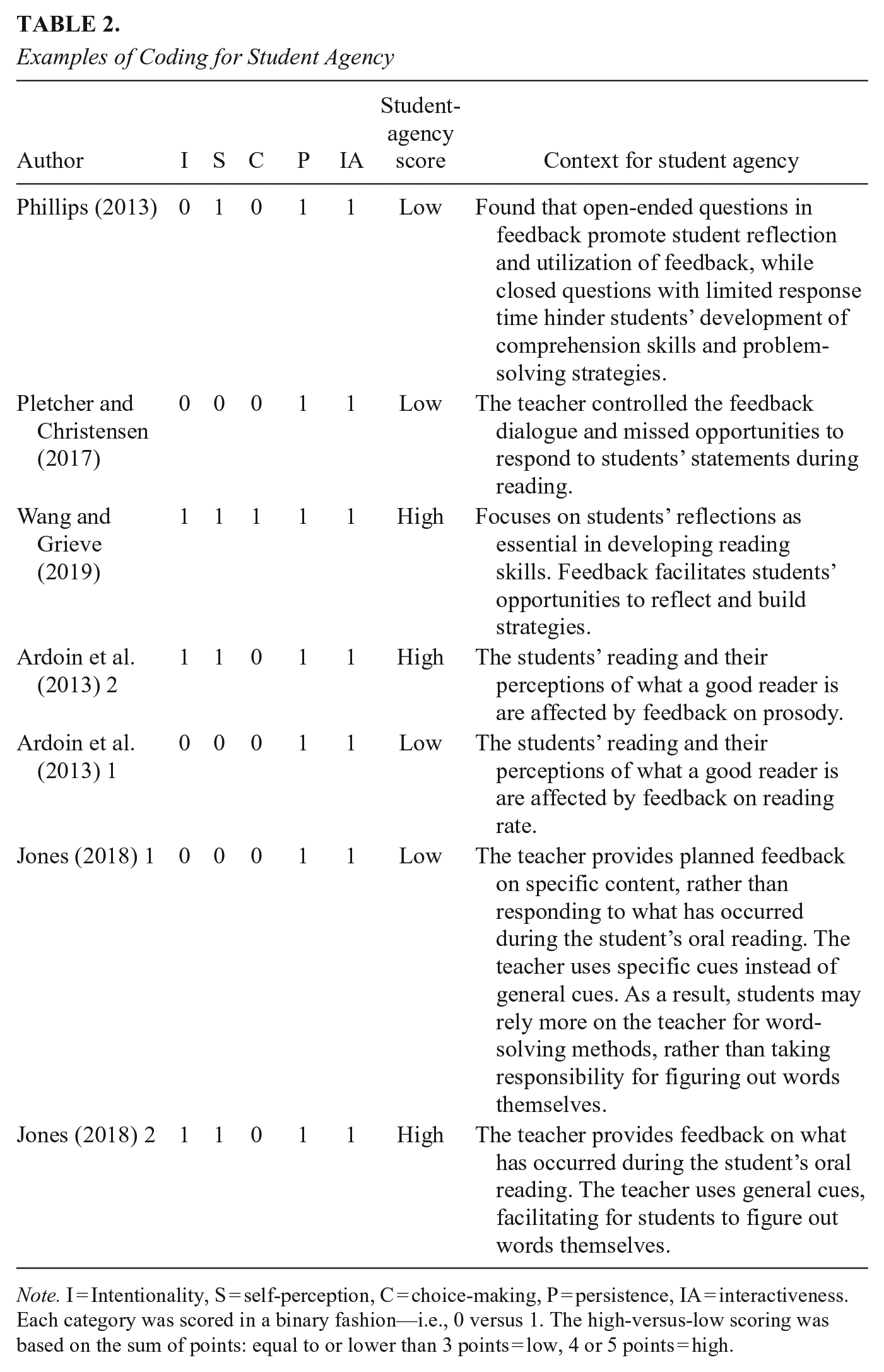

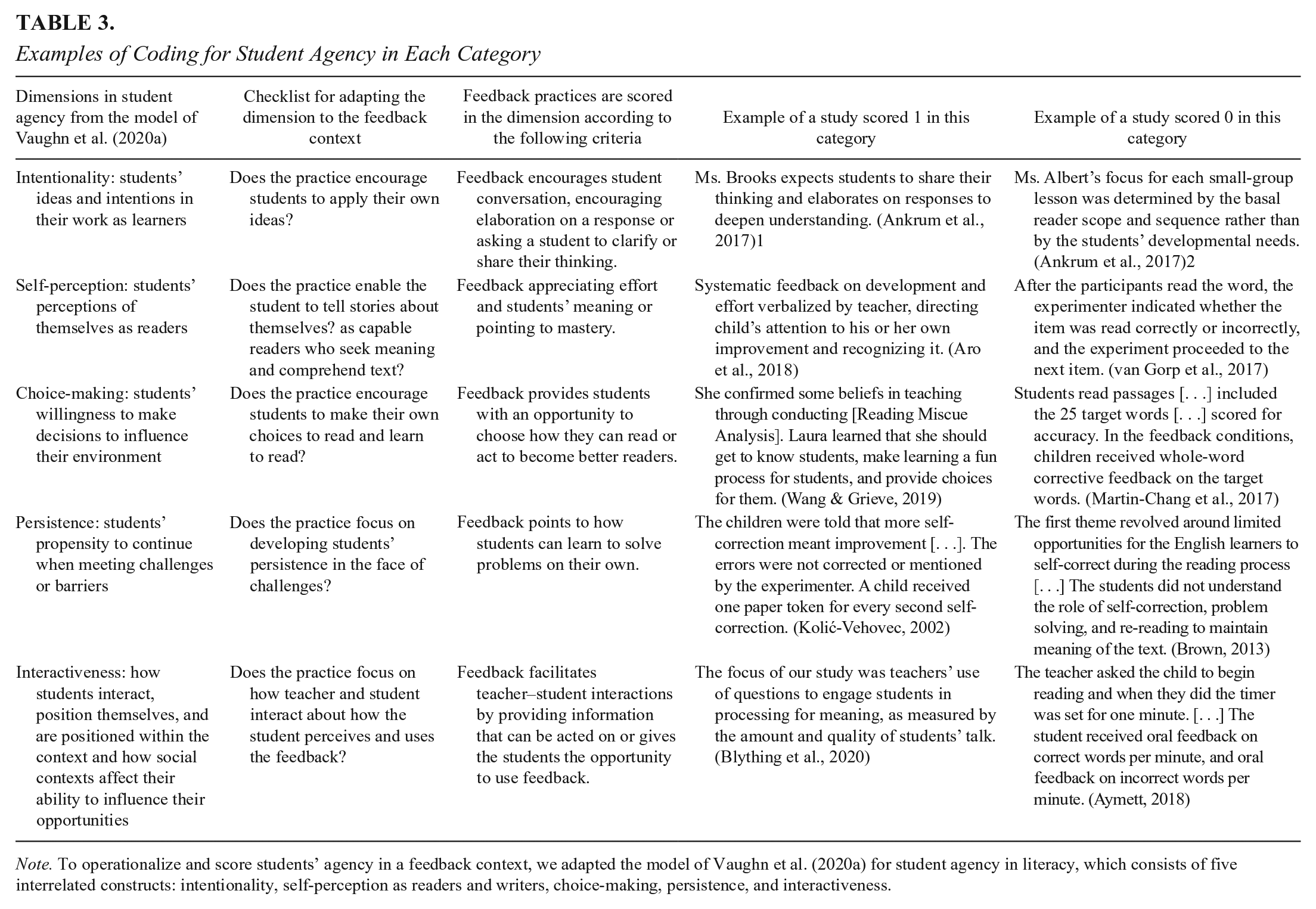
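The two four-point continua described in the note to the table of feedback characteristics can be represented as simple lookup tables. This is a minimal sketch for illustration: the labels are taken from the note, while the `describe_practice` helper and its output format are hypothetical and were not part of the review's coding procedure.

```python
# Code labels copied from the table note
DMC_LABELS = {
    1: "focus on reading rate and accuracy",
    2: "more focus on decoding skill than on meaning",
    3: "balanced focus on decoding and meaning",
    4: "focus on meaning",
}
EIC_LABELS = {
    1: "corrective feedback",
    2: "elaborative corrective feedback and modeling",
    3: "scaffolding and prompts",
    4: "questions",
}

def describe_practice(dmc: int, eic: int) -> str:
    """Return a readable description of a feedback practice's two codes."""
    if dmc not in DMC_LABELS or eic not in EIC_LABELS:
        raise ValueError("DMC and EIC codes must be integers from 1 to 4")
    return f"DMC {dmc} ({DMC_LABELS[dmc]}); EIC {eic} ({EIC_LABELS[eic]})"

# Example: a practice coded as meaning-focused and question-based
print(describe_practice(4, 4))
```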

In a third round of coding, we specifically examined the teachers’ and students’ actions within the feedback context, focusing on the extent to which student agency was supported. This coding was related to RQ2 and used categories (e), (f), and (g). Given our goal of investigating students’ roles in feedback practices, based on recent feedback theories emphasizing student agency, the dimensions of student agency were predefined in our coding framework. These dimensions, proposed by Vaughn et al. (2020a, 2020b), were adapted to the feedback context, resulting in predefined codes for assessing student agency in feedback practices (presented in Tables 2 and 3). These codes allowed us to analyze the descriptions extracted of feedback context, mode, and content to determine the level of student agency reflected in the feedback practices in each study. Three authors independently coded all the articles and then discussed the coding together to ensure consistency. In addition to using the categories from the first round of coding, we revisited the articles and read them holistically to capture the full context.

Examples of Coding for Student Agency

Note. I = intentionality, S = self-perception, C = choice-making, P = persistence, IA = interactiveness. Each category was scored in a binary fashion (0 versus 1). The high-versus-low scoring was based on the sum of points: 3 points or fewer = low, 4 or 5 points = high.

Examples of Coding for Student Agency in Each Category

Note. To operationalize and score students’ agency in a feedback context, we adapted the model of Vaughn et al. (2020a) for student agency in literacy, which consists of five interrelated constructs: intentionality, self-perception as readers and writers, choice-making, persistence, and interactiveness.

As reflected in the quality assessments, the procedures in the studies were described in detail, allowing us to form a reliable picture of the feedback interactions during feedback sessions and to code the agency score for each study. As a result of the third round of coding, the studies were assigned scores indicating the strengths and weaknesses of student-agency conditions in the respective practices (see online Tables S2 and S3 for coding details).
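The scoring rule stated in the note to the student-agency coding table (five binary dimensions, summed, with 3 points or fewer counted as low and 4 or 5 as high) can be sketched as follows. The function and dictionary keys are hypothetical names for illustration; the review's coding was carried out by human coders, not by code.

```python
# The five dimensions adapted from Vaughn et al. (2020a)
AGENCY_DIMENSIONS = (
    "intentionality",
    "self_perception",
    "choice_making",
    "persistence",
    "interactiveness",
)

def agency_level(scores: dict[str, int]) -> tuple[int, str]:
    """Sum five binary dimension scores and classify the total as low or high."""
    if set(scores) != set(AGENCY_DIMENSIONS):
        raise ValueError("scores must contain exactly the five agency dimensions")
    if any(value not in (0, 1) for value in scores.values()):
        raise ValueError("each dimension is scored 0 or 1")
    total = sum(scores.values())
    # Threshold from the table note: 3 or fewer = low, 4 or 5 = high
    level = "high" if total >= 4 else "low"
    return total, level

# Example: a practice supporting every dimension except choice-making
example = dict.fromkeys(AGENCY_DIMENSIONS, 1)
example["choice_making"] = 0
print(agency_level(example))
```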

To ensure appropriate extraction of relevant information and to identify key themes across the 52 papers, all studies included were coded in parallel, and inter-rater agreement (IRR) was calculated for each phase of the data extraction. In the first round, coding was done by the first and second authors on aspects such as study characteristics, sample details, research design, data type, feedback characteristics, and reading-instruction characteristics, resulting in an IRR of 90 percent. In the second round of coding, which focused on the 65 feedback practices identified, the first and second authors coded for the continua, and in the third round, three authors independently coded the articles for student-agency scores. After independent coding, 23 percent of the feedback practices needed discussion regarding codes on the continua, and 7 percent regarding student-agency scores. The three authors discussed the results together to ensure consistency and accuracy, resolving all discrepancies through discussion. This thorough approach in all three rounds of coding ensured that our analyses were robust and reliable, providing a solid foundation for our findings.
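The text does not state how the agreement figure was computed. One common and simple option is percent agreement, that is, the share of items on which two coders assigned the same code. The sketch below illustrates that calculation under this assumption; the function name and the example codes are invented for illustration and do not reproduce the review's data.

```python
from typing import Sequence

def percent_agreement(coder_a: Sequence[object], coder_b: Sequence[object]) -> float:
    """Percentage of items on which two coders assigned the same code."""
    if len(coder_a) != len(coder_b):
        raise ValueError("both coders must rate the same number of items")
    if not coder_a:
        raise ValueError("at least one coded item is required")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)

# Invented example: two coders agree on 3 of 4 items
first_coder = ["explicit", "implicit", "explicit", "implicit"]
second_coder = ["explicit", "implicit", "explicit", "explicit"]
print(percent_agreement(first_coder, second_coder))
```

Percent agreement does not correct for chance agreement; chance-corrected statistics such as Cohen's kappa are an alternative when that matters.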

Assessment of the Quality of the Studies

This thematic review takes a configurative approach and aims to explore emergent patterns and to develop concepts, as described by Thomas et al. (2017). This type of systematic review combines the results of “qualitative,” “quantitative,” and “mixed-methods” studies investigating the same phenomena and analyzes them to address the research questions (Thomas & Harden, 2008). The inclusion of both quantitative and qualitative studies was essential for a comprehensive understanding of feedback practices. However, this required the use of convergent approaches to synthesize data from the various studies included. To assess the quality of the studies, we adapted a Quality Assessment of Method scale used in various forms by Risko et al. (2008), Miller et al. (2015), C. E. Scott et al. (2018), and Sirriyeh et al. (2012). We sought to adapt our scale so that it would not favor one methodological approach over the other, allowing us to evaluate the studies uniformly since we consider both approaches valuable for addressing our research questions. Specifically, we assessed each study in four categories (theory, method, findings, and discussion) to determine whether it had been designed, conducted, and reported in such a way that it was reliable and made a meaningful contribution to the answers to our research questions.

The quality assessment ensured that our coding of feedback characteristics (RQ1) was based on robust and credible data from the studies’ method and results sections. This helps in accurately characterizing the continuum from explicit to implicit feedback and from decoding to meaning-focused feedback. Similarly, our analysis of how feedback supports student agency (RQ2), evaluating dimensions such as self-perception, intentionality, choice-making, persistence, and interactiveness, is grounded in studies with solid evidence and clear methodologies (see Tables S4 and S5).

A predetermined scale was used to categorize each study as of low, moderate, or high quality. Of all studies, 90 percent (n = 47) were deemed to be of high quality and 10 percent (n = 5) of moderate quality. No study was found to be of low quality. The five studies found to be of moderate quality were specifically assessed in a second round, targeting the specific weaknesses identified. Those studies represented different research designs and publication years, and some information was missing with regard to reporting on reliability/validity or links to theory. As a result of the second assessment round, the results of the studies could be reliably used to interpret the findings and draw conclusions in the systematic review.

Results

The literature search for the present systematic review yielded 52 studies (15 dissertations and 37 peer-reviewed journal articles) published between 1995 and 2022. The majority of the studies were conducted in the United States (n = 44); the others were from the United Kingdom and Canada (each n = 2) and from Finland, the Netherlands, Turkey, and Croatia (each n = 1). The studies were evenly distributed by year of publication (see Figure S1). Regarding participants’ grade level, 18 of the studies included students from more than one grade (in the K–5 range). Overall, the grades covered were kindergarten (n = 6), grade 1 (n = 22), grade 2 (n = 18), grade 3 (n = 20), grade 4 (n = 17), and grade 5 (n = 7). The students included in the studies were typical readers (n = 17), struggling readers (n = 30), or L2 learners (n = 3); two of the studies reported findings for multiple groups of students. Taken together, studies targeting struggling students are clearly overrepresented.

The 32 study samples coded as “struggling readers” are students enrolled in regular schools and classes, receiving intensive instruction or additional support. They were identified through teachers’ subjective assessments (n = 5), school assessment systems (n = 14), or both (n = 13). Four studies included only students within a special-education setting, while four others combined students having diagnosed learning disabilities with struggling readers without formal diagnoses. In total, most studies from this category (n = 24) and most participants coded as struggling readers are at-risk students in regular classes.

Regarding sample size, 11 studies covered 1–4 students, 9 had a sample size of 5–9 students, 16 had a sample size of 10–49 students, 11 had a sample of over 50 students, and 5 did not report the student-sample size, only the teacher-sample size. Small sample sizes were common in both qualitative (n = 8) and quantitative (n = 12) studies. The teacher-sample size was reported in 32 studies; of those, some had a sample of 1–2 teachers (n = 11) while others covered 3–4 teachers (n = 9), 5–9 teachers (n = 4), or 10–19 teachers (n = 6). For the remaining 20 studies, the teacher sample was not relevant, as the designs focused solely on the feedback practice being investigated in a student sample.

Of the studies included, 28 used quantitative methods, 20 used qualitative methods, and 4 were mixed-methods studies. The studies differed in terms of research design and data sources. For example, some involved the collection of observational data on teachers’ practices, students’ actions, and students’ responses during and after oral reading (n = 28), some had an experimental design involving the examination of the effects of different feedback practices in interventions of varying duration (n = 24), and some included teachers’ and students’ voices obtained from interviews or surveys of teachers (n = 16) and students (n = 9).

Research Question 1: What Is the Topography of Feedback Practices in Oral Reading in Terms of What the Feedback Contains and How It Is Provided?

In the 52 studies, we identified a total of 65 practices regarding teachers’ feedback on students’ oral reading. The findings show that teachers’ practices for feedback on oral reading vary in terms of the extent to which the feedback is explicit or implicit and in terms of what parts of the reading process are targeted by the feedback. To provide an overview of the feedback practices, we use the metaphor of “topography” to reflect the multidimensional nature of the feedback “landscape.” The research questions regarding feedback mode and feedback content each reflect one dimension of this landscape.

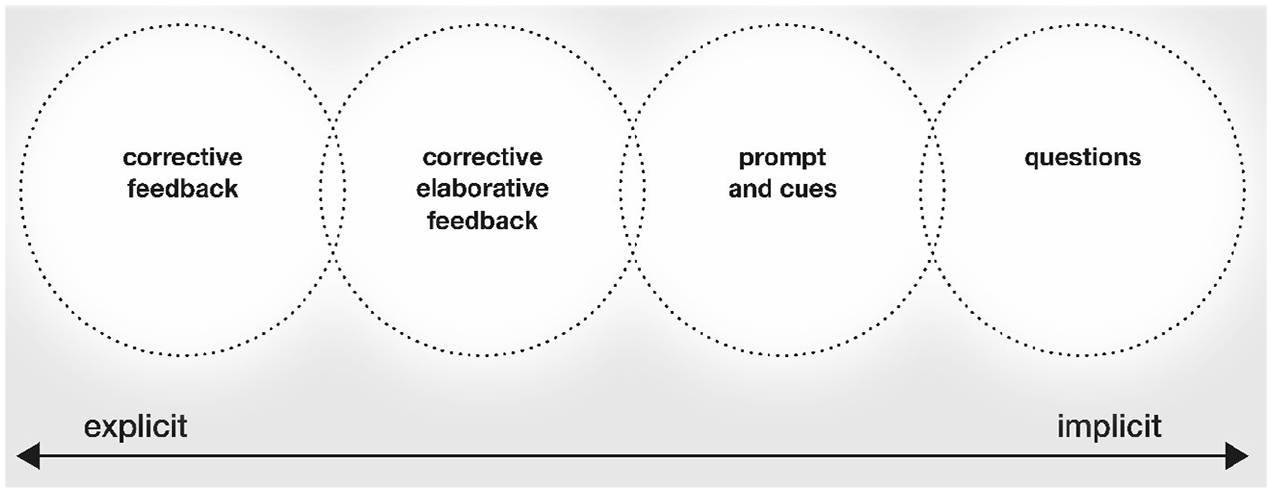

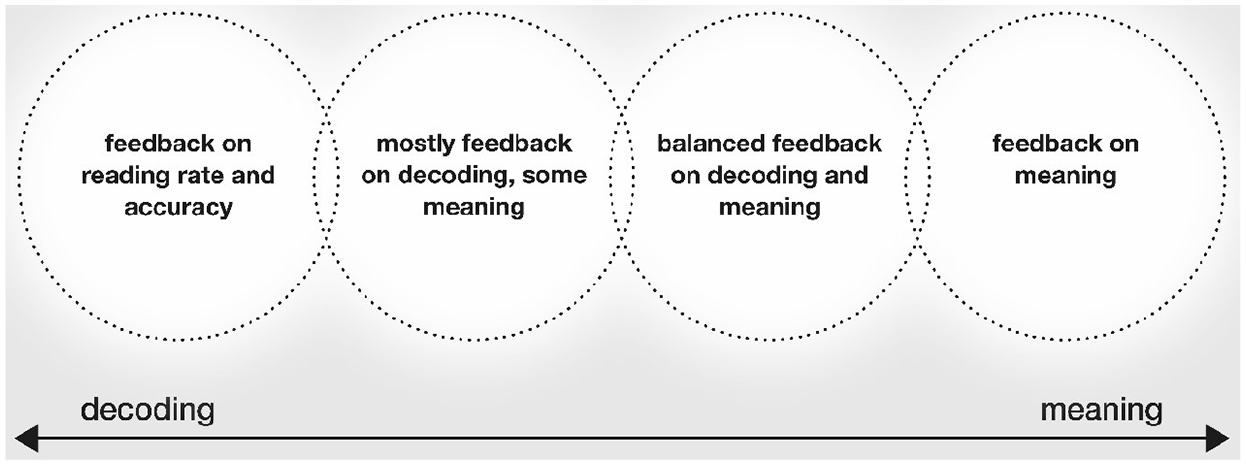

To answer the first part of RQ1, we differentiated between explicit and implicit feedback along a continuum. We identified four main overarching characteristics of teachers’ feedback practices, ranging from explicit corrective feedback on one extreme to implicit feedback provided through questions on the other (see Figure 1). To answer the second part of RQ1, we used a continuum of content, ranging from decoding to meaning. Since decoding and comprehension are both considered essential components of reading (Afflerbach, 2018; P. B. Gough & Tunmer, 1986; Tønnessen & Uppstad, 2015), we focused on these aspects. Based on the data, we identified four overarching characteristics of practices across studies, ranging from a strong emphasis on decoding at one extreme to a strong focus on meaning at the other (see Figure 2).

Explicit–implicit continuum for teachers’ feedback practices.

Decoding–meaning continuum for teachers’ feedback practices.

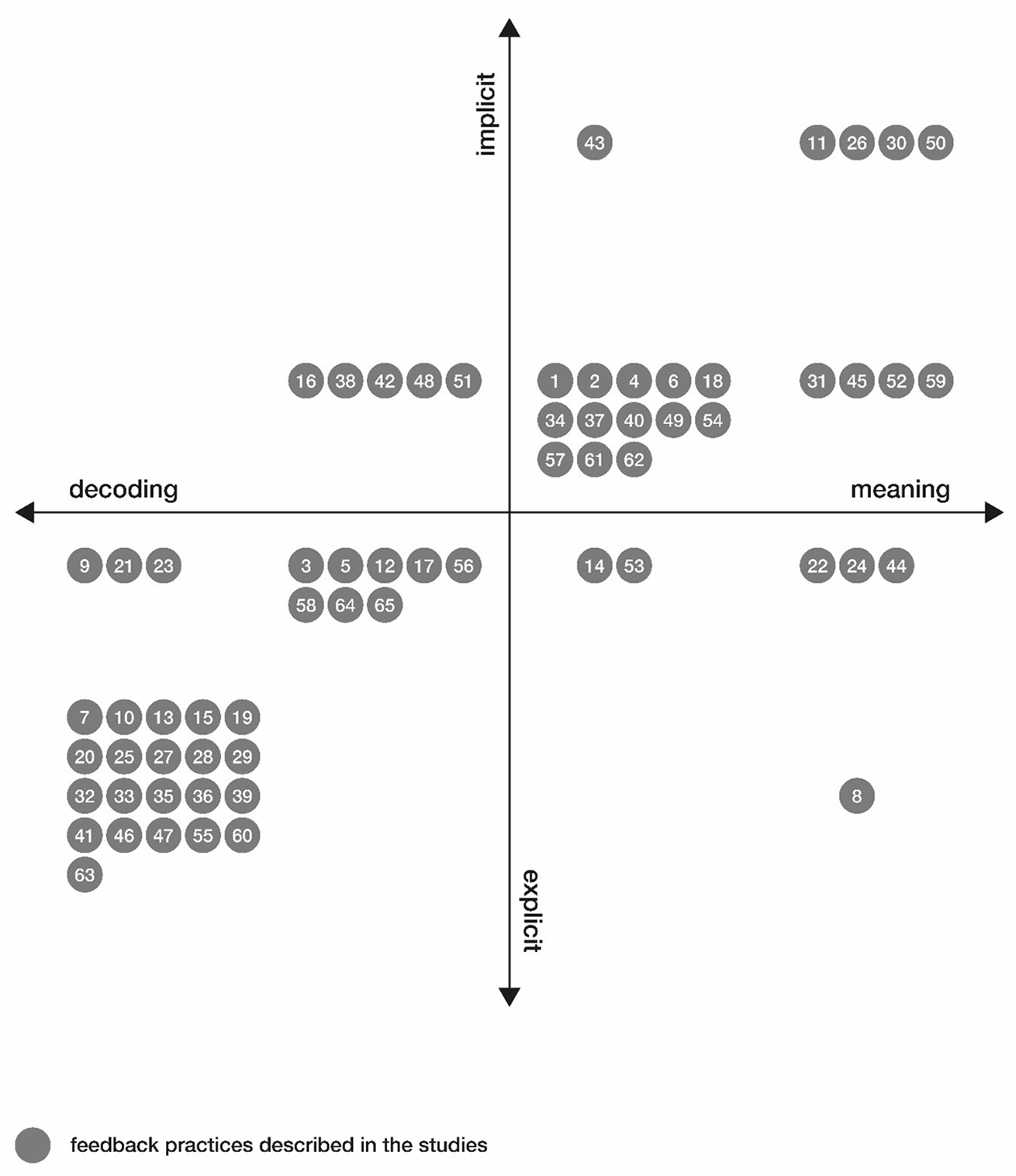

When we combine the two dimensions presented previously (mode and content) in a dual-continuum model, a first topographic picture can be sketched based on the main findings regarding the characteristics of the 65 feedback practices identified in the review (see Figure 3). This model reveals four main categories of feedback practices distributed across the quadrants. Explicit feedback on decoding skills (32 practices) and implicit feedback on meaning (22 practices) are more prevalent, while implicit feedback on decoding (5 practices) and explicit feedback on meaning (6 practices) are less common. This illustrates the range of targets of teachers’ feedback from explicit decoding skills to implicit comprehension strategies. Notably, of the 24 intervention studies, 22 focused on explicit feedback practices, while the 28 observational studies were evenly distributed across the topography. This shows that some quadrants have considerably more practices while others have fewer, with almost none at the extreme ends. In the next section, we present the characteristics of the practices on the continua to provide a detailed understanding of the feedback practices identified.
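The quadrant counts reported above can be checked against the totals for each continuum with a few lines of arithmetic. The following is an illustrative sketch only: the dictionary layout and the helper name `marginal` are assumptions made for this example, while the four counts are taken from the text.

```python
# Practices per quadrant of the dual-continuum model, as reported in the text.
quadrant_counts = {
    ("explicit", "decoding"): 32,
    ("implicit", "meaning"): 22,
    ("implicit", "decoding"): 5,
    ("explicit", "meaning"): 6,
}

total = sum(quadrant_counts.values())


def marginal(axis_index, label):
    """Sum the quadrants that share one label on one axis (0 = mode, 1 = content)."""
    return sum(n for key, n in quadrant_counts.items() if key[axis_index] == label)


explicit_total = marginal(0, "explicit")
implicit_total = marginal(0, "implicit")
decoding_total = marginal(1, "decoding")
meaning_total = marginal(1, "meaning")

# Share of practices in the two dominant quadrants.
dominant = quadrant_counts[("explicit", "decoding")] + quadrant_counts[("implicit", "meaning")]
print(f"Total practices: {total}")
print(f"Explicit vs. implicit: {explicit_total} vs. {implicit_total}")
print(f"Decoding vs. meaning: {decoding_total} vs. {meaning_total}")
print(f"Share in dominant quadrants: {dominant / total:.0%}")
```

The marginal totals derived this way (38 explicit, 27 implicit, 37 decoding, 28 meaning) agree with the per-position counts reported for each continuum, which sum to 65 practices in both cases.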

Relationship between feedback mode (RQ1a) and feedback content (RQ1b).

How Is Feedback on Students’ Oral Reading Provided? (RQ1a)

The first overarching practice, corrective feedback, represents settings where the teacher gives students specific information about their reading performance. Of the 65 feedback practices identified, 22 were associated with this extreme. Examples include feedback on reading rate (e.g., Aro et al., 2018; Aymett, 2018; Chafouleas et al., 2004; Cottingham, 1997; Eckert et al., 2002; Henze & Williams, 2013; Watson & Boon, 2009), accuracy (e.g., Daly et al., 2016; Martin-Chang et al., 2017; Mason et al., 2016; van Gorp et al., 2017; Yang, 2011), or prosody (e.g., Ardoin et al., 2013). Typically, the studies examined one or two specific feedback actions and analyzed student outcomes in terms of reading performance (e.g., Carroll, 2008; Cottingham, 1997; Eckert et al., 2000, 2002, 2006; Henze & Williams, 2013). Further, three practices involved explicit feedback in a visual format, such as graphs or symbols indicating students’ reading rate (Aymett, 2018; Conte & Hintze, 2000; Mason et al., 2016). Finally, four practices addressed rewards as reinforcement in feedback (Carroll, 2008; Chafouleas et al., 2004; Eckert et al., 2000; Henze & Williams, 2013).

Moving to the right on the continuum in Figure 1, we find 16 practices reflecting the overarching practice termed corrective elaborative feedback. This type of feedback is characterized by the teacher providing students with explicit corrective information about their reading and guidance on how to develop their reading skills or solve problems. For instance, teachers may model sound–letter combinations or chunking, or explain how to sound out words (e.g., Ankrum et al., 2014; Brown, 2013; Cole, 2006; Crowe, 2003, 2005). This feedback often involves stopping students during reading to correct letter–sound combinations and model how to read a sentence (e.g., Arcidiacono, 2020; Aro et al., 2018; Cerbone, 2022; Cole, 2006). Another typical characteristic of corrective elaborative feedback is the teacher modeling problem-solving strategies in reading (Brown, 2013; Mariage, 1995; Rodgers, 2004; R. C. Scott, 2021; Silliman et al., 2000; Wymer, 2016).

On the implicit side of the continuum, we identified 22 practices characterized by the teacher’s use of prompts and cues to encourage students’ reading (e.g., Arcidiacono, 2020; Cerbone, 2022; Cole, 2006; Haner, 2012; Jones, 2018; Perrin, 2008; Pletcher & Christensen, 2017; R. C. Scott, 2021; Worthen, 2021). For instance, Ankrum et al. (2014) and Malicky et al. (1997) describe how the teacher may use prompts to remind students of decoding strategies by asking a question (such as “What is the middle vowel?”), prompting students to think independently instead of giving them the correct sound. If that question does not help the student, a prompt may be provided, such as “That looks like coat, but look at the middle vowel. Could that be coat?” The prompt to seek meaning in the reading process may help the student read the words correctly. Prompts are also used to help students identify the meaning of a text. Worthen (2021) observed how teachers prompted students by asking further questions to help them engage more deeply with their reading when their responses showed little understanding of the text. Teachers use implicit feedback to encourage students to use comprehension strategies (e.g., Lee & Schmitt, 2014; Mariage, 1995) and to develop metacognitive strategies, such as self-correction and self-awareness (e.g., Lee & Schmitt, 2014; Malicky et al., 1997), at various stages of the reading process. In studies by Poock (2017), Goodman et al. (2016), and Watson and Boon (2009), investigations of reading miscue analysis show that feedback prompts students to analyze incorrect words or misunderstandings and learn from their mistakes.

Finally, at the implicit extreme of the continuum, we located five practices that investigated teachers’ use of questions as feedback. Frey and Fisher (2010) described a pattern of questions, ranging from closed to open-ended, that support students’ decoding and comprehension during oral reading. Phillips (2013) found that effective questioning techniques can lead to reflection and activity, which can improve students’ reading comprehension. Similarly, Blything et al. (2020) showed that high-level questions yielded longer, more complex student answers.

It should be kept in mind that the explicit–implicit continuum discussed previously is an abstraction and a simplification. In real-world settings, teachers’ feedback practices will typically involve both implicit and explicit feedback. Indeed, some of the studies describe the dynamic relationship between implicit and explicit feedback. For example, teachers first provide implicit feedback but switch to explicit feedback when students encounter problems (e.g., Ankrum et al., 2017; Frey & Fisher, 2010; Rodgers, 2004; Rodgers et al., 2016; Worthen, 2021). Conversely, other studies suggest starting with explicit modeling or explanations and then shifting to implicit support as students gain experience (e.g., Cerbone, 2022; Kolić-Vehovec, 2002; Rodgers, 2004; R. C. Scott, 2021). These perspectives should be seen as complementary and as representative of the responsiveness inherent in teachers’ practices, suggesting that moving between explicit and implicit feedback is typical and reflects adaptation to students’ needs.

In summary, addressing the first part of RQ1, our findings identified four overarching strategies used by teachers for feedback on students’ oral reading: (1) corrective feedback, providing specific information about reading performance; (2) corrective elaborative feedback, offering corrections and guidance for skill improvement; (3) prompts and cues to encourage strategic reading and self-reflection; and (4) questions as feedback to engage students and assess comprehension.

What Is the Content of Teachers’ Feedback on Students’ Oral Reading? (RQ1b)

As noted, to answer the second part of RQ1, we used a decoding–meaning continuum emphasizing that both decoding and comprehension are core elements of reading (P. B. Gough & Tunmer, 1986). Indeed, seeing decoding and comprehension as points on a continuum rather than as categories with strict boundaries is a better fit with recent understandings of how feedback practices target all sides of reading practice, with a varying emphasis on specific aspects. Fluency, described as the bridge between decoding and comprehension (LaBerge & Samuels, 1974), exemplifies this interdependence. Our choice of the term “meaning” over the closely related notion of “comprehension” reflects our intention to include both interpretation, which is commonly seen as a process, and comprehension, which is commonly seen as a product. Many studies targeting fluency were found to emphasize feedback on decoding aspects and were therefore coded on that side of the continuum. Other studies, such as that by Ardoin et al. (2013), focus on comprehension aspects of fluency and are thus placed on the meaning side of the continuum. In the following section, we present the characteristics of the four main overarching practices identified, ranging from a strong emphasis on decoding to a strong focus on meaning.

At the left-hand side of the continuum, 24 feedback practices aimed solely at decoding skills were identified. These studies involved experiments or interventions exploring the effects of different forms of performance feedback on students’ reading development. Some focused on how feedback on oral-reading rate or accuracy can develop reading skills or reading motivation or strengthen self-efficacy. In 13 practices, rewards were added (e.g., Chafouleas et al., 2004; Eckert et al., 2000, 2002; Henze & Williams, 2013; Kolić-Vehovec, 2002) or performance feedback was combined with visual representations showing reading progress and goals (e.g., Conte & Hintze, 2000; Cottingham, 1997; Eckert et al., 2000; Mason et al., 2016; Schoen & Ogden, 1995). Only two studies reported on students’ perceptions of the feedback. Aymett (2018) found that students felt the numbers they were given did not help them improve, as they did not know whether a number was “good” or “bad.” However, feedback on effort combined with decoding skills was associated with enhanced self-efficacy (Aro et al., 2018).

In the overarching practice of decoding-focused feedback, three prevalent content themes were identified: reading rate, reading accuracy, and students’ strategies for decoding words. Twelve practices explored various combinations of feedback on reading rate and accuracy given immediately after reading. Feedback included total time used (Ardoin et al., 2013; Aro et al., 2018; Eckert et al., 2000; Guzel-Ozmen, 2011), the number of words read per minute (Eckert et al., 2002), the number of words read correctly/incorrectly per minute (Aymett, 2018; Carroll, 2008; Chafouleas et al., 2004; Conte & Hintze, 2000; Eckert et al., 2002; Henze & Williams, 2013), or the number of words read incorrectly per minute (Ardoin et al., 2013; Aro et al., 2018; Cottingham, 1997; Eckert et al., 2000, 2002; Guzel-Ozmen, 2011). Twelve studies focused on accuracy alone. Eckert et al. (2006) and Little (2015) provided counts of correct and incorrect words. Other studies offered elaborative feedback, such as stating the correct word or explicitly correcting and modeling word reading (Crowe, 2003, 2005; Martin-Chang et al., 2017; van Gorp et al., 2017; Watson & Boon, 2009; Yang, 2011).

Moving toward the second position on the continuum, we identified 13 practices where students received feedback primarily on decoding strategies, with some feedback on how they worked to find meaning in the text. Some studies describe how teachers either plan to focus on decoding beforehand (e.g., Ankrum et al., 2008; Silliman et al., 2000) or respond to what they notice during the student’s reading (e.g., Arcidiacono, 2020; Brown, 2013; Cole, 2006; Jones, 2018; Mertzman, 2008; Pletcher & Christensen, 2017; Rodgers, 2004; R. C. Scott, 2021; Wymer, 2016). Teachers often unintentionally focus on decoding. R. C. Scott (2021) observed that teachers provided mainly decoding feedback when students struggled with words, even if they believed that they were addressing meaning. Additionally, teachers tend to overlook opportunities for broader text feedback, focusing instead on details such as words and sounds (Cole, 2006). Even experienced teachers prioritize accuracy over comprehension and vocabulary (Pletcher & Christensen, 2017).

In the moderate position on the meaning side of the continuum, 16 practices involving feedback on both decoding and comprehension were identified. Teachers noticed both students’ strengths and their difficulties, but the feedback provided focused on areas where students needed support to progress, with less time spent on aspects mastered (Ankrum et al., 2008, 2014, 2017; Arcidiacono, 2020; Cole, 2006; Lee & Schmitt, 2014; Perrin, 2008; Rodgers, 2004; R. C. Scott, 2021; Worthen, 2021; Wymer, 2016). The feedback in dialogues after reading targeted various reading skills such as decoding (letters/sounds) (e.g., Ankrum et al., 2008, 2017; R. C. Scott, 2021), meaning-making (information retrieval, recognizing structure, cross-checking, re-reading) (e.g., Rodgers, 2004), and metacognitive aspects such as self-correcting and asking for help (Lee & Schmitt, 2014; Malicky et al., 1997). Haner (2012) describes how the teacher may make comments or ask questions to encourage deep thinking about the text during reading conferences. Similar dialogues have been investigated, showing that teachers alternate their feedback between decoding and meaning (Cole, 2006; Lee & Schmitt, 2014; Malicky et al., 1997; Rodgers, 2004; R. C. Scott, 2021; Worthen, 2021; Wymer, 2016). Cole (2006) refers to this alternation as taking place between “micro-cues” (details and decoding) and “macro-cues” (larger parts and meaning), which helps learners use information from both the whole text and details to find meaning.

Finally, at the extreme right of the continuum, we identified 12 feedback practices focused on meaning. These feedback practices fall into three main themes: enhancing reading fluency, developing strategies for finding meaning, and evaluating comprehension.

Feedback targeting fluency may focus on the meaning of the text, such as feedback targeting whether the prosody matches the text’s meaning. Ardoin et al. (2013) investigated how teachers’ feedback encouraged students to pause, pay attention to punctuation, and match tone to text content. They found that feedback on prosody and expression helps students understand the text. Similarly, other studies found that feedback on using context to read words correctly aids comprehension by explaining misread words (Goodman et al., 2016; Poock, 2017; Wang & Grieve, 2019).

Feedback on reading strategies, often followed by discussions about the student’s understanding, was described in several studies (Cole, 2006; Crowe, 2003, 2005; Goodman et al., 2016; Mariage, 1995; Wang & Grieve, 2019). This feedback may address the use of context to understand words and sentences (Goodman et al., 2016), concern how to make connections in the text (Phillips, 2013), or encourage students to extend their thinking (Mariage, 1995). Meaning-focused feedback affects how students work to find meaning in texts, with retellings being longer when the feedback focused on meaning rather than accuracy (Ardoin et al., 2013). Additionally, teachers’ feedback on finding meaning in the text was found to engage students more in text discussions (Frey & Fisher, 2010; Lee & Schmitt, 2014).

Feedback addressing meaning also included feedback practices evaluating comprehension, based either on what the student noticed in the text during reading or on criteria predetermined by teachers (Frey & Fisher, 2010; Phillips, 2013). In the first case, the teacher builds on the student’s response; in the second, the student receives feedback on correct and incorrect answers. Teachers frequently use questions to evaluate comprehension, promoting open-ended discussions or checking for understanding (Cole, 2006; Frey & Fisher, 2010). Higher-order questions lead to more complex answers about meaning than simpler questions (Blything et al., 2020). However, higher-order questions do not always improve comprehension for beginning readers unless they have received modeling on how to think independently about the text (Dickey, 2018).

In summary, addressing the second part of RQ1, our findings identified four main types of content in teachers’ feedback on students’ oral reading: (1) feedback focused solely on decoding skills; (2) feedback primarily on decoding strategies with some attention to finding meaning in the text; (3) feedback addressing both decoding and comprehension; and (4) feedback focused on understanding and interpreting meaning. These feedback practices illustrate the range from decoding to comprehension in the varied approaches used by teachers.

Research Question 2: How Is Student Agency Supported by Feedback Practices?

Our second research question pertains to patterns of student agency in feedback practices. Practices were scored on the dimensions adapted from the framework of Vaughn et al. (2020a), with a total score of 4–5 indicating high student agency (26 practices) and a score of 0–3 indicating low student agency (39 practices) (see online Table S2).

Only three studies (Goodman et al., 2016; Poock, 2017; Wang & Grieve, 2019) scored a point for all five dimensions, meaning that they explored feedback that helped students to think about the reading process and about themselves as readers, to notice and analyze miscues, and to choose strategies to improve their reading. The remaining 23 high-agency practices scored on all dimensions except choice-making. These involved implicit and meaning-based feedback, where students were invited to foster evolving conversations about reading. Intentionality is an essential feature in these practices; it is evident in how students’ ideas and talk about how to find meaning in the text are included and can be beneficial in promoting engagement (Ardoin et al., 2013; Cole, 2006; Crowe, 2003, 2005; Haner, 2012). Moreover, intentionality is reflected in the use of high-level questions that promote advanced thinking (Blything et al., 2020; Dickey, 2018). Self-perception is visible in those studies that highlight how feedback practices, by praising students’ reading progress and problem-solving abilities, help them see themselves as capable of progress (e.g., Perrin, 2008; Worthen, 2021). Persistence was encouraged by prompting students to think independently before seeking help (Frey & Fisher, 2010; Jones, 2018; Lee & Schmitt, 2014; Rodgers, 2004). The feedback focused on metacognitive strategies and self-awareness (Clark, 2004; Malicky et al., 1997). Finally, interactiveness involved dialogue and invitations to participate in conversations fostering autonomy (Ankrum et al., 2008, 2014, 2017; Arcidiacono, 2020; Perrin, 2008; R. C. Scott, 2021; Wymer, 2016).

In summary, high-agency feedback practices focus on building students’ self-efficacy and problem-solving abilities and on dialogue, text meaning, independent thinking, and metacognitive strategies.

On the other side of the scale, 39 practices were identified as low in student agency. Two scored 3 points, and 13 scored 2 points. These practices varied in the dimensions of the agency scale emphasized. Several studies (e.g., Cole, 2006; Jones, 2018; Malicky et al., 1997; Mariage, 1995; Pletcher & Christensen, 2017; Wymer, 2016) describe what they call “missed opportunities” where teachers did not value student contributions or provide sufficient dialogue time. These missed opportunities were identified by researchers based on their observations within natural classroom settings and are not a result of intentional practices predetermined by the researchers.

A lack of uptake of student responses or invitations to dialogue has been found to be a barrier for student agency. Crowe (2005) found that students were less engaged when they were not allowed to express their ideas. Similarly, Arcidiacono (2020) and Brown (2013) observed that frequent interruptions or redirections by teachers limited students’ thinking time, often leading the teacher to provide the answer or redirect the student’s focus back to decoding (Ankrum et al., 2014; Cole, 2006; Pletcher & Christensen, 2017). Aymett (2018) reported that students lacked information on how to use feedback effectively. Phillips (2013) and Jones (2018) found that teachers often dominated conversations, interrupting students’ thinking or asking closed-ended questions that hindered independent problem-solving. Malicky et al. (1997) noted that difficult or unfamiliar texts led to more teacher talk, thereby reducing student engagement in feedback and reading dialogue.

Of the 39 low-agency practices, 12 scored only one point for providing performance feedback related to word-reading strategies and/or rewards without dialogue about how the student can use feedback for progress. These practices, focusing on accuracy, could help build persistence in reading challenging words. Twelve practices providing only reading rate or correct/incorrect scores received zero points for student agency.
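The distribution of agency scores described in this section can be tallied against the high/low cutoff as a consistency check. This is a sketch under stated assumptions: the counts per score are read from the text (three practices with 5 points, 23 with 4, two with 3, 13 with 2, 12 with 1, and 12 with 0), whereas the names `practices_per_score`, `HIGH_AGENCY_MIN`, and `agency_level` are introduced here for illustration only.

```python
from collections import Counter

# Number of practices at each total agency score (0-5), as reported in the text.
practices_per_score = Counter({5: 3, 4: 23, 3: 2, 2: 13, 1: 12, 0: 12})

HIGH_AGENCY_MIN = 4  # a total score of 4-5 was treated as high agency


def agency_level(score):
    """Classify a total agency score (0-5) as 'high' or 'low'."""
    if not 0 <= score <= 5:
        raise ValueError("Agency scores range from 0 to 5")
    return "high" if score >= HIGH_AGENCY_MIN else "low"


# Aggregate the per-score counts into the two agency levels.
level_totals = Counter()
for score, n_practices in practices_per_score.items():
    level_totals[agency_level(score)] += n_practices

print(dict(level_totals))
print("Total practices:", sum(level_totals.values()))
```

Tallied this way, the per-score counts reproduce the 26 high-agency and 39 low-agency practices, for a total of 65.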

Expanding the topography of feedback practices to include the explicit–implicit and decoding–meaning continua and student agency reveals important patterns (see Figure 4). Practices facilitating high student agency tend to focus on meaning and involve more implicit feedback. In contrast, decoding-focused practices with explicit feedback do not support student agency to the same extent.

Feedback mode (RQ1a) and feedback content (RQ1b) by student agency (RQ2).

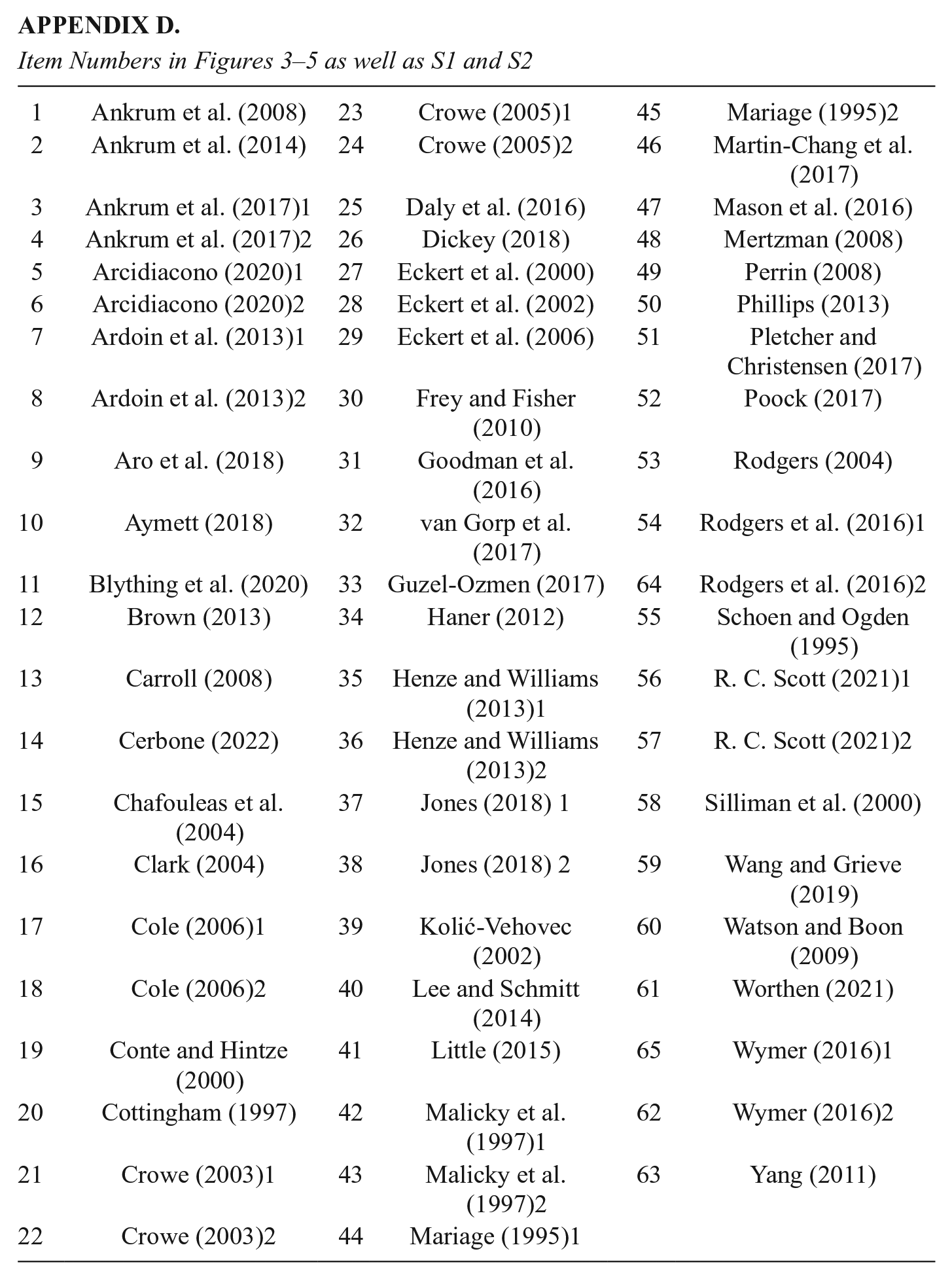

Research Question 3: Do Different Groups of Students Receive Different Kinds of Feedback?

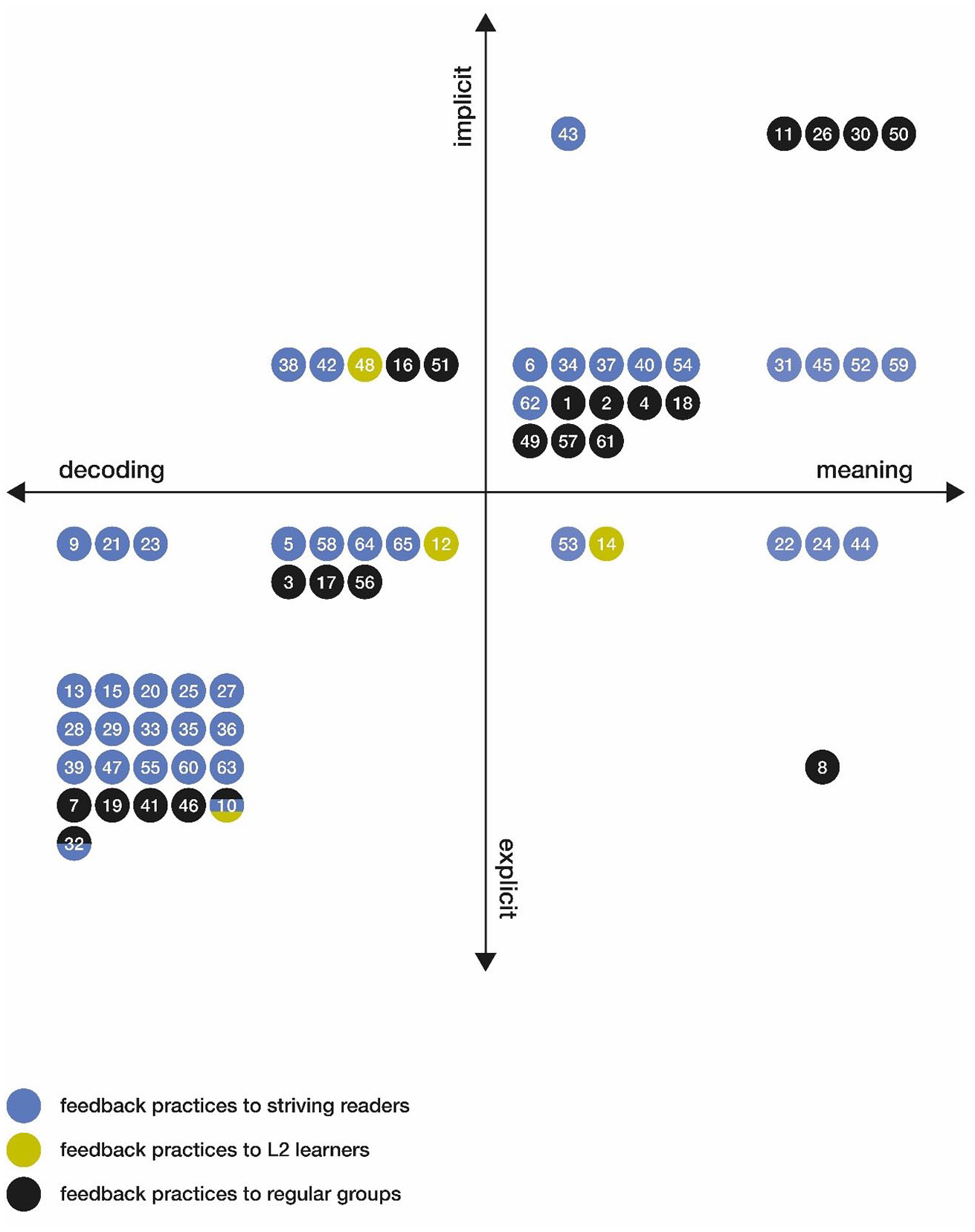

Our third research question required us to examine patterns of feedback in relation to student characteristics. The 65 feedback practices (see Appendix D) identified in the review were coded with regard to the specific groups of students that they targeted: 39 of them targeted struggling readers, 3 targeted L2 learners, and 21 targeted students in regular groups. Two studies (Aymett, 2018; van Gorp et al., 2017) examined feedback practices targeting mixed samples: L2, struggling, and regular readers. Figure 5 shows the distribution across reader groups of the feedback practices broken down by mode (explicit–implicit) and content (decoding–meaning).

Feedback mode (RQ1a) and feedback content (RQ1b) by student group (RQ3).

A closer look at Figure 5 reveals that the practices aimed at struggling readers favored explicit feedback: there are 26 practices on the explicit side but only 13 on the implicit side. The feedback practices targeting L2 learners and students from regular groups were more balanced in this respect. The three practices for feedback to L2 students are all found near the center: two are slightly on the explicit side, while one is slightly on the implicit side. Among the 21 feedback practices recognized in studies with samples from regular classes, 9 are on the explicit side and 12 are on the implicit side. Both of the studies with samples from multiple groups are at the explicit extreme.

Similarly, when it comes to feedback content, the practices aimed at struggling readers tended toward decoding: 24 of them displayed characteristics from the decoding side of the continuum, while 15 focused more on meaning. Of the feedback practices targeting L2 learners, two focused mostly on decoding skills, while one focused on both decoding and meaning. Finally, the 21 feedback practices recognized in studies with samples from regular classes were relatively balanced in this respect: 4 were aimed at decoding only, 5 had a primary focus on decoding, and 12 had characteristics that emphasized text comprehension. The studies with samples from multiple groups both explored feedback on decoding skills.
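To make the group comparison easier to read, the reported counts can be converted into shares. The sketch below is illustrative rather than part of the original analysis: it covers only the two larger groups, the variable and function names are assumptions, and the 9 decoding-side practices for regular groups combine the 4 decoding-only and 5 primarily decoding practices mentioned in the text.

```python
# Counts of feedback practices per student group, taken from the text above.
# Only the two larger groups are included; the L2 and mixed-sample groups
# (3 and 2 practices) are too small for shares to be informative.
practice_counts = {
    "struggling readers": {"explicit": 26, "implicit": 13, "decoding": 24, "meaning": 15},
    # decoding = 4 decoding-only + 5 primarily decoding practices
    "regular groups": {"explicit": 9, "implicit": 12, "decoding": 9, "meaning": 12},
}


def share(counts, label, opposite):
    """Proportion of a group's practices on one side of a continuum."""
    return counts[label] / (counts[label] + counts[opposite])


summary = {}
for group, counts in practice_counts.items():
    summary[group] = {
        "explicit": share(counts, "explicit", "implicit"),
        "decoding": share(counts, "decoding", "meaning"),
    }
    print(f"{group}: {summary[group]['explicit']:.0%} explicit, "
          f"{summary[group]['decoding']:.0%} decoding-side")
```

Under these assumptions, about two thirds of the practices aimed at struggling readers fall on the explicit side, compared with fewer than half of those aimed at regular groups. These are descriptive shares of coded practices, not tests of a difference between groups.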

Further, the feedback practices were coded with respect to three age (grade) groups: early beginner instruction (grades K–1; n = 21), students with some experience (grade 2; n = 14), and late beginner instruction (grades 3–5; n = 28). Four practices had samples from grades 2–5; they are included in the grades 3–5 category. Eight practices had samples from grades 1 and 2; they are included in the grade 2 category. Finally, there was an additional category encompassing all of K–5 (n = 2).

The analysis of feedback practices across age groups reveals a pattern of feedback addressing both decoding and comprehension. However, the distribution across age groups broken down by mode (explicit–implicit) and content (decoding–meaning) shows a differential pattern. While feedback practices in early beginner instruction target a variety of practices across the full span of the two continua, for the oldest group there is a clear focus on explicit decoding: 19 out of 28 practices in this group are at the explicit/decoding extreme; of these, 16 target struggling readers. This finding may be counterintuitive, as one would assume that students will receive more meaningful feedback as they grow older (see Figure S2). This suggests a potential bias in the feedback literature and may be a result of the strong focus on explicit decoding within special education reading research. The four feedback practices documented in samples consisting of struggling readers with diagnosed learning disabilities and students without any formal diagnosis are concentrated in the explicit/decoding part of the topography. In contrast, the five practices with samples specifically targeting students with reading disabilities in special education settings are spread across the topography. It should thus be noted that most of the samples of struggling readers consist of “at risk” students rather than students actually receiving special needs education.

Discussion

In the previous section, we presented results that we refer to as the “topography” of feedback practices regarding oral reading in early grades, a metaphor that inherently suggests differences among multiple dimensions. Importantly, in this study, the continua are applied as descriptive entities characterizing the content and mode of feedback given in the studies included. The descriptive approach creates a stage for reinvestigating established feedback practices. It is clear from our review that researchers have shown an increasing interest in examining patterns of interaction and conversation in feedback practices. However, the overall picture of the topography shows that explicit feedback remains a central feedback characteristic in reading research as well as being widespread in classroom practice.

The four-square model of the two continua specifically gives insights into the variation in characteristics of teachers’ feedback practices, particularly regarding the explicitness or implicitness of the feedback and the aspects of reading it targets. Explicit feedback is often associated with decoding, while implicit feedback tends to target the meaning aspects of reading. However, we do not intend to present explicit and implicit feedback practices as competing with one another. The topography also highlights notable trends in how student agency manifests in the context of learning to read. Ultimately, the goal of this work is a greater understanding of how feedback can promote student agency and of the specific conditions in which one type of feedback may be more valuable than the other.

To that end, we first discuss the characteristics of feedback practices that may support student agency in learning to read. Second, we seek to understand the nuances in how feedback characteristics are related to various aspects of reading. Third, we discuss the tendency shown in the topography (Figures 4 and 5) that struggling readers receive feedback of a type that the agency literature does not consider advantageous for supporting their agency.

Characteristics of Feedback Promoting Student Agency

Recent feedback research has shifted toward a student-centered approach, increasingly focusing on understanding the impact of feedback by exploring how students interpret and use it (Lipnevich & Smith, 2022). In parallel, a related research literature has acknowledged that fostering student agency is a way to increase the likelihood that students will develop into independent and engaged learners (Adie et al., 2018; Reeve & Tseng, 2011), resulting in the emergence of the term “agentic feedback” (Griffiths et al., 2023), which reflects the relatedness of the two fields. The present study, which focuses on the youngest students, adds to existing knowledge about how feedback may support student agency (Winstone et al., 2017). The findings in our study suggest that practices for feedback on oral reading tend to enhance student agency more when there is a large component of implicit feedback, implying that feedback practices emphasizing explicit information and correction tend to limit students’ opportunities to achieve agency. Such a pattern aligns well with previous studies of early reading instruction highlighting that student agency tends to be lower in teacher-centered learning environments (Vaughn et al., 2020a).

A potential mechanism for implicit feedback supporting agency may be its very nature: its subtleness and supportiveness encourage students to think and act independently. Such feedback helps students explore and interpret text meanings. Research suggests that promoting such independence significantly enhances the likelihood that students will possess a positive reader identity, self-efficacy, and motivation for reading (Afflerbach, 2022).

Our topography of extensive feedback practices emphasizing explicit information and corrections may suggest that opportunities for students to achieve agency have inadvertently become limited. While explicit instruction and modeling are both necessary and important, relying mainly on this type of feedback may restrict the development of autonomous learning behaviors. Based on the literature in the field, we therefore advocate for a nuanced mix of both explicit and implicit elements in feedback on oral reading by young readers. Integrating metacognitive challenges, offering choices, and fostering strategies to build persistence are pivotal in maximizing the potential of feedback to support student agency (Afflerbach, 2022).

Some features of our findings indicate that the mode and content of feedback, particularly its support for student agency, may partially reflect the purposes and aims of the original studies. Intervention studies often featured more explicit feedback, likely due to the researchers’ specific goals, while observation studies presented a broader spectrum of feedback practices. It is important to note that researchers’ interests in the field may influence which teachers and which feedback practices they study. However, the intervention studies included in the review were not decontextualized experiments but interventions conducted in collaboration with teachers and linked to real students’ educational context. The support for student agency found may thus be partially due to the goals of the research reviewed rather than to typical behaviors or practices of teachers. That said, the emphasis on narrow, measurable practices in studies aimed at struggling students is a significant finding in itself, especially considering that student agency is limited compared with the strategies that researchers recommend for these students.

Feedback Characteristics Across Different Aspects of Reading

Our topography of feedback practices shows that the characteristics of feedback vary significantly across different aspects of reading (see Figure 3), with a close link between explicit feedback and decoding, both across the studies included and over time, while implicit feedback typically aligns with the comprehension and interpretation of text.

From one perspective, this pattern of feedback characteristics is not surprising, given that an explicit focus on decoding is typically derived from contemporary theories of reading development. While the Simple View of Reading (P. B. Gough & Tunmer, 1986) clearly postulates decoding and comprehension as the two sole factors in an equation (reading comprehension = decoding × listening comprehension), those two factors have, in fact, received different amounts of attention in research and practice. Specifically, decoding has received particular attention as a result of the central role played by the notions of phonemic and phonological awareness in theories of reading development. We should add that research on how children learn to read has largely been shaped by an established view of reading as involving the mastery of various subskills (e.g., Ehri, 1995; Spear-Swerling & Sternberg, 1994). For this reason, traditional theories of reading development have a strong emphasis on decoding skills, particularly for struggling students (Alexander & Fox, 2004). In summary, given these dominant strands in theoretical perspectives, the pattern of explicit feedback focusing on decoding was an expected finding.

The patterns found for feedback characteristics may be due to the fact that decoding is more straightforward to measure and can be directly influenced through immediate corrective feedback. Uppstad and Solheim (2011, p. 163) indeed suggest that one reason for the different status of the two factors is related to their different characteristics. Another potential explanation for the prevalence of explicit feedback on decoding may be related to the simplicity of the texts used in initial reading instruction. Lacking any deeper content that can be discussed and reflected upon, these texts may leave little room for teachers to provide feedback targeting meaningful aspects of them. Thus, the texts themselves may elicit a focus on decoding.

The pattern of explicit feedback on decoding identified in our review is also consistent with previous studies demonstrating that explicit feedback is a typical feature of feedback given to young students or low-achieving learners (Hattie & Timperley, 2007; Kluger & DeNisi, 1996; Shute, 2008). In line with this, Brooks et al. (2021) found that most feedback in elementary education focused on the task level rather than on the process and self-regulation levels. The present study offers valuable insights regarding the widely applied feedback model of Hattie and Timperley (2007) in how teachers’ feedback practices at the task level are differentiated along the dimensions of mode and content in the context of oral reading. Inspiration for models of feedback dynamics across the span of decoding and meaning-making skills can also be found in modern definitions of reading that emphasize text comprehension as an interpretive process that concerns both decoding and comprehension (e.g., Kintsch, 1994; Langer, 2015; Tønnessen & Uppstad, 2015).

We would like to emphasize that all practices—regardless of their position in our topography—have a legitimate and important function in feedback on young students’ oral reading. However, the feedback literature does suggest that some sparsely populated areas in our feedback topography—such as implicit feedback for decoding—may hold significant potential for enhancing student agency and engagement. Exploring these underrepresented feedback modalities could provide new avenues to support students’ agency as they work on crucial skills such as decoding.

The Impact of Patterns in Feedback Characteristics on Various Groups of Students

The picture of a strong relationship between explicit feedback and decoding is nuanced by the interesting finding that struggling readers and L2 learners often receive feedback on decoding. To a large extent, this pattern makes sense, as their efforts to master decoding persist. However, these practices may still shape their reading instruction in a way that leaves fewer opportunities to develop and practice comprehension skills. Consequently, the focus of struggling readers and L2 learners may be guided toward decoding alone, at the expense of developing meaning-making strategies, resulting in a more superficial understanding of texts. Proficient readers, on the other hand, are more likely to receive feedback that enhances their comprehension strategies, leading to a divergence in reading development. It is important not to impose a uniform standard for all students but rather to support all students in developing the interpretive skill that reading constitutes (Tønnessen & Uppstad, 2015, p. 97).

However, contrary to the general pattern described previously, a few feedback practices aimed at struggling readers are thought to support student agency strongly. These practices involve implicit feedback through dialogues, illustrating how struggling readers are able to understand and use feedback to reflect on their learning when the context facilitates it. From the “ecological” perspective on feedback (Nieminen et al., 2022), these features illustrate how feedback is embedded in a broader social and relational context where the means available, such as dialogue, facilitate students’ achievement of agency. For example, studies of implicit feedback practices found that when teachers talk less in the feedback situation and give students more time to read and discuss the text, students tend to actively use the information to solve problems rather than having the teacher provide them with the solution. This approach is widely assumed to enhance students’ reading skills (e.g., Arcidiacono, 2020; R. C. Scott, 2021). Correspondingly, the explicit feedback practices considered to entail weak support for student agency are characterized by lower levels of interaction and dialogue. One reason for limited interaction may be time constraints, as interaction takes longer than teacher-dominated instruction. However, from an overall learning perspective, the more time-consuming interaction may, in fact, be more efficient. It is appropriate yet again to invoke the idea that explicit feedback relates to measurable skills and that when the focus of feedback is on correct versus incorrect information, there will be little room for students’ perspectives and dialogue.

As noted previously, promoting student agency through feedback practices requires teachers to make continual efforts to ensure that students’ voices are heard during and after oral reading. However, the present study highlights several challenges in this context, including time constraints, limitations to expertise, and varying expectations of different groups of students. Several studies in our review suggest that specific “teacher-talk moves,” starting with implicit feedback and transitioning to explicit feedback based on student needs (e.g., Ankrum et al., 2017; Cole, 2006; R. C. Scott, 2021), can foster students’ independent development of ideas and their comprehension of the text, moving beyond simply accepting the teacher’s assessment. Such talk moves may be an example of “means” in the feedback environment, facilitating students’ achievement of agency (Nieminen et al., 2022).

Another relevant aspect to consider in relation to the topography of feedback practices is how teachers’ expectations of their students can influence feedback practices. Research shows that students of whom teachers hold higher expectations tend to receive more high-level feedback, such as higher-order questions, than students of whom teachers hold lower expectations (Gentrup et al., 2020; Rubie-Davies, 2007). This suggests that unconscious biases affect teachers’ actions, possibly explaining why low-achieving students often receive feedback focused on low-level reading processes such as decoding. To counter this, teachers need to be mindful and plan their feedback carefully.

Teachers may also overestimate the amount of feedback on meaning that they provide through scaffolding, which is considered effective for supporting learning and helping students solve problems they could not solve independently (Reynolds, 2017). A scaffolding approach may, in fact, yield more teacher-directed conversations and explicit feedback through modeling and explanation. Studies reviewed (e.g., Ankrum et al., 2017; Arcidiacono, 2020; Brown, 2013; Pletcher & Christensen, 2017) show that scaffolding feedback led to less dialogue about the meaning of the text with students. The teachers thought they were supporting learning through scaffolding, but they ended up providing corrective feedback on decoding skills. This is consistent with findings from Kluger and DeNisi (1996) and Shute (2008), who showed that teachers’ spontaneous verbal feedback tends to be inaccurate and not aligned with students’ actual needs. Several studies in our review (e.g., Cole, 2006; R. C. Scott, 2021) suggest that teachers may benefit from using specific conversational procedures that remind them of how to assess and provide feedback on their students’ oral reading.

Implications for Practice and Further Research