Abstract

Students with reading disabilities require instruction and intensive intervention targeted to their current learning needs. Through data-based instruction, educators can monitor students’ performance and adjust instruction to better address student needs using both progress monitoring and diagnostic assessment. This article provides educators with a systematic approach to using error analysis of oral reading fluency data as a diagnostic tool to guide instructional decision-making. Additionally, it examines potential causes of word reading errors (such as phonemic, orthographic, morphemic, or high-frequency word challenges) and offers educator strategies for adapting and intensifying instruction based on individual student error patterns.

Students with disabilities face disparities in the education system and beyond (Lipscomb et al., 2017). Such disparities are particularly evident among students with reading disabilities (RDs) due to the potential impact of literacy on lifelong education, health, and social outcomes (DeWalt & Pignone, 2005). Students with RD often require access to rigorous, individualized interventions to support their learning needs (D. Fuchs & Fuchs, 2006; Torgesen, 2000). One method with substantial evidence of effectiveness for intensifying interventions is data-based instruction (DBI).

To effectively implement DBI, educators need a continuous flow of information about student learning. Formative assessment, such as curriculum-based measurement (CBM), has proven to be a valuable tool for guiding instructional decision-making (Deno, 1985; Hosp et al., 2016). Through ongoing measurement of students’ performance, educators can identify students who need additional support and assess whether they are making adequate progress in response to targeted instruction (L. S. Fuchs & Fuchs, 2002; Stecker et al., 2005). However, while research supports the use of CBM for identifying at-risk students and monitoring growth, there is less guidance on how educators can use these data to intensify interventions for students who require more significant, individualized support.

By engaging in fine-grained analysis of student data, particularly through examining errors made during assessments such as CBM Oral Reading Fluency (ORF), educators can gain deeper insights into students’ learning needs. Analyzing student errors and the features of the words in which these errors occur allows for a more tailored understanding of the areas in which students are struggling and provides guidance on how to adjust instruction accordingly. Thus, the purpose of this article is to guide educators in conducting error analysis of CBM ORF data to enhance the effectiveness of intensive, individualized reading interventions. This article provides an overview of DBI and explains how to use student data to monitor progress, along with a description of error analysis as a diagnostic assessment tool. It also details the steps for engaging in fine-grained analysis of CBM ORF data to inform instructional decisions.

Primary Data Sources for Data-Based Instruction (DBI)

Research has demonstrated that DBI results in significant improvements in the academic performance of students with learning disabilities, with effect sizes ranging from 0.24 to 0.38 (Filderman et al., 2018; Jung et al., 2018), and even larger effects associated with the greater use of data to guide instruction (L. S. Fuchs et al., 2021). The DBI process is iterative, and the ongoing analysis of student assessment data to inform the intensification and individualization of an intervention is essential to this process (see Toste & McMaster, in press).

DBI begins with the implementation of a validated intervention—an evidence-based instructional program that has been shown to be effective for similar groups of learners. During intervention delivery, student assessment data are used to inform instructional decisions. There are two key decision points in DBI. The first is to decide whether to adjust instruction. To inform this decision, progress monitoring data are collected and graphed to assess whether the student is demonstrating adequate growth in response to the intervention. Then, if there is a need to adjust instruction, educators decide how to do so. Diagnostic assessment or fine-grained analysis of student data is used to identify specific skill gaps and adjust the intervention accordingly. The following sections outline the two sources of data relevant to these decision points: progress monitoring and diagnostic assessment.

Progress Monitoring

As previously noted, a common form of progress monitoring used within DBI is CBM. Unlike norm-based assessments, which compare a student’s performance to a larger population, CBM tracks individual progress over time and offers more actionable data for instructional decisions (Deno, 1985). Scores from CBM assessments are reliable and valid indicators of performance and progress in reading (see Reschly et al., 2009; Shin & McMaster, 2019; Wayman et al., 2007). Specifically, CBM ORF is considered a proxy for general reading proficiency (Baker et al., 2015; L. S. Fuchs et al., 2001; Petscher et al., 2013). CBM ORF assessments, or probes, are brief passages of connected text that students read aloud for 1 min. Educators record students’ errors and calculate the words read correctly per minute (WCPM), which reflects students’ reading automaticity (Hasbrouck, 2020). CBM ORF data are collected on a frequent (e.g., weekly) basis, and scores are placed on a graph to depict progress.

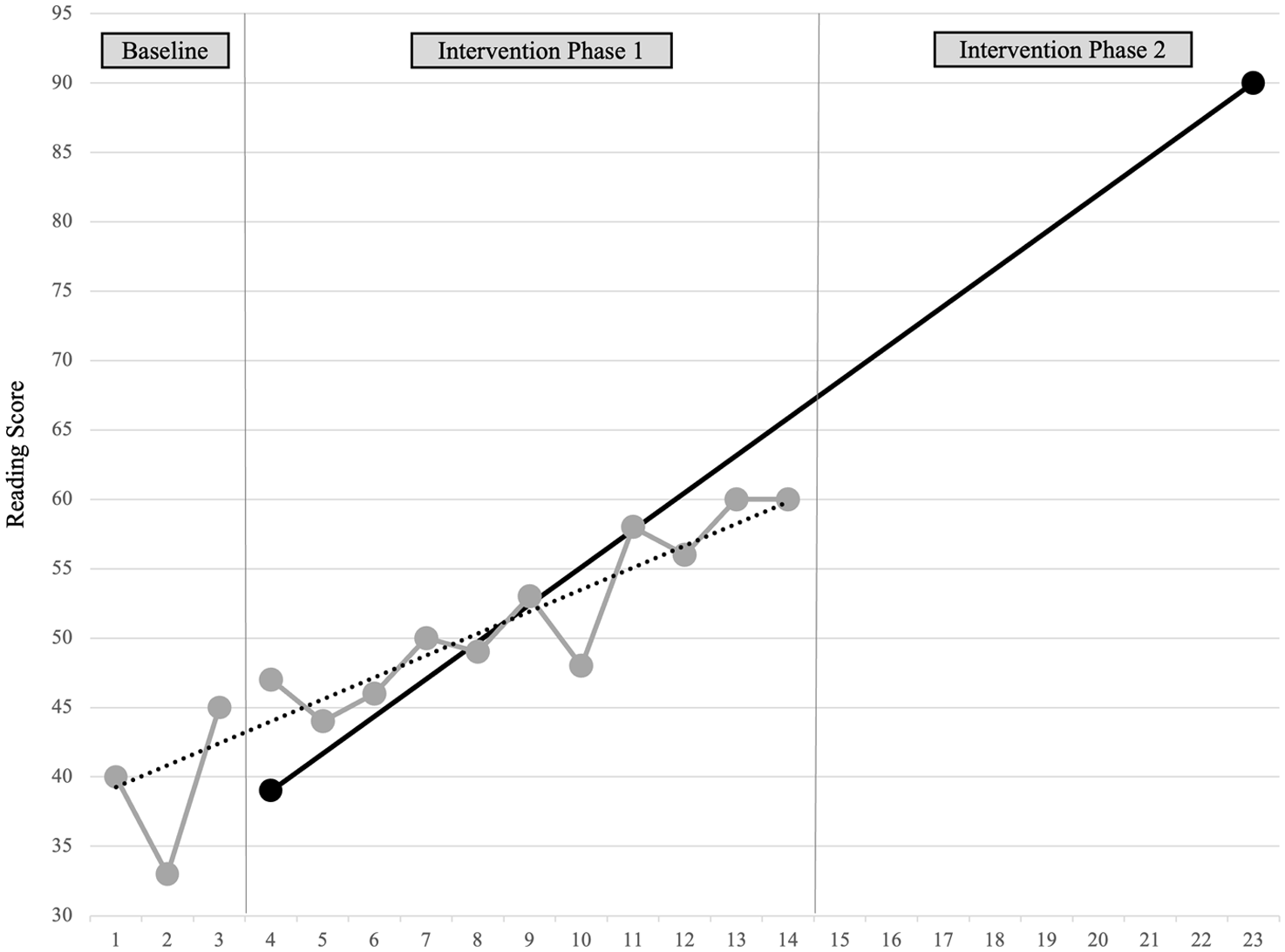

The CBM graph guides educators in decision-making (Filderman & Toste, 2018). Figure 1 presents a sample CBM ORF progress graph for an individual student. To make instructional decisions, the educator first implements a well-reasoned intervention (Phase 1) and, after 10–12 weeks, inspects the graph to compare the actual rate of growth (dotted trend line) to the expected rate of growth (solid goal line). If the actual rate of growth is lower than expected, it signals a need for an instructional adjustment. The subsequent step is to collect diagnostic assessment data to inform the educator’s decision about how to adjust the intervention to meet their student’s needs.

Figure 1. Sample Curriculum-Based Measurement Oral Reading Fluency Progress Graph.
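The comparison between the trend line and the goal line described above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the weekly scores, baseline, goal, and the use of an ordinary least-squares slope for the trend line are all assumptions made for the example, and the function names are hypothetical.

```python
def trend_slope(scores):
    """Least-squares slope of scores against week index (0, 1, 2, ...)."""
    n = len(scores)
    if n < 2:
        raise ValueError("need at least two data points")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(scores))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    return sxy / sxx


def goal_slope(baseline, goal, weeks):
    """Expected weekly growth implied by a straight goal line."""
    return (goal - baseline) / weeks


def needs_adjustment(scores, baseline, goal, weeks):
    """True when actual growth (trend) falls below expected growth (goal)."""
    return trend_slope(scores) < goal_slope(baseline, goal, weeks)


# Hypothetical weekly WCPM scores for one student (not taken from Figure 1).
weekly_wcpm = [62, 64, 63, 66, 65, 67, 68, 67, 69, 70]

# Hypothetical goal: grow from 62 to 80 WCPM over 12 weeks (1.5 words per week).
if needs_adjustment(weekly_wcpm, baseline=62, goal=80, weeks=12):
    print("Growth is below the goal line: collect diagnostic data.")
else:
    print("Growth is on track: continue the current intervention.")
```

With these invented scores the trend is under one word per week, so the sketch flags the need for an instructional adjustment. Educators using a published CBM system should follow that system's own decision rules.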

Diagnostic Assessment

Diagnostic assessment data provide information about the student’s areas of strength and need. There are various types of diagnostic assessments that can be used to inform an educator’s instructional decision-making. For example, it can be helpful to collect information about behavioral and psychosocial factors that may be affecting a student’s performance (e.g., attention, engagement) through direct observation, student interviews, and data from behavior charts. Most commonly, diagnostic assessment data are used to better understand a student’s current skills profile. Educators might consider administering a phonics inventory or conducting a spelling error analysis. If CBM ORF data have been collected, educators can conduct an error analysis to identify patterns in the student’s word reading errors that reflect the areas of instructional need.

What Is Error Analysis (and What It Is Not)?

When administering CBM ORF, educators identify the words that were read incorrectly (i.e., mispronunciations or deviations from the printed text) and then calculate the score (i.e., WCPM). Then, to conduct an error analysis of ORF data, the student’s inaccurate responses are examined to determine if there are specific features of the words where they experience more difficulty (e.g., consonant blends at the start of words, vowel teams, and multisyllabic words). The focus is on determining specific word features that students are unable to read accurately and fluently, and those features may become an instructional target within the intervention (Weiss & Friesen, 2014). Error analysis is sometimes overlooked as an effective approach to diagnostic assessment. This oversight is likely due to confusion with other approaches, such as miscue analysis and running records. Although all of these approaches are used to assess a student’s reading performance, they differ in their purpose and methods.

Miscue analysis focuses on the quality of the errors (or “miscues”) a student makes while reading to gain insight into the strategies a reader might be using to process written words (K. S. Goodman, 1969; Y. M. Goodman et al., 2005). Miscues can include substitutions of words, additions, omissions, and alterations to word sequence within a sentence. Y. M. Goodman and Burke (1972) consider this to be a qualitative approach to reading analysis that focuses on how a student’s miscues are related to the meaning, syntax, and graphophonic cues of the text. For example, under miscue analysis, the insertion of a word that is not written in the sentence would be considered a miscue and then is further analyzed to determine if the insertion changed the meaning of the sentence. Running records focus on tracking a student’s word reading behavior in real time during oral reading (Clay, 2000, 2013). The educator records all errors (miscues) a student makes while reading a leveled text. The student’s comprehension is also assessed with retellings or questions. This technique is another qualitative approach wherein errors are analyzed to determine if the substituted word reflects an error in meaning, structure, visual information, or a combination.

Both miscue analysis and running records rely heavily on judgments made by the educator. The subjectivity of these approaches can lead to inconsistent results, reducing reliability and validity (Schwanenflugel & Knapp, 2016). In miscue analysis, educators must determine whether a student’s error retains meaning or grammatical structure, which can vary depending on interpretation. The emphasis is on meaning-making at the expense of word-level skills; however, there is a lack of empirical evidence supporting the assumption that miscues are reliable indicators of reading comprehension (Rayner et al., 2001; Tunmer & Chapman, 2012). Similarly, running records require the educator to classify errors and assess comprehension, but criteria are often applied inconsistently across different educators. These assessments are not strong predictors of overall reading performance, and they lack reliability for instructional decision-making (Fawson et al., 2006; Paris & Carpenter, 2003).

A more effective approach is to conduct an error analysis that focuses on the features of the words that are misread by a student (Deno et al., 1982). CBM ORF data are reliable and valid, and the fine-grained analysis of a student’s errors can directly inform instructional decision-making. The detailed analysis of student errors helps educators understand why students struggle, which is essential for tailoring instruction (Deno, 2003).

CBM ORF Error Analysis for Instructional Decision-Making

For DBI implementation, collect frequent (i.e., weekly) CBM ORF data. Scoring WCPM and graphing these data for the purpose of monitoring progress will inform the first DBI decision point—whether to adjust instruction. Educators can extend the use of these CBM ORF data by conducting an error analysis to inform the second DBI decision point—how to adjust instruction. This approach reduces the need to collect additional student assessments, optimizes the use of CBM ORF data, and increases the usefulness of these data by providing direct insight into a student’s skills.

This section offers guidance on “how to” conduct an error analysis as a form of diagnostic assessment. This guidance is organized in four key steps: (a) administer ORF assessment, (b) identify all student errors, (c) categorize and analyze errors, and (d) use error analysis to inform instructional adjustments.

Step 1: Administer ORF Assessment

The majority of CBM ORF assessments have similar administration and scoring procedures, although one should always be sure to adhere to the published guidelines for the specific test being used. This section references examples from the CBM ORF from the Dynamic Indicators of Basic Early Literacy Skills (DIBELS) 8th Edition (University of Oregon, 2021). The choice of DIBELS for the example materials used throughout this article is not intended as an endorsement for this system over other similar assessment instruments. Educators may wish to consult the academic progress monitoring charts from the National Center on Intensive Intervention (NCII) for a summary of the psychometric properties of popular CBM systems (NCII, 2021). This section also explains procedures for the administration of paper-and-pencil versions of the assessment. There is some emerging evidence that automatic speech recognition software can be trained to support the administration and scoring of ORF passages (Bolanos et al., 2013; Nese et al., 2023), although these programs do not necessarily provide the level of detail required to conduct a fine-grained error analysis.

To administer a CBM ORF, the student is given a copy of the passage, and the educator (or “examiner”) also has a copy for scoring purposes. The student reads aloud from a grade-level passage for 1 min, which the educator measures with a timer. While the student reads, the educator records errors and the total number of words read, as described in Step 2. To ensure consistency and reliability of administration, read the test directions verbatim to the student on every occasion. The exact wording may vary across assessments, but directions encourage the student not to rush and to try to do their best reading.

The timer is started when the student reads the first word, or after 3 s if they hesitate before beginning to read. After 1 min, stop the student and immediately mark the last word read by the student within that minute. While the student is reading the passage, follow along to record errors. Ideally, testing will occur in a quiet location with minimal distractions so the educator can accurately capture all errors; however, audio recording of these sessions is also strongly recommended. Access to audio recordings can assist with scoring and analyzing reading errors. Moreover, a recent study that used expert-consensus scores as the point of comparison observed greater discrepancies in the number of errors identified through live scoring of CBM ORF passages than through scoring in which testers could also refer to audio recordings (Reed et al., 2019).

For educators who may be new to the administration of CBM ORF assessments, there are various freely available training materials. The IRIS Center at Vanderbilt University offers online modules that describe how to collect and interpret CBM data (IRIS Center, 2024). Toste et al. (2023) developed the EXPERT Program as part of a project funded through the U.S. Department of Education, Institute of Education Sciences (R324A190126). This project team developed a video library, including a series of CBM ORF training videos. For each video, users can watch a student reading a CBM ORF passage and score along using the administrator copy of the passage included in a linked practice packet. Afterward, scoring can be compared against the provided answer key. These videos can be accessed at youtube.com/@Project_EXPERT.

Step 2: Identify All Student Errors

While listening to the student read a passage, mark any deviations from the printed text. The paragraphs below describe the types of deviations identified and the notations used to demarcate these deviations on the examiner copy of the ORF passage. Although all deviations from the printed text are noted, some of these deviations are counted as errors while others are not (i.e., they do not affect the student’s score or WCPM). Consult the procedures for the specific ORF assessment being used to determine which deviations are counted as errors. For example, DIBELS 8th Edition does not count insertions or repetitions as errors, whereas the ORF subtest of a standardized assessment such as the Woodcock-Johnson IV Tests of Achievement (Schrank et al., 2014) does count these deviations as errors for scoring purposes. Because this article focuses on the use of error analysis to inform instructional decisions within the context of DBI, procedures commonly used by CBM ORF assessments are emphasized.

Deviations From Printed Text

Generally, there are seven types of deviations noted as the student reads a CBM ORF passage. The four types of deviations typically counted as errors include (a) mispronunciations and substitutions, (b) reversals, (c) omissions, and (d) hesitation of ≥ 3 s. Mispronunciations are when the student produces an utterance that may be a nonword or something phonetically similar but not precisely the same as the presented word (e.g., reading father as “faster”), while substitutions involve replacing the presented word with another word that may be semantically related (e.g., replacing front of the car with “front of the truck”). Reversals are when the student changes the order of words (e.g., reading she said as “said she”) or phrases in the text. Omissions involve the student skipping over a word or phrase, including skipping an entire line in the passage (e.g., reading if they have a well-developed sense of smell as “if they have a developed sense of smell” [omitting the word well]). Finally, a lengthy hesitation or pause is noted as an error. If the student pauses for 3 s, provide the word so the student can continue reading.

Mispronunciations due to dialectal differences or speech variations are not counted as errors (Hosp et al., 2016; Shapiro, 2011). This is important so that the resulting ORF score (i.e., WCPM) is an accurate assessment of a student’s reading ability rather than their speech patterns while also recognizing the validity of various American English dialects. This practice promotes equity and reduces bias, ensuring that students’ scores reflect true reading fluency.

Other types of deviations that are noted but generally not counted as errors for CBM ORF include insertions, repetitions, and self-corrections. Insertions are when the student says additional words or phrases that do not appear in the printed text (e.g., reading the green balloon as “the big green balloon”), whereas repetitions involve the student repeating a word or a phrase as they are reading (e.g., printed text is it was floating up and away, and the student says “it was floating. . . floating up and away”). Self-corrections also do not count as errors on any ORF assessment. This is when the student repeats a word by producing an utterance that is now correct (e.g., the printed word is blank, and the student says “black. . . blank”).
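The distinction between deviations that are marked and deviations that are counted as errors can be made concrete with a small Python sketch. The type names and the `count_errors` helper are hypothetical, and the default rule set simply mirrors the typical CBM ORF conventions described above; always defer to the scoring rules of the specific assessment in use.

```python
from enum import Enum


class Deviation(Enum):
    """The seven deviation types noted during a CBM ORF administration."""
    MISPRONUNCIATION_OR_SUBSTITUTION = "mispronunciation or substitution"
    REVERSAL = "reversal"
    OMISSION = "omission"
    HESITATION = "hesitation of 3 s or more"
    INSERTION = "insertion"
    REPETITION = "repetition"
    SELF_CORRECTION = "self-correction"


# Typical CBM ORF convention: only these four types affect the score.
COUNTED_AS_ERRORS = frozenset({
    Deviation.MISPRONUNCIATION_OR_SUBSTITUTION,
    Deviation.REVERSAL,
    Deviation.OMISSION,
    Deviation.HESITATION,
})


def count_errors(deviations, counted=COUNTED_AS_ERRORS):
    """Count only the marked deviations that the scoring rules treat as errors."""
    return sum(1 for d in deviations if d in counted)


# Hypothetical record of deviations marked during one reading.
marked = [
    Deviation.OMISSION,
    Deviation.INSERTION,        # noted, not counted
    Deviation.SELF_CORRECTION,  # noted, not counted
    Deviation.HESITATION,
]
print(count_errors(marked))  # 2
```

Passing a different `counted` set is one way to represent an assessment that also scores insertions and repetitions as errors.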

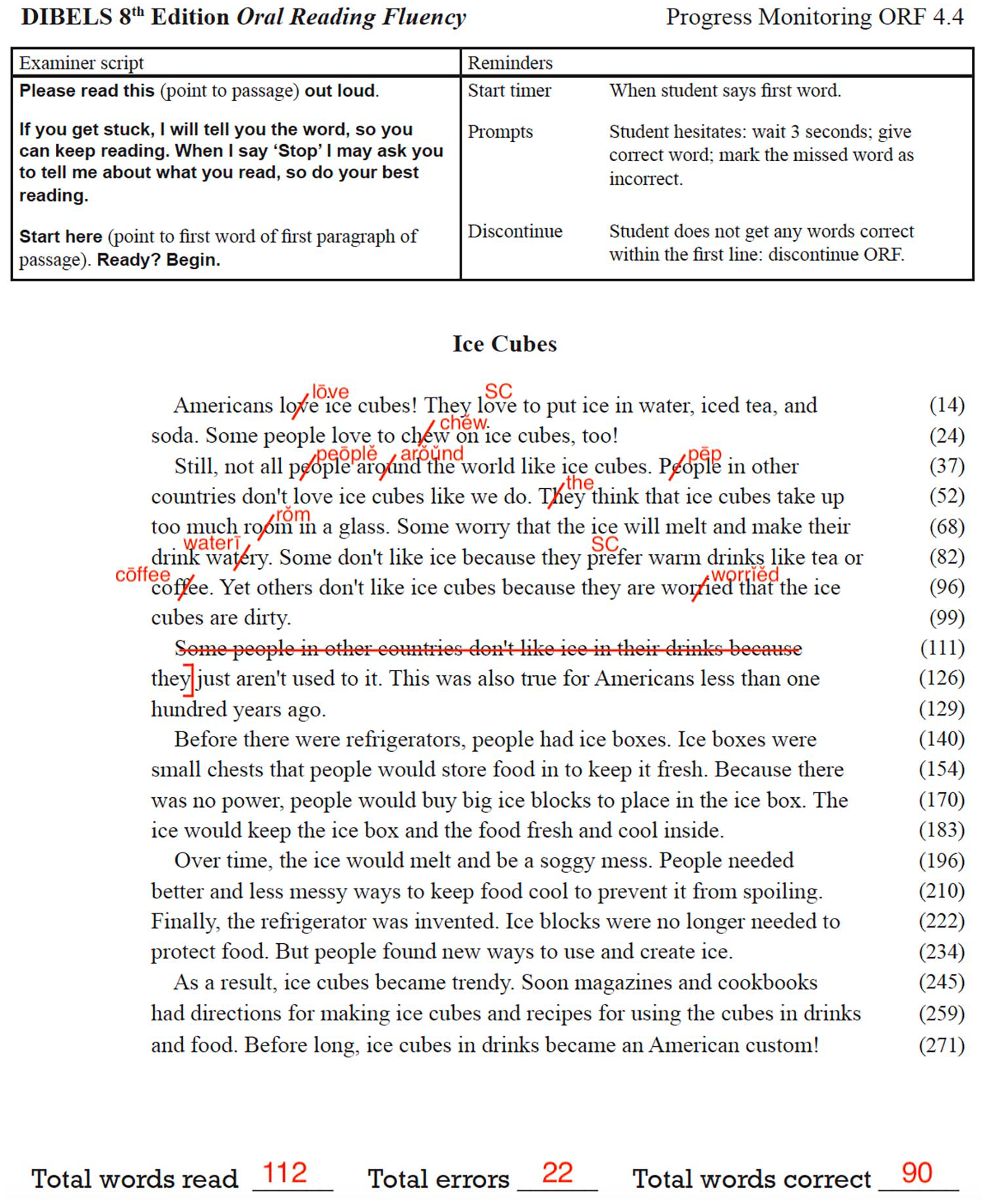

The ways in which the different types of deviations are noted on the examiner copy of the passage differ somewhat across various ORF assessments. Notations may include circling, underlining, or placing a slash through a word—but always strive to follow the error notation procedures that align with the ORF assessment being used. Figure 2 presents a sample examiner copy of a fourth-grade passage from DIBELS 8th Edition (Progress Monitoring ORF 4.4). The main notation for this ORF assessment is to place a slash (/) through any words pronounced incorrectly—including mispronunciations, substitutions, reversals, omissions, or hesitations wherein the word is provided by the examiner. The notation “SC” is used to indicate any self-corrected words. Furthermore, if an entire row is skipped (as shown in this example), then the row is crossed out, and each skipped word is counted as an error. To support the fine-grained analysis of errors, whenever an inaccurate utterance is produced, the error should be written above the misread word.

Figure 2. Curriculum-Based Measurement Oral Reading Fluency Passage With Sample Student Errors.

Preparing for Error Analysis

Once all deviations have been marked, the ORF score can be calculated. Refer to Figure 2 for an example. Using the word count running down the righthand side of the passage, count and record the total number of words read in 1 min (i.e., 112 words). Count and record the total number of errors (i.e., 22). Then, subtract the number of errors from the total number of words read to obtain the number of words correct per minute (WCPM; i.e., 112 − 22 = 90).
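The scoring arithmetic is simple enough to check by hand, but a short Python sketch makes the calculation explicit. The function name is hypothetical; the numbers are the ones from the Figure 2 example above.

```python
def words_correct_per_minute(total_words_read, errors):
    """WCPM for a 1-min probe: total words read minus words counted as errors."""
    if total_words_read < 0 or errors < 0:
        raise ValueError("counts cannot be negative")
    if errors > total_words_read:
        raise ValueError("errors cannot exceed the total number of words read")
    return total_words_read - errors


# Figure 2 example: 112 words read in 1 min, 22 errors.
print(words_correct_per_minute(112, 22))  # 90
```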

Finally, and very importantly, if one intends to conduct an error analysis with a student’s CBM ORF, it is essential that all deviations are marked and that the educator also notes exactly what the student said. For example, in addition to putting a slash (/) through a word read incorrectly, also write the word as the student pronounced it above the slashed printed word (e.g., if the printed word is <drew> but the student says /draw/, write “draw” above the word). This step is where it can be helpful to return to audio recordings to ensure that the student’s errors are accurately reflected on the examiner’s copy of the passage.

All of the student’s responses are then recorded on an error analysis chart. Table 1 includes an error analysis chart that has been populated with all student errors from the passage shown in Figure 2. Educators may elect to use a simple table like this or access other freely available options (e.g., IRIS Center, 2024; Tennessee Center for the Study and Treatment of Dyslexia, 2022). Each row in the chart represents a word from the passage where the student provided an inaccurate response. In some cases, educators may also choose to track words that were skipped to help determine whether there are common features across words the student did not attempt to read aloud. On the chart, record the word as presented in the text in the first column (Printed Word) and the specific utterance produced by the student in the second column (Student Response). The subsequent columns correspond to the various word features of interest for the error analysis, as described in Step 3.

Table 1. Sample Error Analysis Chart.

Note. Responses correspond to errors marked in Figure 2.

Step 3: Categorize and Analyze Errors

To analyze a student’s word reading errors, it is helpful to categorize them based on the three major word knowledge sources: phonology, orthography, and semantics (i.e., morphology; Kim, 2020). According to theories of word reading, these three linguistic knowledge sources contribute to accurate and rapid word identification (Perfetti & Hart, 2002). Essentially, the better a student knows a word in all these aspects, the more fluently they can read and understand it.

To begin analyzing errors, first categorize the errors based on the type of word knowledge error the student made. Although there are many potential types of word reading errors, this section focuses on four theoretically based error types that can directly inform instructional decision-making: phonemic, orthographic, morphemic, and high-frequency word errors.

Phonemic Errors

Phonemic errors occur when a student does not accurately represent all of the sounds (i.e., phonemes) represented in a printed word. A phonemic error may involve retrieving the incorrect phoneme for the corresponding graphemes in a word or omitting a phoneme from the word. For example, a student may skip over or unsuccessfully blend a consonant blend in a word (e.g., saying “cap” for clap), misidentify a visually similar grapheme (e.g., saying /b/ when the letter <d> appears), or retrieve the wrong consonant phoneme (e.g., saying /v/ for <f>). Phonemic errors are more common in early readers but may persist longer through reading development for students with RD (Hulme & Snowling, 2016). The sample error analysis chart in Table 1 identifies one phonemic error made by the student, not representing the /l/ sound in people.

Orthographic Errors

Although phonemic-based errors are often easier to pinpoint, orthographic errors can introduce additional complexity. Orthographic word reading errors include deviations from simple one-to-one grapheme-phoneme mappings (e.g., <ea> can be retrieved as /ē/ as in each, /ĕ/ as in dead, /ā/ as in great, or /ə/ as in ocean), violations of common English rules (e.g., when the letter <g> is followed by <e>, <i>, or <y>, it makes a soft /j/ sound), or a syllable-based boundary error when pronouncing vowels in a multisyllabic word (e.g., reading hotel as “hŏtel”).

These types of errors are common among students with RD, as standard English orthography is estimated to represent more than 250 graphemes that connect to 44 phonemes (Moats, 2020). In particular, vowel teams bring complex challenges to students with RD. These students may treat each letter comprising a vowel team as its own sound (reading rain as “răĭn”) or retrieve a common vowel phoneme for the grapheme that is incorrect in the context of the word (reading head as “hēd”). Multisyllabic words compound vowel-related difficulties because these words often contain less-predictable letter–sound correspondences and vowel-stress reduction (Venezky, 1999). For example, a struggling reader may read the word spoken as “spah-ken” rather than “spō-ken,” pronouncing the short /ŏ/ sound instead of the long /ō/ in the first syllable. These errors are represented in Table 1 by mispronunciations of vowel patterns in the following words, with their correct pronunciation in parentheses: love (vowel is correctly pronounced as /ə/), chew (/ü/), around (/ow/), watery (/ē/), room (/ü/), and coffee (/ō/).

Morphemic Errors

Morphemes, the smallest meaningful units of language, occur in all words. However, errors are categorized as morphemic when students misread free (i.e., standalone) or bound morphemes within multisyllabic words. A morphemic error occurs when a student misreads bound morphemes such as prefixes (e.g., de-, pre-, in-) and suffixes (e.g., -s, -ing, -ed) in multisyllabic words. Students may change an affix (e.g., saying “inperfect” instead of “imperfect”), drop affixes (e.g., saying “represent” instead of “representative”), or may not yet have generalized the understanding that, in English, the phonology of morphemes in multisyllabic words often diverges even when the spelling remains the same. For example, a student who has not generalized this understanding may incorrectly pronounce the word national as “nā-shun-al,” not realizing that the pronunciation of nation is influenced by the added bound morpheme.

These types of errors likely occur when phonics instruction does not advance beyond single-syllable word reading instruction (Apel & Masterson, 2018). Thus, students with RD are likely to struggle with accurately retrieving proper morpheme pronunciations in multisyllabic words. Furthermore, students with RD have been shown to have significantly lower levels of morphological awareness compared with their peers, which further hinders the acquisition of accurate multisyllabic word reading skills (Siegel, 2008). There are a limited number of complex multisyllabic words in the fourth-grade CBM ORF passage presented in Figure 2; however, morphemic errors can be seen in the words worried and watery (see Table 1).

High-Frequency Word Errors

The last type of word reading error is a high-frequency word error. These errors occur when a student has difficulty reading a word that is commonly occurring in the English language and often includes irregular spelling. Words such as does, was, put, and there frequently appear in text; however, these words cannot be easily decoded by relying on simple grapheme–phoneme correspondences (GPCs) alone. Therefore, students with RD may continue to struggle with these words despite receiving a systematic phonics-based intervention. The sample in Table 1 indicates that the student mispronounced two high-frequency words: they and people.

Step 4: Use Error Analysis Data to Inform Instructional Adjustments

Identifying the specific types of word reading errors—phonemic, orthographic, morphemic, and high-frequency word errors—allows educators to provide targeted, individualized adaptations based on student diagnostic assessment data. After categorizing student errors, educators can identify patterns from the resulting diagnostic snapshot indicating areas of need and adjust their instruction accordingly. For example, the pattern an educator may notice from the provided sample is that the student makes more orthographic and morphemic errors (see Table 1). The sections below provide instructional recommendations related to each error type to guide ongoing intervention intensification and individualization.
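One way to surface such patterns is to tally how many misread words fall into each error category. The Python sketch below is illustrative only: it includes just the words that the text above names as examples from Table 1, with the categories the text assigns to them, so the full chart may contain additional rows. Student responses are omitted because they are not reproduced in the text, and the variable and function names are hypothetical.

```python
from collections import Counter

# (printed word, error categories) for the words named in the text as
# examples from Table 1. A word can carry more than one category.
error_chart = [
    ("people", {"phonemic", "high-frequency"}),
    ("love", {"orthographic"}),
    ("chew", {"orthographic"}),
    ("around", {"orthographic"}),
    ("watery", {"orthographic", "morphemic"}),
    ("room", {"orthographic"}),
    ("coffee", {"orthographic"}),
    ("worried", {"morphemic"}),
    ("they", {"high-frequency"}),
]


def tally_error_types(chart):
    """Count how many misread words were coded with each error category."""
    counts = Counter()
    for _printed_word, categories in chart:
        counts.update(categories)
    return counts


counts = tally_error_types(error_chart)
for category, n in counts.most_common():
    print(f"{category}: {n}")
```

A tally like this is only a starting point. The educator still needs to look at which word features recur within a category (for example, which vowel teams) before choosing an instructional target.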

Phonemic Errors? Focus on Phonemic Awareness and Simple GPCs

Educators can address phonemic errors by reinforcing phonemic awareness and instruction in simple associations between letters and their sounds—GPCs. Adaptive instruction can involve modeling how to blend sounds slowly and carefully, followed by guided practice where students practice blending with the educator’s feedback. Additionally, use systematic phonics instruction with the explicit teaching of simple GPCs that the student struggles with, accompanied by multiple opportunities to read the taught GPC in both isolated words and connected text (Ehri et al., 2001).

Orthographic Errors? Emphasize Vowel Patterns and Syllable Awareness

Orthographic errors reflect gaps in students’ knowledge of common English spelling rules and the complex grapheme–phoneme relationships present in English orthography (Moats, 2020). Students with RD may struggle to recognize vowel teams or syllable boundaries, leading to inaccurate word reading (Bhattacharya & Ehri, 2004). Therefore, to address orthographic errors, explicitly teach high-utility vowel spelling patterns, such as the multiple sounds of common vowel teams such as <ea>. Facilitate opportunities to practice these patterns in both isolation and connected text. Educators can also engage students in activities that focus on breaking words into syllables to build awareness of how vowels are pronounced differently in various syllable types.

From the student’s responses in Table 1, the educator may begin with explicit instruction on the concept of vowel teams paired with a high-utility vowel team this student will come across in the grade-level text. This instruction may sound something like this: Let’s talk about vowel teams! A vowel team is when two or more vowels work together to make a single sound. One example is the vowel team “ea.” When you see “ea” in a word, it often makes the /ē/ sound, like in the word “beach.” Let’s say the word “beach” together slowly: /b/ /ē/ /ch/. Great! Did you hear the long /ē/ sound in the middle? That’s the vowel team “ea.” Let’s look at some more examples where “ea” makes the /ē/ sound. Listen as I read them: leaf, mean, and team. Can you hear the /ē/ sound in each word? Now let’s say them together: leaf, mean, team. Now it’s your turn! I’ll show you a word, and you read it out loud. If you see the “ea” vowel team, say the /ē/ sound. Ready? (Student practices with the following words: seat, dream, neat, read) Great job! Remember, if you see the “ea” vowel team, it usually makes the /ē/ sound. Let’s get reading.

Morphemic Errors? Support Morphological Awareness

Students may make morphemic errors when they struggle to accurately read affixes—such as prefixes and suffixes—within multisyllabic words. These errors highlight the need for targeted instruction that builds students’ morphological awareness by helping them recognize and understand the meaning and function of common morphemes (Apel & Masterson, 2018). Effective instruction in this area involves explicitly teaching students how words are constructed from free and bound morphemes and providing them with opportunities to practice breaking words into their morphological components (Giazitzidou & Padeliadu, 2022; Siegel, 2008).

For example, when introducing the prefix im-, educators can explain that it means “not” and demonstrate how it alters the meaning of base words. Using examples such as impossible (“not possible”) and impatient (“not patient”), educators can model how students can generalize their understanding of this prefix to decode and comprehend new words. To reinforce this concept, educators might engage students in word-building activities where they add different prefixes or suffixes to root words and discuss the resulting meanings (e.g., improper, immature).

Students need to understand that the pronunciation of morphemes can vary across different words, even though the spelling remains consistent. For example, educators might guide students in recognizing that the morpheme nation is pronounced differently when it appears in national or international than in the word nation itself. Through this type of instruction, students learn to be more flexible in applying their knowledge of morphemes, which can improve both word reading accuracy and comprehension.

High-Frequency Word Errors? Build Automaticity

High-frequency word errors occur when students struggle to read common words that do not follow regular phonics patterns. To reduce high-frequency word errors, it can be helpful to focus on building automatic recognition of these words. Repeated practice with immediate, corrective feedback is essential. One effective explicit instructional strategy educators can use is strategic incremental rehearsal (see the work by Novelli & Ardoin, 2024). To employ this strategy, begin by introducing a single high-frequency word on a flashcard. Once students are familiar with the new word, gradually introduce previously learned high-frequency words alongside it to create a flashcard deck. Students then practice reading through the cards in random order, mixing new words with known words to support retention and mastery.
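The flashcard routine described above can be sketched as a small deck-building function. This is a loose illustration of the general idea (one new word, known words added gradually, random order), not the published strategic incremental rehearsal protocol; the function name, the example words, and the choice to add one known word per round are assumptions.

```python
import random


def rehearsal_decks(new_word, known_words, seed=None):
    """Build successive practice decks: the new word alone, then the new word
    shuffled together with one more previously learned word each round."""
    rng = random.Random(seed)  # a seed makes the card order reproducible
    decks = []
    for round_number in range(len(known_words) + 1):
        deck = [new_word] + list(known_words[:round_number])
        rng.shuffle(deck)
        decks.append(deck)
    return decks


# Hypothetical example: "does" is new; the other words were learned earlier.
decks = rehearsal_decks("does", ["was", "put", "there"], seed=0)
for deck in decks:
    print(deck)
```

In practice the educator would move to the next deck only after the student reads the current one accurately, giving immediate corrective feedback on any missed card.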

Final Thoughts

The title of this article asked what’s in a word? The answer is—a lot! By analyzing and categorizing word reading errors, educators can make targeted instructional decisions that address the underlying causes of word reading difficulties. Phonemic errors require strengthening students’ phonemic awareness and simple GPC skills, orthographic errors call for explicit instruction on spelling patterns and syllable boundaries, morphemic errors benefit from activities that build morphological awareness, and high-frequency word errors are best addressed through repeated mastery-based practice. With intentional and responsive instruction tailored to the specific types of errors students make, educators can support improved word reading accuracy and fluency, ultimately enhancing students’ reading comprehension and academic success.

Footnotes

Correction (April 2025):

Typographical errors in Table 1 and Figure 1 of this article have been corrected since its original publication.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This research was supported in part by the U.S. Department of Education, Institute of Education Sciences (grant no. R324A190126) to The University of Texas at Austin. Nothing in this article necessarily reflects the positions or policies of the agency, and no endorsement by it should be inferred.