Abstract

In this study, we examined the relation of observed classroom practices to language and literacy achievement, and the moderators of this relation, for students from pre-K to sixth grade. A total of 136 studies (N = 107,882 participants) met the inclusion criteria; 108 were included in the meta-analysis and the remaining 28 were synthesized narratively. The average zero-order (r = .12) and partial (rp = .04) correlations were statistically significant but weak. The relation was slightly weaker in upper than in lower grades. It was also stronger for observations capturing macro-level quality than for those capturing micro-level measurement, and stronger for observations of the instructional dimension than for those of the emotional or structural dimension. The relation did not vary by observation duration, observation frequency, statistical approach, or type of covariates. Taken together with the narrative synthesis, the results highlight the complex nature of classroom observation and the need for more classroom research, particularly in the upper grades.

Keywords

The classroom is the primary setting in which students’ formal learning and social interactions take place. A considerable portion of the variance in student learning can be explained at the classroom level (Foorman et al., 2006; Hanushek, 2002). It is well established that effective instruction is contingent on multiple instructional components (e.g., content, organization; Pressley et al., 2001), dynamic teacher-child interactions (Cabell et al., 2013; Crosnoe et al., 2010; Pianta & Hamre, 2009), and transactional child-instruction interactions (Connor, Piasta, et al., 2009; Morrison & Connor, 2009). To capture this complex and multidimensional construct, classroom observation has long been used as a measurement tool (Pianta & Hamre, 2009). However, early reviews in the 1950s and 1960s consistently revealed inconclusive or confusing associations between teaching acts and student outcomes (Ackerman, 1954; Flanders & Simon, 1969; Morsh & Wilder, 1954). These associations could be attributed to subjective classroom observations, untenable hypotheses, problematic statistical methods (Frick & Semmel, 1978; Gage, 1963; Medley & Mitzel, 1963), and equivocal predictor and criterion variables (Yamamoto, 1963). More recently, quantitative research has used standardized observations at scale with adequate reliability and validity and has contributed more generalizable and robust empirical evidence on effective teaching (e.g., Connor, Jakobsons, et al., 2009; Early et al., 2006; La Paro et al., 2004).

In the present study, we aimed to extend the prior literature on classroom observations by using meta-analysis to examine the relation of the quality and quantity of classroom practices to students’ language and literacy performance from prekindergarten (pre-K) to sixth grade. Previous meta-analyses and qualitative systematic reviews have focused mainly on early childhood education with macro-level observations (e.g., overall classroom quality). They have reported weak associations between childcare quality and preschoolers’ academic, socioemotional, and behavioral outcomes (Brunsek et al., 2017; Burchinal et al., 2011; Keys et al., 2013; Perlman et al., 2016; Ulferts et al., 2019). Furthermore, few of them have examined students in the upper elementary grades or their language/literacy development. In this study, we addressed these gaps by investigating the relations between classroom observations and student achievement, including (a) both macro- and microlevel observation characteristics and (b) students from pre-K to sixth grade. Moreover, we explored a series of potential moderators (e.g., student grade level, observation type and dimension, observation duration and frequency, child language and literacy outcomes, adopted statistical approach, and covariates).

Observation Type and Dimension

Numerous observation instruments have been developed. Given the complexity of classroom instruction, there is no consensus on how to classify classroom teaching practices. However, one broad approach adopted in previous work conceptualizes classroom instruction both as macro-level classroom quality and as microlevel discrete classroom practices (Connor et al., 2014; Gosling, 2002; Wragg, 1999). Macro-level observation typically rates the global quality of teacher–child interactions (e.g., teacher responsiveness, student engagement) and/or classroom structural features (e.g., physical environment, class size, teacher qualifications), and it yields high-inference composite scores or indices. Examples include the Early Childhood Environment Rating Scale and its revised edition (ECERS and ECERS-R; Harms et al., 1998), the Classroom Assessment Scoring System (CLASS; Pianta, La Paro, & Hamre, 2008), the Early Childhood Classroom Observation Measure (ECCOM; Stipek, 1996), and the Classroom Practice Inventory (CPI; Hyson et al., 1990). In contrast, microlevel observation uses a low-inference, time-sampling coding system. This system commonly measures discrete occurrences (e.g., amount, ratio) of certain teacher/student behaviors, pedagogical strategies, and settings; one example is the Individualizing Student Instruction classroom observation (ISI; Connor, Morrison, et al., 2009). In addition, some validated observation instruments incorporate both global ratings and counts of discrete instances. These include the Observational Record of the Caregiving Environment (ORCE; National Institute of Child Health and Human Development Early Child Care Research Network [NICHD ECCRN], 1996) and the Classroom Observation System-K-5 (COS-K-5; NICHD ECCRN, 2002).

Beyond differences in evaluation approach (macro versus micro), extant classroom observation instruments also vary in the content of instruction they evaluate. In general, these instruments examine three dimensions of instructional content. The first is the instructional dimension, such as the quantity and quality of literacy content delivery, explanation and monitoring, and stimulation and feedback. The second is the emotional dimension, such as classroom climate and organization, praise and discipline, behavior management, sensitivity, responsivity, detachment, and disengagement. The third is the structural dimension, such as the classroom physical environment, book category, and writing materials category. We acknowledge variation within the macro- and microlevel observation systems: different observation instruments were developed with different goals and conceptualizations. For example, sociocultural frameworks such as culturally responsive teaching (Gay, 2002) and critical literacy (Luke, 2012) emphasize that learning occurs through social interactions and encourage learning from experience and discourse (Vygotsky, 1980). In contrast, social cognitive perspectives highlight individual cognitive skills such as self-regulation (Connor, 2016). They also highlight socioemotional aspects such as self-efficacy, outcome expectancies, and sociostructural impediments and reinforcements in the learning and performance of actions (Bandura, 2001; Schunk, 2012). We classified observation systems by type and dimension not to ignore these differences but to examine whether global differences among classroom observations are differentially related to elementary students’ language and literacy skills. High-inference rating versus low-inference quantification is one such difference. The same logic applies to the classification of instructional content. The results of the present meta-analysis should therefore be interpreted with these caveats in mind.

Relation Between Teaching Practices and Student Achievement

Previous studies have found that the association between global process quality (i.e., emotional and instructional interactions, materials and activities) and student achievement was not consistently significant. Even when it was significant, the relation was modest in magnitude (Burchinal et al., 2008, 2011; Guo, Connor, et al., 2012; Howes et al., 2008; Mashburn et al., 2008; Taylor et al., 2000; Weiland et al., 2013). Early childhood education and childcare support systems vary geographically, yet many European studies have corroborated this significant but small effect of global process quality on children’s development (Abreu-Lima et al., 2013; Cadima et al., 2010; Ulferts et al., 2019). Researchers have suggested that factors such as short pre-post intervals and a lack of valid, reliable, or suitable measures might help explain the modest or nonsignificant relations (Burchinal et al., 2011; Keys et al., 2013; Weiland et al., 2013). Furthermore, emerging evidence suggests a nonlinear relation in which the effect is larger in classrooms with higher-quality instruction (Burchinal et al., 2010, 2016; Cadima et al., 2010; Hatfield et al., 2016).

Despite the limited relation to general academic achievement, previous studies have linked the global quality of teacher-child interactions to students’

Teacher, Student, and Classroom Factors

A variety of teacher, student, and classroom features have been examined in prior research. Teacher credentials/education, teaching experience, and teacher knowledge and beliefs have been found to significantly influence student achievement, though to a limited extent or indirectly through classroom practices (Cash et al., 2015; Darling-Hammond & Youngs, 2002; Early et al., 2006; Pianta et al., 2005; Wayne & Youngs, 2003). Children’s age, gender, and initial skills, as well as home literacy, parent education, and socioeconomic status (SES), are also common predictors/covariates in classroom research (e.g., Burchinal et al., 2000; Connor et al., 2005; Ponitz & Rimm-Kaufman, 2011), though findings are inconsistent. For example, researchers found that the classroom quality–student outcome association weakened as student age increased (Burchinal et al., 2011). Students’ baseline skills and primary language were significantly associated with instructional effectiveness in reading (Park et al., 2019), and childcare quality varied across and within geographic regions and countries (Vermeer et al., 2016). Furthermore, evidence suggests that the effects of classroom instruction vary by child characteristics (e.g., baseline skill level), such that students benefit differentially from the same instruction (Connor, Morrison, & Petrella, 2004). In contrast, Keys et al. (2013) found nonsignificant moderating effects of children’s demographic characteristics (race, gender, socioeconomic status), baseline skills, and behaviors. Likewise, previous literature has shown nonsignificant associations between structural features and student academic and social development (Howes et al., 2008; Mashburn et al., 2008). Such features include program infrastructure and design, such as teacher–child ratio, teacher qualifications, and class size. For instance, some studies found that few class-level characteristics were associated with or predictive of classroom quality or children’s academic outcomes (Early et al., 2007; Justice et al., 2008; NICHD ECCRN, 2002; Walsh & Tracy, 2004). Overall, these studies indicate that the relation between classroom instruction and student achievement might change as a function of a multitude of student-, family-, teacher-, and class-level features.

Other Factors

Other factors are also potential moderators: the outcome domain (e.g., language versus mathematics), the analytic approach (e.g., multilevel or latent variable models), and the types of predictors/covariates included. Analytic approach matters because measurement error attenuates the relation of interest. Latent variable approaches account for measurement error and the dimensionality of constructs, which can increase effect sizes. Multilevel models account for the nested data structure in which students are clustered within classrooms, so they yield less biased estimates and significance tests. For example, in a longitudinal meta-analysis, Ulferts and colleagues (2019) detected a lasting impact of childcare quality on language and mathematics development throughout the primary school phase. They found larger effects in studies that applied multivariate analyses controlling for child and family background characteristics.
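The attenuation point can be illustrated with Spearman’s classical correction formula. Under that formula, the expected observed correlation equals the true correlation multiplied by the square root of the product of the two measures’ reliabilities. The sketch below is a minimal illustration that assumes classical test theory. The reliability values are hypothetical and are not drawn from the included studies.

```python
import math


def attenuated_r(true_r: float, rel_x: float, rel_y: float) -> float:
    """Expected observed correlation given the true correlation and two reliabilities
    (Spearman's attenuation formula under classical test theory)."""
    return true_r * math.sqrt(rel_x * rel_y)


def disattenuated_r(observed_r: float, rel_x: float, rel_y: float) -> float:
    """Correct an observed correlation for unreliability in both measures."""
    return observed_r / math.sqrt(rel_x * rel_y)


# Hypothetical values: observation-score reliability .70, outcome-test reliability .85
r_obs = attenuated_r(0.20, 0.70, 0.85)
print(round(r_obs, 3))  # 0.154
print(round(disattenuated_r(r_obs, 0.70, 0.85), 3))  # 0.2
```

Latent variable models address the same problem by modeling error variance directly rather than by applying a post hoc correction of this kind.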

Current Study

Tremendous heterogeneity exists in how observation is operationalized and measured, what child outcomes are measured, and what statistical approaches are employed (Brunsek et al., 2017; Perlman et al., 2016; Ulferts et al., 2019). However, insufficient research has been conducted to synthesize how both macro- and microlevel classroom practices and different dimensions of instruction, as well as teacher and child factors, are related to early childhood and elementary students’ language and literacy achievement (an exception is Park et al., 2019, which is a narrative review). In the present study, we extended prior reviews to examine the relation between both the quality and quantity of classroom practices and students’ language and literacy performance from pre-K to sixth grade, using a meta-analysis.

Two research questions guided our investigation: What is the relation of observed classroom practices to students’ language and literacy performance from pre-K to sixth grade? Does the relation vary by student grade level, observation type and dimension, observation duration and frequency, child language and literacy outcomes, adopted statistical approach, and covariates? Based on previous findings, we hypothesized a weak association between observed classroom practices and student language/literacy outcomes, with a stronger association for younger than for older students (Burchinal et al., 2011). In addition, we expected the relation to vary by the features of the observation and the skills assessed (Brunsek et al., 2017; Keys et al., 2013; Perlman et al., 2016; Ulferts et al., 2019).

Because we focused only on language and literacy outcomes, we classified child outcomes into three categories. Reading covered phonological awareness, print concept, letter knowledge, word reading, reading fluency, and reading comprehension. Language covered oral language, listening comprehension, and vocabulary. Writing covered spelling, handwriting, and writing quality. Although the effects of observation duration and frequency have not been widely examined in previous studies, we noted wide variation in observation length and interval across instruments and studies. Hence, whether observation duration and frequency influence the results remains an open question.
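The outcome classification above amounts to a lookup table from assessed skill to outcome category. The sketch below is a hypothetical coding helper, not the authors’ coding sheet. Coding a measure that spans more than one category as “mixed” is an assumption here about how a mixed-skills category might be formed.

```python
# Hypothetical coding helper; the skill-to-category table follows the classification in the text.
OUTCOME_CATEGORY = {
    "phonological awareness": "reading",
    "print concept": "reading",
    "letter knowledge": "reading",
    "word reading": "reading",
    "reading fluency": "reading",
    "reading comprehension": "reading",
    "oral language": "language",
    "listening comprehension": "language",
    "vocabulary": "language",
    "spelling": "writing",
    "handwriting": "writing",
    "writing quality": "writing",
}


def code_outcome(skills):
    """Return 'reading', 'language', or 'writing' for a measure tapping one category,
    or 'mixed' when it spans several (the 'mixed' rule is an assumption).
    Unlisted skills raise KeyError so that they are flagged for manual coding."""
    categories = {OUTCOME_CATEGORY[s.strip().lower()] for s in skills}
    if not categories:
        raise ValueError("an outcome measure must tap at least one skill")
    return categories.pop() if len(categories) == 1 else "mixed"


print(code_outcome(["Vocabulary"]))                       # language
print(code_outcome(["word reading", "reading fluency"]))  # reading
print(code_outcome(["vocabulary", "word reading"]))       # mixed
```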

Method

Literature Search

To identify relevant studies, we searched six electronic databases (Academic Search Complete, Education Source, ERIC, Primary Search, Teacher Reference Center, and PsycINFO) using the following combination of terms: all(class* observ*) AND all(teach* OR instruct* OR organiz* OR act* OR practi* OR control* OR support) AND all(litera* OR lang* OR lingu* OR lexic* OR read* OR letter OR word OR comprehen*). This search strategy was built on the three core constructs in the current review: classroom observation, teacher instruction, and language/literacy outcomes. For each construct, we included multiple synonyms and added truncation wildcards to maximize retrieval (i.e., replacing a word’s ending with an asterisk to capture all words sharing the same root). The Boolean operators ensured that any study containing at least one term from each construct would be retrieved. To further restrict the search to the target population, we applied additional filters and limiters built into the database systems (see Table S1, available on the journal website). In addition, 17 relevant journals were digitally searched:
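The search string follows a simple rule: OR the synonyms within each construct, then AND the constructs together. The sketch below rebuilds the string from its three term lists. It also screens a piece of text locally against the same logic. This is a simplified approximation and not how the databases actually execute the query. Treating `*` as “any word ending” and multiword terms as adjacent words are assumptions, and the function names are hypothetical.

```python
import re

# Term lists transcribed from the search string above; "*" is a truncation wildcard.
CONSTRUCTS = {
    "classroom observation": ["class* observ*"],
    "teacher instruction": ["teach*", "instruct*", "organiz*", "act*",
                            "practi*", "control*", "support"],
    "language/literacy outcome": ["litera*", "lang*", "lingu*", "lexic*",
                                  "read*", "letter", "word", "comprehen*"],
}


def build_query(constructs):
    """OR the synonyms within each construct, then AND the constructs together."""
    return " AND ".join(f"all({' OR '.join(terms)})" for terms in constructs.values())


def term_to_regex(term):
    """Approximate a wildcard term: '*' matches any word ending; words in a phrase must be adjacent."""
    words = [re.escape(w).replace(r"\*", r"\w*") for w in term.split()]
    return re.compile(r"\b" + r"\s+".join(words) + r"\b", re.IGNORECASE)


def matches(text, constructs):
    """True if the text contains at least one term from every construct."""
    return all(
        any(term_to_regex(t).search(text) for t in terms)
        for terms in constructs.values()
    )


print(build_query(CONSTRUCTS))
print(matches("Classroom observation of teacher practices and early reading", CONSTRUCTS))  # True
```

The AND-of-ORs structure keeps recall high within each construct while requiring every record to touch all three constructs.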

Inclusion and Exclusion Criteria

The search procedures above identified 9,165 records from database searches (through February 2020), 352 records from journal searches (through June 2020), 16 records from author requests, and 65 records from a manual search of reference lists. All records were imported into the web-based systematic review software Rayyan (Ouzzani et al., 2016). To ensure that the included studies were relevant to our research questions, we applied the following inclusion and exclusion criteria in the title and abstract screening phase. First, participants were students from pre-K to sixth grade (or 3–12 years old). For example, we excluded studies that focused solely on infants or on secondary or higher education (e.g., Lau, 2012; Lucero & Rouse, 2017; Shin & Partyka, 2017). Second, studies quantitatively measured teachers’ language and/or literacy instruction through classroom observations. Accordingly, we excluded three kinds of studies: qualitative case studies (e.g., Reyes, 2006); studies that relied only on teacher- or student-reported practices (e.g., Certo et al., 2010; Ollin, 2008); and studies that observed teaching in subjects other than language/literacy (e.g., science teaching, Arias et al., 2016; math learning, Sun, 2019). Third, studies reported effect sizes (correlation coefficients or linear regression coefficients) between observational variables (e.g., quality ratings, the occurrence of certain teaching practices) and student achievement in language and/or literacy. Some studies investigated teacher effectiveness through quantitative classroom observations but did not report correlations with students’ language and literacy skills. For example, they focused on the development and validation of an observation framework (Kington et al., 2011). Others reported teacher- or expert-rated student behavior or language use instead (e.g., Hale et al., 2005; patterns of language use based on observations rather than summative assessments, Markova, 2017). Fourth, studies were reported in English (see Table S1 for a detailed description of data sources).

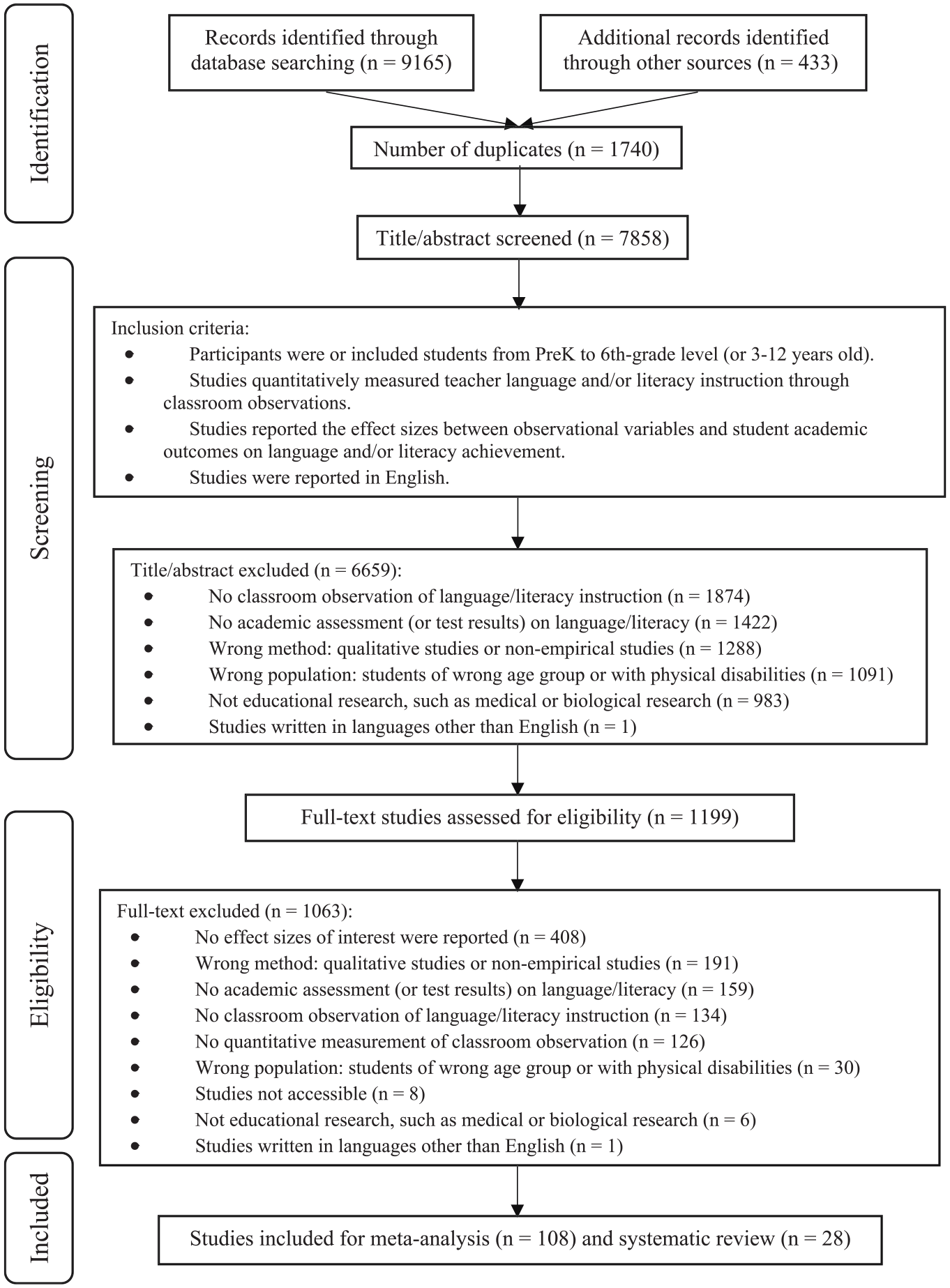

The detailed screening process is shown in Figure 1. After removing duplicate records, we screened the titles and abstracts of 7,858 studies. During the initial screening phase, many studies were excluded due to the absence of classroom observation (

PRISMA chart of screening process.

The authors conducted the title/abstract and full-text screenings; they are trained researchers with expertise in literature searching and literacy education. Adequate reliability (91.24% agreement) was established before formal screening began.

Coding Procedures

The coding scheme was developed through an iterative process. First, five studies were randomly selected and coded by the first author to generate preliminary coding categories. We then modified and consolidated the coding categories based on a random set of 10 studies. After reaching 92% agreement, two authors independently coded the remaining studies using the finalized coding scheme. Finally, 20% of the included studies were randomly selected for double coding, yielding an overall interrater agreement of 94%.
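Percent agreement, the reliability index reported here, is the proportion of coding decisions on which two coders match. The sketch below computes it and draws a random subset of studies for double coding. The function names, codes, and seed are hypothetical. Percent agreement does not correct for chance agreement; indices such as Cohen’s kappa do.

```python
import random


def percent_agreement(codes_a, codes_b):
    """Percentage of coding decisions on which two coders assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("need two non-empty code lists of equal length")
    agreements = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100.0 * agreements / len(codes_a)


def draw_double_coding_sample(study_ids, share=0.20, seed=None):
    """Randomly select a share of studies (at least one) for independent double coding."""
    ids = list(study_ids)
    k = max(1, round(share * len(ids)))
    return random.Random(seed).sample(ids, k)


# Hypothetical example: two coders' dimension codes for five effect sizes
coder_1 = ["instructional", "emotional", "structural", "instructional", "mixed"]
coder_2 = ["instructional", "emotional", "instructional", "instructional", "mixed"]
print(percent_agreement(coder_1, coder_2))  # 80.0
```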

Key information from each study was coded to facilitate statistical analysis (see Appendix A). Specifically, we coded basic study information, including authors, publication year and type, study location, and sampling strategy. Student participants were coded for race/ethnicity, primary language, maternal education, SES, gender, learning disability status, grade level, and sample size. For teacher participants, we coded education level, teaching experience, gender, and sample size.

The classroom observation data were coded based on two general categories: macro rating and micro measurement. Under each category, multiple features were coded: measurement point (grade and semester when observation was conducted, duration and frequency of the observation), name of the observation instrument, dimension(s) of the observation (instructional, emotional, structural, mixed; see Table S2 for detailed examples), reliability of the observation instrument, the observer identity (e.g., researcher, teacher), observation mode (e.g., video-based coding, live/real-time rating), interrater/intercoder reliability, the language of instruction, and class size (the number of students per class or teacher–child ratio). With regard to the academic outcome, the measurement point of the test, test name and type (experimental or standardized), assessed skills (reading, language, or writing skills), and test reliability were coded. Lastly, the following were recorded: the correlation coefficients, standardized regression coefficients and corresponding

Statistical Analysis

We conducted a correlational meta-analysis that included both zero-order correlations and partial correlations from more complex models. Correlational meta-analyses typically extract only zero-order correlation coefficients. However, most classroom research employs multiple and multivariate regression models. Excluding studies that use such models would therefore result in a substantial loss of relevant studies and might misrepresent the population parameters. Therefore, in addition to collecting the zero-order correlations reported in studies, we collected standardized partial correlations from linear regression models. We analyzed these partial correlations when the corresponding zero-order correlations were unavailable even after we contacted the authors. For the studies reporting partial correlations, we grouped the covariates into four common types:
(a) student features, such as initial achievement level, gender, and race;
(b) family characteristics, such as parental education, employment, marital status, and poverty;
(c) teacher characteristics, such as teacher age, teaching experience, and education level; and
(d) classroom features, such as class composition and program type.
We dummy coded the presence or absence of each type when performing meta-regression (Aloe & Becker, 2012; Aloe & Thompson, 2013).
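The text does not spell out how regression output was converted to partial correlations. The sketch below shows one common route. It assumes that a study reports the t statistic for the observation predictor from an ordinary least squares model, and it applies r_p = t / sqrt(t² + df). The dummy coding of covariate types is shown alongside. The function names and example values are hypothetical.

```python
import math

COVARIATE_TYPES = ("student", "family", "teacher", "classroom")


def partial_r_from_t(t, n, n_predictors):
    """Partial correlation between one predictor and the outcome from its OLS t statistic.

    r_p = t / sqrt(t**2 + df), where df = n - n_predictors - 1 is the residual df.
    """
    df = n - n_predictors - 1
    if df <= 0:
        raise ValueError("too few observations for the number of predictors")
    return t / math.sqrt(t ** 2 + df)


def covariate_dummies(types_in_model):
    """0/1 indicator for each covariate type, for use as meta-regression moderators."""
    present = set(types_in_model)
    return {ctype: int(ctype in present) for ctype in COVARIATE_TYPES}


# Hypothetical study: t = 2.0 for the observation score, n = 104 students, 3 predictors
print(round(partial_r_from_t(2.0, 104, 3), 3))  # 0.196
print(covariate_dummies(["student", "family"]))
# {'student': 1, 'family': 1, 'teacher': 0, 'classroom': 0}
```

In a multilevel model, the appropriate degrees of freedom are less clear-cut, so this conversion is only a rough approximation there.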

The effect size indices in this meta-analysis are correlation coefficient,

All calculations and statistical analyses were conducted in R (Version 3.6.3; R Core Team, 2018) within the open-source RStudio environment (Version 1.2.5033; RStudio Team, 2016), using functions in the metafor package (Version 2.0-0; Viechtbauer, 2010). To ensure that the sampling distributions of
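The sentence above is truncated in this version of the text, so the transformation the authors applied cannot be recovered here. One common way to make the sampling distribution of correlations approximately normal is Fisher’s r-to-z transformation, assumed here for illustration only. It uses z = atanh(r) with sampling variance 1/(n - 3) for a zero-order correlation; in metafor, this corresponds to escalc() with measure = "ZCOR". A minimal Python sketch follows, using the reported average r of .12 as input.

```python
import math


def fisher_z(r):
    """Fisher's r-to-z transformation, z = atanh(r)."""
    return math.atanh(r)


def fisher_z_var(n):
    """Large-sample sampling variance of z for a zero-order correlation based on n cases."""
    if n <= 3:
        raise ValueError("n must exceed 3")
    return 1.0 / (n - 3)


def z_to_r(z):
    """Back-transform a (pooled) z value to the correlation metric, r = tanh(z)."""
    return math.tanh(z)


z = fisher_z(0.12)
print(round(z, 4), fisher_z_var(103))  # 0.1206 0.01
print(round(z_to_r(z), 2))  # 0.12
```

Pooling is done on the z scale, and only the final estimate is back-transformed to r.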

A multilevel random-effects model with restricted maximum likelihood estimation was used to account for dependency among the effect sizes (Borenstein et al., 2011). Effect sizes appeared to be nested at the levels of grade, study, and research team, but no significant variance was detected at the study or research team level. Hence, we fitted a more parsimonious two-level model in which individual effect sizes were nested within grade levels. We acknowledge the advantages of robust variance estimation (RVE) for handling dependent effect sizes. However, RVE has a few important limitations. First, it neither models heterogeneity at multiple levels nor provides the corresponding hypothesis tests. Second, the power of tests of categorical moderators depends heavily on the number of studies and the features of the covariate (Tanner-Smith, Tipton, & Polanin, 2016). When the number of studies is small, test statistics and confidence intervals based on RVE can have inflated Type I error rates (Hedges et al., 2010; Tipton & Pustejovsky, 2015). In our case, many moderators had imbalanced distributions (see Tables 1 and 3). For example, some categories of observational dimension and outcome type had over 100 cases, and others had fewer than 20. Consequently, tests of particular moderators may be severely underpowered. We therefore prioritized the multilevel meta-analysis, for two reasons. Many studies contained independent groups of students from different grades, and the multilevel approach supports heterogeneity, moderation, and sensitivity analyses that are not currently available for RVE. In addition, we used RVE in a sensitivity analysis as a robustness check.
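The core computation of random-effects pooling can be sketched in a few lines. The sketch below is deliberately simpler than the model described above. It uses a single-level DerSimonian-Laird estimator, a method-of-moments estimate of between-study variance, rather than a two-level REML model; in metafor, the latter would be fitted with rma.mv(). The sketch is meant only to show how inverse-variance weights, Cochran’s Q, τ², and I² fit together. The input values are hypothetical Fisher-z effects.

```python
import math


def dl_random_effects(yi, vi):
    """DerSimonian-Laird random-effects pooling of effect sizes yi with sampling variances vi.

    Returns (pooled estimate, its standard error, tau^2, Cochran's Q, I^2 in %).
    """
    k = len(yi)
    w = [1.0 / v for v in vi]                                # fixed-effect (inverse-variance) weights
    sw = sum(w)
    y_fe = sum(wi * y for wi, y in zip(w, yi)) / sw
    q = sum(wi * (y - y_fe) ** 2 for wi, y in zip(w, yi))    # Cochran's Q
    c = sw - sum(wi ** 2 for wi in w) / sw
    tau2 = max(0.0, (q - (k - 1)) / c)                       # method-of-moments between-study variance
    w_re = [1.0 / (v + tau2) for v in vi]                    # random-effects weights
    y_re = sum(wi * y for wi, y in zip(w_re, yi)) / sum(w_re)
    se_re = math.sqrt(1.0 / sum(w_re))
    i2 = max(0.0, (q - (k - 1)) / q) * 100 if q > 0 else 0.0  # share of variability beyond chance
    return y_re, se_re, tau2, q, i2


y_re, se, tau2, q, i2 = dl_random_effects([0.0, 0.2], [0.01, 0.01])
print(round(y_re, 3), round(se, 3), round(tau2, 3), round(q, 2), round(i2, 1))  # 0.1 0.1 0.01 2.0 50.0
```

A multilevel model extends this idea by splitting τ² into variance components, here effect sizes within grades and grades.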

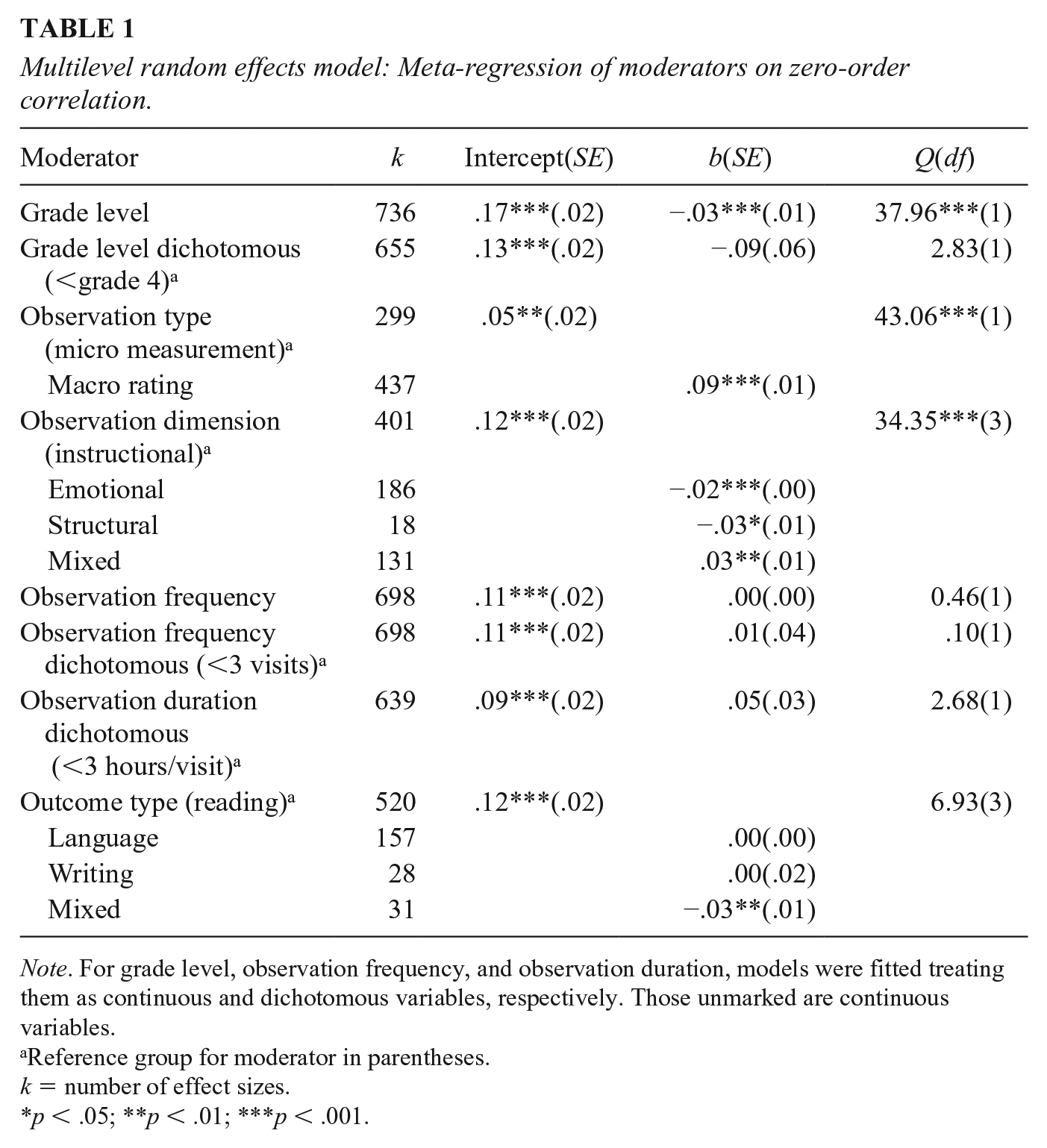

Multilevel random effects model: Meta-regression of moderators on zero-order correlation.

Reference group for moderator in parentheses.

The homogeneity statistic

Results

Descriptive Information

A total of 136 studies (

In all, the majority of the studies (

RQ1. What is the relation of observed classroom practices to students’ language and literacy performance from pre-K to sixth grade?

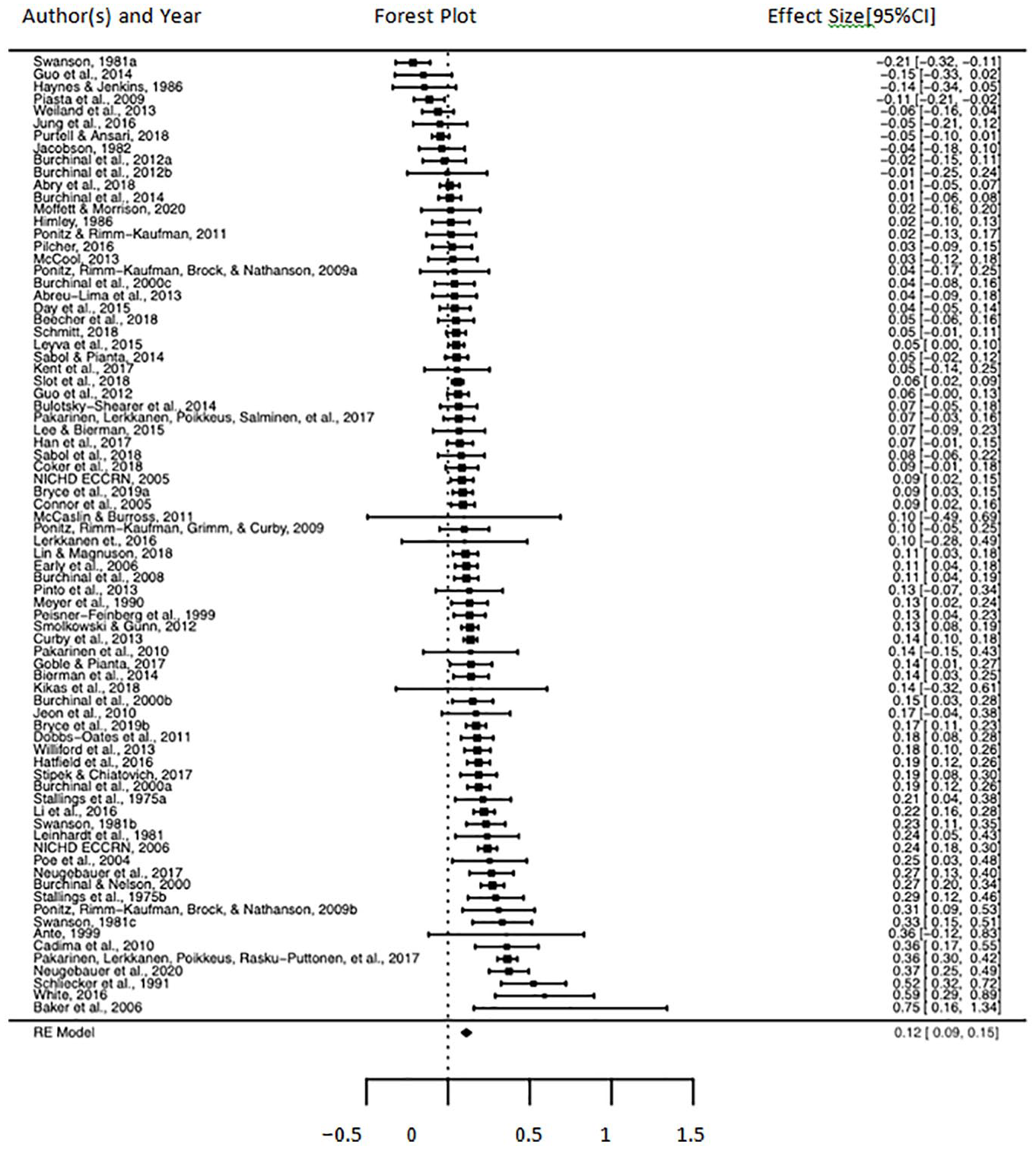

As shown in Figure 2, the overall magnitude of the zero-order correlation between observed classroom practices and students’ language/literacy outcomes was weak but significant (

Forest plot for zero-order correlation.

RQ2. Does the relation vary by student grade level, observation type and dimension, observation duration and frequency, child language and literacy outcomes, adopted statistical approach, and covariates?

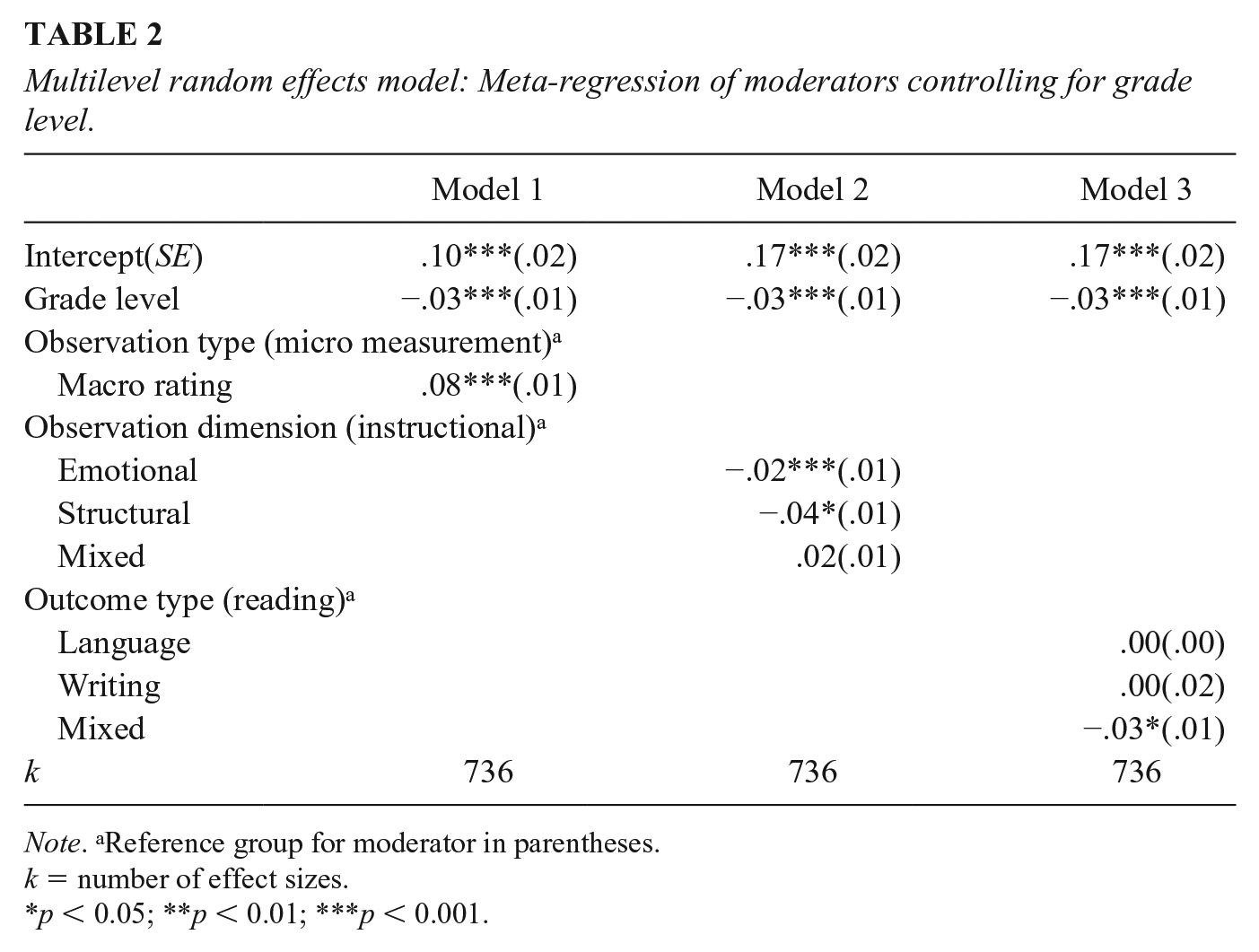

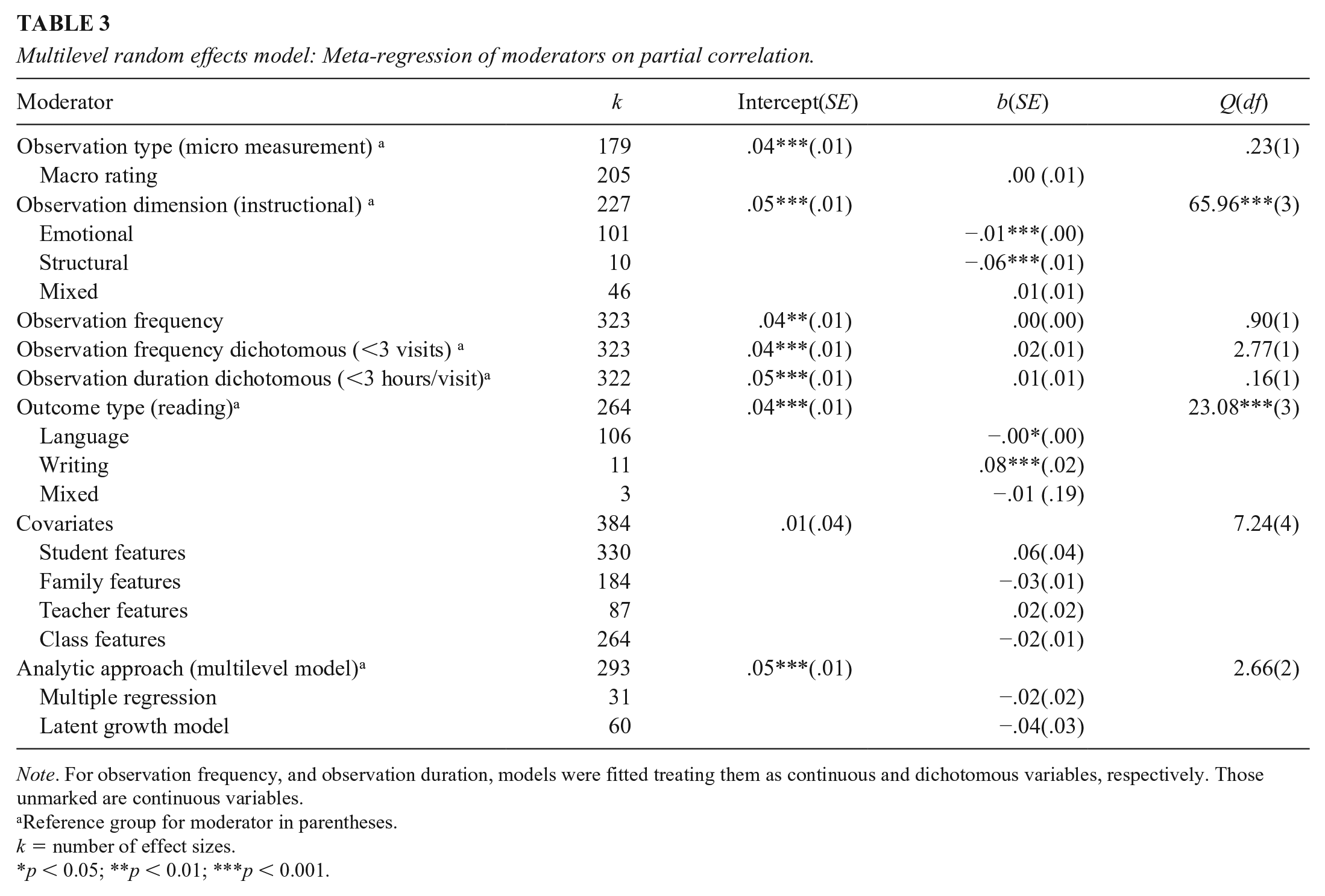

Given the substantial heterogeneity among effect sizes, we conducted moderation analyses for grade level, observation type and dimension, observation frequency and duration, and child language and literacy outcomes (Tables 1 and 2). Many studies reporting partial correlations had already controlled for grade level, so we did not conduct a moderation analysis of grade level for this set of studies (see Table 3).

Multilevel random effects model: Meta-regression of moderators controlling for grade level.

Multilevel random effects model: Meta-regression of moderators on partial correlation.

Reference group for moderator in parentheses.

Grade Level

We first treated grade level as a continuous variable in the moderation analysis, using the (weighted) average grade level for aggregated effect sizes associated with multiple grades. Grade level significantly and negatively moderated the overall zero-order correlation between observed classroom practices and students’ language/literacy outcomes (

We then treated grade level as a dichotomous variable (pre-K–3rd grade versus 4th–6th grade). This split reflects the shift in instructional focus from learning to read in the lower grades to reading to learn beginning in grade 4. The relation was slightly weaker in the upper grades, but the difference was not statistically significant (
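A continuous moderator such as grade level enters the model as a predictor in a weighted meta-regression. The sketch below is a simplified, single-level weighted least squares version with τ² treated as known. It is not the two-level REML model used in the analysis. Three choices are assumptions for illustration: the numeric coding of pre-K as -1 and kindergarten as 0, weighting by sample size when averaging grades for aggregated effect sizes, and the function names.

```python
import math


def weighted_mean_grade(grades, sample_sizes):
    """Sample-size-weighted average grade for an effect size pooled over several grades
    (assumed coding: pre-K = -1, kindergarten = 0, grades 1-6 = 1-6)."""
    return sum(g * n for g, n in zip(grades, sample_sizes)) / sum(sample_sizes)


def is_upper_grade(grade):
    """Dichotomous coding used in the text: grades 4-6 versus pre-K to grade 3."""
    return grade >= 4


def meta_regression(yi, vi, xi, tau2=0.0):
    """Weighted least squares meta-regression of yi on one moderator xi.

    Weights are 1 / (vi + tau2), with tau2 treated as known (a simplification).
    Returns (intercept, slope, standard error of the slope).
    """
    w = [1.0 / (v + tau2) for v in vi]
    sw = sum(w)
    x_bar = sum(wi * x for wi, x in zip(w, xi)) / sw
    y_bar = sum(wi * y for wi, y in zip(w, yi)) / sw
    sxx = sum(wi * (x - x_bar) ** 2 for wi, x in zip(w, xi))
    sxy = sum(wi * (x - x_bar) * (y - y_bar) for wi, x, y in zip(w, xi, yi))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope, math.sqrt(1.0 / sxx)


# Hypothetical effects that decline linearly with grade
grades = [-1, 0, 1, 2, 3, 4, 5, 6]
yi = [0.20 - 0.01 * g for g in grades]
b0, b1, se_b1 = meta_regression(yi, [0.01] * len(grades), grades)
print(round(b0, 3), round(b1, 3))  # 0.2 -0.01
```

A negative slope corresponds to a relation that weakens as grade level increases.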

Observation Type and Dimension

As shown in Table 1, when using zero-order correlation data, we found a slightly stronger relation for the studies using macro-level observations with quality ratings (

Observation Frequency and Duration

There was no significant moderating effect of either observation frequency or duration (see Tables 1 and 3). We treated frequency first as a continuous and then as a dichotomous variable (whether or not the class was observed fewer than three times), and the overall zero-order correlation did not vary by the number of visits (continuous:

Child Language and Literacy Outcomes

Compared to reading skills (see Table 1), the zero-order correlation was weaker for mixed skills (

Covariates and Analytic Approach

Based on the nature of the covariates and the analytic approaches used in studies reporting partial correlations, we classified four types of covariates (student, family, teacher, and class features) and three types of analysis (multilevel model, multiple regression, and latent growth model). Neither covariate type nor analytic approach significantly moderated the overall partial correlation between observed classroom practices and students’ language/literacy outcomes (see Table 3).

Sensitivity Analysis

Prior to the substantive meta-analysis, we performed diagnostic tests to check the robustness of our analysis. These covered outliers, influential cases, publication bias, and potential threats from lower quality studies and small samples.

To identify the potential outliers and influential cases, we plotted the studentized residuals, Cook’s distances, and covariance ratios of our main model (i.e., overall zero-order correlation estimation). Four studies were consistently identified as unusual cases (see Figure S1). However, refitting the model without the four studies still led to essentially the same overall correlation:
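The diagnostics named above are not reproduced here. A simpler, complementary check is a leave-one-out analysis, which recomputes the pooled estimate with each effect size omitted in turn. The sketch below holds τ² fixed and uses hypothetical inputs. It therefore illustrates the idea rather than reproducing the reported diagnostics, and the function names are hypothetical.

```python
def pooled_estimate(yi, vi, tau2=0.0):
    """Inverse-variance weighted mean with weights 1 / (vi + tau2)."""
    w = [1.0 / (v + tau2) for v in vi]
    return sum(wi * y for wi, y in zip(w, yi)) / sum(w)


def leave_one_out(yi, vi, tau2=0.0):
    """Pooled estimate recomputed with each effect size omitted in turn (tau2 held fixed)."""
    yi, vi = list(yi), list(vi)
    return [
        pooled_estimate(yi[:i] + yi[i + 1:], vi[:i] + vi[i + 1:], tau2)
        for i in range(len(yi))
    ]


def largest_shift(yi, vi, tau2=0.0):
    """Index of the effect size whose removal moves the pooled estimate most, and that shift."""
    full = pooled_estimate(yi, vi, tau2)
    shifts = [abs(est - full) for est in leave_one_out(yi, vi, tau2)]
    worst = max(range(len(shifts)), key=lambda i: shifts[i])
    return worst, shifts[worst]


# Hypothetical effect sizes: the fourth is an obvious outlier
idx, shift = largest_shift([0.10, 0.12, 0.11, 0.60], [0.01] * 4)
print(idx, round(shift, 4))  # 3 0.1225
```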

To assess publication bias visually and statistically, we inspected a funnel plot and conducted Egger’s regression test (Sterne & Egger, 2006). The data points were largely symmetric, with a few missing on the left, especially near the bottom. This pattern suggests a lack of smaller effect sizes from studies with lower precision (see Figure S2). Likewise, Egger’s test indicated that the intercept deviated significantly from zero (
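Egger’s test regresses each study’s standardized effect (the effect divided by its standard error) on its precision (the inverse of the standard error). In the absence of small-study effects, the intercept should be near zero. The sketch below implements that classic ordinary least squares form with hypothetical inputs. It is not necessarily the variant the authors applied to their multilevel model; metafor’s regtest() offers several.

```python
import math


def egger_test(yi, sei):
    """Classic Egger regression: OLS of yi/sei on 1/sei; the intercept indexes asymmetry.

    Returns (intercept, its standard error, t statistic on k - 2 df).
    """
    k = len(yi)
    x = [1.0 / s for s in sei]                   # precision
    y = [e / s for e, s in zip(yi, sei)]         # standardized effect
    x_bar, y_bar = sum(x) / k, sum(y) / k
    sxx = sum((xv - x_bar) ** 2 for xv in x)
    slope = sum((xv - x_bar) * (yv - y_bar) for xv, yv in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    resid = [yv - (intercept + slope * xv) for xv, yv in zip(x, y)]
    s2 = sum(r ** 2 for r in resid) / (k - 2)    # residual variance
    se_int = math.sqrt(s2 * (1.0 / k + x_bar ** 2 / sxx))
    return intercept, se_int, intercept / se_int


# Hypothetical data built so the standardized effects equal 2 + 0.1 * precision + noise
precision = [1.0, 2.0, 3.0, 4.0, 5.0]
noise = [0.1, -0.1, 0.0, -0.1, 0.1]
sei = [1.0 / p for p in precision]
yi = [(2.0 + 0.1 * p + e) * s for p, e, s in zip(precision, noise, sei)]
b0, se_b0, t = egger_test(yi, sei)
print(round(b0, 3), round(t, 1))  # 2.0 16.5
```

An intercept far from zero indicates that less precise studies report systematically different effects, which is consistent with small-study effects such as publication bias.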

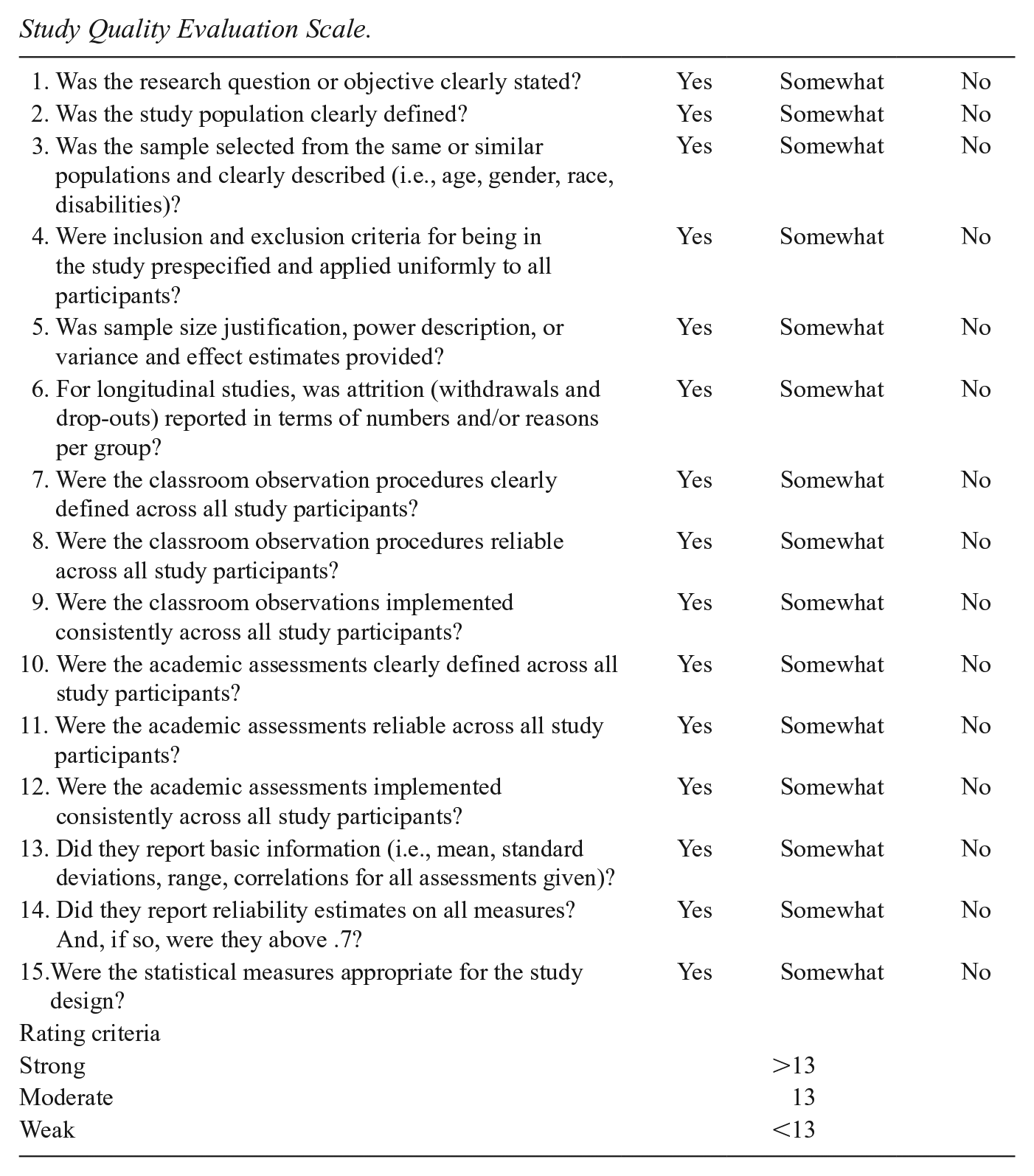

Moreover, we evaluated the quality of the included studies in terms of the research question, study population, classroom observation, outcome reporting, and statistical analysis. We rated the overall quality of each study as “strong,” “moderate,” or “weak” using the criteria shown in Appendix B (National Heart, Lung, and Blood Institute, n.d.). Comparing the models fitted with and without the effect sizes from “weak” studies revealed a nonsignificant difference (

Additionally, we used robust variance estimation (RVE) with small-sample corrections to account for both hierarchical effects (i.e., multiple studies nested within a larger cluster) and correlated effects (i.e., the same participant group providing multiple effect sizes). We ran RVE with the robumeta package (Version 2.0; Fisher et al., 2017). The RVE meta-analysis confirmed a weak but significant overall zero-order correlation,

We had 11 studies that reported partial correlations originally but provided zero-order correlations after we contacted the authors. For these studies, we refitted the main model (i.e., overall partial correlation estimation) by adding back these original partial correlations. The overall partial correlation was similar to the model results without these effect sizes,

In sum, our main estimate of the overall zero-order correlation between observed classroom practices and student language/literacy outcomes was robust to potential outliers/influential cases, study quality, and small-sample issues, although there was evidence of publication bias. Likewise, the main estimate of the partial correlation was not significantly affected by excluding the original partial correlations of studies that later provided zero-order correlations upon request.

Narrative Synthesis

A total of 28 studies (see online supplementary materials) were included in the narrative synthesis. Most of these studies reported weak positive partial correlations between classroom practices and students’ language/literacy outcomes (e.g., Gersten et al., 2010; Howes et al., 2008; Kwan et al., 1998). Some reported moderate to strong positive partial correlations (e.g., McCartney, 1984; McIntosh et al., 2007), and a few reported weak negative partial correlations (e.g., Crocker & Brooker, 1986; Howes et al., 2008). Consistent with our meta-analysis results, Crocker and Brooker (1986) reported that the relation between classroom practices and students’ achievement was weaker in higher grades. Furthermore, studies using macro-level observations with quality ratings tended to yield more stable positive relations (Connor et al., 2014; Kwan et al., 1998; McCartney, 1984; McIntosh et al., 2007; Pianta, Belsky, et al., 2008). In contrast, those using quantitative measurements of microlevel practices showed either negative or weaker positive relations (Crocker & Brooker, 1986; Gersten et al., 2010). Also in line with the meta-analysis results, the association between classroom practices and student outcomes was consistently stronger when the observation focused on the instructional dimension rather than on the emotional or structural dimensions (Crocker & Brooker, 1986; Guo, Justice, et al., 2012; Howes et al., 2008).

In addition, these studies suggested several notable moderation effects. First, the direct and indirect relations between classroom practices and student achievement might differ by the language/literacy outcome of interest (Baroody & Diamond, 2016). For example, Kwan and colleagues (1998) found that child care center quality was related to students’ verbal fluency but not to word reading. McCartney (1984) reported that the relation was stronger for mixed measures incorporating both reading and oral language components than for measures of oral language alone.

Second, observation types or dimensions interacted in predicting students’ language/literacy outcomes (Connor et al., 2014; Guo, Justice, et al., 2012; Pianta, Belsky, et al., 2008). Students’ gains in vocabulary and comprehension were greater when teachers not only provided a high-quality classroom learning environment but also spent more time on meaning-focused instruction in small groups (Connor et al., 2014). Similarly, the classroom physical literacy environment (i.e., presence of writing materials) was positively related to children’s growth in alphabet knowledge and name-writing ability only in high-quality, instructionally supportive classrooms (Guo, Justice, et al., 2012). Moreover, the negative relation between the amount of time spent on reading instruction and improvement in reading was mitigated in classrooms with higher levels of emotional support (Pianta, Belsky, et al., 2008).

Third, student characteristics moderated the relation between classroom quality and student achievement (Gosse et al., 2014; Vitiello et al., 2012). The relation between instructional support and language development was stronger for children with higher initial language skills (Gosse et al., 2014). In addition, high emotional support was more positively associated with gains in language/literacy for children who were resilient than for those who were overcontrolled (Vitiello et al., 2012).

Lastly, teachers and peers also influenced the association between classroom practices and student achievement (Guo et al., 2011; Mashburn et al., 2009). For example, Guo and colleagues (2011) demonstrated that teachers’ sense of collegiality (i.e., collaboration among teachers within schools, implying shared responsibility and commitment to common educational goals), in combination with higher language and literacy instructional quality, predicted greater gains in children’s vocabulary scores. Similarly, Mashburn and colleagues (2009) found that in better-managed classrooms, peers’ expressive skills were more strongly related to children’s growth in receptive language.

Discussion

The purpose of this study was to summarize and characterize the relation between observed classroom practices and children’s language/literacy achievement. Because of the variety of the models and the analytic approaches employed in the reviewed studies, we analyzed both zero-order and partial correlations and narratively synthesized studies containing interaction terms or indirect effects. This meta-analysis did not focus on the relation to student academic

First and foremost, consistent with our hypotheses (a weak association between classroom observation and student language/literacy outcomes, and a stronger association for younger than for older students; Burchinal et al., 2011), we found that the observed classroom practices were significantly but weakly associated with the language/literacy outcomes of students from pre-K to sixth grade (

Several explanations for the overall weak association are possible. As suggested by Perlman et al. (2016), the weak association could reflect the non-negligible influence of family and other factors. Indeed, our pooled partial correlation, which was smaller than the pooled zero-order correlation and showed moderate heterogeneity, implies that beyond teacher and child classroom behaviors and interactions, a multitude of student, family, teacher, and classroom features may predict teaching and learning outcomes. Our narrative review likewise identified multiple moderation effects among observation types, dimensions, and individual characteristics, indicating that the relation between classroom quality and students’ gains in language/literacy is a function of a complex set of factors. The mismatch in measurement units between classroom observation and student performance should also be noted: most studies reported observation results at the classroom level, not at the child level. Because students differ in the extent to which they engage in and learn from the same instruction, classroom-level observation results are not precise estimates of individual students’ learning.

Another mismatch lies between the scope and specificity of classroom observations and those of the tested constructs. Researchers have pointed out validity issues inherent in current observation instruments, most of which were developed by child development experts on the basis of conceptual rather than psychometric considerations. Consequently, although these instruments capture a broad context (i.e., general interpersonal interaction and environmental/structural provisions), they lack a focus on cognitive and academic skills (Burchinal et al., 2011). In line with Connor’s (2013, p. 4) statement that “observation tools are most useful when developed to serve a particular purpose and are put to that purpose,” observation focused on a particular dimension might yield a stronger relation to its target academic outcome (e.g., the relation between instructional quality or time spent on spelling and the corresponding spelling outcome). Ulferts and colleagues (2019) found that instruments measuring teacher–child interactions outperformed those measuring material–spatial surroundings (which are more a precondition for quality teaching) in capturing what matters for student learning. However, other findings show stronger positive associations with student outcomes for ECERS/ECERS-R global scores than for its subscale scores (e.g., teaching and interactions, provisions for learning; Brunsek et al., 2017). In sum, efforts to improve observation validity are warranted, and the tradeoff between broadly and narrowly focused observation scopes deserves careful consideration.

In line with the above findings and our hypothesis that the relation would vary by features of the observation and the assessed skills (Brunsek et al., 2017; Keys et al., 2013; Perlman et al., 2016; Ulferts et al., 2019), our results from both zero-order and partial correlation data showed a stronger relation between observed classroom practices and student language/literacy outcomes for studies capturing the instructional dimension (quantity and quality of literacy content delivery, cognitive explanation and monitoring, stimulation and feedback) than for those capturing the emotional dimension (classroom climate and organization, praise and discipline, behavior management, sensitivity/responsivity/detachment/disengagement) or the structural dimension (classroom physical environment, book category, writing materials category). These findings suggest that, for students’ academic outcomes, variation in the instructional dimension matters more than variation in the emotional and structural dimensions. Despite the statistically significant moderation effects, these findings should be interpreted with caution because the differences in magnitude were small and the relevant measures varied in reliability (see instrument reliability and observer reliability coefficients in Table S4).
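A moderation test of this kind asks whether pooled effects differ across subgroups of studies (e.g., instructional vs. emotional dimension). One simple way to see the logic is the fixed-effect Q-between test on Fisher's z scale, sketched below. The main analysis may well have used a different model (e.g., random-effects meta-regression), so this is an illustrative assumption rather than the authors' method; the function name `subgroup_q_between`, the subgroup labels, and the (r, n) values are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def subgroup_q_between(groups: Dict[str, List[Tuple[float, int]]]) -> Tuple[float, int]:
    """Fixed-effect Q-between statistic for subgroup differences in pooled Fisher z.

    `groups` maps a subgroup label to its studies' (r, n) pairs (n > 3).
    Returns (Q_between, df); under the null of equal subgroup means,
    Q_between is approximately chi-square distributed with df = k - 1.
    """
    means: List[float] = []
    totals: List[float] = []
    for studies in groups.values():
        z = [math.atanh(r) for r, _ in studies]
        w = [n - 3.0 for _, n in studies]  # inverse of the Fisher z variance 1 / (n - 3)
        w_sum = sum(w)
        means.append(sum(wi * zi for wi, zi in zip(w, z)) / w_sum)
        totals.append(w_sum)

    # Weighted grand mean across subgroups, then weighted squared deviations from it
    grand = sum(w * m for w, m in zip(totals, means)) / sum(totals)
    q_between = sum(w * (m - grand) ** 2 for w, m in zip(totals, means))
    return q_between, len(groups) - 1


if __name__ == "__main__":
    # Hypothetical subgroups for illustration only; not data from this review
    demo = {
        "instructional": [(0.20, 150), (0.16, 90)],
        "emotional": [(0.08, 140), (0.11, 110)],
    }
    q, df = subgroup_q_between(demo)
    # With df = 1, Q_between > 3.84 corresponds to p < .05
    print(f"Q_between = {q:.2f}, df = {df}")
```

Because large pooled samples shrink standard errors, a statistically significant Q-between can coexist with a small difference in magnitude, which is why the caution above about small differences matters.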

In addition, the type of student outcome (language, reading, or writing) showed consistently significant moderating effects in both sets of analyses, but the patterns of results differed between zero-order and partial correlations. Among zero-order correlations, a larger effect size was associated with comprehensive tests (assessing mixed skills), whereas among partial correlations, a larger effect size was associated with writing tests. Because the two sets of analyses drew on different samples and used diverse observation measures and child language and literacy outcomes, they are not directly comparable; nonetheless, the overall findings underscore that the relation is contingent on both the observation dimension and the child outcome.

Our meta-analysis did not show that the relation varied by observation frequency and duration, type of covariates, or analytic approach, although the latter two had significant moderating effects in Ulferts and colleagues’ (2019) study. There are a couple of possible explanations. First, observation frequency and duration were similar and limited across the reviewed studies (most researchers observed two or three times in total, for 2 to 3 hours per visit, although a few extreme values extended the overall ranges), so their moderating effects may have been underestimated. Second, covariates varied greatly across studies, and our broad classification of them into four types might have masked important factors such as baseline skills and teacher education level. More consistent approaches across studies would help establish how consistent the findings are.

Limitations and Future Directions

There are several limitations of this study. First, although this review aimed to investigate the relation between observed classroom practices and students’ language/literacy outcomes from pre-K to sixth grade, more than half of the studies meeting our criteria focused on the lower grades (pre-K to third grade). Therefore, the generalization of our results to the upper grades is more limited, and more classroom research is needed beyond the early primary grades. Second, as mentioned earlier, the complexity and variability of the observation instruments and child outcomes required a relatively broad classification that may have obscured some findings. For example, we classified observational instruments according to their evaluation approaches and instructional content, which only allowed us to test global differences among classroom observations. Another caveat is that relatively few studies assessed writing and mixed skills compared with reading skills, although we detected differential relations between studies assessing reading and those assessing mixed or writing skills. Third, many studies did not report information on potential moderators such as teachers’ years of teaching, education level, class size, students’ learning disability status, and SES (see Table S3), so we could not explore whether relations differ by these features. Fourth, the pooled zero-order and partial correlations were generated from independent samples, so they are not directly comparable, and our comparative findings from zero-order versus partial correlations should be interpreted with caution. In addition, for studies that provided both zero-order and partial correlations, we prioritized zero-order correlations in the data analysis phase.
We recognize that a zero-order correlation does not capture the impact of teaching on students’ academic growth over the school year because it does not account for students’ prior skill levels. Finally, this study cannot draw any conclusion about whether the instruments capture the latent construct of teaching or teaching practices. This is an important validity question that should be addressed in the original studies. Meta-analysis is a powerful tool, but it relies on the quality of the original studies it includes, and many of the studies included in this review did not report validity information. Future reviews may need to further account for the validity and reliability of observation instruments.
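The contrast between zero-order and partial correlations can be made concrete with the standard first-order partial correlation formula, taking X as an observation score, Y as an end-of-year language/literacy outcome, and Z as students' prior skill. The included studies may have derived partial correlations from models with several covariates, so this single-covariate case is only an illustration, and the numeric values in the second line are hypothetical:

```latex
r_{XY \cdot Z} = \frac{r_{XY} - r_{XZ}\, r_{YZ}}{\sqrt{\left(1 - r_{XZ}^{2}\right)\left(1 - r_{YZ}^{2}\right)}}

% Hypothetical values: r_{XY} = .20, r_{XZ} = .30, r_{YZ} = .60
r_{XY \cdot Z} = \frac{0.20 - (0.30)(0.60)}{\sqrt{(1 - 0.09)(1 - 0.36)}} = \frac{0.02}{\sqrt{0.5824}} \approx 0.026
```

When prior skill is correlated with both the observation score and the outcome, much of the zero-order association can disappear once prior skill is held constant, which is one reason partial correlations can be considerably smaller than zero-order ones.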

Conclusion

This study adds to our understanding of the relation between observed classroom practices and language/literacy development for elementary students. We found a significant but weak association on average, and the study brought to light several challenges in synthesizing diverse classroom research: extant observation instruments differ in their observational scope, dimensions, and purposes. The present findings indicate that classroom observations provide only a limited picture of students’ language and literacy skills. This does not diminish the importance of attending to teaching practices in classrooms. Rather, the results point to the need for a comprehensive picture of the factors that influence students’ language and literacy skills, including student factors (e.g., traits and behaviors), language and literacy environments and resources in the home and community, and classroom instruction.

Classroom instruction is a complex construct that requires a highly reliable and valid observation system capable of capturing that complexity and its relation to students’ language and literacy development. In addition, we highlight the need for more consistent measurement approaches across studies so that the field can develop a clearer picture of the relation between classroom practices and student achievement.

Supplemental Material

sj-doc-1-rer-10.3102_00346543221130687 – Supplemental material for Are Observed Classroom Practices Related to Student Language/Literacy Achievement?

Supplemental material, sj-doc-1-rer-10.3102_00346543221130687 for Are Observed Classroom Practices Related to Student Language/Literacy Achievement? by Yucheng Cao, Young-Suk Grace Kim and Minkyung Cho in Review of Educational Research

Footnotes

Appendix A

Appendix B

Study Quality Evaluation Scale.

| Question | Response options | | |
|---|---|---|---|
| 1. Was the research question or objective clearly stated? | Yes | Somewhat | No |
| 2. Was the study population clearly defined? | Yes | Somewhat | No |
| 3. Was the sample selected from the same or similar populations and clearly described (i.e., age, gender, race, disabilities)? | Yes | Somewhat | No |
| 4. Were inclusion and exclusion criteria for being in the study prespecified and applied uniformly to all participants? | Yes | Somewhat | No |
| 5. Was sample size justification, power description, or variance and effect estimates provided? | Yes | Somewhat | No |
| 6. For longitudinal studies, was attrition (withdrawals and drop-outs) reported in terms of numbers and/or reasons per group? | Yes | Somewhat | No |
| 7. Were the classroom observation procedures clearly defined across all study participants? | Yes | Somewhat | No |
| 8. Were the classroom observation procedures reliable across all study participants? | Yes | Somewhat | No |
| 9. Were the classroom observations implemented consistently across all study participants? | Yes | Somewhat | No |
| 10. Were the academic assessments clearly defined across all study participants? | Yes | Somewhat | No |
| 11. Were the academic assessments reliable across all study participants? | Yes | Somewhat | No |
| 12. Were the academic assessments implemented consistently across all study participants? | Yes | Somewhat | No |
| 13. Did they report basic information (i.e., mean, standard deviations, range, correlations for all assessments given)? | Yes | Somewhat | No |
| 14. Did they report reliability estimates on all measures? And, if so, were they above .7? | Yes | Somewhat | No |
| 15. Were the statistical measures appropriate for the study design? | Yes | Somewhat | No |

Rating criteria:

| Rating | Criterion |
|---|---|
| Strong | >13 |
| Moderate | 13 |
| Weak | <13 |
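Appendix B gives cut-offs but not the point value of each response. The sketch below shows one way the scale could be scored, under the explicit assumption that Yes = 1, Somewhat = 0.5, and No = 0 (so totals range from 0 to 15) and that every item receives a response. The helper `quality_rating` and these point values are hypothetical, not the authors' documented procedure.

```python
from typing import Dict, Sequence

# ASSUMPTION: hypothetical point values; Appendix B does not state how responses are scored.
DEFAULT_POINTS: Dict[str, float] = {"Yes": 1.0, "Somewhat": 0.5, "No": 0.0}
N_ITEMS = 15  # the scale lists 15 questions


def quality_rating(responses: Sequence[str], points: Dict[str, float] = DEFAULT_POINTS) -> str:
    """Sum item points and apply the Appendix B cut-offs (>13, 13, <13)."""
    if len(responses) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} responses, got {len(responses)}")
    total = sum(points[response] for response in responses)  # KeyError on unknown labels
    if total > 13:
        return "Strong"
    if total == 13:
        return "Moderate"
    return "Weak"


if __name__ == "__main__":
    # Hypothetical study: 12 Yes, 2 Somewhat, 1 No -> total 13 under the assumed points
    example = ["Yes"] * 12 + ["Somewhat"] * 2 + ["No"]
    print(quality_rating(example))
```

Under these assumed points the Moderate band is a single value, so a total of 12.5 would be rated Weak and 13.5 Strong; if the actual point values differ, only `DEFAULT_POINTS` needs to change.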

Note

This research was partially supported by grants from the Institute of Education Sciences (IES), US Department of Education, R305A170113, R305A180055, and R305A200312 to the second author. The content is solely the responsibility of the authors and does not necessarily represent the official views of the funding agencies.

Authors

YUCHENG CAO is a postdoctoral researcher in the School of Education at University of California, Irvine; 3200 Education Bldg, Irvine, CA 92697;

YOUNG-SUK GRACE KIM, Ed.D. is a professor in the School of Education at University of California, Irvine; 3200 Education Bldg, Irvine, CA 92697;

MINKYUNG CHO is a Ph.D. candidate in the School of Education at University of California, Irvine; 3200 Education Bldg, Irvine, CA 92697;

References
