Abstract

This article presents a thematic analysis of the research evidence on assessment feedback in higher education (HE) from 2000 to 2012. The focus of the review is on the feedback that students receive within their coursework from multiple sources. The aims of this study are to (a) examine the nature of assessment feedback in HE through the undertaking of a systematic review of the literature, (b) identify and discuss dominant themes and discourses and consider gaps within the research literature, (c) explore the notion of the feedback gap in relation to the conceptual development of the assessment feedback field in HE, and (d) discuss implications for future research and practice. From this comprehensive review of the literature, the concept of the feedback landscape, informed by sociocultural and socio-critical perspectives, is developed and presented as a valuable framework for moving the research agenda into assessment feedback in HE forward.

A focus on assessment feedback from a higher education (HE) perspective is pertinent given the debates on enhancing student access, retention, completion, and satisfaction within college and university contexts (Eckel & King, 2004). This article will provide a timely and thorough critique of the latest developments within the assessment feedback field. The focus of the review is on the feedback that students receive within their coursework from multiple sources. In the introduction, feedback is defined and the HE context articulated. This is followed by an analysis of 460 articles on assessment feedback in HE produced in the past 12 years. Key themes and dominant discourses are explored, including an examination of the feedback gap. Relevant theoretical perspectives are drawn upon and extended to conceptualize the feedback landscape in order to provide a valuable framework for considering the issues and processes implicit in implementing effective assessment feedback designs within HE contexts and to facilitate future research agendas in assessment feedback in HE.

Defining Assessment Feedback

There is no generally agreed definition of assessment, and few studies have systematically investigated the meaning of assessment feedback. As noted by Clark (2011), for some, assessment is a kind of measurement instrument (Quality Assurance Agency, 2011), whereas for others, assessment feedback is an integral part of assessment (Angelo, 1995). In this review, the term assessment feedback is used as an umbrella concept to capture the diversity of definitions and types of feedback commented on in the literature to include the varied roles, types, foci, meanings, and functions of feedback, along with the conceptual frameworks underpinning feedback principles. Assessment feedback therefore includes all feedback exchanges generated within assessment design, occurring within and beyond the immediate learning context, being overt or covert (actively and/or passively sought and/or received), and importantly, drawing from a range of sources.

In navigating the literature, it is important to acknowledge different conceptions of assessment feedback. For some, it is seen as an end product, as a consequence of performance: “information provided by an agent (e.g., teacher, peer, book, parent, self, experience) regarding aspects of one’s performance or understanding” (Hattie & Timperley, 2007, p. 81). For others, assessment feedback is seen as an integral part of learning (Cramp, 2011) and as a “supported sequential process rather than a series of unrelated events” (Archer, 2010, p. 101). The conceptualization of feedback as part of an ongoing process to support learning both in the immediate context of HE and in future learning gains into employment beyond HE is captured in the terms feed-forward and feed-up, respectively (Hounsell, McCune, Hounsell, & Litjens, 2008).

Functionally, building on the work of Ramaprasad (1983) and Sadler (1989), the aim of feedback is to enable the gap between the actual level of performance and the desired learning goal to be bridged (Lizzio & Wilson, 2008). Significantly, for many, however, it is only feedback if it alters the gap and has an impact on learning (Draper, 2009; Wiliam, 2011). Feedback can have different functions depending on the learning environment, the needs of the learner, the purpose of the task, and the particular feedback paradigm adopted (Knight & Yorke, 2003; Poulos & Mahony, 2008). Many distinguish between a cognitivist and a socio-constructivist view of feedback, with much emphasis currently being placed on the latter framework. The cognitivist perspective is closely associated with a directive telling approach where feedback is seen as corrective, with an expert providing information to the passive recipient.

Alternatively, within the socio-constructivist paradigm, feedback is seen as facilitative in that it involves provision of comments and suggestions to enable students to make their own revisions and, through dialogue, helps students to gain new understandings without dictating what those understandings will be (Archer, 2010). Developing this further, a co-constructivist perspective emphasizes the dynamic nature of learning where the lecturer also learns from the student through dialogue and participation in shared experiences (Carless, Salter, Yang, & Lam, 2011). In such situations, interactions between participants in learning communities lead to shared understandings as part of the development of communities of practice (Wenger, McDermott, & Snyder, 2002), with the student taking increased responsibility for seeking out and acting on feedback. The complexity of networks can be challenging for students and lecturers in the giving, taking, and adapting of feedback from one learning community to the next.

The cognitivist and constructivist feedback perspectives are not mutually exclusive. They should be seen as reinforcing rather than as opposite ends of a continuum when considering the precise nature and emphasis of feedback to support task, individual, and contextual needs. In considering the level of attunement of feedback to individual needs, the emphasis in the literature is on feedback as a corrective tool, whereas it should also be seen as a challenge tool, where the learners clearly understand very well and the feedback is an attempt to extend and refine their understandings.

A number of researchers have sought to deconstruct feedback in attempts to highlight the main purposes of it. For example, Hattie and Timperley (2007), building on the work of Hannafin, Hannafin, and Dalton (1993), differentiated between four types of feedback (task, process, self-regulation, and self), which they argue have variable impacts on student learning gains. In defining these terms, task feedback is seen as emphasizing information and activities with the purpose of clarifying and reinforcing aspects of the learning task, process feedback focuses on what a student can do to proceed with a learning task, self-regulation feedback focuses on metacognitive elements including how a student can monitor and evaluate the strategies he or she uses, and self-feedback focuses on personal attributes, for example, how well the student has done. Building on these types, Nelson and Schunn (2009) identified three broad meanings of assessment feedback: (a) motivational—influencing beliefs and willingness to participate; (b) reinforcement—to reward or to punish specific behaviors; and (c) informational—to change performance in a particular direction. They drew attention to the importance of being able to demonstrate acquired knowledge through transfer of learning to new contexts. In considering these frameworks, it is important to acknowledge that feedback usually comprises an amalgamation of these elements, the precise balance of which is likely to be variable and also differentially received by students. It is important that these constructs be seen as integrated dimensions in the process of giving and receiving feedback rather than separate dimensions.

The Higher Education Context

There is a substantial and growing body of research in HE contexts considering feedback and its importance in student learning. Feedback is seen as a crucial way to facilitate students’ development as independent learners who are able to monitor, evaluate, and regulate their own learning, allowing them to feed-up and beyond graduation into professional practice (Ferguson, 2011). The potential impact of feedback on future practice and the development of students’ identity as learners were highlighted by Eraut (2006): When students enter higher education . . . the type of feedback they then receive, intentionally or unintentionally, will play an important part in shaping their learning futures. Hence we need to know much more about how their learning, indeed their very sense of professional identity, is shaped by the nature of the feedback they receive. We need more feedback on feedback. (p. 118)

Although there is a large amount of evidence supporting the usefulness of feedback to promote student learning, it is also evident that feedback alone is not sufficient to improve outcomes (Lew, Alwis, & Schmidt, 2010). Enhancing the quality of feedback to students needs to be considered against the backdrop of the massification and consumerization of HE in the 21st century with increasing numbers and a more diverse student body than ever before (Hunt & Tierney, 2006).

In spite of claims about the power of feedback to produce positive learning effects (Black & Wiliam, 1998; Hattie & Timperley, 2007), within the HE context, there are concerns regarding the perceived lack of impact of feedback on practice (Perera, Lee, Win, Perera, & Wijesuriya, 2008). Evidence of progress in improving feedback practices is seen to be lacking (Orrell, 2006), conflicting, and inconsistent (Shute, 2008). However, others note significant progress in the field, with student feedback becoming an increasingly central aspect of HE’s learning and teaching strategies (Maringe, 2010; S. Brown, 2010). A new culture of assessment within HE has been identified, with evidence of peer assessment being used to promote student self-regulatory practice (Cartney, 2010; Nicol, 2010; Rust, 2007).

At the same time, there are claims that higher education institutions have not been as mindful as they might of the emerging findings from schools in order to enhance assessment feedback (Kluger & DeNisi, 1996). Black and McCormick (2010) contended that in HE, a greater focus should be on oral as opposed to written feedback, that greater explication is needed on strategies to enhance independence in learning, and that greater harmony is needed between formative and summative assessment. Furthermore, it has been argued that although student-centered approaches to learning have led to shifts in conceptions of teaching and learning within HE, “a parallel shift in relation to formative assessment and feedback has been slower to emerge” (Nicol & MacFarlane-Dick, 2006, p. 200) and that “approaches to feedback have, until recently, remained obstinately focused on simple ‘transmission’ perspectives” (Nicol & MacFarlane-Dick, 2004, p. 1) underpinned by narrow conceptions of the purposes of feedback (Beaumont, O’Doherty, & Shannon, 2011; Maringe, 2010).

Research identifying the type of feedback that works in HE is not firmly based on substantive evidence, and there is little systematic empirical evidence on what type of feedback is best for what situations and contexts (Mutch, 2003). Concerns have been raised about the paucity and quality of the empirical research base (Case, 2007; Walker, 2009) and a lack of consistency in patterns of results (Carillo-de-la-Pena, Casereas, Martinez, Ortet, & Perez, 2009; Shute, 2008). Kluger and DeNisi (1996) wondered whether the questions about what assessment feedback works and what does not are answerable or if they are even the right ones to ask, with Sadler (2010) commenting, “There remain many things that are not known about how best to design assessment events that lead to improved learning for students in higher education” (p. 547).

Student and lecturer dissatisfaction with feedback is well reported. From the student perspective, most complaints focus on the technicalities of feedback, including content, organization of assessment activities, timing, and lack of clarity about requirements (Higgins, Hartley, & Skelton, 2001; Huxham, 2007), and from the lecturer perspective, the issues revolve around students not making use of or acting on feedback; both perspectives lead to a feedback gap. This feedback gap will be explored to contextualize and assist in understanding the mixed findings on the power of feedback to influence learning (Higgins et al., 2002; Lew et al., 2010).

Aims of the Study

The aims of this study are to (a) examine the nature of assessment feedback in HE through the undertaking of a systematic review of the literature, (b) identify and discuss dominant themes and discourses and consider gaps within the research literature, (c) explore the notion of the feedback gap in relation to the conceptual development of the assessment feedback field in HE, and (d) discuss implications for future research and practice. There have been several systematic reviews of the assessment feedback literature focusing on specific aspects of feedback and specific methodologies within a range of learning contexts (school, higher education, and workplace). Specific foci include (a) task feedback (Shute, 2008), (b) peer feedback (Falchikov & Goldfinch, 2000; Topping, 1998; Van Zundert, Sluijsmans, & Van Merriënboer, 2010), (c) the relationship between feedback and performance (Hattie & Timperley, 2007), and (d) feedback interventions with a focus on performance information (Kluger & DeNisi, 1996).

In the majority of these studies, with the exception of Topping’s (1998) work, researchers have conducted meta-analyses focusing on experimental studies. The review reported here is significant in its adoption of a much wider research lens in order to comprehensively explore the nature of assessment feedback within the specific and current contexts of HE where previous reports are lacking (Hounsell, 2011). I do this by examining all types of feedback and research methodologies to enable the reader to have a more complete overview of the nature of work currently being undertaken within the HE context and the issues associated with this work.

Method

A systematic and extensive literature search focusing on assessment feedback within higher education was undertaken using five international online databases: (a) the Education Resources Information Center (ERIC), (b) Education Research Complete (ERC), (c) the ISI Web of Knowledge (ISI), (d) the International Bibliography of Social Sciences (IBSS), and (e) the American Psychological Association’s largest database (PsycINFO). The search process was carried out in two phases over a period of 1 year. In Phase 1, to be included in the review, the keywords assessment, feedback, and higher education needed to be evident in the abstract. The initial search was confined to empirical or theoretical articles written in English and published in academic peer-reviewed journals between January 2000 and May 2011. The automated advanced search of each of the five databases was conducted using increasingly refined search criteria to identify potentially relevant studies for use in the review. A broad and varied definition of assessment feedback was adopted to include different types of feedback, including feed-forward and feed-up, from different sources, with different foci and time scales, and from different theoretical positions. The inclusion criteria were as follows: (a) predominantly about assessment feedback, (b) focused on student and/or lecturer perspectives on assessment feedback, and (c) directly related to higher education contexts.

The five databases initially yielded 1,131 possible articles. Further screening of abstracts and full articles resulted in 267 articles being selected for further scrutiny: ERC (n = 1), PsycINFO (n = 38), IBSS (n = 4), ISI (n = 24), and ERIC (n = 200). The reading and rereading of complete articles led to the rejection of 27, leaving a total of 240 articles. Articles were excluded if the central focus was not on higher education or if the focus was more broadly on assessment rather than on assessment feedback. Thirty additional articles were identified using the snowball method, raising the total number of articles considered to 270. The snowball method, frequently employed in systematic reviews, enabled consideration of references that key articles had drawn upon, some of which predated 2000 (Greenhalgh & Peacock, 2005).

Thirty of these selected articles (11% of 270) were related to four specific higher education research projects: the (a) Formative Assessment in Science Teaching (FAST) project (2002–2005; Gibbs & Simpson, 2004), (b) Re-engineering Assessment Practices (REAP) project (2005–2007; Nicol & MacFarlane-Dick, 2006), (c) Engaging Students with Assessment Feedback project (2005–2008; Handley, Price, & Millar, 2008), and (d) Learning-Oriented Assessment (LOAP) project (2002–2005; Carless, Joughin, & Mok, 2006). Further scrutiny of these four projects and related works produced an additional 21 articles, resulting in the detailed analysis of 291 articles.

In Phase 2, a repeated and extended search was undertaken to take into account potential bias due to the keywords used in Phase 1. Initial analysis of the data identified that certain literature was excluded by the use of the term higher education. To address this limitation, additional keywords related to higher education were added, including college, postsecondary, and university. The review period was also extended to include January 2000 to the end of January 2012. In this second phase, a potential further 3,043 abstracts were identified. Detailed analysis using inclusion and exclusion criteria and protocols already established (reading and rereading of articles) and cross-checking with existing articles resulted in the selection of an additional 169 articles from the databases: ERC (n = 35), PsycINFO (n = 6), IBSS (n = 15), ISI (n = 35), and ERIC (n = 78). Thus, 460 articles were used in this review.
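The two-phase selection described above can be retraced as simple arithmetic. The following is a minimal sketch that only restates the counts reported in this section; the function and key names are illustrative assumptions and do not come from the original study.

```python
# Per-database counts as reported in the Method section.
phase1_screened = {"ERC": 1, "PsycINFO": 38, "IBSS": 4, "ISI": 24, "ERIC": 200}
phase2_selected = {"ERC": 35, "PsycINFO": 6, "IBSS": 15, "ISI": 35, "ERIC": 78}

# Adjustments reported in the text (Phase 1 only).
REJECTED_AFTER_FULL_READ = 27   # articles rejected after reading and rereading
ADDED_BY_SNOWBALL = 30          # articles found through the snowball method
ADDED_FROM_PROJECTS = 21        # articles from the four HE research projects


def tally_review_corpus(phase1, phase2):
    """Return the running article total at each reported step.

    The step names are hypothetical labels chosen for this sketch.
    """
    steps = {}
    steps["phase1_screened"] = sum(phase1.values())
    steps["after_full_read"] = steps["phase1_screened"] - REJECTED_AFTER_FULL_READ
    steps["after_snowball"] = steps["after_full_read"] + ADDED_BY_SNOWBALL
    steps["after_projects"] = steps["after_snowball"] + ADDED_FROM_PROJECTS
    steps["phase2_selected"] = sum(phase2.values())
    steps["final_corpus"] = steps["after_projects"] + steps["phase2_selected"]
    return steps


steps = tally_review_corpus(phase1_screened, phase2_selected)
for name, count in steps.items():
    print(f"{name}: {count}")
```

Run as written, the running totals match the figures given in the text: 267, 240, 270, 291, 169, and a final corpus of 460 articles.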

Analysis yielded descriptive information on journal type, country of origin of lead author, focus of study on student and/or lecturer perspectives, and the balance of empirical versus theoretical articles. The empirical studies within the data set were classified according to their methodologies, that is, whether the studies reported were experimental (control and experimental groups) or quasi-experimental (participants were not randomly assigned to the conditions). Samples were also categorized in terms of size, discipline, and student level (e.g., undergraduate vs. postgraduate). The length of studies was also noted along with the nature of the interventions. The data were entered into an SPSS Version 18 database. The SPSS data underwent screening and multiple checks to ensure accuracy in the entering and recording of data. To analyze the content of the articles, a thematic review of the literature was undertaken. This involved careful reading and rereading of the full articles (on at least two occasions) and integration of the findings (Slavin, 1986). The selected literature was subjected to in-depth thematic analysis (Braun & Clarke, 2006) to identify key themes covered within the literature.

Results and Discussion

Characteristic Features of the Research

Origin of articles

Approximately 42% of articles were written by lead authors from the United Kingdom, followed by the United States (23%), Australia (10%), the Netherlands (5%), Hong Kong (2.5%), and Taiwan (2%). New Zealand, Canada, Belgium, and Spain each contributed 1% of articles. The relatively lower percentage of U.S.-authored papers compared to the United Kingdom may reflect Clark’s (2011) concern that within the U.S. context, “FA [formative assessment] practices lack the statistical reliability expected of assessment practices in the US at the current time” (p. 165). Alternatively, the dominance of U.K. writers may reflect the influence of the English National Student Survey (introduced in 2003) and its subsequent impact on assessment policies within the landscape of increased competition for student places (recruitment) and accountability (retention, progression), as well as support from agencies such as the Higher Education Academy.

Perspectives

There is a lack of work addressing feedback from the lecturer perspective (Topping, 2010; Yorke, 2003) and postgraduate perspective (Scott et al., 2011). Approximately one third of articles consider the perspectives of both lecturer and student; 57% of articles (n = 255) were focused on the student context and 7.1% focused on the lecturer perspective. Only 19% of studies considered the postgraduate experience compared to 69% (n = 273) focused on undergraduate populations; 11% considered both under- and postgraduates. The role of feedback in addressing student performance and retention is a key focus of the articles focusing on undergraduate perspectives. The assumption within the literature that postgraduate students have fewer problems in negotiating learning environments is questionable. Indeed, at higher academic levels students may have more problems given that unlearning may be necessary to move forward. Scott et al. (2011) have suggested that those returning to education from workplace environments may face considerable difficulties in accessing discourses within HE.

The nature and design of research

The majority of articles were case studies. There were 380 empirical articles and 80 theoretical articles. Of the 380 empirical articles, 12.6% (n = 48) used experimental designs and a further 5.3% used quasi-experimental designs, a similar ratio to that found in the SRAFTE review (Gilbert, Whitelock, & Gale, 2011). The lack of true experimental designs and dominance of small-scale case studies is not necessarily problematic as elaborated on by Yorke (2003): Quantitative, quasi-experimental, research methods are difficult to employ satisfactorily when educational settings vary, often quite considerably. Qualitative (often action) research has a particular power to produce evidence that stimulates deeper reflection and—if handled programmatically—has the potential for developing the theory and practice of formative assessment to an extent that analogous activities by individuals (or even institutionally-based groups of individuals) cannot. (p. 496)

The relatively small number of experimental designs is also not unexpected, given the difficulties inherent in randomization in educational settings (Scott & Usher, 2011). A more pressing concern is the lack of clarity and detail given about interventions. The lack of commonality in approaches and tools used makes a more systematic approach to test the potential efficacy of pedagogic interventions across a range of contexts difficult (Gipps, 2005; Van den Berg, Admiraal, & Pilot, 2006). The inadequacy of research designs is a focus of Van Zundert et al.’s (2010) detailed review of 26 studies (1990–2007; see p. 277 for a useful summary). In only 12 of the studies on effective peer assessment processes were clear relationships among methods, conditions, and outcomes articulated. Interventions were often described globally, and outcomes were discussed without being attributed to particular causes, making inferences about the causes of effects difficult. Conversely, in other articles, assumptions were made about causality that could not be substantiated.

The assertion that most studies are small scale, single subject, opportunistic, and invited (Hounsell et al., 2008) is supported. Fifty-three percent of the articles had sample sizes of fewer than 100, with 32% of these having sample sizes of fewer than 50. The sample size in the empirical articles ranged from 3 to 444,030 (M = 346.2, SD = 2,383.6). Five articles did not give sample sizes. In many studies, only a relatively small percentage of students took part in specific interventions, and those involved in feedback often represented a very small percentage of the cohort. This was especially true of those articles on peer feedback, raising reliability and validity issues and questions about whether these populations were representative of the larger group and whether the results can be generalized.

Only 24% of samples are drawn from a mixture of subjects, and most of these are opportunistic. The majority of students are drawn from specific subject areas reflecting the focus of some of the larger funded projects: health (15.2%), education (12.8%), business (9.5%), sciences (8.6%), psychology (5.0%), information and communication technology (ICT; 5.0%), and engineering (4.3%). Bennett’s (2011) observation that “formative assessment would be more profitably conceptualised and instantiated within specific domains” (p. 20) is pertinent here, as although studies are drawn from specific subject domains, the importance of the domain and relevance of specific types of feedback are often not developed and the context not sufficiently explained (Bloxham & Campbell, 2010).

Another perceived weakness of the research literature is that effectiveness outcomes were often based on self-judgments. The extent to which a specific outcome is quantifiable, especially given the short time scale of most interventions, is an issue (only 13% of articles featured longitudinal designs and in 87% of cases, the time scale over which interventions were applied was less than 1 year and often less than one semester). The importance of capturing the potential of feedback to inform learning in the longer term is a well-founded concern (Boud, 1995; Hendry, Bromberger, & Armstrong, 2011). To address this issue, it is important to consider how lecturers and students not only apply but also develop and adapt the knowledge and skills acquired from feedback to new and different learning contexts.

Feedback interventions

Seventy-six percent of the empirical articles (n = 288) commented on feedback interventions to enhance learning. The interventions were classified according to whether (a) the student was an active participant in the process (e.g., peer marking, interaction with computer technology, design of assessment; 70%; n = 203), (b) there was an e-learning focus (37%; n = 107), (c) the intervention involved aspects of curriculum redesign (88%; n = 259), or (d) focused on teacher feedback (e.g., forms of feedback, assessment criteria, rubrics, written and oral feedback; 65%; n = 189). Sixteen percent of articles were holistic intervention designs involving all four areas of activity, 34% involved three areas of activity, 46% featured two areas of activity, and 4% featured one area of activity only. Research considering the relative impact of one variable compared to another needs further development as identified by DeNisi and Kluger (2000), who found that only 37% of studies considered this.

As part of the thematic analysis, a core theme and a maximum of two related subthemes were identified for each article. Eleven core themes were identified: peer feedback, e-learning to support self- and peer feedback, self-feedback, technicalities of feedback, student perceptions, curriculum design, process of feedback, individual needs, feedback gap, performance, and affect. The most frequent theme represented in the articles was the use of e-learning to support assessment feedback (22%), followed by peer feedback (17%), self-feedback as part of self-regulation (17%), technicalities or types of feedback (15%), the feedback process (9.1%), and student perceptions of feedback (7%). Only 4% of the articles’ central focus was on individual learning needs, including aspects such as gender, culture, learning styles, and how individuals make sense of and use feedback. This pattern supports Shute’s (2008) assessment regarding the lack of work in this area (Burke, 2009; Evans & Waring, 2011a, 2011b, 2011c).

Despite the importance of affect in learning, only 2% of articles had this as their central theme. This paradox is not lost on Cazzell and Rodriguez (2011), who argued that the affective domain is the most neglected domain in higher education although it is deemed to be the gateway to learning. Student perceptions of assessment feedback were the core focus of 7% of articles (n = 32), confirming Poulos and Mahony’s (2008) observation that work of this nature remains thin. When considering the key theme and two related subthemes covered within articles, the same top four areas are identified; however, a focus on the technicalities of feedback dominates and is featured in 15% of articles. The least developed areas in research are individual needs (4%), performance (4%), feedback gap (3%), and affect (3%). It will be important in this review to explore some of these underrepresented areas in more detail, especially the notion of students’ perceived lack of ability to act on feedback through a detailed exploration of the feedback gap issue.

Principles of Effective Feedback Practice

Although Shute (2008) argued that there is no simple answer as to what type of feedback works and Nelson and Schunn (2009) commented that “there is no general agreement regarding what type of feedback is most helpful and why it is helpful” (p. 375), the principles of effective feedback practice are clear within the HE literature. There is a significant and growing body of evidence of what is seen as valuable. There is general agreement about the importance of holistic and iterative assessment feedback designs drawing on socio-constructivist principles (Boud, 2000; Juwah et al., 2004; Knight & Yorke, 2003), although Nicol (2009) noted that such designs limit the inferences that can be made regarding what exactly has caused the learning effect.

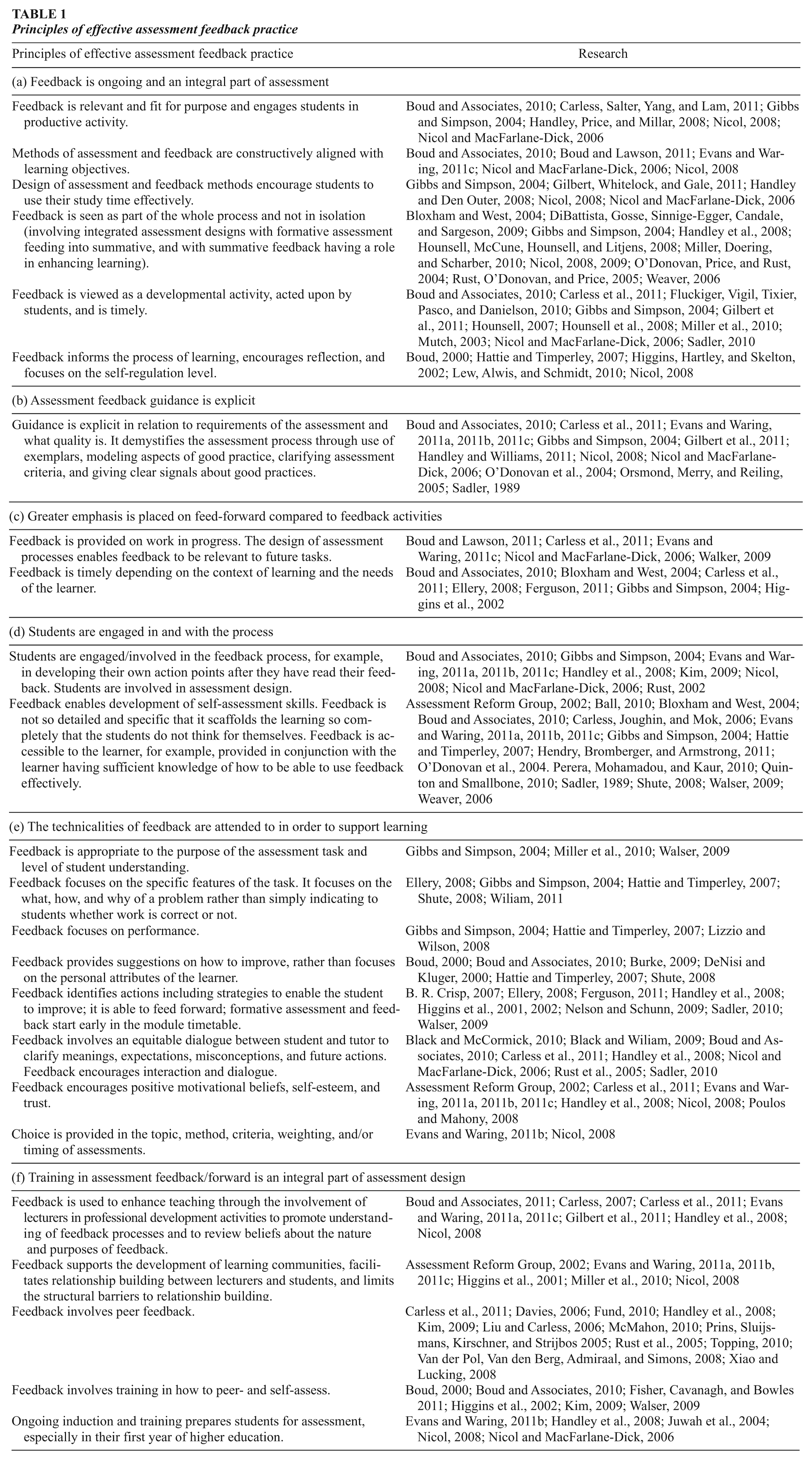

Table 1 contains key principles of effective feedback practice grouped under six subheadings supported by evidence from this literature review. To acknowledge and promote the translation of research into informed practice within HE, I have synthesized the principles of effective feedback and feed-forward design presented in Table 1 into 12 pragmatic actions:

1. ensuring an appropriate range and choice of assessment opportunities throughout a program of study;

2. ensuring guidance about assessment is integrated into all teaching sessions;

3. ensuring all resources are available to students via virtual learning environments and other sources from the start of a program to enable students to take responsibility for organizing their own learning;

4. clarifying with students how all elements of assessment fit together and why they are relevant and valuable;

5. providing explicit guidance to students on the requirements of assessment;

6. clarifying with students the different forms and sources of feedback available, including e-learning opportunities;

7. ensuring early opportunities for students to undertake assessment and obtain feedback;

8. clarifying the role of the student in the feedback process as an active participant, not purely a receiver of feedback, and with sufficient knowledge to engage in feedback;

9. providing opportunities for students to work with assessment criteria and to work with examples of good work;

10. giving clear and focused feedback on how students can improve their work, including signposting the most important areas to address;

11. ensuring support is in place to help students develop self-assessment skills, including training in peer feedback possibilities such as peer support groups;

12. ensuring training opportunities for staff to enhance shared understanding of assessment requirements.

Table 1. Principles of effective assessment feedback practice

The greater potential of feed-forward compared to feedback is highlighted in the work of DeNisi and Kluger (2000) in their exploration of the relative lack of effectiveness of feedback. They offered their own feedback intervention theory emphasizing the importance of the affective dimension of feedback in impacting on how feedback is received. They argued the need for feedback to focus on the task and task performance only, not on the person or any part of the person’s self-concept, a concept built on by Hattie and Timperley (2007). Feedback should be presented in ways that do not threaten the ego of the recipient, include information about how to improve performance, include a formal goal setting plan along with the feedback, and maximize information relating to performance improvements and minimize information concerning the relative performance of others. They found that interventions associated with complex tasks were more usually linked to declines in performance and that interventions providing comparative information about past performances were more likely to result in performance increases when that information indicated that performance had improved over time. However, even if feedback is targeted at the task level, it may still be received at the self-concept level.

At a finer grained level of analysis, the relative impact of feedback on individual performance varies as would be expected. Hounsell (2011) referred to feedback practice as a blurred concept given the different interpretations that lecturers and students may have regarding its purpose. Feedback can be implemented in a variety of ways and in differing contexts, which has an impact on the nature of effects (Hattie & Timperley, 2007; Wiliam, 2011). Bearing this in mind, Bennett (2011) urged caution in how we evaluate sources of evidence and in the attributions we make about them. The complexity inherent in the untidier reality of educational environments makes it difficult in practice to be sure of the relationship between cause and effect (McKeachie, 1997).

Although Ball (2010) argued that “there is little published evidence on ‘what works best’ in student feedback” (p. 142), others have argued that what constitutes good feedback is varied. The delivery, form, and context of feedback are important (Ellery, 2008). However, there is less consensus as to what practices are most effective. There is some support for the mode of feedback to be varied to support the needs of individual students and the nature of the task (Walser, 2009). Opinion is mixed about the ideal volume of feedback (Lipnevich & Smith, 2009). Where timing of feedback is concerned, both immediate and delayed feedback can be useful, dependent on user and task variables (Fluckiger, Vigil, Tixier, Pasco, & Danielson, 2010). Delayed feedback may be more appropriate for tasks well within the learner’s capability and where transfer to other contexts is important (Poulos & Mahony, 2008). There is mixed evidence regarding the effectiveness of students submitting drafts and resubmitting work (Fisher, Cavanagh, & Bowles, 2011). Where such opportunities are provided, it is evident that large numbers of students do not make use of them. There is some consensus suggesting students prefer individual to group feedback (Cramp, 2011), although studies also point to the benefits of group discussion (Hayes & Devitt, 2008). There is less consensus and detail within the literature on how principles of effective feedback can be applied to practice within and across different subject domains (Crossouard & Pryor, 2009).

In considering the principles of effective assessment feedback and feed-forward practice, there are tensions. Questions have been raised regarding the relative ability of students to make informed choices and to have sufficient information, knowledge, and skills to participate fully and effectively in such processes (Krapp, 2005). Although Gibbs and Simpson (2004) focused on what lecturers should be doing to support students, others stressed the importance of involving students in dialogue to facilitate self-judgment and self-regulatory practice (Black & McCormick, 2010; Carless et al., 2011; Handley et al., 2008; Nicol, 2008, 2009).

The greater role and responsibility of students in the learning process permeates discussions of sustainable feedback practice along with the acknowledgment of the importance of training for both lecturers and students in how to give and receive feedback (Carless, 2007; Nicol & MacFarlane-Dick, 2006). An alternative perspective questions whether student participation is always useful for the development of sustainable feedback practice. Central to this theme is the importance of developing student self-judgment skills to enable the student to improve, independent of the lecturer, within current and future learning contexts. Although links between the accuracy of self-assessment and performance have been identified, it is also true that it takes time for students to develop such skills and assessment design needs to be aligned to support such skill development (Boud & Lawson, 2011). The works of Boud (2000), Carless et al. (2011), and Hounsell (2007) are important in raising questions concerning the sustainability of assessment feedback practice. The extent to which assessment feedback practice within HE supports students in developing self-regulatory and content-related skills and prepares them for lifelong learning is important.

The role of emotions as a key component of self-regulatory behavior and consideration of effective feedback behaviors from the lecturer and student perspectives (Scott et al., 2011) are important. Questions have been raised concerning the nature and appropriateness of support offered to students. For example, Nicol (2008) has questioned how much we should be supporting students to assimilate into different learning cultures and how much institutions should be adapting to embrace the cultures students bring with them as they move between different communities of practice. He argued that to support the development of self-regulatory practice, attention needs to be directed toward student levels of engagement and empowerment if students are to be given opportunities to self-regulate and take responsibility for their own learning. How academic and social experiences combine to support students’ learning and development is an important element of this.

Given that students and lecturers may simultaneously inhabit several different learning environments traversing different domains, sociocultural and institutional contexts are problematic when promoting sustainable assessment. This highlights the importance of considering both individual and contextual factors when trying to unpack how individuals experience feedback in order to move them forward in their learning (Boud & Falchikov, 2007). In the next section, key debates surrounding effective feedback practice will be considered under the three most frequently cited core themes identified in this review: e-assessment feedback, self-feedback, and peer feedback.

Core Themes

The effectiveness of e-assessment feedback

There has been significant growth in the number of articles focusing on e-assessment feedback learning possibilities within the past 10 years. E-assessment feedback (EAF) includes formative and summative feedback delivered or conducted through information communication technology of any kind, encompassing various digital technologies including CD-ROM, television, interactive multimedia, mobile phones, and the Internet (Gikandi, Morrow, & Davis, 2011). It is wide ranging in that it can be a/synchronous, face to face, or at a distance; involve automated or personal feedback and different mediums; and be used to support individual and group learning. It is important to make the distinction between computer-generated scoring and feedback where the former provides a mark but no feedback guidance (Ware, 2011).

Advocates of EAF argue that such approaches encourage students to adopt deeper approaches to and greater self-regulation of learning. Frequently cited benefits of EAF for students include higher achievement and retention rates (Ibabe & Jauregizar, 2010; Nicol, 2008) through the provision of more relevant and authentic assessment feedback experiences (Gilbert et al., 2011) and collaborative learning opportunities (Nicol, 2008). Such technology affords immediacy and “anytime, anywhere, anyhow approaches” and is suitable for use with large numbers of students (Gikandi et al., 2011; Juwah et al., 2004). The potential of EAF to impact on student motivation and engagement is noted (DeNisi & Kluger, 2000; Hatziapostolou & Paraskakis, 2010); however, engagement does not necessarily translate into better student performance (Virtanen, Suomalainen, Aarnio, Silenti, & Murtomaa, 2009), and EAF may actually limit student participation in assessment feedback (Rodriguez Gomez, 2011).

Much has been made of the importance of students fully engaging in self-assessment. Although data collected via the US National Survey of Student Engagement (NSSE) have convincingly demonstrated the importance of student engagement in connection with learning gains (Pascarella & Terenzini, 2005), evidence of the relationship between student engagement and learning gains within the HE assessment feedback literature is mixed. The NSSE work pinpointed the importance of three variables in supporting student engagement and enhanced performance outcomes. These included the level of academic challenge, the extent of active and collaborative learning, and the extent and quality of student-faculty interactions, all of which need to be addressed as part of assessment feedback.

In this review, over 100 articles focusing on EAF were scrutinized. The impact of EAF interventions on student performance was found to be highly variable. Some studies reported enhanced student learning outcomes (Nicol, 2009; Xiao & Lucking, 2008). In others, significant numbers of students did not engage or paid no attention to feedback delivered via EAF (Timmers & Veldkamp, 2011), leading to alternative perspectives on EAF as faulty, irrelevant, and more easily dismissed (Lipnevich & Smith, 2009). In considering the impact of EAF on student learning, few articles related the intervention directly to performance, many involved small sample sizes, and even where learning gains were reported, significant numbers of students did not make progress as measured by attainment outcomes. The difficulty of isolating the effect of a specific intervention from other variables is an issue. Frequently, correlations between variables are interpreted incorrectly to imply causation. These findings are corroborated by Gilbert et al. (2011) who in their Synthesis Report of Assessment and Feedback With Technology Enhancement (SRAFTE) of 124 studies found that although technology-assisted feedback could be beneficial, the diversity of approaches and EAF modes coupled with the difficulty of measuring effects made confirmation of findings difficult. However, they identified principles of effective practice, provided useful case studies, addressed key questions, and concluded that a crucial factor in the success of e-assessment feedback interventions “depends not on the technology but on whether an improved teaching method is introduced with it” (Gilbert et al., 2011, p. 26).

Articles reporting positive impacts on student learning performance predominantly employed holistic designs (Nicol, 2009). They identified that lecturers and students had an appropriate understanding of constructivist epistemology in that they considered the nature of learning and the role of the student and lecturer in the process (Tsai & Liang, 2007). To meet the needs of the “contemporary learner” (Gilbert et al., 2011), the focus is on a constructivist perspective of learning that emphasizes the agency and active engagement of the learner in authentic assessments, acknowledging the individual strengths and needs of each learner through tailored assessment feedback provision. However, in this review, even where feedback support was tailored to individual learning needs and gains in learning were reported, it was evident that the interventions did not suit all students, with some failing to engage with the learning tool(s) at all (Handley et al., 2008; Timmers & Veldkamp, 2011). A key factor in the efficacy of e-feedback technologies is the nature of the interaction between students and their lecturers within the process; EAF does not automatically imply a shift in the perception of the student role by the student and lecturer.

The value of interactive online tools and training in the use of these (peer online feedback, use of blogs and wikis) is highlighted by Dippold (2009) and Xiao and Lucking (2008). Opportunities to allow for frequent self-testing to support self-regulation activities have been seen as beneficial by students (Epstein et al., 2002) and as having positive impacts on performance (Ibabe & Jauregizar, 2010). Although articles comment on the importance of training in the use of EAF for both students and lecturers, few articles actually develop this focus (< 4%). Several studies reported greater learning gains from the feedback of an “expert” than from self- and peer feedback and automated exchanges (Chang, 2011; Porte, Xeroulis, Reznick, & Dubrowski, 2007). This highlights the importance of supplementing peer feedback with lecturer feedback (Kauffman & Schunn, 2011). In addressing the ways in which students use EAF, there is a lack of research exploring student beliefs about the value of such approaches (O’Connor, 2011), as well as little attention afforded to the influence of factors such as cultural beliefs concerning the nature and location of expert knowledge and EAF affordances.

The effectiveness of self-assessment feedback

Boekaerts, Maes, and Karoly (2005) defined self-regulation as “a multilevel multi-component process that targets affect, cognitions, and actions, as well as features of the environment for modulation in the service of one’s own goals” (Boekaerts, 2006, p. 347); self-assessment is an important component of this. Self-regulation is often ill-defined. Self-assessment is frequently used interchangeably with self-regulation; however, self-assessment is only one component of self-regulation. In many studies, it is not always clear what aspects of self-regulatory practice have been targeted in the development of necessary generic and subject-specific skills. Vermunt and Verloop (1999) acknowledged the interactive nature of the dimensions of self-regulation and resulting learning patterns or learning styles and dissected them into six cognitive, five affective, and four metacognitive regulation activities. Learners will vary in their competence on these dimensions of regulation and require targeted support to develop those areas of relative weakness. It may be possible to identify those dimensions that have the most impact on the development of effective feedback-seeking and -using practice.

Given that disciplines achieve educational quality in different ways (Gibbs, 2010), little is known about how individuals self-regulate within and across disciplines and learning contexts (Eraut, 2006). In developing self-regulatory practice, the interaction of metacognition, motivation, and attribution theories is highlighted (Wiliam, 2011); however, insufficient attention has been paid to how student self-assessment skills can be developed (Boud & Falchikov, 2006). To support the development of self-assessment as part of self-regulation, the concept needs deconstructing. As argued by Archer (2010), the synonymous use of reflection with the term self-assessment is of limited value as there is little evidence to support the idea that we come to know ourselves better by reflecting. Archer’s definition of self-monitoring highlights the role of individual and contextual variables at work at any time: “Self monitoring is the ability to respond to situations shaped by one’s own capability at the moment in that set of circumstances, rather than being governed by an overall perception of ability” (p. 104). Achieving such objectivity may be difficult as it may require altering long-term beliefs about ability.

If we are to better understand the processes involved in self-assessment, greater attention needs to be placed on “the varied influencing conditions and inherent tensions to progress in understanding self-assessment, how it is informed, and its role in self-directed learning and professional self-regulation” (Sargeant et al., 2010, p. 1212). This requires consideration of cognitive and affective aspects of self-monitoring and regulation (Ruiz-Primo, 2011). There is support for self-assessment as a mechanism for lifelong learning (Boud & Falchikov, 2007; Carless et al., 2006; Taras, 2003); however, doubts have been raised regarding the ability of individuals to interpret the results accurately (Galbraith, Hawkins, & Holmboe, 2008). Archer (2010) has argued that there is no evidence for the effectiveness of self-assessment and has recommended the need to move from individualized, internalized self-assessment to self-directed assessment utilizing and filtering external feedback with support. To implement such a model, careful organization of training for students and supervisors is seen as essential to ensure that “students [are] an integral part of the assessment process, but not as vulnerable novices” (Taras, 2008, p. 90).

The thematic analysis of self-assessment feedback literature identified as part of the overall review revealed six key themes. First, self-regulation support strategies need ongoing development (Parker & Baughan, 2009). Focused interventions (discussion groups, workshops on writing, self-checking feedback sheets, rubrics, discussion of criteria, marking workshops, reflective writing tasks, writing frameworks, coaching, and testing) can make a difference to student learning outcomes as long as their value in the learning process is made explicit and seen as valuable by students and lecturers (Ibabe & Jauregizar, 2010; Perera, Mohamadou, & Kaur, 2010). The evidence suggests that students find it difficult to develop self-assessment skills. Students need time to make sense of instruction and to incubate and develop self-regulatory skills in order to apply these to new and other learning contexts. One-off workshops and self-checking tools, however comprehensive, are not sufficient to bridge gaps in student understanding (Quinton & Smallbone, 2010).

Second, the development of self-assessment skills requires appropriate scaffolding, with the lecturer working with the student as part of co-regulation. Taras (2008) found integrated lecturer feedback helped students identify and correct more errors than self-assessment alone. Helping students to internalize and use feedback from a variety of sources is an important aspect of co-regulation (Nicol, 2007). To understand self-regulatory mechanisms, more attention has to be given to the nature and context of discourse (Black & McCormick, 2010) and “more sophisticated guidance for teachers [is needed] to help them both to interpret students’ contributions, and to match their contingent responses to the priority of purpose which they intend” (Black & Wiliam, 2009, p. 27). This includes the need to ensure that lecturers do not assume responsibility for students, thus precluding them from considering the learning possibilities for themselves both in the present and the future (Hattie & Timperley, 2007).

The need to balance instruction and facilitation in supporting the development of self-regulatory skills has been much debated. O’Donovan, Price, and Rust (2004) advocated a carefully considered combination of transfer methods along a spectrum of explicit/tacit options in preference to an explicit telling approach. Others have focused on the timing of interventions, with comprehensive induction into purpose, procedure, and criteria (McMahon, 2010). Of critical importance in the development of self-regulatory skills is a clear understanding and working knowledge of task compliance and quality (Sadler, 2010). Rust, O’Donovan, and Price (2005) concur with Sadler (1989) that students should be trained in how to interpret feedback, how to make connections between the feedback and the characteristics of the work they produce, and how they can improve their work in the future. It cannot simply be assumed that when students are “given feedback” they will know what to do with it. (p. 78)

Although students need some directive and explicit guidance in navigating the rules of HE, there is the inherent danger that providing too much explicit guidance may result in student dependence and limited thinking. At the same time, the students’ call to have greater guidance on the rules of engagement within HE should not be taken as a sign of dependence, when it may be the reverse, with them seeking to understand the requirements of assessment in order to navigate these independently. An assessment design and underpinning philosophy encouraging independence is important to enable students to develop appropriate expertise themselves, in order to self-monitor and to control the quality of their own work (Sadler, 2005). One also needs to be mindful of the fact that lecturers, by providing explicit guidance, may inadvertently impair the quality of learning inasmuch as students may be successful in meeting the demands of assessment for progression and certification purposes but ironically may not be well prepared for lifelong learning (J. Brown, 2007; G. Crisp, 2012; Crook, Gross, & Dymot, 2006). The level of transparency required as part of effective assessment feedback practice may be replacing learning with instrumentalism and “criteria compliance” (Torrance, 2007, p. 278).

Third, although all students, regardless of their backgrounds, need support in developing certain dimensions of self-regulation, some need more assistance than others (Alkaher & Dolan, 2011). We also know that some students reap greater benefits from interventions (Quinton & Smallbone, 2010). For Handley and Cox (2007), citing Butler and Winne (1995), “the most effective students . . . generate internal feedback by monitoring their performance against self-generated or given criteria” (p. 24) and seek out feedback from external sources. More effective self-regulators are able to make better use of the affordances offered within and beyond learning environments to support their learning (Covic & Jones, 2008; Fisher et al., 2011; Scott et al., 2011). We need to know more about students’ ability to make judgments about their own learning process, namely, the act of self-monitoring their learning development, identifying strengths and weaknesses, and adapting learning in light of experience and feedback from lecturers and peers (Lew et al., 2010). For Nicol (2009), students enter with the ability to self-regulate and therefore the emphasis should be on developing this capacity rather than putting energy into providing expert feedback. However, the need for differentiated practice is also important because students enter HE with differing abilities, capacities, and willingness to self-regulate, leading Wingate (2010) to question whether we should be focusing more on why some students are able to use systems and self-regulate but others cannot.

Fourth, students’ perceptions of the value of self-assessment and previous experiences of managing this are important (Boud & Falchikov, 2007). The value and relevance of feedback to future tasks needs to be explicitly discussed and exemplified. Mutch (2003) argued that if students have not been prepared to connect with their feedback, they may show little evidence of development or intrinsic motivation to learn, and according to Burke (2009), they may also “utilise the inadequate learning strategies . . . that they brought to higher education” (p. 49).

Fifth, much is predicated on the value of collaborative learning and peer support in promoting self-regulatory practice from a socio-constructivist perspective. Cain (2012) argued that such an emphasis is ill-conceived with its emphasis on group thinking and working at the expense of individual independent thinking, given the evidence suggesting that lone working may be more productive in certain situations.

Sixth, those reportedly successful approaches to enhancing self-regulatory practice focus on student responsibility and ways of generating genuine involvement in the feedback process. Examples include students awarding their own grades based on feedback from lecturers and providing a 100-word justification (Sendziuk, 2010); examinations where students assess their own competence, respond to specific tasks, receive an expert answer, and then prepare their own comparison document (Jonsson, Mattheos, Svingby, & Attstrom, 2007); and self-revised essays where students develop their work building on all sources of feedback gained throughout the course both formally and informally (Graziano-King, 2007). These approaches advocate the central involvement of the student in authentic learning experiences whereby students are required to demonstrate their ability to apply knowledge and skills acquired to real-life situations and to solve real-life problems (Herrington & Herrington, 1998). The concept of authenticity is problematic given the differences of opinion about what constitutes it, with some authors emphasizing the task and context and others performance assessment (Gulikers, Bastiaens, & Kirschner, 2004).

The effectiveness of peer assessment feedback

Peer feedback as a component of peer assessment has grown considerably within HE; Gielen, Dochy, and Onghena (2011) noted a tripling of studies since 1998. Much of this work is concerned with the effect of peer assessment on learning processes and outcomes from cognitive and affective perspectives (Kim, 2009). Van der Pol, Van den Berg, Admiraal, and Simons (2008), drawing on Falchikov’s (1986) work, have defined peer assessment “as a method in which students engage in reflective criticism of the products of other students and provide them with feedback, using previously defined criteria” (p. 1805). Building on this, Gielen et al. (2011), citing Topping’s (1998) work, described peer assessment as “an arrangement in which individuals consider the amount, level, value, worth, quality or success of the products or outcomes of learning of peers of similar status” (p. 137). The diversity of work in this area is vast. Topping’s typology of peer assessment offers a detailed perspective on this area, although Gielen et al. suggested that Topping’s typology is inadequate to capture the diversity of peer assessment today given the expansion of variation in peer assessment practices.

There are mixed opinions regarding the value of peer assessment. How peer assessment is enacted and how students are prepared for such practices can lead to very different results (Topping, 2010). The value of peer assessment as an element of holistic assessment design has been articulated by Nicol and MacFarlane-Dick (2006) and others (Handley et al., 2008; Price, Handley, Millar, & O’Donovan, 2010). For some, peer assessment is seen as a way of compensating for heavy lecturer workloads by offloading some of the burden of assessment to students; however, this is far from an easy option and the planning and organization required to provide peer assessments has to be balanced against students’ perceived benefits (Bloxham & Campbell, 2010; Friedman, Cox, & Maher, 2008).

Advocates of peer assessment feedback argue that it is motivational; it helps the development of metacognition by enabling students to engage in their own learning to know which learning, teaching, and assessment strategies work best for them. It shows them how to monitor their own progress and that of others, adapt strategies and develop specific skills, enhance communication and interpersonal skills, and enable a sense of self-control (a useful summary can be found in Ballantyne, Hughes, & Mylonas, 2002). Peer assessment is therefore seen as an important way of engaging students in the development of their own learning and self-assessment skills (Davies, 2006; Nicol & MacFarlane-Dick, 2006; Orsmond, 2006; Topping, 2010; Vickerman, 2009; Xiao & Lucking, 2008). Furthermore, mutual benefits to the learner and lecturer have been identified (Van den Berg et al., 2006; Vickerman, 2009).

Sadler (2010) advocated the use of peer assessments to enable students’ conceptual understandings of task compliance, quality and criteria, and tacit knowledge. He challenged the view that feedback from the lecturer should be the automatic choice as the primary agent for improving learning if students are to develop capability in making complex judgments. Whether peer assessment facilitates development of self-assessment skills is debated, and for some, peer feedback is perceived as ineffective (Boud, 2000), unpredictable (Chen, Wei, Wu, & Uden, 2009), and unsubstantiated (Strijbos & Sluijsmans, 2010). The multiplicity of peer assessment practices and diversity of instruments to measure student attitudes make it hard to compare studies and to measure effectiveness (Gielen et al., 2011; Van Zundert et al., 2010). The perceived benefits of collaboration have been questioned in that peer assessment feedback can lead to regressive collaboration where students with appropriate understanding or explanations are persuaded to change to a less appropriate or incorrect alternative or situations where interactions between students result in conceptual confusion rather than clarification (Sainsbury & Walker, 2008).

A specific criticism of peer feedback research is that the many variables underpinning the complexity of peer assessment have not been thoroughly and independently evaluated in relation to outcomes (Topping, 2010). To enhance understanding of peer feedback, Falchikov and Goldfinch (2000) have argued for the need to explore interactions between variables and the effects of repeated experience of peer assessment. The lack of impact on performance, despite positive feedback from students (O’Donovan et al., 2004), presents concerns and also raises a question about a possible incubation period in that the benefits may not be immediately apparent.

A more detailed look at the HE literature on peer feedback highlights the following:

For accuracy: Multiple peer markers are preferred over single markers (Bouzidi & Jaillet, 2009).

Peer assessment is most effective when included as an element within a holistic assessment design (Nicol & MacFarlane-Dick, 2006).

Peer feedback can be a positive experience for many students but not for all (Fund, 2010).

The nature of the implementation and roles of assessor and assessee influence outcomes (Gielen et al., 2011).

Receiving feedback has less impact on future performance than giving feedback (Kim, 2009).

The academic ability of the feedback giver and recipient is important (Van Zundert et al., 2010).

The affective dimension is very important, as is the provision of choice—most recommend the formative use of peer assessment rather than summative (Nicol, 2008).

The nature and type of feedback peers are asked to give impacts on performance (Tseng & Tsai, 2010).

The importance of training students in how to give feedback (Sluijsmans, Brand-Gruwel, & Van Merrienboer, 2002).

The need to enhance research design and reporting of results (Strijbos & Sluijsmans, 2010).

Peer feedback can be a positive experience for students (De Grez, Valcke, & Berings, 2010; Fund, 2010), leading to enhanced performance (Carillo-de-la-Pena et al., 2009; Sluijsmans et al., 2002). However, the majority of these studies also report the variable impact of peer feedback on student performance (Ballantyne et al., 2002; Bloxham & West, 2004). Loddington, Pond, Wilkinson, and Willmot (2009) found that only more mature students appreciated peer assessment as an educational support and teamwork development tool. Gielen et al. (2011) noted that performance of students did improve but not for high-ability assessees. Nicol (2008) identified that not all students were comfortable working in groups. Papinczak, Young, and Groves (2007) found peer assessment was positive in developing student responsibility for others and improving learning but that there were also a number of negative effects, including student discomfort; they noted, “It may be that students need years of practice in peer assessment in order to become comfortable with the process” (p. 184).

The varied ability of students to give and receive feedback is a very important factor (Van Zundert et al., 2010). Fund (2010) identified the need for a “willing receiver” and “willing donor” as well as the importance of timing in the process as to whether the student was “ripe” for developmental change. Vickerman (2009) found that students who were more independent preferred self-assessment to peer assessment. Davies (2006) identified that “better” students were more willing to criticize their peers than weaker students. Asghar (2010) also found that those with high self-efficacy were reluctant to be involved in group processes as they felt that they were more likely to succeed on their own.

The impact of giving and receiving feedback on future performance requires more attention (Van der Pol et al., 2008; Van Zundert et al., 2010). The beneficial aspects of peer assessment by taking on the assessor role have been noted compared to the impact of receiving feedback (Blom & Poole, 2004; Kim, 2009). Topping (2010) found that justification of feedback was more effective with lower competence assessees whereas elaborate feedback from high-competence assessors was not effective. An interesting study by Kim (2009) identified that when assessees were asked to give feedback on their feedback to peers, it enabled them to gain greater metacognitive awareness of the process, resulting in enhanced performance.

The authentic use of peer assessment feedback is stressed within the literature; however, there are few studies that explain in detail the nature of the intervention and considerations made to ensure authenticity. The use of peer feedback in summative marking of work is contentious and an area where academics need to tread carefully in their decisions about how to use participative assessment (Rushton, Ramsey, & Rada, 1993). The importance of peer feedback and assessment remaining formative in nature to support student autonomy is highlighted within the literature (Alpay, Cutler, Eisenbach, & Field, 2010). The issue of student choice in collaborative and peer feedback designs is a feature of many articles. An alternative perspective is that peer assessment should be part of summative assessment (Rust et al., 2005).

In support of the summative use of peer assessment, Keppell, Au, Ma, and Chan (2006) argued: If we value peer learning . . . we need to include peer assessment within the formal assessment for the course. . . . It is essential that we do not use peer assessment inappropriately, as it can also inhibit learning and . . . a blended approach to assessment of both group and individual items should appease both students and staff who are concerned about “freeloaders.” (p. 462)

Liu and Carless (2006) stressed the importance of creating the appropriate climate for peer feedback and agreed with Fallows and Chandramohan (2001) that peer feedback may be more relevant to some tasks than to others. Falchikov and Goldfinch (2000) viewed peer feedback as more valuable and reliable when focusing on overall performance using well-understood criteria than when focusing on individual elements of a piece of work. In a similar vein, Tseng and Tsai (2010) found provision of explanations from peers was not very helpful and could hurt performance, arguing that novices may be unable to provide clear explanations, limiting peer understanding (Bitchner, Young, & Cameron, 2005). Considering the role of affect, Topping (2010) found that nondirective peer feedback was more effective due to the greater psychological safety afforded.

The importance of training students in the use of peer feedback and assessment is a common thread throughout the literature (Lindblom, Pihlajamaki, & Kotkas, 2006; Vickerman, 2009). Gielen et al. (2011) provided an inventory of peer assessment diversity to act as a checklist, an overview, a guideline, or a framework. However, Topping (2010) argued that training alone would not suffice. What is needed is constructive alignment between learning objectives and methods of teaching and assessment that take account of individual and contextual variables.

In most of the studies, training constitutes a workshop or relatively short one-off input, whereas Sluijsmans et al. (2002) argued that the training period needs to be extended considerably. To support work in this area, Sluijsmans and Van Merrienboer’s (2000) peer assessment model has identified that any training needs to take account of the following: (a) defining assessment criteria—thinking about what is required and referring to the product or process; (b) judging the performance of a peer—reflecting upon and identifying the strengths and weaknesses in a peer’s product and writing a report; and (c) providing feedback for future learning—giving constructive feedback about the product of a peer.

Training needs to be ongoing and developmental, must address student and teacher beliefs about the value and purposes of peer feedback, demonstrate key principles, and be formalized. Alignment of peer assessment with other dimensions of the learning environment is important. Brew, Riley, and Walta (2009) argued that to optimize the use of participative assessment, staff need to better prepare their students by modeling and communicating their reasons for adopting such practices. For Vickerman (2009), the design and structure of peer assessment need to ensure that students learn to appreciate the technicalities and interpretation of assessment criteria, alongside making sound judgments about subject content.

Exploring the Feedback Gap: Student Inability to Benefit From Assessment Feedback

There are numerous examples of student inability to capitalize on feedback opportunities by failing to make use of additional feedback offered (Bloxham & Campbell, 2010; Burke, 2009; Fisher et al., 2011; Handley & Cox, 2007). Even when “good” feedback has been given, the gap between receiving and acting on feedback can be wide given the complexity of how students make sense of, use, and give feedback (Taras, 2003). Explanations of this phenomenon include the role of individual difference variables impacting on perceptions and use of feedback (Young, 2000), the complexity of specific learning contexts, temporal variations in learner and teacher openness, and ability to give and receive feedback, respectively. The importance of context and the relationship between feedback giver and receiver is captured in Krause-Jensen’s (2010) summation: There is no such thing as a single “magic bullet.” The “magic” of the bullet is highly context dependent, and so the bullets must be fashioned according to local circumstances, the shooters and the targets. The university teacher . . . has to make “intelligent choices in complex situations” . . . under ever-changing conditions, government reforms and revised curricula. (p. 64)

Acceptance and use of assessment feedback is complicated (Sargeant, Mann, Sinclair, Vleuten, & Metsemakers, 2008). In terms of mediator variables, we need to know how these variables interact and what types of feedback are most applicable to task and specific learner variables. Fundamental beliefs about learning and the learning process will strongly influence how individuals see the role of feedback, as commented on by Price et al. (2010, p. 278): “The students’ ability or willingness to do this [act on feedback] might depend on the emotional impact of feedback . . ., a student’s pedagogic intelligence or the student’s past experiences.” Furthermore, students’ willingness to maintain learning intentions and persist in the face of difficulty depends on their awareness of, and access to, volitional strategies (metacognitive knowledge to interpret strategy failure and knowledge of how to buckle down to work; Vermeer, Boekaerts, & Seegers, 2001). The inability to capitalize on feedback may reflect the fact that some students lack both the critical ability to be able to do so and the requisite domain knowledge and understanding (Quinton & Smallbone, 2010). This can be perceived as both a training and ability issue. Although Sadler (2010) argued that “students cannot convert feedback statements into actions for improvement without sufficient working knowledge of some fundamental concepts” (p. 537), Hattie and Timperley (2007) stressed the need for instruction over feedback if lack of knowledge was the problem.

The lack of a learning effect is an important area to explore. Weaver (2006) pointed to students’ levels of intellectual maturity and previous experiences as factors affecting receptivity to feedback. Students bring with them their own schema or rules about how to write and may only be aware of the gaps associated with these rules (Bloxham & Campbell, 2010). Fritz and Morris (2000) argued that “prior knowledge plays a major role in the perceptions and responses that . . . students . . . construct [and that] errors engendered by these misconceptions . . . are very resistant to correction” (p. 494), even with further training and feedback. It is known that individual difference variables such as gender, culture, and income impact on student and lecturer access to feedback, perceptions of feedback, and performance (Crossouard & Pryor, 2009; Draper, 2009; Evans & Waring, 2011b; Maringe, 2010; Shute, 2008). The ways in which individuals process information, their cognitive and learning styles, are important given their potential impact on the ways in which individuals make sense of information (Liu & Carless, 2006; Vickerman, 2009). Such diversity in styles may also pose instructional issues for lecturers (Evans & Waring, 2011c; Orsmond, Merry, & Reiling, 2005).

Värlander (2008) highlighted the importance of the role of affect on how feedback is received and acted upon. Developing this line of argument, Boud and Falchikov (2007) acknowledged that cognitive processing can be impaired by certain emotional states. Yorke (2003) also noted the “importance of the student’s reception of feedback cannot be over-stated . . . they vary considerably in the way that they face up to difficulty and failure” (p. 488). We need to know more about the role of personality variables and how self-perception of the extent to which intelligence is mutable also impacts on the feedback process. Poulos and Mahony (2008) argued that “how the student interprets and deals with feedback is critical to the success of formative assessment and involves both psychological state and disposition” (p. 144). The students’ emotional resilience as a dimension of self-regulation is an important area of focus along with consideration of facilitators and barriers to student self-management of the emotional dimensions of feedback (for further elaboration on this, see Scott et al., 2011, p. 64).

Many have argued that positive feedback is important for student confidence and motivation (Ferguson, 2011), although evidence of the impact of positive feedback on student performance is mixed. Martens, de Brabander, Rozendaal, Boekaerts, and van der Leeden (2010) found no difference in student performance whether feedback was positive, neutral, or negative. Draper (2009), commenting on Dweck’s (2000) work in the U.S. context, argued that widespread practices aimed at making learners feel positive about their work damage learning. Fritz and Morris (2000) have commented on the power of emotion in mediating feedback, arguing that the emotional and psychological investment in producing a piece of work for assessment may have a much stronger effect on the student than the relatively passive receipt of subsequent feedback.

The individual goals of students are also important and linked to emotional investment “since this provides a framework for interpreting, and responding to, events, that occur” (Yorke, 2003, p. 488). Explaining student responses to feedback, DeNisi and Kluger (2000) suggested that performance goals are arranged hierarchically in three levels. The highest level is the meta-level or self-level where goals relate to self-concept, the middle is the task level where goals relate to task performance, and the lowest is the task learning level where goals relate to task details and the specifics of performing it. They suggested that negative emotional responses most commonly occur when feedback intended for the task level is interpreted at the self-level, diverting attention from the task and instead focusing it upon the self where it is perceived as a generalized criticism leading to negative feelings like self-doubt, anger, or frustration. To what an individual attributes their success or failure is fundamental to understanding how students use feedback; learned helplessness, self-worth, and mastery orientations are important considerations in this respect (Dweck, 2000), although there is little reference to such constructs within the literature.

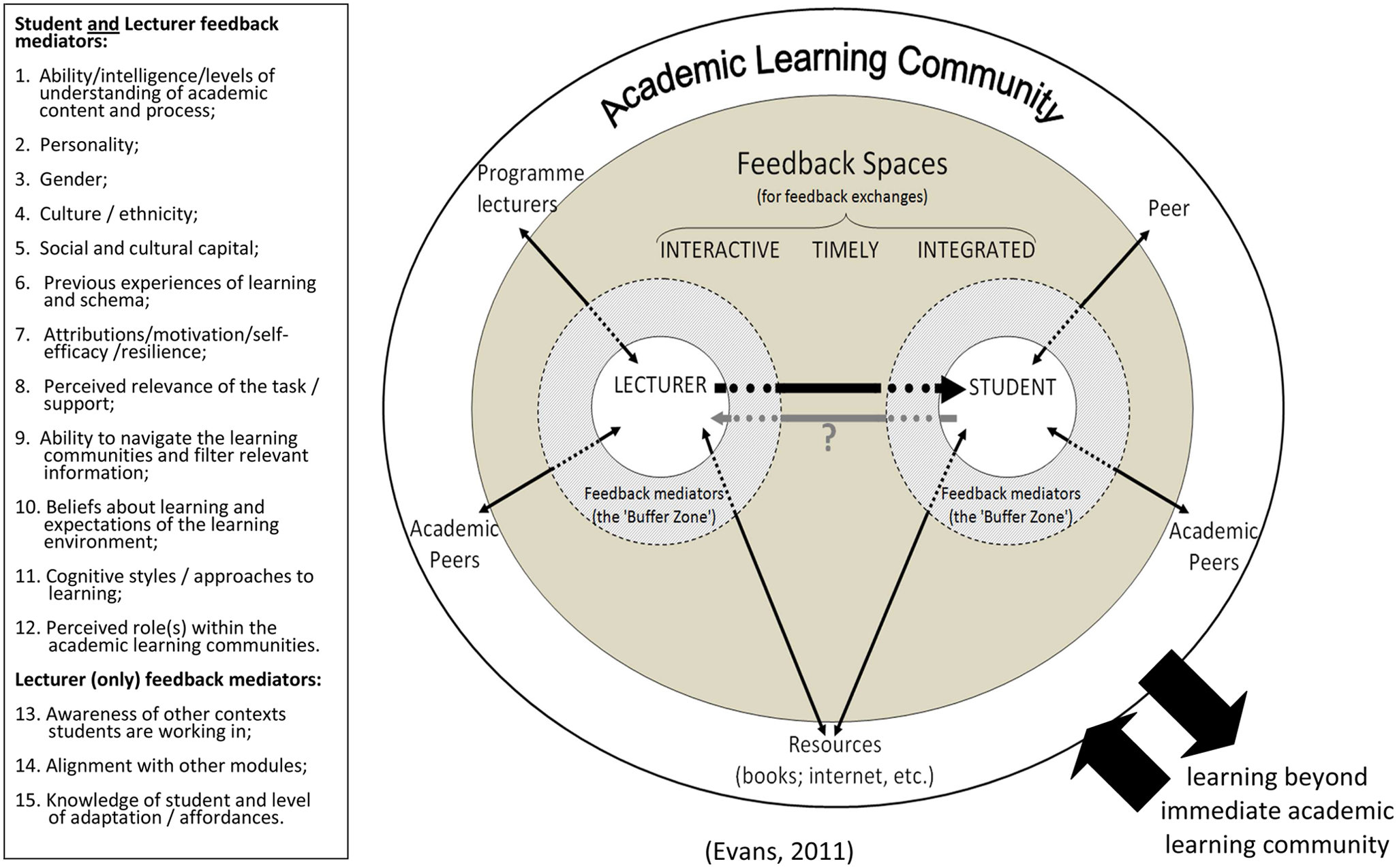

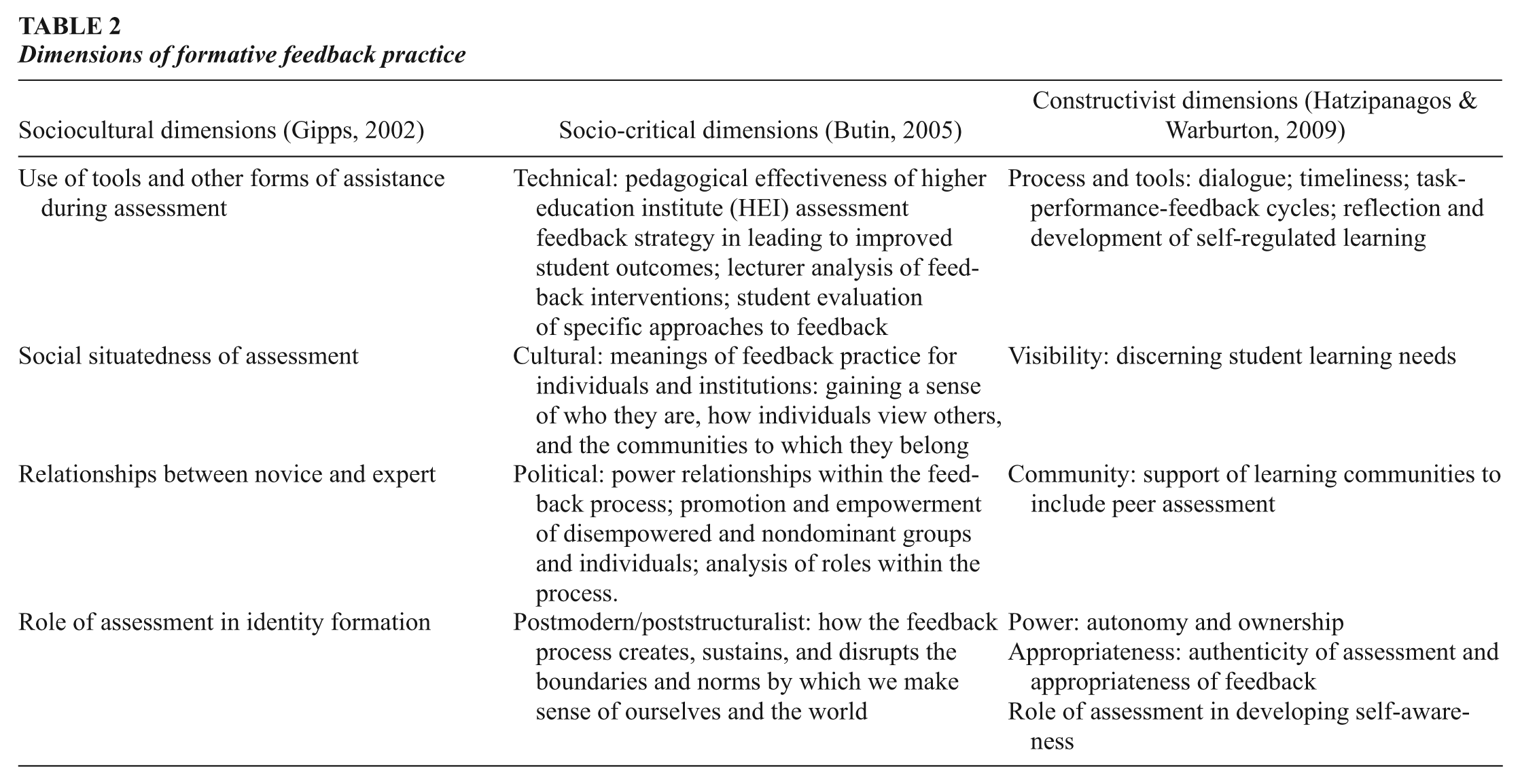

The level of investment made by a student is an important mediator along with their expectancy of success and value they attribute to a task (expectancy value theory). Wingate (2010) argued that if students have little expectation of being successful and the tasks seem difficult, they will have little motivation for engaging with feedback information. The expectancy of success is determined by self-efficacy and learners’ self-perception of their capability (Bandura, 1991). Hattie and Timperley (2007), building on DeNisi and Kluger’s (2000) work, argued that it is both the level at which feedback is focused and students’ levels of self-efficacy that impact on their responses to feedback; it is the nature of this interaction that is important to explore. For Draper (2009), only the subset of students who judge both that they need to improve and that their effort is adequate are likely to have a rational interest in the content of typical written feedback, and that may correspond to the subset who do pay attention to it. How feedback contributes to the development of identity (self-attitudes; Stryker, 1968) and to the role-related behavior of students is an area worthy of further study. This may be especially relevant to part-time students. In addressing student inability to make the most of feedback situations, Sadler (2010) argued that the fundamental problem lies less with the quality of feedforward and feedback than with the assumption that telling . . . is the most appropriate route to improvement in complex learning . . . the issue is how to create a different learning environment that works effectively. (p. 548)