Abstract

This study investigated the impact of a flipped feedback approach on student academic performance and evaluative judgement. Flipped feedback involved providing generic feedback information on common errors before students self-assessed against the marking criteria and requested targeted feedback. Undergraduate students completed two coursework assignments featuring flipped feedback. Draft and final submission scores were compared, and students completed an evaluation survey. Results showed substantial gains between draft and final submission scores. Survey responses were overwhelmingly positive, with most students agreeing that flipped feedback aided self-evaluation and subject comprehension. However, some expressed concerns about the accuracy of their self-assessment and the relative weighting of the draft and final submissions. This study indicates that flipped feedback can empower learners to engage with and act upon the feedback process and, by enhancing evaluative judgement, can increase academic performance relative to previous cohorts. Further research should systematically investigate the approach’s potential across diverse subjects and assessment modalities.

Keywords

Introduction

The feedback process, including the provision of feedback information, as defined by Winstone et al. (2022), is an essential component of the learning cycle, as it can provide students with personalised guidance to support their learning, help them identify areas for improvement, and develop important skills such as critical thinking and problem-solving (Hattie & Timperley, 2007; Sadler, 2010). To make the most of their feedback information, however, learners need a specific skill set, often termed feedback literacy, which spans affective, cognitive, and social domains (Carless & Boud, 2018). Feedback information can take many forms, such as written comments, oral feedback, marking rubrics, and peer review. Yet despite the importance of the feedback process, students may face several barriers to engaging with it. These include the timing of feedback information, the clarity or volume of the comments received, their perceived relevance, and students’ confidence to engage with feedback information and accept constructive criticism (Jonsson, 2013; Pitt & Norton, 2017; Poulos & Mahony, 2008; Winstone et al., 2017).

Timing is central to the effectiveness of feedback information, because students need to be able to apply comments directly to future work (Boud & Molloy, 2013); if feedback information is provided too long after an assignment has been submitted, students may no longer see it as relevant or meaningful. This can be especially true for formative assessments, where timely feedback information can help students improve their work and close the feedback loop by giving them an opportunity to implement the feedback and enhance future learning (Nicol & Macfarlane-Dick, 2006).

Another important factor is the clarity of feedback information. If comments are vague, unclear, or difficult to understand, students may not perceive them as helpful, and they may not engage with the feedback process or know what to do with the comments. Students need to be able to understand what they did well and where they can improve their work in the future (Jonsson, 2013; Winstone et al., 2017).

Students may also feel overwhelmed by the volume of feedback information. Academics often spend a great deal of time writing detailed comments, but this may actually discourage student engagement: students may feel daunted by the number of comments, which may lack sufficiently focussed detail, making the feedback information difficult to process and apply (Price et al., 2010). Additionally, the time-intensive nature of providing personalised feedback information places a significant burden on educators, making it crucial to explore strategies that balance student engagement with sustainable workload demands (Amrhein & Nassaji, 2010; Gibbs & Taylor, 2016). Therefore, providing targeted feedback information that is specific to the student’s work and, importantly, actionable may help students focus on the most important areas for enhancement (Ferris, 1997; Hepplestone & Chikwa, 2016). Feedback must also be relevant to the student’s needs if it is to encourage engagement; tailored feedback comments are likely to be far more beneficial to the student’s learning (Uhm et al., 2015).

Confidence is another challenge that can impact students’ engagement with the feedback process. If students lack confidence in their abilities, they may be reluctant to engage with feedback information that highlights their weaknesses (Handley et al., 2011; Hyland & Hyland, 2001). It is important to provide feedback information in a way that is supportive and encourages students to build on their strengths and work on the areas that require improvement. By providing positive and constructive feedback information, educators can help students feel more confident in their abilities, motivating them to continue learning and developing their evaluative judgement of their work (Tai et al., 2018).

Evaluative judgement relates to the capability of students to make informed decisions about the quality of their work or the work of others (Tai et al., 2018). It is a skill that requires students to comprehend assessment criteria and apply critical judgement to their own work or that of their peers. Jonsson (2013) explored the role of developing evaluative judgement, suggesting that for feedback information to be effective, it needs to enhance the student’s ability to review their own learning. This necessitates opportunities for students to engage with the feedback information, reflect on it, and subsequently use it to make judgements about their work. Similarly, Panadero (2017) emphasised the importance of self-regulation in the evaluative judgement process, outlining how students can be trained to judge their own work better by experiencing self-assessment practices. The integration of self-assessment opportunities in the learning process helps enhance students’ self-regulation and metacognition, which ultimately benefits their learning as they become more aware of the criteria for success and are better able to regulate their own learning (Andrade, 2010). Key to the success of evaluative judgement is the provision of clear criteria, such as well-designed rubrics and structured guidance to facilitate accurate self-assessment (Tai et al., 2018).

In higher education, ongoing research into more effective assessment and feedback practices has led to a range of innovations being trialled. These include withholding marks to encourage student engagement with feedback information, incorporating elements of self-assessment, and soliciting targeted feedback requests. Each approach carries its own advantages and potential challenges. Withholding marks until students have engaged with feedback has been shown to shift the focus from marks to learning, fostering deeper engagement with feedback information (Jackson & Marks, 2016; Kuepper-Tetzel & Gardner, 2021). This method aligns with pedagogical models that prioritise formative learning experiences, which can enhance students’ self-regulatory skills (Handley et al., 2011). However, it is not without its drawbacks; the delay in receiving marks can be a source of anxiety for students, potentially overshadowing the benefits of engagement with the feedback process (Mires et al., 2001). Asking students to predict their grades, a form of self-assessment, encourages self-reflection and can promote a more active role in the learning process. Nonetheless, inaccuracies in self-assessment may lead to dissonance between expected and actual outcomes, which can affect student motivation, confidence, and self-efficacy (Brown, 2007). Targeted feedback requests, in which students specify the areas on which they would like to receive feedback information, can lead to more personalised and actionable insights. This approach encourages students to engage critically with the assessment criteria and their own work. However, it may also narrow the scope of feedback information, as students might not always identify the most pertinent areas for improvement, potentially missing out on comprehensive feedback information that could facilitate broader learning (Zacharias, 2007).

Each of these strategies, in isolation, offers a pathway to enhance student engagement with the feedback process and promote self-regulated learning. However, given their individual limitations, they must be implemented with consideration of student diversity and of their potential emotional and cognitive impacts. A balanced approach that considers the individual needs and responses of students is essential for optimising the benefits of these feedback strategies while mitigating their limitations. In this study, we present our concept of flipped feedback as a comprehensive approach to addressing many of these challenges and improving student engagement with their feedback information.

Flipped feedback involved students submitting an initial draft of their work before receiving generic feedback that highlighted common errors. Evidence suggests that generic feedback alone is not an effective feedback tool (Breslin, 2021); here, however, the comments were used to allow students to evaluate their work against a detailed mark scheme and to apply this feedback information before undertaking the next stage of the task, as proposed by Henderson et al. (2021). In this scenario, which aligns well with Andrade’s (2010) definition of self-evaluation, generic feedback has developmental potential, as students must self-critique their work to make the best use of the generic feedback comments and identify where they can be applied. Students were asked to predict the mark they thought their draft was worth (as described by González-Betancor et al., 2019) and were then given the opportunity to submit an improved final version and to request more in-depth feedback on two or three specific areas. Flipped feedback recognises that the feedback process is not one-size-fits-all but is deeply embedded within the socio-cultural context of the learning environment. This aligns with the understanding that feedback literacy must be seen as contextually situated, reflecting the complex interplay between individual capacities and the specific academic and social environment in which learning occurs (Andrade & Du, 2007; Nieminen & Carless, 2023).

Flipped feedback, as conceptualised in this study, refers to an inversion of the traditional feedback process. Typically, students receive individualised feedback information after submitting their final work, which they then use retrospectively to reflect on their learning. In contrast, the flipped feedback approach begins with the provision of generic feedback information, highlighting common errors and successful strategies, before students engage in a self-assessment of their draft submissions. This self-assessment is guided by detailed marking criteria, enabling students to predict their own grades and identify areas for improvement before submitting a final version of their work. The process is ‘flipped’ in that it prioritises student-led evaluation and revision before students receive individualised feedback information on their refined work. The approach is grounded in the principles of active learning and formative assessment, in which students are active participants in the feedback loop rather than passive recipients (Boud & Molloy, 2013; Nicol & Macfarlane-Dick, 2006). It also aligns with recent developments in the concept of feedback literacy, which emphasises the need for students and teachers to develop the skills and dispositions necessary to engage effectively with feedback processes (Carless & Boud, 2018). By foregrounding students’ active role in the feedback cycle, flipped feedback contributes to the development of feedback literacy, which is increasingly recognised as essential for optimising learning outcomes (Nieminen & Carless, 2023).

The concept of ‘flipped feedback’ drew inspiration from the broader pedagogical model of flipped classrooms, where traditional instruction is reversed, placing more responsibility on students to engage with content before class time (Bergmann & Sams, 2012). While generic feedback information followed by individualised feedback information is not entirely novel, the structured integration of self-assessment prior to receiving detailed comments is what distinguishes the flipped feedback approach. Previous studies have highlighted the benefits of generic feedback information in promoting students’ ability to recognise common pitfalls and strengths (Breslin, 2021). By first exposing students to general trends in performance, flipped feedback empowered them to critically engage with their own work using established criteria, thus fostering evaluative judgement and self-regulation (Boud & Molloy, 2013; Tai et al., 2018). This method, while rooted in established educational practices, innovates by systematically placing the initial onus on students to apply feedback information proactively, with the aim of enhancing their capacity for self-directed learning.

The model of flipped feedback explored in this study aligns with the ‘Feedback Mark 2’ model, which emphasises the active role of the learner in generating and soliciting feedback (Boud & Molloy, 2013). Additionally, it draws on the work of Carless (2019) and the notion of ‘feedback loops’ or ‘feedback spirals’, which involve iterative cycles of feedback and improvement, and highlight the importance of student engagement and self-regulation in the feedback process.

The objective of this research was to build on these theoretical foundations by investigating the impact of combining these different strategies, rather than treating them in isolation. We aimed to determine whether a combination of approaches can enhance students’ self-evaluation of their work and satisfaction with the feedback information they receive, leading to improved grades.

Methods

Participants and Context

Clinical Biochemistry and Physiology (PM-243) was an optional undergraduate module delivered to second-year students at Swansea University over a 5-week period during the second half of the second 11-week teaching block. It was, therefore, one of the last modules that students undertook immediately before the summer examination period. The module was primarily taken by (medical) biochemistry students, with a small number of (medical) genetics and joint honours (Biochemistry and Genetics) students. In the year of this intervention, it was taken by 60 students and was assessed through a combination of an end-of-module exam (75% contribution) and two equally weighted, semi-structured laboratory reports (25% combined contribution). The practical classes were held during the first 2 weeks of the 5-week teaching block, and students had 2 weeks after each class to complete the corresponding report (see Student Requirements below). The study compared the performance of this cohort receiving the intervention with two previous cohorts (combined

Study Design and Procedure

Guidance on the Flipped Feedback Approach

Students were introduced to the flipped feedback approach during their first practical class in the first week of the 5-week teaching block, and documentation providing full details of the requirements was placed on the virtual learning environment. The marking rubric, the guiding questions to be answered as part of the laboratory write-up, and the practical pro-forma were provided in advance of the first laboratory class.

Student Requirements

Students had 7 days following each practical class to submit a draft of a semi-structured laboratory report with guiding questions. On the seventh day, after the draft submission deadline, generic feedback comprising common errors from previous cohorts was released, along with a marking outline that detailed the breakdown of marks for each criterion. Students were asked to reflect on their draft submission and to rate, on a scale of 1 (not at all) to 5 (completely), how well they felt they had satisfied each element of the marking criteria. Based on this self-reflection, students were asked to predict the mark that they thought the draft report deserved. As an incentive, students were told that if their predicted mark was higher than, but within 5 percentage points of, the mark awarded, they would receive their predicted mark; for example, if the academic marked the work at 60% and the student predicted 64%, the student would receive 64%. There was no penalty for predicting a mark lower than that awarded by the academic marker. Students were given a further 7 days to complete this self-reflective element and improve their draft report based on this reflection. As part of the final submission, students were asked to name two or three aspects of their work on which they wanted detailed feedback. The draft report was weighted at 64% and the final report at 36% of the overall coursework mark. If no draft report was submitted, students still received the generic feedback but could gain marks only for the final submission.
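The incentive and weighting rules above can be stated precisely in a few lines. The Python sketch below is a minimal illustration, not the procedure used by the module team or its marking software; it assumes that “within 5%” means within 5 percentage points (as the 60%/64% example implies), that the boundary itself counts, and that a student who submitted no draft scores zero on the draft component. The function names are hypothetical.

```python
from typing import Optional

DRAFT_WEIGHT = 0.64  # draft report share of the coursework mark
FINAL_WEIGHT = 0.36  # final report share of the coursework mark
TOLERANCE = 5.0      # assumption: "within 5%" read as 5 percentage points, inclusive


def awarded_draft_mark(actual: float, predicted: float) -> float:
    """Draft mark a student receives under the prediction incentive.

    If the prediction is above the marker's mark but no more than TOLERANCE
    points above it, the prediction is awarded; otherwise, including any
    under-prediction, the marker's mark stands (no penalty).
    """
    if actual < predicted <= actual + TOLERANCE:
        return predicted
    return actual


def coursework_mark(final: float,
                    draft_actual: Optional[float] = None,
                    draft_predicted: Optional[float] = None) -> float:
    """Combine draft and final report marks (0-100) into one coursework mark.

    Assumption: with no draft submitted, the draft component is 0, so only
    the final report's 36% share can be earned.
    """
    if draft_actual is None:
        draft = 0.0
    elif draft_predicted is None:
        draft = draft_actual
    else:
        draft = awarded_draft_mark(draft_actual, draft_predicted)
    return DRAFT_WEIGHT * draft + FINAL_WEIGHT * final
```

With these definitions, `awarded_draft_mark(60, 64)` returns 64, matching the worked example, whereas a prediction of 66 or any prediction below 60 leaves the marker’s 60 unchanged.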

Staff Requirements

Staff marked the draft report against a rubric within Turnitin; the rubric automatically calculated the final percentage based on the individually weighted criteria (range: 0–100). No additional feedback comments beyond those provided in the generic feedback document and the marking rubric were given on the draft report. Following the submission of the final report, the changes made by the students were identified, and the work was regraded. If no changes were identified between the draft and final report, then the same mark was awarded for each submission. Detailed feedback comments on those aspects requested by the students were then provided.
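A rubric that turns individually weighted criteria into a single percentage can be sketched as a weighted average of the level achieved on each criterion, expressed as a fraction of that criterion’s maximum and scaled to 0–100. This is a generic illustration only: the text does not describe Turnitin’s internal calculation, and the criteria, weights and level scales below are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Criterion:
    name: str
    weight: float    # relative weight of this criterion (need not sum to 1)
    max_level: int   # highest level a marker can award on this criterion


def rubric_percentage(criteria: List[Criterion], levels: Dict[str, int]) -> float:
    """Weighted average of achieved levels, scaled to the range 0-100."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("criterion weights must sum to a positive value")
    weighted = 0.0
    for c in criteria:
        level = levels[c.name]
        if not 0 <= level <= c.max_level:
            raise ValueError(f"level for {c.name!r} must lie in 0..{c.max_level}")
        # Each criterion contributes its weight times the fraction achieved.
        weighted += c.weight * level / c.max_level
    return 100.0 * weighted / total_weight


# Hypothetical rubric, loosely echoing criteria that students mention later.
EXAMPLE_RUBRIC = [
    Criterion("Correct units given", weight=2, max_level=4),
    Criterion("Sufficient depth of biochemistry", weight=3, max_level=4),
    Criterion("Presentation", weight=1, max_level=4),
]

# Hypothetical levels awarded to one draft report.
draft_levels = {
    "Correct units given": 3,
    "Sufficient depth of biochemistry": 2,
    "Presentation": 4,
}
```

Because such a calculation is deterministic, re-marking an unchanged final report against the same rubric reproduces the draft mark, which is consistent with the rule that unchanged submissions received the same mark.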

Data Collection

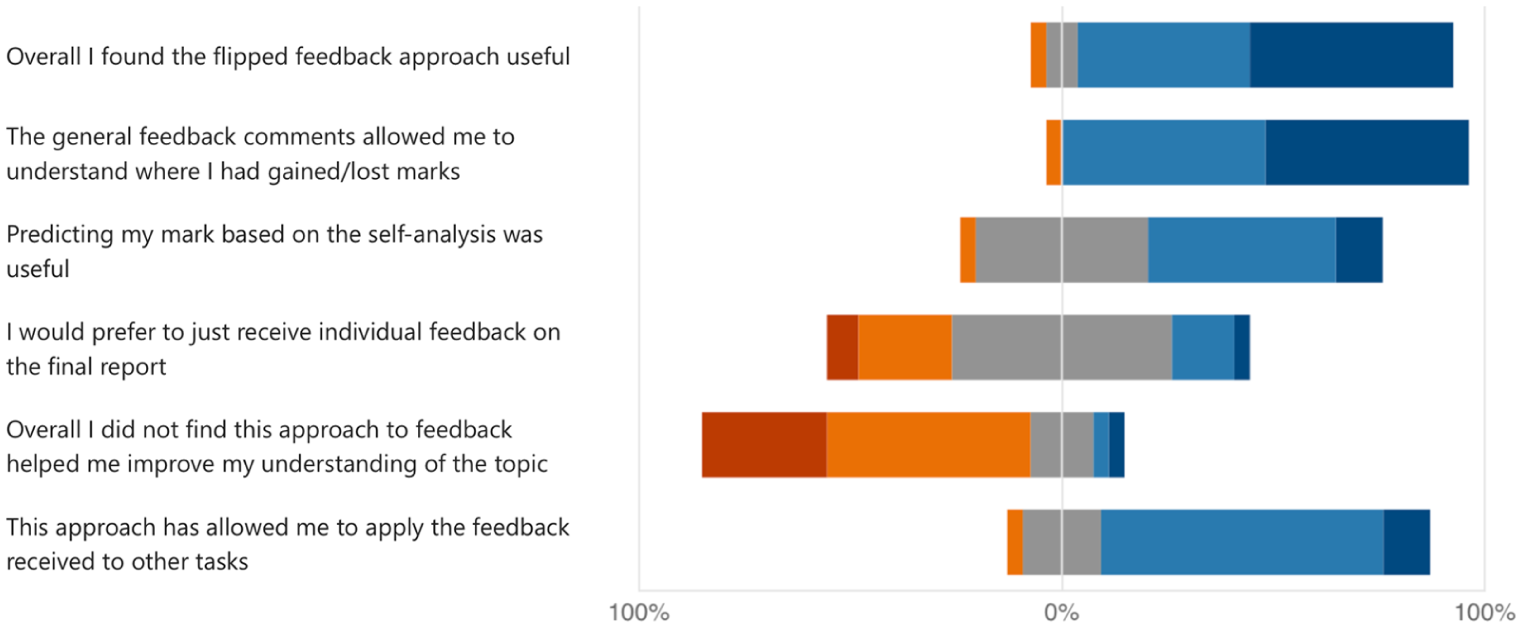

A mixed methods approach was adopted, incorporating draft and final report marks, which were collated by the academic markers. Alongside this, an electronic survey (Microsoft Forms) was administered 1 week after the final report was submitted, with a follow-up reminder sent via email. The survey consisted of six questions using a 5-point Likert scale (Strongly Disagree to Strongly Agree; see Figure 3 for full details) and three free-text questions (‘What did you like about this approach?’, ‘What did you dislike about this approach?’ and ‘How could this approach be improved?’), and was designed to assess students’ experiences with and perceptions of the flipped feedback approach. The items were formulated by the authors to capture opinions about all aspects of the approach. Completion of the anonymous evaluation was voluntary; students were not offered any incentive to complete the questionnaire and could request at any point, without penalty, that their module scores be excluded from the analysis.

Ethical Approval

The study was approved by Swansea University Medical School’s Ethics Sub-Committee (2019-0039).

Statistical Analysis

There were three parts to the analysis: a quantitative analysis of the marks before and after the intervention, a self-evaluation survey of the participants, and a thematic analysis of qualitative data on their experience. Only the first involved statistical testing.

The student marks were continuous and unimodally distributed, and the sample size was reasonable, so parametric methods were used. Since the treatment and control groups comprised different cohorts, an independent samples

We reported test statistics, degrees of freedom,
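The between-cohort and draft-versus-final comparisons reported below can be sketched with Python’s standard library. This is an illustrative sketch rather than the authors’ analysis code: it assumes Student’s pooled-variance form of the independent-samples t-test (the text does not state whether a Welch correction was used) and a paired t-test on within-student differences, and it returns only the test statistic and degrees of freedom. The function names are hypothetical.

```python
import math
from statistics import mean, stdev, variance


def independent_t(a, b):
    """Student's two-sample t statistic with pooled variance; returns (t, df)."""
    n1, n2 = len(a), len(b)
    df = n1 + n2 - 2
    # Pooled sample variance of the two groups (each uses n - 1).
    pooled = ((n1 - 1) * variance(a) + (n2 - 1) * variance(b)) / df
    se = math.sqrt(pooled * (1 / n1 + 1 / n2))
    return (mean(a) - mean(b)) / se, df


def paired_t(before, after):
    """Paired t statistic on within-student differences (after - before); returns (t, df)."""
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    diffs = [y - x for x, y in zip(before, after)]
    n = len(diffs)
    sd = stdev(diffs)
    if sd == 0:
        raise ValueError("differences have zero variance")
    return mean(diffs) / (sd / math.sqrt(n)), n - 1
```

To obtain p-values, the statistic would be referred to a t distribution with the returned degrees of freedom; in SciPy, `scipy.stats.ttest_ind(a, b, equal_var=True)` and `scipy.stats.ttest_rel(after, before)` compute the same statistics directly (`ttest_rel` subtracts its second argument from its first).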

Thematic Analyses of Free-Text Data

Free-text responses were analysed using inductive thematic analysis as described by Braun and Clarke (2006), following best-practice standards for reporting qualitative data (O’Brien et al., 2014).

Participants were asked three free-text questions: (1) ‘What did you like about this (flipped feedback) approach?’, (2) ‘What did you dislike about this (flipped feedback) approach?’ and (3) ‘In what way could this approach (flipped feedback) be improved?’ Data were downloaded from Microsoft Forms and placed into an Excel spreadsheet.

A manual inductive approach was adopted to determine the frequency of keywords and identify themes in the student responses. Themes were then grouped and checked for reliability by reading and re-reading the comments. This process was performed independently by both raters (NF and KS), who then reviewed and compared their analyses to agree on the final themes and selected quotes. An inductive approach was chosen because the primary aim of the project was exploratory: to examine how students engaged with and responded to the flipped feedback approach rather than to test any predefined hypotheses.

Results

Module Performance

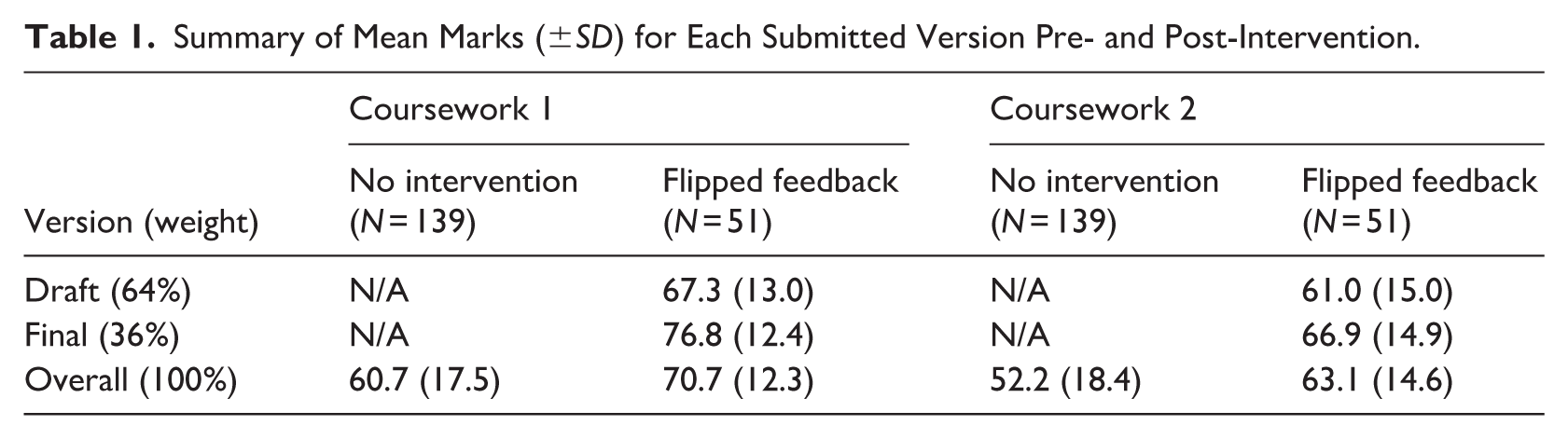

Our analysis dataset consisted of the marks obtained for two coursework assignments by two control cohorts (no-intervention group), who did not receive flipped feedback, and one intervention cohort (flipped feedback group), who did. The intervention and control cohorts contained 60 and 149 records, respectively. The final dataset included only those students who had submitted all components of both assessments, giving sample sizes of 51 and 139 (intervention and control groups, respectively) for all reported analyses. A summary of the draft, final, and overall marks received by each group can be found in Table 1.

Summary of Mean Marks (±

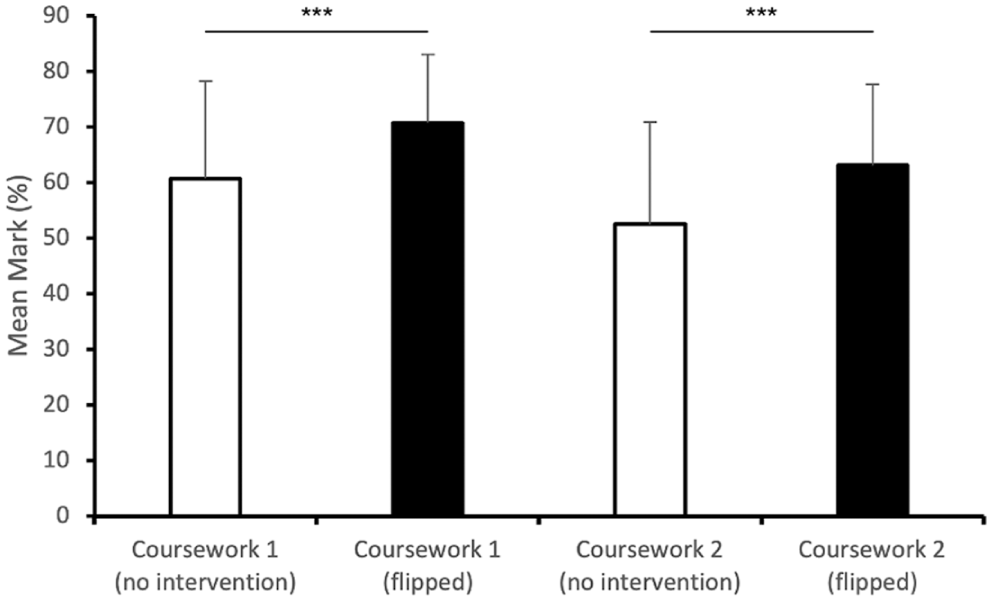

With four different sets of marks (two assessments, two cohorts), several different comparisons were possible. First, we determined whether the flipped feedback approach impacted the overall marks between the intervention and control cohorts. For coursework 1, students in the intervention group had a higher overall mark (

Mean overall marks (±

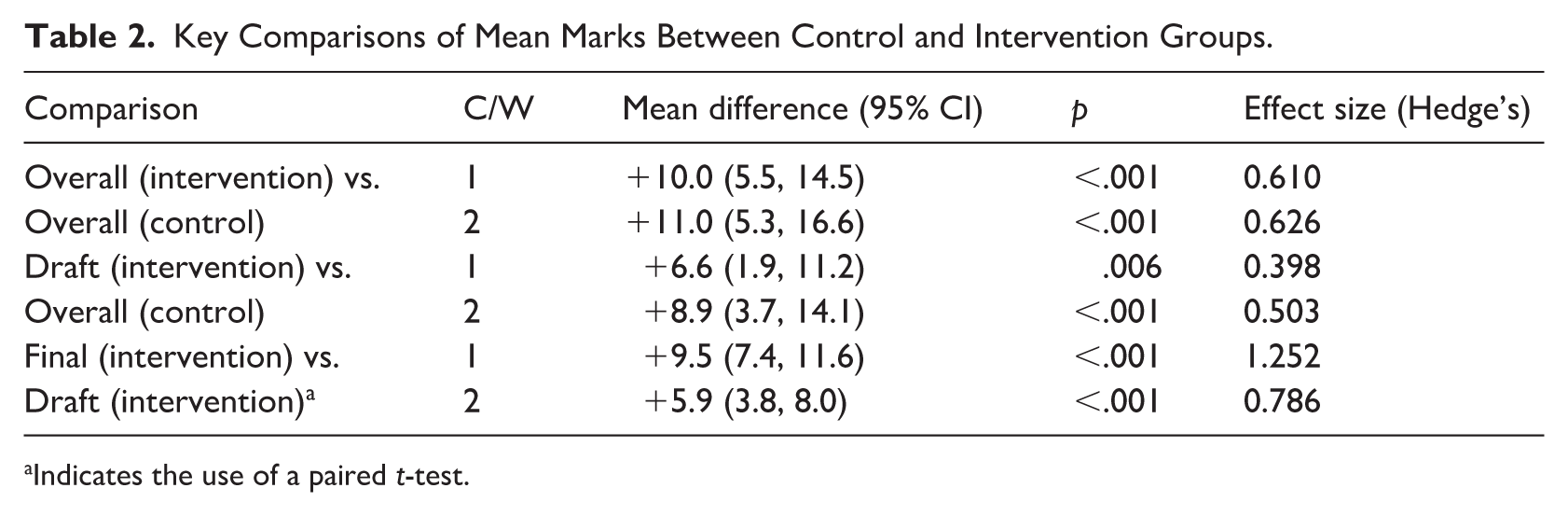

To ensure our conclusions are robust, we also made a comparison between the overall marks for the control cohort(s) and the marks for the draft reports in the intervention cohort since these were submitted before the students received the intervention (Table 2). We observed that, for Coursework 1, the baseline is higher in the intervention group (

Key Comparisons of Mean Marks Between Control and Intervention Groups.

Indicates the use of a paired

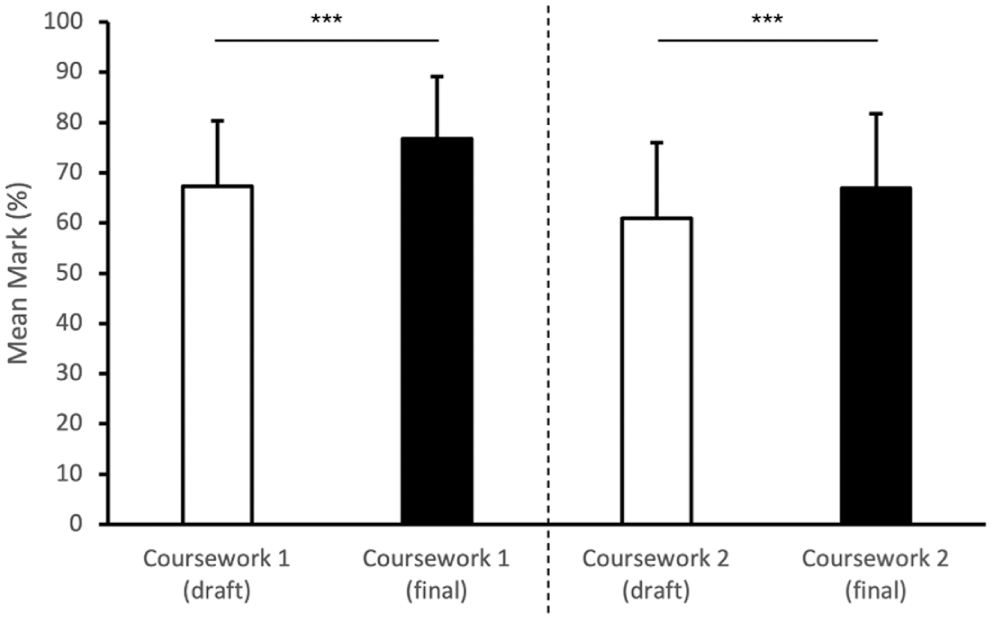

The key comparison, however, was between the draft and final submission for each piece of coursework to determine whether engagement with the flipped feedback approach resulted in improved academic performance (Figure 2). For Coursework 1, we saw an increase from the draft version (

Comparison of mean marks (±

Finally, to try to ascertain whether students were able to use feedback from Coursework 1 in Coursework 2, we examined the correlation between each student’s improvement from draft to final submission in Coursework 1 and their corresponding improvement in Coursework 2. Spearman’s rho indicated a weak but significant correlation (
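The improvement correlation described above can be sketched as follows: compute each student’s draft-to-final gain in Coursework 1 and in Coursework 2, rank each list (giving tied values the average of their ranks), and take the Pearson correlation of the ranks, which is Spearman’s rho. The function names are hypothetical, and the sketch returns rho only; a significance test would need a statistics library such as `scipy.stats.spearmanr`.

```python
import math
from statistics import mean


def average_ranks(values):
    """Rank values from 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # 0-based positions i..j become 1-based ranks
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


def improvements(drafts, finals):
    """Per-student gain from draft to final submission."""
    return [f - d for d, f in zip(drafts, finals)]


def improvement_correlation(cw1_draft, cw1_final, cw2_draft, cw2_final):
    """Spearman's rho between per-student gains in Coursework 1 and Coursework 2."""
    return spearman_rho(improvements(cw1_draft, cw1_final),
                        improvements(cw2_draft, cw2_final))
```

Ranking before correlating makes rho sensitive only to the ordering of students’ gains, not to their size, which suits mark improvements that may not be linearly related across the two assignments.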

Student Evaluation

Overall, 27/60 (45%) of students completed some or all questions in the evaluation survey. A vast majority (89%) of the students who completed the survey felt that the flipped feedback approach was useful (Figure 3; Q1). Similarly, 96% agreed that the general feedback comments helped them understand where they had gained or lost marks (Figure 3; Q2). The self-analysis received more moderate support, with 55% of respondents agreeing that it was a useful part of the approach (Figure 3; Q3). In addition, 79% of respondents agreed that this approach would allow them to apply the feedback they had received in this task to future tasks (Figure 3; Q6). A negatively phrased question was also included (Figure 3; Q5); responses to it indicated that 78% of respondents saw the intervention as having a positive effect on their understanding of the subject matter. Interestingly, despite this general positivity, opinion was split when students were asked whether they would have preferred simply to receive their individual mark, with 30% disagreeing and 19% agreeing (Figure 3; Q4).

Stacked bar chart indicating the percentage of student responses to the Anonymous Questionnaire.

Thematic Analysis

Students were asked three free-text response questions about what they liked, disliked, and thought could be improved about the flipped feedback approach. These questions were answered by 26 of the 27 respondents (96%). This section synthesises the key themes derived from the qualitative data to present an overview of the student experience.

What Did Students Like About This Approach?

Students highlighted several positive aspects of the flipped feedback approach. These were grouped into two key themes: ‘opportunities for iterative learning’ and ‘clarity and guidance from feedback information’.

Opportunities for Iterative Learning

A major perceived benefit of the flipped feedback approach was the opportunity for students to reflect on their initial submissions and improve their work based on the feedback information. Students appreciated the iterative nature of the process, which allowed them to refine their understanding and skills.

I liked the fact that before we get marks, we could assess ourselves and understand where we may be losing marks. I feel that I learnt more from the approach because I had the chance to go back through my report and correct things I would never have otherwise known I did wrong. Nit-picking at my own work, allowed me to be critical in what I had already written.

This iterative process also provided a sense of progress, as students could directly see the impact of their revisions.

I was able to improve on my draft to improve my mark and hand in a final copy to show what I had learnt and how I applied that. I liked the fact that I was able to use the self-analysis to gain a better understanding of the requirements of the task and how I could improve my work. I appreciated the chance to then directly incorporate this feedback into a final draft which would contribute to my mark.

Clarity and Guidance From Feedback Information

Students valued the clarity provided by the feedback information and its alignment with the marking criteria. They highlighted how this guidance helped them focus their efforts on specific areas for improvement.

I liked how you could see where I had potentially gained and lost marks as it was a good way of working out how well I’d potentially done. I was able to see where I hadn’t properly answered the questions and add more information so I could gain more marks for the final submission.

The availability of detailed feedback information and access to the marking criteria also provided reassurance and helped students feel more in control of their learning.

The access to the mark scheme allowed for potential improvement in the final version; it allowed me to see which areas I needed more detail too.

What Did Students Dislike About This Approach?

Students identified several challenges associated with the flipped feedback approach. These were grouped into two broad themes: ‘clarity of task requirements and feedback information’ and ‘time and process challenges’.

Clarity of Task Requirements and Feedback Information

Although several students mentioned the guidance as a positive aspect of the approach, there were also recurring concerns among other students that the generic feedback lacked clarity and that the task instructions were unclear. While the generic feedback information provided a foundation for improvement, some students felt it lacked the specificity needed to address their concerns effectively.

Sometimes, when reflecting on our work, it was difficult to know whether we were right or wrong. For example, it said “correct units given” but didn’t state what the correct units were, so it wasn’t clear whether I was originally right or not. The scheme that was given was sometimes unclear about how much detail it wanted for each point.

These quotes highlight the importance of providing actionable and detailed guidance to support students in meeting expectations. Another issue related to guidance was the difficulty students experienced with self-prediction. A number of students reported challenges in evaluating their own work and estimating their grades.

I found it a little hard to predict my own percentage. I did not want to be overly confident, but I also did not want to score myself too low! I think with practice, I will become more neutral in judging and critiquing myself not too harshly, and not too hopefully! It was difficult to decide on a grade for myself as I do not know what standard of work would normally correspond to what grade.

This highlights the need for additional support and scaffolding to help students develop confidence in their evaluative judgement.

Time and Process Challenges

Students also raised concerns about the time constraints imposed by the flipped feedback process. A common issue was the tight timeline for draft submissions, which left participants feeling rushed and stressed.

Felt a bit rushed to write the first draft (short amount of time). Increased pressure to finish the draft within a week.

Participants further disliked that the draft was more heavily weighted than the final report.

I didn’t like that we had to do a draft which was worth most of the grade and then do more work at a later stage. I didn’t see the benefit. That the first submission counted for a higher percentage.

These concerns suggest that adjusting the timelines and/or the balance of marks between draft and final submissions to better accommodate students’ workload and priorities would benefit the flipped feedback approach.

How Could This Approach Be Improved?

Student feedback provided valuable suggestions for improving the flipped feedback approach. These insights were consolidated into two overarching themes that mirrored the aspects of the approach that students disliked: ‘enhanced feedback information clarity and guidance’ and ‘adjustments to timing and workload’.

Enhanced Feedback Information Clarity and Guidance

A recurring suggestion was for feedback information to be more specific and actionable. Students felt that some feedback lacked sufficient detail, making it difficult to apply improvements effectively.

More specific feedback in certain areas of the reflection. If a model answer could be provided so that we could see the structure and flow of answering the questions. Points such as ‘sufficient depth of biochemistry’ could be clearer as to how much is enough/needed.

In addition to more detailed feedback, students requested clearer marking criteria and instructions. These would provide a better understanding of the expectations and how to meet them.

Clearer criteria on how to achieve higher marks would make it easier to focus on the right areas.

Adjustments to Timing and Workload

Students frequently mentioned the need for additional time to complete draft submissions.

More time between practical and draft submission.

Suggestions included extending the draft deadlines or adjusting the submission schedule to better align with students’ workloads.

Increase the amount of time allowed for the draft submission even if the time allowed for feedback application is shortened.

These comments suggest that reducing stress associated with the timeline for submissions would enhance satisfaction and enable students to engage more with the process.

Discussion

The results of this study suggest that students engage well with the flipped feedback approach, with an associated improvement in academic performance. This study contributes to the broader discourse on feedback literacy by providing empirical evidence of how flipped feedback can effectively enhance students’ self-evaluation skills, a critical component of feedback literacy. While previous research has emphasised the importance of feedback literacy in fostering student engagement with feedback processes (Carless & Boud, 2018; Nieminen & Carless, 2023), our study extends this by suggesting that integrating self-assessment within a flipped feedback model not only improves students’ engagement with feedback but also strengthens their capacity for accurate self-evaluation. By encouraging students to critically assess their own work against established criteria before receiving personalised feedback, the flipped feedback approach directly supports the development of self-evaluation skills, which are essential for effective feedback literacy and lifelong learning.

The flipped feedback model incorporated several key strategies that empowered students to take ownership of their learning and engage actively with the feedback process: the provision of generic feedback on common errors, self-assessment against the marking criteria, and requests for specific feedback (Pitt & Winstone, 2023). As described here, the approach represents an advance in developing students’ ability to engage with and benefit from feedback. By flipping the traditional feedback model, it places students at the centre of the feedback process and encourages active engagement and self-assessment. This aligns with contemporary research highlighting the importance of student agency in evaluative judgement. The flipped model not only complements existing theories by providing practical applications but also enriches them by demonstrating how student engagement with feedback can be enhanced, thereby deepening students’ evaluative skills. This is a crucial step in developing autonomous learners capable of critical self-reflection, a key goal in higher education.

Importantly, this model also has implications for staff workload. Traditional feedback approaches require markers to provide detailed, often repetitive comments on multiple student submissions, which can be time-consuming (Amrhein & Nassaji, 2010; Gibbs & Taylor, 2016). By integrating self-assessment and targeted feedback requests, flipped feedback redistributes some of this workload, allowing for more efficient use of feedback resources. While initial implementation may require additional guidance for students, the structured nature of this approach has the potential to improve the efficiency of feedback provision without compromising quality.

The flipped feedback approach shows considerable promise for simultaneously developing evaluative judgement and enhancing engagement with feedback. Actively involving students in the feedback cycle through self-assessment and targeted feedback requests equips them with the critical skills needed to assess their own work against academic standards and fosters a deeper connection with the learning process. The significant improvement in overall coursework scores following the flipped feedback intervention, compared with previous cohorts, suggests that the approach was effective, and the significant gains between draft and final submissions indicate that the process motivated students to implement feedback to improve their work. Disappointingly, the weak correlation between the mark increases in the two pieces of coursework suggests that students were able to apply the feedback received on Coursework 1 to Coursework 2 only to a limited degree. Ways of making the link between the two pieces of work more explicit, so that students can see where the feedback lands, should be investigated; one option is to disentangle the feedback from the assessments (Winstone & Boud, 2022). Alternatively, the short turnaround between the two submissions may not have allowed students sufficient time to process the feedback comments and apply them effectively. Trialling the flipped feedback approach on more widely spaced assessments might shed light on this.

The overwhelmingly positive perceptions of flipped feedback revealed in the thematic analysis help explain the observed quantitative improvements. Themes such as iterative learning opportunities and enhanced clarity and understanding illustrate how students actively engaged with the feedback process to identify and address weaknesses, resulting in improved performance. However, the challenges students reported, namely unclear guidance and time constraints, point to two areas for refinement: more explicit guidance and optimised submission timelines. Addressing these concerns could enhance the model’s effectiveness and further align it with pedagogical principles emphasising student agency and feedback literacy (Carless & Boud, 2018; Nieminen & Carless, 2023).

These findings align with previous studies highlighting the importance of specific, individualised comments for engaging students with feedback and for effective feedback uptake (Ferris, 1997; Tai et al., 2018). Ferris (1997) found that students who received more specific feedback were more likely to revise their work and showed greater improvement in their writing. Similarly, Uhm et al. (2015) highlighted the positive impact of tailored feedback on medical students’ communication skills, demonstrating its effectiveness in enhancing both student motivation and academic performance. The incorporation of specific, individualised feedback in the flipped feedback approach is therefore likely to have contributed to the observed improvements in student performance and to students’ positive perceptions of the feedback process.

The use of open-ended, short-answer survey questions for qualitative analysis has attracted some criticism (LaDonna et al., 2018). However, in combination with the quantitative analysis, the qualitative findings add valuable insight into student perceptions of the flipped feedback approach.

Some students did, however, express concerns about the accuracy of their self-assessment and the weighting of the draft versus the final submission. This aligns with previous research showing that students may not always be accurate or confident in their self-assessment (Andrade & Valtcheva, 2009). Factors such as gender, academic performance level, and the clarity of assessment criteria have been found to influence self-assessment accuracy (Jackson, 2014). The split opinion on receiving an individual mark before self-evaluation implies that further refinement is needed to increase student satisfaction with flipped feedback. This division mirrors the mixed findings of research on the impact of mark withholding on student engagement with feedback: while some studies suggest that withholding marks can encourage students to focus on feedback and improve their work, others have raised concerns about potential negative effects on student motivation and anxiety. Kuepper-Tetzel and Gardner (2021) found that temporary mark withholding had positive effects on academic performance, whereas Mires et al. (2001) reported that delays in receiving marks could be a source of anxiety for students. The mixed opinions in this study suggest that the timing of mark release in the flipped feedback process warrants further investigation to optimise student engagement and satisfaction. Nonetheless, the generally high agreement that flipped feedback enhanced subject understanding suggests that most students recognise its potential longer-term learning benefits beyond simply improving their marks.

While this study provides initial evidence for the value of flipped feedback, it has several limitations. The small cohort from a single institution and discipline limits the generalisability of the quantitative results. The lack of demographic data is an institutional limitation, although the cohort is representative of the discipline more broadly. Because we compared different cohorts, we cannot definitively attribute the improvements in marks to the flipped feedback intervention. Furthermore, the study was implemented in a specific educational context that may not fully capture the diversity of student experiences and learning environments. The effectiveness of flipped feedback may vary across socio-cultural contexts, as the development of feedback literacy is influenced by the specific academic and social settings in which learning occurs (Nieminen & Carless, 2023). The reliance on self-assessment as a key component of the model may also present challenges for students who are less confident in their evaluative judgement or who do not fully understand the criteria. Future research should therefore explore whether similar outcomes are achieved in other settings, compare flipped feedback with standard practices, and examine how the approach can be adapted to different contexts and supported by additional scaffolding to address these challenges. Investigating how well students can transfer feedback literacy skills to new modules would also be insightful.

Refinements that could enhance student satisfaction with the intervention include a longer time frame for completing the draft submission and a shorter period for the final submission. This suggestion aligns with Carless’ (2019) concept of the ‘temporal and iterative nature of feedback spirals’, which emphasises the importance of giving students ample time to engage with and act upon feedback. Extending the draft submission time frame could allow for more thorough self-assessment and reflection, leading to more meaningful revisions. Conversely, a shorter final submission period could encourage students to focus on incorporating the feedback received and avoid unnecessary delays in completing the feedback loop. By optimising the time frames for each stage, the flipped feedback approach may better support the iterative nature of learning and enhance the development of students’ feedback literacy. Increasing the detail in the general feedback comments may also help students make more sense of the reflective task. From a staff perspective, asking students to submit their final version with tracked changes would reduce the time markers need to identify changes between the draft and final versions.

In conclusion, this study suggests that flipped feedback may help overcome engagement barriers by enabling students to self-evaluate, apply feedback information, and request personalised comments. Extending this model across different disciplines, assessment types, and institutions is warranted to determine the wider potential of flipped feedback to enhance students’ evaluative judgement and their experience of assessment and feedback. Overall, this study provides important insights into the potential of flipped feedback to enhance feedback literacy and improve student learning outcomes. By situating this approach within the broader discourse on feedback literacy, we offer a new lens through which to view the role of feedback in higher education. Future research should continue to explore how different feedback models, including flipped feedback, can be optimised to support diverse student populations and how these models can be integrated into broader institutional practices to foster a more inclusive and effective learning environment. The role of technology is also an important consideration, including alternative forms of feedback information provision, such as video or audio (Mahoney et al., 2019), and the emergent role of generative AI (Usher, 2025). It would also be valuable to explore how students’ individual differences influence their engagement with flipped feedback (Winstone et al., 2021).

Footnotes

Ethical Considerations

The study was approved by Swansea University Medical School’s Ethics Sub-Committee (2019-0039).

Consent to Participate

Informed consent was obtained in writing via the completion of the online, anonymous survey. Participant information was provided in writing via email to all enrolled students on the module.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author upon reasonable request.