Abstract

The evolution of machine learning and large language models (commonly referred to as “artificial intelligence” [AI]) presents both opportunities and challenges for teaching and learning across K–12 and higher education contexts globally. Among the most pressing concerns is that these tools can undermine the integrity of student assessment and evaluation systems. This article investigates this timely issue by examining the intersections between AI, academic integrity, and assessment innovations through a cross-national research synthesis, resulting in a novel model for educators, policymakers, and researchers. The proposed model promotes assessment policies and practices that support high integrity, authentic learning, and innovative student assessment in an era of generative AI.

Artificial intelligence (AI), a field founded on “the conjecture that every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it” (McCarthy et al., 1955/2006, p. 12), has permeated public consciousness for decades. From Stanley Kubrick’s HAL 9000 to Gene Roddenberry’s Data to Roy Thomas’s Ultron, dozens of depictions have engaged the appeal and threat of machines capable of simulating human intelligence. Yet teaching and assessment have long been seen as impervious to these science fictions. Frey and Osborne (2013), for example, estimated that teachers’ work was less vulnerable to computerization than the work of clergy, dancers, nuclear engineers, and hundreds of other occupations (pp. 61–77). Enter ChatGPT.

Released by OpenAI in November 2022, ChatGPT 3.5 led to an explosion of interest in AI (Bond et al., 2024), particularly in the form of large language models. In the ensuing 3 years, dozens of tools for generating language, code, images, video, and music have captured public and economic attention (UNESCO, 2023), with new “earthquakes” upturning norms faster than many teachers can adapt (Bloom, 2025). Perhaps most impressively, current AI tools can already generate responses that teachers cannot distinguish from human creations (Kumar & Mindzak, 2024).

As classroom assessment researchers, we have seen the effects of these changes in our own contexts. With each new AI tremor, students, teachers, administrators, and fellow instructors have asked for guidance on how to approach assessment in this digital age. They wonder: Should I bar students from using AI? Can I realistically do so? Should I use these tools to reduce my own workloads? What are the promises and pitfalls of doing so? Drawing on literature related to AI, academic integrity, and classroom assessment, this article responds to such questions by presenting a novel model for the future of classroom assessment in a digital world. We use this model to articulate essential components for forward-looking assessment systems and then explain how the model can guide decisions for policy and practice.

We begin, however, by defining classroom assessment and the concurrent purposes it serves in K–12 and postsecondary classrooms. “Classroom assessment” refers to the ongoing process of gathering information about student learning and using multiple forms of evidence to make decisions about students’ performance and progress. It includes diverse assessment purposes (reasons to gather, interpret, act on, and communicate assessment data) that are undertaken daily by students, teachers, administrators, families, and policymakers. These purposes encompass formative and summative assessment, also called assessment for/as/of learning (Black & Wiliam, 2018; Shepard et al., 2018), which attend not only to the assessment events and products students create but also to the ongoing adaptive processes necessary to understand students’ current learning and the implications for future teaching, learning, and assessment (Council of Chief State School Officers, 2023). Contemporary classroom assessment is understood as a socially, culturally, and contextually situated act (Shepard et al., 2018) that requires teachers to negotiate knowledge in relation to context and make intentional decisions about how best to support student learning (Coombs & DeLuca, 2022; DeLuca et al., 2023; Pastore & Andrade, 2019).

Rethinking Assessment in a Digital Age: The AI3 Model

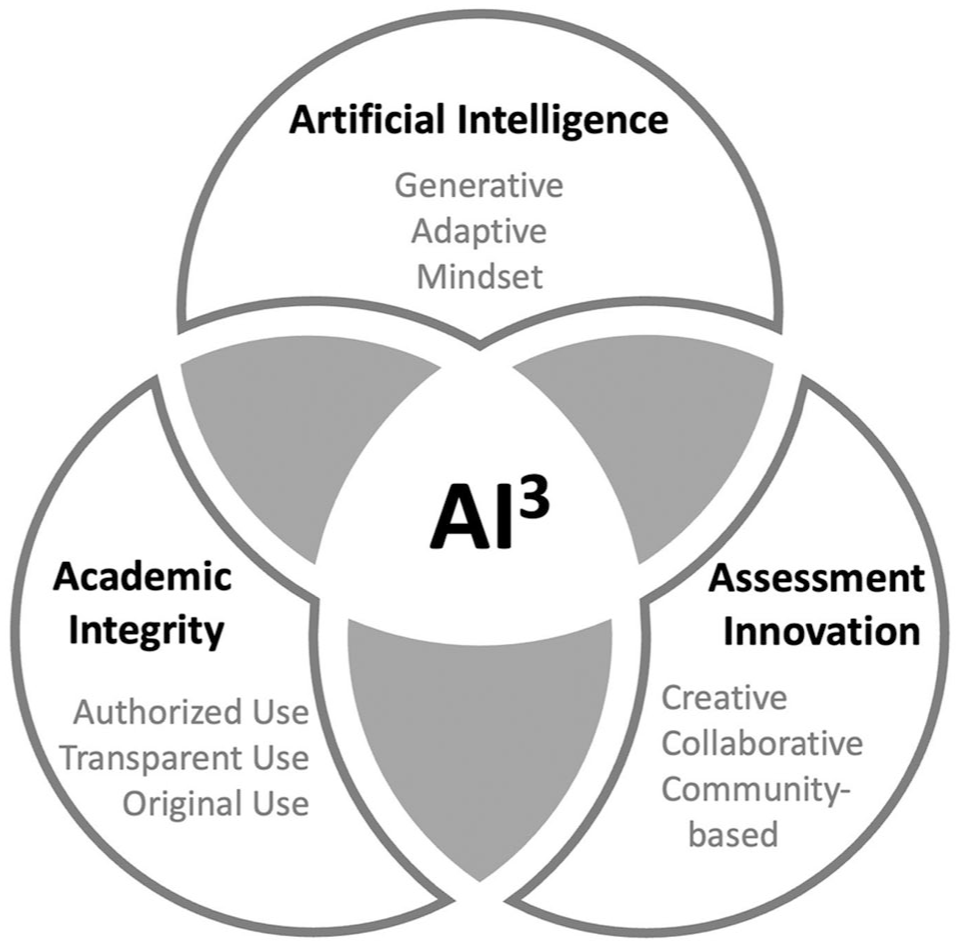

In response to rapid advances in AI and their implications for education, and to propel forward-looking systems of assessment, we propose a conceptual model that intersects three scholarly perspectives: AI, academic integrity, and assessment innovation. We call this approach the AI3 model. We contend that all three perspectives are necessary for innovative and equitable teaching and assessment in a digital age. Ignoring AI is a head-in-the-sand approach in which students are left to use (or not use) AI tools without pedagogical support, such as when schools articulate no AI policy whatsoever. Ignoring AI in this way increases the risk of students misunderstanding these tools’ capabilities and limitations (Celik et al., 2022; Gros et al., 2022) and exacerbates gaps in students’ knowledge of, comfort with, and access to increasingly ubiquitous technologies (Dieterle et al., 2024). Similarly, ignoring academic integrity, defined as “the values, behaviours, and conduct” of people in academic spaces (Macfarlane et al., 2012, p. 340), has serious implications for privacy, data literacy, and equity. For example, absent an academic integrity framework, teachers may use AI detection software without considering its limitations, students’ intellectual property rights, or biases against language learners (Liang et al., 2023). Finally, ignoring assessment principles, and particularly the assessment innovations teachers leverage in their daily practice (DeLuca et al., 2024), risks creating inconsistencies between espoused pedagogies and students’ actual assessment experiences (Black & Wiliam, 2018).

The AI3 model (Figure 1) is intended to help policy, education, and research communities promote assessment with integrity in the face of AI. As a starting point, in the following sections, we briefly describe research from each of the three perspectives.

Figure 1. AI3 model: the future of classroom assessment in a digital world.

Artificial Intelligence in Education

As a field, artificial intelligence in education (AIED) is now decades old (McCarthy et al., 1955/2006; Roll & Wylie, 2016; Turing, 1950). AIED scholars have argued that rather than promoting tools to replace teachers, “the central role of AI in education . . . is to facilitate learning and teaching processes” to meet specific classroom needs (Molenaar, 2022, p. 633). Focusing on possibilities, AI tools can reduce the amount of time teachers need to spend on administrative minutiae (Celik et al., 2022), personalize assessment and learning opportunities to students’ specific needs (Cope et al., 2021), gather real-time feedback about student learning (Holstein et al., 2019), support cognitive and emotional engagement (Guo & Wang, 2025), and foster student metacognition (Lodge et al., 2023). Yet these promises are intertwined with safety and security concerns, bias issues, and general reluctance (European Commission, 2012; Pew Research Center, 2025). These issues are well founded because AI tools can perpetuate racial, gender, and socioeconomic bias (Akgun & Greenhow, 2022); invite incorrect anthropomorphic comparisons (e.g., that a tool can think or know; Gros et al., 2022); sidestep teachers’ professional expertise (Swiecki et al., 2022); and facilitate undetectable cheating that undermines student learning and assessment validity (Tlili et al., 2023; UNESCO, 2023). Far more than just another technology in a long line of innovations (e.g., social media, the internet, calculators, the printing press, the written word), AI tools necessitate a fundamental rethinking of the structures of assessment (Corbin et al., 2025; Lodge et al., 2023).

This need to rethink assessment directly implicates teaching and learning. Consider two common learning goals for student research: (a) gathering and synthesizing information and (b) evaluating the quality of that information and its sources. Uncritical use of AI tools could lead to invalid assessment results and superficial (or absent) learning, particularly if the output includes nonexistent sources or simplistic treatments of complex or controversial materials (de Fine Licht, 2024; Maleki et al., 2024; Schlemmer, 2024). Part of the complexity of AIED is rooted in questions of which tasks are performed by AI tools (i.e., offloading), which tasks are performed by students (i.e., learning), and how certain tasks might be co-conducted by students and AI tools concurrently (Lodge et al., 2023). Specifying how, when, and why students should (not) use AI (Perkins et al., 2024) and providing them with formative feedback throughout their learning supports students in developing more defensible searches and digital literacy skills and creates opportunities for critiquing AI outputs against set criteria.

The generative and adaptive nature of AI means that AI tools and models continue to evolve, rapidly feeding on the proliferation of content produced. Some have cautioned that this could lead to an ouroboros effect, where new machine-generated content is based almost exclusively on previously generated content, with less and less human-generated content available. Such projections may be overstated but illustrate that it is precisely the generative context of AI that gives rise to both opportunity and concern for classroom assessment: opportunity to harness tools to scaffold and support learning and concern for academic integrity and authentic learning. Our argument is that these concerns can be addressed by foregrounding academic integrity alongside assessment innovation.

The AI applications currently at the gates of today’s classrooms are only the seedlings of possible future ecologies for students’ and teachers’ daily lives. ChatGPT 3.5, for example, has already been followed by ChatGPT Plus and ChatGPT 4 and new competitor products and plugins that did not exist a decade ago (Hines, 2023). Meta, Microsoft, and Google all utilize AI in user-facing products that directly shape how people engage the world around them. It is not enough to respond with a mindset that embraces innovations of the past, one that repeats past architectures of assessment. Nor is it sufficient to respond only to the risks or opportunities of the present (e.g., ChatGPT, DeepSeek, Midjourney, Voicemod). Instead, forward-looking educators are flexible enough to adapt to the changing and continuously evolving AI landscape by anchoring their assessment decisions in models like AI3. Such educators engage in ongoing learning to adapt to new technologies and can pivot toward more innovative assessment practices that consider student learning, safety, and academic integrity.

Academic Integrity

In some educational spaces, academic integrity is invoked primarily in terms of student (mis)conduct—namely, cheating and plagiarism. Yet academic integrity extends beyond mere student (mis)conduct to include a wide range of practices, policies, and values. Synthesizing the most recent scholarship, Eaton (2023, pp. 1–9) identified eight layers of academic integrity: (a) everyday ethics, such as how students, teachers, and communities are expected to treat one another; (b) institutional ethics, such as whether a school articulates its ethical standards and whether those standards actually reflect students’ lived experiences; (c) ethical leadership, such as whether principals and superintendents model ethical behaviors in their schools/districts; (d) professional and collegial ethics, including professional standards, codes of conduct, and formally regulated norms, such as the duty to report or teachers acting in loco parentis; (e) instructional ethics, including the alignment between teachers’ instructional practices and the ethical standards that students are held to; (f) student academic conduct, which articulates what students are expected to do (or not do) in academic work; (g) research integrity and ethics, including safeguards for acceptable research practices; and (h) publication ethics, including knowledge creation and mobilization. Layers b, e, and f are particularly important to classroom assessment; however, Layers a, c, and d support broader ethical practices in teachers’ work and schools.

Fundamentally, this means that AIED goes beyond headline-grabbing concerns about plagiarism or cheating on essays and exams. In the AI3 model, academic integrity calls attention to (a) authorized use: making policies about the authorization of AI use in classroom assessments explicit (i.e., Layer b); (b) transparent use: making clear how students should report on their use of AI in their learning and assessment (i.e., Layer e); and (c) originality: cultivating an understanding in students that their work must maintain original components reflective of their learning to align with academic integrity and conduct (i.e., Layer f).

Consider the following examples. Taha and Ashton teach in a school whose district encourages responsible AI use but leaves specific policies at teachers’ discretion. Concerned about bias, reliability, and student learning, Ashton forbids students from using AI. He redesigns assessments to occur mostly in person (e.g., written tests and oral examinations) and gives students explicit instructions not to use AI tools on take-home tasks. Ashton facilitates a detailed discussion at the start of term on why AI use constitutes academic misconduct but is frustrated when he receives work that he believes is AI generated. Taha, who teaches next door, has embraced AI, using a variety of tools with students to explain new concepts, provide formative feedback, and teach about editing, revisions, and argumentation. Taha provides guidelines for using AI (e.g., essay outlining and later editing) and incorporates feedback opportunities on students’ works in progress to examine how they “go beyond” the tools’ outputs. By the end of the semester, their vice principal has noticed vastly different learning outcomes between the two classes and has heard grumblings about “fairness” from students and families. Some of Ashton’s students have complained they were punished for the same acts Taha’s students were encouraged to pursue, and parents of some students in Taha’s class are concerned that “AI is doing the work for them” and “their child isn’t really learning.”

These cases, de-identified examples from our own research and practice, highlight the complexities and interconnection of AI and academic integrity. Ashton’s response may do little to address how students (mis)learn outside his classroom walls, especially in contexts where many students are using AI tools, often without pedagogical guidance (Gruzd et al., 2025; KPMG, 2024). Likewise, Taha must be attentive to which processes and products are from students and which are artificially generated. Although learning to navigate AI tools can be valuable, teachers like Taha must distinguish between uses that enhance student learning and uses that supplant it (i.e., whose learning, of what, to what ends). Their vice principal, too, must navigate issues of fairness, context, professional discretion, and divergent views about AI. Asking teachers, students, and administrators to navigate these dilemmas without school-, district-, and state-level policies undermines their ability to act ethically and equitably. It is also not enough to ban AI or declare an open season. Teachers must attend concurrently to AI, academic integrity, and assessment innovation.

To meet these challenges, we argue that teachers and schools should develop a three-level policy to guide practice. Level 1 includes providing students with clear guidance on authorized use—when AI can be used in their assessment task. At the classroom level, this can be co-articulated with students to enhance understanding and agency with exemplars. For example, a teacher may break down the final task of creating a brochure about global warming and show that students can use ChatGPT to identify key headings or key points but that they must verify these points with additional research. Level 2 of the policy—transparent use—should include clear guidance to students on how they should declare their use of AI in assessment activities. This level involves students showing how AI influenced their learning, including agreed-on practices for citing or documenting AI use (e.g., Committee on Publication Ethics, 2023; Moya & Eaton, 2023). This principle could be enacted not only by methodologically describing how AI tools were used in the creation of work (i.e., an AI log with prompts and outputs) but also by discussing how such outputs contributed to learning (e.g., What did the student change from the output? Did the tool provoke new lines of thought in the student’s work?). Pedagogically, teachers can engage students in conferences to discuss the role of AI in their assignments or invite student reflections and AI-graphies. The final level involves educating students about the value of original work in specific contexts. For example, a teacher may ask students to analyze case studies exploring the ethics of originality in different situations (e.g., grading student work, at the doctor’s office, creating art). The grounds for ethical, original use will understandably vary depending on the learning outcomes at play. 
Just as it is ethically indefensible for teachers to rely on AI tools to prove academic misconduct (Elkhatat et al., 2023; Weber-Wulff et al., 2023), student work needs to be distinct from the outputs of AI applications for it to be ethically considered representative of actual student learning. This is consistent with the long-standing contention that for student learning to occur, students need to have agency and ownership over products of that learning (Brookhart, 2013).

Assessment Innovation

AI tools have laid bare the need to rethink assessment (Volante et al., 2023a, 2023b). Indeed, AIED researchers have long advocated for assessment practices that account for teachers’ specific contexts and the capabilities of new tools (Celik et al., 2022; Cope et al., 2021). Yet creating pedagogical changes in assessment is no simple task (Black & Wiliam, 2018). Teachers and students alike struggle with various aspects of assessment, including widespread grading obsession, managing large amounts of assessment data, conflicting assessment beliefs, and inequitable assessment practices (DeLuca et al., 2024). AI tools did not create these challenges, nor may teachers simply abdicate their assessment responsibilities to tools developed by private interests (Swiecki et al., 2022). AI simply necessitates a different—more innovative—approach to assessment.

Elsewhere, we have argued that assessment innovation “involve[s] educators in addressing assessment challenges and their underpinning dilemmas to advance teaching and learning by implementing and experimenting with creative ideas that are new in-context” (DeLuca et al., 2024, p. 109). Assessment innovation involves innovating in relation to an identified challenge (e.g., academic integrity issues arising from the onset of AIED). Importantly, teachers’ innovations, including innovations in response to AIED, need not be transformative or revolutionary (Roll & Wylie, 2016; UNESCO, 2023). Instead, teachers’ assessment innovations may involve “small-I innovation” (DeLuca et al., 2024), that is, an informed shift to local practice that enhances teaching, learning, and assessment in context. Small-I innovation seeds our argument for AI3: not to ignore AI, or embrace it uncritically, but instead to leverage AI and academic integrity to make intentional choices about those challenges to develop forward-thinking assessment systems that align teachers’ pedagogical goals with the realities they now face (Black & Wiliam, 2018). Explicitly attending to assessment innovation, especially small-I, teacher-led innovations, emphasizes the need for ongoing work toward significant structural changes in assessment practice (Corbin et al., 2025).

By necessity, such assessment innovations involve moving beyond test-based and largely written forms of assessment. For too long, these narrow, albeit efficient, formats have dominated student assessment, shaping a societal zeitgeist on what it means to assess learning and by proxy, how learning is understood. But alternatives exist that AI is forcing to the fore. Importantly, performance assessment alternatives are not new; however, they have struggled to gain prominence and value in systems driven by accountability mandates that value psychometric and test-based evidence of student learning. AI is a dramatic catalyst changing this dynamic. Here, we argue for three principles to guide the reassertion of performance assessment as an assessment innovation in the context of AI: (a)

In practice, applying these principles includes reframing assessment toward active tasks that connect students with one another and with community issues and groups. For instance, a teacher might invite students to interview community members about important features of their neighborhood’s past and present, identifying challenges and goals for change. The students might then synthesize this learning and ask an AI tool to create visual renderings of how that community could change to meet those goals in ethical, relational ways. The outcome of this approach is the use of AI in assessment while ensuring learning remains connected to students and communities.

Future Directions for Policy, Research, and Practice

Our interest in this article is to support changes to the status quo in schools, what Cuban (1988) considers second-order changes, by fundamentally addressing how AI requires innovative approaches to assessment and academic integrity. Cuban observed that first-order changes, such as incremental tweaks to policies, syllabi, and assessment practice, yield little impact on teaching and learning because they largely reinforce existing ways of thinking and operating in schools. In contrast, second-order changes require a reimagining of historical practices to accommodate new technologies, actions, and intentions. Our contention is that AI has the potential, if harnessed and used ethically, to contribute toward second-order school change: to fundamentally alter how teaching, learning, and assessment happen.

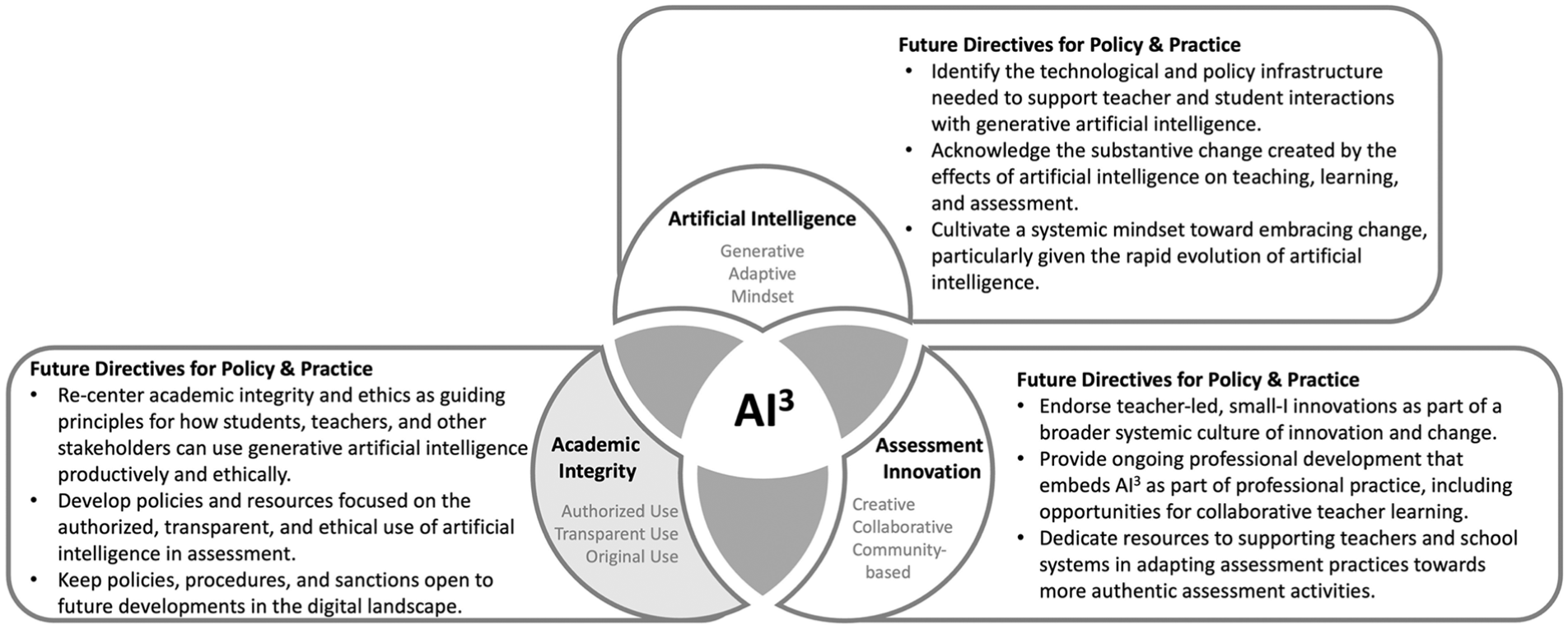

A positive and productive future with AIED will depend on individual and collective capacity to support responsive policies and practice rooted in evidence-based research. Our AI3 model provides researchers, policymakers, and practitioners with a heuristic to guide future actions related to AIED and to avoid the trap of simplistic or siloed solutions to complex challenges in a generative digital age. In this section, we employ the AI3 model to set an agenda for future directions to ensure a productive and ethical digital future (see Figure 2). These calls for future research, policy, and practice are articulated in relation to the complexity of school climates, classrooms, and decision-making processes and understood to be interwoven with broader pedagogical, curricular, technological, assessment, and professional learning trends.

Figure 2. Leveraging AI3 in policy and practice.

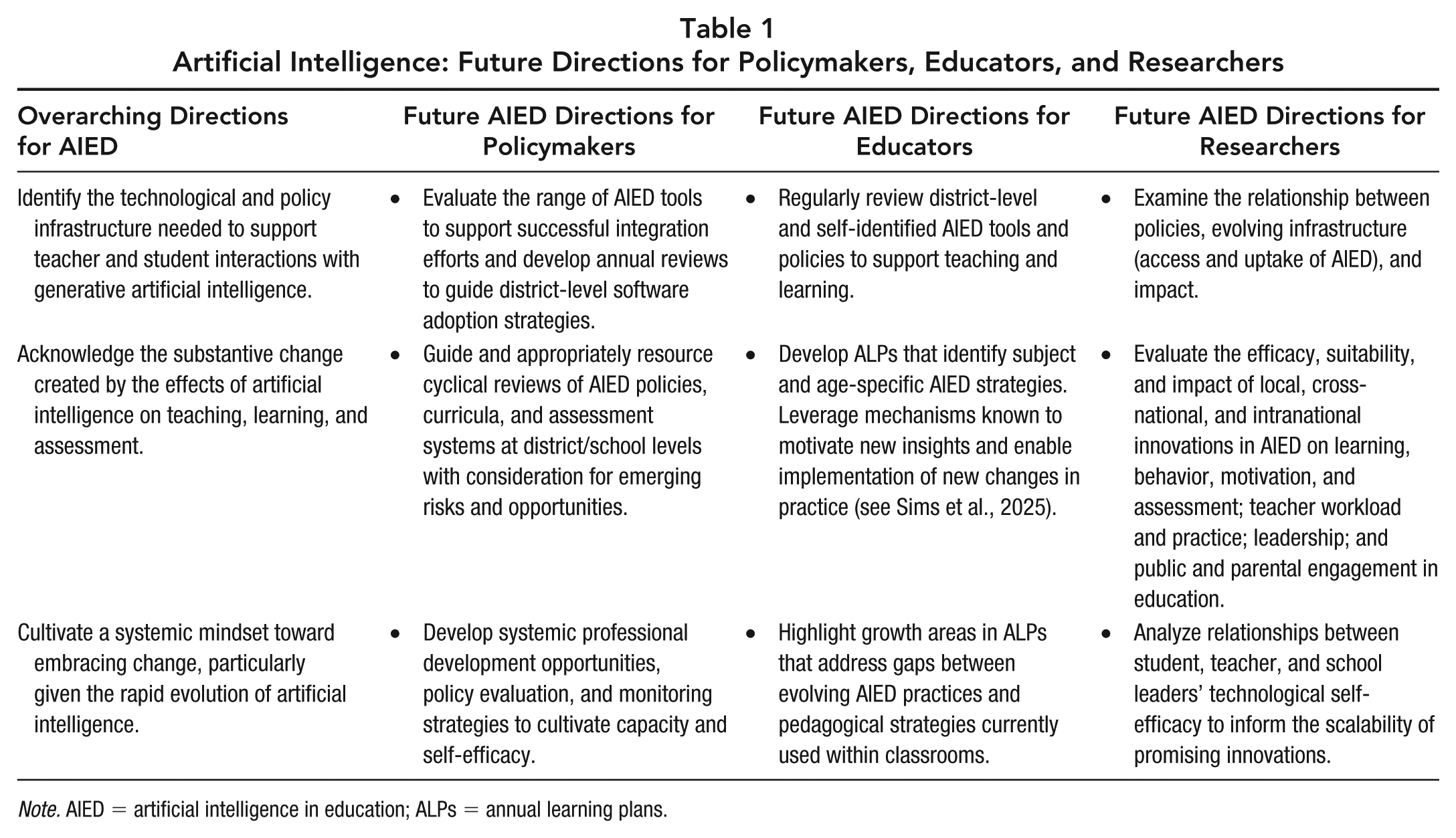

Artificial Intelligence

Educators and policymakers cannot put the AIED genie back in the bottle. Discomfort with AI’s uncertainty and imperfections has not prevented it from shaping the way that students, teachers, and citizens engage the world around them. Table 1 provides key directions to guide future work of policymakers, researchers, and educators related to AIED. Applying these directions includes regularly reviewing policies, curricula, and assessment systems; engaging in ongoing and targeted professional learning for educators; and advancing focused programs of research related to the impact of AIED on teacher practice, student learning, motivation, behavior, and assessment.

Table 1. Artificial Intelligence: Future Directions for Policymakers, Educators, and Researchers

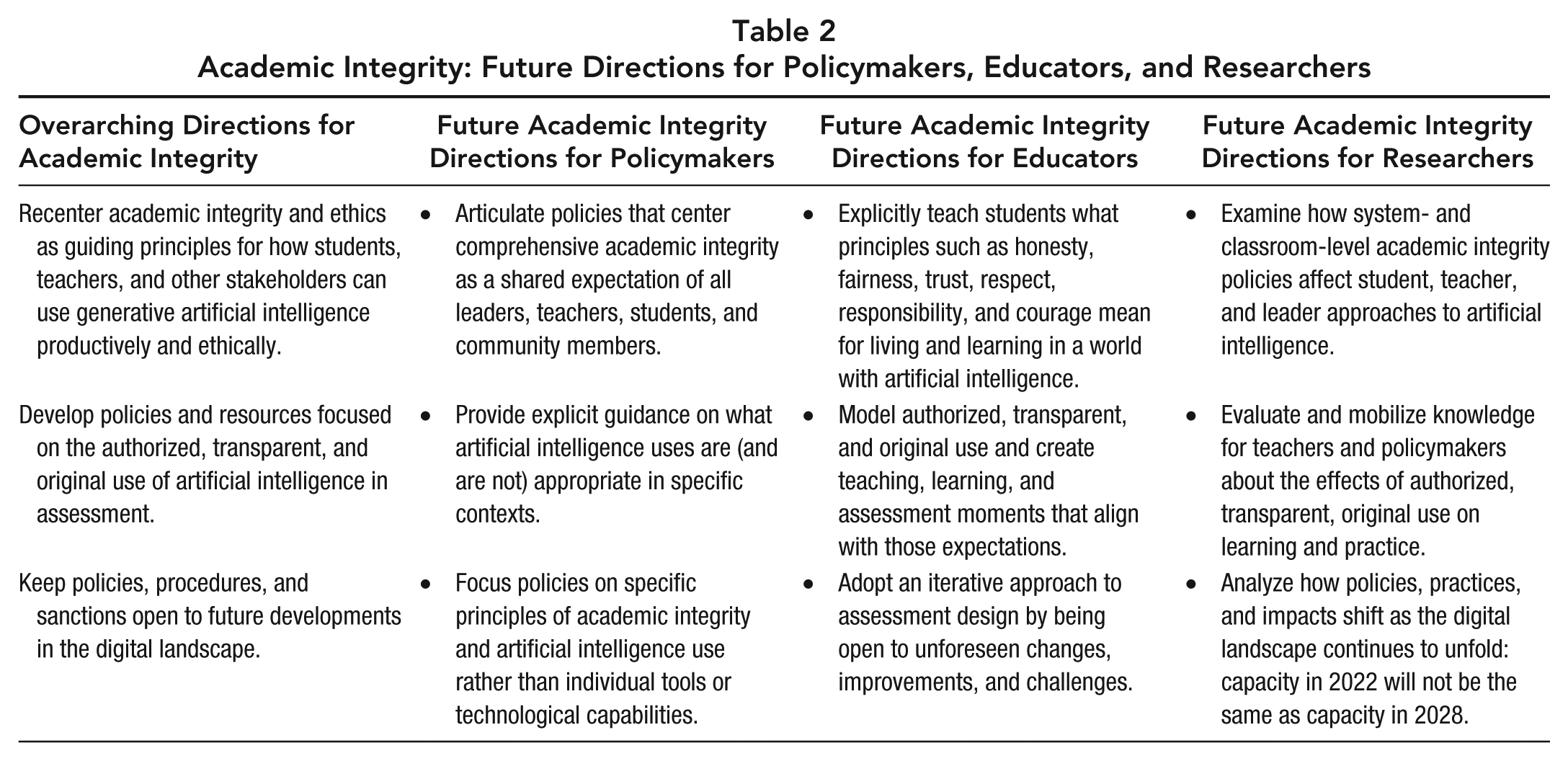

Academic Integrity

Assessment guidelines need to incorporate provisions that foster a comprehensive view of academic integrity in an ever-changing landscape (Eaton, 2023). Teachers can hardly be expected to become experts in the plethora of applications that continue to emerge. Rather, education leaders need to develop policies that both enable and recommend limits to educators’ and students’ use—evolving with future developments in the generative landscape. Table 2 details specific future directions for policymakers, educators, and researchers related to academic integrity.

Table 2. Academic Integrity: Future Directions for Policymakers, Educators, and Researchers

Assessment Innovations

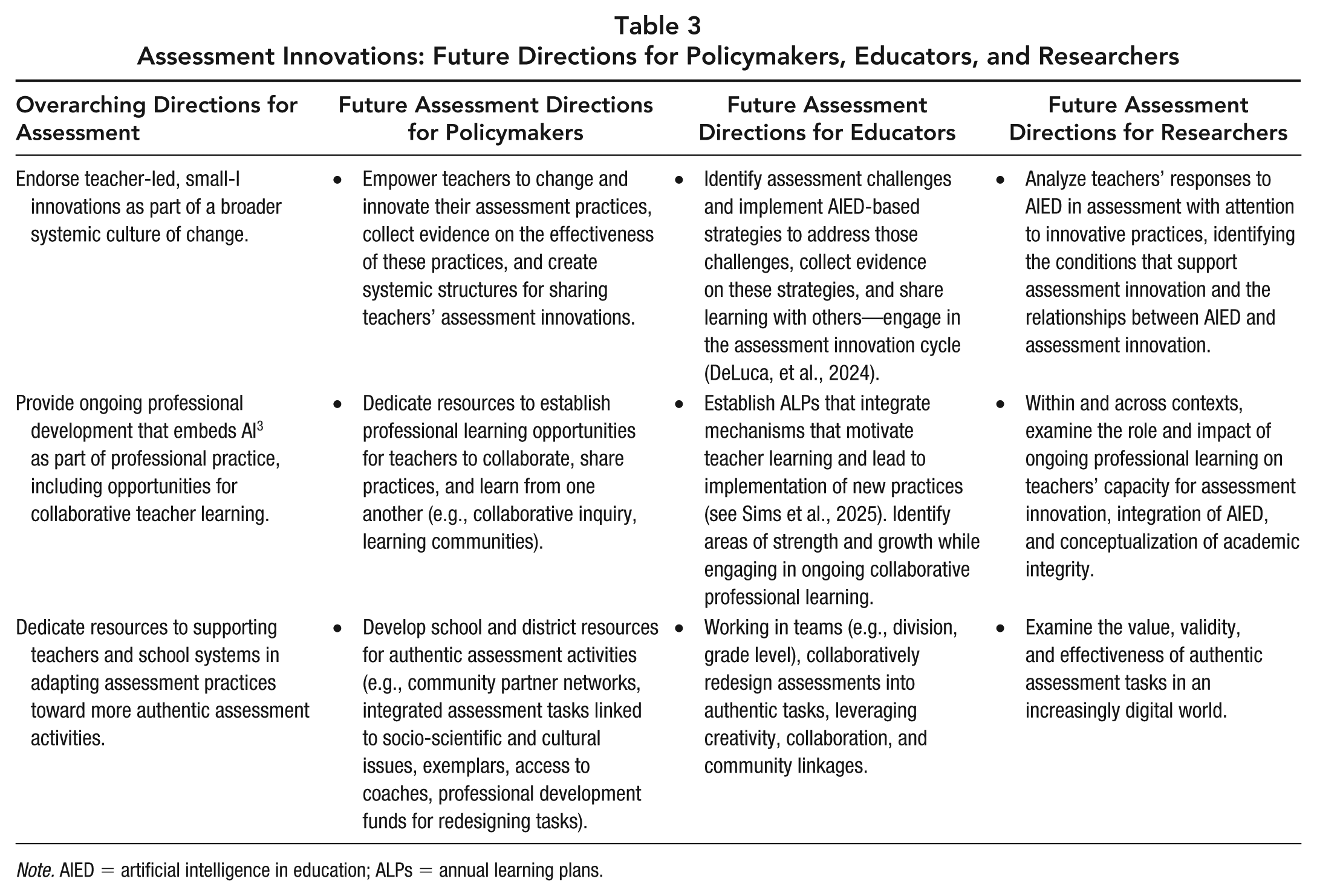

Undoubtedly, AI will continue to be a force driving innovation in assessment. The key directions presented in Table 3 detail specific actions for policymakers, educators, and researchers to guide assessment toward responsive, productive ends. Underpinning these directions is a commitment to supporting teachers’ ongoing professional learning (including their redesigning of assessment tasks toward authentic assessment experiences), encouraging teachers’ assessment innovations, and engaging in research that advances knowledge on synergies between AIED and new forms of assessment.

Table 3. Assessment Innovations: Future Directions for Policymakers, Educators, and Researchers

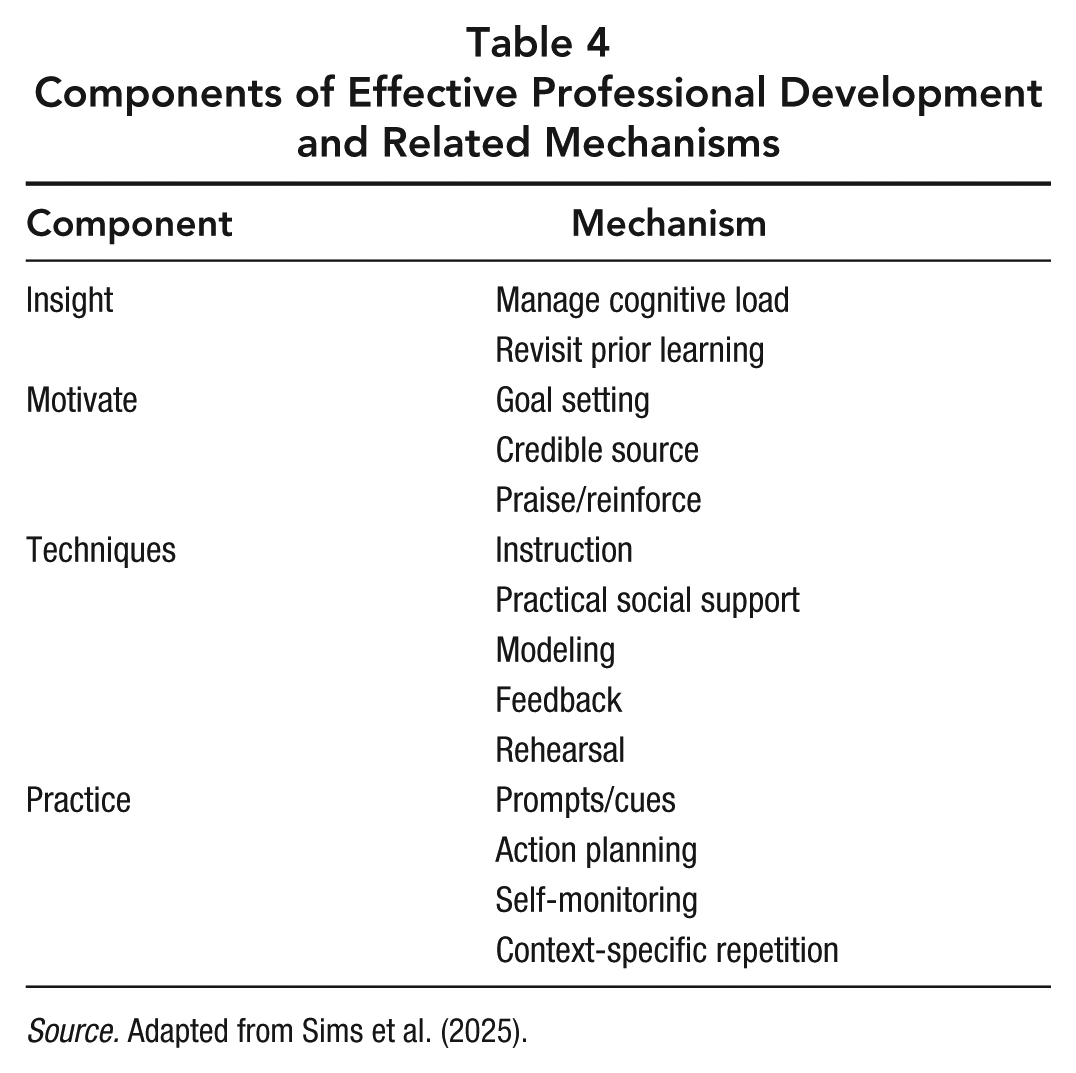

A Generative Learning System

Responding effectively to AI in scalable and equitable ways requires continuous professional learning by all: teachers, districts, policymakers, and researchers. Such professional learning cannot be construed as an “add on” for teachers; rather, it must be firmly embedded in the education ecosystem and considered in relation to its intersections with academic integrity and assessment innovations, as underscored by the AI3 model. To support systemic professional learning related to AI3, we draw on findings from Sims et al.’s (2025) recent review and meta-analytic test. Specifically, they recognized that effective professional development involves attention to four components. Professional development should (a) yield insights related to teaching and learning for educators, (b) motivate changes in teachers’ practices, (c) enable techniques for implementing insights, and (d) embed changes in practice. Sims and colleagues further identified a series of mechanisms that activate each component of effective professional development (see Table 4). These mechanisms can be leveraged to design and support professional learning in schools and districts. This work also highlights the necessary linkage between teacher beliefs and practice (in effect, the role of generating new insights motivated by a desire to change practice) and the concomitant ability to implement practices in teaching contexts. This linkage is an essential part of AI3 professional learning.

Table 4. Components of Effective Professional Development and Related Mechanisms

By drawing on established principles for effective professional learning, policymakers and educators can engage in advanced learning about AI3, a requirement for all educators in an increasingly digital world (Moorhouse & Kohnke, 2024). One of the greatest areas of focus for AI3 learning is attending to educators’ prior learning and cognitive understandings of assessment, technology, and academic integrity. Given the potentially seismic shifts in conceptions and practices that AIED provokes, professional learning must respond to teachers’ background knowledges and mindsets to yield meaningful insights, motivate change, and sustain everyday implementation. Adding to these priorities, we assert the importance of sharing learning—the idea that individual teacher learning can stimulate and support others’ development through a social-constructivist view of professional learning (Theodorio, 2024). In practice, this involves resourcing professional learning groups, establishing cross-district networking opportunities and knowledge dissemination, and encouraging teacher leadership within schools and systems. Adopting such a view will enable generative learning communities that propel the entire system to keep pace with evolving technologies.

Conclusion

The AI3 model foregrounds the complex intersections of AI, academic integrity, and assessment innovation. Rather than advocating for wholesale uncritical adoption or a head-in-the-sand approach, we contend that the evolving digital landscape represents a critical opportunity for change. Teachers and policymakers can leverage the AI3 model to foster a generative, adaptive mindset; identify what authorized, transparent, original use means in context; and develop creative, collaborative, community-focused assessments. Forward-looking assessment systems will incorporate each of these principles as central to daily assessment policy and practice and evolve in tandem with burgeoning AIED research that examines the evolving relationships between new technologies, teaching, learning, and assessment.

Finally, we recognize that our AI3 model is not static; rather, components within this triarchic framework will undoubtedly evolve as new technologies emerge that present both opportunities and challenges within education ecosystems around the world. These technologies shape the emergence of new AI applications that help facilitate assessment innovations, such as timelier formative feedback on authentic assessment tasks. Thus, our proposed model is both a response to the generative technologies with which we now live and a heuristic that will “regenerate” to adapt to the evolution of teaching and learning contexts in contemporary society.