Abstract

The allocation of students to ability tracks is often based on teacher recommendations. These recommendations tend to be biased in favor of students from higher socioeconomic status (SES) backgrounds. Tracking procedures and criteria have been proposed to play a role in this bias, but empirical research is lacking. Using a survey experiment and information from 221 teachers in 69 Dutch schools, I find that teachers in the same school vary in their interpretation of their school’s procedure and (relatedly) in their own tracking criteria. Teachers who perceive the school procedure to put more weight on students’ home environment, and/or (relatedly) put more weight on this themselves, show a stronger SES bias in track recommendations in the survey experiment.


Introduction

Sorting students into different, hierarchically ordered educational programs on the basis of their academic performance is omnipresent in schools and educational systems in contemporary societies, and is commonly referred to as “tracking” or “curricular differentiation” (Chmielewski, 2014; Domina et al., 2017). While the degree to which students are tracked and the permeability of the different tracks vary greatly, tracking practices seem to increase inequality in educational outcomes by students’ socioeconomic status (SES) (Chmielewski, 2014; Domina et al., 2017, 2019). Students from disadvantaged SES backgrounds tend to enroll in lower ability tracks, which limits their educational opportunities (Terrin & Triventi, 2023). Research has traditionally focused on student and family processes to explain this inequality, particularly pointing to SES differences in student performance and educational choices (Dumont et al., 2019). Yet recent studies also stress the potential role of schools, and particularly teachers (Batruch et al., 2023; Dumont et al., 2019). More specifically, track allocations are often based on teacher recommendations, which have been found to be biased by student SES (for a review, see Batruch et al., 2023). That is, students from advantaged SES backgrounds are recommended to higher ability tracks than their equally performing peers from disadvantaged backgrounds. Especially in contexts in which upward track mobility is difficult, such as the Netherlands, biases in track recommendations can have lasting effects: After several years, students still tend to be in the track to which they were initially allocated, even when their track recommendation did not align with their academic performance and effort (De Boer et al., 2010).

While studies consistently find an SES bias in track recommendations, the underlying mechanisms remain unclear (Batruch et al., 2023). One potential explanation is that teachers do not base their track recommendations solely on student performance, but rely on a multitude of student traits. Such traits may (inadvertently) correlate with student SES. Accordingly, a few studies have examined the extent to which SES biases (partly) stem from the fact that track recommendations are also affected by factors such as students’ academic attitudes and behaviors (Timmermans et al., 2016) or parental school support (Barg, 2013). However, even after accounting for these factors, systematic biases in track recommendations remain (Batruch et al., 2023). Moreover, a few recent studies show that track recommendations, as well as biases therein, do not only depend on individual student traits, but also vary across teachers, classes, or schools (De Boer et al., 2010; Timmermans & Rubie-Davies, 2018; Timmermans et al., 2015, 2023). Together, these findings suggest that understanding the SES bias in track recommendations requires moving beyond student factors and also considering aspects of teachers and schools.

One factor that has been suggested to play a role in (SES) biases in track recommendations is the set of procedures and criteria that teachers (try to) follow, or are subject to, when forming recommendations (cf. Vanlommel & Schildkamp, 2019). In various educational systems, teachers have considerable discretionary power in determining their tracking procedures (Cohen-Zamir, 2021; Korpershoek et al., 2016; Vanlommel & Schildkamp, 2019). Relatedly, studies show that teachers differ in their applied criteria, that is, the extent to which they explicitly weigh certain student traits, such as students’ academic behavior or home situation (Cohen-Zamir, 2021; Sneyers et al., 2017; Vanlommel & Schildkamp, 2019). Scholars argue that such teacher differences may (partly) stem from school-level variations in tracking procedures and norms.

While scholars propose that tracking procedures and criteria may affect biases in track recommendations (cf. Cohen-Zamir, 2021; Sneyers et al., 2017; Vanlommel & Schildkamp, 2019), no studies have tested this relation. Yet the link is far from trivial. Research on discrimination suggests that certain procedures could reduce discriminatory practices (Reskin, 2003), yet according to work in organizational sociology, daily practices in organizations do not always align with what organizational procedures prescribe (cf. Coburn, 2004; Meyer & Rowan, 1977). For example, teachers may disregard a procedure, making it ineffective (cf. Coburn, 2004). Different teachers can also vary in their interpretation and navigation of a procedure (Coburn, 2004; Golann, 2018), causing it to play out differently across teachers. Finally, the criteria that teachers describe as guiding their decisions may not reflect how they actually form decisions (cf. Vaisey, 2009). For example, teachers may state that they do not consider students’ home situation, yet be subconsciously affected by it. So while certain tracking criteria and procedures are expected to reduce biases in track recommendations, we do not know whether, and which, procedures and criteria actually help to reduce them.

This article aims to answer the following research question: How do tracking procedures and criteria relate to the SES bias in track recommendations? By answering this question, I aim to shed more light on the understudied teacher and school variation in the SES bias in track recommendations. Tracking procedures and criteria may explain both teacher- and school-level variations in tracking decisions, as they can be held or applied by individual teachers, but can also operate at the school level as (in)formal institutions shared by all teachers (i.e., rules, norms, values, cultural beliefs). While biases in track recommendations are often labelled “teacher biases,” it is unclear whether they are attributable to individual teachers or merely reflect a specific school climate.

Besides contributing to studies on track recommendations, this article intends to speak to a larger literature on categorization processes and the (re)production of inequality within the organizational context of the school (cf. Domina et al., 2017; Tomaskovic-Devey & Avent-Holt, 2019). In doing so, I pay specific attention to the agency of teachers as (potential) “street-level bureaucrats” in these processes (cf. Coburn, 2004; Hallett, 2010; Lipsky, 2010).

I use survey data specifically designed to address this topic, which were recently collected in a large and diverse set of primary schools in the Netherlands (n = 69) and include responses from most of the personnel involved in forming tracking decisions in these schools (n = 221). I assess the SES bias in track recommendations using a factorial survey experiment in which teachers are asked to form track recommendations for fictional students whose traits are experimentally manipulated (n = 1,723). Hence, the SES bias captures differences in track recommendations for students who differ in SES but are identical on other factors that teachers tend to consider (e.g., performance, work habits). This design suits the comparative purpose of the study. In observational data, teacher and school differences may stem from differences in (unobservable) traits of the student population. In the factorial survey experiment, different teachers evaluate the same fictional students, so differences across teachers and schools cannot be attributed to differences in the students they evaluate.
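To make the logic of the SES bias measure concrete, the following sketch computes it for a single hypothetical teacher. It is the mean difference in recommended track between vignettes that differ only in SES, with comparisons made only within the same performance level. The numeric track coding (pro = 0 up to vwo = 5, assuming equal spacing), the two-level SES variable, and the ratings are illustrative assumptions, not the study's data or estimation strategy. With 1,723 ratings nested in teachers and schools, a regression-based estimator would be the more usual choice.

```python
from statistics import mean

# Illustrative ordinal coding of the six Dutch tracks (assumption: equal spacing).
TRACKS = ["pro", "vmbo-b", "vmbo-k", "vmbo-gt", "havo", "vwo"]
TRACK_SCORE = {track: rank for rank, track in enumerate(TRACKS)}


def ses_bias(ratings):
    """Mean high-minus-low SES gap in recommended track, holding performance fixed.

    `ratings` is a list of (performance_level, ses, track) tuples for one
    hypothetical teacher, where `ses` is "high" or "low".
    """
    groups = {}
    for performance, ses, track in ratings:
        groups.setdefault(performance, {"high": [], "low": []})[ses].append(TRACK_SCORE[track])

    # Compare SES groups only within a performance level, so performance is held constant.
    gaps = [
        mean(g["high"]) - mean(g["low"])
        for g in groups.values()
        if g["high"] and g["low"]
    ]
    return mean(gaps)


# Hypothetical ratings: within each performance level, vignettes differ only in SES.
example = [
    ("weak", "high", "vmbo-k"), ("weak", "low", "vmbo-k"),
    ("average", "high", "havo"), ("average", "low", "vmbo-gt"),
    ("strong", "high", "vwo"), ("strong", "low", "vwo"),
]
print(round(ses_bias(example), 3))  # 0.333
```

A positive value means that this hypothetical teacher recommends higher tracks for high-SES vignettes with the same performance. Variation in this quantity across teachers and schools is what the study seeks to explain.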

The Netherlands forms a particularly interesting case for studying teacher and school variations in tracking procedures, criteria, and decisions. It is marked by nationwide ability tracking institutions with relatively high levels of formalization and accountability (cf. Geven et al., 2021). Accordingly, it can be considered a “least-likely case” for finding teacher- and school-level variations, as such variations are arguably more pronounced in contexts in which tracking is less externally regulated, such as the United States (cf. Geven et al., 2021; Kelly & Price, 2011). Moreover, since the 2023–2024 school year, Dutch primary schools have been required to have an explicit tracking procedure in order to enhance transparency and equity. This also makes it highly relevant from a practical perspective to study how tracking criteria and procedures relate to the SES bias in track recommendations.

To provide a clearer understanding of the specific national institutions that Dutch teachers face when forming track recommendations, I start with a description of these institutions. Subsequently, I discuss how—within these constraints—tracking criteria and procedures can differ, and could condition the SES bias in track recommendations.

The Dutch Institutional Tracking Context

The Dutch educational system is commonly described as an early between-school tracking system. More specifically, students are sorted into entirely different educational programs (tracks) on the basis of their academic performance at the end of primary school, when they are in sixth grade (i.e., ±12 years old) (Van de Werfhorst, 2019). There are six different tracks: one for students with special educational needs (pro), three prevocational tracks (vmbo-b, vmbo-k, vmbo-gt), a general intermediary track (havo), and an academic track preparing students for university (vwo). Research shows that this type of rigid tracking (i.e., at an early age, with a relatively high number of tracks, and for the full curriculum) is related to inequality in academic achievement and attainment by student SES (for a meta-study and review, see Terrin & Triventi, 2023; Van de Werfhorst & Mijs, 2010). Students from disadvantaged SES backgrounds tend to enroll in lower ability tracks than students from advantaged backgrounds, also when accounting for student performance (Terrin & Triventi, 2023). Differences across tracks in school and teacher quality, the demandingness of the curriculum, and student composition (e.g., ability level, motivation) are likely to further exacerbate SES inequalities in educational outcomes.

One reason why students from disadvantaged SES backgrounds generally enroll in lower ability tracks is that track allocations are often based on teacher recommendations, which have been found to be biased by student SES (Batruch et al., 2023). In the Netherlands, national law requires primary schools to formulate a track recommendation for each final-year student, and secondary schools are obliged to follow it (Korpershoek et al., 2016). Research consistently finds that these track recommendations are higher for students from higher SES backgrounds (e.g., De Boer et al., 2010; Timmermans et al., 2015, 2018).

In the Netherlands, track recommendations are formulated before March 1, before students take a final mandatory standardized test (i.e., the school leaver’s test). Recommendations must be reconsidered, and can be adjusted upward (not downward), if the final test score indicates a higher track than the school’s initial recommendation. A student’s sixth-grade teacher is largely responsible for a student’s track recommendation (Hebbink et al., 2022); the track recommendation is sometimes even referred to as “the teacher’s recommendation” (advies van de leerkracht/leerkrachtadvies). Typically, this teacher has taught the student in almost all subjects since at least the start of the school year (i.e., half a year), and occasionally for longer, as in some schools students stay with the same teacher for 2 or 3 consecutive years. While a student’s teacher has the prime responsibility, a few other colleagues (±1–3) tend to be involved in most schools (e.g., educational support coordinator[s], principal, other teachers; Inspectie van het Onderwijs, 2014). Different actors may each formulate a recommendation, and these recommendations are then compared and discussed (Ministerie van Onderwijs, Cultuur en Wetenschap, 2020).

While recommendations are formed prior to the school leaver’s test, other test scores are available to inform the decision (Korpershoek et al., 2016). Schools are required to monitor students’ academic performance with an administration system containing scores on expert-approved standardized tests. This distinguishes the Dutch context from tracking systems in several other European countries where teachers cannot rely on such tests (e.g., Flanders [northern part of Belgium], France, Germany).

The Dutch inspectorate of education monitors the quality of track recommendations by checking (a) whether primary schools have an administration system, (b) the extent to which final track recommendations correspond with scores on the school leaver’s test, and (c) the schools’ tracking procedures (Korpershoek et al., 2016).

While national policies constrain the tracking procedure, schools and teachers still have considerable discretionary power when forming recommendations, as they can decide how to weigh different aspects in their decisions (Korpershoek et al., 2016). Many schools have their own tracking procedure outlining, among other things, which student traits or test score thresholds to use. Sometimes, regional procedures are used to professionalize track decisions and make them more uniform (Korpershoek et al., 2016). So far, no studies exist on the effects of such procedures.

The current Dutch educational climate is also marked by a strong focus on equality of opportunity. The fact that students from disadvantaged SES backgrounds receive lower track recommendations than their equally performing peers has received considerable attention in the public debate in the past decade. Accordingly, the Dutch Ministry of Education provides guidelines to schools to improve and equalize track recommendations (Ministerie van Onderwijs, Cultuur en Wetenschap, 2020). While not formally enforced, these guidelines may affect tracking procedures and cultures. Despite these efforts, SES inequality in track recommendations has persisted. Partly for this reason, the Dutch government has required schools to have an explicit tracking procedure since the 2023–2024 school year.

All in all, the Dutch tracking context is marked by considerable levels of formalization and accountability (e.g., frequent standardized testing, an inspectorate checking the quality of tracking decisions), and a strong focus on the optimization of track allocations. While this may lead to relatively high levels of uniformity in the applied tracking procedures and criteria, recent scholarly work suggests that even in such a “stringent” national institutional context, there can be important variations. In the next section, I first discuss potential variations in tracking procedures and criteria across teachers from different schools that may contribute to school variations in the SES bias in track recommendations. The idea is that schools are the central site where nationwide institutions are translated into educational practices. Subsequently, I outline how teachers—even in the same school—can differ in their (interpretation of) tracking procedures and criteria. I discuss how this may relate to teacher variations in the SES bias, also within schools.

Theory

Tracking Procedures and Criteria Shared Among Teachers at the School Level

Developments in organizational sociology stress that organizations take a central place in the (re)production of inequalities (Hallett & Hawbaker, 2021; Tomaskovic-Devey & Avent-Holt, 2019). While organizational practices are constrained by nationwide institutions, organizations do not passively conform to these institutions. Instead, through interactional processes within organizations, “workable” responses are created that may reproduce as well as challenge institutions. From this perspective, organizations are seen as the prime sites for the (re)creation of social categories that people use to make sense of the world and that are also key in the (re)production of inequalities.

While originally applied to labor market inequalities, this framework can also help to understand biases in track recommendations (cf. Domina et al., 2017). Nationwide institutions require a track recommendation for each student and, therefore, the categorization of each student into an ability group (cf. Geven et al., 2021). The categorization process by which students are matched to tracks is in some ways comparable to the matching of employees to labor market positions. Just as potential employees are sorted according to the extent to which their skills, experiences, and traits are deemed (un)suited for a particular job (Avent-Holt & Tomaskovic-Devey, 2019), students are sorted according to the extent to which their traits and skills are deemed (un)fit for a particular track. These categorizations are hierarchical by nature, and can thereby (re)produce inequalities.

While institutions of the larger educational system may provide “scaffolds” to “match” students to tracks, processes in schools (as organizations) arguably play a central role in how categorization takes shape (cf. Avent-Holt & Tomaskovic-Devey, 2019; Domina et al., 2017; Oakes & Guiton, 1995). Teachers’ interpretation and navigation of tracking institutions, and (relatedly) tracking procedures, may vary across schools. School policies and culture could affect which differences between students are prioritized, how specific student traits are weighted in the categorization process (cf. Avent-Holt & Tomaskovic-Devey, 2019), or how student information is interpreted (Bertrand & Marsh, 2015).

One aspect of the school that may affect biases in track recommendations is the level of tracking formalization, that is, the availability of predefined and clear criteria or rules to determine the allocation of resources (Avent-Holt & Tomaskovic-Devey, 2019; Reskin, 2003). Work on labor market discrimination stresses that formalization can reduce inequality, as it limits the impact of allocators’ discretion and requires different people to be evaluated according to the same principles. When procedures are vague, allocators lack “scaffolds” in their decision-making and are left to their own devices, which leaves more room for (implicit) stereotypes.

I apply this idea to teachers’ track recommendations. I define formalization as the extent to which teachers perceive that they can rely on clearly predefined criteria and guidelines in their tracking decisions, including clear decision rules for determining a student’s track as well as guidelines on how to proceed in tracking decisions (e.g., what to do in case of doubt or disagreement with parents). Such formalization is expected to enhance uniformity and comparability in teacher decisions by reducing the risk that teachers rely on different standards or practices across students (i.e., particularism) (cf. Vanlommel & Schildkamp, 2019). For example, a qualitative study in Flanders (Belgium) suggests that teachers who base their track recommendations on intuitive and personal, rather than predefined standardized, criteria apply different performance thresholds to different students (Vanlommel & Schildkamp, 2019). Moreover, formalization may reduce confirmation bias, that is, the interpretation of student information in ways that merely confirm preexisting beliefs.

H1) The SES bias in track recommendations is smaller in schools with tracking procedures that are perceived to be more formalized (i.e., that provide clearer decision rules and practice guidelines).

Formal procedures do not inevitably reduce biases in decision-making, and their effect is likely contingent on their content. Formal procedures can even implicitly induce allocators to base rewards on ascribed traits if they are embedded in larger structures that are themselves biased or unequal (Avent-Holt & Tomaskovic-Devey, 2019; Kalev, 2014; Reskin, 2003). While often employed to enhance equity, formal standards may unintentionally lead to a disproportionate allocation of rewards to groups with more standard-relevant resources (Lamont et al., 2014).

Tracking decisions are highly complex, often featuring many different types of student information and possible ways to interpret this information (cf. Vanlommel & Schildkamp, 2019). Relatedly, there is a plethora of aspects of the tracking procedure that may affect decisions. Yet one aspect that is arguably particularly relevant in light of this study is a procedure’s perceived emphasis on a student’s home situation. Research shows that in a majority of Dutch schools, an unstable home environment or lack of parental support is considered a valid reason to recommend students to lower ability tracks (Inspectie van het Onderwijs, 2014). This may (inadvertently) cause SES biases in track recommendations. Dutch teachers tend to rate parental school involvement as lower for students from lower SES backgrounds (Bakker et al., 2007), and may perceive high-SES parents as better equipped to help, especially with more difficult (secondary school) tasks (cf. Bol, 2020).

When teachers believe that the school procedure prescribes incorporating a student’s home situation in their tracking decisions, this may also legitimize an explicit consideration of a student’s SES. It could foster statistical discrimination (i.e., using group-level expectations to predict individual outcomes), including the provision of lower track recommendations to lower-SES students, as these students’ secondary school performance is more likely to fall below the level expected of them based on their primary school performance (e.g., grade repetition, downward track mobility) (Timmermans et al., 2013).

H2a) The SES bias in track recommendations is smaller in schools in which the procedure is perceived to put less emphasis on a student’s home situation.

Formal procedures may not always be present or (jointly) followed by all teachers in a school. Nevertheless, schools may still be characterized by an informal tracking culture (cf. Oakes & Guiton, 1995). Hence, I also consider the extent to which teachers in the same school report attaching the same weight to a student’s home situation in their tracking decisions (irrespective of the procedure). To the extent that such a “home emphasis” culture exists, I hypothesize:

H2b) The SES bias in track recommendations is smaller when the school culture puts less emphasis on a student’s home situation.

Teacher Autonomy and the Reproduction of Inequality

So far, I have theorized how aspects of a tracking procedure and/or culture operating at the school level can affect student categorizations and relate to school differences in the SES bias in track recommendations. While responses to nationwide institutions have been argued to take shape in interactions at the organizational level, not only schools, but also individual teachers, have agency. This can lead to teacher variations within schools (Coburn, 2004; Golann, 2018).

Recent work in organizational sociology stresses that organizations are made up of individual members who may differ in their perceptions of the institutional context (Tomaskovic-Devey & Avent-Holt, 2019, p. 50). Variability in inequality-generating processes can be located primarily at the organizational level, but also at the level of its members. In the former case, differences in student categorization are mainly induced by, and/or operate at the level of, the larger school context (cf. Tomaskovic-Devey & Avent-Holt, 2019). Teachers in the same school will then show (considerable) uniformity in their categorizations, while important variations exist across teachers from different schools. In the latter case, variations mainly exist at the teacher level, and thus also among teachers in the same school.

Especially in the case of inequality-generating processes in schools, teacher variations may be considerable. Some scholars characterize the teaching profession as marked by a norm of autonomy (Coburn, 2004; Hallett, 2010), and teachers are described as typical examples of “street-level bureaucrats,” that is, professionals with high levels of freedom to determine their courses of action (Lipsky, 2010; Taylor, 2007). While educational standardization and accountability may limit this discretion, discretionary power remains because teachers are ultimately the ones forming (everyday) responses to external demands (Taylor, 2007).

Studies find differences in teacher responses to external pressures, also within schools. Teachers’ backgrounds, prior experiences, and beliefs (Coburn, 2004; Everitt, 2012, 2013), skills and preferences (Golann, 2018), and student population (Weick, 1976) may affect how they interpret, perceive, and interact with external pressures. For example, Coburn (2004) shows how teachers vary in their responses to school, district, and state demands for new teaching styles in reading. Responses range from full rejection (decoupling) to full accommodation, with various alternatives in between. Similarly, teachers in the same school could differ in the extent to which they adhere to external pressures in their tracking criteria.

Coburn’s (2004) findings suggest that teachers may differ not only in the extent to which they reject or accommodate external pressures, but also in their interpretation of them. For example, some teachers assimilated new reading approaches in ways that matched their own approach, partly because their preexisting beliefs and practices influenced how they perceived the new approach. In other words, teachers may interpret and navigate school or national procedures in their own way, and (accordingly) end up applying their own criteria.

Teachers can differ on a plethora of aspects in both their own applied tracking criteria and their interpretation of the school tracking procedure. Here, I focus on the importance of a student’s home situation in a teacher’s own tracking criteria, and on the importance a teacher perceives the school procedure to attach to it. As described in the previous section, I expect this specific aspect to contribute particularly to differences in the SES bias in track recommendations.

H3) The SES bias in teacher track recommendations is weaker among teachers who interpret the school procedure to put less weight on a student’s home situation (H3a) and/or (relatedly) put less weight on this in their own tracking criteria (H3b).
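One way to formalize H2 and H3 jointly, offered here as an illustrative assumption rather than as the specification used in the analyses, is a multilevel model for the recommendation $R_{ijk}$ given to vignette $i$ by teacher $j$ in school $k$. A teacher's reported home emphasis $H_{jk}$ (the perceived procedure for H3a, or the teacher's own criteria for H3b) is split into a school mean $\bar{H}_k$ and a within-school deviation:

```latex
R_{ijk} = \beta_0 + \beta_1 S_i + \beta_2 \bar{H}_k + \beta_3 \,(H_{jk} - \bar{H}_k)
        + \beta_4 \, S_i \bar{H}_k + \beta_5 \, S_i \,(H_{jk} - \bar{H}_k)
        + \boldsymbol{\gamma}^{\top} \mathbf{x}_i + u_k + u_{jk} + \varepsilon_{ijk},
\qquad
\frac{\partial R_{ijk}}{\partial S_i} = \beta_1 + \beta_4 \bar{H}_k + \beta_5 \,(H_{jk} - \bar{H}_k)
```

Here $S_i$ is the vignette's SES, $\mathbf{x}_i$ the other manipulated traits, and $u_k$ and $u_{jk}$ hypothetical school and teacher random intercepts. The derivative is the SES bias of a given teacher. Because the hypotheses predict a smaller bias where home emphasis is lower, a positive $\beta_4$ would be in line with H2a (procedure) or H2b (culture), depending on which measure $H$ refers to. A positive $\beta_5$ would be in line with H3 among teachers within the same school.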

The relationship between teachers’ tracking criteria and their eventual tracking recommendations may seem trivial. However, research suggests that the principles that people describe as motivating their decisions may deviate from how they (unintentionally) form decisions (cf. Vaisey, 2009). Variations in teachers’ tracking criteria may therefore not necessarily translate into teacher differences in track recommendation biases.

I have argued that tracking procedures and criteria may be formed, and may operate, at both the school and the teacher level. On the one hand, responses to nationwide tracking institutions can take shape at the school level: Interactions among school personnel may create (in)formal procedures and criteria that affect the school in its entirety. On the other hand, research has highlighted teacher autonomy and differences in teachers’ interpretation and translation of external pressures in everyday situations. By considering both the teacher and the school level, I aim to shed more light on the extent to which variations in the SES bias in tracking decisions stem mainly from variations across schools or across teachers. If the former is true, inequalities should be tackled at the school level. If the latter is true, organizational efforts aimed at reducing inequalities may not suffice. While prior studies find teacher differences in (a) how teachers weigh student traits in tracking decisions (Sneyers et al., 2017; Vanlommel & Schildkamp, 2019) and (b) biases in tracking decisions (Timmermans et al., 2015), it is unclear whether these variations mainly stem from teacher- or school-level processes (cf. Sneyers et al., 2017).

Method

Participants

Data were collected in the school year of 2019–2020 among Dutch elementary school staff involved in the track recommendation procedure that school year (Geven, 2021). Data collection took place between October 2019 and the beginning of March 2020, before the outbreak of the COVID-19 pandemic in the Netherlands.

The current project involved a collaboration with the Peer Relations in the Transition from Primary to Secondary education (PRIMS) project on students’ transition from primary to secondary school (Zwier et al., 2023). For the PRIMS project, a stratified sampling procedure was applied to select schools from all regular Dutch primary schools, with an oversampling of larger schools and those with a higher share of students from disadvantaged SES backgrounds. Seventy-eight schools agreed to take part in the PRIMS project, and the principals of these schools were the first to be invited to participate in the current project on track recommendation procedures (as this would allow researchers to combine teacher and student information). A total of 154 staff members from 47 of these schools agreed to participate in the online survey. To obtain the desired sample size of 70 to 80 schools, an additional sample of 62 schools was drawn from the schools that refused to take part in PRIMS. Using the PRIMS strata, I oversampled schools from strata that were still underrepresented in the current project (i.e., either because they refused to take part in PRIMS or in this study). In this way, I tried to ensure that schools from different strata were equally represented in the data. An additional 71 staff members from 23 schools participated.

In total, the data include information on 225 staff members in 70 schools (school-level response 50%, staff-level response 62%). The schools are representative of Dutch elementary schools with respect to denomination, SES composition, region, and the track recommendations students received in the school year of 2018–2019. Due to the oversampling of large schools in the PRIMS project, sampled schools are larger than average.

I exclude four respondents who failed to provide track recommendations for the fictional students in the survey experiment. I impute missing values on other teacher responses by means of multiple imputation (n = 15). The final analytical sample includes 221 respondents from 69 schools, that is, 3.2 respondents per school on average, and covers a large number of schools and teachers across the entire country. While the absolute number of teachers within each school may appear low, this largely reflects empirical reality. In each primary school, only a few teachers are involved in formulating track recommendations (in small schools sometimes only one), and only these teachers were invited to participate. Given the study’s response rate, the sample includes most of the staff members involved in tracking decisions. This is consistent with research showing that, besides the Grade 6 teacher(s), on average 2.3 other colleagues are involved in the final tracking decision in the Netherlands (Inspectie van het Onderwijs, 2014).
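The sample figures reported in this section can be cross-checked with simple arithmetic. The sketch assumes that the school-level response rate of 50% is computed over all 140 approached schools (the 78 PRIMS schools plus the 62 additionally drawn schools). The text does not state this denominator explicitly, but this assumption reproduces the reported figure. The staff-level rate (62%) cannot be checked this way because the number of invited staff members is not reported.

```python
# Counts reported in the Participants section.
prims_schools_approached = 78     # PRIMS schools whose principals were invited first
additional_schools_drawn = 62     # extra schools drawn from those that refused PRIMS
schools_participating = 47 + 23   # schools with participating staff, first + second wave
staff_participating = 154 + 71    # participating staff members, first + second wave

# Assumption: all approached schools form the denominator of the school-level rate.
schools_approached = prims_schools_approached + additional_schools_drawn
school_response = schools_participating / schools_approached

# Final analytical sample after excluding four respondents without vignette ratings
# (the drop from 70 to 69 schools presumably follows from these exclusions).
final_respondents = staff_participating - 4
respondents_per_school = final_respondents / 69

print(schools_participating, staff_participating)  # 70 225
print(f"{school_response:.0%}")                    # 50%
print(round(respondents_per_school, 1))            # 3.2
```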

Experimental Design

To examine the impact of student traits on track recommendations, I use a vignette experiment in which respondents were asked to formulate track recommendations for fictional students whose traits were experimentally manipulated. Hence, in the data, student traits are uncorrelated with each other. Moreover, different teachers evaluate the same set of fictional students, and variations across teachers and schools are thus not due to (unobservable) differences in the populations they evaluate.
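The claim that experimentally manipulated traits are uncorrelated can be illustrated with a full factorial vignette universe, in which every combination of trait levels occurs exactly once. The dimensions and levels below are hypothetical. The text reports neither the study's actual vignette dimensions nor whether respondents rated the full universe or a subset of it. In the latter case, the correlations in the realized data need not be exactly zero.

```python
from itertools import product

# Hypothetical vignette dimensions and levels (illustrative only).
DIMENSIONS = {
    "performance": [1, 2, 3, 4, 5],  # e.g., standardized test level
    "ses": [0, 1],                   # 0 = low SES, 1 = high SES
    "work_habits": [0, 1, 2],        # e.g., poor / average / good
}


def full_factorial(dimensions):
    """Return every combination of levels as a list of dicts (the vignette universe)."""
    names = list(dimensions)
    return [dict(zip(names, levels)) for levels in product(*dimensions.values())]


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


vignettes = full_factorial(DIMENSIONS)
print(len(vignettes))  # 30
for a, b in [("performance", "ses"), ("performance", "work_habits"), ("ses", "work_habits")]:
    r = pearson([v[a] for v in vignettes], [v[b] for v in vignettes])
    print(a, b, round(r, 10))
```

Because each pair of levels appears equally often, every pairwise correlation is zero. This is why any difference in recommendations between high- and low-SES vignettes cannot be attributed to differences in the other manipulated traits.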

Vignette experiments capture behavioral intentions rather than real-world behavior. Hence, a potential problem with this design is that teacher responses may differ from how teachers act when providing actual tracking recommendations for their own students (i.e., the real-world scenario that this study pertains to). In the real-world situation, teachers know their students through daily interactions for at least half a school year, and may also (occasionally) interact with students’ parents. For the fictional students in the case vignettes, teachers have to base their tracking decisions on more limited and less complex information. This may cause them to artificially rely on other information available in the vignette to compensate for these missing pieces of information.

A recent study tested the ecological validity of case vignettes in research on tracking recommendations in Luxembourg and Germany, contexts in which tracking decisions are also based on teacher recommendations (Krolak-Schwerdt et al., 2018). More specifically, the tracking decisions that teachers made for their own (real-world) students were compared to the tracking decisions that these teachers made for fictional students in case vignettes. The authors concluded that the findings based on the case vignettes were ecologically valid: Teachers’ judging processes for the case vignettes were comparable to the judging processes for real students.

For the current experiment, several measures were taken to enhance ecological validity. Prior to data collection, educational professionals commented on an initial version of the survey, and a pretest was conducted among Dutch staff members responsible for formulating track recommendations. Moreover, the information on academic performance in the vignettes corresponded with the performance information that teachers usually rely on, while taking into account the distinct ways in which different standardized tests present test results (i.e., test performance was presented in figures, accompanied by information on a student’s “level” as well as “percentile score”). Additional analyses indicate that academic performance explains about 81% of the student-level variation in track recommendations in the case vignettes (see Table A1 in the appendix in the online version of the journal). This is similar to findings from recent observational studies in the Netherlands involving real students (i.e., between 76 and 84%) (Timmermans et al., 2015; van Leest et al., 2021).

Six student traits were manipulated in the vignettes: academic performance, parental occupation as an indicator of their SES, student names to signal ethnic background, student gender, student work habits (i.e., a description of diligence, perseverance, concentration, and ability to work independently according to planning), and parental school interest and help (see Table 1; Figure A1 in the appendix in the online version of the journal). The selection and design of these traits were based on findings from previous studies and the pretest. All traits vary on two levels (e.g., boy or girl), except for student performance, which varies on four levels: bottom 40%, top 40%, top 20%, and average performer. The average performing student profile involves a student with an incongruent (disharmonious) profile, whose mathematics performance is substantially higher than that in reading. A recent Dutch study indicates that about 20% of students have such an incongruent profile, and that this may alter the impact of SES on tracking recommendations (Van Leest et al., 2024). During the pretest, teachers also indicated that such profiles are common, and that formulating track recommendations is particularly hard for these students, causing teachers to rely on aspects other than academic performance.

Student Characteristics Manipulated in Vignette Experiment

Note. The percentile score refers to the percentage of test candidates in the country with the same or a lower test score.

The total vignette population (i.e., Cartesian product of all vignette levels; 128 vignettes) was divided into 16 sets of eight vignettes using a D-efficient design (Auspurg & Hinz, 2014). Within each set, correlations between the manipulated student traits and their two- and three-way interactions were minimized, while maximizing variation in the different levels. All vignettes are represented in the data, and student traits and their interaction effects are uncorrelated in the full dataset. Teachers were randomly assigned to one of the D-efficient sets. This allocation method maximizes statistical power. Moreover, by standardizing the vignette sets—instead of assigning each teacher to a different random combination of vignettes—between-teacher differences are less likely to be due to teacher differences in vignette sets. This is primarily important when estimating cross-level interactions between teacher- and student-level variables (Auspurg & Hinz, 2014), which is of key interest here. Each vignette was evaluated by at least 12 respondents (with a mean of 13.5), thereby exceeding the recommended minimum of 5. In total, the analytical sample includes track recommendations for 1,723 fictional students.
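A short Python sketch can make the design arithmetic concrete. It is an illustration under stated assumptions, not the procedure used in the study: the trait labels are hypothetical placeholders (only the number of levels per trait comes from the text), and the D-efficient partition into sets, which requires a dedicated design search, is not reproduced. The sketch enumerates the vignette universe (4 × 2^5 = 128 vignettes), confirms that 16 sets of eight cover it, and shows that dummy-coded traits are exactly uncorrelated across the full factorial.

```python
from itertools import product

# Hypothetical level labels; only the number of levels per trait (4 x 2^5)
# is taken from the text.
DIMENSIONS = {
    "performance": ["bottom_40", "average_incongruent", "top_40", "top_20"],
    "ses": ["low", "high"],
    "ethnic_name": ["dutch", "turkish_arabic"],
    "gender": ["boy", "girl"],
    "work_habits": ["poor", "good"],
    "parental_support": ["low", "high"],
}


def full_factorial(dimensions):
    """Return the vignette universe: one dict per combination of trait levels."""
    names = list(dimensions)
    return [dict(zip(names, combo)) for combo in product(*dimensions.values())]


def pearson(x, y):
    """Plain Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


universe = full_factorial(DIMENSIONS)
N_SETS, SET_SIZE = 16, 8
assert len(universe) == N_SETS * SET_SIZE == 128

# Across the full factorial, dummy-coded traits are exactly uncorrelated.
ses = [int(v["ses"] == "high") for v in universe]
habits = [int(v["work_habits"] == "good") for v in universe]
print(len(universe), pearson(ses, habits))  # 128 0.0

# 1,723 ratings spread over 128 vignettes gives the reported mean of about 13.5.
print(round(1723 / 128, 1))  # 13.5
```

A single set of eight vignettes cannot keep all traits and their interactions uncorrelated (the four-level performance trait plus five binary traits already need more than eight parameters), which is why the sets are chosen with a D-efficiency criterion and orthogonality is only guaranteed across the full dataset.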

Building on the counterfactual approach to causation, a factorial survey experiment has important advantages in terms of internal validity, particularly with respect to the satisfaction of the conditional independence assumption (CIA; i.e., the ability to compare the track recommendations of students who differ on one dimension [e.g., SES], yet are identical in other respects) (Petzold, 2022). First, the experimental manipulation ensures that the levels of the vignette dimensions (i.e., treatments) are not correlated with each other. Second, the random allocation of respondents to vignette sets should limit the confounding of treatment effects with respondent characteristics.

A potential threat to internal validity is that the design violates the stable unit treatment value assumption (SUTVA; i.e., no interference between units exposed to different experimental conditions). Since each respondent evaluates multiple vignettes, respondents are in essence exposed to different experimental conditions. The order in which respondents evaluate the vignettes may lead to spillover, learning, and fatigue effects (Petzold, 2022, p. 9). To reduce the impact of such effects, I randomized the order in which vignettes were presented. Moreover, before participants were allocated to a vignette set, they were asked to evaluate a test vignette that was identical across all respondents (i.e., an anchoring vignette). In this way, I tried to ensure that respondents started from the same reference point, and to help them get used to the exercise. Track recommendations for this “example” vignette were not included in the analyses.
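The assignment and ordering steps can be sketched as follows. The text states only that teachers were randomly assigned to one of the 16 sets and that presentation order was randomized; the permuted-block scheme (which keeps set sizes nearly equal), the mapping of set s to vignette ids s·8 to s·8+7, and the "anchor" label are illustrative assumptions rather than the study's implementation.

```python
import random

N_SETS, SET_SIZE = 16, 8


def assign_vignettes(teacher_ids, seed=None):
    """Assign teachers to vignette sets by permuted-block randomization and
    shuffle the presentation order within each set.

    Hypothetical simplifications: set s holds vignette ids s*8 .. s*8+7, and
    the shared anchoring vignette is represented by the label "anchor".
    """
    rng = random.Random(seed)
    plan, block = {}, []
    for teacher in teacher_ids:
        if not block:  # start a new block containing every set id once
            block = list(range(N_SETS))
            rng.shuffle(block)
        set_id = block.pop()
        order = [set_id * SET_SIZE + i for i in range(SET_SIZE)]
        rng.shuffle(order)  # randomized presentation order
        plan[teacher] = {"set": set_id, "order": ["anchor"] + order}
    return plan


plan = assign_vignettes(range(221), seed=1)
counts = [sum(p["set"] == s for p in plan.values()) for s in range(N_SETS)]
print(min(counts), max(counts))  # 13 14
```

With 221 teachers, permuted blocks give each set 13 or 14 raters. Simple randomization (drawing a set independently for each teacher) would be equally valid but allows more unequal set sizes.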

Participants were asked to imagine that the fictional students attended their school, to form track recommendations in the way they usually form recommendations, and to adhere to school procedures if they would usually do so (see Figure A1 in the appendix). They were also told that the students’ academic performance had been stable between Grades 4 and 6 (without any outliers), that the students had never repeated a grade, and that they were born in the Netherlands.

I tried to include information potentially relevant to tracking decisions, either held fixed or varied across fictional students. In this way, I aimed to prevent the effects of the experimentally manipulated traits from subsuming the effects of other relevant information. For example, country of birth was added to ensure that ethnic background effects would not incorporate nativity effects (Fish, 2017). Including relevant information is also important for comparing track recommendations across teachers, as teachers may differ in (a) how, and the extent to which, they fill in missing information; and (b) the extent to which this constructed information affects their decisions. For example, if country of birth were not mentioned, teachers might make different assumptions about the country of birth of a student whose name signals a Turkish/Arabic background, and might also vary in how they weigh this “constructed” nativity in their decisions. Accordingly, the ethnic background effect may vary across teachers only because for some teachers it subsumes nativity effects, while for others it does not.

The information included in the vignettes also has implications for what qualifies as an SES bias in this study. The estimated impact of SES reflects the difference in track recommendations for fictional students whose SES differs, but who are the same with respect to the other information in the vignette. As this information includes not only student performance but also, for example, work habits and the level of parental support/interest, I use a narrower definition of the SES bias than most studies, which consider all differences by student SES that are not explained by student performance.

Measures

Dependent Variable

Respondents provided a track recommendation for each fictional student in the vignette set. They were given 10 answer categories, ranging from the special educational needs track (i.e., praktijkonderwijs) to the academic track (i.e., vwo). As none of the teachers recommended fictional students to the special educational needs track (in real-world situations, only 2.5% of all Dutch students receive this recommendation), teacher answers ranged from the lowest ability prevocational track (vmbo-b) to the academic track (vwo). Answers also included combinations of two adjacent tracks, as teachers are also allowed to provide such “double” recommendations in real-world situations.

In the Dutch educational system, there is a clear and consistent hierarchy in the tracks, a hierarchy also referred to as “the educational ladder” (see, for a discussion, Bosker & Van der Velden, 1989; De Boer et al., 2010; Timmermans et al., 2019). Students who complete a specific track can continue with a track one step higher on the ladder, yet this requires an additional year of schooling. For example, after completing the 5-year intermediary track (havo), students can continue in the 5th year of the 6-year academic track (vwo). Similarly, students who finish a 4-year prevocational track (e.g., vmbo-b) can continue with a prevocational track one step higher on the ladder (e.g., vmbo-k) by entering this higher track at the start of the final 4th year. Since a track difference translates into 1 school year, researchers commonly treat track recommendations as an interval scale (see Bosker & Van der Velden, 1989). I follow this convention, which also eases statistical estimation and interpretation. I recoded the variable such that 0.5 points refer to a difference of half a track (e.g., a recommendation for two adjacent tracks versus a recommendation for one of these tracks) and 1 point to a full track difference.
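The recoding can be sketched as a lookup on the ladder. The rung names follow the Dutch track labels used in the text, plus vmbo-gt, which is assumed here as the rung between vmbo-k and havo; the string format of double recommendations (e.g., "havo/vwo") is also an assumption. Single tracks are one point apart, and a double recommendation is placed halfway between its two tracks.

```python
# Assumed rungs of the ladder as answered in the experiment (praktijkonderwijs
# was never chosen and is omitted); vmbo-b is coded 0.
LADDER = ["vmbo-b", "vmbo-k", "vmbo-gt", "havo", "vwo"]


def track_score(recommendation):
    """Map a single ('havo') or double ('havo/vwo') recommendation to the
    interval scale: 1 point per full track, 0.5 for a double recommendation."""
    positions = [LADDER.index(part) for part in recommendation.split("/")]
    if len(positions) > 2:
        raise ValueError("at most two tracks can be combined")
    if len(positions) == 2 and abs(positions[0] - positions[1]) != 1:
        raise ValueError("a double recommendation must combine adjacent tracks")
    return sum(positions) / len(positions)


for rec in ["vmbo-b", "vmbo-b/vmbo-k", "vmbo-gt/havo", "havo", "havo/vwo", "vwo"]:
    print(rec, track_score(rec))
```

Because only differences between scores enter the regression coefficients, the choice of vmbo-b as zero affects the intercept but not the SES estimates.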

Tracking Procedures and Criteria

The formalization of the school procedure is measured with four items tapping into teachers’ perceptions of the clarity of the decision rules and practice guidelines of the school’s tracking procedure, that is, the procedure (a) has clear decision rules for the determination of the track recommendation, (b) has clear rules on what to do when there is disagreement between parents and the school, (c) has clear rules on what to do when the score on the final test exceeds the school’s track recommendation, and (d) provides clarity on what to do in case of doubt. Answer categories include options ranging from completely untrue (1) to completely true (5), and a “don’t know” category (6). I calculate the average score on all items, excluding “don’t know” and missing responses (Cronbach’s alpha = .94). Scores are set to 0 for respondents who answer all questions with “don’t know” (5%), or who indicate that there is no set school procedure (9%). I include control variables to account for these groups (see control variables).

Three items capture the weight of the home environment in the school’s tracking procedure (i.e., weight home environment school) by asking respondents the extent to which their school procedure allows them to account for (a) wishes/ambitions of parents, (b) school support at home, and (c) parental education. Answers include four categories ranging from never (1) to always (4), a category “not mentioned in the procedure,” and a “don’t know” category. I calculate respondents’ average score on the three items, excluding missing values as well as “don’t know” and “not mentioned in the procedure” answers (Cronbach’s alpha = .82). Scores are set to 0 for respondents reporting there is no set school procedure (9%), or answering “don’t know” or “not mentioned in the procedure” on all items (4%). I include control variables to account for these groups of respondents (see control variables). Items measuring the weight of the home environment in the procedure load on a different factor than those measuring formalization. 4
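The scoring rules for both procedure scales can be expressed in one small function. This is a sketch under assumptions: the numeric code 6 for “don’t know” on the clarity items comes from the text, but the codes 5 and 6 for “not mentioned in the procedure” and “don’t know” on the home-environment items are hypothetical, as is the handling of fully missing responses. The returned dummy corresponds to the “DK” indicators that the models interact with student SES.

```python
def scale_score(items, excluded_codes, no_procedure=False):
    """Score a multi-item scale as described for the procedure variables.

    items: item responses (None = missing).
    excluded_codes: non-substantive answers, e.g. {6} for "don't know" on the
        1-5 clarity items, or hypothetical codes {5, 6} for "not mentioned in
        the procedure" / "don't know" on the 1-4 home-environment items.
    Returns (score, all_excluded_dummy).
    """
    if no_procedure:  # flagged by the separate "no set school procedure" dummy
        return 0.0, 0
    valid = [x for x in items if x is not None and x not in excluded_codes]
    if valid:
        return sum(valid) / len(valid), 0
    if any(x is not None for x in items):  # only non-substantive answers
        return 0.0, 1
    return None, 0  # entirely missing: assumed to be left to multiple imputation


print(scale_score([4, 5, 6, 3], excluded_codes={6}))   # (4.0, 0)
print(scale_score([6, 6, 6, 6], excluded_codes={6}))   # (0.0, 1)
print(scale_score([2, 5, 6], excluded_codes={5, 6}))   # (2.0, 0)
print(scale_score([3, 3, 3], {6}, no_procedure=True))  # (0.0, 0)
```

Setting the score to 0 is harmless only because the corresponding dummy (and its interaction with SES) enters the model alongside it; without the dummy, these respondents would be treated as if they had scored 0 on the scale.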

The weight of a student’s home environment in a teacher’s own reported tracking criteria is measured with similar items as those tapping into the perceived “home emphasis” of the school procedure. Questions involve the extent to which teachers themselves account for the wishes or ambitions of parents, school support at home, and parental education when forming recommendations (i.e., weight home environment teacher). Answers range from never (1) to always (4). A mean score is calculated, excluding items with missing values (Cronbach’s alpha = .66). The questions concerning teachers’ own criteria were asked after the school procedure items, and teachers were unable to go back to their answers. Questions about teachers’ own criteria and the school procedure were asked after the vignette experiment.
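For reference, Cronbach’s alpha for a short scale such as the three home-environment items can be computed as below. The formula is the standard one, alpha = k/(k − 1) × (1 − Σ item variances / variance of the sum score); the listwise treatment of missing items and the example responses are assumptions for illustration only, not the paper’s data or necessarily its exact procedure.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for rows of item responses (one tuple per respondent).

    Rows with any missing item (None) are dropped before computing alpha;
    this listwise deletion is an assumption, not necessarily the paper's rule.
    """
    complete = [r for r in rows if None not in r]
    k = len(complete[0])
    item_vars = [variance([r[i] for r in complete]) for i in range(k)]
    total_var = variance([sum(r) for r in complete])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical 1-4 answers on three home-environment items.
responses = [(1, 2, 2), (2, 2, 3), (3, 4, 3), (4, 3, 4), (2, 1, 2), (3, None, 3)]
print(round(cronbach_alpha(responses), 2))  # 0.87
```

With only three items, alpha is sensitive to a single weakly related item, which is one reason a value such as .66 is lower than the .82 and .94 reported for the procedure scales.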

Controls

In all models predicting teacher track recommendations for the case vignettes, I include all student traits that were experimentally manipulated in the vignettes. In the models including school procedure variables, I include a dummy for teachers who report that their school does not use a (regional, school-board specific, or school-specific) set procedure (i.e., no fixed school procedure), and the interaction of this dummy with student SES. I also include dummies that indicate whether a respondent answered all questions on the school procedure variables with “don’t know” or “not mentioned in the procedure” (i.e., clarity rules school procedure: DK; weight home environment school: DK). These dummies are also interacted with student SES.

The (perceived) absence of a school procedure, a lack of knowledge about this procedure, or perceiving information to be missing in this procedure are arguably also indicators of the procedure’s formalization. However, I include these variables as controls, since most teachers indicate there is a procedure, and do not answer questions about the procedure with “don’t know” or “not mentioned in the procedure.” Table 2 provides descriptives for all variables.

Descriptives

Note. Descriptive statistics are based on nonimputed data.

Analytical Strategy

I first model the SES bias in tracking recommendations for the hypothetical students. I predict teachers’ recommendations using multilevel linear regression models that account for the nesting of vignettes in teachers, and of teachers in schools. 5 Subsequently, I assess school- and teacher-level variations in the SES bias in track recommendations by including random slopes for student SES at the teacher and school level. If adding a random slope does not statistically significantly improve model fit, I find no significant variation in the SES bias at that level (teacher or school) in the track recommendations for the fictional students. This would imply that I fail to find that teacher and/or school traits, including tracking criteria/procedures, contribute to differences in the SES bias in the case vignettes (i.e., no support for my hypotheses).
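As a sketch of the model comparison step, the likelihood-ratio statistic for adding a random SES slope can be computed from the two models’ log-likelihoods. The text does not state which reference distribution was used; the version below applies a common boundary correction for testing a single variance component (a 50:50 mixture of χ²(0) and χ²(1)) and assumes the slope is uncorrelated with the random intercept. The log-likelihood values are hypothetical.

```python
from math import erfc, sqrt


def lr_test_variance_component(ll_without, ll_with):
    """Likelihood-ratio test for adding ONE random-slope variance that is
    assumed uncorrelated with the random intercept.

    The null value (variance = 0) lies on the boundary of the parameter space,
    so the reference distribution is a 50:50 mixture of chi2(0) and chi2(1):
    p = 0.5 * P(chi2(1) > LR).
    """
    lr = max(0.0, 2.0 * (ll_with - ll_without))
    p_chi2_1 = erfc(sqrt(lr / 2.0))  # upper tail of chi2 with 1 df
    return lr, 0.5 * p_chi2_1


# Hypothetical log-likelihoods of models without/with a teacher-level SES slope.
lr, p = lr_test_variance_component(ll_without=-2500.0, ll_with=-2497.5)
print(lr, round(p, 4))
```

Testing the teacher-level and school-level slopes separately amounts to running this comparison twice, each time against the model without that particular slope. Without the boundary correction, the naive χ²(1) p-value is twice as large and therefore conservative.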

Next, I consider aspects of the tracking procedure/criteria that I hypothesized to operate at the school level and to relate to school differences in the SES bias (H1–H2b). I first examine whether these aspects (i.e., standardization, “home emphasis” in tracking procedure/criteria) in fact “exist” at the school level. I consider this to be the case if they form characteristics of a school’s “climate” (cf. Marsh et al., 2012). 6 More specifically, teachers were individually asked to rate aspects of their school’s tracking procedure and their own tracking criteria. If an aspect is part of a school’s climate, and thereby operates at the school level, different teachers in the same school should show considerable agreement in their ratings. I can then create a climate construct that expresses teachers’ shared ideas about this aspect. If teachers do not show sufficient agreement, perceptions about this aspect of the tracking procedure/criteria are held individually rather than collectively in a school (i.e., there is no shared school “climate”), and may explain differences between teachers but not between schools.

I use a one-way random effects analysis of variance (ANOVA) with teachers nested in schools (the loneway command in Stata 16) to explore the agreement among teachers in a school on the clarity of their school’s procedure (i.e., standardization), the “home emphasis” of this procedure, and the “home emphasis” of their own tracking criteria. For these three variables (i.e., clarity rules school procedure, weight home environment school, and weight home environment teacher), I estimate intraclass correlations (individual ICCs) and the reliability of a school mean. The individual ICC is a measure of the absolute agreement among teachers in the same school, and indicates the share of the variance that is attributable to a teacher’s school (Koo & Li, 2016). The reliability of the school mean is calculated with the Spearman-Brown formula. If this reliability, and thereby the agreement among teachers in a school, is insufficient (< .50; Koo & Li, 2016), a variable does not operate at the school level and cannot explain school variations in the SES bias (i.e., no support for the hypothesis). If it is sufficient, I interact the school mean of this variable with student SES to test its association with the SES bias in tracking decisions for the case vignettes (H1–H2a/b).
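The two agreement statistics can be sketched with the textbook one-way random-effects ANOVA estimators. This is an illustration, not a re-implementation of Stata’s loneway (its confidence intervals are not reproduced), and the ratings are hypothetical. Here k0 is the usual adjusted average group size for unbalanced groups, and the Spearman-Brown step turns the individual ICC into the reliability of a school mean, which is then compared with the .50 threshold.

```python
def icc1_oneway(groups):
    """Individual ICC from a one-way random-effects ANOVA.

    groups: one list of teacher ratings per school (unequal sizes allowed).
    Returns (icc, k0), where k0 is the ANOVA average group size that adjusts
    for unequal school sizes. The ICC can be negative when within-school
    variation exceeds what between-school variation would imply.
    """
    values = [x for g in groups for x in g]
    n, j = len(values), len(groups)
    grand_mean = sum(values) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    ms_between = ss_between / (j - 1)
    ms_within = ss_within / (n - j)
    k0 = (n - sum(len(g) ** 2 for g in groups) / n) / (j - 1)
    icc = (ms_between - ms_within) / (ms_between + (k0 - 1) * ms_within)
    return icc, k0


def spearman_brown(icc, k):
    """Reliability of a mean of k raters (here: the school mean)."""
    return k * icc / (1 + (k - 1) * icc)


# Hypothetical ratings for three schools with three teachers each.
icc, k0 = icc1_oneway([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
reliability = spearman_brown(icc, k0)
print(round(icc, 3), round(reliability, 3), reliability >= 0.5)  # 0.897 0.963 True
```

With only about three teachers per school, even a moderate ICC translates into a modest school-mean reliability, which is why the Spearman-Brown step matters for the aggregation decision.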

Next, I move to the teacher level. I first explore the level of alignment between (a) a teacher’s interpretation of the school procedure’s “home emphasis” and (b) a teacher’s own emphasis on this in the teacher’s reported tracking criteria. I do so, as the home emphasis of a tracking procedure may have little impact on tracking recommendations if teachers deviate in this respect from what they believe the procedure prescribes. Subsequently, I examine how both the “home emphasis” in a teacher’s interpretation of the school procedure and in a teacher’s own criteria relate to a teacher’s SES bias in tracking decisions in the case vignettes (i.e., interact the variables with student SES to test H3).

In all models that include interactions, I account for the main effects underlying the interactions. All continuous variables are grand-mean centered at the appropriate level of analysis (e.g., teacher-level measures are centered at the level of the teacher).
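A small Python sketch shows what centering at the teacher level means in the long vignette data, where each teacher appears once per rated vignette. It assumes that the grand mean of a teacher-level variable is taken over teachers (each counted once) rather than over vignette ratings; the variable name weight_home and the data are hypothetical.

```python
def center_teacher_level(ratings, var):
    """Grand-mean center a teacher-level variable at the teacher level.

    ratings: one dict per vignette rating, each carrying 'teacher' and var.
    Each teacher counts once in the grand mean, however many vignettes he or
    she rated, so teachers with more ratings do not pull the mean.
    """
    per_teacher = {}
    for r in ratings:
        per_teacher.setdefault(r["teacher"], r[var])
    grand_mean = sum(per_teacher.values()) / len(per_teacher)
    return [{**r, var + "_c": r[var] - grand_mean} for r in ratings]


# Hypothetical data: teacher A rated 8 vignettes, teacher B only 2.
data = [{"teacher": "A", "weight_home": 1.0}] * 8 + [{"teacher": "B", "weight_home": 4.0}] * 2
centered = center_teacher_level(data, "weight_home")
print(centered[0]["weight_home_c"], centered[-1]["weight_home_c"])  # -1.5 1.5
```

Centering over ratings instead would use a mean of 1.6 here, so the choice only matters when teachers rated different numbers of vignettes.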

Results

Biases in Track Recommendations, and School and Teacher Variations

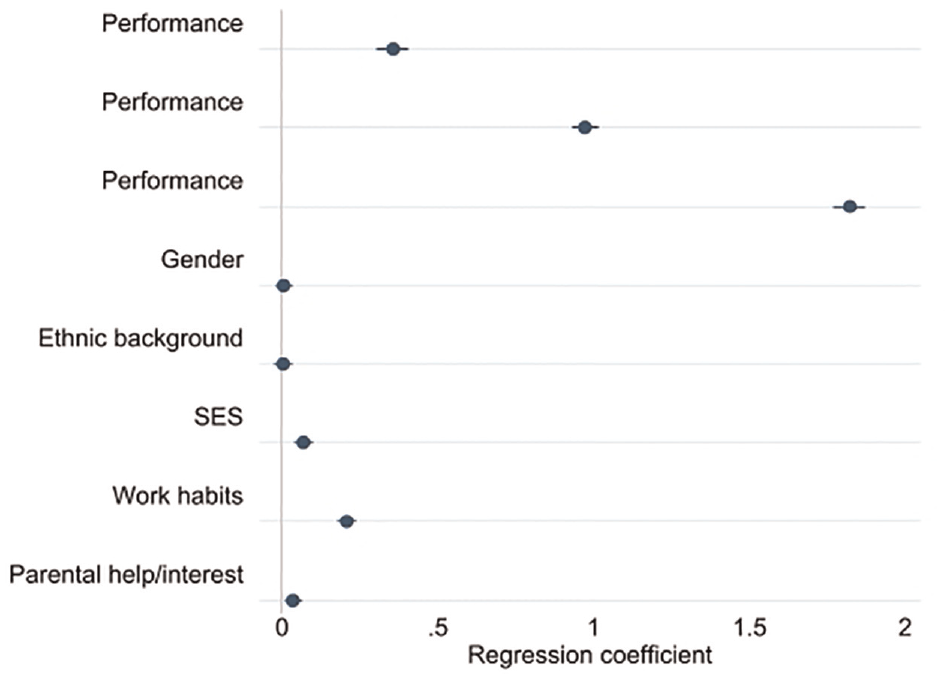

Figure 1 summarizes the findings of a multilevel model predicting track recommendations by traits of the fictional students in the case vignettes (Table 3, Model 1). As expected, track recommendations for high-SES students are higher than those for low-SES students. 7 While the difference is small (7% of a full track; 0.06 of a standard deviation [SD] in Dutch track recommendations), 8 it pertains to students who are the same with respect to all other experimentally manipulated traits, including academic performance, level of parental support/interest, and work habits. Previous research shows that SES biases are generally small but persistent in the Netherlands (Timmermans et al., 2018). For example, observational studies that measure SES with parental education find a difference in the track recommendations for students with low- and highly educated parents that ranges between 0.09 and 0.19 of an SD (Timmermans et al., 2015, 2016). 9 However, parental school involvement/support is not accounted for in these estimates.

Estimates of multilevel regression model of teacher track recommendations.

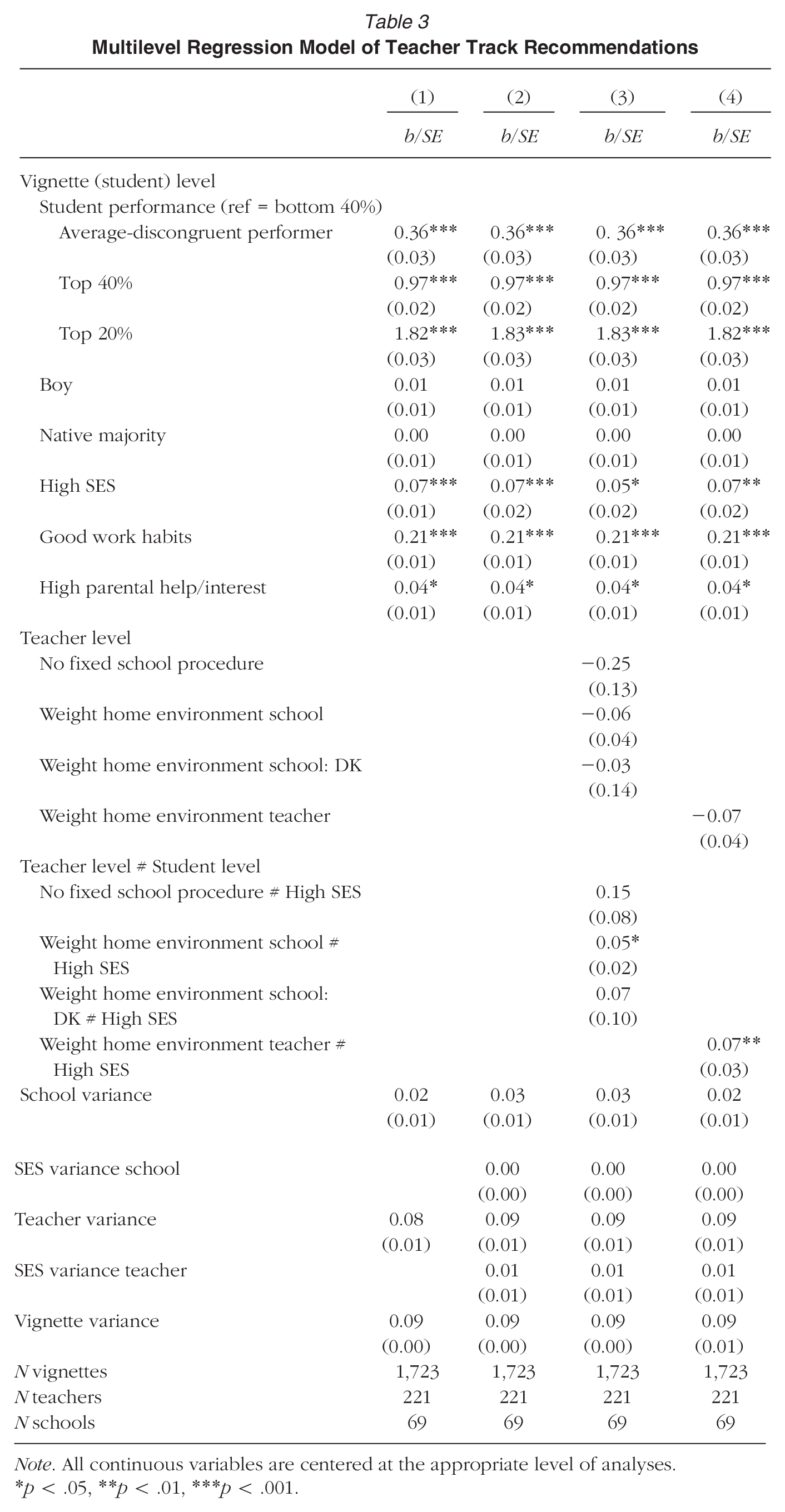

Multilevel Regression Model of Teacher Track Recommendations

Note. All continuous variables are centered at the appropriate level of analyses.

*p < .05, **p < .01, ***p < .001.

Aside from SES, track recommendations are higher for fictional students with higher academic performance, good work habits, and high levels of parental involvement/support.

In Model 2 (Table 3), I include random slopes for student SES at the school and teacher level. A likelihood-ratio test (not shown here) indicates that a model with random slopes fits the data better than a model without random slopes. However, when testing the teacher- and school-level random SES slopes separately, I only find a statistically significant random slope at the teacher level. 10 This may be due to the lower statistical power to detect school-level effects. However, teacher-level variation in the SES bias is also considerably larger than school-level variation (σ = 0.10 at the teacher level [√0.0095]; σ = 0.04 at the school level [√0.0013]). This suggests that processes at the teacher level contribute more to variations in the SES bias in track recommendations for the fictional students than processes at the school level. The predicted regression slopes for SES (i.e., the SES bias) range between −0.06 and 0.28 across teachers. This means that not all teachers show a bias in favor of high-SES students in the case vignettes, yet at the extremes, the bias is more than a quarter of a full track, or 0.23 SDs in Dutch track recommendations. So while the average SES bias is small (7% of a full track), it is considerable for some teachers.
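The standard deviations quoted above follow directly from the variance components, and the same numbers give a rough, model-implied range for teacher-specific SES slopes. The sketch assumes normally distributed random slopes and uses the average SES effect of about 0.07 from Model 1 as the center. This band is not the same quantity as the reported range of predicted teacher slopes (−0.06 to 0.28), which are predictions for the sampled teachers, so the two need not coincide.

```python
from math import sqrt

# Variance components of the SES slope reported for Model 2, and the average
# SES effect of about 0.07 track points reported for Model 1.
VAR_TEACHER, VAR_SCHOOL, MEAN_SLOPE = 0.0095, 0.0013, 0.07

sd_teacher, sd_school = sqrt(VAR_TEACHER), sqrt(VAR_SCHOOL)
print(round(sd_teacher, 2), round(sd_school, 2))  # 0.1 0.04

# Model-implied band covering ~95% of teacher-specific SES slopes, assuming
# normally distributed random slopes (school-level variation ignored here).
low, high = MEAN_SLOPE - 1.96 * sd_teacher, MEAN_SLOPE + 1.96 * sd_teacher
print(round(low, 2), round(high, 2))
```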

Agreement on the School Procedure

The absence of a statistically significant random slope for student SES at the school level implies that, with these data, I am unable to find support for the existence of school-level processes that contribute to differences in the SES bias in the track recommendations for the fictional students (H1–H2a/b). Nevertheless, it remains interesting to examine whether the aspects of the tracking procedure/criteria that I hypothesized to operate at the school level indeed “exist” at this level. This is especially so, as these aspects may in theory still contribute to potential school differences in the SES bias in real-world tracking situations.

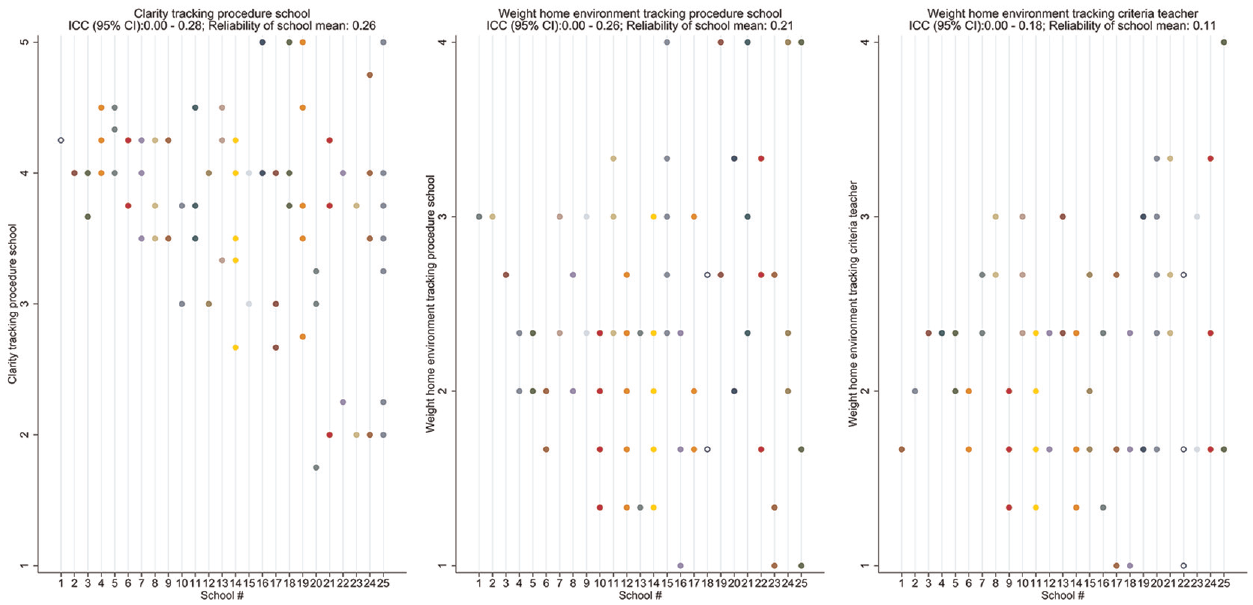

I first inspect the level of agreement among teachers in the same school on the variables concerning the school procedure. For the clarity of the procedure, the ICC is .11, with a 95% confidence interval of 0–0.28. For the importance of the home environment in the procedure, the ICC is .14, with a 95% confidence interval of 0–0.26. The reliabilities of the school mean scores are .26 and .21, respectively. Hence, there is little agreement on these variables among teachers in the same school, and teacher responses cannot be aggregated into reliable school-level measures (Koo & Li, 2016). On average, teachers deviate about 0.5 points from the school mean on these variables (i.e., the SD of the school-centered variables). Figure 2 illustrates the level of (dis)agreement among teachers within schools on these variables for 25 randomly selected schools (left and middle panels). Dots in the same color (or shade of gray) on the same vertical line show the scores of different teachers in the same school. On the x-axis, schools are ordered from low to high levels of teacher dispersion on the variable. These findings suggest that teachers in the same school do not hold a uniform interpretation of these aspects of their school procedure. Since these aspects do not seem to operate at the school level, they are unable to explain differences in the SES bias in track recommendations across schools (no support for H1 and H2a).

Agreement between teachers in the same school on the school tracking procedure and their own tracking criteria.

Although teachers hold different interpretations of the “home emphasis” of their school procedure, there may still be a “home emphasis” tracking culture among teachers (H2b). That is, teachers in the same school may (informally) attach the same weight to this aspect in their tracking decisions. However, similar to the school procedure variables, the importance teachers attach to a student’s home environment primarily varies between teachers (rather than schools). The ICC has a 95% CI of 0–0.18, with a school-mean reliability of .11. Figure 2 (right panel) illustrates that the “home emphasis” in tracking criteria does not tend to be more similar among teachers from the same school than teachers from different schools. Hence, schools cannot be characterized by an (informally) agreed-upon “home emphasis” culture. I thus fail to find support for the idea that such a culture contributes to school differences in the SES bias in tracking recommendations (H2b).

Teachers’ Personal Interpretations of the School Procedure and Own Criteria

The statistically significant SES slope at the teacher level implies that teacher factors do play a role in differences in the SES bias in track recommendations for the fictional students. Accordingly, I examine how a teacher’s personal perception of the school procedure and reported tracking criteria relate to teacher variation in the SES bias (H3).

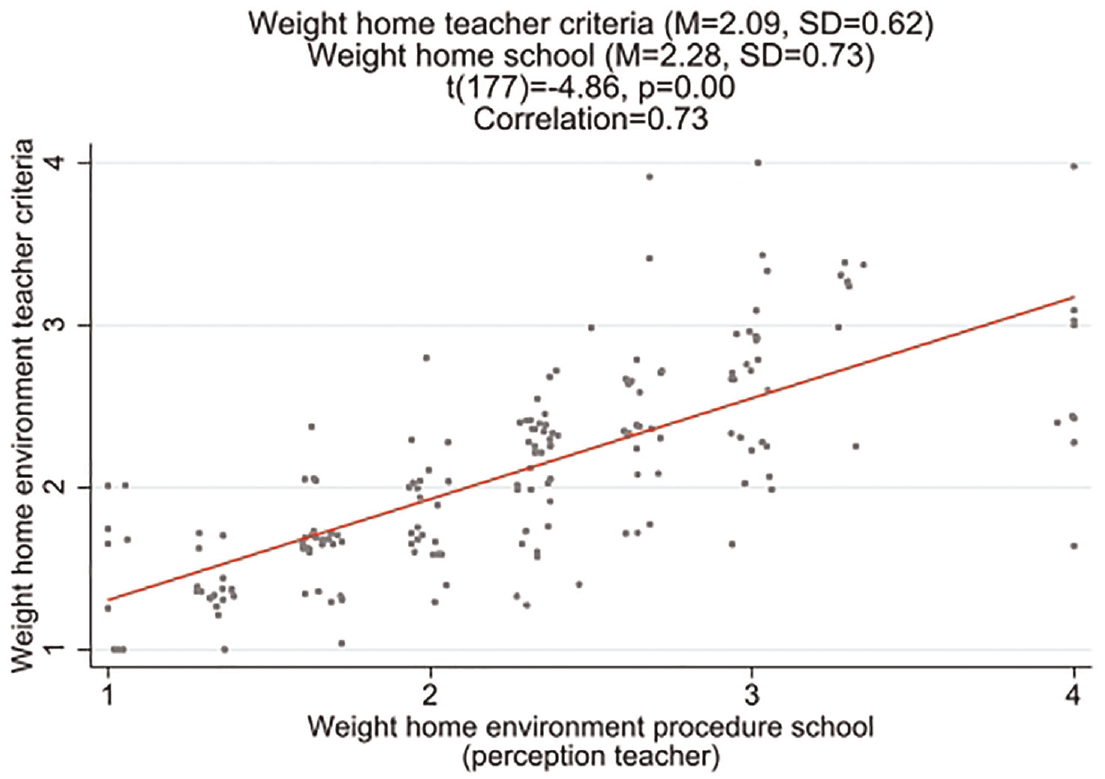

I first explore the (mis)alignment (or [de]coupling) between teachers’ perception of the “home emphasis” of their school’s tracking procedure and the “home emphasis” of their own reported tracking criteria. This can shed light on the extent to which teachers (implicitly) reject the “home emphasis” they believe their school procedure prescribes. I find that teachers who perceive the school procedure to put more weight on a student’s home environment also tend to attach more importance to this in their own criteria (correlation of .73). 11 However, there is not always a complete overlap. On average, teachers tend to report that the school procedure puts more weight on the home environment than they do themselves (t[177] = −4.86, p < .01). 12
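The alignment statistics can be sketched in a few lines of Python. The scores are hypothetical, and the sign convention is an assumption: differences are taken as own criteria minus perceived school procedure, so a negative t corresponds to teachers attributing more weight on the home environment to the procedure than to themselves. With the 178 teachers implied by t[177], the degrees of freedom would be 177.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(own, school):
    """Paired t-test of teachers' own home-environment weight against their
    perceived weight in the school procedure; returns (t, df)."""
    diffs = [a - b for a, b in zip(own, school)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / sqrt(n))
    return t, n - 1


def pearson(x, y):
    """Pearson correlation between the two scores, as reported (r = .73)."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


# Hypothetical scores (1-4) for four teachers.
own = [2, 3, 2, 3]
school = [3, 3, 3, 4]
print(paired_t(own, school))  # (-3.0, 3)
```

A high correlation and a significant mean difference are compatible: teachers can rank the home environment similarly in both ratings while systematically placing their own weight below the procedure’s.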

Correlation between weight home environment in school procedure and teachers’ own criteria.

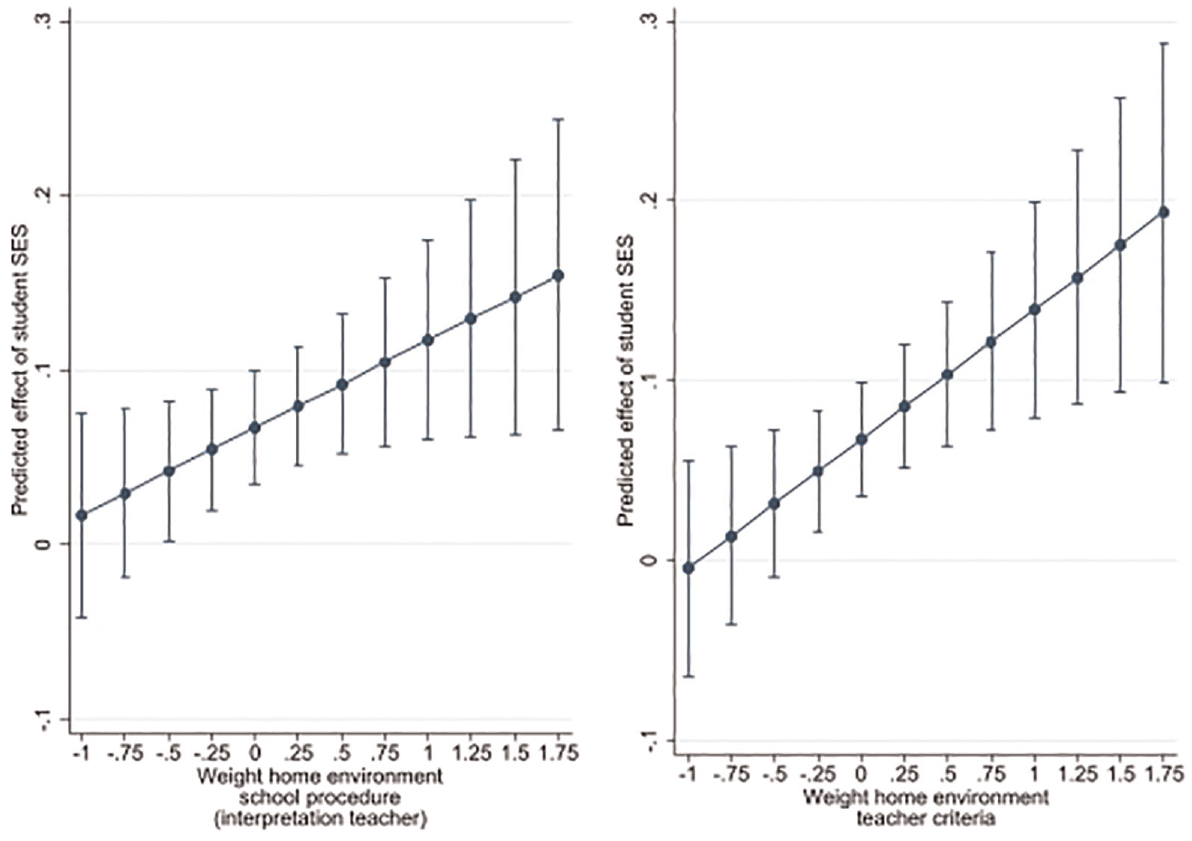

To examine the extent to which the SES bias in track recommendations is conditional on a teacher’s personal interpretation of the “home emphasis” of the school procedure, I estimate a model with an interaction between student SES and the weight home environment school variable (Model 3, Table 3). In line with H3a, I find a statistically significant positive interaction. Figure 4 (middle panel) shows that teachers who perceive a student’s home environment to be relatively unimportant in the procedure (i.e., ~1 SD below average) show no statistically significant SES bias in their track recommendations in the case vignettes. For teachers who score an SD above average, there is about a 0.1 difference in the track recommendations for low- and high-SES students in the case vignettes (i.e., 10% of a full track; 0.08 SDs in Dutch track recommendations). Note that the found interaction effect pertains to teachers who report that their school has a tracking procedure that is explicit about how to weigh a student’s home environment (i.e., who are in the reference category for the dummy variables “Weight home environment school: DK” and “no set school procedure”).
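The conditional SES effects plotted in Figure 4 follow from the interaction model: at a centered moderator value m, the effect of high versus low SES is b_SES + b_int × m, and its variance is Var(b_SES) + m² Var(b_int) + 2m Cov(b_SES, b_int), the variance of a linear combination of coefficients. The sketch evaluates this at −1 SD, the mean, and +1 SD of the moderator. All coefficients, (co)variances, and the moderator SD are hypothetical placeholders, not the estimates in Table 3, and the effects apply to the reference category of the procedure dummies.

```python
from math import sqrt


def ses_effect_at(m, b_ses, b_int, var_ses, var_int, cov_ses_int):
    """Effect of high vs. low SES at centered moderator value m, with the
    standard error of the linear combination b_ses + m * b_int."""
    effect = b_ses + b_int * m
    se = sqrt(var_ses + m ** 2 * var_int + 2 * m * cov_ses_int)
    return effect, se


# Hypothetical coefficients and (co)variances, NOT the Table 3 estimates.
coefs = dict(b_ses=0.05, b_int=0.08, var_ses=0.0004, var_int=0.0009, cov_ses_int=0.0)
sd_moderator = 0.6  # hypothetical SD of the centered moderator
for m in (-sd_moderator, 0.0, sd_moderator):
    effect, se = ses_effect_at(m, **coefs)
    print(f"m={m:+.1f}  effect={effect:.3f}  95% CI=({effect - 1.96 * se:.3f}, {effect + 1.96 * se:.3f})")
```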

Average marginal effects of student SES on teacher track recommendations.

Finally, I test the relation between a teacher’s emphasis on a student’s home environment in his/her own tracking criteria and the SES bias (H3b; Model 4, Table 3). Again, I find no statistically significant SES bias among teachers who put relatively little weight on a student’s home environment (about 1 SD below average; Figure 4, right panel). Among teachers who score an SD above average, the track recommendations for high-SES students are about 0.15 points higher than those for low-SES students in the case vignettes (15% of a track).

Importantly, I cannot estimate a model in which I jointly include the importance of the home environment in a teacher’s own criteria as well as a teacher’s perceived importance of this in the school procedure. The correlation between these two variables is too high. Given there is a set school procedure, it seems that a teacher’s applied criteria cannot be seen independently of a teacher’s interpretation of the procedure.

The interaction effects found here (i.e., between student SES and the importance of a student’s home environment in a teacher’s own tracking criteria and/or interpretation of the school procedure) are correlational in nature, and may be confounded by other factors. However, if a teacher’s “home emphasis” is truly influential for the SES bias in track recommendations, I expect it to primarily contribute to teacher variations in the impact of SES, and less to teacher variations in the impact of other student traits in their tracking decisions. Additional analyses indicate that a teacher’s home emphasis only helps to explain differences across teachers in the SES bias in tracking recommendations for the case vignettes, and does not contribute to differences across teachers in how other student traits impact their recommendations. 13

Conclusion

Students from advantaged SES backgrounds receive higher track recommendations than their equally performing disadvantaged peers. This bias seems to vary across teachers and/or schools, yet few studies examine factors to explain this variation (cf. review by Batruch et al., 2023). In this article, I aimed to shed more light on this variation by considering aspects of tracking procedures and criteria. While it is often suggested that tracking biases may be combatted through the implementation of adequate tracking criteria and procedures, I argued that this is not self-evident. More specifically, (a) teachers may not always fully conform to what (they perceive) the procedure to prescribe, (b) the same procedure can play out differently across teachers due to variations in teachers’ interpretation and navigation of it (cf. Coburn, 2004), and (c) the procedure/criteria that teachers describe as motivating their behavior may not correspond with how they (subconsciously) form decisions (cf. Vaisey, 2009).

Using data from a survey experiment on 221 teachers in 69 Dutch schools, I found support for an SES bias in track recommendations. While the average size of this bias was small, it varied considerably across teachers. At the extreme, it exceeded a quarter of a full track. I found considerably less school variation in the SES bias in the case vignettes. Moreover, schools could not be characterized by teachers’ shared perceptions of aspects of their tracking procedure or by a “home emphasis” tracking culture. While most schools followed a procedure, teachers in the same school had different ideas about the clarity of the procedure, the extent to which it allowed them to account for a student’s home environment, and the extent to which they reported accounting for this themselves. This suggests that these factors do not operate at the school level, and could therefore not contribute to potential school variations in the SES bias in track recommendations. However, I did find that a teacher’s personal interpretation of the “home emphasis” of the school procedure and own tracking criteria were related to the SES bias in track recommendations in the survey experiment. The SES bias was larger among teachers who believed their school procedure to attach more importance to a student’s home environment, and/or who reported weighing this more in their own decisions.

This study’s findings may seem somewhat at odds with the literature that stresses organizational differences in inequality-generating categorization processes (e.g., Avent-Holt & Tomaskovic-Devey, 2019). While this literature suggests uniformity in categorization processes among members of the same organization, I found substantial variation in the applied tracking criteria and biases among teachers in the same school. However, my findings do not necessarily negate the idea of schools as a prime locus for the (re)production of inequality (cf. Avent-Holt & Tomaskovic-Devey, 2019; Domina et al., 2017). First, the fact that track recommendations are (sometimes) biased by student SES underlines the idea that schools are not only a site for compensating inequalities between students from different backgrounds, but that categorization processes in school may contribute to their (re)production (cf. Domina et al., 2017). Second, the SES bias in track recommendations does not appear to be independent of the school context or fully attributable to individual teachers, as is sometimes implied when it is labeled “teacher bias.” Yet the current findings suggest that it is a teacher’s own interpretation of the school context that matters most, rather than the shared rules, norms, or culture that operate at the school level and affect all teachers uniformly. This ties in with work in organizational sociology emphasizing the agency of organizational members (Coburn, 2004; Golann, 2018).

Another possible reason why little support was found for school-level processes is that there was less statistical power to detect them, and that aspects of the tracking procedure/criteria other than those considered here operate at the school level and contribute to school differences in the SES bias. Moreover, all variables concerning the school’s tracking procedure were collected using teacher surveys, and different conclusions may be reached with other data. Nevertheless, it is important to remember that there was also considerably more variation across teachers than across schools in the SES bias in the case vignettes. This, too, hints at the relative importance of teacher-level processes.

A final reason not to dismiss the role of the school environment is the finding that school procedures do not seem to function merely as “institutional myths” (cf. Meyer & Rowan, 1977), with “teachers closing their classroom doors to unwanted pressures” (Coburn, 2004, p. 211). That is, I found considerable overlap between a teacher’s interpretation of the school procedure and the teacher’s own reported criteria. While this overlap may partly reflect social desirability, teachers were not asked directly about their adherence to the procedure and could not view their answers about the procedure when responding to questions about their own criteria. Again, this finding corresponds with research emphasizing teacher agency. Teachers may not simply reject external pressures, but navigate them in their own ways (Coburn, 2004), for example, by interpreting procedures in ways that align with their pedagogy.

This study also has limitations. While the experimental design is well suited to shed light on the causal impact of student traits on track recommendations, the findings concerning the role of teacher variables are correlational. Teachers’ tracking criteria or interpretation of the school procedure may not causally affect the SES bias in track recommendations. Yet additional analyses did indicate that these teacher variables were associated only with the SES bias in track recommendations in the case vignettes, and not with differences in track recommendations by other student traits. Future studies could use vignettes that also manipulate aspects of school procedures or culture (see Batruch et al., 2019). However, differences in teachers’ interpretation (or knowledge) of a procedure may be hard to manipulate experimentally.

The reliance on case vignettes may have implications for the generalizability of the current findings to teachers’ behavior in real-world situations. While the study involved real Dutch teachers who were involved in tracking decisions in their school, I asked teachers to form recommendations for fictional students. Such judging processes may differ from the processes that teachers engage in when evaluating their own students, with whom they interact on a daily basis for at least half a school year. A potential concern is that teachers lack pieces of information in the vignettes, causing them to rely artificially on other student traits (e.g., SES) as a proxy. I tried to reduce this risk by consulting Dutch educational professionals and prior studies to improve the design of the vignettes. Some empirical patterns also increase my confidence in the relevance and transferability of the current findings to real-world situations. The average (small) impact of student SES that I found across all teachers is in line with, and does not exceed, the SES effect in observational studies in the Netherlands. Similarly, the share of variance in track recommendations that is explained by academic performance closely corresponds with findings from Dutch observational research. Relatedly, a prior study demonstrated the ecological validity of case vignettes for track recommendations in Germany and Luxembourg. These countries are similar to the Dutch context in that students are tracked at a relatively young age, and primary school teachers are required to form tracking recommendations. Nevertheless, the fact that this study relied on teachers’ judging processes for fictional students should be borne in mind.

The inclusion of potentially relevant student information in the case vignettes likely reduced the risk that teachers artificially relied on student SES as a proxy for other student traits. However, it also had implications for what I qualified as an SES bias. That is, the SES bias pertained to differences in the track recommendations for students with distinct SES information who were the same with respect to the other information in the vignette (e.g., parental school support/interest, and student diligence, perseverance, concentration, and independent work). In many other studies, the SES bias refers to all differences by student SES that are not due to student performance. Hence, this study’s conceptualization may be considered “narrow.” For example, if teachers provide higher recommendations to students whose parents provide more support, this may (inadvertently) contribute to SES differences in track recommendations (cf. Batruch et al., 2023). This is often considered a bias, as parental support does not reflect a student’s own talents. What constitutes a “bias” is a matter of (political) debate. Even if differences in track recommendations were fully explained by academic performance, this would not necessarily imply that the situation is “fair.” Student performance may have been affected by prior teacher expectations or by a student’s resources at home or in school (cf. Mijs, 2016).

This study has important implications for efforts aimed at reducing biases in teacher recommendations and expectations. Scholars have argued that standardized tracking (or placement) procedures may help to reduce biases in tracking decisions (e.g., Vanlommel & Schildkamp, 2019). While Dutch nationwide tracking institutions are characterized by relatively high levels of standardization and accountability (e.g., obligatory standardized tests to inform decisions, a uniform time frame for making decisions, and an inspectorate checking the quality of track recommendations), tracking decisions continue to differ across students with the same academic performance. This may be because schools and teachers still have considerable autonomy in decision-making. To enhance uniformity, the Dutch government is currently trying to further standardize the process by requiring schools to have an explicit tracking procedure (Ministerie van Onderwijs, Cultuur en Wetenschap, 2020). Yet this study underlines that this may not necessarily enhance equality, as (formal) procedures may not reach all teachers in uniform ways. It is key that teachers know, understand, and implement procedures in the ways intended. The mere existence of a standard (and “adequate”) procedure may not suffice to align all teachers. Even when a procedure is in place, teachers still seem to use different criteria and thereby provide distinct recommendations for exactly the same students. It is remarkable that I find such diversity, even among teachers in the same school, in a context marked by such high levels of accountability and standardization at the national level. The successful implementation of procedures aiming to equalize teachers’ categorizations of students is likely even more challenging in contexts with less stringent tracking institutions, such as the United States (cf. Geven et al., 2021; Kelly & Price, 2011).

Supplemental Material

sj-pdf-1-aer-10.3102_00028312241288212 – Supplemental material for Tracking Procedures and Criteria and the SES Bias in Teacher Track Recommendations

Supplemental material, sj-pdf-1-aer-10.3102_00028312241288212 for Tracking Procedures and Criteria and the SES Bias in Teacher Track Recommendations by Sara Geven in American Educational Research Journal


References
