Abstract

Field supervisors are central to clinical teaching, but little is known about how their feedback informs preservice teachers’ (PSTs) development. This sequential mixed methods study examines over 3,000 supervisor observation evaluations. We qualitatively code supervisor written feedback, which indicates two broad pedagogical categories and nine separate skills. We quantize these feedback codes to identify the variation in the presence of these codes across PST characteristics, and then use several modeling techniques to indicate that specific feedback codes are negatively associated with evaluation score. Managing student attention was most detrimental to scores in early observations, whereas instructional feedback (e.g., lesson delivery) and verbal corrections were prioritized later in clinical teaching. Findings inform teacher preparation policy on understanding PST development and improving supervisory feedback.

Introduction

Field supervisors hold a consequential role in preservice teacher (PST) development, offering an external evaluation of PST instruction, speaking to objective notions of pedagogy across contexts, and serving as a conduit between the preparation program and the placement (Jacobs et al., 2017). These multifaceted responsibilities correspond to their potential in influencing PST quality, entry into the profession, and where PSTs choose to teach (Bartanen & Kwok, 2021).

However, little is known about the variation in supervisor feedback, particularly in how it can promote PST development across teacher preparation. Feedback is essential for both students (Hattie & Timperley, 2007) and beginning teachers (Hunter & Springer, 2022), but given the contextual difference of clinical teaching—including the unique role of supervisors and the nascent development of PSTs—systematically understanding supervisor feedback is uniquely necessary. Specifically, understanding PSTs’ developmental growth through feedback of authentic practice is vital to enhancing teacher preparation and policy. Incremental change through this central system of feedback could improve how teachers are prepared.

Our study examined a large-scale set of field supervisor feedback from one teacher education program (TEP). We qualitatively analyzed nearly 3,500 supervisor observational responses of early childhood through eighth-grade clinical teachers from 2017 to 2019 to unearth skills targeted for early pedagogical improvement (i.e., feedback codes). Then, we investigated the variation in feedback codes, identifying the extent to which certain contextual features were associated with supervisor recommendations. Finally, we examined the predictive validity of codes on clinical teaching overall evaluation ratings to understand how specific feedback influenced PSTs’ pedagogical development. The purpose of these analyses was to explore how supervisory feedback informs a baseline of PST practices throughout clinical training. The research questions (RQs) guiding this study are:

RQ1: In what pedagogical areas do clinical teaching field supervisors provide written feedback?

RQ2: How do supervisor feedback codes vary by context?

RQ3: What is the relationship between clinical teaching evaluation rating and supervisor feedback codes?

RQ4: How does feedback type affect evaluation rating across observation number?

From supervisor written feedback, qualitative analyses identified two categories of feedback (instructional development and classroom management) and nine separate skills. We examined patterns across the presence of these codes by observation number (i.e., first through fourth observation) and to a lesser extent evaluation score, subject area, and grade level. From this heterogeneity, we tested for a significant relationship between these codes and PST clinical teaching evaluation score, utilizing different modeling techniques to account for the nested nature of the observations within PST as well as PSTs by supervisors. Consistently, three feedback codes were found to be negatively associated with their evaluation score: maintaining student attention, using non-verbal techniques to manage student behavior, and lesson delivery. That is, PSTs who received critiques in these three areas were more likely to receive lower overall evaluation scores.

Further, to understand the longitudinal nature of our data, we explored PST development through the change in feedback codes by observation number. We identified significant variation through an early focus on maintaining student attention and non-verbal techniques towards later improvement on lesson delivery, lesson cycle, and behavioral corrections. These results may provide an initial framework for how supervisors evaluate clinical teacher development and offer insight into foundational skills for maturing pedagogy.

Background

Our examination of field supervision feedback requires an understanding of the role of field supervisors during clinical teaching alongside the value of instructional feedback within teacher education. Below, we review studies in both fields and follow with an overview of theoretical perspectives on instructional feedback.

The Role of Field Supervision During Clinical Teaching

Throughout clinical teaching, PSTs participate in professional activities under the guidance of a cooperating (or mentor) teacher for at least one full semester (National Council for Accreditation of Teacher Education, 2010). The entirety of this experience has been consistently identified as one of the most influential teacher preparation structures (Anderson & Stillman, 2013; Goldhaber et al., 2022; Ronfeldt, 2021). A report from the National Council on Teacher Quality stated that within clinical teaching, an essential component is that a “supervisor from the program observes a candidate at least four times during the semester … providing written feedback with each observation” (Pomerance & Walsh, 2020, p. 4). Field supervision occurs throughout the span of preparation (e.g., early field experiences; Kwok & Bartanen, 2022), with greater resources and time generally dedicated to clinical teaching (Jacobs et al., 2017). Despite the ubiquity of field supervision, it has received limited attention, likely for several reasons.

First, there is a range of individuals who serve or are hired as supervisors, from tenure-line professors to adjunct instructors (Jacobs et al., 2017), providing little consistency in the role. This variation likely underlies teacher education policies that have reduced the requirements to become a supervisor, despite evidence that effective supervisors can positively influence PST development (Darling-Hammond, 2014; Grossman et al., 2008; Mok & Staub, 2021; Wasburn-Moses & Noltemeyer, 2018). Outright, field supervision has been deemed second-class work of clinical field experiences (Slick, 1998; Zeichner, 2021), in which faculty members rarely want to participate and which programs often overlook, despite the investment and coordination required.

Second, the work of PST supervision is expanding and becoming increasingly sophisticated (Burns et al., 2016). Duties regularly include observation, evaluation, and instructional or social emotional support (Caires et al., 2010). In addition, supervisors are often responsible for dynamics outside of the field classroom, such as building collaborative relationships amongst the PSTs, cooperating teachers, students, and administration (Campbell & Lott, 2010; Nguyen, 2009), in conjunction with facilitating connections between the field classrooms and university courses (Hertzog & O’Rode, 2011; McDonnough & Matkins, 2010; Ward et al., 2011). Supervisors may even be involved in university teacher education efforts of curriculum planning (Turunen & Tuovila, 2012) and research for innovation (Clift & Brady, 2005; Ronfeldt et al., 2013; Sewall, 2009). Altogether, supervisors play an integral role in teacher development, but the complexity of their role makes it difficult for them to balance their responsibilities (Burns & Badiali, 2016).

Third, given the myriad of responsibilities, supervisors often face internal conflicts about what they can and should do, restricting their influence on PST development. Supervisors deal with a duality between assisting and assessing (Slick, 1997), struggling to maintain relationships within field experiences while holding to the expectations of the program. This tension was reiterated by Valencia et al. (2009), who found that supervisors feel constrained in what they can offer as feedback given their outsider status, commitment to preserving harmony, or deference to the cooperating teacher. A critique of the structures of field supervision is that supervisors do not spend enough time with the teacher education program, mentor teacher, or PST to build the necessary trust for a strong feedback process; this raises the question of the extent to which supervisors can offer authentic and rigorous analysis of teaching (Richardson-Koehler, 1988; Sandholtz & Shea, 2012). This context also coincides with disparate visions that PSTs have about the purpose, modes of development, and perceptions of quality instruction (Bartanen & Kwok, 2021; Valencia et al., 2009). Even with thoughtfully supportive supervisors, PSTs struggled to reconcile differences in vision between programs and cooperating teachers (Anderson & Stillman, 2013; Matsko et al., 2023). Overall, this contextual dynamic establishes that supervisors need structural support to improve their practice of giving feedback (Levine, 2011; Valencia et al., 2009).

The role of supervision reflects larger calls for strengthening clinical practice within teacher education, particularly from large-scale, mixed methods data (Goldhaber, 2019; Sleeter, 2014). Despite its importance, models of clinical practice have overall been deemed unsystematic or unintentional in their design (Cochran-Smith & Zeichner, 2005; Dennis et al., 2017). Therefore, understanding supervisors’ feedback—and its associations with PST quality—can contribute to work that advocates for greater support systems for supervisors who share a vital role in teacher preparation (Cuenca et al., 2011).

The Role of Feedback in Teacher Preparation

TEPs rely on observation evaluations—primarily during clinical teaching—to develop instructional practice and serve as a form of assessment (American Association of Colleges for Teacher Education, 2018; Richmond et al., 2019). These observational evaluations are regarded as data that needs to be utilized more throughout teacher preparation to raise PST quality (National Council for Accreditation of Teacher Education, 2010). However, there is little guidance for teacher educators on how to effectively implement and improve clinical teaching observations (Boguslav & Cohen, 2024; Caughlan & Jiang, 2014; Dennis et al., 2017; van de Grift et al., 2014), even though receiving feedback from a supervisor could be equally as important as from a cooperating teacher (Anderson & Radencich, 2001).

Recent evidence corroborates the benefit of feedback within teacher education (Weber et al., 2018). For instance, Prilop et al. (2021) utilized a quasi-experimental design to identify the benefits of expert feedback in PST video analysis. The intervention group improved professional vision of classroom management, suggesting the promise of targeted feedback. However, this study offers little attention towards the timing or sequential nature of content (Feiman-Nemser, 2001), let alone within a structural component such as field supervision. Rather, evidence on novice teacher skill development indicates areas and order of importance for pedagogy (Headden, 2014; Kraft et al., 2020; Ost, 2014; Watzke, 2007). Additionally, Malmberg et al. (2010) examined the change in novice teacher classroom quality over time through observational feedback on the pedagogical areas of emotional support, classroom organization, instructional support, and student engagement. The authors found that only classroom organization linearly improved over time, whereas the other skills eventually plateaued in observational rating.

Most recently, Bartanen et al. (2023) investigated administrator evaluations of novice teachers and found that classroom management and presenting content were the two most important novice skills, with low scores on the former linked to high rates of attrition. The authors recommended that these two skills were fundamental for novices to master first before implementing more complex skills such as questioning. These findings suggest a framework for learning pedagogical skills for novice in-service teachers, but not necessarily for clinical teachers. Thus, our study addresses this gap by exploring supervisor feedback to improve clinical teacher development. In addition, a greater understanding about how PSTs learn pedagogy is needed to better support them prior to entering the profession. Given that supervisors provide a unique external perspective and that feedback is crucial for educator development, this intersection is invaluable for informing teacher preparation policy.

Theoretical Perspectives on Teacher Feedback

The overarching goal of providing PSTs with feedback is to reduce the gap between their current teaching performance and their ideal teaching (Hattie & Timperley, 2007; Scheeler, 2008; Scheeler et al., 2004). Studies in this area generally fall within two domains: how feedback can be received and the nature of feedback. Several frameworks focus on the emotional and social aspect of receiving and interpreting feedback (Copland, 2010; Ilgen et al., 1979; Voerman et al., 2014), which overall highlight PSTs’ desire to receive reliable feedback (Glenn, 2006).

Another body of research that more closely aligns with our study elucidates the nature of feedback. In their meta-analysis, Thurlings et al. (2013) confirmed that effective feedback should be “goal oriented, specific, and neutral” (p. 1). Likewise, Scheeler et al. (2004) emphasized that feedback should be positive, corrective, and immediate to elicit changes in teaching. Offering a framework that is immediate and includes actionable steps toward an identified goal, Hattie and Timperley (2007) explained that effective feedback must answer three major questions asked by a teacher and/or by a student: Where am I going? (What are the goals?) How am I going? (What progress is being made toward the goal?) and Where to next? (What activities need to be undertaken to make better progress?). These questions respectively correspond to notions of “feed up,” “feed back,” and “feed forward.” A follow-up study by Ellis and Loughland (2017) found that PSTs tended to receive more “feed back” than the other two types of comments. Most comparable in sample to our study, Hunter and Springer (2022) examined written feedback throughout new teacher evaluations and found a positive association between improvements in performance and feedback that (a) is aligned with an improvement area, (b) discusses the feedback’s evidential basis, (c) sets specific improvement goals, and (d) includes actionable next steps.

Taken together, these frameworks for instructional feedback highlight the importance of providing feedback that is timely, goal-oriented, and actionable. However, evidence is needed to address the specificity of teacher preparation—which focuses on professional training—as well as insight for field supervisors, who have a distinct vantage to aid in development. As such, we consider a central critique structure within a large TEP, in which supervisors provide such timely and actionable feedback to PSTs during clinical teaching.

Method

Context

We draw our sample from one of the largest Texas undergraduate TEPs, with PSTs earning credentials in early childhood–sixth grade or middle grades math/science or English language arts/social studies in a three-semester program. The first semester requires foundational pedagogy courses (i.e., lesson planning, classroom management) and a 1-day a week field placement in a local school district. The second semester consists of literacy and discipline-specific methods courses, with 2 days at a different local school district. The final semester concludes with a semester-long clinical teaching experience, where the TEP solicits PSTs’ preferred district placement and often places them in their top choice. These districts then assign PSTs to a campus and cooperating teacher according to individual district procedures.

Clinical teaching placements are spread throughout the entire state, and the university hires and assigns supervisors according to respective geographic regions. Under state law, supervisors must hold current teacher certification in the same area as the PST’s classroom, have at least 3 years of teaching experience, may not be employed at the clinical teaching placement school, and have completed training for the state-approved teacher observation instrument. Customarily, supervisors are retired educators remaining involved in the profession and are part-time contractors of the university hired solely for this position.

Throughout clinical teaching, PSTs receive four 45-minute formal observations from one field supervisor approximately every 3 weeks. PSTs do not take any coursework or have any other required professional development during this time and exclusively focus their efforts on this culminating experience. Observations are designed for developmental purposes to provide PSTs with formative feedback about their teaching practice. Supervisors contact PSTs prior to receiving their lesson plan or any other pertinent information, and then again afterwards for any additional notes. Upon completion of the observation, supervisors are expected to provide PSTs with feedback within a 24-hour period. Twice a semester, supervisors facilitate meetings with the PST and cooperating teacher to collectively discuss PST development, but otherwise, there are no required interactions between the supervisor and PST.

Data

We utilized PST clinical teaching observational evaluations from 2017 to 2019, representing four academic semesters. This observational rubric mimics the state’s Texas Teacher Evaluation and Support System (T-TESS) instrument, which was implemented in the 2016–2017 school year. Our focus was on a mandatory comment box and rating at the beginning of the instrument. Field supervisors reported an overall rating score (i.e., exceeds expectations, proficient, growth in progress, and needs significant improvement) and provided an open feedback response within the prompt: “Overall Comments and Recommendations.” There were initially 3,365 total observations, though 16 observations were additional optional observations and 3 were missing an evaluation rating. This resulted in a final analytical sample of 3,349 observations, a response rate of nearly 100% with almost no missing observations in the data analyzed.

The remainder of the evaluation included Likert scale items separated by the pedagogical areas of planning, instruction, and learning environment, though these items were not required for submission. There were comment boxes available for each of these sections that were similarly optional. Furthermore, although these domains mimic the state T-TESS rubric, the individual items differ.¹ The same scale applies for the individual items of the survey and the request for an overall rating of the PST’s performance. For each of the four observations, PSTs could receive ratings for each item on a 1 (improvement needed) to 4 (accomplished) scale or NA, “not applicable/observed.” In cases where an NA was given, the numeric score was missing. Evaluations were predominantly utilized for formative purposes and were submitted to the program as well as the field placement mentor teacher and principal. Previous work examining these exact observation scores indicated that variation in scores reflects differences in PST quality and was consequential relative to entry in K–12 public school teaching, particularly at full-time teaching in that same campus; additionally, our focus on overall score rather than individual Likert scale items was because previous analyses indicated one underlying construct across items (Bartanen & Kwok, 2021).

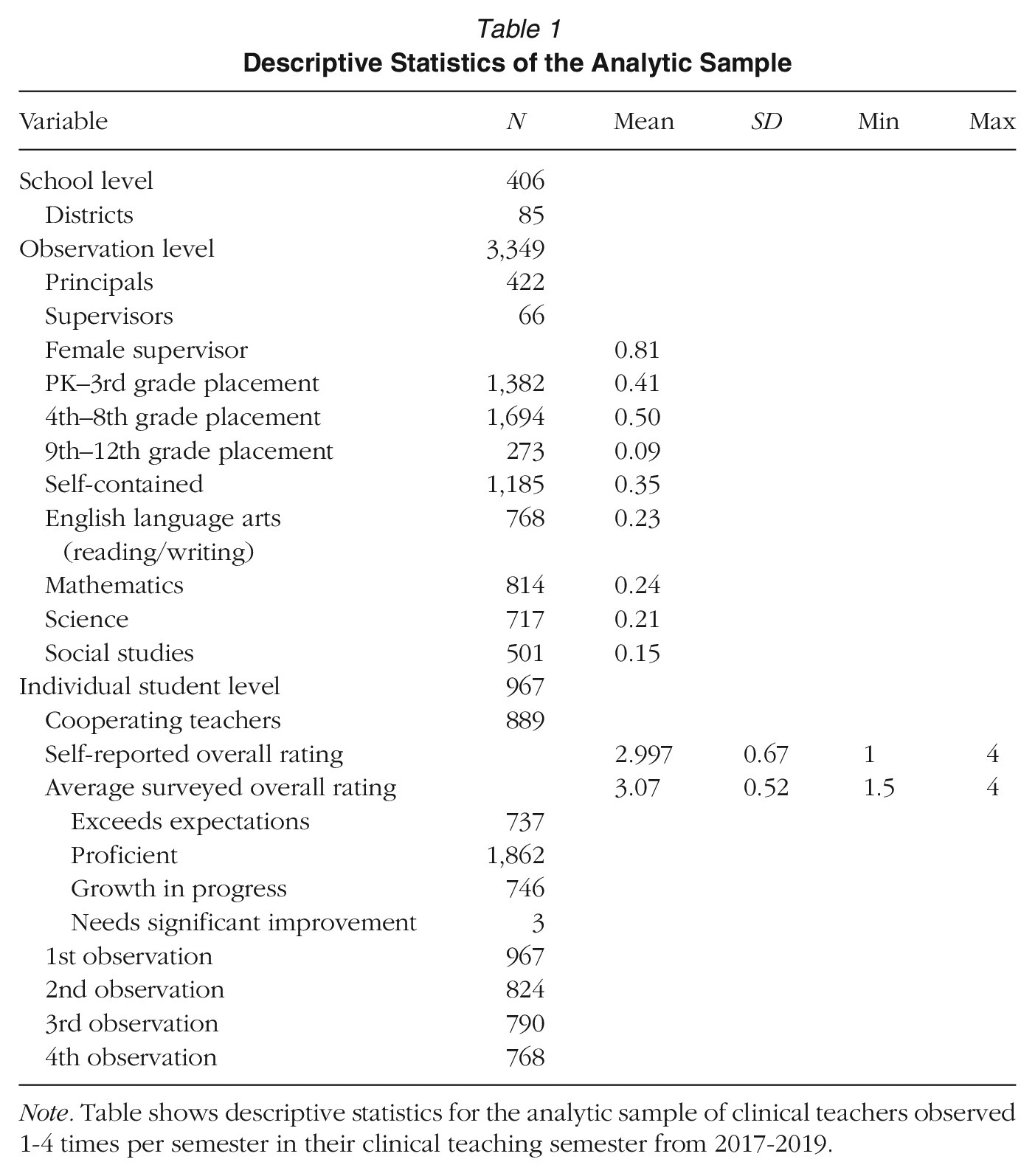

Descriptive statistics of the analytic sample are shown in Table 1. Collectively, the observation data represent 3,349 separate evaluations across 967 PSTs within 85 districts and 406 campuses by 66 supervisors. Most PSTs were at the elementary or middle grade levels (41% and 50%, respectively), though the sample is relatively equal across subject areas, including at the secondary level.² On average, a single supervisor conducted 50 observations within a semester, approximating 12 PSTs assigned to a single supervisor in any given semester. While PST demographic data were accessible through the program, no additional data were collected from the supervisors besides contact information and their preferred maximum number of PSTs to observe (M = 7.79).

Table 1. Descriptive Statistics of the Analytic Sample

Note. Table shows descriptive statistics for the analytic sample of clinical teachers observed 1-4 times per semester in their clinical teaching semester from 2017-2019.

Data Analysis

We took a sequential mixed methods approach (Ivankova et al., 2006; Tashakkori & Teddlie, 2021) to address our research questions. Due to the open-ended nature of the observation form, we sought to first analyze the data inductively. That is, the supervisors were not instructed to provide written feedback based on any a priori rubric, so we decided to align our methods of analysis by inductively identifying patterns for areas of PST development. These identified codes then informed our quantitative analyses.

Throughout our process of inductive analysis, we considered our respective positionalities within the TEP and to the data. All three authors work and have taught in this TEP, though none of us have served as a clinical teaching field instructor or within the clinical teaching experience in any capacity. The first and third authors are faculty in the teacher education program and have served as field supervisors in other contexts. The first author also went through a teacher preparation program, is familiar with the role, and has done research in this area. The second author is a postdoctoral researcher in teacher education. We are invested in this program but have no role in the structure or design, particularly regarding clinical teaching. In fact, the analytic sample was collected prior to us becoming involved in the program. While we seek its success, we evaluate it from an external lens and take precautions throughout our empirical process to ensure trustworthiness of the study, detailed below.

Qualitative Analysis

The large-scale nature of the data and robustness of responses required multiple steps for analysis. We took an emergent approach because no prior framework appropriately fit our data. There were aspects of Hunter and Springer’s (2022) and Hattie and Timperley’s (2007) frameworks that peripherally aligned with some of our data, but neither of these frameworks was situated in a clinical teaching, field supervisor, or PST context. We also had familiarity with PST beliefs and pedagogy from our prior research (Kwok, 2018, in particular), and while we cannot ultimately rule out these previous understandings, we sought to inductively analyze the data sample as best as we could to learn from this novel data.

First, we identified a unit of analysis throughout the data. In our initial reviews of supervisor feedback, we recognized that feedback responses were composed of three distinct parts: a retelling of what was observed, an offering of praise for actions observed, and corrective feedback to improve PST pedagogy. We identified retelling portions as generally neutral observations of what was happening in the lesson and, as such, subsumed a large proportion of the overall feedback. However, these retellings did not provide much actionable insight for the PSTs, and thus, we excluded them from our analysis. The second component, praise, offered more content about PST development but did not contain additional information beyond lauding a singular action, so we also excluded statements of praise from our analyses.

Instead, corrective feedback was most generative in description and captured specific areas for PST growth. This decision to limit our sample to corrective feedback is affirmed by previous meta-analyses that indicate lower effect sizes for praise versus critique of a specific task (Hattie, 1999; Kluger & DeNisi, 1996). We acknowledge that there is value in examining the entirety of supervisor responses, but we found that focusing on corrective feedback manageably reduced the data within response and offered the biggest contribution to understanding areas of PST development during clinical teaching. An example of a supervisor observation evaluation in its entirety along with our analytical procedures is included in Appendix 1.

The next analytical task was to specify the parameters of our unit of analysis. A unit included distinct, corrective statements about attributes of the PST, aspects of pedagogy, or actions related to instruction, with units spanning from within a sentence to multiple sentences. The number of units per response ranged from 1 to 11, with an average of 4 units. Once units were identified, we conducted open coding (Miles et al., 2018) of a random sample of 10% of the overall data (i.e., 300 responses). We routinely met to discuss our developing findings and consolidate the identified patterns by writing summary statements, informed by our analytic memos written during the open coding process (Charmaz, 2006). These summary statements became our initial codes. Throughout, we engaged in constant comparative analysis (Glaser & Strauss, 2017) by iterating between the raw data and our evolving interpretations to ensure our codes accurately represented the patterns identified within the supervisor responses.

Once codes were assigned, we classified them into two categories: instructional development and classroom management. To ensure the coding scheme represented the sampled data, we tested this scheme on 25% of the overall data (i.e., 750 responses), which denotes theoretical saturation of the data (Trotter, 2012). Within this process, we continued to meet to discuss any discrepancies of coding particular units and adjusted the coding scheme accordingly. We also agreed on several coding decisions at this time. First, because our purpose was to identify distinct areas of PST development within supervisor feedback, we decided that units of analysis would only be single-coded. However, one observation could include multiple units of analysis, and therefore, the entire feedback response could include multiple codes (i.e., written feedback often contained multiple critiques). Additionally, due to the unstructured nature of the feedback, supervisors often reiterated previous points in their feedback. Thus, we decided that one type of code could only be applied once within a response. This reduced the inflation of frequency of codes and maintained our aim of identifying distinct areas that supervisors noted as areas for PST development. The last decision regarded an Other code. The Other code represented feedback that did not conceptually fit under instruction or classroom management. These constituted 168 units of analysis that were excluded from the sample. Conceptually, the Other code included feedback where the supervisor noted that they could not give feedback due to an absence of instruction (“Unfortunately, since most of the class time was spent on the brown bag activity, I didn’t observe much teaching” [3490]), general comments about confidence (“She needs to believe in herself” [4188]), and notes about professionalism and reflective practice (“Keep up with attendance log and journal” [3709]).

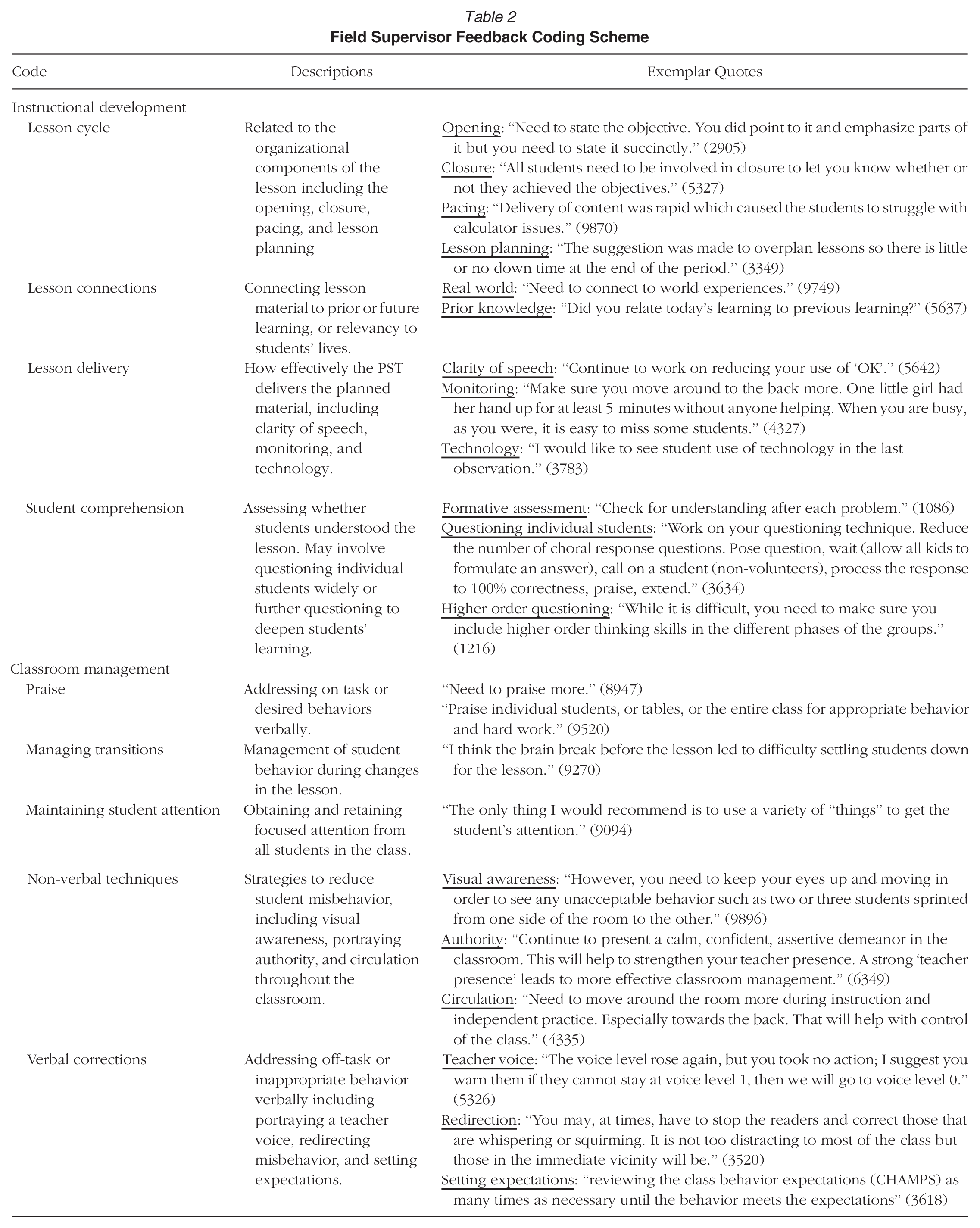

We engaged in several rounds of axial coding to consolidate our codes conceptually. For example, an initial code of monitoring was collapsed into lesson delivery, and lesson planning was merged into lesson cycle. Circulation and authority were consolidated into non-verbal techniques. We also utilized code frequencies to ultimately identify whether a code remained. For example, instances of the code use of technology were so infrequent (n = 16) that we combined it with lesson delivery. Once the coding scheme was finalized, we applied it to all (100%) of the data. In this final step, we did not find any need to adjust the scheme further, as it captured the entirety of our data. The final coding scheme is shown in Table 2.

Table 2. Field Supervisor Feedback Coding Scheme

The first author began this analysis and then worked with the third author and two experienced graduate researchers. Collectively, we held numerous and consistent meetings, where we discussed our observations and the coding scheme to ensure reliability amongst researchers. We worked collaboratively throughout, conducting numerous rounds of interrater reliability. In each stage of sampling the data (i.e., at sampling 10% and 25% of the overall sample set), we compiled a set of responses across years (2017–2019), supervisors (135 separate supervisors), observations (1–4), and PSTs (967) to represent the variability of the data. We conducted interrater reliability tests four separate times to (a) identify cursory open codes, (b) establish an idea unit, (c) establish codes, and (d) finalize the coding scheme. We repeated tests until we achieved a Cronbach’s alpha of .85 or better across multiple raters. All coding and reliability tests were performed in Dedoose.

Quantitative Analysis

To understand how supervisor feedback changes throughout clinical teaching, we quantified the qualitative feedback codes as binary variables representing the presence (or not) of the content for each response. We merged these data with the original observation evaluation data and then descriptively analyzed the presence of codes as well as the variation across characteristics (subject area and grade level) and development (time of observation and observation rating). All quantitative analyses were conducted using Stata 18.
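The quantizing step described above can be illustrated with a minimal Python sketch. It is not the authors' Stata procedure: the function name `quantize`, the response identifiers, and the coded units are hypothetical, and only four of the nine feedback codes are listed for brevity. The sketch assumes each coded unit arrives as a (response, code) pair and applies the rule that a code counts at most once per response.

```python
from collections import defaultdict

# Illustrative subset of the feedback codes named in the text (assumption: labels as plain strings).
CODES = [
    "maintaining student attention",
    "non-verbal techniques",
    "lesson delivery",
    "lesson cycle",
]

def quantize(coded_units, codes=CODES):
    """Turn (response_id, code) pairs into one row of 0/1 indicators per response."""
    present = defaultdict(set)
    for response_id, code in coded_units:
        # A set records each code once, so a reiterated critique is not double-counted.
        present[response_id].add(code)
    return {
        response_id: {code: int(code in found) for code in codes}
        for response_id, found in present.items()
    }

# Hypothetical coded units for two made-up responses.
units = [
    ("r1", "maintaining student attention"),
    ("r1", "lesson delivery"),
    ("r1", "maintaining student attention"),  # reiterated point, still counted once
    ("r2", "lesson cycle"),
]
indicators = quantize(units)
```

Rows of this shape could then be merged with the evaluation records on the response identifier. Responses with no corrective units would need to be added separately as all-zero rows.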

We first conducted chi-square tests of independence on contingency tables of the presence of qualitative categories to determine whether the proportions of observations with qualitative codes, and combinations of codes, differed by placement characteristics (e.g., grade level, subject area) from the proportions of observations without either qualitative category. That is, the proportion of observations assigned a feedback code in either or both categories differed significantly from the proportion of observations receiving no feedback whatsoever across all placement characteristics of interest. This was our first test of feedback codes relating to contextual characteristics of the PST’s observation placement.
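As a rough illustration of the test described above, the following Python sketch computes the Pearson chi-square statistic for a contingency table without a continuity correction. The function name and the counts are made up for illustration and are not drawn from the study's data; the authors' own analysis was run in Stata.

```python
def chi_square_statistic(table):
    """Pearson chi-square statistic and degrees of freedom for an r x c table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of the row and column variables.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return stat, dof

# Hypothetical counts: rows are two grade bands,
# columns are (any feedback code present, no feedback code present).
table = [[30, 10], [20, 20]]
stat, dof = chi_square_statistic(table)
```

With one degree of freedom, a statistic above roughly 3.84 would indicate a difference in proportions at the .05 level.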

To answer our second research question, we next chose to test whether feedback would be predictive of the PST’s next observation evaluation. Because of the repeated nature of our data, we controlled for the observation times and treated all data as equal to first establish that a relationship exists between feedback codes and overall rating. The linear regression model captured any relationship between supervisor feedback and the PST’s overall rating. The following model was estimated via restricted maximum likelihood:

where Y is a standardized evaluation score and X represents the vector of feedback codes as predictors and placement and development characteristics as control variables. Indices s, g, r, and t represent subject area, grade level, supervisor, and observation time, respectively. To check for the validity of feedback codes, we calculated the variance inflation factor to determine the tolerance for each predictor and found no concern for multicollinearity; qualitative feedback variables were not highly correlated with each other (Cohen et al., 2003).
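One specification consistent with these definitions, offered as an assumption rather than the authors' exact equation, is a linear model with an intercept, a coefficient vector, and an error term. The symbols \beta_0, \boldsymbol{\beta}, and \varepsilon are introduced here for illustration. The second expression is the standard definition of the variance inflation factor, where R_j^2 comes from regressing predictor j on the remaining predictors and tolerance equals 1 - R_j^2.

```latex
Y_{sgrt} = \beta_0 + \mathbf{X}_{sgrt}\,\boldsymbol{\beta} + \varepsilon_{sgrt},
\qquad
\mathrm{VIF}_j = \frac{1}{1 - R_j^{2}}
```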

Next, we accounted for the contextual factors of the PST placement affecting feedback on average overall rating over time. We controlled for supervisor and the observation number, focusing on the nested nature of the data, or the nonindependence between observations conducted by the same supervisor. Using a hierarchical linear model, we then estimated the explained variance and changes in standardized influence of supervisor feedback to the PST overall rating, accounting for the nonindependence by supervisors. Group variation at the clustering by supervisors and their observations was calculated via the intraclass correlation coefficient (ICC) at .297, just greater than desired (e.g., Cohen et al., 2003).
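For readers unfamiliar with the ICC, the sketch below shows the general calculation: the variance attributable to the grouping factor divided by the total variance. The function name and the variance components are hypothetical and chosen only to make the arithmetic easy to follow; they are not the study's estimates, and the study's exact variance decomposition is not reproduced here.

```python
def intraclass_correlation(between_variance, other_variances):
    """Share of total variance attributable to the grouping factor (e.g., supervisor)."""
    total = between_variance + sum(other_variances)
    if total <= 0:
        raise ValueError("total variance must be positive")
    return between_variance / total


# Made-up variance components for illustration only (not the study's estimates).
icc = intraclass_correlation(between_variance=1.0, other_variances=[1.0, 2.0])
```

With these invented components, one quarter of the total variance sits at the group level; a larger share signals stronger clustering and a stronger case for multilevel modeling.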

Finally, we returned results from a repeated measures analysis of variance (RM-ANOVA) to examine the effect of each feedback subcode on a PST’s observational evaluation overall rating between observations. However, we must reiterate the issue of nonindependent observations since supervisors evaluate various PSTs and assign feedback to multiple PSTs. The within-subject factor was of most interest in this final component of our overall research design: we wanted to measure the effect a feedback code had on a PST’s next observation evaluation rating. Tests were conducted using the Huynh-Feldt adjusted p-value because the sphericity assumption, or the equal variances across observations, was not met as expected. Moreover, we conducted simple effects analyses to test differences among observation numbers for each feedback code. Marginal effects of feedback codes on overall rating by observation number were reported for significant codes.

Results

RQ1: In What Pedagogical Areas Do Clinical Teaching Field Supervisors Provide Written Feedback?

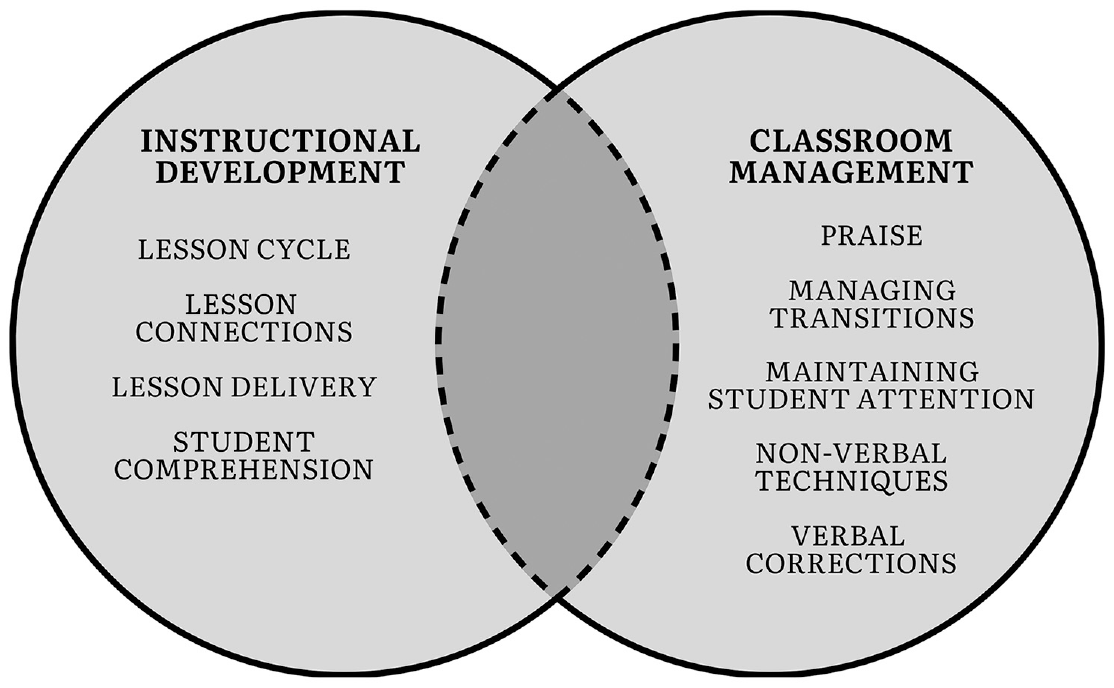

We organized the codes of supervisor feedback into two categories: instructional development and classroom management, represented in Figure 1. These overarching categories are visualized with some overlap to represent how the conceptual purpose of the feedback might intersect between instruction and classroom management. That is, an individual code could sometimes fit into the other category due to the supervisor’s purpose or interpreted intention behind providing that segment of feedback, although this code remained aligned to the definition we identified using our coding scheme. For example, we noticed that the code maintaining student attention was primarily applied when the supervisor gave feedback on how to improve classroom management, as in the following: “You need to implement some form of signal to gain attention and don’t start giving any information until all students are looking at you and silent” (3347). On the other hand, we also noticed that the purpose in giving feedback on improving student attention could sometimes fit under instructional development, such as drawing attention towards the learning goals: “One suggestion for future lessons is hold to the expectation that the students will pause and listen when you are presenting information or showing the solution to a problem” (3748). Despite some outliers, the units of analysis held by code, and we maintained these outliers within their predominant category for consistency. Below, we document each code within the overarching categories of instructional development and classroom management and provide exemplar quotes from across supervisors.

Figure 1. Field supervisor feedback categories and corresponding codes.

Instructional Development

The first category of codes relates to academic pedagogical practices, which we refer to as instructional development. Supervisors provide corrective feedback pertaining to the lesson cycle, lesson connections, lesson delivery, and supporting student comprehension.

Lesson Cycle

The structural elements of a lesson, including the opening and closing of the lesson as well as pacing and planning, are defined as the lesson cycle. To begin a class, supervisors recommended that PSTs needed “to state the objective specifically” (8944) and “appropriately” (9051). During the lesson, supervisors commented on time management, offering suggestions if the pacing was too fast, “the lesson was very short; extensions needed” (3216); or on the other hand, if the pacing was too slow, “The end of class was also rushed, mainly as a result of pacing” (3548). Nearing the end of the lesson, supervisors suggested that PSTs explicitly close the lesson to align with its goals, with statements of “closure needed to be clearer; make sure it matches the given objective at the first of the lesson” (1548) and “continue working on closure so that it becomes as much of a routine in your organization as your excellent opening” (9565). Feedback about lesson planning specifically related to these structural and pacing elements of the lesson was also included within this code (e.g., “It is hard to know how fast a lesson will go; do you need more time or less? Make sure to extend lessons with open-ended questions and have an activity planned that is about the lesson just in case you finish the lesson early” [2895]).

Lesson Connections

Another area of feedback was to promote the connection of ideas in the lesson to other information that was relevant to students or their prior learning. Supervisors underscored the importance of purpose setting and relevance: “students need to understand the purpose for the lesson and how it applies to their daily lives” (9074) by “connecting to world experiences” (9749) and “using more examples coming from student’s life rather than your own life” (9374). Relatedly, PSTs were advised to incorporate students’ prior learning to enhance lessons. PSTs should “prepare students for the new learning by quickly reviewing previous concepts taught and having students demonstrate they know and understand” (9074). Explicitly recommended by one supervisor: “I suggest you emphasize what they already know and relate the learning to future learning” (9076). Throughout the lesson, PSTs should consider the content relative to their students’ past and developing understandings.

Lesson Delivery

Supervisor feedback in this area pertained to observable aspects of instruction implementation, including clarity of speech, intentionality of movement, and use of technology. Movement centered on monitoring, or how PSTs traversed the room to track and assess student progress, with stated recommendations of, “Be sure to walk the room so you know what students are doing and assessment can be seen quickly” (3611) and “monitoring is present, but look at the students’ work and comment on it as you monitor” (9809). Another strategy that supervisors suggested was clarity of speech, stating the “need to eliminate saying, ‘um’ and ‘okay’ for pauses in instruction” (8944) or noting that PSTs should “be careful of your use of ‘OK’; I recorded 10, but I know I missed some” (2551), in an effort to make the lesson less verbally distracting for students. A few supervisors also noted a need for increased use of technology: “Need to involve student use of technology” (2940).

Student Comprehension

Supervisors advised that PSTs support student comprehension and understanding through targeted classroom questioning. PSTs received recommendations to use questioning as a means of formative assessment: “How do you know that every student is ready to go to the next part of the lesson if you don’t question them?” (8946). Other supervisors reiterated that such formative assessment discussions needed to involve all students: “Lots of questioning but it would help if you spread it out more. Ask more non-volunteers” (1536); or “My only comment was there needs to be a more structured way of dealing with responses to questions (pulling sticks, turn to your partner and then give a thumbs up when you have an answer) rather than raised hands” (1546). Through intentional questioning, PSTs could elicit responses from students to assess the extent of their understanding and consequently engage in higher levels of questioning: “We agreed that additional development of questions requiring students to utilize the concepts and vocabulary contained in their task would raise thinking levels and interaction with the content being studied” (2924).

Classroom Management

The other category of supervisor feedback was classroom management, which entailed ways of preventing or dealing with misbehavior or suggestions to encourage model behavior in the classroom. Five codes addressed distinct aspects of managing student behavior: praise, managing transitions, maintaining student attention, non-verbal techniques, and verbal corrections.

Praise

Supervisors encouraged PSTs “to praise more consistently” (8946), “praise student for appropriate behavior and effort” (5821), and “use positive reinforcement to encourage appropriate behavior” (1566). Integrating praise allowed PSTs to highlight students who excelled in the classroom or complied with instruction. As stated by one supervisor, “Suggestion: Notice out loud what students are doing when they are doing what you asked instead of noticing students that do not comply” (8957). In such a way, praise highlighted model behavior without disparaging students who had yet to exhibit it.

Managing Transitions

Another technique that supervisors recommended was the management of transitions, a key time when students could get off task during changes in the lesson or activities. Supervisors advised that PSTs explicitly communicate the expectations of how students should move around in the classroom, such as “prior to leaving the carpet and after telling the students they will work in pairs, have a brief discussion how we work in pairs” (9076). As explained by another supervisor, “So, the same way you transition students from PE, set students down in chairs afterwards, lower your voice, set expectations, then transition into lesson” (9270). The importance of managing transitions is to “take less time, with the students learning a more proficient way to obtain their materials and getting to the carpet for instruction” (2072), reducing time wasted and having students “get back on track quicker” (3290) after a change in activity.

Maintaining Student Attention

Supervisors also advised PSTs to reduce misbehavior by maintaining student attention. Supervisors provided similar statements of, “Suggestion: Practice procedure to get and maintain student attention” (8956); and “Remember if they stop being attentive or start talking, just stop and use wait time for compliance” (9076). Such statements reinforced that without student attention, PSTs’ efforts for instruction would be futile: “Be sure ALL students are paying attention before you begin a lesson or give instructions” (9081).

Non-verbal Techniques

In dealing with misbehavior, supervisors noted several non-verbal techniques. They suggested that PSTs practice their visual awareness skills, or using their eyes to identify off-task behavior, with statements such as, “She still needs to work on her classroom awareness which will develop with time” (9426); and “you need to keep your eyes up and moving in order to see any unacceptable behavior such as two or three students sprinted from one side of the room to the other” (9896). Another technique revolved around teacher presence, including exhibiting authority through a firm, consistent, and confident demeanor: “Continue to focus on presenting a confident, assertive demeanor in the classroom” (6154). As exemplified by one supervisor, “Continue to work on presenting a confident, assertive demeanor so that students will see you as ‘the teacher.’ Establishing a strong ‘teacher presence’ is your priority. It leads to more effective classroom management” (9105). Presence also included circulation, with suggestions to “make sure to use proximity when off-task talking occurs” (3347) with the idea that physical proximity would reduce misbehavior.

Verbal Corrections

Supervisors gave feedback on an array of verbal corrective classroom management techniques. One included emphasizing a teacher voice, or having a tone that stressed more confidence. As best described by one supervisor, “Assert yourself with confidence, you clearly know the content and let the students feel your excitement that you want to share the content with them. Work on a strong, (deeper) confident voice, and inflection and emphasis to your delivery” (9480). Others discussed it broadly, as supervisors recommended that PSTs should be “working on [their] teacher voice” (9088). In situations when students were off-task or displayed inappropriate behavior, supervisors reminded PSTs to redirect students: “make sure you correct students who are talking during the prior learning exercise” (9071), particularly “correcting students individually, by name, [which] is more effective than punishing the whole class” (8945). To prevent misbehavior, supervisors commented on the importance of setting clear and explicit expectations: “identify expected behavior and voice level when students work together at their tables” (3240). Overall, supervisors favored techniques that provided precise corrections for students to stay on task.

RQ2: How Do Supervisor Feedback Codes Vary by Context?

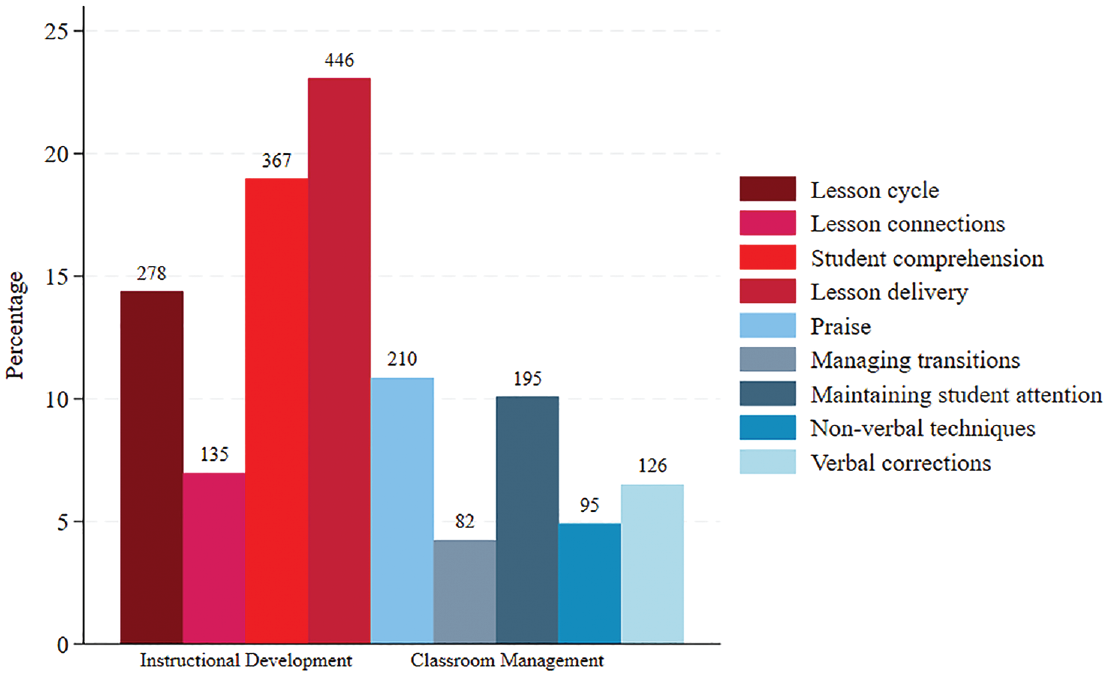

We leverage our application of the scheme to the entire dataset by next identifying the variation in supervisor feedback. Figure 2 illustrates the presence of codes throughout the data, indicating instructional features that are most prevalent across PSTs. Feedback around student comprehension and lesson delivery are the most common codes (19% and 20%, respectively), with praise and maintaining student attention as the most frequent management codes.

Figure 2. Supervisor feedback code frequency (N = 3,349).

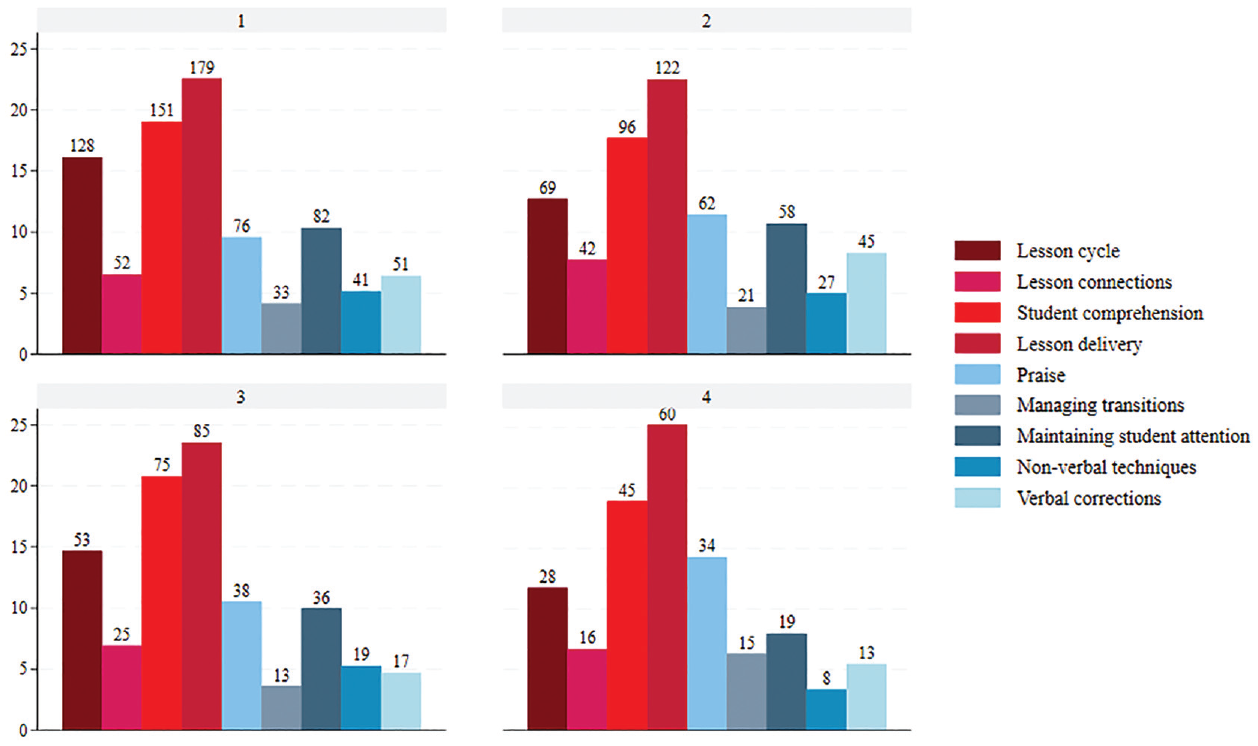

Then, we examine how feedback codes vary by placement context. Most relevant, we investigate the presence of codes across observation number to see whether feedback changes throughout clinical teaching. Chi-square tests for each code category, shown in Appendix 3, indicate statistically significant associations with overall ratings and observation number. Appendix 4 illustrates the presence of feedback codes across teaching placement characteristics for both the whole sample and the subset of PSTs who received all four observations. Figure 3 indicates that lesson delivery is the most present code throughout the first two PST observations at 22%, with the next most present code, student comprehension (19%), 3 percentage points lower. Similar patterns are noted in the final two observations: lesson delivery makes up 25% of the occurring feedback, and student comprehension remains the same at 19%. On the classroom management side, maintaining student attention drops from 11% of the occurring feedback to 8% by the final observation, and verbal corrections remain proportionally the same throughout.

Figure 3. Supervisor feedback code frequency by observation number (N = 3,349).
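The percentages above are described as shares of the occurring feedback. A minimal sketch of that calculation follows, assuming each code's share is its count divided by the count of all codes assigned within a set of observation numbers. The function name, record layout, and example records are hypothetical and do not come from the study.

```python
from collections import Counter


def code_shares(records, obs_numbers):
    """Share of each code among all codes assigned in the given observation numbers."""
    counts = Counter(
        code
        for rec in records
        if rec["obs_number"] in obs_numbers
        for code in rec["codes"]
    )
    total = sum(counts.values())
    if total == 0:
        return {}
    return {code: n / total for code, n in counts.items()}


# Made-up records for illustration only.
records = [
    {"obs_number": 1, "codes": ["lesson_delivery", "maintaining_student_attention"]},
    {"obs_number": 2, "codes": ["lesson_delivery", "student_comprehension"]},
    {"obs_number": 3, "codes": ["lesson_delivery"]},
    {"obs_number": 4, "codes": ["verbal_corrections", "lesson_delivery",
                                "student_comprehension", "lesson_cycle"]},
]

early = code_shares(records, {1, 2})   # first two observations
late = code_shares(records, {3, 4})    # final two observations
```

Comparing `early` and `late` for a given code mirrors the early-versus-late comparison reported in the text.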

RQ3: What Is the Relationship Between Clinical Teaching Evaluation Rating and Supervisor Feedback Codes?

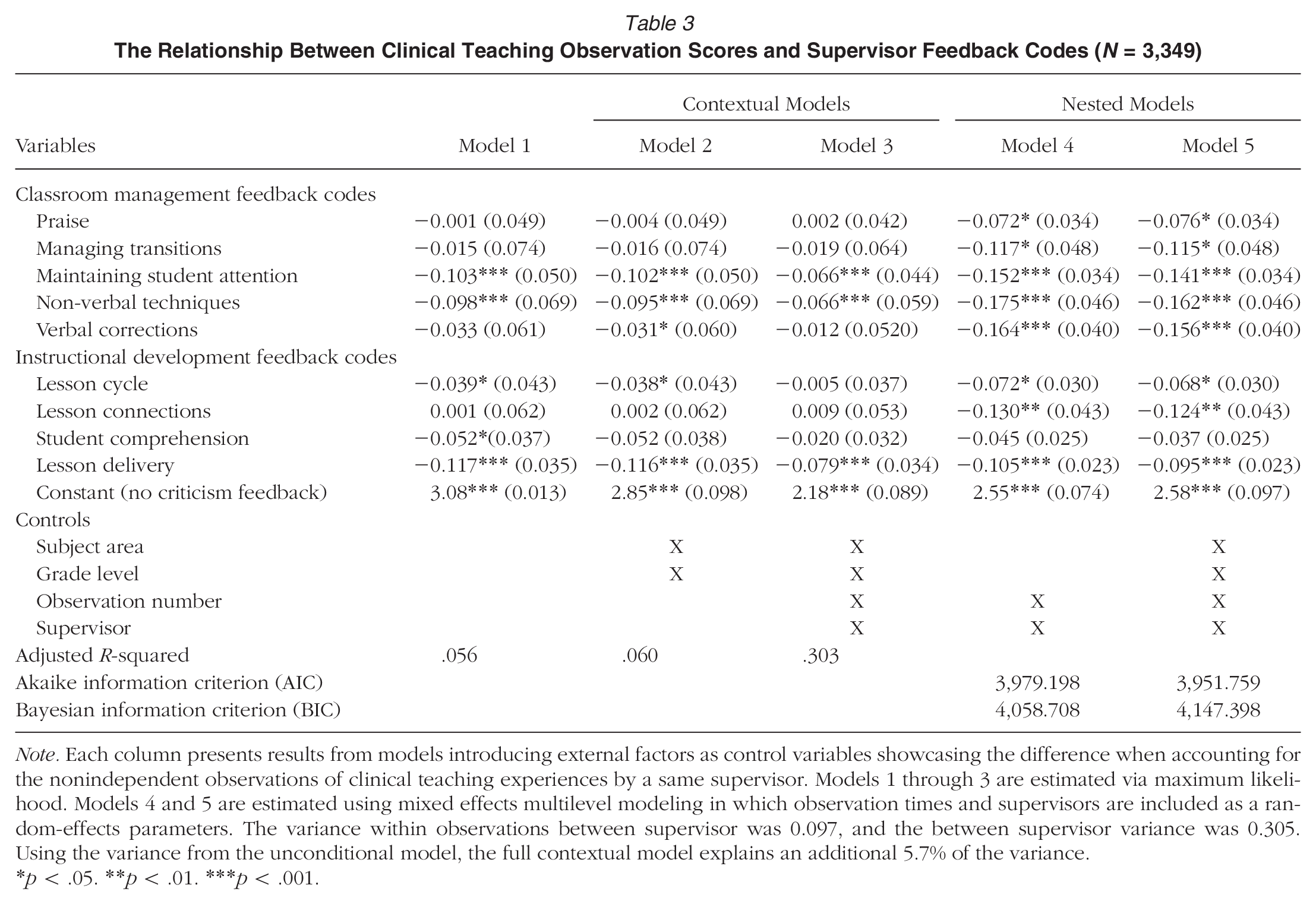

Given these statistically significant differences in code presence across placement characteristics, we then examine whether feedback could predict observation evaluation scores. We model overall rating by codes in Table 3, with Model 1 indicating a base linear regression and Models 2 and 3 incorporating placement and observation time variables, respectively. Model 3 showcases that the change in the relationship between feedback code and the dependent variable is minor yet significant when the model controls for supervisor and observation number in addition to subject area and grade level.

Table 3. The Relationship Between Clinical Teaching Observation Scores and Supervisor Feedback Codes (N = 3,349)

Note. Each column presents results from models introducing external factors as control variables, showcasing the difference when accounting for the nonindependent observations of clinical teaching experiences by the same supervisor. Models 1 through 3 are estimated via maximum likelihood. Models 4 and 5 are estimated using mixed effects multilevel modeling in which observation times and supervisors are included as random-effects parameters. The variance within observations between supervisors was 0.097, and the between-supervisor variance was 0.305. Using the variance from the unconditional model, the full contextual model explains an additional 5.7% of the variance.

*p < .05. **p < .01. ***p < .001.

Model 3 displays the changes with these controls. Across all models, the statistically significant variables’ coefficients decrease, and we see this negative relationship between ratings and supervisor feedback codes get weaker. This linear regression model results in an adjusted R² = .303, meaning over 30% of the variance is explained when controlling for the supervisor, observation time, and placement characteristics. Comparatively, we examined whether the supervisor and observation number would have an effect on clinical teachers’ overall rating of their observation as level 2 predictors in Model 4.

Placement contextual factors about the clinical teaching experience, including subject area and grade level, are accounted for as level 1 predictors (at the PST level) in Model 5. Changes in the standardized coefficients predicting change in overall rating are minimal between the models. We note little to no differences between the nested models in that all feedback code coefficients remain negative and statistically significant (except for student comprehension). Non-verbal techniques and verbal corrections continue to return the largest coefficients of change on overall rating; when these codes are present in a clinical teacher’s feedback, their overall rating decreases on average by more than 0.15 points (γ4 = −0.162, SE = 0.046, p < .001; γ5 = −0.156, SE = 0.040, p < .001). The constant average overall rating between Models 4 and 5 stays practically the same at 2.58 with a notably similar standard deviation of 0.097. When supervisors provide feedback on PSTs’ skills in maintaining student attention, using non-verbal techniques, or delivering lessons, they are likely to assign fractionally lower overall ratings.

RQ4: How Does Feedback Type Affect Evaluation Rating Across Observation Number?

The hierarchical linear model incorporates the nested nature of our data—PST repeated observations grouped by their supervisors. Conceptually, we consider the supervisor as a higher-level predictor of a clinical teacher’s overall rating seeing as the single supervisor conducts repeated observations. Therefore, we organize our multilevel models in the following ways. First, the unconditional model includes PST observation evaluation ratings as the dependent variable with no Level 1 predictors in the model and supervisor and observation number set as Level 2 parameters. The constant starts at 2.55 (SE = 0.074) in Model 4 and is statistically significant (p < .001). The random-effects parameters for the unconditional model included supervisor and the observation time with a within observation variance of 0.097 and a variance between supervisors of 0.305. We add indicators in the final two columns of Table 3.

Model 4 in Table 3 incorporates the feedback codes that PSTs receive from their supervisor as part of their observation evaluations into a hierarchical linear model, which accounts for PSTs nested by supervisor within observation numbers. In Model 1, which includes neither clustering nor controls for contextual factors and development, the average overall rating starts at 3.08 (SD = 0.013) when all predictors are held constant at zero, indicating no marked codes. By Model 4, which accounts for the nonindependence of observations, still without control characteristics, we see a decrease of more than half a unit (2.55; SD = 0.074). Furthermore, all feedback code effects on PST overall rating are significantly negative in both Models 1 and 4. There is minimal change in effect observed between the nested models (Models 4 and 5) with or without controlling for grade level and subject area.

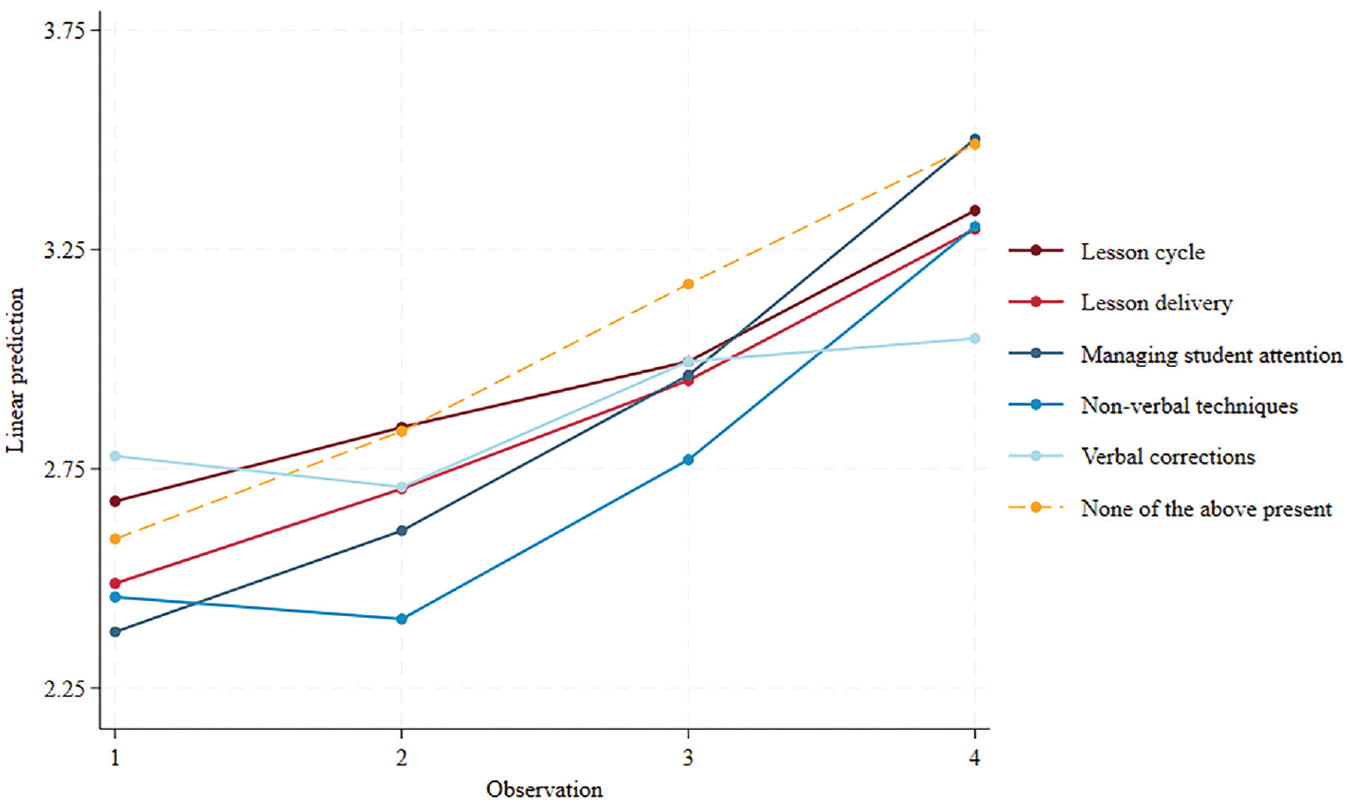

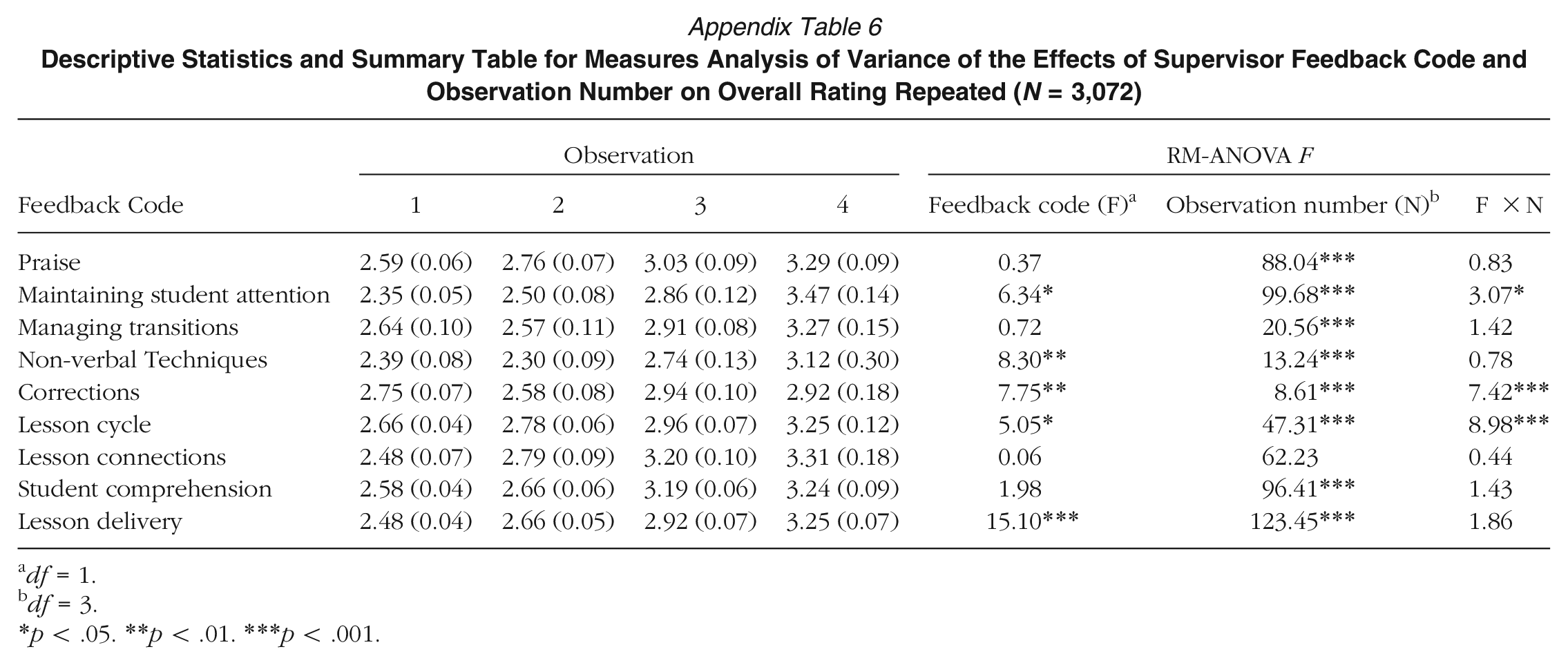

Results from the RM-ANOVA were significant at shared confidence levels for five of the nine feedback codes, all shown in Appendix 5. Results for main effects and interaction effects were calculated using the Huynh-Feldt correction shown in Appendix 6. In Appendix 7, margins plots for each feedback category of codes compare linear predictions of observation evaluation ratings for PSTs who received that feedback code versus those who did not, whereas Appendix Figure 7c presents margins plots for the remaining non–statistically significant feedback codes compared to a single graph of linear predictions for those who did not receive feedback. Figure 4 shows the change in linearly predicted overall rating for receiving each individual feedback code across observations. It compares the changes in predicted average overall ratings over time for PSTs who received none of the significant critical feedback (yellow dashed line) with those who were attributed feedback in any of the five codes that returned significant interaction effects (solid lines).

Figure 4. Margins plots of occurring feedback codes significant by observation number.

The main effect for Maintaining student attention is significant (F = 6.34, p = .01), as is its interaction effect with observation number (F = 3.07, p = .036). This code starts off the most negative (harshest feedback leading to a less positive evaluation) but then makes the largest gains, such that having feedback in this area by the fourth observation is essentially similar to not receiving the feedback. Similarly, the Verbal corrections main effect and interaction effect with observation number are significant (F = 7.75, p = .006; F = 7.42, p < .001). Interestingly, this code starts at the first observation as positive (above the dotted line) but then ends at the final observation as the most detrimental to PST evaluation scores. Further, Non-verbal techniques had a significant main effect (F = 8.30, p = .004) but a nonsignificant interaction effect with observation number (F = 0.78, p = .486). That is, it consistently remained an area of feedback regardless of observation number. The instructional feedback codes Lesson cycle and Lesson delivery both return significant main effects (F = 5.05, p = .025; F = 15.1, p < .001), and Lesson cycle also returns a significant interaction effect with observation number (F = 8.98, p < .001). These trend initially towards the dotted line, but the line for Lesson cycle demonstrates that rating does change because of the observation number when receiving that feedback; between the first and last observation, lesson cycle feedback significantly tempers evaluation rating.

Discussion

This sequential mixed methods study investigated clinical teaching field supervisor feedback. We qualitatively coded 3,349 observations, identifying areas of critique that supervisors provided to their PSTs. Then, we examined patterns of feedback codes by context to identify relationships between codes and PST observation evaluation score. Finally, we examined how feedback changes throughout clinical teaching. Through these analyses, we offer several important findings.

Supervisors focused heavily on instructional and behavioral components of pedagogy. This provides a glimpse into the range of content that supervisors focus on and prioritizes PST developmental needs, reiterating evidence about the early importance of lesson planning (Jacobs et al., 2017) and classroom management (Kwok, 2020; Prilop et al., 2021). However, our results contribute wider systematic substantiation of the specific areas on which supervisors may center their observational feedback, pointing to areas that, according to supervisors, are foundational skills for successful teaching. Specifically, three feedback codes (maintaining student attention, using non-verbal techniques, and lesson delivery) were consistently significant across regression models, suggesting that these skills offer important predictive effects on PST evaluation ratings over time, relatively comparable to what Malmberg et al. (2010) found for novice teachers. This is vital in pinpointing areas that are most crucial, in the eyes of supervisors, in evaluating PST success, suggesting topics of foundational pedagogy for PST practice and areas of improvement; otherwise, observational evaluations could hinder that progress moving forward (Bartanen & Kwok, 2021).

Further, our results showed that feedback differs by context (specifically observation number, evaluation rating, subject area, and grade level), which points to systematic changes in supervisor feedback and suggests a trajectory of PST development. Namely, supervisors tend to focus on maintaining student attention and non-verbal techniques during early clinical teaching versus later improvement on lesson delivery, lesson cycle, and verbal corrections. These results align with Bartanen et al. (2023), where classroom management and presenting content were fundamental to novice teacher evaluations and even eventual retention. Not only do supervisors prioritize classroom management as foundational, which aligns with previous research (Jacobs et al., 2017), but we also found that supervisors address nuance within classroom management through five respective codes. Altogether, we contribute foundational evidence of how feedback, through supervisors’ evaluations, matters for PST development and, specifically, when and what that feedback could look like.

Limitations

This study should be considered within the context of its design. First, supervisor feedback was within one teacher education program. Future research could examine whether findings are replicable across other contexts. We believe the breadth and depth of data add to the strength of the study, but we cannot rule out programmatic idiosyncrasies that guide supervisor feedback. One distinction was that the observational tool allowed supervisors to write their evaluation of the PSTs’ instruction without a set form or structure (i.e., the evaluation prompt stated, “Overall Comments and Recommendations”). Other institutions might ask their supervisors to give feedback guided by set standards. Thus, the findings of our study illuminate the pedagogical areas that supervisors tend to give feedback on when left to themselves. We similarly cannot rule out the Likert scale items on the evaluation rubric influencing supervisors’ feedback. While there were similarities between those items and our codes, the items were not fully representative and could account for the absence of feedback around certain pedagogical areas (e.g., culture, identity, and to some extent relationships). Future research could examine how different modalities in observation evaluation could shape the content of supervisor feedback.

Second, we do not know the root of the supervisor feedback. That is, we cannot connect what the supervisors gave feedback on with what the PSTs actually did in their classroom teaching. This could be examined in future studies through observations or video to identify what PSTs are enacting compared to what supervisors write about as feedback. Similarly, we do not account for whether PSTs utilized supervisor feedback in their subsequent teaching. Future studies could observe PST actions after supervisor observations or examine PST reflective responses to supervisor feedback.

Third, we do not know if there were any additional interactions between supervisors and PSTs. We examined only one piece of information from supervisors: their written feedback. We are unsure how other informal or undocumented interactional moments of feedback (e.g., emails, brief conversations) could have mediated the trajectory of PST development or supervisory feedback. Interviews and observations of field supervisor visits could illuminate necessary programmatic context to study this aspect of feedback further.

Implications

Despite these empirical restrictions, our findings offer implications for practice, policy, and research. Most relevant, teacher preparation needs to acknowledge the importance of field supervisors and how their feedback contributes to the foundation of PST pedagogical training. Given the consequential nature of supervisor feedback, a greater investment is needed for supervisor training to guide how written feedback can pinpoint particular skills for improvement and how feedback on certain skills may yield more meaningful improvement than others. These minute adjustments with the content and timing of supervisory written feedback could systematically improve PST development.

Identifying pedagogical areas of supervisor feedback can inform both support for supervisors and TEPs. Providing supervisors with more training and support is an identified need (Cuenca et al., 2011) to which the findings of this study offer a possible implication. By identifying the pedagogical areas that supervisors naturally tend to, programs can bolster or identify gaps between these areas of feedback and the pedagogical standards that the TEP expects of their PSTs to inform areas of training that supervisors may need. Such training for supervisors could contribute to a greater alignment between what PSTs are practicing in the field and what they are learning in their university courses. For teacher preparation, programs might consider structuring PST development around these areas of feedback, embedding supports in the field or extending learning opportunities around these areas of focus. This could include offering workshops, professional development, or informing mentor teachers of evolving skill development, such as maintaining student attention at the beginning of clinical teaching and behavioral corrections towards the end of the semester. Similarly, PSTs may benefit from training in certain skills (e.g., non-verbal techniques) early in the program so that they can successfully implement them at the start of clinical teaching. Programs could delay PST learning of other skills (e.g., lesson delivery, lesson cycle) until after PSTs have shown mastery of the previous techniques. This would provide a broad framework for a PST learning progression that affords gradual skill development.

For policy, findings suggest minimum standards for field supervisor responsibilities and a potential framework for clinical teaching experiences. Supervisor written feedback may be necessary across TEPs to enhance clinical teacher development. Policymakers may also want to consider certain pedagogical skills as foundational to PST development, which could influence how TEPs structure their training on these foundational pedagogical skills, specifically as they are enacted within clinical training.

For research, this study contributes to understanding how clinical teaching remains the most influential structure in teacher preparation; however, there remains much left to examine. Although there is a significant amount of evidence for cooperating teachers (Ronfeldt, 2021), more research is needed about other structures within this experience, particularly concerning the role of supervisors. Future studies could examine other aspects of supervisor feedback as noted previously, such as analyzing the supervisor feedback in its entirety and studying the relationship between supervisor feedback and PST response, specifically using large-scale samples. Furthermore, understanding interactions and communications between field supervisors and PSTs (or with cooperating teachers) can maximize the value of this structure. Alongside our findings, other large-scale and systematic data in this area would be instrumental to our understanding of PST development (Goldhaber, 2019; Sleeter, 2014) for the aim of preparing a more effective teaching workforce.


Appendix

Descriptive Statistics and Summary Table for Repeated Measures Analysis of Variance of the Effects of Supervisor Feedback Code and Observation Number on Overall Rating (N = 3,072)

| Feedback code | Observation 1 | Observation 2 | Observation 3 | Observation 4 | RM-ANOVA F: Feedback code (F) ᵃ | RM-ANOVA F: Observation number (N) ᵇ | RM-ANOVA F: F × N |
|---|---|---|---|---|---|---|---|
| Praise | 2.59 (0.06) | 2.76 (0.07) | 3.03 (0.09) | 3.29 (0.09) | 0.37 | 88.04*** | 0.83 |
| Maintaining student attention | 2.35 (0.05) | 2.50 (0.08) | 2.86 (0.12) | 3.47 (0.14) | 6.34* | 99.68*** | 3.07* |
| Managing transitions | 2.64 (0.10) | 2.57 (0.11) | 2.91 (0.08) | 3.27 (0.15) | 0.72 | 20.56*** | 1.42 |
| Non-verbal techniques | 2.39 (0.08) | 2.30 (0.09) | 2.74 (0.13) | 3.12 (0.30) | 8.30** | 13.24*** | 0.78 |
| Corrections | 2.75 (0.07) | 2.58 (0.08) | 2.94 (0.10) | 2.92 (0.18) | 7.75** | 8.61*** | 7.42*** |
| Lesson cycle | 2.66 (0.04) | 2.78 (0.06) | 2.96 (0.07) | 3.25 (0.12) | 5.05* | 47.31*** | 8.98*** |
| Lesson connections | 2.48 (0.07) | 2.79 (0.09) | 3.20 (0.10) | 3.31 (0.18) | 0.06 | 62.23 | 0.44 |
| Student comprehension | 2.58 (0.04) | 2.66 (0.06) | 3.19 (0.06) | 3.24 (0.09) | 1.98 | 96.41*** | 1.43 |
| Lesson delivery | 2.48 (0.04) | 2.66 (0.05) | 2.92 (0.07) | 3.25 (0.07) | 15.10*** | 123.45*** | 1.86 |

ᵃ df = 1. ᵇ df = 3.

*p < .05. **p < .01. ***p < .001.
