Abstract

Color is the foundation of a well-coordinated outfit. The way people coordinate and match different hues within a look can evoke different visual impressions. However, clothing color collocation is an extremely challenging task because public tastes change quickly and people perceive color combinations differently. We propose a clothing color collocation model that consists of two deep neural networks, one based on color histograms calculated in a quantized color space and one based on design features extracted from clothing images. Given a clothing collocation, the model produces a score that indicates how well the clothes match. Experiments on the Polyvore409k dataset validate the proposed model for clothing color collocation.

Introduction

Clothing collocation is a ubiquitous problem in daily life. People's clothing collocation choices can reflect their fashion tastes, social status, emotions, characters, and so on. Color is a key factor in collocation, and the way people coordinate and match different hues is of great importance. Collocations of clothes with the same designs but different colors can often evoke different feelings. For example, Fig. 1 shows upper-body clothes that have the same design but different colors, each collocated with jeans. Because the clothes in Figs. 1a and b have similar colors, these collocations have little visual impact but look layered and delicate. The clothes in Figs. 1c and d have contrasting colors, which gives a lively, jumping impression. Clothing color clearly plays a significant role in clothing collocation. However, it is widely accepted that clothing color collocation is extremely difficult, and that expertise in elegant clothing color collocation is acquired by only a limited group of fashion experts.

Fig. 1. Collocations of clothing with the same designs and different colors.

In this study, we teach machines to perform clothing color collocation so that people can obtain guidance whenever they have questions about clothing color collocation. Our work focuses on color collocation for upper- and lower-body clothes. Because clothing design (e.g., texture, fabric, and shape) is also a foundation of clothing collocation, we incorporate clothing design into the model to supplement the color information.

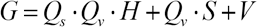

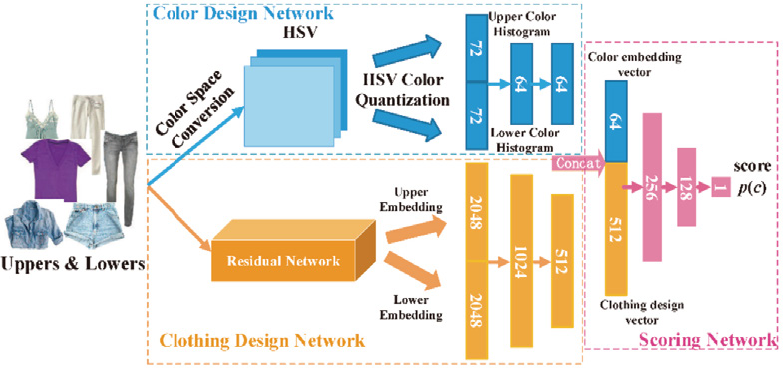

To this end, we propose a deep neural network to score clothing color collocation for upper- and lower-body clothing. Given a pair of upper- and lower-body clothing images, the model produces a score that indicates the degree that the pair of clothing matches. Fig. 2 shows the workflow of our proposed clothing color collocation model. The model takes a pair of clothing images as input and outputs the collocation score for the pair of clothes. We use two deep neural networks to automatically learn the clothing color representation based on a quantized color space and the clothing design representation based on clothing images, respectively. We then concatenate the color representation and the design representation and feed it into another deep neural network to predict the collocation score. Due to the subjectivity of the collocation scores, we train the model in a ranking formulation 1 in which the model learns by comparing pairs of clothing collocations. The contributions of the work are summarized as follows.

Fig. 2. Workflow of the collocation scoring model.

We propose a deep neural network that produces scores for clothing color collocations of upper- and lower-body clothing. Clothing design is also incorporated into the score prediction to supplement the color information.

We design a ranking formulation for training the proposed model, in which the model learns by comparing pairs of clothing collocations.

The rest of this paper is organized as follows. The related works on clothing collocation are introduced, the clothing color representation method for clothing images is discussed, the collocation scoring network is presented, and the ranking formulation for model training is introduced. Next, the experimental results demonstrate the effectiveness of the proposed clothing color collocation model, followed by the conclusion.

Related Work

Fashion has attracted considerable research interest in computer vision and multimedia. Many research efforts have been made in this domain, focusing on clothing recognition,2–5 clothing retrieval,6–9 clothing parsing,10–12 and clothing collocation and recommendation.13–16 Here we focus on clothing collocation, which measures whether a group of clothing items collocate with one another. Serro et al. learned the collocation of an outfit by predicting its fashionability from a photograph using a conditional random field model. 17 Instead of learning the collocation of the outfit in a photograph, McAuley et al. measured the compatibility of an outfit by learning a distance metric between clothes with convolutional neural network (CNN) features, 18 and Veit et al. proposed using a Siamese CNN to improve metric learning. 19 These works focus on learning collocation from images of clothes, but neglect the relationships among different fashion items. To address this problem, Han trained a bidirectional Long Short-Term Memory (LSTM) network to jointly learn a visual-semantic embedding and the relationships among fashion items for collocation learning. 20 Vasileva et al. presented an approach that learns an image embedding that respects item type and jointly learns notions of item collocation in an end-to-end model. 21 Tangseng built a deep neural network-based system that takes a variable number of fashion items and predicts scores for the collocations of outfits. 22 Yang addressed the interpretable fashion matching task using rich attributes associated with fashion items. 23 Chen proposed a deep mixed-category metric learning framework that addresses fashion collocation by combining deep CNNs and deep metric learning. 24 Beyond visual design features, Hidayati took users’ basic body shapes into consideration in clothing collocation. 25

Clothing Color Representation

A color model is an abstract mathematical model that describes how colors can be represented as tuples of numbers. Several color models can be used to represent images (e.g., RGB, HSV, and CMYK). Compared with other color models, the HSV model aligns more closely with the way human vision perceives colors. 26 Therefore, we extract the color features in the HSV space and represent them as color histograms.

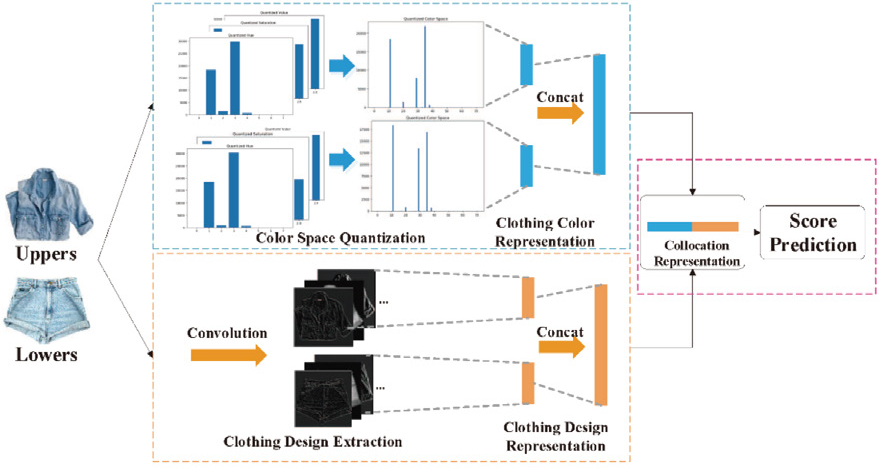

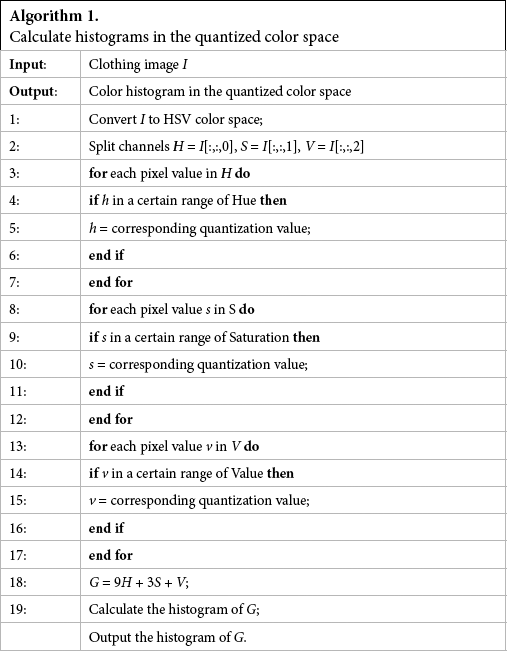

However, calculating histograms in the original HSV color space may result in high-dimensional features and a large number of distinct colors, which leads to heavy computation and can cause color confusion for machines. To tackle these problems, we first quantize the HSV values into several bins and then calculate the histograms in the quantized color space. In the HSV model, hue is the color portion of the model, expressed as an angle from 0 to 360 degrees. Saturation describes the amount of gray in a particular color, from 0% to 100%. Value works in conjunction with saturation and describes the brightness or intensity of the color, from 0% to 100%. We quantize the HSV color space by thresholding, which is a widely used color quantization method in image retrieval.27–29

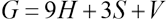

The pixel values of the H, S, and V components are quantized according to the threshold ranges shown in Table I. Hue, saturation, and value are quantized into 8, 3, and 3 bins, respectively. Then, based on the quantized HSV color space, we combine the three components according to Eq. 1. 28

Table I. Thresholds for Quantization of Hue, Saturation, and Value

H, S, and V are the three quantized components, and Qs and Qv denote the numbers of quantization levels of S and V. In accordance with the quantization levels, Qs and Qv are both set to 3. Thus, Eq. 1 can be written as Eq. 2.
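Eqs. 1 and 2 are not reproduced in this text. A form consistent with the surrounding description (8 hue bins, both quantization levels equal to 3, and a 72-dimensional result) is sketched below. The symbol L for the combined bin index is an assumption, and H, S, and V stand for the quantized values in {0, ..., 7}, {0, 1, 2}, and {0, 1, 2}.

```latex
\begin{align}
L &= H \cdot Q_s \cdot Q_v + S \cdot Q_v + V \tag{1} \\
L &= 9H + 3S + V \tag{2}
\end{align}
```

Under this form, L ranges from 0 (H = S = V = 0) to 9·7 + 3·2 + 2 = 71, which gives 72 distinct bins.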

According to Eq. 2, we obtain a 72-dimensional color feature vector. The detailed procedure is shown in Algorithm 1. The quantization method focuses more on hue and saturation, and reduces the effect of brightness or intensity in the color.

Algorithm 1. Calculate histograms in the quantized color space
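The body of Algorithm 1 and the thresholds of Table I are not reproduced in this text. The following is a minimal Python sketch of one way to compute such a histogram, not the authors' implementation. It assumes the combined index 9H + 3S + V, uses evenly spaced placeholder bin edges in place of the Table I thresholds, and normalizes by pixel count; the function name `quantized_hsv_histogram` and all three choices are assumptions.

```python
from bisect import bisect_right
import colorsys

# Placeholder bin edges (assumptions, NOT the thresholds of Table I).
H_EDGES = [45.0 * k for k in range(1, 8)]  # 7 inner edges -> 8 hue bins over 0..360
S_EDGES = [1.0 / 3.0, 2.0 / 3.0]           # 2 inner edges -> 3 saturation bins
V_EDGES = [1.0 / 3.0, 2.0 / 3.0]           # 2 inner edges -> 3 value bins


def quantized_hsv_histogram(rgb_pixels):
    """Return a 72-bin histogram for an iterable of (r, g, b) pixels in 0..255."""
    hist = [0.0] * 72
    count = 0
    for r, g, b in rgb_pixels:
        # colorsys expects floats in [0, 1] and returns h, s, v in [0, 1].
        h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
        hq = bisect_right(H_EDGES, h * 360.0)  # quantized hue, 0..7
        sq = bisect_right(S_EDGES, s)          # quantized saturation, 0..2
        vq = bisect_right(V_EDGES, v)          # quantized value, 0..2
        hist[9 * hq + 3 * sq + vq] += 1.0      # combined bin index, 0..71
        count += 1
    # Normalizing by the pixel count is an assumption; the text does not
    # state whether raw counts or frequencies are used.
    return [x / count for x in hist] if count else hist
```

Calling the function once on the upper-body image and once on the lower-body image, then concatenating the two results, would give a 144-dimensional vector for a pair of clothes.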

Collocation Scoring Network

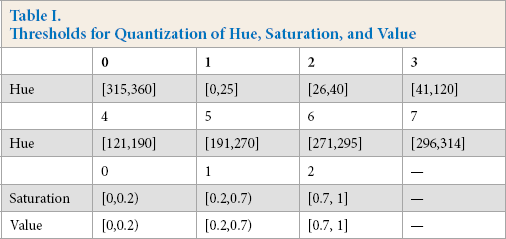

In this section, we present our proposed collocation scoring network. As illustrated in Fig. 3, the collocation scoring network consists of two subnetworks (i.e., the color design and the clothing design networks), which are based on the clothing color representation and the clothing design representation, respectively. The collocation scoring network takes a pair of upper- and lower-body clothing images as input and outputs the collocation score. The color histograms calculated from the upper- and lower-body images in a quantized HSV color space are fed into the color design network to obtain a color embedding vector, while the pair of clothing images are fed into the clothing design network to obtain a design embedding vector. Finally, the color embedding vector and the design embedding vector are concatenated, and sent through a multi-layer network to predict a collocation score.

Fig. 3. Architecture of our proposed collocation scoring network.

Network Architecture

Color Design Network

The color design network extracts the color features of a pair of input clothing images. Given a pair of upper- and lower-body clothing images, we first convert the images to the HSV color space and calculate the histograms described previously. This yields two 72-dimensional vectors, which represent the color information of the upper- and lower-body clothes, respectively. The two vectors are then concatenated into a 144-dimensional vector, which is sent through a deep neural network with two hidden fully-connected (FC) layers that use the Rectified Linear Unit (ReLU) as the activation function. Each FC layer has 64 units. Finally, we obtain a 64-dimensional color embedding vector.

Clothing Design Network

To incorporate clothing design information into the prediction of the clothing color collocation score, we explicitly integrate a clothing design network into our collocation scoring network to supplement the color design network. In the clothing design network, we take ResNet-101 30 as the backbone, which is pre-trained on ImageNet. 31 Specifically, we extract the 2048-dimensional embedding from the penultimate layer of ResNet-101 as the representation of each upper- and lower-body clothing image. This yields two 2048-dimensional vectors, which represent the clothing design information of the upper- and lower-body clothes, respectively. The two vectors are then concatenated into a 4096-dimensional vector, which is sent through a deep neural network with two hidden fully-connected layers that use ReLU as the activation function. The two fully-connected layers have 1024 and 512 units, respectively. Finally, we obtain a 512-dimensional design embedding vector. Dropout is used throughout the architecture to prevent overfitting.

Scoring Network

The color embedding vector and design embedding vector are concatenated and fed into a 3-layer Multi-Layer Perceptron (MLP) network to predict the collocation score. The numbers of units for the first two hidden layers are 256 and 128, respectively. The final output layer has one unit for collocation score.
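The layer sizes described in the three subsections above can be put together in a minimal PyTorch sketch. This is an illustration under stated assumptions, not the authors' code: the class name `CollocationScoringNet` is hypothetical, the ResNet-101 features are assumed to be precomputed 2048-dimensional vectors per image, and the exact placement of ReLU and dropout layers is a guess because the text does not specify it.

```python
import torch
from torch import nn


class CollocationScoringNet(nn.Module):
    """Sketch of the scoring network; layer placement details are assumptions."""

    def __init__(self, dropout: float = 0.5):
        super().__init__()
        # Color design network: 144-d concatenated histograms -> 64-d embedding.
        self.color_net = nn.Sequential(
            nn.Linear(144, 64), nn.ReLU(),
            nn.Linear(64, 64), nn.ReLU(),
        )
        # Clothing design network head: two concatenated 2048-d backbone
        # features (assumed precomputed) -> 512-d embedding.
        self.design_net = nn.Sequential(
            nn.Linear(4096, 1024), nn.ReLU(), nn.Dropout(dropout),
            nn.Linear(1024, 512), nn.ReLU(), nn.Dropout(dropout),
        )
        # Scoring MLP: 576-d concatenation -> 256 -> 128 -> 1 score.
        self.scorer = nn.Sequential(
            nn.Linear(64 + 512, 256), nn.ReLU(),
            nn.Linear(256, 128), nn.ReLU(),
            nn.Linear(128, 1),
        )

    def forward(self, color_hist: torch.Tensor, design_feat: torch.Tensor) -> torch.Tensor:
        color_emb = self.color_net(color_hist)      # shape (batch, 64)
        design_emb = self.design_net(design_feat)   # shape (batch, 512)
        joint = torch.cat([color_emb, design_emb], dim=1)
        return self.scorer(joint).squeeze(1)        # shape (batch,), raw score p(c)
```

For example, `CollocationScoringNet()(torch.rand(4, 144), torch.rand(4, 4096))` returns four raw collocation scores, one per pair.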

Model Training

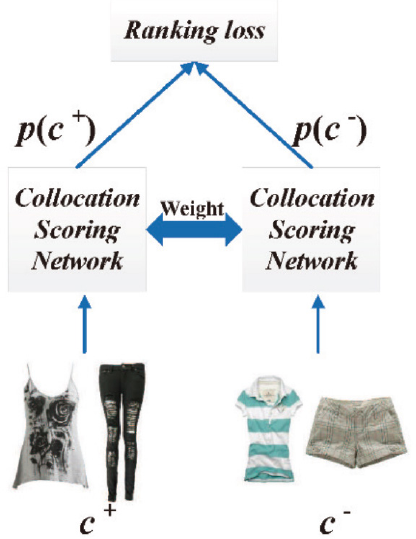

Since the collocation score of a pair of upper- and lower-body clothing images is unknown a priori and very subjective for humans, we train our collocation scoring network in a ranking formulation in which the network learns by comparing pairs of upper- and lower-body clothing images. This allows us to train the network without the need for score-level annotation. As illustrated in Fig. 4, we embed our collocation scoring network into a ranking training framework, which consists of two collocation scoring networks sharing the same weights.

Fig. 4. Ranking framework for training the collocation scoring network.

To train our model in the ranking formulation, we define the hinge loss for a pair of clothing collocations (c+,c–) in Eq. 3.

c+ denotes a pair of upper- and lower-body clothes that match well, and c– denotes an unsuitable collocation of upper- and lower-body clothes. p(c+) and p(c–) are the collocation scores output by the model, and m is a positive margin hyperparameter. The loss is 0 if p(c+) exceeds p(c–) by at least the margin m. Thus, the hinge loss imposes a ranking constraint that enforces c+ to score higher than c– by a margin m. The greater the margin m, the stronger the constraint. This loss function accords with the fact that a good model outputs a higher score for a good clothing collocation than for a bad one.
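Eq. 3 itself is not reproduced in this text. A standard pairwise hinge loss that matches the description above (zero once the positive score exceeds the negative score by at least m) can be written as follows; the symbol ℓ for the per-pair loss is an assumption.

```latex
\ell(c^{+}, c^{-}) = \max\bigl(0,\; m - \bigl(p(c^{+}) - p(c^{-})\bigr)\bigr) \tag{3}
```

For instance, with m = 1, scores p(c+) = 2.0 and p(c–) = 0.5 give a loss of 0, while p(c+) = 0.2 and p(c–) = 0 give a loss of 0.8.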

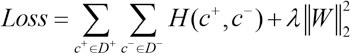

Based on the hinge loss, the total loss of our proposed model over the training dataset D is given by Eq. 4.

The first term is the sum of hinge losses over the training dataset D, and the second term is the squared L2 norm of the model parameters, which prevents overfitting. D+ is the set of all positive samples, D– is the set of all negative samples, λ is the regularization parameter, and W denotes the parameters of the model.
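The two terms described above can be illustrated with a small plain-Python sketch. It assumes the per-pair hinge loss max(0, m − (p(c+) − p(c–))) and takes an explicit list of (positive score, negative score) pairs, because the text does not state how positives and negatives are paired. The function names and the flat list of weights are hypothetical.

```python
def hinge_loss(p_pos, p_neg, margin=1.0):
    """Pairwise ranking hinge loss: zero once p_pos exceeds p_neg by the margin."""
    return max(0.0, margin - (p_pos - p_neg))


def total_loss(score_pairs, weights, margin=1.0, lam=0.005):
    """Sum of hinge losses over (p_pos, p_neg) pairs plus lam * ||W||^2.

    score_pairs: iterable of (score of a positive pair, score of a negative pair)
    weights: flat iterable of all model parameters (a stand-in for W)
    """
    ranking_term = sum(hinge_loss(pp, pn, margin) for pp, pn in score_pairs)
    l2_term = lam * sum(w * w for w in weights)  # squared L2 norm, scaled by lam
    return ranking_term + l2_term
```

With the pairs `[(2.0, 0.5), (0.2, 0.0)]` and weights `[1.0, -2.0]`, the ranking term is 0 + 0.8 and the regularization term is 0.005 · 5 = 0.025.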

In training the collocation scoring network, the two subnetworks are trained simultaneously. By minimizing the total loss function (Eq. 4), the parameters of the color design and clothing design networks are optimized jointly at each iteration. Therefore, the training of the collocation scoring network is guided by both the clothing colors and the clothing designs.

Collocation Score Prediction

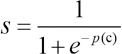

To predict the collocation score of a pair of upper- and lower-body clothing images, we input the pair to the collocation scoring network and obtain its output. To map the score into the range (0, 1), the sigmoid function is applied to the output of the collocation scoring network (Eq. 5).

s is the final collocation score of the input pair of clothing images, and p(c) is the output of the collocation scoring network. The final collocation score is a quantitative index of how suitable the collocation is. However, in many situations, one needs to know whether a pair of upper- and lower-body clothes matches well or not. To this end, a threshold can be set to determine whether a pair of upper- and lower-body clothing images is a good collocation. If the collocation score is greater than the threshold, the pair of upper- and lower-body clothes is considered a good collocation. Otherwise, the upper- and lower-body clothes may not match well.
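The mapping and the threshold decision described above can be sketched in a few lines of plain Python. The function names are hypothetical, and the sigmoid is written as s = 1 / (1 + exp(−p(c))), the usual definition, since Eq. 5 is not reproduced in this text.

```python
import math


def collocation_score(raw_output):
    """Map the raw network output p(c) to a score s in (0, 1) with the sigmoid."""
    if raw_output >= 0:
        return 1.0 / (1.0 + math.exp(-raw_output))
    # Equivalent form that avoids overflow for large negative inputs.
    e = math.exp(raw_output)
    return e / (1.0 + e)


def is_good_collocation(raw_output, threshold=0.5):
    """Return True when the sigmoid score is strictly greater than the threshold."""
    return collocation_score(raw_output) > threshold
```

With a threshold of 0.5, the decision is equivalent to checking whether the raw output p(c) is positive, because the sigmoid of 0 is exactly 0.5.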

Experiments

Dataset

In this work, we evaluate our model on the Polyvore409k dataset, 22 collected from the fashion-based social media website polyvore.com, which contains 409,776 outfits and 644,192 items. Every outfit covers the entire body with a variable number of items. In the experiments, we focus on upper- and lower-body clothing collocation. Thus, we extract the outfits in the dataset that contain both upper- and lower-body clothing. For each outfit, we keep only the upper- and lower-body clothing and remove duplicate items from the training and testing sets. We thereby obtain 11,542 valid outfits consisting of both upper- and lower-body clothing. We split the data into training and testing sets, which contain 10,000 and 1,542 outfits, respectively. The positive and negative samples are balanced in both sets. We ensure that no outfit appears in both the training and testing sets. Fig. 5 presents some examples from our dataset.

Fig. 5. Upper- and lower-body clothes from the Polyvore409k dataset.

Experimental Settings

In our experiments, we treat clothing collocation as a classification problem. Given a pair of upper- and lower-body clothing images, a threshold is set to determine whether the pair is a good collocation or not. If the collocation score is greater than the threshold, the pair is regarded as a positive sample. Otherwise, it is regarded as a negative sample. Accuracy, precision, recall, and F1-score are used to evaluate the classification results.
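For reference, the four measures can be computed from binary labels and thresholded predictions as in the following plain-Python sketch. The function name is hypothetical, and returning 0.0 when a denominator is zero is a convention chosen here, not something stated in the text.

```python
def classification_metrics(labels, predictions):
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = good collocation)."""
    pairs = list(zip(labels, predictions))
    tp = sum(1 for y, p in pairs if y == 1 and p == 1)  # true positives
    tn = sum(1 for y, p in pairs if y == 0 and p == 0)  # true negatives
    fp = sum(1 for y, p in pairs if y == 0 and p == 1)  # false positives
    fn = sum(1 for y, p in pairs if y == 1 and p == 0)  # false negatives

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}
```

Here `predictions` would be 1 wherever the collocation score exceeds the threshold and 0 otherwise.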

To train our network, we scale all input clothing images to a resolution of 224 × 224. The model is trained on the training set and subsequently evaluated on the testing set. We implement the neural network using the PyTorch framework. We use the Adam optimizer 32 with a learning rate of 0.001. The coefficients used for computing running averages of the gradient and its square are set to 0.9 and 0.999, respectively. A dropout rate of 0.5 is adopted for all dropout layers in the collocation scoring network. The weight decay λ is set to 0.005 and the margin m is set to 1.0. We train the network using a batch size of 12 for 1000 iterations on a PC with an i5 2.80 GHz CPU, 16 GB RAM, and two Titan X (Pascal) GPUs. The classification threshold on the collocation score is set to 0.5.
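The optimizer settings listed above map onto PyTorch roughly as in the sketch below. It is an illustration only: the small `model` is a hypothetical stand-in for the collocation scoring network, the random tensors stand in for real features, and `nn.MarginRankingLoss` averages over the batch by default, whereas the text describes a sum. Adam's `weight_decay` argument applies an L2 penalty through the gradient, which is assumed here to play the role of the λ term.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Hypothetical stand-in scorer; the real model would take color and design inputs.
model = nn.Sequential(nn.Linear(16, 8), nn.ReLU(), nn.Linear(8, 1))

# Settings taken from the text: lr 0.001, betas (0.9, 0.999), weight decay 0.005.
optimizer = torch.optim.Adam(model.parameters(), lr=0.001,
                             betas=(0.9, 0.999), weight_decay=0.005)
# With target = 1 this computes max(0, margin - (pos - neg)) per pair.
criterion = nn.MarginRankingLoss(margin=1.0)


def train_step(pos_batch, neg_batch):
    """Run one ranking update on a batch of positive and negative collocations."""
    model.train()
    optimizer.zero_grad()
    pos_scores = model(pos_batch).squeeze(1)  # same weights score both branches
    neg_scores = model(neg_batch).squeeze(1)
    target = torch.ones_like(pos_scores)      # positives should rank higher
    loss = criterion(pos_scores, neg_scores, target)
    loss.backward()
    optimizer.step()
    return loss.item()


# One iteration with the batch size of 12 mentioned in the text.
loss_value = train_step(torch.rand(12, 16), torch.rand(12, 16))
```

Scoring the positive and negative batches with the same `model` corresponds to the two weight-sharing scoring networks of the ranking framework.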

Experimental Results

We compare our collocation scoring network with classification-based and ranking-based approaches to verify the effectiveness of our proposed method.

Polyvore Network

This method 22 was proposed for outfit grading and recommendation. It uses a pre-trained ResNet-50 to extract embeddings of clothing images and feeds them to a binary classifier, which is a multi-layer perceptron (MLP). The MLP consists of one 4096-dimensional linear layer, followed by batch normalization and ReLU activation with dropout, and a 2-dimensional layer followed by softmax activation for classification. A multinomial logistic loss is used to learn the parameters of the model. For a fair comparison, we replace the ResNet-50 backbone with ResNet-101, the same backbone used in our clothing design network.

Ranking ResNet

We train an end-to-end ResNet 30 in the same ranking formulation as our proposed model. The architecture is also the same as our collocation scoring network, except that it consists only of the clothing design network. The loss function (Eq. 4) is used for training the Ranking ResNet.

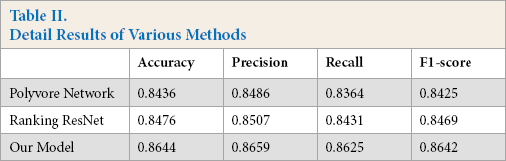

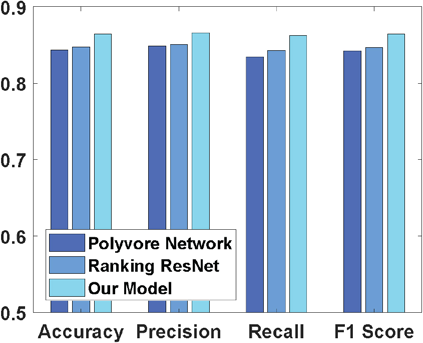

Fig. 6 shows the performance of the Polyvore Network, Ranking ResNet, and our proposed collocation scoring network. The detailed accuracy, precision, recall, and F1-score values are listed in Table II. Our proposed method achieves the best accuracy, precision, recall, and F1-score among all compared methods. Our model outperforms the Polyvore Network by 2.08%, 1.73%, 2.61%, and 2.17% in accuracy, precision, recall, and F1-score, respectively. It outperforms the Ranking ResNet by 1.68%, 1.52%, 1.94%, and 1.73% on the same four metrics, respectively. The experimental results demonstrate the effectiveness of our collocation scoring model.

Table II. Detail Results of Various Methods

Fig. 6. Comparison of different clothing color collocation methods.

Further Analysis

We further analyze the effects of the color information in clothing collocation and the ranking formulation in model training.

Color

The only difference between our model and the Ranking ResNet is the color design network. With the color design network, the collocation scoring model can learn the color representation and use the color information in the collocation score prediction. The experimental results show that our model obtains better performance on all evaluation metrics. This indicates that color is an important factor for clothing collocation and that the color design network improves the performance of the model.

Effect of Training in Ranking Formulation

The Ranking ResNet and the Polyvore Network have the same architectures, but different loss functions. The Ranking ResNet is trained in ranking formulation, while the Polyvore Network is trained by minimizing the multinomial logistic loss. The experimental results show that the Ranking ResNet outperforms the Polyvore Network, which justifies the advantage of training in ranking formulation for the problem of clothing collocation.

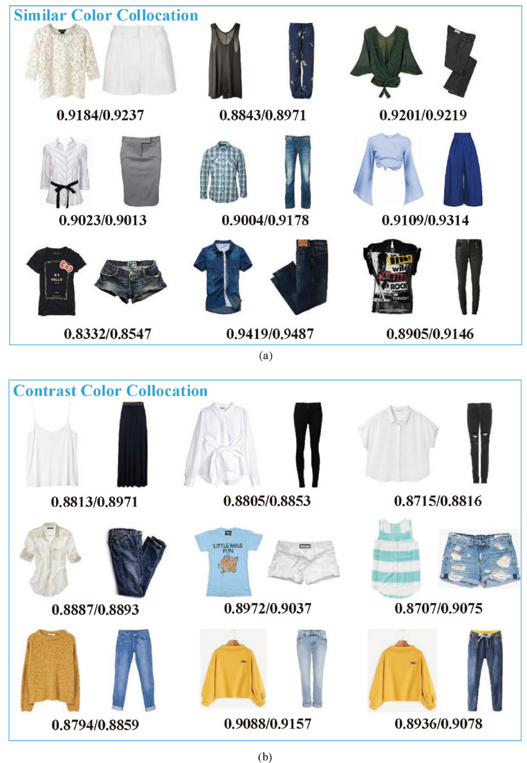

Furthermore, as clothing color plays a key role in our collocation scoring network, we analyze the effect of clothing color on collocation score prediction with some examples. Fig. 7 shows some cases of clothing color collocation together with their collocation scores, each reported as two values: the score predicted by Ranking ResNet and the score predicted by our collocation scoring network. We focus on two fundamental types of clothing color collocation: similar color collocation and contrast color collocation. As shown in Fig. 7a, the scores predicted by our collocation scoring network for similar color collocations are higher than the scores predicted by Ranking ResNet. For contrast color collocations, the scores predicted by our collocation scoring network are also higher than the scores predicted by Ranking ResNet, especially for color collocations such as white and black, blue and white, and yellow and blue, which are shown in Fig. 7b.

Fig. 7. Cases of clothing color collocation.

Conclusion

We have studied the problem of clothing color collocation for upper- and lower-body clothing. We propose a collocation scoring network, trained in a ranking formulation, that predicts the collocation score for a pair of clothing images based on both color histograms calculated in a quantized HSV color space and clothing design features extracted from the clothing images. The experiments validate that the proposed model is effective at predicting collocation scores for upper- and lower-body clothing.

Acknowledgement

This work was supported by the National Natural Science Foundation of China (Nos.61573235, 61976134), and the Open Project Foundation of Intelligent Information Processing Key Laboratory of Shanxi Province (No.CICIP2018001).