Abstract

In 2006, Peters

Many laboratory animal experiments (LAEs) are performed to inform human health. They may play an important role in the identification and development of drugs, medical devices and surgical procedures, in risk assessments for safe human exposure and in increasing biological knowledge.1

It would seem rational to critically review the relevant LAEs before new LAEs and, in particular, clinical trials in humans are performed. Systematic reviews (SRs) and, where appropriate, meta-analyses (MAs) are suitable tools for summarizing the current evidence on a given subject and therefore directly support the ‘three Rs’ (replacement, reduction and refinement), for example, by preventing unnecessary duplication of animal studies. SRs and MAs play an important role in physics, the social sciences and medicine. However, several studies published a few years ago showed that relatively few SRs and MAs had been performed in LAEs, especially compared with other research fields. In 2004, about one in every 1000 Medline records about human research was reportedly tagged as an MA, compared with one in 10,000 records about animal research.2

In 2006, Mignini and Khan3 attempted to collect all SRs of LAEs and found 30. By contrast, around the same time, Peters

Unfortunately, SRs and MAs are sensitive to publication bias. Publication bias occurs when the results of an experiment determine its likelihood of publication, which often leads to severe under-representation of negative findings.5

Since unpublished study results are hard to find, publication bias is the Achilles heel of any SR and may lead to biased syntheses of the evidence in any field. Publication bias has often been demonstrated in the clinical sciences,6 but little is known about its extent in LAEs.3,7,8 Nevertheless, many authors speculate that publication bias may be common in LAEs,3,9 and the number of published negative results of LAEs is surprisingly low.10
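To make the mechanism concrete, the toy simulation below (all settings are hypothetical and not drawn from any study reviewed here) assumes a true effect of zero, lets every simulated experiment estimate it with the same standard error, and treats only significantly positive results as "published". The mean of all results stays near zero, but the mean of the published results is pushed well above it, which is what a synthesis restricted to published work would report.

```python
import random
import statistics


def simulate_publication_bias(n_studies=2000, true_effect=0.0, se=0.5,
                              z_crit=1.96, seed=1):
    """Toy model of publication bias (hypothetical parameters).

    Each simulated experiment estimates `true_effect` with standard error `se`.
    Only experiments with a significantly positive estimate
    (estimate / se > z_crit) are treated as published.
    Returns (mean of all estimates, mean of published estimates, number published).
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    estimates = [rng.gauss(true_effect, se) for _ in range(n_studies)]
    published = [e for e in estimates if e / se > z_crit]
    published_mean = statistics.mean(published) if published else float("nan")
    return statistics.mean(estimates), published_mean, len(published)


if __name__ == "__main__":
    all_mean, pub_mean, n_pub = simulate_publication_bias()
    print(f"all experiments: mean effect {all_mean:.3f}")
    print(f"{n_pub} 'published' experiments: mean effect {pub_mean:.3f}")
```

Real publication bias is rarely this extreme; the point is only that filtering on statistical significance, rather than the true effect, is what drives the published average away from the truth.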

Interestingly, authors of SRs and MAs of LAEs often do not (explicitly) consider the possibility that their results might be distorted by publication bias. Mignini and Khan3 found that only five of the 30 SRs they identified assessed the risk of publication bias. Peters

The objective of this study is to identify all SRs and MAs of LAEs published between July 2005 and 2010, thereby updating the earlier work by Peters

Materials and Methods

We systematically searched Medline, Embase, Toxline and ScienceDirect, all from July 2005 to 2010. There were no language restrictions. For details on the search, see Supplementary file 1 [http://la.rsmjournals.com/cgi/content/full/la.2011.010121/DCl]. In addition, reference lists of relevant articles were manually checked to identify studies missed by our database searches. We did not search any grey literature (i.e. literature that has not been formally published) since Peters

To facilitate a formal comparison, we used similar criteria to identify relevant SRs and MAs of LAEs as Peters

SRs and MAs were included if they involved

A medical intervention was applied, subdivided into measurement of:

Efficacy of a medical intervention;

Side-effects of a medical intervention, or its toxicity;

Mechanisms of action of a medical intervention.

Risk factor research (epidemiological associations or mechanisms of action of disease).

Effects of an exposure to a chemical substance were measured.

An overview of animal models for disease was given.

The accuracy of a test to diagnose disease was measured.

Articles that included other experiments, such as human studies, in addition to LAEs were also included. We excluded genome-wide association studies and LAEs whose main purpose was to learn more about fundamental biology, physical functioning or behaviour rather than to directly inform human health.

One author (DK) extracted the relevant data from the included articles. For SRs, we extracted the authors, publication year, country, journal, objective, search strategy, assessment of study quality and any comments on publication bias. For MAs, we additionally extracted data on species, number of experiments included and the methods used (effect estimates reported, assessment of heterogeneity and synthesis method). These data were compared with the corresponding results for reviews published before July 2005. Ninety-five percent confidence intervals (CIs) for differences between proportions were calculated with exact binomial methods in STATA version 10.1.
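For readers who want to reproduce such comparisons, here is a minimal sketch of a confidence interval for the difference between two independent proportions. It uses the normal-approximation (Wald) interval, which is a swapped-in technique and not the exact binomial method named above; the function name is hypothetical. Applied to the counts reported in the Discussion for MAs mentioning publication bias (26/35 after versus 17/46 before July 2005), it returns the bounds quoted there (17.2 to 57.4 percentage points), and likewise 28.0 to 65.9 for formal assessment (21/35 versus 6/46).

```python
import math
from statistics import NormalDist


def diff_proportions_wald_ci(x1, n1, x2, n2, level=0.95):
    """Difference p1 - p2 of two independent proportions with a Wald
    (normal-approximation) confidence interval.

    This is the simple Wald interval, not an exact binomial method.
    """
    p1, p2 = x1 / n1, x2 / n2
    diff = p1 - p2
    # Standard error of the difference, assuming independent samples.
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    # Two-sided critical value, about 1.96 for a 95% interval.
    z = NormalDist().inv_cdf(1 - (1 - level) / 2)
    return diff, diff - z * se, diff + z * se


if __name__ == "__main__":
    # MAs commenting on publication bias: 26/35 after vs 17/46 before July 2005.
    d, lo, hi = diff_proportions_wald_ci(26, 35, 17, 46)
    print(f"{d:.1%} (95% CI {lo:.1%} to {hi:.1%})")  # 37.3% (95% CI 17.2% to 57.4%)
```

The Wald interval can misbehave when a proportion is close to 0 or 1 or samples are small, which is one reason exact or score-based intervals are often preferred.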

Results

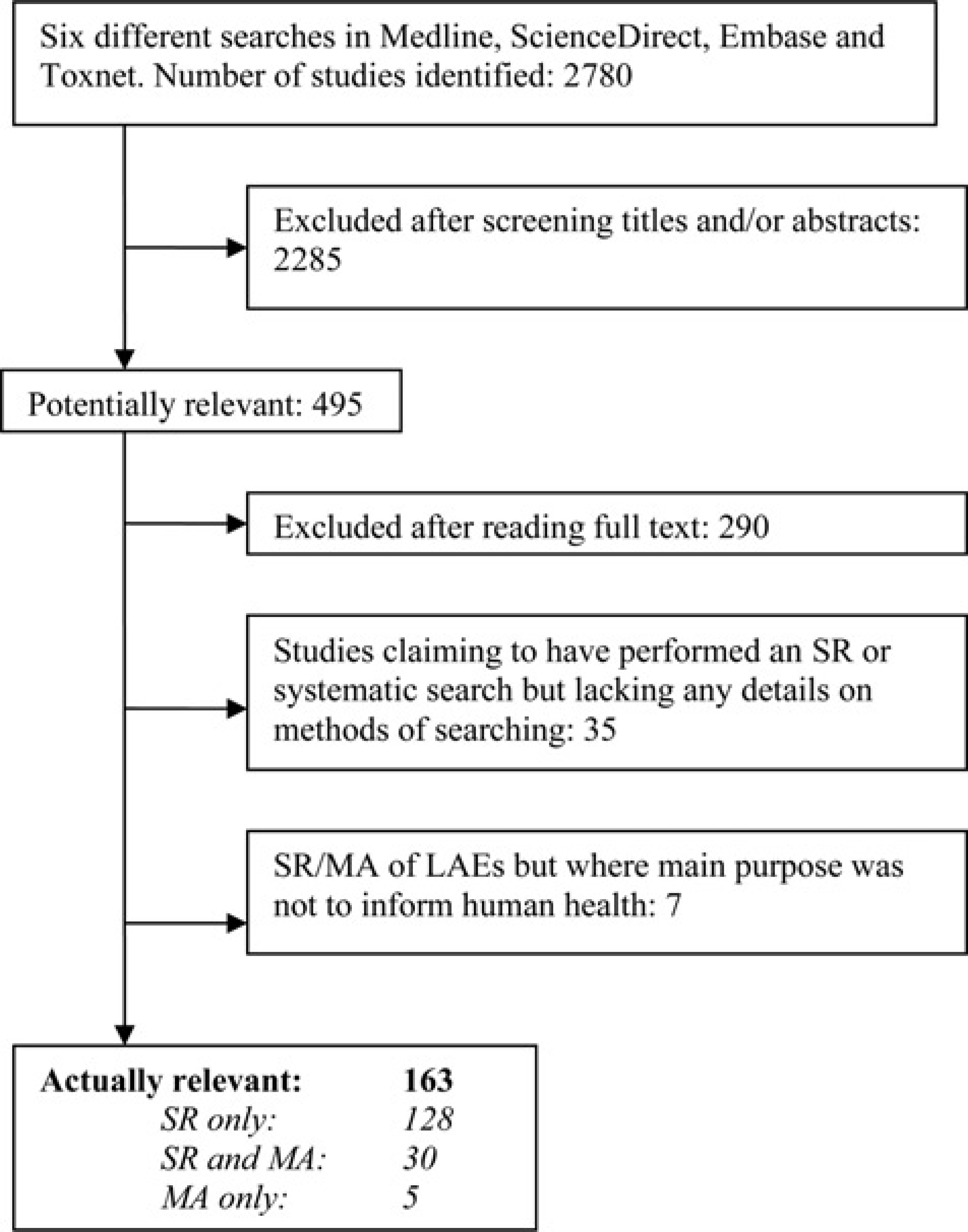

Figure 1 shows the flow of references from electronic searches to final inclusion in our review. The searches identified 2780 references, of which 163 fulfilled the inclusion criteria. We excluded 35 studies that claimed in their title, abstract or methods to have performed an SR or a systematic search but gave no details of the methods used. Seven SRs or MAs of LAEs were excluded because their main purpose was not to inform human health.11–17

Flowchart showing how the papers entered the review. SR: systematic review; MA: meta-analysis; LAE: laboratory animal experiment

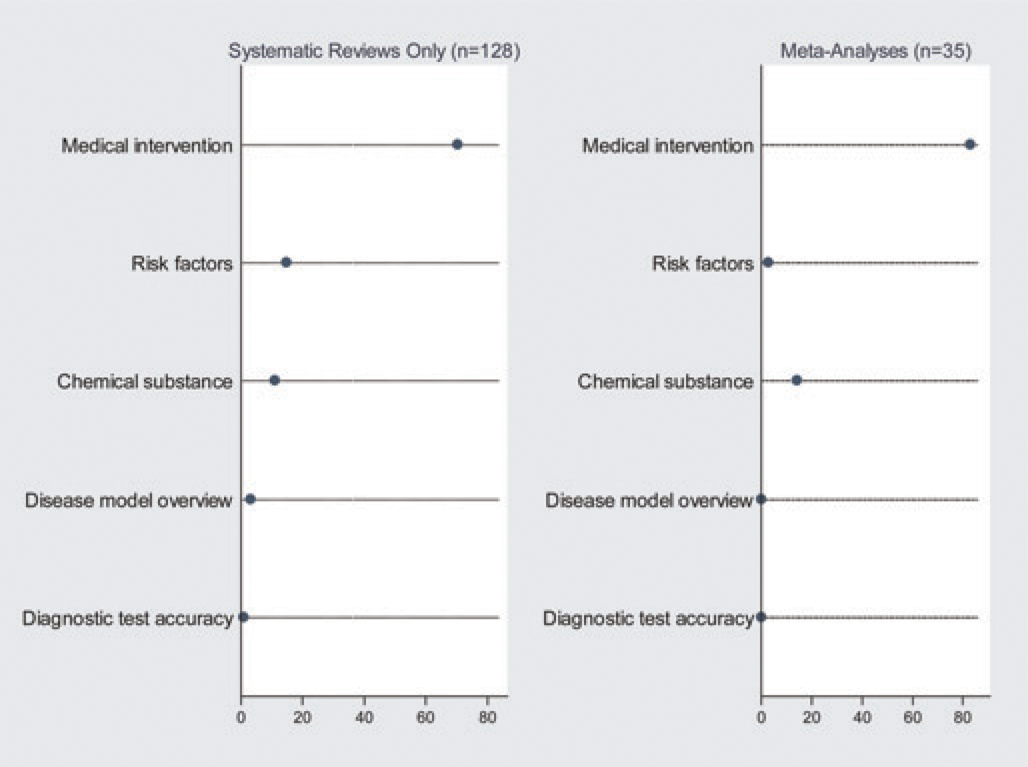

In total, we found 158 SRs of LAEs published between July 2005 and 2010, of which 30 also included an MA. We also found five MAs of LAEs that did not fulfil the criteria for an SR. Figure 2 shows the type of animal research, as defined in the Materials and Methods section, to which the included papers were allocated. Supplementary file 2 [http://la.rsmjournals.com/cgi/content/full/la.2011.010121/DC2] lists all the included articles.

Type of research reviewed. The first dot plot shows the percentage of included systematic reviews (without meta-analysis) covering each type of animal research. The second dot plot shows the same for the included meta-analyses (with or without a systematic review)

Systematic reviews

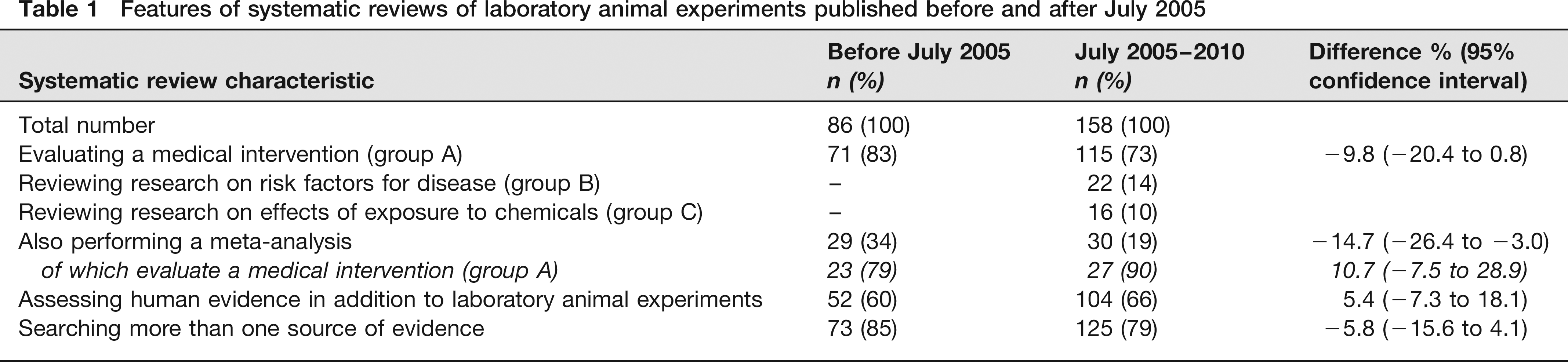

Table 1 compares the SRs performed after July 2005 with those found by Peters

Features of systematic reviews of laboratory animal experiments published before and after July 2005

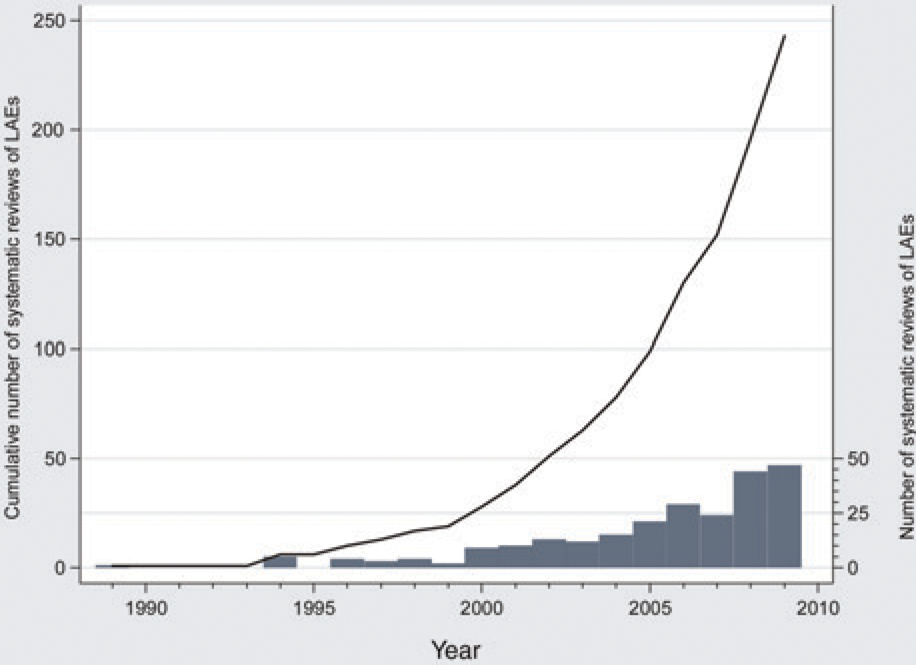

The number of published SRs of LAEs has been growing over the past 15 years. There are now 244 SRs, and since 1997 the number has roughly doubled every three years (Figure 3).

Systematic reviews of laboratory animal experiments. Bar graph shows the number of published systematic reviews (SRs) of laboratory animal experiments (LAEs) for the years 1990–2010. Line graph shows the cumulative number of such reviews. Since 1997, the number of SRs of LAEs has roughly doubled every three years
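To translate a doubling time into a yearly growth rate, assume constant exponential growth (an assumption made here for illustration). A count that doubles every T years grows by a factor of 2^(1/T) per year, so doubling every three years corresponds to roughly 26% growth per year, and doubling every five years (as reported for MAs) to roughly 15%. The helper names below are hypothetical.

```python
import math


def doubling_time(n_start, n_end, years):
    """Years needed for a count to double, assuming constant exponential
    growth between n_start and n_end observed `years` apart."""
    if n_start <= 0 or n_end <= n_start:
        raise ValueError("need 0 < n_start < n_end for a finite doubling time")
    return years * math.log(2) / math.log(n_end / n_start)


def annual_growth(doubling_years):
    """Constant yearly growth rate implied by a given doubling time."""
    return 2 ** (1 / doubling_years) - 1


if __name__ == "__main__":
    print(f"3-year doubling: {annual_growth(3):.0%} per year")  # 26%
    print(f"5-year doubling: {annual_growth(5):.0%} per year")  # 15%
    # Hypothetical counts: growing from 10 to 40 over 6 years is a 3-year doubling time.
    print(doubling_time(10, 40, 6))
```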

As before July 2005, the majority of SRs evaluated a medical intervention; in the subgroup of SRs that performed an MA, this percentage was even higher. The percentages of SRs incorporating human evidence and of SRs searching more than one source of evidence were comparable before and after July 2005. Of the SRs without an MA, only 15 assessed the quality of the included studies and only 10 commented on the possibility that their findings might be distorted by publication bias. How SRs published before July 2005 scored on these items is unknown.

Meta-analyses

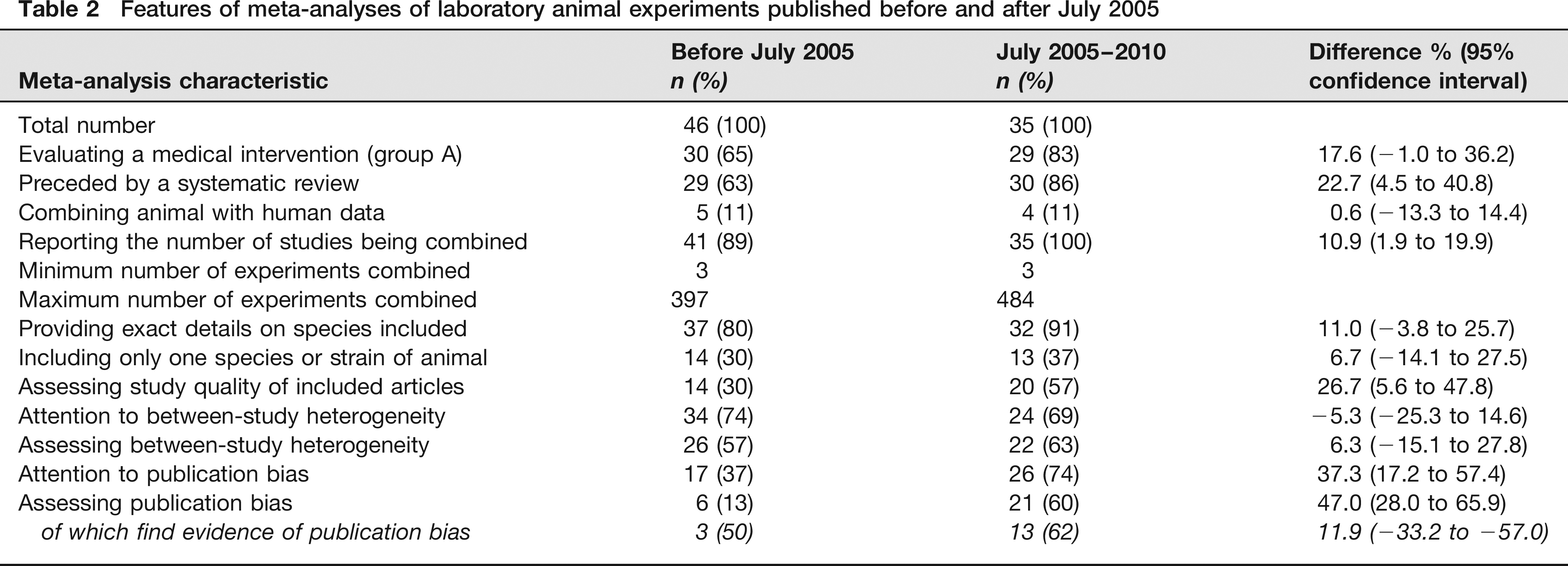

Table 2 compares MAs published before July 2005 with those published later. Individual summaries of all the included MAs are in Supplementary file 4.

Features of meta-analyses of laboratory animal experiments published before and after July 2005

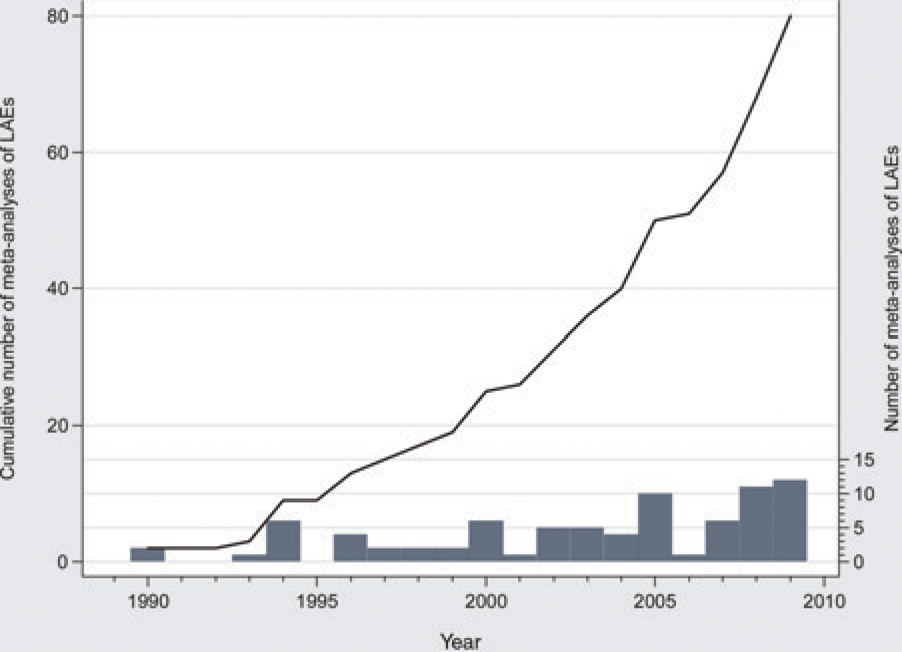

There are now 81 published MAs of LAEs. Since 1999, the number of MAs of LAEs has roughly doubled every five years (Figure 4). Compared with those published before July 2005, MAs published after July 2005 more often evaluated a medical intervention (group A), and a larger percentage were preceded by an SR.

Meta-analyses of laboratory animal experiments. Bar graph shows the number of published meta-analyses (MAs) of laboratory animal experiments (LAEs) for the years 1990–2009. Line graph shows the cumulative number of such meta-analyses. Since 1999, the number of MAs of LAEs has roughly doubled every five years

Some general features, such as the percentage of studies incorporating human data, the minimum and maximum number of experiments combined and the number of species included, have not changed. All MAs published after July 2005 reported the number of studies combined, and the proportion of MAs providing exact details on the animal species included has grown. Furthermore, the percentages of MAs assessing study quality and between-study heterogeneity have increased.
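The text does not say which heterogeneity statistics the MAs used. Cochran's Q and the I² statistic are common choices, so the sketch below assumes them; the function name and the example effect sizes and standard errors are hypothetical. Q is the inverse-variance-weighted sum of squared deviations from the fixed-effect pooled estimate, and I² is the share of that variability exceeding what chance alone (its degrees of freedom) would produce.

```python
def heterogeneity(effects, ses):
    """Cochran's Q, its degrees of freedom and the I^2 statistic.

    effects: per-study effect estimates; ses: their standard errors.
    """
    if len(effects) != len(ses) or len(effects) < 2:
        raise ValueError("need at least two studies with matching effects and SEs")
    weights = [1 / se ** 2 for se in ses]  # inverse-variance weights
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted squared deviations from the fixed-effect pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: variability beyond chance as a share of Q, floored at zero.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return q, df, i2


if __name__ == "__main__":
    # Hypothetical studies with equal precision but widely spread effects.
    q, df, i2 = heterogeneity([0.2, 0.8, 1.4], [0.2, 0.2, 0.2])
    print(f"Q = {q:.1f} on {df} df, I^2 = {i2:.0%}")
```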

Publication bias

The proportion of MAs commenting on the possibility that their results might be affected by publication bias increased from 37% before to 74% after July 2005. Of the MAs published after July 2005, four mentioned that their results might be affected by publication bias but made no formal attempt to assess it, and one stated that the number of publications was too small to test for publication bias statistically. Nine MAs did not mention publication bias at all; four of these were not preceded by an SR.

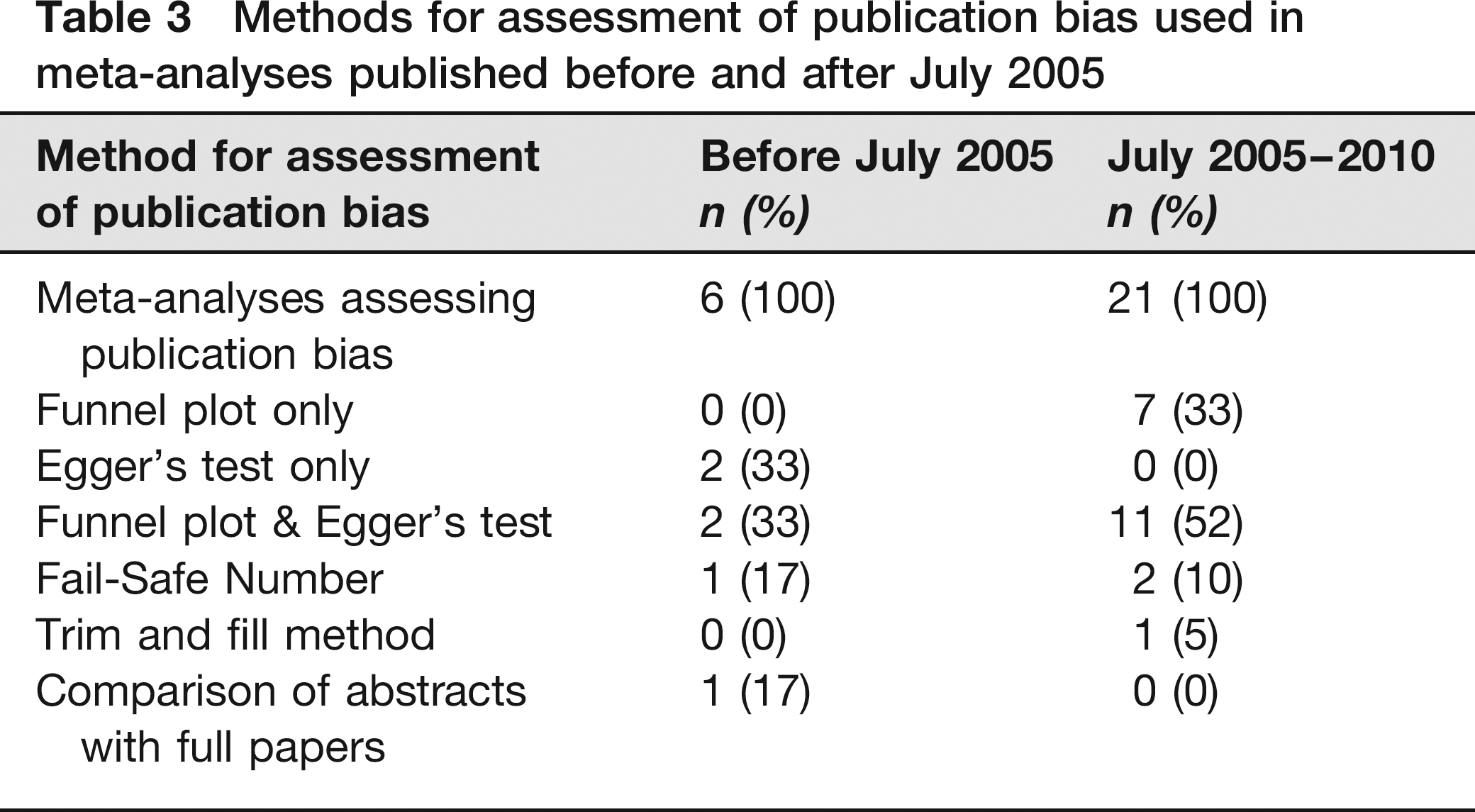

The proportion of MAs attempting to assess publication bias increased from 13% before to 60% after July 2005. Publication bias was addressed using Egger's test for funnel plot asymmetry, the Fail-Safe Number (the number of additional negative studies needed to render the pooled estimate non-significant18), the ‘trim and fill’ method (which imputes missing studies on the basis of a statistical model18) and comparison of abstracts with full papers (Table 3).

Methods for assessment of publication bias used in meta-analyses published before and after July 2005
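A minimal sketch of Egger's regression test as it is usually described: the standardized effect (effect divided by its standard error) is regressed on precision (one divided by the standard error) by ordinary least squares, and an intercept far from zero indicates funnel plot asymmetry. The function name and example data are hypothetical. The p-value is omitted to keep the sketch dependency-free; it would come from a t distribution with the returned degrees of freedom (for example via SciPy's `scipy.stats.t.sf`).

```python
import math


def egger_test(effects, ses):
    """Egger's regression test for funnel plot asymmetry (minimal sketch).

    Returns (intercept, standard error of intercept, t statistic, degrees of freedom).
    """
    k = len(effects)
    if k != len(ses) or k < 3:
        raise ValueError("need at least three studies with matching effects and SEs")
    x = [1 / s for s in ses]                   # precision
    y = [e / s for e, s in zip(effects, ses)]  # standardized effect
    mx, my = sum(x) / k, sum(y) / k
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("all studies have the same precision; test undefined")
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    # Residual variance on k - 2 degrees of freedom.
    rss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    df = k - 2
    se_intercept = math.sqrt(rss / df * (1 / k + mx ** 2 / sxx))
    if se_intercept > 0:
        t = intercept / se_intercept
    else:  # perfect fit: t is infinite unless the intercept is exactly zero
        t = 0.0 if intercept == 0 else math.copysign(math.inf, intercept)
    return intercept, se_intercept, t, df


if __name__ == "__main__":
    # Hypothetical data in which less precise studies report larger effects.
    print(egger_test([0.2, 0.3, 0.6, 0.9], [0.1, 0.2, 0.4, 0.5]))
```

A large intercept flags asymmetry, not publication bias as such, and with few studies the test has little power.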

Discussion

Using SR methods, we updated previous work by Peters

The quality of MAs seems to be increasing. Compared with before July 2005, a larger percentage of MAs were preceded by an SR, which helps to ensure proper search and data extraction methods (a statistically significant increase of 23 percentage points [95% CI 4.5–40.8]); reported the number of studies combined (a statistically significant increase of 11 percentage points [95% CI 1.9–19.9]); and provided details on the animal species included (an increase of 11 percentage points, not statistically significant [95% CI -14.1 to 25.7]). Furthermore, the quality of included studies was more often assessed (a statistically significant increase of 27 percentage points [95% CI 5.6–47.8]), as was between-study heterogeneity (an increase of 6 percentage points, not statistically significant [95% CI -15.1 to 27.8]). Unfortunately, SRs that do not perform an MA seldom consider or assess study quality and publication bias.

Awareness of publication bias among meta-analysts of LAEs appears to have grown over the last five years. Seventy-four percent (26/35) of MAs published after July 2005 mentioned it, compared with 37% (17/46) before, a statistically significant increase of 37 percentage points (95% CI 17.2–57.4). Furthermore, 60% (21/35) formally addressed publication bias, compared with 13% (6/46) before July 2005, a statistically significant increase of 47 percentage points (95% CI 28.0–65.9). Among the MAs that assessed publication bias, 62% (13/21) found evidence of it (up from 50% [3/6]). However, the value of these methods should not be overestimated. Funnel plot asymmetry cannot straightforwardly be interpreted as evidence of publication bias, because other phenomena may also cause asymmetry; the same applies to the ‘trim and fill’ method.18 In addition, Egger's test has low power unless the bias is severe.18 Finally, different versions of the Fail-Safe Number produce different results, and no statistical criterion exists for interpreting it.18 These methods may therefore both underestimate and overestimate publication bias. Nevertheless, we think that its existence is plausible.
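The point that different versions of the Fail-Safe Number disagree can be illustrated with two common variants, sketched here under stated assumptions. Rosenthal's version asks how many additional studies with a mean Z of zero would pull the combined (Stouffer) Z below the one-tailed critical value; Orwin's version asks how many studies with an assumed effect (zero by default) would pull the unweighted mean effect down to a chosen "trivial" criterion. The function names, the criterion and the study data are hypothetical.

```python
from statistics import NormalDist


def rosenthal_fail_safe_n(z_scores, alpha=0.05):
    """Rosenthal's fail-safe N: extra studies with mean Z = 0 needed to push
    the Stouffer combined Z, sum(Z) / sqrt(k + N), below the one-tailed critical value."""
    z_crit = NormalDist().inv_cdf(1 - alpha)  # about 1.645 for alpha = 0.05
    k = len(z_scores)
    return max(0.0, sum(z_scores) ** 2 / z_crit ** 2 - k)


def orwin_fail_safe_n(effects, criterion, missing_effect=0.0):
    """Orwin's fail-safe N: extra studies with effect `missing_effect` needed
    to pull the unweighted mean effect down to `criterion`."""
    if criterion <= missing_effect:
        raise ValueError("criterion must exceed the assumed effect of missing studies")
    k = len(effects)
    mean = sum(effects) / k
    return max(0.0, k * (mean - criterion) / (criterion - missing_effect))


if __name__ == "__main__":
    # Four hypothetical studies.
    z = [2.0, 2.5, 1.8, 2.2]
    d = [0.5, 0.6, 0.4, 0.5]
    print(f"Rosenthal: {rosenthal_fail_safe_n(z):.1f}")             # 22.7
    print(f"Orwin (criterion 0.2): {orwin_fail_safe_n(d, 0.2):.1f}")  # 6.0
```

The two numbers answer different questions (statistical significance versus a chosen effect size), which is one reason the Fail-Safe Number lacks a single agreed interpretation.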

Our study has some limitations. Firstly, because of our definition of an SR, we included several reviews that do not meet the definition of an SR used by the Cochrane Collaboration,19 an international non-profit organization that promotes the production and accessibility of SRs. Many studies included in this review performed a systematic search but presented their results narratively rather than systematically; for example, they often did not assess the quality of the included studies. However, we maintained the definition used by Peters

Secondly, our definition of LAE was slightly different from Peters

Thirdly, our own results might be affected by publication bias. Although we performed a thorough literature search, we did not search any grey literature since Peters

Finally, we were not able to check to what extent the included MAs adhered to the guidelines for good-quality reporting of MAs of LAEs as proposed by Peters

How might SRs and MAs affect the 3Rs? SRs and MAs help to

SRs and MAs provide an overview of all the available evidence on a given subject. Because more data are combined, the precision of effect estimates is usually greatly increased, yielding conclusions with more statistical power than those of any single study. This increased precision can reduce the number of animals needed in future experiments, directly supporting the principle of
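The gain in precision from pooling can be checked with the inverse-variance fixed-effect estimator, one standard synthesis method (an assumption here; the reviewed MAs used various methods). With k studies of equal standard error s, the pooled standard error is s/√k. Under a random-effects model, the between-study variance is added to each study's variance, so the gain is smaller when studies are heterogeneous. The function name and data below are hypothetical.

```python
import math


def fixed_effect_pool(effects, ses):
    """Inverse-variance fixed-effect pooled estimate and its standard error."""
    if not effects or len(effects) != len(ses):
        raise ValueError("need matching, non-empty lists of effects and SEs")
    weights = [1 / se ** 2 for se in ses]  # more precise studies weigh more
    total = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total
    return pooled, math.sqrt(1 / total)


if __name__ == "__main__":
    # Five hypothetical experiments, each with a standard error of 0.4.
    effects = [0.1, 0.3, 0.5, 0.2, 0.4]
    pooled, pooled_se = fixed_effect_pool(effects, [0.4] * 5)
    print(f"pooled effect {pooled:.2f}, SE {pooled_se:.3f} (single-study SE 0.400)")
```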

However, performing more of these studies also raises some concerns. Firstly, the large number of studies that claim to have performed an SR or systematic search but give no details of their search methods is a cause for concern. The credibility of these studies is low because the reader does not know how thoroughly the literature was searched, and the search cannot be replicated. Secondly, SRs and, especially, MAs are sensitive to several types of bias. Poor quality of included studies, between-study heterogeneity and publication bias can significantly affect results and must therefore always be assessed and, where possible, accounted for. Publication bias may lead to unnecessary duplication of research and waste of time, money and animals. If one agrees that SRs and MAs are important to any field of science, it is also important to tackle publication bias, since it may compromise the validity of these studies. Although statistical techniques exist to estimate the extent of the problem, a more fundamental solution is simply to make all relevant data available, avoiding the need for statistical assumptions that cannot always be checked.

The rise of SRs and MAs in applied clinical medicine made reviewers much more aware of the impact that poor study quality, between-study heterogeneity and publication bias may have on their results. This, in turn, led to a wide debate on these problems and potential solutions, such as trial registries.27,28 Since 2005, many leading medical journals have pledged not to publish unregistered trials, although this has proved difficult.29 Given the growing number of MAs that assess study quality and publication bias, SRs and MAs seem to be having a similar effect in the LAE field. However, little is currently known about the extent of publication bias in this field. The evidence that does exist indicates that LAEs are also affected by publication bias.10 An obvious approach would be to follow up a cohort of ethically approved LAE protocols, either prospectively or retrospectively.6,30 In this way, the proportion of studies that are completed and published could be established and the determinants of (non-)publication studied.

In summary, compared with human research, the absolute number of SRs and MAs of LAEs appears to be low. However, the number has grown strongly since July 2005. Furthermore, the quality of MAs has increased, and publication bias is more often considered and assessed. Most MAs that assess publication bias find evidence of missing ‘negative’ studies, indicating that this problem should probably not be underestimated. We therefore recommend further research to determine the extent of publication bias in the LAE field. In the meantime, given the potential of SRs to contribute to reduction and refinement, it seems advisable for animal researchers to consider performing an SR before embarking on new experiments.