Abstract

Demand for laboratory testing is increasing disproportionately to medical activity, and the tests involved are becoming increasingly complex. When this phenomenon is seen in parallel with declining teaching of laboratory medicine in the medical curriculum, a need emerges to manage demand to avoid unnecessary expenditure and improve the use of laboratory services: ‘the right test in the right patient at the right time.’ Various methods have been tried to manage demand, with success depending on the medical context, type of health service and preintervention situation. Because many factors contribute to demand, and because the settings in which they operate differ, it is not realistic to meta-analyse the studies and we are limited to trying to identify trends in results in particular situations. The studies suggest that education combined with facilitating interventions, such as feedback, prompts and changes to laboratory request forms, is the most successful approach. From the perspective of a whole health service, it is important that results are not exaggerated by assessing benefits in terms of total rather than marginal cost. It would be desirable, although difficult, to include the impact on downstream clinical activity caused or avoided by the interventions. Advances in information and web technology may make the elusive goal of achieving substantial demand control more achievable.

Introduction

The term demand management derives from the macroeconomic principles developed by John Maynard Keynes, but has evolved beyond pure economics into areas including social welfare and health policies. Many different definitions are available, although one good unifying definition is that given by Encarta: ‘managing a market by manipulating demand: the management of a market or economy by manipulating demand so that it reaches a stable relationship with supply’. 1

Within a health environment, supply depends on the willingness of an individual or community to pay. Within a health system, the term demand management could be defined as manipulating the use of a health resource in order to maximize its utility.

Because laboratory diagnostics form only one specific component of the overall patient investigation, diagnosis, management and outcome pathway, they cannot be separated from these other processes. While financial demand management can be thought of as restricting demand to meet the resource available, this cannot be separated from appropriate use and interpretation of laboratory tests, within an overall setting of good medical practice. While showing examples of demand management in laboratory medicine, this review will attempt to distinguish between manipulations which only reduce demand and those which contribute to better medical practice and optimal use of the laboratory as a resource.

Any intervention attempting to influence demand must be interpreted in the context of the health-care system in which it is operating. The use of laboratory diagnostics varies between countries, for example, being five times greater as a proportion of medical expenditure in the USA compared with the UK. 2,3 Legal and regulatory frameworks also vary. Many countries operate mixed public/private sector health economies with differing factors driving demand.

This review will draw on work originating from other health-care systems, but will also attempt to identify the limitations of extrapolating the examples to the UK.

The search for this review was carried out through PubMed using the keywords: demand control, laboratory, test utilization and good practice. These were supplemented by searches concentrating on specific areas using the search terms: laboratory order form, laboratory request form, laboratory guidelines, physician behaviours, computerized physician order entry (CPOE) system and test cost feedback, and a search through related citations in the articles found. This is not an exhaustive list of all demand control interventions published.

Changes in test use

Test use has risen inexorably in the UK over the last 15 years or more and is continuing to rise. 3,4 Similar findings have been reported for other countries despite higher baseline activity, although actual figures are difficult to obtain. A survey of approximately 25% of UK laboratories in 2004 suggested an average yearly rise over the previous three years of approximately 10%, accounted for by rises of approximately 5% in non-primary care work and approximately 16% in primary care work. 4 Rises varied between tests and individual years and could partly be explained by national initiatives, e.g. the United Kingdom General Medical Services contract 5 and its Quality and Outcomes Framework indicators 6 and increased awareness of the use of certain tests, such as urine microalbumin in patients with diabetes. The underlying request rise of around 10%, however, was consistent with individual laboratory findings dating back to the early 1990s and earlier. 7

Laboratory testing activity seems to be rising far faster than hospital activity as a whole, probably because it is driven by a large number of factors.

Large inter-practitioner differences in laboratory utilization have been reported in several countries including the UK. 8–11 These differences do not appear to be explained by modest demographic differences between the areas or practices concerned or by social factors, such as deprivation. 12 Even if factors have been found to influence demand, the differences seen in laboratory testing appear to be so great as to swamp those due to sociodemographic factors. These are considered below.

Additional factors which have increased demand on laboratory services include the shift of work towards primary care and increasing use of nursing staff in roles previously undertaken by doctors. 13,14

Why are tests ordered?

Reasons for requesting tests (adapted with permission from Whiting et al. 15 )

Whiting's review specifically examined diagnostic rather than monitoring tests, which may be subject to other factors. It emphasized that many factors may interact in an individual doctor's decision to order a test. One interesting example described is that factors beyond the performance of the test enter into decision-making, as when a doctor decides to perform a test for patient reassurance despite believing that it may be inappropriate.

This review extended the findings of a Dutch study published by Verstappen et al. in 2004 16 which reported similar influencing factors, including geographic location, practice setting, age and sex of practitioner, specialization, experience/knowledge, belief system, defensive practice, financial incentives, cost awareness and feedback/education, categorizing these as modifiable or non-modifiable physician factors. It does appear from these two reviews that the motivations for requesting are very complex and interacting, suggesting a priori that ways of changing practice may need to be sufficiently flexible to meet the many situations seen.

These findings also broadly coincide with those in a further 2007 systematic review 17 examining non-evidence-based factors influencing test ordering.

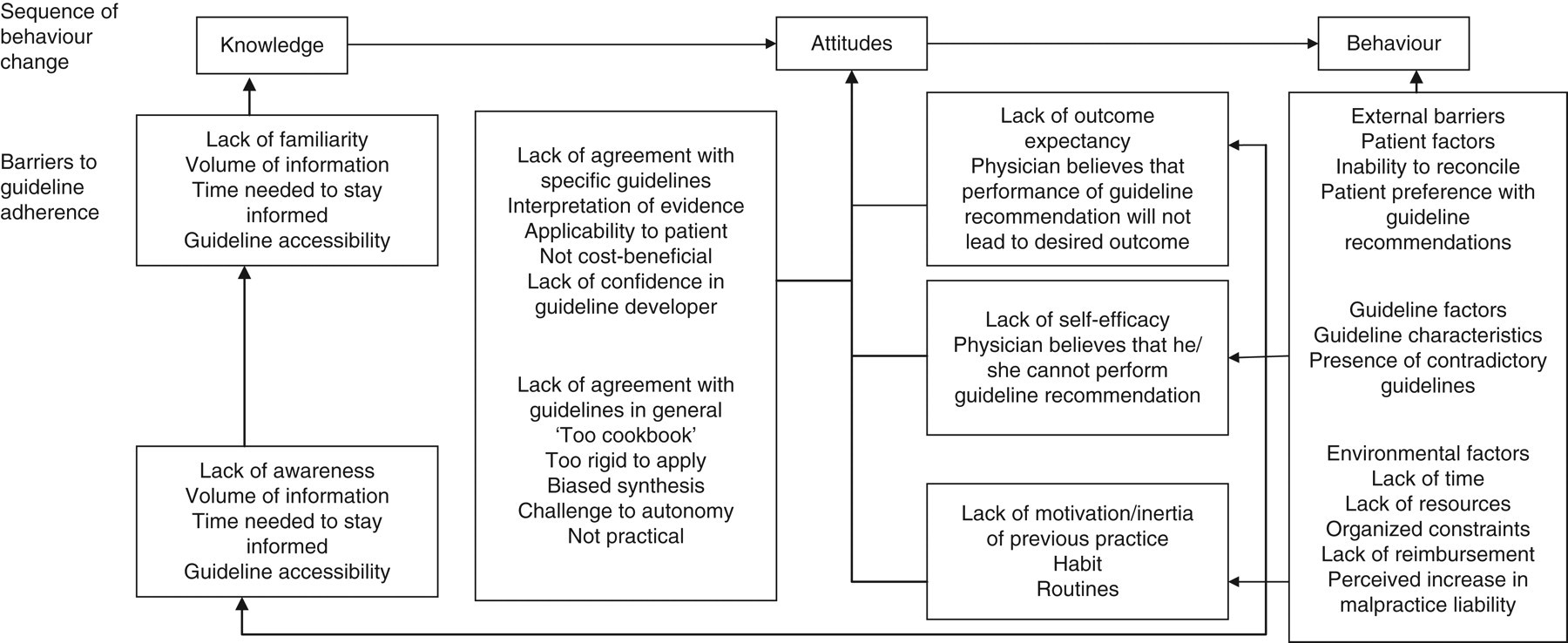

What are the barriers to following guidelines?

Alongside the explanations as to why tests are ordered, it is useful to understand the barriers to making better use of testing. An American review in 1999 18 examined barriers to following clinical practice guidelines (summarized in Figure 1).

Barriers to following clinical practice guidelines (reproduced with permission from Kawamoto et al. 18)

It also suggested that interventions to improve practice may be insufficient if they do not attempt to influence the specific barriers involved. Both individual and contextual factors influence a doctor's decision to request a test. Unmodifiable individual factors (e.g. age, number of partners in practice) may be compensated for by targeting the modifiable ones.

Lack of ready access to knowledge would appear to be one barrier which should now be more readily addressed with Internet browser technology. The resulting improved access to information could overcome several of the other personal barriers such as the lack of self-efficacy, awareness and familiarity.

The reasons for ordering tests are therefore complex and interact, so behaviour modification initiatives are unlikely to be effective if single strategies are used.

Inappropriate laboratory utilization – history

With ever-increasing use of laboratory tests and the introduction of new and more expensive tests, the demand control agenda is taking on greater meaning at the start of the 21st century. Some of the earlier studies and interventions on laboratory utilization, including the use of informatics, date back to 1980 and before. 19–21 Earlier still, Dr George Lundberg, then editor of the Journal of the American Medical Association, highlighted the issue in 1975 22 with the statement ‘laboratory tests should not be ordered without a plan for using the information gained. What will be done if the test result is abnormal? High? Low?’

The appropriate use of diagnostic services was reconsidered in a series of articles in 1984 in Health Trends. 23 At that time, the author of the introduction to the series estimated that up to 30% of work passing through diagnostic departments might not be necessary and that a realistic saving of 10% was achievable.

Carl van Walraven and David Naylor 24 presented a systematic review in 1998 that considered 44 studies identified between 1966 and 1997 examining inappropriate laboratory utilization. Most of the studies came from North America, and most of the patients were hospitalized. The studies ranged from those of specific individual tests (e.g. blood culture, urine culture, calcium testing) using explicit criteria to define inappropriate testing, to those using broader implicit criteria and considering a wider range of tests. The authors pointed out that the criteria used to judge appropriateness of testing were often weak compared with the evidence available for therapeutic interventions, but that they were soundly based on physiological, pharmacological and probability principles. The studies reported a very wide range of inappropriate testing, from 5% to 95%. Most, including most of the non-American studies (UK, Netherlands, Australia, Canada, Egypt and Thailand), reported inappropriate testing rates of 10–50%, slightly under half reporting rates of 30–50%. Two studies examined the classification of appropriateness by different reviewers. 19,20 They found large and significant differences in classification between two groups of assessors (e.g. 42.8% of 339 tests were deemed inappropriate by a panel of three physicians compared with 26.5% as estimated by a single laboratory specialist). 20

The heterogeneity of the patient groups, criteria used to determine appropriateness, preintervention conditions, testing disciplines and results highlights the difficulty of producing accurate estimates that could be extrapolated to cover laboratory medicine as a whole. Some implicit criteria used to define test appropriateness were based on whether the result was normal or abnormal, or whether patient management was subsequently changed. These are overly simplistic criteria. Normal results are often used to make the decision to maintain existing treatment and may be entirely appropriate from a monitoring and safety perspective. The authors emphasized, therefore, that allusions to widespread inappropriate laboratory testing needed to be qualified and put in context. This review highlights the difficulty of drawing general conclusions from a heterogeneous group of works, but it does reveal the underlying problem. This was summarized the same year in Bandolier, 25 suggesting that the real answer (how many tests were inappropriate) was probably unknowable but ‘whatever it is, it is large’. In an earlier editorial, 26 Lundberg called for an agenda to determine ‘which laboratory tests should be performed, when and how they should be performed and whether such performance was beneficial or harmful or had no effect’. He offered what might now be called a roadmap to improve physician-testing behaviour:

(1) Know the literature, have the data and be certain that you know the right thing to do;

(2) Convene (preferably under the roles of the organized medical staff) a small committee of leading respected physicians in the health-care setting – those who know the most about the subject at issue. These physicians usually will not be department heads but rather middle-level active clinicians;

(3) Achieve agreement with this group about what should be done based on available scientific evidence and the best expert clinical opinion;

(4) Implement the changes administratively, without seeking broad agreement in advance;

(5) Add a large dose of education in writing and in conferences about what was done, why this is best for patients and the institution and how to adjust to the changes;

(6) Be open to communication, complaints, letters, visits, telephone calls and even insurrection;

(7) Ride out the actions and overreactions, carefully sorting all objections and responding with adjustments, usually minor, to valid complaints;

(8) Enjoy the success of providing better, cheaper, faster, more effective diagnostic services in the best interest of patients, physicians, the public, the institution and the payer.

Summary of key points and key recommendations from CREST, Northern Ireland 2000 31

The need to improve the evidence base for diagnosis was stated by several authors between 1997 and 2003. 27–30 In 2000, the CREST (Clinical Resource Efficiency Support Team), 31 under the auspices of the Northern Ireland Central Medical Advisory Committee, produced a report on the appropriateness of laboratory investigations raising key points and recommendations. These are shown in Table 2.

Many of the recommendations were intuitive and were not necessarily achievable at the time, but have become more so with advances in informatics and the Internet. Several of these principles were further developed in subsequent reviews.

One continuing difficulty is the limited high-level evidence on either the clinical utility of a test in a given situation or appropriate retesting intervals. Many of these have been driven by local consensus opinion and, until recently, there have been few detailed reviews of evidence-based laboratory medicine in selecting and using tests.

Interventions to change testing behaviour

A large number of studies and audits have been published describing individual initiatives to change testing behaviour. These suffer from the same heterogeneity as those studies which examined levels of inappropriate test use. Solomon et al. 32 published a review in 1998, appraised in Bandolier, 33 which identified 49 studies reporting interventions designed to change physicians’ testing practices. Their report considered studies that compared diagnostic practices in intervention and control groups. The studies used a range of single or combined interventions that can be grouped crudely into the following categories:

• Educational initiatives;
• Dissemination of guidelines;
• Computer-based order entry system prompts;
• Activity utilization and cost information;
• Deleting tests from standard laboratory order forms;
• Restricting test numbers allowed;
• Vetting requests by a diagnostic specialist.

They categorized the interventions into the following:

(1) Those targeting predisposing factors (primarily educational initiatives);

(2) Those targeting reinforcing factors, principally the provision of activity and costing data;

(3) Those targeting enabling factors, such as limiting number of tests allowed, unbundling the cost of test panels, deleting tests from laboratory request forms or specialist vetting of requests.

They found that 76% of the interventions reduced testing activity. Those which targeted more than one behavioural factor were more successful than those targeting a single factor (86% versus 62%). Guideline dissemination alone had limited effect. Their overall conclusion was that while traditional education alone had limited effect, it was a necessary and effective addition to strategies designed to either reinforce approaches or enable a change (such as changing the laboratory request form). Others had already made similar observations in changing medical practice in primary care. 34,35 The logical conclusion is that although audits of test use can reinforce certain practices, doctors need to be presensitized by the relevant educational information. These findings were similar to an earlier systematic review, 36 examining factors changing behaviour in clinical practice. As reported previously, 32,37–39 the results of several of the interventions appeared to return to baseline after the intervention had finished, suggesting a need for a continuous improvement process. As before with van Walraven, 24 Solomon et al. 32 concluded that the heterogeneity and incompleteness of some of the data made it difficult to draw robust conclusions.

Specific initiatives

Financial control

The success of financial measures, notably in contracting between laboratories and health-care providers in limiting demand, will inevitably depend on the setting of the health-care system. It is important to interpret those initiatives that have been performed in the context of the host health-care system. It is not surprising that several of the financial initiatives listed in Solomon's review 32 showed a favourable impact on demand control as most of these studies were conducted in a North American context, where private contracting between laboratory users and the laboratory will tend to increase awareness about costs, and unnecessary requests will impact financially on the user.

Conversely, purely financial mechanisms could be detrimental to overall health-care provision as they potentially undermine quality, offering no rational system to ensure appropriate changes in requesting.

A simple mechanism described in several of the studies in Solomon's review was feedback of test cost information, which a systematic review found to be often, but not always, effective. 3 Feedback of testing activity alone was not found to be effective in a UK primary care context. 40

Providing costing information to physicians produced short-term reductions in requesting activity in a set of six Australian studies, although only one of these studies found that the decreased costs were sustained over the 12-month period of the study. 41 However, although these approaches may offer some potential for cost-containment, it is difficult to envisage how they could improve quality of practice in the absence of additional education on correct use and interpretation. Although provision of individual test costs, for example on the request form, has been shown at least in an American and Australian context to influence demand, I found no published studies in the UK. In the UK primary care sector, the current organization of general practices into primary care trusts (PCTs) holding financial responsibility brings the individual requesting doctor closer to the funding source than previously, although, at least anecdotally, the relationship between individual general practices and their PCT can occasionally appear strained, with the PCT being seen as a financial controller of clinical practice. The move in England towards Practice-Based Commissioning 42 would bring individual requesting doctors closer to the financial responsibility for their practice. Most studies take a multifaceted approach to demand management, as illustrated by van Walraven in 1998, 43 who combined funding changes with guideline dissemination and changes to the laboratory request form.

One example of successful implementation of laboratory budget holding, in New Zealand, where the health organization is similar to that of the UK, was published by Kerr et al. 44 In this programme, the Pegasus Medical Group (PMG), a consortium of approximately 40 general practice associations which contracted with regional health authorities, signed a budget-holding contract with their supplier laboratories designed to reduce laboratory expenditure. The contract allowed for PMG to retain any savings achieved in the first pilot group and a large proportion of the savings made in the non-pilot group. A second pilot group, again of 50 members from the original non-pilot group, commenced six months later. Test requesting and costing activity were recorded over the 12-month period and compared with the previous year. The prospective budget incorporated a 5% increase in laboratory test costs from the previous year and an estimated 14% increase in test activity related expenditure. Over the 12-month period, the overall percentage savings for the two pilot groups were 32.9% and 20.7%, respectively, but a saving of 20.3% was also made in the non-pilot group. Variability in the average test cost per consultation fell markedly in the two pilot groups compared with an increase in the non-pilot group (falls of 17.7% and 16.7%, respectively, compared with a rise of 17.5%) indicating more uniform, although not necessarily more appropriate, activity. Average expenditure fell in both high- and low-cost practices but more so in the high-cost group. The initiative included removing selected tests from the laboratory request form, which accounted for approximately one quarter of the reduction in costs. The average cost per test remained similar, suggesting that introducing test costs onto the form produced a general reduction in testing activity rather than a shift from more to less expensive tests. 
Interestingly, the fall in costs began before the intervention, suggesting that awareness of impending budgetary monitoring influenced activity. When compared with regional and national figures, the groups’ test expenditure fell considerably and the authors concluded that the effects were due to local budget holding and not to any national trends. Given the change in the non-pilot group, awareness of budgetary scrutiny alone may well have contributed to these results.

This example provided good evidence that the introduction of laboratory budget holding combined with changes in the laboratory request form could produce significant savings. The reductions seen have to be interpreted against the overall baseline testing activity per patient, which varies across the world. The study allowed for a 19% increase in expenditure between the two years, which is approximately twice the increase in national total test expenditure reported at that time.
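The 19% allowance appears to be the simple sum of the two budgeted uplifts reported for the Pegasus contract (5% for test costs and 14% for activity-related expenditure). The following is a minimal sketch of that arithmetic, with the compounded figure shown for comparison; the function name and the choice to show both calculations are illustrative assumptions and are not taken from the study.

```python
def budget_uplift(price_rise: float, activity_rise: float) -> tuple:
    """Return the (additive, compounded) budget uplift as fractions.

    Illustrative helper only: the study reports the two components and
    the 19% total, not the method used to combine them.
    """
    additive = price_rise + activity_rise
    compounded = (1 + price_rise) * (1 + activity_rise) - 1
    return additive, compounded


# 5% rise in test costs and 14% rise in activity-related expenditure
additive, compounded = budget_uplift(0.05, 0.14)
print(f"additive: {additive:.1%}, compounded: {compounded:.1%}")
```

Either way, the reported savings were measured against a budget that already assumed substantial growth, which is why the baseline matters when interpreting them.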

Although variability in requesting costs was reduced, which could be taken as a surrogate indicator of improvement in quality, there were no specific quality measurements in the study. The authors themselves questioned whether the reduction in testing activity led to improvements in patient care, or whether it could have led to impairment in some patient outcomes. The model was, nevertheless, proposed by the authors as a potential lesson for other countries in general practice budget-holding. 45

Measuring clinical outcomes is difficult as the impact of changes in laboratory testing is unlikely to be sufficiently large to produce statistically significant changes in unequivocal endpoints such as death or hospitalization, and surrogate endpoints are therefore needed. An earlier study in Israel, 46 involving the primary care sector but initiated by a health insurance fund, found that devolving budget-holding to individual clinics produced cost reductions. These were at least equal to the ongoing increase in expenditure in control practices in the same district. This study used surrogate quality indicators (patient satisfaction, care staff surveys and care staff attitudes to financial considerations in medical decision-making). Although care staff awareness of financial issues increased over the period of the study, no changes were found in staff motivation or patient perception of clinical services or clinic responsiveness to patients’ needs. 47 This demonstrated that patients did not perceive a reduction in quality of care, although in terms of appropriateness of laboratory services, the correlation between patient perception of testing activity and quality of care is debatable.

The way in which health-care services are organized financially can therefore influence laboratory test requesting. This may introduce perverse incentives such as when purchaser and provider are split, with the effect that successful demand control reduces the laboratory income. As it is the laboratory specialists who are trained in the assessment and utilization of tests and in aspects of evidence-based laboratory medicine, they would appear to be optimally placed to advise on test appropriateness. Whereas the purchaser–provider paradigm may appear entirely logical for consumer goods, in a service which seeks to optimize the cost-effectiveness of health-care delivery, there are difficulties in introducing a financial system which penalizes the people who are best positioned to advise on test utilization. Similarly, as highlighted in the UK government-commissioned report by Lord Carter of Coles 48 ‘Investment decisions are made within a silo with little attention to the impact … on other departments or in primary care’. The same purchaser–provider accounting mechanisms within organizations are such that money saved by avoiding unnecessary downstream activity through a new test (such as brain natriuretic peptide as a rule-out test for heart failure to avoid the cost of echocardiography) reduces income to the echocardiography provider to the benefit of the service user. It can prove difficult to introduce new tests which may cost the laboratory money but which make savings in other aspects of medical care. The impact of these paradoxical situations within health-care systems has been highlighted by the British health economist, Professor Christopher Ham, who suggests that integrated health-care systems may perform better without separation of purchaser and provider. 49 This follows on from comments from American authors attributing ‘poor performance of US health care to reliance on market mechanisms and for profit firms.’ 50

There is published evidence that in screening services, simultaneous involvement of different partners in the patient care system (patients, commissioners and providers) can provide positive synergistic effects, 51 again supporting the concept of more integrated care.

Changing the laboratory request form

From a practical viewpoint, the simplest way of reducing demand for a test is to remove it from the test request form. There are numerous examples of this dating from the 1980s, 52 through Solomon's series 32 and in the intervening years. 53–55 This approach, which was also included in the Pegasus programme, is usually initiated by the laboratory rather than by the user. This would appear appropriate as laboratory specialists should be optimally positioned to identify which tests are or are not amenable to removal. It is difficult to provide high-level evidence supporting appropriateness and these decisions rely mostly on consensus expert opinion. Much of the literature guidance, for example, would indicate that gamma glutamyl transferase (gamma GT) need not form part of a routine liver panel, but that it may be useful as a second-line test to investigate the cause of an elevated alkaline phosphatase. 56 We have very little evidence about the merits of investigating modest elevations of the enzyme. The restructuring of the laboratory request form can be done in different ways: the gamma GT can be removed from the routine profile and placed in a separate box on the form, or it can simply be removed from the profile.

However, the success of these measures must be seen against the baseline situation. It used to be commonplace to include large numbers of tests on request forms and panels were often larger, in part because of the low marginal costs for laboratories running multichannel continuous flow analysers. In Bailey's intervention, 54 although reductions of 60–80% were achieved for several analytes, the intervention involved removing calcium from a renal profile. While not unusual practice at the time, few laboratories would now include a calcium measurement as part of a renal panel. Similarly, some might argue that autoantibody screens and C-reactive protein (CRP) might not necessarily be included as tick boxes on the laboratory request form.

Another US intervention, 57 which unbundled a panel (urea and electrolytes, plus glucose) into its constituent analytes and restricted ordering more than 72 h in advance, halved the number of tests requested. It might be argued that a rationalization of the ‘urea and electrolyte’ panel removing routine glucose, chloride and bicarbonate would have had a similar effect and avoided the complexity of requestors deciding which individual analytes they required. The availability of test ordering over 72 h in advance, outside of specific circumstances, also appears overly permissive. The same group reported similar experiences in 2007 using a CPOE system. 58

An alternative way of effectively removing a test from the request form is to use cascade testing within the laboratory. An example of this is the ‘frontline TSH’ test for investigating thyroid function (TSH, thyroid-stimulating hormone). While the removal of tests from routine profiles may be clinically legitimate, in many routine situations, there will be subgroups of patients in whom additional measurement would be appropriate. The use of the ‘frontline TSH’ only applies to certain patient groups such as stable treated hypothyroidism 59 and laboratories are advised to measure TSH and thyroxine if there is any question about a new diagnosis or about pituitary hypothyroidism. Similarly, while it may be reasonable not to measure serum bicarbonate in routine electrolyte profiles in fit, ambulatory patients, a low serum bicarbonate can be an unexpected indicator of acute disease in a patient admitted to an emergency department. There are limits as to which tests can be removed from a request form without potentially adversely influencing patient care. There is an increasing trend towards limiting the number of tests available as tick boxes and expecting users to request the specific tests they wish. In this context, the need for education and potential role of informatics in decision support becomes obvious. A complementary policy to removing tests from request forms is to introduce limitations on ordering. This may require a senior laboratory member to approve certain tests, or restrict ordering to senior medical staff. In view of the volumes of tests processed, this is unlikely to be a sustainable mechanism for anything other than immediate troubleshooting. A more integrated alternative is to incorporate the tests into established clinical pathways drawn up by clinical practice groups, so that laboratories and physicians agree on the role of the test within that clinical pathway. 
Similar policies can be used to agree a period before which the test should not be repeated, with the proviso that clinical situations will exist where it is appropriate to deviate from the agreed policy.

Examples of policies that combine restrictions on testing, usually agreed between laboratory and senior physicians, include time-determined gating of duplications, 57,60 consultant-level restriction of the test 55 and restriction of (emergency) test availability, 61 together with audit and feedback to requestors. These have produced reductions in testing, from 8% in high volume haematology and biochemistry and 19% in all tests 61 to an 85% reduction in a specifically targeted test (CRP). 55 One restrictive initiative in an American teaching hospital 62 used a CPOE system to remove duplicated (overlapping) test panels and to prevent ordering more than 24 h before testing. This produced a 12% fall in testing activity and 21% fall in phlebotomies over a 12-month period, releasing $72,000 marginal reagent cost and additional marginal phlebotomy and laboratory staff time for deployment elsewhere. However, overlapping test panels suggest that the design of the request form was not ideal. Many would question the wisdom of permitting advance test ordering except in specific circumstances. Considerable opportunity for improvement seems to have been present before the intervention. The Israeli study reported by Calderon-Margalit 61 is interesting as it addressed a whole hospital and not a selected discipline, and considered a wide range of tests. The authors acknowledged, however, that the main intervention used was restrictive testing, although the intervention contained an educational component. It restricted duplicate orders and limited the emergency test repertoire, producing an overall 19% reduction in inpatient tests, half of which were due to five commonly ordered tests. Although this was an interesting intervention, the original repertoire (creatine kinase-MB [CK-MB], troponin T and troponin I all available as cardiac markers) could have lent itself to rationalization to a single preferred marker without any other intervention.
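Time-determined gating of duplicate requests, as used in several of the studies above, amounts to checking each request against an agreed minimum retest interval while allowing a clinical override. The sketch below is a hedged illustration: the intervals, test names and function signature are hypothetical placeholders, not recommendations or the logic of any cited system.

```python
from datetime import datetime, timedelta
from typing import Optional, Tuple

# Hypothetical minimum retest intervals; real values would be agreed
# locally between the laboratory and clinicians.
MIN_RETEST_INTERVAL = {
    "CRP": timedelta(hours=24),
    "TSH": timedelta(days=28),
}


def gate_request(test: str,
                 requested_at: datetime,
                 last_resulted_at: Optional[datetime],
                 override: bool = False) -> Tuple[bool, str]:
    """Return (accepted, reason) for a request under the gating rules."""
    interval = MIN_RETEST_INTERVAL.get(test)
    if interval is None or last_resulted_at is None:
        return True, "no gating rule or no previous result"
    if requested_at - last_resulted_at >= interval:
        return True, "minimum retest interval has elapsed"
    if override:
        # Agreed policies allow deviation where clinically appropriate.
        return True, "accepted on clinical override"
    return False, "repeat requested within the minimum retest interval"


now = datetime(2024, 1, 2, 12, 0)
print(gate_request("CRP", now, datetime(2024, 1, 2, 6, 0)))
```

Keeping the override explicit reflects the proviso that clinical situations will exist in which deviating from the agreed interval is appropriate; logging such overrides would also supply data for audit and feedback.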

A combination of order form redesign using Medicare-approved, diagnosis-oriented test lists and reflex laboratory testing was used for a range of common tests across primary and secondary care in an American laboratory. The intervention produced falls in test activity from 5–50%, with expected rises in some cascaded tests. 53

These interventions were combined in a total quality management approach using guidelines, educational sessions and prestamped forms containing requesting recommendations in another controlled study, 63 which achieved a significant 14% rise in appropriate testing and 85% fall in inappropriate testing for a single test pair, CK and CK-MB. This was a highly focused initiative and printing guidance on request forms cannot be applied to all available tests. It does, however, suggest the benefits of reinforcing educational guidance at the point of the requesting decision. After the intervention finished, testing behaviour had reverted to preintervention levels. This finding was consistent with earlier quality improvement approaches which have found a similar reversion. 32,38,39,46,64,65

Education and guidelines

Solomon et al. 32 highlighted educational interventions as being present in most of the successful studies identified.

Greco and Eisenberg published a report in 1993 suggesting that multifaceted educational approaches were more effective in changing physician behaviour than single strategies. 66 This was further demonstrated in 1999 in a US hospital study in which a series of parallel educational and cost awareness approaches in emergency department testing achieved a fall of approximately 25% in routine laboratory testing (chemistry, haematology and microbiology). 67 This study was also interesting in that it did not target a restricted test group and included high volume ‘routine’ tests.

One caveat on guidelines can be cited, albeit from outside pathology. Using the example of nerve electrophysiological studies, Shekelle et al. 68 disseminated two forms of guidelines, one general (electrophysiology is not recommended for assessing clinically apparent radiculopathy) and one specific (listing the clinical scenarios in which the test was considered most useful). Clinical vignettes distributed to 900 neurologists (with a 71% response rate) revealed significantly more appropriate and fewer inappropriate testing decisions in the specific guidance group. However, fewer appropriate decisions were made in the group receiving non-specific guidelines compared with a control group which received no guidelines. If extrapolated to laboratory medicine, this would suggest that guidelines may need to be sufficiently specific to be of positive rather than negative use and may partly explain the heterogeneity of results reported.

Several of the more intensive interventions required significant input, both from laboratory workers as educators and from requestors who needed to attend education and feedback sessions. These were only rarely included in the costs of the intervention.

Forsyth and Winarko reported the effects of local peer discussion within a small practice (15 doctors), led from within the practice, and found a 30–35% fall in diagnostic tests, with a corresponding fall in incoming telephone calls to the practice, perceived as one of the modifiable negative factors affecting professional life. Savings expressed as billed costs ($400,000 in the five-month period) do not apply to the whole health-care system, as only the marginal cost is in fact saved overall. The positive effects were maintained for at least a year after the intervention, in contrast to observations in other reports. 32,37–39,69,70 Although a small study, it does appear to highlight the merits of physician ‘buy in’ and was centred on educational improvement rather than restrictive ordering. 71

Miyakis 72 reviewed the records of 426 Australian inpatients with respect to 25 common laboratory investigations (haematology, biochemistry and blood gases) and found that 68% were not thought to have contributed towards patient management. Junior trainees ordered a greater percentage of non-contributory tests. An educational discussion meeting acted as the intervention and produced a 20% reduction in avoidable test requesting. Again, numbers had returned to preintervention levels by six months after the initiative. In this study, the authors identified a small but important subgroup of 80 tests (0.3% of the total) which were performed without any apparent relevant clinical context but which revealed abnormalities requiring action.

One of the few studies which has re-examined the long-term sustainability of an educational intervention has been that of Larsson and colleagues in Sweden. 73,74 Using a two-day educational programme delivered by a senior laboratory doctor, they compared laboratory testing requests across a group of tests based on researched recommendations. The majority of the tests targeted increased or decreased in line with recommendations over the six-month study and the results were maintained over the eight-year follow-up. This is surprising given the modest amount of educational top-up offered during the eight-year period. Although some of the tests concerned (serum chloride and lactate dehydrogenase) could have been targeted differently, for example, by changing laboratory panel content, this work nevertheless suggests that a limited educational programme could provide significant long-term improvements in testing behaviour. The cost-savings cited were 400,000 krona for reduced tests offset by 140,000 krona for increased tests which were deemed to be cost-effective as they were included in recommendations. However, these need to be interpreted in the context that a serum calcium for example was quoted as costing 10SEK (approximately £8.70 at present exchange rates), which is far in excess of the marginal laboratory cost and far higher than the total cost in most UK laboratories.

Outreach visits

A Cochrane review published in 1998 75 searched between 1966 and 1997 and found 18 trials on outreach visits. Twelve of the 13 trials which evaluated outreach visits to a health-care provider found positive intervention effects (ranging from 15% to 68% relative improvement), mostly measuring provider performance. Only one trial measured a patient outcome. This review concluded that outreach visits combined with additional interventions did reduce inappropriate practice (mostly prescribing) by physicians.

More recently, Verstappen et al. 63 reported a study in 194 Dutch primary care physicians targeting three specific clinical topics (cardiovascular investigations, lower abdominal investigations and upper abdominal investigations). This study found a 12% fall in testing activity in an active intervention group versus a feedback only group, with reduced inter-doctor variability. The intervention combined personalized graphical feedback, education and small group quality improvement meetings. The reductions in test requesting appear modest compared with the resource invested in the intervention. However, the cost analysis of the intervention 76 showed a net saving per physician, although once again, costs were expressed as charged costs rather than marginal savings. The savings found were 208 euros per physician over six months in the combined intervention versus 143 euros with feedback alone (although the opportunity cost in the small group arm was excluded, which equates to writing off doctors’ time as part of planned educational activities). The savings quoted only reflect test costs and not any potential downstream savings.

The cost consequences of an outreach programme were investigated in a Canadian primary care setting of 90,000 patients, based on 13 different ‘appropriate’ or ‘inappropriate’ investigation or treatment procedures, such as prostate specific antigen testing in young men (one of eight defined as inappropriate) and Chlamydia screening in sexually active women (one of five defined as appropriate). 77 The intervention used a combination of consensus building, audit, feedback and reminders, and concentrated on population screening topics. It compared the intervention costs with the sum of the additional costs of treating identified disease (positive) and avoiding unnecessary testing (negative) and concluded that an intervention costing $238,000 over 12 months could pay for itself and save an additional $192,000 with a presumed improvement in care quality. Total costs (which were considerably higher than UK laboratory costs) were used to calculate test and treatment costs and are therefore only relevant in a fee-for-service environment to the payer, and not to the overall health funder. The savings from the reduction in ‘inappropriate’ tests were negated by the increased costs of more ‘appropriate’ tests and the majority of savings were achieved through the reduced subsequent clinical costs saved by early detection.

Finally, a UK-based randomized controlled trial comparing two feedback interventions (activity feedback with educational messages, and reminder messages on result reports) found both to significantly reduce requests for a set of nine targeted laboratory tests, 78 with a reduction of approximately 10%.

Overall, therefore, the cost implications of such interventions are difficult to assess and very few consider the savings from subsequent ‘downstream’ activity (referrals and further investigation).

Targeted educational strategies, particularly those involving outreach visits or educational meetings, appear to produce a modest reduction in inappropriate testing, particularly when combined with enabling actions such as changing the laboratory request form. However, in some of the studies, the laboratory request forms reflected practices some 10 or more years ago, when there was a tendency to include larger numbers of tests and overlapping profiles, which may stimulate requesting. Only one study showed sustained change over time. This used continuing education in the form of top-up meetings. 73 It would appear that one-off interventions as a rule do not produce sustained change. This is particularly true in the context of junior trainees who repeatedly change hospital positions. Benefits would appear to be achievable in both primary care and the hospital context. As most of the cost-savings reported reflect total laboratory costs, the marginal savings to the health-care system overall will be significantly smaller. Although total cost-savings are relevant to a primary care user paying on a fee-for-service contract, these savings may simply ultimately result in reallocation of laboratory overheads to those tests which are still performed.

Information technology and decision support

Automated decision support for laboratory testing is in its early stages, although examples date back decades. 18,19,79 A number of more recent examples are discussed, although these mostly relate to specific areas of testing, for example coronary care. 80 Interesting work by Van Wijk et al. in 2001 81,82 showed the effects on requesting of two different types of a computer-based ‘Bloodlink’ decision support system (one offering a restricted ‘front page’ of only 15 tests and a second offering specific guidance). The restricted list actually resulted in more tests being performed than the guideline version of the decision support control system. This guideline support was an indication-based decision support tool, which assisted in test selection across a large range of diagnostic and monitoring situations and was based on guidance from the Dutch College of General Practitioners. During the 12 months of the study, approximately three quarters of all participants’ requests were made using the software, indicating good acceptance. A 20% reduction was found with the indication-driven guideline ordering compared with repertoire restriction. Although the authors accept that the use of the guideline system does not provide absolute proof of the quality of requesting, the fact that the tests contained in the guideline-based repertoire were consistent with a national guideline set offers good corroborative evidence. Limiting the immediate repertoire offered to 15 tests was probably overly restrictive, as a study of UK testing suggested that 90% of general practitioners’ (GPs’) requests were accounted for by 22 tests. 10

A second prompt-based system was tested in a randomized, controlled trial and provided the sensitivity, specificity, positive and negative predictive values of tests in clinical contexts. The trial was conducted in a general practice setting, using clinical vignettes in an academic family medicine centre with first and second year residents. It reported a 38% decrease in the numbers of tests ordered using the prompting system at the point of test ordering (

Predictive features for successful interventions to improve clinical practice (from ref. 85)

Thirty of 32 studies with all four criteria were positive. Additional incremental benefit was obtained by requiring physicians to document their reasons for not following the guideline. Interestingly, none of the trials which required clinicians to seek information from the decision support system were positive, although most predate the rapid knowledge access available through an Internet browser. Studies which added a requirement to document the reason for taking an alternative decision to that recommended, provided feedback on adherence, or included patient access to the decision support, showed incremental benefit. It is probably reasonable to extrapolate these findings to laboratory medicine.

Subtle differences in the content of the decision support system can significantly influence its success. Rosenbloom et al., in 2005, 87 described three different interventions, all involving computer prompting on a CPOE. The first encouraged users not to request unnecessary tests and restricted ordering of tests more than 72 h in advance. All test requests were targeted. The second targeted magnesium, calcium and phosphate requests, displaying recent results and restricting testing to one magnesium, calcium and phosphate series per order (i.e. restricting multiple advance test ordering). The third, very similar to the second, targeted only magnesium requests, provided a summary of indications for testing and asked requestors to select an indication. The interventions ran sequentially over different periods of four months, 17 months and 15 months, respectively. Magnesium testing fell by 30%, then rose by 30%, and then fell by 44%. The intervention which did not give specific information regarding appropriateness and simply restricted multiple advance test ordering (the second), therefore, had a similar effect to no intervention, since the benefit of the first intervention was removed by the second. This fits with the electrophysiology study findings that specific prompting, in this case requesting an indication for use of the test, may be more effective than more general educational material.

A recent UK study assessed a CPOE and X-ray archiving and communications system in one 150-bed trust, which implemented CPOE in 2001. 88 The CPOE included warnings of possible test duplication and user-defined rules (not explicitly stated) with access to previous test results. The system produced mixed results, with some reductions (notably outpatient and repeat outpatient tests) but a rise in the amount of testing in day case patients. The rise in the intervention trust was half that in the two other trusts. The quasi-experimental controlled before and after study may have been biased due to changes in practice during the sequential phases. These changes may have reflected a shift in patient care towards more day case work. The report acknowledged that secondary outcomes, i.e. length of stay, numbers of admissions, readmissions and outpatient attendances, were difficult to interpret because of the tenuous link between the CPOE and outcome.

In a systematic review in 2006, 89 Georgiou identified 19 studies on the impact of CPOE on pathology. Eleven of these compared CPOE without decision support with no CPOE for laboratory testing in a range of countries (South Korea, USA, UK, Canada, Norway) and eight compared CPOE with and without specific decision support, all in the USA. Eight of the first group and all of the second group considered outcomes that can be specifically related to appropriateness issues (test volume, clinical indicators, e.g. length or appropriateness of stay). The CPOE systems without decision support showed an overall trend towards reduced test cost and volume (with a few rises which may have reflected an underused test being included in a standard order panel). Overall, fewer tests and, when measured, fewer inappropriate tests were performed in the decision support groups and a significant reduction in the median time to appropriate treatment for critical results was reported in one randomized controlled trial. 90 Four of the studies found that CPOE systems combined with decision support improved adherence to guidelines provided on the systems. None of the studies found any differences in patient adverse event rates, i.e. death, transfer to intensive care or readmission rates, although this probably reflects the low incidence of these events. It is important to recognize from a UK perspective that most of these studies were in the USA where markedly more test ordering occurs.

Finally, the context of the CPOE must be considered. Edmonson et al. 91 recently reviewed the applicability of the existing literature on automated decision support to physician compliance with clinical practice (not laboratory) guidelines, reviewing evidence between 1995 and 2006. Two-thirds of the 82 studies addressed primary care physicians and one-third specialist positions. It found significant selection bias in terms of primary and specialist representation, academic affiliation, salaried versus non-salaried positions and under-representation of emergency medicine and settings such as nursing homes. This review only considered studies conducted in the USA. Even so, it questioned whether the research on CPOE was a true reflection of how medicine was practiced in that country. This review examined guidelines in a range of disease categories rather than laboratory medicine. Similar selection bias may be present in laboratory medicine studies which examine automated decision support. It would certainly appear prudent to consider primary and secondary care separately, at the very least when assessing CPOE.

Potentially CPOE combined with web browser technology offers the opportunity to display the full history of a patient's previous results and not only those ordered from the current location. Evidence emerged more than 20 years ago that displaying past test results had a moderate effect on test ordering. 92 Web browser technology in the Anglia ICE 93 and Indigo4 94 electronic requesting reporting systems can now provide past test records between hospital and primary care. This can help to avoid an administrative source of test duplication. It is possible that some of the available knowledge resources such as UK Clinical Knowledge Summaries, 95 Map of Medicine 96 or Bettertesting 97 could be linked intuitively into electronic test result systems to provide rapid access to relevant guidance. This could take the form of web browser hyperlinks to appropriate guidance pages to assist clinicians in requesting and interpreting tests.

Conclusion

Improving efficiency in health care is a universal goal and not restricted to laboratory medicine. When we try to reduce unnecessary work, we face an inevitable trade-off between saving precious resources and avoiding unnecessary and potentially harmful procedures on the one hand, and the fear of missing an unexpected diagnosis on the other. In an increasingly litigious society, that fear of missing a diagnosis may become even greater. It is essential that any process to increase efficiency firstly does no harm. Any demand control intervention must be based on the best evidence available, even if in laboratory medicine much of this is limited to consensus opinions on best practice.

Problems

The studies are so heterogeneous in terms of country, health-care system, target user population, choice of intervention (or combination of interventions used) and, most importantly, baseline situations (starting for example with tick boxes offering a vast range of tests), that it would be unrealistic to expect a meaningful result from a systematic review of demand control intervention versus no intervention in reducing testing activity. What is seen from these studies is more a series of trends which support a number of underlying principles that could be used to construct a programme of interventions that have the greatest chance of working and being cost-effective, which will then need to be tested prospectively.

Few, if any, studies provide meaningful information about the change in quality of health care delivered or about downstream events, e.g. additional GP work, avoidable referrals and admissions, additional morbidity and patient worry.

Costing the savings achieved in GPs’ time, referrals, admissions and investigations is difficult to study. These outcomes are influenced by so many factors that a very large study would be necessary to identify savings specific to laboratory initiatives.

Providing evidence of cost-savings is challenging. Reduced laboratory billing costs may be relevant to the purchaser in a charge-per-item system but are meaningless in terms of government-funded health-care system costs, where it is the marginal cost which must be used, as total costs effectively reflect changes in individual cost stations within the same overarching organization.
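The distinction between billed and marginal savings can be illustrated with a short worked calculation. The sketch below is in Python and uses entirely hypothetical figures (the number of tests avoided, the billed price and the marginal cost per test are invented for illustration and do not come from any of the studies reviewed). It shows how a saving quoted at billed prices can greatly overstate the saving to the funder as a whole, with the difference remaining in the system as overheads that are reallocated to the tests still performed.

```python
def billed_saving(tests_avoided: int, billed_price_per_test: float) -> float:
    """Saving as seen by a purchaser paying a charge per item."""
    return tests_avoided * billed_price_per_test


def marginal_saving(tests_avoided: int, marginal_cost_per_test: float) -> float:
    """Saving to the overall funder: only reagents and consumables fall."""
    return tests_avoided * marginal_cost_per_test


# Hypothetical figures, chosen only to make the arithmetic easy to follow.
TESTS_AVOIDED = 10_000
BILLED_PRICE = 5.00    # charge per test, including overheads
MARGINAL_COST = 0.50   # reagent and consumable cost per test

billed = billed_saving(TESTS_AVOIDED, BILLED_PRICE)
marginal = marginal_saving(TESTS_AVOIDED, MARGINAL_COST)

# Fixed costs (staff, equipment, premises) are not released by the reduction;
# they are spread over the remaining tests.
reallocated_overheads = billed - marginal

print(f"Billed saving:         {billed:,.2f}")
print(f"Marginal saving:       {marginal:,.2f}")
print(f"Reallocated overheads: {reallocated_overheads:,.2f}")
```

Under these assumed figures only one-tenth of the billed saving is released to the health-care system overall, which is why studies reporting charged costs should be read with caution.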

Finally, as seen in the Pegasus experience, 44 awareness bias in a control group, i.e. knowledge that the study was being conducted in another group and that activity was being scrutinized, is a challenge to any randomized study, as true blinding is problematic.

Some of the simpler interventions such as amending request forms to remove lower-volume ‘non-routine’ tests have already evolved in the UK, to the point where evidence of the effect of these interventions has to some extent become redundant. Laboratories that offer indiscriminate ‘tick-box’ options or that are considering moving to order entry systems would do well to re-examine some of the request form redesign studies for information.

Education emerges as a key component of most of the more successful studies. This can be delivered in several ways: small group outreach visits, guideline dissemination, educational programmes or combinations of these. Disseminating guidelines alone appears least effective, although ‘data-push’ studies (i.e. those proactively supplying guidance) have appeared to be more effective than ‘data-pull’ studies that require the practitioner to seek the information. Most of these studies were conducted in ‘pre-web’ days. We have entered an era in which information access has been accelerated to the point at which the opportunity of ‘10 words in 10 seconds’ (Sir Muir Gray, personal communication), i.e. a brief didactic answer to a clinical question, can be available via the Internet in real time. Perhaps this might tilt the data-push, data-pull balance.

Displaying testing activity can be effective. Benchmarking can also be used to show meaningful increases or decreases in response to an intervention, which could be taken as surrogate markers for improvement. These changes do not guarantee, however, that the patients in whom the tests have or have not been requested are necessarily the right ones.

Blunt financial instruments to influence demand offer no measure of quality unless incorporated in something like the UK General Medical Services Contract Quality and Outcomes Framework, 6 but even then provide no assurance that activity is not just being skewed towards the driving indicators. These types of contractual arrangements are limited in scope. Inclusion of many testing ‘targets’ makes the contractual arrangement too complex. Separating providers from users by a financial mechanism can influence testing activity, but can produce a paradox in laboratory medicine as it is the provider who is best placed to advise on test use, and the user who is faced with an increasing array of more complex and more expensive tests. This introduces a perverse incentive on providers not to work to reduce inappropriate activity, a major challenge to commissioning high-quality services. This may be a failure on the part of policy-makers to understand the role played by the laboratory as a supporting clinical service, rather than purely a results generator.

CPOEs can carry or link to knowledge resources, but it is questionable whether these will be able to drive a sufficient number of order prompts to cover the range of tests used without making the process unwieldy. They are, however, clearly going to be a tool of the future 98 and more work on their use in demand control is needed.

Many of the order-entry studies examine a limited number of tests, but the challenge in demand control actually spans the whole of laboratory medicine.

Solutions

How then can this mixed group of interventions be distilled into a set of recommendations supported by at least circumstantial evidence? I have attempted to rank these in order of possible priority by weighting their potential utility against ease and practicality of implementation. This is necessarily to some extent subjective.

1. Rationalizing test order forms by removing specialist tests which require specific clinical justification from the routine ‘tick box’ menu would appear to be simple and successful, although the message from van Wijk's study 81 suggests that over-restriction may be counter-productive;

2. Defining ‘useful tests’ in specific clinical situations and defining test repeat intervals. Prompting the need for confirmation and explanation by the requestor would appear to be effective;

3. Providing secure computer (or web browser) access to past patient results at the point of test ordering. In a UK context where primary care and secondary care results appear in separate records, this would appear an easy way of avoiding administrative duplication;

4. Establishing agreed guidelines and protocols for disease investigation and monitoring to cover a wide range of indications, although this requires continued educational input to be successful;

5. CPOE systems have been successful. Success factors in non-laboratory studies have included automated provision rather than manual seeking of decision support, provision of patient-specific recommendations rather than non-specific assessments, provision of decision support at the time of decision-making and computer-based decision support. Further incremental benefit may be obtained by adding a requirement to document the reason for taking an alternative decision to that recommended, providing feedback on adherence and including patient access to the decision support;

6. A multifaceted combination of the above would appear to be more effective than a single intervention;

7. While handing budgets to users may reduce testing, this is to some extent bribing users not to request tests, when the user may not be best placed to decide on appropriateness. Financially driven test reductions offer no promise of improved quality.

Whether these points are understood by policy-makers remains unclear. Managing upstream demand and downstream interpretation and subsequent action through close professional relationships between laboratories and their users remains a crucial part of the laboratory test process. Quantifying the impact of these activities on the overall costs and quality of clinical care is more difficult. As emphasized in a recent editorial in this journal, 99 the future challenges in cost-effectiveness probably lie in demand control in the preanalytical and postanalytical aspects of laboratory medicine, rather than trying to squeeze ever more savings out of the laboratories themselves. That may incur a net cost in the long run.

DECLARATIONS