Abstract

Objective:

The quality of echocardiographic image acquisition is vital for precise quantification and diagnostic accuracy. However, ultrasound equipment is limited in performance throughput and image quality, and acquisition is also governed by the operator’s competence. Although a subjective quality control process is adopted in standard procedures to provide images of optimal quality, it introduces major drawbacks in consistency, quantification, and diagnostic accuracy.

Materials and Methods:

A deep neural network model was established using a large data set of 40,000 echocardiograms, and a guided tool was implemented for objective optimization of the apical two chamber (A2C), apical four chamber (A4C), and parasternal long axis (PLAX) images, based on clinical protocols. This tool provided real-time quality feedback on image adequacy and gave operators an image optimization experience as they examined patients.

Results:

An average computational speed of 4.24 ms per frame, with a model error rate of 0.032%, was achieved on the graded attributes of apical visibility, anatomical clarity, depth-gain, and foreshortening. The novel pipeline was comparable to the operators’ ultrasound guidance system for quality image acquisition and reliable diagnosis in the health care system.

Conclusion:

The result of a guided acquisition provided novel evidence for an objective optimization process, optimal image quality, diagnostic accuracy, and improved users’ acquisition experiences, in clinical practice. A subjective assessment of a sub-optimal image quality has the potential to negatively impact patients’ clinical care.

Keywords

A two-dimensional (2D) transthoracic echocardiogram has become the diagnostic standard for assessing cardiac function 1,2 and has a vital role in detecting heart abnormalities. Linear measurements of left ventricle (LV) systolic function provide major clues to the health of the heart and guide the corresponding diagnostic response for patient care. However, what constitutes quality in cardiac images remains unspecified and elusive among researchers and many clinical experts. In a previous publication, 3 it was possible to enumerate and apply a novel method of quality assessment using three image quality attributes. These attributes are often discussed among clinical experts and relate to aspects of image acquisition that aid clinical measurements. The feasibility of objective assessment on legacy and domain attributes thus supported its reliability for acquiring images of optimum quality and obtaining accurate clinical measurements. Good quality images provide contrasting structural delineation that yields accurate measurements of cardiac function. However, the impact of such delineation, and of the image attributes that constitute diagnostic clarity, is challenging to establish and is directly addressed in this research. Currently, in many practices, image quality assessment is subjective and interpreter dependent. It is a less reliable process than an objective assessment system that provides reproducible research, consistent quality acquisition, reliable clinical quantification, and improved patient care.

During clinical examinations, echocardiograms in the apical two-chamber (A2C), apical four-chamber (A4C), and parasternal long axis (PLAX) views are primarily recommended 4 as important images for linear measurements and volumetric quantifications. Consequently, a real-time objective quality scoring system that comprises two or more standard views would provide dynamic on-screen guidance during image acquisition. It would also allow clinicians to achieve consistency in the adequacy of the image quality obtained. A scoring system that assigns a single quality value to an echocardiogram cannot indicate which aspect of quality is lacking within the overall assessment. Hence, the practical applicability of such a system is limited to experimental demonstration rather than translational implementation. Therefore, a clinically relevant objective assessment should provide insight into objective standards for each defined element of quality within a cardiac image. It would also be important to define a dynamic method of accessing each specific quality attribute, to provide the operator with immediate feedback, optimization, and quantification. Previous work has demonstrated the feasibility of a limited version of such a system of assessment; 3 this article provides details of a complete implementation on three different standard views, as required in clinical workflow.

Related Work

Cardiac images vary significantly from patient to patient, and, compared with nonmedical imaging, it is difficult to define an image of perfect quality. Consequently, it is considered impracticable to define a reference image against which quality can be measured by calculating the deviation from it. 5,6 Therefore, it is necessary to develop an anonymous (no-reference) image quality assessment algorithm, which does not depend on a reference image.

Studies have been carried out on anonymous image quality assessment, some of which adopted a universal quality index approach. 7 These largely focused on compression distortion, with some implementing machine learning algorithms and using random or structural noise levels to evaluate image quality. Again, this is considered impracticable because the perception of quality in an echocardiogram varies with the pathology and prognosis in question. Both approaches are difficult to apply to echocardiography because cardiac sonography does not present well-defined edges. Hence, new measures of image quality need to be developed and tested, based on global properties of the echocardiogram that match the pathological inferences and clinical recommendations.

One of the earliest works on objective assessment of cardiac image quality was by Abdi et al, 8 who demonstrated the feasibility of quality assessment with a convolutional neural network (CNN) model on five apical views, based on a six-criteria scoring method. Since there was no publicly available cardiac data set to model, the work relied on experts’ knowledge for feature engineering, which was a highly resource-intensive process. Although Abdi et al’s research yielded plausible outcomes, it was technically insufficient for clinical workflow, because the defined quality features were limited and did not represent the global characteristics that experts use for cardiac diagnosis with 2D sonography.

Additional research by Nagata et al 9 addressed the impact of image quality on echocardiographic measurements. However, the study did not explicitly differentiate the specific elements that constitute quality with respect to image acquisition, presentation, and quantification. Furthermore, Luong et al 10 defined 12 criteria to grade each of the nine apical standard views, 13 while computing a continuous single-variable score to represent an objective quantity for the respective apical views. Luong’s regression model achieved an overall accuracy of 87% with regard to four expert opinions and sufficiently demonstrated the impact of image quality on diagnostic use. However, the assessment method and scores do not represent cardiologists’ conventional assessment in practice; therefore, it did not gain translational acceptance for clinical workflow. The most recent study of objective quality assessment is by Dong et al. 11 Unfortunately, that study was limited to the apical four-chamber (A4C) plane and did not include the PLAX view or specific score criteria that are independently assessable in clinical practice. For this reason, the assessment could be suitable for quantifying image quality in fetal echocardiography rather than in adult patients. Dong’s argument for using focus/zoom attributes stems from fetal cardiology, where specific tissue becomes the focus of an investigation. However, these attributes, though important, should be described as elements of clarity. Therefore, a zoomed section of myocardium should exhibit the attributes of clarity, instead of being considered an independent factor.

Main Contributions

Interpreting the results of the architectures proposed in the literature is not straightforward, because a direct comparison of the models’ performance would require access to the same patient data set. At present, no echocardiography data set with corresponding annotations for image quality assessment is publicly available. Therefore, the aim of this work was to evaluate the performance of novel deep learning models for automated image quality assessment, using an independent echocardiography data set. Although the inference times reported in the previous studies reviewed were short enough for real-time applications, the utility of such systems in clinical practice would be limited, because the models provide only an overall predicted image quality score. If such a model were employed as part of an operator guidance system, the operator would be given no clue as to why the image is tagged as low quality or how to improve it, and obtaining optimal images would become time-consuming guesswork. A practical quality control report should contain this information, breaking down the specific quality attributes of the relevant image or frame, which is what this novel solution proposes. In view of the above, the main contributions of this research can be summarized as follows:

Preparation of a large independent cardiac data set (40,000 frames) consisting of A2C, A4C, and PLAX views, for quality assessment and as a benchmarking standard.

Release to the public domain of a data set that includes an expert’s ground truth (GT; i.e., the reality to be modeled with a supervised machine learning algorithm) with annotations for apical visibility, chamber clarity, depth-gain, and foreshortening.

A fully optimized deep learning (2D + t) pipeline that simultaneously predicts four independent scores and the view from echo sequences.

A novel method for real-time access to four specific quality attributes, to aid optimum image acquisition and reliable clinical quantification.

Evaluation of a real-time application pipeline suitable for operator feedback during data acquisition and real-time optimization for the A2C, A4C, and PLAX standard cardiac views.

Materials and Methods

Unlike previous related works, 3,16,17 this study provides the most comprehensive attributes of image quality, as reviewed by cardiologists, together with dynamic on-screen feedback as a guide to obtaining optimum image quality for quantification and clinical measurements. This integrated tool gives clinicians at the point of care easy access to quality acquisition of echocardiograms, and gives cardiologists consistent, quality quantifications.

Optimization Protocols

In all existing quality assessment studies, the criteria defined for objective assessment centered on limited features, such as the subjective clarity of the image’s edges, valves, chambers, and gain, and offered no translational clinical advantage. Nevertheless, these clearly represent some important features by which anatomical details of the myocardium are analyzed. In our studies, we identified a subjective correlation between the observer’s perception of an image’s anatomical features and the magnitude of distinguishable features present in the image. Thus, we proposed that objective assessment can be defined based on attributes that encompass the projection of anatomical orientation, cavity clarity, depth-gain, and foreshortening. A system that provides objective values for these four quality attributes can be used as real-time feedback on which specific aspect of image quality needs to be optimized. The objective image quality attributes defined, along with their optimization protocols, are as follows:

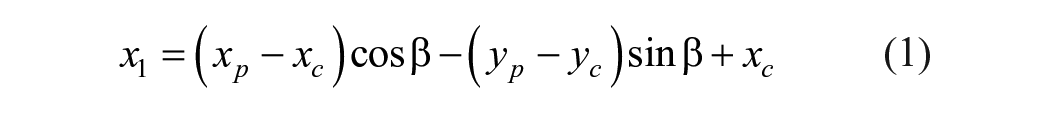

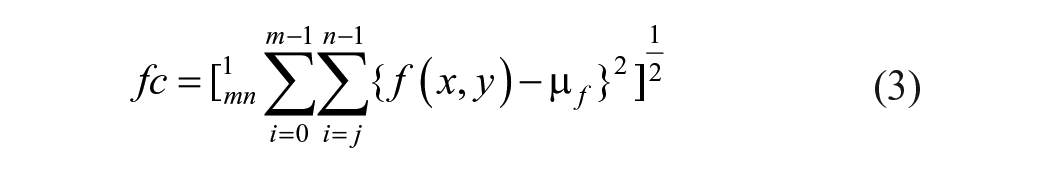

Apical Visibility and Orientation is domain-specific and requires significant acquisition experience. In A4C, the on-axis attribute defines the projection of the myocardium with the beam cutting through the heart’s apex region, presenting a four-chamber view. This view holds for both diastole and systole frames and carries clinical and pathological significance that is paramount to optimum projection and clinical diagnosis. 2 The model identifies the apical orientation in real time and applies the translation model in equations (1) and (2) to provide on-screen feedback on the operator’s probe actions for any necessary fine-tuning. Suppose the interventricular septum detected (see Figure 1) is oriented along any point Pi on the x-axis; this position indicates a spatial distribution with structural deviation from the origin P0. This activates the on-screen feedback system, which displays the currently achieved quality score [VS] until the optimal [VS] score is achieved. In the PLAX view, the left ventricle (LV) apex is not visualized, but emphasis is placed on the anatomical orientation of the pericardium, the RV at the apex, and the LV chamber for linear and volumetric measurement. A high quality score of 0.9 is obtained by fine-tuning the probe’s translations:

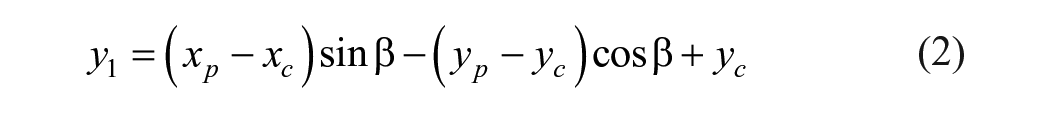

Anatomical Clarity is a legacy attribute of objective assessment. 4,6 The chamber cavities, walls, and valves are soft tissues that present rough boundaries and contractile edges. Studies have demonstrated the impact of contrast echocardiography; 12 however, with respect to quantification, anatomical clarity is visualized through several distinguishable, fast-moving pixel formations during cardiac cycles. This attribute addresses the degree to which distinguishable pixel elements represent the endocardial border cavities, or the clear distinction between the interventricular septum in A4C, the pericardium in PLAX, the valves, trabeculated pericardial fluids, and the endocardial walls. For a 2D image, clarity is the perception of luminance level summarized by the root mean square (RMS) contrast, where f(x, y) represents the normalized pixels in equation (3). The best pixel formation is computed in real time, with RMS contrast applied to delineate anatomical borders, while on-screen scoring is provided as operator feedback until an optimum [LC] score of 0.9 is achieved.
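For orientation, the textbook definition of RMS contrast for an image of M × N normalized pixels f(x, y) is sketched here. It is assumed, not confirmed, to correspond to the form used in equation (3); the symbols M, N, and the mean intensity \bar{f} are introduced only for this illustration.

```latex
C_{\mathrm{RMS}} = \sqrt{\frac{1}{MN}\sum_{x=0}^{M-1}\sum_{y=0}^{N-1}\bigl(f(x,y)-\bar{f}\bigr)^{2}},
\qquad
\bar{f} = \frac{1}{MN}\sum_{x=0}^{M-1}\sum_{y=0}^{N-1} f(x,y)
```

Under this definition, a uniformly gray region gives a contrast of zero, and the value grows as pixel intensities spread away from their mean, which is consistent with using it as a proxy for border delineation.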

Luminance Depth Gain attributes present a measure of intensity in discrete signal samples over a detected region of the cardiac frame. The acoustic beams, some of which pass through trabeculated tissues, encounter subtle impedance that influences the intensity of the image signals and makes anatomical details susceptible to changes in depth, sector width, and patients’ pathological differences. Consequently, signal gain in the near field usually has strong intensity or high amplitude, and may become excessively high or excessively low in the far-field region. Furthermore, a signal with excessive gain can present as pulmonary fluid in some cases, 11 and images with very low gain that nonetheless bear significant anatomical details or noticeable artifacts are not ignored in clinical practice. Nevertheless, artifacts introduced by excessive gain would equally exacerbate visibility issues, yield an incorrect depiction of true anatomical tissues, or obscure relevant anatomical details. Therefore, improper depth-gain can induce significant nonuniformity in pixel intensities across the image, most often in the lower part of the image sector. For real-time optimization, time gain compensation (TGC) controls are often used to compensate for near-field or far-field attenuation, along with appropriate probe choices and the objective score as a guide. The model detects the configuration for improved settings, both for high-frequency probes, which are useful for near-field tissue penetration, 13 and for low-frequency transducers for far-field penetration, until an optimum [DG] score of 0.9 is achieved.

Poor axial alignment, showing off-axis projections as indicated by P(x,y), versus the optimum on-axis projection indicated by P(0,0), where the interventricular septum runs vertically down the middle of the screen.

Apical Foreshortening is a domain-specific attribute that identifies perspective deformation during image acquisition. Smistad et al 14 have described the importance of real-time detection of apical foreshortening with a deep learning pipeline. Foreshortening is a nonlinear structural deformation in which changes in the size of areas and volumes become geometrically incongruent. 15 It accounts for inaccurate measurements of ejection fraction (EF) 3 and prevents the detection of crucial pathologies in the apical region, which compromises clinical measurements. However, in the PLAX view, where LV apex visibility is not required, apex visibility could be taken as a “false apex” 13 and counts as LV foreshortening. From a clinical standpoint, eliminating foreshortening is paramount to the model’s objective assessment, quantification, and diagnosis. For a dynamic optimization experience, the pipeline displays the objective [FS] score per frame on the image. This score relates to the magnitude of foreshortening in the current frame and is updated in real time as the operator engages any combination of the five transducer manipulations, until minimum foreshortening is achieved. In this case, the [FS] score ranges from 0.1 (absence of foreshortening), which is considered optimum [FS] quality, to a maximum of 0.9, which is considered sub-optimum quality. Images with a high [FS] score are considered unsuitable for clinical assessment.

Data Set and Ethical Approval

For clinical assessment of myocardial function, cardiologists place great importance on A4C and PLAX views for volumetric quantification and linear measurements. 1 Because cardiac data are highly personal and protected by health care legislation, it is rare to find substantially large cardiac data sets in the public domain. For the purpose of this research, ethical approval (ref. 243023) was sought from the UK’s Health Regulatory Agency. This study is based on a randomly selected patient data set consisting of 6216 A2C frames, 15 476 A4C frames, and 18 308 PLAX frames from patients who had earlier undergone transthoracic echocardiography (TTE) at St Mary’s Hospital, Private NHS Trust; the data set was purposely acquired for this study. The cardiac images, which include end systole (ES) and end diastole (ED) frames, were acquired by experienced cardiac sonographers using Vivid i (GE Healthcare, Wausaukee, WI) and iE33 xMATRIX (Philips Healthcare, Cambridge, Mass) ultrasound equipment (see Figure 1). Standard protocol under the Data Protection Act (2018) provides for the removal of all patient-identifiable information from DICOM-formatted videos before data analysis and applicable studies. Three frames were randomly drawn from each cine loop video, and the frames were split into training (32 000 frames) and testing (8000 frames) subsets in an 80:20 ratio.
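The frame counts and the 80:20 split quoted above can be checked with a few lines of arithmetic. This is a minimal sketch: the variable names are illustrative, and the per-view percentages are derived here rather than reported in the study.

```python
# Frame counts per view as reported in the text.
frames_per_view = {"A2C": 6216, "A4C": 15476, "PLAX": 18308}

total_frames = sum(frames_per_view.values())

# 80:20 split into training and testing subsets.
train_frames = total_frames * 80 // 100
test_frames = total_frames - train_frames

# Share of each view in the full data set, as a percentage (derived, not reported).
view_share = {
    view: round(100 * count / total_frames, 2)
    for view, count in frames_per_view.items()
}

print(total_frames, train_frames, test_frames)
print(view_share)
```

The totals agree with the 40 000 frames, 32 000 training frames, and 8000 testing frames stated in the text, and the shares show that PLAX frames make up the largest part of the data set.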

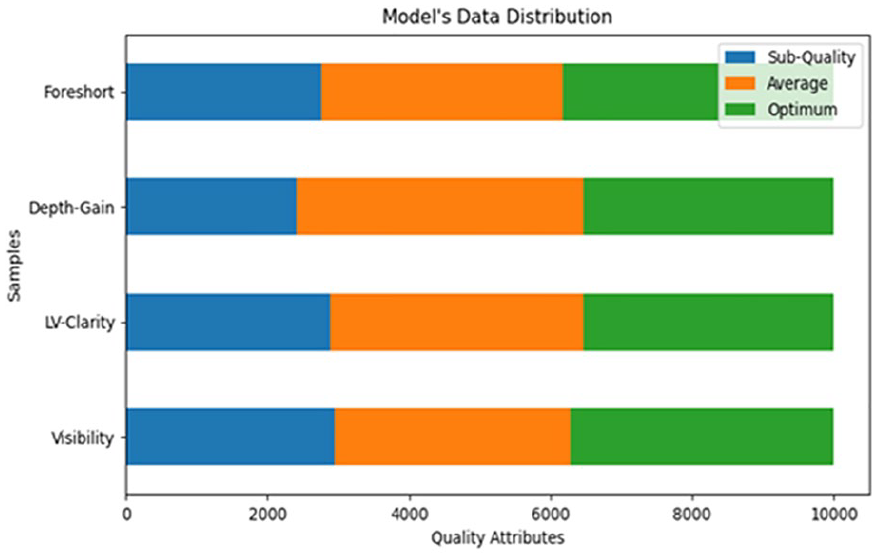

The bar graph (see Figure 2) summarizes the distribution of the model’s data set across three categorical labels derived from the expert’s quality scores previously assigned to each frame: 0 to 4.5 as poor quality, 4.6 to 6.5 as average quality, and 6.7 to 9.9 as good (optimum) quality.

A bar graph displaying the distribution of the cardiac images used for model development: 40 000 extracted frames of A2C, A4C, and PLAX images, at three quality levels (suboptimal, average, and optimal quality).
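The mapping from an expert score to the three categorical labels described above can be sketched as a simple lookup. The function name `quality_label` and the decision to return `None` for a score that falls outside every stated band (for example, 6.6) are assumptions made for this illustration; the study does not say how such scores were handled.

```python
from typing import Optional

# Score bands exactly as stated in the text: (lower, upper, label).
QUALITY_BANDS = [
    (0.0, 4.5, "poor"),
    (4.6, 6.5, "average"),
    (6.7, 9.9, "good"),
]


def quality_label(expert_score: float) -> Optional[str]:
    """Return the categorical label for an expert score.

    Returns None when the score lies outside every stated band
    (an assumption; the source does not define this case).
    """
    for lower, upper, label in QUALITY_BANDS:
        if lower <= expert_score <= upper:
            return label
    return None


for score in (3.0, 5.0, 8.2, 6.6):
    print(score, quality_label(score))
```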

GT Annotations

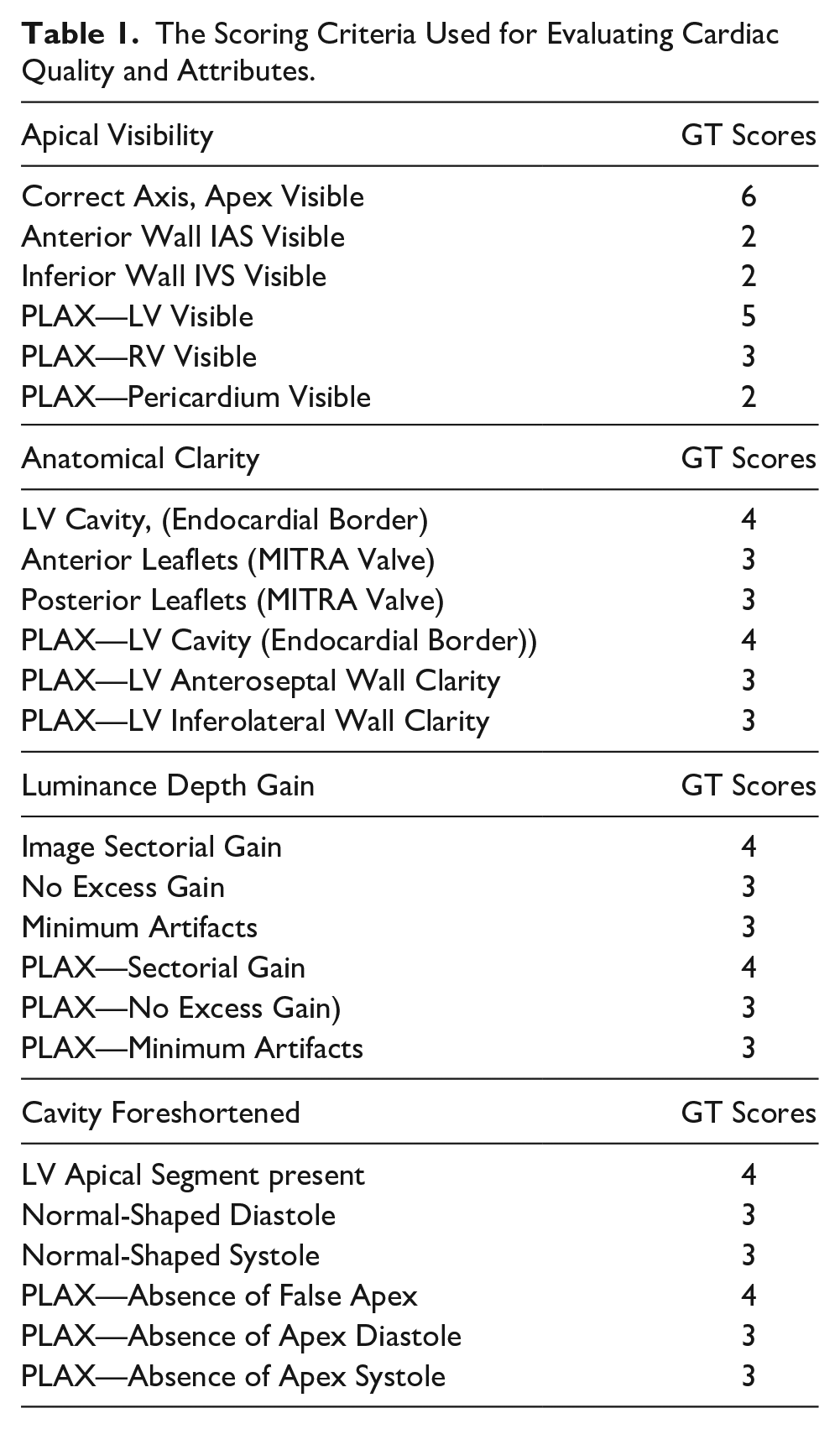

Each of the cardiac cine clips (A2C, A4C, and PLAX views) was studied for anatomical characteristics that are congruent with experts’ views on the characteristics projected in a 2D echocardiogram. These features were visually analyzed and defined by the 23 criteria listed in Table 1. These criteria establish the four quality attributes by which each frame can be evaluated and accessed for real-time optimization.

The Scoring Criteria Used for Evaluating Cardiac Quality and Attributes.

Model Training

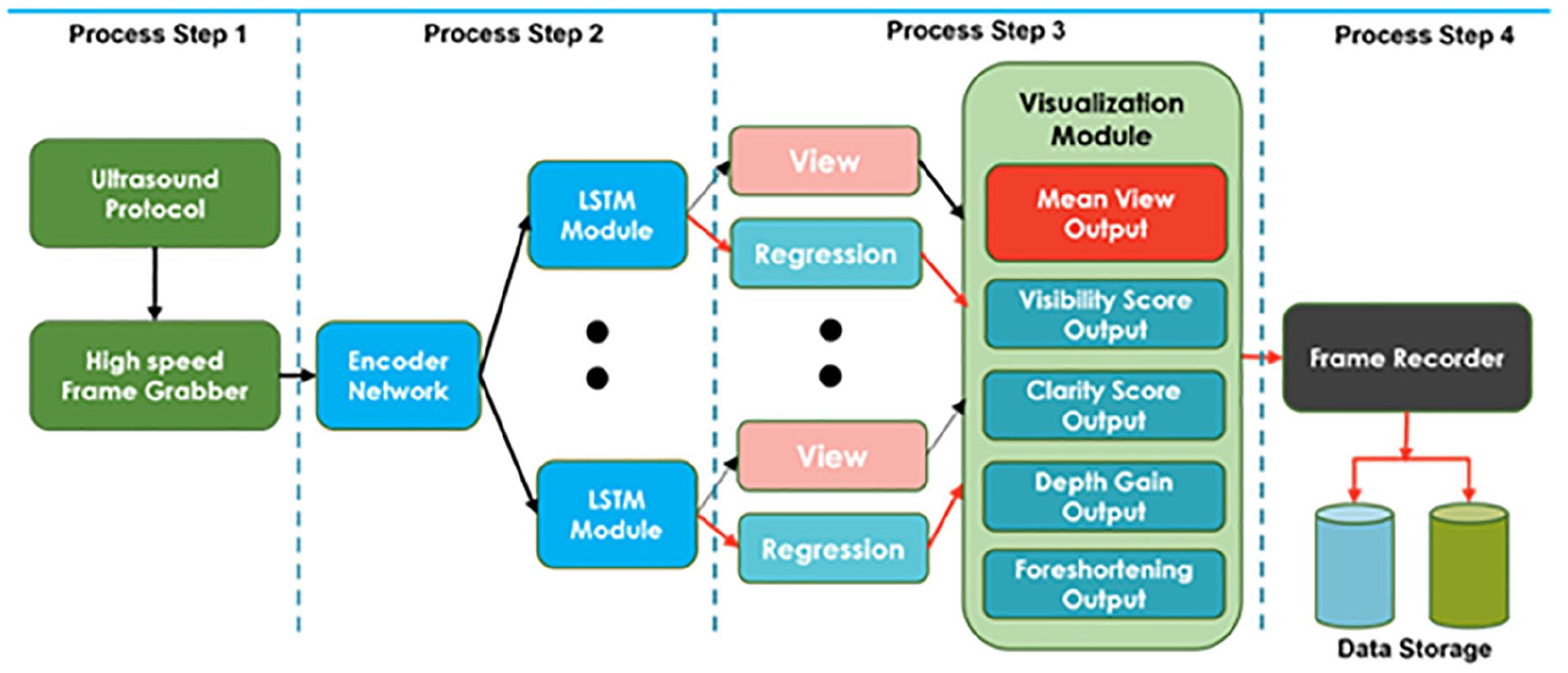

Prior to the implementation of real-time streaming of the scanning protocol, an efficient, lightweight spatiotemporal model was established (see Figure 3, processes 2 and 3) based on a differentiable neural architecture search (NAS) approach. 16 The predictive model was based on earlier work, a multi-stream time series regression architecture 17 implemented via model subclassing for greater control. Each independent stream predicts one of the quality attributes proposed in the Optimization Protocols section, and the model simultaneously produces a corresponding prediction for view classification. The architecture is logically divided into two parts. The first, shared part allows weight sharing through the TensorFlow API while extracting the hierarchical spatial features in the frame sequence. The resultant vector is flattened and fed into the second part, a single-layer long short-term memory (LSTM) 18 for temporal extraction. The spatiotemporal architecture is trained on 24 000 frames of 227 × 227 × 3 spatial size, with 8000 validation samples, in 80:20 ratios. Predictions are made via fully connected layers, which simultaneously compute the specific quality scores and, via a logistic regression module, the probability for the discrete view labels. Each layer employs rectified linear units (ReLU) as its internal activation function, while the output layer employs a sigmoid function to bound the normalized scores on each model output. The model incorporates dual loss functions, mean squared error (MSE) for regression and binary cross-entropy for view classification, which are optimized via adaptive moment estimation (ADAM). The resultant output scores are normalized to the range 0 to 1, yielding four quality attributes per frame.

Block diagram of a real-time quality assessment and optimization pipeline showing essential processing steps and threads for user session. Features embedded 4 streams deep learning architecture dedicated to assessment and operators’ feedback on apical visibility, anatomical clarity, depth gain and apical foreshortening attributes of image quality.
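The dual-loss objective described in the Model Training section (sigmoid-bounded outputs, MSE for the four quality scores, and binary cross-entropy for the view labels) can be illustrated in plain Python. This is a sketch under stated assumptions rather than the trained model: the function names, the `view_weight` factor that balances the two losses, and the example inputs are all hypothetical, and the study's own implementation uses TensorFlow.

```python
import math


def sigmoid(z: float) -> float:
    """Squash a raw network output into the (0, 1) range."""
    return 1.0 / (1.0 + math.exp(-z))


def mse(predicted: list[float], target: list[float]) -> float:
    """Mean squared error over the quality attribute scores."""
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(target)


def binary_cross_entropy(probs: list[float], labels: list[int], eps: float = 1e-7) -> float:
    """Binary cross-entropy averaged over the view outputs."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)


def dual_loss(score_logits, score_targets, view_logits, view_labels, view_weight=1.0):
    """Regression loss plus weighted classification loss.

    view_weight is an assumed balancing factor; the source does not
    state how the two losses are combined.
    """
    scores = [sigmoid(z) for z in score_logits]
    view_probs = [sigmoid(z) for z in view_logits]
    return mse(scores, score_targets) + view_weight * binary_cross_entropy(view_probs, view_labels)


# Hypothetical example: four attribute logits and three view logits (A2C, A4C, PLAX).
loss = dual_loss(
    score_logits=[2.0, 1.5, 0.5, -2.0],
    score_targets=[0.9, 0.8, 0.6, 0.1],
    view_logits=[-3.0, 4.0, -2.5],
    view_labels=[0, 1, 0],
)
print(round(loss, 4))
```

Because every output passes through a sigmoid, each predicted score stays within 0 to 1, which matches the normalized range described for the four quality attributes.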

Real-Time Optimization Tool

The objective quality assessment pipeline illustrated in Figure 3 is intended for real-time operator feedback and optimization of cardiac image quality before clinical measurement and quantification. The experiment was carried out on a Z600 Mini server with a GeForce GTX 970 (Maxwell GPU architecture) featuring 4 GB of RAM and 1664 CUDA cores. The pipeline framework accepts high-speed streaming frame data of any length for A2C, A4C, or PLAX from the GE Vivid ultrasound source equipment, while observing all clinical protocols in the transthoracic workflow.

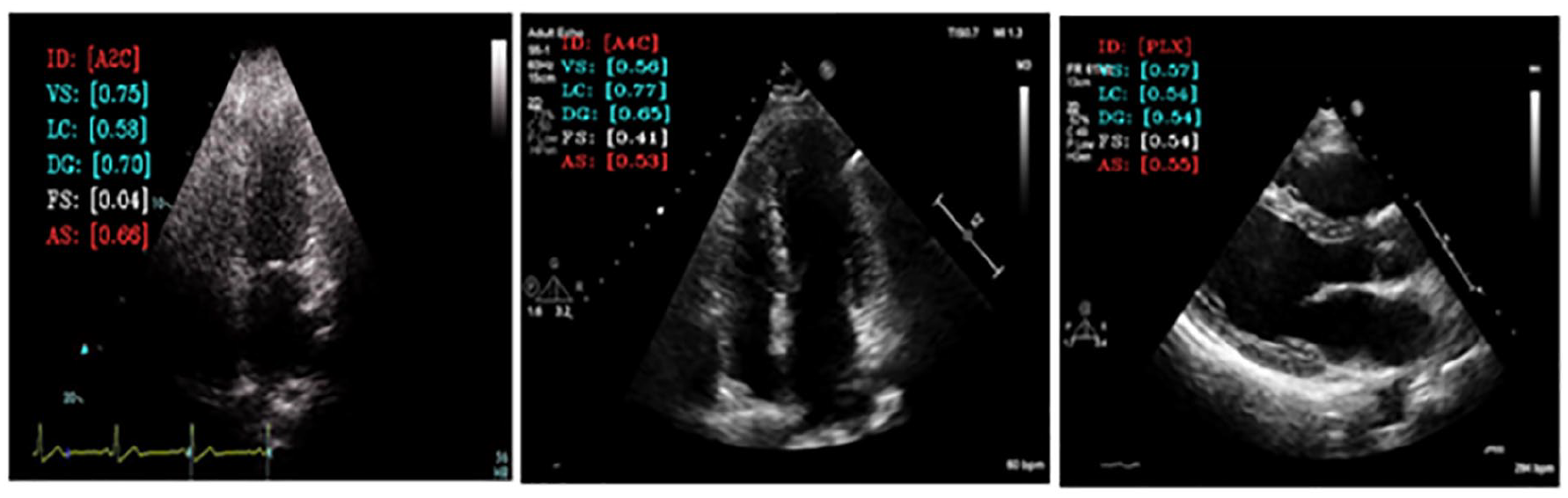

Each user session is divided into four sequential processes, each with its own threads. The heart of the first process (see Figure 3, step 1) is an external frame grabber capable of high data rates, frame buffering, and low latency. This deals with both operator-specific and patient-specific limitations during the image acquisition phase; 2D cardiac frame data are sequentially fed into the encoder module, where real-time feature extraction takes place. While maintaining an active connection with the ultrasound equipment, the second process (Figure 3, step 2) establishes two major threads of spatiotemporal convolution to predict the four specific quality attribute scores, and a logistic probability module handles the class prediction for the currently generated image (see Figure 3, step 3). The objective scores are then superimposed on the fast-moving frames in real time (see Figure 4), providing specific feedback encoded as [ID, VS, LC, DG, FS, AS]: frame identification, visibility, clarity, gain, foreshortening, and mean score, respectively. These scores are updated in real time as the probe adjustment progresses. The final process (step 4) allows the operator to record the optimized cine loop in Microsoft’s Audio Video Interleave (.AVI) format, or a still image from the sequenced frames in Joint Photographic Experts Group (.JPG) format. Each session can thus be recorded and sequentially stored for further analysis.

Quality grading for visibility (VS), clarity (LC), depth-gain (DG), and apical foreshortening (FS). The pipeline model also shows the image view classification and the overall quality score (AS). Each quality grade varies from 0.0 to 1 and reflects an aspect of image quality that could be optimized during the acquisition phase.
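The on-screen feedback encoding [ID, VS, LC, DG, FS, AS] can be sketched as a small data structure that formats one overlay line per frame. The class name `FrameScores`, the text layout, and the choice to compute AS as the plain arithmetic mean of the four attribute scores are assumptions for illustration; the text states only that AS is a mean score.

```python
from dataclasses import dataclass


@dataclass
class FrameScores:
    frame_id: int          # ID
    visibility: float      # VS
    clarity: float         # LC
    depth_gain: float      # DG
    foreshortening: float  # FS

    def mean_score(self) -> float:
        """AS, assumed here to be the plain mean of the four attribute scores."""
        total = self.visibility + self.clarity + self.depth_gain + self.foreshortening
        return total / 4

    def overlay_text(self) -> str:
        """Text to superimpose on the frame, in [ID, VS, LC, DG, FS, AS] order."""
        return (
            f"ID:{self.frame_id} VS:{self.visibility:.2f} LC:{self.clarity:.2f} "
            f"DG:{self.depth_gain:.2f} FS:{self.foreshortening:.2f} "
            f"AS:{self.mean_score():.2f}"
        )


# Hypothetical scores for a single frame.
frame = FrameScores(frame_id=12, visibility=0.9, clarity=0.8, depth_gain=0.7, foreshortening=0.2)
print(frame.overlay_text())
```

Keeping the four attributes separate in the overlay, rather than showing only the mean, is what lets the operator see which aspect of the image still needs adjustment.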

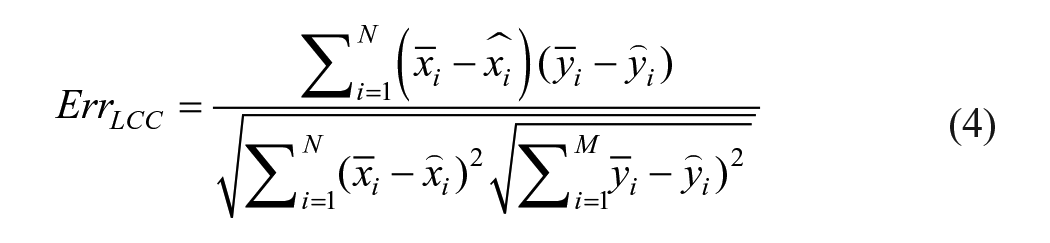

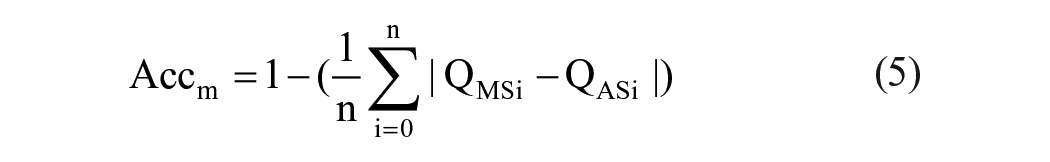

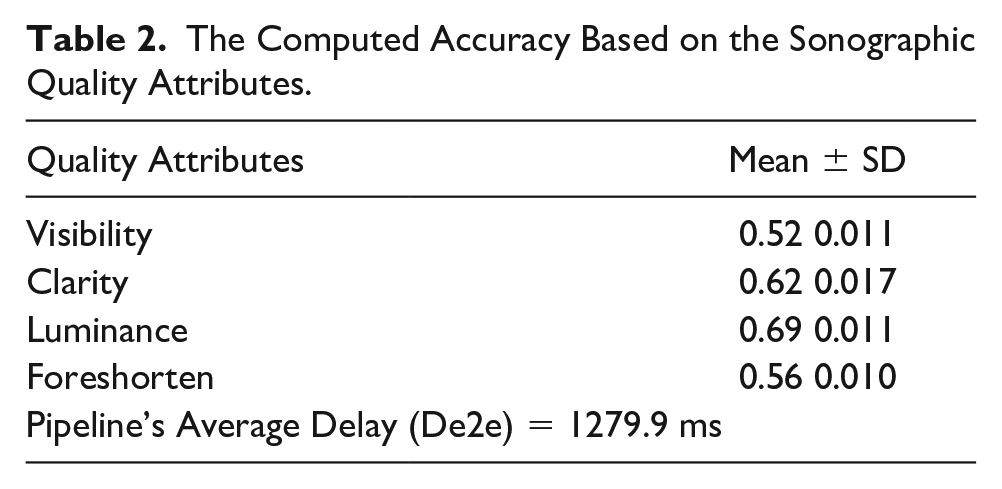

Evaluation Metrics & Model Performance

Since the model uses multiple variables for each score attribute, performance was evaluated via an objective function using the linear correlation coefficient (LCC) in equations (4) and (5), which measures the linear difference between the cardiologist’s score (QMS) and the algorithm’s predicted score (QPS). A minimal LCC error indicates the best-fit model and hence better predictions. Figure 4 indicates the LCC error distribution per selected quality attribute. The model’s accuracy was determined by the mean absolute error (MAE) in equation (6), while the computational inference speed was found to be 4.24 ms per frame, as detailed in Table 2. The results reinforce the feasibility of real-time operation and clinical deployability.

The Computed Accuracy Based on the Sonographic Quality Attributes.

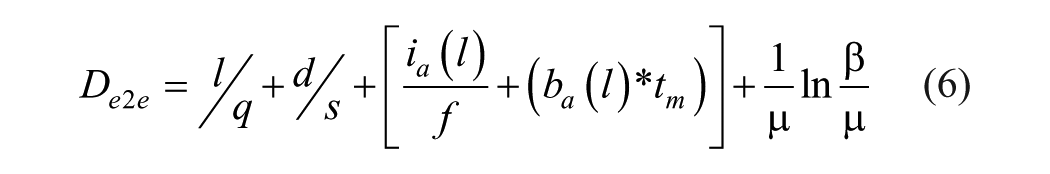
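The agreement measures named in this section, a linear correlation coefficient between the cardiologist's scores (QMS) and the predicted scores (QPS) and a mean absolute error, can be sketched as follows. The Pearson form of the correlation is assumed here, since the text does not reproduce equations (4) to (6), and the sample scores are hypothetical.

```python
import math


def linear_correlation(qms: list[float], qps: list[float]) -> float:
    """Pearson linear correlation coefficient between expert and predicted scores."""
    n = len(qms)
    mean_m = sum(qms) / n
    mean_p = sum(qps) / n
    cov = sum((m - mean_m) * (p - mean_p) for m, p in zip(qms, qps))
    var_m = sum((m - mean_m) ** 2 for m in qms)
    var_p = sum((p - mean_p) ** 2 for p in qps)
    return cov / math.sqrt(var_m * var_p)


def mean_absolute_error(qms: list[float], qps: list[float]) -> float:
    """Average absolute difference between expert and predicted scores."""
    return sum(abs(m - p) for m, p in zip(qms, qps)) / len(qms)


# Hypothetical expert (QMS) and predicted (QPS) scores for one attribute.
qms = [0.2, 0.4, 0.6, 0.8]
qps = [0.25, 0.45, 0.65, 0.85]

print(round(linear_correlation(qms, qps), 4))
print(round(mean_absolute_error(qms, qps), 4))
```

The example also shows why the two measures are reported together: a constant offset between QMS and QPS leaves the correlation near 1 while the MAE still registers the bias.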

Model Validation

Acquisition of cardiac frames in PLAX, A4C, and A2C was performed using the phased array transducer with the Vivid i ultrasound source equipment. This acquisition was done by an experienced clinician under transthoracic laboratory protocol. The frames were stored in DICOM and AVI formats for retrospective assessment in performance evaluation and analysis. The source equipment features the relevant hardware interface ports for external connectivity. In the setup, an off-the-shelf, high-end, high-speed frame grabber with a High-Definition Multimedia Interface (HDMI) 19 input port and a universal serial bus (USB) 3.0 20 output port was considered. USB 3.0 offers a 5 Gbps data rate, and a short cable (1 m in length) was selected to avoid excessive transmission delay. The host equipment was an Intel i7 dual-core 21,22 laptop running the deep learning algorithm for real-time quality assessment, as described in our methodology. Each cardiac cine loop was then played back and visualized on the source and destination screens, where quality scoring was performed in real time. In practice, frame length and frame speed are expected to vary significantly while real-time feedback is provided to the operator during the acquisition phase. Pipeline performance was therefore estimated using aggregated values for the classic end-to-end delay characteristics of transmission Dtx, propagation Dgt, processing Dpt, and queuing Dqt, given in equation (6):

where the delay components are expressed as the sum of all the delays d1, d2, d3, . . ., dn, with average values taken over a series of measurements and calculated as shown in equation (7).

The terms Dgt and Dpt are negligible owing to the latest advances in processing power. The other terms in equation (6) have a significant impact on the overall delay, where l is the data packet length, q the rate of data transmission, d the distance over the cable connection, ia the pipeline’s embedded instructions, f the processor’s clock frequency, ba the buffer delay, tm the memory access time, and Dqt the queue waiting time, using β(0) as the arrival rate and µ as the departure rate. The overall delay (in milliseconds) must support real-time feedback for cardiac frames between 40 and 60 fps.
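The real-time requirement stated above can be made concrete by comparing an end-to-end delay with the time available per frame at 40 and 60 fps. This is a minimal sketch: the function names are illustrative, the total is taken as a plain sum of the four delay components, and every delay value in the example is a hypothetical placeholder rather than a measurement from the study.

```python
def frame_period_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def total_delay_ms(d_tx: float, d_gt: float, d_pt: float, d_qt: float) -> float:
    """End-to-end delay as the sum of transmission, propagation,
    processing, and queuing delays (all in ms)."""
    return d_tx + d_gt + d_pt + d_qt


def mean_delay_ms(samples: list[float]) -> float:
    """Average of repeated delay measurements d1..dn (ms)."""
    return sum(samples) / len(samples)


def meets_real_time(delay_ms: float, fps: float) -> bool:
    """True when the delay fits within one frame period at the given rate."""
    return delay_ms <= frame_period_ms(fps)


# Hypothetical per-run component delays in ms (placeholders, not measured values).
runs = [
    total_delay_ms(d_tx=3.0, d_gt=0.0, d_pt=0.5, d_qt=6.0),
    total_delay_ms(d_tx=3.2, d_gt=0.0, d_pt=0.5, d_qt=7.1),
    total_delay_ms(d_tx=2.9, d_gt=0.0, d_pt=0.5, d_qt=6.4),
]
average = mean_delay_ms(runs)

print(round(frame_period_ms(60), 2), round(frame_period_ms(40), 2))
print(round(average, 2), meets_real_time(average, 60), meets_real_time(average, 40))
```

The frame period is about 16.67 ms at 60 fps and 25 ms at 40 fps, so the 60 fps case sets the tighter bound that the overall delay has to satisfy.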

Results

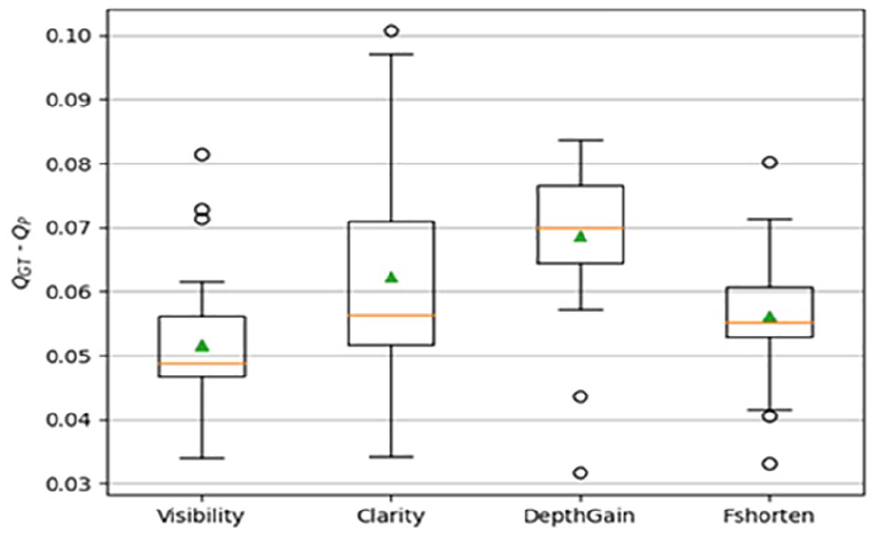

The multivariate model and the pipeline were evaluated using the evaluation metrics detailed in the methods section. Table 2 lists the numeric results, and the error distribution per quality attribute is depicted as a box plot in Figure 5 for the apical visibility, anatomical clarity, depth gain, and foreshortening properties. The model prediction speed was found to be 4.24 ms per frame for an input size of 128 × 128 × 3 pixels, which is sufficient for real-time deployment. However, the time per frame was found to be much higher when the end-to-end delay is considered, as detailed in Table 2.

A box plot of the error distributions per model output, computed as the absolute difference between the predicted score per attribute and the expert’s score. The error distribution was very small (0.032%), and high accuracy is a paramount indicator of reliable quantification.

Discussion

The aim of this study was to test the feasibility of the most comprehensive objective attributes of cardiac image quality, with an integrated model for user guidance on acquisition and quantification suitable for wider adoption in clinical workflow. Consequently, we expanded on previous studies that proposed a method of real-time assessment and built an end-to-end solution that demonstrated the performance of a real-time application for operator feedback.

The implementation was deployed on GE Vivid i ultrasound hardware; the solution is platform-independent and can be integrated with any standard proprietary hardware. The solution is embedded and can detect endocardial borders, and it provides on-screen guidance for probe manipulations and on-screen objective scores, which are updated dynamically for each quality attribute. This eliminates the operator’s inherent limitations in image acquisition and helps acquire the best image irrespective of patient positioning. This may be a leap in cardiac diagnosis using echocardiography.

This guided tool may accelerate clinicians’ experience in providing consistent image acquisition and measurements. The model achieved a speed of 4.24 ms per frame, which is about a quarter of the 16.7 ms frame period at 60 fps, and it did not inhibit existing hardware or clinical protocol.

The operator’s time to obtain optimum quality images was reduced significantly, while having a qualitative image for further interpretation. The objective scoring system further reinforced the capability to achieve optimum image acquisition and consistent measurement; improving clinician acquisition skills and reducing the extra time in training may be advantages over current clinical practice.

Furthermore, an integrated tool could prove to be an invaluable learning opportunity for students and medical personnel involved in point of care or in the laboratory.

Finally, the annotations provided by an expert cardiologist and an accredited annotator were used in the study. Intraobserver variability can be examined by obtaining additional annotations from human experts and compared with the error in the predicted scores.

Limitations

This research was limited by the research design and is based on the image set that was available to conduct this project. Given the threats to internal and external validity, the results are unique to this set of patient images. This research considered only A2C, A4C, and PLAX images, as a de facto standard for clinical measurement and quantification in cardiac examination, as recommended by the association of American cardiologists. A future study may include a wider population and intensive clinical trials with the guided tool, to explore support for different image compression and selective quality attributes that would satisfy individual laboratory requirements. Here, we have considered four attributes of image quality and believe they are comprehensive for translational application in the clinical domain. A more comprehensive study would include additional criteria for 3D image quality assessment. Caution must be used in generalizing these results to other patient cohorts or practices. Replication of this work is needed to determine its wide clinical applicability.

Conclusion

Sonography has its known limitations, and, depending on the user, an echocardiographic assessment is based on a subjective quality (scoring) system, which explains why imaging variability and varying interpretations persist. Echocardiographic variability persists even when the same user reassesses the same set of images a second time. In contrast, this work has demonstrated how a platform-independent objective scoring model could be deployed on ultrasound hardware to achieve the highest possible quality and consistency in dynamic cardiac imaging. This tool could guide the image acquisition process to ensure optimum images of the respective cardiac views, with accurate quantification and diagnosis. It may be a valuable tool for improved health care and could help overcome user and patient limitations arising from clinical settings or point-of-care scenarios. It should be valuable for researchers, clinicians, and cardiologists, and could be used as an independent measure in global standardization and echocardiogram benchmarking.

Footnotes

Acknowledgements

The authors express gratitude to Dr. WB Labs (Jnr) for his experimental and clinical assistance during model validation exercise.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Ethics Approval

Ethical approval for this study was obtained from the United Kingdom’s Health Regulatory Agency (243023).

Informed Consent

The IRB or ethical review committee determined that neither informed consent nor an information sheet was required.

Animal Welfare

Guidelines for humane animal treatment did not apply to the present study because no animals were used during the study.

Trial Registration

Not applicable.