Abstract

Objective:

This research examined the effects of multiple combined competency-based methods on sonography students’ perceptions of adult echocardiography training components. In addition, clinical preceptor evaluation scores were compared with faculty objective structured clinical examination (OSCE) scores.

Materials and Methods:

A quasi-experimental nonequivalent group research design was used to evaluate students enrolled in an adult cardiac curriculum accredited by the Commission on Accreditation of Allied Health Education Programs (CAAHEP). Students’ perceptions before and after an intervention using multiple competency-based methods (formative assessment, OSCE, and simulation) were recorded via course evaluations. Questions were analyzed individually using descriptive statistics and the Bonferroni correction. Students’ clinical evaluation and OSCE scores were analyzed using Spearman’s rank correlation.

Results:

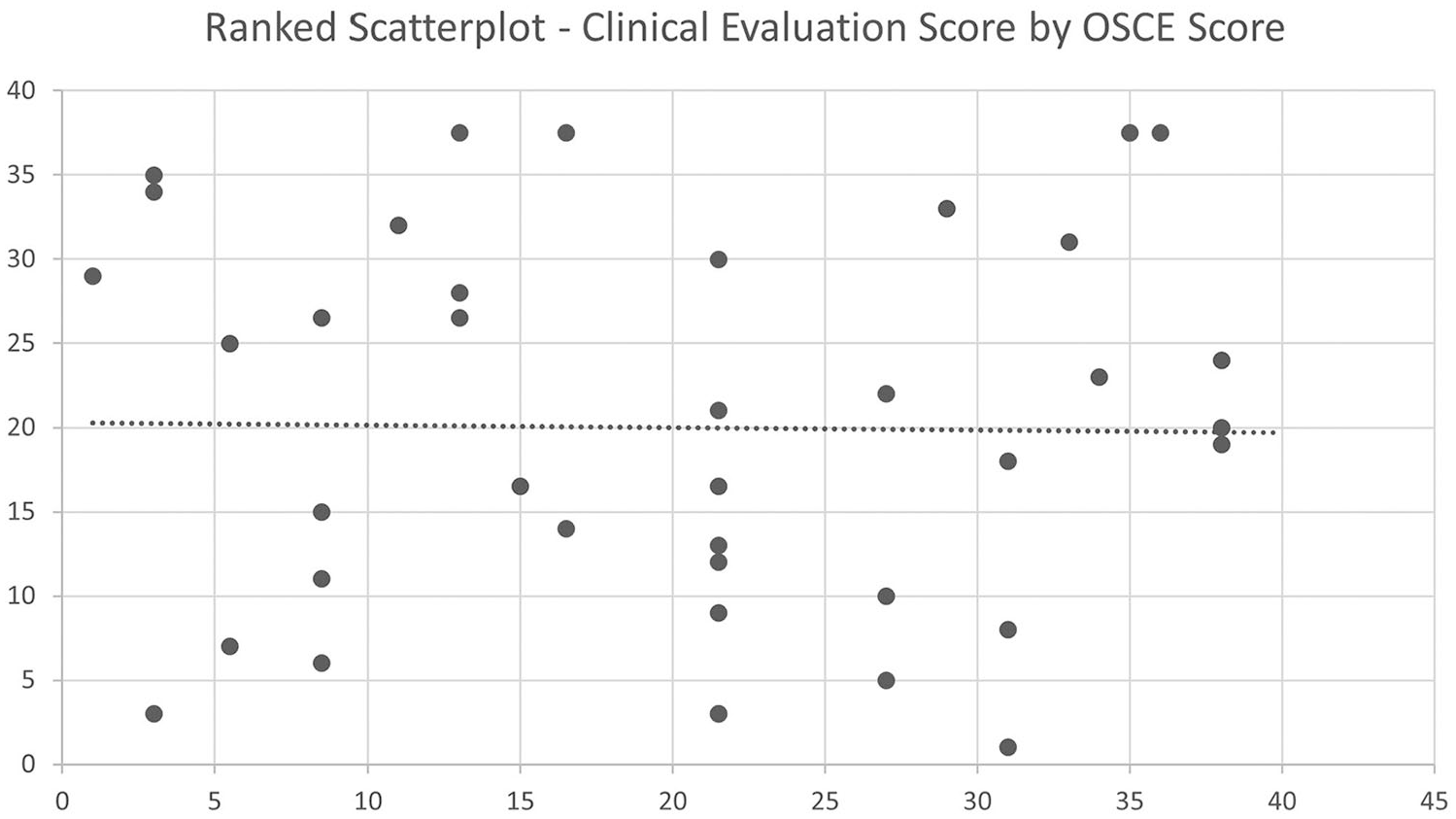

For the majority of course evaluation questions, students’ perceptions either differed significantly between the pre- and postintervention groups or showed that students primarily agreed that the multiple competency-based assessments enhanced their echocardiography knowledge or skill set. After implementation of the multiple competency-based assessments, there was a negligible, nonsignificant correlation between students’ clinical competency evaluation scores and OSCE scores, rs(37) = −.01, P = .93.

Conclusion:

These results suggest that the clinical assessment component of the credentialing process warrants further evaluation to ensure clinical competency.

Keywords

The creation and maintenance of an effective curriculum that meets sonography accreditation requirements while producing competent clinical sonographers is an ongoing process. Numerous studies have individually explored different assessments and techniques for optimally learning sonography.1–3 Methods have ranged from experimental designs encompassing objective structured clinical examinations (OSCEs) to simulation and formative assessments. Other studies have also analyzed participants’ perceptions of these techniques;4–6 however, to the author’s knowledge, this line of inquiry is the only attempt to encompass multiple competency-based assessments, including formative assessments, OSCEs, and simulation. This work extends an assessment of multiple competency-based assessments within a Commission on Accreditation of Allied Health Education Programs (CAAHEP) accredited adult echocardiography program 1 and focuses on analyzing students’ perceptions and validating clinical assessments.

Use of Simulations

Sidhu et al 2 conducted a systematic review to determine whether procedural simulation led to improved sonography competence in a clinical setting. While most studies demonstrated knowledge acquisition and improved performance in the same simulated environment, minimal evidence supported the widespread adoption of simulation-based medical education. 2 Gibbs 3 noted that research on simulated learning was limited and explored methods of integrating simulation-based learning into sonography training to enhance clinical preparation. Although clinical preceptors recognized that simulation provides learning in a less stressful environment, Gibbs called for continued research to develop strategies that integrate simulation effectively to support students, educators, and preceptors. 3 This call is supported by Al Enazi, 4 who found that health care students in programs such as nursing, respiratory therapy, nutrition, and occupational therapy had positive perceptions of simulation education after exposure and felt that it should be included in their education. Another study gauged the perceptions of physicians, sonographers, nurses, residents, and medical students; 98% approved of simulation for training and teaching, and the authors concluded that perceptions toward integrating simulation into curricula were positive. 5 In addition, medical students exposed to seven pathology-simulation ultrasound training models felt that simulation prepared them to use ultrasound in a clinical setting. 6 A brief review of simulation’s history provides context for exploring its potential applications.

Full-body mannequin simulators first appeared in health care in the 1960s, in the field of anesthesia. The mannequin, known as Sim One, enabled training in endotracheal intubation and anesthesia induction. 7 Over the following half century, simulation became a common tool for educating and training allied health and medical professionals.3,8–10 Unlike practice on real patients, which may harm the patient or embarrass the trainee, simulation permits trainees and students to identify anatomy and physiology and to make mistakes while building confidence in their knowledge and skills.8,11 Specific simulation tools expose students to rare pathology they may not encounter in clinical practice, where students mainly acquire and refine their skills.3,8,12 In this regard, simulation standardizes education, because student clinical exposure (e.g., patient population and pathology) varies from one clinical site to another. 8 Simulation also allows students to remediate after receiving negative feedback from clinical sites. 8 Simulation should supplement clinical experience, not replace it, by enhancing student readiness for the clinical environment.8,9,13 Platts et al 14 found that sonographers considered a transesophageal and transthoracic echocardiography simulator to be realistic, to assist in image acquisition, and to improve the assessment of spatial relationships. The study indicated that echocardiography simulation could fill an important role in future echocardiographic training. 14

Incorporating simulation into teacher-led instruction (TI) (e.g., didactic methods) may prove useful for assessing competency in a structured and uniform format.8,9,11 However, CAAHEP does not accept simulation to establish student competency. 15 Hence, simulation is designed to supplement learning. Pessin and Tang-Simmons 8 examined simulation use in CAAHEP Diagnostic Medical Sonography (DMS) programs by surveying program directors about their use of simulation and their perception of its value. The survey did not assess educational outcomes or specific simulation activities. Of the program directors who responded, 75% used simulation; 89% stated it was a good teaching tool; 9% were not interested; 81% reported a positive student experience; and 55% and 64% stated it was most useful for anatomy and transducer manipulation, respectively. 8 Programs did not report assessment tools. “Seventy-six percent of respondents felt it was necessary to have an instructor during simulation training.”8(p439) However, only 20% and 30% of schools provided faculty for low- and high-fidelity simulation, respectively. 8 Low-fidelity simulation, associated with task trainers and used in procedural training, mirrors actions but may omit some aspects of real-life experience, 8 whereas high-fidelity simulation is intended to be as realistic as possible. 8 Participants in Pessin and Tang-Simmons’s 8 survey noted that faculty training requires resources, which may hinder programs from providing it. 13 Faculty are crucial to sonography students’ education, but clinical preceptors are essential as well.

CAAHEP requires students to complete their clinical competencies on-site, where they are assessed by clinical preceptors. 15 In addition, credentialing examination prerequisites are intended to confirm clinical competence, which is assessed by field sonographers or those in clinical preceptor positions. Unfortunately, these prerequisites can be subjective, and assessments at clinical sites may not be valid, as clinical preceptors may not be adept at assessment.1,16 Moreover, a study of nursing preceptors’ assessment strategies found that many preceptors did not apply all recommended strategies when assessing students, did not fully understand the assessment process, and lacked experience. 17 Additional studies8,17,18 assessed students’ and health care personnel’s perceptions of simulation and other instructional methods; participants’ perceptions were positive. Therefore, students’ perceptions of multiple competency-based techniques should also be assessed. An OSCE is one competency-based technique that can emulate the clinical experience, and its results should also be assessed to compare faculty evaluations within a CAAHEP accredited program with those of clinical preceptors.

Competency Methods in Sonography—OSCE

Medical education has used OSCEs since 1975. 19 Effective OSCEs should test all three domains of Bloom’s taxonomy (cognitive, psychomotor, and affective). 20 OSCE composition varies, as the format has evolved over time.19,21,22 Typical OSCEs incorporate standardized patients to ensure uniform scenarios, 19 which carries a high cost, a primary disadvantage.16,19 Baker et al 16 recommend using students as models to offset the cost. Other advantages of OSCEs are (1) availability, (2) safety (no danger of injury to patients), (3) no risk of litigation, (4) feedback from actors (if standardized patients are used), (5) stations tailored to the level of skills being assessed, (6) support for teaching audit, (7) demonstration of emergency skills, and (8) recall. 19 OSCE disadvantages include the organization and training required, time, reliability, potential variability between the examiner and the “patient,” and “textbook” scenarios that may not simulate real-world situations.16,19,23 Drawing on Brannick et al’s 24 comprehensive meta-analysis of OSCEs for medical students, Shulruf et al 23 stated that examiner bias (i.e., low reliability) is likely to affect students performing at a borderline level. Implementing the Objective Borderline Method 2 (OBM2) can address this issue if needed. OBM2 compares the difficulty of an item with student ability; if ability is less than difficulty, a borderline pass (one of four possible grades) is graded as a fail (F). 23 Investigating how OSCEs affect performance can help educators design more effective curricular assessment processes to evaluate students optimally. 25 In addition, an OSCE can help identify areas of improvement for the student and the curriculum.16,26 One challenge of OSCEs is employing a cogent standard to assess and demonstrate competency. 27
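To make the OBM2 decision rule concrete, the following Python sketch applies it to a small grade matrix. It assumes a hypothetical four-level scale (F, B for borderline, P, E), and the ability and difficulty estimates it uses (the share of a student’s non-borderline grades that are passes; the share of an item’s non-borderline grades that are fails) are illustrative placeholders, not the published OBM2 estimation procedure. Only the final comparison, in which ability below difficulty turns a borderline grade into a fail, follows the description above.

```python
# Minimal sketch of the OBM2 reclassification rule described in the text.
# ASSUMPTIONS: the four grade labels and the two estimators below are
# hypothetical placeholders, not the published OBM2 procedure.
FAIL, BORDERLINE, PASS, EXCELLENT = "F", "B", "P", "E"


def _share(grades: list[str], wanted: set[str]) -> float:
    """Share of non-borderline grades that fall in `wanted` (0.5 if none)."""
    clear = [g for g in grades if g != BORDERLINE]
    if not clear:
        return 0.5  # no clear grades: arbitrary neutral value
    return sum(g in wanted for g in clear) / len(clear)


def estimate_ability(student_grades: list[str]) -> float:
    # Hypothetical: higher when the student clearly passes more items.
    return _share(student_grades, {PASS, EXCELLENT})


def estimate_difficulty(item_grades: list[str]) -> float:
    # Hypothetical: higher when more students clearly fail the item.
    return _share(item_grades, {FAIL})


def reclassify_borderline(grade: str, ability: float, difficulty: float) -> str:
    """OBM2 rule from the text: a borderline grade fails if ability < difficulty."""
    if grade != BORDERLINE:
        return grade
    return FAIL if ability < difficulty else PASS


def apply_obm2(grades: list[list[str]]) -> list[list[str]]:
    """Rows are students, columns are OSCE items (stations)."""
    abilities = [estimate_ability(row) for row in grades]
    difficulties = [
        estimate_difficulty([row[j] for row in grades]) for j in range(len(grades[0]))
    ]
    return [
        [reclassify_borderline(g, abilities[i], difficulties[j]) for j, g in enumerate(row)]
        for i, row in enumerate(grades)
    ]


if __name__ == "__main__":
    grades = [["P", "B", "E"], ["F", "B", "P"], ["F", "F", "B"]]
    print(apply_obm2(grades))  # [['P', 'P', 'E'], ['F', 'F', 'P'], ['F', 'F', 'P']]
```

In this toy example, the third student has no clear passes yet still receives a pass on the item nobody clearly failed, which shows how strongly the outcome depends on the ability and difficulty estimators chosen.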

Vanda Yazbeck et al 20 analyzed internal structure validity using multiple psychometric measures, compared with a standard passing (cut) score. “Gathering consequential and internal structure validity evidence by multiple metrics provides support for or against the quality of an OSCE.”20(p6) Messick’s framework identifies five sources of validity evidence that should be considered before accepting an assessment: content (the construct is assessed accurately), response process (evidence of data coherence), internal structure (psychometric properties of the examination), relations with other variables, and consequences (impact on instructors, learners, and the curriculum). 20 Different medical specialties and education programs have experimented with OSCEs using various assessment methods, designs, and success rates; most reported a positive experience.16,25,28–32

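The following research questions guided this portion of the study:

Research Question 1: How do multiple competency-based assessments (formative assessments, OSCE, and simulation) affect students’ perceptions of adult echocardiography training components, as measured by course evaluations?

Research Question 2: What is the relationship between students’ clinical competency evaluation scores from clinical preceptors and their OSCE scores from faculty?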

Beyond answering these research questions, the study aimed to build on previously published work 1 that found no relationship between multiple competency-based assessments and clinical evaluation scores administered by clinical preceptors.

Materials and Methods

A quasi-experimental nonequivalent group research design was used to evaluate students enrolled in a CAAHEP accredited adult echocardiographic curriculum, because students were not randomly assigned to conditions; the intervention was part of the curriculum. Students’ perceptions, clinical evaluation scores, and OSCE scores from multiple cohorts were used for statistical analysis. The control and experimental groups consisted of 42 and 39 students, respectively. The control group was not exposed to Research Question 1’s independent variable, the multiple competency-based assessments (cardiac OSCE, cardiac simulation assignment, and formative assessments). The dependent variable for Research Question 1 was students’ responses to Likert scale adult echocardiography course evaluations, obtained from the CAAHEP accredited DMS program’s learning management system, which yielded ordinal data. A Likert scale assesses an individual’s attitude from one extreme to another (e.g., strongly agree, agree, neutral, disagree, and strongly disagree).
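Because Likert responses are ordinal, they are often summarized with counts, percentages, and medians rather than means. The Python sketch below shows one way such descriptive statistics could be produced for a single question. It assumes a 1 to 5 coding that preserves only order; the function name `describe_item` and the use of `None` to mark a nonresponse are illustrative choices, not details from the study.

```python
from collections import Counter
from statistics import median
from typing import Optional

# Assumed ordinal coding of the five-point scale; the numbers carry order only.
LIKERT_CODES = {
    "strongly disagree": 1,
    "disagree": 2,
    "neutral": 3,
    "agree": 4,
    "strongly agree": 5,
}


def describe_item(responses: list[Optional[str]]) -> dict:
    """Summarize one Likert question; None marks a student who did not respond."""
    answered = [r for r in responses if r is not None]
    counts = Counter(answered)
    n = len(answered)
    return {
        "response_rate": n / len(responses) if responses else 0.0,
        "counts": {label: counts[label] for label in LIKERT_CODES},
        # Percentages are of respondents, not of all students.
        "percent": {label: 100 * counts[label] / n for label in LIKERT_CODES} if n else {},
        "median_code": median(LIKERT_CODES[r] for r in answered) if n else None,
    }


if __name__ == "__main__":
    # Invented responses for illustration only.
    print(describe_item(["agree", "agree", "strongly agree", "neutral", None]))
```

Keeping nonresponses in the input lets the same summary report a response rate alongside the distribution of answers.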

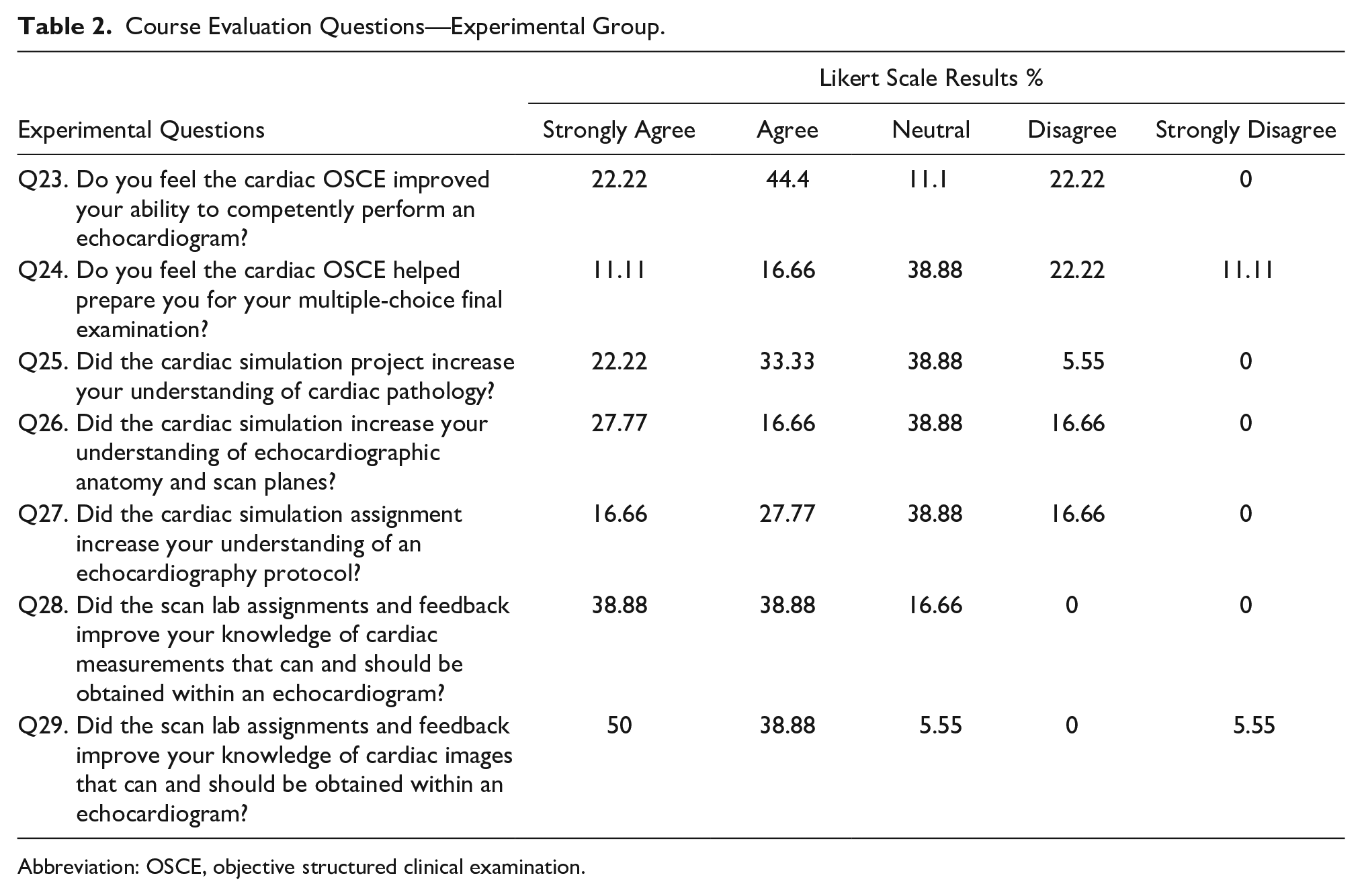

The course evaluations administered to the control group consisted of 22 Likert scale questions and three descriptive questions that asked students to describe the strengths and weaknesses of the course and to suggest improvements. The course evaluations administered to the experimental group contained the same questions plus seven additional Likert scale questions explicitly related to the competency-based assessments (Q23–Q29).

All course evaluation questions, except those assessing the multiple competency-based assessments, were created by the DMS program and administered after each course. The remaining seven questions were created to assess students’ perceptions of the multiple competency-based assessments. For example, separate questions asked whether the OSCE improved a student’s ability to competently perform an echocardiogram and whether it helped prepare them for the multiple-choice final examination. Other questions assessed whether competency-based assessments such as simulation or formative assessments improved students’ clinical knowledge.

Of the 22 Likert scale questions, only the 11 that had the potential to be affected by the implementation of competency-based techniques were included, such as those addressing assignment usefulness, how well examinations reflected course content, and the instructor’s preparedness and presentation of subject matter. The instructor-related questions were included to determine whether a more structured assignment design affected students’ perceptions of the instructor’s competency. Omitted questions addressed conditions that remained relatively constant or were unlikely to affect training components of echocardiography (e.g., classroom atmosphere, proctoring of examinations, and the instructor’s availability and respect for students). For example, classroom atmosphere questions asked about lighting, heating, seating, and conduciveness to learning, whereas Diagnostic Medical Imaging (DMI) students had a designated classroom for each cohort and scanned in the same scan lab. Questions related to the instructor’s availability and respect were omitted because the instructor’s office hours remained constant and the college has an open-door policy.

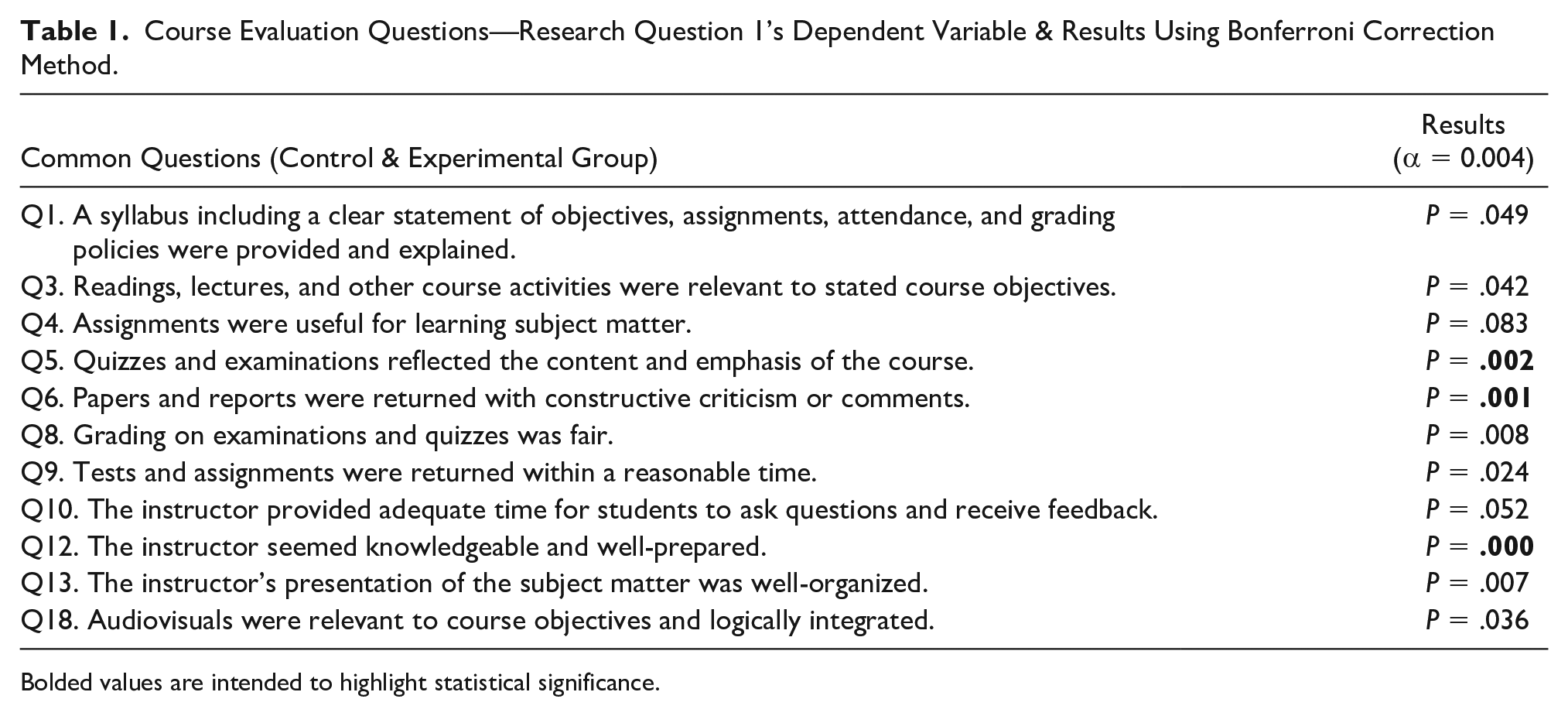

The 11 Likert scale questions administered to both groups were compared using the Bonferroni correction method, with a corrected significance level of α = 0.004. The seven Likert scale questions administered only to the experimental group were analyzed using descriptive statistics. Research Question 1 was designed to assess whether students perceived a benefit. Theoretically, competency-based assessment training should lead to higher evaluation scores; however, Dunstatter’s 1 results demonstrated no significant difference between students’ clinical competency evaluation scores from clinical preceptors and multiple competency-based assessments. The clinical evaluation scores, OSCE scores, cardiac simulation, and formative assessments yield interval, interval, nominal, and nominal data, respectively.
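As a sketch of the Bonferroni logic, assuming a conventional family-wise α of .05 (the text reports only the corrected level): dividing .05 by the 11 comparisons gives about .0045, so the study’s cutoff of 0.004 is slightly stricter. The Python functions below compute the per-comparison threshold and flag which p values fall at or below a cutoff. The function names are hypothetical, and the example p values (labeled QA to QC to avoid confusion with the study’s questions) are invented for illustration, not the study’s results.

```python
from typing import Optional


def bonferroni_threshold(family_alpha: float, n_tests: int) -> float:
    """Per-comparison significance level that caps the family-wise error rate."""
    if n_tests < 1:
        raise ValueError("n_tests must be at least 1")
    return family_alpha / n_tests


def bonferroni_flags(
    p_values: dict[str, float],
    family_alpha: float = 0.05,
    threshold: Optional[float] = None,
) -> dict[str, bool]:
    """Mark each question significant if its p value is at or below the cutoff.

    `threshold` lets a caller supply a fixed cutoff (e.g., a rounded value such
    as 0.004) instead of family_alpha / number_of_tests.
    """
    if threshold is not None:
        cutoff = threshold
    else:
        cutoff = bonferroni_threshold(family_alpha, len(p_values))
    return {q: p <= cutoff for q, p in p_values.items()}


if __name__ == "__main__":
    print(round(bonferroni_threshold(0.05, 11), 5))  # 0.00455
    example = {"QA": 0.0010, "QB": 0.0042, "QC": 0.0300}  # invented p values
    print(bonferroni_flags(example, threshold=0.004))  # {'QA': True, 'QB': False, 'QC': False}
    print(bonferroni_flags(example))  # {'QA': True, 'QB': True, 'QC': False}
```

The two calls show why the number of comparisons matters: with only three invented tests the default cutoff is about .0167, so QB would count as significant, whereas the fixed 0.004 cutoff rejects it.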

A Spearman’s rank correlation test was used to analyze Research Question 2. For each student, a clinical evaluation summary score was obtained; this score is an average of four categories, each with multiple measures. The categories are the psychomotor, cognitive, and affective learning domains, plus professional behavior. Given that Dunstatter 1 implemented multiple competency-based methods and found no significant relationship between them and clinical evaluation scores, the goal of Research Question 2 was to assess whether clinical evaluation scores correlated with OSCE scores.
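The analysis for Research Question 2 can be sketched in Python as follows. The sketch assumes the summary score is the mean of the four category means (the text says only that it averages four categories, each with multiple measures) and computes Spearman’s coefficient as the Pearson correlation of average ranks, the usual way to handle tied scores. The function names are hypothetical; in practice a statistics package such as SciPy’s `scipy.stats.spearmanr`, which also returns a p value, would typically be used.

```python
from statistics import mean


def summary_score(categories: dict[str, list[float]]) -> float:
    """Hypothetical: average each category's measures, then average the categories."""
    return mean(mean(measures) for measures in categories.values())


def average_ranks(values: list[float]) -> list[float]:
    """Rank values from 1..n, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # sorted positions i..j hold ranks i+1..j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x: list[float], y: list[float]) -> float:
    """Spearman's rank correlation: Pearson correlation of the average ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two paired samples of equal length >= 2")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sum((a - mx) ** 2 for a in rx) ** 0.5
    sy = sum((b - my) ** 2 for b in ry) ** 0.5
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined when all scores are tied")
    return cov / (sx * sy)
```

With each student’s clinical summary score in one list and the same student’s OSCE score at the same position in the other, `spearman_rho(clinical, osce)` returns the rank correlation.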

Results

All 11 common Likert scale questions had a 100% response rate, except for Q3 (response rate = 98%) and Q13 (response rate = 99%) (see Table 1). The seven Likert scale questions administered only to the experimental group had a response rate of 95% (see Table 2).

Table 1. Course Evaluation Questions: Research Question 1’s Dependent Variable and Results Using the Bonferroni Correction Method.

Bolded values indicate statistical significance.

Table 2. Course Evaluation Questions: Experimental Group.

Abbreviation: OSCE, objective structured clinical examination.

The second research question explored the relationship between students’ clinical competency evaluation scores from clinical preceptors and their OSCE scores, which reflect faculty evaluation of competency. A Spearman’s rank correlation test was run to measure this relationship. There was a negligible, nonsignificant correlation between students’ clinical competency evaluation scores and OSCE scores, rs(37) = −.01, P = .93 (see Figure 1).
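As a worked check on the reported statistic, assuming the common t approximation for testing Spearman’s coefficient: the degrees of freedom in rs(37) are n − 2, which implies n = 39 paired scores, matching the size of the experimental group. Because rs is reported rounded to two decimals, the t value below is only approximate.

$$
\mathrm{df} = n - 2 = 37 \quad\Rightarrow\quad n = 39
$$

$$
t = r_s\sqrt{\frac{n-2}{1-r_s^{2}}} \approx -0.01\sqrt{\frac{37}{1-(-0.01)^{2}}} \approx -0.06
$$

This value is far from the two-tailed critical value of roughly 2.03 for 37 degrees of freedom at α = .05, consistent with the nonsignificant result.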

Figure 1. Ranked scatterplot of OSCE score versus clinical evaluation score. OSCE, objective structured clinical examination.

Discussion

The first research question aimed to assess students’ perceptions of adult echocardiography training components within an adult cardiac CAAHEP accredited DMS program through course evaluations. Including perceptions was intended to show that no other variable (e.g., teaching style, instructor knowledge, or organization) contributed to any potential statistically significant difference attributable to the multiple competency-based assessments. The rationale is that health care educators such as pharmacists and nurses often demonstrate competence in their professional field, yet professional competence does not necessarily translate into effective teaching.33–35 Sonography educators also struggle to teach reasoning and critical thinking skills, as these capabilities typically develop with increased clinical experience. 36 Because the teacher is a primary factor in the educational process, 37 outcomes and course evaluations should be used together to assess the instructor and the learning environment. Given that the multiple competency-based methods were incorporated into areas of the course that were otherwise relatively controlled, 1 the results were somewhat expected and help demonstrate relatively consistent teaching methods. Three of the 11 Bonferroni-corrected comparisons (Q5, Q6, and Q12) showed a statistically significant difference.

Q1’s results show that the instructor continued to provide clear expectations through the syllabus for all cohorts. For Q3, readings and lectures were constant; only course activities (i.e., competency-based assessments) changed. An instructor may view this result as favorable, as prior assignments were also considered relevant by students not exposed to the multiple competency-based assessments. It is important to note that students often ask for more scanning time, which relates to their clinical skills, particularly in the psychomotor and cognitive domains.

Q4’s result is relatively consistent with Q3’s, as course activities are part of assignments. Future research may want to include questions that are more specific to each assignment.

Q5’s results were, unexpectedly, statistically significant, considering that the same final examination was administered to both groups. Quizzes were modified, but their questions came from the same question bank. The results could mean that the questions pulled from the question bank did not reflect the course content or that the competency-based assignments made it more evident what the course emphasized, despite no significant difference in final examination scores.

Q6’s results were anticipated and reflect the weekly formative assessments given throughout the course. Formative assessments serve as an ongoing diagnostic tool and are designed to enhance student learning during the instructional process while providing feedback to both the educator and the learner.38–40 Future research or course evaluations may want to evaluate the effectiveness of feedback on competency-based assignments in echocardiography.

Q8 of the course evaluation assessed whether grading on examinations and quizzes was fair; the difference was not statistically significant. This makes sense considering that the final examination was consistent and quiz questions came from the same question bank (Q5). It is important to note that the instructor reviewed any question that 50% or more of the class answered incorrectly, using discrimination scores and checking whether the question’s wording was ambiguous.
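The item review described above can be sketched in Python. The text does not say how discrimination scores were computed, so this sketch assumes the classical upper–lower index: the proportion correct in the top-scoring group minus that in the bottom-scoring group, with groups of about 27% of students (a common convention). Items that 50% or more of students answered incorrectly are flagged for review, as in the text; the function name and the scoring layout (1 = correct, 0 = incorrect) are illustrative.

```python
def item_analysis(
    scores: list[list[int]],
    flag_threshold: float = 0.5,
    group_fraction: float = 0.27,
) -> list[dict]:
    """Per-item difficulty and upper-lower discrimination for 0/1 scored answers.

    Rows are students and columns are questions (1 = correct, 0 = incorrect).
    """
    n_students = len(scores)
    n_items = len(scores[0])
    totals = [sum(row) for row in scores]
    # Rank students by total score; ties keep their original order.
    ranked = sorted(range(n_students), key=lambda i: totals[i])
    k = max(1, round(group_fraction * n_students))
    lower, upper = ranked[:k], ranked[-k:]

    report = []
    for j in range(n_items):
        p_correct = sum(row[j] for row in scores) / n_students
        upper_p = sum(scores[i][j] for i in upper) / k
        lower_p = sum(scores[i][j] for i in lower) / k
        report.append(
            {
                "item": j,
                "p_incorrect": 1 - p_correct,
                "discrimination": upper_p - lower_p,
                # Flag items missed by at least half the class for wording review.
                "flag_for_review": 1 - p_correct >= flag_threshold,
            }
        )
    return report


if __name__ == "__main__":
    # Invented answers: 4 students x 5 questions.
    toy = [[1, 1, 1, 1, 0], [1, 1, 1, 0, 0], [1, 1, 0, 0, 0], [0, 0, 0, 0, 1]]
    for row in item_analysis(toy):
        print(row)
```

A negative discrimination value, as for the last question in this toy data, means weaker students outperformed stronger ones on that item, which can signal ambiguous wording worth reviewing.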

Q9 of the course evaluation assessed whether tests and assignments were returned within a reasonable time; the difference was not statistically significant, although more students in the experimental group strongly agreed. This question partially aligns with Q6 and supports the instructor’s appropriate use of formative assessments, as test scores were typically returned within a week. Regular formative assessments with timely results and feedback to students, related to identifiable learning outcomes, are most effective.38,40–42 For Q10, most students in both groups strongly agreed that the instructor provided adequate time to ask questions and receive feedback.

Q12 was statistically significant, but Q13 was not; however, more students in the experimental group strongly agreed with both. The results for Q12 are surprising, as the instructor had over 10 years of sonography experience when teaching both groups, and the instructor’s knowledge is unlikely to have changed significantly during this time. However, students’ perceptions of the instructor as well prepared may have changed as the instructor gained teaching experience over time. This explanation slightly conflicts with Q13’s results, which show no statistically significant difference in the instructor’s presentation of subject matter and organization, since more teaching experience could also be expected to enhance the instructor’s skills in these areas. In addition, the feedback from the assessments could have affected students’ perceptions of the instructor’s knowledge, as the experimental group had a tangible written resource to review for each assignment, whereas feedback to the control group was mostly verbal. Additional research could differentiate between the effects of written, verbal, and combined feedback in echocardiography education. Q18’s results were not significant, as the same audiovisuals were used.

The seven Likert questions administered only to the experimental group are notable in light of Dunstatter’s 1 findings. Dunstatter’s 1 study demonstrated no relationship between multiple competency-based assessments and either students’ summative assessment grades or the clinical competency evaluation scores given by clinical preceptors. However, responses to Q23 showed that students primarily agreed that the OSCE improved their ability to perform a protocol, which was not reflected in their clinical evaluation scores. While perceptions do not necessarily translate into outcomes, this finding may further support the difficulty and potential inaccuracy of clinical assessment. 1 Future research could evaluate students’ perceptions of whether clinical rotations affect their ability to perform an echocardiogram competently.

Q24 sought to assess whether the OSCE helped prepare students for their multiple-choice final examination; the majority responded neutral or disagree. These results support the literature in that didactic assessment (i.e., multiple-choice examinations such as a cumulative final or credentialing examination) does not fully assess competency.1,16,43–45 In addition, multiple-choice tests and OSCEs combined could provide a more thorough and accurate evaluation of abilities.25,28,30 Agreement among the remaining students may be attributable to the real-world application of the OSCE, in which students had to scan and acquire images and measurements to assess a particular pathology. Assessing pathology engages the cognitive domain, as students must know which findings appear with a particular pathology. In addition, 46% of the Registered Diagnostic Cardiac Sonographer (RDCS) credentialing examination covers pathology, and 23% covers measurement techniques, maneuvers, and sonographic views. 46

The results of Questions 25, 26, and 27 correspond to the literature. Pessin and Tang-Simmons’s 8 study showed that 81% of CAAHEP program directors reported a positive student experience with simulation, but the study did not report specific assessment tools. This study adds an example of an assessment tool used in a CAAHEP program. However, simulation is designed to supplement learning, as CAAHEP does not accept simulation to establish student competency. 15

The literature supports that specific simulation tools expose students to rare pathology they may not encounter in clinical practice, where they primarily acquire and refine their skills.3,8,12 Although no statistically significant relationship was found between multiple competency-based assessments and students’ clinical competency evaluation scores, 1 students perceived an overall benefit, consistent with Alsalamah et al’s 47 finding that simulation’s benefit is best achieved through goal-oriented objectives and assignments within a curriculum. Furthermore, the incorporation of simulation into didactic methods proved useful in a structured and uniform format.8,9,11 The findings also aligned with other studies4–6 in which participants had positive perceptions of simulation, though perceptions in this study were less positive. In this study, the instructor designed a simulation-based assignment incorporating the didactic component, in which students worked together to understand a particular pathology and a protocol for assessing it. The assignment was also designed to help students perform better on their OSCE. However, students were not allowed to scan the same pathology as in their assignment. It would be interesting to assess students’ perceptions of whether simulation helped them understand a pathology protocol on which they were tested via the OSCE.

The results of Questions 28 and 29 indicated that students perceived the formative assessments to benefit them the most. Unlike Q23, which asked about the OSCE and a basic echocardiography protocol, these questions were not limited to a protocol. A protocol is merely a baseline of required images for a routine study, whereas echocardiography encompasses dozens of measurements that may not be used in a standard protocol. The assignments were designed to make students aware of all measurements in case they obtain a position that requires a thorough understanding of all possible quantitative measurements. These results were the most useful to the researcher for future work on clinical competency and for further incorporating formative assignments into the curriculum. Similar to Q23’s analysis, Q28 and Q29 may support the difficulty of clinical assessment,1,16 as students are also evaluated on the cardiac images and measurements they acquire during their clinical experience. In other words, students perceived formative assignments to improve their clinical skills, but this was not reflected in their clinical evaluation scores. Beyond clinical skills, it may have been beneficial to ask students whether the formative assignments helped prepare them for the final examination and the OSCE and helped them perform a protocol.

Overall, the majority of Research Question 1’s results suggest that multiple competency-based assessments changed students’ perceptions of the course. The seven additional questions were meaningful, although they could not be tested for statistical significance, because no other group was asked these questions or administered different assignments while asked to meet the same end objective (e.g., OSCE). The additional questions yielded information that could inform new assignments or research. Student perceptions may not always correlate with teacher effectiveness, and other variables should be considered, such as students’ grade expectations, subject, gender, race, and others. 48

The second research question sought to identify the relationship between students’ clinical competency scores from clinical preceptors and their OSCE scores from faculty, who used a structured rubric. Given concerns about the validity of health care workers’ clinical assessments, and evidence that less experienced sonographers award higher grades, 43 future research could assess the experience of clinical preceptors. The outcomes of Research Question 2 are relevant because they compare two scores that both assess scanning competency, and they support concerns about clinical assessment in health care. The findings are potentially consistent with Dunstatter’s 1 results and may explain why there was no statistically significant effect of multiple competency-based assessments on students’ clinical evaluation scores, despite students’ overall perception in Research Question 1 that the competency-based assessments helped them perform clinical skills. Such evidence could prompt credentialing organizations to focus more on assessing clinical competency, and prompt CAAHEP and the Joint Review Committee on Education in Diagnostic Medical Sonography (JRC-DMS) to reevaluate their competency requirements. For example, the JRC-DMS requires students to meet specific standards, 49 but it could also assess the reliability of those requirements. Because graduating from an accredited ultrasound program is only one pathway for an applicant to sit for a credentialing examination, it would be most sensible for credentialing organizations to reconsider their credentialing process. However, limitations exist and must be addressed before the credentialing process is changed. One limitation of Research Question 2’s analysis is that it compares two different assessments. While the OSCE was assessed by educators (with possibly more assessment experience) in a structured environment, it was not performed on an actual patient in a clinical setting. The structured environment, in theory, could be more standardized, and the rubric used could be more thorough than the American Registry for Diagnostic Medical Sonography (ARDMS) clinical forms. Further research is needed to establish stricter criteria or, at minimum, an official assessment criterion administered by personnel specially trained for this role. While this may make the registration process more difficult for applicants, it could help ensure that those who become registered are competent.

Study Limitations

Because of the study design and several threats to validity, these results are not generalizable. However, the study reveals areas that are informative for the educational and credentialing process. This study was conducted using a single convenience sample from the host echocardiographic program, which restricted the number of cohorts and students analyzed. Another limitation is that the OSCE used fellow students as models, as suggested by Baker et al, 16 rather than standardized patients, partly because of a limited budget. Although students did not know whom they would be scanning until the day of the examination, bias may have been introduced because the participating students had been completing peer scanning examinations throughout the curriculum. Also, personal bias may exist among evaluators despite multiple evaluators assessing each student’s images. 23 In addition, bias may arise from clinical preceptors’ lack of the training, knowledge, or education needed to ensure a completely valid and reliable assessment. 16

Conclusion

Diagnostic Medical Sonography’s credentialing organizations (e.g., ARDMS) offer multiple pathways to apply for a credentialing examination. Regardless of pathway, applicants must demonstrate clinical competency, ideally via a reliable and valid assessment. CAAHEP and credentialing organizations have criteria in place to account for learning and assessment. However, this study reveals potential gaps in the clinical assessment prerequisite process for becoming credentialed.

Sonography’s clinical competency assessment process could be further evaluated, as there was no correlation between educators’ and clinical preceptors’ evaluations of clinical skills, despite students perceiving multiple competency-based assignments to help them perform clinical skills. This finding further supports arguments in the literature that clinical assessment is subjective and that clinical experience does not necessarily translate into reliable and valid evaluation. One option for CAAHEP, the JRC-DMS, or credentialing organizations could be to establish or require a more stringent official assessment criterion, administered by specially trained personnel, to ensure that those who become registered are clinically competent. This study also contributes to the literature on how CAAHEP programs may incorporate multiple competency-based assignments such as simulation, formative assessments, and OSCEs to help students become clinically competent. Of these assignments, students perceived formative assessments to benefit their skills the most.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.