Abstract

The purpose of this research was to employ the audit method to measure performance and identify targets of change, setting a template for future large-scale investigations that may inform decisions involving sonographer role expansion in Canada. The authors conducted an audit of 433 sonographic examinations performed in the ultrasound department of a Canadian hospital. Sonographer reports were contrasted with radiologist final reports, and a degree of agreement (DoA) 1 to 4 was assigned to each exam package. In total, 322 of 429 (75%) exam packages were ranked as DoA 1 (complete agreement between sonographer and radiologist), 86 of 429 (20%) were ranked as DoA 2, 16 of 429 (4%) were ranked as DoA 3, and 5 of 429 (1%) were ranked as DoA 4 (significant discrepancy between sonographer and radiologist). The results revealed a 75% agreement between sonographer and radiologist on imaging findings as they are recorded in technical impression sheets and reports. Discrepancies are usually minor and involve the omission of incidental findings by the radiologist.

Ongoing change in the Canadian health care field has involved greater emphasis on patient care methods, investigation into the structural management of health care institutions, and the analysis of the role development of allied health professionals and other nonphysician health care workers. This interest in the evolution of health care delivery in Canada is spurred by the financial and quality-of-care challenges presented to the current system in the face of factors like increasing rates of chronic illness and a growing and aging population. 1 Also, fragmentation of health care delivery due to the increasing diversity and complexity of services has increased the cost of health care without adding to quality. 2 Due to the current financial environment and the anticipated increase in demand, more efficient allocation of resources is vital.

A promising concept to alleviate the financial and temporal constraints in Canadian health care is described by the interdisciplinary model of health delivery, in which health professionals are semi-autonomous and integrated in a network based on communication and collaboration. 3 Shared decision making and communication have been seen to improve patient care, and economic burdens can be alleviated with the implementation of skill mix and role development. 4 In this model, allied health professionals take over some procedural tasks from physicians in the hope of freeing them from work that could be done by someone less expensive to the health care system. The postulation is that an increased workload does not necessitate more staff or longer hours but instead the proper use of the people already available. 4 Literature on the effect of role revision in health care reveals a positive impact on professional performance, improved or unchanged patient outcomes, and mixed effects on costs. 3,5 This model of care involving more advanced practice roles for allied health professionals has already seen use internationally; the British National Health Service (NHS) employed this decentralized health delivery model in its plan for investment and reform in 2000. 6 A key feature of its plan for improved systems involves “earned autonomy” and the migration of control away from the center. The plan also describes the goal of establishing protocol-based care, in which allied health professionals and other nonphysician personnel may take over some roles traditionally held only by clinicians. The plan predicts “less central control and progressively more devolution as standards improve and modernisation takes hold.” 6

Sonographers in particular have seen success in role development over the past few decades, especially in the United Kingdom and Australia, 7 where sonographers (in some institutions) are able to independently perform and report on sonographic studies without physician supervision.8,9 In Canada, in addition to petitioning for the long-awaited regulation of the profession, sonographers have begun to undertake advanced practice roles. For example, sonographers taking over the role of injecting intravenous (IV) ultrasound contrast from a physician or nurse has been correlated with positive outcomes, including increased productivity and freeing up of a radiologist’s time.10,11 Given the economic and quality-of-care potential of advanced practice roles and skill mix, sonographer role development, currently ongoing in Canada and worldwide, may present a partial solution to the challenges currently faced by the health system.

Further research into imaging technologist role development has been recommended in academic journals such as Ultrasound 9,12 and by professional bodies such as the Society of Radiographers. 13 However, quantitative evidence for sonographer capability, specifically in Canada, is lacking. To support the regulation and development of the profession, more evidence-based research evaluating the performance of Canadian sonographers is needed. This audit was performed in response to the call for more evidence-based research into sonographers’ role development.

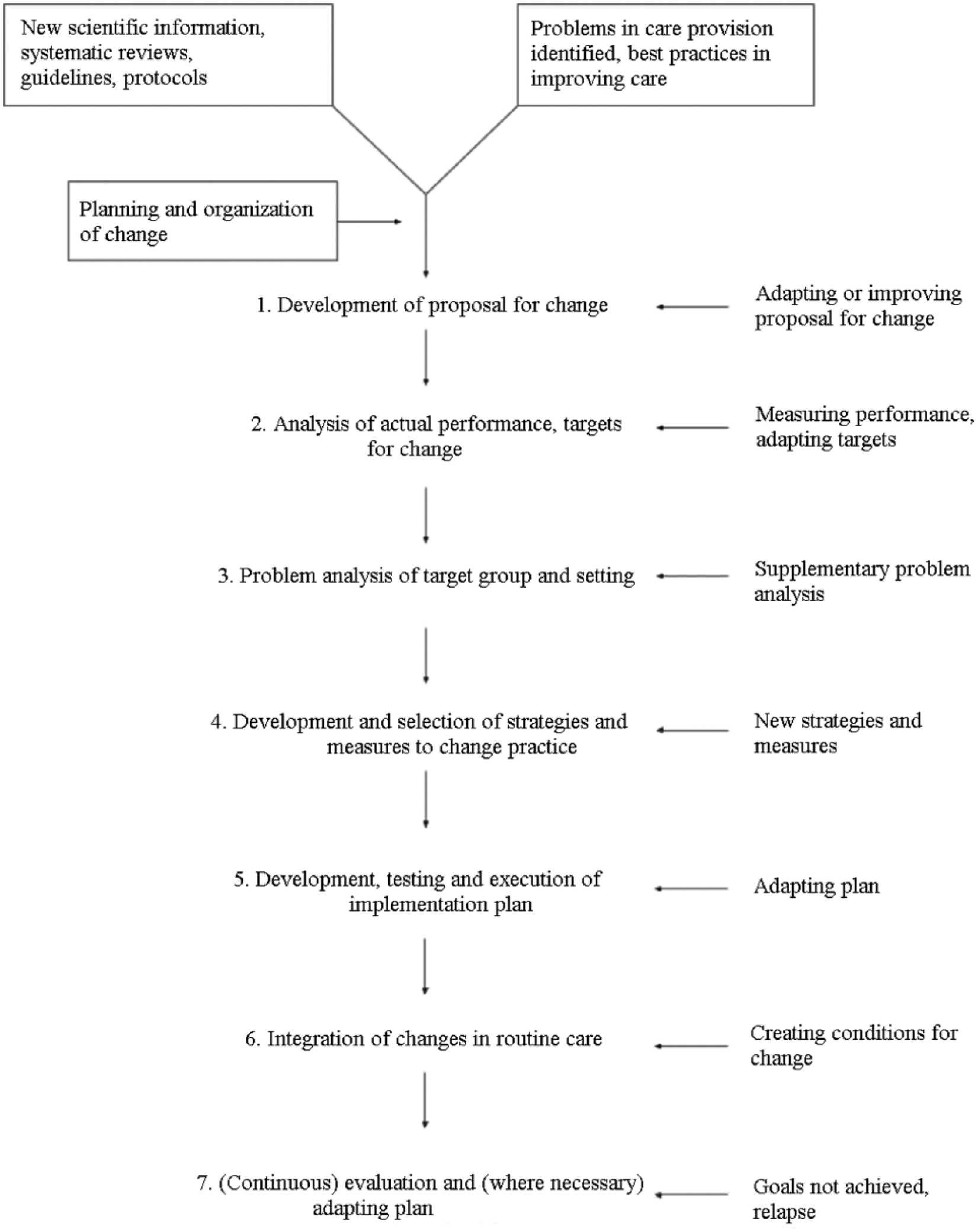

The Grol et al. 5 implementation of change model is used here as a framework for the analysis of sonographers in a Canadian health care institution. In the model (Figure 1), essential steps necessary for the proper implementation of a plan of change are outlined. One such step is the analysis of performance to identify targets of change. According to the model, this is achieved by the measurement of performance, which may shed light on the appropriate objectives of change. 5 As the audit method is a well-established approach to ensuring lasting quality improvement in medical practice, 7 the authors present an audit of standard general sonography examinations performed in the ultrasound department of a Canadian health care institution. This project will assess the feasibility of the audit method to produce, under the framework of the model, quantifiable values to serve as measurements of performance in identifying targets of change.

Figure 1. Implementation of change model, reproduced from Grol et al. 5 Notice that step 2 of the model calls for the measurement of performance to identify targets of change.

Materials and Methods

A random set of 433 sonographic examinations was identified through the study hospital system’s Radiology Information System (RIS) via the Picture Archiving and Communications System (PACS). The search was restricted to examinations reported between April 19, 2016, and May 19, 2016, with the procedure codes denoting the “Complete Abdomen” (ABDUS), “Complete Pelvic” (PELUS), and “High Risk Obstetrical” (OBS3) examination types. This search included examinations performed on outpatients, inpatients, and patients from the emergency department (ED). From each examination, the sonographer’s impression sheet (Appendix A, available online) and corresponding radiologist final report (Appendix B, available online) were anonymized and saved on an electronic drive. The contents/anatomy of interest for each examination as per Headwaters Healthcare Centre (HHCC) protocol are outlined in Appendix C (available online).

The sonographer’s impression sheet, completed by the Canadian Registered Generalist Sonographer (CRGS), represents his or her impression on the results of the study. It is meant to draw the attention of the radiologist to the presence or absence of pathology, sonographic indicators of pathology or normal appearance, measurements of relevant anatomy, and incidental findings. The radiologist report represents the official findings of the investigation and the results of the study as dictated by the radiologist of record. Each pair of sonographer worksheets and radiologist reports represents a single sonographic investigation, although various implements/scanning modalities (different transducers, Doppler sonography, M-mode, etc.) may be used within that examination. The sonographer’s impression sheet(s) and radiologist final report(s) were obtained for each examination, and all patient demographic information was removed from worksheets and reports before data were taken off-site. The unique identifier used to match technical impression sheets to reports was the account number assigned to each particular examination.

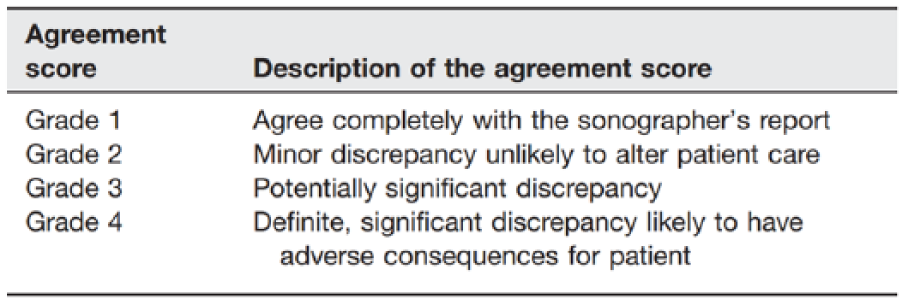

Analysis of the data to determine the degree of agreement (DoA) between sonographer and radiologist was performed by the authors retrospectively and blinded to other imaging or clinical outcomes. The auditing methodology set forth by Riley et al. 14 was used to set a DoA for each examination completed. A ranking of 1, 2, 3, or 4 was assigned to each examination, which, in the current study, represents the level of agreement between the sonographer’s impression sheet and the radiologist’s final report. The system developed by Riley et al. was used because of its value in taking into consideration the potential of discrepancies to alter patient care, as well as its ability to produce quantifiable values from a qualitative analysis, which facilitates data analysis and understanding. Figure 2 provides a description of the ranking system developed by Riley et al.

The data analysis comprised two phases of study. In phase 1, under the supervision of the coauthors, two researchers (R.D. and S.V.) carried out the qualitative analysis on each of the 433 exam packages. Each researcher independently assigned a DoA to each exam package. Exam packages wherein the proper degree of agreement was unclear or debatable were identified as “further review required.” In phase 2 of analysis, all exam packages classed as “further review required” underwent an appraisal/deliberation process with all authors until consensus was reached by the group on the appropriate DoA for each exam package. All scores were recorded into an Excel 2010 (Microsoft, Redmond, WA) database.

Cohen’s κ was used to test for interrater reliability between the qualitative analyses of R.D. (investigator) and S.V. (collaborator). In this study, Cohen’s κ was calculated for total agreement, without individual reliability examined within categories. Reliability scores within categories were not computed as the large discrepancies between the number of elements within categories would yield unreliable results.
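For readers who wish to reproduce this kind of interrater check, the following is a minimal sketch of Cohen’s κ for two raters. It assumes the unweighted form of the statistic, which the text does not explicitly specify. The function name `cohens_kappa` and the ten example scores are hypothetical illustrations and are not study data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: fraction of items given the same score by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions,
    # summed over every score category.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    p_expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if p_expected == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical DoA scores (1-4) for ten exam packages; NOT data from this study.
scores_rd = [1, 1, 2, 1, 3, 1, 2, 1, 1, 4]
scores_sv = [1, 1, 2, 2, 3, 1, 1, 1, 1, 4]
kappa = cohens_kappa(scores_rd, scores_sv)
print(f"kappa = {kappa:.2f}")
```

Because κ subtracts the agreement expected by chance from the observed agreement, it is lower than the raw proportion of matching scores whenever one category (here, DoA 1) dominates both raters’ assessments.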

This study has undergone and received ethics approval from the study hospital’s Research Ethics Board.

Results

The data set for analysis comprised 184 Complete Abdomen impression exam packages, 160 Complete Pelvic exam packages, and 89 High Risk Obstetrical exam packages.

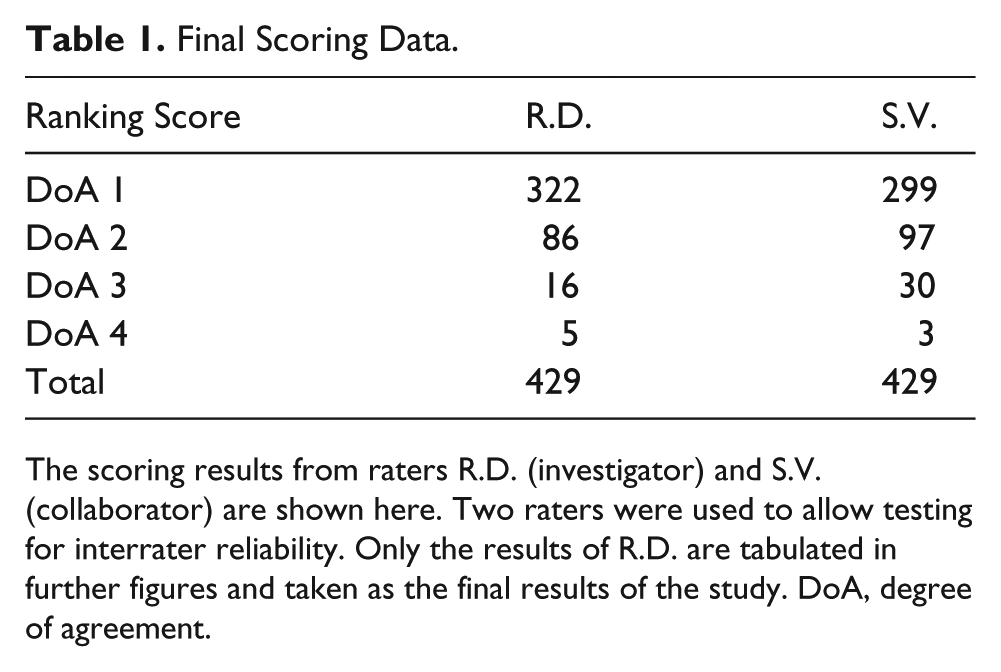

The interrater reliability between the 2 assessors was measured by Cohen’s κ, which takes into account agreement due to chance. With κ = 0.76 for total agreement (including all DoA assessments), agreement between raters was good. 15 The results for each assessor are given in Table 1.

Table 1. Final Scoring Data.

The scoring results from raters R.D. (investigator) and S.V. (collaborator) are shown here. Two raters were used to allow testing for interrater reliability. Only the results of R.D. are tabulated in further figures and taken as the final results of the study. DoA, degree of agreement.

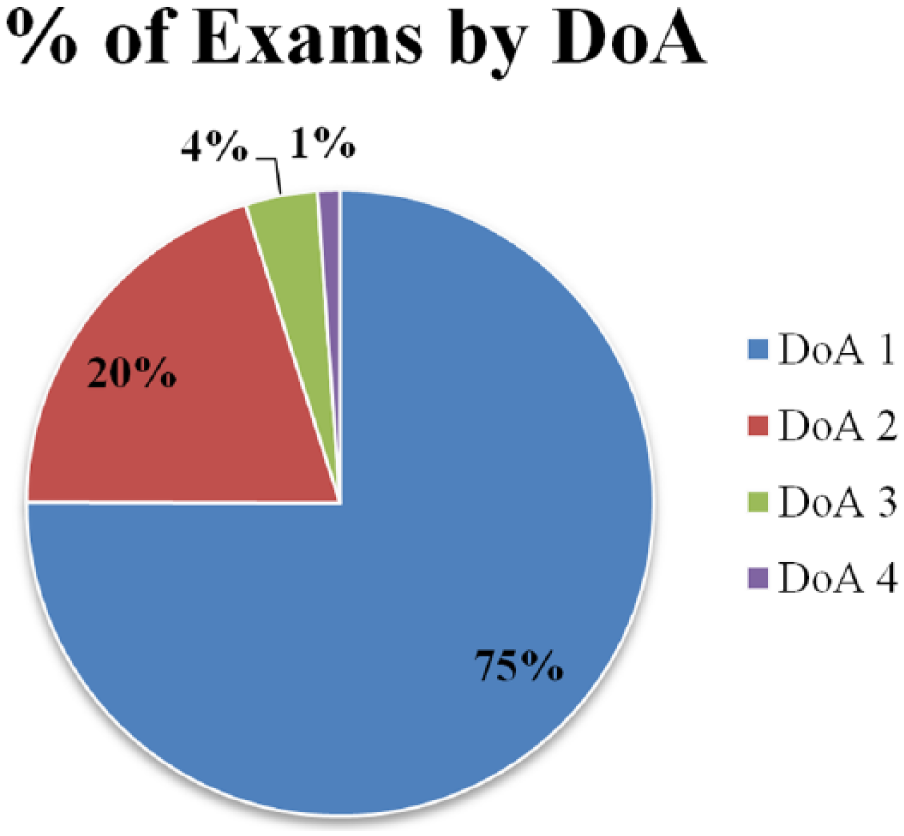

In phase 1, 48 examinations were identified as “further review required” and moved on to phase 2 of data analysis. Four exam packages (three ABDUS, one PELUS) were excluded from data analysis: one was an improperly coded appendix scan, one had two different technical impression sheets for the same report, and two examinations were missing a separately coded accompanying hernia worksheet. At the end of the data analysis period, 322 of 429 (75%) exam packages were ranked as DoA 1, 86 of 429 (20%) were ranked as DoA 2, 16 of 429 (4%) were ranked as DoA 3, and 5 of 429 (1%) were ranked as DoA 4 (see Figure 3).
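The reported proportions can be checked directly from the counts above. The short sketch below uses only the figures stated in this section; the variable and function names are illustrative choices, not part of the study’s own Excel workflow.

```python
# DoA counts reported in the Results (429 exam packages after 4 exclusions).
doa_counts = {1: 322, 2: 86, 3: 16, 4: 5}


def doa_percentages(counts):
    """Return each DoA category's share of all scored packages, in percent."""
    total = sum(counts.values())
    return {doa: 100 * n / total for doa, n in counts.items()}


total_scored = sum(doa_counts.values())
percentages = doa_percentages(doa_counts)
# Packages with any discrepancy are those scored DoA 2, 3, or 4.
discrepant = total_scored - doa_counts[1]

for doa, pct in percentages.items():
    print(f"DoA {doa}: {doa_counts[doa]}/{total_scored} = {pct:.0f}%")
print(f"Discrepant packages (DoA 2-4): {discrepant}")
```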

Figure 3. Graphical representation of the proportion of exams that received degree of agreement (DoA) scores of 1, 2, 3, or 4. Seventy-five percent of technical impression sheets agreed completely with their corresponding radiologist report. A very small fraction of exam packages was scored potentially (DoA 3) or definitely (DoA 4) discrepant.

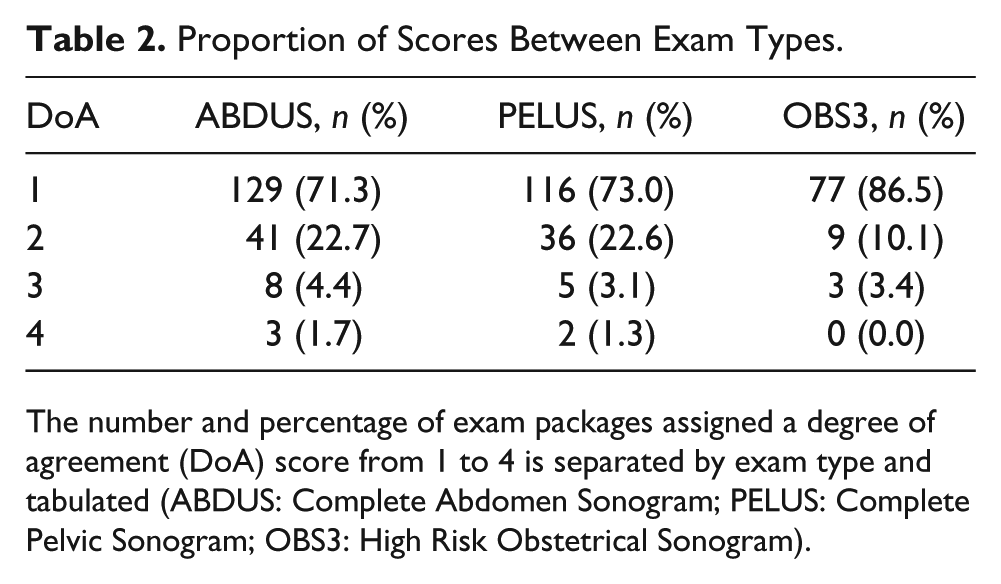

DoA scores are separated by examination type and provided in Table 2. This allowed for the visualization of differences in agreement between sonographer and radiologist based on examination type, should they exist.

Table 2. Proportion of Scores Between Exam Types.

The number and percentage of exam packages assigned a degree of agreement (DoA) score from 1 to 4 is separated by exam type and tabulated (ABDUS: Complete Abdomen Sonogram; PELUS: Complete Pelvic Sonogram; OBS3: High Risk Obstetrical Sonogram).

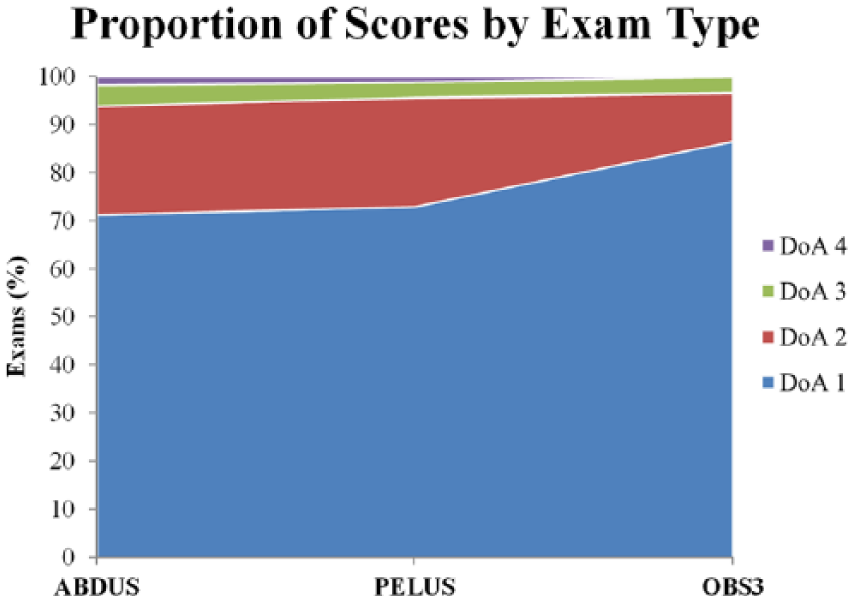

An area graph constructed from the data in Table 2 is given, which represents the distribution of DoA scores for exam packages in each of the three examination types. A greater proportion of DoA 1 exam packages from High Risk Obstetrical (OBS3) examinations is readily visible in Figure 4.

Figure 4. The proportion of degree of agreement (DoA) scores by exam type is shown by this area graph. A greater proportion of DoA 1 scores for the High Risk Obstetrical exam type (OBS3) is evident.

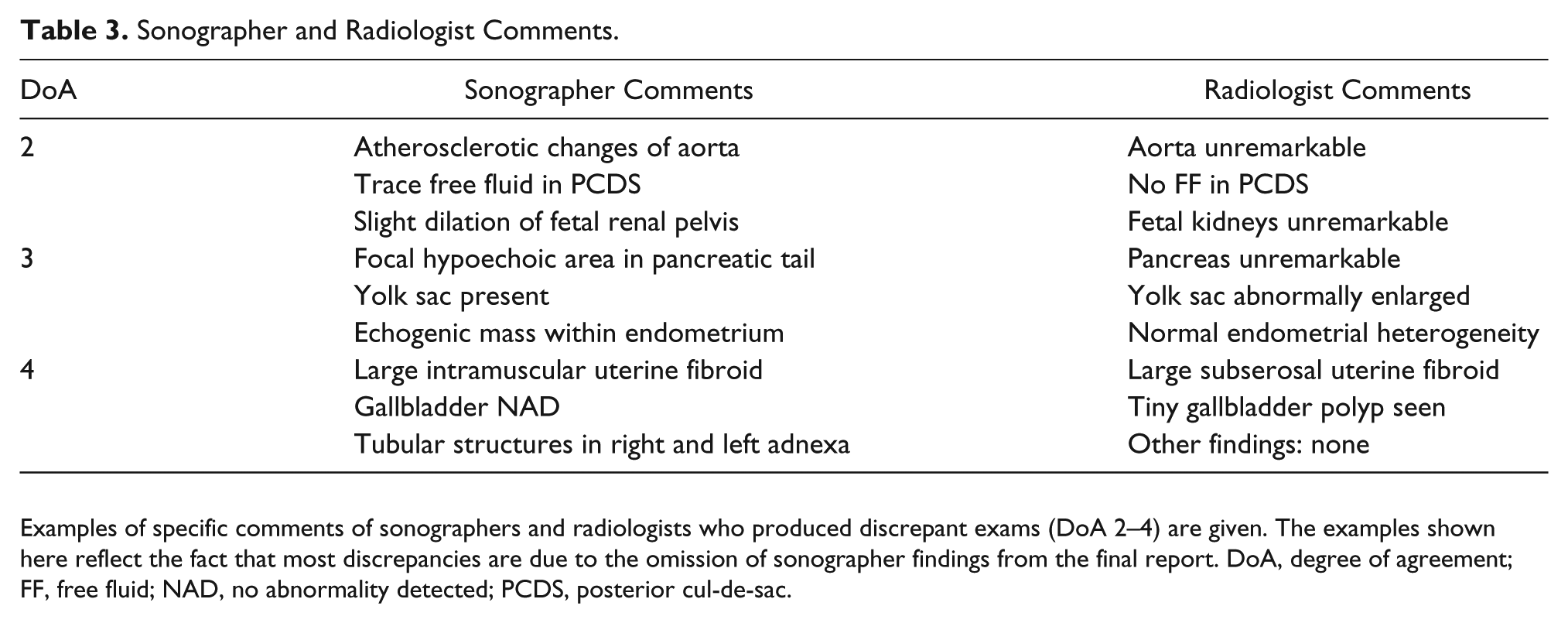

Data analysis identified 107 discrepant exam packages. Examples of the specific sonographer and radiologist comments that produced these discrepancies are provided in Table 3.

Table 3. Sonographer and Radiologist Comments.

Examples of specific sonographer and radiologist comments that produced discrepant exams (DoA 2–4) are given. The examples shown here reflect the fact that most discrepancies are due to the omission of sonographer findings from the final report. DoA, degree of agreement; FF, free fluid; NAD, no abnormality detected; PCDS, posterior cul-de-sac.

Discussion

The results of this research reveal 75% agreement between the technical impression sheets of sonographers and the reports of radiologists. The majority (80.4%, 86/107) of discrepancies were of minor significance (DoA 2) and usually involved disagreement on incidental findings or findings not directly related to the indication of the examination. For example, a significant proportion of level 2 discrepancies in Complete Abdomen examinations were related to disagreement between sonographer and radiologist on the enlargement of the liver. This specific example could be attributed to poor standardization of measurement protocols or controversy surrounding the definition of the upper limit of normal.

In general, the findings suggest that radiologists tend to omit the comments of sonographers rather than add to the findings recorded in the technical impression sheet. For example, atherosclerotic development of the aorta reported by the sonographer in Complete Abdomen scans is often omitted from the final report. This could be because this finding is incidental and expected in the elderly patient; however, this could not be confirmed in the present study given the absence of patient demographics.

High Risk Obstetrical examinations produced the greatest agreement between sonographers and radiologists, with 86.5% of exam packages revealing complete consensus. However, this could be due to the fact that many of these examinations were simple follow-up anatomy evaluations and consisted mainly of standard measurements. The authors cannot conclude any definite trends in agreement between sonographer and radiologist based on the type of examination being performed.

The results of this study cannot be directly compared to the findings of Riley et al., 14 who discovered 95% agreement (DoA 1) between the sonographer technical reports and the radiologist reports in musculoskeletal sonography. In the study by Riley et al., actual sonographic images were available for consultation, and the independently reporting sonographer produced differential diagnoses in an official report, as opposed to a technical impression sheet.

Limitations

This study has shortcomings that limit its ability to accurately assess the performance of sonographers in general sonography. Foremost, this review comprises a qualitative analysis of medical records on a small scale (n = 433). As a result, it is not meant to inform future practice guidelines but instead to provide a working model to initiate large-scale internal audits. Also, the examination types included in this article do not constitute the whole of generalist sonography; other procedures carried out by sonographers, such as vascular and musculoskeletal studies, were not included in this article due to logistical limitations. This study involved the retrospective analysis of records that are meant to represent imaging findings; it is difficult to determine the potential of discrepancies to alter patient care/management, as well as to ascertain the true origin of the discrepancy, without the greater clinical context, patient demographics, and actual images available for consultation.

The ranking system developed by Riley et al. 14 was used in this project to assess the degree to which the technical impression sheet of the sonographer agrees with the report of the radiologist. This system, although originally designed for an independently reporting sonographer, allowed the authors to assign DoA scores in a systematic and consistent manner. However, to effectively apply this technique to general sonography in Canada, where sonographers are expected only to report findings and not give differential diagnoses, an amendment is suggested. We recommend that the ranking system be altered in a way to reflect the fact that the main indication for an examination is the primary consideration for assessing agreement between sonographer and radiologist. Incidental findings should only have an effect on DoA scores when they are of significant clinical relevance with the potential to alter patient care. However, the audit performed in the present study may facilitate a dialogue between sonographers and radiologists as to the relevance of recording findings unrelated to the clinical question.

Conclusions

The results of this research should be interpreted with caution, keeping in mind that this audit was a retrospective review restricted to medical records that represent imaging findings. As a result, the findings are vulnerable to inaccuracy due to transcription errors and a lack of clinical context.

This research reveals 75% agreement between sonographer and radiologist on imaging findings as they are recorded in technical impression sheets and radiologist reports. When discrepancies arise, they are usually minor and involve the omission of incidental/additional findings by the radiologist.

For accurate analysis of sonographer performance, we suggest that blinded retrospective review of technical impression sheets and reports is not an effective auditing method for general sonography. The lack of clinical context and actual sonographic images was a significant barrier to confidently establishing how much the conclusions of the radiologist agreed with the conclusions of the sonographer. However, the approach described in this article may be suitable for large-scale audits of sonographer performance and reporting practices, since individual review of images and findings may present significant logistical challenges.

Footnotes

Acknowledgements

The authors acknowledge Professor Laura Thomas, whose extensive clinical knowledge and helpful guidance were vital to this project’s success. We also acknowledge Stephanie Varga for her tireless efforts during the data analysis process.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplementary material is available online with this article.

References
