Abstract

Assessing clinical, technical, and analytical competence is a challenging task in sonography education. The assessment process is typically subjective, which makes it difficult to evaluate students accurately. This study examined the use of an objective structured clinical examination (OSCE) for diagnostic medical sonography students. The objectives were to determine (1) the reliability of the OSCE in testing sonography students, (2) the validity of the OSCE in measuring the performance of sonography students at different levels in their education, and (3) the usefulness of the information received from this test. The examination consisted of two components, (1) image analysis and (2) scanning, and involved first- and second-year sonography students. The results indicated that an objective assessment of technical and analytic skills could play an integral role in the evaluation process for sonography education.


The primary goal of sonography education programs is to produce competent sonographers. In most education programs, the assessment of sonography students typically consists of subjective evaluations (of both overall clinical performance and clinical competence) and objective standardized multiple-choice tests. The problem with relying only on these evaluation tools is that subjective clinical evaluations can be unreliable because variables are not controlled, and the rigor of multiple-choice testing depends largely on the knowledge base and test construction experience of the sonography instructor. 1

Given the problems stated above, it is difficult for educators to ensure that student knowledge, upon graduation, is complete in both clinical skills and didactic knowledge. Currently, certification is suggested to be the minimum standard of practice in sonography, yet national board certification is not a requirement for graduation from an accredited sonography educational program or to practice clinical sonography. 2 When one performs sonography, the individual should be clinically competent to safely perform the examination. This competence cannot be measured through multiple-choice question tests alone. Sonography educational programs can help to ensure that the individual is clinically competent; however, the assessment methods employed by sonography educational programs are limited to subjective clinical evaluations and objective multiple-choice questions. 3 Therefore, sonography students may graduate from their respective programs with widely varied amounts and types of clinical training and objective assessment. This could lead to suboptimal examinations for patients that could result in legal action or, in the worst case, death of a patient. 4

The purpose of this study was to determine whether an objective structured clinical examination (OSCE) for sonography students would aid in objectively assessing clinical competence. This study was based on a similar study that was done using an OSCE to assess echocardiography fellows’ technical and interpretive proficiency skills in cardiac sonography. 5

Background

The clinical competency examination that the authors researched and implemented in this study is the OSCE. The OSCE assesses clinical competence by using a multidimensional practical examination of clinical skills. 6 The examination consists of students rotating through a series of stations. At each of these stations, the student performs certain clinical tasks and is observed and evaluated using a standardized checklist. 7

In most medical training programs (including sonography), faculty measure student performance through standard multiple-choice tests and subjective faculty evaluations. 6 Multiple-choice question tests can verify didactic knowledge and analytical skills to a certain extent. However, they cannot fully assess technical proficiency because they give students no way to demonstrate that they can actually perform a sonographic examination. 8 Faculty members use other assessment tools in the clinical setting, including subjective evaluations and competencies. Unfortunately, the environmental factors present in the clinical setting are varied and nonreproducible from student to student. When a clinical instructor is reluctant to give a student a “low” score, these subjective tools may be unreliable or may yield a higher score than the student’s actual performance warrants. 6 Given the challenges of fully assessing clinical competency, an additional method of objective clinical assessment should be incorporated to bridge the gap between multiple-choice question tests and subjective clinical evaluation tools. The addition of this objective clinical assessment could help to ensure that sonography students are prepared to enter the sonography profession.

An OSCE should consist of clearly delineated tasks that simulate the experience of the student in the clinical setting. These tasks should be performed on standardized patients in a controlled clinical setting to ensure reproducible conditions, which lend a larger degree of objectivity to the examination. These tasks may include (1) obtaining a detailed and relevant history, (2) identifying the patient problem and reaching a diagnosis, (3) identifying the appropriate investigative approaches, and (4) interpreting the results of the investigation. 8 These items would be useful to assess in sonography educational programs because sonography students are expected to perform these four core tasks upon graduation.

Basing the assessment of sonography student performance on these core tasks would provide the educator with a more objective idea of how students are performing in the clinical setting. Sonographers take a detailed clinical history (task number 1). From that clinical history, sonographers come to a potential diagnosis, or at least know what disease processes to look for prior to starting the examination (task number 2). Sonographers then tailor the examination based on what is seen (task number 3). Finally, sonographers internally analyze the results of the findings and release those technical findings to the interpreting physician (task number 4). All of these items should be assessed in the clinical performance of sonography students throughout the duration of their training.

In the process of participating in a clinical objective examination, the students are able to prove that they are competent in several domains, including interpersonal skills, problem-solving abilities, physical diagnosis skills, and technical skills. 6 The OSCE allows students to perform in controlled clinical situations that cannot be translated to or evaluated within the confines of a multiple-choice test. The OSCE also demonstrates to students that being clinically competent is valued by faculty members. Last, the OSCE can aid in the identification of problem areas in both the student skill set as well as in the curriculum. 7

In an OSCE, the patients that are used for the examination are standardized patients (SPs). An SP is an individual who is usually paid by the institution to act as a mock patient. 7 A benefit is that with an SP, the clinical problem is the same; therefore, all students are expected to evaluate the same pathology, rendering reproducible evaluation results and objective assessments of clinical skills. Another advantage of using an SP is that the student can practice on this patient without the worry of tiring the person, aggravating the illness, or causing pain. 8 SPs are being used to test medical, pharmacy, physical therapy, physician assistant, genetic counseling, and veterinary medicine students. Because the use of SPs in teaching and assessment is proving effective, more medical education programs are using these individuals to test students’ clinical abilities. 9

Using the information gained from the aforementioned studies, the authors designed an OSCE for sonography students. The objectives of this study were to (1) determine the reliability of the OSCE to test sonography students, (2) determine the validity of the OSCE in measuring the performance of sonography students at different levels in their education, and (3) determine the usefulness of the information received from this test. 6

Methods

Participants in this study included first- and second-year sonography students (N = 25) enrolled in an accredited diagnostic medical sonography program. Institutional review board (IRB) approval was applied for, and exempt status was granted. The OSCE was performed on SPs. The SPs served as patient models for the scanning component of the OSCE, each as either a carotid or a lower extremity venous model. Two registered vascular sonographers served to establish the standard of comparison. These sonographers scanned the SPs prior to the students, noting any pertinent clinical findings. The main incidental findings were noted in the carotid sonographic examinations. These findings included minimal plaque and intima-media thickening. The only findings in the lower extremity venous studies were duplicated femoral veins in some of the SPs.

The vascular sonography OSCE consisted of two components: (1) image analysis and (2) vascular sonography scanning. The image analysis component consisted of five cases displayed in a PowerPoint format on a laptop computer (all cases had patient identifiers removed). The vascular pathology cases included carotid artery stenosis, upper extremity venous thrombus, lower extremity venous thrombus, lower extremity arterial stenosis, and a carotid examination demonstrating a subclavian steal. Students were asked questions for each case and were also asked to identify and record the major relevant findings. The students had 60 minutes to complete this station.

The scanning component consisted of two 30-minute scanning sessions: (1) carotid and (2) lower extremity venous examinations. These examinations were selected because they are commonly performed in many sonography departments. In the interest of time, the students were asked to scan only the right carotid and only the right leg. The students were given a checklist of the structures that they needed to demonstrate in both the longitudinal and transverse planes for both examinations. The carotid examination was performed on an Acuson-Sequoia (Siemens, Malvern, Pennsylvania), and the lower extremity venous examination was performed on a Philips HDI 3000 (Philips Healthcare, Bothell, Washington). The images obtained by the students and sonographers were digitally archived. These particular machines were used for the OSCE because all students used these same pieces of equipment throughout their time in the program, during dedicated scanning laboratory time.

After the examinations were performed, the students were asked to write down the velocities and findings within the carotid on a study sheet, as well as any findings on the checklist given at the beginning of the lower extremity examination. After the examinations were completed, the primary investigator evaluated the students’ performance of both the carotid and lower extremity venous examinations by using an evaluation checklist and by comparing the students’ images to the sonographers’ images (Figures 1 and 2). Students had to receive a cumulative score of 80% on both components to pass the examination. This grade was part of the students’ clinical education grade for the semester during which the test was administered. The grading criteria and objectives for the OSCE were formulated based on criteria provided by the Society of Diagnostic Medical Sonography (SDMS) Scope of Practice and the National Education Curriculum (NEC; Tables 1 and 2).10,11

Figure 1. A student’s images compared to the sonographer’s images. Notice that in these images, the student and the sonographer accurately displayed carotid plaque and have similar image quality.

Figure 2. A student’s images compared to the sonographer’s images. Notice that in these images, the student does not display the same quality of image compared to the sonographer and also misses a subtle plaque in the carotid bulb.

Table 1. Assessment Tool Used for Evaluating the Students on the Lower Extremity Venous and Carotid Scanning Stations

Overall Grade: ________ Pass [___] Fail [___] (Passing is anything above a total score of 23)

Comments: ___________________________________________________

Evaluating Sonographer’s Signature: __________________ Date: __________

Note: Use to evaluate each examination. There are two examinations for a potential total score of 28.
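The pass rule for the scanning stations can be sketched in a few lines of Python. This is a minimal illustration, not the authors' grading procedure: the function name `scanning_result` is hypothetical, and the 14-point maximum per examination is an assumption inferred from the stated potential total of 28 across two examinations. The sketch applies the 80% threshold given in the Methods, under which 23 of 28 is the lowest passing total.

```python
# Hypothetical sketch of the scanning-station pass rule.
MAX_PER_EXAM = 14     # assumption: 28 total points split evenly over 2 exams
PASS_FRACTION = 0.80  # cumulative passing threshold stated in the Methods


def scanning_result(carotid_points, venous_points):
    """Return (total points, percent, passed) for the two scanning examinations."""
    for points in (carotid_points, venous_points):
        if not 0 <= points <= MAX_PER_EXAM:
            raise ValueError(f"points must be between 0 and {MAX_PER_EXAM}")
    max_total = 2 * MAX_PER_EXAM
    total = carotid_points + venous_points
    percent = 100 * total / max_total
    # The form's wording ("above a total score of 23") could be read more
    # strictly; this sketch follows the 80% rule instead.
    passed = total >= PASS_FRACTION * max_total
    return total, percent, passed


if __name__ == "__main__":
    total, percent, passed = scanning_result(carotid_points=12, venous_points=11)
    print(f"total={total}, percent={percent:.1f}%, passed={passed}")
```

Keeping the threshold as a fraction rather than a fixed point value means the same rule still applies if the checklist length changes.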

Table 2. Comparison Between the OSCE Assessment Objectives to the NEC and SDMS Scope of Practice

NEC, National Education Curriculum; OSCE, objective structured clinical examination; SDMS, Society of Diagnostic Medical Sonography.

Data Analysis

Validity and reliability are two measures of the quality of a test or examination. 12 Validity describes how well an educational assessment measures the function for which it is being used. 12 Several different types of validity may be evaluated. This examination was evaluated for content and construct validity because these two types of validity require a systematic and rational analysis. Content validity is present when the tasks or objectives being measured represent accepted content standards in a particular subject area. 12 To achieve content validity, this examination was developed using protocols that follow the Society for Vascular Ultrasound (SVU) guidelines for the performance of carotid and lower extremity venous examinations.13–16 Construct validity is present when the test is found to measure the ability or trait for which it was created. 12 To support construct validity, questions and tasks were formulated based on the knowledge requirements for sonographers outlined in the NEC and in the SDMS Scope of Practice.10,11 Reliability is also an important trait to measure. It is present when an examination is found to measure performance in a consistent manner across a particular group of students. 12 Reliability for the OSCE was estimated by calculating the alpha coefficient. 12
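The alpha coefficient mentioned above can be illustrated with a short Python sketch of the standard Cronbach alpha formula. The function name, the treatment of each station as one "item," and the sample scores are all hypothetical. The study does not state which items entered its calculation, so this shows the general technique rather than the authors' analysis.

```python
from statistics import variance


def cronbach_alpha(score_rows):
    """Cronbach's alpha for rows of per-item scores (one row per student).

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(score_rows[0])
    if k < 2 or len(score_rows) < 2:
        raise ValueError("need at least two items and two students")
    if any(len(row) != k for row in score_rows):
        raise ValueError("every student needs a score for every item")
    item_columns = list(zip(*score_rows))
    item_variances = [variance(column) for column in item_columns]
    total_variance = variance([sum(row) for row in score_rows])
    if total_variance == 0:
        raise ValueError("total scores have no variance; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


if __name__ == "__main__":
    # Hypothetical percent scores: (image analysis, carotid, venous) per student.
    scores = [
        (90, 71, 86),
        (100, 93, 100),
        (80, 64, 79),
        (95, 86, 93),
    ]
    print(f"alpha = {cronbach_alpha(scores):.2f}")
```

Alpha approaches 1 when students who score well on one item also tend to score well on the others.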

Construct validity was assessed by comparing the total scores of the OSCE with didactic final scores from two different courses in the vascular curriculum (Vascular I and Vascular II). These courses cover the didactic material of both the cerebrovascular and peripheral vascular systems. The purpose of comparing these scores was to see whether the clinical (OSCE) test and the didactic tests measure the same construct or trait. The strength of this relationship was calculated using the Pearson correlation. 12 The Pearson correlation evaluates two sets of scores on tests that are thought to measure the same abilities or traits. 12 The OSCE reliability was determined using the Cronbach alpha coefficient, where 0 equals poor reliability and 1 equals excellent reliability. 1 The alpha coefficient is useful for measuring overall reliability of tests that are administered only once. A well-constructed test should have an alpha coefficient of .70 or greater. 12
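A Pearson correlation between two sets of scores can be computed as in the following sketch. The function name `pearson_r` and the paired scores are hypothetical and serve only to show the calculation; they are not the study's data.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two lists of equal length with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squares of the deviations from the mean.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return sxy / sqrt(sxx * syy)


if __name__ == "__main__":
    # Hypothetical paired scores for five students.
    osce_totals = [82, 90, 75, 96, 88]
    didactic_finals = [91, 85, 89, 93, 80]
    print(f"r = {pearson_r(osce_totals, didactic_finals):.2f}")
```

Values near 1 or -1 indicate a strong linear relationship, and values near 0 indicate that the two tests rank students differently.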

Results

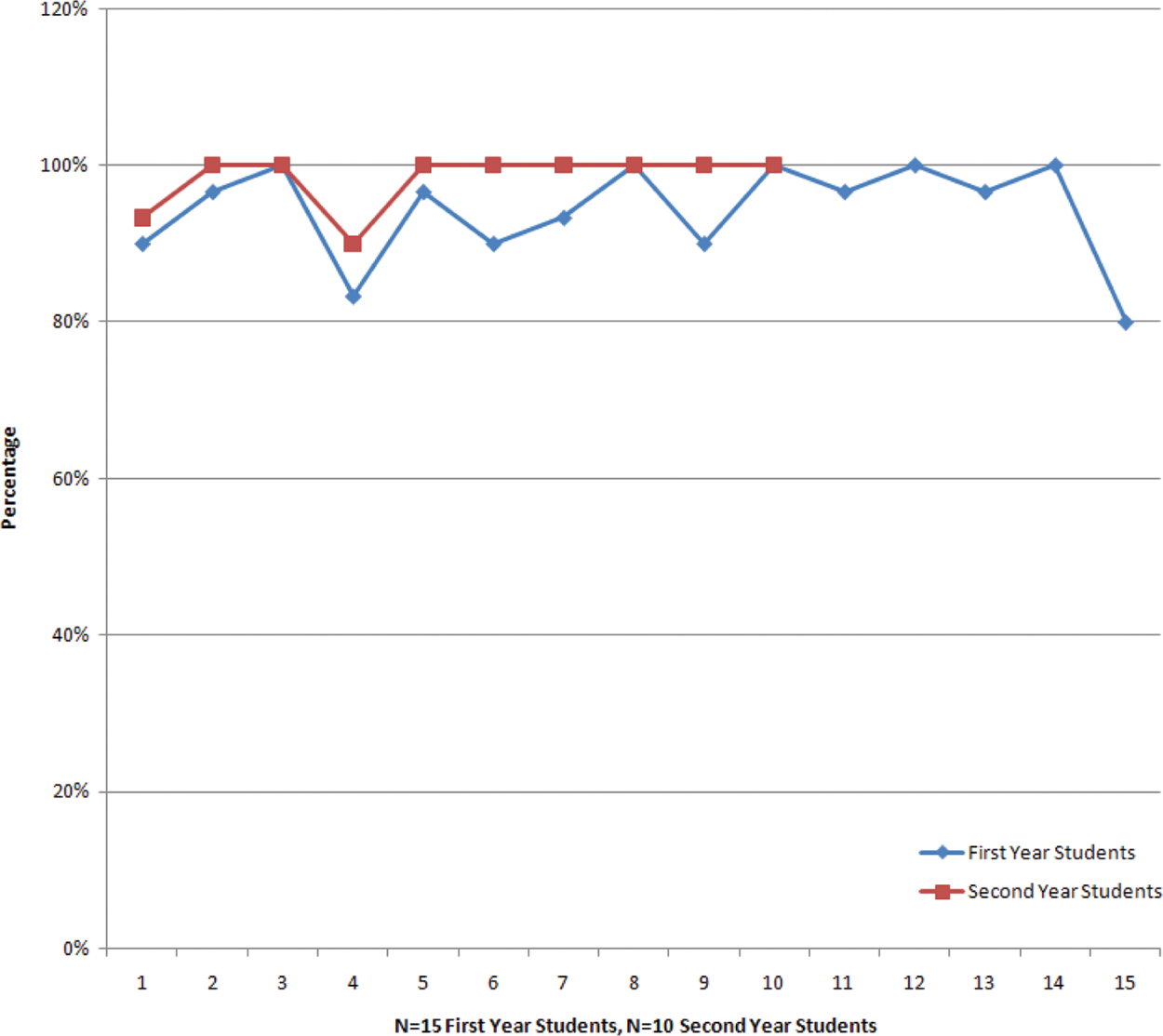

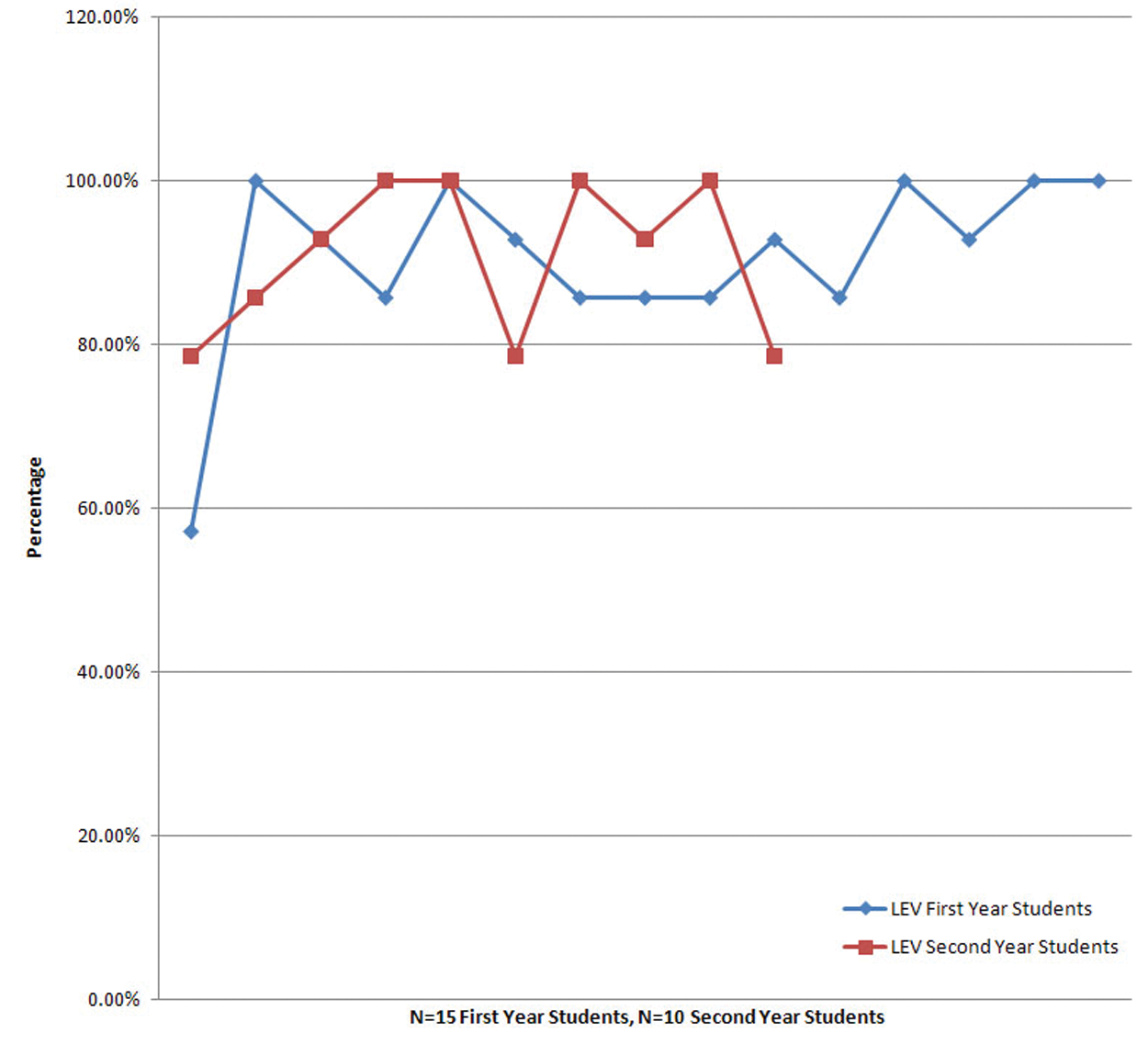

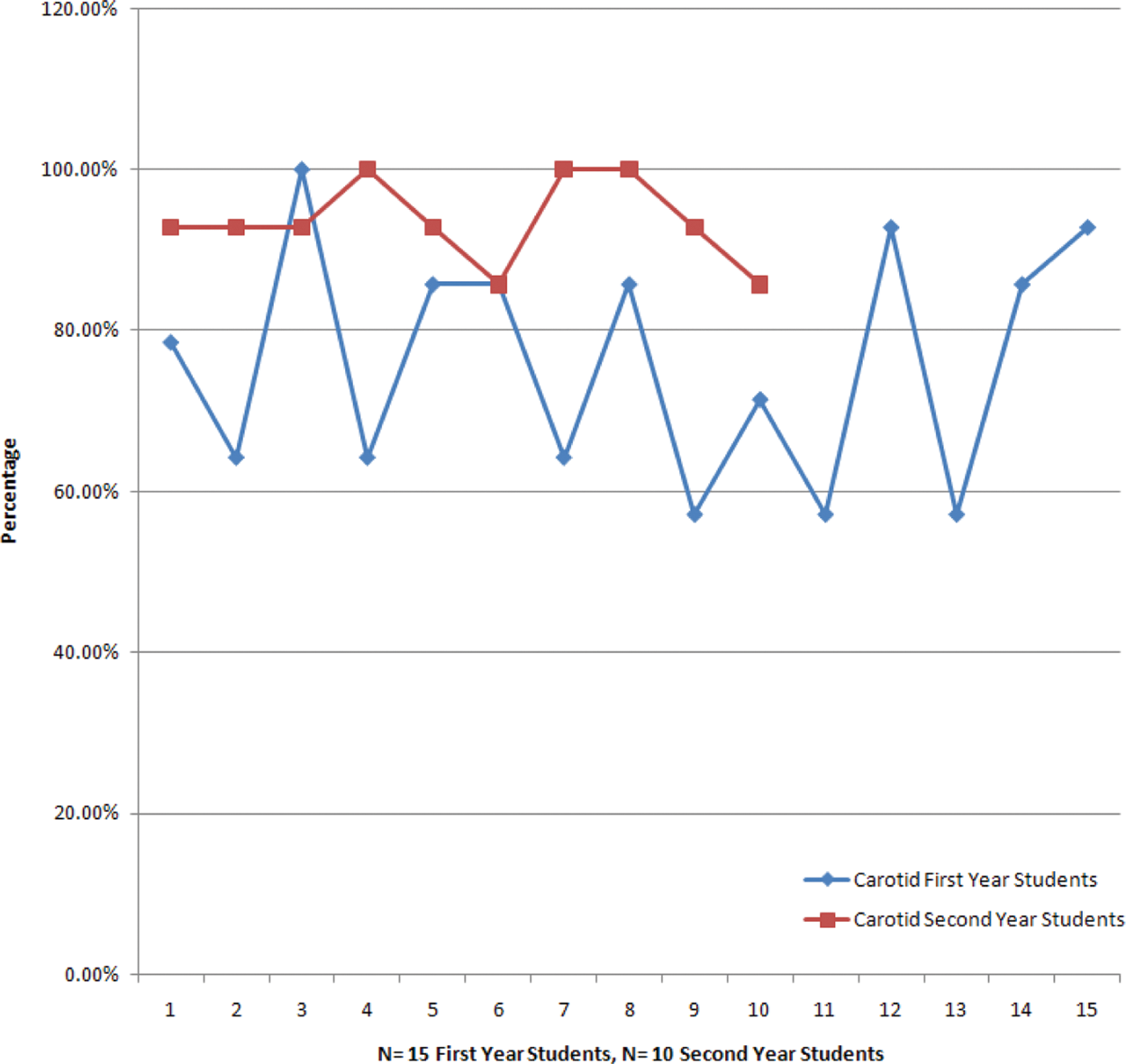

In all, 25 sonography students (first year [n = 15]; second year [n = 10]) participated in the vascular OSCE. The overall average on the image analysis station was 94.0% for the first-year students and 98.3% for the second-year students (Figure 3). The scanning station had a wider range of scores. Between the two scanning stations, the first-year students overall scored higher on the lower extremity venous examination than on the carotid examination (lower extremity venous examination first-year student average = 90.5%; carotid examination first-year student average = 76.2%). The second-year students scored higher on the carotid examination than on the lower extremity venous examination (lower extremity venous examination second-year student average = 90.7%; carotid examination second-year student average = 93.6%). As expected, the second-year student scores averaged higher than the first-year student scores (Figures 4 and 5 show the difference in scanning scores between the first- and second-year students).

Figure 3. The comparison of first-year (n = 15; blue line) and second-year (n = 10; red line) student scores on the image analysis station. Note scores ranged from 80% to 100% for the first-year students and from 90% to 100% for the second-year students.

Figure 4. The comparison of first-year (n = 15; blue line) and second-year (n = 10; red line) student scores for the lower extremity venous scanning station. Note scores ranged from 57.1% to 100% for the first-year students and from 78.5% to 100% for the second-year students.

Figure 5. The comparison of first-year (n = 15; blue line) and second-year (n = 10; red line) student scores for the carotid scanning station. Note scores ranged from 57.1% to 92.8% for the first-year students and from 85.7% to 100% for the second-year students.
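The cohort averages reported above can be combined into a single average for all 25 students by weighting each cohort by its size. The sketch below is a hypothetical helper (`pooled_mean` is not from the study). The pooled values it prints are derived here from the rounded cohort averages, so they are approximate and are not figures reported by the authors.

```python
def pooled_mean(groups):
    """Size-weighted mean of group averages; groups is a list of (n, mean)."""
    total_n = sum(n for n, _ in groups)
    if total_n == 0:
        raise ValueError("at least one group must contain students")
    return sum(n * mean for n, mean in groups) / total_n


# Cohort sizes and average percent scores as reported in the Results:
# (first-year n, first-year mean), (second-year n, second-year mean).
reported_averages = {
    "image analysis": [(15, 94.0), (10, 98.3)],
    "lower extremity venous": [(15, 90.5), (10, 90.7)],
    "carotid": [(15, 76.2), (10, 93.6)],
}

if __name__ == "__main__":
    for station, groups in reported_averages.items():
        print(f"{station}: {pooled_mean(groups):.1f}%")
```

Weighting by cohort size matters here because the first-year group is half again as large as the second-year group, so a simple average of the two cohort means would overstate the pooled carotid score.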

Construct validity was supported by the objectives measured in the scanning and interpretation stations. The objectives for the OSCE assessment tool were formulated based on the NEC and SDMS Scope of Practice 10,11 and included knowledge and abilities that sonographers should exhibit through the proper performance of the carotid and lower extremity venous examinations (Tables 1 and 2). In addition, the relationship between the OSCE scores and the didactic final scores from both the Vascular I and Vascular II courses was quantified using a Pearson correlation. The OSCE and the multiple-choice tests were shown to have only a weak positive correlation (.23). This supports the idea that multiple-choice tests alone are not able to adequately assess student performance in the clinical setting. The OSCE tests students for different skills and abilities than the multiple-choice tests do, and the multiple-choice questions test students for a different set of knowledge and abilities than the OSCE does. Together, these tests may give a more complete and accurate evaluation of a student’s abilities as a sonographer than either type of test can reveal alone. The preliminary data illustrate that it is valuable to test students in both the didactic and objective practical setting to ensure that students can demonstrate not only their knowledge base but also their scanning ability and the ability to analyze sonographic images.

The OSCE was also found to be a reliable test through the calculation of the alpha coefficient. The alpha coefficient was .72, which exceeds the .70 threshold for a well-constructed test and demonstrates acceptable test reliability for a nonstandardized test. In this instance, the OSCE was shown to be a reliable and valid evaluation tool for sonography students.
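As a rough aid to interpreting the reported correlation of .23 between the OSCE and the didactic scores, the squared correlation gives the proportion of variance in one set of scores that is linearly accounted for by the other. This is a standard statistical identity applied to the reported value, not a calculation presented by the authors:

```latex
r^{2} = (0.23)^{2} \approx 0.053
```

Under this reading, roughly 5% of the variance is shared between the two types of test, which is consistent with the interpretation that they assess largely different abilities.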

Discussion

Measuring outcomes in medical education programs and their relation to on-the-job performance are issues in medical education that require further study. 17 Providing an OSCE in addition to clinical evaluations and multiple-choice question tests, during the course of a sonography program, could reveal if a student is capable of performing sonography independently. The addition of an OSCE would act as a complement to testing and subjective evaluations that are already in place. 17

By providing an OSCE, sonography educators can identify with more confidence that students in the program are knowledgeable both academically and clinically. Employers may then have more assurance that a graduate-level sonographer not only possesses the ability to pass board examinations but is also clinically competent.

A few limitations and disadvantages were also identified within the course of this study. One of the main disadvantages of administering the OSCE is the cost in both time and money. The OSCE can take up a significant amount of faculty time depending on how many stations are incorporated and how many students are taking part in the examination. The monetary cost of an OSCE can also vary based on the number of students, standardized patients, evaluators, and other faculty involved. 7 This particular OSCE, which involved 25 students, 15 SPs, and 4 faculty, cost the program approximately $7000 to administer. There are a few ways to reduce the cost of the OSCE. One would be to use sonography students as models instead of the SPs. The SPs are paid a certain amount per hour, whereas sonography students could serve as models at no charge. Another way to reduce the cost would be to hold the examination in a facility that does not charge a room fee.

Other disadvantages to the OSCE include the amount of variability within the examination. Two main sources of potential variability with an OSCE include the examiner and the patient. If the students are expected to test on different patients, a large source of variability could be introduced. Also, if students are evaluated by different evaluators, there could be a large variability in evaluator ratings, regardless of how well the student performs. 8 In this study, these variability challenges were addressed through the use of a single evaluator for all students and the use of the same set of SPs for the carotid examination and the lower extremity venous examination.

Conclusion

This study has shown that an OSCE has the potential to be both valid and reliable in the evaluation of sonography students. In addition, the use of an OSCE in sonography education may provide a much more complete illustration of how well a student will perform in the clinical setting than the use of subjective clinical evaluations and objective multiple-choice question testing alone. This OSCE rendered valuable insight into the progression of sonography students’ technical and interpretative competence, which had not been adequately demonstrated through subjective clinical evaluations. The use of the OSCE may be the “missing link” in the assessment of sonography students, providing educators with the information needed to aid students in becoming more competent both clinically and didactically, ultimately providing patients with a higher-quality sonographic examination.

Footnotes

Acknowledgements

The authors thank Jane Banning, Dr. Michael Subkoviak, and Dr. Mark Kliewer for their time, assistance, and expertise with this project. The authors also thank the International Foundation for Sonography Education and Research (IFSER) for granting Sara Baker the Bracco Diagnostics, Inc. Research Award to aid in funding this project.

The author(s) declared no potential conflicts of interest with respect to the authorship and/or publication of this article.

The authors received financial support for the research through the Bracco Diagnostics, Inc. Research Award granted by the International Foundation for Sonography Education and Research (IFSER).