Abstract

As artificial intelligence (AI) and its applications continue to reshape our world, understanding its historical roots becomes increasingly crucial. However, this historical perspective, focused on documenting what actually happened from the most objective point of view possible, is incomplete if we do not also capture how different groups remember those events. For this reason, we argue in this paper for developing a collective memory of AI along three lines (as a body of knowledge, as an attribute, and as a process), which will complement the historical task. The result will be a more comprehensive vision of the development of AI that incorporates a more humanistic perspective, which will be crucial to understanding how society assesses AI.

Introduction

The pervasive influence of artificial intelligence (AI) and its applications continues to reshape our world. Often described as the “Fourth Industrial Revolution,” AI follows the digital or third industrial revolution, which combined technological innovations to create a globalized computer network (Moll, 2021). Given the growing societal, scientific, and political relevance of AI (Casado, 2025), we need to understand its historical roots and development, especially in Europe, which has played a major role in its evolution.

Current accounts of AI history emphasize a linear narrative of progress, occasionally interrupted by the so-called “AI Winters” (Toosi et al., 2021). However, this narrative is incomplete. The rapid evolution of AI means that many of its key contributors have witnessed important transformations within their lifetimes, and their testimonies represent valuable sources for constructing a more nuanced history of the field.

This is especially important in Europe since, in comparison with the USA and China, it is a mosaic of over 50 countries, each with its own languages, cultures, and institutional practices. Current approaches to AI history do not adequately reflect this richness and complexity, and we claim that it is necessary to preserve it. This paper argues for the creation of a European Collective Memory of AI (ECMAI), which aims to gather and interpret the diverse European experiences with AI.

Our main research question is: “How can a European Collective Memory of AI be constructed?” Drawing on the distinction between past, history, and memory, we propose three lines of research: (i) ECMAI as a body of knowledge; (ii) ECMAI as an attribute; and (iii) ECMAI as a process (Roediger & Abel, 2015).

Past, History, and Memory

Past, history, and memory are three closely related concepts, yet they have important differences. The past refers to events that actually happened at a given moment. It is likely impossible for an individual to fully grasp it, as each individual is inevitably bound by their own perspective. Nonetheless, it is the root from which history and memory are constructed.

History is an academic and multifaceted concept. On the one hand, there are historicist and objectivist perspectives, such as that of Leopold von Ranke, who claims that the study of the past is the recapitulation of evidence to understand what actually happened (von Ranke, 1887). On the other hand, narrative approaches see the study of history as a way to interpret the meaning of past events. Friedrich Hegel, for example, viewed history as the rational development of humanity toward total freedom (Hegel et al., 1955); Karl Marx emphasized history as the struggle between classes (Marx & Engels, 1848); Paul Ricoeur, from a hermeneutic perspective, conceptualized history as the task of probing past events to understand the meaning of human actions and their symbolism (Ricoeur, 1965); and Michel Foucault considered history a means to understand how power structures have changed (Foucault, 1970). Both approaches presuppose that history is systematic (following a specific methodology) and, as far as possible, based on evidence (primary or secondary) about the events studied. History aims to explain events of the past and their consequences.

In the field of AI, several initiatives pursue this goal. Toosi et al. (2021) highlight the crucial moments in the development of AI using the “summer and winter” metaphor. The Britannica article outlines a set of milestones presenting AI as a progressive continuum. The AI100 initiative by Stanford seeks to study the impact of AI on society, emphasizing the academic perspective and aiming to develop a sort of unified historical account of AI.

Memory, on the other hand, is also a scientifically complex concept. There are various forms of memory, such as historical memory, collective memory, working-class memory, etc. Despite their diversity, they share two fundamental characteristics: (i) they concern representations and experiences, and (ii) they concern individuals and groups of people. This paper focuses on the concept of collective memory, defined as the memories, representations, and interpretations that a particular group has about past events, how they are transmitted from one generation to another, and how they bear on the collective identity of the community (Halbwachs, 2020).

Beyond history and philosophy, collective memory has been studied in several disciplines. In cognitive science (Hirst et al., 2018), researchers investigate how personal experiences are transmitted from one generation to the next. In psychology, the concept of collective memory is contrasted with the notions of remembering or historical fact (Wertsch & Roediger, 2008). In the social sciences, collective memory is analyzed as a meaning-making process embedded in a community (O’Connor, 2022). Despite their different methodologies, all these fields share the difficulty of providing a single, definitive definition of collective memory.

For the purposes of this paper, we assume that collective memory refers to “the individual memories shared by members of a community that bear on the collective identity of that community” (Wertsch & Roediger, 2008). We do not make a priori assumptions regarding the size of the community, whether small (couples, families, etc.) or large (nations, large congregations, etc.), but we assume that practice serves as a means of capturing evidence about individual memories.

Additionally, we assume that collective memory is intersubjective, shared, selective, dynamic, identity-forming, based on testimonies, and transmitted across generations. Methodologically, it can be studied in two complementary ways: a top-down approach, which begins by identifying persistent collective memories within a group and the individuals who carry them; and a bottom-up one, which focuses on the microlevel psychological processes that generate those narratives (Hirst et al., 2018). This proposal is grounded in the first methodology and is the result of an empirically guided interpretative process.

Thus, history and memory complement each other. Both aim to capture different aspects of the past, and both are necessary to gain a more complete picture of the development of AI in Europe, given its melting pot of cultures. AI is a relatively young field, around 70 years old, and although everything is well documented, we cannot assume that there are no memories to be transmitted from one generation to another. Its rapid development has brought about major changes in a very short period of time, accelerating the processes of cultural transmission and the construction of collective memory.

In this paper, we present three complementary approaches or lines for the development of a collective memory of European AI. These are based on an empirical methodology, made possible by the extensive documentation of AI that is available. The paper is structured as follows: Section 2 explains the dual nature of AI, as both source code and software, which plays a significant role in the development of its collective memory; Section 3 proposes the aforementioned three approaches to studying collective memory and is divided into three subsections: Subsection 3.1 describes the proposal of ECMAI as a body of knowledge in computing; Subsection 3.2 describes ECMAI as a set of attributes and values of software; and Subsection 3.3 outlines ECMAI as a way to capture the inherent dynamic properties of collective memory; Section 4 outlines a pipeline for conducting research on ECMAI; and, finally, Section 5 summarizes the main conclusions of this paper.

Double Nature of AI: Software and Source Code

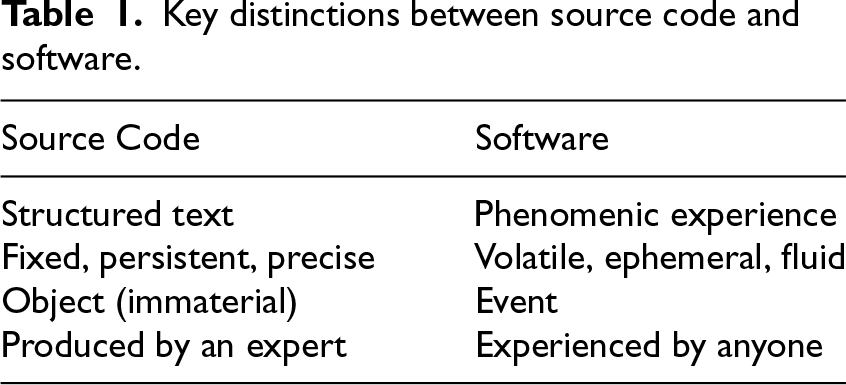

An AI system is a piece of software; that is, it is the experience a user has when a computer interprets the instructions contained in the source code (Gonzalez-Perez, 2025). Source code can be regarded as a structured text that determines the user experience when software is used. However, this experience is not entirely predetermined, as each user brings their own personal experience to it. An extreme example of this phenomenon is the use of a chatbot based on large language models (LLMs): even with the same version of the model and the same purpose, different users will have different experiences depending on the prompt. The same applies to opening a basic word processor, where different users can have distinct personalizations of the same program. Table 1 provides an overview of the key distinctions between source code and software (Gonzalez-Perez, 2025).

Table 1. Key distinctions between source code and software.


We can therefore characterize software as follows:

It is a phenomenal experience. Each user has a specific objective when using a software application, which generates a unique personal experience. This experience may differ significantly from that of another user, or even from the same user’s experience at a different moment.

It is fleeting, ephemeral, fluid. Software varies with the user. Sometimes this is because we use different devices, and sometimes because we use the same software for different things. For instance, recommendation algorithms give different results depending on the device being used or the prior searches made on it.

It is an event. Software can only be experienced when it is used; consequently, it is totally dependent on the context in which it is employed, and it occurs at a specific time. Imagine that we have a chatbot installed on our computer. Even when we are not using it, the chatbot is on our computer, but we can only experience it when the computer is on, the program is open, and we are interacting with it.

It is experienced by everyone. Nowadays, you do not need to be an expert to use software, even if you have to read a manual to understand the first steps. From young to old, from beginner to advanced user, everyone can experience software.

The main characteristics of source code are the following:

It is structured text. The source code contains all the instructions that a computer needs to process in order to carry out the specific task for which it has been designed. Moreover, it is a text without ambiguities (otherwise the software will not work properly), and everything must be explicit, not only for the compiler but also in the comments of the programmer, who must comment the code to make it as interpretable as possible for any other programmer.

It is fixed, permanent, precise. The same source code can be executed on different computers or devices, and the device does not change the code. In addition, it can be duplicated indefinitely and exactly, without any loss, and cheaply, since it is copied like any other fragment of text.

It is an (immaterial) object. The source code can be preserved either in an analogue format (the source code of a piece of software can be printed, although in practice it is not) or in a digital format (the source code can be saved as a lightweight plain-text file). Moreover, it does not require special infrastructure or laboratories to be preserved for the future. Finally, it is very easy to keep track of any changes made to the source code, since an old version can easily be duplicated and the changes incorporated into a new version.

It is produced by experts. Although anyone can write source code, producing software that works requires certain knowledge and skills. We can therefore say that producing functional source code requires an “expert” in a broad sense: someone who knows a programming language to a certain level. In addition, if we look at the production of source code as a practice, it also includes assumptions, organizational structures, priorities, etc. The programming language used and the programmer’s comments give us a lot of information about the moment when the source code was produced.

Given the dual nature of AI (as with any software) and the important role of phenomenal experience, capturing the memories of a group using AI software is essential to understanding its history and how collective memory can be studied. Nevertheless, recollections, regardless of the facts, can only be captured indirectly. In light of this, we propose three empirically grounded approaches to the study of AI software that can provide evidence of how different groups remember AI.

As we said, collective memory can be defined as “a form of memory shared by a group and central to the social identity of members of the group” (Roediger & Abel, 2015, p. 359). From a methodological perspective, research in this discipline has been primarily qualitative and humanistic (Roediger & Abel, 2015), although some research focusing on empirical methods has emerged in recent years. In this proposal, we assume that the qualitative study of collective memory can be enriched by empirical research along three lines (Roediger & Abel, 2015): (i) collective memory as a body of knowledge; (ii) collective memory as an attribute; and (iii) collective memory as a process.

ECMAI as a Body of Knowledge

Collective memory, understood as a body of knowledge, aims to encapsulate what is actually known by a group of people, encompassing the shared understanding of past events. The concept of body of knowledge considers what can actually be established about the past. In the context of AI, this phenomenon can be traced back to the source code. There are several reasons why source code is considered a more suitable candidate for constituting an ECMAI as a body of knowledge than software.

Firstly, all programmers possess a certain degree of familiarity with contemporary programming languages, in addition to an understanding of the methodologies employed in the past. A comparison of contemporary coding methodologies with historical ones can provide insights into how those methodologies are preserved in current practices.

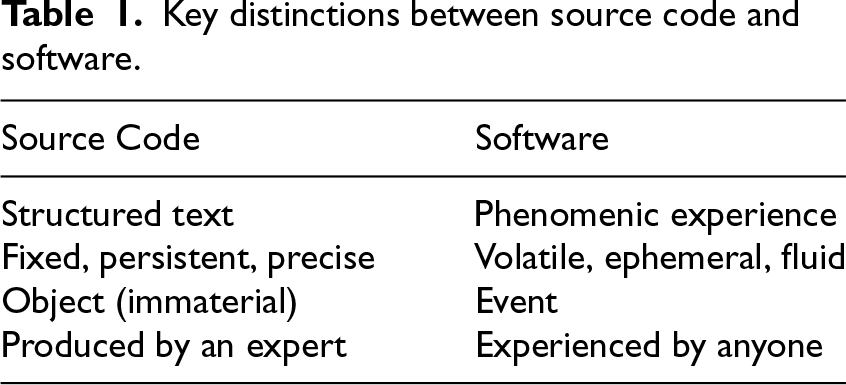

Secondly, the group that shares the experience is much easier to define, because it is possible to trace all the programmers who have contributed to the construction of any piece of software. In large-scale projects, different sub-groups can form; however, these sub-communities can be clearly identified and delineated. They can be categorized using different criteria, such as geography (code developed in one European country versus another) or age (senior versus junior programmers). Software repositories, such as GitHub or SourceForge, serve as highly reliable sources for studying how source code is being created now and, to some extent, what from previous coding is “remembered” in current practices. In addition, programmers usually rely on shared platforms as a medium for discussion and dissemination of knowledge. These platforms, such as GitHub, Hugging Face, or Stack Overflow, serve as collective spaces where programming techniques evolve and spread. Analytics provided by these platforms can track the rise and fall of interest in different AI frameworks, programming languages, and algorithms, providing a quantifiable measure of the collective attention of the programming community. For instance, Stack Overflow trends data (Figure 1) reveal both the dramatic rise of Python’s dominance in programming discussions and the overall decline in platform usage since around 2022, potentially reflecting the impact of LLMs on how programmers seek assistance and share knowledge.

Figure 1. Stack Overflow trends: most popular programming languages.

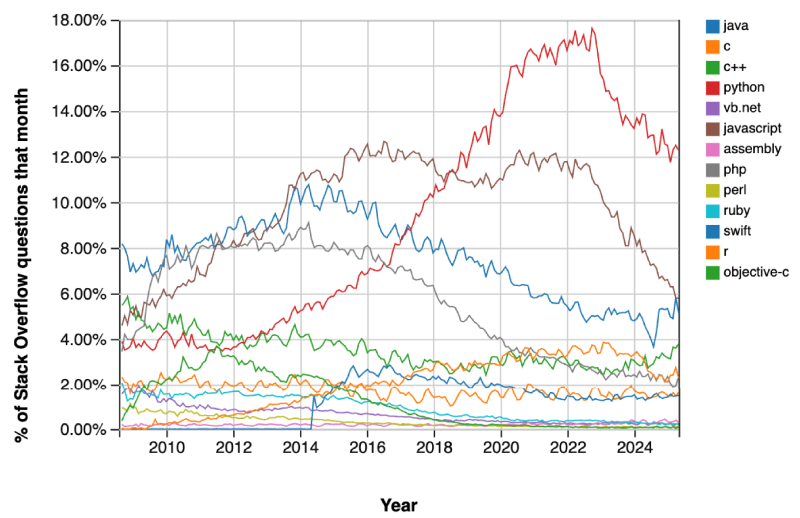

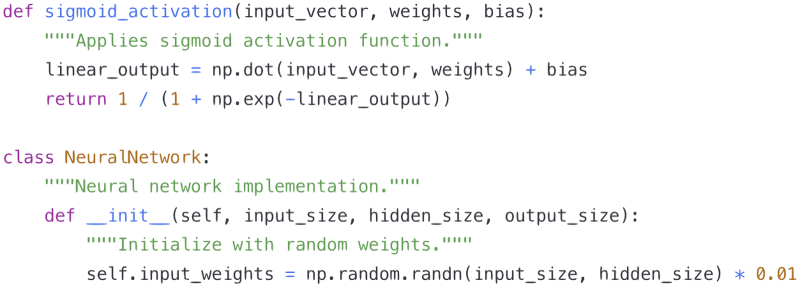

Thirdly, the code is stable. It is the same for all analysts who examine it, and it remains unaltered by analysis: two different researchers can study the same source code fragment without affecting the code itself. Moreover, this analysis is independent of the analyst’s personal experience of the code; rather, it is contingent on the methodology used in its study. As a piece of structured text, source code can be empirically analyzed using natural language processing techniques. Such analysis can reveal, for instance, the most common words in the comments depending on the country where the programmer is based. It can also show how different programmers label the variables they use, the different source codes used to perform the same task, the differences in writing style, and so on. Figures 2 and 3 illustrate this idea. They present two neural network implementations that, while functionally equivalent, exhibit contrasting coding practices. Static analysis using SonarQube yields quality scores of 2.1/10 and 9.3/10, respectively, highlighting differences in naming conventions (5 violations vs. 0) and documentation (commenting) coverage (0% vs. 95%).

Figure 2. Poor-quality code example.

Figure 3. Good-quality code example.

This exemplifies how empirical analysis can systematically capture cultural coding practices embedded within source code. Furthermore, Hassan and Sarhan (2024) survey different static code analysis tools that can be applied according to the FAIR principles (Findability, Accessibility, Interoperability, and Reusability). These tools provide empirical measures of other dimensions of scripts, such as cognitive complexity, style, or memory leaks. These data can facilitate the establishment of standardized metrics that enable objective comparison across different programming communities and time periods. Moreover, these metrics can reveal implicit knowledge embedded in code that might not be evident in other artifacts. For instance, they can demonstrate how security vulnerabilities are addressed in different regions or time frames, impacting system security and reliability (Ferrara et al., 2021).
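The comment and identifier analysis described above can be sketched in a few lines. This is only a minimal illustration under stated assumptions: the code under study is assumed to be Python, the functions `comment_words` and `naming_violations` and the `SAMPLE` snippet are hypothetical, and the naming rule is a simple PEP 8-style snake_case check rather than the rule set of SonarQube or of any tool surveyed by Hassan and Sarhan (2024).

```python
from __future__ import annotations

import ast
import io
import re
import tokenize
from collections import Counter

# Assumed convention: PEP 8-style snake_case (not SonarQube's actual rules).
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9_]*$")


def comment_words(source: str) -> Counter:
    """Count the lowercase words that appear in '#' comments."""
    words: Counter = Counter()
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type == tokenize.COMMENT:
            words.update(re.findall(r"[a-z]+", tok.string.lower()))
    return words


def assigned_names(source: str) -> list[str]:
    """Collect function names, positional argument names and assigned variables."""
    names: list[str] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.FunctionDef):
            names.append(node.name)
            names.extend(arg.arg for arg in node.args.args)
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Store):
            names.append(node.id)
    return names


def naming_violations(source: str) -> list[str]:
    """Return the sorted, de-duplicated names that break the assumed convention."""
    return sorted({n for n in assigned_names(source) if not SNAKE_CASE.match(n)})


# Hypothetical fragment, invented for illustration only.
SAMPLE = '''
# forward pass of a tiny network
def Fwd(X, hiddenW):
    h = X * hiddenW  # hidden activation
    return h
'''

if __name__ == "__main__":
    print(comment_words(SAMPLE).most_common(3))
    print(naming_violations(SAMPLE))
```

Run over repositories grouped by country or period, counts of this kind would give one comparable (if crude) indicator of commenting vocabulary and naming habits; a real study would need language-specific parsers and a documented rule set.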

Fourthly, the source code is produced at a particular moment in time and with the computing conditions available at that time. This can make the source code the perfect archaeological material, given its resistance to temporal shifts.

Fifthly, the accessibility of the source code itself is an important indicator for the study of ECMAI. Its presence or absence can be used as an indicator of multiple dynamics (commercial, political) within the AI community. The open availability of code and resources is indicative of values such as transparency, collaboration, and knowledge sharing. Conversely, the presence of closed code or the necessity to pay may indicate the priorities of competitive advantage. In this sense, even the absence of code creates a meaningful negative space for ECMAI, allowing researchers to shed light on what knowledge remains privileged or deliberately withheld from public scrutiny.

In conclusion, source code can be utilized as a reliable knowledge base to establish the collective memory of AI in Europe. This methodology can be applied to recall the relevant practices at the time the code was produced, as well as the specific set of events that transpired in the European history of AI.

ECMAI as an Attribute

Collective memory as an attribute seeks to capture narratives: the manner in which individuals recount events and the manner in which these events are evaluated. This process usually accentuates positive elements, such as heroism and mythology, while diminishing negative or contradictory aspects. In this regard, the study of software can be considered a more valuable source of collective memory than the study of source code. The question that arises is how software, understood as the personal experience of different members of a group, can be studied empirically.

In order to tackle this question, it is first necessary to examine the process by which attributes are added to a given AI system. This process bears a family resemblance (Wittgenstein, 1989) to the manner in which cultural heritage values are attributed to objects by a collective of individuals, the phenomenon of valorization (Gonzalez-Perez, 2018).

The term valorization is defined as “an abstract entity of discursive nature that adds cultural heritage values to other valuable entities through an interpretive process agreed upon within a group or discipline” (Gonzalez-Perez, 2018, pp. 220-221). This definition, drawn from the domain of conceptual modeling for heritage studies, comprises five characteristics that are salient for this research: (1) not every type of entity can receive a valorization; (2) a valorization assigns a cultural (heritage) value; (3) it has a discursive nature, so it must be produced in natural language in a specific context; (4) it is the result of an interpretive process; and (5) it is the result of agreement within a group or discipline.

As previously stated in Section 2, AI has a dual nature, functioning as both source code and software. As a preliminary starting point, we can assume that both perspectives can be considered valuable entities by a group of people. This is due to the fact that source code is a structured text created by experts and the software is a phenomenal experience that anyone can have (both satisfy characteristic 1).

Nevertheless, characteristic 2 appears to be inapplicable to source code, yet clearly applicable to software. For example, imagine the following two scenarios: (i) we have Freddy the robot (National Museums Scotland, n.d.) but cannot access the source code because the computers’ screens are broken; however, we have the user manual and can interact with the robot; (ii) the source code is known but we cannot run it because no modern computer can compile it, although some people can read the source code and understand it. In scenario (i), the robot is accessible to anyone who understands the manual and is able to interact with it. As a result, the user has a phenomenal experience of the software, and this can be valorized (good/bad, historically relevant or not, etc.). In scenario (ii), the source code is unintelligible to most people because only a few experts can understand it, and this understanding cannot generate the phenomenal experience of actually interacting with Freddy. The source code can certainly be endowed with cultural value, but only as a piece of structured text; that value cannot be given to the software, because no one can experience it.

Characteristic 3 can only be applied to software, given that it determines the manner in which valorization can be produced, namely through discourse. Nowadays, thanks to the Internet, it is very easy to identify a group of people or a community that collectively articulates its valorizations in the form of opinions concerning a particular piece of software. For example, on the Trustpilot website, a platform that allows users to share their experiences with various products, the DeepL service has received 377 user reviews of the software and the company itself. This fulfills the conditions of having a well-defined group of people who, through an interpretive process, create a value for the software. They can interact with each other in natural language, and a global rating for the item under review can be seen (in the form of a five-star ranking), which, in a way, sums up the agreement of this group. These comments can be empirically analyzed using NLP techniques, as is done today to analyze political opinions (Bassi et al., 2024) or disinformation (Bassi et al., 2024).
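As a rough indication of what such an analysis of user comments might look like, the following sketch scores short reviews with a tiny polarity lexicon. Everything in it is an assumption made for illustration: the word lists, the `polarity` function, and the three example reviews are invented and are not Trustpilot data, and a real study would rely on a validated sentiment model rather than a hand-written lexicon.

```python
import re
from statistics import mean

# Hypothetical mini-lexicon, invented for this illustration.
POSITIVE = {"accurate", "fast", "great", "reliable"}
NEGATIVE = {"slow", "wrong", "expensive", "poor"}


def polarity(text: str) -> float:
    """Return a score in [-1, 1]: +1 if only positive cues, -1 if only negative."""
    tokens = re.findall(r"[a-z']+", text.lower())
    pos = sum(token in POSITIVE for token in tokens)
    neg = sum(token in NEGATIVE for token in tokens)
    total = pos + neg
    return 0.0 if total == 0 else (pos - neg) / total


# Invented reviews standing in for a corpus of user opinions.
REVIEWS = [
    "Great and accurate translations",
    "Slow support and expensive plans",
    "Fast but sometimes wrong",
]

if __name__ == "__main__":
    scores = [polarity(review) for review in REVIEWS]
    print(scores, mean(scores))
```

Aggregating such scores per community or per period would give a first, coarse view of how a group valorizes a given piece of software; the star rating mentioned above plays a similar summarizing role.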

In examining discussions of European AI developments, we need to consider what Marres calls the “inherent ambiguity of the empirical object” of online research. As she notes, “digital media technologies have a significant impact on the staging of controversies in online settings” (Marres, 2015, p. 657). This suggests that digital platforms do not simply document AI controversies, but actively shape how they unfold. Rather than perceiving this as a methodological problem to be eliminated, we should adopt what she calls an “affirmative approach” that recognizes how digital technologies participate in the very process of AI memory formation.

Characteristics 4 and 5 are more applicable to software valorizations than to source code. In order to analyze both of them, we will focus on the process of valorization of scientific publications rather than on user experiences. A scientific publication can be understood as the process by which authors assign a specific value to their contribution, a value which is recognized by the community through the peer review process. This process produces a discourse, a text in natural language with a particular communicative intention and goal (characteristic 3). The discourse comprises an interpretation of the novel contribution presented in a scientific publication and, in order to be accepted, it requires the endorsement of several people, who either ratify or reject the valorization presented in the publication.

In conclusion, peer-reviewed scientific publications (comprising conference papers, books, book chapters, etc.) constitute a reliable source for analyzing community valorizations of specific AI systems, and thus for studying collective memory. For instance, the Proceedings of the First Workshop on Artificial Intelligence in Europe (WHAI) (Cortés et al., 2024) is a good example, since it includes several papers dedicated to the analysis of the European contribution to the development of AI. Empirical analysis of these corpora is also possible, with relevant techniques including sentiment analysis, topic analysis, and discourse analysis (Gonzalez-Perez et al., 2024). These techniques help us understand the diverse opinions and attributions given by the community in relation to specific events.

ECMAI as a Process

The final line of research understands collective memory as a process, which should capture the ongoing reframing and interpretation of the past. Consider, for example, the case of Alan Turing. His seminal contributions to the birth and first developments of AI, in addition to his work during the Second World War to break Nazi codes, have garnered significant acclaim. However, in 1952 he was convicted for homosexuality, which destroyed his scientific career, and from that moment on he stopped receiving the honors corresponding to his scientific achievements. It was not until 2009, 57 years later, that Gordon Brown, then Prime Minister of the United Kingdom, apologized on behalf of the British government for the treatment of Turing; in 2013, Queen Elizabeth II granted him a posthumous pardon under the Royal Prerogative of Mercy. This example shows how perceptions of Alan Turing in the United Kingdom have varied: the same country has remembered him as a hero, as a criminal, and as a victim of injustice at different moments.

Collective memory as a process is the most intriguing facet of memory, yet it is also the most challenging to study empirically. In the two previous approaches (collective memory as a body of knowledge and as an attribute), we defined a set of criteria that allow us to determine the sources to be analyzed and the techniques to be applied. In this case, however, defining these sources and analyzing them is considerably more complex. We nevertheless propose three main areas that can serve as sources for this research:

Controversy analysis. Reshaping the past means change, and the engine of change is disagreement. We propose that disagreements be understood as differential evaluations made by different groups of people about the same event or issue (Gonzalez-Perez, 2018). There are three main types of disagreement: (i) predication disagreement, which occurs when two or more people or groups of people assign divergent values to a specific attribute of an event. For instance, this could be the case when evaluating if an improvement of

Policy changes. Regulatory changes are another source of reinterpretation and reappraisal of a particular event. For example, the EU’s AI Act has generated controversy: some feel it does not go far enough, while others say it may harm European companies by imposing additional constraints.


These types of conflicts are usually captured in the news media and in sources of information other than peer-reviewed academic publications. However, they still constitute a relevant element in the construction of the collective memory that a particular group of people may have about AI.

Popular cultural manifestations and public remembrance. Literary works, cinema, and other artistic expressions serve as valuable repositories of changing societal perceptions of AI. These cultural artefacts not only reflect contemporary attitudes but also shape public understanding through their narratives and imagery. They often precede formal academic or policy discussions, capturing emerging concerns and hopes before they crystallize into institutional discourse, and thus provide a temporal dimension for understanding how the collective memory of AI is continually reconstructed across different periods and contexts.

In such cases, the sources for analyzing this facet of collective memory lie mainly outside the AI scientific community. They mainly involve other groups whose interests may clash with those of the AI community in various senses. The elements that constitute the corpora to be analyzed are the regulations about AI and the news published in different media and social networks. The objective is to construct this facet of collective memory by identifying the controversial positions on a particular AI topic, reconstructing how each position is built argumentatively (Gonzalez-Perez et al., 2024) and, on top of that, elaborating how memory is built dynamically. Ideally, these studies should be diachronic, that is, they should examine how the different positions have evolved over time; they can also be synchronic, that is, they can identify the opposing positions on a controversial issue at a given moment.
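The difference between the synchronic and diachronic readings can be made concrete with a small data sketch. It is only an illustration under assumptions: the `Position` record, the two functions, and the records themselves (generic groups and stances) are hypothetical and do not describe any real controversy.

```python
from collections import defaultdict
from typing import NamedTuple


class Position(NamedTuple):
    """One coded observation: which group held which stance in which year."""
    year: int
    group: str
    stance: str


def synchronic(records: list, year: int) -> dict:
    """Opposing positions at a given moment: stance -> set of groups holding it."""
    view = defaultdict(set)
    for record in records:
        if record.year == year:
            view[record.stance].add(record.group)
    return dict(view)


def diachronic(records: list, group: str) -> list:
    """Evolution of one group's stance over time, ordered by year."""
    return [(r.year, r.stance) for r in sorted(records) if r.group == group]


# Invented records, for illustration only.
RECORDS = [
    Position(2024, "group A", "supportive"),
    Position(2021, "group A", "critical"),
    Position(2021, "group B", "supportive"),
    Position(2024, "group B", "supportive"),
]

if __name__ == "__main__":
    print(synchronic(RECORDS, 2021))
    print(diachronic(RECORDS, "group A"))
```

In practice the records would come from manual or automated coding of regulations, news items, and social media posts; the point here is only that the same coded corpus supports both readings.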

Digital tools can facilitate the analysis of online AI controversies (Venturini, 2012; Venturini & Munk, 2021). On the methodological side, following the sociological tradition (Crawford, 2020; Latour, 2005), the systematic study of societal transformations around technological progress requires a more nuanced approach. Specifically, researchers need to map the discursive positions of stakeholders, paying attention to two critical dimensions: the demarcation between legitimate and illegitimate knowledge, and the mediating influence of the communication platforms themselves. This reflexive stance acknowledges that the media hosting controversies inherently shape both the debates and the interpretive frameworks of researchers, thus actively influencing the construction of collective memory processes around AI (Marres, 2015).

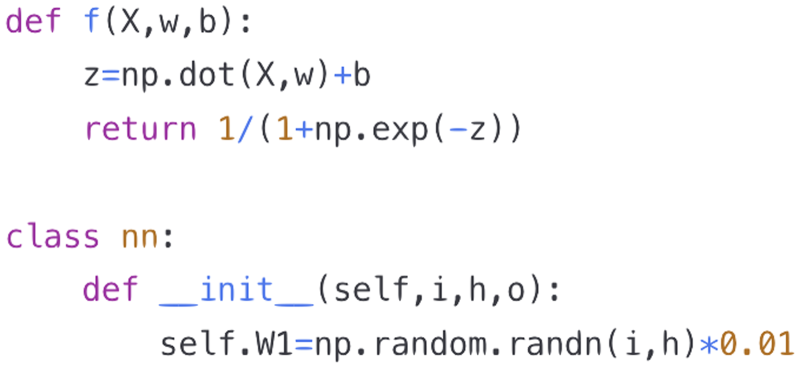

Towards a Methodological Pipeline for the Study of European Collective Memory

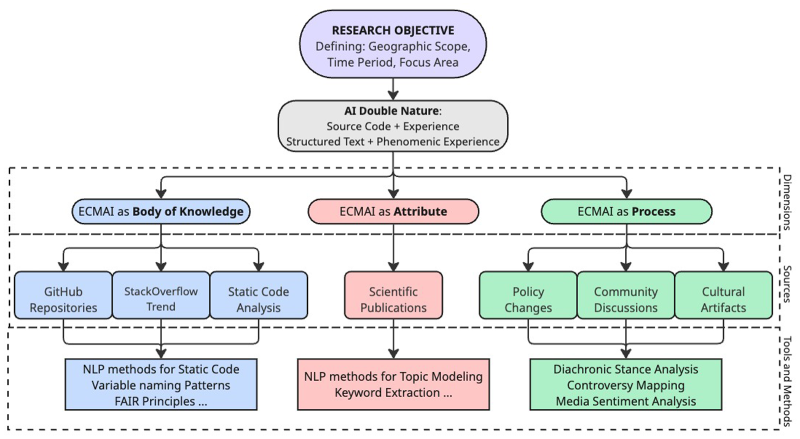

The last step is the integration of the three approaches described in previous sections. Figure 4 presents our methodological framework for understanding, collecting, and analyzing materials that constitute collective memory of AI. While our theoretical proposal could be applied to any context, following the pipeline structure reveals how focusing on European collective memory generates specific challenges and opportunities.

Figure 4. Pipeline for the creation of an ECMAI project.

The choice of studying European collective memory (i.e., the definition of the objective, the pipeline’s first step) sets the focus area, time period, and geographical scope (a very common practice in memory studies; Gavriely-Nuri, 2014; Klep, 2012; Taussig, 2017), creating unique methodological requirements due to Europe’s peculiarities.

For instance, Europe has pioneered a values-driven approach to AI development. GDPR (Parliament, 2016) and the AI Act (Parliament, 2024) reflect collective European memories of historical experiences with technological power, emphasizing privacy, human rights, and algorithmic accountability. This makes the study of ECMAI as a process (e.g., policy changes) more compelling than in other regions, which have focused primarily on market competition or technological advancement.

Additionally, given the heterogeneous nature of Europe (over 50 countries with distinct traditions and languages), studying the dual nature of AI (source code + software experience) requires addressing both linguistic challenges (for human and automated analysis alike) and cultural specificities. This calls for cultural/country clustering analysis and proportional sampling across member states to ensure that minority perspectives are not homogenized within dominant narratives.
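One way to operationalize proportional sampling while protecting small corpora is a proportional allocation with a per-country floor. The following is a minimal sketch under stated assumptions: the country codes, the corpus sizes, the function name `allocate_sample`, and the `minimum` parameter are all hypothetical and are not part of the pipeline itself.

```python
def allocate_sample(corpus_sizes, sample_size, minimum=1):
    """Split `sample_size` documents across strata (e.g., countries).

    Each stratum first receives a floor of `minimum` documents (capped by its
    size); the rest is shared in proportion to the documents still available,
    using the largest-remainder method so that the totals add up exactly.
    """
    base = {k: min(minimum, n) for k, n in corpus_sizes.items()}
    spare = {k: corpus_sizes[k] - base[k] for k in corpus_sizes}
    remaining = sample_size - sum(base.values())
    total_spare = sum(spare.values())
    if remaining < 0 or remaining > total_spare:
        raise ValueError("sample_size is incompatible with corpus sizes and minimum")

    allocation = dict(base)
    remainders = {}
    for k, cap in spare.items():
        # Integer arithmetic keeps the proportional quotas exact.
        whole, rest = divmod(remaining * cap, total_spare) if total_spare else (0, 0)
        allocation[k] += whole
        remainders[k] = rest
    # Hand out the documents lost to rounding, largest remainder first.
    leftover = sample_size - sum(allocation.values())
    for k in sorted(remainders, key=remainders.get, reverse=True)[:leftover]:
        allocation[k] += 1
    return allocation


# Hypothetical corpus sizes (documents per country), for illustration only.
sizes = {"DE": 600, "ES": 300, "EE": 90, "MT": 10}
plan = allocate_sample(sizes, sample_size=100, minimum=5)
```

With these illustrative numbers, a purely proportional split would give the smallest corpus a single document out of 100; the floor guarantees it at least five, at the cost of slightly under-representing the largest corpora. Whether such a floor is appropriate, and how large it should be, is a design decision for each study.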

Moreover, precisely because of Europe’s values-driven approach to AI, following the proposed pipeline faces data accessibility challenges from closed-source projects and legal constraints from GDPR. Although these can be mitigated by focusing on open-source initiatives or on proxy indicators derived from documentation, these European specificities constitute a serious challenge for researchers (Crockett et al., 2018).

It is worth emphasizing that the project of a ‘European collective memory’ does not aim to create a single narrative of Europe’s contribution to the development of AI. Rather, it aims to create a constellation of narratives that complement each other, forming a sort of network, about how Europeans remember the development of AI and what their practices in it were.

A potential pilot study for the European collective memory proposal is the study of the ALIA⁹ initiative in Spain. Its primary objective is to establish a public infrastructure of AI resources, with a particular emphasis on the development of open and transparent language models in all the official languages of Spain (Catalan, Basque, Galician and Spanish). The research objective is clearly defined (the geographical area of Spain, started in 2019, etc.) and it has all the elements that we define in our framework:

Body of knowledge: The development of source code that will be open source and publicly available for different natural languages, and developed by different teams. This makes the analysis of the code interesting in itself, as it enables the observation of the varied coding practices employed by the different teams, each of which may have a different mother tongue.

Attribute: The various projects constituting the ALIA initiative (AINA in Catalonia, ILENIA in Spain, Proxecto Nós in Galicia, etc.) are independent projects (although contributing to the same goal) and they show, for instance, different practices for the publication of their results.

Process: It entails the study of language, a pivotal identitarian element for a community, and an interdisciplinary collaboration between linguistics and computer science. This requires creating a common language that facilitates understanding between several disciplines and that must be reflected in the documentation of the project. Moreover, ALIA is guided by the Spanish Government, which articulates its policy and vision regarding the role of AI systems for language resources and the consideration of the diverse identities in Spain.

This pilot study could be complemented by an analogous study of OpenEuroLLM.¹⁰ The purpose of such a study would be to create a new narrative and, as a result, to obtain a comparison of two alternative paths for pursuing similar goals. This would enhance our understanding of how Europe contributes to the development of AI.

We have proposed the distinction between history and collective memory for the development of the study of the history of AI in Europe. While history seeks to document what actually happened at a specific moment in the past, collective memory aims to capture how a group of people remembers what happened. Therefore, history and collective memory are not competing disciplines but complementary ones. History aims to generate a unified and solid narrative about how things happened in the past, following a single path. Collective memory aims to generate multiple, mutually complementary narratives that bring together different perspectives on the same facts and show how those facts acquire a specific meaning and contribute to the identity of a community.

We propose a collective memory that is built empirically; that is, it is based on compiling and analyzing objective evidence about AI in three different lines: body of knowledge, attributes, and process. Collective memory as a body of knowledge is based on the analysis of AI source code because it was fixed at a particular time and can be studied from different perspectives to unpack memories. Collective memory as attributes analyzes AI as software, in terms of the valuations made by different communities and how they experience AI. Finally, collective memory as a process aims to capture how the remembrances of AI change over time, proposing to analyze discourse about AI outside the technological field, such as regulation, public controversies, cultural manifestations, or public remembrances.

However, we acknowledge that this presents several challenges. Firstly, it requires a multidisciplinary approach, involving collaboration among computer scientists, historians, philosophers, linguists, and discourse analysis researchers with the objective of developing this collective memory from a humanistic perspective. Secondly, the amount of documentation to be analyzed is vast and cannot be studied only qualitatively; it requires computational support and specific algorithms. Thirdly, multiple regulations might constrain access to the data needed to conduct this type of research. Consequently, these regulatory constraints must be taken into account when presenting a study on collective memory. Finally, AI is a domain that changes rapidly, making it challenging to identify which sources are relevant for analysis.

Despite these challenges, by synthesizing European historical studies of AI with studies of its collective memory, we will achieve a much deeper understanding of AI and its varieties across Europe. We have introduced a humanistic perspective and examined how social and cultural valuations of AI are transmitted from one generation to another. This approach goes beyond the traditional history of technology, which primarily assesses computational progress and often overlooks its social implications.

Footnotes

Acknowledgments

We thank the reviewers of this article for their suggestions and their lucid and accurate remarks.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the AEI (Spanish National Research Agency) through grants PID2020-114758RB-I00, MCIN/AEI/10.13039/501100011033 and PID2020-115482GB-I00, MCIN/AEI/10.13039/501100011033, by Xunta de Galicia “Consolidación e Estruturación” 2023 GPC GI-2046 – Episteme ED431B2023/24, and by the European Union’s Horizon Europe research and innovation program (EUHORIZON2021) under the Marie Skłodowska-Curie Grant No. 101073351 (https://cordis.europa.eu/project/id/101073351/es). Views and opinions expressed are however those of the author(s) only and do not necessarily reflect those of the European Union or the European Research Executive Agency (REA). Neither the European Union nor the granting authority can be held responsible for them. The authors have no relevant financial or non-financial interests to disclose.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.