Abstract

The verification problem in abstract argumentation consists of checking whether a set is acceptable under a given semantics in a given argumentation graph. Explaining why the answer is so is the challenge tackled by this paper. Visual explanations in the form of subgraphs of the initial argumentation framework are defined. These explanations are grouped into classes, allowing one to select the explanation that suits them best among the several offered possibilities. Results are provided on how to use the visual aspects of these explanations to support the acceptability of a set of arguments under a semantics. Computational aspects of specific explanations are also investigated.

Introduction

In the context of explainable artificial intelligence (XAI), abstract argumentation is receiving a growing interest as a formal tool to provide explanations. The term argumentative XAI has emerged, with a number of application domains, ranging from machine learning to decision (for instance, in medicine or security); see Vassiliades et al. (2021) and Guo et al. (2023) for an overview. Čyras et al. (2021) present the current approaches of argumentative XAI and their open challenges, and underline that explanations for the argumentative process itself are also necessary.

According to Dung (1995), the argumentation process relies on an abstract framework which takes the form of a directed graph, whose nodes are arguments and edges represent attacks between arguments. Semantics characterize the acceptability of arguments, which, in the case of Dung (1995), take the form of extensions, that is, of sets of arguments which are collectively acceptable according to the semantics. Explaining the acceptability status of an argument or of a set of arguments, that is, why, under a given semantics, they belong to at least one extension (credulous acceptance) or to every extension (skeptical acceptance), are the main explainability issues that have been addressed so far in this context.

The most common explanation approach consists of identifying set(s) of arguments which act as explanation(s), as in Fan and Toni (2015a), Borg and Bex (2021b), Borg and Bex (2021a), Ulbricht and Wallner (2021), Liao and van der Torre (2020), and Baumann and Ulbricht (2021). However, since the argumentative process of abstract argumentation already provides ways for selecting arguments, explaining this process by further selection of arguments (although different ones) may not be fully helpful. Indeed, an explanation must be viewed as an answer to a given user that could have potentially no expert knowledge in abstract argumentation; thus, the selection of more arguments for answering does not help such users, and we think that another way for defining and presenting explanations could be more significant. Moreover, this set of argument approaches does not highlight the attacks that are involved in the explanations.

Another explanation approach consists of presenting relevant subgraphs of the framework, as in Cocarascu et al. (2018), Saribatur et al. (2020), Niskanen and Järvisalo (2020), and Racharak and Tojo (2021). Such a visual approach is particularly of interest for human users, graphs having been shown to be helpful for humans to comply with argumentation reasoning principles (Vesic et al., 2022). This graph-based approach not only highlights arguments, but also attacks.

Besides the main argumentation acceptability issues, the verification problem

One of the first approaches that addressed this problem is Cocarascu et al. (2018): an explanation for a set

A limitation of Besnard et al. (2022a) is, however, that, for each semantic principle, a single explanation subgraph is defined. It is more realistic to consider classes (sets) of explanations. Indeed, such classes would be particularly meaningful and useful when several agents, human or artificial, are involved in the explanation of the same problem: classes offer a variety of answers, which all follow the same schema, but which may differ on their exact content. Any agent can choose or can be presented with an explanation that suits them best, and any agent can understand an explanation given by another agent, different from theirs. 2 The need for classes of explanations is also supported by the study of Miller (2019) in social sciences, which highlights that explanations are selected by humans among a set of possible explanations.

In the context of formal argumentation, only a few related works can be found concerning this notion of classes of explanation. Such classes have already been proposed in Baumann and Ulbricht (2021) for the problem of credulous acceptance of an argument, where the authors consider explanation schemes made of several elements, one of them being fixed, the other ones varying from one explanation to another. Another related work is Borg and Bex (2021b), in which the authors define a parametric computation of explanations. As such, it is more the computation processes that are grouped in classes, rather than the explanations (i.e., results of the processes) themselves.

This paper aims at defining classes of explanations following a generic methodology, applied to classical semantics (conflict-free, admissible, complete, and stable), by building up on the approach of Besnard et al. (2022a). Additional properties (emptiness, uniqueness, maximality, minimality, complexity, and computation) of explanations on these new classes will be defined and investigated.

Section 2 recalls background notions relative to abstract argumentation, graph theory, and presents the explanation approach defined in Besnard et al. (2022a). Classes of explanations are defined in Section 3, Section 4 studies their properties; Section 5 shows how to compute their maximal and minimal explanations. Section 6 describes some related works and Section 7 concludes and presents some potential future works. Proofs are given in Supplement A.

Note that this work originates from Duchatelle (2023), that explanations for the defense principle have been addressed in Doutre et al. (2023a), and that this contribution is an extended version of Doutre et al. (2023b). In particular, we now propose a generalized definition for some classes of explanation, while showing that our previous results are preserved. We also bring new results for all our classes of explanations, which place boundaries on the spatial complexity of minimal explanations. Moreover, unlike Doutre et al. (2023a) and Doutre et al. (2023b), the proofs of all the results are presented.

Background Notions

Abstract Argumentation and Graph Theory

We begin with recalling basic notions on abstract argumentation as they have been defined by Dung (1995).

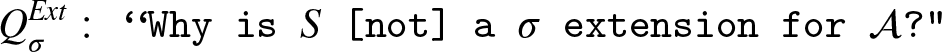

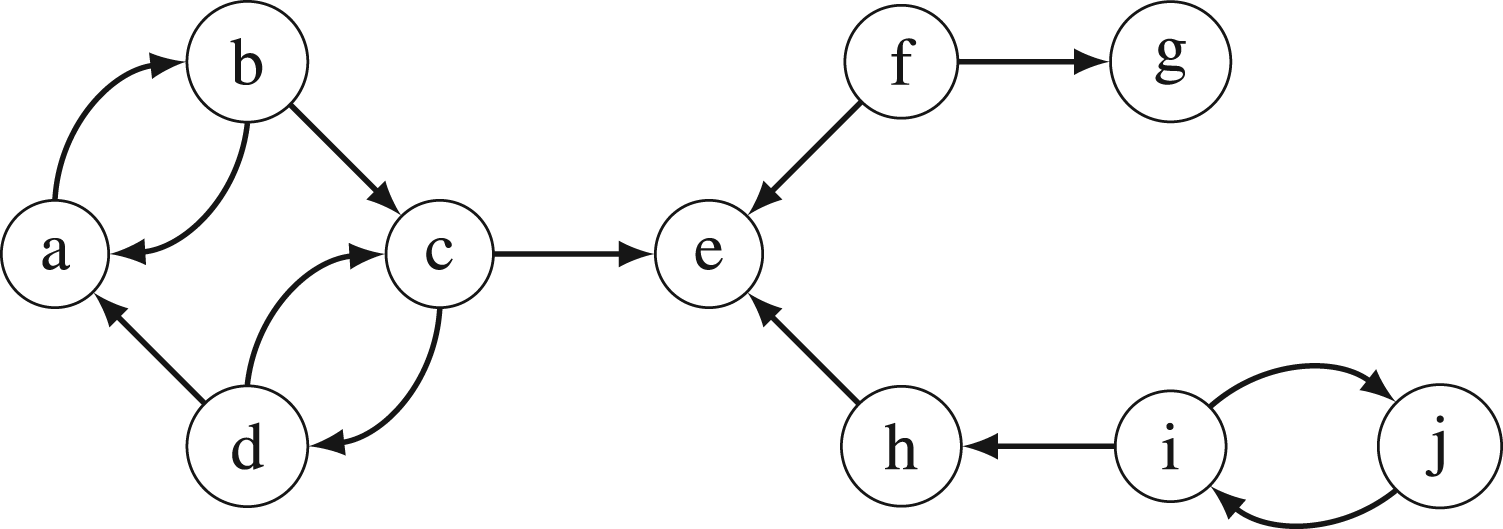

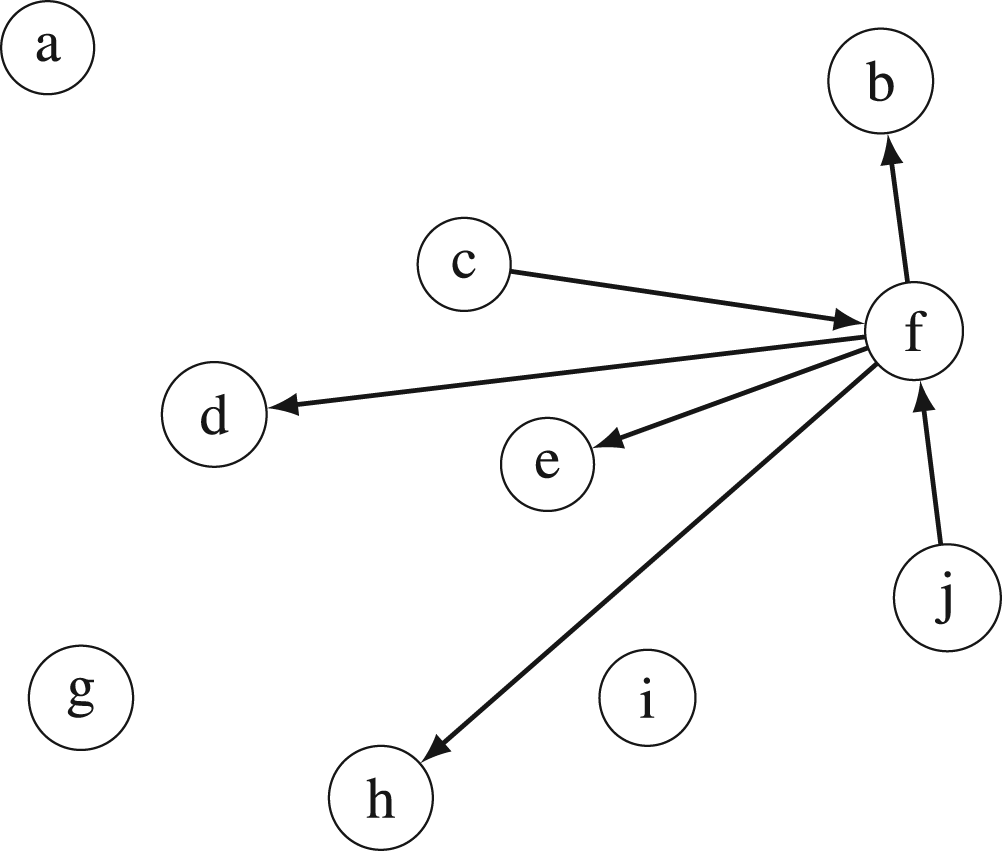

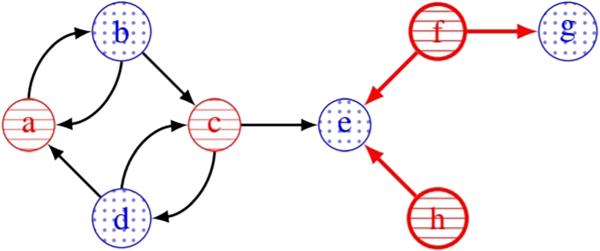

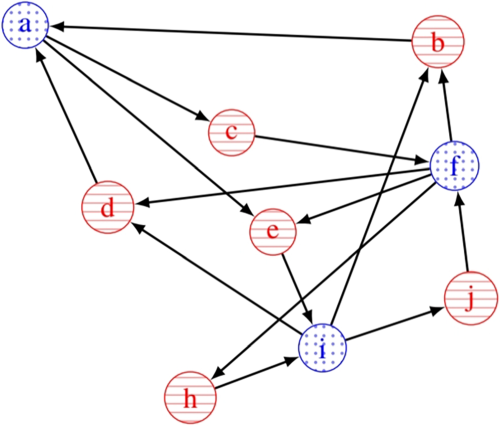

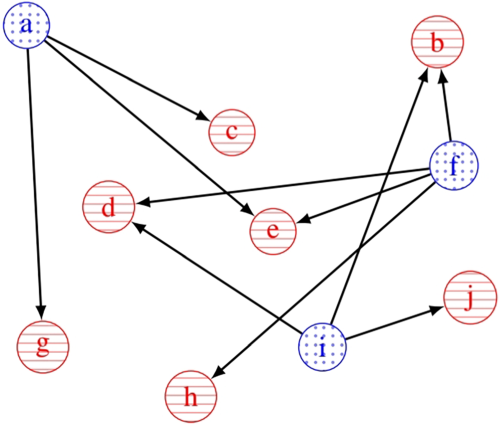

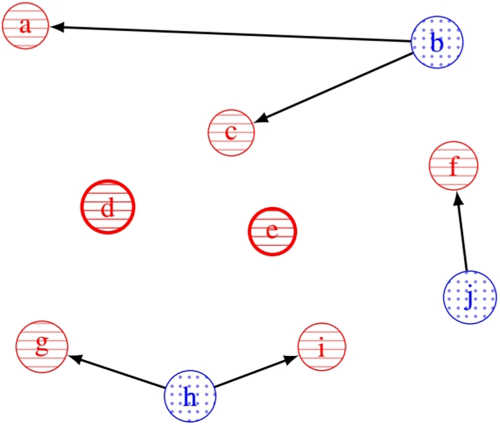

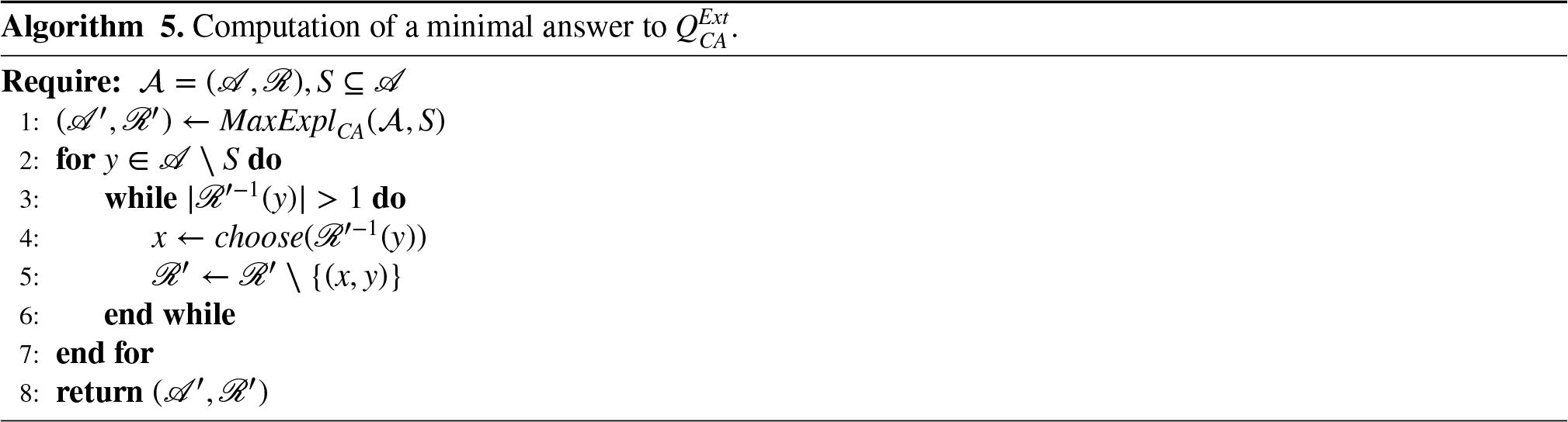

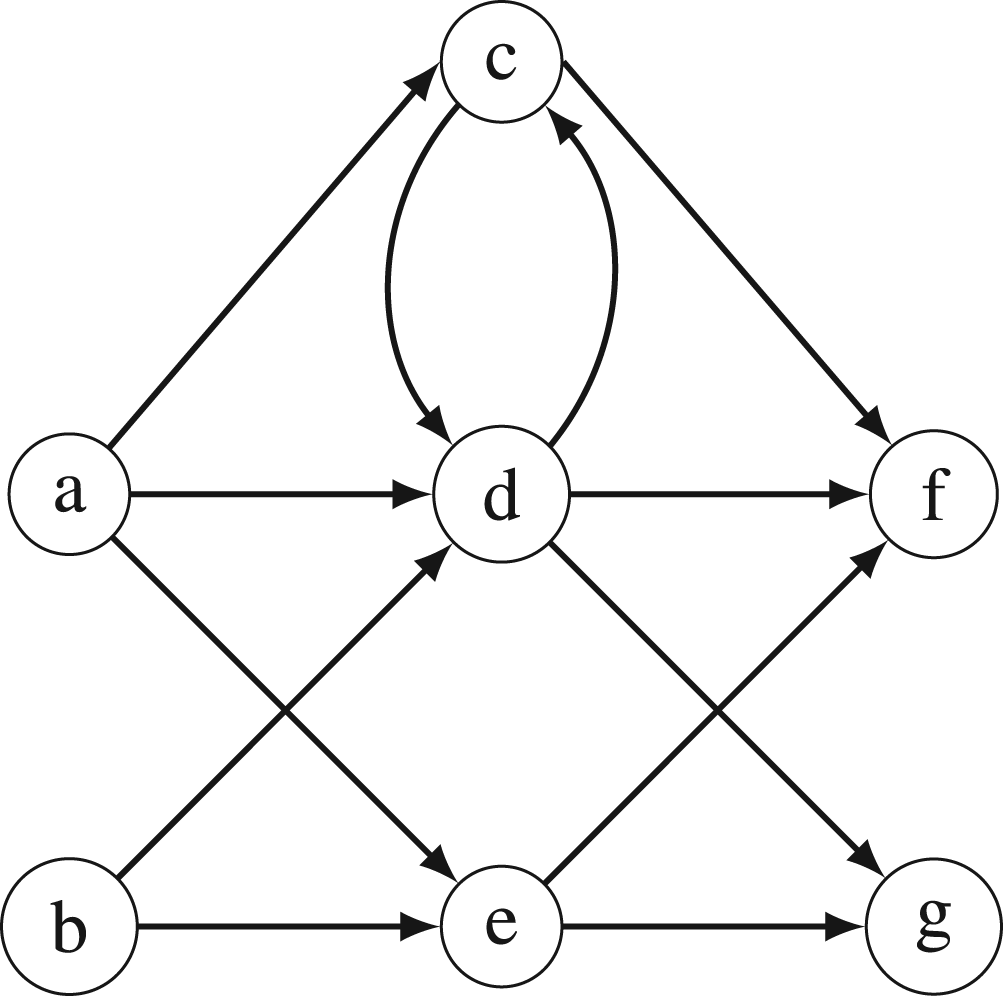

Figures 1 and

2

give two examples of AFs.

A First Example of an Argumentation Framework (Arguments are Represented by Nodes and Attacks by Edges). A Second Example of an Argumentation Framework.

For an AF The elements of For

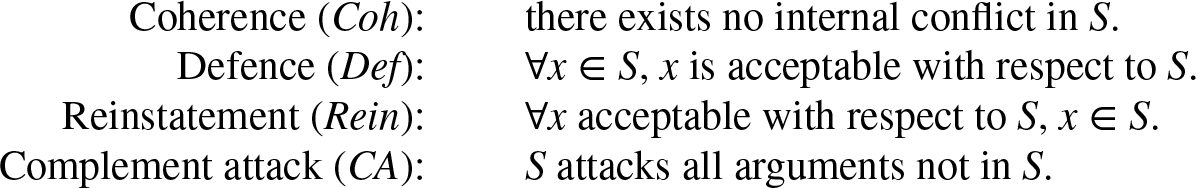

The main asset of Dung’s approach is the definition of semantics using some basic properties in order to define sets of acceptable arguments, as follows.

Let

Let conflict-free iff there are no admissible iff complete iff stable iff

The verification problem for the four semantics given in Definition 3 can be solved in polynomial time, as indicated by Dvorák and Dunne (2018).

Considering the AF of Figure 2, an instance of the verification problem could be: “

Since an AF can be represented using directed graphs, we also need to recall some basic notions of graph theory.

Let

Let

A subgraph

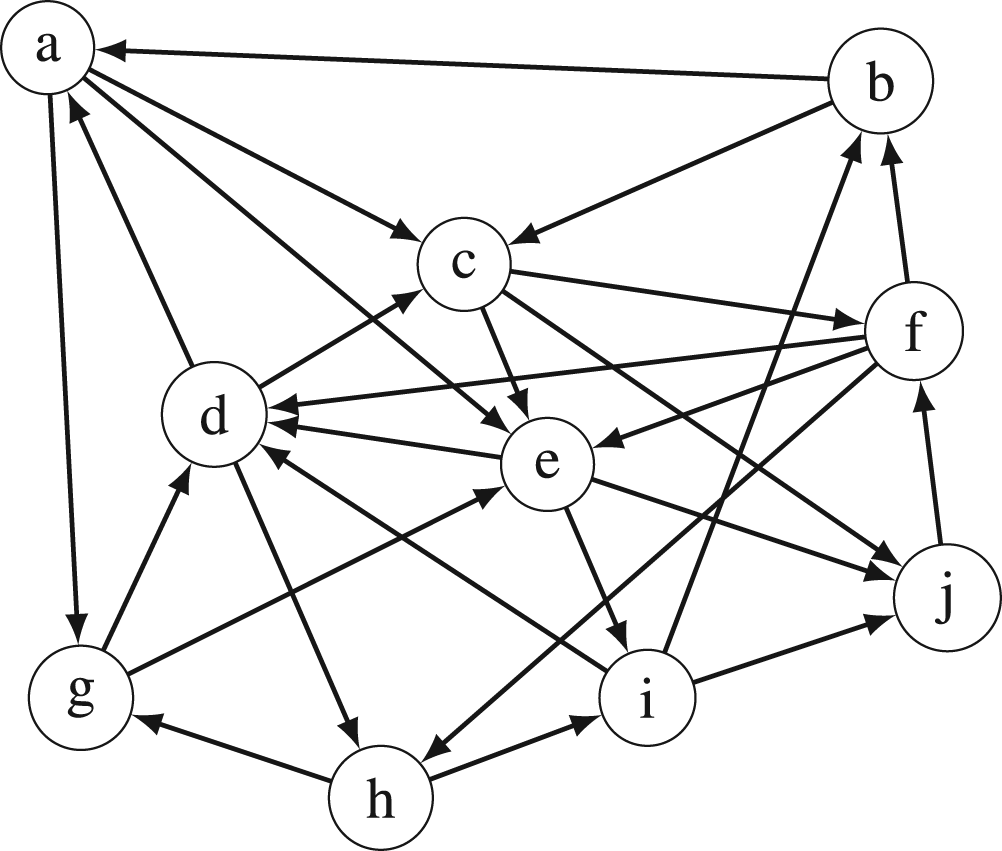

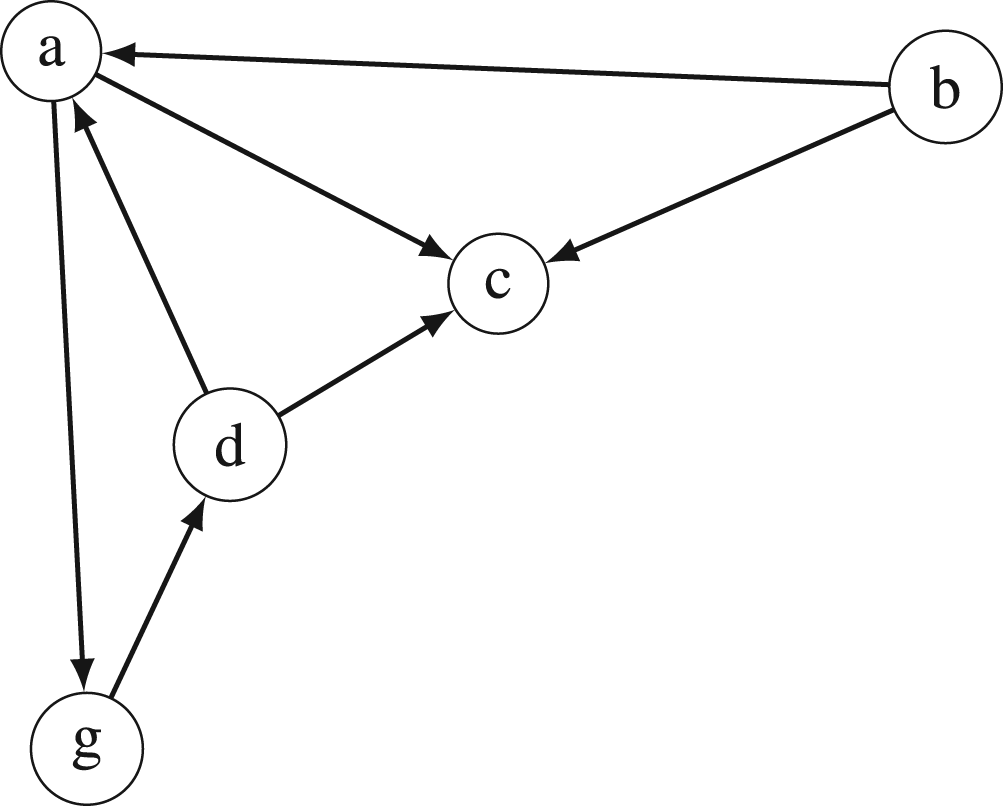

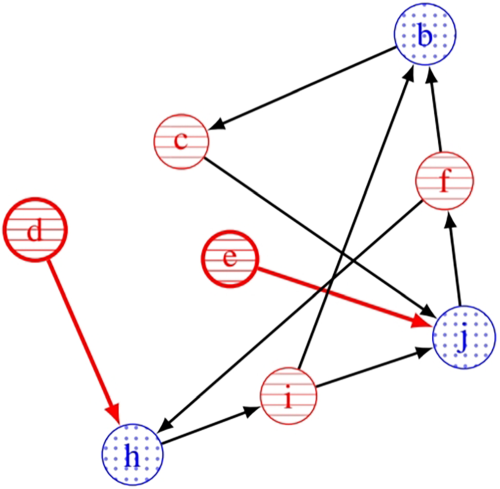

Figure 3 depicts the induced subgraph of Figure 2 by the set An Induced Subgraph of the Argumentation Framework (AF) in Figure 2. A Spanning Subgraph of the Argumentation Framework (AF) in Figure 2.

Induced and spanning subgraphs are examples of ways to compute a graph from another single graph. Another operation producing a new graph from other ones is the union which represents the aggregation of the information contained in the two graphs:

Let

Let us consider also particular kinds of graphs, bipartite graphs, whose set of vertices can be split into two disjoint sets and in which every arc connects a vertex of one part to a vertex of the other part:

Let

Some important functions can be defined over graphs.

Let The successor function of Let Let

Considering an AF, the successor (respectively, predecessor) function represents the arguments that are attacked by (respectively, are the attackers of) some argument(s). An AF being usually denoted by

We then recall some notions on vertices having a particular status in a graph.

Let

Thus, sources (respectively, sinks) are vertices that may only be origins (respectively, endpoints) of arcs. Isolated vertices are those that are connected to no other vertices.

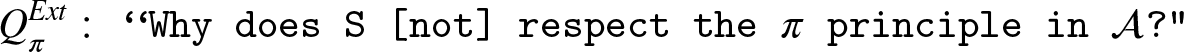

In the graph of Figure 4,

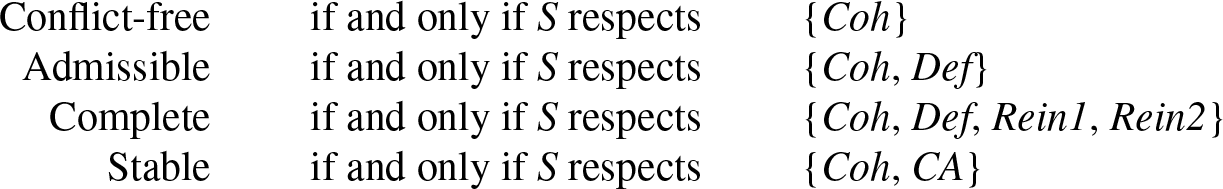

We recall the main definitions of what explanations are in Besnard et al. (2022a), but only for those answering the questions about semantics results in abstract argumentation. These questions are defined as follows: let

In order to answer these questions, and hence to provide explanations, Besnard et al. (2022a) use the decomposition of semantics into principles. The idea is to identify some properties that can be used to provide a modular characterization of semantics. We refer the reader to Doutre and Mailly (2016) for further details. Given a set

It is worth noting that the reinstatement principle can be split into two subprinciples. Indeed, to decide whether a set

The following has been proven in Doutre and Mailly (2016).

The Explanation

Let

With this result, a straightforward answer arises for

Besnard et al. (2022a) define visual answers to these questions. These answers take the form of a graph. This allows for the answers to be drawn, as well as to study their visual (i.e., structural) properties. More precisely, as AFs are graphs themselves, the answers given are subgraphs of an AF. Moreover, the interpretation of these subgraphs can be done using a “checking procedure” in order to explicitly identify if the given subset satisfies or not the concerned principle.

Let

Answering this question comes down to answering the following questions: 1. “ 2. “

Regarding question

Regarding now the defense principle of question 2, the explanation graph of Besnard et al. (2022a) shows the elements of The Explanation

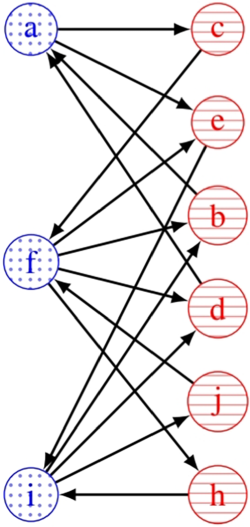

The graphs of Figures 5 and

6

together constitute an explanation for why

The definitions of the explanation graphs for the reinstatement and the complement attack principles follow a similar procedure, which will not be detailed here, but which can be found in Besnard et al. (2022a).

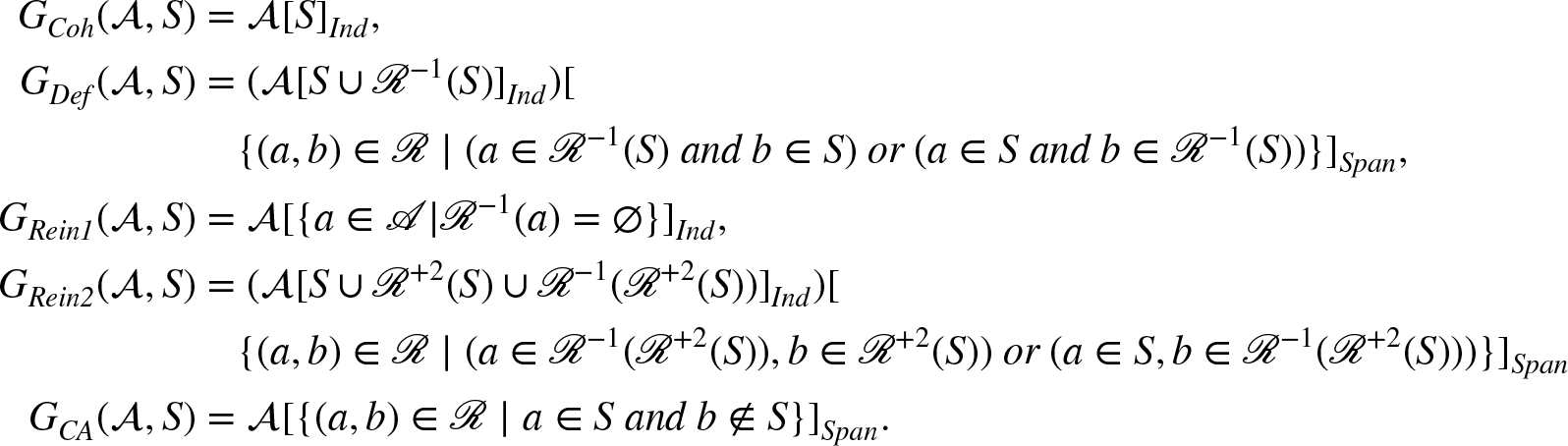

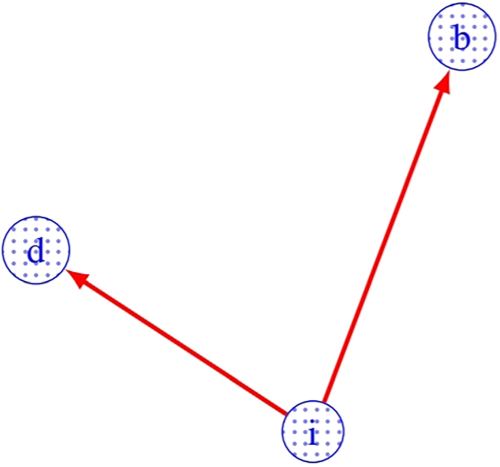

The formal definition of the explanation graph for each principle in Besnard et al. (2022a) is as follows:

Let

The checking procedures which allow one to interpret these subgraphs in order to explicitly identify if the given subset satisfies or not the concerned principle are defined as follows by Besnard et al. (2022a):

In the following, considering an AF

For each principle

Legend of the colors used in the explanation graphs (wrt a given input set and a given principle):

The arguments of the set that is given as input in the question will be in blue with dotted background

. Arguments that are displayed because they take the role of attackers (of a given set, wrt the given principle), as well as problematic attacks, will be in red with horizontal lines

. Arguments that are defended or acceptable with respect to the input set, but not part of it, will be in green with vertical lines

. Other arguments and attacks of the explanation subgraph will appear in black without background. Elements of the explanation graph that make the conformity check of the given principle fail, will be displayed as

Note that Section 4.2 will show that these explanation graphs correspond to the maximal explanations of the classes which will be defined in Section 3.

We are interested in refining the notion of explanation proposed in Besnard et al. (2022a) and recalled in Section 2.2. Indeed, considering the last example (see Figure 6) leads to the following remark: for explaining the respect of the Defence principle, it seems useless to consider the two defenders of

Hence, as briefly presented in Doutre et al. (2023b), for each principle

An explanation to a question

Note that, in each following section, we first consider the explanations for some given principles, then the explanation for the semantics built using these principles. In the case when the semantics is composed of only one principle, we give two distinct definitions: one for the principle and one for the semantics. Indeed, we want to be the most general as possible and so conserve the distinction between the principles and the semantics.

Explanations for Coherence

Recall that a set of arguments respects the coherence principle if and only if there are no arcs between its elements. Hence, if we are to show why a set of arguments is coherent, we must show a part of the graph that highlights the absence of arcs within this set.

The idea here is to have a subgraph in which we make sure that if there is at least one arc between two arguments of the questioned set, then such an arc appears in it. Indeed, the presence of at least one such arc is enough to conclude that the set is not coherent. If no such arc exists, then none is displayed, and we can conclude that the set is coherent.

In this situation, we may only focus on the arguments of the questioned set and forgo all the others.

Even if only one arc between two arguments of the set is enough, there may very well be several of them. In this case, we may choose to keep only one, all of them, or even any number in between. All these choices result in valid explanations, since we can infer from them that the set is not coherent. It is, however, crucial that at least one appears so that we do not make a false conclusion.

All these considerations give rise to the following definition.

Let If

Consider the AF of Figure 2 and the questions: “ “

Figures 7 and

8

show the answers for the first and second question, respectively.

Explanation on Why Explanation on Why

Once an answer to

Let

This theorem indeed states that, in order to know whether a set

Let us consider now the conflict-free semantics. Recall that this semantics is only made of the coherence principle. As such, we can define an answer to

Let

Figures 7 and

8

both display answers to

Explanations for Defense

Recall that a set of arguments respects the defense principle if and only if all its arguments are acceptable with respect to it. In particular, this means that for every argument that attacks one in the set, there is an argument in the set that attacks this attacker. Therefore, if we are to show why a set of arguments only contains arguments that are acceptable with respect to it, we must exhibit a part of the graph highlighting that all the attackers of this set are attacked in return.

As presented in Doutre et al. (2023a) and Doutre et al. (2023b), the idea here is to have a subgraph in which we make sure that, for every attacker of the set, if there is at least one arc from an argument of the set to that attacker, then such an arc appears in the subgraph. Indeed, the presence of such an arc is enough to conclude that the attacker we consider is being taken care of. Hence, the need to do so for every attacker. If no such arc exists, then none is displayed, and we can conclude that there is a hole in the defense of the set, and so that it does not respect the defense principle. Additionally, we should make sure that, for every attacker, an arc from that attacker to an argument of the set appears in the subgraph, to show that they are indeed attackers.

In this situation, we may only focus on the arguments of the questioned set and its attackers, and so forgo all the others. Additionally, we are only interested in attacks that go from an element of

We can make a similar observation as in the case of explanations for the coherence principle, concerning the arcs from the questioned set to its attackers or the arcs from the attackers to the questioned set. For each attacker, even if only one in both directions is enough, there may very well be several of them. Just as in the case of explanations for the coherence principle, we may in this situation choose how many we keep between one and all of them, all these choices resulting in valid explanations. Yet again, it is crucial that at least one appears, so that we do not make a false conclusion.

All these considerations give rise to the following definition.

Let

Consider the AF of Figure 2 and the questions:

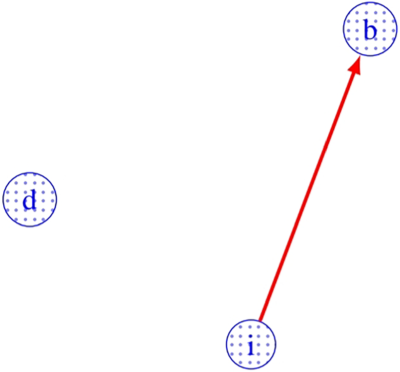

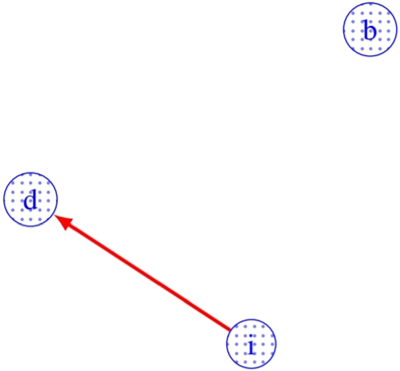

Figures 9 and

10

show the answers for the first and second questions, respectively.

Explanation on Why Explanation on Why

Once an answer to

Let

This theorem states that, in order to know whether a conflict-free set

In addition to Theorem 2, we have an additional result concerning the visual behavior of explanations for defense. Recall that the idea is to consider a set, its attackers, and the attacks that go from one to the other and vice versa. Hence, we can feel that there is an inherent separation between the two groups of arguments in the explanation. The following proposition formalizes this intuition.

Let

The previous proposition is illustrated in Figure 11, which is Figure 9 reorganized for highlighting the partition of arguments.

Explanation on Why

As such, if an explanation for defense is computed on a set that is conflict-free, it is possible to display it so that its vertices are separated into two groups, with the arcs always going from one group to the other group. Alternatively, if one computes an explanation for defense for a set and if it does not result in a bipartite graph with the set as one of its parts, we may conclude that the set is not conflict-free.

Let us consider now the admissible semantics. Recall that this semantics is made of the coherence and the defense principles. As such, we can define an answer to

Let

Figures 7 and

9

constitute an answer to

Recall that a set of arguments respects the reinstatement principle if and only if all the arguments that are acceptable with respect to it belong to it. In particular, this means that all the arguments for which the set attacks all the attackers should belong to it. However, in virtue of Definition 2, this also means that all the arguments for which there exists no attacker should belong to the set as well. The latter consideration corresponds to the

Explanation for

In the case of

In this situation, we may only focus on the arguments that are not attacked and forgo all the others. Additionally, since the arguments displayed are unattacked, there cannot exist any interaction between them; thus, we will always have an empty set of arcs.

We can make a similar observation as in the previous cases, concerning the unattacked arguments that are not in the set. Even if only one is enough, there may very well be several of them. Just as in the previous cases, we may in this situation choose how many we keep between one and all of them, all these choices resulting in valid explanations. Yet again, it is crucial that at least one appears, so that we do not make a false conclusion.

All these considerations give rise to the following definition.

Let If

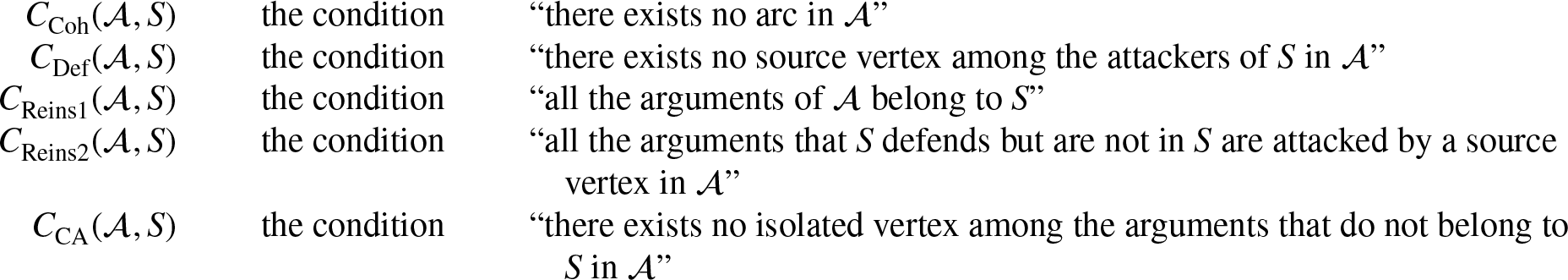

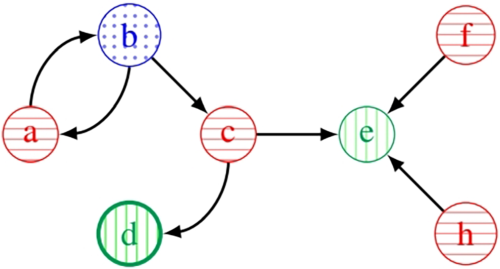

Consider the AF of Figure 1 and the question Explanation on Why

Once an answer to

In the case of

In this situation, we may focus on the arguments of the questioned set, the arguments that it defends, and the attackers of those arguments. Additionally, we are only interested in attacks from the set to the attackers (to know whether or not the defended arguments are acceptable) and from the attackers to the defended arguments (to show that they are indeed attackers).

We can make a similar observation as in the previous cases, concerning the attacks from the set to the attackers of the arguments it defends, or the arcs from the attackers of the arguments it defends to those arguments. For each attacker, even if only one in both directions is enough, there may very well be several of them. Just as in the previous cases, we may in this situation choose how many we keep between one and all of them, all these choices resulting in valid explanations. Yet again, it is crucial that at least one appears, so that we do not make a false conclusion.

All these considerations give rise to the following definition.

Let

Consider the AF of Figure 1 and the question Explanation on why

Once an answer to

The following theorem formalizes the correctness of both conformity checks associated with

Let

This theorem states that by computing

Notice that, on the contrary of previous results, this is not an equivalence result. We also have a result that reverses the implication, but it does not rely on the same conditions.

Let

This theorem states that if we compute

From Theorems 3 and 4 follows the next corollary, which shows an equivalence result for the reinstatement principle (due to the additional constraint about the conflict-freeness of

Let

Let us consider now the complete semantics. Recall that this semantics is made of the coherence, defense, and reinstatement principles, the last one being divided into the

Let

Figures 12 to

15

constitute an answer to Explanation on Why Explanation on Why

Explanations for Complement Attack

Recall that a set of arguments respects the complement attack principle if and only if all the arguments that do not belong to the set are attacked by an argument of the set. Consequently, if we are to show why a set of arguments attacks its complement, we must show a part of the graph highlighting the attacks from this set to its complement.

The idea here is to have a subgraph in which we make sure that, for every argument that is not in the considered set, if there is at least one arc from an argument of the set to that outsider, then such an arc appears in the subgraph. Indeed, the presence of such an arc is enough to conclude that the outsider we consider is being dealt with. As a consequence, it is needed to do so for every argument that is not in the set. If no such arc exists, none is displayed, and we can conclude that some outsider is being left aside, so that the set does not respect the complement attack principle.

In this situation, it seems best to focus both on arguments of the questioned set and the arguments that do not belong to it, that is to say, all the arguments. However, we are only interested in the arcs that go from the arguments of the set to those that are not in it, and so we forgo all the others.

Similarly to the previous cases, we can observe that, for each argument that is not in the set, even though only one arc from an argument of the set is enough, there may be several of them. In such a case, we may again choose how many arcs we keep, between one and all of them, all these choices resulting in valid explanations. Once again, it is crucial that at least one appears, so that we do not make a false conclusion.

All these considerations give rise to the following definition.

Let

Consider the AF of Figure 2 and the questions:

Figures 16 and

17

show the answers for the first and second questions, respectively.

Explanation on Why Explanation on Why

Once an answer to

Let

This theorem states that, in order to know if a set

As for the defense principle, we have an additional result regarding the visual behavior of explanations for the complement attack. Indeed, recall that the idea is to separate the arguments between those that belong to the questioned set and those that do not, while only considering the attacks that go from the set to its complement. Again, we can sense that a clear separation between the two groups of arguments can be made. The following proposition formalizes this intuition.

Let

Thus, it is possible to display an explanation for the complement attack principle so that its vertices are separated into two groups, with the arcs always going from one group to the other.

Notice that Proposition 3, on the contrary to Proposition 2, does not require a condition on the set of arguments. Moreover, it is more precise in that we have an additional result on the status of the arguments in the explanation, which is not the case for Proposition 2.

Let us study now the stable semantics. Recall that this semantics is made of the coherence and the complement attack principles. As such, we can define an answer to

Figures 7 and

16

constitute an answer to

Now that we have formalized the correctness of the conformity checks, linking structural (and so, visual) properties to semantic ones, as well as giving additional results regarding the visual behavior of some explanations, we turn to the study of other types of properties. As briefly presented in Doutre et al. (2023b), these additional properties help to identify specific explanations inside a given class, some of them that could be deemed undesirable, and help us understand how these classes of explanations are organized. Let us first define the properties that we investigate.

Definitions of Some Specific Explanations

Minimality, Maximality

A minimal (respectively, maximal) explanation is an explanation that contains the least (respectively, all the) possible amount of information. In a sense, a minimal explanation only provides what is required to explain, whereas a maximal explanation in fact provides everything that might be relevant to explain, even if it might be redundant.

Let

Emptyness

The notion of an empty explanation is one that should be avoided when providing explanations, in the sense that it somewhat represents the incapacity of the system to answer the question that has been asked. So, considering that the verification problem

Let

Uniqueness

We consider an explanation to be unique when there is only one of its kind. Although we defined classes of explanations that can represent all the different points of view that could emerge as to how to answer a question, in some situations, there can only be one way to answer that question.

Let

Minimality and uniqueness are also seen as explanation principles in Liao and van der Torre (2020). However, in Liao and van der Torre (2020), explanations are defined as sets of arguments, and not subgraphs, as we do. This obviously implies that the definitions of minimality and uniqueness given in Liao and van der Torre (2020) are slightly different from ours (we also take into account attacks and not only arguments).

Here, we provide the results of our formal study on our explanations using the aforementioned properties. The first results concern empty explanations. The following theorem shows that although empty explanations can occur, they only do so in very specific situations.

Let

As such, the empty explanation is, and can only be, a valid explanation when the question

Not only is the empty explanation a valid explanation in specific cases, it is itself a very specific one, as shown by the following result.

Let

This theorem states that the empty explanation is in fact so specific that, when it occurs, it is the only possible explanation.

We now turn to maximal explanations. For every abstract argumentation principle that we consider, there is only one maximal explanation. This is the object of the next theorem.

Let

Since there exists only one maximal explanation for every abstract argumentation principle that we consider, we introduce a dedicated notation for referring to them.

In the following, considering an arbitrary AF

Our notion of maximal explanations is also what allows us to bridge the present work with the work done in Besnard et al. (2022a). This is the subject of the following proposition.

Let

As such, our maximal explanations precisely correspond to the explanations defined in Besnard et al. (2022a). Thus, the classes of explanations studied in the present work can be thought of as a way to reduce the complexity of the explanations of Besnard et al. (2022a), while preserving the same properties. Indeed, in the worst case, the number of explanations can be exponential in the size of some specific sets of elements, depending on the type of explanation (for instance, the set of attacks between the arguments belonging to the extension

Figures 18 and

19

depict different minimal explanations for coherence for A Minimal Explanation on Why Another Possible Minimal Explanation on Why

However, minimal and maximal explanations have a particular relation, which is the object of the next theorem.

Let

This last theorem states that, for each abstract argumentation principle that we consider in the present work, the maximal explanation of the class related to this principle is exactly the union (in the sense of the graph union operator defined in Definition 6) of all the minimal explanations. This gives us some insight as to how the class of explanations is organized regarding the inclusion (i.e., subgraph) relation.

Concerning the explanations for coherence for

Finally, an original contribution of the present work is an additional result on minimal explanations. It is often acknowledged that an important quality of good explanations is simplicity. Simplicity, however, is not easily characterized and might take different forms depending on the context in which this concept is used. The following results are an attempt at characterizing the simplicity of our minimal explanations.

Let If If If If If

What these results essentially say is that the spatial complexity of our minimal explanations is linear in the number of arguments in the initial AF. Consider that in the general case, the size 13 of a graph is quadratic in the number of its vertices. This might very well be the case of the initial AF on which to compute an explanation. However, we can guarantee that, if a minimal explanation is computed, the size of this explanation is linearly bounded by the number of arguments in the initial AF from which it is derived. We believe that this reduction in the degree of complexity is a strong argument advocating in favor of the simplicity of our minimal explanations.

Notice that in the case of the defense and

Let

Note that some results presented in this section open the way to algorithmic solutions since, for a given principle, a maximal explanation covers all the possible explanations (the minimal ones, but also all the intermediate explanations). This is the aim of the next section.

We now turn to how to obtain an answer to a question The initial AF. The set of arguments for which to compute the explanation. The abstract argumentation principle for which to compute the explanation.

Now, using these elements to compute explanations, we take advantage of the inner organization of classes of explanation regarding the inclusion relation (cf. Theorem 9). The idea is to have a way to obtain maximal explanations (which are unique in virtue of Theorem 8), and a way to obtain the other explanations from the maximal ones.

Using Proposition 4, we see that we can easily and efficiently obtain maximal explanations only using the induced subgraph and spanning subgraph operators (Definition 5) on the initial AF. Some maximal explanations require both operators (using the induced subgraph first, then the spanning subgraph), while others require only the use of one of them. In particular, maximal explanations for coherence and

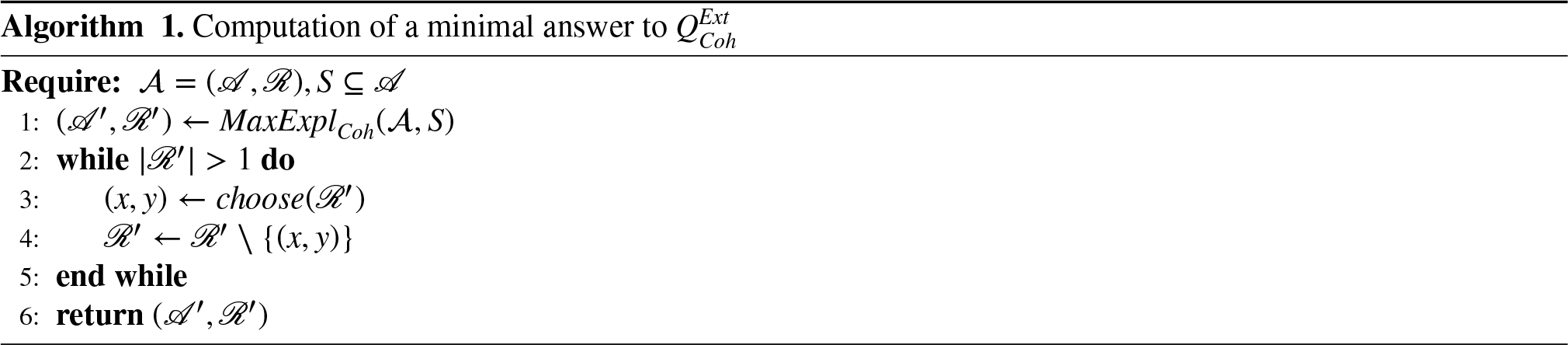

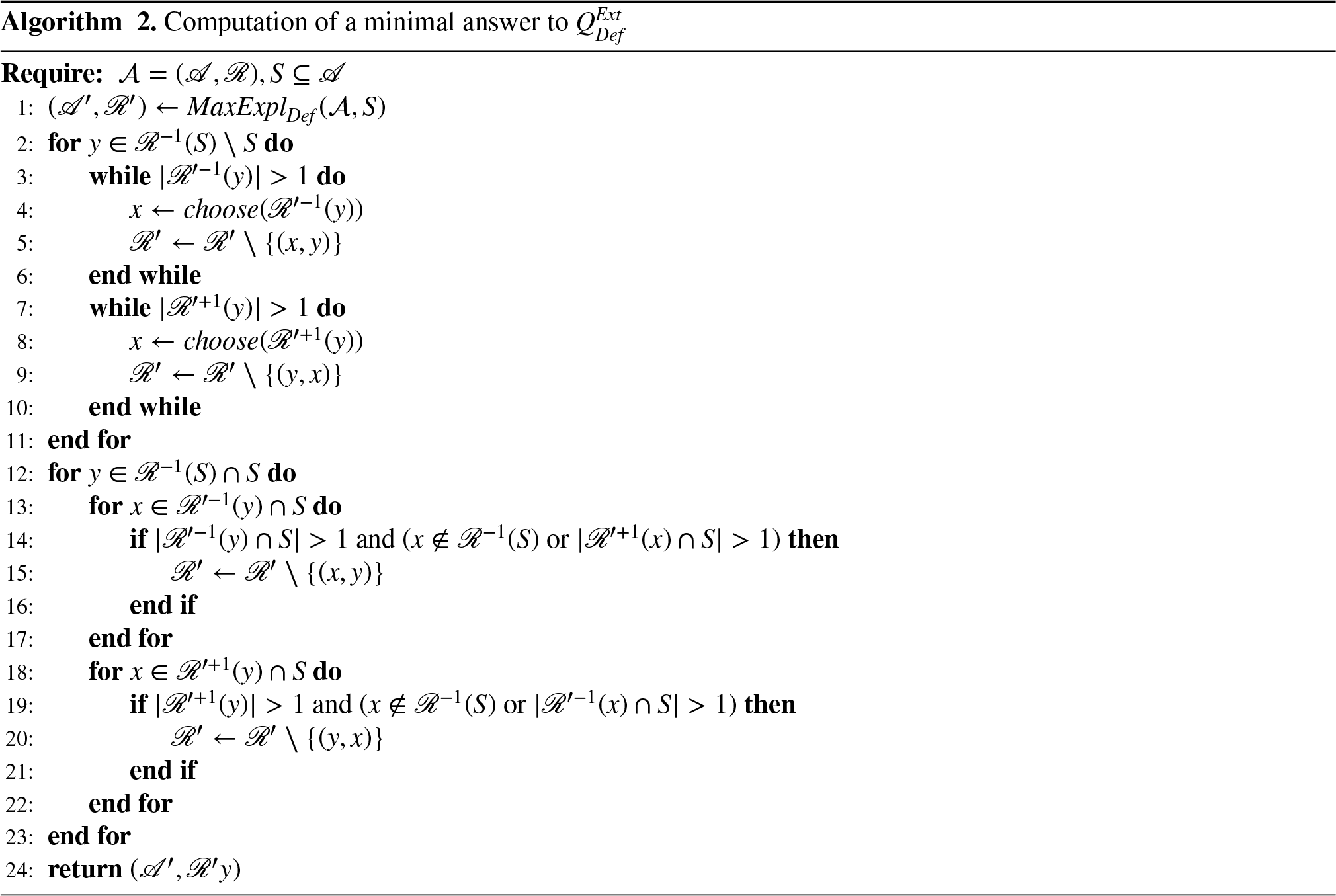

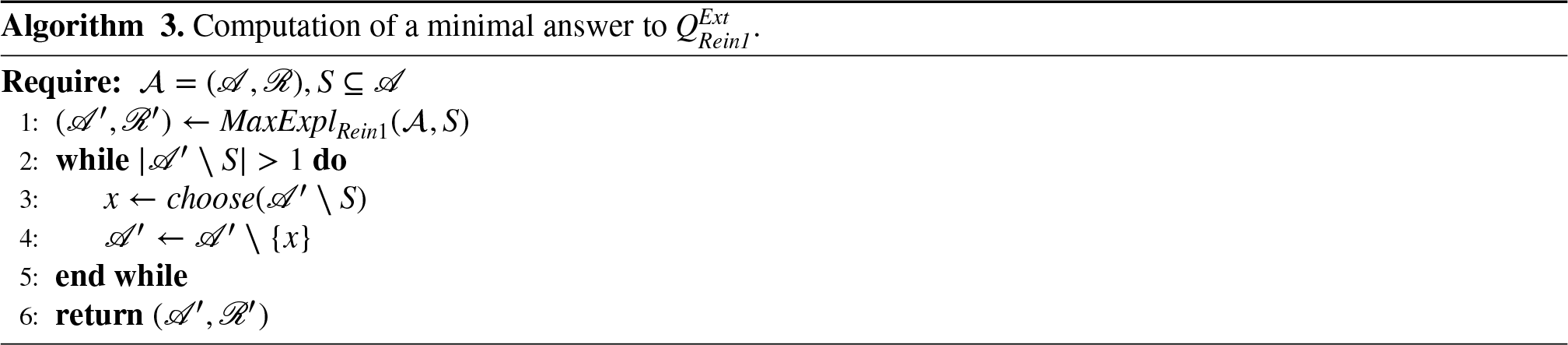

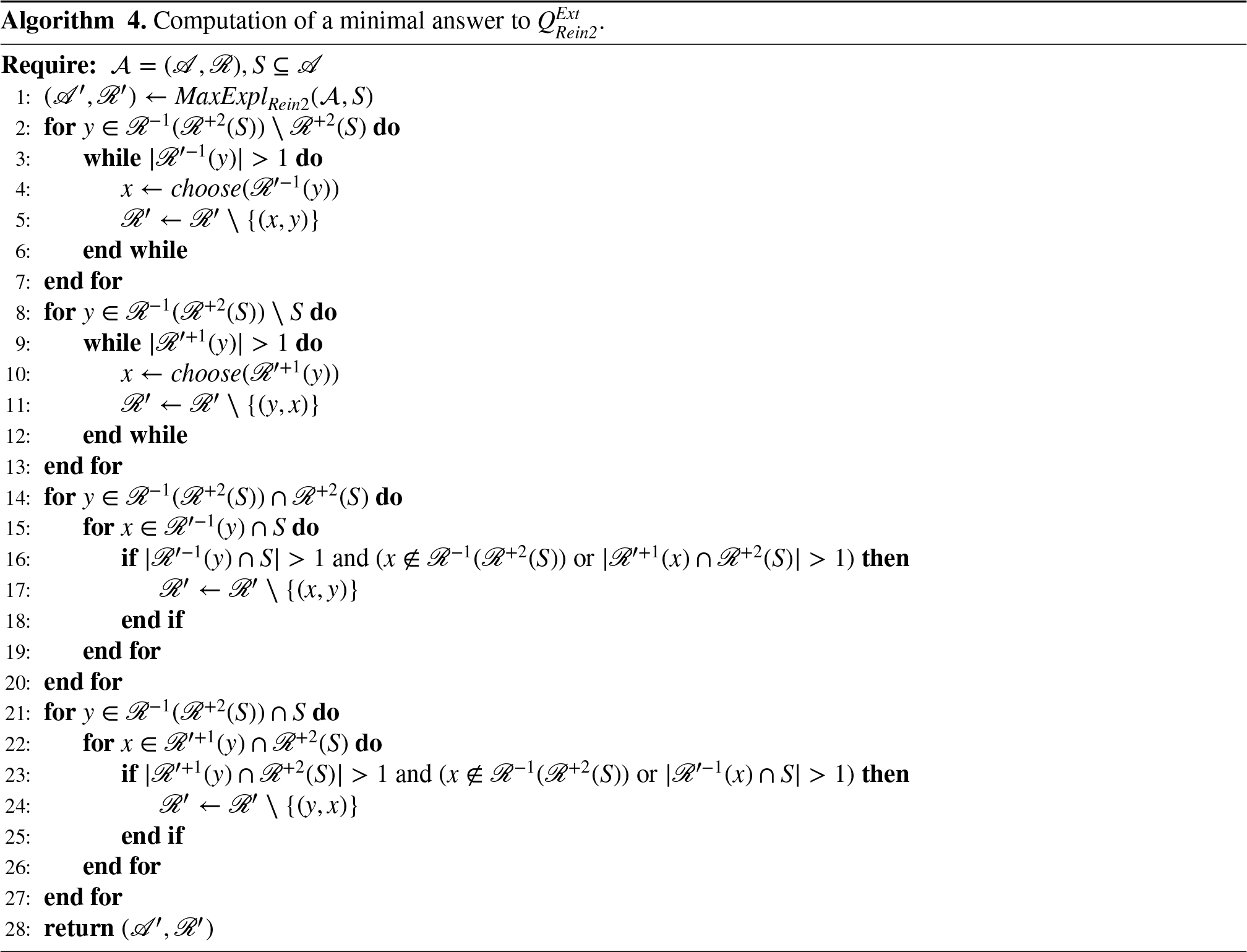

Next, from the maximal explanations, we give algorithms to compute minimal explanations as briefly presented in Doutre et al. (2023b). These algorithms all follow the same schema. The idea is to start from a maximal explanation and to gradually remove elements until a minimal explanation is obtained. We give one algorithm for each abstract argumentation principle that we consider, and name them

To make the algorithms for defense and

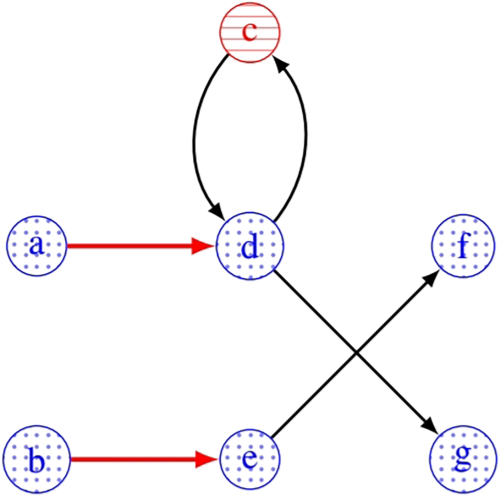

Consider the AF of Figure 20 and An Argumentation Framework.

We begin by computing

Then, the whole idea of this algorithm can be summed up in trying to remove as many arcs as possible, without breaking the third and fourth conditions of Definition 13. To remove arcs, we use the observations that one attack to

Consider the argument Regarding the attacks from Likewise, regarding the attacks from

Consider now the argument Regarding the attacks from Likewise, regarding the attacks from

In the end, applying these procedures to all the attackers of A Minimal Explanation for Defense for

The following theorem states that our algorithms are sound and complete for the computation of minimal explanations.

Let

The computation of minimal explanations thus relies on the computation of maximal explanations, and the removal of some arcs (or arguments) in them. The computation of maximal explanations is already known to be polynomial (see Besnard et al., 2022a). Moreover, the complexity of the removal operation in the worst case is linear in the number of removed elements and this number is either quadratic in the number of vertices in the graph when these elements are attacks (so for any principle except the one for the first part of reinstatement), or linear in the number of vertices in the graph when these elements are vertices (for the first part of reinstatement). From these considerations, our algorithms can be considered as computationally efficient.

To finish with, we would like to point out that Algorithms

In this section, we compare our work with some related works existing in the literature, and the presentation of this comparison is done using the categorization from Čyras et al. (2021) with four main categories of explanations (explanations can be either subgraphs or changes or extensions, or dialogues).

Let recall briefly the three important points concerning our approach: firstly, our explanations are subgraphs of the initial AFs; secondly our explanations must be viewed as answers to precise questions; thirdly, our explanations rely on visual criteria in order to make them usable without expert knowledge.

Let us now consider the main categories used in related works.

Explanations and Subgraphs

In Saribatur et al. (2020), the authors define explanations for the credulous nonacceptance of some argument. So the first difference with our approach is that we are not interested in the same questions. Moreover, they consider some semantics we do not consider (grounded and preferred semantics). And finally, to the contrary of Saribatur et al. (2020), our subgraphs are not only induced subgraphs but also spanning subgraphs. In Niskanen and Järvisalo (2020) and Ulbricht and Wallner (2021), explanations for the credulous nonacceptance and acceptance of some argument are defined as sets of arguments (respectively, attacks). So the same first difference is that we do not address the same problem. Moreover, the explanations proposed in Niskanen and Järvisalo (2020) and Ulbricht and Wallner (2021) are based on the behavior of the induced (respectively, spanning) subgraph resulting from the considered set of arguments (respectively, attacks). Our work instead considers the subgraphs to be the explanations themselves. And finally, the subgraphs we define are computed using both the induced subgraph and the spanning subgraph operations, while Niskanen and Järvisalo (2020) consider them separately and Ulbricht and Wallner (2021) only use induced subgraphs. Some other works, Fan and Toni (2015a) and Racharak and Tojo (2021), use specific graphs (the defense trees in which nodes are arguments and each successor of a node is an attacker of that node) to explain the credulous acceptance of some argument under admissibility. And so the same main difference between the works of Fan and Toni (2015a) and Racharak and Tojo (2021) and ours is that we do not explain the same problem.

Explanations and Changes

Changes consist of identifying which elements to remove from an AF in order to modify a given result. This is the method used in Fan and Toni (2015b), in which the authors explain why an argument is not credulously accepted under admissibility. Their explanations consist of sets of arguments or attacks to remove from the AF in order to make the considered argument credulously accepted under admissibility in the resulting subgraph. These explanations are computed using defense trees. Such sets were also studied in Ulbricht and Baumann (2019) (and called here “diagnoses”) when no arguments are credulously or skeptically accepted under some semantics and when one tries to know how to “repair” this. Diagnoses are also parts of the study of Niskanen and Järvisalo (2020) for identifying underlying reasons for the nonacceptance of an argument under credulous reasoning. Another example is given in Čyras et al. (2019) in which the existence or nonexistence of attacks is seen as an explanation in the context of argumentation for explainable scheduling. Note that all these approaches are clearly different from ours, particularly because of the addressed problems and the form of the explanations.

Explanations and Extensions

The third category of approach consists of taking sets of arguments as explanations. This is probably the most widely used approach to this problem. Here, the point of view is to consider that explanation equates to justification. In Fan and Toni (2015a), an explanation semantics, called related admissibility, is defined, providing all the reasons why an argument belongs to an admissible set. Extensions of this new semantics are explanations, and they are computed using defense trees. In Borg and Bex (2020) and Borg and Bex (2021a), the authors propose a basic framework to compute explanations as sets of arguments for the credulous/skeptical acceptance or nonacceptance of an argument with several variants: skeptical and credulous explanations, parametrization for the computation, adaptation to structured argumentation (Borg & Bex, 2021b, 2021c), etc. Some other works define their explanations from the observation that in the computation of an extension, some parts are nondeterministic choices, while others, deterministic, result from the first ones. This is the case of Liao and van der Torre (2020) (based on the strongly connected component of the AF), or of Baumann and Ulbricht (2021) (based on the grounded or the strongly admissible extension). All these approaches differ from ours in the addressed problems and the form of the explanations.

Explanations and Dialogues

The fourth and last category regroups approaches considering dialogues (or dialogue-games) as an explanation. A dialogue is a formalism in which several agents are engaged in a conversation built turn by turn by the agents and regulated by a protocol. This allows us to use dialogues as proofs of certain results (typically, a proof for the acceptability or not of a given argument). Such proofs are called dialectical proofs. Modgil and Caminada (2009) study how dialogues (called argument games) can be used to prove certain results in argumentation. This is not necessarily tied to explainability, but provides good insights into the workings of dialogues and how to use them. Booth et al. (2014) take changes as explanations for argumentative results, but use dialogues to obtain them. Arioua et al. (2017) propose a dialogue with explanatory abilities using abstract argumentation in the context of inconsistent databases. In this setting, the dialogue is taken to be the explanation that answers the user’s query. In Sklar and Azhar (2018), the authors present their argumentation-based dialogue framework for explanation in a human–robot interaction setting. As such, the dialogues produced have an explanatory role. Once again, all these approaches differ from ours in the addressed problems and the form of the explanations.

Conclusion and Future Works

This paper has defined classes of explanations for principles and semantics for the verification problem in abstract argumentation. These classes of explanations have been studied according to general properties such as maximality, minimality, emptyness, uniqueness, and results that place boundaries on the spatial complexity of minimal explanations, advocating for their simplicity. These classes extend and generalize the single explanation approach of Besnard et al. (2022a), allowing more flexibility in the choice of explanations that could be presented to potential users. Moreover, the paper establishes that the explanations of Besnard et al. (2022a) correspond to the maximal explanations of the defined classes, thus providing a way to compute them using graph operators. A procedure to compute minimal explanations from the maximal ones has also been provided and proven sound and complete for each class of explanations.

This contribution will be of help in any application that uses computational abstract argumentation (Čyras et al., 2021; Vassiliades et al., 2021).

Conjectures have been stated, which we believe to hold. A complete proof should be sought to either validate them or to lead to counterexamples that would invalidate them: this is a first perspective for future work.

In addition, as underlined by Čyras et al. (2021), and like in any XAI approach, an empirical evaluation should be conducted to assess to what extent these visual explanations actually are helpful for human agents to understand the answer to the verification problem. This is an important future work.

Moreover, this evaluation could also provide a first study about what is a “best explanation” and how to select it. It is therefore also related to another important future work: how to take into account the issue of the “realizability” or personalization of an explanation. Indeed, one may have in mind parts of an explanation (some arguments and some attacks), but not a correct and complete explanation; determining whether there exists such an explanation, and providing it would ensure a personalized answer. This study is also encouraged by the findings in the context of social sciences, which highlight that “humans are adept at selecting one or two causes from a sometimes infinite number of causes to be the explanation” (Miller, 2019). The reconstruction of an entire formal explanation from what is selected by a user is of interest. In order to do so, a deeper investigation of the inner structure of the classes of explanation, and more specifically of the links they could have with lattices, may be a first step.

Another finding in Miller (2019) is that explanations are contrastive. Single explanations to contrastive questions have been proposed in Besnard et al. (2022a); their generalization to classes of explanations may be studied using the work presented here, since, very often, as Besnard et al. (2022a) shows, a contrastive question can be viewed as the conjunction of some specific single questions.

The approach presented in this paper may also be extended to semantics that use additional principles such as maximality/minimality for set inclusion, for instance, the preferred or grounded semantics; in this case, some new visual criteria must be identified in order to be able to explain why a given set is or is not a preferred or a grounded extension; note that the visualization difficulty is not related to the complexity of the underlying problem (since the verification problem for the grounded semantics is a polynomial problem whereas it is an exponential one for the preferred semantics).

These last two research avenues can be considered as an attempt to produce a generic approach for the computation of explanations, on the model of the approach of Besnard et al. (2022b).

Finally, more notions of graph theory may be investigated in order to provide other kinds of visual explanations. In particular, the notion of graph isomorphism seems of great interest, especially to provide ways of reasoning by association (explaining a result via a structurally identical AF that one has already accepted).

Supplemental Material

sj-pdf-1-eai-10.1177_30504554251374991 - Supplemental material for Visual Explanations for the Verification Problem in Abstract Argumentation

Supplemental material, sj-pdf-1-eai-10.1177_30504554251374991 for Visual Explanations for the Verification Problem in Abstract Argumentation by Sylvie Doutre, Théo Duchatelle and Marie-Christine Lagasquie-Schiex in The European Journal on Artificial Intelligence

Footnotes

Acknowledgments

We would like to thank the reviewers for their helpful comments and suggestions on a previous version of this work.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

Notes

References

Supplementary Material

Please find the following supplemental material available below.

For Open Access articles published under a Creative Commons License, all supplemental material carries the same license as the article it is associated with.

For non-Open Access articles published, all supplemental material carries a non-exclusive license, and permission requests for re-use of supplemental material or any part of supplemental material shall be sent directly to the copyright owner as specified in the copyright notice associated with the article.