Abstract

Lately, the availability of massive amounts of data necessitates the adoption of modern representation techniques, such as knowledge graphs (KGs). KGs are typically constructed via automated procedures and by utilizing heterogeneous data sources. This nevertheless hinders the quality of these large resulting KGs, as they might contain contradictions, that is a set of assertions that conflict with some axioms often set by human experts. In turn, classical description logics reasoners cannot be applied as no useful inference results can be generated in the face of inconsistencies. Meanwhile, classical reasoners can be used in order to retrieve the inconsistency explanations, but as the KG size grows larger the required time for this task increases significantly. To address the problem of reasoning with large and inconsistent KGs, we propose an open-source system that detects and fixes inconsistencies by splitting the KG into modules and then processing them in parallel to speed up the process. An empirical evaluation of two datasets illustrates the potential for effective inconsistency detection and fixing of large KGs.

Introduction

Knowledge graphs (KGs) are widely adopted globally, among leading entities from diverse sectors such as finance, healthcare, and information and communications technology (Abu-Salih, 2021; Ji et al., 2022). KGs integrate data from various heterogeneous sources and provide a plethora of capabilities with respect to knowledge acquisition, fusion, and up-keeping (Abu-Salih, 2021). A key aspect of KGs is the ability to apply formal reasoning tasks for querying, inferencing, and explainability (Lecue, 2020). At the same time, the construction of KGs often relies on automated or semi-automated processes for extracting knowledge and on combining data from diverse sources; these are prone to producing errors and in turn inconsistencies (Hofer et al., 2024; Huang et al., 2005; Paulheim, 2016). Unfortunately, the existence of KG inconsistency in practical applications prohibits the utilization of classical reasoners (Pensel & Turhan, 2018). Even a single contradiction within the KG obstructs the entailment of other consistent conclusions; from an inconsistent KG, anything can be entailed (Lembo et al., 2015). To overcome this obstacle, the KG can either be fixed—that is, to alter particular assertions—so that inconsistencies are corrected, or incorporate nonstandard reasoning techniques that tolerate the presence of inconsistencies (Ma et al., 2007). The detection and fixing of KG inconsistency is not always an easy-to-perform task, especially when considering large KGs.

To begin, the detection of inconsistencies and the retrieval of their explanations is a complex procedure. Inconsistency explanations, which are also known as justifications, are the minimal sets of axioms and assertions that logically contradict each other (Horridge et al., 2009). Note that computing the explanations is a computationally demanding task, as it is affected by the exponential explosion in the case of KGs with large numbers of assertions. This way, the invocation of an off-the-shelf description logics (DL) reasoner, does not always guarantee that all the explanations will be retrieved within a reasonable time frame Tran et al. (2020). Considering that all KG inconsistencies are detected, the next step would be to update the erroneous assertions with appropriate fixes. Instead of updating the wrong assertions, another approach would be to remove an assertion from each explanation and this way overcome the corresponding inconsistency. This, however, would result in a loss of information. Instead, we seek to replace some entities found in the assertions of an explanation with different ones, in an attempt to recognize the erroneous ones and correct them. Existing work mainly performs this step with the help of human experts, who revise the inconsistency explanations and manually select appropriate assertion updates that can also be generated by appropriate algorithms (Arioua & Bonifati, 2018; Fan et al., 2019). Considering the size of real-world KGs though, the number of alternative updates increases significantly, often rendering this way the manual selection process infeasible.

We develop a framework for detecting and fixing inconsistencies in large KGs. We present a method for the detection of inconsistency that is performed in a horizontally scalable manner. We split the KG into extended modules of individuals; that is, subgraphs that contain the assertions that refer (directly or indirectly) to a particular individual. Such modules are usually significantly smaller than the initial KG and in this way, the application of a classical DL reasoner to each of the modules in parallel for obtaining the explanations of inconsistency can be deemed as a realistic venture. Additionally, after retrieving the inconsistency explanations, and inspired by the work of Arioua and Bonifati (2018), we calculate update-based fixes for each explanation and automatically select those that can lead to consistency by following different fix selection strategies. To illustrate the applicability of our approach, we evaluate our system on two KG datasets, which contain inconsistencies of different kinds. Experimental results suggest that our approach can fix large and inconsistent KGs, so that reasoning can be further performed.

Our contributions are the following:

We propose a unified framework for detecting and fixing inconsistencies in KGs. We expand the language expressivity of a state-of-the-art parallel inconsistency detection approach from a fragment of OWL2 (Tran et al., 2020) to the full OWL2, by splitting the initial KG into extended modules of individuals. We re-formulate the task of KG fixing by adopting standards of the Semantic Web and propose new fix-selection strategies for increased efficiency. The implementation of our framework is openly available via an online repository.

1

This work is an extended version of a conference paper published in the SETN-2024 conference. In particular, we have enriched the background and related work section with references to paraconsistent logics, and a more thorough analysis of additional related works than what was present in the conference paper version. Also, we have included a theoretical discussion regarding the correctness of our method in detecting inconsistencies in more expressive languages. Furthermore, in this extended version, the algorithm and implementation details are described in more depth. Finally, we have included experiments on an additional real-world dataset.

The remainder of this article is structured as follows. In Section 2, we present necessary background notions and discuss the state-of-the-art in inconsistency detection and fixing. In Section 3.1, we explain the inconsistency detection part and provide details regarding the correctness of the full OWL2 expressivity support. Section 3.2 introduces KGFixer, which is our proposed method for update-based fixing of inconsistent KGs. Section 4 describes our implementation. In Section 5, we present the results of our experimental evaluation, and, finally, in Section 6, we conclude with future research directions.

Background and Related Work

A KG (Hogan et al., 2022)

Moving forward, an interpretation of a KG is a mapping of sets of individuals to each class, and of sets of pairs of individuals to relations. An interpretation is a model of

To perform reasoning on inconsistent KGs, a different class of formal logics could be incorporated, termed as paraconsistent logics. In this category, the work of Salhi and Sioutis (2023) introduces the concept of paraconsistent scenarios and provides implementations that are able to solve such problems. Paraconsistent approaches consider more than two truth values, for example, apart from “true” and “false,” there are also the “overdetermined” and “underdetermined” ones (Nescolarde-Selva et al., 2015). A thorough comparison between paraconsistent DL can be found in Kamide (2013). Nevertheless, due to the increased complexity of paraconsistent reasoning compared to classical reasoning, we do not further explore such approaches as our goal is to provide a scalable method that can tackle large inconsistent KGs.

Existing Work on Inconsistency Detection

Various approaches for detecting KG inconsistencies have been introduced in the literature. To begin, approximate methods can be proven quite effective in highlighting erroneous ABox assertions, nevertheless, they cannot always detect every inconsistency that is present in the original KG. de Groot et al. (2021) propose a method that divides the KG into subgraphs. Each subject of a triple can be deemed as a root node of a subgraph, which is then expanded by breadth-first search, following also the predicate and object expansions. Then, classical reasoners can be used to retrieve the inconsistency explanations on these smaller subgraphs. The expansion continues up to a maximum subgraph depth that is set by the user and effectively tunes the balance between scalability and completeness. The work of Paulheim and Stuckenschmidt (2016) operates on a series of ABoxes, for which particular feature vectors are calculated that attempt to indicate inconsistency. Based on these feature vectors, off-the-shelf classifiers are employed to predict if an ABox can be considered inconsistent or not. In a similar manner, Paulheim and Bizer (2014) analyze the statistical properties of types in the subject and object positions of the KG triples, and judging by a confidence score they categorize some as erroneous.

In Senaratne (2023), a method based on anomaly detection is presented. The so-called Corroborative Path algorithm is introduced, by which alternative paths between entities in a triple are considered, and a binary feature matrix is constructed. This, along with other types of features is taken into account for highlighting anomalous (i.e., erroneous) triples using a one-class support vector machine. In a different philosophy, Chen et al. (2021) rely on neural networks that operate upon embeddings of the TBox axioms and the ABox assertions. The embeddings calculated are such, that the distance between the original data, combined with additional attention coefficients, constitute an indicator of probable errors. Overall, the sacrifice approximate methods performed on completeness make them inappropriate for our purposes, that is, to fix a KG so that all meaningful reasoning results can be obtained from it.

Turning our attention to sound and complete inconsistency detection methods, as, for example, in Horridge et al. (2009), we can see that the scalability issues faced make them inapplicable for our purposes. To overcome this shortcoming, we can either reduce the expressivity of the supported language or employ modularization techniques that effectively achieve scalability. More specifically, Meilicke et al. (2017) focus on the

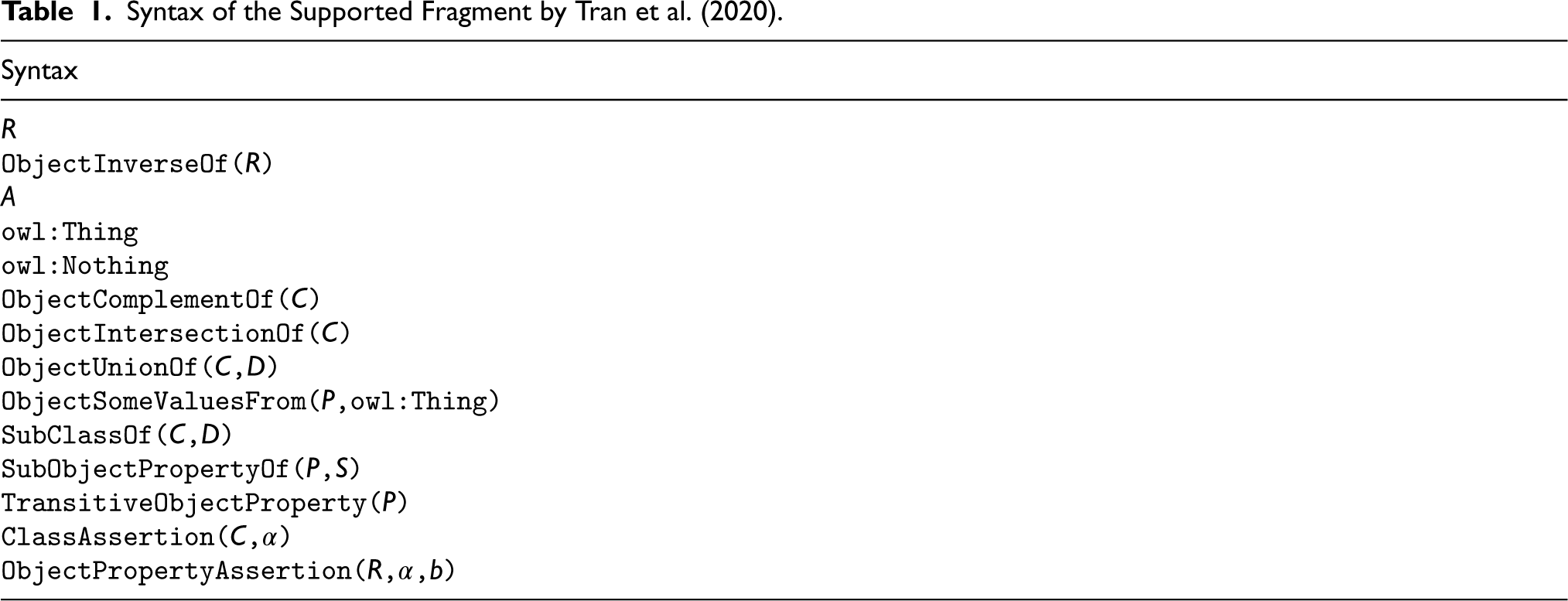

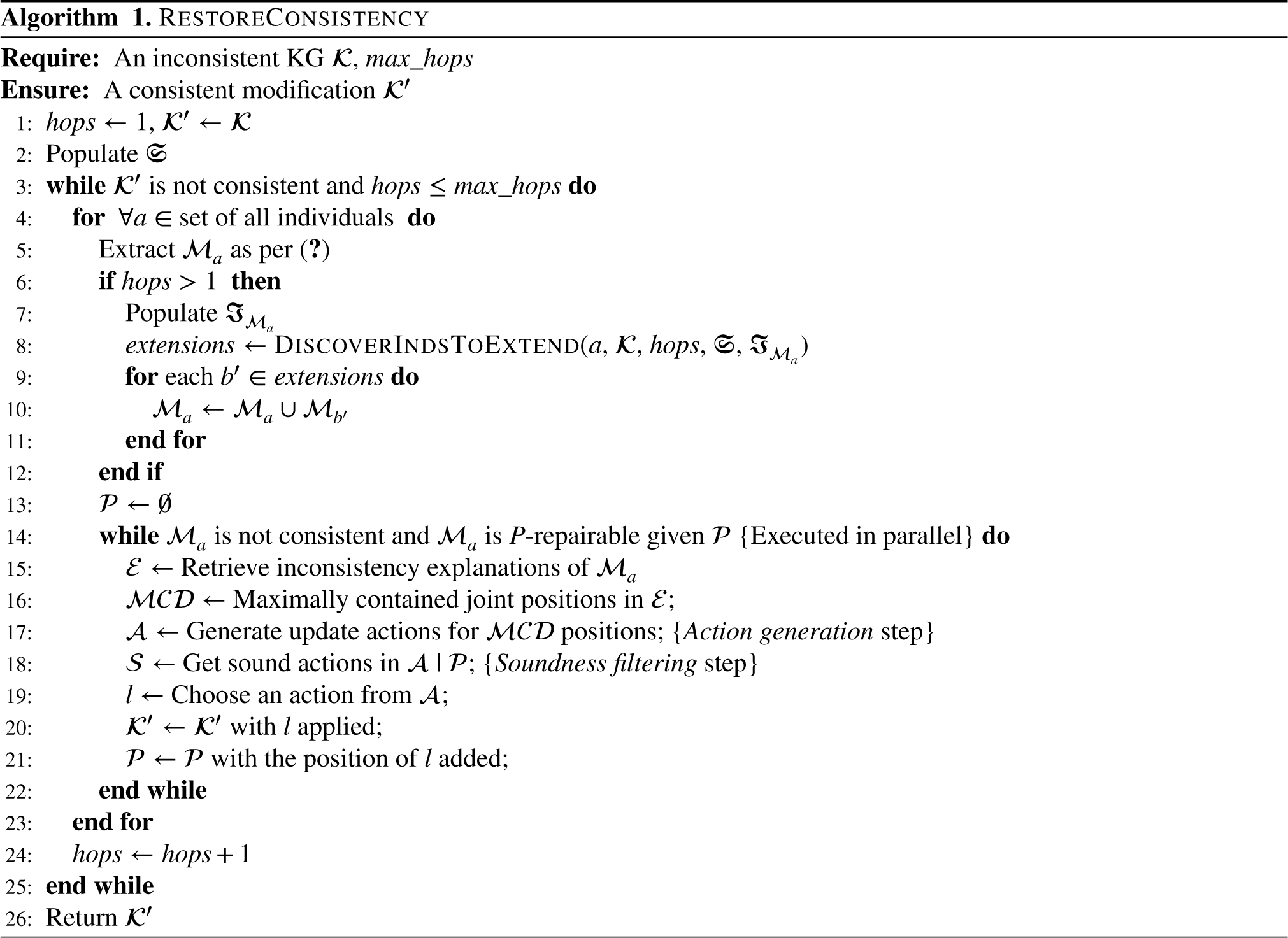

Syntax of the Supported Fragment by Tran et al. (2020).

Syntax of the Supported Fragment by Tran et al. (2020).

Note.

Finally, the approach of Zhu et al. (2022) incorporates the

Considering the analysis of the existing scalable methods for inconsistency detection, we adopt an approach that is similar to Tran et al. (2020), but instead of modules of individuals, we consider extended modules of individuals to also capture more expressive KGs, such as those expressed in OWL2 DL. In our approach, the initial KG is divided into modules of individuals, which are nevertheless extended in a guided manner, according to whether “suspicious” axioms or assertions for inducing inconsistency are included in the original KG. If

Having detected the inconsistencies that are present in a KG, the next step is to attempt to correct them, so that classical reasoners can perform inferencing without issues. KG correction can be performed in many ways, each having different merits and shortcomings. The inconsistencies of a real-world KG can be induced either solely by conflicting TBox axioms or by ABox assertions that do not follow the rules defined in the TBox. Regarding the case of an unreliable TBox, quite a few approaches have been introduced in the literature, but they require significant effort from human experts in order to resolve the errors. For example, in the method introduced by Heyvaert et al. (2019), the elements of the KG are ranked according to the number of inconsistencies that they take part in; the work of Nikitina et al. (2012) ranks them according to how many axioms are involved during their evaluation; and in Peñaloza (2019), the author discerns them based on the consequences of the possible update actions that are available. Interactive query-based approaches that guide human experts are also available, as, for example, in Shchekotykhin et al. (2012), Schekotihin et al. (2018), and Rodler (2022). In our work, we consider that the TBox is reliable, and focus on the existence of wrong ABox assertions, since this kind of inconsistency fixing is more easily managed by automated algorithms and, due to the fact that the ABox is typically much larger than the TBox, it is more difficult to be managed by humans (Paulheim, 2016).

The fixing of the ABox can be performed either by deletion-based or update-based repairs. In the deletion-based category, the aim is to select the ABox assertions that induce the inconsistencies and simply remove them from the KG (Baader et al., 2022; Bonatti et al., 2011; Melo & Paulheim, 2020). However, this naturally results in loss of information which can be deemed as a shortcoming of the respective methods. We thus focus on the update-based repairs. In contrast to the previous category, these approaches do not remove assertions, but minimally alter parts of them with such values that the inconsistencies are resolved and the most possible amount of original information is retained (Arnaout et al., 2022; Pellissier Tanon et al., 2019). For example the approach of Geerts et al. (2020) relies on the well-known chase algorithm (Benedikt et al., 2017) to apply update actions “on-the-fly” during the reasoning process. The fixes are applied incrementally and the exhaustive evaluation of all alternative update actions is avoided. The work of Arioua and Bonifati (2018) has introduced an interesting approach accompanied with guarantees, but for a slightly different domain where the TBox consists of certain types of database-oriented constraints, which do not account for the whole OWL2 DL expressivity. Also, Fan et al. (2019) introduce a graph database compatible method, that is too based on the chase algorithm.

A differentiating factor among the existing KG fixing techniques is the method for selecting the most appropriate fix. Toward this aspect, a primary objective is the automated selection of alternative fixes based on particular reliability levels or minimality criteria (Ahmetaj et al., 2022; Baader et al., 2022). Moreover, various machine learning (ML) models have emerged as effective tools for detecting and correcting errors in KGs, often utilizing patterns or external knowledge sources (Chen et al., 2020; Paulheim & Bizer, 2014). While analytical approaches provide precise formal frameworks for generating fixes, ML-based methods rely on the KG itself, though ensuring consistency remains a challenge. The combination of these approaches shows potential for efficiently addressing inconsistencies, with some research exploring reasoning strategies to tolerate inconsistencies when resolution is not feasible (Bienvenu, 2020). In our work, we provide an open-source implementation of the update-based approach for user-guided KG fixing (Arioua & Bonifati, 2018), re-formulating the task to make it applicable to a variety of RDF KGs with an OWL2 DL TBox. In addition, we combine fixing with parallel inconsistency detection and propose two new fix-selection strategies, leading to improved KG-fixing performance.

Restoring KG Consistency

In this work, our focus is on a framework for detecting and fixing inconsistencies in large real-world KGs. As we have already mentioned in our analysis in the previous section, the attempt to cover more expressive languages such as OWL2 DL, leads classical reasoning approaches to exponential explosion as the size of the KG increases. In what follows, we describe a method for restoring KG consistency, which relies on a parallel detection and fixing procedure operated upon extended modules of individuals. This splits the KG into smaller subgraphs on which reasoning can be performed both faster on the one hand, and in a parallel manner on the other hand. The module extension is such, that the same inconsistency explanations are detected, as if we performed reasoning on the initial KG. Having detected the inconsistencies, the next step is to apply fixes so that a reasoner can perform all relevant tasks without issues.

Parallel Inconsistency Detection

Modern DL reasoners (e.g., HermiT [Glimm et al. 2014], Pellet [Sirin et al. 2007], etc.) provide the means to calculate the inconsistency explanations

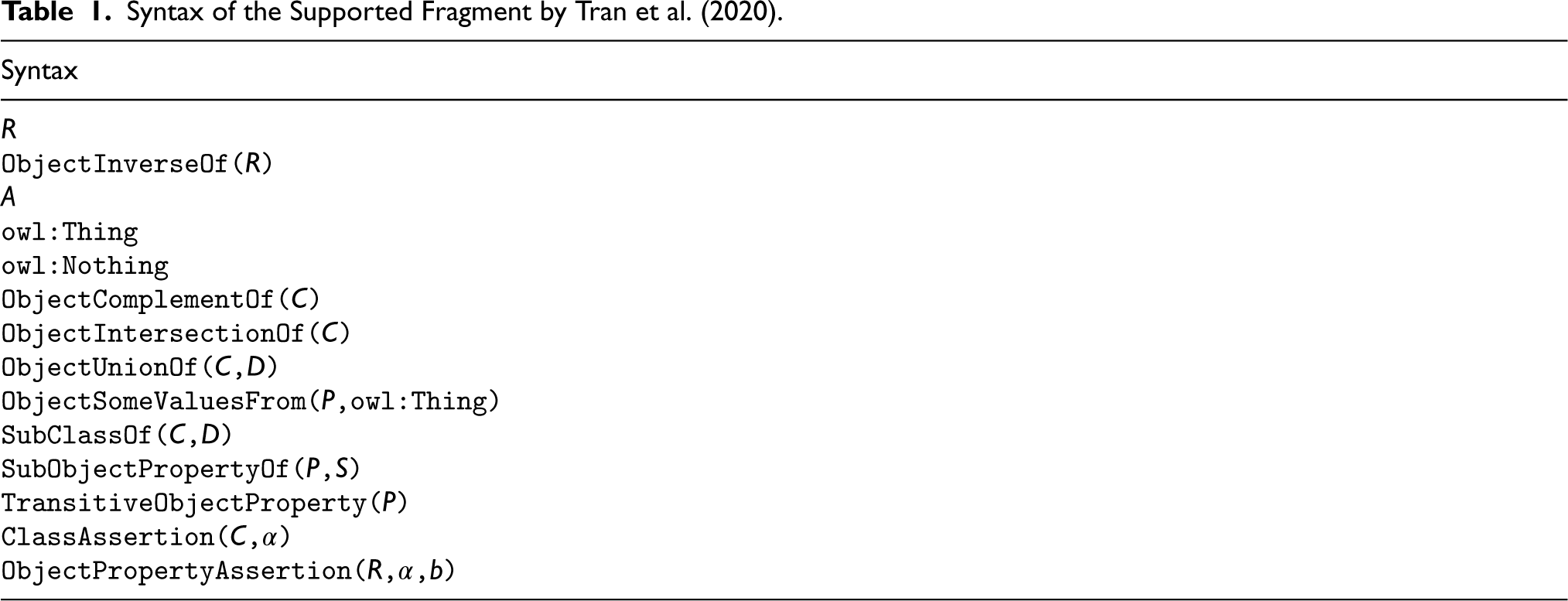

To further explain the limitations of the plain modules of individuals against our approach for the extended modules, we employ the KG example of Figure 1. In this case, the TBox is composed of two axioms: the first declares class equivalence between

An Example of Inconsistency not Captured by Tran et al. (2020).

According to the language considered in Tran et al. (2020), the existential quantifier

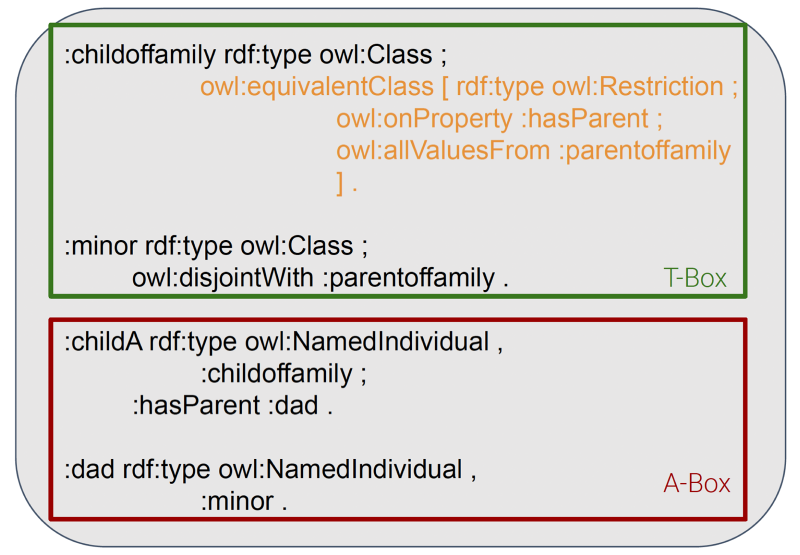

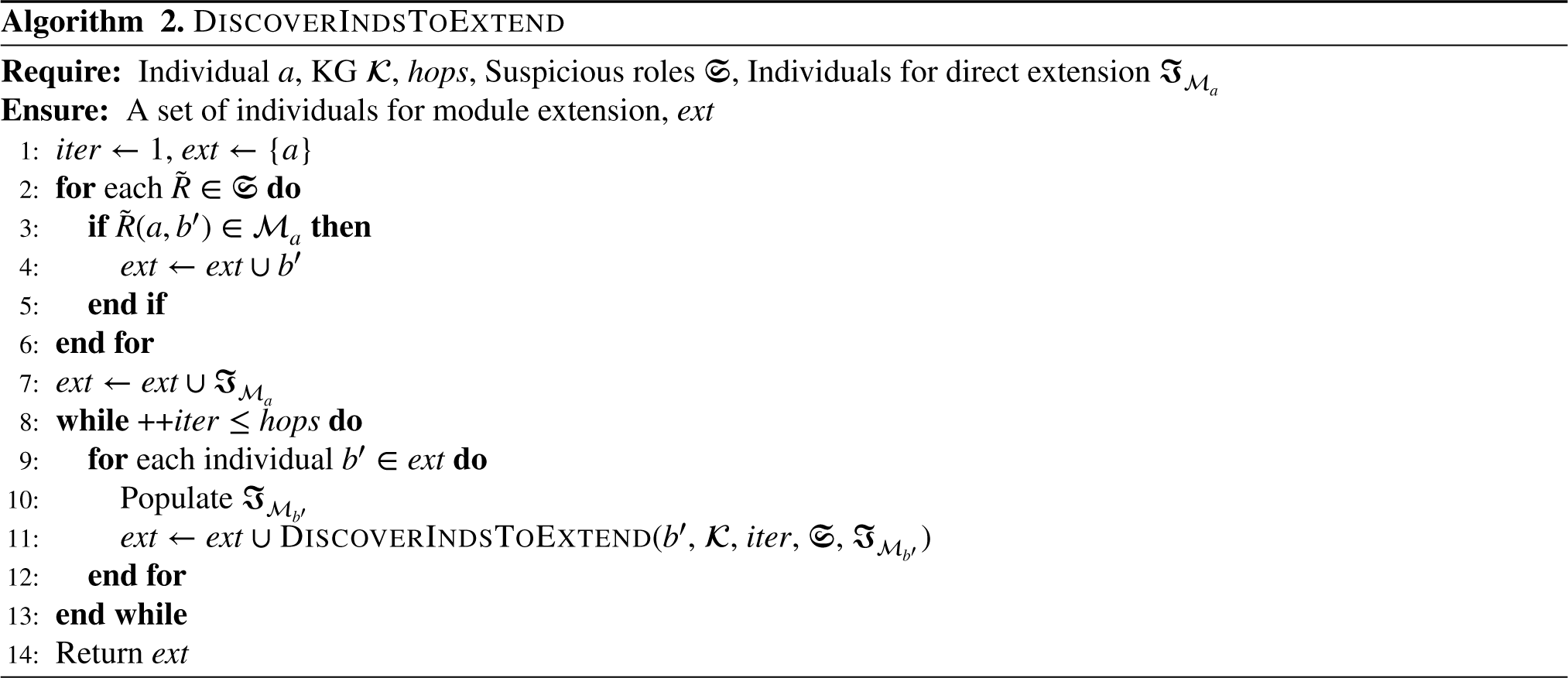

To address this issue, we have developed a method that extends the modules of individuals, nevertheless not exhaustively by following all existing links to other entities in the original KG, but instead guided by particular axiom types that are capable of inducing inconsistencies that are left undetected by other approaches. In our method, which is given in Algorithm 1, a module of individual

We can construct the set of “suspicious”

Additionally, assertions binding together

are also indicators of when to combine modules of individuals into a single extended module (Algorithm 1, line 8). We include any such linked individual

Note that, in contrast to the

We now discuss the soundness and completeness properties of our approach. Regarding soundness, recall that an inconsistent explanation is a minimal subset of the KG’s axioms and assertions. Assume that Algorithm 1 could detect an explanation

For completeness, we need to show that every inconsistency explanation

Now, in the more complex case of chain relationships,

As we have already mentioned, the extended modules can be overlapping, for example, we can have both

KGs often contain erroneous triples that lead to inconsistencies against their semantic schema. Fixing such inconsistencies involves actions that edit the data of the KG, such as the removal and/or insertion of triples. Update-based repairing approaches focus on updating the erroneous triples, by modifying the object or subject, in order to minimize information loss. Identifying such sets of actions that lead to consistency (termed as fixes) is a challenging task, in particular, considering that the same triple can be involved in more than one inconsistency and that a single inconsistency typically involves several triples. In addition, the task becomes even more challenging when considering that some triples in a KG can be the result of entailment, which makes their removal even more complex (Arioua & Bonifati, 2018; Arnaout et al., 2022; Melo & Paulheim, 2020).

Aligned with the work of Arioua and Bonifati (2018), our method performs update-based fixing of inconsistent KGs. As already stressed, we consider a reliable 2 TBox, and fixing is achieved by updating conflicting ABox assertions. In particular, a knowledge base schema is supported that consists of specific types of dependencies, namely contradiction-detecting dependencies (CDDs) expressing class disjointness, and tuple-generating dependencies (TGDs) expressing existential rules. This semantic schema might be less expressive than OWL2 DL as it does not support complex ontological constructs, disjoint union, and data-type reasoning. In our implementation of the method, however, we reformulate the task by adopting semantic web standards and enable the method to support respective OWL2 DL schemas, namely of the particular OWL2 DL subset defined later in Section 4. The notion of update-based repair (Wijsen, 2005) for inconsistency fixing refers to cases where a fact can be updated in order to fix an inconsistency, as opposed to deletion-based repair, where facts are removed from the KG. This way, update-based repair preserves as much information as possible. In this direction, Arioua and Bonifati (2018) introduce the notion of position fix, which can update an entity in a specific position (i.e., subject or object in a triple) of a fact and propose a method that given an inconsistent KG and some immutable positions in it, generates all the alternative position fixes that can lead to consistency. Such alternative fixes are then presented to a user, for example, a domain expert, in the form of multiple-choice questions, and are resolved sequentially gradually leading this way to consistency.

Regarding the generation of alternative update actions, the original method of Arioua and Bonifati (2018) starts with all joint positions on the axioms involved in the body of a CDD that is fired. For example, if a CDD is fired by the ABox axioms

Such an approach relies on the notion of

Implementation Details

Since there are no publicly available implementations of the methods that inspired us (Arioua & Bonifati, 2018; Tran et al., 2020), we developed our codebase from scratch. We employed the library of OWL-API 3 that supports OWL2 DL and developed a service using Java that, given a KG (i.e., in terms of DL, a TBox, and an ABox), (a) extracts the modules of the individuals and (b) invokes the HermiT 4 (Glimm et al., 2014) reasoner to analyze the subgraphs, checks for inconsistency, and returns the explanations where applicable. We chose to utilize HermiT, as it is illustrated to perform better than others in Horrocks et al. (2012), but any OWL-API compatible reasoner can be used without any modification in the whole approach. The reasoning tasks for each module are delegated to different CPUs so as to parallelize the time-consuming process of inconsistency detection in large KGs.

Next, toward fixing the KG, we introduce our method as an open-source implementation of the MCD-fix strategy (Arioua & Bonifati, 2018). In addition, we re-formulate the problem of fixing a KG adopting Semantic Web standards used in several KGs, namely RDF and OWL2 DL. Our method is implemented in Java and utilizes a standard reasoner, namely HermiT, for consistency checking. In particular, given a KG consisting of an ABox, in the form of RDF triples, and a TBox, in the form of an OWL2 DL schema, our method updates the ABox assertions of the KG leading to repairing certain inconsistencies detected by the reasoner. These include inconsistencies due to entity confusion (e.g.,

Moving into the Semantic Web formalization, we consider a TBox that consists of OWL2 DL Class Axioms. As OWL2 DL is more expressive than TGDs and CDDs alone, our method is restricted to a particular subset of OWL2 DL, defined by:

The use of simple Data Ranges and Classes, but not Data Range and Class Expressions consisting of constructs. The use of any OWL2 Axiom type except Assertions of the types Assertions of the type

The TBox is considered reliable and only the ABox is updated. The ABox can consist of any OWL2 DL ABox type axiom, but:

The main steps of our KG fixing method are presented in Algorithm 1. After an initial

All alternative actions to a multiple-choice question should have the potential to lead to a consistent KG. That is, they should retain the KG

The Action generation and Soundness filtering steps can be computationally demanding. The Action generation step is affected by the number of alternative entities to consider. And the Soundness filtering step by the number of alternative actions and the time required for the

Empirical Analysis

In this section, we apply our method for detecting and fixing inconsistencies in two datasets from the existing literature. First, we showcase the inconsistency detection capabilities of our approach that split the KG into extended modules of individuals for TBoxes of increased language expressivity. Next, we proceed with applying the KG fixing strategies that we put forward and examine the results.

Experimental Setup

For our experiments, we employ the KG from the work of Bienvenu et al. (2016), which constitutes a variant of the Lehigh University Benchmark (LUBM; Guo et al., 2005) with additional disjointness axioms to induce inconsistencies of different types. Initially, the ABox is consistent. To facilitate reasoning tasks, controlled inconsistency is introduced in the ABox by adding additional assertions:

Concept assertions: Each individual has a probability Role assertions: The probability of contradicting each role assertion for an individual is

Furthermore, the LUBM KGs used in our experiments have been slightly modified to include types of inconsistencies that are left undetected by the approach of Tran et al. (2020). In particular, similarly to the example of Figure 1, we added an

To further illustrate our method’s applicability, we also incorporate an additional dataset, the Food Inspection KG, as introduced in Bienvenu and Bourgaux (2022). This KG contains data as resultof restaurant inspections in the areas of New York and Chicago, and has a smaller TBox than the LUBM (24 axioms, as opposed to the 1085 of the LUBM TBox). Also, the inconsistencies that are present in the original KG mainly contain

The evaluation is performed on subgraphs of the aforementioned KGs of gradually increasing size to calculate (a) the time required for the detection and (b) the total number of detected inconsistencies for the first batch of experiments, and to examine the timely correction of inconsistencies for each fixing strategy for the second batch. The experiments were executed on a computer with Linux OS, equipped with an Intel Xeon E5-2630 2.60 GHz (24-cores) CPU and 256 GB of RAM.

Results on Inconsistency Detection

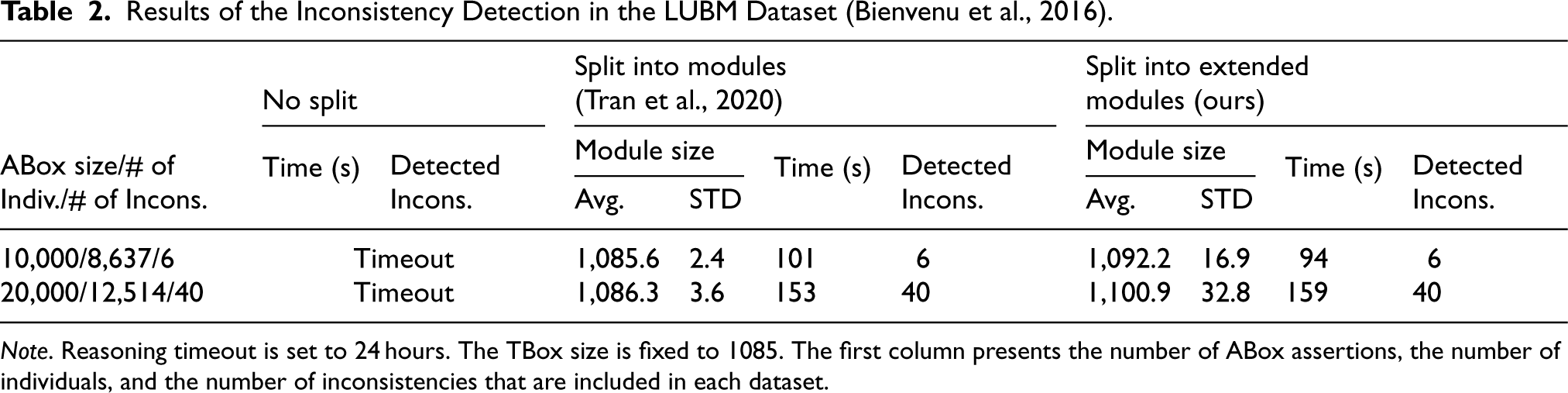

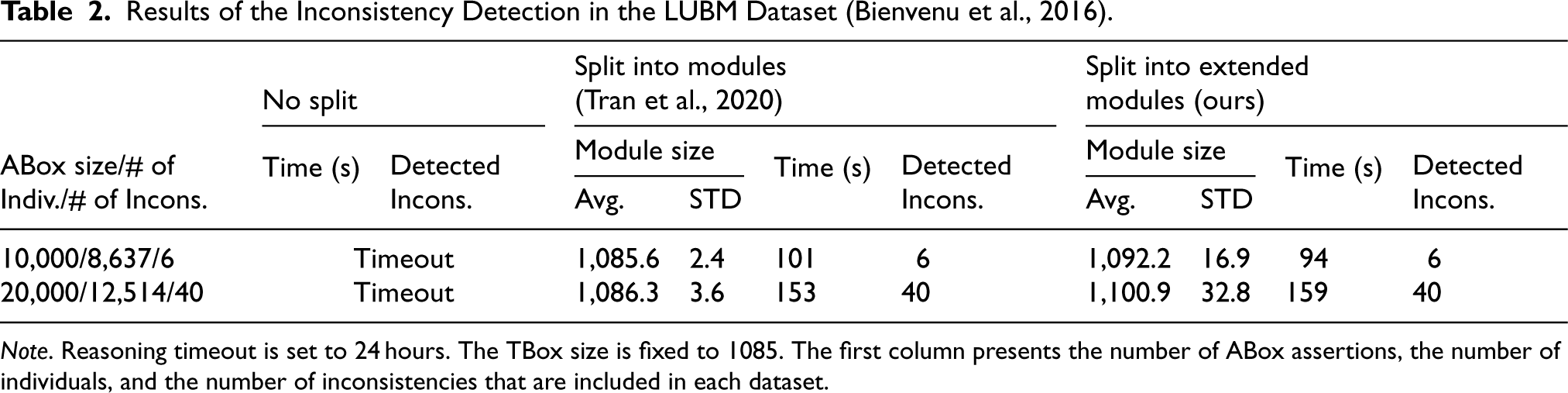

The results from the original LUBM dataset are shown in Table 2. We can see that even for ABox sizes of 10K and 20K assertions, the “monolithic” approach required more than 24 hours to produce any results. On the other hand, the two parallel approaches complete their execution significantly faster, without significant deviations in the execution time. The number of modules of individuals extracted for parallel evaluation was equal to the number of individuals reported for each subgraph in the corresponding tables.

Results of the Inconsistency Detection in the LUBM Dataset (Bienvenu et al., 2016).

Results of the Inconsistency Detection in the LUBM Dataset (Bienvenu et al., 2016).

Note. Reasoning timeout is set to 24 hours. The TBox size is fixed to 1085. The first column presents the number of ABox assertions, the number of individuals, and the number of inconsistencies that are included in each dataset.

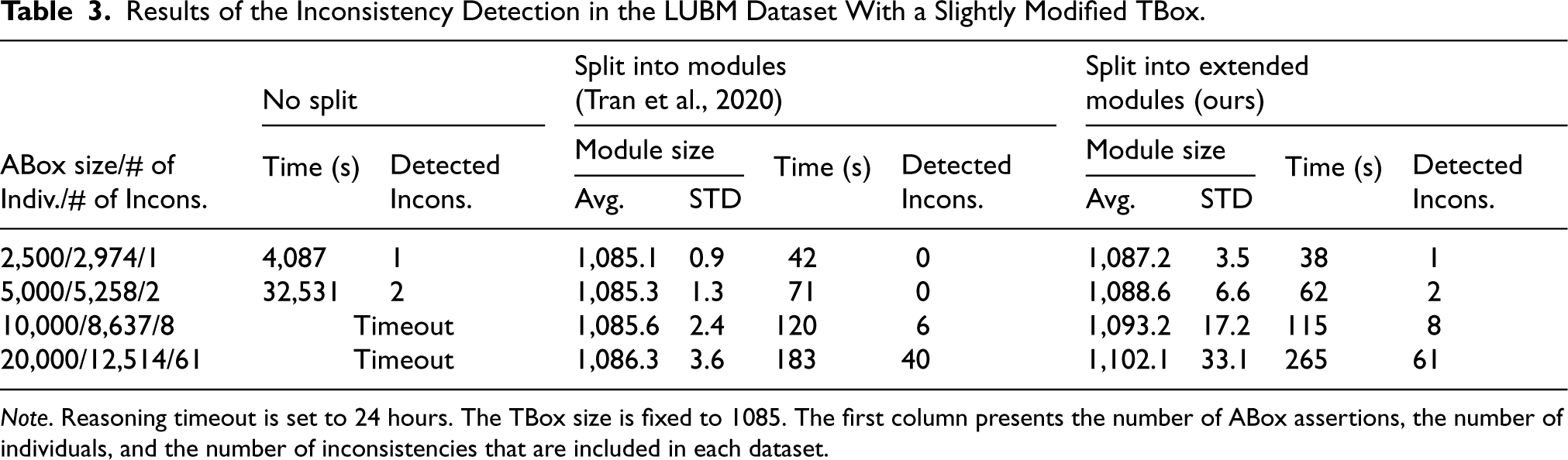

Since in the real world, we expect to encounter more complex inconsistencies, we also performed tests on a slightly altered LUBM TBox that contained the extra axioms we described earlier in the previous subsection. The results are shown in Table 3. The monolithic approach of applying the HermiT reasoner to the whole KG became intractable quite quickly, as it managed to produce results only for ABox sizes of 2.5K and 5K triples. On the contrary, the module splitting techniques were able to run for up to 20K triples producing results quite fast.

Results of the Inconsistency Detection in the LUBM Dataset With a Slightly Modified TBox.

Note. Reasoning timeout is set to 24 hours. The TBox size is fixed to 1085. The first column presents the number of ABox assertions, the number of individuals, and the number of inconsistencies that are included in each dataset.

Importantly, we can see that the proposed method for splitting the KG into extended modules was capable of detecting all the inconsistencies, in contrast to the approach of Tran et al. (2020) that overlooked particular inconsistency types. For ABox sizes of 2,500 and 5,000 triples, the proposed method detected all the inconsistencies that could be found without splitting the KG, while Tran et al. (2020) were not able to detect any of them. For ABox sizes of 10K and 20K triples, our method detected two and 21 additional inconsistencies, respectively, which were left undetected by Tran et al. (2020). The size of the modules extracted by these two approaches differed, resulting in a slight increase in the average module size and its standard deviation for the extended module splitting method. Note that the module size includes TBox axioms, which in these experiments were 1085. Overall, the execution time did not differ significantly between the two parallel implementations, but we anticipate that the extended modules approach will be slightly slower for larger KG, as a result of the higher number of triples in the modules that are examined.

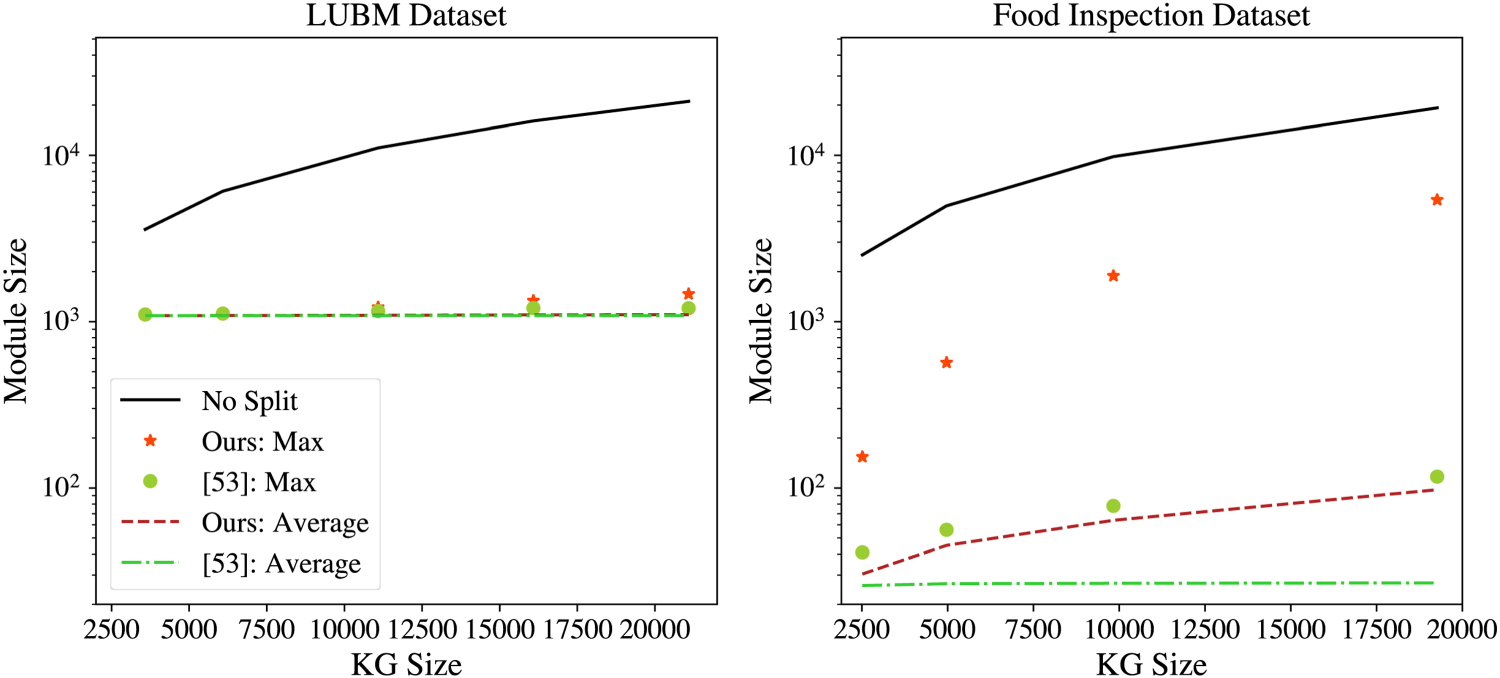

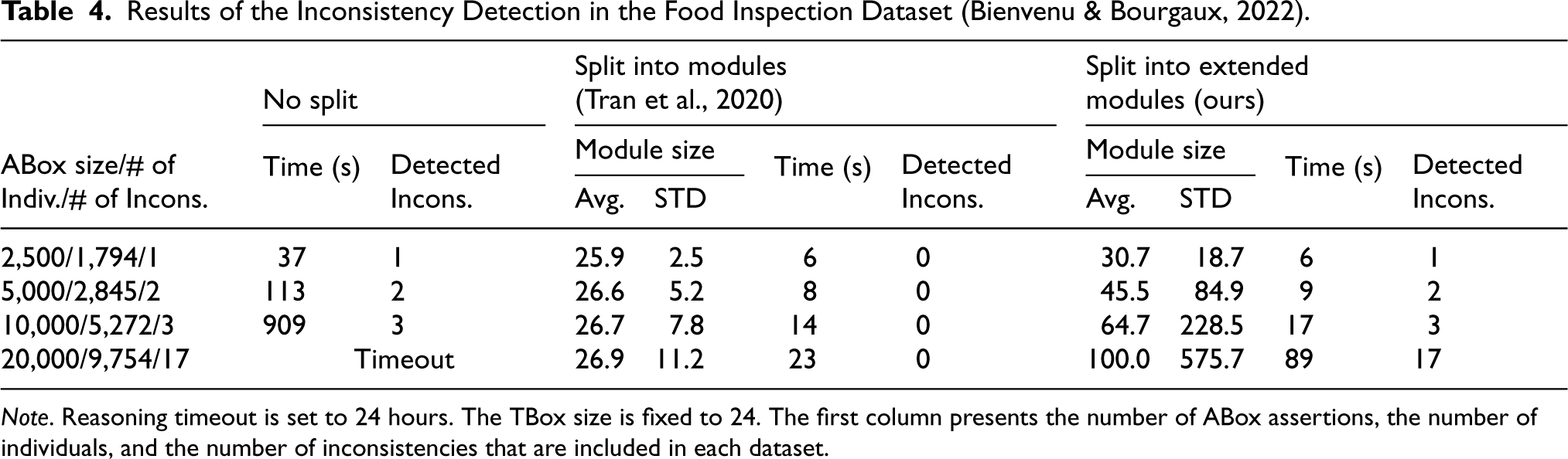

The evaluation of the Food Inspection dataset led to similar results (Table 4). The monolithic approach became ineffective for 20K or more assertions, and the plain modules of individuals were not sufficient to capture any of the inconsistencies that exist in this dataset. On the contrary, our method was able to detect all inconsistencies and did so in a reasonable amount of time. Again, we can see that the size of the extended modules was larger due to the extensions to additional individuals, as dictated by the existence of

Module Sizes for Different Knowledge Graph (KG) Subsets.

Results of the Inconsistency Detection in the Food Inspection Dataset (Bienvenu & Bourgaux, 2022).

Note. Reasoning timeout is set to 24 hours. The TBox size is fixed to 24. The first column presents the number of ABox assertions, the number of individuals, and the number of inconsistencies that are included in each dataset.

The execution times of the parallel approaches presented in Tables 2to 4 can be further improved, provided that we increase the number of the parallel processors, as in our case the equipped machine for the experimental evaluation had only 24 cores available, while, for example, in the LUBM 20K we have 12,514 modules of individuals that could be processed in parallel. The slightly faster execution time of our method against that of Tran et al. (2020) in cases of smaller datasets is a result of an optimization step that examines if an extended module has already been processed, and if so then its processing is skipped. The extended modules with base individuals

In the next part of our experimental evaluation, we applied our method for fixing the KGs, using the baseline strategy MCD-fix and the two optimization strategies Trivial-fix and Rank-fix. In the absence of a user to decide on the fix selection, one of the generated sound actions was selected randomly, as in the experiments of Arioua and Bonifati (2018). The same holds for the Rank-fix method, in case of ties between alternative actions.

We also performed experiments by utilizing the extended modules of the individual method we propose for the inconsistency detection and the method of Tran et al. (2020), as described earlier in Section 3.1. The experiments presented in the work of Arioua and Bonifati (2018) considered datasets with up to 1,000 assertions. We experiment with subsets of the original LUBM dataset, consisting of 2,500, 5,000, 7,500, 10,000, and 12,500 assertions. For the Food Inspection dataset, we employed subgraphs of size 2,500, 5,000, 10,000, and 20,000 assertions. We introduce a time threshold for fixing the KG of 1 minute and 5 minutes for the LUBM and the Food Inspection datasets, respectively.

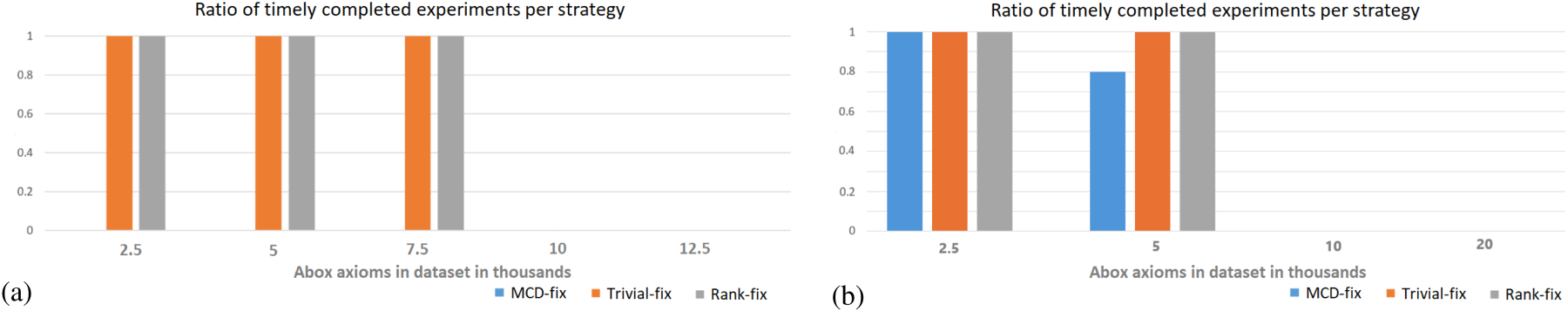

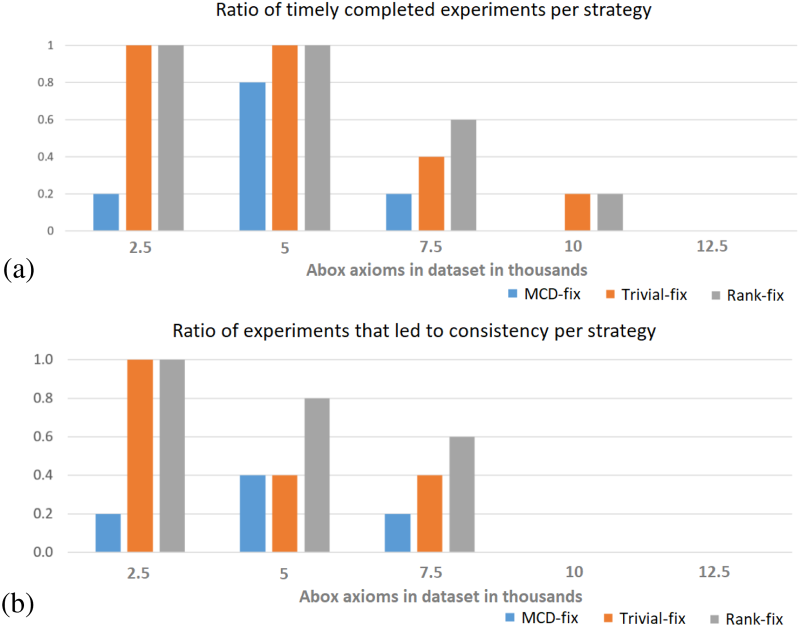

The main aim of this first series of experiments was to investigate the applicability of MCD-fix and reveal the benefits of introducing the proposed strategies Trivial-fix and Rank-fix, by invoking the reasoner on the whole KG, without incorporating any of the parallel inconsistency detection methods. The benefit of these strategies is shown in Figure 3. For the LUBM dataset (Figure 3(a)), all the experiments with the MCD-fix baseline were overtime—having a time threshold of 1 minute, even for the smallest subset of the dataset. On the other hand, all experiments with the Rank-fix and the Trivial-fix strategy managed to repair the KG with up to 7,500 assertions within the time limit and only became overtime for larger subsets. For the Food Inspection dataset (Figure 3(b)), the behavior of the Trivial-fix and Rank-fix strategies was similar, but we can see that the MCD-fix strategy managed to timely complete the experiments at 100% for the 2,500 assertions KG, and at 80% of the times for the 5,000 assertions version. The above suggests that the MCD-fix baseline strategy is not the best option, whereas the Trivial-fix and Rank-fix alternatives were shown to be more effective, especially in the case of the LUBM dataset.

Results of the Monolithic Approach for Fixing, Without Splitting the Knowledge graph (KG) into Modules. (a) LUBM Dataset: The Ratio of Timely Completed Experiments (i.e., not Exceeding the 1-Minute Limit) per Strategy Across Dataset Subsets of Different Sizes. All Completed Experiments Lead to an Updated KG that is Consistent. MCD-fix Values are Zero, as this Method did not Manage to Complete before the Timeout. and (b) Food Inspection Dataset: The Ratio of Timely Completed Experiments (i.e., not Exceeding the 5-Minute Limit) per Strategy Across Dataset Subsets of Different Sizes. All Completed Experiments Lead to an Updated KG that is Consistent.

Moving forward with the evaluation of the parallel methods, the results when splitting the LUBM KG into plain modules of individuals are presented in Figure 4. The MCD-fix baseline clearly benefits from splitting into modules. With the plain modules of individuals (Figure 4(a)) MCD-fix managed to complete the repairing process under the 1-minute limit for all experiments with less than 10,000 assertions and for 40% of experiments with 10,000 assertions. In addition, it managed to repair the KG reaching global consistency (Figure 4(b)) in a ratio of experiments ranging from 60%, in the smallest subgraphs, to 20% in the subgraph of 10,000 assertions. For the Trivial-fix strategy, we can see in Figure 4(a) that the ratios of timely completed experiments were quite similar to those with no module splitting, apart from the fact that it could complete the procedure for also 20% of the times for 10,000 assertions. It is worth noting that for this fixing strategy, all timely attempts led to global consistency (Figure 4(b)). For Rank-fix, on the other hand, the effect of splitting into modules is more complex. First, a negative effect is observed as the overhead of module splitting results in having some overtime experiments for the subset of about 7,500 assertions, where no overtime experiment was observed when there was no splitting into modules. In addition, several timely completed experiments with Rank-fix failed to lead to global consistency. The failure of some parallel experiments to reach global consistency is because we apply the fixing method on each module locally, without global checking of the KG at each fixing step, as described in the original fixing method. In a real-world scenario, where the update actions are selected in a meaningful way, for instance, by users that crosscheck each action that they choose with some database or other external knowledge, introducing fixes that create new conflicts could be considered probably unexpected. In these experiments, however, where the update actions for each module are chosen randomly and independently, there is no guarantee that the combination of the independently fixed modules achieves global consistency. In such cases, the algorithm needs to be re-run.

Splitting the LUBM Knowledge Graph (KG) Into Plain Modules of Individuals for Fixing the Inconsistencies. (a) The Ratio of Timely Completed Experiments (i.e., not Exceeding the 1-Minute Limit) per Strategy Across Dataset Subsets of Different Sizes. In This Parallel Configuration Timely Completion does not Guarantee Consistency of the KG. and (b) The Ratio of Experiments that were (i) Timely Completed (i.e., not Exceeding the 1-Minute Limit) and (ii) Led to the Consistency of the KG. The Ratios are Presented per Strategy Across Dataset Subsets of Different Sizes.

For larger subsets, on the other hand, a benefit is observed as splitting into modules allowed some experiments with about 10,000 assertions or more to be completed timely (Figure 4(b), which was not possible without module splitting. In particular, the Rank-fix strategy managed to lead to global consistency for 20% of the experiments with about 10,000 assertions. In addition, when splitting into modules in some cases Rank-fix managed to timely complete more experiments even compared to Trivial-fix. This observation is appointed to Rank-fix choosing more targeted fixes that involve fewer update actions. We omit the assessment of the method of Tran et al. (2020) on the Food Inspection dataset, since no inconsistencies could be detected, and thus fixed.

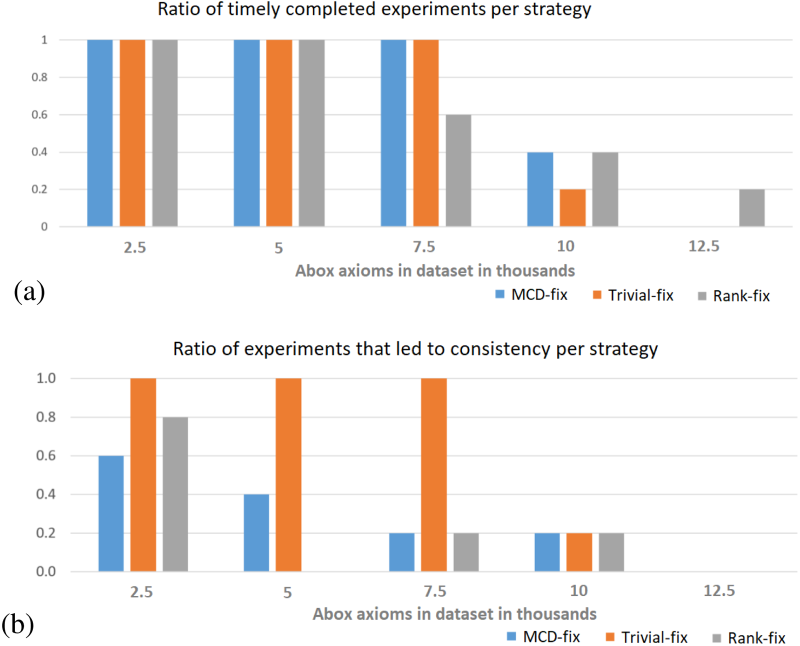

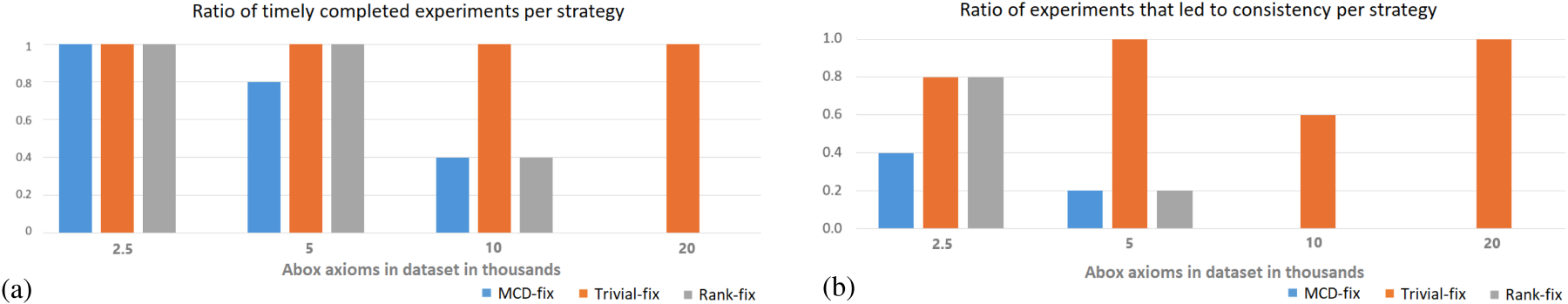

Finally, we present the results of our proposed approach for the parallel KG inconsistency detection and fixing approach based on the extended modules of individuals. Figure 5 presents the results on the LUBM dataset, while Figure 6 for the Food Inspection one. We can see that with the extended modules in the LUBM (Figure 5(a)) MCD-fix benefited less from splitting the KG, as it completed only some of the experiments of up to 7,500 assertions. This happens because (a) more time is required for extending the module, and (b) the reasoning process on the (slightly larger) extended modules is inherently more complex. Also, only 40% of experiments with about 5,000 assertions led to global consistency (Figure 5(b)). Nevertheless, recall that without KG splitting none of the MCD-fix experiments managed to complete under the time limit of 1 minute (Figure 3(a)). Regarding Trivial-fix and Rank-fix, we can see that in the case of the extended modules, the performance gradually drops as the size of the dataset grows larger. In Figure 5(b), we can see that Rank-fix was more effective in terms of achieving consistency than the two other strategies for 5,000 and 7,500 assertions. For 10,000 and 12,500 assertions, however, all strategies reached the timeout threshold.

Splitting the LUBM Knowledge Graph (KG) Into Extended Modules of Individuals for Fixing the Inconsistencies. (a) Ratio of Timely Completed Experiments (i.e., not Exceeding the 1-Minute Limit) per Strategy Across Dataset Subsets of Different Sizes. In This Parallel Configuration Timely Completion does not Guarantee Consistency of the KG. and (b) The Ratio of Experiments that were (i) Timely Completed (i.e., not Exceeding the 1-Minute Limit), and (ii) Led to the Consistency of the KG. The Ratios are Presented per Strategy Across Dataset Subsets of Different Sizes.

Splitting the Food Inspection Knowledge Graph (KG) Into Extended Modules of Individuals During Repairing. (a) The Ratio of Timely Completed Experiments (i.e., not Exceeding the 5-Minute Limit) per Strategy Across Dataset Subsets of Different Sizes. In This Parallel Configuration Timely Completion does not Guarantee Consistency of the KG. and (b) The Ratio of Experiments that were (i) Timely Completed (i.e., not Exceeding the 5-Minute Limit) and (ii) Led to the Consistency of the KG. The Ratios are Presented per Strategy Across Dataset Subsets of Different Sizes.

With regard to the Food Inspection dataset, we can see in Figure 6 that Trivial-fix managed to complete all experiments in a timely manner, and achieved KG consistency in the cases of 5,000 and even of 20,000 assertions (Figure 6(b)). Once again the MCD-fix strategy was the least effective, and the Rank-fix had a slight benefit with respect to timely completion on 5,000 assertions, and to final KG consistency in the case of 2,500 assertions.

In our experimental evaluation, we compared a monolithic approach for detecting inconsistencies against two module-based approaches that can process the KG in a parallel and in a more time-effective manner. The monolithic approach becomes intractable for datasets consisting of more than 5K triples, but parallel methods that split the KG into smaller modules completed their execution significantly faster. Our approach incorporating extended modules of individuals outperforms the state-of-the-art method approach by detecting all inconsistencies, while maintaining reasonable execution times. For fixing inconsistencies, we evaluated the three strategies we propose, that is, MCD-fix, Trivial-fix, and Rank-fix. MCD-fix was found inefficient without parallelization, as it did not manage to fix the KGs within the provided time limits (1 minute for LUBM, 5 minutes for Food Inspection). Trivial-fix and Rank-fix performed significantly better, managing to fix KGs up to 7,500 assertions. The modular approaches improved MCD-fix, achieving the fixing of the KGs in more cases. The Rank-fix strategy may struggle due to the module-splitting overheads but is able to apply more targeted fixes than the other strategies for larger datasets. While our module splitting approach improves scalability, the availability of additional computational resources is necessary for handling even larger KGs.

Conclusions and Future Work

We presented an open-source system for detecting and fixing inconsistencies in KG. To perform the detection in parallel and to account for KGs described in more expressive languages, we have put forward the notion of the extended modules of individuals. According to this, plain modules of individuals are merged into combined extended modules, if particular kinds of axioms and assertions are linked to the base individual, such that inconsistencies may be induced, for example, by chain relations. Subsequently, each extended module is processed in parallel for detecting and fixing any inconsistency, this way being able to scale horizontally for KGs of large sizes. Regarding fixing, we reformulated the corresponding task of update-based repairs, by adopting Semantic Web standards and by introducing necessary modifications that make it applicable to a variety of RDF KGs with an OWL2 DL TBox. We evaluated our approach on two well-known cases of inconsistent KGs, and the results show that it is able to restore consistency even in narrow time frames with strict timeout configurations. Our evaluation results suggest that combining the fixing strategies we put forward with splitting large KGs into modules is a promising direction for the repairing of even larger KGs.

In the absence of user input, the random selection among sound fixes can lead to different fixed KGs after each execution. In this direction, we aim to investigate the development and use of ML models for ranking the alternative position fixes based on their plausibility as a means to minimize the need for input from domain experts. This can be formulated as a link-prediction/fact-validation task where each update action is one of many potential links between entities or facts, considering features based on relation paths in the KG (Lao & Cohen, 2010; Paulheim & Gangemi, 2015), or embedding-based methods, that develop and exploit distributed representations of entities and relations (Bordes et al., 2013; Demir et al., 2021). In addition, the incorporation of large language models for generating or selecting among alternative possible fixes, is another promising direction (Frey et al., 2024). Moreover, the loading of web-scale KGs to the computer’s memory might be deemed impossible in most cases, due to their extreme size. To this end, work is in progress toward an implementation that interoperates with a separate triplestore (e.g., irtuoso 6 ) using SPARQL queries for the retrieval of the extended modules of individuals that are to be processed in-memory and in parallel.

Footnotes

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work has been supported by the ENEXA project, funded by the European Union’s Horizon 2020 research and innovation programme, under grant agreement No. 101070305.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.