Abstract

Social media platforms evolve rapidly. While platform studies have often analyzed specific policy or feature changes, there remains a lack of shared language to conceptualize how platforms themselves represent such changes. In this article, we analyze public communications from Meta, YouTube, X, and TikTok to examine how platforms construct and justify change. We explicate platform evolution as a technical but also deeply discursive process. Platforms frame their transformations as neutral, responsive, and ongoing, even when these shifts consolidate power or deepen user dependence. We introduce the concept of

Introduction

As social media platforms evolve, they generate new affordances that reshape user experiences and policies, in turn altering societal dynamics and redefining how individuals interact, communicate, and even organize within various social, political, and economic contexts (van Dijck et al., 2018). It is challenging to study the social implications of platforms without considering how they change. While scholars have studied technological change broadly from organizational and innovation perspectives (Anderson and Tushman, 1991), platforms exhibit unique business models, systems, and logics that warrant a more specific framework. Research has primarily focused on policy and feature changes, often on a case-by-case basis (see, e.g., Barrett and Kreiss, 2019; de Keulenaar et al., 2023; DeVito et al., 2017; Katzenbach, 2021). However, as platforms play an increasingly integral role in society (Nielsen and Ganter, 2022; van Dijck et al., 2018), it becomes important to develop language for documenting and analyzing their changes. In this work, we present an analysis of communications (

While technological change has historically been slow and materially grounded (Roser, 2022), the current pace of

Discursive framing enables platforms to present themselves as open, neutral, and responsive, even when the changes they enact consolidate power or increase user dependency (Gillespie, 2010; Plantin, 2018). For example, Meta's public communications around content moderation and misinformation rely on a discourse of authenticity and innovation, positioning the company as responsible while avoiding structural accountability (Hurcombe et al., 2025). Likewise, TikTok emphasizes AI-based moderation as both transparent and effective, framing algorithmic governance as trustworthy despite its opacity (Grandinetti, 2023; Chan et al., 2023). Such discourse actively shapes how platforms are perceived and regulated.

Therefore, studying platform discourse provides a necessary lens to understand platform change. Rather than treating change as an objective process, a discursive approach highlights how platforms construct and manage instability and strategically shape their public identities (Gillespie, 2018; Helmond et al., 2019; Barrett and Kreiss, 2019). Studying discourse allows us to unpack how platforms narrate their transformations in ways that obscure power asymmetries and legitimize their evolving roles within digital ecosystems (Hoffmann et al., 2016; van Dijck et al., 2018).

In this article, we (1) investigate and explicate platform change, (2) provide language and context for platform studies and related disciplines to discuss the implications of this change, and (3) document the discursive rationales of change as framed by the platforms. We do so through content analyses of documents from Meta, YouTube, X, and TikTok to develop and introduce the concept of

Background

Situating platform change

Historically, technological progress has been slow, with innovations remaining unchanged for generations (Roser, 2022). However, today, rapid advancements integrate once-unimaginable developments into daily life at an unprecedented rate, highlighting an

Our work focuses on

Our work contributes to platform studies by focusing on how platforms communicate and position change. In doing so, we follow Helmond et al.'s (2019) emphasis on the value of attending to “self-description histories” or key archival resources that capture how platforms narrate their own transitions. Through a content analysis of platform-authored texts such as blog posts, announcements, and policy updates, we examine how platforms frame, justify, and make sense of their own evolution. This discursive layer is crucial: it reveals how platforms manage public perception, preempt criticism, and shape dominant understandings of technological progress. By attending to these communicative acts, our work addresses a critical gap in the literature and offers a framework for studying platform change not only as a technical or social process but also as a discursive and political one.

Platform discourses

Platform discourse refers to the strategic use of language by technology companies to shape their public identity and regulatory positioning (Plantin, 2018). It often functions as a tool used to present platforms as neutral and open infrastructures, particularly when such portrayals align with corporate interests. At the same time, these narratives obscure the power platforms exert over content, policy, and user behavior. This dynamic is especially evident in companies such as Facebook, now Meta, where scholars have shown that discourse is used to manage public perception and deflect criticism regarding content moderation, data commodification, and a lack of accountability, among others (Gillespie, 2010).

Over time, scholarship has observed that platform discourse often emphasizes narratives of openness, innovation, and empowerment. For example, Maddox and Malson (2020) showed that US social media platforms use the “marketplace of ideas,” a free speech ideal where truth is believed to emerge through open debate, to justify global enforcement of US-centric expression norms. However, closer scrutiny has exposed the opaque operational structures of platforms (Gillespie, 2018; Pasquale, 2015). These critiques foreground concerns such as the commodification of user data, the politics of content moderation, and the disproportionate influence platforms exert over public discourse, often without meaningful accountability (Gillespie, 2018; Plantin, 2018; van Dijck et al., 2018). Platform discourses often function as disciplinary devices, projecting ideological and self-interested visions of how users ought to use their sites or understand their policies (DeCook et al., 2022; Divon et al., 2025; Petre et al., 2019). Through narratives of connection, virtue, and empowerment, they also obscure deeper forms of labor and governance (Proferes et al., 2025).

Meta has been a central focus in such analyses. Scholars have shown how the company strategically frames challenges around harmful content, particularly misinformation, through narratives centered on authenticity and technological solutions (Hoffmann, 2021). This framing positions the platform as proactive and responsible, while deflecting blame and limiting scrutiny. Meta Newsroom, initially a space for corporate announcements, has evolved into a key tool for shaping both public and policy perceptions of contentious issues such as misinformation (Hurcombe et al., 2025).

Platform discourse also shapes regulatory expectations. Facebook, for instance, was found to have invoked the language of self-regulation to legitimize its authority while projecting an image of accountability, even when its actions contradict these claims (Medzini, 2021). Zuckerberg's public statements consistently frame the company as a visionary force grounded in Silicon Valley ideals of global connectivity and community. This rhetoric masks the structural imbalances between the platform, its users, and commercial interests (Haupt, 2021).

Other platforms, including TikTok, deploy similar rhetorical strategies. Both Facebook and TikTok emphasize transparency and AI-driven solutions to harmful content as part of their discursive toolkit. These narratives frame AI as both innovative and trustworthy, shaping public understanding while obscuring the complexity and opacity of algorithmic systems (Grandinetti, 2023). For example, TikTok presents its use of AI in content moderation as a responsible and effective approach, deflecting deeper inquiry into how decisions are made and enforced (Chan et al., 2023). Studying discourses of change is thus key to understanding how platforms shape public perception, deflect accountability, and influence regulation, often masking persistent structural and governance issues. Analyzing these narratives allows us to critically unpack the gap between what platforms say, what they mean, and most importantly, what they do not say.

Methods

This study applies qualitative methods to the analysis of communications from Meta, X, YouTube, and TikTok. Our analysis follows an iterative approach, guided by sensitizing concepts derived from the literature.

Data collection

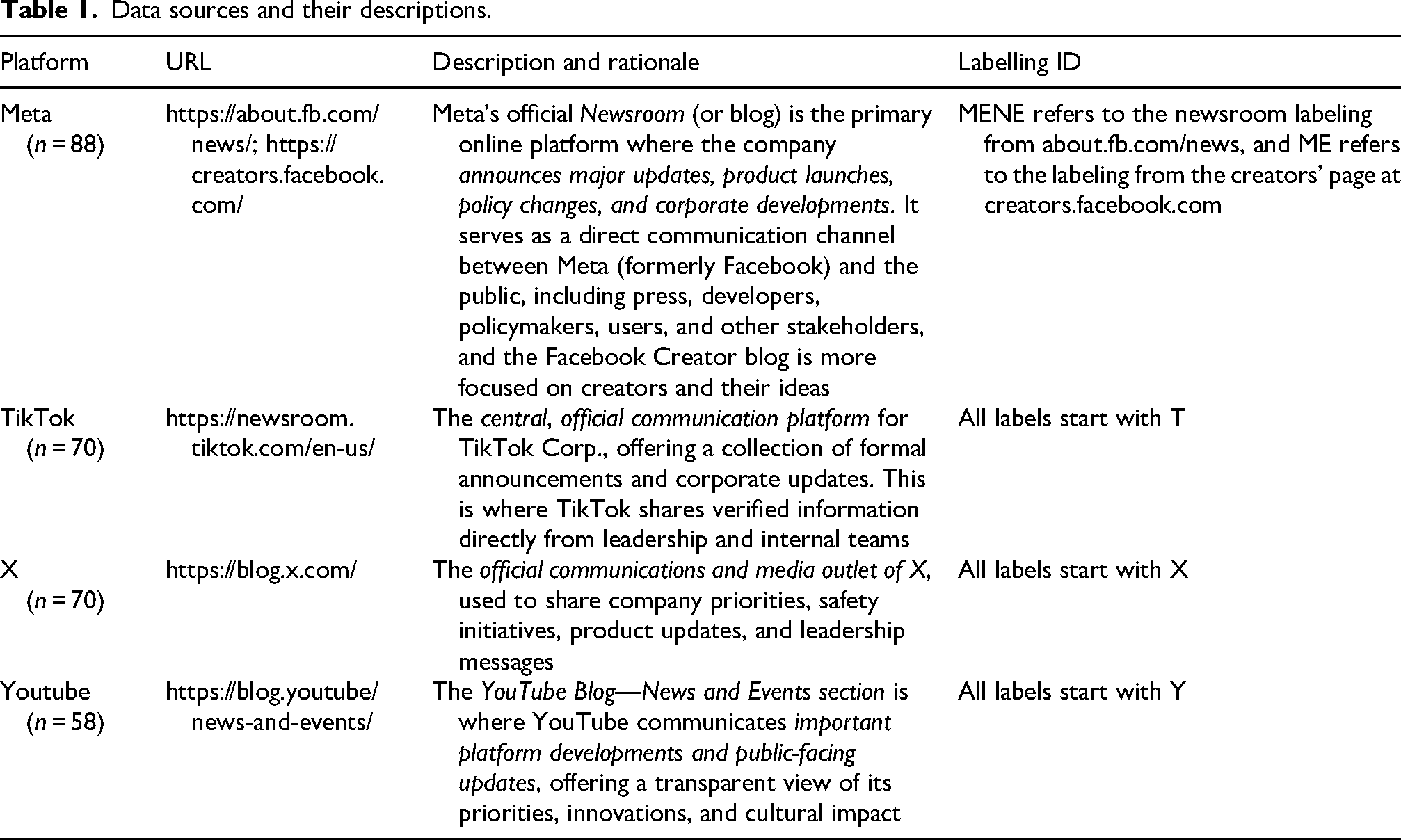

We manually collected data from official company communications, which were published as news, communications, or other forms of public-facing discourse. We outline the key data source for each platform in Table 1, along with details regarding the data analyzed.

Table 1. Data sources and their descriptions.

Documents from April 2022 to January 2025 were collected. This timeframe was selected to provide a focused yet sufficiently broad view of recent platform discourse. It captures a period during which many companies introduced key updates, such as AI features, privacy revisions, and moderation policy changes, without extending so far back as to preclude a close analysis or obscure more recent trends. Our goal was not to showcase the sheer volume of change but rather to examine

Given the varying publication frequencies across platforms, we ensured that the data were representative of key updates, ideas, and innovations shared by each company over time. To further refine the data, we conducted a round of data reduction to ensure that the documents accurately reflected meaningful shifts. During this process, we removed any documents that did not align with one of the following categories: platform updates (e.g., feature announcements), company communications (e.g., press releases), user-directed messages (e.g., privacy updates), or statements from platform management (e.g., executive comments on policy changes).

Data analyses

The analysis was conducted using ATLAS.ti. Initially, the first author performed inductive thematic analyses (Braun and Clarke, 2021) on five randomly selected documents from each platform to develop a preliminary set of categories for classifying types of platform change. This iterative process aimed to comprehensively capture the diverse ways in which platform changes were described. These initial categories were then systematically applied to five other random documents across all platforms to assess their coverage and guide further refinement. To gain a broader perspective on how changes manifested, the codes were subsequently grouped to identify overarching themes across the corpus. Throughout this phase, the research team maintained analytic memos and engaged in collaborative discussions, paying particular attention to how platforms framed and justified changes. The categories were revised multiple times during this process, ultimately resulting in two major themes: material changes and ideological changes. These themes were then used to analyze the entire corpus of documentation.

During the iterative rounds of inductive coding, rationales for change emerged as a distinct and analytically significant category. To explore this further, the first author conducted several additional rounds of open coding on new documents, specifically targeting how platforms constructed and communicated their justifications for change. This process was followed by deliberations to develop a separate set of rationale codes, which were iteratively refined through memoing and team discussions.

While our overall approach was grounded in qualitative thematic content analysis, we drew on select facets of discourse analysis as described by Wood and Kroger (2000), particularly their emphasis on how language shapes meaning and social actions, including what is left unspoken. By carefully examining small segments of data multiple times, we interpreted not just what was said but how it was said and the positions the platforms took. This flexible approach helped us uncover patterns, including subtle implications and framing strategies. The findings that follow present the resulting analyses.

Findings

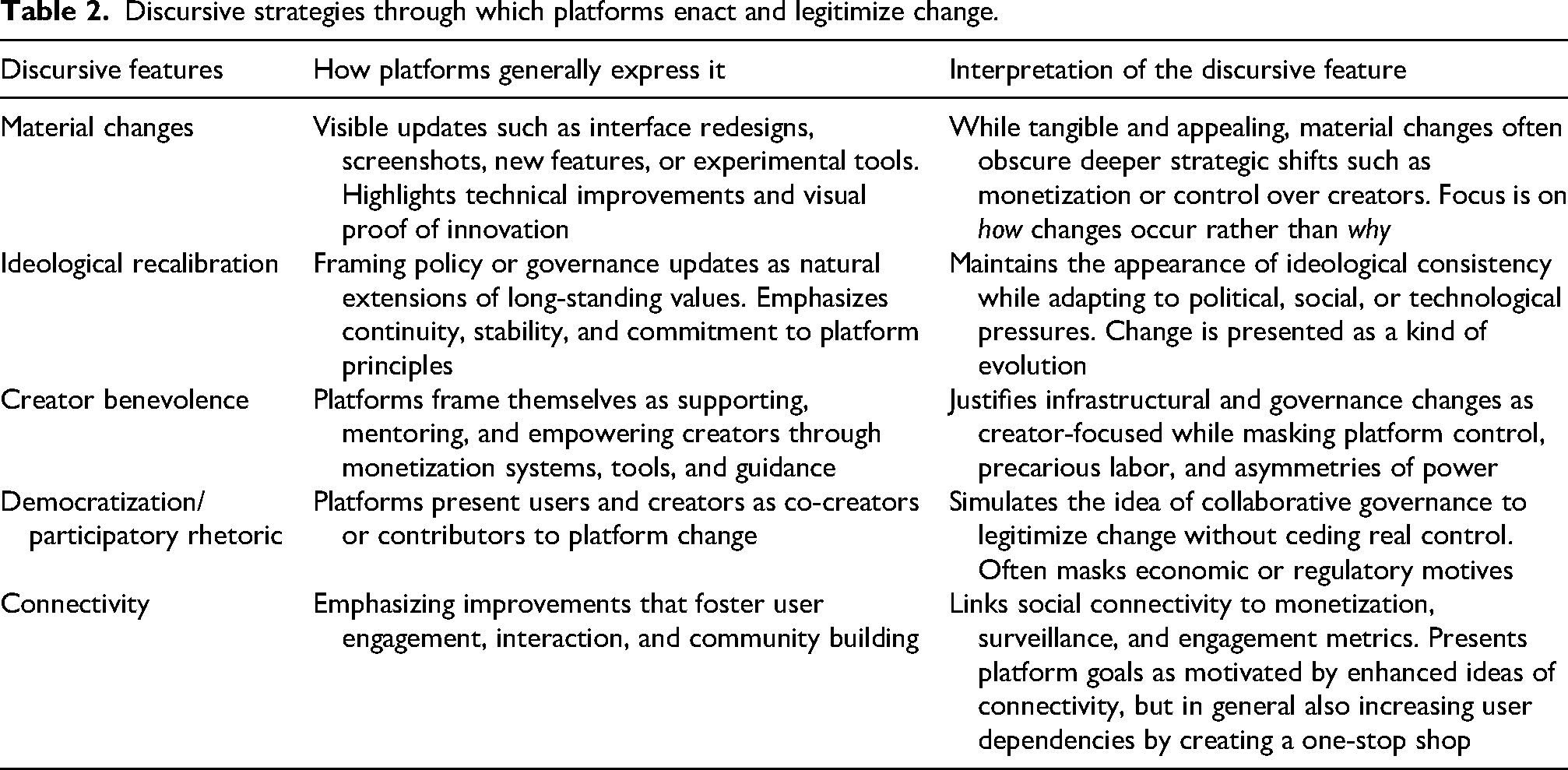

We found that social media platforms strategically deployed

Table 2. Discursive strategies through which platforms enact and legitimize change.

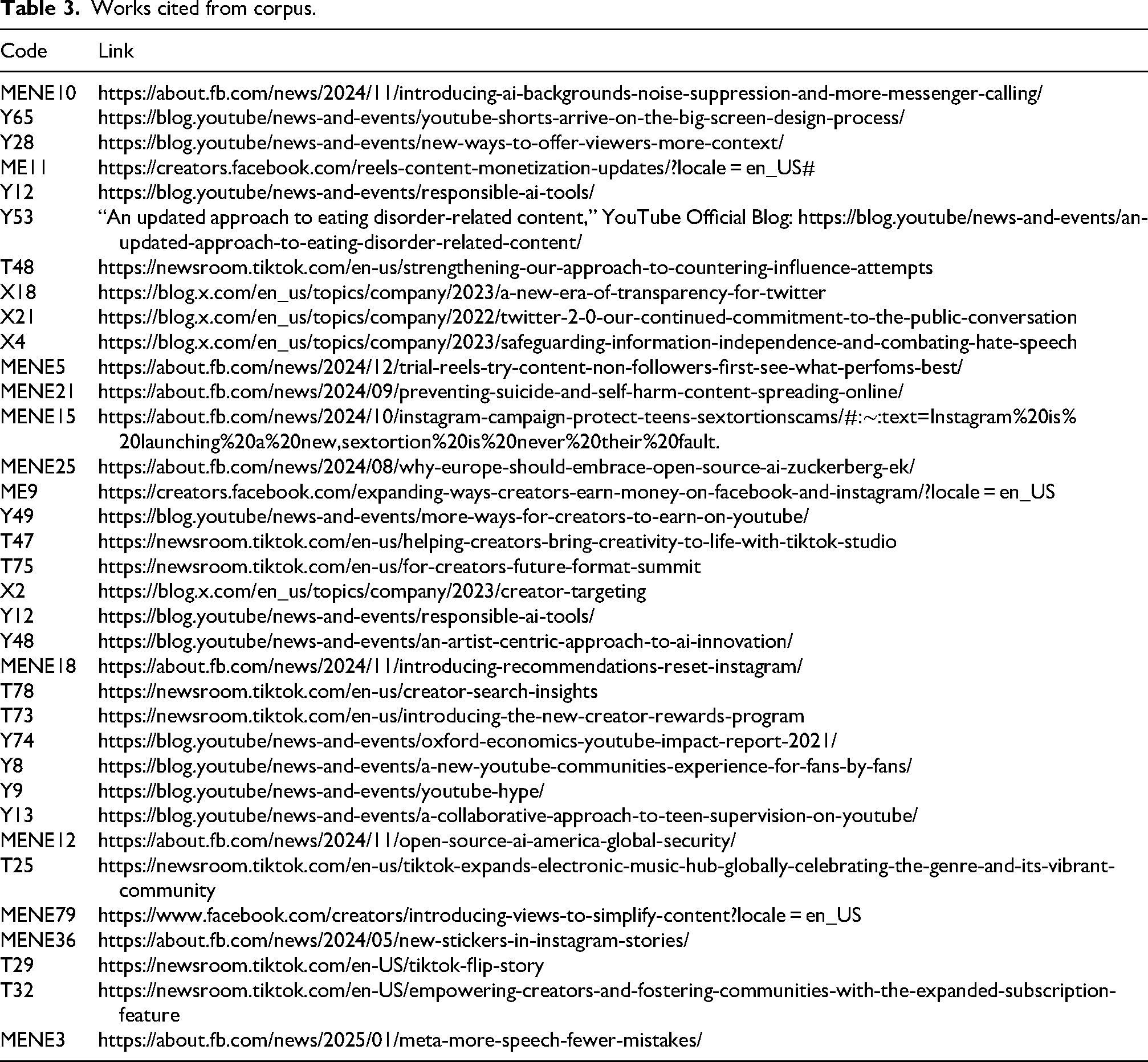

Works cited from corpus.

The discourse and manifestation of change

We first report on how platforms manifest change through

Material changes

Material changes functioned as a primary discourse through which platforms communicated evolution, making shifts both visible and tangible to users. This emphasis on visual proof positioned change as something immediate, recognizable, and inherently positive. Platforms frequently introduced new features with explicit, observable improvements creating an

For example, Meta's announcement, “ We wanted to know if the unique feel of Shorts could be conveyed in our conventional video player (Option A) or if it should be customized to better fill the blank spaces on either side of the video (Option B). We also considered a divergent option—the ‘Jukebox’ style (Option C)—where multiple Shorts would fill the screen at the same time, taking full advantage of the TV screen’s additional space (see Figure 2).

Figure 1. Visual display of how AI backgrounds work (MENE10). Source: https://about.fb.com/news/2024/11/introducing-ai-backgrounds-noise-suppression-and-more-messenger-calling/.

Figure 2. Visual display of reels on a TV (Y65). Source: https://blog.youtube/news-and-events/youtube-shorts-arrive-on-the-big-screen-design-process/.

This focus on process and visual display conveyed a sense of forward-looking creativity and optimization, yet it also obscured meaningful reflection on the necessity and broader implications of the change. Such an aesthetic implied that adaptation and iteration were inherently beneficial and unproblematic, reinforcing the assumption that ongoing technical tinkering equates to progress.

More complex algorithmic changes were similarly framed in terms of simplicity and seamless integration. For instance, YouTube's “experimental feature to allow people to add notes to provide relevant, timely, and easy-to-understand context on videos” (Y28) was presented with an intuitive explanation of the underlying algorithm: “

This explanation made the change appear straightforward and accessible, glossing over potential risks such as the historical limitations of crowdsourced content moderation (Saeed et al., 2022). The framing aimed to assure users that these updates were user-friendly improvements, encouraging adoption while eliding broader complexities.

Yet beyond functional benefits, material changes often concealed deeper ideological and business shifts within platform governance. Updates that appeared discrete and technical frequently served to advance monetization strategies and reshape control over content and creators. Meta's “

YouTube's introduction of likeness management technology in “ This means equipping [creators] with the tools they need to harness AI's creative potential while maintaining control over how their likeness, including their face and voice, is represented.

While presented as protection, this infrastructure arguably enables YouTube to formalize identity-based content claims, positioning the platform to monetize AI-generated media and regulate identity at scale. This raises concerns about how platforms might operationalize identity claims in ways that circumvent legal frameworks such as the US First Amendment, creating parallel governance regimes that prioritize monetization and content takedown over expressive freedom.

Thus, platforms foregrounded changes as beneficial and creator-focused, thereby obscuring their deeper role in expanding monetization, consolidating control, and redefining norms around digital expression and visibility. By emphasizing visible updates such as interface redesigns, screenshots, and clear demonstrations of new features, they distracted attention from less visible yet more consequential infrastructural changes that reshaped platform economics and governance. This aesthetic of platform change—marked by a constant stream of updates, visually driven narratives, and a focus on immediate user or creator benefits—performed multiple functions, including masking the redefinition of monetization pathways, intensifying platform control, and deepening user dependence. Ultimately, platforms not only managed transformation discursively but also aestheticized it, rendering change itself as a form of engagement while deflecting scrutiny from its structural consequences.

Ideological changes

Across our corpus, platforms rarely introduced ideological change as an outright transformation. Instead, ideological changes manifested as recalibration of platforms’ commitments through subtle yet strategic shifts in emphasis, framing change as

YouTube and TikTok both engaged in recalibration through updates to their policy and framing to emphasize continuity. In Y53, YouTube announced, “

Recalibration was also apparent in responses to political change. At the time of Elon Musk's acquisition of Twitter and its rebranding as X, the company published a series of updates emphasizing transparency and experimentation. In X18, it declared: “ We’ve always understood that our business and revenue are interconnected with our mission; they rely on each other […] All of this remains true today. What has changed, however, is our approach to experimentation.

This shifted the focus to experimentation and openness, while explicitly reiterating the idea of continuity with past values and ideals. In X4, it added: “

A similar move appeared in Meta's response to criticism from conservative lawmakers and users around its fact-checking practices. In MENE5, the company explained a major shift away from third-party fact-checking: “

Recalibrations were also prompted by social changes and growing public concern over issues such as mental health, teen safety, and sextortion. In response to mounting pressure from lawmakers and grieving families, Meta launched Thrive, which it described as “

Finally, technological change, particularly the rise of generative AI, prompted another set of recalibrations. Meta, in its 2025 statements, positioned itself as a leader in promoting equitable access to AI tools, especially in the context of the European market. As Meta put it, “

Across these examples, recalibration functioned as a central discursive technique—one that enabled platforms to manage change without appearing inconsistent. What drove these recalibrations varied: political shifts necessitated redefinitions of free speech and moderation; social crises prompted intensified protections; and technological transformations demanded renewed commitments to openness and creativity. Yet in each case, platforms presented themselves as ideologically continuous. Recalibration allowed them to adapt to a changing world while retaining the image of coherent, principled actors.

Rationalization of change

While material and ideological strategies enacted change, rationalization supported these strategies by shaping how change was interpreted and received. Rationalizations were discursive moves that framed changes as benevolent, inevitable, or unquestionably positive. They offered affectively persuasive, commonsense appeals, positioning platforms as trustworthy actors, their interventions as reasonable, and the resulting transformations as beneficial. They made change feel natural, welcome, or even overdue, smoothing resistance and helping users assimilate new systems or policies, often by presenting each change as a discrete fix or upgrade. Platforms framed themselves as merely patching existing issues. In what follows, we trace several dominant rationalization strategies that platforms used to legitimize change: appealing to creator benevolence, invoking democratization, and emphasizing enhanced connectivity. In each domain, rationalizations helped render platform interventions legitimate and even desirable.

Creator benevolence

In recent years, digital platforms have rapidly introduced new tools, infrastructures, and monetization pathways in response to the rise of the “

This creator-first framing was used to justify the introduction of new monetization systems. Meta, for instance, claimed it was “

In parallel, platforms described their increasing intervention into content production and distribution as efforts to empower creators. TikTok, for example, claimed its Creator Studio would “

This narrative extended to platform-led efforts to protect creators’ rights. Meta emphasized the introduction of “

Platforms also framed themselves as educators and mentors. Instagram's “

This vision, as one YouTube release put it, of “

Democratization

Platforms also justified change through the rhetoric of participatory design, invoking a discourse that echoed democratic ideals. They framed their decisions as the product of collaborative processes, where users and creators are not simply governed but actively involved in governance. In doing so, platforms mimicked the structure and discourse of democratic deliberation, seeking input, demonstrating responsiveness, and signaling accountability to their stakeholders (Caplan, 2023). However, this performance often served to legitimate change rather than to redistribute power.

Platforms commonly framed users as co-creators or originators of innovation, thereby promoting a vision of user control over the platform's direction. For instance, YouTube introduced a new feature as “

At times, the language of democratic governance obscured deeper economic motives. When launching the Hype program, YouTube framed monetization shifts as enhancing creator-audience relations, stating, “

Even when discussing domains of the platform's centralized authority, such as content moderation, platforms invoked a democratic logic. YouTube, for instance, introduced new parental control features as part of “ Our partnership with the U.S. State Department, which includes leading industry voices, promotes safe, secure and reliable AI systems that address societal challenges like expanding access to safe water and reliable electricity, and helping support small businesses.

TikTok similarly employed participatory rhetoric in cultural and commercial domains, whether “

Across these examples, participatory discourse functions as a legitimizing frame. It enables platforms to present themselves as democratic (responsive, inclusive, and accountable) without ceding meaningful control or subjecting themselves to external structures of accountability. In effect, they simulate the practices of democratic governance to resist actual regulation, presenting transformation as community-driven while retaining institutional authority over what change looks like, and for whom.

Connectivity

A final rationalization strategy revolved around connectivity, presenting change as a way to bring people closer together. While enhancing user connection is a long-standing goal of social media, platforms increasingly link connectivity to monetization, surveillance, and growth metrics. Rationalizations in this domain rendered complex infrastructural overhauls or interface changes as simple efforts to improve user experience and community interaction.

Platforms have transformed connectivity into a structured, monetized practice shaped by corporate and ideological imperatives (van Dijck, 2012). Meta, for instance, introduced “

YouTube echoed this focus on connectivity, announcing “

Likewise, TikTok explicitly tied connectivity to creator growth in its statement about the now more widely available subscription feature: Subscription allows creators to offer their

This rationalization reflects a platform logic that commodifies social connectivity by linking it to monetization, with affective engagement optimized for revenue and growth. While changes are framed as user-centric improvements, they also serve platforms’ broader objectives by increasing time spent on sites and apps through engaging content formats, streamlined metrics, and, indeed, stronger connections to creators. Enhanced connectivity thus serves a dual purpose: ostensibly benefiting users while advancing platform goals of engagement and data collection. By emphasizing community building and audience interaction, platforms reinforce user dependence on their sites as central hubs.

Changecraft

In this article, we have documented how social media platforms strategically narrativize continuous change. We find that they communicated material changes, such as new features and design updates, with visual proof to emphasize tangible improvements, often promoting enhanced connectivity between services to increase user engagement and dependency. Concurrently, ideological shifts rearticulated and recalibrated their stated values, framing initiatives as contributions to social good, such as supporting diverse creators, improving safety features, or advocating for responsible AI, while also solidifying their market position. These changes were consistently rationalized through discourses of benevolence, positioning platforms as supportive partners to their users, and democratization, implying user participation in design (see Table 2). Ultimately, this multilayered approach allows platforms to appear innovative and user-centric, deflecting scrutiny from the deeper control-oriented motivations behind their evolving structures.

Platform rationales for change often functioned not just as explanations but as commentaries on what platforms were and what they aspired to be. In invoking the language of benevolence, democracy, social good, and connectivity, platforms construct a self-image that exceeds a corporate identity, positioning themselves as facilitators of creativity, stewards of model forms of governance, and engines of social progress (Gillespie, 2010). These rationalizations perform a kind of

Building on past work that highlights explicit, continual shifts in platform governance (Poell et al., 2021), we complicate these accounts by drawing attention to the

Our analyses illustrate how change is not simply enacted

First, our analysis suggests that platforms tend to foreground material changes, such as visual redesigns, added features, and UI adjustments, to make change recognizable and intuitive, thereby securing user buy-in. While infrastructure is often understood as receding into the background and becoming visible only upon failure (Star, 1999), platforms invert this logic. They make infrastructural changes intentionally visible through blog posts, animations, and interface cues. This visibility is not incidental but strategic: it frames minor changes as signs of responsiveness and innovation. Rather than undermining the concept of infrastructure, such practices remind us of its complexity and critical importance. Platforms visibilize interface tweaks as a tool of governance, shaping user expectations and legitimizing ongoing transformation. In doing so, platforms tactically sidestep questions of

Although platforms foreground material change, their changecraft also involves projecting a sense of steadfastness. They accomplish this by reframing ideological shifts as continuity, even when they reflect substantial changes in platform values, governance, or orientation. Platforms rarely announce ideological pivots directly; instead, they present carefully crafted narratives that invoke long-standing values to characterize new priorities, allowing them to reposition themselves without appearing capricious or contradictory. Drawing from Gillespie (2010) and Plantin et al. (2018), we argue that platforms adopt discursive elasticity, adapting their public-facing commitments to strategically align with shifting political, economic, and cultural climates. This aligns with what Ames (2015) refers to as charismatic technology: forward-facing, ideologically charged, and aimed at smoothing away uncertainties. For instance, when Meta removed fact-checking labels on political content, it framed the decision as a return to its foundational commitment, “

Our analysis also shows how platforms deploy patchworking as a deliberate obscuring strategy: rather than announcing wholesale or radical transformations, they break down broader shifts into small, piecemeal changes. These changes are then accompanied by carefully crafted rationales that serve not to clarify but to obscure the true extent and nature of the transformation. The rationales almost invariably frame changes as minor, necessary, or even benevolent, appealing to ideals such as “

Conclusions, limitations, and future work

This study introduces changecraft, a framework for understanding the strategic discursive practices platforms employ to manage, legitimize, and normalize their continuous transformations. Our analysis of public communications from Meta, YouTube, X, and TikTok reveals that platform evolution is not merely technical but profoundly discursive. Platforms actively frame their changes as neutral, responsive, and ongoing, even when these shifts serve to consolidate power or deepen user dependence. By introducing changecraft, we provide scholars with a critical lens to interrogate platform change not solely by what changes but by how platforms strategically construct and communicate these transformations to publics, regulators, and users. This framework underscores the crucial role of discourse in shaping perceptions of technological progress, managing accountability, and influencing the governance of digital spaces.

This study has limitations that suggest avenues for future research. Our analysis focused on public communications from a limited number of major platforms, meaning future work could explore internal documents or a wider range of platforms for a broader understanding. The qualitative nature of our analysis provides deep insights into discursive patterns, but quantitative approaches such as large-scale text analysis could complement these findings by revealing statistical trends across vast datasets. Finally, while we infer the impact of changecraft, direct measurement of user responses or the actual societal effects of these communication strategies remains a promising area for future empirical investigation.

Footnotes

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.