Abstract

Algorithms have become central organising infrastructures for participatory media platforms, structuring both content production and distribution. While users engage with these algorithmic systems in pursuit of visibility and success, the underlying mechanisms remain largely opaque. This study investigates how Douyin strategically constructs algorithmic imaginaries through the Douyin Content Assistant (DCA), an official platform resource designed to instruct content creators. Drawing on a mixed-method analysis of 725 DCA videos – combining critical discourse analysis with GSDMM topic modelling – we identify four key thematic clusters that reveal how Douyin frames its algorithmic system, content strategies, video aesthetics and platform governance. We conceptualise this deliberate platform-led instruction as algorithmic pedagogy: an assemblage of discourses and practices through which the platform not only shapes creators’ perceptions of how its algorithm operates, but also prescribes normative modes of engagement that guide creators in optimising their practices for platform-defined objectives. In doing so, Douyin produces an illusion of algorithmic fairness while subtly responsibilising creators to serve its commercial and ideological priorities. This analysis demonstrates that algorithmic platforms govern not solely through technical infrastructures, but also through the imaginative construction of algorithmic understanding as a form of soft governance.

Keywords

Introduction

Algorithms have become fundamental organising principles for participatory media platforms, structuring how content is produced, distributed and consumed. Across platforms such as Facebook, Instagram, Twitter and TikTok, algorithmic feeds now constitute a pervasive infrastructure underpinning platform capitalism (Schulz, 2023). These developments in platform affordances have fundamentally reshaped the content creation ecosystem (Poell et al., 2022). For content creators, navigating and catering to algorithmic systems has thus become essential for securing visibility, audience engagement and commercial success. Yet despite widespread recognition of the algorithm's importance, the technical operations of these systems often remain opaque and inaccessible to non-expert users.

In response to this opacity, forms of algorithmic folklore and algorithmic imaginaries – collective beliefs, speculations and narratives about how algorithms function – have emerged as critical frameworks for understanding user behaviour and the socio-cultural practices of algorithmic engagement (Bucher, 2016; Gran et al., 2020; Jones, 2023). Much of the existing research has focused on these grassroots formations of algorithmic imaginaries, examining how users construct speculative knowledge to navigate opaque recommendation systems and visibility hierarchies (Benjamin, 2022; Bucher, 2016; Treré, 2018).

By contrast, the platform's deliberate efforts to construct and circulate algorithmic imaginaries as a form of content governance remain comparatively underexplored. This study conceptualises such interventions as algorithmic pedagogy – an assemblage of discourses, practices and infrastructures through which platforms actively instruct creators on how to imagine, interpret and comply with algorithmic logic. Such pedagogical interventions are evident in various forms of official communication, including YouTube's ‘How YouTube Works’ webpage and Instagram's blog post ‘Shedding More Light on How Instagram Works’, where platforms publicly frame algorithmic operations and their broader economic (e.g. revenue-sharing), political (e.g. misinformation control) and social (e.g. child protection) implications. These corporate explanations do not simply offer technical transparency; rather, they function as governance devices that shape user perceptions and normalise platform priorities (Cotter, 2019; Gillespie, 2018). In this sense, algorithmic pedagogy operates as a form of soft governance (Gillespie, 2018; Gorwa, 2019), subtly aligning creator practices with platform-defined commercial, cultural and aesthetic objectives (Abidin, 2017; Duffy, 2015) and disciplining cultural production through managed imaginaries of fairness, opportunity and success.

This study focuses on Douyin, the Chinese version of TikTok, to investigate how the platform strategically constructs algorithmic imaginaries that guide and discipline creator practices. Douyin serves as a paradigmatic case: as a dominant actor in China's media economy, it has played a central role in expanding the algorithmically mediated gig economy and reorganising creative labour around platform-defined priorities. Central to this process is how the platform introduces its algorithmic mechanisms to creators – framing algorithmic functionality, monetisation practices, creative labour and video language – to cultivate creator compliance and participation in platform economies. To this end, this research examines the Douyin Content Assistant (DCA), an official Douyin account that the platform describes as a resource integrating ‘creative tools, directions, guidelines, and growth plans’ to empower creators for efficient and sustainable content production. It addresses the following questions: (a) How does Douyin construct and communicate specific algorithmic imaginaries through its official creator-facing account, the Douyin Content Assistant? (b) In what ways are these imaginaries mobilised to guide, shape, or discipline creators’ practices and engagement with the platform? (c) How do these discursive strategies reveal a broader blueprint of platformised content economies, particularly in the context of the global TikTokification trend?

In this essay, we argue that Douyin's algorithmic pedagogy extends beyond technical instruction, embedding platform governance within broader political-economic and cultural frameworks. Rather than relying on coercive enforcement, platform governance mobilises soft governance mechanisms that promote voluntary compliance. It constructs an aspirational vision of individual entrepreneurial success, positioning content creation as a pathway to upward mobility while guiding creators toward algorithmically preferred practices. Data-driven feedback loops continuously reinforce optimisation imperatives, responsibilising creators for their own visibility, engagement and monetisation outcomes. In this process, platform accountability is displaced, and structural inequalities are masked beneath discourses of fairness, transparency and empowerment.

Building on this, the research further demonstrates how such algorithmic pedagogies displace responsibility onto creators while masking platform power behind discourses of fairness and transparency. By promoting authenticity as a moral imperative, prescribing optimisation routines and framing commercial success as a function of individual discipline, platforms govern cultural production within a tightly regulated participatory framework. On Douyin, this logic manifests as part of the broader TikTokification of media production, wherein everyday life is continuously recorded, monetised and converted into algorithmically valued content streams. Through this process, creators are responsibilised as entrepreneurial, resilient and self-optimising subjects, embodying core tenets of neoliberal governmentality.

Literature review

Algorithmic imaginary, platform visibility and content production

Algorithms have become fundamental organising principles for participatory media platforms, profoundly shaping user behaviour and cultural production. Central to this ‘algorithmic turn’ is the concept of the algorithmic imaginary – users’ perceptions, beliefs and interpretive frameworks regarding how algorithms operate. Bucher (2018) defines algorithmic imaginaries as ‘ways of thinking about what algorithms are, what they should be, and how they function’, emphasising how such imaginaries mediate user engagement with opaque algorithmic infrastructures. Similarly, Schellewald (2022) underscores the ongoing interpretive processes through which users make sense of the otherwise invisible algorithmic systems structuring their experiences.

Algorithmic imaginaries influence both individual user strategies and broader forms of content production within platform economies (Schulz, 2023). For content creators, these imaginaries become particularly consequential in navigating visibility hierarchies and optimising content for algorithmic distribution (Bucher, 2016; Gran et al., 2020; Jones, 2023). As platforms increasingly commodify visibility, creators develop speculative theories and adaptive strategies to secure audience reach and monetisation (Cotter, 2019; Gillespie, 2010; Prey and Esteve-Del-Valle, 2023; Richter and Ye, 2024; Wang and Cao, 2024; Zeng and Kaye, 2022).

Algorithmic imaginaries encapsulate users’ awareness and perceptions of an algorithmic system, shaping the folk theories and behavioural strategies they develop in response (Bucher, 2016). Research indicates that these imaginaries are highly malleable, continuously shaped and reshaped by evolving folk theories (DeVito et al., 2018). For non-technical users, these imaginaries are often influenced by deliberate algorithmic explanations aligned with platform capitalism's commercial objectives. Influencers, such as those examined by Thomas MacDonald (2023), produce algorithmic lore videos that speculate on how algorithms function, helping creators navigate the platform's visibility markets. Similarly, Sophie Bishop (2020) identifies a new class of ‘algorithmic experts’ – influencers who guide creators in adapting to algorithmic visibility on platforms like YouTube. By manufacturing algorithmic imaginaries for content creators, these intermediaries play a crucial role in platform economies: they offer strategies to mitigate the risks of algorithmic invisibility and sustain creators’ engagement in the platform ecosystem.

Douyin, TikTokification and platform capitalism

Understanding algorithmic systems is particularly critical for content creators on Douyin, as the platform adopts a fundamentally distinct approach from many participatory media platforms that evolved out of social networking. Unlike platforms that layer algorithmic features onto pre-existing social graphs, Douyin prioritises algorithmically distributed content over interpersonal connections, establishing a unique platform ecosystem (Bhandari and Bimo, 2022; Siles et al., 2024). This infrastructural design positions Douyin less as a social network and more as an algorithmic medium (Liang, 2022; Wei and Yan, 2023), where content exposure is governed by automated decision-making processes rather than human editorial control or user networks (Napoli, 2014).

Such developments are part of a broader phenomenon often described as TikTokification (Gerbaudo, 2024), characterised by the rise of short-form, algorithmically distributed media across various platforms. Two key features define this model. First, interest-based clusters have replaced social networks, with users grouped into statistically generated ‘neighbourhoods’ based on prior behaviours and shared preferences (Gerbaudo, 2024). Second, individual social influence has been eclipsed by platform-generated memes – algorithmically categorised content units optimised for attention capture (Zulli and Zulli, 2022).

This platform design fundamentally restructures Douyin's media economy and shapes its cultural practices (de Kloet et al., 2019; Poell et al., 2022). Like other major advertising-driven platforms such as Google and Facebook, Douyin extracts user data and monetises audience attention, embedding itself within the broader architecture of platform capitalism (Srnicek, 2016). From a historical perspective, Douyin extends what Wu (2015) describes as the attention economy, where media industries commodify audience attention for advertisers. However, Douyin automates this logic through algorithmic infrastructures that translate every user interaction – likes, comments, shares – into data inputs that dynamically recalibrate content distribution strategies (Napoli, 2014). Unlike traditional models of audience segmentation or demographic targeting, Douyin's monetisation model assigns value to real-time engagement metrics (MacDonald, 2023; Neyland and Möllers, 2016), positioning algorithmic performance rather than social capital as the primary determinant of visibility (Huang and Ye, 2024; Liang, 2022). In this system, creators can achieve substantial reach even without large follower bases, provided their content aligns with engagement-driven algorithmic preferences. These metrics feed into the platform's algorithm, shaping the patterns of content distribution and consumption. As a result, content creators must move beyond simply growing their follower count; instead, they must strategically adapt to the algorithm's preferences, exploit its logic, and, at times, navigate ways to influence the system's behaviour.

Along with this algorithmic-driven media economy, Douyin has developed an expansive platform infrastructure that tightly integrates monetisation, data extraction and commercial participation. This infrastructure supports not only seamless content production but also a thriving content economy, advancing Douyin's ambition to serve as a salable, rankable and archivable ‘video encyclopaedia’ (Zhang, 2021). By 2020, over 22 million users had directly monetised their activities on Douyin (Ocean Engine, 2020), while the platform played a significant role in expanding China's gig economy, facilitating approximately 36.17 million flexible jobs (National Academy of Development and Strategy, 2021). These roles span influencers, e-commerce sellers, marketing agencies and corporate clients leveraging Douyin's visibility infrastructure for commercial gain. Through this integration of monetisation and labour, Douyin illustrates how algorithmic platforms not only reorganise attention economies but also restructure the political economy of digital labour.

Algorithmic pedagogy as soft governance: disciplining content production through algorithmic imaginaries

Recent scholarship has highlighted how platform economies exercise power not merely through direct control, but through soft governance mechanisms that cultivate voluntary compliance and internalisation among participants (Gorwa, 2019). Rather than enforcing strict rules, platforms shape creator behaviour through a combination of discursive framings, algorithmic architectures and normative expectations embedded within platform infrastructures (Plantin et al., 2016). This mode of governance subtly rationalises and legitimises structural inequalities by framing cultural production as a space of individual entrepreneurial opportunity while systematically obfuscating the uneven conditions under which visibility, monetisation and success are distributed (Carmi and Yates, 2023; Duffy and Saeway, 2022; Glatt, 2024). As Duffy (2015) argues, platforms promote an ethos of aspirational labour that displaces responsibility onto creators, framing precarious labour conditions as personal failure or insufficient optimisation. In this sense, algorithmic infrastructures function not merely as technical systems but as ideological devices that naturalise commercial priorities and shift accountability away from the platform itself.

In parallel, research on algorithmic imaginaries has examined how users construct speculative interpretations of algorithmic systems to navigate opaque recommendation mechanisms and platform visibility hierarchies (Bucher, 2016; Ytre-Arne and Moe, 2020). In this view, user imaginaries emerge as situated responses to algorithmic uncertainty, serving both as coping strategies and as informal forms of algorithmic knowledge. More recently, however, scholars have begun to emphasise how platforms themselves actively intervene in shaping these imaginaries. For instance, Schulz (2023) demonstrates how platform engineers anticipate user interpretations in the design of algorithmic feeds, while Nagy and Neff (2024) highlight how corporate algorithmic explanations foster compliance and trust by deliberately ‘conjuring the algorithm’: tech companies deploy advertising, strategic language and polished demonstrations to obscure critical issues behind algorithmic systems, such as data provenance, embedded biases and the often invisible labour sustaining these technologies. These corporate-built imaginaries of algorithms are not passive responses to user uncertainty, but active interventions that guide user behaviour and align public understanding with platform interests (Oeldorf-Hirsch and Neubaum, 2025).

Existing studies have examined how social media platforms employ soft governance, including interface design, policy and platform advertising, to steer creators toward business-aligned practices (Caplan and Gillespie, 2020; Petre et al., 2019). This essay highlights the manipulation of algorithmic imaginaries as a central mechanism in this process. Through official platform blogs, tutorials, promotional content and media campaigns, platforms present algorithms as transparent, fair and empowering, normalising their commercial priorities under the guise of neutrality. By framing success as dependent on proper engagement, these narratives legitimise platform control while shifting responsibility onto creators. Yet, how such platform-generated explanations actively shape user imaginaries remains underexplored. This study addresses this gap by analysing how Douyin manufactures algorithmic imaginaries to integrate creators into a tightly governed, commercially driven production system.

In this context, Douyin exemplifies how platforms incorporate algorithmic imaginaries into the discipline of content creation. The DCA, officially operated by the platform, forms part of this practice of shaping user behaviour. Positioned as an authoritative guide for creators, DCA provides ‘creative tools, directions, guidelines, and growth plans’ to streamline and standardise content production. Since its first video in November 2019, DCA has gained over 9.68 million followers and partnered with influencers across fields such as technology, music, travel and culture. The account primarily focuses on teaching creators ‘how to create content on Douyin’, providing detailed explanations of platform mechanisms, rules and tailored guidance. These videos outline a systematic methodology for creators, explaining how Douyin's algorithmic mechanism operates and offering strategies to optimise visibility. This includes practical advice on account setup, content selection, filming techniques and editing skills. Beyond technical guidance, these tutorials also highlight Douyin's distinctive production model, aesthetic norms and narrative frameworks for short videos.

Methodologies

Previous research has demonstrated the compatibility of topic modelling and discourse analysis, showing that the former can provide a blueprint for the latter (Jacobs and Tschötschel, 2019). On 23 November 2024, we extracted metadata for 725 publicly available videos from the DCA homepage using Zeeschuimer, followed by manual downloading of video content. As the data originated from an official account and contained no personal information, this process complies with internet research ethics (Franzke et al., 2020). A 10% pilot sample (73 videos) revealed typical social media characteristics – fragmented, unstructured and information-dense (Törnberg and Törnberg, 2016). Given the prevalence of spoken narration and content-descriptive titles, visual elements were deemed analytically limited.

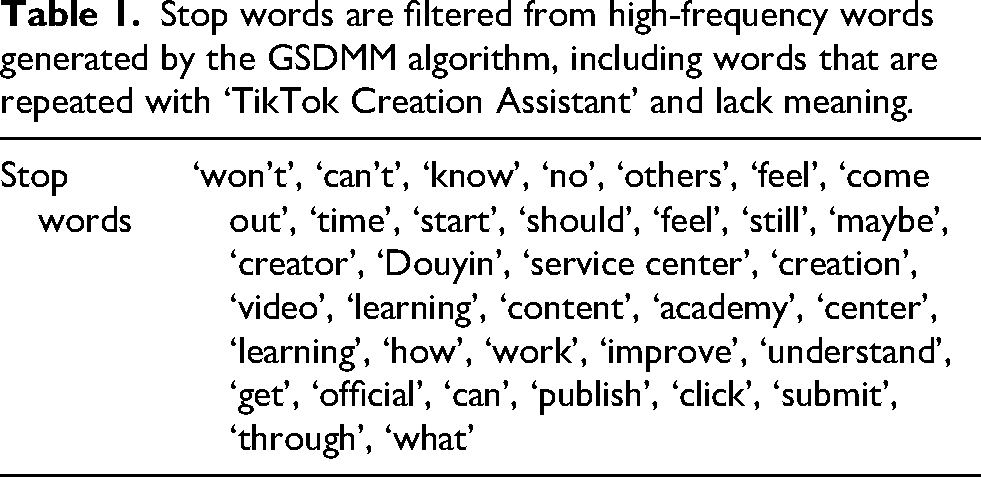

To obtain an initial thematic overview, we segmented video titles using Jieba and applied the GSDMM model, which is optimised for short texts. Following Yin and Wang (2014), we adjusted model parameters (

Stop words were filtered from the high-frequency words generated by the GSDMM algorithm, including words that merely repeat the account name (‘TikTok Creation Assistant’) and words that carry no meaning.
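The short-text clustering step can be illustrated with a minimal sketch of a GSDMM-style collapsed Gibbs sampler, in which each title is assigned to exactly one cluster. This is not the authors' implementation: the function name `gsdmm`, the toy English token lists (standing in for Jieba-segmented titles) and all parameter values (`K`, `alpha`, `beta`, `n_iters`) are assumptions chosen for illustration only.

```python
import math
import random
from collections import Counter


def gsdmm(docs, K=8, alpha=0.1, beta=0.1, n_iters=15, seed=0):
    """Collapsed Gibbs sampling for a Dirichlet multinomial mixture.

    docs is a list of token lists; returns one cluster label per document.
    All default parameter values are illustrative assumptions.
    """
    rng = random.Random(seed)
    V = len({w for d in docs for w in d})  # vocabulary size
    m = [0] * K                            # documents per cluster
    n = [0] * K                            # tokens per cluster
    nw = [Counter() for _ in range(K)]     # word counts per cluster
    z = []
    for d in docs:                         # random initial assignment
        k = rng.randrange(K)
        z.append(k)
        m[k] += 1
        n[k] += len(d)
        nw[k].update(d)

    for _ in range(n_iters):
        for i, d in enumerate(docs):
            k = z[i]                       # remove the document from its cluster
            m[k] -= 1
            n[k] -= len(d)
            nw[k].subtract(d)
            counts = Counter(d)
            logp = []
            for c in range(K):
                # Cluster popularity term (constant denominator omitted).
                lp = math.log(m[c] + alpha)
                # Word-similarity term, handling repeated words in the title.
                for w, cnt in counts.items():
                    for j in range(cnt):
                        lp += math.log(nw[c][w] + beta + j)
                for j in range(len(d)):
                    lp -= math.log(n[c] + V * beta + j)
                logp.append(lp)
            top = max(logp)                # normalise in log space for stability
            weights = [math.exp(lp - top) for lp in logp]
            k = rng.choices(range(K), weights=weights)[0]
            z[i] = k                       # add the document to the sampled cluster
            m[k] += 1
            n[k] += len(d)
            nw[k].update(d)
    return z


# Hypothetical pre-segmented titles; real input would be Jieba tokens.
titles = [
    ["monetise", "livestream", "traffic"],
    ["traffic", "data", "monetise"],
    ["pet", "daily", "share"],
    ["share", "daily", "life"],
    ["rules", "violation", "review"],
    ["review", "rules", "account"],
]
labels = gsdmm(titles, K=4, n_iters=10, seed=1)
print(labels)
```

Because every title belongs to a single cluster, this family of models tends to suit very short texts better than models that assume a mixture of topics within each document; clusters that end up empty simply drop out of the result.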

Building on the four thematic clusters identified through GSDMM, we randomly selected 100 videos (25 per cluster) for further qualitative analysis. To investigate how Douyin constructs algorithmic imaginaries within these creator-facing materials, we adopted Critical Discourse Analysis (CDA) as our primary analytical lens. Our approach draws on Fairclough's (1995) three-dimensional model – text, discursive practice and social practice – not as rigid analytical categories, but as interpretive orientations that guide our reading of DCA tutorials, instructional strategies and platform soft governance rationales. To operationalise CDA in our analysis, we paid close attention to modality (e.g. motivational appeals), metaphors (e.g. portraying the algorithm as a transparent, rule-based infrastructure) and disciplinary language (e.g. prescriptive instructions and rules) that encode platform values and behavioural expectations. We also draw on Wodak and Meyer's (2001) emphasis on embedding discourse within institutional and economic structures, which enables us to situate the algorithmic imaginary shaped by creator instruction within broader logics of platform capitalism and creator economies, where normative discourses around visibility, success and self-regulation become integral to soft governance.
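The sampling step described at the start of this paragraph (25 randomly selected videos per cluster) can be sketched as a simple stratified draw. The helper name `sample_per_cluster`, the fixed seed and the cluster sizes used in the demonstration are assumptions: only the sizes 241 and 179 appear in the text, and the remaining two sizes are placeholders that merely sum to 725.

```python
import random
from collections import defaultdict


def sample_per_cluster(labels, per_cluster=25, seed=42):
    """Draw up to per_cluster video indices at random from each cluster."""
    by_cluster = defaultdict(list)
    for video_idx, cluster in enumerate(labels):
        by_cluster[cluster].append(video_idx)
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    return {
        cluster: sorted(rng.sample(ids, min(per_cluster, len(ids))))
        for cluster, ids in sorted(by_cluster.items())
    }


# Placeholder cluster labels for 725 videos (sizes 3 and 4 are hypothetical).
cluster_labels = [1] * 241 + [2] * 179 + [3] * 160 + [4] * 145
sample = sample_per_cluster(cluster_labels)
print({cluster: len(ids) for cluster, ids in sample.items()})
```

Fixing the seed is a design choice that lets the same 100 videos be recovered later, which matters when the qualitative coding needs to be audited or repeated.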

Results and analysis

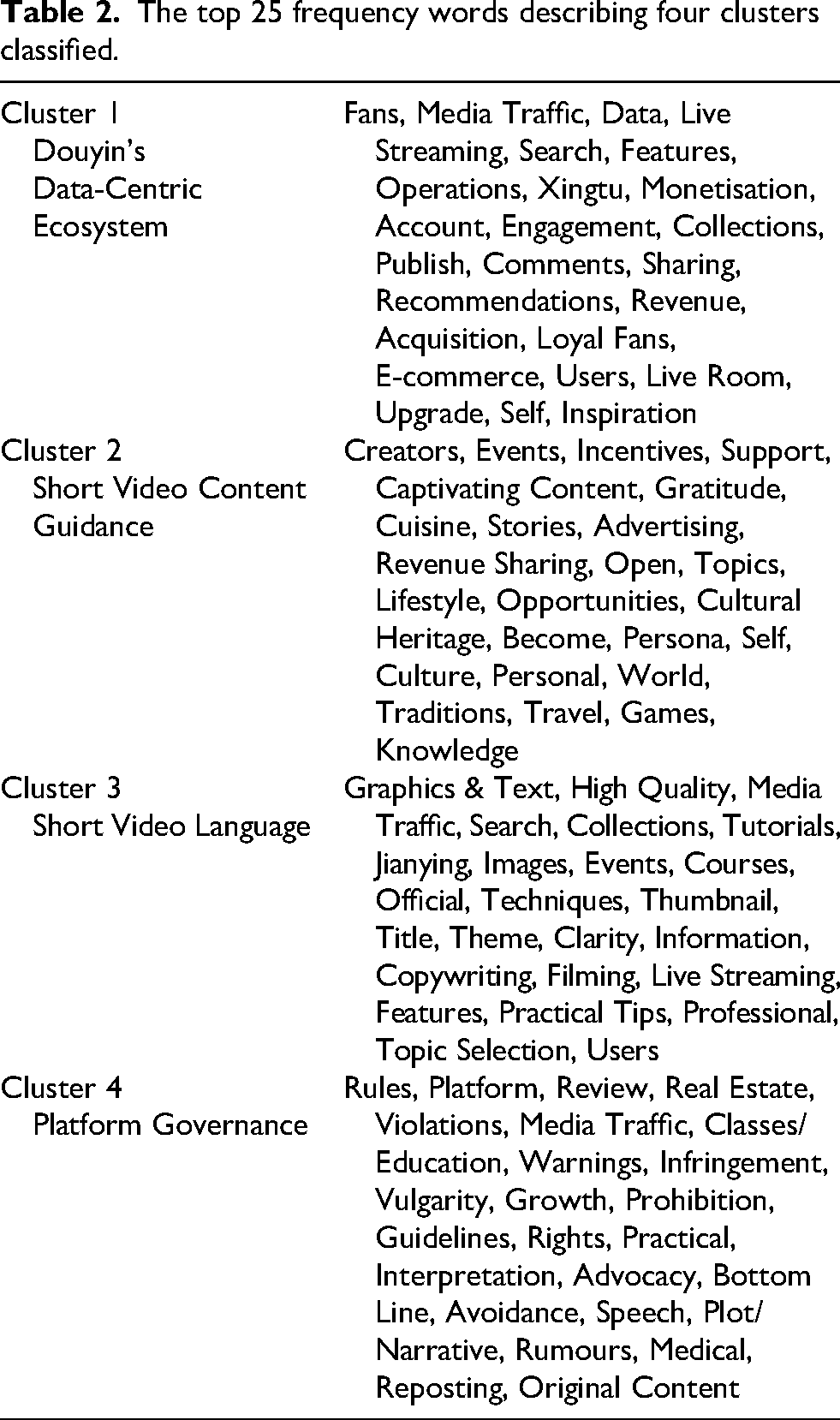

This method allowed us to categorise the 725 videos and identify four key thematic clusters in DCA's content: (a) Douyin's Data-Centric Ecosystem, (b) Short Video Content Guidance, (c) Short Video Language and (d) Platform Regulation. To characterise each cluster, its 25 most frequent words are summarised in Table 2.

The 25 most frequent words in each of the four clusters.

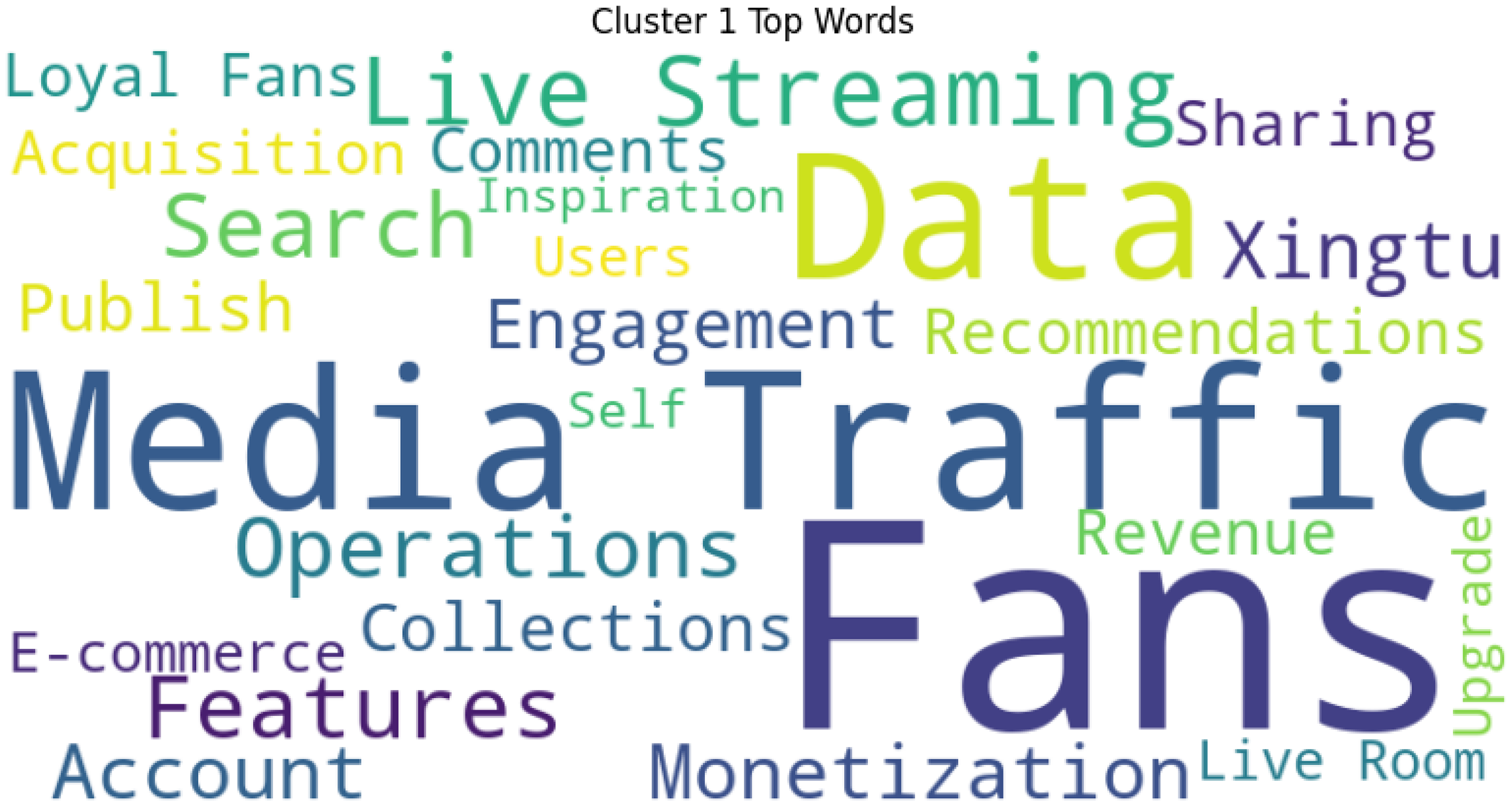
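A per-cluster frequency summary of this kind could be produced along the following lines. This is a sketch under stated assumptions rather than the procedure actually used: the function name `top_words`, the toy tokens and the single stop word are hypothetical, and real input would be the segmented titles together with their cluster labels.

```python
from collections import Counter


def top_words(docs, labels, stop_words=frozenset(), top_n=25):
    """Return the top_n most frequent non-stop-word tokens for each cluster."""
    counts = {}
    for tokens, cluster in zip(docs, labels):
        kept = (t for t in tokens if t not in stop_words)
        counts.setdefault(cluster, Counter()).update(kept)
    return {
        cluster: [word for word, _ in counter.most_common(top_n)]
        for cluster, counter in counts.items()
    }


# Hypothetical tokens and labels for illustration only.
toy_docs = [
    ["algorithm", "traffic", "assistant"],
    ["traffic", "monetise"],
    ["pet", "daily", "assistant"],
    ["pet", "share"],
]
toy_labels = [0, 0, 1, 1]
summary = top_words(toy_docs, toy_labels, stop_words={"assistant"}, top_n=2)
print(summary)
```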

Cluster 1: the promise of algorithmic accessibility

The first and largest cluster (241 videos, 25 keywords; see Figure 1) centres on Douyin's construction of algorithmic imaginaries that frame its algorithms as transparent, fair and universally accessible. By promoting the belief that success depends on personal effort and proper engagement with the algorithm, the platform mobilises mass participation in content production, expanding its creative labour pool and generating a continuous stream of monetisable content. These keywords can be further grouped into three themes. The first theme establishes a persuasive narrative that frames monetisation as universally attainable through individual discipline and proper engagement with algorithmic processes. At the textual level, instructional videos consistently adopt an encouraging and motivational tone: ‘Unwilling to just be a spectator in the short video era? Start creating!’, ‘You too can become a tech influencer! @DouyinTech’, ‘Can beginners make money through live-stream e-commerce? #DouyinCreatorLearningCenter’ and ‘99% of creators forget to plan their “monetization strategy”!’ Such messaging constructs an entrepreneurial imaginary that positions algorithmic visibility as a technically solvable challenge, contingent on personal initiative, consistency and data literacy. The videos also use phrases such as ‘Are you still envious of others monetizing their content? It's time to take action!’ Correspondingly, they repeatedly argue that even grassroots users with no followers can quickly monetise their content on Douyin if they apply the right strategies. Aligned with this push for content commercialisation, this cluster identifies several general principles that outline a data-oriented methodology, which formalises and promotes discursive practices on Douyin. For instance, creators are advised to ‘use data and feedback to improve recommendations for potential audiences’ and to ‘encourage users to interact with the content – through likes, saves, or shares with friends – which in turn prompts the algorithm to promote the video to a wider audience’.

Methodological workflow combining GSDMM topic modeling and Critical Discourse Analysis.

Following the general promise of individual commercial success, the second theme delineates normative pathways for creators by framing Douyin's algorithmic infrastructures as transparent, optimisable and instrumental to economic advancement. This content consistently implies a causal link between individual commercial success and the use of algorithms. In specific textual examples, DCA systematically introduces the platform's technical affordances as reliable mechanisms that creators can master to improve visibility and monetisation outcomes. This theme encompasses various features, including Xingtu (星图), live streaming, search functions, collections, recommendation algorithms and live rooms. Instructional videos demonstrate practical tactics such as embedding commodity links during live streams or enhancing visibility through optimised search practices. Among these, Xingtu occupies a particularly central role. Promoted as ‘a service platform for the creator marketing ecosystem, enabling efficient connections between creative content and advertisers’, Xingtu serves as a structured system wherein creators receive commercial tasks, negotiate advertising partnerships and generate revenue through a data-driven matching process (Figure 2).

A word cloud of the 25 most frequent words in the first cluster, sized by local frequency.

DCA materials repeatedly describe Xingtu as a ‘transparent and scientific’ framework for managing commercial orders. By emphasising its automated matching based on content frequency and prior performance, DCA appeals particularly to smaller creators, presenting algorithmic optimisation as a pathway that democratises access to monetisation. Additional tutorials provide creators with detailed operational guidance, including account setup, payment withdrawals and alternative monetisation models such as pay-per-view systems calculated over 14-day periods. The step-by-step instructions present the algorithm as a neutral, rule-based system, thereby obscuring the broader structural inequalities that shape content distribution and monetisation. In this way, Douyin's algorithmic pedagogy not only instructs creators on how to navigate the platform, but also promotes an understanding of platform power that centres on individual technical competence.

The third theme of Douyin's algorithmic imaginary involves the reconfiguration of follower relations, wherein audiences are reframed not as social communities but as monetisable assets embedded within algorithmic infrastructures. While follower counts, media traffic, comments and shares appear to reflect vibrant social engagement, these metrics ultimately serve an algorithm-first logic that privileges data-driven monetisation over relational interaction.

Departing from earlier models of social media, where followers represented loosely connected audiences embedded within existing social networks, Douyin positions followers, at both the textual and instructional levels, as ‘private data traffic’: resources to be actively cultivated, segmented and converted into commercial value. Under this logic, follower quantity alone does not secure algorithmic visibility; instead, creators must engage in ongoing management of their follower base to optimise monetisation potential. DCA tutorials instruct creators to ‘actively operate fan groups’, emphasising the cultivation of ‘die-hard followers’ through sustained emotional engagement, personalised communication and loyalty-building practices.

In this framing, creators are advised to analyse follower behaviour, tailor content strategies and foster perceived intimacy in order to enhance algorithmic performance. DCA explicitly recommends treating followers as ‘close friends’ or prioritising ‘high-value followers’ based on their commercial potential. Personalised interactions, such as direct conversations with new fan group members, are positioned as tactical instruments to secure engagement, retention, and ultimately, monetisation.

Through this process, intimacy itself is instrumentalised as a calculable variable within the platform's economic system. Emotional proximity is no longer simply a by-product of content production but becomes a managed resource embedded within algorithmic optimisation. In doing so, Douyin's algorithmic pedagogy advances a form of affective governance, wherein creators are responsibilised not only for content production but also for the commercial management of interpersonal connections. This reflects broader dynamics of platform capitalism, where emotional labour is commodified within increasingly granular forms of data extraction and audience segmentation (Duffy et al., 2019).

Cluster 2: authentic self-representation and economic gain

As Figure 3 shows, the second cluster centres on content creation within individual accounts, focusing on the long-term development and planning of an account on Douyin. This cluster includes 179 videos, most of which feature successful creators sharing their monthly experiences. The platform promotes an imaginary of authentic sharing, encouraging users to document their daily lives with the promise that genuine self-expression will naturally generate economic rewards. However, this notion of authenticity is carefully structured: personal content is sorted into verticalised categories that serve the platform's attention economy, allowing for more efficient targeting, distribution and monetisation at the account level.

A word cloud of the 25 most frequent words in the second cluster, sized by local frequency.

These videos can be further divided into two subcategories. The first subcategory, vertical content, is characterised by keywords such as World, Traditions, Travel, Games, Knowledge and Persona. A total of 129 videos containing these keywords reflect Douyin's emphasis on niche specialisation, a practice the platform refers to as ‘vertical content’. In these videos, creators consistently introduce themselves with genre-specific labels that define their work, such as ‘I am a film and television creator’, ‘I am a beauty creator’, or ‘I am a pet creator’. This deliberate focus on vertical content underscores Douyin's strategic prioritisation of highly specialised content categories.

DCA explicitly instructs content creators to maintain a high degree of verticality in their production practice, emphasising thematic consistency across all videos. For instance, creators are advised, ‘If you are a pet creator, then try to stick with this theme in all of your short videos. This will help the algorithm identify your content and match it with audiences interested in this topic’. This framing presents algorithmic recognition not merely as a technical process but as a normative guideline that shapes creators’ behaviour to align with platform priorities. This guidance also reveals how algorithmic preferences are naturalised within creator practices. DCA outlines four specific strategies for optimising vertical content: (a) maintaining thematic consistency within the content itself, (b) organising videos into thematic collections, (c) using similar keywords in titles and descriptions and (d) ensuring coherence between video titles and content. In doing so, DCA presents the platform's technical infrastructure as essential for achieving success as the platform defines it. In instructional videos targeting fitness creators, for example, DCA recommends dividing content into three vertical subcategories: fitness tutorials, fitness science and fitness meals. Creators are encouraged to produce content within these rigid categories and structure it accordingly.

In DCA's content, the theme of sharing is closely linked to the recurring concept of ‘authenticity’. This idea is reinforced by keywords such as Creators, Support, Gratitude, Open, Lifestyle, Become and Self. Together, these keywords frame an idealised content production goal: ‘sharing a good life’. DCA videos actively shape platform discourse by consistently highlighting creators whose content exemplifies relatable, positive moments. Examples include: ‘She is a fitness creator who consistently records and shares the beautiful moments of her workouts’, or ‘They are light-hearted snack enthusiasts who achieved monetization for the first time on Douyin through homepage ad revenue sharing’. Another example features a creator who ‘shares her daily life with her pet cat, documenting a joyful lifestyle while earning a four-figure income through homepage ad revenue’. Similarly, DCA spotlights ‘Captain Yang, a well-known rural content creator, who focuses on producing quality content while earning substantial income via homepage ad revenue sharing’. The narrative stresses that creators, by dedicating themselves to their craft, can effortlessly enjoy financial rewards through content creation.

DCA equates authenticity with a creator's professional niche, thereby encouraging content creators to strategically navigate the tension between appearing authentic and remaining appealing to advertisers (Van Driel and Dumitrica, 2020). This discursive framing effectively instructs creators to engage in calibrated amateurism (Abidin, 2017) – a production aesthetic in which the performance of raw, amateur-like authenticity is carefully crafted, regardless of the creator's actual professional status. This mode of content creation relies on platform-specific ecologies, including affordances, tools, cultural vernaculars and social capital. While calibrated amateurism is commonly seen among family and kid influencers, Douyin elevates it to a platform-wide principle. For example, a creator described as ‘a fitness creator who consistently records and shares the beautiful moments of her workouts’ is deemed authentic precisely because her identity is narrowly defined through fitness-related content. Similarly, a creator who ‘shares her daily life with her pet cat’ is considered authentic in terms of consistent, pet-centric documentation. In this way, DCA constructs authenticity as inseparable from vertical content strategies, wherein any aspect of everyday life can be reframed as ‘authentic’ and subsequently monetised through algorithmically favoured content verticals.

Cluster 3 producing for platform visibility

Figure 4 highlights many terms related to short video components, showing that the third cluster focuses on the video language of individual clips. This cluster presents an imagination of quality content, suggesting that producing high-quality videos will guarantee greater visibility and financial returns. Yet, this quality standard is closely tied to platform-preferred formats that favour glance-based consumption and short-form aesthetics. As a result, creators are incentivised to adapt their production styles to maximise immediate attention and per-video monetisation, aligning their practices with the platform's commercial logic. In DCA's framing, video language enhances ‘content value’ through clear storytelling, effective use of text and graphics and refined editing to boost retention and algorithmic visibility. These strategies define what DCA calls ‘High-Quality Content’ – designed to maximise commercial value efficiently – and reflect the platform's broader push for monetisation discussed in the first cluster. The third cluster offers strategies around visuals, editing styles and narrative techniques to improve content performance within Douyin's algorithm-driven ecosystem.

A word cloud of the 25 most frequent words in the third cluster, sized by local frequency.

DCA's guidelines for high-quality content are designed to streamline creation while ensuring videos meet platform standards and are easily picked up by Douyin's recommendation system. These standards prioritise clarity, informativeness and algorithmic readability, making content more likely to be promoted and widely distributed. To help creators meet these goals, DCA breaks down key media elements: thumbnails and images should prominently feature the main subject (e.g. the speaker or key object), and titles should include relevant keywords or 1 to 3 hashtags to improve algorithmic recognition. The guidelines also cover visual tone, camera angles and background music.

This cluster focuses on practical strategies to maximise audience engagement and boost video visibility, reinforcing creators’ reliance on Douyin's algorithmic system. A key example is the ‘Golden 3-Second Opening Formula’, featured in a tutorial on making movie recap videos. This technique emphasises capturing viewer attention early to boost completion rates – the metric that measures how long viewers stay engaged with a video. This suggestion directly reflects what Diana Zulli (2017) describes as the glance economy, where platforms structure user behaviour around rapid, surface-level encounters that convert fleeting attention into algorithmic value and, ultimately, economic capital. Higher completion rates directly increase the chances of a video being recommended by Douyin's algorithm to a broader audience. This focus on retaining attention is echoed in other tutorials, where DCA suggests practical storytelling techniques, such as summarising contrasting plots in a single sentence, using attention-grabbing data (like box office figures), linking stories to real-life contexts and posing thought-provoking questions to sustain viewer interest.

Beyond content structure, DCA offers detailed guidance on video editing, specifically using tools within Douyin's ecosystem, such as Jianying (CapCut). It promotes pre-made templates that allow creators to easily insert their own media, simplifying the editing process while ensuring content aligns with platform standards. Additionally, DCA provides data on trending hashtags, popular templates, memes and keywords tied to official events, guiding creators on how to optimise their content for better algorithmic visibility. By embedding these strategies within Douyin's proprietary tools and metrics, DCA subtly encourages creators to depend on the platform's infrastructure from content creation to distribution. This creates an ecological closed loop, where the entire production process is tightly integrated with Douyin's algorithmic logic, reinforcing the platform's commercial and content priorities (Figure 5).

This official Douyin event set strict content guidelines – scripts, themes, formats and length – encouraging users to follow a template in exchange for platform exposure.

Cluster 4 platform regulation: rules, policy and governance

The final cluster of analysis focuses on Douyin's platform rules and governance, highlighted by the key terms ‘Rules’, ‘Platform’ and ‘Review’ in Figure 6. Through its official discourse, the platform reiterates an imagination of fairness and social responsibility, framing itself as a neutral and accountable actor within the content ecosystem. However, this discourse often serves to obscure the underlying governance structures and deflect attention from the platform's asymmetrical control over content distribution, visibility and economic outcomes. First, DCA's findings illustrate a regulatory framework shaped by China's political, economic and social contexts (Lin and de Kloet, 2023; Ye et al., 2025). Douyin's regulations reflect governmental priorities, such as banning content related to real estate investments and unofficial policy interpretations due to political sensitivity, highlighting real estate's centrality to national economic stability. Public discourse challenging state policies is strictly controlled, and uncertified medical content, medication advertisements and surgical displays are prohibited to prevent misinformation and maintain trust in official health communications. Second, content deemed ‘Vulgar’ or ‘Violative’, such as materialistic displays, sexualised promotion, or inappropriate behaviour by minors, is strictly regulated. Douyin's ‘red warning’ system flags content involving smoking, drinking, profanity, flaunting wealth, or teenage romance, reinforcing Confucian values of modesty and socialist ideals that oppose conspicuous consumption (Leo-Liu et al., 2024). This reflects broader societal norms aimed at protecting minors and upholding public decency.

A word cloud of the 25 most frequent words in the fourth cluster, sized by local frequency.

A large proportion of DCA content also defends the platform's economic interests by warning against content piracy, which is framed as a legal and reputational risk. In addition, low-quality content – including poorly produced videos, exaggerated claims and attempts to redirect traffic off-platform – faces penalties such as reduced visibility, removal or account suspension, with users notified via platform alerts. This economic dimension highlights Douyin's commercial priorities in maintaining content quality and protecting intellectual property.

Crucially, beyond these explicit regulations, Douyin also constructs algorithmic pedagogy through strategic opacity. DCA publicly refutes widely circulated claims such as the qihao (meaning ‘starting an account’ in Chinese) theory, which suggests that artificially boosting engagement in the early days of an account can manipulate the algorithm and secure long-term visibility, and asserts instead that all creators are offered equal opportunities for exposure, regardless of initial engagement tactics. Meanwhile, tutorials like ‘The First to Third Lesson of Video Censorship’ acknowledge that both human moderators and algorithms evaluate content, but the criteria remain deliberately ambiguous. In practice, this opacity fosters self-censorship, encouraging creators to align with evolving standards and the platform's shifting monetisation priorities.

Summary: algorithmic pedagogy, Douyin's professional grassroots and the neoliberal subjectivity

Douyin's algorithmic pedagogy constructs a highly structured imaginary that governs creator behaviour across multiple levels. It first sets up a compelling promise of meritocratic commercial success, offering individual creators the vision that ‘everyone can monetize’ if they simply follow the right path in the algorithmic system. This promise is then operationalised through normative instructions that guide creators to select niche identities, maintain content verticality and produce performances that balance authenticity with market appeal. While this dynamic resonates with existing discussions of calibrated amateurism (Abidin, 2017), Douyin's model extends beyond aesthetics into a broader formation of what may be termed professional grassroots. This mode of subject formation involves not only performing amateur-like authenticity, but also adopting a fully professionalised approach to content production, audience management and commercial optimisation, embedded within the platform's algorithmic and ideological infrastructures. Through continuous data feedback loops, creators are further encouraged to optimise their practices in response to algorithmic signals, embedding platform logics into their everyday production. At the same time, these processes obscure the platform's structural power by framing monetisation as a function of personal effort rather than systemic asymmetries, thereby responsibilising creators for their own precarity while masking the underlying political economy of platform governance. Taken together, these interlocking mechanisms illustrate that algorithmic imaginaries are manufactured not simply as technical explanations, but as normative infrastructures that shape the conditions, responsibilities and possibilities of cultural production in platform economies.

The aim of creating this imaginary in Douyin through algorithmic pedagogy is the formation of what we term professional grassroots – a hybrid subjectivity involving the full professionalisation of content production, account management, emotional labour and platform-optimised audience engagement. Creators are encouraged to blend personal narratives with monetisable formats, professionalised technical standards and algorithmic compliance. This process not only disciplines creative practice but also reframes cultural labour itself, dissolving boundaries between amateur and professional, personal and commercial, and rendering even authenticity a functional component of platform optimisation.

However, this fabrication of algorithmic imaginaries is inherently fragmented and contradictory. Our analysis reveals several tensions embedded in Douyin's discursive construction: between authentic personal sharing and commercial optimisation; between grassroots accessibility and professionalised content labour; and between claims of algorithmic transparency and the persistent restriction of user agency. Rather than resolving these contradictions, the platform manages them through strategic ambiguity and moralised narratives that encourage creators to internalise responsibility while suppressing critical engagement with platform governance. A central technique in this process involves constructing simplified causal narratives that obscure the underlying complexity of platform operations. By selectively concealing how the algorithm actually functions, Douyin promotes the idea that creator success can be attained through individual compliance – to ‘follow the algorithm’ and ‘persist.’ Such narratives offer an illusion of predictability while relying on unfalsifiable claims: creators are unable to verify whether success or failure truly results from adherence to these prescribed strategies. This logic ultimately naturalises algorithmic authority, placing the burden of success on individual creators while shielding platform governance from scrutiny.

Douyin's algorithmic pedagogy participates in the active construction of algorithmic imaginaries that reshape how creators understand and engage with platform governance. Through procedural instructions, moralised narratives and selective transparency, creators are encouraged to internalise platform logics and align their practices with algorithmic optimisation. This process not only responsibilises individual creators for managing their own visibility, but also transforms grassroots participation into a professionalised form of content labour embedded within the platform's commercial imperatives. In this sense, Douyin exemplifies how algorithmic cultural production operates as both a pedagogical project and a labour regime, where the promises of participation are reconfigured into structured economic extraction under the broader dynamics of TikTokification.

Footnotes

Funding

The authors received no financial support for the research, authorship and/or publication of this article.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.