Abstract

Background:

Addressing the negative impact of substance use disorders (SUDs) on individuals, families, and communities is a public health priority. Most treatments and interventions require engagement with a healthcare provider or someone who can offer recovery support. Interventions that facilitate self-management of relapse triggers at the moment they occur are also critically needed. Our study aimed to explore the user experience of individuals using a just-in-time smartphone episodic resonance paced breathing (eRPB) intervention to address stress, anxiety, and drug cravings.

Methods:

We conducted an 8-week pilot study of the eRPB with 30 individuals in recovery from SUD. Data on 3 indicators of user experience—acceptability, appropriateness, and feasibility—were collected using survey questions (n = 30) and semi-structured interviews (n = 11). We performed univariate analysis on the survey data and deductive thematic analysis on the qualitative data.

Results:

A majority of the survey respondents agreed that the application (app) was acceptable (> 77%), appropriate (> 82%), and feasible (> 89%). Several interview participants stated that the app helped them relax and manage stress and cravings and expressed appreciation for the simplicity of its design. Participants also reported barriers to feasibility (such as forgetting to use the app) and recommendations for improvement (such as the addition of motivational messages).

Conclusions:

Our findings show that individuals in recovery from SUD had highly positive experiences with the eRPB app. A positive user experience may improve adherence to the intervention and, ultimately, the self-management of stress, anxiety, and craving relapse triggers.

Highlights

Qualitative studies of just-in-time interventions identify input for improvement.

Individuals in recovery from substance use disorder had a positive experience with the smartphone app.

Enhancements based on user feedback could improve intervention adherence.

Introduction

A national survey conducted in 2020 found that 1 in 6 people aged 12 or older living in the United States had a substance use disorder (SUD). 1 During the same year, 93 000 people died from drug overdoses, a 30.0% increase from the year prior. 2 Undoubtedly, there is a critical need for innovative interventions and treatments to address this national public health crisis. Because of the sustained efforts of thousands of clinicians and researchers, evidence-based approaches that address the harms of substance misuse are being employed across the world.3-5 These approaches can be broadly categorized as psychological/behavioral (e.g., cognitive-behavioral therapy), 6 social (e.g., peer-support services), 7 or pharmacological (e.g., naltrexone). 8 Most of these approaches require that individuals engage in interpersonal communication with their health service providers or individuals in their recovery support network. However, at the specific moment when people resume their misuse of substances, they are often alone or with people who enable their substance use. As such, supplementing interpersonal-based approaches with interventions that facilitate self-management of threats to sustained recovery at the exact moment the individual needs assistance can provide additional real-time support.

Just-in-time interventions (JITIs) for substance misuse have been developed to address this need.9-11 Researchers have developed and tested JITIs to address misuse of alcohol,12-14 tobacco,15-17 marijuana, 18 and other drugs.19,20 Most of the interventions are delivered by mobile phone. Available support types include text messages (e.g., reminders, motivational messages),17,21 prompts to engage in mindfulness activities (e.g., walking and meditation), 9 and tips (e.g., coping and protective behavioral strategies).22-25 The primary goals of most JITIs are abstinence maintenance,16,26 reduction in the frequency of use,14,18 and reduction in drug craving.19,27

An innovative JITI developed recently involves using the body’s physiological mechanisms (i.e., respiratory stimulation of the vagus nerve) for relapse prevention. 20 This episodic resonance paced breathing (eRPB) intervention aims to reduce drug cravings, defined as a conscious or unconscious urge or strong desire to use drugs.28,29 Craving is a psychological state with attendant physiological changes (e.g., increased heart rate) and is highly associated with relapse risk.28,30 The development of the eRPB is based on heart rate variability biofeedback research, which has demonstrated that paced breathing techniques that stimulate homeostatic reflexes (e.g., vagus nerve activation) are effective in managing the physiological changes that accompany craving and can reduce craving among individuals with SUD.31,32 Physiological arousal driven by drug craving often changes heart rate oscillations such that they are out of sync with their optimal rhythm. Slow breathing at 6 breaths per minute leverages respiratory sinus arrhythmia control of cardiac activity to match respiration and heart rate.31,33,34 This process activates the parasympathetic nervous system, which is responsible for rest and relaxation. 35 In a prior randomized controlled trial of an eRPB smartphone application (app), participants were directed to complete a 5-minute breathing session using the app when they anticipated or encountered substance use triggers or at the end of every day. The app has 3 major features: adjustable settings for the frequency of breaths per minute, a breathing pacer bar displayed on the cell phone screen, and a timer. These features provide important benefits compared to breathing techniques that are not assisted by the app. The frequency setting of the app and the pacer bar mitigate the risk that participants will breathe faster or slower than the optimal rate of 6 breaths per minute. The timer assists the participant in adhering to the recommended 5-minute session. The 8-week trial found that participants who used the app frequently had lower craving levels than those in a control group.20,36

The aim of the current study is to examine the user experience of the eRPB among a sample of individuals in recovery from SUD using data from interviews and survey questions from a pilot study. This study addresses a significant gap in the literature. To our knowledge, it is the only study that has published results of interviews specifically designed to assess multiple domains of a substance use JITI’s user experience—acceptability, appropriateness, and feasibility. Most studies report quantitative assessments of user experience from Likert scale questions.14,23,27,37,38 Two studies ventured beyond quantitative measures and analyzed content from open-ended survey questions.17,18 The responses helped researchers obtain a general sense of the user experience; however, data generated from interviews may have provided more detail and depth of understanding. 39 By using participant interviews in our study, we demonstrate that the results of qualitative studies of JITIs can (1) generate suggestions from users for improvement of the intervention and (2) be used to address issues that may negatively affect adherence to the intervention.

Methods

Intervention

The intervention uses the Camera Heart Rate Variability (CHRV) app 40 to deliver resonance breathing exercises. The CHRV app is designed to record heart rate variability using photoplethysmography technology to measure variations in blood circulation when an individual places their index finger over the camera in their phone. The app also has a breathing pacer that appears as a red bar on the screen. The bar ascends to guide an individual to inhale and descends to guide an individual to exhale. A digital timer on the same screen (to the right of the pacing bar) displays the duration of the breathing session in minutes and seconds. Participants were enrolled in an 8-week trial and directed to initiate a resonance breathing session for at least 5 minutes daily. Participants were also encouraged to use the app whenever they experienced cravings, felt like they were going to relapse, felt anxious or stressed, or wanted to feel calm. At the beginning of the trial, participants completed an online questionnaire that included validated psychosocial measures on stress, trauma history, craving, anxiety, depression, demographic questions, and questions on substance use behavior. They also completed this survey at the end of the trial. Participants were instructed to export their app use history weekly to an Excel file they shared with the study team. They received $12.00 compensation for each survey and $1.00 for each day of app use. Additional study protocol details are available at ClinicalTrials.gov (NCT#05830773).
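The timing behind a pacer bar and session timer of this kind can be illustrated with a short sketch. It assumes the rate of 6 breaths per minute and the 5-minute session described earlier, and it splits each breath cycle evenly between inhalation and exhalation. The even split and the names `pacer_schedule` and `format_timer` are illustrative assumptions, not details of the CHRV app.

```python
def pacer_schedule(breaths_per_minute=6.0, session_minutes=5.0, inhale_fraction=0.5):
    """Return (start_second, phase, duration_seconds) tuples for one session.

    Hypothetical helper: the even inhale/exhale split is an assumption,
    not a documented property of the CHRV app.
    """
    if breaths_per_minute <= 0 or session_minutes <= 0:
        raise ValueError("rate and duration must be positive")
    if not 0 < inhale_fraction < 1:
        raise ValueError("inhale_fraction must be between 0 and 1")

    cycle = 60.0 / breaths_per_minute          # seconds per full breath
    inhale = cycle * inhale_fraction           # bar ascends
    exhale = cycle - inhale                    # bar descends
    total_cycles = int(session_minutes * breaths_per_minute)

    schedule = []
    for i in range(total_cycles):
        start = i * cycle
        schedule.append((start, "inhale", inhale))
        schedule.append((start + inhale, "exhale", exhale))
    return schedule


def format_timer(seconds):
    """Format elapsed seconds as M:SS, like a minutes-and-seconds timer."""
    minutes, secs = divmod(int(seconds), 60)
    return f"{minutes}:{secs:02d}"


if __name__ == "__main__":
    for start, phase, duration in pacer_schedule()[:4]:
        print(format_timer(start), phase, f"{duration:.0f}s")
```

Under these assumptions, each breath cycle lasts 10 seconds and a 5-minute session contains 30 full breaths.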

Procedure

Participants in this study were persons in recovery from opioid and stimulant use disorders who received support services funded through the Texas Health and Human Services Commission (TXHHSC) Recovery Support Services division. Eligible individuals needed to be 18 years of age or older, be able to read and speak in English, and have access to a cellular phone. Members of the research team trained peer recovery support specialists to explain the study and invite clients to participate in the intervention through email, telephone, or in-person conversations. The peer recovery support specialists collected contact information from interested individuals and shared it with the study team. The study team contacted those individuals, explained the study in more detail, and reviewed the informed consent form. Individuals who chose to enroll in the study received a unique study ID and an email request to complete an online survey that included an informed consent document and the baseline questionnaire. They also received a PDF of instructions on how to install the app and a video instruction guide on how to use it. Research team members provided support by phone and email to participants who needed technical support. A convenience sample of 30 individuals enrolled to participate in the intervention; all 30 were invited to participate in an in-depth interview about their experience with the app. Eleven individuals participated in the interview and received a $30.00 incentive. There is no record of why the remaining 19 individuals declined to participate in interviews. Power analyses were not conducted to determine the sample size. The University of Texas at Austin’s Institutional Review Board reviewed and approved the study protocol.

Measures

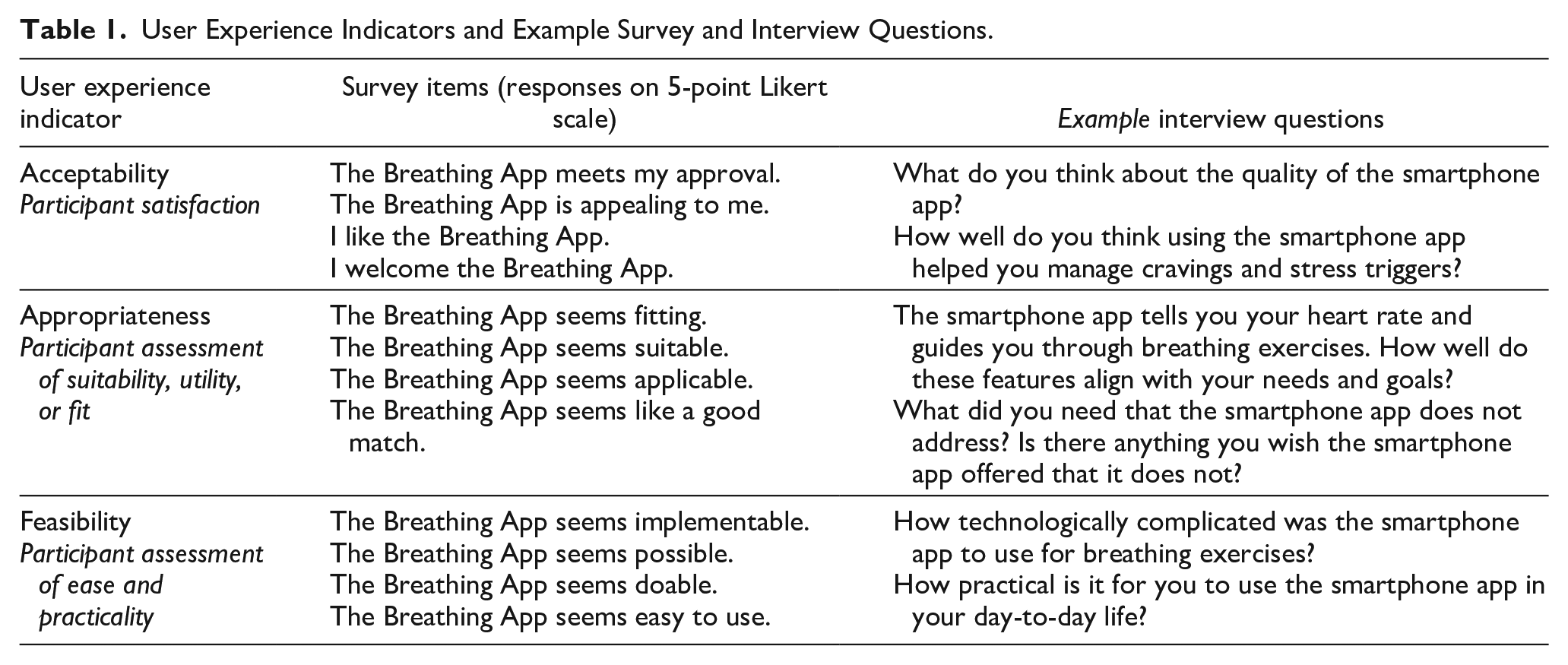

We collected quantitative and qualitative data to assess three indicators of user experience—acceptability, appropriateness, and feasibility. These indicators are often used as measures of intervention and implementation success. 41 We adapted the language to solicit feedback specifically about user experience with the app. Acceptability questions assessed whether the app enabled participants to manage stress, anxiety, and cravings. Appropriateness questions examined the alignment between participant needs and the app’s function. Feasibility questions explored whether the app was easy and practical to use. Table 1 displays the user experience indicators, survey items, and example interview questions.

Table 1. User Experience Indicators and Example Survey and Interview Questions.

Quantitative Data Collection

The Acceptability of Intervention Measure, Intervention Appropriateness Measure, and Feasibility of Intervention Measure were used to collect survey data regarding the user experience.42,43 The measures have psychometric validity (content and structural) and reliability. 43 Cronbach’s alpha values for internal consistency are as follows: acceptability (α = .85), appropriateness (α = .91), and feasibility (α = .89). Each measure has four statements and asks participants to rate their agreement with each statement on a scale of 1 to 5 (1 = Completely disagree, 2 = Disagree, 3 = Neither agree nor disagree, 4 = Agree, 5 = Completely agree). Quantitative data also included participant demographics (e.g., age, race/ethnicity, sex) and app usage (number of sessions and number of minutes).
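For readers unfamiliar with the statistic, the following sketch shows how a Cronbach’s alpha coefficient is generally computed for a multi-item scale, using the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The function name `cronbach_alpha` and the ratings in the usage example are hypothetical; the alpha values reported above come from the cited validation work, not from this calculation.

```python
from statistics import variance


def cronbach_alpha(item_scores):
    """Cronbach's alpha for a list of respondents, each a list of k item ratings.

    Uses sample variances throughout. Hypothetical helper for illustration.
    """
    if len(item_scores) < 2:
        raise ValueError("need at least two respondents")
    k = len(item_scores[0])
    if k < 2:
        raise ValueError("need at least two items")
    if any(len(row) != k for row in item_scores):
        raise ValueError("every respondent must rate every item")

    # Variance of each item across respondents, summed over items.
    item_variance_sum = sum(variance(col) for col in zip(*item_scores))
    # Variance of each respondent's total score.
    total_variance = variance([sum(row) for row in item_scores])
    if total_variance == 0:
        raise ValueError("total scores have zero variance")

    return (k / (k - 1)) * (1 - item_variance_sum / total_variance)


if __name__ == "__main__":
    # Made-up ratings on a four-item, 1-to-5 scale (not study data).
    ratings = [[4, 5, 4, 4], [3, 3, 2, 3], [5, 5, 5, 4], [2, 3, 2, 2]]
    print(round(cronbach_alpha(ratings), 2))
```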

Quantitative Data Analysis

The study team linked each participant’s survey responses and app use data via their unique study ID numbers in a single master data file. After cleaning the data, the team ran descriptive statistics on participant demographics, app usage, and the percentage of participants who agreed with each acceptability, appropriateness, and feasibility statement. Percent agreement with each user experience statement was calculated based on the number of participants who endorsed “agree” or “completely agree.” All quantitative statistical analyses were conducted using SAS Enterprise Guide 8.3 software (SAS Institute Inc., Cary, NC, USA).
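The linkage and percent-agreement steps can be sketched in a few lines. This is a minimal illustration in Python rather than the SAS code used in the study. The field names (`study_id`, `likes_app`, `sessions`), the function names, and the choice to exclude missing responses from the denominator are all assumptions made for the example.

```python
def link_by_study_id(survey_rows, usage_rows):
    """Merge survey and app-use records that share a study ID.

    Hypothetical field names; participants without usage data keep
    only their survey fields.
    """
    usage_by_id = {row["study_id"]: row for row in usage_rows}
    merged = []
    for row in survey_rows:
        usage = usage_by_id.get(row["study_id"], {})
        merged.append({**usage, **row})
    return merged


def percent_agreement(ratings, threshold=4):
    """Percentage of non-missing ratings that are 4 (Agree) or 5 (Completely agree).

    Assumption: missing responses (None) are dropped from the denominator.
    """
    valid = [r for r in ratings if r is not None]
    if not valid:
        raise ValueError("no valid ratings")
    agree = sum(1 for r in valid if r >= threshold)
    return 100.0 * agree / len(valid)


if __name__ == "__main__":
    # Made-up records, not study data.
    survey = [{"study_id": "A01", "likes_app": 4}, {"study_id": "A02", "likes_app": 2}]
    usage = [{"study_id": "A01", "sessions": 12}, {"study_id": "A02", "sessions": 30}]
    master = link_by_study_id(survey, usage)
    print(percent_agreement([row["likes_app"] for row in master]))
```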

Qualitative Data Collection

Qualitative data were gathered using a semi-structured interview guide developed by two authors (SVP and FNC) with doctoral degrees and training in health services research, implementation science, and substance use research. The questions in the guide were developed to conceptually align with user experience indicators of acceptability, appropriateness, and feasibility. For example, the interview question which asks, “How practical is it for you to use the smartphone app?” assesses feasibility. Table 1 contains additional examples of interview questions and their matching indicators. The guide was not pilot-tested with participants. Study team members sent an email invitation to all individuals who completed the intervention and asked them to participate in an in-depth interview about their experiences with the app. Two authors (PK: male, white, undergraduate student, psychology major; ET: female, Asian, master of social work student) received interview training from researchers at RTI International, a research institute that provides research and technical services. PK and ET conducted 30- to 60-minute phone or video conference interviews from their homes. Only the participants and researchers were present. The interviews were audio-recorded and transcribed with participant permission. No repeat interviews were conducted. Transcripts were not returned to participants and they did not provide feedback on the findings. Members of the research team had no relationship with participants prior to the study’s commencement. The participants did not have knowledge of the interviewer’s personal goals or motives for doing the research, and no other interviewer characteristics were shared with the participants beyond the interviewer characteristics visible during the interviews.

Qualitative Data Analysis

The study team used a content analysis approach to identify themes derived from the data. Two research team members (PK and ET) imported all transcripts into NVivo 20.0. Three members (PK, ET, and AB) coded transcripts with the codebook that aligned with the interview guide and focused on the user experience outcomes. Acceptability subcodes centered on design quality and relative advantage; appropriateness subcodes included intervention fit and utility. Feasibility subcodes consisted of technological complexity and practicality. On completion of the deductive coding, one analyst (AB) generated code reports and used an open coding approach to identify themes. 44 The analyst documented themes in analytic matrices, 45 which were reviewed by senior researchers (HK and JDC) with doctoral degrees and training in qualitative research methodologies and public health. Data saturation was not assessed 46 and participants did not review or provide feedback on interview findings.

Results

Characteristics of Participants

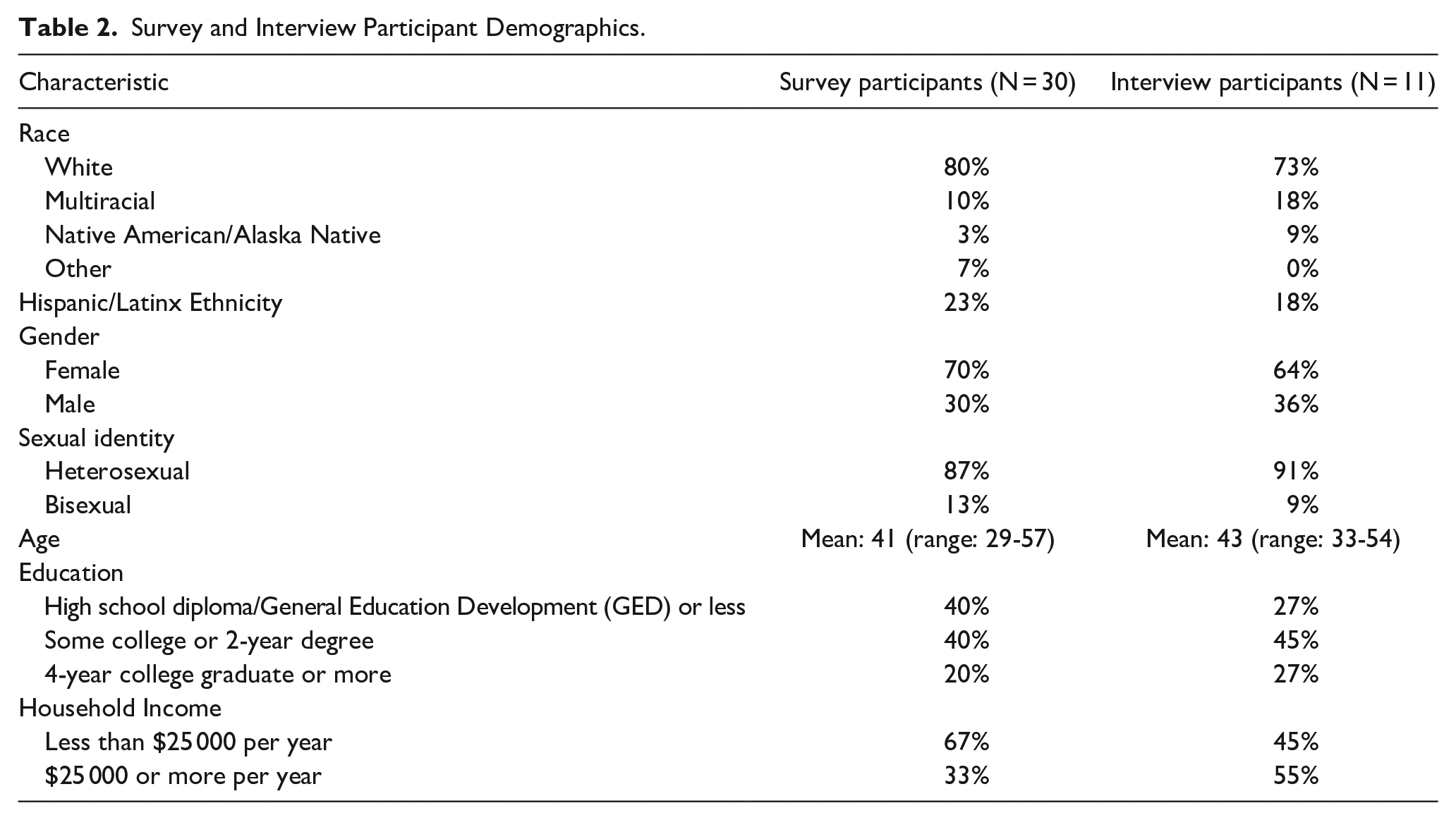

Table 2 displays the characteristics of participants.

Table 2. Survey and Interview Participant Demographics.

App Usage

All 30 participants reported using the app. Over the 8-week intervention period, participants used the app 30 times on average (range: 5 to 55 sessions per participant), spending an average of 3.8 minutes on the app per session and a total of 113 minutes on average across all sessions, with usage ranging from 5 minutes to 313 minutes per participant across all sessions.
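Usage summaries of this kind can be derived from per-session records, as in the sketch below. The input layout (a mapping from study ID to a list of session durations in minutes) and the function name `summarize_usage` are hypothetical. The sketch also assumes that average minutes per session is pooled across all sessions rather than averaged within each participant first, which the text does not specify.

```python
from statistics import mean


def summarize_usage(sessions_by_participant):
    """Summarize app use from a dict of study_id -> list of session minutes.

    Hypothetical helper; pooling sessions for the per-session mean is an assumption.
    """
    if not sessions_by_participant:
        raise ValueError("no participants")
    if any(len(s) == 0 for s in sessions_by_participant.values()):
        raise ValueError("each participant needs at least one session")

    counts = [len(s) for s in sessions_by_participant.values()]
    totals = [sum(s) for s in sessions_by_participant.values()]
    pooled = [m for s in sessions_by_participant.values() for m in s]

    return {
        "mean_sessions": mean(counts),
        "session_range": (min(counts), max(counts)),
        "mean_minutes_per_session": mean(pooled),
        "mean_total_minutes": mean(totals),
        "total_minutes_range": (min(totals), max(totals)),
    }


if __name__ == "__main__":
    # Made-up export data, not study data.
    example = {"A01": [5.0, 5.0], "A02": [2.0, 4.0, 6.0, 8.0]}
    print(summarize_usage(example))
```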

User Experience

Acceptability

Whether participants find an intervention satisfactory or palatable can shape their ongoing involvement in the intervention and use of the app. Achieving intervention effectiveness outcomes (e.g., clinical outcomes) is not possible if an intervention is not acceptable to participants and they discontinue the intervention or do not engage in it with fidelity. 47 Survey data showed that participants generally liked the design of the app, with 83% of participants agreeing that the app was appealing to them, 79% agreeing they welcomed the app, 77% of participants agreeing that they liked the app, and 77% agreeing the app met their approval.

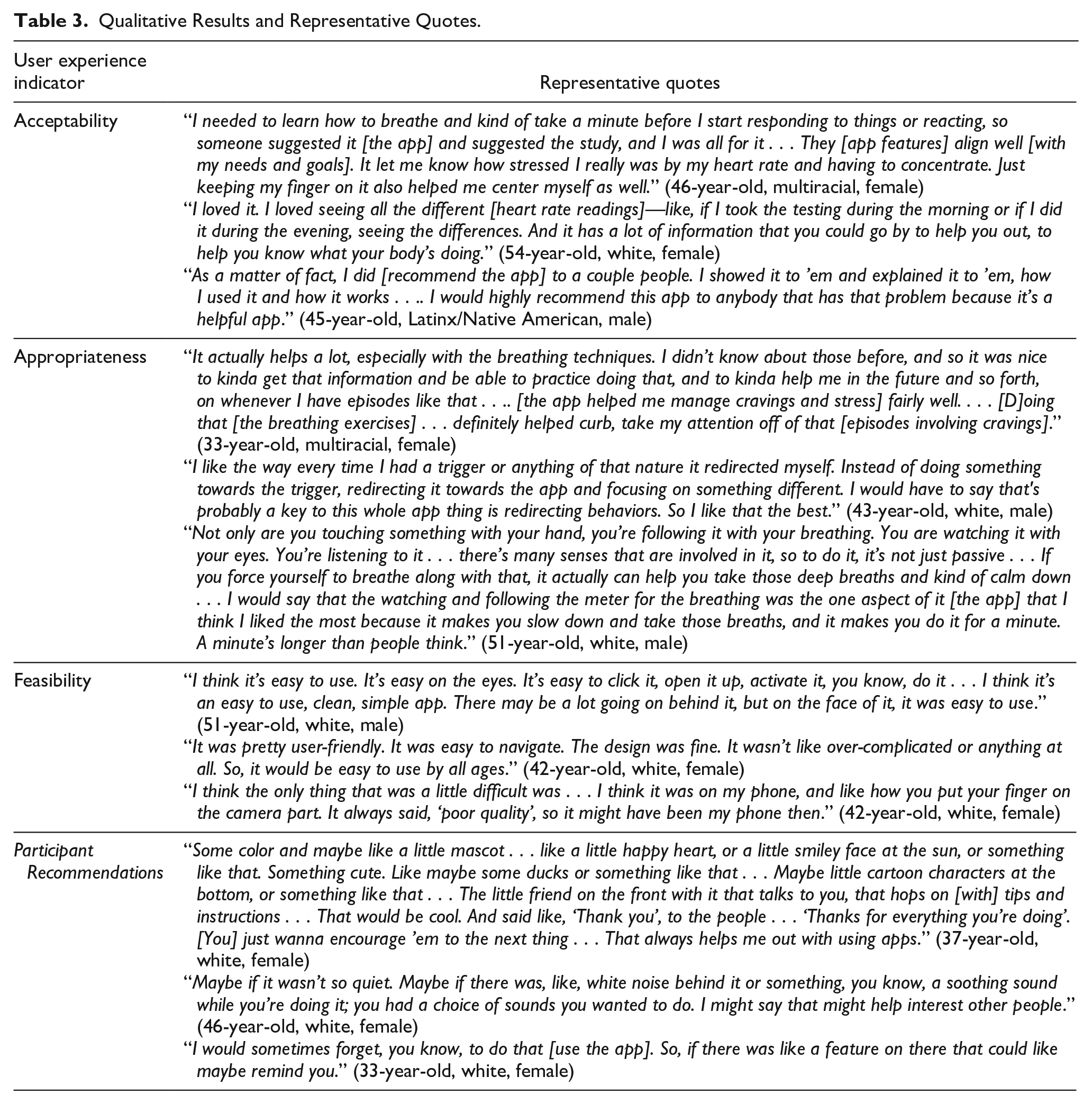

In interviews, participants described how the app’s design and features were satisfactory, explained they understood the app’s purpose, and articulated how the app aligned with their needs (see Table 3). In discussing the app design, participants commented that the app was “neat,” “simple,” and “helpful.” After using the app, most participants reported they would recommend it to others. Participants also appreciated the app’s features, such as showing the differences in their heart rate measurements across time points, how the app collected heart rate data from their finger, and how it required engagement (e.g., guided breathing). Participants described various uses for the app. Some commented on how the app allowed them to monitor heart rate and breathing while experiencing a trigger and provided a tool to calm themselves after a trigger. Another reported that the app aligned with their needs to address stress and gain awareness of their body’s reactions to stress. Although most survey and interview participants found the app acceptable, their primary motivation for engaging in the intervention was often financial, rather than spurred by a desire to address stress, anxiety, or cravings. One participant (34-year-old, multiracial, female) explained, “Personally, I just did it because I needed the money ’cause I’m in recovery, and I’m trying to get back on my feet.” Despite financial incentives driving engagement, participants appreciated the app once they began using it.

Table 3. Qualitative Results and Representative Quotes.

Appropriateness

Appropriateness captures the fit or alignment between an intervention and its end users. 42 Like acceptability, if intervention participants do not find the app suitable or a “good fit,” they may not use the app in a way that is consistent with the intervention’s intended protocol, which would make it difficult to assess whether changes in outcomes arose from misfit between the app and the goals of the individual. In the survey, 89% agreed the app seemed applicable, 85% agreed the app was suitable, 82% agreed the app seemed like a good match, and 79% agreed the app seemed fitting.

In interviews, the team asked participants to assess the app’s utility in managing stress, anxiety, and cravings. Nearly all interview participants shared that the app helped manage cravings or stress and/or supported relaxation. Participants frequently mentioned that the app helped them with stress rather than with cravings. About half of these participants specifically noted that the app helped them manage stress and triggers by encouraging them to focus on their breathing and redirecting their attention. Participants explained the app encouraged them to take an active role in calming themselves by changing their physical space to seek somewhere quiet or by engaging multiple senses (e.g., breathing, touch, sight). One-third of interview participants reported that the app was not helpful in managing stress or cravings. One of these participants did not offer an explanation, and others reported the app was not helpful because they did not find the heart rate statistics measured by the app meaningful or thought the app instructions were insufficient. However, three participants who reported the app was not helpful in managing stress or cravings mentioned that the app was helpful in other ways, such as managing their blood pressure or refocusing their mind. One (54, white, female) explained: “It did help me lower it [my blood pressure] if I was having a hard time. Then I would just do that [use the app]. And it helped me keep my blood pressure in check.”

Feasibility

Feasibility refers to whether an intervention can reasonably be implemented by participants (or in particular settings). 42 If the intended audience cannot do the intervention, then the intervention outcomes cannot be achieved. The surveys and interviews explored participants’ assessments of whether the app was easy and practical to use. In the surveys, 97% of participants agreed the app seemed doable, 89% agreed the app seemed easy to use, 86% agreed the app seemed possible, and 82% agreed the app seemed implementable.

In interviews, most participants agreed that the app was practical and did not take much time to complete. Nearly all participants reported that the app was easy to use, not complicated, and user-friendly. As one participant (43, white, male) succinctly put it, “I couldn’t think of anything easier to use than this.” A few participants who classified themselves as “tech-averse” or not tech-savvy specifically confirmed that they had no problems using the app. The design was self-explanatory, and the few options presented on the app made it easy for participants to follow. Despite ease of use, participants reported two common technological challenges. First, a quarter of participants reported difficulty aligning their fingers properly on their phone camera for the app to register their heart rate information. One participant attributed this to having arthritis in their hands, while another noted that the issue could have been with the quality of their phone. Second, almost a fourth of participants shared that their finger would get too hot when placing it against their phone’s flashlight for heart rate tracking.

Although participants generally found the app feasible to use, about three-fourths also noted elements of their everyday lives presented obstacles to daily app use; these included remembering to use the app, having a busy work or school schedule, having family demands, and being in inappropriate settings for app use, such as work. One participant (34, multiracial, female) explained, “I tried to [use it every day]. I forgot a few days, but I had to do a reminder on the Alexa. At first, I kept forgetting and forgetting, forgetting.” Another participant explained that they were not currently working, making it easier to use the app every day, but that it would be more difficult to do so if they had a job.

Participant Recommendations

Several participants offered recommendations to improve the app by increasing participant engagement and bolstering users’ understanding of the app. Some requested more health trend data, compelling colors, or engaging graphics (e.g., duck, smiley face). A few participants suggested adding an auditory component to the app (e.g., a choice to play white noise or background music), a function to let participants enter words or phrases (e.g., a mantra) they may want to hear, a verbal countdown, or a voice guiding their app usage. Some participants proposed enhancing the guidance for using the app. Suggestions included developing a “how-to” video, adding more guidance on the breathing exercises, and programming pop-ups with directions that walk the participant through app activities. Some recommended adding a reminder notification feature and connecting the app with other devices, like the Fitbit.

Discussion

This study examined the user experience of individuals in recovery who used a smartphone JITI to self-manage stress, anxiety, and drug craving—all of which are risk factors for relapse. Most published results of JITI outcomes do not include in-depth exploration of user experience. This study addresses this significant gap in the literature by presenting findings from interviews that exclusively explored the user experience of the JITI using acceptability, appropriateness, and feasibility indicators. Likert survey questions supplemented the interview data.

Over 77% of participants who completed the survey questions agreed the app was acceptable (i.e., satisfactory). Interview participants stated that they enjoyed the interactive features of the app, would recommend the app to others, and found the app helpful. Survey ratings on the app’s appropriateness (i.e., perceived fit with the participants’ needs) were also high (over 82% agreement). In interviews, most participants mentioned that the app helped them relax or manage stress and cravings. Some interview participants did not find the app helpful in those specific areas but mentioned other benefits, such as lowering their blood pressure. The app’s feasibility (i.e., ease of use) received the highest survey ratings (over 89%). Interview participants appreciated the simple design and stated that it was easy to navigate. The interviews also generated feedback highlighting barriers to using the app and recommendations for improvement. Aspects of the app that negatively affected feasibility were the uncomfortable heat generated by the flashlight used to measure heart rate and participant difficulty aligning their finger to the phone’s camera lens for accurate heart rate readings. Recommendations for improvement included enhancing the app’s user experience by adding colorful graphics to the app design, motivational messages, an instructional video, an audio component, and a reminder feature.

One of our findings was consistent with a previous study 17 that reported qualitative feedback from open-ended survey questions on a mindfulness JITI smoking cessation intervention. The notable similarity was the mechanism by which participants perceived the JITI helped them manage their cravings. Participants in our study and theirs reported that the interventions redirected their attention or distracted them from their cravings. Attention redirection is a key component of mindfulness activities.48,49 The findings from both studies provide evidence that these activities are helpful regardless of the type of activity (awareness strategies vs breathing exercises) or target substance (cigarettes vs other drugs). More broadly, our findings are also consistent with reports of favorable participant feedback from JITI studies that assessed acceptability (i.e., satisfaction) with quantitative surveys. In these studies, participants reported moderate to high satisfaction14,26 and that the interventions were helpful,23,27,50 motivating, 18 usable, 24 and acceptable. 38 These results provide empirical support for further development of JITIs for SUDs. However, we should evaluate these findings with the knowledge that there is large variability in methodological approach, especially in measurement. In the literature, a variety of indicators are used to evaluate user experience, including satisfaction, 23 acceptability, 18 usefulness, 14 and usability. 50 Also, the measures often differ even when the indicator is consistent across studies. For example, some researchers used a few questions (e.g., 4 questions) 38 to assess acceptability, while others have used more than three times as many (e.g., 15 questions). 18 Given this diversity of measurement approaches, future research should focus on consistently using the same psychometrically valid measures to facilitate rigorous comparison of user experience across multiple studies.

Limitations

The findings of our study are noteworthy, but there are limitations. Most of our participants were white, female, and heterosexual. This hinders our ability to generalize our findings to individuals who do not have those social identities. Also, we did not assess data saturation, which limits our confidence that additional interviews would not present new themes to explore. Another limitation is the type of study that generated our results. Our study was a pilot, which used a convenience sample. As such, the participants opted into the study, which may reflect their comfort with mobile phone apps. A study with a randomized sample of individuals would more likely include individuals not predisposed to engaging with apps, which could offer insights into the usefulness of JITIs among individuals with a broader range of technological aptitudes using mobile apps.

Conclusions

Despite these limitations, our study found that individuals in recovery from SUD had an overwhelmingly positive user experience with a smartphone app that delivered breathing exercises for the self-management of stress, anxiety, and cravings. Future trials of this app should address the barriers to feasibility (i.e., ease and practicality of use) reported by participants in interviews. Also, enhancing the design of the app based on participant recommendations could improve intervention adherence and, ultimately, its usefulness in addressing substance relapse among individuals in recovery from SUD.

Supplemental Material

Supplemental material, sj-docx-1-saj-10.1177_29767342241263675 for User Experience of a Just-in-Time Smartphone Resonance Breathing Application for Substance Use Disorder: Acceptability, Appropriateness, and Feasibility by Fiona N. Conway, Heather Kane, Amanda Bingaman, Patrick Kennedy, Elaine Tang, Sheila V. Patel and Jessica D. Cance in Substance Use & Addiction Journal

Footnotes

Author Contributions

FNC conceptualized the study and obtained funding. SVP and FNC created the interview guide, and PK and ET collected the data. PK, ET, and AB conducted the data analysis. FNC, HK, and JDC wrote the initial draft. All authors contributed to reviewing, revising, and approving the submitted manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by Texas Targeted Opioid Response, a public health initiative operated by the Texas Health and Human Services Commission through federal funding from the Substance Abuse and Mental Health Services Administration (SAMHSA) grant award number H79TI085747. The views expressed do not necessarily reflect the official policies of the Department of Health and Human Services or Texas Health and Human Services; nor does mention of trade names, commercial practices, or organizations imply endorsement by the United States or Texas Government. The funder had no role in the design and conduct of the study; collection, management, analysis, and interpretation of the data; and decision to submit the manuscript for publication.

Compliance, Ethical Standards, and Ethical Approval

The study was approved by the University of Texas at Austin’s Institutional Review Board.

References
