Abstract

Quantum computers are expected to contribute more efficient and accurate ways of modeling economic processes. Quantum hardware is currently available at a relatively small scale, but effective algorithms are limited by the number of logic gates that can be used, before noise from gate inaccuracies tends to dominate results. Some theoretical algorithms that have been proposed and studied for years do not perform well yet on quantum hardware in practice. This encourages the development of suitable alternative algorithms that play similar roles in limited contexts. This paper implements this strategy in the case of quantum counting, which is used as a component for keeping track of position in a quantum walk, which is used as a model for simulating asset prices over time. We introduce quantum approximate counting circuits that use far fewer 2-qubit entangling gates than traditional quantum counting that relies on binary positional encoding. The robustness of these circuits to noise is demonstrated. While this paper is mainly about robust simplified quantum circuit designs, we compare some aspects of the results with price change distributions from stock indices, and compare the behavior of circuits with and without mid-measurement to trends in the housing market.

1. Motivation: Quantum Finance Implementations in 2023

Quantum computers are expected to enable more sophisticated and accurate modeling of various financial situations. The reasons for the high expectations for quantum finance are in some cases thoroughly worked-out algorithmically: for example, Egger et al. (2020) survey applications including option pricing and risk management, where Monte Carlo simulation methods are commonly used, and explain how quantum algorithms for amplitude estimation offer a potential quadratic speedup (by reducing the number of samples needed for the variance of the probabilistic outcomes to converge). As with quantum factoring and search, there are solid reasons for expecting quantum computers to perform well at large-scale problems that are especially challenging for classical computing methods.

In some cases, the proposed models are simple and concise enough to be simulated on classical hardware, and now in the early 2020s their behavior can be explored on real quantum computers. However, these models have been small. Using just a 2-qubit photonics processor, Qiang et al. (2016) were able to model a continuous time walk on a connected graph with 4 states. Stamatopoulos et al. (2020) used 3 superconducting qubits for option pricing and Zhu, Johri, et al. (2022) used 6 trapped-ion qubits to perform generative modeling for correlated stock prices.

The scale of such experiments has been particularly limited by qubit availability and quantum gate accuracy. For example, the 3-qubit circuit of Stamatopoulos et al. (2020) was optimized down to 18 2-qubit entangling gates and 33 single-qubit gates, but even with this small circuit, error rates in the results ranged from 62% raw to 21% using Richardson extrapolation for error mitigation. This is not surprising, since the accuracies of the single- and 2-qubit gates were estimated at 99.7% and 97.8% respectively, and $0.997^{33} \times 0.978^{18} \approx 0.61$, so the compounded gate error rate is roughly 40%.
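The compounding argument above can be checked with a few lines of Python. This is a minimal sketch that assumes gate errors are independent and simply multiplies per-gate fidelities; the function name `compounded_fidelity` is illustrative and does not come from the cited work.

```python
def compounded_fidelity(gate_counts_and_fidelities):
    """Multiply per-gate fidelities, assuming independent gate errors."""
    total = 1.0
    for count, fidelity in gate_counts_and_fidelities:
        total *= fidelity ** count
    return total


# (number of gates, estimated fidelity per gate), as quoted in the text:
# 33 single-qubit gates at 99.7% and 18 two-qubit gates at 97.8%.
circuit = [(33, 0.997), (18, 0.978)]
fidelity = compounded_fidelity(circuit)
print(f"compounded fidelity: {fidelity:.3f}, compounded error: {1 - fidelity:.3f}")
```

The same function can be reused to see how quickly the estimate degrades as 2-qubit gates are added, which is the main design pressure discussed in the rest of the paper.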

A safe implementation strategy might be to wait for large-scale fault-tolerant quantum computers to become available, but this runs the risk of missing opportunities in the meantime. Instead, researchers such as Stamatopoulos et al. (2020) and Zhu, Johri, et al. (2022) try to use currently-available quantum hardware, and ask whether implementations can be made robust enough to provide value sooner. In the current NISQ era (Noisy Intermediate-Scale Quantum), the scarce resources include the number of qubits, and also, as seen above, the number of gates, and especially the number of 2-qubit entangling gates. Circuits are sometimes described in terms of width (number of qubits) and depth (number of gates, or layers of gates), and both need to be minimized.

Quantum developers sometimes have many suggested designs to start from: quantum information processing has been explored as an academic field for some decades, and established literature provides many circuit recipes (Nielsen & Chuang, 2002). A natural strategy is to take such designs, consider their NISQ era limitations, and see if there are alternatives that provide some of the same functionality using fewer qubits or gates.

This paper develops some new examples of this approach, starting with the basic example of quantum counting. The central novel contribution of the paper is the set of approximate quantum counting circuits, introduced in Section 7. The motivation is that quantum counting is used as a component in the implementation of quantum random walks, which are proposed as a model for stock prices.

A distinct feature of quantum systems including quantum walks is that they behave differently when they are measured, compared to when they are left to evolve dynamically. Such behavior has been demonstrated with humans in psychology experiments (Kvam et al., 2015; Yearsley & Pothos, 2016), and is an important feature in quantum cognition and economics (Busemeyer & Bruza, 2012; Orrell, 2020). A later section investigates the simulated behavior of the approximate counting circuits with mid-measurement, and shows that they exhibit desirable behavior (in this case, that more frequent measurement tends to reduce the chances of large changes). In a departure from many quantum cognition models, the macro effects of beliefs on transactions and prices cannot be described as the decision of a particular cognitive agent, and it may be more appropriate to think of quantum models as representing the beliefs of whole groups of buyers and sellers, and measurements as decisions observed by the whole market. This theme is considered as part of introducing quantum walks in finance in Section 3, and the effects of measurement in Section 7, especially with reference to the housing market.

To begin with, the next few sections review some of the basic quantum logic gates and how they are put together into quantum circuits, the use of random walks and quantum walks in finance, and how these come together to emphasize the practical quantum counting problem.

2. Quantum Gates Used in this Paper

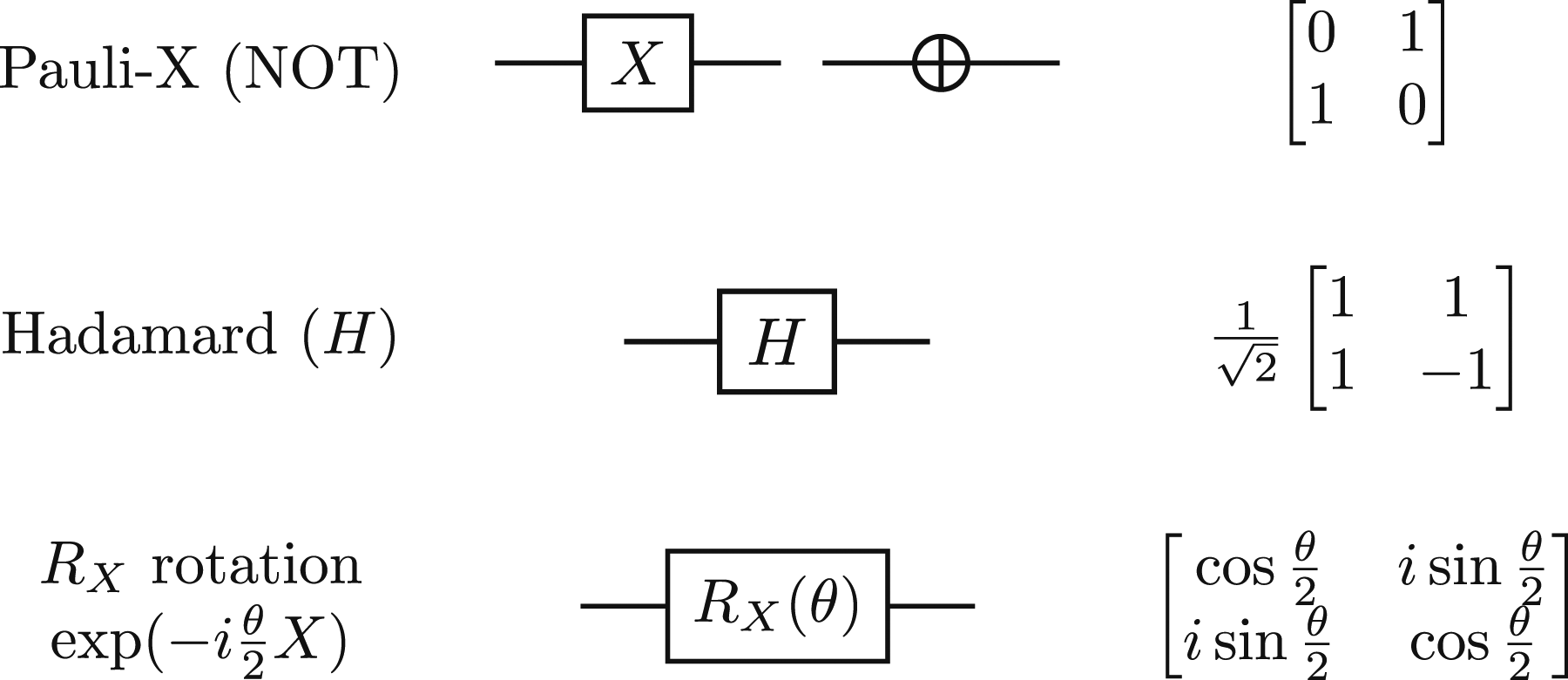

In mathematical terms, the key features that distinguish quantum from classical computers are superposition and entanglement. This section gives a brief summary of how these properties are worked with in quantum circuits. Some familiarity with quantum mechanics, especially Dirac notation, is assumed, so that |0⟩ and |1⟩ are the basis states for a single qubit whose state is represented in the complex vector space $\mathbb{C}^2$.

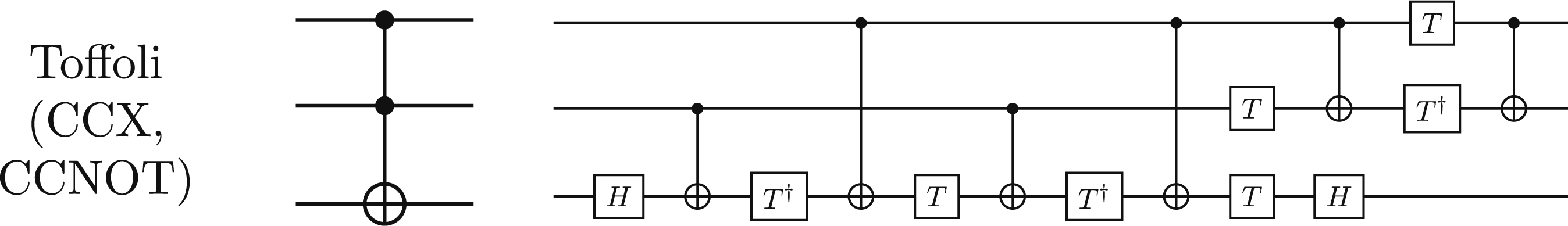

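Superposition can be realized in a single qubit: the state $\alpha|0\rangle + \beta|1\rangle$, with complex amplitudes satisfying $|\alpha|^2 + |\beta|^2 = 1$, is a superposition of the basis states |0⟩ and |1⟩.

Figure 1: Some standard single-qubit gates and their corresponding matrices, which operate on the superposition state $\alpha|0\rangle + \beta|1\rangle$.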

Entanglement is a property that connects different qubits. Since the 1930s, quantum entanglement has gone from a hotly-disputed scientific prediction, to a statistical property demonstrated with large ensembles, to a connection created between pairs of individual particles, to a working component in quantum computers. All modern quantum computers have some implementation of an entangling gate, and only one kind is really needed, because all possible 2-qubit entangled states can be constructed mathematically by combining appropriate single-qubit gates before and after the entangling gate. Furthermore, a single 2-qubit entangling gate and a suitable set of single-qubit gates form a universal gate set for quantum computation.

The CNOT (controlled-NOT) gate of Figure 2 is the most common example of a 2-qubit gate in the literature. In the standard basis, its action is sometimes described as performing a NOT operation on the target qubit if the control qubit is in the |1⟩ state. Thus, as well as causing entanglement, it is sometimes thought of as a kind of conditional operator in quantum programming. Entanglement is the crucial property that distinguishes quantum computing algorithmically, because predicting the probability distributions that result from quantum operations with entanglement can become exponentially hard for classical computers. In simpler terms, quantum computing is special because it offers special kinds of interference, not because it offers special kinds of in-between-ness.

Figure 2: The CNOT gate, a 2-qubit entangling gate that acts upon the joint state of the control and target qubits.

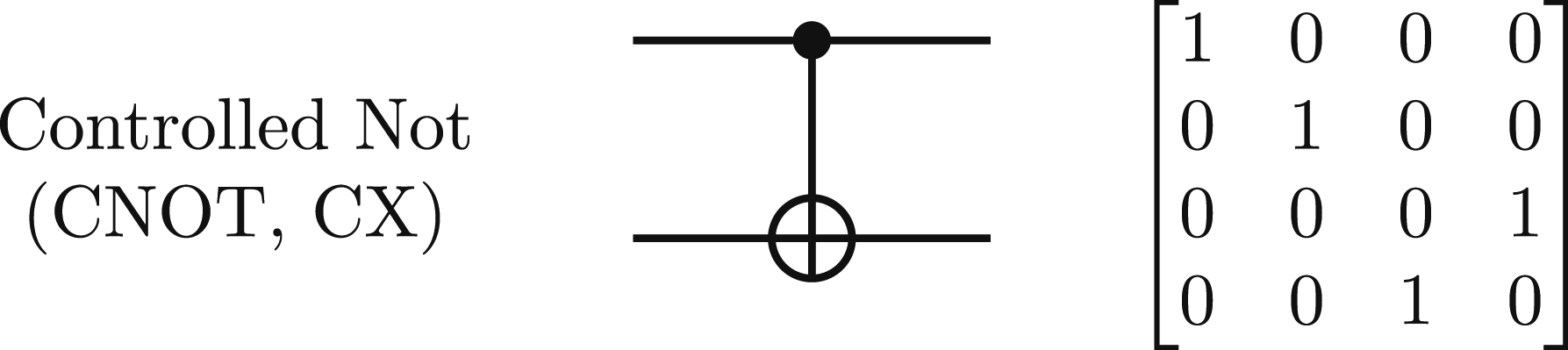

A quantum circuit consists of a register of qubits, and a sequence of logic gates that act on these qubits. For example, the circuit in Figure 3 prepares the famous Bell state (named after physicist John Bell, whose pioneering theorem motivated experiments that demonstrated real entanglement). It maps the input state |00⟩ to the state $(|00\rangle + |11\rangle)/\sqrt{2}$.

Figure 3: Hadamard and CNOT gates in sequence make a quantum circuit that prepares the Bell state $(|00\rangle + |11\rangle)/\sqrt{2}$.
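As a concrete illustration, the action of a Hadamard gate followed by a CNOT gate on |00⟩ can be traced with plain Python lists. This is a sketch only: the amplitude ordering [|00⟩, |01⟩, |10⟩, |11⟩] and the helper names are choices made here for illustration, and the first qubit is assumed to be the CNOT control.

```python
import math


def apply_hadamard_first(state):
    """Hadamard on the first qubit of a 2-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    a00, a01, a10, a11 = state
    return [s * (a00 + a10), s * (a01 + a11), s * (a00 - a10), s * (a01 - a11)]


def apply_cnot(state):
    """CNOT with the first qubit as control: swaps the |10> and |11> amplitudes."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]


# Start in |00>, apply H to the first qubit, then CNOT.
bell = apply_cnot(apply_hadamard_first([1.0, 0.0, 0.0, 0.0]))
probabilities = [abs(a) ** 2 for a in bell]
print(bell, probabilities)
```

Only the |00⟩ and |11⟩ amplitudes are non-zero in the result, so the two qubits are always measured with the same value, which is the signature of the Bell state.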

Quantum circuits finish with measurements that record the 0 or 1 state of at least some of the qubits, and output this as classical information. (Some platforms also support measuring qubits before the end of a circuit.) The measurement outcomes are probabilistic, following the Born rule. A run of a single quantum circuit including outputting one sample of measurements is typically called a shot.
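The relationship between the Born-rule probabilities and repeated runs can be imitated classically. The sketch below is an assumption-laden stand-in for real hardware: it takes a list of outcome probabilities (here, those of a Bell state) and draws samples from it, with the hypothetical helper `run_shots` returning counts keyed by bit string.

```python
import random
from collections import Counter


def run_shots(probabilities, shots, seed=None):
    """Sample measurement outcomes from a list of basis-state probabilities."""
    rng = random.Random(seed)
    n_qubits = (len(probabilities) - 1).bit_length()
    outcomes = [format(i, f"0{n_qubits}b") for i in range(len(probabilities))]
    samples = rng.choices(outcomes, weights=probabilities, k=shots)
    return Counter(samples)


# Bell-state probabilities over the outcomes 00, 01, 10, 11.
counts = run_shots([0.5, 0.0, 0.0, 0.5], shots=1000, seed=7)
print(counts)
```

Increasing `shots` brings the observed frequencies closer to the underlying probabilities, which is why the number of shots is reported alongside the results later in the paper.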

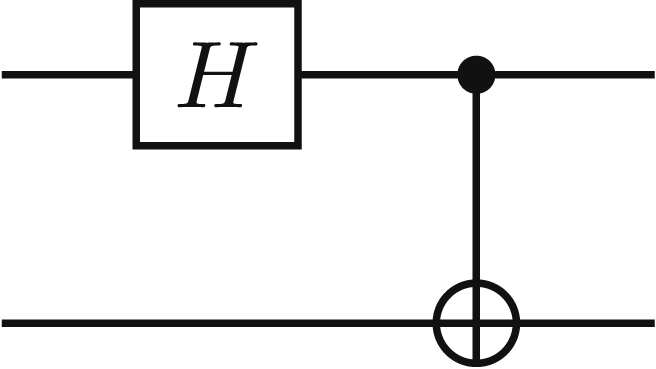

There are many standard gate recipes and equivalences. In particular, larger operators are often thought of as distinct gates in their own right, an important example being the 3-qubit Toffoli gate of Figure 4. This is like an extended CNOT gate: it has 2 control qubits instead of 1, and performs an X-rotation/NOT operation on the target qubit if both the control qubits are in the |1⟩ state. The decomposition in Figure 4 shows that 5 CNOT gates are needed for each Toffoli gate. There are variants of this, but as a general rule of thumb, the error rate of a Toffoli gate will be at least 4 times the error rate of the 2-qubit gates from which it is assembled. Toffoli gates are particularly important for binary arithmetic, as seen in Section 5.

Figure 4: The Toffoli gate diagram, showing that it performs a NOT operation on the target qubit if both the control qubits are in the |1⟩ state. On the right is one of its standard decompositions into CNOT and single-qubit gates, in which 5 CNOT gates are needed to implement one Toffoli gate.

In the NISQ era, such considerations are pervasive: there is a ubiquitous tradeoff between circuit complexity (the number of gates needed to execute a given algorithm) and expected circuit accuracy (the more gates we use, the more inaccurate our results become).

3. Random Walks, Stock Prices, and Quantum Walks

This section briefly reviews the role of random walks and quantum walks in the modeling of asset prices. For a more thorough introduction, see Orrell (2020, Ch 7, 8). A random walk is a mathematical process that constructs a path through some base space composed of a succession of randomly-chosen steps (Xia et al., 2019). Random walks have been used to model a range of scenarios including physical (Brownian) motion, population dynamics, and web browsing sessions, though they were first proposed for modeling prices of stocks in the Paris Bourse in the work of Bachelier (1900). This was formalized in the Black-Scholes model for pricing financial options: the paper introducing the Black-Scholes formula assumes that “The stock price follows a random walk in continuous time with a variance rate proportional to the square of the stock price” (Scholes & Black, 1973, §2(b)).

A classical random walk with unit steps up-or-down leads to a binomial distribution, which at large scales is approximated by a corresponding normal distribution. Thus, for large simulations, the simplifying assumption of a fixed size for each step is immaterial, because the overall distribution is normal. However, the most standard formulation for the Black-Scholes model assumes that the price change for each unit of time is not fixed, but (log-)normally distributed. The Black-Scholes formula has been widely used as a pricing tool: indeed, over-reliance on the model, and the amounts of money entrusted to it, have been found to be significant contributors to the 2008 market crash (Cady, 2015; Wilmott & Orrell, 2017). One particular observation is that the assumption of a constant rate of volatility is not borne out by the long-tail of variations in strike-price, leading to the claim that a ‘volatility smile’ distribution is a more faithful model in practice (Orrell & Richards, 2023).
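The binomial behavior of a unit-step random walk is easy to check numerically. The following is a small sketch, with illustrative parameter choices (100 steps, 5000 walkers, a fixed seed), that simulates many walks and compares the sample mean and variance of the end positions with the values expected for a sum of independent ±1 steps (mean 0, variance equal to the number of steps).

```python
import random
import statistics


def random_walk_endpoints(steps, walkers, seed=0):
    """End positions of `walkers` independent walks of `steps` unit steps."""
    rng = random.Random(seed)
    return [sum(rng.choice((-1, 1)) for _ in range(steps)) for _ in range(walkers)]


endpoints = random_walk_endpoints(steps=100, walkers=5000)
mean = statistics.fmean(endpoints)
variance = statistics.pvariance(endpoints)
print(f"mean: {mean:.2f}, variance: {variance:.1f} (expected about 0 and 100)")
```

Because the variance grows only linearly with the number of steps, the typical distance traveled grows as the square root of the number of steps, which is the benchmark against which quantum walks are usually compared.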

Quantum random walks were introduced in the 1990s (Aharonov et al., 1993) as a counterpart for classical random walks, and have become a rich and established area of quantum modeling (Venegas-Andraca, 2012). In economics, quantum walks have been proposed as an alternative that takes into account key factors including varying beliefs or opinions about the future, and the transactions between different traders (Orrell, 2020, Ch 7). Another anticipated benefit of these quantum walk models is that they will work natively on quantum computers, when large fault-tolerant quantum hardware is available (Orrell, 2021). Quantum walks are thus expected to be a powerful component in pricing models: for example, they may be used to model the input distributions on which the Monte Carlo methods proposed by Stamatopoulos et al. (2020) depend.

Often the term ‘quantum walk’ is preferred to the term ‘quantum random walk’, not only for brevity, but because the internal state of a quantum walk is typically an entirely deterministic superposition of different states. For example, a walk that starts in position 0 with a 50-50 chance of going in either direction will, after one step, be in a superposition of the states representing positions −1 and +1, with equal amplitudes in the superposition. The quantum state vector representing this superposition can be predicted exactly: it is only the measurement outcome that is probabilistic, when one of these distinct possibilities is randomly selected.

In the most standard presentation, a quantum walk consists of a

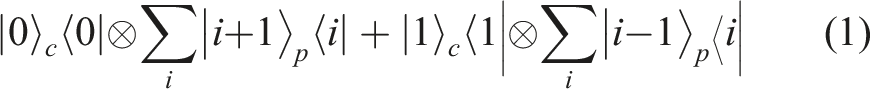

This leads to an elegant expression for the

The

The quantum walk model can be used in two different but related ways. Firstly, it can be used to model evolving beliefs or opinions, for example, subjective estimates of what a particular asset will be worth at a given future time. Different and even contradictory beliefs can be modeled in the quantum walk as a coherent superposition of different possible values. Secondly, it can be used to model actual asset prices, as observed in recorded transactions. A transaction behaves like a measurement on the quantum walk state, which forces the coherent superposition into a particular state. Some ways of inserting and tuning the level of decoherence are discussed by Orrell (2021), noting in particular that if the quantum walk is measured at every time increment, decoherence is complete and the quantum walk collapses to the classical version.
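The contrast between a coherent walk and a walk that is measured at every step can be sketched with a small ideal simulation. The code below is an illustration under stated assumptions, not the circuits used in this paper: it uses a Hadamard coin, starts at position 0 with the coin in state 0, lets coin state 0 step up and coin state 1 step down, and treats measurement as a full collapse of both coin and position. All function names are hypothetical.

```python
import math
from collections import defaultdict


def hadamard_walk_step(amplitudes):
    """One coherent step: Hadamard coin toss, then coin-controlled shift."""
    s = 1 / math.sqrt(2)
    tossed = defaultdict(complex)
    for (coin, pos), amp in amplitudes.items():
        # H|0> = (|0> + |1>)/sqrt(2),  H|1> = (|0> - |1>)/sqrt(2)
        tossed[(0, pos)] += s * amp
        tossed[(1, pos)] += s * amp * (-1 if coin == 1 else 1)
    shifted = defaultdict(complex)
    for (coin, pos), amp in tossed.items():
        # Assumed convention: coin 0 steps up, coin 1 steps down.
        shifted[(coin, pos + 1 if coin == 0 else pos - 1)] += amp
    return dict(shifted)


def position_distribution(steps, measure_each_step=False):
    """Probability of each position after `steps` steps."""
    if not measure_each_step:
        amplitudes = {(0, 0): 1.0 + 0j}
        for _ in range(steps):
            amplitudes = hadamard_walk_step(amplitudes)
        joint = {state: abs(amp) ** 2 for state, amp in amplitudes.items()}
    else:
        joint = {(0, 0): 1.0}
        for _ in range(steps):
            collapsed = defaultdict(float)
            for state, prob in joint.items():
                # Measuring after every step removes interference between paths.
                for new_state, amp in hadamard_walk_step({state: 1.0 + 0j}).items():
                    collapsed[new_state] += prob * abs(amp) ** 2
            joint = collapsed
    positions = defaultdict(float)
    for (_, pos), prob in joint.items():
        positions[pos] += prob
    return dict(positions)


def spread(distribution):
    """Variance of position under a distribution {position: probability}."""
    mean = sum(p * x for x, p in distribution.items())
    return sum(p * (x - mean) ** 2 for x, p in distribution.items())


coherent = position_distribution(50)
measured = position_distribution(50, measure_each_step=True)
print(f"variance, coherent: {spread(coherent):.1f}; measured: {spread(measured):.1f}")
```

With measurement at every step the variance equals the number of steps, as for a classical walk, while the coherent walk spreads much faster. With this asymmetric starting coin the coherent distribution is lopsided; a symmetric initial coin state would be needed to produce balanced tails.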

An interesting feature of long, coherent quantum walks is that the distributions become two-tailed, as do those of the quantum harmonic oscillator at higher energy levels (Orrell, 2020, Ch 10). Quantum dynamic models have been used to represent several cognitive scenarios (Busemeyer & Bruza, 2012, Ch 9), and oscillator models in particular have been used to model fluctuations in the stock market (Orrell, 2022). It is intriguing that the distributions produced by quantum walks with and without decoherence are similar to those produced by oscillator models at high and low energy levels.

This has provided considerable motivation for implementing quantum walk and oscillator models. However, experiments in simulating quantum walks and harmonic oscillators on real quantum computers have been very small so far, restricted to just 2 or 3 qubits, and have reported very noisy results using superconducting hardware (Kadian et al., 2021; Puengtambol et al., 2021; Qiang et al., 2016). The reasons for this are explained in the next section.

4. Quantum Counting and the Challenge of Recording Position

To simulate a quantum walk using repeated applications of the shift operator in equation (1), we need to model tossing a coin, and tracking position. The coin-toss is easy for today’s quantum computers to implement effectively. For example, we use a single qubit and a Hadamard gate which acts like a ‘beam-splitter’, putting the coin into a superposition of |0⟩ and |1⟩ states.

The bigger challenge for quantum computers today is tracking the position: in other words, the quantum counting problem (Haven et al., 2017, Ch 4). For non-negative numbers, the states |

The most traditional way to represent numbers on a computer is to use some form of binary positional notation. For example, the binary expression 110 represents the number 6 (or the number 3, if the bits are read in reverse order). Quantum binary ‘adder circuits’ were designed by Feynman in the early papers that first motivated quantum mechanical computing (Feynman, 1986), but the ongoing presence of errors in NISQ-era machines limits the number of steps we can reliably count (Orts et al., 2020).

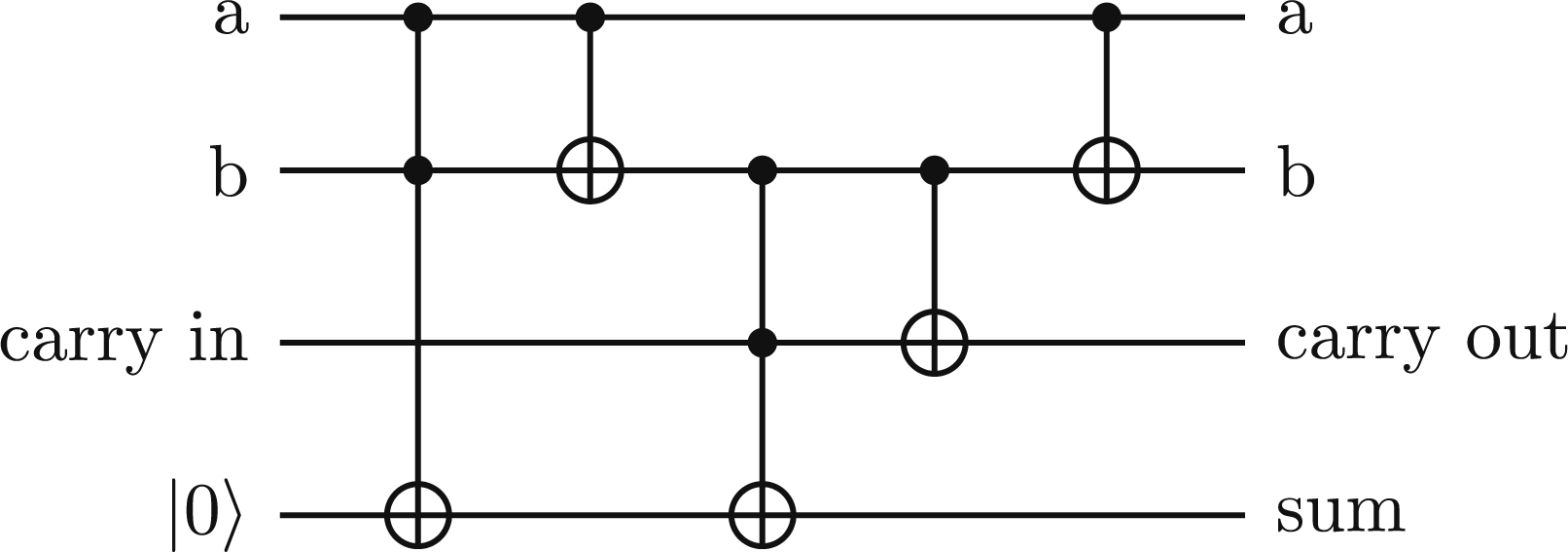

Choosing a binary positional encoding, as used in classical computing, makes quantum counting very susceptible to 2-qubit gate errors, because manipulating binary encodings takes a lot of entanglement and coordination between qubits. To compute the sum of two binary numbers, a full adder circuit such as the one in Figure 5 is needed for each bit position.

Figure 5: A quantum full adder circuit, first introduced by Feynman (1986), uses 2 Toffoli and 3 CNOT gates, which is at least 11 2-qubit gates.
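The logic of a reversible full adder built from 2 Toffoli and 3 CNOT gates can be checked on classical bits. The gate ordering below is one common arrangement and is an assumption here; the circuit in the figure may order its gates differently. The fourth bit is an ancilla that starts at 0 and receives the carry.

```python
def toffoli(bits, control_1, control_2, target):
    """Flip `target` if both control bits are 1."""
    if bits[control_1] and bits[control_2]:
        bits[target] ^= 1


def cnot(bits, control, target):
    """Flip `target` if the control bit is 1."""
    if bits[control]:
        bits[target] ^= 1


def full_adder(a, b, carry_in):
    """Return (sum_bit, carry_out) using 2 Toffoli and 3 CNOT gates."""
    bits = [a, b, carry_in, 0]  # bits[3] is the ancilla for the carry
    toffoli(bits, 0, 1, 3)      # carry picks up a AND b
    cnot(bits, 0, 1)            # bits[1] becomes a XOR b
    toffoli(bits, 1, 2, 3)      # carry picks up (a XOR b) AND carry_in
    cnot(bits, 1, 2)            # bits[2] becomes a XOR b XOR carry_in
    cnot(bits, 0, 1)            # restore bits[1] to b
    return bits[2], bits[3]


for a in (0, 1):
    for b in (0, 1):
        for c in (0, 1):
            print(a, b, c, "->", full_adder(a, b, c))
```

Each extra bit of the register needs another copy of this pattern, so the 2-qubit gate count grows with both the register width and the number of steps counted.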

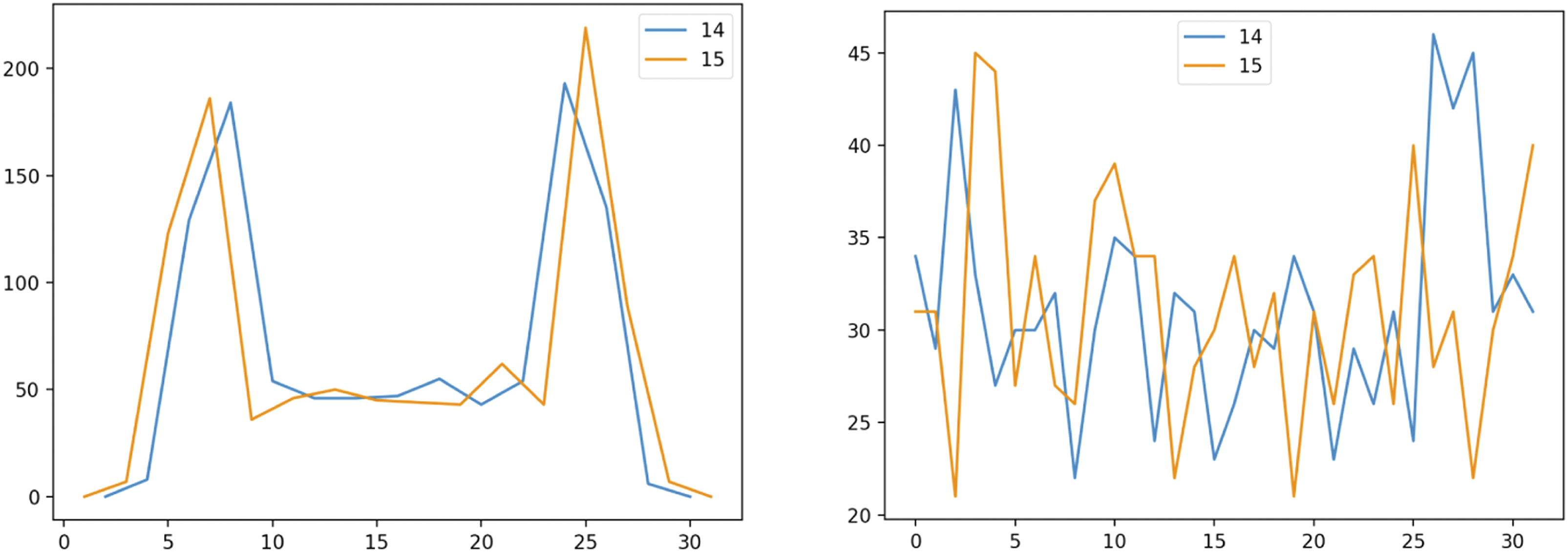

Error rates with quantum counting can thus undermine the simulation of quantum walks, and block this application of quantum finance. The problem is demonstrated in Figure 6, which compares quantum walks with ideal outcomes and with noise. This shows the vulnerability of the quantum counting process to noise after a handful of steps.

Figure 6: Ideal simulated quantum walk after 14 and 15 steps (left), compared with results simulated with expected noise (right). The ideal distribution has the two-tailed peaks characteristic of a quantum walk or harmonic oscillator, but this quickly gets lost with noise.

There are many optimizations and alternatives. We expect progress in quantum hardware to enable greater fidelity and stability, and eventually mid-circuit quantum error-correction should make the current problems with quantum counting obsolete, but this comes at the cost of waiting for fault-tolerant quantum computing. Quantum addition algorithms can be optimized (Cuccaro et al., 2004; Gidney, 2018), and the incremental operation of counting can be made simpler than repeated full-register addition (Li et al., 2014). An interesting benchmark challenge could be to design and evaluate quantum circuits and see how far they can count.

5. Approximate Quantum Counting: Fault-Tolerant Circuits for Tracking Position

By now, the central modeling problem of this paper should be clear: we would like to be able to model a quantum walk on a quantum computer, but the use of positional binary notation to represent integer quantities requires ‘increment’ and ‘decrement’ operators that require too many entangling gates to give accurate results on current quantum hardware.

To avoid these pitfalls, we introduce alternative circuit designs that can also be used for recording position in a random walk. Instead of trying to ensure that every move goes up and down by exactly one step on the position axis, the position register is incremented using some gate combination that is likely to move the position by some amount that is generally positive for upward steps, and negative for downward steps. Another way to describe this is that instead of putting all the randomness in the coin toss and following this with deterministic shift operators, we toss a random coin and then combine this with a somewhat-random shift operator. For larger circuits, such methods can give a walk that goes up and down more reliably than the results we get if we try to insist that the position changes by exactly one unit at every step.

The reader should note that all the results from the circuits in this section are statistical in nature, so the results are naturally noisy in various ways. Some of the randomness is purely quantum, in that different runs of the same circuit are expected to give different measurements according to the Born rule, and running jobs with more shots gives closer statistical approximations to the ‘real’ quantum distribution. For some of the design patterns in this section, randomness is also due to the way the circuits are constructed, so this randomness is expected and is classical in nature. In addition, NISQ-era results include noise from hardware errors. It is not always immediately obvious which sources of noise or randomness are most impactful for a given design, though some of the key features are noted.

5.1. Arc Counter Circuits

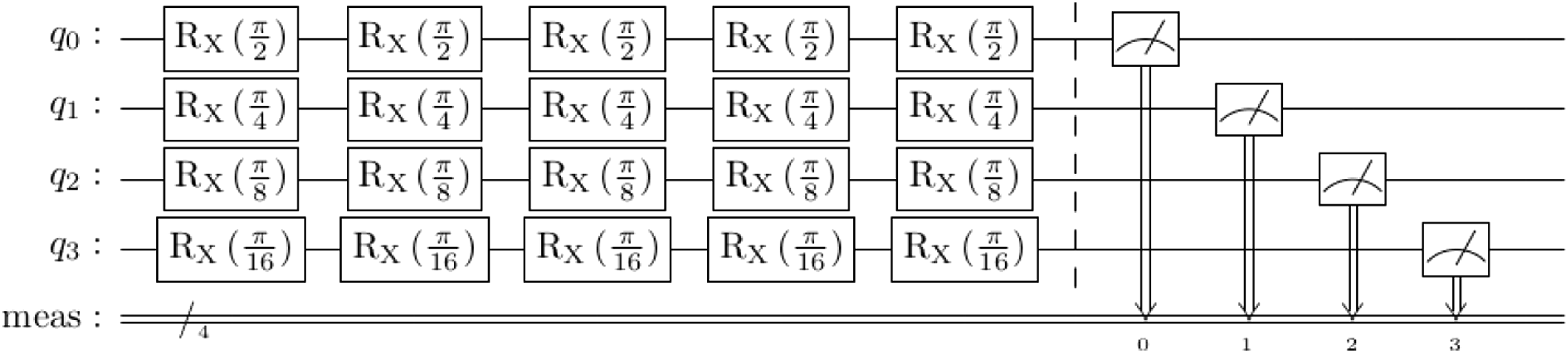

The Arc Counter circuit design uses only single-qubit rotations throughout. Such a representation is sometimes called a rotation encoding (Schuld & Petruccione, 2021). It is particularly easy to implement for a modest number of features, and can be incremented as new feature weights are encountered.

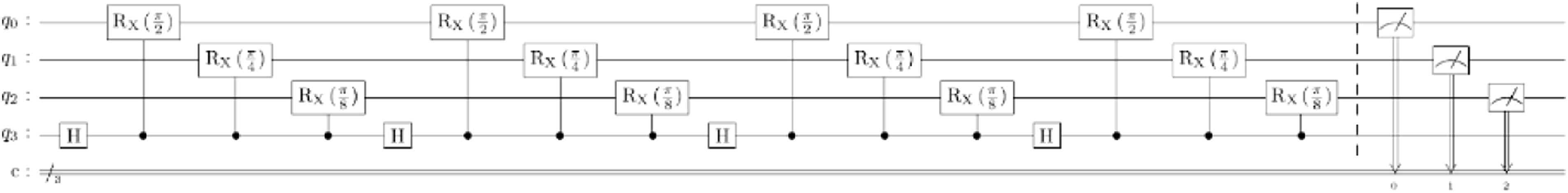

To use a rotation encoding for counting, qubits are rotated through particular arc-lengths or angles at each incremental step. Each qubit is used to represent one digit in a binary register, where each bit is twice as significant as its predecessor. At each step, each qubit traverses an arc that is inversely proportional to that qubit’s significance, as in Figure 7. This means that the least significant qubit rotates fastest, and each successive qubit rotates at half the rate of its predecessor.

Figure 7: Arc counter circuit.

A good analogy for this representation is to think of each qubit as one of the hands on a traditional analog clock. On an analog clock, the minute hand cycles at 12 times the speed of the hour hand, and the second hand 60 times faster still, whereas in our binary clock, the ratio between the speed of rotation of each successive pair of ‘hands’ is 2:1. This analogy works well for the standard binary positional notation for integers, which can be thought of as a binary digital clock. In a digital register (or an abacus), the digits logically depend on one another for correct incrementing, because we need to know that one register is full before we increment the next. By contrast, the hour hand on a clock does not ‘carry’ information when the minute hand completes a cycle — it just rotates at its own slower pace. Thus the rotation encoding clock-based design requires much less coordination (and hence entanglement) between the qubits.

This comes at a representational cost — the register does not represent exact integers, and random variations in the outputs are expected, because many fractional angles are used throughout the circuit. (This is true for the basic counting operation, irrespective of whether the counting is coupled with a ‘coin toss’ operation).

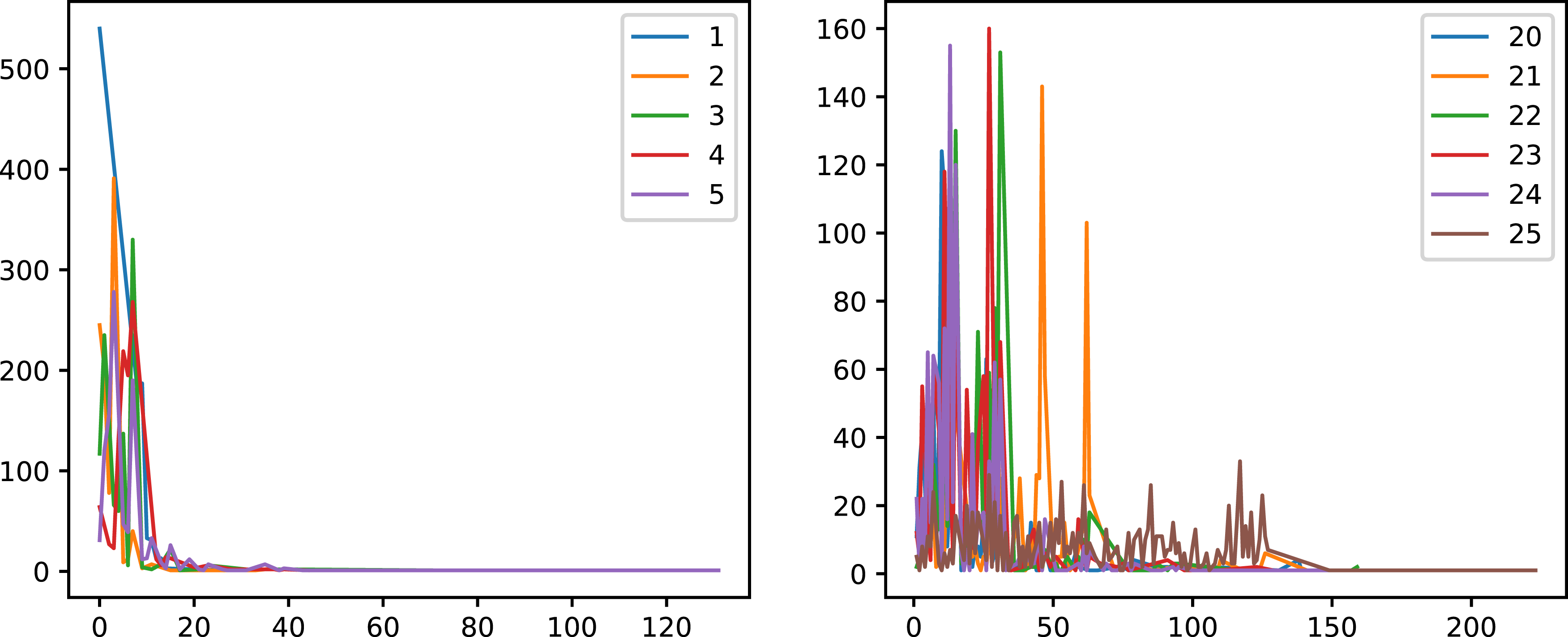

Sample results from a quantum walk with an 8 qubit register are shown in Figure 8. Statistically noteworthy properties include:

• The mean distance from the starting point generally increases with the number of steps.
• In some cases, the position appears to jump ahead, because a high-order qubit is measured in the |1⟩ state. This can happen (with low probability) after just a single step.
• There are sometimes peaks in the distributions after specific powers of two or their combinations (e.g., peaks at 48, 64, 96). It may be possible and desirable to find ways to smooth out these peaks.

Figure 8: Quantum walk results after different numbers of steps with arc counter circuit and an 8-qubit register. 1000 shots for each number of steps.

Since there are no 2-qubit entangling gates, error rates are lower, but there’s also no physical quantum advantage from this design — it is easy to model this distribution on a classical computer. It’s possible that such distributions might be useful models for random processes, but this would not require quantum computers to simulate.
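Because the qubits are never entangled, each one can be modeled independently, which is what makes a classical simulation straightforward. The sketch below assumes a specific angle schedule that is not stated in the text: qubit k (with k = 0 the least significant) is rotated by π / 2^k per step, so that it reaches |1⟩ after exactly 2^k steps and its probability of being measured as 1 after s steps is sin²(sπ / 2^(k+1)). The function names are illustrative.

```python
import math
import random


def prob_one(qubit, steps):
    """P(measure 1) for counter qubit `qubit` (0 = least significant)."""
    # Assumed schedule: qubit k turns by pi / 2**k per step.
    half_angle = steps * math.pi / 2 ** (qubit + 1)
    return math.sin(half_angle) ** 2


def sample_arc_counter(steps, n_qubits=8, shots=1000, seed=0):
    """Sample register values by measuring each qubit independently."""
    rng = random.Random(seed)
    probs = [prob_one(k, steps) for k in range(n_qubits)]
    return [
        sum((rng.random() < p) << k for k, p in enumerate(probs))
        for _ in range(shots)
    ]


def expected_position(steps, n_qubits=8):
    """Mean register value: each bit contributes 2**k times its P(1)."""
    return sum(2 ** k * prob_one(k, steps) for k in range(n_qubits))


for steps in (1, 2, 4, 8):
    samples = sample_arc_counter(steps)
    print(steps, round(expected_position(steps), 2), sum(samples) / len(samples))
```

Under this schedule a high-order qubit has a small but non-zero chance of being measured as 1 even after one step, which matches the occasional jumps ahead noted in the results above.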

5.2. Reversal and Superposition by Classical Post-Processing

An important feature of the traditional ‘binary adder’ circuit components is that they are able to decrement (subtract) as well as increment (add). The arc counter circuit, and the others below, do not support this feature. The logical work to guarantee that a change from 01111111 to 10000000 happens in-concert for all of the bits is precisely what we’ve given up, which makes it much harder to orchestrate a difference between positive and negative steps with large distances.

As noted by Haven et al. (2017, Ch 4), it is natural for quantum systems to have a lowest state which we may call |0⟩, and if we want to generate a full set of integers including the negative ones, these can be constructed as differences between positive natural numbers. This leads to an alternative method for simulating random walks that can evolve in both directions. We use two quantum circuits, one for the ‘up’ steps and one for the ‘down’ steps, and subtract the results from the down circuit from the results from the up circuit as a classical post-processing step. The ‘up’ and ‘down’ circuits can even be configured with different ‘clock speeds’, which has been done in the example of Figure 9.

Figure 9: Two-directional arc walk circuit from combining positive and negative distributions. 1000 shots for each number of steps.
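The post-processing step itself is simple subtraction of paired samples. The sketch below stands in for the two circuits with a hypothetical classical sampler, assuming the same rotation schedule as an unentangled arc counter (qubit k turning by `speed` × π / 2^k per step); the speed values 1.0 and 0.8 are arbitrary illustrations, not the settings used for the figure.

```python
import math
import random


def sample_counter(steps, speed, rng, n_qubits=8):
    """One measured value from a hypothetical arc counter running at `speed`."""
    value = 0
    for k in range(n_qubits):
        p_one = math.sin(speed * steps * math.pi / 2 ** (k + 1)) ** 2
        if rng.random() < p_one:
            value += 2 ** k
    return value


def two_way_walk(steps, shots=1000, up_speed=1.0, down_speed=0.8, seed=0):
    """Subtract 'down' samples from 'up' samples, shot by shot."""
    rng = random.Random(seed)
    ups = [sample_counter(steps, up_speed, rng) for _ in range(shots)]
    downs = [sample_counter(steps, down_speed, rng) for _ in range(shots)]
    return [u - d for u, d in zip(ups, downs)]


positions = two_way_walk(steps=5)
print(min(positions), max(positions), sum(positions) / len(positions))
```

The subtraction happens entirely after measurement, so it adds no gates to either circuit; the cost is that two jobs are run for each two-directional result.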

5.3. Arc Walk Counter Circuit

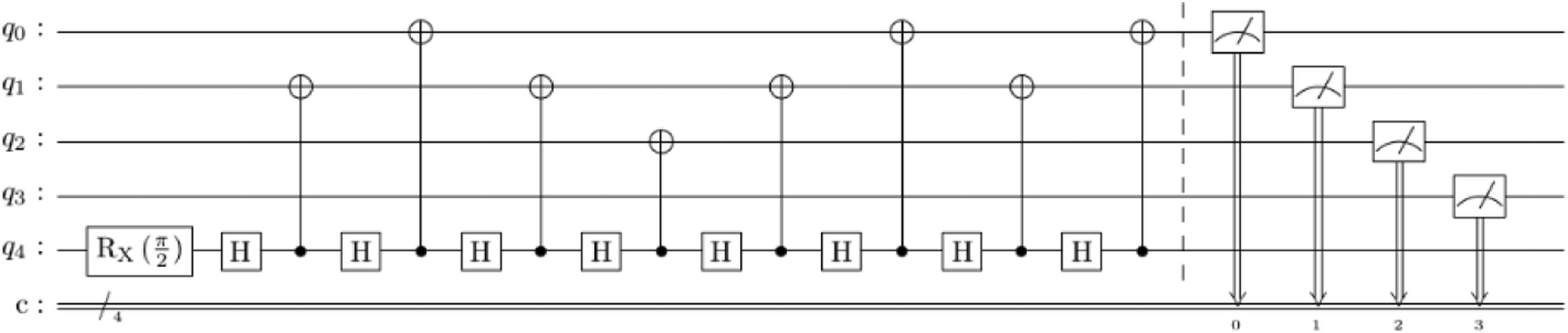

This circuit design combines the arc rotations of the Arc Counter design above, with the ‘Hadamard quantum coin’ prevalent in the quantum walk literature. At each step, a Hadamard gate ‘tosses a coin’, and if the outcome is ‘heads’, or |1⟩, a controlled rotation is performed on each of the other qubits, following the same pattern of angles as in the Arc Counter circuit above. This gives the circuit pattern of Figure 10.

Figure 10: Arc walk counter circuit.
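An ideal (noise-free) version of this pattern is small enough to simulate directly with a state vector. The sketch below makes several assumptions that are not confirmed by the text: the rotations are Y-rotations, counter qubit k is rotated by π / 2^k per step, and the coin is stored in the lowest bit of the state index. The helper names are illustrative.

```python
import math


def apply_single_qubit(state, qubit, matrix, control=None):
    """Apply a 2x2 matrix to `qubit`, optionally only where `control` is 1."""
    (m00, m01), (m10, m11) = matrix
    mask = 1 << qubit
    for i in range(len(state)):
        if i & mask:
            continue  # visit each amplitude pair once, from its qubit=0 member
        if control is not None and not (i >> control) & 1:
            continue
        a0, a1 = state[i], state[i | mask]
        state[i] = m00 * a0 + m01 * a1
        state[i | mask] = m10 * a0 + m11 * a1


def ry(theta):
    """Matrix for a rotation by `theta` about the Y axis."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)
    return ((c, -s), (s, c))


HADAMARD = ((2 ** -0.5, 2 ** -0.5), (2 ** -0.5, -(2 ** -0.5)))


def arc_walk_distribution(steps, n_counter=8):
    """Probability of each counter value after `steps` coin-controlled steps."""
    coin = 0  # coin qubit is bit 0; counter qubit k is bit k + 1
    state = [0j] * 2 ** (n_counter + 1)
    state[0] = 1 + 0j
    for _ in range(steps):
        apply_single_qubit(state, coin, HADAMARD)
        for k in range(n_counter):
            # Assumed angle schedule: pi / 2**k for counter qubit k.
            apply_single_qubit(state, k + 1, ry(math.pi / 2 ** k), control=coin)
    distribution = [0.0] * 2 ** n_counter
    for i, amplitude in enumerate(state):
        distribution[i >> 1] += abs(amplitude) ** 2  # drop the coin bit
    return distribution


distribution = arc_walk_distribution(steps=10)
mean_position = sum(value * p for value, p in enumerate(distribution))
print(f"mean counter value after 10 steps: {mean_position:.2f}")
```

Unlike the plain arc counter, the controlled rotations entangle the coin with the counter register, so the qubits can no longer be sampled independently.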

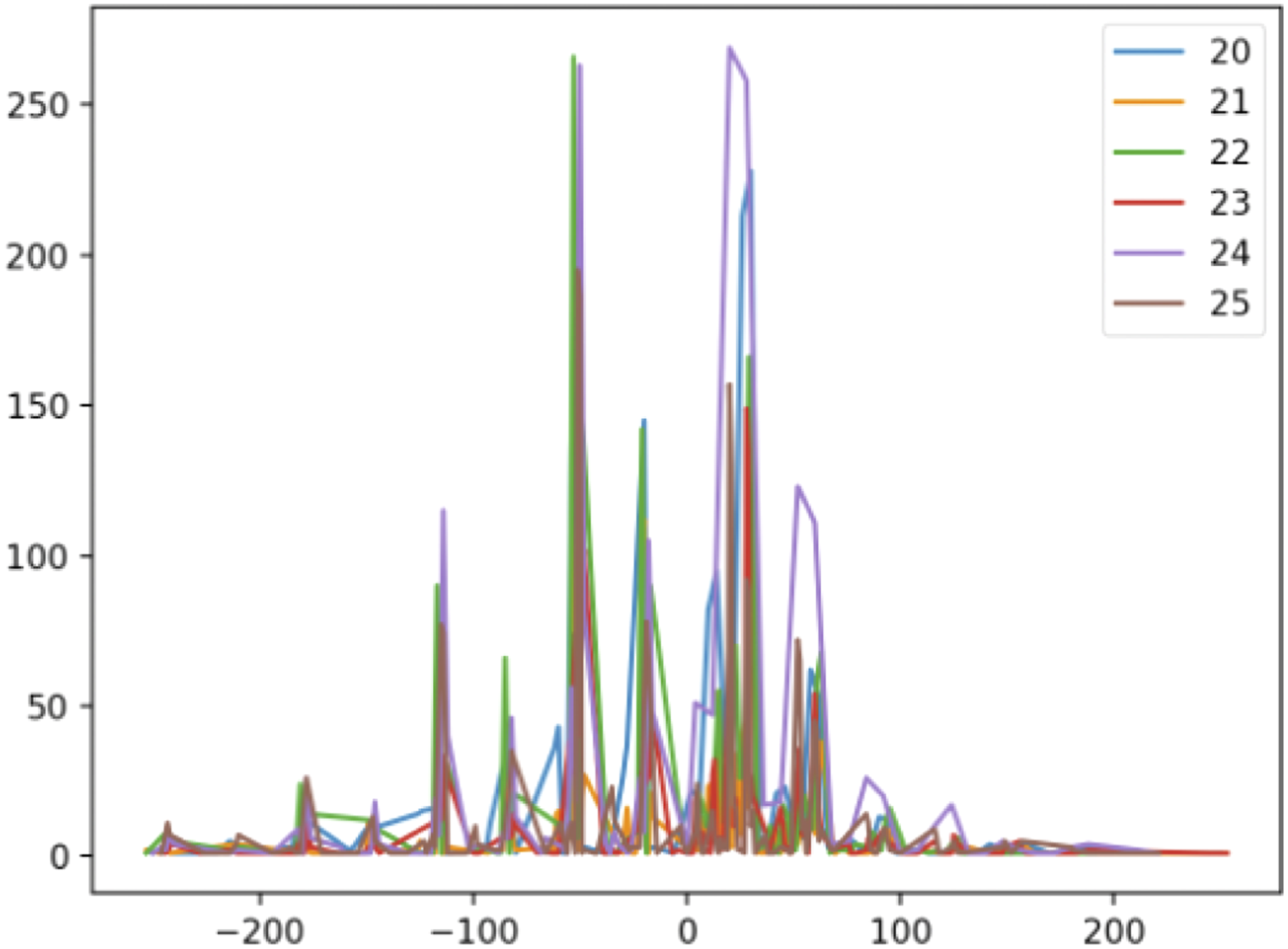

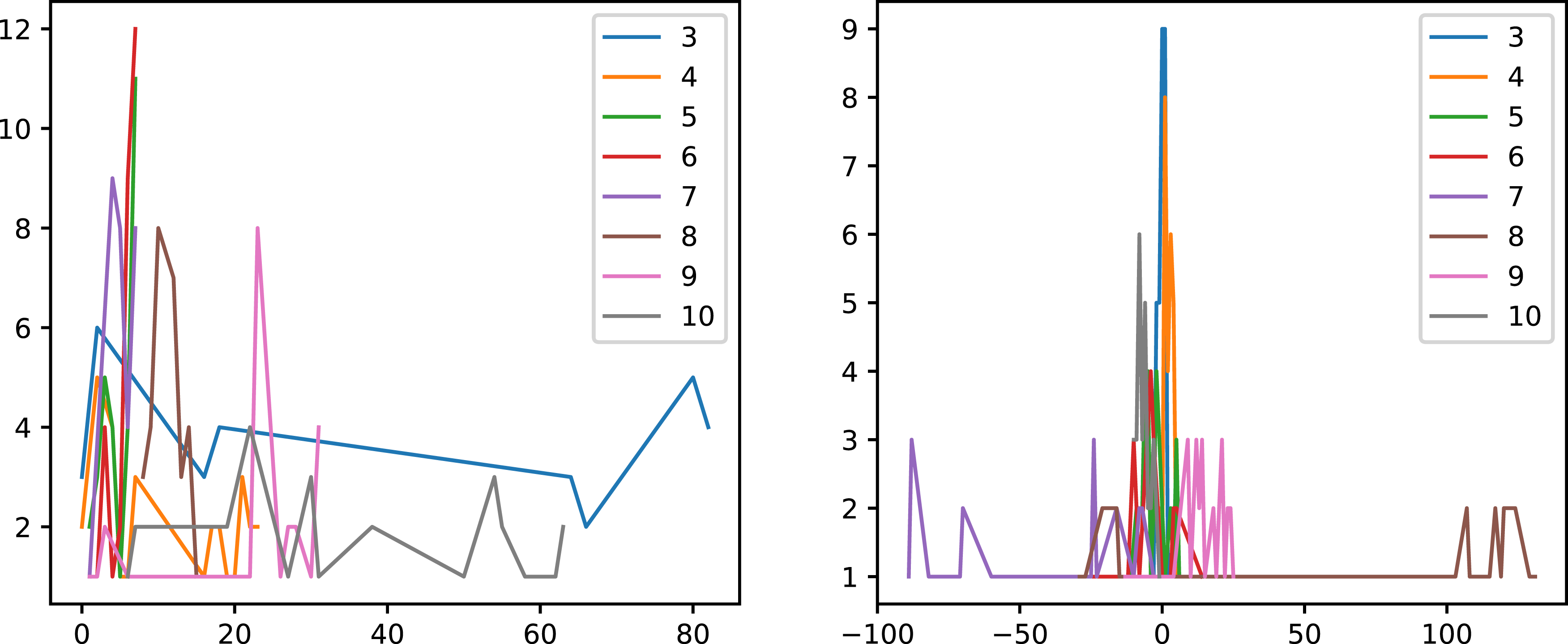

Example results (8 counter qubits, 10 to 15 steps) are shown in Figure 11. On the left is a purely incrementing circuit, on the right is a two-way walk using the reversal and superposition technique above.

Figure 11: Example results for arc walk counter circuit (8 counter qubits, 10 to 15 steps). 1000 shots for each number of steps.

5.4. Random Jump Circuit

In this class of examples, instead of using smaller rotations for higher-order qubits, we set up the circuit so that these qubits are changed less often.

This can be done with and without a quantum coin controlling the gates. The example in Figure 12 uses a standard Hadamard quantum coin.

Figure 12: Random jump circuit.

At each step, a different target qubit in the counter register is selected, according to some weighted random sampling function. This function should prefer the lower-order qubits. In the example below, the selection was done with the distribution {1/2, 1/4, 1/8, ….}. Note that the circuit creation now introduces classical randomness, as well as the circuit measurement still having quantum randomness.
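The classical part of this construction, choosing which qubit to target at each step, can be sketched as follows. The weights follow the distribution {1/2, 1/4, 1/8, …} from the text; for a finite register these do not quite sum to 1, and the sketch relies on `random.choices` normalizing them, which is an assumption about how the truncation is handled. The gate labels `"h"` and `"cx"` and the use of a coin-controlled NOT as the jump are illustrative assumptions, not a specific library's API.

```python
import random


def target_weights(n_qubits):
    """Weights 1/2, 1/4, ... for qubits 0 (lowest order) upward."""
    return [2.0 ** -(k + 1) for k in range(n_qubits)]


def random_jump_targets(steps, n_qubits=8, seed=None):
    """Choose one target qubit per step, preferring lower-order qubits."""
    rng = random.Random(seed)
    return rng.choices(range(n_qubits), weights=target_weights(n_qubits), k=steps)


def random_jump_gates(steps, n_qubits=8, seed=None):
    """Gate list for one randomly generated circuit (hypothetical gate labels)."""
    gates = []
    for target in random_jump_targets(steps, n_qubits, seed):
        gates.append(("h", "coin"))           # toss the quantum coin
        gates.append(("cx", "coin", target))  # flip the target if the coin is 1
    return gates


print(random_jump_gates(steps=5, seed=1))
```

Each call with a different seed produces a different circuit, which is why results for this design are averaged over many generated circuits as well as many shots.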

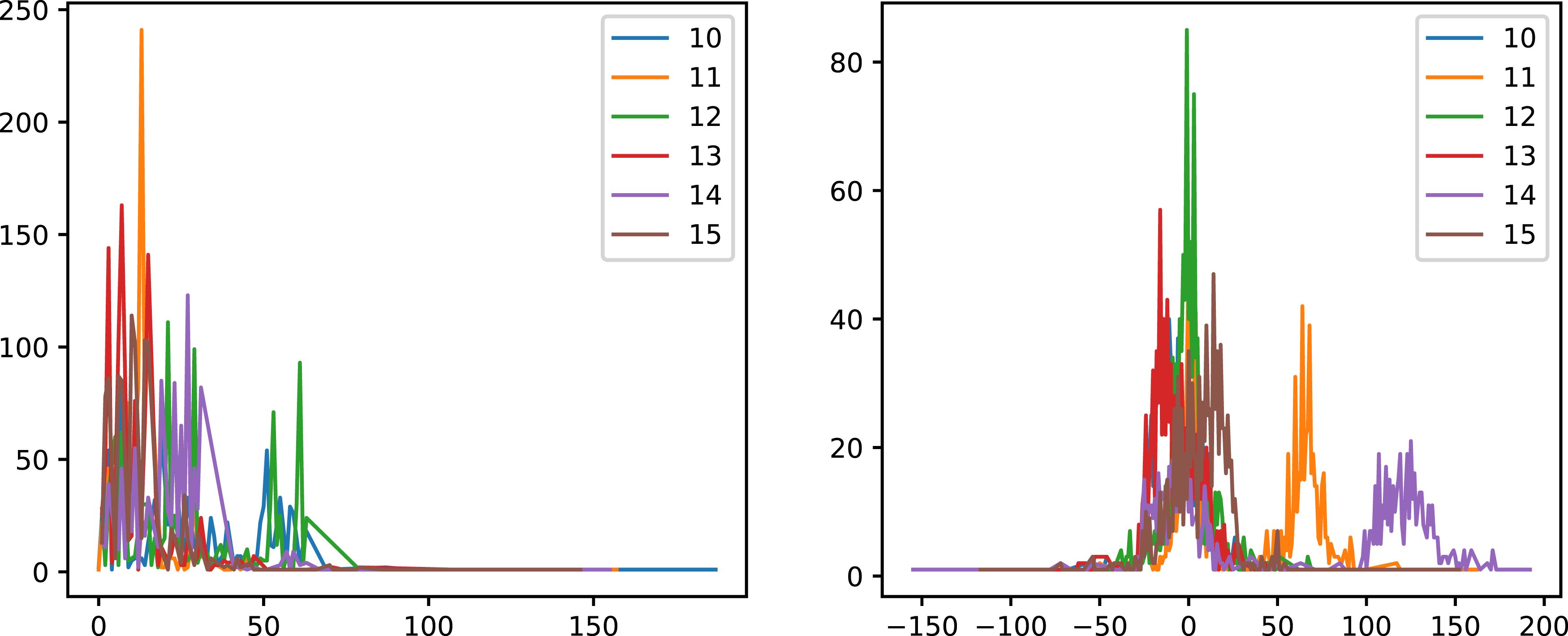

Results for up to 9 steps, using an 8 qubit counter register, are shown in Figure 13. As expected, each walk can go both ways (because randomly flipping a bit can reduce as well as increase a number), though the average tendency for an individual walk is to increase. This is because the registers are initialized to zero, so the process randomly diffuses out from zero.

Figure 13: Random jump circuit results (positive only on the left, two-way walk using reversal and superposition on the right). 30 random jump circuits per step, 30 shots per circuit.

5.5. Cascading Disjunction Circuits

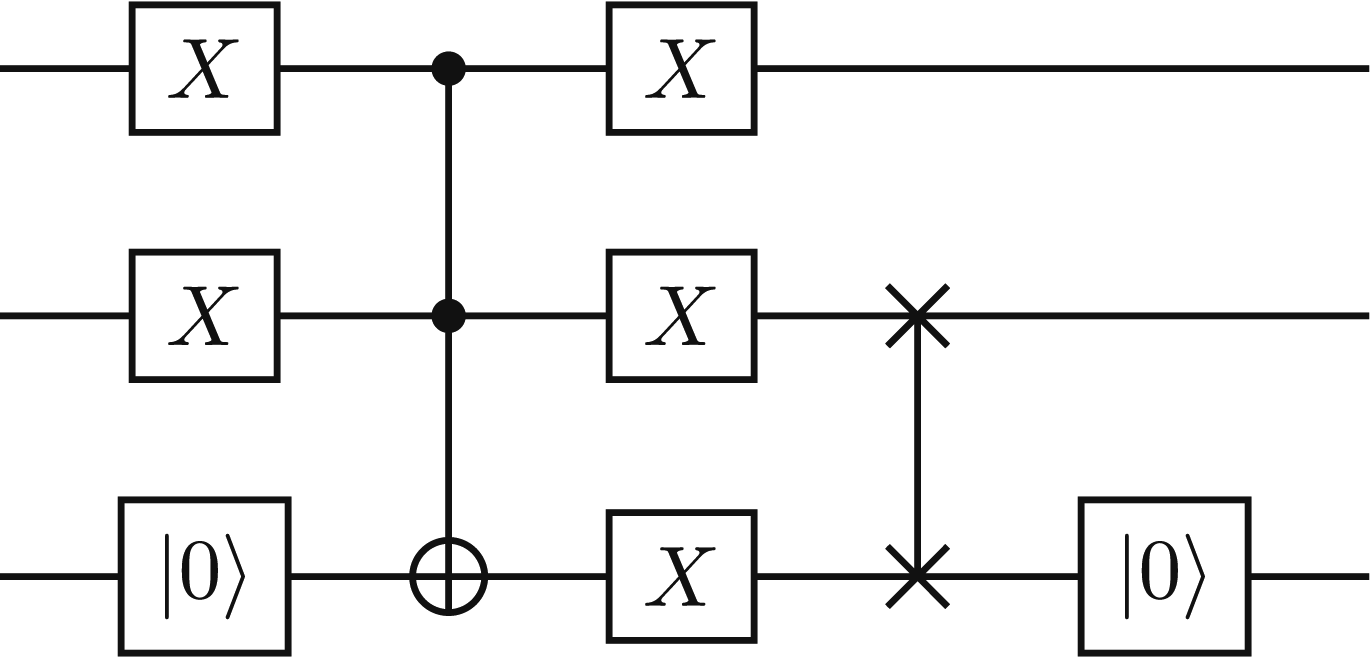

‘Cascading disjunction’ is an extra circuit component that can be added to any of the counting circuits above. The idea uses a standard circuit component that performs Boolean disjunction, as in Figure 14.

Figure 14: Boolean in-place disjunction circuit, which sets the state of the higher order qubit to the Boolean OR of the input states of the lower and higher order qubits.

In this implementation, such gates are added between randomly chosen lower- and higher-order bits, using the same sampling distribution as in the Random Jump Circuits. This enables the values of lower-order qubits to propagate to higher-order qubits, which increases and tends to preserve progress in the walk, because the higher-order qubits are less likely to be randomly selected to be switched back to |0⟩ later.

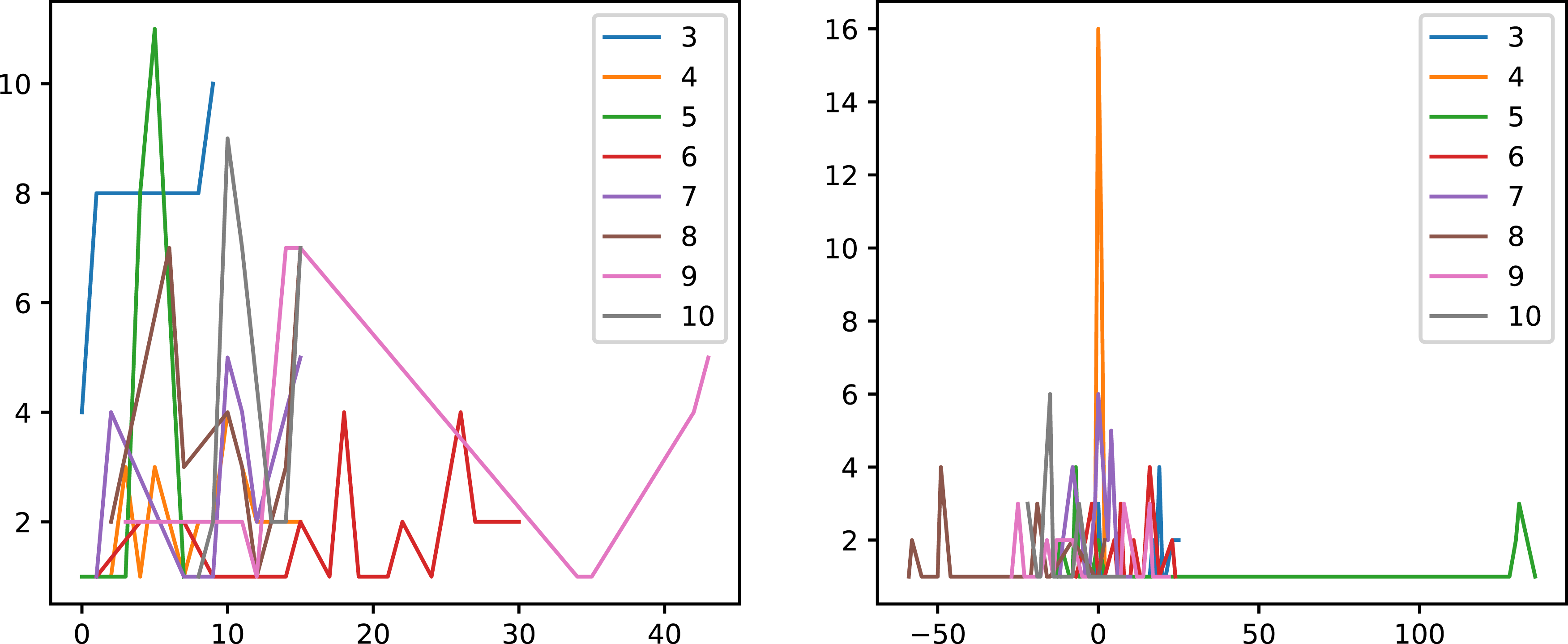

Results of including this technique are shown in Figure 15. The walks are still random, but propagate more reliably with the cascading disjunction components.

Figure 15: Cascading disjunction circuit results (positive only on the left, two-way walk using reversal and superposition on the right). 30 random jump circuits with cascading disjunctions per step, 30 shots per circuit.

Another variant of this technique would be to add a conjunction between the coin qubit and the target qubit, that sets the next higher qubit to |1⟩ before reversing the target qubit to |0⟩. This would behave like a limited-carry operation: it performs some of the coordination between qubit values in the traditional bit-counter circuits in the literature, but much less, in a much more targeted fashion.

6. Preliminary Results of Counting Circuits, With and Without Noise

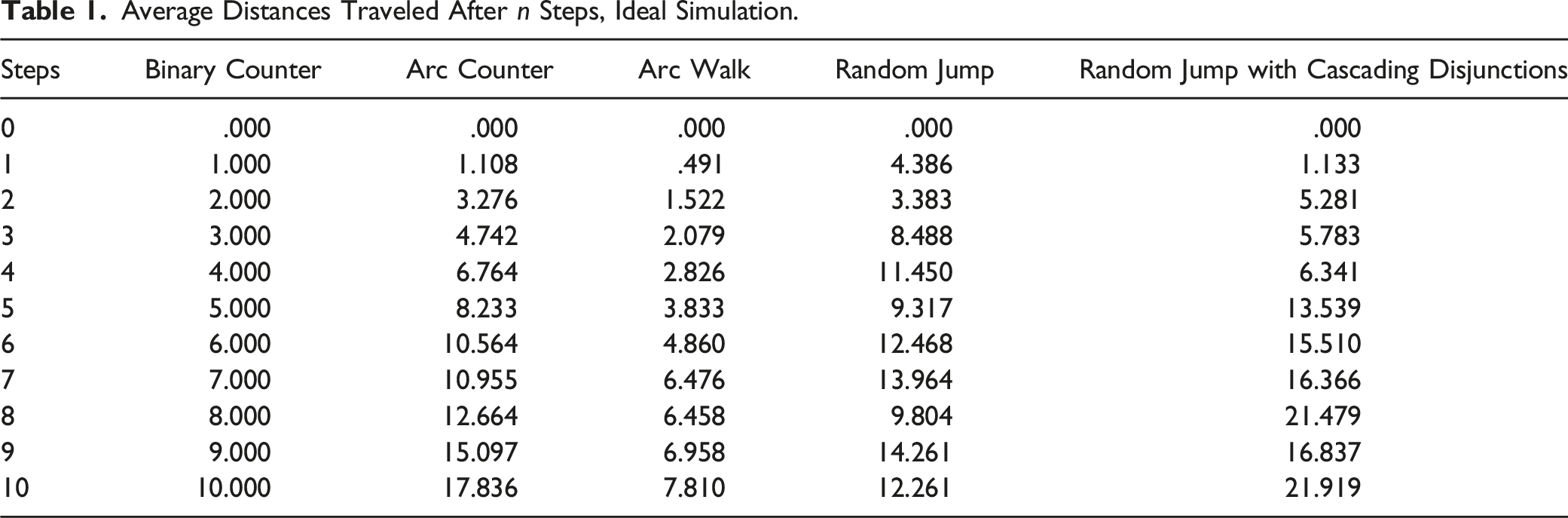

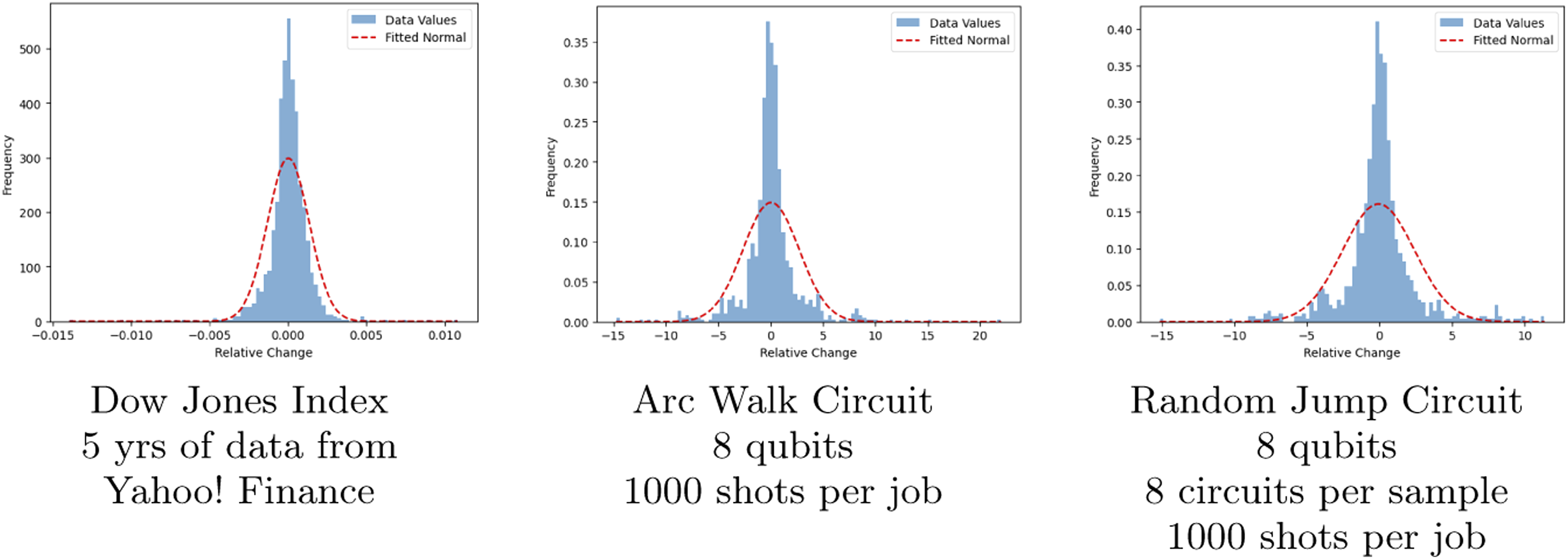

Average Distances Traveled After

Average Distances Traveled After

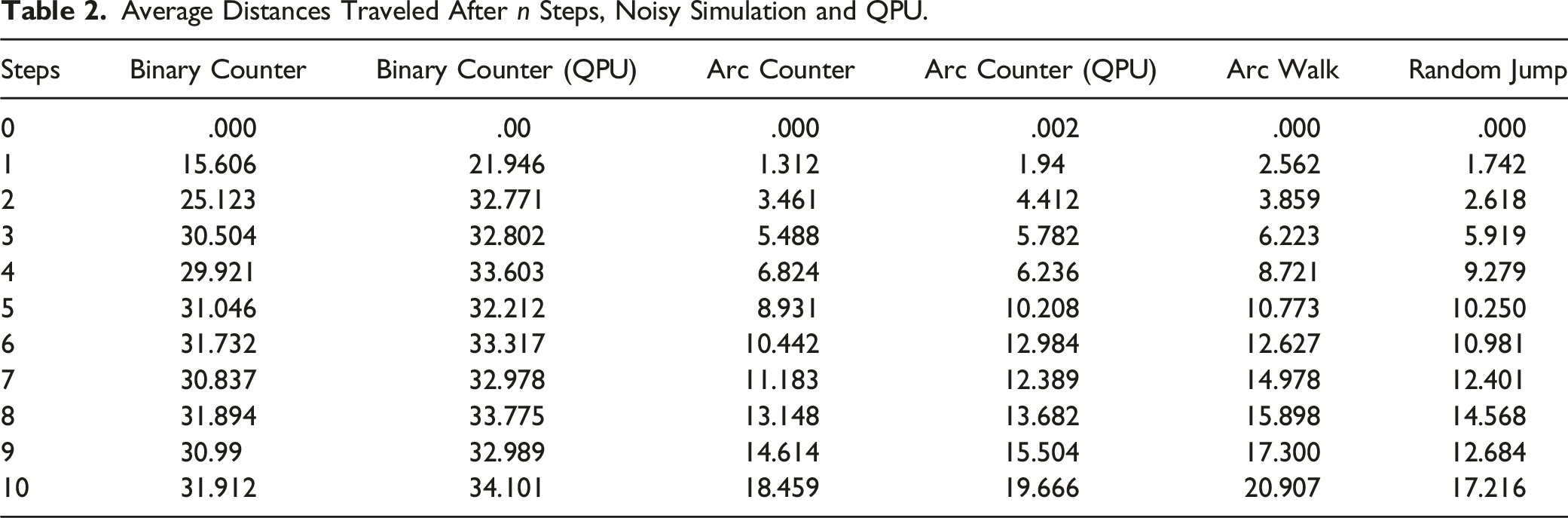

Ideal simulations can only be performed for small numbers of qubits (as a rule of thumb, as we pass 30 qubits we start to break the limits of classical simulation). In addition, the binary counter and arc counter circuits were run on a quantum computer with 11 trapped-ion qubits, described by Wright et al. (2019). These results are also shown in Table 2, with the label QPU (quantum processing unit). Note that 11 qubits is relatively small by today’s standards: the number of reliable qubits in state-of-the-art machines in 2023 is at least in the 20s (IonQ Aria, 2022). The older machine was deliberately chosen for this experiment, because it highlights the frailty of exact binary counting circuits compared with approximate counting circuits.

The random jump results were computed using 30 shots on each of 30 randomly-generated circuits, so these results include both classical and quantum randomness. The binary and arc circuits are generated deterministically, so these results include 1000 shots for a single circuit, and all the randomness is quantum.

Key findings include:

• The binary counter results are perfect with ideal simulation, but are rendered useless in the noisy simulation. They quickly converge to a random number around 32, the value expected on average from a fully randomized register.
• For all the other circuits, the difference between ideal and noisy results is much less.
• The average results for the arc counter and arc walk circuits are the most reliable for simulating a monotonically-increasing position, with or without noise.
• The random jump results also tend to increase, but tend to plateau and then move up and down randomly. (This randomness is smaller with a larger register.)

The QPU results for binary and arc counting are compared graphically in Figure 16. This shows how quickly the binary counter becomes useless on a real QPU, whereas by contrast, the arc counter QPU results stay close to the ideal simulated results.

Figure 16: Ideal and actual QPU results for binary counter and arc walk circuits. The QPU results are much closer to the ideal monotonically-increasing results for the arc counter, whereas they are useless after 2 steps for the binary counter.

6.1. Quantum Walk Distributions and Real Financial Data

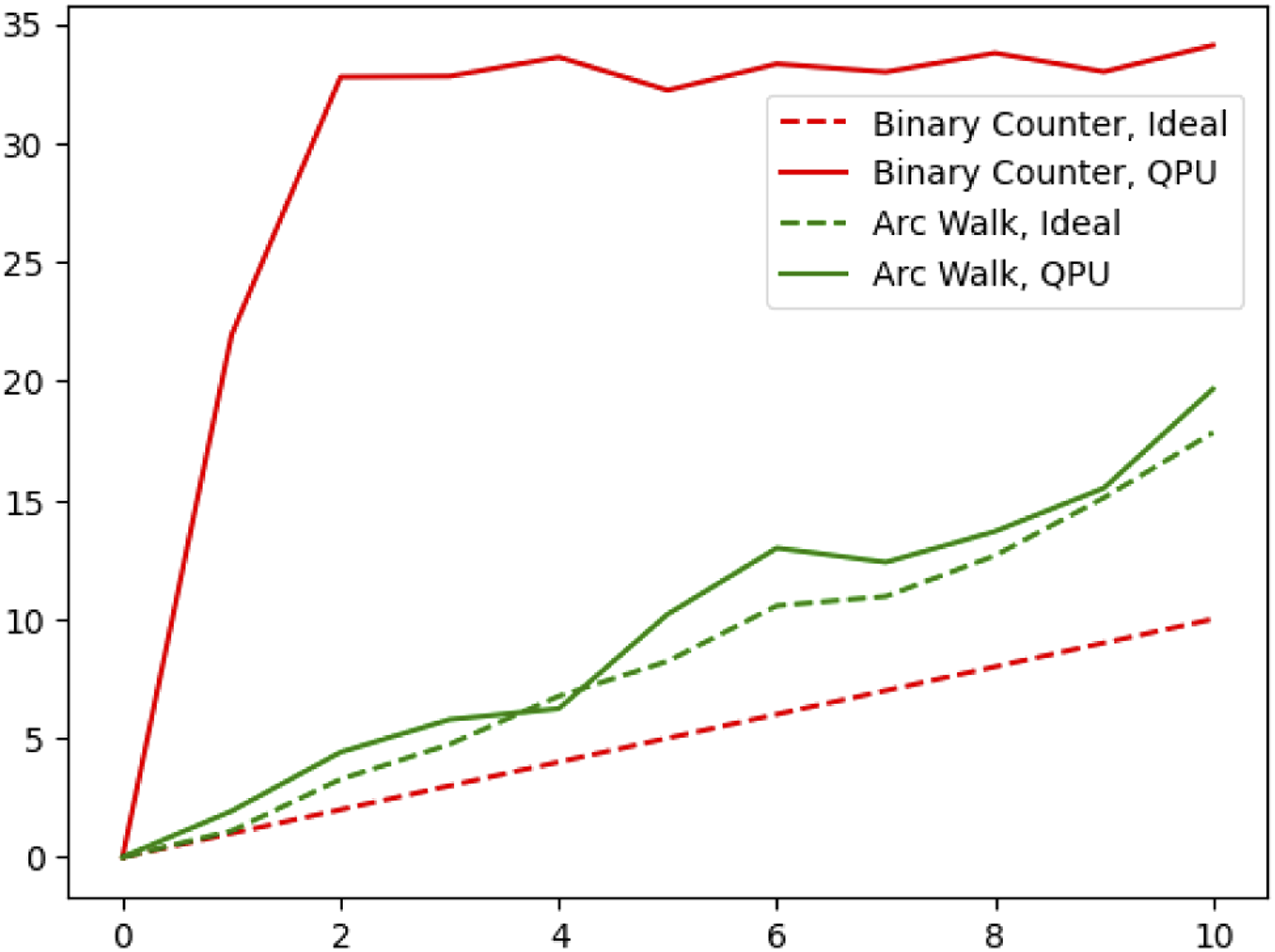

The notion that market returns follow a normal (or log-normal) distribution is standard and established in quantitative finance, even though it has been known for decades that heavy-tailed distributions are sometimes a better fit (Mandelbrot, 1963; Zi-Yi, 2017). In the traditional random walk model, daily changes in asset price are also assumed to follow a normal distribution, or even more simply, to take a constant step in either direction, and the accumulation of many such steps in a binomial distribution eventually approximates the normal distribution.
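The convergence of a constant-step walk to the normal distribution can be checked numerically. The following is a minimal sketch rather than anything taken from the experiments in this paper: the function names and the choice of 100 steps are illustrative assumptions. It computes the exact binomial distribution of the end position after a number of fair steps of +1 or -1, and compares it with the normal distribution that has the same mean (zero) and variance (the number of steps).

```python
import math

def walk_endpoint_pmf(n_steps):
    """Exact distribution of the end position of a fair +/-1 random walk.

    With k upward steps out of n_steps, the end position is 2*k - n_steps,
    which occurs with probability C(n_steps, k) / 2**n_steps.
    """
    total = 2 ** n_steps
    return {2 * k - n_steps: math.comb(n_steps, k) / total
            for k in range(n_steps + 1)}

def normal_pdf(x, mean, variance):
    """Density of the normal distribution with the given mean and variance."""
    return math.exp(-(x - mean) ** 2 / (2 * variance)) / math.sqrt(2 * math.pi * variance)

n = 100  # illustrative number of steps (an assumption, not from the paper)
pmf = walk_endpoint_pmf(n)

# Reachable positions are spaced 2 apart, so the normal probability mass
# assigned to each lattice point is approximately 2 * pdf(x).
max_gap = max(abs(p - 2 * normal_pdf(x, 0.0, n)) for x, p in pmf.items())
print(f"largest gap between binomial and normal approximation: {max_gap:.5f}")
```

Increasing `n` shrinks the gap further, which is the sense in which the binomial walk "eventually approximates" the normal distribution.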

In practice, daily relative changes in stock prices also tend to have a distribution where most values cluster around zero, but significant outliers cause a normal distribution fitted with the same mean and standard deviation to underestimate the density in the middle of the distribution. This is shown for the Dow Jones Industrial Average in Figure 17 (using data from the Yahoo! Finance API). An initial comparison shows that distributions of daily changes generated by the arc walk and random jump circuits also follow this heavy-tailed pattern, which is not modeled well by normal approximations.

Figure 17. Distributions of relative changes in the Dow Jones Industrial Average, and in quantum approximate counting simulations. The dashed curve (red) shows the best-fit normal distribution, that is, the normal with the same mean and variance.
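The way a matched normal distribution misjudges a peaked, heavy-tailed sample can be reproduced with synthetic data. The sketch below is an illustration under stated assumptions: it uses a Laplace-distributed sample as a stand-in for daily relative changes (it is not Dow Jones data and not output from the circuits in this paper), and the thresholds of 0.25 and 4 standard deviations are arbitrary choices. It compares the observed fraction of values near the mean and far in the tails with the fractions predicted by a normal distribution of the same mean and standard deviation.

```python
import math
import random
import statistics

rng = random.Random(0)  # fixed seed so the sketch is repeatable

# Synthetic stand-in for daily relative changes (an assumption, not real data):
# the difference of two exponential variates follows a Laplace distribution,
# which is more sharply peaked at zero and heavier-tailed than a normal.
changes = [rng.expovariate(1.0) - rng.expovariate(1.0) for _ in range(20000)]

mean = statistics.fmean(changes)
sigma = statistics.pstdev(changes)

def fraction_within(data, center, radius):
    """Fraction of values lying within `radius` of `center`."""
    return sum(abs(x - center) <= radius for x in data) / len(data)

# Observed mass close to the mean and far out in the tails.
central_observed = fraction_within(changes, mean, 0.25 * sigma)
tail_observed = 1.0 - fraction_within(changes, mean, 4.0 * sigma)

# The same quantities for a normal distribution with matching mean and
# standard deviation, using P(|Z| <= c) = erf(c / sqrt(2)).
central_normal = math.erf(0.25 / math.sqrt(2))
tail_normal = 1.0 - math.erf(4.0 / math.sqrt(2))

print(f"within 0.25 sigma: observed {central_observed:.3f}, normal {central_normal:.3f}")
print(f"beyond 4 sigma:    observed {tail_observed:.5f}, normal {tail_normal:.5f}")
```

In this synthetic case the fitted normal underestimates both the middle of the distribution and the extreme tails, which is the qualitative pattern described for Figure 17.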

This does not show that the quantum approximate counting circuits give a better prediction of stock price changes on a specific day. But it does show that the distribution of possible changes can be better-adapted to real-world financial data, without the artificial constraint that daily changes should be normally or uniformly distributed.

It should be noted that quantum results in Figure 17 are obtained with some parametrization and averaging, because all quantum job results are averaged over the number of shots, and the random jump circuits are averaged over a number of sample circuits as well. The impact of long-tail measurements depends on how large a sample we take. While this implies that there is some arbitrariness in results, it also means that parameters such as the number of qubits, circuits, and shots can be tuned to model particular datasets.

In related work, IonQ quantum computers have also been used to model the normal distribution itself, using a matrix product state technique that can readily be adapted to other distributions, because it relies on piecewise polynomial approximation (Iaconis et al., 2023). One of the longer-term promises of such work is that such distributions can be used as inputs for models such as the Monte Carlo simulations advocated by Egger et al. (2020). If we have a reliable circuit for preparing a particular distribution, then such a circuit could be used as input for Monte Carlo modeling by entangling its output with the simulated variables, rather than by sampling an individual number from the distribution and using this as a single ‘classical’ random input value.

7. Quantum Walks with Mid-Measurement: A Quantum Zeno Effect

A crucial difference between classical random walks and quantum walks is that quantum walks behave differently depending on when they are measured. There is no classical counterpart for this behavior, because a hallmark of classical systems is that their state is revealed but not changed by measurement.

In theory, it is possible to prevent a quantum system from changing state at all, by measuring it at smaller and smaller intervals. As a simplest example, the gate

The probability of transitioning to the state |1⟩ is thus proportional to sin²(

This phenomenon is sometimes called the quantum Zeno effect, after Zeno’s classical paradox of motion. Of crucial interest for this paper, such effects have also been observed in psychology. Kvam et al. (2015) demonstrated that participants are likely to form less extreme judgments of moving scenes if asked to judge the motion in smaller time-frames, and Yearsley and Pothos (2016) demonstrated that participants evaluating evidence in a criminal trial are more likely to change their minds if several pieces of evidence are presented before asking for a decision.
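The freezing effect of frequent measurement can be illustrated with a single qubit. The sketch below is a hedged illustration, not the construction used in this paper: it assumes a rotation that maps |0⟩ to cos(a/2)|0⟩ + sin(a/2)|1⟩ for a segment angle a, and the function name `survival_probability` is hypothetical. A total rotation is split into n equal segments with a measurement after each one, and the code computes the probability that every measurement finds the qubit still in |0⟩.

```python
import math

def survival_probability(total_angle, n_measurements):
    """Probability that a qubit starting in |0> is found in |0> at every one
    of n equally spaced measurements while being rotated by total_angle.

    Assumes each segment applies a rotation by a = total_angle / n that maps
    |0> to cos(a/2)|0> + sin(a/2)|1>, so each measurement finds |1> with
    probability sin^2(a/2) and |0> with probability cos^2(a/2).
    """
    segment = total_angle / n_measurements
    stay = math.cos(segment / 2) ** 2
    return stay ** n_measurements

theta = math.pi  # with a single final measurement, this rotation takes |0> to |1>
for n in (1, 2, 10, 100, 1000):
    print(n, round(survival_probability(theta, n), 4))
```

For a small segment angle a, sin²(a/2) is approximately a²/4, so the total chance of leaving |0⟩ over n segments is roughly θ²/(4n), which shrinks towards zero as measurements become more frequent.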

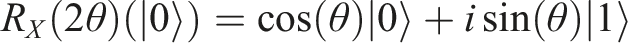

It is easy to add mid-circuit measurement to our quantum approximate counting circuits and to evaluate the results, at least in simulation. (The availability of mid-circuit measurement varies across quantum platforms currently, partly because the accuracy of the measurement and reset operations is hard to guarantee.) Example results are shown in Figure 18, simulating walks with 20 steps, with no mid-measurement, measurement every 7 steps, and measurement every step. The average positions reached by these walks were 35.6, 14.3, and 5.7 respectively, so as expected, the use of mid-measurement reduces the average distance traveled in the quantum walk. (It is not always this simple, particularly due to periodicity.) A further step for this research would be to experiment with parameters including the number of steps, distribution of step sizes, and frequency of measurements, to see if different levels of deliberate decoherence can bring the quantum results of Figure 18 closer to the distributions observed with the Dow Jones Index and other asset prices.

Figure 18. Arc counter circuit results, simulating the results of quantum walks with 20 steps, with and without mid-circuit measurement at the given positions.

7.1. Mid-Measurement, Transactions, and the Housing Market

In the quantum economics theory of Orrell (2020), the act of measurement is compared with fixing a transaction, and subjective opinions of value can vary like quantum states between transactions. By analogy with the quantum Zeno effect, we may expect that opinions about prices vary less if there are more frequent measurements, i.e. more frequent transactions. Evidence is presented by Orrell (2022) and Orrell and Richards (2023) showing that price volatility is not constant, and that high volatility corresponds to both uncertainty in value, and wide ranges between different bid prices and asking prices. This section presents some findings from housing market data, indicating that indeed, larger transaction volumes correlate with smaller differences between the higher prices asked by sellers, and lower prices offered by buyers.

The range of prices offered to buy or sell an item is typically much more apparent in the housing market than the stock market, because each item for sale is priced and negotiated much more individually. A standard process involves the use of comparable sales or comps, in which transactions on properties nearby in time, space, and value are used to form a pricing estimate (Pagourtzi et al., 2003). If these nearby transactions correspond to measurements of the system, then the quantum Zeno effect would suggest that the outcome of this measurement is more certain if there are more nearby transactions.

This effect was demonstrated in practice using the following modeling assumptions, and summary data published by Zillow.

When a house sells for less than its original listing price, we assume that this indicates a difference between the seller’s and the buyer’s estimate of the house’s value. Larger uncertainties in the market would support larger differences of opinion. Even when considering monthly averages of data, we would expect that a smaller number of sales in a given area would contribute to greater market uncertainty, and this should correlate with a greater difference between the list price and the sale price.

By contrast, when a house sells for

Thus, we assume that the markets where lack of comparables is a primary factor in price uncertainty are those where the average sale price is less than the average list price. It follows that, if we restrict our attention to markets where the average sale price is less than the average list price, we should see evidence that lower transaction volumes are correlated with greater disparities between list price and sale price.

Data used to test these hypotheses was gathered from the Zillow Housing Data portal.¹ The datasets are summary statistics: counts and averages. These are only comparable within a given metro area: for example, 2000 sales in a month would be very low for New York, NY, and very high for Wichita, KS. Thus we compute correlations by comparing monthly statistics within each metro area.

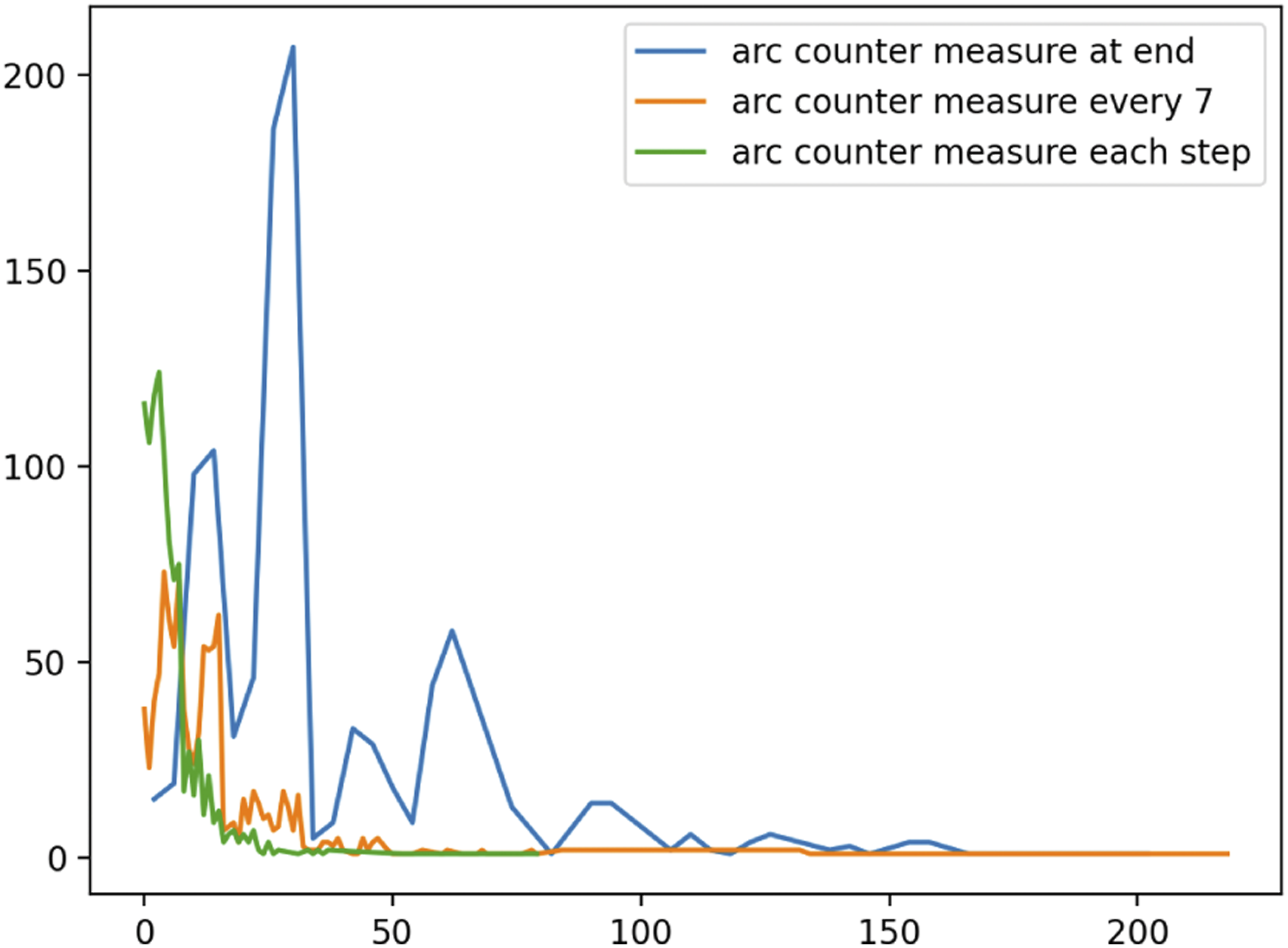

The algorithmic steps are as follows:

• For each metro area:
  • For each month:
    – Collect the sales count and the average list-to-sale price ratio.
    – If the average list-to-sale price ratio is greater than 1, skip this month.
  • This gives a set of (count, ratio) pairs, e.g. [(822, .98), (785, .96), (803, .97)], etc.
  • Compute the Pearson correlation coefficient between these sales counts and list-to-sale price ratios.
• Gather the Pearson correlation coefficients into a histogram to see if there is a general trend.
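The steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the code used to produce the published results: the input layout (a dictionary from metro name to monthly pairs), the function names, the `min_months` cutoff, and the toy numbers for "Metro B" are all hypothetical. Following the example pairs in the text, the ratio is taken to be below 1 when a house sells for less than its list price.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)

def metro_correlations(monthly_stats, min_months=3):
    """Map each metro area to the correlation between sales count and ratio.

    monthly_stats: {metro: [(sales_count, ratio), ...]}, one pair per month.
    Months with ratio > 1 are skipped, following the steps in the text.
    min_months is an illustrative cutoff (an assumption, not from the paper).
    """
    results = {}
    for metro, months in monthly_stats.items():
        kept = [(count, ratio) for count, ratio in months if ratio <= 1.0]
        if len(kept) < min_months:
            continue  # too few months for a meaningful coefficient
        counts, ratios = zip(*kept)
        results[metro] = pearson(counts, ratios)
    return results

# Hypothetical toy data; "Metro A" reuses the example pairs from the text,
# plus one month with ratio > 1 that the filter removes.
toy = {
    "Metro A": [(822, 0.98), (785, 0.96), (803, 0.97), (900, 1.02)],
    "Metro B": [(120, 0.95), (150, 0.97), (135, 0.96), (110, 0.94)],
}
correlations = metro_correlations(toy)
for metro, r in correlations.items():
    print(f"{metro}: r = {r:.3f}")
```

The final step in the text, gathering the coefficients into a histogram, would then amount to binning the values of `correlations` across all metro areas.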

The result is shown in Figure 19. Nearly all the correlations are strongly positive. This shows that, in cases where a house is sold for less than its asking price, there is a very strong correlation between the transaction volume and the closeness of the list and sale prices.

Figure 19. Histogram showing correlations between larger numbers of transactions and smaller list-to-sale price differences. Data from Zillow Housing Data.

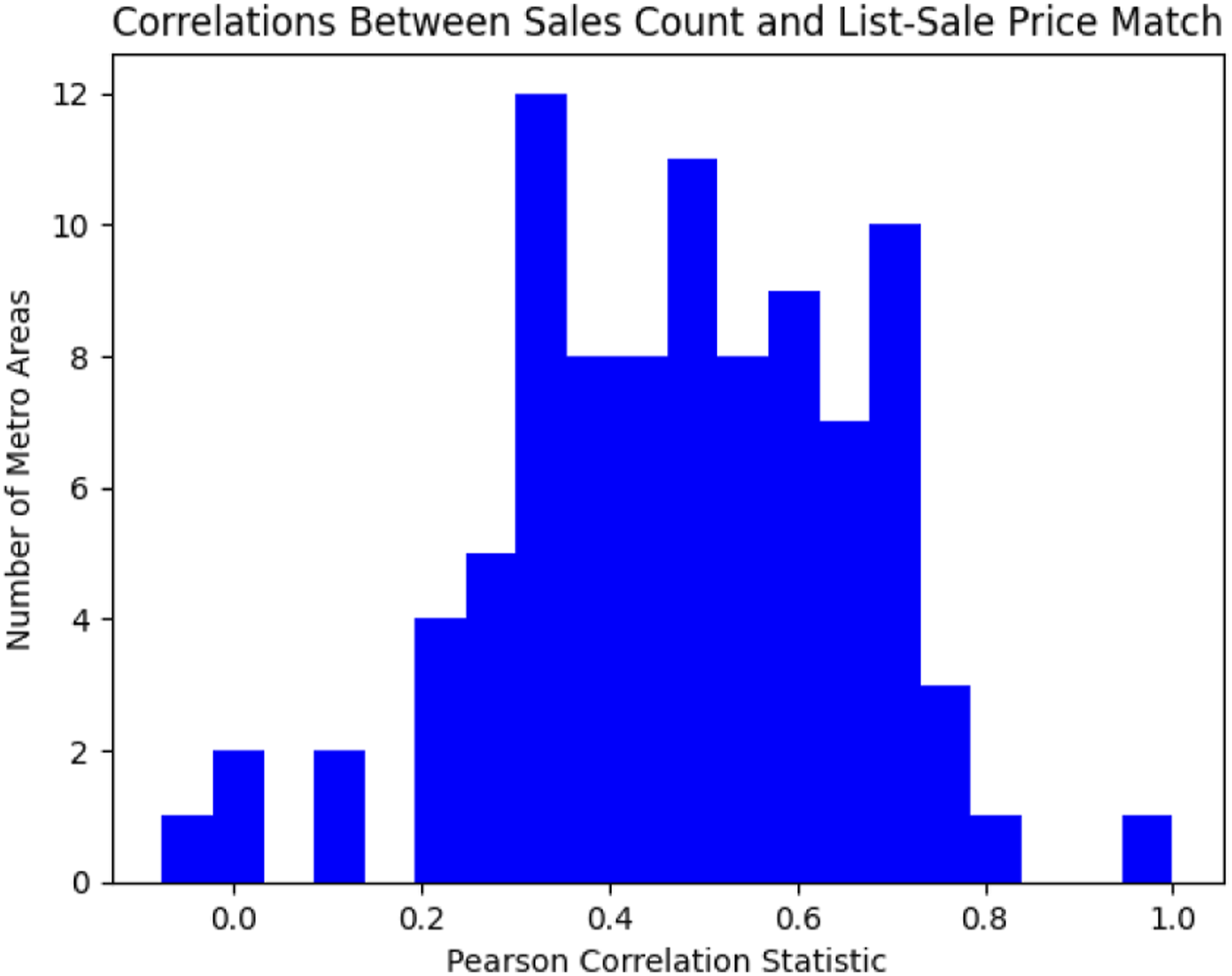

In addition to the number of sales in a region, it is instructive to look at the correlation between home prices and the list-to-sale price ratio since the price of a home is the driving factor in a home purchase. As a proxy for home prices we use the Zillow Home Value Index (ZHVI) which reflects the typical value for homes in the 35th to 65th percentile range.

Figure 20 shows both the raw monthly values (above) for the San Francisco region as well as monthly changes (below) comparing the ZHVI and list-to-sale ratio values for the last 6 years. The bottom chart shows more clearly the positive correlation between the two if we consider monthly changes. Monthly changes give a better sense of market ups and downs. As prices fall, we see that buyers are more likely to bid lower than the list price. Conversely, in a rising market, buyers are more likely to bid more than the list price.

Figure 20. Monthly values of Zillow Home Value Index (ZHVI) and list-to-sale ratio as well as monthly changes that show positive correlation in both rising and falling markets.
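The comparison of monthly changes can be sketched as follows. This is an illustration only: the two short series are invented numbers, not Zillow data, and the function names are hypothetical. The code takes first differences of each series (the change from one month to the next) and computes the Pearson correlation between the two sequences of changes.

```python
import math

def monthly_changes(series):
    """First differences: the change from each month to the next."""
    return [later - earlier for earlier, later in zip(series, series[1:])]

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical monthly values, invented for illustration (not Zillow data).
zhvi = [1000, 1010, 1025, 1020, 1005, 1000, 1012, 1030]  # index level
ratio = [0.990, 0.995, 1.004, 1.001, 0.992, 0.989, 0.996, 1.006]

zhvi_changes = monthly_changes(zhvi)
ratio_changes = monthly_changes(ratio)
r_changes = pearson(zhvi_changes, ratio_changes)
print(f"correlation of monthly changes: {r_changes:.3f}")
```

Working with changes rather than levels removes the slow overall trend in each series, so the coefficient reflects whether the two quantities move up and down together from month to month.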

That fewer transactions correlate with larger list-to-sale price differences is in line with the trends expected from quantum economics models, in which various beliefs and opinions about value can evolve and diverge more when there are fewer transactions or measurements. The correspondence between overall price changes and list-to-sale ratios could be described by a quantum walk with a clear directional momentum. However, it is also easy to propose simple non-quantum models for these behaviors. Fewer comparable samples lead to greater sampling error and thus greater price uncertainty: thus lower liquidity brings higher volatility. One potential strategy for evaluating and distinguishing which approaches are better would be to consider the dynamics and evolution of prices in such models: for example, to try applying the quantum Monte Carlo sampling reduction described by Egger et al. (2020) to the problem of making accurate price estimates with fewer comparable sales.

7.2. Macro Effects: Collective Beliefs and Reckonings

As well as the belief states of individuals, quantum models have been proposed to represent the beliefs and ideologies of whole groups of people (Kitto & Widdows, 2016). With pricing models, the influence of contrasting beliefs held by groups of people is especially important — as Bachelier (1900) wrote, “Contradictory opinions in regard to these fluctuations are so divided that at the same instant buyers believe the market is rising and sellers that it is falling.” The importance of group beliefs is particularly apparent with rare luxury goods: the opinion of a few wealthy people can be enough to establish high prices for rare art, even without mass appeal.

In such a model, decision making becomes partly a constructive process. This is standard in quantum models for individual behavior, where participants tend to remain in a particular state once it has been chosen if an identical question is asked (Busemeyer & Bruza, 2012). Memory and recollective processes are studied from a quantum-like point of view by Waddup et al. (2023), who try to measure the influence of intervening steps in decision-making. One problem in demonstrating quantum-like properties, such as violations of temporal Bell inequalities, in these processes is that it is hard to rule out interference and signaling.

With financial decisions, ‘signaling’ is a clear part of making decisions collective. Historic examples are notable in the early telegraph era, where the ability to publish outcomes of horse-races and stock-transactions across large distances led to opportunities for short-term fraud and long-term financial services industries (Standage, 1998). Applying the notion of quantum measurement and state collapse to a whole group of people may sound far-fetched in the abstract, but less so when we consider how much modern infrastructure is built to make a decision in one place felt immediately everywhere. More generally, there are many social processes where the views of groups of people are changed, sometimes reluctantly, in the face of events — here the notion of a ‘transaction’ becomes the even more general ‘reckoning’.

Such collectively-recognized events are naturally scarce: we cannot create housing transaction examples in the same way that we can collect and annotate training examples with other supervised machine learning models. Any quantum ‘modeling advantage’ with such problems would be especially compelling, because when training data is scarce, we cannot just train larger classical models for longer and assume they will give better results. Quantum Monte Carlo pricing models are expected to converge faster with fewer samples (Rebentrost et al., 2018), which raises the intriguing question of whether such techniques lead to faster convergence with fewer examples from real experience. Even with other asset types such as stocks and options where transaction samples are plentiful and speed is more important, the dynamic effects of large events from other market sectors could be anticipated more effectively. There is still scope for the use of quantum-like models in these areas, even before the widespread use of quantum computers.

8. Conclusions and Future Work

This paper has introduced and explored quantum approximate counting circuits, as noise-robust alternatives to the traditional quantum walk design, particularly for the way position is tracked and incremented. The new designs presented here lack some of the mathematical elegance, and the theoretical results, that accompany the traditional quantum walk design; in particular, there are no longer unit increment and decrement operators that correspond to the ladder operators of a quantum oscillator. However, the enormous advantage of the simpler models presented here is that they behave much more accurately on NISQ-era quantum hardware, which could contribute to commercially advantageous applications of quantum computers in economics.

These are just prototype designs so far. The main next steps for this work are to evaluate the proposals more quantitatively, answering the following two questions:

1. How do results on NISQ-era quantum computers correspond to ideal or simulated results for small circuits, and what does this indicate about the expected behavior on quantum hardware for systems that are too big to simulate on classical hardware?
2. How do results compare with the distributions observed with real market behaviors?

The ideal outcome of this research is that we would find circuit walk designs that are robust enough to give better models of market behavior that include some of the benefits of quantum approaches noted by Orrell (2020), while being able to run on today’s quantum hardware without waiting for error-correction.

Given the crucial and explicit role that measurement plays in quantum models, it is possible that some of the earliest such quantum advantages will be apparent in markets where a small number of significant transactions can dramatically influence the price of a particular asset. An initial analysis suggests that the housing market may be an appropriate area to test this hypothesis.

This work can be seen as part of a larger program to bring value in economic modeling on quantum computers. Other successes for quantum circuit designs include modeling and sampling from key distributions (Iaconis et al., 2023), and demonstrating particularly effective time-series models using copula functions implemented using entanglement (Zhu, Shen, et al., 2022). Related work in cognitive science has demonstrated that simple quantum circuits can also be used to model decision-making processes (Widdows & Rani, 2022). In the next few years, it is likely that several such small components, being developed today, will be used as key building blocks in the first profitable applications of quantum computing in economics.

Footnotes

Acknowledgements

The author would like to thank Emmanuel Pothos and David Orrell for interesting conversations and encouragement.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by IonQ, Inc.