Abstract

While remarkable recent developments in deep neural networks have significantly contributed to advancing the state-of-the-art in computer vision (CV), several studies have also shown their limitations and defects. In particular, CV models often make systematic errors on important subsets of data called

Introduction

Computer vision (CV) (Szeliski, 2022) is a field of artificial intelligence (AI) that enables computer systems to obtain semantic information from digital images and videos. Following the remarkable recent developments of deep neural networks, significant advances have been made in state-of-the-art performance on various CV tasks (Krizhevsky et al., 2017), including safety-critical applications such as autonomous driving (M. Zhang et al., 2018).

However, empirical studies, for example Recht et al. (2019), show that CV models struggle to generalise to new data slightly different from those on which they were initially trained and tested. A related problem is the presence of important subsets of data, called

Accurately detecting underperforming slices, called

In this work, we propose to tackle the slice discovery problem with a

We present our modular neurosymbolic framework for slice discovery, which consists of a closed loop that involves data generation (or subsampling), object detection (or image classification), scene graph generation that describes the semantic contents of images, rule learning to detect rare slices, and neural network model mending. First, we provide image datasets with rare slices leveraging In order to test the proposed approach on various slice discovery settings, we focus on generating datasets with rare slices. Closest to our work, Eyuboglu et al. (2022) considered the generation of rare slices in the context of the hierarchical class structure, but did not consider class taxonomies beyond the default one, which makes their method ill-suited to the scenarios we are considering. In contrast, a taxonomy-based approach is pursued in this work, and a methodology for building datasets with rare slices is presented. We provide an implementation along with experimental results for both datasets to test the effectiveness of rare slice generation, rule extraction on the classification results of the neural network model, and model mending. The results show that our approach could reliably generate rare slices and that rule learning delivered meaningful rules describing rare slices. Furthermore, feeding the neural network with additional training data generated according to such rules resulted in a significant performance improvement, as misclassifications decreased considerably.

With our framework, we can generate controlled rare slices in datasets to then test the model behaviour on them. Furthermore, it allows the automatic mining via ILP of human-readable logical rules that pinpoint the deficiencies of a classification model and benefit the user’s intuition for model mending. The transparent nature of logical rules makes them highly interpretable and provides a basis for finding model explanations from possible background information.

This article extends our previous work (Collevati et al., 2024) with (i) a more detailed and extended related work section, (ii) the use of further ILP systems, (iii) a more rigorous experimental evaluation using an additional real-world dataset, and (iv) an improved implementation of the proposed SDM framework.

The remainder of the article is organised as follows. In Section 2, we provide a review of related work on SDMs. Section 3 presents an introduction to the

Related Work

Several studies (Buolamwini & Gebru, 2018; DeVries et al., 2019; Koenecke et al., 2020; Oakden-Rayner et al., 2020) have shown that neural network models often make systematic errors on data slices. The impact of such errors is especially pronounced for critical application areas, such as medical diagnostics (Olesen et al., 2024) and fraud detection (Kalid et al., 2024), where accurate identification of rare slices positively influences essential decision-making. Consequently, recent research has proposed automated SDMs aimed at identifying semantically meaningful slices in which the model exhibits prediction errors. An optimal SDM should automatically detect data slices containing

Previous research has addressed the slice discovery problem by focussing on datasets with metadata or structured (e.g., tabular) data. In Chung et al. (2019) the

Dealing with the slice discovery problem becomes particularly challenging for unstructured data, such as images and audio. Recent studies have proposed methods for identifying slices in this context. Several of them embed the data in a representation space and then use clustering or dimensionality reduction techniques. The

The recent explosion of generative AI has seen various works considering the use of such models to address the slice discovery problem. The

Distinguishing between positive and negative examples is central to our method. Prior work has leveraged linear temporal logic over finite traces (LTLf) to separate temporal classes (Francescomarino et al., 2024), and used learning from interpretation transitions (LFIT) to explain black-box behaviour (Tello et al., 2023). Other approaches generate interpretable signal temporal logic (STL) formulas for time-series classification (Yan et al., 2022), integrate symbolic reasoning with neural models via abductive inference (Dai et al., 2019), or learn differentiable rule sets from continuous features (W. Zhang et al., 2023). Our method follows this line of research but focuses on ILP for discovering logical rules in the context of slice discovery for high-dimensional visual data.

While several of the prior works described above have tackled the problem of identifying data slices on which models underperform, they typically focus on black-box or subsymbolic techniques. A key challenge in this area is the lack of interpretability of the discovered slices. Our work directly addresses this gap by introducing a neurosymbolic approach that extracts human-readable rules to describe underperforming slices. Furthermore, we demonstrate that the rules can also be effectively applied to mend the CV model, which shows their direct practical value.

Preliminaries

In this section, we provide an overview of both the

Super-CLEVR

Inspired by the seminal work on

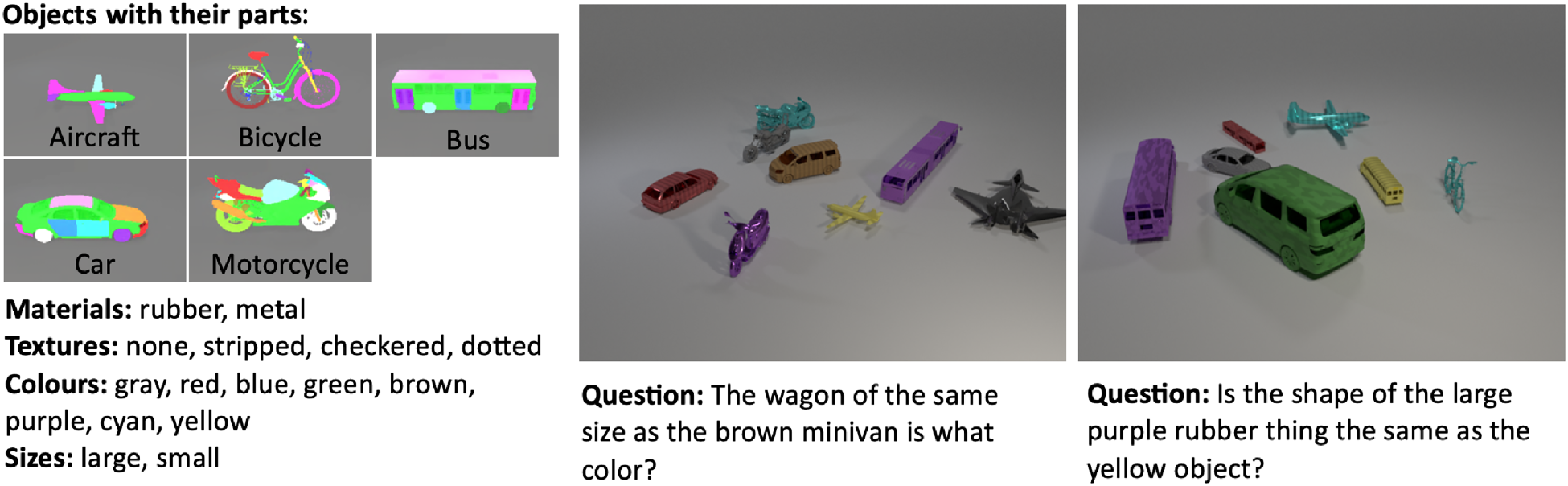

The figure on the left shows images from

Hierarchical Structure of Vehicle Classes and Their Corresponding Subclasses (Shapes) Defined in the Original

The

Throughout the article, we use a running example to illustrate our methodology: a rare slice from the

ImageNet

To validate our SDM framework on a real-world benchmark, we use the well-known

Inductive Logic Programming

Inductive logic programming (Muggleton, 1991) is a subfield at the intersection of ML and KRR that aims to find patterns in data by learning logical descriptions, utilising background knowledge (

ILP has applications in various fields, including robotics (Youssef & Müller, 2023), bioinformatics, for example, protein structure discovery (Turcotte et al., 1998), medicine, for example, drug design (Enot & King, 2003; Finn et al., 1998), and ECG waveform learning (Kókai et al., 1997); see Bratko and Muggleton (1995) and Lavrac and Dzeroski (1994) for further applications.

A number of ILP approaches and tools are available; for a comprehensive survey on ILP, we refer to Cropper et al. (2022). This work tests the following three ILP systems as symbolic reasoning components within the proposed neurosymbolic architecture for slice discovery:

The ILP systems we use, with the exception of

The ability of these three ILP systems to learn from noisy data is fundamental to the functioning of our SDM. Indeed, a rare slice can be interpreted as a set of exceptions on which a classifier underperforms. In order to find the pattern that characterises such a set of exceptions, we use the same idea as in Shakerin et al. (2017), which is to consider misclassifications as positive examples and correct classifications as negative examples to obtain rules describing rare slices.

Continued

Continuing our example, suppose the trained model frequently confuses “utility bike” with “mountain bike” and misclassifies it as “sports bicycle” instead of “urban bicycle”. After feeding these misclassifications (positive examples) and correct classifications (negative examples) into an ILP system, it might produce the following logical rule:

This rule provides a precise, human-readable diagnosis. It has learned that “a scene

Neurosymbolic Framework for Slice Discovery

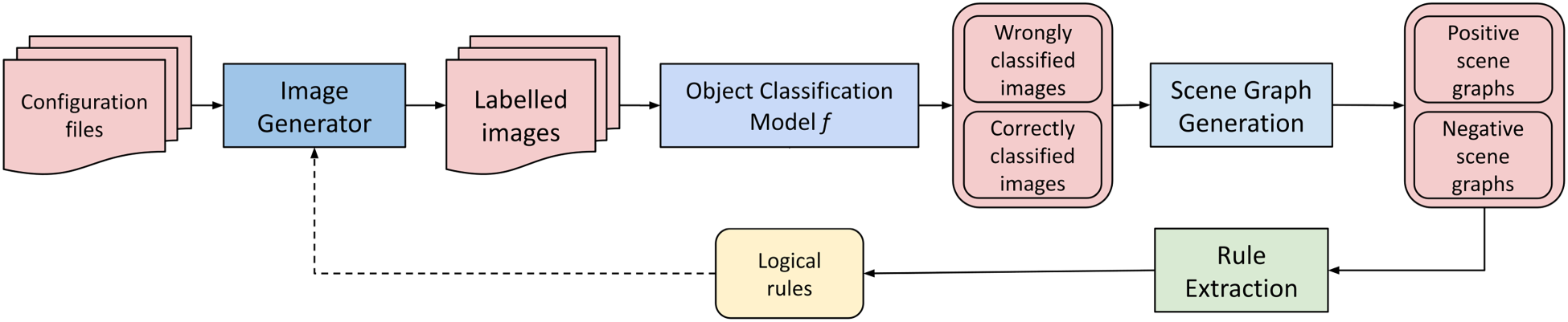

To construct a neurosymbolic SDM approach, we propose the system architecture shown in Figure 2. The system comprises several modules, shown as boxes, which process inputs in a pipeline. From configuration files or available data sources, datasets containing rare slices are constructed (either through generation or subsampling), on which a neural network model is trained and evaluated. Then, a semantic description of the images is produced, from which rules for detecting rare slices are extracted. Finally, the rules are used to generate further training data to mend the neural network model, thus closing the loop of model learning. In the following, we describe the tasks in the processing pipeline in more detail.

Overview of the proposed neurosymbolic SDM architecture. The solid arrows show the data flow, while the dashed arrow represents user input in selecting extracted logical rules to generate additional training data to improve classification performance.

According to Kautz’s taxonomy of neurosymbolic systems (Sarker et al., 2021), our SDM approach aligns with the [

The first step in the pipeline is data preparation, that is, producing datasets containing rare slices. We provide a methodology for this step, which is detailed in Section 5.

CV encompasses a wide range of tasks, among which image classification and object detection are two of the most prominent. Image classification assigns a single label to an entire image, identifying the most prominent object or scene within it. In contrast, object detection involves identifying and localising multiple objects within an image by predicting both their classes and bounding boxes. Our framework handles both tasks, but requires adjusting the processing pipeline accordingly.

At an abstract level, the task involves creating a labelled dataset

For illustration, in the

For datasets with a synthetic generator, such as

The

Object Detection and Image Classification

Once a dataset is prepared, we train and evaluate a neural network model to produce classification results that will be analysed for the discovery of rare slices. This process involves a standard training and validation cycle, followed by a specific step to categorise the results for our SDM pipeline. First, a model (e.g.,

These sets of positive (failure) and negative (success) examples constitute the input for the subsequent scene graph generation and rule extraction steps.
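The categorisation step described above can be sketched as follows. This is a minimal illustration, not the implementation used in this work: the `Prediction` record, the `split_examples` helper, the object identifiers, and the restriction to a single target class are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prediction:
    """One evaluated object (hypothetical record layout)."""
    object_id: str
    true_label: str
    predicted_label: str


def split_examples(predictions, target_class):
    """Split the objects of one target class into ILP examples.

    Misclassified objects become positive examples (failures);
    correctly classified objects become negative examples (successes).
    """
    positives, negatives = [], []
    for p in predictions:
        if p.true_label != target_class:
            continue  # only objects of the analysed target class are used
        if p.predicted_label == p.true_label:
            negatives.append(p.object_id)
        else:
            positives.append(p.object_id)
    return positives, negatives


# Hypothetical validation results for illustration only.
predictions = [
    Prediction("scene1_obj0", "urban bicycle", "sports bicycle"),
    Prediction("scene1_obj1", "urban bicycle", "urban bicycle"),
    Prediction("scene2_obj0", "sports bicycle", "sports bicycle"),
]
positives, negatives = split_examples(predictions, "urban bicycle")
```

Keeping only identifiers in the two sets leaves the semantic description of each object to the later scene graph step.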

Scene Graph Generation

Scene Graph Generation (SGG) involves creating a semantic graph representation from an input image. A scene graph is a (labelled) directed graph

In synthetic datasets like

Rule Extraction Via Inductive Logic Programming

We define an instance of the

The left side of the figure shows excerpts of positive and negative examples with their context-dependent background knowledge, in the language of

Then,

As an example of ILP encoding, Figure 3 shows excerpts of positive and negative examples and part of the mode bias used for

Consider a scene where “a yellow, rubber utility bike facing south” is misclassified by the object detector. Its corresponding scene graph is translated into a positive example for the ILP system for rule extraction. In the

The mode bias then defines the structure for the rules the ILP system can learn. For example,

Given the complete input in Figure 3,

Informally, these rules express that a scene is considered difficult for the model to classify if it contains a “utility bike” with specific attributes. For example, the first rule says that whenever there is a scene with a “large rubber utility bike facing south”, the neural network model will likely make a misclassification error on such an object.
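To make the informal reading concrete, the following sketch treats a rule as a conjunction of attribute constraints and checks whether a scene contains an object satisfying all of them. The dictionary encoding, the attribute names (`shape`, `size`, `material`, `colour`, `direction`), and the two example scenes are hypothetical and are not the ILP encoding used in this work.

```python
def object_matches(rule, obj):
    """True if the object satisfies every attribute constraint of the rule."""
    return all(obj.get(attribute) == value for attribute, value in rule.items())


def rule_covers(rule, scene):
    """True if at least one object in the scene matches the rule body."""
    return any(object_matches(rule, obj) for obj in scene)


# Hypothetical encoding of "large rubber utility bike facing south".
rule = {
    "shape": "utility bike",
    "size": "large",
    "material": "rubber",
    "direction": "south",
}

# Two hypothetical scenes, each a list of object attribute dictionaries.
hard_scene = [
    {"shape": "utility bike", "size": "large", "material": "rubber",
     "colour": "yellow", "direction": "south"},
]
easy_scene = [
    {"shape": "utility bike", "size": "small", "material": "metal",
     "colour": "red", "direction": "north"},
]

covered_hard = rule_covers(rule, hard_scene)
covered_easy = rule_covers(rule, easy_scene)
```

Attributes that the rule does not mention, such as `colour` here, are left unconstrained, which is what lets a short rule describe a whole slice rather than a single object.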

The

Model Mending

The final step in our SDM pipeline is model mending, where the rules extracted in the previous stage are used to correct model deficiencies. In particular, we note that while ILP systems automatically extract logical rules from validation examples, our current SDM implementation requires user input for hypothesis formation and rule selection. The user analyses the extracted rules to identify common patterns and formulate generalised candidate rules for model mending. This

Continued

Figure 4 shows the effectiveness of our model mending process: before the intervention, the model misclassifies the “utility bike” rare slice as “sports bicycle”, whereas after retraining it with data guided by the extracted rules, it correctly classifies the “utility bike” as “urban bicycle”.

The left figure shows a scene (based on the

Motivated by the limitations of existing rare slice generation methods in our context and the need for a reliable testbed for our SDM, we present a taxonomy-based methodology to induce the occurrence of controlled rare slices in a model.

In our framework, following the characterisation introduced by Domino (Eyuboglu et al., 2022), we define a rare slice as an object subclass that appears infrequently in the dataset and on which the model underperforms. Therefore, a rare slice has two key properties:

While statistical rarity can be directly controlled (e.g., by setting a low occurrence probability via the

To formally identify these underperforming slices, we use a main performance metric for each task as a proxy for difficulty. A target class is flagged as possibly containing a rare slice if its metric falls below a dataset-dependent target class threshold
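As an illustration of this flagging step, the sketch below computes a per-class metric and reports the classes that fall below a threshold. Recall is used here as an assumed choice of metric, and the labels and the threshold value of 0.8 are hypothetical.

```python
from collections import Counter


def per_class_recall(true_labels, predicted_labels):
    """Recall of each class: correctly predicted instances / all instances."""
    totals, hits = Counter(), Counter()
    for truth, predicted in zip(true_labels, predicted_labels):
        totals[truth] += 1
        if truth == predicted:
            hits[truth] += 1
    return {label: hits[label] / totals[label] for label in totals}


def flag_target_classes(recall_by_class, threshold):
    """Classes whose recall is below the threshold may contain a rare slice."""
    return sorted(c for c, r in recall_by_class.items() if r < threshold)


# Hypothetical validation labels for illustration only.
true_labels = ["urban bicycle"] * 4 + ["sports bicycle"] * 4
predicted_labels = (
    ["urban bicycle", "urban bicycle", "sports bicycle", "sports bicycle"]
    + ["sports bicycle"] * 4
)

recall_by_class = per_class_recall(true_labels, predicted_labels)
flagged = flag_target_classes(recall_by_class, threshold=0.8)
```

A flagged class is only a candidate: whether it actually contains a rare slice is decided by the subsequent rule extraction.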

Our methodology for generating rare slices begins with a given class hierarchy

Following the above steps, specific rare slices can be generated depending on the taxonomy under consideration. Furthermore, for each generated image, the

Experiments

The proposed SDM approach was evaluated in a series of experiments aimed at assessing the effectiveness of rare slice generation, rule extraction, and model mending. To demonstrate the versatility of our approach, we performed the evaluation on two distinct benchmarks: the synthetic

Evaluation Platform

The evaluation platform is a server running Ubuntu 22.04.2 LTS (kernel version 6.8) with two Intel Xeon Silver 4314 CPUs (each having 16 cores at 2.40GHz, 2 threads per core, and 24MB of cache), 1,024GB of DRAM, four NVIDIA RTX A5000 GPUs (each having 24GB of VRAM), and the CUDA 12.2 API.

Super-CLEVR Experiments

This section details the experimental setup and presents the results from our evaluation of the proposed SDM architecture for the object detection task on the generated

Experimental Setup

In the following, we describe the experimental setup for each module of our SDM architecture.

Note that we purposely designed


All hyperparameter values mentioned were empirically fine-tuned by exploratory experimentation. Specifically, we selected several reasonable values to test different configurations of ILP systems in extracting meaningful rules describing rare slices. All other system hyperparameters use default values. A set of rules was obtained for each experimental configuration based on the hierarchy, target class, ILP system, and hyperparameter values considered. This comprehensive evaluation allows us to assess the effectiveness, speed, and verbosity of each ILP system and to verify that the identified rare slices are consistent across different configurations.
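The set of experimental configurations can be enumerated as a Cartesian product, as in the sketch below. All names and values (`hierarchy_a`, `class_1`, `system_x`, `max_rule_size`, `noise_tolerance`, and the grids themselves) are placeholders chosen for the example and do not correspond to the actual hierarchies, ILP systems, or hyperparameters used in the experiments.

```python
from itertools import product

# Placeholder experiment description; every name and value is hypothetical.
target_classes = {
    "hierarchy_a": ["class_1", "class_2"],
    "hierarchy_b": ["class_3"],
}
ilp_systems = {
    "system_x": {"max_rule_size": [4, 6]},
    "system_y": {"noise_tolerance": [0.1, 0.2, 0.3]},
}


def enumerate_configurations(target_classes, ilp_systems):
    """One configuration per (hierarchy, target class, ILP system, values)."""
    configurations = []
    for hierarchy, classes in target_classes.items():
        for target_class in classes:
            for system, grid in ilp_systems.items():
                names = sorted(grid)
                # Cartesian product over the value lists of this system's grid.
                for values in product(*(grid[name] for name in names)):
                    configurations.append({
                        "hierarchy": hierarchy,
                        "target_class": target_class,
                        "ilp_system": system,
                        "hyperparameters": dict(zip(names, values)),
                    })
    return configurations


configurations = enumerate_configurations(target_classes, ilp_systems)
```

Running one rule extraction per configuration and comparing the resulting rule sets is one way to check that the same rare slice is identified across configurations.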

Rules extracted by

In the following, we present the experimental results for rare slice generation, rule extraction, and model mending from the iterative application of our SDM architecture.


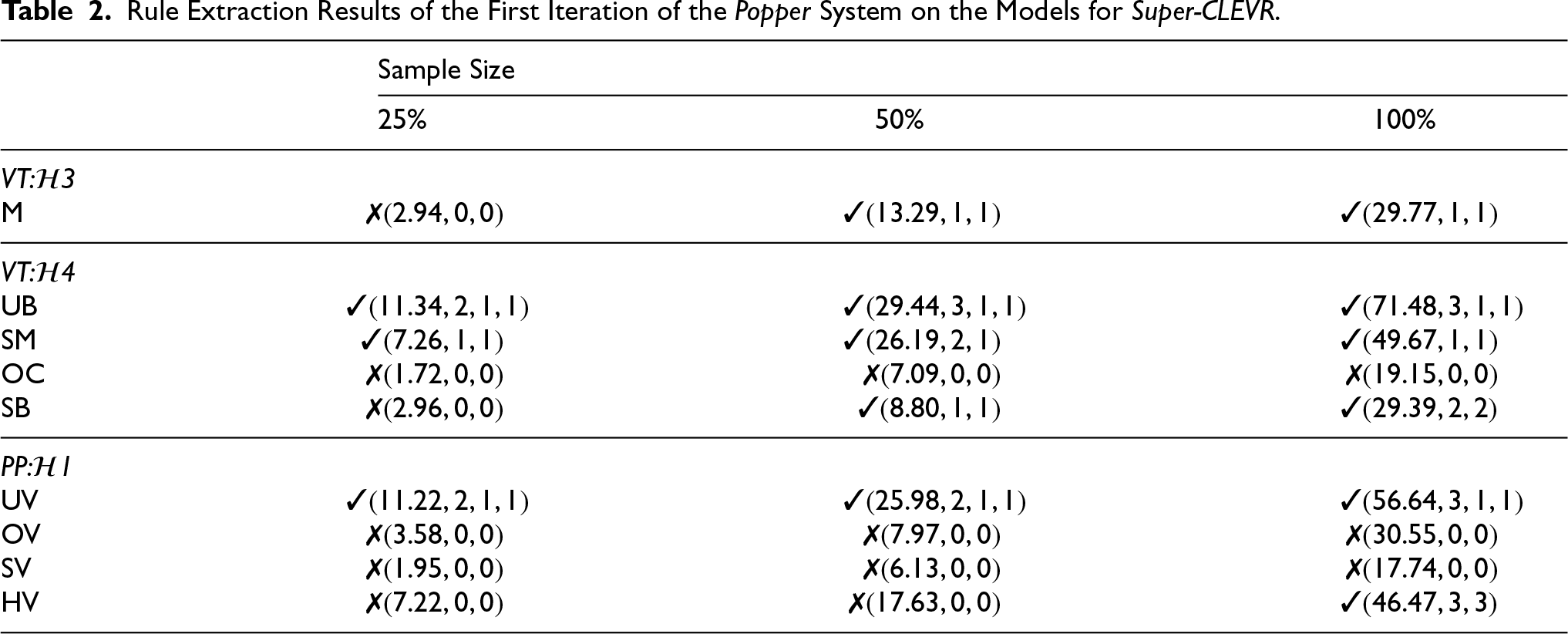

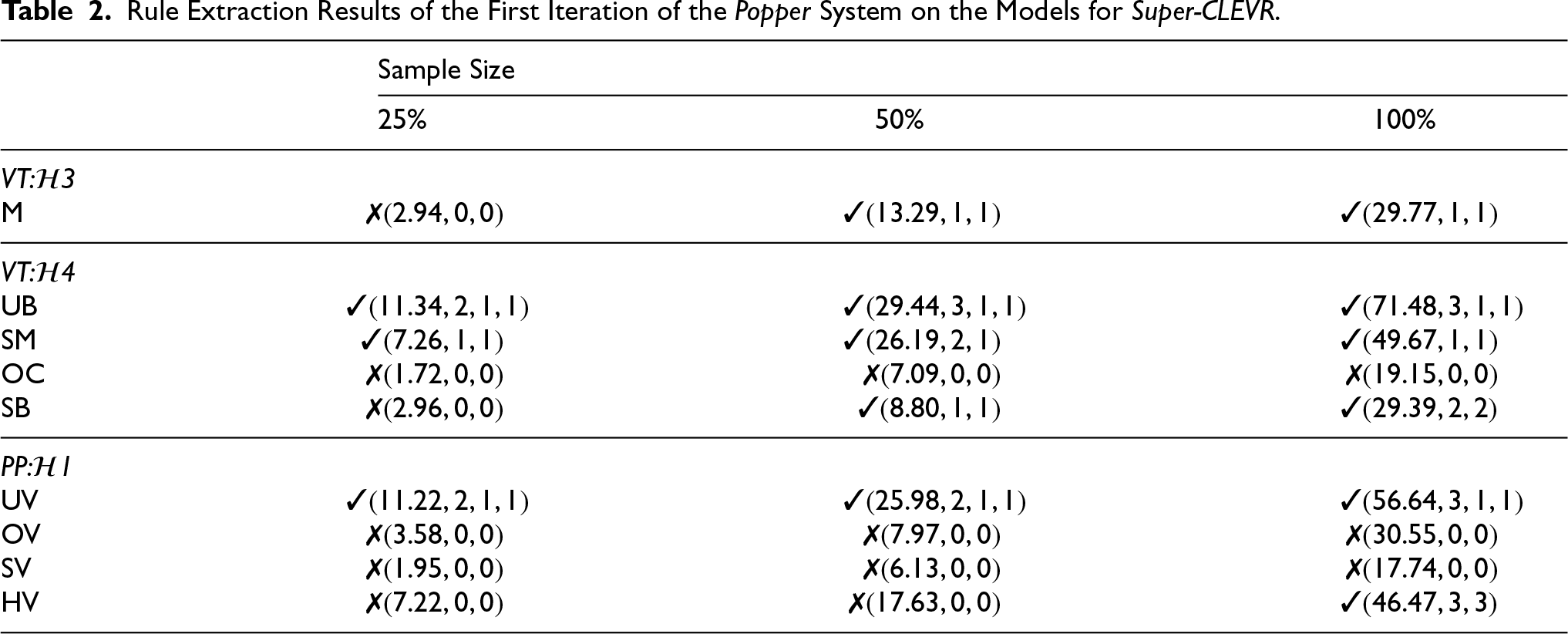

Rule Extraction Results of the First Iteration of the Popper System on the Models for Super-CLEVR.

Rule Extraction Results of the First Iteration of the

Rule Extraction Results of the First Iteration of the

Rule Extraction Results of the First Iteration of the

Motorcycle Rule Extraction Results of the “motorcycle” Class of the First Iteration on the

where

Rule Extraction Results of the First Iteration for the “specialised bus”, “offroad car”, “sports motorcycle”, and “urban bicycle” Classes.

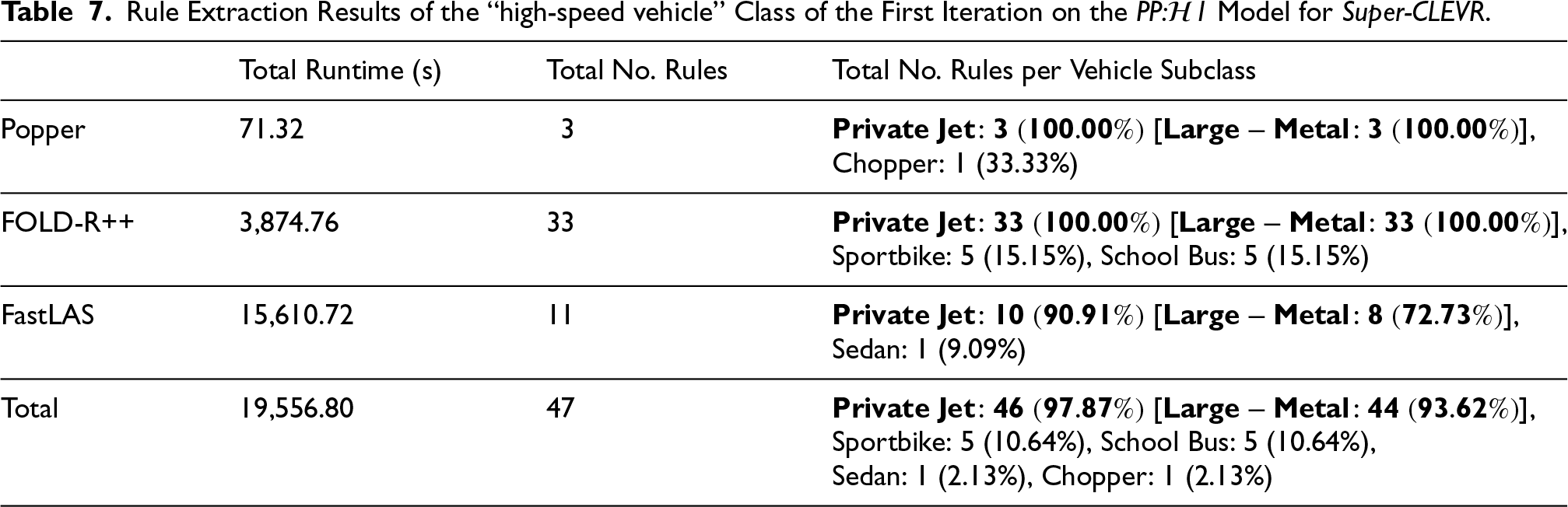

Rule Extraction Results of the First Iteration for the “high-speed vehicle”, “offroad vehicle”, “specialised vehicle”, and “urban vehicle” Classes.

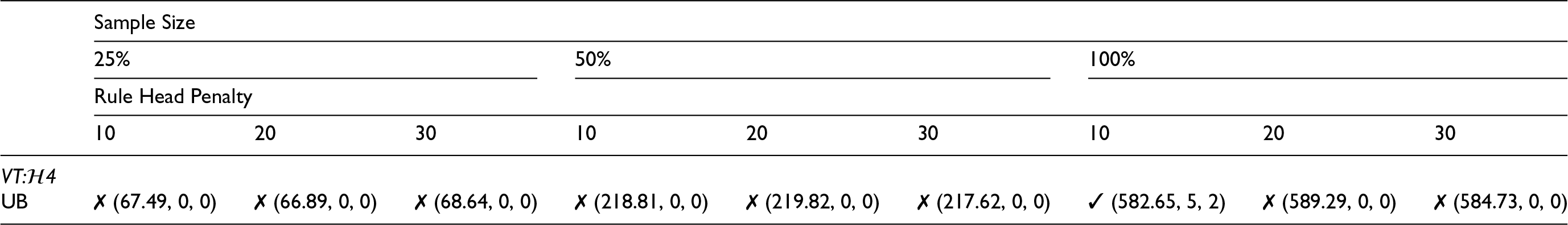


The mending process was successful across all hierarchies, substantially improving the recall of the target classes; notably, the overall performance of the model for VT:

Urban bicycle Rule Extraction Results of the Second Iteration of the

Rule Extraction Results of the Second Iteration of the

Rule Extraction Results of the Second Iteration of the

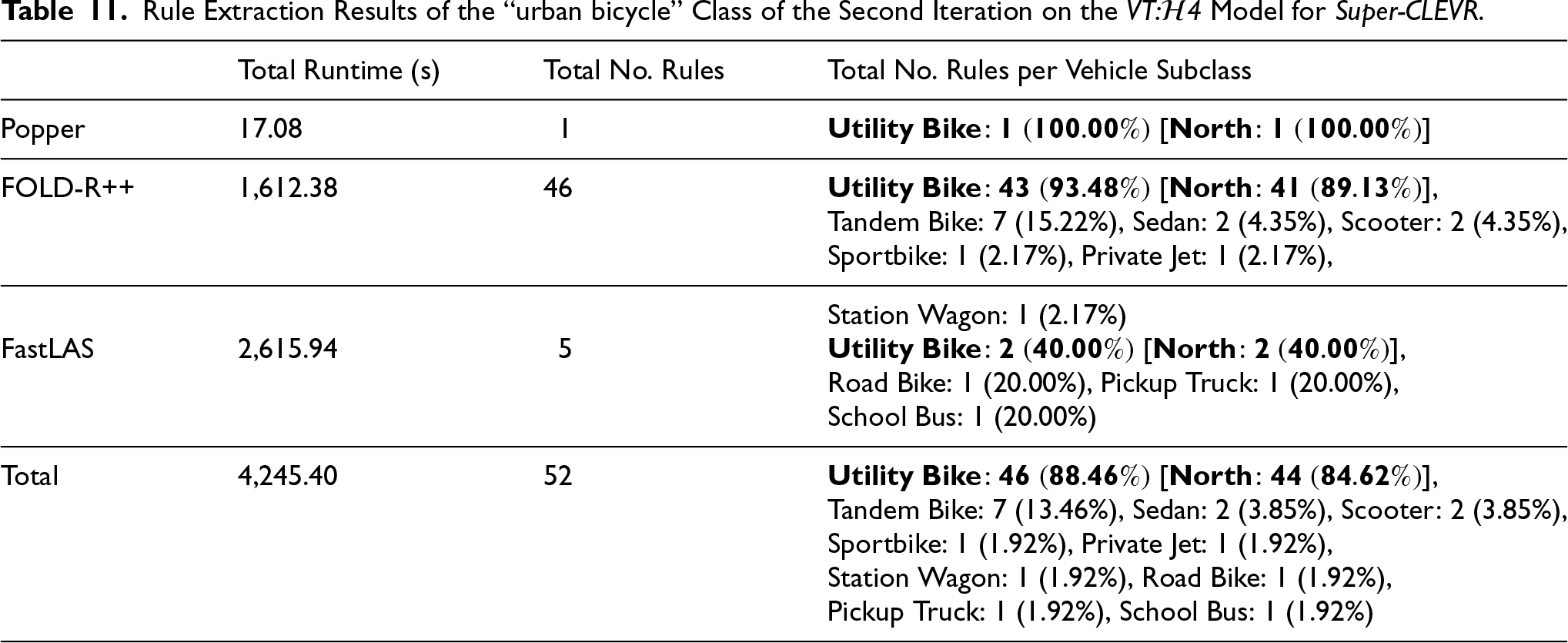

Rule Extraction Results of the “urban bicycle” Class of the Second Iteration on the

The model retrained for an additional 20 epochs proved to be the most effective. This second intervention successfully resolved the persistent slice, as shown in Figure 24 in Appendix C. The recall for the problematic “urban bicycle” class rose significantly from

This section details the experimental setup and presents the results from our evaluation of the proposed SDM architecture for the image classification task on a curated subset of the

Experimental Setup

In the following, we describe the experimental setup for each module of our SDM architecture.

Problematic target classes are those with a While the hyperparameter settings for

Then, the extracted rules are analysed to form candidate hypotheses, which are formally selected if they meet a predefined rare slice hypothesis threshold

Experimental Results

In the following, we present the experimental results for rare slice generation, rule extraction, and model mending from the iterative application of our SDM architecture.

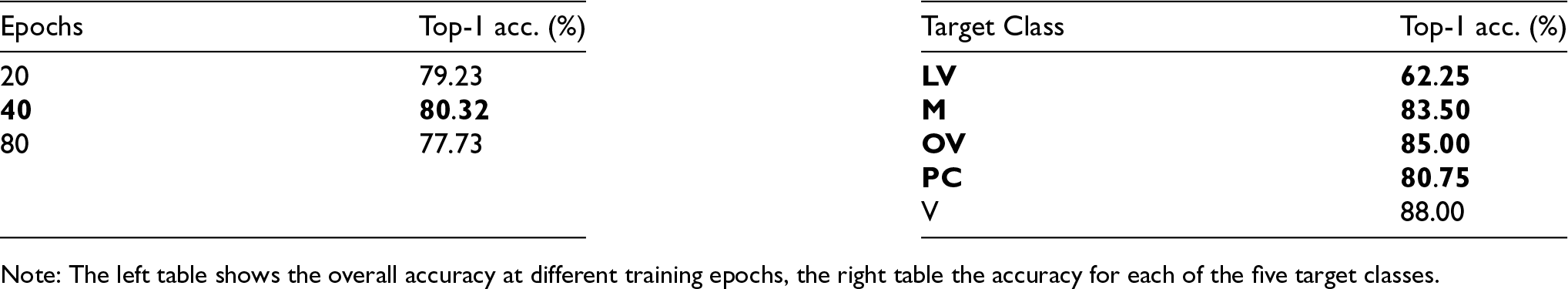

Top-1 Accuracy of the VE:

1 Model on the ImageNet Validation Set after the Initial Model Training.

Top-1 Accuracy of the

Rule Extraction Results of the First Iteration of the

Rule Extraction Results of the First Iteration of the

Rule Extraction Results of the First Iteration of the

Leisure vehicle Rule Extraction Results of the “leisure vehicle” Class of the First Iteration on the

Motorcycle

Offroad vehicle

Passenger car

Top-1 Accuracy of the

The left figure shows a scene, based on

Our experiments, conducted on both the synthetic

Comparison of ILP Systems

Our comparative analysis among the three ILP systems –

In addition to requiring less data for slice discovery, smaller samples have the advantage of significantly reducing the running time of ILP systems. In particular, using smaller samples was necessary for

Impact of Model Mending

The model mending phase, guided by the rules extracted via our SDM, proved highly effective across all hierarchies. By augmenting the training set with new images specifically targeting the identified rare slices, we achieved substantial improvements in model performance. For instance, in the challenging VT:

Finally, the results across both the

Comparison With Existing Methods

As detailed in Section 2, the state-of-the-art can be broadly categorised, and our neurosymbolic architecture offers a distinct alternative that emphasises logical rule extraction. Several methods in slice discovery and rare data mining, such as

Our neurosymbolic SDM contrasts with these methods by prioritising interpretability and targeted causality. The main technical difference is the use of ILP to move from systematic model errors to a set of compact, human-readable, and formal logical rules describing them. This provides several advantages:

In summary, our work addresses the fundamental challenge of making slice discovery interpretable and directly actionable for targeted model correction.

Limitations

For each CV task, our SDM approach builds on the availability of scene graph representations to extract interpretable logical rules describing “hidden” rare slices, that is, underperforming subsets of data not explicitly labelled and difficult to spot from unstructured data, such as images. These representations provide the rich semantic structure necessary for our method. However, scene graphs are generally not readily available for datasets, especially in real-world scenarios. Nevertheless, rapid advances in Vision Language Models (VLMs) are making it increasingly feasible to semi-automatically generate (curated generation) such semantic representations from image data. In our ongoing work, we are actively and systematically exploring the integration of VLMs to automate the scene graph generation step, as we have experimented here for real-world images from the

A second limitation is the current reliance on manual, exploratory tuning for the hyperparameters of the ILP systems. While our experiments show that robust rules can be found by testing a range of configurations, this process can be time-consuming and may require domain expertise.

Furthermore, ILP systems can be computationally intensive and may scale poorly, especially with large validation sets or with a complex hypothesis space defined by numerous attributes and predicates. As observed in our experiments,

Finally, the current implementation of our SDM focuses on discovering rare slices defined by object attributes (e.g., “a yellow rubber utility bike facing south”). A limitation of this approach is that it does not yet consider slices defined by the relationships between objects (e.g., “a bicycle next to a car”). Extending the proposed SDM to incorporate object relations would allow for the discovery of more complex rare slices, providing deeper insights into model failures.
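To illustrate what a relation-aware representation might look like, the sketch below stores object attributes on nodes and relations on labelled directed edges. This is a hypothetical illustration of the possible extension, not part of the current implementation; the `SceneGraph` class, the `category` attribute, and the `next_to` relation name are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class SceneGraph:
    """Labelled directed graph: attributed nodes and relation-labelled edges."""
    nodes: dict = field(default_factory=dict)  # object id -> attribute dict
    edges: list = field(default_factory=list)  # (source id, relation, target id)


def has_relation(graph, source_category, relation, target_category):
    """True if some edge links objects of the given categories by the relation."""
    return any(
        label == relation
        and graph.nodes[source]["category"] == source_category
        and graph.nodes[target]["category"] == target_category
        for source, label, target in graph.edges
    )


# Hypothetical scene: "a bicycle next to a car".
graph = SceneGraph(
    nodes={
        "o1": {"category": "bicycle", "colour": "yellow"},
        "o2": {"category": "car", "colour": "blue"},
    },
    edges=[("o1", "next_to", "o2")],
)

bicycle_next_to_car = has_relation(graph, "bicycle", "next_to", "car")
car_next_to_bicycle = has_relation(graph, "car", "next_to", "bicycle")
```

Because edges are directed, a symmetric relation such as `next_to` would need to be stored in both directions, or handled symmetrically in the check, for the second query to succeed.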

Conclusion and Future Work

In this work, we have presented a neurosymbolic approach to address the slice discovery problem. In particular, we have provided a modular architecture and an implementation that connects dataset generation (or subsampling), model classification, and rule extraction via ILP to identify misclassified rare slices. Our experiments, conducted on both the synthetic

The results obtained are encouraging and demonstrate the potential of simultaneously exploiting DL and KRR methods for slice discovery. In this way, compact and human-readable logical rules can be extracted that improve the interpretability and explainability of a CV model under examination, also paving the way to advanced concepts such as causal and contrastive explanations.

Footnotes

Funding

The project leading to this research has received funding from the European Union's Horizon 2020 research and innovation programme under grant agreement No 101034440. Additionally, this research was funded in whole or in part by the Austrian Science Fund (FWF) 10.55776/COE12, and it was supported by Bosch Center for AI (BCAI) in Renningen, Germany.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.