Abstract

Management scholars now recognize the important contribution that replication research can make to our field by examining the robustness of scientific findings and boundary conditions of previously tested relationships. Replications can also serve as an important tool for training doctoral students. Despite these strong arguments for conducting replication research, the field has been slow to respond to calls for increased use of replication. In part, this may be influenced by concerns that reviewers will discount the contribution of replication research. A less frequently discussed barrier to replication, however, is that due to a lack of replication training, engaging in this type of research constitutes embarking on unfamiliar territory. In this piece, I attempt to address the need for replication research training by providing researchers with a roadmap for taking replication ideas from conceptualization to publication, drawing on my own experience publishing replications and observations in the evolving replication literature.

Introduction

“Do you really think you can get tenure based on

The goal of this editorial is to provide researchers with a set of basic guidelines for conceptualizing, designing, and publishing replication research. This will include advice on identifying the different purposes of a replication study, choosing a target study to replicate, designing a replication study, and various aspects associated with preparing the paper for journal submission. It is not meant to be an exhaustive list of guidelines. Rather, it serves as a starting point for members of a field that recognizes the need for replication but lacks resources to guide researchers in conducting it. I will provide this guidance by drawing on my own experience publishing replication research (Kalsher et al., 2019; Obenauer, 2023; Obenauer & Kalsher, 2023; Obenauer & Rezaei, 2023) as well as patterns that I have observed in the literature.

Before beginning that process, however, it is important to clarify the language that will be used in this discussion. Prior research has discussed types of replications in detail, so the discussion of replication types here will be brief. Reproducibility refers to reanalyzing a dataset, whereas replication refers to the reexamination of relationships through the use of a different dataset (Köhler & Cortina, 2021). Literal reproducibility (Cortina et al., 2023) and literal replication (Köhler & Cortina, 2021) refer to studies where researchers attempt to follow the research procedures of the target study as closely as possible. Constructive reproducibility (Cortina et al., 2023) and constructive replication (Köhler & Cortina, 2021) refer to studies in which researchers follow some procedures from the target study, but make strategic modifications to more robustly test a phenomenon or strengthen insights derived from the research. Although reproducibility and replication are distinct terms, for improved readability, I will use replication as an all-encompassing term except when it is important to distinguish between reproducibility and replication.

Identify replication purpose

Before choosing a replication target, it is important to identify the purpose of your replication study. Identifying the purpose will help you select a replication target and frame your research properly for your audience. A non-exhaustive list of potential purposes includes (a) update an accepted theory after a relevant event, (b) test an established theory with stronger methodology, (c) test the boundary conditions of theory, (d) advance an accepted methodological precedent, (e) educate doctoral students on various steps in the research process (

It is critical that researchers have a passion for the identified purpose of replication because, as described above, the publication process

While the purpose of the research will undoubtedly be influenced by the authors’ passions and interests, it should also include a theoretical element. Management research has trended away from testing established relationships and has become dominated by manuscripts that are perceived to make strong theoretical contributions (Colquitt & Zapata-Phelan, 2007). Through this transition, management scholars have positioned theoretical contribution as a critical differentiating factor (Geletkanycz & Tepper, 2012) and gone so far as to question the value of research with limited theoretical contribution (Sutton & Staw, 1995). Therefore, researchers pursuing replication should incorporate a theoretical purpose into their work as well.

For example, early in my career, I chose to pursue replication in a special issue for pragmatic purposes. Identifying this purpose allowed me to also identify my potential audience. From a research perspective, my interest was examining the impact of different experimental design choices on research outcomes. I thought this could make an important contribution to future research design. While this would fall under the category of “testing the boundary conditions of theory,” I recognized that it was unlikely to meet reviewers’ standards for a theoretical contribution. Therefore, I sought to identify an additional purpose for my research that I found interesting but would also help me present the value of this research to reviewers. Given its relevance to my research stream, I identified the potential to update leadership categorization theory and the role of race in the leadership prototype (Lord & Maher, 1993; Rosette et al., 2008) following a period of more diverse representation in leadership positions. Although this was not my initial motivation for conducting replication, this purpose became the primary framing for my manuscript (Obenauer & Kalsher, 2023) and it allowed me to pursue the aforementioned purposes of my research.

Choosing a replication target

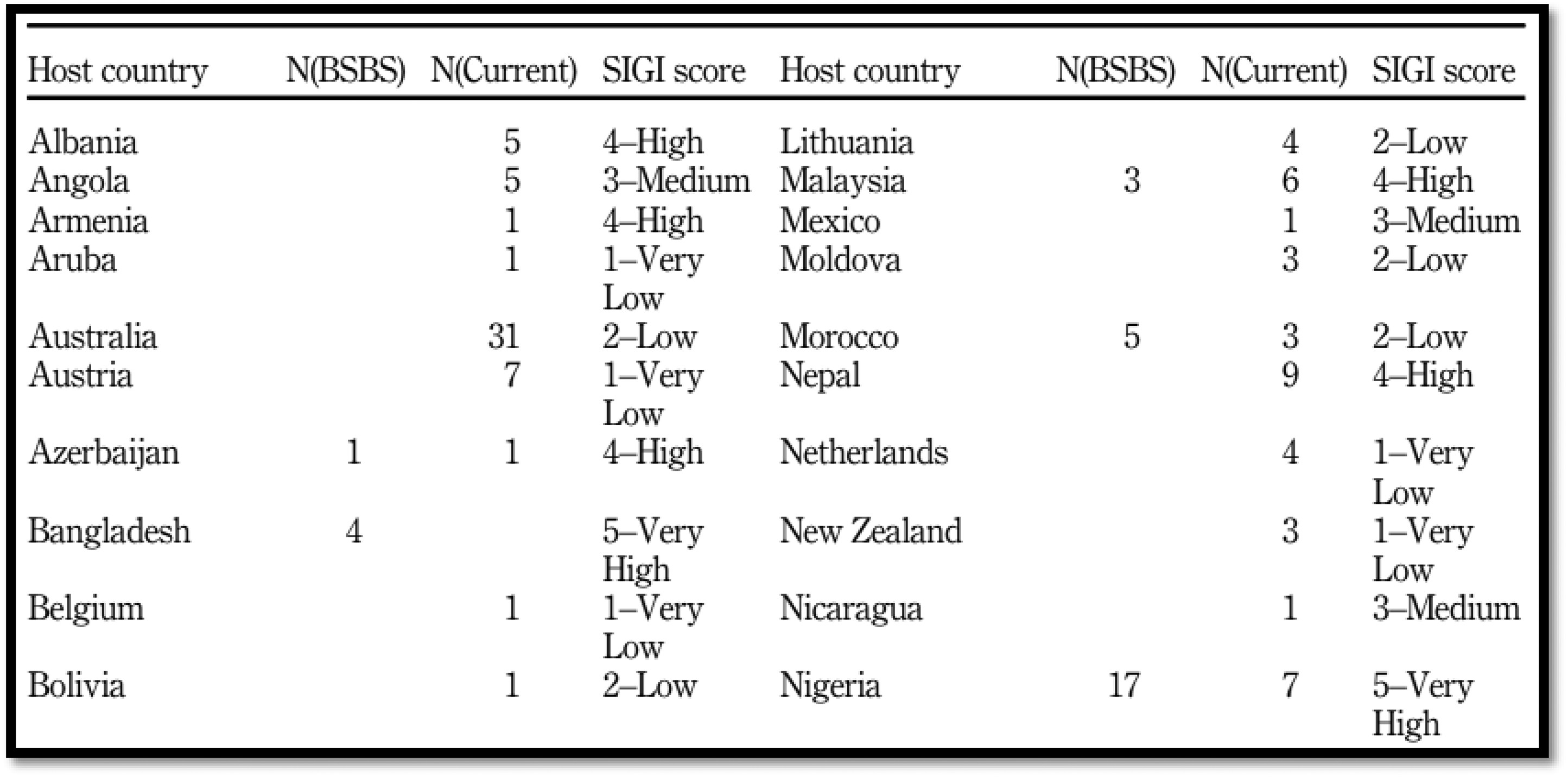

After identifying a purpose for replication, researchers should identify a target study to replicate. This is often an expectation of editors and reviewers. For instance, JOMSR advises that “authors should clearly identify the original article (i.e., with a citation) being reproduced/replicated” (Kraimer et al., 2023, p. 12). Even when such an expectation is not set, it may be implicit as this is the norm. Table 1 provides information on the targets of 29 replication publications published in four different management journals. All but five of them have a single clearly identified target of replication. In my own work, even when a replication could apply to multiple studies, I have clearly identified a focal target (e.g., Kalsher et al., 2019). Doing so clarifies for the reader what they should be using as a referent point.

Table 1. Targets of 29 published replication studies

Replication published = citation for the replication research published; Replication target = citation for the replicated research (i.e., the target study); Target citations = target study's citation count at time of submission; Target FT50 = a binary indicator of whether the target appears on the Financial Times’ “50 Journals used in FT Research Rank” list; Lag = years between target study publication and replication submission.

There are several factors that researchers will want to consider when selecting a replication target. I recommend beginning by attempting to identify studies that are aligned with your identified purposes. For example, when attempting to publish in a special issue in The Leadership Quarterly (Obenauer & Kalsher, 2023), I needed to identify a study that was relevant to leadership theory. To meet my methodological objective, I had to identify potential targets in which the research design was clearly presented and aspects of design that I was interested in manipulating could be altered such that effects of these manipulations could be measured. To meet the theoretical goal of updating leadership categorization theory following an increase in demographic representation in leadership roles, I had to identify a study that was published prior to notable changes in leadership representation. Rosette et al.'s (2008) paper met all of these criteria.

It is also important to remember that reviewers, editors, and potential readers will care about the impact of the work you are replicating. In other words, the value of replicating a low-impact publication is likely to be perceived as minimal. With 341 citations at the time of submission and multiple popular press mentions, the Rosette et al. (2008) paper met this criterion as well. More than half of the replication targets shown in Table 1 had at least 200 citations, with nine having 600 citations or more, when the replication was first submitted for peer review. Only three replication targets on this list had fewer than 50 citations.

When considering a replication target that is not well-cited, there should be other important indicators of impact. For example, Bermiss et al. (2023) replicated a pair of studies with conflicting findings that had been widely discussed in the academic community and popular press. In Obenauer and Rezaei (2023), we replicated a paper that was only cited 58 times, but it was an award-winning paper. Additionally, we were specifically targeting a paper based on a call for submissions that said, “The submission may be a replication of an article published in JGM or a replication of a study with an appropriate topic for JGM but published in another journal” (Selmer et al., 2022). Based on this statement and the fact that the call for papers was for a special issue celebrating the first decade of the journal's existence, we believed that replicating a publication from the

After deciding to restrict our target to a publication in a relatively new journal, we recognized that we would have to look at impact differently. We started by building a spreadsheet with data on every article in the journal (see Table 2). We then sorted the table by download count as we considered downloads representative of how many people read an article and were informed by its findings. We then reviewed the abstracts of the ten articles with the highest download count to identify (a) their alignment with our expertise, (b) the importance of their theoretical contribution, (c) their potential replicability, and (d) whether we could identify a relevant post-publication event that would justify updating the theory through replication. Using these criteria, we identified Bader et al. (2018) as an appropriate target. The fact that the paper won a Literati Award strengthened our justification for replication as it was another indicator of impact.

Table 2. Sample target study evaluation table
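The shortlisting step described above (sort every article in the journal by download count, then review the top ten) can be sketched in a few lines. This is only an illustration: the `Article` fields, the `shortlist_by_downloads` helper, and the placeholder records are all hypothetical and are not the data from the evaluation spreadsheet.

```python
from dataclasses import dataclass


@dataclass
class Article:
    """One row of a hypothetical target-evaluation spreadsheet."""
    citation: str
    downloads: int
    citations: int


def shortlist_by_downloads(articles, top_n=10):
    """Return the top_n articles, ordered from most to fewest downloads."""
    return sorted(articles, key=lambda a: a.downloads, reverse=True)[:top_n]


# Placeholder records for illustration only (not real articles or counts).
articles = [
    Article("Placeholder A (2015)", downloads=1200, citations=40),
    Article("Placeholder B (2018)", downloads=5300, citations=75),
    Article("Placeholder C (2020)", downloads=800, citations=5),
]

shortlist = shortlist_by_downloads(articles, top_n=2)
for rank, article in enumerate(shortlist, start=1):
    print(rank, article.citation, article.downloads)
```

The shortlist only narrows the field. Criteria (a) through (d) still require reading the abstracts and exercising judgment.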

Similarly, in Kalsher et al. (2019), we targeted Shaver et al.'s (2006) published conference proceedings. Selecting a conference proceedings piece that was not highly cited as a target was a risky prospect. We justified this decision by focusing on the importance of the research question, its position in the research stream, and its relevance to the journal, while arguing that we used replication as a vehicle for examining the research question rather than focusing on the target study. In the cases of both Kalsher et al. (2019) and Obenauer and Rezaei (2023), our replication target selection was justified, but risky. Had we been unable to publish our replications in the targeted journals, our potential outlets for subsequent submissions may have been limited. Researchers should carefully consider such risks when selecting replication targets.

Lastly, before finalizing the selection of a replication target, I encourage researchers to conduct a thought experiment regarding the potential contribution of their research. This process should occur after preliminary selection of a replication target, sometime during the design phase. In this thought experiment, the researcher must recognize that a replication does not simply succeed or fail as a whole: some findings of the target study may replicate while others do not. The researcher should therefore consider every possible combination of outcomes. For example, if a replication would retest three different hypotheses, each of which can either replicate or fail to replicate, there are 2 × 2 × 2 = 8 potential combined outcomes (see Figure 1). The researcher should attempt to identify the potential contribution for each combination of outcomes. If they cannot do so, they may want to reevaluate why they believe the target study is appropriate for replication.

Figure 1. Potential outcomes to consider when evaluating the replication study's potential contribution(s)
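For researchers who want to work through this thought experiment systematically, the combinations can be generated rather than listed by hand. The sketch below is a minimal illustration. The hypothesis labels and the `enumerate_outcomes` helper are hypothetical, and it assumes each hypothesis has only two possible results.

```python
from itertools import product


def enumerate_outcomes(hypotheses):
    """List every combination of replicated / not replicated results."""
    labels = ("replicated", "not replicated")
    return [
        dict(zip(hypotheses, combo))
        for combo in product(labels, repeat=len(hypotheses))
    ]


# Three retested hypotheses give 2 ** 3 combinations.
outcomes = enumerate_outcomes(["H1", "H2", "H3"])
for number, outcome in enumerate(outcomes, start=1):
    print(number, outcome)
```

Each printed row can become a row in a planning table with an added column for the contribution the researcher would claim under that outcome. A row that is hard to fill in signals a weak case for the target.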

Design

Hypothesizing

In the planning stages, it may seem like identifying whether you replicated the results of your target study will be obvious. In practice, however, if your criteria for successful replication are not clearly defined a priori, this can become a point of debate in the review process. I have found that the most effective way of addressing this issue is to clearly define the hypotheses that you will be testing and connect them to your definition of successful replication.

For example, when conducting literal replication, the researcher can simply restate the hypotheses from the target study that they will be retesting (e.g., Bermiss et al., 2023; Hamdani et al., 2023; Obenauer & Kalsher, 2023; Obenauer & Rezaei, 2023). When introducing new variables, it is helpful if the researcher motivates the introduction of these variables and proposes new hypotheses to test that will generate an understanding of the impact of these variables (e.g., Hammond et al., 2023; Obenauer, 2023; Obenauer & Rezaei, 2023). Choosing not to articulate hypotheses and instead posing a research question is also possible (e.g., Chang et al., 2016; Hopp & Pruschak, 2023; Kalsher et al., 2019), though this method can make defining successful replication more difficult. As shown in the cited examples, I have used all three approaches. Which approach is most appropriate will be guided by the identified replication purpose.

Replication approach

An important decision to make in the design process is what type of study will be conducted. Given the previously discussed emphasis on theoretical contributions in the field of management, reviewers tend to respond more favorably to constructive replication studies. With that said, constructive replication studies can be strengthened by starting with a literal replication study. Literal replication studies allow researchers to establish whether they could replicate the relationships established in the target study before implementing any strategic modifications to research procedures. Failing to establish this limits the researcher's ability to infer whether deviations in findings were the result of strategic modifications to research procedures or an idiosyncratic effect.

For example, in Obenauer (2023), which is a constructive reproducibility study, I sought to understand how factors such as a job applicant's name having Arabic origins or the name's overall frequency within the population influenced the findings of Bertrand and Mullainathan's (2004) seminal research on the relationship between the job applicant's race and their likelihood of receiving an invitation to interview. Before testing these relationships, however, I had to demonstrate that I could reproduce the results of the original study through literal reproduction using the same data. Failure to do so would prevent me from reasonably inferring that deviations in findings from the target study were caused by the introduction of new variables that account for other aspects of an applicant's name. By first establishing that I could reproduce the results of Bertrand and Mullainathan's (2004) research when following their methodology, I was able to strengthen the theoretical contribution of my subsequent constructive reproducibility study.

Similarly, in Obenauer and Kalsher (2023), we used literal replication to examine the relationships studied by Rosette et al. (2008) before conducting constructive replications. In this research, however, our literal replications yielded different results from those of the target studies. Therefore, we then repeated one of the literal replications to reduce the likelihood that deviations in findings were idiosyncratic. After repeatedly failing to replicate the results of the original research, we

One potential risk to publication when beginning with literal replication is that, despite its importance to making a strong theoretical contribution through subsequent studies, reviewers and potential readers may have limited interest in these studies because of our field's emphasis on theory. At the same time, demonstrating how closely a researcher was able to follow the methodology of the original study requires great detail. While this could create a conundrum for researchers, advancements in technology have helped to address this challenge. I share the extensive details of literal reproducibility and replication studies in Open Science Framework (OSF) projects while reporting critical details in the primary manuscript (see https://osf.io/jq5f9/?view_only=921284f626d849979ca5b6871c0a9bd6 for an example).

Another consideration that researchers will have to ponder is what study(ies) within a paper to replicate, as many manuscripts with appropriate potential replication targets will include multiple studies. There is no single correct formula to answer this question. For instance, while Obenauer and Kalsher (2023) conducted a series of literal and constructive replications of two studies from Rosette et al. (2008), Ubaka et al. (2023) concurrently conducted a single replication of each study (four total) in the same manuscript.

Footnote 1. Both replication manuscripts were published in the same special issue of

When choosing to replicate one, some, or all studies within a manuscript, researchers will need to consider tradeoffs. Replicating all studies may limit the potential for constructive replications. For example, if the manuscript being replicated has four studies, one literal replication and two constructive replications of each study would result in 12 replications. Conversely, replicating only one or two studies within the manuscript may limit the researchers’ ability to examine a phenomenon that includes multiple relationships. While there is no “right” answer about whether to replicate one, some, or all studies reported in a manuscript, researchers should be deliberate in this decision and clearly communicate how their strategic choice enhances their contribution to the literature.

Power

A potential misconception about replication is that researchers should also seek to replicate the sample size of the target study. This can be problematic as the target study may be underpowered. Additionally, detecting the smaller effect sizes that are frequently present in replication (Camerer et al., 2018) may require larger sample sizes. Replication researchers should conduct independent power analyses and use these to justify sample sizes. Failure to do so may result in reviewers attributing failure to replicate to weakness in replication design.
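As a rough illustration of an independent power analysis, the sketch below estimates the per-group sample size for a simple two-group comparison of means. It makes several assumptions that are not from any study discussed here: a two-sided test, equal group sizes, a standardized effect size (Cohen's d), and a normal approximation, n ≈ 2((z₁₋α/₂ + z_power) / d)², which slightly understates what an exact t-based calculation would return. The function name and both effect sizes are hypothetical. Dedicated power-analysis software should be used for a real design.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate per-group n for a two-sided, two-group mean comparison.

    Normal approximation: n = 2 * ((z_{1-alpha/2} + z_{power}) / d) ** 2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    return ceil(2 * ((z_alpha + z_power) / effect_size_d) ** 2)


# Hypothetical values: the effect a target study reported, and a smaller
# effect that the replication is deliberately powered to detect.
reported_d = 0.50
planning_d = 0.25

n_reported = n_per_group(reported_d)
n_planning = n_per_group(planning_d)
print(n_reported, n_planning)
```

Because the required n scales with 1/d², planning for an effect half the size of the one originally reported roughly quadruples the sample. This is why copying the target study's sample size can leave a replication underpowered.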

Preparing for contingencies

Preparing for unexpected results in pretesting of materials, attention checks, manipulation checks, response rate, etc., is important in all research, but it is even more salient in replication, because these factors can influence whether you report that original findings were successfully replicated. This concern emerged three times in the development of Obenauer and Kalsher's (2023) replication of Rosette et al. (2008).

We had successfully obtained the original experimental materials used in the target study, but when we pretested some of these materials, as done in the original study, we were unable to detect evidence that the manipulation was perceived as intended. We were fortunate in that our work had been submitted as a registered report, a process in which peer review also occurs prior to data collection (Briker & Gerpott, 2023), and the response to this outcome had been clearly defined and accepted by our review team. Similarly, we were unable to meet our target sample size in Study 2B, but we had also specified a minimum sample size in our registered report that allowed us to use a smaller sample. What we had not done, however, was specify what we would do if our minimum sample size was not met. For example, would we discard the data, supplement the data with data from another source, or something else? Fortunately, we were not faced with this decision post-data collection as we achieved all of our minimum sample sizes.

A decision that we were faced with, however, was what to do with participants who failed manipulation checks. We had not clearly articulated this in our analysis plan. In the target study, removing participants who failed manipulation checks did not influence findings. In our Study 1A, it directly influenced whether we would report that we successfully replicated the findings of our target study. Ultimately, we reported both tests and referred to the replication as showing minimal support, but this became a point of discussion during peer review and our decision would have been streamlined had we better planned for such a scenario.

Preregistration

One tool that can help researchers prepare for such contingencies is preregistration of research. Preregistration is a process in which researchers log their hypotheses and plans for all elements of research design and analyses in a repository where this information will later be discoverable (Logg & Dorison, 2021). Using a preregistration platform such as OSF forces researchers to address specific methodological choices, such as those described above, a priori. As Logg and Dorison (2021) note when describing “the Odysseus Fallacy,” some researchers worry that preregistration may limit a researcher's freedom, which could complicate responding to reviewers. This is not the case. For example, when Obenauer and Rezaei's (2023) reviewers suggested that we test additional relationships, we simply updated our preregistration with a note explaining that updates occurred in response to peer review.

Framing

Motivate replication in general—contribute to the literature rather than attack it

Early in my role as a replication scholar, one of the most common comments that I would receive from reviewers was “why is replication necessary?” It quickly became apparent that in order to successfully publish a type of research that is uncommon and often discounted in our field, I would need to educate reviewers as to why replication was necessary. I began including one to two paragraphs in my introduction that made this case to reviewers. While one motivation for replication can be to address flaws in the scientific process (Köhler & Cortina, 2021), in this motivation I focus on the general need for replication to contribute to robust science. In doing so, I do not force reviewers to take a position in a contentious debate, but simply invite them to be partners in building better science. For example, in Obenauer and Rezaei (2023, p. 413), we said:

Notice that there is no mention of sampling error or questionable research practices. The need for replication has been clearly positioned as not attacking prior research. Beyond not forcing reviewers to take a stand on a potentially divisive topic, it is a disarming approach. If I were to argue that replication is necessary because of potential sampling errors in prior research, I would be making a falsifiable statement. A reviewer could argue that there is no evidence of sampling error in my target study, or that my own research may not be free of sampling error, and therefore that my motivation is unwarranted. This would be a fatal flaw. The disarming approach described above, however, practically eliminates this argument.

Even when responding to a call for replications, I still include this type of motivating statement. When I have not, reviewers have questioned the need for replication. Some journals (e.g., JOMSR) may not require such a statement. However, I would still include it in my first draft because while editors at these journals may clearly understand the need for replication, reviewers may have limited experience and understanding of replication (Selmer et al., 2023). Therefore, such a motivation may help you communicate the importance of your work to reviewers, even if an editor asks you to remove the statement in subsequent rounds of review. In fact, an editor communicating that such a motivation is unnecessary may be a powerful message to reviewers about the importance of replication.

Motivating the target—build rather than tear down

After motivating replication in general, it is important to tell the reader why your target study should be replicated. Fortunately, if you have followed the guidance provided for choosing a replication target, this is simply a matter of articulating your rationale. Again, I recommend focusing on establishing the importance of the target study along with how the current replication will contribute to the literature, rather than focusing on perceived problems with the target. For example, in Obenauer (2023, p. 116) I said:

You can see that even though I found that introducing alternative variables to the original model influenced findings, this study was not positioned as challenging previous research. Instead, it was positioned as offering additional insights beyond those provided by a highly influential study. Earlier drafts of this manuscript, written before I submitted to JOMSR, were framed more like a criticism, and reviewers were less receptive, offering comments such as, “if you are focusing your critique on a single paper, you need to explain ‘why’ in more detail,” and “labeling this work as a critique makes it feel like it belongs in a follow-up commentary section.” Reframing it as an extension that contributes to the literature has not prevented readers from recognizing the opportunities for methodological advancement that motivated the research. This assertion is supported by Cortina et al. (2023, p. 182) referring to the “superior analyses” used in my paper. What the motivation shown above did, however, was help direct attention toward the contribution rather than the methodological debate.

Of course, there will be times where critiquing the methodology of a study will be necessary for motivating replication. For instance, in Kalsher et al. (2019), we discussed how previous research failing to identify a significant relationship between product warning format and user compliance may have been confounded by small sample sizes. In general, however, I have limited the use of criticizing prior work in the motivation of replications. If one can argue that a replication has merit in the absence of methodological flaws in the target study, its contribution will likely be perceived as stronger.

Defining replication

In my first replication submission, I received a reviewer comment that read, “But what type of replication do you do?” That comment clearly communicated that just as we would define a statistical analysis, researchers should define their replication methodology. Defining this methodology, however, is not as simple as it sounds. As noted by Köhler and Cortina (2021), the replication literature incorporates a variety of methodological terms that are often difficult to distinguish. Köhler and Cortina (2021) offered detailed recommendations for language to be utilized moving forward, but their language has not been universally adopted. Several editors have recognized this problem, and many have sought to establish standards for their own journals, but these efforts have not resulted in unification across the field. Table 3 demonstrates how the replication and reproduction language specified in standards for four different journals fails to align such that for some types of replication, each journal listed uses its own distinct term.

Table 3. Examples of replication language used in different journals

Language listed for

For authors who plan on publishing multiple replication manuscripts, I recommend adopting established language that you will use in your own work. This will allow you to be consistent across your research portfolio. However, you must also recognize the standard of the journal you are targeting and adhere to that standard. This recommendation may sound paradoxical, so I will illustrate with an example. Imagine that you have chosen to use the language articulated by Köhler and Cortina (2021), the same language adopted by JOMSR, but you are submitting what they would classify as a “literal reproducibility study” to Strategic Management Journal. In defining the type of study you perform, you might say something like, “The current research constitutes a narrow replication (Bettis et al., 2016), also frequently referred to as a literal reproducibility study (Köhler & Cortina, 2021).” This allows the author to maintain consistency in their own work while complying with the standards of different journals, thus increasing their likelihood of publication.

Describing methods, reporting results, and interpreting findings

The description of research methodology is fundamental in replication research. Although referring to methodological sources rather than describing every methodological component in detail is often considered accepted practice (e.g., Golden et al., 2006; Gündemir et al., 2019; Kulich et al., 2015), reviewers of replication research can be resistant to such an approach. For example, one replication reviewer said, “The authors often referred to [target study] for complete materials—I kept [target study] handy while reading this manuscript but this is probably too much to ask of a reader who is not also a reviewer.” It is important to recognize that because methodological details are critical to replication design, reviewers may be more interested in these details than they typically are. Also, neither reviewers nor readers are likely to have as much familiarity with the replication target as the replication researcher, so details that may seem obvious to the researcher may not be obvious to the reader or reviewer. Including all of these details can be challenging due to space constraints, so online appendices or an OSF project can be helpful for sharing methodological details.

When results are reported and compared to those of the target study before reviewers fully understand the similarities and differences of samples, it can impede the review process as reviewers instinctively, and justifiably, begin to wonder how the samples across studies compare. To address this, I recommend including a table of sample demographics that directly compares the sample in the current research to that of the target study. This may be relegated to an online appendix later in the review process, but early on, it is helpful to present it within the manuscript to ensure that the review team understands how samples across studies compare prior to reading results. In some cases, a simple table of demographics may be insufficient. For example, in Obenauer and Rezaei (2023), we sampled expatriates from across the world, similar to Bader et al. (2018). The home and host countries of our participants, however, differed from those of participants in the target study. To clearly communicate this, we built a table of host countries that compared research samples (see Figure 2 for an example).

Figure 2. Example comparison of expatriate host countries in different samples

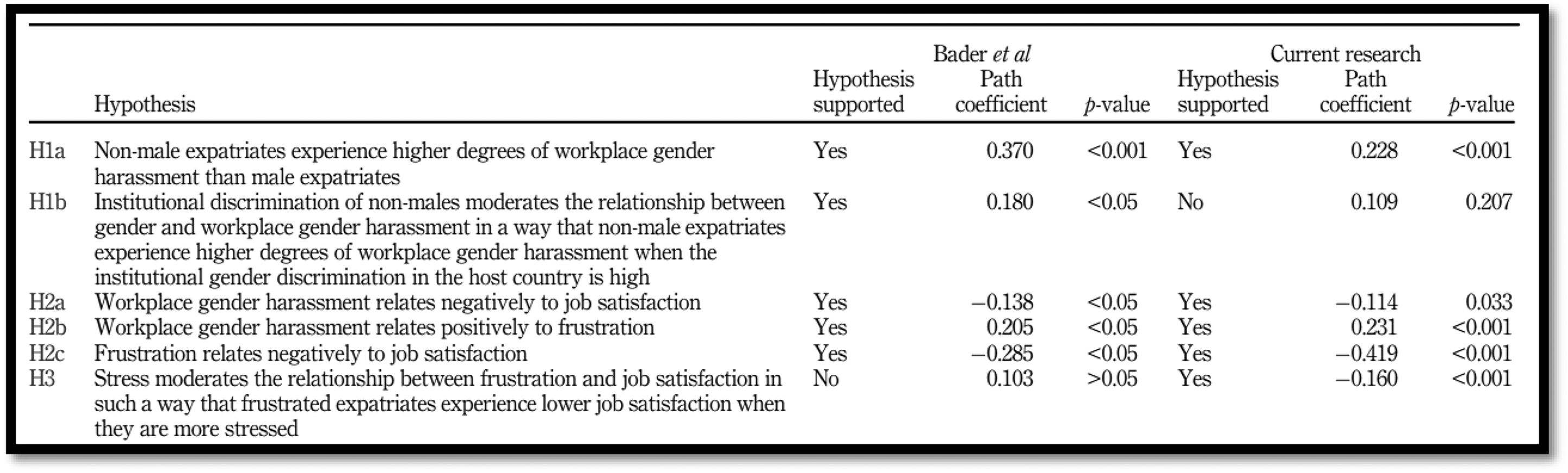

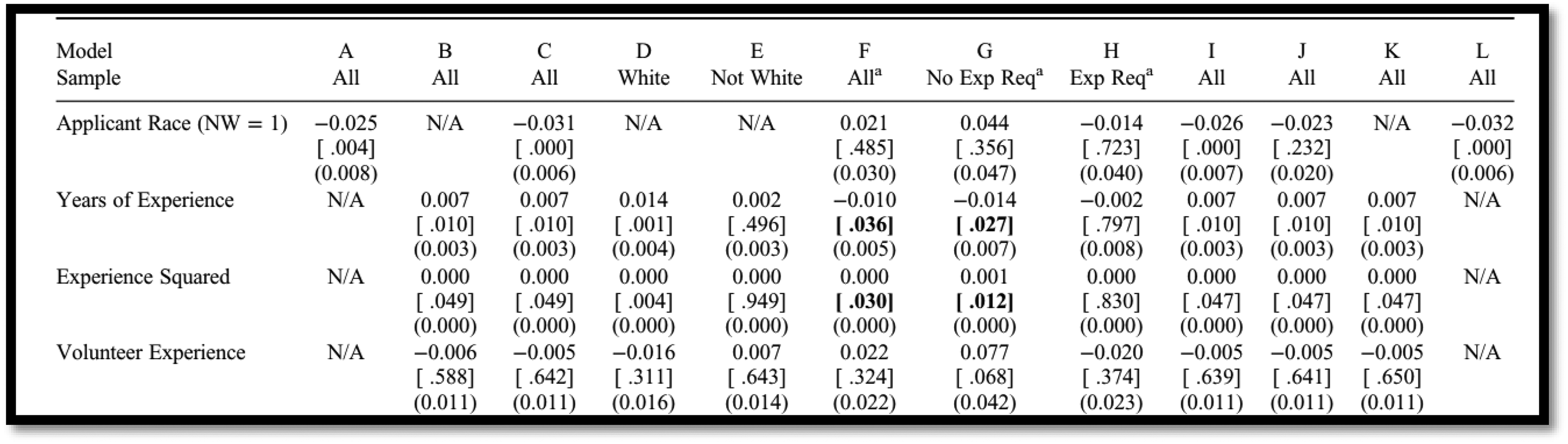

After describing methodology and the sample, the manuscript will move on to reporting results. It is helpful here to report results within the context of the replication target by including both the findings from the current research and the replication target. When space permits, a table that lists hypotheses, whether each hypothesis was supported, and the corresponding statistical output (e.g., path coefficients, effect sizes,

Example comparison of results across two studies

Example comparison of results across multiple analyses
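A side-by-side results table of this kind can also be generated from a simple data structure. The sketch below is one possible approach in Python, assuming that each hypothesis has a single estimate and a supported/not supported conclusion in each study. The class name `HypothesisResult`, the function `results_table`, and all estimates shown are hypothetical placeholders, not findings from any published study.

```python
from dataclasses import dataclass


@dataclass
class HypothesisResult:
    label: str
    target_estimate: float
    target_supported: bool
    replication_estimate: float
    replication_supported: bool

    @property
    def consistent(self):
        # True when both studies reach the same conclusion on the hypothesis.
        return self.target_supported == self.replication_supported


def _cell(estimate, supported):
    """Format one estimate together with its supported/not supported label."""
    status = "supported" if supported else "not supported"
    return f"{estimate:.2f} ({status})"


def results_table(results):
    """Return a plain-text table comparing target and replication results."""
    lines = [f"{'Hypothesis':<12}{'Target':>24}{'Replication':>24}{'Consistent':>12}"]
    for r in results:
        target = _cell(r.target_estimate, r.target_supported)
        replication = _cell(r.replication_estimate, r.replication_supported)
        flag = "yes" if r.consistent else "no"
        lines.append(f"{r.label:<12}{target:>24}{replication:>24}{flag:>12}")
    return "\n".join(lines)


# Hypothetical placeholder values, NOT results from any study.
results = [
    HypothesisResult("H1", 0.42, True, 0.38, True),
    HypothesisResult("H2", 0.25, True, 0.04, False),
]

print(results_table(results))
n_consistent = sum(r.consistent for r in results)
print(f"{n_consistent} of {len(results)} hypotheses reached the same conclusion")
```

Reporting the count of consistent conclusions, rather than a single "replicated" or "failed to replicate" label, keeps the summary precise when only some findings replicate.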

Finally, given the strong desire for management research to make meaningful theoretical contributions, it can be tempting for replication researchers to overstate their findings. For example, a researcher may be inclined to say something such as “we failed to replicate Study X…” when there were some ancillary findings that did replicate. Researchers should be careful to be precise with language such that findings are not overstated. Additionally, replication researchers should follow best practices for reporting boundary conditions and limitations of their findings. Doing so will inspire confidence in their work and present it as less adversarial in nature.

Considering tradeoffs when responding to reviewers

Researchers must consider tradeoffs when responding to reviewers whether or not the manuscript under review is a replication, so I will not fully address that process here. I will, however, briefly address the types of challenges that may emerge in the review process for replication research. The most frequent challenge that I have observed stems from the perception that replication makes a limited contribution, which leads review teams to look for greater opportunities for theoretical contribution.

For example, in Obenauer and Kalsher (2023), we initially set out to replicate only one study because that was all that was needed to meet our goal of examining the robustness of a relationship to the passing of time and modifications to research methodology. Our review team, however, felt that the contribution of such a study was limited and that, in the absence of an additional replication, our research might not warrant publication. Similarly, in Obenauer and Rezaei (2023), we sought to understand whether a relationship still existed following two exogenous shocks to the global workplace. In this case, our review team felt the contribution would be stronger if we could identify variables to measure these shocks and incorporate them into our model.

In both cases, the recommendations to strengthen the contribution were not aligned with what we perceived as the purpose of our research, but they were not “bad” recommendations, per se. We recognized that the potential outlets for replication research may be limited (ARIM, 2023) and that the perceived value of replication research may not be consistent (Selmer et al., 2023). To meet our primary objective of sharing knowledge gleaned from replication research, we therefore accepted that we might have to incorporate elements into our replication research that we did not think were necessary. Although making concessions in the review process may seem more common, or at least more salient, when attempting to publish replication research, it is fairly consistent with what is typically experienced in the peer review process. As long as suggestions in the review process are ethical and reasonable, I encourage researchers to meet the requests of reviewers to help move their research forward. Failure to accept such tradeoffs may limit their ability to publish replication research. Reviewers, however, should be cautious when using previously published replication manuscripts as templates in the review process, as these publications may include “concessions” that emerged due to limited understanding of the value of replication research.

Concluding remarks

This editorial was written based on a presentation that I first delivered at the Southern Management Association's annual meeting in 2023. At the time, I was told that the knowledge shared in my presentation was not readily available. Carsten et al. (2023) called replication “the cornerstone of the scientific method,” while also acknowledging that replication research is not widely published. Cortina et al. (2023) observed that few researchers embark on reproducibility studies, while Köhler and Cortina (2023) suggested that the absence of replication research in our field has essentially created a blue ocean of opportunities, so to speak. Why then are more researchers not engaging in replication research? It is likely a combination of Selmer et al.'s (2023) observation that scholars are uncomfortable with replication, Carsten et al.'s (2023) observation that replication requires training, and the reality that replication training resources are limited. I hope that this editorial helps to address the need for these resources and, in doing so, helps to facilitate future replication research.

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.