Abstract

In this editorial, we provide guidance regarding the design and execution of replication, reproducibility, and generalizability studies and explain the unique purpose and empirical contribution of each research design to the overall knowledge generation and consolidation cycle. We evaluate these research designs within the confines of JOMSR's mission to avoid novel theorizing, but instead to engage only in testing of existing theory or of combinations of existing theory. We identify two important dimensions on which these research designs can vary, independence and constructiveness, and highlight the particular utility of independent studies that are high in constructiveness. We offer suggestions for the identification of suitable targets for reproducibility and replication as well as concrete examples demonstrating high-quality theory testing.

We thank the editorial team of

Per the Editors’ opening editorials (Kraimer, 2023; Kraimer, Martin, Schulze, & Seibert, 2023), the theories whose tests would appear in the pages of

In our editorial, we first define reproducibility, replication, and generalizability and distinguish them from each other. We explain the unique purpose and empirical contribution of each research design to the overall knowledge generation and consolidation cycle. We then identify two important dimensions on which these research designs can vary, independence and constructiveness, and highlight the particular utility of independent studies that are high in constructiveness. We then discuss important research design considerations for a variety of combinations of the above-mentioned attributes. We pay particular attention to the identification of suitable targets for reproducibility and replication. We also provide concrete examples that demonstrate high-quality theory testing leading to important conclusions regarding the tested theories.

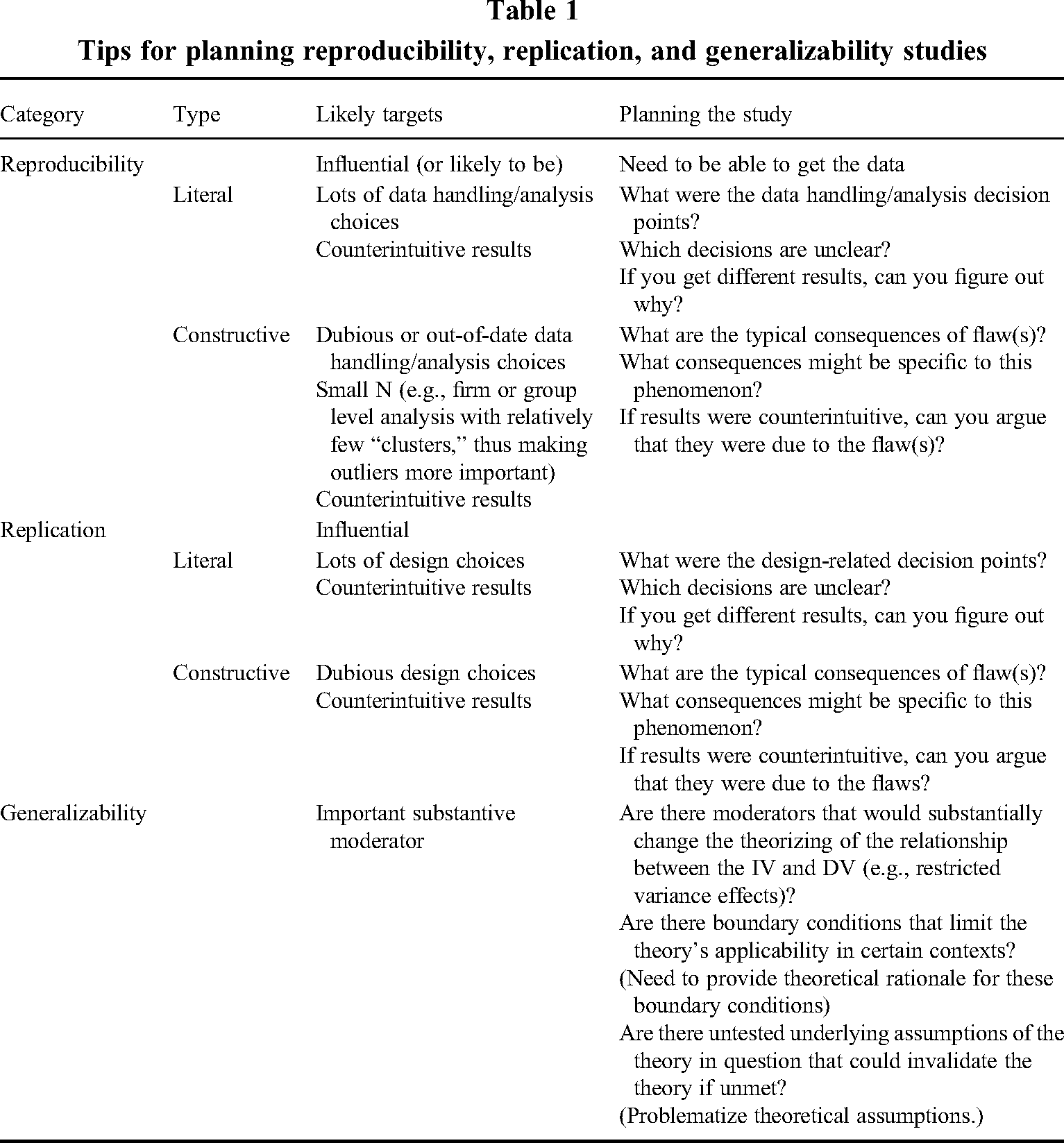

Tips for planning reproducibility, replication, and generalizability studies

Defining and distinguishing replication, reproducibility, and generalizability

One of the challenges that our field faces is that it lacks consistently applied definitions of and distinctions between reproducibility, replication, and generalizability. As a consequence, researchers often disagree about whether these sorts of studies are necessary, play an important role in the advancement of our field, and are worthy of journal space. As such, our first priority for this editorial is to provide clear definitions of the three types of study. In so doing, we draw heavily on our prior work in this space (e.g., Köhler & Cortina, 2021; Cortina, Köhler, & Craig, 2022), which also constitutes the foundations on which the

A

The purpose of a reproducibility study is to determine whether a reanalysis leads to the same conclusions as did the original analysis. One possible source of variation between the original findings and the reanalysis is a lack of transparency in the original study vis-à-vis the data handling and analysis steps that were followed. This is, presumably, less of a problem in dependent reproducibility studies, but constitutes a significant source of error in independent reproducibility studies (e.g., Aguinis, Ramani, & Alabduljader, 2018). Other possible sources of variation are researcher competence, with differences in competence (in either direction) between the original research team and the reanalysis team leading to differences in analytic choices, and differences in motives. Finally, variation can stem from differences in software packages. For example, software packages might use bootstrapping algorithms with different starting points, have different default settings for treating missing data, or treat correlations between residuals differently. This can lead to different results (e.g., Albright & Park, 2009; Asparouhov & Muthen, 2006; Cortina et al., 2022). A reproducibility study can expose any of these sources of variation, thus pointing to the most defensible conclusions vis-à-vis the hypotheses. As we explain later, the different types of reproducibility study will expose different sources of variation.
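To make the point about software defaults concrete, the following minimal sketch uses a small invented dataset (not drawn from any study cited here) to show how two common treatments of missing data, listwise and pairwise deletion, can yield different correlations from the same raw data. The function and variable names are hypothetical, and the sketch does not claim to reproduce the default of any particular package.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation for two equal-length sequences of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

# Invented toy data: each row is (x, y, z); None marks a missing value on z.
rows = [(1, 2, 5), (2, 1, None), (3, 4, 2), (4, 3, None), (5, 6, 1), (6, 8, 3)]

# Listwise deletion: drop a row if ANY variable in the dataset is missing.
listwise = [(x, y) for x, y, z in rows if None not in (x, y, z)]
# Pairwise deletion: for r(x, y), drop a row only if x or y is missing.
pairwise = [(x, y) for x, y, z in rows if x is not None and y is not None]

r_listwise = pearson_r(*zip(*listwise))
r_pairwise = pearson_r(*zip(*pairwise))
print(f"listwise: n={len(listwise)}, r={r_listwise:.3f}")
print(f"pairwise: n={len(pairwise)}, r={r_pairwise:.3f}")
```

In this toy case the two treatments analyze different numbers of rows and return different correlations between x and y, even though the raw data are identical. A reanalysis that silently relies on a different default could therefore diverge from the original for reasons unrelated to the hypotheses.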

Unlike a reproducibility study, a

As with reproducibility, variation between the original findings and the replication might stem from differences in experimenter competence, experimenter motives, or reporting accuracy. Unlike reproducibility, sampling error is also a possible culprit in replications. The specific differences or inaccuracies that are targeted by replication, however, differ from those targeted by reproducibility. If one suspects that findings would be different with, for example, a superior control or experimental condition for an independent variable or a more valid measure of a dependent variable or a more representative sample, then replication is needed. Eden and Shani (1982), for example, could not have achieved what they did with reanalysis alone. We strongly encourage the reader to spend some time with this paper because it is a model of its type. Briefly, prior work on the Pygmalion effect had suffered from various major flaws. Eden and Shani (1982) improved upon this prior work by, among other things,

Finally, there is generalizability. Köhler and Cortina (2021) make the point that the meaning of generalizability is often muddled. In order to keep generalizability distinct, we defined it as “lack of variation in findings across studies that differ on one or more

As a point of distinction, it should be noted that reproducibility and replication studies usually target moderators as well, just not substantive ones. If the magnitude of an effect varies across levels of a researcher's data analysis knowledge, then a reproducibility study can be conducted to reveal this fact. If the magnitude of an effect varies across levels of a researcher's desire to manipulate an independent variable in a way that will result in support for their hypotheses, then a replication study (an independent one anyway) would point us toward the light. One final point made by Köhler and Cortina (2021), however, is worth noting. The phrase “replicating and extending” is often used in reference to additions of substantive moderators, that is, generalizability rather than replication. We return to this topic shortly.

Methodological moderators: different forms of reproducibility and replication and their value for scientific advancement

Having distinguished reproducibility and replication in the previous section, we now turn our attention to identifying the different forms that such studies can take. These different forms vary on two important dimensions,

No study is perfect. Consequently, design flaws are ubiquitous. Flaws might stem from contextual factors outside of our control (e.g., when conducting research on phenomena that cannot be manipulated or when working with secondary data that only exists for certain contexts or countries), data collection limitations (e.g., access to sensitive populations; secondary data that may or may not contain measures for variables of interest), measurement limitations (e.g., no superior measure exists in a field), or data analysis limitations (e.g., the optimal statistical analysis tool simply does not exist yet). Because these flaws are seldom inherent in the research questions being asked, improvements are usually possible, especially by independent teams of researchers who might have better access to data, have more advanced design capabilities, or are able to develop new or apply better measures and analysis tools. The goal of comprehensive constructive replications is to address all major limitations of previous studies, while the goal of substantial constructive replications is to address at least one major limitation. These constructive replications rarely invalidate the original study. Rather, the constructive replication study provides a stronger test of the phenomenon of interest and allows researchers to determine the effect of research design characteristics on observed findings (see, e.g., Minefee, McDonnell, & Werner, 2021). This in turn provides deeper knowledge about the phenomenon and its methodological moderators.

There are a few more terminology-related issues worth mentioning. First, a literal study is, by definition, not constructive. It can still have a great deal of value, but that value does not come from correcting research design flaws or analysis execution errors. Second, Köhler and Cortina (2021) found examples of what they called “regressive” studies, that is, studies with all of the flaws of the original plus at least one additional flaw. These are of little value and should not be submitted to

Issues addressed by literal versus constructive studies

A constructive study, by definition, provides an evaluation of the importance of a design flaw or limitation in the original study. A constructive

Examples of constructive and literal reproducibility studies

Constructive reproducibility attempts could involve using a different treatment of outliers so that a more appropriate version of a dataset is analyzed (e.g., exclusion of extreme or unlikely cases; bounding secondary data analyses to specific time frames that contain a more comparable set of data points), using a superior analytical technique that better accounts for characteristics of the data (e.g., non-normal dependent variables, nestedness, endogeneity), or incorporating control variables that allow one to rule out alternative explanations. Whether these would be considered major or minor depends on the likelihood that repair of the flaw would change the conclusions of the paper. Dropping an outlier, for example, usually would not have enough of an effect on analyses to change many of the words in a Results section. In the case of Hollenbeck, DeRue, and Mannor (2006), however, the authors argued that one case in Peterson, Smith, Martorana, and Owens (2003) was unrepresentative and was so influential that its inclusion had a dramatic effect on the conclusions of Peterson et al. (2003). Its omission by Hollenbeck et al. (2006) did in fact change results dramatically, and this would lead us to characterize the inclusion of that case as a major flaw. In any case, the more flaws that are addressed in the constructive reproducibility study, and the more serious the flaws, the greater the contribution.

The target of

Of more interest to

Examples of constructive and literal replication studies

When evaluating constructive replications, an incremental improvement might be the use of a slightly different time lag in a repeated measures design that better represents the stability of the construct. As another example, one might use the full rather than the shortened version of a scale or use a sample with slightly more power. Or one might employ a superior data analysis method in the newly collected data. For example, Ghosh, Ranganathan, and Rosenkopf (2016) replicated a prior study of endogenous characteristics of alliance network structure by Ahuja, Polidoro, and Mitchell (2009) via a three-stage process. In their first stage, they replicated the data analyses of Ahuja et al. in a different time period, keeping everything else constant, with the goal of replicating as literally as possible (reproducibility was not possible because the data from the original study were supposedly proprietary). In stage two, the authors analyzed the data with a superior data analysis method (Exponential Random Graph Models; ERGMs), which had been developed after the original study. Stage two constitutes a substantial constructive replication attempt because ERGMs allowed the authors to model network formation processes and dependencies that are known to exist but were ignored in the analytic approach used in previous alliance research. In stage three, Ghosh et al. conducted an extension to a different industry for a generalizability assessment.

Note that simply recoding variables included in the same dataset, as is often done in macro level studies, is not considered a replication, but rather a reproducibility attempt as it is based on the same data collection. Similarly, using different performance indicators included in the same dataset would not be considered a replication attempt. Instead, it might be a reproducibility attempt if the alternative performance indicator captures the same underlying variable. If the alternative performance indicator were to capture a slightly different concept, for example, effectiveness versus efficiency, individual versus team performance, or patent filings versus R&D expenditures, then this would be considered neither a replication nor a reproducibility attempt but simply the testing of a different hypothesis. In any case, authors of an incremental constructive replication would need to argue that the relatively minor flaws that they targeted were, nevertheless, consequential, and that, taken together, they could be expected to influence conclusions.

A substantially constructive study needs to go beyond that. For example, using valid measures of core variables when the original study used deeply flawed measures would constitute a substantial improvement as would using a more rigorous experimental design that controls for more or more important sources of variance. A substantial improvement could also come from using a sample that is more representative of the population of interest when the original sample differs from the population of interest in a way that is likely to affect results. A sample of employees in a real or single organizational context—rather than a student or MTurk sample—would be more appropriate when studying organizational phenomena that might vary substantially with job experience or that might be highly dependent on organizational context factors. As another example, many phenomena are particularly relevant for small- to medium-sized enterprises (SMEs) (e.g., informal HR practices, collaborative cultures) but are, for reasons of access if nothing else, studied primarily with large firms. A study that managed to gain access to data from SMEs to study such a phenomenon would represent a substantial constructive replication. And as with reproducibility, the more (and more serious) the design flaws, the greater the opportunity for contribution. Hence,

A literal replication study, in principle, addresses sampling error. If the original authors were just lucky, then a literal study will probably reveal it. However, a literal study can also shed light on details, and possibly flaws, that were not obvious in the original report due, for example, to lack of transparency about how the study was conducted (Aguinis et al., 2018). Of course, lack of transparency makes literal replications more challenging. Literal replications can be difficult for other reasons as well, including lack of access to an equivalent participant pool, or lack of access to proprietary instruments or experimental apparatus. When a literal replication is possible, however, it can provide useful information about variation in findings due to sources ranging from sampling error to researcher competence and motives. If (and it can be a big if) a more or less exact duplication of the original study is possible, then the source of variation that is the target of the study is sampling error. As with literal reproducibility studies,

Issues addressed by independent versus dependent studies

Generally speaking, an independent reproducibility or replication study allows one to assess the role of researcher-related factors. If flaws exist in the original work, then they must be due to a lack of knowledge and skills on the part of the original author team, a lack of access to superior design/analysis elements (such as access to a larger sample, benevolent organizational research partners, or access to proprietary data or software), a lack of funding to support superior design/analysis elements, or a lack of desire to subject their hypotheses to appropriate scrutiny. Only an author team with different competencies, resources, and/or motives is likely to correct these flaws.

The application of the above to constructive studies is obvious, but these principles also apply to literal studies. As mentioned above, some of the flaws in Reinhart and Rogoff (2010) were accidental, some intentional, but

This last example also serves to make a final point about independent literal studies. There would not have been much point in Herndon et al. (2014) showing that, when they reanalyzed the Reinhart data, omitting theory-disconfirming cases and overweighting theory-confirming cases as did the original authors, they produced the same results. That might have been a good starting point, but the real hook for their paper had to be to show that the conclusions regarding austerity measures were very different when one

Recommendations for designing reproducibility and replication studies for JOMSR

In order to make a sufficient contribution, each type of study must possess certain virtues, and in sufficient quantities. This is true of any paper of course, and because

Literal studies

A literal study must follow the procedures of the original as exactly as possible. Obviously, this means incorporating every detail in the Methods section of the original. There may, however, be other details that were omitted from the original. Perhaps the original authors felt that they were unimportant. Or perhaps a reviewer (as they sometimes do, unfortunately) suggested that certain methodological details be excised in the interest of journal space. We, therefore, recommend that the literal replicator/reproducer contact the first author of the original study in order to discover if there were any additional details that would not be obvious to a reader of the published work.

Along these same lines, the literal replicator/reproducer may begin their work only to realize at some point that the original authors had had options, but that it was not clear which they had chosen. For example, suppose that the original authors had stated that they had used the 8-item measure of Y by Abbott and Costello (1937), but that their 1-factor CFA had 9 degrees of freedom, which is consistent with the shorter 6-item measure of Y developed by Laurel and Hardy (1941). Or perhaps there were two different versions of the experimental task and it was not clear which had been used by the original authors. It would be important for the replicator/reproducer to pause their work in order to find out.
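The degrees-of-freedom check in the example above can be verified with a few lines of arithmetic. The sketch below assumes a standard one-factor CFA with uncorrelated residuals and the usual identification constraint (factor variance fixed, or one loading fixed), so that the model estimates one loading and one residual variance per item; the function name is hypothetical.

```python
def one_factor_cfa_df(n_items: int) -> int:
    """Degrees of freedom for a one-factor CFA with uncorrelated residuals.

    Assumes p loadings and p residual variances are estimated in total
    (factor variance fixed to 1, or equivalently one loading fixed and
    the factor variance freed).
    """
    unique_moments = n_items * (n_items + 1) // 2  # variances and covariances
    free_parameters = 2 * n_items                  # loadings + residual variances
    return unique_moments - free_parameters

for p in (6, 8):
    print(f"{p} items -> df = {one_factor_cfa_df(p)}")
# 6 items -> df = 9
# 8 items -> df = 20
```

Under these assumptions, 9 degrees of freedom matches a 6-item measure, whereas an 8-item measure would yield 20. A reported df that does not match the stated measure is exactly the kind of discrepancy worth raising with the original authors.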

As we mentioned earlier, if the original work had a major flaw, then a stand-alone literal replication probably would not make enough of a contribution to be considered for publication in

If one's purpose really is to perform a literal study only, then one's target is presumably sampling error. And because sampling error would not be a factor in a reproducibility study, we are really just talking about replication here. To do justice to issues regarding sampling error, then, the literal replicator would have to do more than just repeat the small-sample original study. Perhaps multiple literal replications could be conducted, or perhaps a single large-sample literal replication could be conducted. One way or another, the reader would have to have confidence that the replicator's conclusions were at least as legitimate as, but probably more legitimate than the conclusions in the original study.
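As a rough illustration of why a single large-sample literal replication can speak to sampling error more convincingly than a repeat of a small-sample original, the sketch below computes approximate 95% confidence intervals for a correlation using the Fisher z transformation. The observed correlation of .30 and the sample sizes are illustrative assumptions, not values from any study discussed here, and the function name is hypothetical.

```python
import math

def fisher_ci(r, n, z_crit=1.96):
    """Approximate 95% CI for a correlation via the Fisher z transformation."""
    z = math.atanh(r)               # transform r to the z metric
    se = 1.0 / math.sqrt(n - 3)     # standard error of z
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)

observed_r = 0.30  # illustrative value only
for n in (50, 200, 800):
    lo, hi = fisher_ci(observed_r, n)
    print(f"n={n:4d}: 95% CI [{lo:.2f}, {hi:.2f}], width {hi - lo:.2f}")
```

The interval narrows markedly as the sample grows, which is one way to give the reader confidence that the replicator's conclusions are at least as legitimate as those of the original.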

There is one other element of literal studies that is worth mentioning. Köhler and Cortina (2021) and others (Lykken, 1968; Stroebe & Strack, 2014) have pointed out that, strictly speaking, literal (aka exact, direct) replication/reproducibility studies are not possible. Time will have passed, participants will have come from a different part of the country, a different secondary dataset will not have used the exact same measures for core variables, etc. In order to allow for literal studies, then, the “literalness” requirement must be relaxed slightly. In order to be considered for publication, then, a literal study must differ

The replication does differ from the original, but it is difficult to imagine constructs whose relationship would be expected to differ between Class of 2015 undergraduates at one Melbourne-based “Australian Group of 8” university and Class of 2016 undergraduates at a different Melbourne-based “Australian Group of 8” university. There is a difference, but it is of no obvious consequence. As a result, the work of Experimenter B is sufficiently literal.

But that was an easy example. What if Experimenter B, in replicating Experimenter A's study, had used undergraduates from Virginia Commonwealth University in the USA instead of Monash students? What if Experimenter B had used Class of 2022 University of Melbourne students rather than Class of 2015 students? With regard to geographic location, depending on the nature of X and Y, there might be cultural differences that would influence their relationship. Experimenter B would do well to point out that the USA and Australia are very similar on Hofstede's dimensions (Hofstede, Hofstede, & Minkov, 2010), so, no worries mate. Or the Class of 2022 might be expected to differ from the Class of 2015 because the Class of 2022 had spent half of their college years on Zoom. Experimenter B should consult the literature on the consequences of this fact for the Class of 2022 in the hopes of being able to argue that, although there would be many relationships for which these consequences would be relevant, the relationship between X and Y is not among them. Just to be clear, if Experimenter B decides that these differences matter and subsequently adds cultural differences or pre/post Covid educational experience as moderators to the tested hypotheses, they are conducting a generalizability study and, therefore, should follow the guidelines provided below.

In short, a literal study must be conducted with great care in order to ensure that no confounds have been introduced. Depending on the nature of the difference between the original and the follow-up, the author may need to argue why there is little reason to fear that the difference is consequential vis-à-vis the phenomenon of interest.

Candidates for Literal Studies

The most important characteristic of a target for a literal study is that it is influential (or is likely to become so). There is not much point to a second attempt when the first attempt was ignored.

It also helps if the findings of the target were, in some way, counterintuitive. In illustrating the importance of replication, for example, Lykken (1968) described a study published by Sapolsky (1964) in a prominent journal. Sapolsky suggested that psychiatric patients with eating disorders harbor an unconscious “cloacal theory of birth,” that is, oral impregnation. Thus, patients who see frogs in the Rorschach are more likely to suffer from eating disorders. And Sapolsky (1964) did indeed find more eating disorders among frog responders. Lykken (1968), for some reason, was not buying it. As he put it, “I regarded the prior probability of Sapolsky's theory…to be nugatory and its likelihood unenhanced by the experimental findings” (p. 151). Put another way, the findings were sufficiently counterintuitive to warrant a revisit.

But counterintuitiveness can take many forms. For example, the probability of a study with a typical sample size finding support for all three two-way interactions and the three-way interaction among three predictors is in the low single digits. Such a finding is, therefore, counterintuitive. A literal study, or better yet, a series of literal studies would be likely to shed light on the reasons for the original findings.
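A back-of-the-envelope calculation shows how a figure of this size can arise. The sketch below assumes, purely for illustration, that the four tests (three two-way interactions and one three-way interaction) are independent and have equal statistical power; the power values are hypothetical and are not taken from the source, and real tests on the same sample would not be strictly independent.

```python
def joint_support_probability(power_per_test: float, n_tests: int = 4) -> float:
    """Probability that all tests are significant, assuming independent
    tests with equal power (a simplifying assumption)."""
    if not 0.0 <= power_per_test <= 1.0:
        raise ValueError("power_per_test must lie between 0 and 1")
    return power_per_test ** n_tests

# Hypothetical per-test power values for interaction effects.
for power in (0.2, 0.3, 0.4, 0.5):
    prob = joint_support_probability(power)
    print(f"power {power:.1f} -> P(all four supported) = {prob:.4f}")
```

With per-test power of .40, for example, the joint probability under these assumptions is about .026, that is, in the low single digits when expressed as a percentage.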

We should also repeat the fact that a literal study is often a precursor to a constructive one. Herndon et al. (2014) set out to conduct a literal reproducibility study, and based on what they learned from that attempt, they were able to conduct a comprehensive constructive reproducibility study that debunked the target study. Schultze et al. (2012) began with a literal replication just to show that they were in fact doing the original study justice. Their series of constructive replications could then be used to show that a design flaw in the original had led to incorrect conclusions. Similarly, Ghosh et al. (2016), in their Stage 1, tried to get as close as possible to a literal replication of the Ahuja et al. study, varying only the time period of the collected data.

Constructive studies

A constructive study must retain all of the major virtues of the original while eliminating at least one major flaw. To use the terms from Köhler and Cortina (2021), a constructive study must be either

In most cases, though, what constitutes a major flaw will be a matter for debate. Authors of constructive studies must, therefore, argue convincingly that their improvement really is a major one. This usually involves a methodological argument based on current best practice recommendations that are relevant for the chosen research question, the constructs of interest, etc. One way to avoid this debate is to crowdsource the effort. For example, Landy et al. (2020) had 15 different research teams design and conduct studies to test hypotheses related to moral judgments, negotiation, and implicit cognition. If the teams in such an effort were instructed to design their studies in a manner most appropriate to the research question, then those studies would satisfy Lykken's requirements for constructiveness. Several such independent efforts would shed light not only on the research question but also on the importance of design characteristics for its answer.

Authors of constructive studies must also take care not to introduce any flaws of their own. Consider Chen, Chen, and Sheldon (2016). In study 1, these authors somehow managed to incorporate an objective measure of unethical pro-organizational behavior (UPB), thus avoiding the problems of intentional distortion that infect self-report measures of such behaviors. Their study 2 improved upon study 1 in some ways, but it also eliminated its greatest virtue by replacing the objective measure of actual UPB with a self-report measure of intentions to engage in UPB. Köhler and Cortina (2021) described this as a “confounded” replication because there would be no way of knowing whether differences in results between the two studies were due to the elimination of flaws or the elimination of virtues. Authors intending to submit to

Across all of these designs, it is important that authors writing for

When discussing limitations of the reproducibility or replication study, authors of substantial constructive studies could discuss how future research could address any remaining methodological flaws of the original study that they were unable to address. Authors of literal studies could highlight the utility of future constructive replication attempts. However, it needs to be clear why the authors chose not to incorporate their suggestions in their own work.

Candidates for constructive studies

As is the case with a literal study, the contribution of a constructive study is enhanced when the target is influential and its findings counterintuitive, two attributes that, unfortunately, often go hand in hand. In addition, the target of a constructive study needs to have at least one flaw that is likely to be consequential. The consequentiality of the flaw may be general or specific. Regarding the former, one could lean on the methods literature to argue that correction of the flaw is likely to bear fruit. If, by contrast, the flaw is specific to the phenomenon of interest, then one would lean on the substantive literature to argue for consequentiality. Herndon et al. (2014) could lean on the methods literature, or indeed on common sense, to argue that omission of cases that flew in the face of the original authors' reasoning was likely to be consequential. Schultze et al. (2012), on the other hand, had to get into the details of typical escalation-of-commitment argumentation and methods to explain why their changes to Conlon and Parks (1987) were, in fact, improvements and why these improvements were likely to matter.

Substantive moderators: generalizability studies

Let us now turn our attention to generalizability studies, which include the addition of a substantive moderator to the originally tested relationship or model. Popular substantive moderators include gender, cultural value differences, membership in different organizational groups, geographic location, and different levels of environmental risk, to name a few. Ultimately, the goal is to determine whether existing theorizing is universally applicable or needs to be modified to include theorizing about suspected variations across different contexts, people, etc.

As pointed out previously, the abundance of meta-analyses and the many studies contained within them provide an indication that there is no shortage of generalizability studies in our field.

On the other hand, addition of a moderator to an existing model requires some sort of theoretical justification of the proposed interaction effect. If no such justification exists, then authors are essentially engaging in some sort of random fishing for significant effects to be explained via HARKing (hypothesizing after results are known). Needless to say,

If a researcher were interested in the relationship between abusive supervision and interpersonal justice (e.g., Vogel et al., 2015) and believed that this relationship varies across cultures, then the researcher would need to explain why cultural differences, for example, differences in cultural values or norms, would impact the abusive supervision-justice relationship. The employment of existing theorizing about cultural value or norm differences would lead to refinement of the theoretical argument for the linkage between abusive supervision and employee justice perceptions to clarify that such theorizing may not be universally applicable across all cultural contexts. The researcher does not need to generate new cross-cultural theory or come up with novel theorizing that explains the abusive supervision–justice relationship. Rather, the researcher borrows from existing cultural theorizing to define boundary conditions for the abusive supervision–justice relationship. Similarly, if the researcher argued that gender moderated the relationship, they might incorporate gender role theory or gender identity theory to explain how gender differences impact on the abusive supervision–justice relationship.

These examples fall into the category of

Important to note is that not every possible generalizability study is worth submitting to

One type of reasoning that can be layered onto existing theory to justify an interaction is restricted variance reasoning (Cortina, Koehler, Keeler, & Nielsen, 2019; Cortina et al., 2015, 2022a). A generalizability study could, for example, take the form of determining when a theorized relationship between two variables holds and when situational context factors restrict the variance of one of the variables in such a way that the proposed relationship is weaker or stronger than it was previously thought to be. Cortina et al. (2022b) showed that IPO value of biotech firms was compressed upwards in biotech hubs such as Boston and San Francisco but not in areas such as Chicago and Houston that have middling densities of biotech. Studies that had, not unreasonably, studied predictors of biotech IPO value using data from biotech hubs would have found weaker prediction than would a study using data from mid-density areas.
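The logic of restricted variance can be illustrated with a small simulation. The sketch below is not a reanalysis of any study cited here: it generates invented data in which a predictor x relates to an outcome y, then mimics "upward compression" of the outcome by keeping only cases above the median of y. Treating compression as a hard truncation, along with the effect size, sample size, and seed, is a simplifying assumption made for illustration.

```python
import math
import random

def pearson_r(xs, ys):
    """Pearson correlation for two equal-length sequences of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

rng = random.Random(2024)  # fixed seed so the sketch is repeatable
n = 20000
x = [rng.gauss(0, 1) for _ in range(n)]
y = [0.5 * xi + rng.gauss(0, 1) for xi in x]  # invented linear relationship

# Unrestricted context: the full range of the outcome is observed.
r_full = pearson_r(x, y)

# Restricted context: only cases in the upper half of y are observed,
# a crude stand-in for an outcome that is compressed upwards.
cutoff = sorted(y)[n // 2]
kept = [(xi, yi) for xi, yi in zip(x, y) if yi > cutoff]
r_restricted = pearson_r([k[0] for k in kept], [k[1] for k in kept])

print(f"full range:       r = {r_full:.2f}")
print(f"restricted range: r = {r_restricted:.2f}")
```

The same underlying relationship produces a noticeably weaker correlation in the restricted context, which is the pattern that restricted variance reasoning would predict for samples drawn only from settings where the outcome is compressed.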

Consider as another example the mediation in Wasti, Bergman, Glomb, and Drasgow (2000). These authors hypothesized that job masculinity affects perceived sexual harassment, which in turn affects women's health. They further argued that the first stage of the mediation would be weaker in Turkey than in the USA because Turkish women would be likely to see harassment at work as normal and not as harassment at all. Cortina et al. (2022) reframe this interaction in restricted variance terms: The harassment variable is compressed downwards in Turkey, and this compression reduces the prediction of harassment by job masculinity. But what about the second stage of the mediation? Compression on a predictor actually

Let us be clear: Justification of a generalizability study would necessitate an alteration of the existing theorizing about the relationship or model of interest. However, the alteration to theorizing needs to be one of theoretical refinement, clarification, and integration to be of interest to

However,

Another example of generalizability studies welcomed by

Generalizability studies of this type should establish why the testing of this moderator is needed for theory confirmation. For example, it might be that a theorized moderator, when tested, could establish important boundary conditions of the theoretical model similar to our previously mentioned example of restricted variance interaction effects. As such, a generalizability study of this kind would provide important insights into the limitations of the theory's applicability.

Another example of this type of generalizability study, specified by Kraimer (2023), is a test of the underlying assumptions of theoretical models with the goal of theory refinement and clarification. As Kraimer (2023, p. 3) rightly notes, this is “an often-overlooked aspect when testing theory.” Kraimer et al. (2023) offer an example from the work of Zhao and Liu (2022). Careful examination of scale characteristics of three different available measures of entrepreneurial passion (i.e., question content, correlations between dimensions, reliability, internal and external validity) challenges the theorized “dimensional structure and operation of multi-dimensional constructs” (Kraimer et al., 2023, p. 10). Zhao and Liu (2022) hence conclude that the theoretical model for entrepreneurial passion needs further development. According to Cronin et al. (2021), such testing of the construction of a unit theory “increases clarity of constructs and causal linkages” (p. 675). Useful guidelines to explore and challenge assumptions of existing theories can be found in the work of Alvesson and Sandberg, who elaborate the process of problematization and of generating research questions that challenge assumptions on which theories are built (e.g., Alvesson & Sandberg, 2011; Sandberg & Alvesson, 2011).

The Editors of

Conclusion

Many scholars, ourselves included, have long perceived a “replication crisis” in our field. But the real crisis is that we do not know if we have a crisis or not, the reason being that our journals publish so few replication/reproducibility studies that are independent and constructive. Enter

We are grateful to the

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Work on this paper was supported by a University of Melbourne – Faculty of Business and Economics Visiting Research Scholar grant.