Abstract

A diagnosis of autism does not provide sufficient information to understand how the world is experienced by an autistic person. The World Health Organisation's International Classification of Functioning (ICF) Core Sets for autism provide a framework from which a more in-depth understanding of an autistic person's profile of strengths and needs can be acquired. This is the first U.K. evaluation of the ICF CoreSets for Autism platform, an operationalisation of the ICF Core Sets for Autism via an online assessment. In a Development Phase, 20 autistic adults were supported to complete the assessment, provide feedback via think-aloud interviews and evaluate it. In a Main Study, which was pre-registered, 464 autistic adults completed the assessment independently online and evaluated it. A participatory approach was used throughout. On standardised questionnaires, autistic adults overall found the assessment to be ‘acceptable’ and rated its usability as ‘ok’ or better. This was also true for subgroups of autistic adults who expressed clinically significant autistic traits, anxiety or depression, those from minoritised ethnicities, older adults and those who were unemployed. The findings suggest the ICF CoreSets for Autism platform has potential as a standardised evidence-based tool to improve understanding of autistic adults’ strengths and needs.

Lay Abstract

Knowing whether or not someone is autistic does not provide information about a person’s strengths and needs, or how they are impacted by their environment. The International Classification of Functioning (ICF) provides an in-depth understanding of how the world is experienced by a person, what their strengths are and where they need help. This is the first test of the ICF CoreSets platform for autism in the United Kingdom. In a Development Phase 20 autistic adults completed the assessment and provided feedback on content, layout and usefulness. The researchers used this to improve the assessment. Nineteen of the original 20 participants then completed the updated version and most said it was acceptable and ok to use, though a few did not. In a Main Study 464 autistic adults completed the assessment online. Overall, most people said it was acceptable and ok to use. We checked and this was also true for autistic adults whose autistic traits were very prominent, those who experienced mental health difficulties, those from ethnic minorities, older adults and those who were unemployed. Overall, this U.K. study shows that the ICF CoreSets platform for autism could contribute to supporting autistic people.

Keywords

Introduction

On its own, an autism diagnosis does not provide the necessary information for effective support to be provided or for a person to understand their own autistic profile and advocate for their needs (Bölte, 2023). There is a general lack of adequate post-diagnostic support for autistic people (Kiehl et al., 2024), and in the United Kingdom, the provision that does exist is variable (Beresford et al., 2020; Norris et al., 2025). Diagnoses of autism are increasingly prevalent, attributed to improved understanding of autism (Russell et al., 2022) but outcomes for this population remain poor (Bentum et al., 2024; Lai et al., 2019). It is vital that post-diagnostic support provision improves. However, clinical diagnosis reports tend to focus on perceived impairment and neglect the strengths of autistic people and the significance of environmental factors in relation to disability. Focus on impairment means that diagnostic assessments tend to be experienced as unacceptable to autistic people (Wilson et al., 2023), which may undermine the development of a positive autistic identity. Evidence suggests that embracing an autistic identity leads to better mental health and wellbeing (Davies et al., 2024). When asked about priorities for what they want from post-diagnostic support, autistic adults indicated that an individualised support plan was a key priority (Crowson et al., 2024).

Autism has primarily been understood within a biomedical model, characterised by a set of cognitive deficits which cause impairments in everyday life (DSM-5-TR, American Psychiatric Association, 2022; ICD 11; World Health Organisation, 2019). A biopsychosocial model proposes that disability emerges through the interaction between biological, psychological and social factors and such a model could lead to a more holistic understanding of autism (Bölte, 2023). It moves beyond diagnostic classification and attempts to identify individual strengths and needs (Black et al., 2023). Use of such a framework could contribute to addressing the need to improve autism post-diagnostic support. The World Health Organisation (WHO) developed the International Classification of Functioning, Disability and Health (ICF) to combine measures of physical and psychological functioning with environmental factors, activities, and participation to provide a framework for describing functioning in relation to health conditions (World Health Organization, 2007, 2019). Individual Core Sets have been developed from this framework to improve the clinical utility and adoption of the ICF for a number of specific conditions, such as stroke (Geyh et al., 2004), severe mental disorders (Guilera Ferré et al., 2020) and attention deficit hyperactivity disorder (Bölte et al., 2024b).

Developing condition-specific Core Sets according to WHO standards follows a rigorous process comprising an empirical multi-centre study, a systematic literature review, a qualitative study with stakeholders, and a survey of professionals and opinion leaders (Selb et al., 2015). Core Sets are a battery of codes from which individual items can then be developed to create an assessment. The ICF Core Sets for Autism were developed from this ICF framework to provide specific measures of strengths and needs for Autistic people, framed around functioning, or day-to-day operating, rather than impairment. The original version was published in 2019 (Bölte et al., 2019) and a first revision has recently been completed (Bölte et al., 2024a). The Core Sets for Autism include brief (60 code) and comprehensive (121 code) versions, with three age-appropriate sets (pre-school, school-age, and older adolescent/adult). As well as providing a holistic assessment of an Autistic person's individualised areas of strengths and needs, the ICF Core Sets assess environmental facilitators and barriers that are most relevant to an individual. This provides a detailed profile that could be helpful for self-management and to guide the support provided by healthcare providers, education professionals, and employers. The adult Core Set for Autism has been developed into an assessment using an online platform to facilitate use by individuals and services.

For this assessment to be useful, it must undergo validation and implementation evaluation. Too many healthcare interventions used with autistic people lack a strong evidence base, which could be contributing to poor outcomes (Bottema-Beutel, 2023; Vivanti, 2022). Key aspects of validation and implementation evaluation are piloting the assessment platform in a number of settings and evaluating acceptability and usability with autistic adults, both of which help to maximise feasibility in practice (Bölte, 2023). Initial work towards these goals has begun in Sweden (Alehagen et al., 2025, in press-a, in press-b). The present work contributes to this process.

A commonly used evaluative framework in healthcare intervention research is the theoretical framework of acceptability (TFA; Sekhon et al., 2017) which defines acceptability as a multifaceted construct that reflects the extent to which people delivering or receiving a healthcare intervention consider it to be appropriate, based on anticipated or experienced cognitive and emotional responses to the intervention. The TFA consists of seven component constructs: affective attitude, burden, perceived effectiveness, ethicality, intervention coherence, opportunity costs, and self-efficacy. Usability is also crucial for the success and adoption of digital health products (Maqbool & Herold, 2024). The System Usability Scale (SUS) (Brooke, 1996) is the most widely used standardised questionnaire for the assessment of perceived usability (Lewis, 2018) and has been shown to be suitable for evaluating the usability of digital health apps (Hyzy et al., 2022). In this article we report a Development Phase and a Main Study. The Development Phase involved 20 autistic adults, resident in the United Kingdom, who completed the assessment with the support of a researcher and provided feedback via think-aloud interviews. Feedback from the think-aloud interview contributed to the first revision of the ICF Core Sets for Autism (Bölte et al., 2024a) and the online platform that hosts the assessment. The revised ICF CoreSets for Autism platform assessment was then evaluated in terms of acceptability using the TFA (Sekhon et al., 2017) and usability using the SUS (Brooke, 1996). The Main Study reports data from a large online sample of autistic adults, who also completed a range of clinical and wellbeing measures and provided demographic data to contextualise and stratify the sample. The purpose of this Main Study was to assess acceptability and usability of the assessment.

Facilitating appropriate use of digital technologies without leaving anyone behind is one of the guiding principles in the WHO global strategy on digital health 2020–2025 (WHO, 2021). It is therefore important to evaluate the utility of assessments in subsets of the population who face additional vulnerabilities, for example, those who have high mental health needs, those from minoritised ethnicities, older adults and those who are unemployed, all of whom can experience different digital health service needs compared to the general population (Kaihlanen et al., 2022). We therefore assessed acceptability and usability in subgroups of the autistic sample in the Main Study who expressed clinically relevant levels of autistic traits, anxiety and depression and also considered those from minoritised ethnicities, older adults (50 years+) and those who were unemployed. These subgroup analyses provide a preliminary evaluation of acceptability and usability in those who experience different types of challenges in their day-to-day lives and provide an indicator of how useful the assessment could be if adopted as a standardised tool.

To summarise, the purpose of the work presented in this article was to develop the ICF CoreSets for Autism assessment with a group of autistic adults in a Development Phase and then, in a Main Study, to evaluate the acceptability and usability of the assessment to U.K. autistic adults and to subgroups of autistic adults.

Methods

Patient and Public Involvement (PPI). Prior to the Development Phase, PPI events indicated the need for a standardised strengths and needs assessment. These events also communicated enthusiasm from autistic adults for exploring the potential of the ICF CoreSets for Autism assessment for this purpose. An autistic advisor (KS) contributed to funding acquisition for this study. At the start of the study a steering group of eight autistic adults (five female; three male) was set up. Two members identified as being from a minoritised ethnicity and two identified as having significant physical healthcare needs. This steering group met twice throughout the lifecycle of this study and advised on research design, methods, analysis approach, interpretation and dissemination strategies. All members of the steering group were also invited to the study dissemination event. At the end of the study, all steering group members expressed their wish for further work on the assessment, as all members felt that it held promise for improving the lives of autistic people. Study data are available in an Open Access repository [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4]. For the Main Study, KS contributed to funding acquisition for this study. At the start of the study a steering group of five autistic adults (three female; two male) was set up. One member identified as being from a minoritised ethnic group and one identified as having significant physical healthcare needs. This steering group met twice throughout the lifecycle of this study and advised on research design, methods, analysis approach, interpretation and dissemination strategies. All steering group members in both the Development Phase and Main Study were compensated for their time according to NIHR Involve rates.

Ethical approval

Ethical approval for the Developmental Phase was provided by University of Sheffield Sub-Committee Committee at the School of Psychology (Application No. 049946). Ethical approval for the Main Study was provided by the University of Sheffield Sub-Committee Committee at the School of Psychology (Application No. 057605). This study was registered on the Open Science Framework. This report corresponds to Research Aim 2. Study pre-registration and data are available here [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4].

Developmental phase and main study measures

ICF CoreSets assessment platform (Comprehensive Version). The English version of the ICF Core Sets is hosted on an internet platform (https://icfcoresets.se/). Post-modifications, which the current study contributed to, this contained 121 operationalised ICF codes (body functions: 26, activities/participation: 61, and environmental factors: 34). The assessment includes 257 individual items which are answered using a 10-point Likert scale, from ‘no extent’ to ‘a great extent’, which reflect areas of strength, typical performance or requiring support. Participants could add written comments at the end of the three subsections (body functions, activities/participation and environmental factors).

Acceptability: TFA (Sekhon et al., 2017). The TFA is a 10-item scale which comprises seven constructs (affective attitude, burden, ethicality, intervention coherence, opportunity costs, perceived effectiveness, and self-efficacy) that represent different dimensions of acceptability for healthcare interventions. The items are answered on a 5-point Likert scale. For example, ‘Do you like or dislike the assessment?’ ranging from ‘strongly dislike’ to ‘strongly like’.

Usability: SUS (Brooke, 1996). The SUS consists of 10 items measuring the usability of interventions. It is answered on a 5-point Likert scale from ‘strongly disagree’ to ‘strongly agree’. Scores are interpreted as ‘excellent’ if SUS ≥ 85.5, ‘good’ if 71.4 ≤ SUS < 85.5, ‘ok’ if 50.9 ≤ SUS < 71.4, ‘poor’ if 35.7 ≤ SUS < 50.9, and ‘awful’ if SUS < 35.7. The SUS has an acceptable level of reliability (coefficient alpha of .91) and construct validity (Bangor et al., 2009).
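The interpretation bands above can be expressed as a simple lookup. The following is a minimal sketch in Python, not the study's own analysis code; the function name `sus_band` is hypothetical and the cut-offs are copied directly from the thresholds quoted in the preceding paragraph.

```python
def sus_band(score: float) -> str:
    """Map a SUS total score (0-100) to the adjective bands quoted in the text.

    Hypothetical helper for illustration; cut-offs are those stated above.
    """
    if not 0 <= score <= 100:
        raise ValueError("SUS total scores lie between 0 and 100")
    if score >= 85.5:
        return "excellent"
    if score >= 71.4:
        return "good"
    if score >= 50.9:
        return "ok"
    if score >= 35.7:
        return "poor"
    return "awful"
```

Each lower bound is inclusive, so a score of exactly 50.9 falls in the ‘ok’ band, matching the inequalities given in the text.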

Main study additional measures

Autism Spectrum Quotient-10 (AQ-10; Allison et al., 2012). This is a brief questionnaire used to assess autistic traits in adults. A score above 6 indicates the presence of current clinically relevant autistic traits.

Patient Health Questionnaire-9 (PHQ-9; Kroenke et al., 2001). The PHQ-9 assesses symptoms of depression over the past two weeks. A score of 10+ indicates moderate depression.

Generalised Anxiety Disorder-7 (GAD-7; Spitzer et al., 2006). The GAD-7 screens for and assesses the severity of general anxiety disorder symptomatology. A score of 10+ indicates moderate anxiety.

EUROHIS-QOL 8-item index (Schmidt et al., 2006). This measure assesses quality of life. Total score ranges from 8 to 40, with higher scores indicating a better overall perception of quality of life.

5-level EQ-5D version (Herdman et al., 2011). This measure assesses health-related quality of life in adults within five areas, on that day: mobility, self-care, usual activities, pain/discomfort, and anxiety/depression.

WHO-5 Well-being Index (Topp et al., 2015). This index assesses psychological wellbeing. A raw score from 0 to 25 is calculated, with a higher score indicating a better quality of life.

We modified the Client Socio-Demographic and Service Receipt Inventory-European Version (Chisholm et al., 2000) which collects data on participant demographics, socio-economic status factors and service utilisation. Data from this questionnaire were used to report employment status.

Developmental phase participants

The sample was recruited via the Sheffield Autism Research Lab database. A total of 20 individuals, diagnosed as Autistic by a U.K. healthcare professional within the last 5 years, took part at T1 and 19 individuals remained at T2 (see Table 1). We wanted feedback from those recently diagnosed to gain their insights on whether they thought this assessment would have been a useful supplement or acceptable assessment to be included in the diagnostic/post-diagnostic pathway.

Table 1. Development Phase Participant Demographics.

Developmental phase procedure

Between November 2022 and January 2023 (T1), individual demonstrations of the ICF CoreSets for Autism platform were conducted in interviews with 20 Autistic adults (19 were conducted online and one was conducted in person, as per participant preference). Each participant was supported by a researcher (SC) to complete the ICF CoreSets for Autism comprehensive-version assessment on the platform and to view their responses after completion. During completion, participants were asked to engage in a concurrent think-aloud interview (McDonald et al., 2012) in which they commented on user-friendliness, the appropriateness of the question content, any areas that were missing, clarity of wording, and the look and feel of the assessment and online platform. Further, on completion of the assessment, participants were asked eight open-ended written questions inviting them to comment on acceptability, perceived usefulness and ease of understanding, and to suggest recommendations for the ICF Core Sets platform assessment.

Feedback from participants was collated and reported back to the ICF Core Sets for Autism research team (SB, LA, and JH) who further developed and refined the assessment content. Information was then passed from the research team to the software developers who implemented the changes requested by the research team. In April 2023 (T2), the updated assessment was presented to the same autistic adults involved in the initial evaluation, who provided further qualitative feedback on the assessment tool. Participants also completed standardised measures to assess user experience of acceptability (TFA) and usability (SUS). Each interview at each timepoint took approximately 2 hours to complete. Participants received a £75 voucher per interview as compensation for their time.

Main study participants

The sample was recruited via research databases (the Sheffield Autism Research Lab [ShARL] database and Autistica) and Prolific.com, an online research recruitment platform (see Table 2).

Table 2. Main Study Participant Demographics.

RAADS-14 (Eriksson et al., 2013). This is a screening measure for autism. The RAADS-14 is commonly used in clinical practice as part of an adult autism diagnostic assessment. Items are answered on a 4-point Likert scale: ‘true now and when young’, ‘true only now’, ‘true when I was young’, ‘never true’. Higher scores reflect more Autistic traits. The diagnostic threshold for presence of clinically relevant autism symptomatology is 14.

Autistic group inclusion criteria: 18 years of age or older; currently resident in the United Kingdom; self-confirmation of an official diagnosis of autism from a U.K. health professional; completion of the RAADS-14 (Eriksson et al., 2013) from which a score of 14+ was required. Adverts were presented to people on the databases who were adults and had previously specified that they identified as being autistic. For recruitment via Prolific, a screening questionnaire was only sent to people who had previously indicated they believed themselves to be autistic. In the screener, participants were told ‘we want to test this assessment with groups of autistic and non-autistic adults so participation is not dependent on whether you are autistic’ so there was no incentive to lie about diagnostic status in the screening questionnaire. The Prolific platform requires a government issued ID and a selfie video to be verified via a partner organisation prior to registration to enable creation of an account and it is only possible to create one account per person. This helps to mitigate the risk of fraudulent participants. At the screening stage the following potential participants were not invited to complete the main survey: n = 312 due to indicating they did not have a formal autism diagnosis; n = 23 due to not being diagnosed by a U.K. service; n = 18 due to not scoring 14+ on the RAADS-14. In total, 623 individuals met eligibility criteria according to the screening survey and were invited to complete the main survey. Five hundred and ten participants consented to take part and began the ICF assessment, 464 participants completed the ICF assessment and all parts of the survey (completion rate of 91%; n = 168 autistic adults were recruited via research databases, n = 296 autistic adults were recruited via Prolific.com).

Main study procedure

Between February 2024 and May 2024 participants were invited to complete a screening and demographics survey, administered in Qualtrics. If they met eligibility criteria, they were invited to complete a main survey comprising the ICF CoreSets for Autism platform assessment hosted on an internet platform (https://icfcoresets.se/), followed by a set of standardised questionnaires administered via Qualtrics (see Measures for full details). No researcher support was provided during completion, though researcher contact details were provided so that participants could ask questions about the study if they wished. Common points of feedback from participants were collated and reported back to the ICF Core Sets for Autism research team (SB, LA, and JH) to consider as potential improvements to the ICF CoreSets for Autism platform. The main survey included two attention control questions to detect participants who did not read the questions and were answering randomly, for example, ‘This is an attention check question. Please select the “somewhat agree” option below’. Eight Autistic participants and two non-autistic participants failed an attention check question. Data from these participants were not included in the final sample. Participants were able to leave comments at the end of the survey if they had any other suggestions or concerns about the assessment which were not covered in the usability or acceptability scales. Participants received a £15 shopping voucher or a £15 credit to their Prolific account, depending on their recruitment method, as a ‘thank you’ for participating.

Analysis procedure

Development Phase Analyses Timepoint 1. Qualitative data were collected from the think-aloud interviews at Timepoint 1 by a female research associate (SC) who had no prior relationship with any of the study participants. Participants were asked for their thoughts on the assessment content, wording, layout, look and feel. The reasons for conducting the research and how data would be handled and analysed were explained to participants in advance of study participation. The researcher made an audio-recording of each think-aloud interview and supplemented this with field-notes, from which the researcher produced a set of notes comprising key points from each participant. These key points were subsequently summarised and communicated to the assessment development team, where they fed into assessment development. Responses to open-ended questions were audio-recorded and analysed using interpretive content analysis (Drisko & Maschi, 2016). Participant responses were coded by the research associate who then discussed the output with two other researchers (MF and DP). The number of times each code was mentioned was counted to give a measure of how commonly it was mentioned. The codes were then described in terms of positive and negative evaluations of the assessment, and suggestions for improvement. On completion, MF and DP conducted an interview process audit. Findings were collated in a formal report which included illustrative quotes. This report was submitted to the assessment development team and the funder. The findings report was shared with participants.

Development Phase Analyses Timepoint 2. Acceptability scores on each of the 10 TFA items were recorded for each participant. Total usability scores on the SUS were calculated by converting the SUS item Likert-scale responses to numbers, from 1 for ‘strongly disagree’ to 5 for ‘strongly agree’. For odd-numbered questions, 1 was subtracted from each item score. For even-numbered questions, each item score was subtracted from 5. The resulting values were then summed and multiplied by 2.5 to produce a SUS total score on a scale of 0–100.
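As an illustration of the SUS scoring, the sketch below follows the standard convention (Brooke, 1996): odd-numbered items contribute the response minus 1, even-numbered items are reverse-scored as 5 minus the response, and the sum is multiplied by 2.5. The function name `sus_total` is hypothetical and this is not the study's own analysis code.

```python
def sus_total(responses):
    """Compute a SUS total score (0-100) from ten responses coded 1-5.

    Hypothetical helper for illustration. `responses` is ordered by item
    number, with 1 = 'strongly disagree' and 5 = 'strongly agree'.
    """
    if len(responses) != 10:
        raise ValueError("The SUS has exactly 10 items")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("Each response must be an integer from 1 to 5")

    adjusted_sum = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            # Odd-numbered (positively worded) items: response minus 1.
            adjusted_sum += response - 1
        else:
            # Even-numbered (negatively worded) items: 5 minus response.
            adjusted_sum += 5 - response

    # Each item now contributes 0-4, so the sum is 0-40; scale to 0-100.
    return adjusted_sum * 2.5
```

A participant who strongly agrees with every odd-numbered item and strongly disagrees with every even-numbered item obtains the maximum of 100, and neutral responses throughout give 50.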

Participants also completed eight written open-ended questions after completing the assessment and looking at the report. These questions covered positive evaluations (what they liked, what they found useful, emotional reactions), negative evaluations (what they disliked, emotional reactions), and suggested improvements for the assessment and the summary report. These questions were completed at timepoints 1 and 2 of the Development Phase and were analysed using interpretive content analysis (Drisko & Maschi, 2016).

Main study quantitative analyses

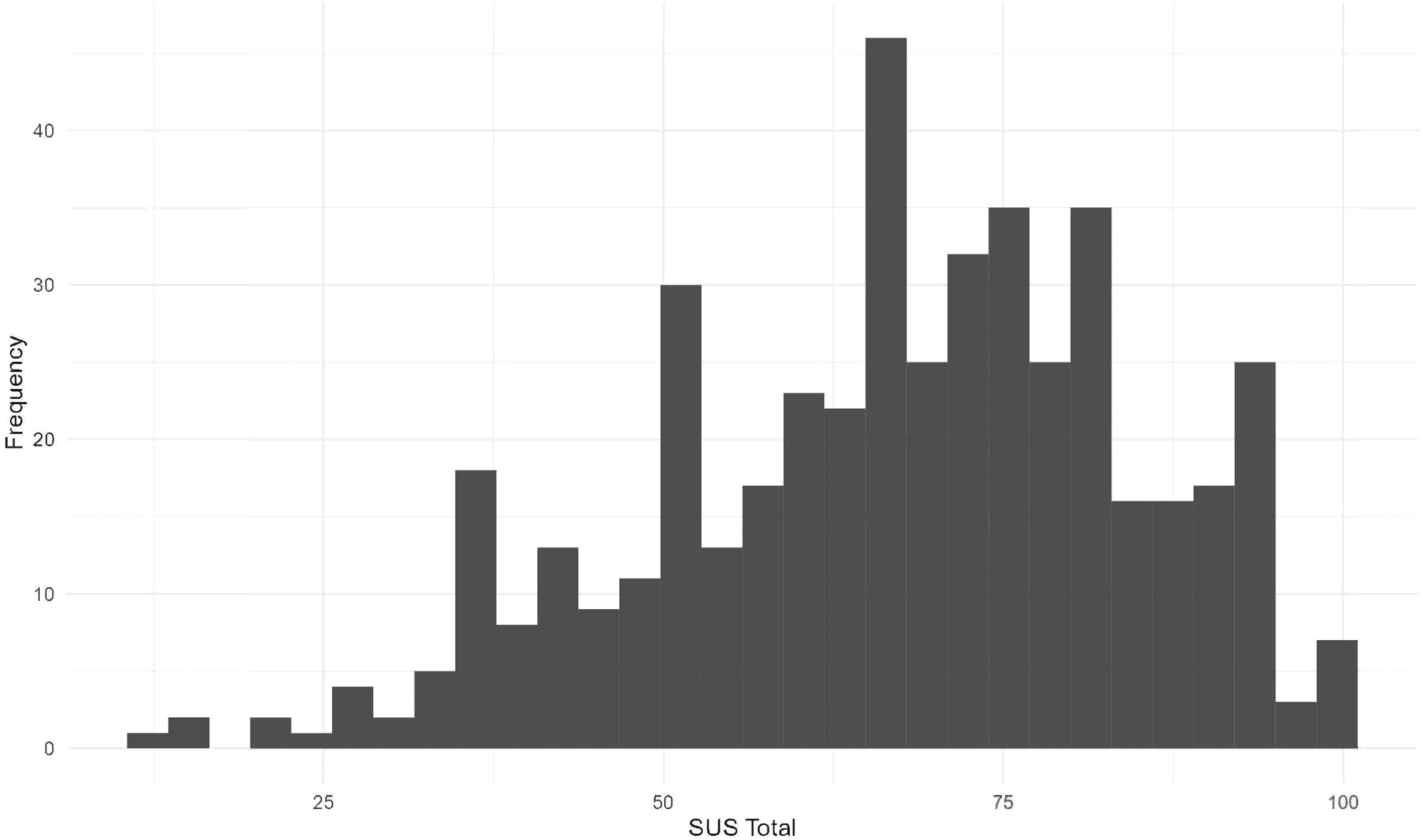

Total scores on all questionnaires were calculated as described in the Main Study Measures section and were used to characterise the sample (Table 3). Completion rate and completion times for the ICF assessment itself were calculated for each participant and median completion time was calculated. Scores on the 10 TFA items were recorded for each participant; frequencies are reported in Figure 1. Total scores on the SUS were calculated and are presented in Figure 2. Mean scores and standard deviations were calculated for acceptability (TFA item 10) and usability (SUS total) overall and for sub-groups. Spearman's rank-order correlations were conducted between acceptability and all questionnaire total scores to assess whether acceptability was related to strength of clinical features (autistic traits, anxiety, depression, quality of life, wellbeing). The primary pre-registered outcome measures were completion rates, median completion times, and assessment of acceptability and usability in the sample overall. Collection of free-text response data allowed autistic adults to provide further context for their responses and allowed these data to be assessed via interpretive content analysis.
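For readers unfamiliar with Spearman's rank-order correlation, the following is a minimal pure-Python sketch of the general technique: each variable is converted to ranks (tied values share their average rank) and a Pearson correlation is computed on the ranks. The software used by the authors is not stated in the text; the function names here are hypothetical and the sketch omits significance testing.

```python
def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the block while the next sorted value is tied.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rank_x, rank_y = average_ranks(x), average_ranks(y)
    mean_x = sum(rank_x) / len(rank_x)
    mean_y = sum(rank_y) / len(rank_y)
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(rank_x, rank_y))
    var_x = sum((a - mean_x) ** 2 for a in rank_x)
    var_y = sum((b - mean_y) ** 2 for b in rank_y)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return covariance / (var_x * var_y) ** 0.5
```

Handling ties by average rank matters here because acceptability is measured on a single 5-point item, so many participants necessarily share the same value.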

Figure 1. Main study frequency of responses to TFA items.

Figure 2. Main study frequency of SUS total scores.

Table 3. Main Study Standardised Questionnaire Data.

Main study qualitative analyses

At the end of the ICF assessment, participants were asked if they had any final comments about the assessment. Some evaluative responses were also received via email. Both types of responses were analysed using content analysis (Drisko & Maschi, 2016). Participant responses were collected and coded independently by two researchers (MD and KL). These codes were then compared and discussed until consensus was reached, producing the final coding frame [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4], which included codes (e.g., survey structure, question format) and subcodes (e.g., context, thoroughness) arranged into three higher level themes (positive evaluations, negative evaluations and suggestions for improvements). The final dataset was then re-coded using this frame and the number of participants who mentioned each code was counted. The codes were then described in terms of positive and negative evaluations of the assessment, and suggestions for improvements.

Results

Development phase results timepoint 1—feedback on ICF CoreSets for Autism platform and content development

Qualitative data. The concurrent think-aloud interviews produced feedback on a range of different aspects of the ICF CoreSets for Autism assessment platform. The transcripts from these interviews were analysed using interpretive content analysis to summarise and describe high consensus (mentioned by over 50% of the participants) and moderate consensus (mentioned by 20–49% of participants, n = 4–9) issues in relation to the assessment. Below we specify the high consensus areas and provide illustrative participant quotes. Moderate consensus issues can be found in the OSF registration [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4].

High consensus issues related to:

(a) the scoring system (i.e., the need to replace value-laden functioning labels from the response scale and the need to keep the response scale consistent or give clear descriptions when it changes).

What does an appropriate range of emotions mean? Who's going to assess that and say that it's appropriate or normal or, you know, neurotypical. So I don't really know so I can't comment. (Participant 1)

At the start [of the assessment] it needs to say we're going to score these questions from one to ten. We're gonna score some questions differently. So don't be alarmed if the scoring system changes. Otherwise … it kind of throws you off. (Participant 4)

(b) question wording (i.e., questions to be more specific and include examples to reduce ambiguity).

‘Cooperative and accommodating’ - “in what sense? Like if someone asks you to do something?”. (Participant 13)

(c) incorporation of fluctuations (i.e., acknowledgement that functioning can fluctuate depending on the day/situation should be incorporated).

It's the fact that if you're in a calm environment that you feel safe in, you would appear and act fairly typically, but put in a situation that you feel is unsafe … and suddenly I've all those things just go off, so you know the answers to all those questions then change. That's what I'm really struggling with. (Participant 20)

(d) accessibility (i.e., the need to reformat the page so the full question is visible without the need to scroll). The majority of participants had to ask the researcher to scroll back up while they were completing the assessment.

(e) missing content (i.e., additional questions and context around sensory sensitivities, processing, masking, and burnout) or social demand clarification (i.e., the need to specify the social environmental context of the question situations, such as whether conversational partners are known):

So the question that needs asking is, how do I feel after I do these things? Like I can do these things but only for a very short amount of time. And then I need to go in and I call it the defraggle process. (Participant 14)

It's not really gone into the triggers. You can get sound triggers, smell triggers from scents and the rubbish, touch triggers… (Participant 15)

distinguish between being social on your own terms and having to be social. (Participant 17)

The summarised data from these think-aloud interviews were passed on to the platform development team with recommendations for improvements, which were then implemented as comprehensively as possible whilst ensuring the ICF framework was adhered to.

Development phase results timepoint 2

Quantitative evaluation

Acceptability (TFA). According to responses to the TFA summary question ‘how acceptable was the intervention to you?’, the assessment was rated positively by 14 participants (completely acceptable n = 6; acceptable n = 8), neutrally by one (no opinion n = 1) and negatively by four (unacceptable n = 3; completely unacceptable n = 1).

Usability (SUS). The usability of the ICF Core Sets for Autism assessment was considered ‘ok’ or better by 14/19 participants (excellent n = 2; good n = 4; ok n = 8; poor n = 3; awful n = 2).

Qualitative evaluation

Interpretive content analysis of responses to the written open-ended questions (answered by 19 participants) was used to identify data relevant to three areas: positive evaluations (what they liked, what they found useful, emotional reactions), negative evaluations (what they disliked, emotional reactions), and suggested improvements for the assessment and the summary report. These are reported for responses given after the development team made changes in response to previous feedback. Coded data can be found at [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4].

Positive evaluations commended the thoroughness of the assessment (n = 9), the inclusion of strengths as well as needs (n = 3), the potential for self-reflection and increased self-knowledge (n = 7), the potential usefulness of the report (n = 7), the ease of use of the assessment (e.g., question grouping under subheadings; n = 7), the comprehensibility of the report (n = 5), and the response scale format (n = 4).

Negative evaluations were related to unclear questions (e.g., due to lack of context or examples; n = 12), ableist or seemingly judgemental language (n = 4), length of the assessment (n = 4), difficulties in understanding/using the response scales (n = 2) or the report (n = 2) and no additional insights or understanding after completing the assessment (n = 5). Suggestions for improvements were improving the clarity of questions (n = 2) and the response scale (n = 2), removing ableist language (n = 3), improvements to survey flow (e.g., ability to skip questions, providing a progress bar; n = 3), considering how masking could impact answers (n = 4), and improving the report and clarifying its use for others (n = 4).

Following this phase, further refinements were made to the assessment by the platform development team.

Main study results

Sample characteristics

In order to characterise the sample evaluating the ICF CoreSets for Autism assessment, scores for each individual on each standardised measure were calculated (see Table 3).

ICF CoreSets for Autism assessment evaluation

Completion rates. Of those who started the ICF CoreSets for Autism assessment, 91% completed it.

Completion times. The median completion time was 34 min.

Acceptability

To investigate whether the ICF CoreSets for Autism assessment was considered acceptable, scores on TFA item 10 (‘How acceptable was the survey to you?’) were used. Overall, autistic adults found the assessment to be ‘acceptable’ (mean = 3.9/5; SD = 0.87; Figure 1).

Exploratory analyses to assess acceptability in sub-groups of the autistic cohort who scored above clinically relevant cut-points on autistic traits, depression and anxiety were conducted. In those who scored 6 or higher on the AQ10, the assessment was found to be ‘acceptable’ (mean = 3.9/5; SD = 0.85). In those who scored 10 or higher on the GAD7 (moderate anxiety), the assessment was found to be ‘acceptable’ (mean = 3.9/5; SD = 0.85). In those who scored 10 or higher on the PHQ9 (moderate depression), the assessment was found to be ‘acceptable’ (mean = 3.8/5; SD = 0.86). Further, Spearman's Rank-Order correlations indicated acceptability was not significantly correlated with scores on the AQ10, rs < −.01, p = .96, GAD7, rs = −.04, p = .39, PHQ9, rs = −.10, p = .03, WHO-5, rs = .09, p = .06, and was only weakly correlated with EuroHIS_QOL, rs = .15, p < .001. The assessment was also ‘acceptable’ to those who described themselves as ‘Non-White’ (mean = 3.7; SD = 0.71), those who were aged 50 years or older (mean = 3.7; SD = 0.95) and those who were unemployed (mean = 3.9; SD = 0.82). Overall, these results demonstrate that autistic adults in our sample generally found the assessment to be acceptable and this was also the case for those who have additional vulnerabilities or minoritised identities.
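For readers unfamiliar with rank-order correlation, the following is a minimal Python sketch of how a Spearman coefficient can be computed. It is not the analysis code used in this study. It assigns average ranks to tied values and then takes the Pearson correlation of the two sets of ranks. The function names and the example scores are hypothetical and purely illustrative.

```python
from math import sqrt


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the run while the next sorted value ties with the current one.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        shared_rank = (start + end) / 2 + 1
        for position in range(start, end + 1):
            ranks[order[position]] = shared_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when a variable is constant")
    return covariance / sqrt(var_x * var_y)


def spearman_rho(x, y):
    """Spearman rank-order correlation: Pearson correlation of the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical data: acceptability ratings (1-5) and questionnaire totals.
example_acceptability = [4, 5, 3, 4, 2, 4, 5, 3]
example_questionnaire = [7, 3, 12, 9, 15, 6, 4, 10]
example_rho = spearman_rho(example_acceptability, example_questionnaire)
```

Because the coefficient depends only on ranks, it suits ordinal ratings such as a five-point acceptability item, where many responses are tied.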

Usability

To investigate whether the ICF CoreSets for Autism assessment was considered usable overall, total scores on the SUS were calculated. Overall, autistic adults found the usability to be ‘ok’ (mean = 67; SD = 17.7; range = 12.5–100; interquartile range = 55.0–80.0; Figure 2).
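As an illustration of how a SUS total on the 0–100 scale is derived, the sketch below applies the standard SUS scoring rule: odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5. It assumes the standard ten-item questionnaire with responses coded 1–5. The function name is hypothetical and this is not the study's own scoring code.

```python
def sus_score(responses):
    """Return the 0-100 SUS total for ten item responses, each coded 1-5."""
    if len(responses) != 10:
        raise ValueError("the SUS has exactly 10 items")
    if any(response not in (1, 2, 3, 4, 5) for response in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: higher is better.
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: lower is better.
            total += 5 - response
    # Each item contributes 0-4, so the raw sum is 0-40; rescale to 0-100.
    return total * 2.5
```

Under this rule, scores move in steps of 2.5, which is consistent with the reported range of 12.5–100.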

Exploratory analyses to assess usability in sub-groups of the cohort who scored above clinically relevant cut-points on autistic traits, depression and anxiety were conducted. In those who scored 6 or higher on the AQ10, usability was rated as ‘ok’ (mean = 67; SD = 17.5). In those who scored 10 or higher on the GAD7 (moderate anxiety), usability was rated as ‘ok’ (mean = 66; SD = 17.8). In those who scored 10 or higher on the PHQ9 (moderate depression), usability was rated as ‘ok’ (mean = 66; SD = 17.5). Further, Spearman's Rank-Order correlations indicated usability was not significantly correlated with scores on the AQ10, rs = −.09, p = .07, GAD7, rs = −.04, p = .39, PHQ9, rs = −.09, p = .06, WHO-5, rs = .09, p = .15, and was only weakly correlated with EuroHIS_QOL, rs = .13, p < .01. Usability was also rated as ‘ok’ in those who described themselves as ‘White’ (mean = 67; SD = 17.9) and those who described themselves as ‘Non-White’ (mean = 66; SD = 15.5), those who were aged 50 years or older (mean = 63; SD = 18.5), those who were employed (mean = 68; SD = 18.0), unemployed (mean = 67; SD = 18.0) and retired (mean = 60; SD = 15.5). Overall, these results demonstrate that usability was considered to be ‘ok’ for autistic adults in our cohort in general and in those who were currently experiencing clinically relevant difficulties, and did not differ depending on ethnicity or employment status.

Qualitative feedback

Evaluative feedback was provided by participants at the end of the ICF assessment (n = 108) and via email (n = 8). The most commonly mentioned criticism was that the questions lacked sufficient context or examples, which made them difficult to answer (n = 30). Participants felt that their strengths and needs changed over time and in different situations, which made it difficult to give a definitive answer to some questions. For example:

I found this hard to answer in places because my needs change a lot. Some days are good, some days I struggle. I've got learned coping mechanisms and I can be almost ‘normal’ on a good day but on a bad day everything falls apart.

the questions with the hinder/improve multiple choice (environmental factors) were extremely vague and very hard to answer as there are so many varied examples of situations that are related to the question. I found myself mentally answering ‘depends’ to every single one.

Other negative evaluations related to survey structure (assessment was too long or overwhelming: n = 8; spelling errors: n = 7; not enough space for comments: n = 2; function of accessibility features: n = 1), to question format (difficulty with neurotypical comparisons: n = 4; limited timeframe for answers: n = 1; simplistic sensory questions: n = 1), to response format (response scale: n = 6), and to survey function (n = 1).

The most frequent positive response was that the assessment was thorough (n = 12). For example: It felt quite comprehensive when working through it so I can't really think of anything that might have been missed out.

Other positive evaluations related to survey structure (additional comments function: n = 1; accessibility features: n = 1; survey format: n = 2), and to potential benefit and enjoyment (n = 2).

The most common suggestion for improving the survey was including questions on other aspects of autistic people's experiences, particularly around specific examples of community/social awareness and amenities (n = 19; e.g., support services, workplace adjustments, public transport, public amenities, supported housing and respite care). For example: you haven't mentioned public toilets or eating in public. I also don't shop for clothes or shoes. I don't like stores. But I do like going to movies when the movie theatre is fairly quiet. Access to Work is a massive topic, as is disabled student allowance but it was not really talked about. The process of applying, waiting and dealing with the case worker have all been extremely challenging and distressing.

Other suggested additions were around autistic strengths and personality (n = 10), executive processing (n = 8), mental health (n = 8), physical health (n = 8), family/home (n = 4), treatments/diet (n = 1), and technology (n = 1).

Other common suggestions for improvement were around improving question format (adding examples and context to the questions: n = 12; rephrasing questions: n = 1; changing comparison to neurotypical people: n = 1), and improving the survey format (more space for comments: n = 2; reorganising sections: n = 2; reminders for the response scale: n = 1).

This feedback was passed to the ICF CoreSets for Autism development team. The coding frame and double coding table can be found at [https://osf.io/t3z7f/overview?view_only=ee8846576c5d48648edcc6beaefe72c4].

General discussion

Overall, these studies indicate that the ICF CoreSets for Autism assessment, in its current form, is generally considered to be acceptable and usable by autistic adults in the United Kingdom. Importantly, we also evaluated the utility of the assessment in subsets of the population who face additional vulnerabilities, including those who have high mental health needs, those from minoritised ethnicities, older adults and those who are unemployed, as these populations can experience different digital health service needs compared to the general population (Kaihlanen et al., 2022). We found the assessment to be acceptable and usable in all sub-groups assessed, namely those who scored above clinically relevant cut-points for autistic traits, anxiety and depression, those who identified as non-White, older adults (50 years+) and those who were unemployed. Qualitative feedback from autistic adults suggested that the assessment was thorough. A small number suggested that the assessment was too long or overwhelming to complete, although some suggested adding further support and functioning sections. The main area of concern for some autistic adults was the lack of context or examples. Some expressed that questions were difficult to answer because functioning and support needs vary according to situation, and also over time.

The results from both the Development Phase and the Main Study build the evidence base for the ICF CoreSets for Autism assessment as a standardised strengths and needs assessment based on the WHO framework (World Health Organization, 2007, 2019). Development of this assessment has been conducted in accordance with the requirements of the ICF Core Sets development process (Selb et al., 2015) and initial work on the psychometric properties of this assessment has been led from Sweden (Alehagen et al., 2025, in press-b). The ICF CoreSets for Autism assessment is currently being evaluated in a clinical trial in adult diagnostic services in the United Kingdom (ISRCTN10283350) and feasibility studies in other settings are also underway. The studies reported here used a participatory approach, receiving input from an autistic advisor and steering groups of autistic adults throughout the process. In the Development Phase, the length of time spent evaluating the assessment with individual participants meant that detailed qualitative responses could be collected. This concurrent think-aloud interview with potential end users is an important part of assessment development (Jaspers et al., 2004; McDonald et al., 2012). Overall, the Main Study indicates that autistic adults recruited via online databases generally consider the ICF CoreSets for Autism assessment to be usable and acceptable, indicating its promise as an assessment for understanding an individual's strengths and needs and its potential for supplementing clinical practice. The high completion rate (91% of autistic adults who started the assessment completed it) and relatively quick median completion time (34 min) indicate that participants recruited to this study did not find the assessment too burdensome when presented as an assessment to be completed independently online.

It is important to note the strengths and limitations of the work reported here. A strength is that all of the work involved autistic adults as co-researchers throughout. Perspectives from our autistic advisor and both steering groups were invaluable in giving the broader research team the confidence that evaluating this assessment was a valuable endeavour. The perspectives of our autistic researchers also enhanced the study methodologies in myriad ways and enabled the broader research team to stay grounded in the research questions that were most important to autistic people and to more effectively interpret the responses from participants. A limitation is that the conclusions reported here are drawn from those who do not experience digital exclusion, because recruitment was conducted via online databases. It will be important to conduct further evaluation of the assessment in different contexts and with different subgroups in future. It will also be important to consider how those who would find the current assessment format inaccessible could be supported to engage with the assessment. Another important future direction will be to investigate whether this assessment can be effective in improving outcomes for autistic adults. If this assessment is used to improve post-diagnostic support services and self-management, it is possible that it will have wide-ranging benefits including improved quality of life and wellbeing. It is also possible that, in future, the assessment could contribute to more positive economic markers, such as improved employment rates, particularly if the assessment reports can be used by employers to inform the planning and implementation of reasonable adjustments. Effectiveness is yet to be evaluated in different contexts; evaluating the potential utility of this assessment in clinical services is a particular priority.

In relation to the content of the assessment, there are further adjustments that could be made to increase the frequency of positive responses from autistic people, such as the addition of ‘person factors’ from the ICF, representing a large variety of individual characteristics such as coping styles, lifestyles, identity, spirituality and quality of life.

In the studies reported here we used the TFA (Sekhon et al., 2017) to assess acceptability, which considers how appropriate people delivering or receiving a healthcare intervention deem it to be based on anticipated or experienced cognitive and emotional responses to the intervention. While we found the assessment to be generally acceptable overall, and to all subgroups, the whole range of responses must be considered. It is notable that a significant minority of participants did not deem the assessment to be acceptable. It is important to acknowledge that it is unlikely that an assessment of this general format will be seen as acceptable, useful and usable by all. Hence, if the assessment is adopted by organisations and/or policy makers, this limitation needs to be acknowledged and alternative provision explored for those who do not find the assessment accessible. However, given that a relevant comparison here is satisfaction with the status quo, whereby the current standard use of the medical model as a basis of autism understanding in the United Kingdom is considered to be inadequate by autistic people (Crowson et al., 2024; Wilson et al., 2023), we propose that this assessment has promise in contributing to a better offering for autistic adults, particularly if coupled with person-centred support guided by the outcome of this assessment.

In conclusion, the work reported here demonstrates that the ICF CoreSets for Autism assessment has promise as a strengths and needs assessment. This U.K. evaluation, which used a participatory approach, indicates that autistic adults generally consider the assessment to be acceptable and usable. These conclusions can be drawn not only for autistic adults in general but also for autistic adults who experience additional vulnerabilities: those with high mental health needs, older adults, those from minoritised ethnicities and those who are unemployed. This evaluative work is important as too many healthcare interventions are used with autistic people without having a strong evidence base, which could be contributing to poor outcomes (Bottema-Beutel, 2023; Vivanti, 2022). The assessment's focus on strengths as well as needs and the influence of the environment was well received and may contribute to an individual's sense of positive autistic identity, which has been shown to lead to better mental health and wellbeing (Davies et al., 2024). Given that a diagnostic label of autism on its own does not provide the necessary information for effective support to be provided (Bölte, 2023), this assessment is likely to be a useful supplement to a diagnostic label. It is likely both to facilitate self-understanding of what an autism diagnosis means to the person who receives it and to provide a basis from which appropriate, individualised support can be planned by professionals.

Footnotes

Acknowledgements

We would like to thank all members of our Autistic steering groups for their contributions.

Funding

This work received funding from an SBRI Healthcare Grant (Grant No. SBRIH20P1013) and QR-policy funding at the University of Sheffield. The development of the ICF CoreSets platform is funded by the Swedish Research Council for Health, Working Life and Welfare (FORTE), Trygg Hansa, Folksam, Stiftelsen Promobilia, Sunnerdahls Handikappfond and Stiftelsen Clas Groschinskys Minnesfond.

Disclosure Statement

Sven Bölte discloses that he has in the last 3 years acted as a consultant or lecturer for Medice, Takeda, Neuraxpharm, Merck Sharp and Dohme, and LinusBio. He receives royalties for textbooks and diagnostic tools from Hogrefe, UTB, Ernst Reinhardt, Kohlhammer, and Liber. Bölte is CEO of NeuroSupportSolutions International AB, which owns the IP for the ICF CoreSets for Autism assessment platform. All other authors have no conflicts of interest to report.