Abstract

It is often thought that an agent may be held morally responsible for bringing about a negative outcome only if they could have done otherwise. Inspired by previous research linking moral judgment to free will ascriptions and representations of possibility, we probe the reverse link: Does learning about a morally undesirable outcome make preferable alternatives appear more possible? We find modest evidence that this could be the case. In a preregistered experiment, we presented 317 participants with animated footage of a traffic accident in which two bystanders fail to intervene as a third person gets run over by a bus, and manipulated whether the victim was evil, virtuous, or neutral. Judging from the same visual input, people indicate that saving an evil victim would have been slightly less possible than saving a virtuous or neutral victim, and arriving at the conclusion that it would have been possible demanded more time. Using drift-diffusion modeling to better understand the underlying cognitive processes, we found that participants were biased against counterfactual attempts to save the evil victim’s life, but then gathered evidence toward their decisions at the same rate regardless of the victim’s morality.

Introduction

In the philosophical literature on free will, it was historically thought that agents can be held morally responsible for their actions as long as they could have done otherwise. This principle has come to be known as the principle of alternative possibilities (for an overview, see Robb, 2020). Suppose, for example, that I sleep through my alarm one morning. In ordinary circumstances, one would conclude that I did so freely and that I am morally responsible for the consequences of that action—for example, any complications that may arise at work that day if I am late. If instead I sleep through my alarm because I was knocked unconscious throughout the night, the absence of alternative possibilities—that is, the fact that there is nothing else I could have done—seems to preclude ascriptions of freedom and responsibility.

This principle, however, can be shown to be counterintuitive with the help of a thought experiment originally devised by philosopher Frankfurt (1969). In this thought experiment, a neuroscientist implants a chip in Ms. Jones’s brain without her knowledge. The chip is programmed to send, at exactly noon the next day, impulses that will certainly cause Ms. Jones to vote for Candidate A if she tries to vote for Candidate B. Then, as it turns out, at exactly noon the next day Ms. Jones decides to vote for Candidate A. Since Ms. Jones decided to vote for Candidate A, the impulses from the device made no difference to her behavior. However, if Ms. Jones had not decided to vote for Candidate A, the device would have activated, and Ms. Jones would have voted for Candidate A anyway. Therefore, Ms. Jones did not have alternative possibilities: It was determined that she would vote for Candidate A, and she could not have done otherwise. Frankfurt (1969) claimed that, nevertheless, one should think of Ms. Jones as having freely voted for Candidate A. Empirical evidence demonstrates that, when encountering Frankfurt cases like the above, people affirm that the agent acted freely (Miller & Feltz, 2011). Furthermore, the tendency to ascribe free will and moral responsibility to agents in Frankfurt-style cases has been documented in a cross-cultural study sampling from 20 countries (Hannikainen et al., 2019).

Why then would the demonstrably false principle of alternative possibilities nevertheless persist, and play a predominant role, in ordinary moral and legal reasoning? Inspired by convergent lines of evidence revealing effects of actions’ moral valence on ascriptions of agents’ free will (Clark et al., 2014, 2018) and on representations of possibility (Phillips et al., 2015; Phillips & Cushman, 2017; Shtulman & Phillips, 2018), we arrived at the hypothesis our present study sought to test: namely, that a person’s immoral conduct makes alternative courses of action appear more possible than if they had engaged in morally neutral or benevolent conduct. On this view, immoral conduct leads both to the impression that an agent acted more freely and to the impression that they had more alternative possibilities to act. However, the perception that an agent had alternative possibilities is not the cause of their being seen as acting freely (as the principle of alternative possibilities stipulates, and as Frankfurt-style cases undermine). Instead, both judgments may be rooted in the judgment that the agent acted immorally.

A broader literature has documented phenomena that are compatible with this hypothesis: Research by Clark et al. (2014, 2018) points toward the tendency for immoral action to motivate ascriptions of free will (but see Monroe & Ysidron, 2021). In one experiment, participants read a news story about a pediatric hospital, in which a batch of expensive lasers was either (i) stolen by a thief, (ii) bought by a hospital administrator, or (iii) donated by a philanthropist. Participants in this study were much more likely to view the thief as having acted freely than the administrator. In fact, the thief was seen as having acted slightly more freely than the philanthropist. These studies raise the possibility that the belief that individuals act freely can arise in reaction to moral praise and, especially, blame.

In parallel, a series of studies has shown that immoral events are more likely to be perceived as impossible under time pressure than after a forced delay (Phillips & Cushman, 2017). This pattern has also been observed in the comparison between adults’ and children’s modal reasoning (Shtulman, 2009; Shtulman & Phillips, 2018): Specifically, children are more likely than adults to report that immoral behavior is impossible. In other words, under intuitive reasoning conditions, the moral valence of an action impacts whether it is perceived as possible or impossible. Building on Phillips and Cushman’s (2017) demonstration that morally bad events themselves are seen as less possible than morally good or morally neutral events, we predict that alternatives to morally bad events should appear more possible than alternatives to morally good or neutral events.

In the present work, we put this hypothesis to the test. In our experiment, participants viewed the exact same animation in every condition, depicting a person being fatally run over by a bus (see Figure 1), while we manipulated whether the victim of the accident was a villain, a moral exemplar, or whether no morally relevant information about the victim was provided (as a control condition). Importantly, the scenario involved two bystanders who, in the actual sequence of events, did not intervene to save the victim. Participants in every condition were asked to determine whether the bystanders could have saved the victim if they had intervened in various ways. Predicated on the assumption that saving a villain is intuitively worse than saving a moral exemplar, we predict that the same counterfactual actions—for example, gesturing at the driver, or pulling the victim out of the way of the bus—would be perceived as more possible when geared to save the moral exemplar’s life (moral conduct) than the villain’s life (immoral conduct).

Figure 1. Frame of the accident video just before the victim (the man in the orange shirt) is run over by a bus.

This study, including planned design and analyses, was pre-registered at https://aspredicted.org/25xm3.pdf. Open data, scripts, and materials are available on the Open Science Framework at: https://osf.io/7qzav/.

Method

Design and participants

We used a 3 (moral character of victim: good vs. bad vs. neutral, between subjects) × 18 (specific actions that might have saved the victim’s life: 15 target actions and three control items, within subjects) design. A total of 317 participants were recruited via prolific.co (mean age = 37.5 years, SD = 13.5 years, 159 female, 158 male); all were native English speakers with a 90% approval rate. Sample size was determined by planning for 90% power to detect a small effect (w = 0.2) in a 3 × 2 chi-squared test probing the influence of victim moral character (good vs. bad vs. neutral) on the distribution of yes/no judgments about an action’s possibility (using the pwr package in R; Champely, 2020). This is an approximate but conservative estimate, since our study involved not one but 15 possibility judgments per participant. Participants were compensated with £0.50 for an estimated 4 min of their time.
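The sample size calculation described above can be sketched as follows. This is a minimal illustration in Python rather than the R script used for the study, and it assumes Cohen's convention that the noncentrality parameter equals N × w². The function names `power_chi2` and `required_n` are hypothetical helpers introduced here; the closed-form tail used below only holds for even degrees of freedom, which covers the df = 2 case of a 3 × 2 table.

```python
import math

def power_chi2(n, w, df, crit, terms=200):
    """Power of a chi-squared test with noncentrality n * w**2 (even df only).

    The noncentral chi-squared tail is written as a Poisson mixture of
    central chi-squared tails with df, df + 2, df + 4, ... degrees of freedom.
    """
    if df % 2 != 0:
        raise ValueError("this sketch only handles even degrees of freedom")
    half = crit / 2.0
    mu = n * w * w / 2.0                 # Poisson mean = noncentrality / 2

    # Central tail for `df`: exp(-half) * sum_{i < df/2} half**i / i!
    tail_term = math.exp(-half)
    tail = 0.0
    for i in range(df // 2):
        tail += tail_term
        tail_term *= half / (i + 1)

    weight = math.exp(-mu)               # Poisson weight for j = 0
    m = df // 2
    power = 0.0
    for j in range(terms):
        power += weight * tail
        weight *= mu / (j + 1)           # next Poisson weight
        tail += tail_term                # central tail for df + 2 * (j + 1)
        m += 1
        tail_term *= half / m
    return power

def required_n(w, df, target_power, crit):
    """Smallest sample size whose power reaches the target."""
    n = 2
    while power_chi2(n, w, df, crit) < target_power:
        n += 1
    return n

# For df = 2 the critical value has a closed form: -2 * ln(alpha).
CRIT_DF2 = -2.0 * math.log(0.05)
n_needed = required_n(w=0.2, df=2, target_power=0.90, crit=CRIT_DF2)
print(n_needed, round(power_chi2(n_needed, 0.2, 2, CRIT_DF2), 4))
```

Under these assumptions the search should arrive at the reported sample of 317, with power only marginally above 90%.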

Materials and procedure

The experiment was implemented in jsPsych (https://www.jspsych.org/7.3/) and hosted on cognition.run. A demo version and the source code can be accessed at https://neeleengelmann.github.io/alternative-possibilities/.

First, participants were presented with the description of either a morally good, morally bad, or a morally neutral person, alongside a (cartoon) picture of the person which would be used in a subsequent animation. The descriptions read:

Tom consistently demonstrated unwavering compassion and empathy towards others. He actively volunteered at local charities and supported marginalized communities. In his personal life, he prioritized open and honest communication, always strove to treat others with respect and fairness. Tom’s friends and family remember his integrity, his unwavering support, and his drive to stand up for what is right, even in difficult situations.

Tom’s positive outlook and resilience in the face of challenges inspired those around him. His everyday acts of compassion, whether lending a helping hand to those in need or practicing random acts of kindness, had a ripple effect on those around him, encouraging them to follow suit.

In his personal life, Tom’s interactions were marked by callousness and indifference. He consistently manipulated and exploited those around him for personal gain, showing no remorse for the suffering he inflicted on others. Whether it was deceiving people for financial advantage or manipulating their emotions for his own amusement, Tom's moral compass was deeply distorted.

Tom’s acquaintances remember his consistent pattern of dishonesty, betrayal, and complete lack of accountability. He was unapologetic about his actions and refused to take responsibility for the harm he caused, deflecting blame onto others, and manipulating situations to his advantage.

His wardrobe consisted of plain and practical clothing, lacking any sense of style or individuality. In his day-to-day routine, Tom fulfilled his obligations responsibly, but he occasionally displayed a tendency to be overly cautious and stingy with his money.

In conversations, he engaged in polite but unmemorable exchanges, rarely offering insightful or engaging remarks. Tom pursued a range of hobbies and interests, although his lack of passion or expertise in any specific area made him appear somewhat uninteresting.

As a manipulation check, we asked “How would you evaluate Tom’s moral character?” on a scale ranging from 1 (“very bad”) to 5 (“very good”). Next, we informed participants that Tom had died in a traffic accident, and that they were going to watch a short animation (with no graphic details) of the situation that led to Tom’s death. In the video (see Figure 1 for an example frame), the person who was introduced as Tom moves from the top left corner of the screen toward the bottom right, approaching a street as he does so. At the same time, two other agents (a man in a blue jacket and a woman in a business suit) move from right to left. Meanwhile, a yellow bus appears on the street, moving from left to right and colliding with Tom just as he crosses the street. At this point, the video stops. The video was created in Microsoft PowerPoint and lasted 10 seconds. Participants watched the video three times before advancing to the next phase of the study.

After watching the video, we informed participants that their subsequent task would be to evaluate whether different actions by the bus driver, the businesswoman, or the man in the blue jacket would have been possible, with each action describing a way in which Tom might have been saved. Their task was to indicate either “yes” or “no,” and to provide their assessment as quickly as possible, since we were measuring their reaction times as well. We induced soft time pressure by instructing participants that there was a maximum of 8 seconds to provide a response. After that time, a trial would be recorded as invalid. Responses were provided via keyboard, with the e and i keys designated as yes or no, respectively (key assignment was balanced between participants). The 15 target actions included statements such as: “The man in the blue jacket could have pushed Tom to the side,” “The businesswoman could have gestured at the bus driver to veer off the street,” or “The bus driver could have honked at Tom to keep him off the road.” In addition, we included three control items which we expected participants to consider impossible (e.g. “The businesswoman could have stopped the bus with her mind”). The statements were presented in random order, separated by the presentation of a fixation cross of varying duration (between 250 and 2,000 ms). The full list of actions is available in Appendix 1.

After recording participants’ possibility judgments, participants were asked the question “Should Tom have been saved?” (with the response options “yes” or “no”). This question was presented in the same format as the other reaction time trials, and was intended as a further manipulation check.

Lastly, we asked participants to express their agreement with the claims that either the man in the blue jacket, the businesswoman, the bus driver, or Tom himself were responsible for the accident on a 5-point scale ranging from “not at all” to “completely.” We also asked them for their agreement to the Principle of Alternative Possibilities:

In law and philosophy, people sometimes affirm that a person is responsible for a certain outcome only if they could have done otherwise. For example, if a person sets their alarm for 7 AM and is late to work that day, they are responsible for having been late to work because they could have set their alarm for 6 AM instead. This idea, that a person is responsible for a certain outcome if they could have done otherwise, is called the Principle of Alternative Possibilities. To what extent do you agree that the Principle of Alternative Possibilities is true?

Agreement was rated on a 5-point scale ranging from “strongly disagree” to “strongly agree.” The experiment ended with the assessment of demographic variables, an attention check in the form of a simple transitivity task,¹ and a debriefing.

Results

Manipulation checks revealed that the victim’s character was perceived as significantly different across conditions (all ps < .001 in pairwise t-tests, after Holm adjustment for multiple comparisons), yet participants’ belief that the victim should have been saved did not differ significantly between conditions (χ²(2) = 5.03, p = .081).

Participants’ victim character ratings were almost at floor for the morally bad victim (M = 0.19, SD = 0.52), high for the morally good victim (M = 3.80, SD = 0.65), and in the middle for the morally neutral victim (M = 2.59, SD = 0.63). Meanwhile, the belief that the victim should have been saved was roughly equally high across conditions (ranging from 78% for the evil victim condition, to 83% for the morally good victim, to 89% for the morally neutral victim).
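For readers unfamiliar with the Holm adjustment applied to the pairwise tests above, the procedure can be sketched as follows. The raw p-values below are hypothetical placeholders chosen for illustration, not values from the study, and `holm_adjust` is a helper name introduced here.

```python
def holm_adjust(p_values):
    """Holm step-down adjustment; returns adjusted p-values in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_max = 0.0
    for rank, idx in enumerate(order):
        # The smallest p-value is multiplied by m, the next by m - 1, and so on.
        candidate = min(1.0, (m - rank) * p_values[idx])
        # Enforce monotonicity: an adjusted value never drops below an earlier one.
        running_max = max(running_max, candidate)
        adjusted[idx] = running_max
    return adjusted

# Hypothetical raw p-values for the three pairwise comparisons.
raw = {"good vs. bad": 0.01, "good vs. neutral": 0.04, "bad vs. neutral": 0.03}
adjusted = holm_adjust(list(raw.values()))
for label, p_adj in zip(raw, adjusted):
    print(f"{label}: {p_adj:.3f}")
```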

Pre-registered analyses

Possibility judgments

As expected, agreement with the three control items (claiming that bystanders could have stopped the bus with their minds or with their bare hands) was low (between 92% and 98% “no” responses), indicating that participants took the task seriously overall. Responses to these three items are excluded from the following analyses. In addition (and as preregistered), we excluded all trials on which reaction time was below 200 ms, since we take such responses to reveal insufficient attention.
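The exclusion rules described above amount to a simple trial filter, sketched below. The record layout, field names, and example trials are hypothetical and are not taken from the study's data files. The sketch also drops trials without a response, on the assumption that trials exceeding the 8-second deadline carry no usable judgment.

```python
from dataclasses import dataclass
from typing import Optional

MIN_RT_MS = 200      # preregistered lower bound on reaction time
MAX_RT_MS = 8000     # response deadline from the procedure

@dataclass
class Trial:
    participant: str
    item: str
    is_control: bool
    response: Optional[str]    # "yes", "no", or None if the deadline passed
    rt_ms: Optional[float]

def keep_for_analysis(trial: Trial) -> bool:
    """True if the trial enters the possibility and reaction time analyses."""
    if trial.is_control:
        return False                       # control items are analyzed separately
    if trial.response is None or trial.rt_ms is None:
        return False                       # no response before the deadline
    return MIN_RT_MS <= trial.rt_ms <= MAX_RT_MS

# Hypothetical example trials.
trials = [
    Trial("p01", "push_aside", False, "yes", 2450.0),
    Trial("p01", "stop_bus_with_mind", True, "no", 1800.0),
    Trial("p01", "gesture_at_driver", False, "no", 150.0),
    Trial("p02", "honk", False, None, None),
    Trial("p02", "pull_away", False, "no", 3100.0),
]
kept = [t for t in trials if keep_for_analysis(t)]
print(len(kept), "of", len(trials), "trials retained")
```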

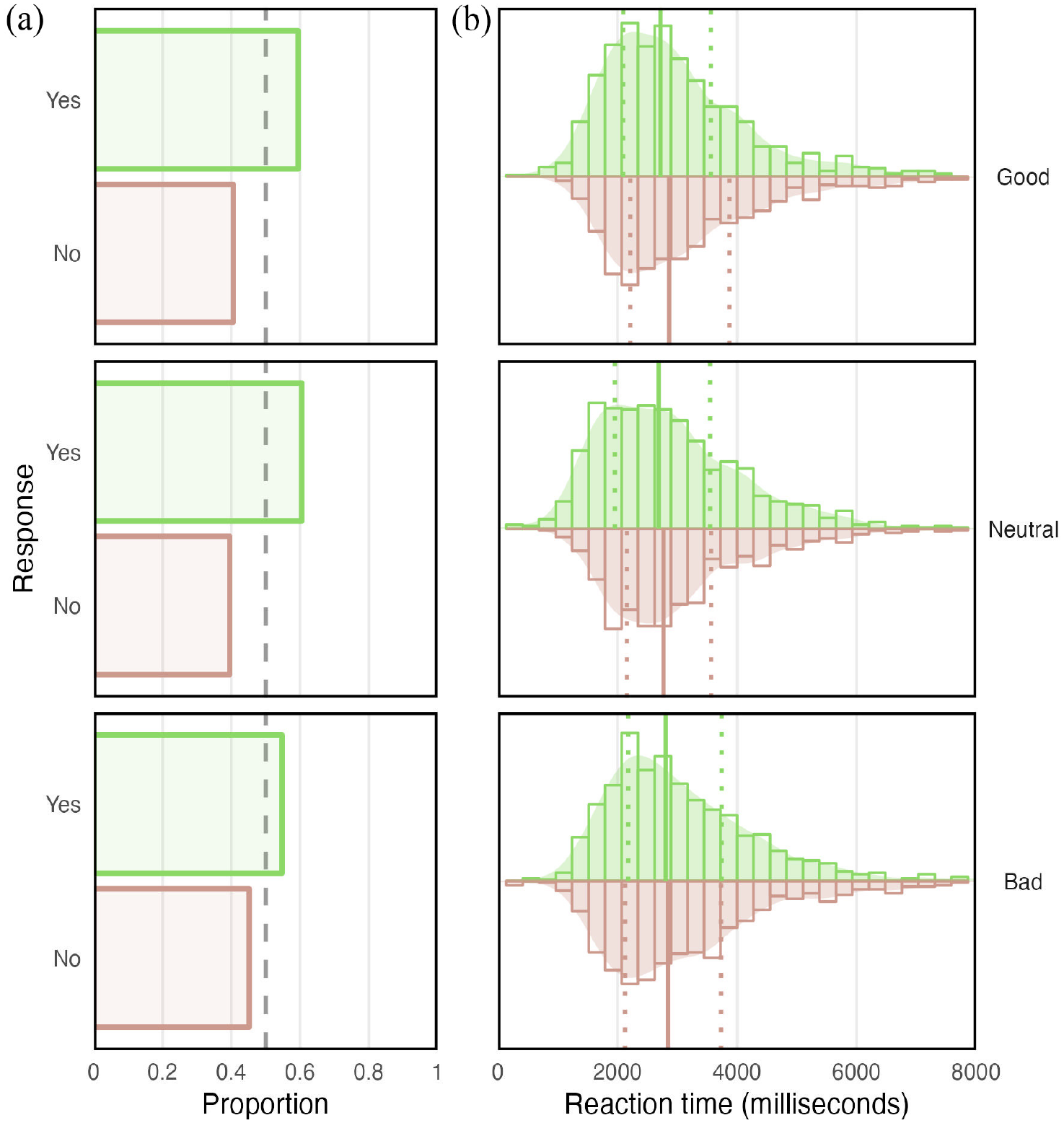

Figure 2a displays the proportions of “yes” and “no” responses across all target items per victim character condition. Participants thought that saving a morally bad victim was slightly less possible (pYes = .55) than saving a morally good victim (pYes = .59) or a morally neutral victim (pYes = .60). In line with our preregistration, we tested for an effect of victim character on possibility judgments via a likelihood ratio test, comparing a logistic regression model including only random intercepts for participant and item to a model that additionally contained a fixed effect of character. This test was not significant at the preregistered alpha level of .05 (χ²(2) = 5.92, p = .052). Nevertheless, counterfactual life-saving actions were perceived as slightly more possible when the victim was good compared to bad (OR = 1.39, z = 2.05, p = .041), or neutral compared to bad (OR = 1.43, z = 2.20, p = .028).

Figure 2. (a) Proportion of “yes” and “no” responses to the question whether certain actions that might have saved the victim would have been possible, per moral character condition. (b) Distribution of reaction times and median reaction times (vertical lines) to the question whether certain actions that might have saved the victim would have been possible, per victim character condition. Dotted vertical lines indicate interquartile range.
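As a check on the likelihood ratio test reported for possibility judgments, note that with two degrees of freedom the chi-squared tail probability has a simple closed form, exp(−χ²/2). The short sketch below applies that identity to the two reported statistics with df = 2; the function name is a hypothetical helper.

```python
import math

def chi2_sf_df2(statistic):
    """Upper-tail probability of a chi-squared variable with 2 degrees of freedom."""
    return math.exp(-statistic / 2.0)

# Likelihood ratio test for the effect of victim character on possibility judgments.
print(round(chi2_sf_df2(5.92), 3))   # 0.052
# Manipulation check on whether the victim should have been saved.
print(round(chi2_sf_df2(5.03), 3))   # 0.081
```

Both values agree with the p-values reported in the text.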

Reaction times

See Figure 2b for grouped histograms of reaction times per victim character condition and response (“yes” vs. “no”). If immoral conduct (saving a bad victim) facilitated a “no” response to possibility questions and moral conduct (saving a good victim) facilitated a “yes” response, we would expect faster “no” compared to “yes” responses in the morally bad victim condition, but faster “yes” than “no” responses in the morally good victim condition. Therefore, in line with our preregistration, we used stepwise model comparisons to test whether response (“yes” vs. “no”), victim character, and their two-way interaction predicted reaction times. Indeed, the data were best described by a model that included random intercepts of participant and of item, as well as fixed effects of response (χ²(1) = 20.94, p < .001), character (χ²(2) = 24.33, p < .001), and the response × character interaction (χ²(4) = 50.14, p < .001). Inspection of median reaction times per condition and response revealed that “yes” and “no” responses did not differ significantly in speed in the morally bad victim condition (median RT for “yes” = 2,805 ms, median RT for “no” = 2,848 ms, z = 0.64, p = .52), while “yes” responses were faster than “no” responses in the morally good victim condition (median RT for “yes” = 2,721 ms, median RT for “no” = 2,864 ms, z = 3.61, p < .001), and in the neutral victim condition (median RT for “yes” = 2,687 ms, median RT for “no” = 2,769 ms, z = 2.38, p = .017). Thus, while “yes” responses were descriptively faster than “no” responses in all conditions, the difference was diminished (and non-significant) when victims were described as morally bad. In particular, “yes” responses seem to have been slowed down in this condition (see also Figure 2b).

Exploratory analyses

Principle of alternative possibilities

Agreement with the Principle of Alternative Possibilities was moderate in all victim character conditions (Mgood = 2.78, SDgood = 0.73, Mbad = 2.86, SDbad = 0.73, Mneutral = 2.78, SDneutral = 0.77), and did not differ significantly between conditions.

Responsibility judgments

Participants’ ratings that either of the two bystanders were responsible for Tom’s accident were at floor level in all conditions (for the man: Mgood = 0.21, SDgood = 0.61, Mbad = 0.20, SDbad = 0.61, Mneutral = 0.18, SDneutral = 0.43; for the woman: Mgood = 0.32, SDgood = 0.70, Mbad = 0.17, SDbad = 0.49, Mneutral = 0.22, SDneutral = 0.56) and did not differ significantly between any of them. The driver’s responsibility was rated as somewhat higher (Mgood = 1.74, SDgood = 1.23, Mbad = 1.44, SDbad = 1.10, Mneutral = 1.78, SDneutral = 1.06), again with no significant differences between conditions. The victim himself was seen as most responsible for the accident (Mgood = 2.96, SDgood = 0.85, Mbad = 2.95, SDbad = 0.98, Mneutral = 2.73, SDneutral = 0.91), also independent of victim character.

Hierarchical drift-diffusion model

For further insight into the cognitive process underlying people’s responses in our task, we applied a drift diffusion model to the data. Drift diffusion models can extract information about components of a decision process from binary response data and reaction times (Ratcliff, 1978; Ratcliff & McKoon, 2008; Ratcliff & Smith, 2004; Ratcliff et al., 2016). They have their origins in psychophysics, where they are used to model responses to classical paradigms like the Stroop task (see MacLeod, 1991), and have since been applied to a growing range of other tasks as well (see, e.g. Cohen & Ahn, 2016). Myers et al. (2022) provide an excellent and novice-friendly introduction to the framework. Put briefly, drift diffusion models assume that a binary decision process can be represented as follows: There are two “boundaries,” an upper and a lower boundary, which represent the response options in a two-alternative forced choice task (in our experiment: “yes” vs. “no”). The decision process starts somewhere in the middle, and then evidence is accumulated over time in favor of either response option (e.g. via sensory perception or reasoning). Through this accumulation of evidence, the process gradually moves toward one of the response boundaries. Once a response boundary is reached, the decision has been made.

The values of four parameters determine the characteristics of the process in a specific task: z (starting point), v (drift rate), a (boundary separation), and t (non-decision time). The starting point, or bias, parameter z determines where the evidence accumulation process initiates, on a normalized scale from 0 to 1, where the value of 0.5 implies that the starting point of the evidence accumulation process is equidistant from the two response boundaries. The z parameter can differ from 0.5 in circumstances in which participants exhibit an initial bias or predisposition toward either response option even before the evidence accumulation process begins. The drift rate, v, represents how strongly or rapidly the evidence draws the response toward either boundary (the ease of information processing). The drift rate will be very strong for easy tasks, but weaker for harder tasks (e.g. when conflicting evidence is presented). Boundary separation, a, specifies how much evidence the reasoner requires to decide. Large values for boundary separation represent increased response caution. Finally, the t parameter represents how much time is needed for all components that are extraneous to the central decision process. This encompasses stimulus encoding and the time that is needed to execute a motor response.
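To make the roles of the four parameters concrete, the process can be simulated as a noisy random walk between two boundaries. The sketch below is a plain Euler approximation written for illustration, not the estimation routine used in our analysis. The parameter values, the step size `dt`, and the unit noise scale are assumptions chosen for the demonstration, and the function names are hypothetical.

```python
import random

def simulate_trial(v, a, z, t, rng, dt=0.005, noise_sd=1.0):
    """Simulate one decision; returns (response, reaction time in seconds)."""
    x = z * a                          # relative starting point -> absolute position
    elapsed = 0.0
    step_sd = noise_sd * dt ** 0.5     # noise scales with the square root of time
    while 0.0 < x < a:
        x += v * dt + rng.gauss(0.0, step_sd)
        elapsed += dt
    response = "yes" if x >= a else "no"
    return response, t + elapsed       # non-decision time is simply added on

def summarize(v, a, z, t, n_trials=1000, seed=1):
    """Proportion of "yes" responses and their mean reaction time."""
    rng = random.Random(seed)
    trials = [simulate_trial(v, a, z, t, rng) for _ in range(n_trials)]
    yes_rts = [rt for resp, rt in trials if resp == "yes"]
    return len(yes_rts) / n_trials, sum(yes_rts) / len(yes_rts)

# Illustrative values only: same drift, caution, and non-decision time,
# but a starting point nearer the "no" (0.3) or the "yes" (0.7) boundary.
p_low, rt_low = summarize(v=0.2, a=2.0, z=0.3, t=0.3)
p_high, rt_high = summarize(v=0.2, a=2.0, z=0.7, t=0.3)
print(f"z = 0.3: p(yes) = {p_low:.2f}, mean yes RT = {rt_low:.2f} s")
print(f"z = 0.7: p(yes) = {p_high:.2f}, mean yes RT = {rt_high:.2f} s")
```

With the starting point nearer the “no” boundary, “yes” responses should become both rarer and slower, even though the drift rate is unchanged.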

Decomposing the decision process in this way allows for insights that the simple comparison of response times and of the frequency of “yes” versus “no” responses cannot provide. For instance, longer reaction times in one experimental condition compared to another may be due to increased response caution in this condition (differences in boundary separation, a), or to increased difficulty of information processing (differences in drift rate, v). Evidence for the validity of the drift diffusion framework comes from studies in which participants have been instructed to modify their behavior in ways that should specifically affect one parameter or another, or studies in which tasks have been modified such that specific parameters should be affected. For instance, people have been instructed to selectively emphasize speed or accuracy of their responses, and it could be shown that emphasizing accuracy leads to larger boundary separation, whereas emphasizing speed leads to lower values on this parameter (Milosavljevic et al., 2010; Voss et al., 2004).

Most applications of drift diffusion models have been in psychophysics, and only a few in higher order reasoning like moral judgment (but see Cohen & Ahn, 2016; Siegel et al., 2022; Yu et al., 2021). Therefore, there are no clear benchmarks as to how the model’s parameters are affected by moral content, and we regard our analysis as exploratory. Nevertheless, some effects are more plausible than others. For one, it is possible that drift rate, v, will be affected by victim morality in our task. When faced with the question whether a morally bad victim could have been saved, participants might experience a conflict between the obligation to save and the reluctance to help someone unlikeable. They might still, by and large, arrive at the conclusion that the morally bad victim could have been saved, but evidence accumulation in favor of this response might be slowed down compared to the conditions in which there is no conflict. Such a difference in drift rate (weaker in the morally bad victim condition) would be consistent with the observation that “yes” responses were slowed down in this condition relative to the others. However, differences in other parameters might also explain it. For example, people could be biased toward the “no” response in the morally bad victim condition, a difference in the starting point parameter z. When faced with the question whether a morally bad victim could have been saved, people might feel more drawn to the “no” response initially, but then accumulate evidence toward the “yes” response with the same strength as in the other conditions. Due to the process starting closer to the “no” response in the morally bad condition, this difference would also lead to slower “yes” responses in this condition.
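The two accounts contrasted above can be compared using the standard closed-form expression for the probability that a diffusion process with constant drift ends at the upper boundary. The sketch below assumes unit noise variance and uses hypothetical parameter values; `p_upper` is a helper name introduced here. It illustrates why choice proportions alone cannot separate the accounts: a lower starting point and a weaker drift both reduce the share of “yes” responses, so the reaction time distributions are needed to tell them apart.

```python
import math

def p_upper(v, a, z, noise_sd=1.0):
    """Probability of ending at the upper ("yes") boundary.

    v: drift rate, a: boundary separation, z: relative starting point in (0, 1).
    """
    s2 = noise_sd ** 2
    if abs(v) < 1e-12:
        return z                       # without drift, only the starting point matters
    return (1 - math.exp(-2 * v * z * a / s2)) / (1 - math.exp(-2 * v * a / s2))

# Hypothetical baseline and two ways of departing from it.
baseline = p_upper(v=0.30, a=2.0, z=0.5)
bias_account = p_upper(v=0.30, a=2.0, z=0.4)    # start nearer the "no" boundary
drift_account = p_upper(v=0.15, a=2.0, z=0.5)   # slower evidence accumulation

print(f"baseline:      {baseline:.3f}")
print(f"lower z:       {bias_account:.3f}")
print(f"lower v:       {drift_account:.3f}")
```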

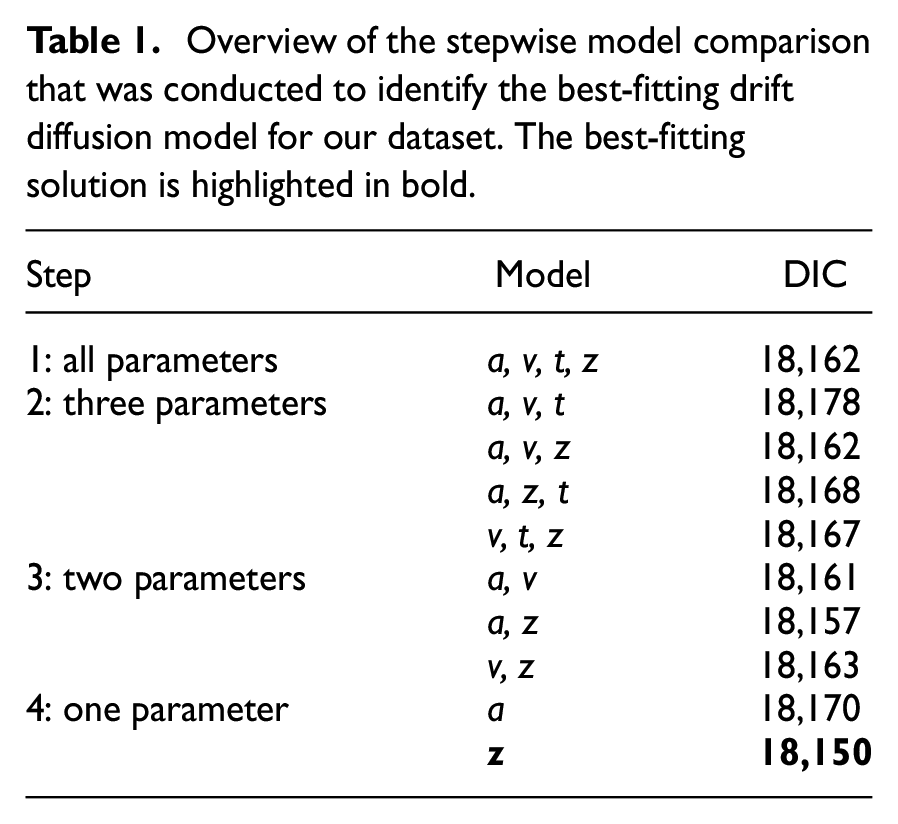

We fit a hierarchical drift-diffusion model using the HDDM package in Python (Wiecki et al., 2013). Convergence was achieved in a model with 5,000 Markov chain Monte Carlo samples, 20% burn-in and a thinning factor of 4. We first fit a model in which all parameters (drift rate v, non-decision time t, boundary separation a, and bias z) were allowed to vary by victim character condition. We then fit four further versions of the model, holding one of the four parameters fixed across conditions each time, and checked whether model fit was improved (based on the deviance information criterion, DIC, where smaller values indicate a better fit, taking into account the number of free parameters in the model). A model with three parameters varying per condition (a, v, and z) provided as good a fit as the full model. Using this model as the new baseline, we subsequently fit three new models with only two parameters varying per condition, and discovered that a model in which only a and z varied per condition achieved the best fit. Finally, holding a constant as well further improved model fit. Table 1 provides an overview of the stepwise model comparisons.
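For reference, the deviance information criterion used in these comparisons is commonly defined as follows. This is the standard textbook definition, given here as background rather than taken from our analysis scripts; y denotes the data, θ the model parameters, and overbars denote averages over posterior samples.

```latex
\begin{aligned}
D(\theta) &= -2 \log p(y \mid \theta), \\
p_D &= \overline{D(\theta)} - D(\bar{\theta}), \\
\mathrm{DIC} &= \overline{D(\theta)} + p_D .
\end{aligned}
```

The term p_D acts as an estimate of the effective number of parameters, which is how the criterion penalizes models that let more parameters vary by condition.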

Table 1. Overview of the stepwise model comparison that was conducted to identify the best-fitting drift diffusion model for our dataset. The best-fitting solution is highlighted in bold.

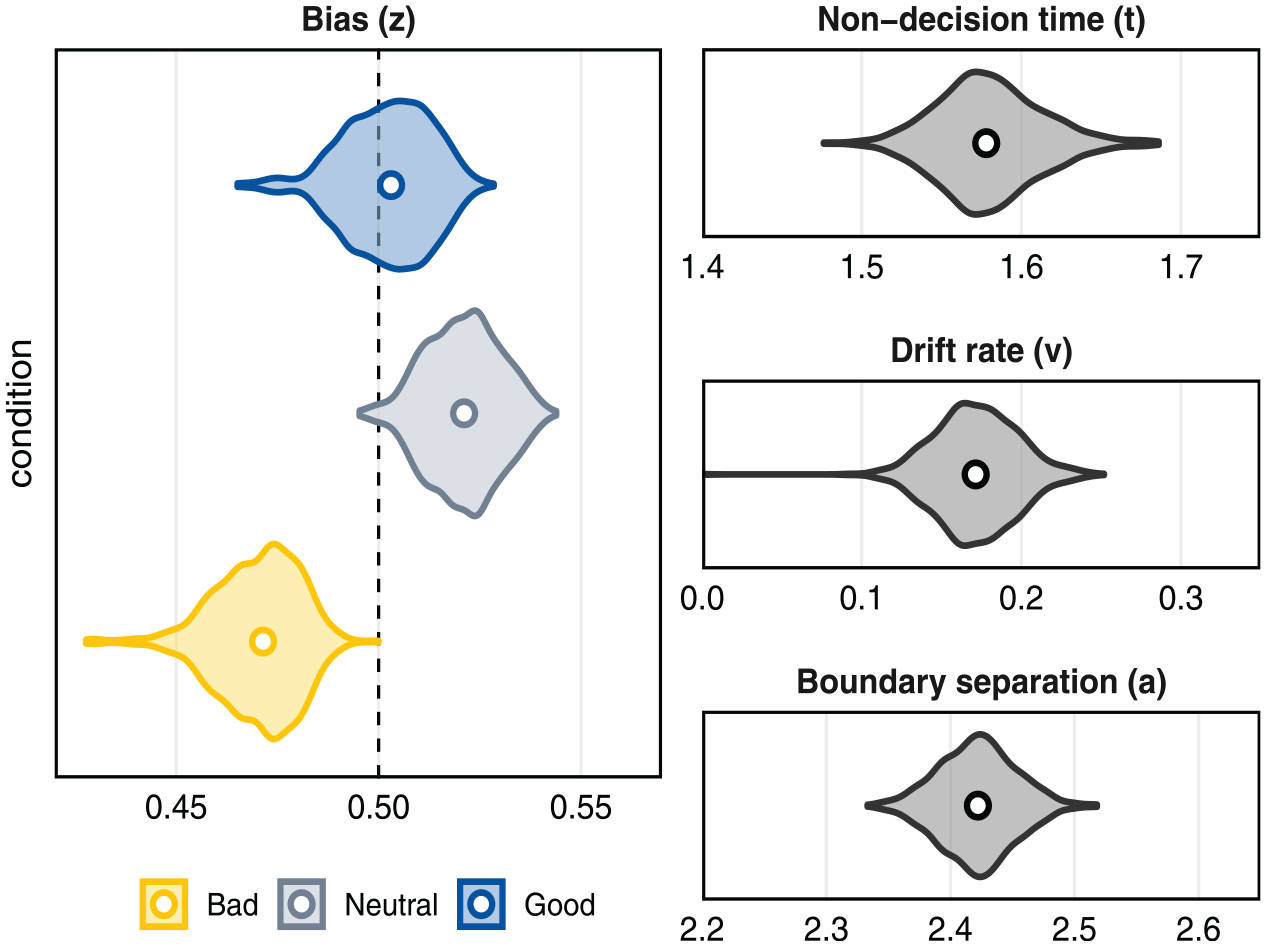

Our final, best-fitting model was thus the one in which non-decision time (t = 1.58 s, 95% HDI [1.52, 1.64]), drift rate (v = 0.17, 95% HDI [0.12, 0.22]), and boundary separation, or response caution (a = 2.42, 95% HDI [2.36, 2.48]), were unaffected by condition. Meanwhile, bias z varied across conditions, as shown in Figure 3.

Figure 3. Posterior distribution of model parameters in the best-fitting model with condition-varying bias.

Bias was lower in the bad condition (z = 0.47, 95% HDI [0.45, 0.49]) than in either the neutral (z = 0.52, 95% HDI [0.50, 0.54]) or the good (z = 0.50, 95% HDI [0.48, 0.52]) victim conditions (p(neutral > bad) > .99, p(good > bad) = .99). The good and neutral conditions did not differ as markedly (p(neutral > good) = .92). Contrary to expectation, participants were biased toward possibility for the neutral victim, and not for the morally good victim. These results therefore point toward differences in the starting point of evidence accumulation. In particular, participants appeared to be predisposed toward impossibility judgments in the bad victim condition, relative to either the neutral or good victim conditions. Appendix 2 provides exploratory analyses of the relationship between self-report measures and drift diffusion model parameters.
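Posterior comparisons such as p(neutral > bad) are typically obtained as the share of posterior draws in which one parameter exceeds the other. The sketch below illustrates this computation. It does not use the study's MCMC traces: the draws are stand-ins generated from independent normal distributions centred on the reported posterior means, with a standard deviation of 0.01 assumed from the width of the reported 95% HDIs.

```python
import random

def prob_greater(draws_a, draws_b):
    """Share of paired posterior draws in which a exceeds b."""
    pairs = list(zip(draws_a, draws_b))
    return sum(a > b for a, b in pairs) / len(pairs)

rng = random.Random(7)
N_DRAWS = 20000
ASSUMED_SD = 0.01   # assumption: HDI half-width of about 0.02, roughly 1.96 SD

# Stand-in draws centred on the reported posterior means of the bias parameter z.
z_bad = [rng.gauss(0.47, ASSUMED_SD) for _ in range(N_DRAWS)]
z_good = [rng.gauss(0.50, ASSUMED_SD) for _ in range(N_DRAWS)]
z_neutral = [rng.gauss(0.52, ASSUMED_SD) for _ in range(N_DRAWS)]

p_neutral_bad = prob_greater(z_neutral, z_bad)
p_good_bad = prob_greater(z_good, z_bad)
p_neutral_good = prob_greater(z_neutral, z_good)
print(f"p(neutral > bad)  = {p_neutral_bad:.3f}")
print(f"p(good > bad)     = {p_good_bad:.3f}")
print(f"p(neutral > good) = {p_neutral_good:.3f}")
```

Under this normal approximation, the resulting probabilities should fall close to those reported above, although the actual posteriors need not be normal or independent.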

Discussion

Does moral valence influence the construal of alternative possibilities? We here investigated this question by varying the moral character of the victim of a traffic accident, and asking participants whether bystanders could have saved the victim by performing various counterfactual actions (e.g. “could the man in the blue jacket have stopped Tom from crossing the road?”). We assumed that actions which would have saved a morally bad victim would be perceived as immoral conduct, and actions that would have saved a morally good victim would be perceived as moral conduct. Our prediction was that immoral conduct, that is, actions that would have saved a morally bad victim, would be seen as less possible than moral conduct, that is, actions that would have saved a morally good victim. Our results documented a small effect in the expected direction. Actions that would have saved a morally bad victim were perceived as slightly less possible than actions that would have saved a morally good or neutral victim (in consonance with Phillips & Cushman, 2017, but extending the scope of their results). The physical actions themselves were the same in all conditions.

More pronounced effects were observed for reaction times (than for possibility judgments). For one, all decisions were slower when victims were described as morally good or bad rather than as morally neutral.² Furthermore, “yes” responses to the question whether saving the victim would have been possible took longer when the victim was morally bad compared to when he was morally good or neutral. Through the lens of drift diffusion modeling, we may be able to better understand the source of the slowed-down responses. Slower “yes” responses to the question whether immoral conduct (saving a morally bad victim) would have been possible were mirrored in condition differences in the bias parameter, z, of the drift diffusion model. These differences indicated that the process of evidence accumulation started closer to the “no” boundary when the victim was morally bad, compared to the other conditions. A salient alternative hypothesis would have predicted a difference in drift rate v, that is, in information processing. If the drift rate had been lower in the morally bad condition, this would have indicated slower evidence accumulation when contemplating the saving of a villain’s life. This is not what we found; judging that a morally bad victim could have been saved did not take longer as a result of cognitive conflict, for example, between the concurrent representations of a target action as both physically possible and morally undesirable. Rather, immoral actions engender a bias toward impossibility, such that participants were by default, at the stimulus onset, “attracted” to the conclusion that immoral actions (but not morally neutral or good actions) are impossible, and this fact primarily accounted for delayed responses in the morally bad condition. In sum, our manipulation of victim character shifted the starting point, without affecting the ease, of evidence accumulation.

The fact that we did not observe salient differences in drift rate between moral character conditions also somewhat alleviates potential worries that our results for the morally bad victim conditions are an artifact of stimulus complexity. When we ask whether a morally bad victim could have been saved, we prompt people to represent that a bad thing (the accident) happening to someone bad is good, and that the bad thing not happening would therefore have been bad. The mere fact that this counterfactual alternative is rather complex could have explained why people take longer to indicate that it would have been possible. However, difficulties in information processing are usually assumed to be reflected in lower drift rates for the respective stimuli. This is not what we observed. Nevertheless, future studies should control for the complexity of stimuli across conditions. For instance, it would be possible to add a control condition in which the morally good stimuli are the more complex ones instead (e.g. asking whether it would have been possible that someone morally good would not have been saved in a traffic accident).

Future research should also address other limitations of our present study: First, future studies should describe actions that differ more drastically in their perceived morality. We found that people also largely believed the morally bad victim should be saved—which may have attenuated the effect of victim character on possibility judgments. Second, previous research has documented discrepancies between responses to realistic versus unrealistic stimuli (Francis et al., 2017; Kneer & Hannikainen, 2022)—which points toward the need to replicate our current findings using more immersive stimuli. Finally, the fact that preventing a morally bad victim’s death was seen as (slightly) less possible could be rooted in beliefs about karma. Perhaps bad things happening to bad people appears fated to some participants, and hence not easily preventable. This hypothesis was not explored in the present study. However, the fact that participants rated the victim to be equally responsible for his own accident in all conditions seems to speak against this explanation for this particular scenario.

To conclude, in this study, we found limited, yet perhaps promising, evidence that moral valence influences the construal of alternative possibilities. Morally valued counterfactuals were seen as slightly more possible than morally devalued counterfactuals, although the difference was small. More evidently, acknowledging devalued alternative possibilities took longer than acknowledging valued alternative possibilities. Drift diffusion modeling indicated that this effect was primarily explained by a pre-decisional tendency to consider devalued counterfactuals impossible (see also Phillips & Cushman, 2017), and not by the manifestation of cognitive conflict between a behavior’s morality and its modality.

Appendix 1

The man in the blue jacket could have pushed Tom to the side.

The man in the blue jacket could have pulled Tom out of the way of the bus.

The man in the blue jacket could have gestured at the bus driver to veer off the street.

The man in the blue jacket could have yelled at the driver to brake immediately.

The man in the blue jacket could have yelled at Tom to get out of the way of the bus.

The business woman could have pushed Tom out of the way of the bus.

The business woman could have dragged Tom out of the way of the bus.

The business woman could have gestured at the bus driver to veer off the street.

The business woman could have stopped Tom from crossing the road.

The business woman could have signaled at Tom not to cross the road.

The bus driver could have swerved off the road to avoid a collision.

The bus driver could have hit the brakes in time to avoid a collision.

The bus driver could have honked at Tom to keep him off the road.

The bus driver could have yelled at Tom out the window to stay back.

The bus driver could have flashed his lights to catch Tom’s attention.

The bus driver could have brought Tom to a halt with his mind. (control item)

The man in the blue jacket could have stopped the bus with his bare hands. (control item)

The business woman could have stopped the bus with her mind. (control item)

Appendix 2

To enrich our interpretation of the drift diffusion parameters, we examined the pattern of correlations between subjects’ posterior estimates for each parameter and their self-reports at the end of the experiment of the victim’s moral character, bystanders’ responsibility, and attitudes toward saving the victim’s life. Individual differences in non-decision time and boundary separation revealed no linear relationship to responses to these post-test questions. Meanwhile, bias was positively associated with evaluations of the victim’s moral character, r = .64, BF10 = 7 × 10³². In other words, participants who evaluated the victim’s character positively were more likely to exhibit bias toward a possibility judgment than participants who evaluated the victim’s character negatively. By contrast, individual differences in the bias parameter were unrelated to beliefs in bystanders’ responsibility, BF10 = 0.68, or the belief that the victim should have been saved, BF10 = 2.78. Drift rates exhibited the reverse pattern: Specifically, the rate at which participants accumulated evidence toward a possibility judgment correlated positively with beliefs in bystanders’ responsibility, r = .44, BF10 = 3 × 10¹⁰, and with the belief that the victim should have been saved, r = .27, BF10 = 9 × 10³, but not with evaluations of the victim’s moral character, BF10 = 0.29. In other words, faster evidence accumulation toward the affirmative response was associated with the overt belief that the agents were responsible, and with the conclusion that the victim should have been saved.
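For readers who wish to run a comparable check on their own data, the correlational step can be sketched as follows. This is a minimal illustration of a Pearson correlation between per-subject parameter estimates and self-report ratings, not the analysis code used in this study. The function name `pearson_r` and the example values are hypothetical, the numbers are made up rather than taken from our data, and the sketch does not compute Bayes factors.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation between two equally long numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squares around the means.
    cross = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cross / math.sqrt(ss_x * ss_y)


if __name__ == "__main__":
    # Made-up illustration values, NOT data from the study:
    # per-subject bias estimates (z) and character ratings.
    bias_estimates = [0.42, 0.47, 0.51, 0.55, 0.58]
    character_ratings = [2, 3, 3, 5, 6]
    print(round(pearson_r(bias_estimates, character_ratings), 2))
```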

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded by a grant from the Spanish Ministry of Science and Innovation (PID2020.119791RA.I00). Neele Engelmann’s work on this article was additionally supported by Deutsche Forschungsgemeinschaft (DFG) Grant Number: 434400506.