Introduction

As deputy editors for Paramedicine journal as well as peer-reviewers for numerous other journals, we have the privilege of reading and providing constructive feedback to our research colleagues to improve the rigour and contribution of their research and clarify its message. Whilst we are frequently impressed by some of the strong work submitted, we have seen many research submissions to these journals where design flaws present from the inception of the project mean the findings are not meaningful and do not (or cannot) contribute to the broader body of knowledge. Often it is clear that an enormous amount of time and effort has gone into the work, only to produce something that is not publishable. There is much that can be discussed about taking the time to thoroughly investigate and identify the most appropriate methodological approaches for a study. However, in this editorial, we want to limit this examination to the significance of outcome measures, and in particular, the need to ensure the correct outcome measures and tools are used at the correct time.

Outcome measures are the foundation upon which medical research builds its conclusions. They are critical for assessing the efficacy of interventions and informing clinical practice. 1 Using consistent or standardized outcome measures and tools to derive these measures supports the validity, reliability, comparability, and utility of study findings and allows for data from different studies to be aggregated and compared, enhancing the statistical power and providing a clearer picture of what works and what doesn't in clinical practice. 2 This ability to aggregate study findings is essential for conducting meta-analyses and systematic reviews, which provide a higher level of evidence by combining data from multiple sources. 2 They also reduce the risk of bias and improve the reproducibility of research findings, which are essential for advancing knowledge and improving patient care.1,2 Using consistent and rigorous outcome measures and tools improves the generalizability of findings and when translating the research into practice, provides a stronger basis for clinical recommendations and guidelines. 2 However, as research progresses the tools to derive the outcome measures we seek are tested, validated and updated. As researchers, we need to stay abreast of these advances to ensure our research can support its claims and stand up to future scrutiny.

In this editorial, we attempt to demonstrate the importance of taking the time to consider the study outcome measures and tools used. We do this by discussing our experience of encountering a lack of consistency in the tools used to derive an outcome measure, and by considering when attempts to remain consistent with previous research should be questioned. We then use the Utstein framework, widely implemented in cardiac arrest research, as an exemplar of how consistency can be achieved and result in advances in cardiac arrest research. Finally, we briefly discuss patient-centred outcome measures (PCOMs) before suggesting a way forward for researchers to leverage these insights when developing their study protocols and their submissions to this journal.

To be, or not to be……(consistent)

In 2023, I (KE) led a team that conducted a large systematic review of emergency department distraction tools for managing pain and distress in children to see which tools may be suitable for the prehospital setting. 3 The quality appraisal identified a range of methodological flaws across the 29 included studies which compromised the strength of the resulting evidence (for example, many studies did not stratify the children according to age, so in one study, 5-year-old children were compared with 12-year-old children). 4 However, one of the surprising findings was that across the studies (including randomised and non-randomised controlled trials), 12 different pain measurement tools and 15 different distress measurement tools were used. 3 Many of these were validated tools, so when constructing the study protocol, the researchers may have felt confident that their approach was sound. However, had they conducted a more thorough surveillance of the existing literature, they may have identified that one tool was more widely used in the literature and was suitable for their study. To be clear, we are not suggesting there is one supreme tool, nor are we suggesting that the most widely used tool is always the most appropriate tool. We are merely suggesting that for many of the studies that used less common (yet potentially validated) tools, there was a more prevalent, rigorous and validated tool that would have suited their study, study setting and participants. Instead, the heterogeneity in measurement tools used across the studies contributed, in part, to our inability to pool the findings and conduct a meta-analysis. 3 As mentioned, there were other factors that limited our ability to compare the studies, but the pain scoring tool did not necessarily need to be one of them. There are many different pain scoring tools that have been validated for particular age groups, and some variation can be expected. However, it was evident that in some studies very little consideration had been given to the selection of the pain assessment tool used to derive the pain outcome measure, with some indicating a lack of understanding of the tools by documenting they used one tool, when in fact they used another. 5 For readers, methodological problems such as this in the selection of tools used to derive outcome measures may immediately undermine confidence in the quality of the research, and lead other researchers in the field to question the findings (i.e., the internal validity) and whether these findings apply to real-world situations (i.e., the external validity).

A time does come, however, when simply using tools commonly used in the existing literature could impact the quality and relevance of the findings. To illustrate this, let's consider the investigation of mental health issues in paramedicine, which is currently a popular and important field of research. When investigating post-traumatic stress disorder (PTSD) in paramedics, care should be taken when selecting appropriate tools. If one was designing a study that sought to measure the prevalence of PTSD in paramedics, a comprehensive review of the literature would identify a paper by Baqai (2020) that reviews the evolution of PTSD diagnostic criteria and how this specifically relates to paramedics. 6 This paper provides valuable insight into how the Diagnostic and Statistical Manual of Mental Disorders (DSM) diagnosis of PTSD has evolved, contrasts this with the International Classification of Diseases (ICD-11) definition, and examines how this impacts paramedic diagnosis. Despite the DSM-5 being published in 2013, Baqai identifies that studies published up to 2018 about PTSD in paramedics were still using the previous DSM-IV. 6 These insights can inform researchers when deciding on PTSD questionnaires for paramedics. 6 In this instance, to simply adopt a questionnaire commonly used in the literature (to allow for the pooling of data for systematic reviews and meta-analyses) may not be the right decision if a newer version more sensitive to the chronic cumulative trauma paramedics experience is available. This is an example of where a considered decision to produce research findings that cannot be pooled with existing paramedic research may be useful.

By spending the time to establish a strong understanding of the outcome measures and tools to derive them, researchers can position their studies to produce the most impactful findings that can set and justify the standard for future work and enhance the advancement of knowledge in paramedicine.

An example of consistent outcome measures and tools

A core outcome set is an agreed-upon collection of standardised outcomes for an area of health. 2 Cardiac arrest is one of the most highly researched fields; however, before the Utstein template, studies on cardiac arrest often used different definitions, endpoints, and data collection methods, thereby impeding meaningful comparisons and hindering the development of effective resuscitation practices. 7 The Utstein template is a form of core outcome set first introduced in 1991, having been developed by an international group of experts to address the variability in how cardiac arrest data were reported.7–9 Since its development, it has gone beyond recommending the consistent use of particular outcome measures, to producing a standardized reporting guideline for cardiac arrest research.7–9 It has now been widely adopted as a reporting standard for cardiac arrest research and serves as a prime example of how standardized outcome measures (amongst other things) can inform practice and enhance the quality of research.

By providing a common language and structure for reporting, the Utstein template has facilitated more accurate and meaningful comparisons of study outcomes.8,9 When used consistently, researchers can now identify trends, gaps, and areas needing further investigation more easily because data from many studies can be pooled, increasing their reliability and impact. The Utstein template has also fostered international collaboration in cardiac arrest research, with researchers from different countries contributing data to large, multinational studies that enhance the generalizability of findings and promote global improvements in cardiac arrest care.

The emphasis on consistent and standardized outcomes in cardiac arrest research has allowed for comprehensive systematic reviews that include meta-analyses and, as a result, have identified key predictors of survival and effective interventions. 2 This aggregated evidence has allowed for treatment recommendations to be developed which have in turn been instrumental in shaping global resuscitation guidelines, such as those recommending high-quality chest compressions and early defibrillation as critical components of effective cardiac arrest management. 2

The value of outcome measures

Whilst consistency in outcome measures and standardisation contribute enormously to producing meaningful research, they do not guarantee the rigour of the research, or an ability to completely understand a field of research. A 2019 study investigating international variation in out-of-hospital cardiac arrest survival between emergency medical services (EMS) found the outcome measures collected in the Utstein template only accounted for half of the variation. 10 The study speculated that the other half could be from outcome measures not collected in the Utstein template, such as the existence of community first responder programs. 10 Another aspect is the potential for incorrect application of Utstein definitions and incomplete data that can interfere with the interpretation of the outcome measures. 10

Another risk in the use of any outcome measures is incorrectly valuing their impact or misinterpreting their message. For example, the Utstein variables survival to hospital, 30 days, or hospital discharge may not be suitable outcome measures to identify the efficacy of a basic life support (BLS) course for a cohort of laypersons in a study of people up to 5 years after their course. There are far too many events and situational factors that occur between the course and a cardiac arrest to be able to demonstrate any level of causation between the course and the patient outcomes. Even if participants walked out the door of the BLS course to find someone in cardiac arrest, many other variables and confounders interact to contribute to the patient outcomes. How long has the patient been in cardiac arrest? Do they have any comorbidities? Is there a defibrillator? Did the EMS arrive promptly? What sort of medical system supports the community? And the questions go on. This is not to say that a ‘survival to hospital’ outcome measure cannot be included in a suite of outcome measures. In this instance, however, it would be misleading to say that a patient survived to hospital discharge because of the BLS course, even though their survival to hospital is more closely linked to it.

Patient-Centred outcome measures

Patient-centred care has been identified as a core element of health equity. Although a wide range of PCOMs (including clear methodologies) have been published in areas including diabetes, stroke, older adult health, pregnancy, and addiction, to name just a few, we have previously identified that meaningful engagement with the concept of PCOMs from the outset of a study's design remains elusive in our discipline. 11

Note that PCOMs are not a replacement for robust outcome measures of clinical effectiveness or efficacy, but rather are used in addition to such measures. Nor are they equivalent to satisfaction ratings, 12 although they can include ‘patient-reported’ outcomes. Just as quantitative or qualitative approaches to collecting research data can only tell us so much in isolation, a combination of data helps to provide us with a richer picture. Given the individual complexity associated with every patient presentation, it is time we acknowledge this in our approaches to measuring our success. Of note, none of the PCOMs we found published by the International Consortium for Health Outcomes Measurement (ICHOM) focused on out-of-hospital care or included the perspectives of paramedics as members of working groups. 13 Perhaps it is time we developed some PCOMs for our field in the absence of appropriate measures.

How can we increase quality in the use of outcome measures in paramedicine research?

When developing study protocols, deciding upon the outcome measures for the study may initially seem simple. However, for research to be impactful and contribute to the field, it is important that the outcome measures and the tools used to derive them are suitable, correctly used, and address the study's aims. If this is achieved, the study findings will be meaningful, generalisable and, therefore, of high quality.

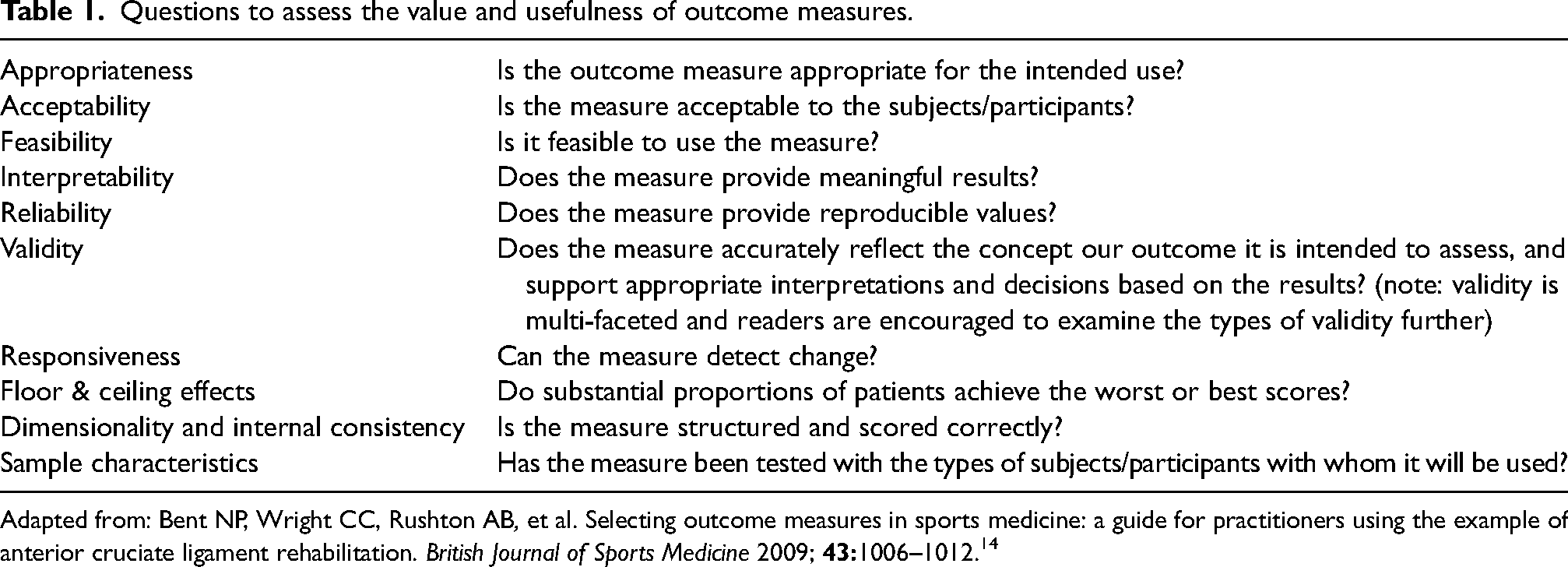

First, we should conduct a sound review of relevant studies within the same field of research, not necessarily limited to those in paramedicine alone. This will allow for the identification of outcome measures commonly used in similar work. Then, a review of the literature around the outcome measures and the tools used to derive them will provide information about their strengths and limitations, as seen in the example above about investigating PTSD in paramedics. This will clarify definitions for the outcome measures and associated tools and will ensure their rigour. A series of questions across a range of domains has been proposed in a study that, interestingly, is directed at practitioners assessing the readiness of a sportsperson to return to competition following injury (Table 1). 14 Despite this different context, these questions are relevant to developing research studies and demonstrate that the correct outcome measures can produce findings that translate into practice. We recommend that once researchers have identified the most appropriate outcome measures and tools to derive them, they consider the following questions to ensure their choice is robust and can stand up to scrutiny. These questions will also assist researchers to ensure they have not compromised the existing characteristics of a measurement tool (such as the validity, reliability and sensitivity) by adapting it to their study.

Table 1. Questions to assess the value and usefulness of outcome measures.

Adapted from: Bent NP, Wright CC, Rushton AB, et al. Selecting outcome measures in sports medicine: a guide for practitioners using the example of anterior cruciate ligament rehabilitation. British Journal of Sports Medicine 2009;

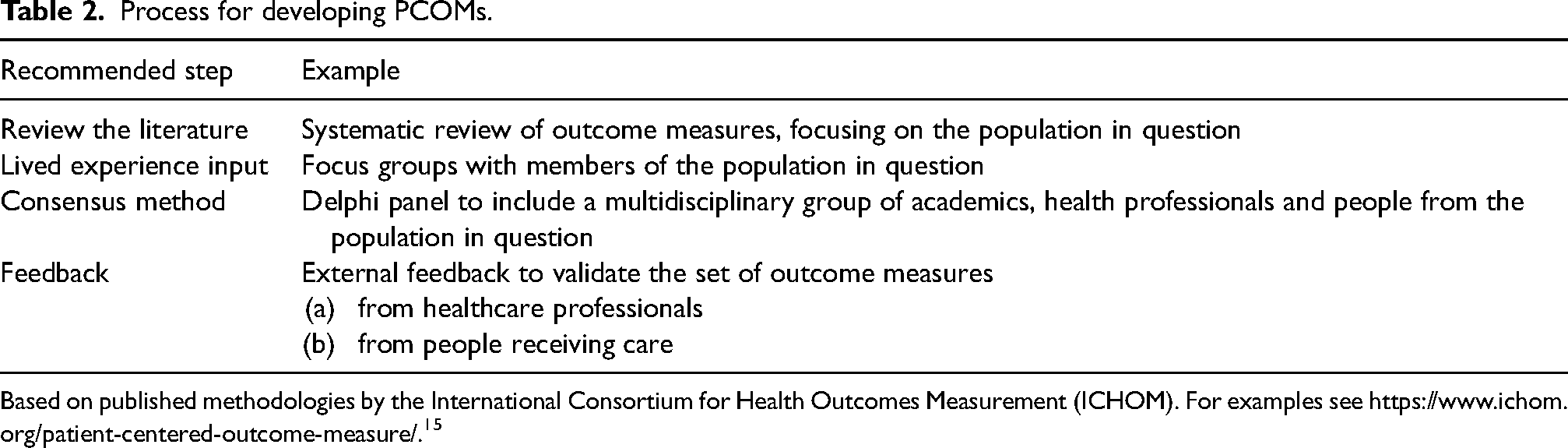

Developing PCOMs also requires much consideration and preparation if the outcomes are to be meaningful. In Table 2, we have listed the recommended steps.

Table 2. Process for developing PCOMs.

Based on published methodologies by the International Consortium for Health Outcomes Measurement (ICHOM). For examples see https://www.ichom.org/patient-centered-outcome-measure/. 15

It is important to note that there is a wealth of research available about developing research tools to derive outcome measures that is not covered in this editorial but should be considered by anyone developing a study protocol, or indeed reviewing ethics applications or manuscripts for publication.

Finally, we know the importance of being cognisant of what we do not know and what the limitations of our individual knowledge and expertise are. We recommend collaborating with researchers or clinicians who have confirmed expertise in a given instrument or measurement approach, thus increasing the strength of the research team and therefore of the research itself. At Paramedicine, we see many submissions that at face value have great potential, but that on closer examination use outcome measures that are not appropriate, appear flawed in their construction, and at times, seem poorly understood. A lack of suitable consideration of the outcome measures can completely undermine a study, diminishing its contribution to knowledge and potentially rendering the work unpublishable. Conversely, identifying the most appropriate outcome measures and tools to derive them can position papers to be highly cited by future publications. Justifying the decision to use particular outcome measures in the method sections of manuscripts demonstrates a rigorous and defensible approach to the study development, and increases confidence during the peer-review and editorial processes.

Using the most appropriate and trustworthy outcome measures is essential for advancing paramedicine research and improving patient care in the out-of-hospital setting. Where possible, by attempting to be consistent in the use of suitable outcome measures, we can overcome the inevitable challenges of variability and drive meaningful improvements in patient outcomes. As the field of paramedicine research continues to evolve, embracing consistent and meaningful outcome measures will be key to achieving robust, reliable, and impactful research findings.

Footnotes

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: All three authors are Deputy Editors of Paramedicine journal.
