Abstract

Introduction

Competency assessments across medical education

A substantial body of published literature addresses competency assessment in medical education, covering topics such as: achieving authenticity in assessment tasks and activities,1–4 addressing validity and reliability of assessment methods,5–9 assessment of critical thinking and clinical reasoning underpinning professional judgment,10–12 the use of critical reflection to assess competence,10,13–15 competence and work readiness of graduates,16,17 and the use of digital technology in assessment. 18 However, there is a lack of agreement on the essential attitudes and values needed for safe medical practice, as well as a lack of consensus on the definition of competence. 19 This lack of clarity has led to confusion and differing perspectives about assessment practices. Some suggest that the confusion arises from differing philosophical positions and over-definition of competence.20,21

Three dominant perspectives underpin competency assessment: i) task-based, ii) personal attributes and iii) integrated. The task-based perspective focuses on a set of skills required to complete an individual task. Here the task becomes the focus of determining competency, with the assessment based on the interpretation of the performance. The weakness of this approach is that it is reductionist, as the psychomotor skill becomes the main assessment focus.22,23 Characteristics such as personal attributes, teamwork, knowledge, critical thinking and critical decision-making are largely overlooked. Many consider the task-based perspective of competence conservative, as it ignores the role of professional judgement used in intelligent performance and does not consider the holistic nature of practice.22–24

The personal attributes perspective focuses on personal characteristics, demeanour and behaviour. This approach to competency assessment typically acknowledges a combination of knowledge, communication skills, clinical decision-making, critical thinking and humanist skills that are required in holistic care of the patient, and relies on the existence of a generic set of behavioural competency standards as a measurement for assessment. 25 Here the underlying assumption is that a person has these attributes and will be able to apply them to a range of tasks in a variety of practice contexts. 13 The main concern with this approach is that generic competencies that can account for the individual nature of human activity, or that can be applied across all disciplines in competency assessment, do not exist. 25 This position is supported by others who suggest that high levels of professional expertise (competence) are domain specific.26,27 McMullan et al. 28 concur, drawing attention to the importance of context. They acknowledge the different ways of practising and raise questions about tools used to objectively measure personal attributes.

The integrated approach assesses a combination of skills, personal attributes and other capabilities such as critical thinking and problem-solving.25,29 Integrative competency assessment approaches incorporate general attributes and tasks (specific to a work practice) and address knowledge, abilities and attitudes. 13 The integrative perspective is considered a more comprehensive evaluation of performance 20 and underpins many professional standards used for assessing competence. 13 The integrated approach defines competence in a holistic manner that acknowledges the richness of professional practice. This approach to competency assessment incorporates professional judgement, with competence demonstrated by ‘complex structuring of attributes needed for intelligent performance in specific situations’. 25 (p2) The competency movement in medical education argues that an integrated approach to competence is needed.

Overall, the challenges and opportunities associated with competency assessment in medical education highlight the need for a more integrated and holistic approach that considers the complex nature of professional practice. This article responds to this need by exploring competency assessment in paramedicine practice in Australia and New Zealand.

The inquiry: How assessors determine competence of paramedicine students

In 2011, competency standards were introduced in Australia and New Zealand to define the paramedic scope of practice and provide direction regarding practice expectations to ensure that paramedics provide safe and effective health care. 30 With the move to regulation, a national consultation process resulted in revised professional capabilities effective from June 2021. 31 The new capabilities drew on the Professional Competency Standards – Paramedics Version 2.2 2013 31 and the Paramedicine Australasia 2011 Australasian Competency Standards for Paramedics 30 to ‘identify the knowledge, skills and professional attributes needed for safe and competent practice of paramedicine in Australia’. 32 (p11) These and the Australian Health Practitioner Regulation Agency (AHPRA) and National Boards Code of Conduct have implications for the preparation of paramedics, curricular development and assessment of students.30–32

With the professional growth of paramedicine, differences in education and scope of practice have emerged over time. This is reflected in service models and roles ranging from volunteer first-aid workers to those with higher qualifications registered with AHPRA. 19 Whether or not paramedics hold a degree, competence, defined as ‘the combination of skills, knowledge, attitudes, values, and abilities that underpin effective and/or superior performance in a profession’, 33 (p166) is required, with a need to demonstrate the same professional standards.1,34 For assessors, the introduction of the new standards calls for an in-depth understanding of the expectations for safe practice. Regardless of their background, those assessing students need to understand competency requirements, and how standards written for registered paramedics translate to levels of student practice and expectations of novices.

With the evolution of paramedic education from vocational training to higher education in universities, the absence of a standardised paramedic university curriculum has resulted in little congruity in assessment, including expectations regarding student competency assessment. 35 The application of differing standards in assessment processes in undergraduate paramedic programmes has raised questions regarding the competence of graduates.36,37 This issue has reignited interest in how competency assessment is undertaken in ambulance services and universities and in the reliability and validity of assessment outcomes. The moderation of assessment procedures and outcomes instigated by regulatory bodies 38 has put in place a framework to facilitate reliable assessment outcomes, and the literature provides insight into the type of assessments used to assess the competence of undergraduate student paramedics. However, how assessors in academia and industry manage the complexity of the assessment processes and construct professional judgements about competence is not clear.13,19

Against this background, the purpose of this enquiry was to discover how paramedic assessors in academia and ambulance services determine the professional competence of undergraduate paramedic students in Australia and New Zealand. The specific objectives were to investigate how:

final year undergraduate student competence is assessed;

professional competencies are used in the assessment of final year undergraduate students;

assessors make decisions about final year undergraduate student competency.

While assessment of competencies is scaffolded across education programmes using methods such as the Objective Structured Clinical Examination (OSCE), these assessments are often focused on tasks, not undertaken in the context of on-road practice, and reflective of the development of students as they progress through their programme of study. Determining the competence of final year students was chosen because it is at this point that they need to practise holistically, demonstrate their ability to meet the needs of real-world practice and provide evidence that they meet AHPRA practice requirements.

Typical of Grounded Theory, the initial research question guiding our enquiry was ‘What is happening regarding competency assessment for entry to practice of final-year paramedical students?’ While participants from universities were more informed about competency assessment, a wide variety of assessment formats were used. This was confusing for on-road assessors. Further, it became apparent that there was no selection process or formal education and briefing for on-road assessors, who confessed to having little or no knowledge of professional competencies or the practice expectations of students. In light of this discovery and in keeping with the methodology, the question was refocused to ‘How do paramedic assessors determine student competency to practice for final-year paramedical students?’

Methodology, methods and analysis

Glaserian Grounded Theory (GGT) was the chosen methodology for this enquiry as it describes an experience or problem and provides a means of understanding what is happening in the area of interest, generating an interpretive substantive theory as an outcome.33,34 The area of undergraduate paramedic competency assessment is not well understood and there is limited literature available to explain it. GGT methodology is used where there is limited knowledge or research and is therefore suited to this enquiry.39,40 Ontologically, the Glaserian approach reflects that of critical realism, which defines reality as existing independently of the knowledge and beliefs of the researcher and assumes that reality is waiting to be discovered by an unbiased investigator. 41 This is different to other Grounded Theory approaches42,43 that are based on relativism, assuming that reality is related to the knowledge and beliefs of the researcher. Methodologically, these issues have implications for the way in which the research is conducted so as to avoid forcing data. 44 The Glaserian approach allows the researcher to immerse themselves in the data and develop a model that explains “what is happening” in the area of interest. Regardless of approach, the aim of Grounded Theory is to explore the social interactions45,46 of a problem or issue, and it is philosophically underpinned by symbolic interactionism. 47 This is concerned with social meanings and their constructs and involves discovering patterns within data to explain how the participants resolve the problem or issues at the centre of the research. This presents a core category or Basic Social Process (BSP) which explains the most variation in the data. 44

GGT prescribes that the researcher must enter the field of study with an open mind.39,48 Although it can be argued that there is no such thing as total naivety, as the researcher must have some idea of the area of study, it is recommended that a literature review is not conducted prior to data collection and that engagement with the literature is limited to affirming the need for the enquiry. 44 As literature is considered a source of data, it is methodologically appropriate that in this study it has been woven into the substantive theory and is reported here in conjunction with the Paramedic Assessment Process. 19

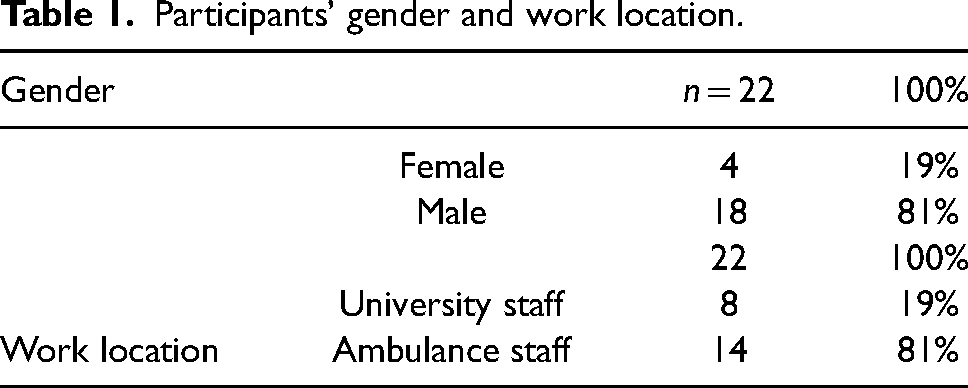

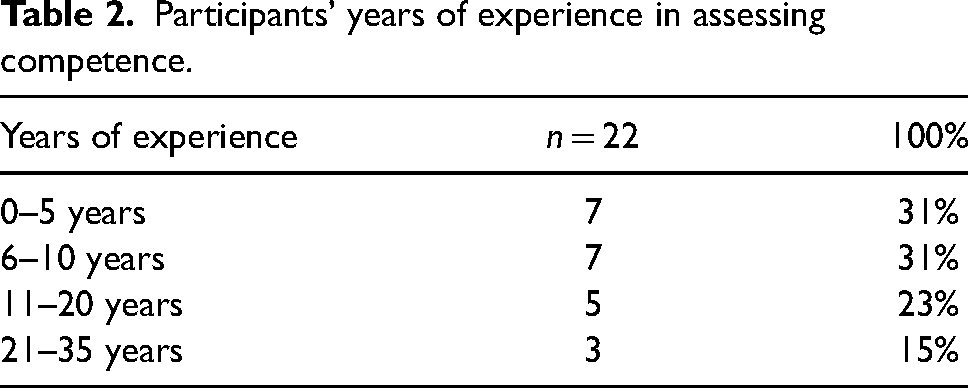

Our sample included lecturers and sessional staff at universities, and on-road paramedics involved in the summative competency assessment of final-year paramedic students in New Zealand and Australia. For the purposes of this enquiry, assessors are defined as individuals who assess undergraduate students in universities and ambulance services in Australia and New Zealand. 19 We conducted recruitment through professional networks and utilised the process of snowballing. In total, 22 participants volunteered to participate in the study: eight (8) were university staff and fourteen (14) were on-road paramedics. Table 1 provides a summary of participant demographics. Table 2 details the experience that participants had in assessing paramedic students.

Table 1. Participants’ gender and work location.

Table 2. Participants’ years of experience in assessing competence.

Data collection

We obtained ethical approval for this research involving human subjects from the University of the Sunshine Coast Human Research Ethics Committee (October 2018, A181158) and the Health and Disability Ethics Committee of New Zealand (HDEC). The processes employed to conduct and report the enquiry are consistent with the International Committee of Medical Journal Editors requirements.

The fundamental methodological elements guiding the research process were theoretical sampling, Constant Comparative Analysis (CCA), theoretical sensitivity, the use of the literature as data, and memo writing.13,44,47 To prevent forcing data, we held semi-structured interviews. These were conducted by a member of the research team who had extensive education and on-road experience and was undertaking PhD study. All interviews were undertaken outside of the work environment. Face-to-face individual and focus group interviews were offered in various locations in Australia and New Zealand. Participation was voluntary and the type of interview was determined by the participants’ availability and preference. Two participants chose to have individual interviews. The remaining 20 participants took part in a total of five focus groups comprising two to five people in each session. Participants were asked to describe how they assessed the competence of final-year paramedical students and issues they thought were of importance. Interviews were up to 60 min in length, audio-recorded, and transcribed verbatim. In keeping with GGT, we accessed a wide variety of other data sources, including literature, professional documents, and grey data such as assessment and course materials, policy and procedures. The literature and data sources explored were determined by the questions and hypotheses arising from interviews and other data as these were analysed.

Data analysis

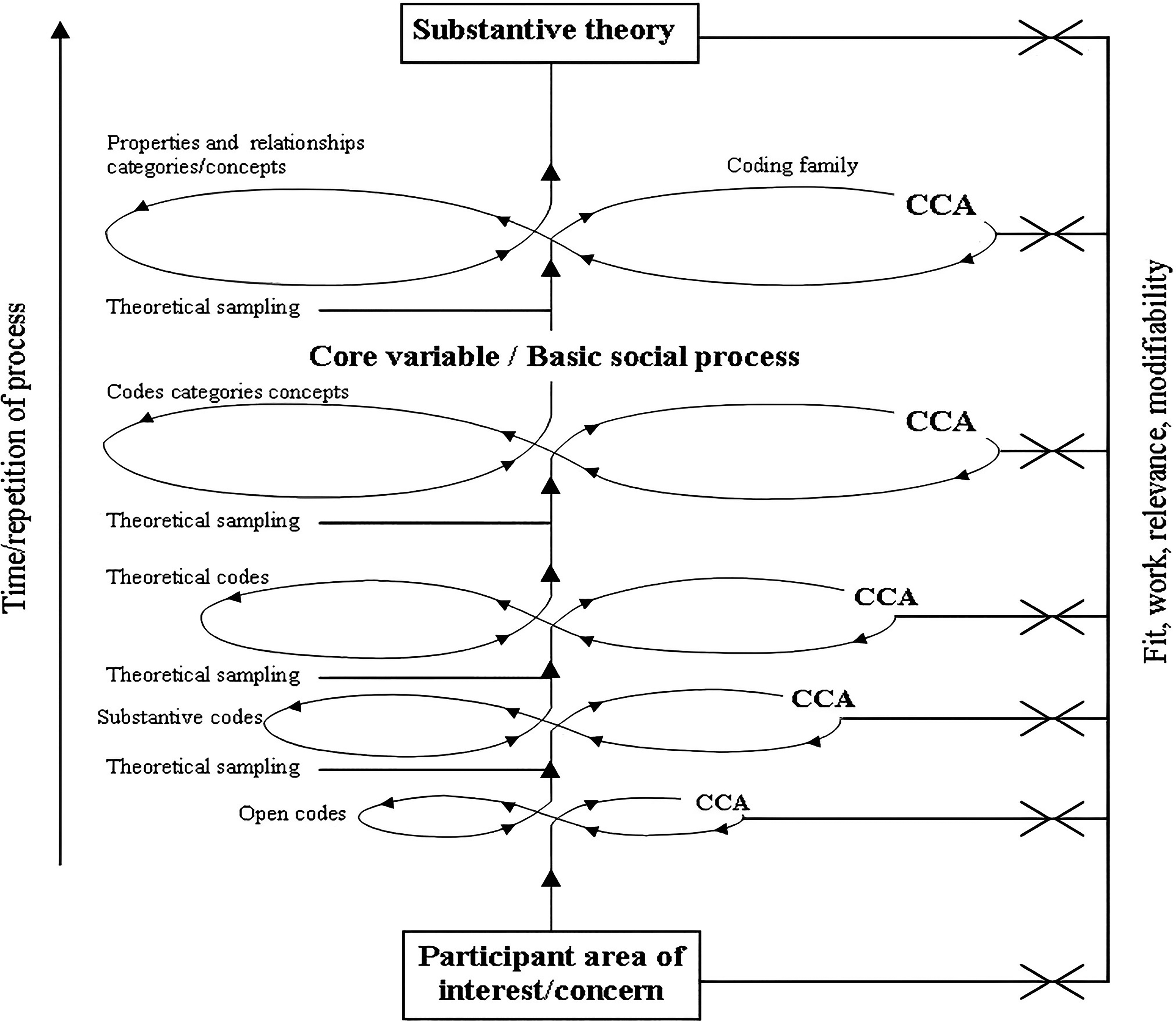

Our data analysis was based on the original work of Glaser (1978), 1 in which CCA was central. CCA was conducted manually and applied to all data collected, at all levels of GGT, resulting in the generation of theory.39,40,44 This required us to engage in a systematic process where data were compared to determine similarities and differences until saturation was achieved and the BSP revealed. Like interview transcripts, all other data sources were exposed to the same scrutiny, with CCA determining similarity and difference. The outcome assisted with the identification of relevant data, confirmed themes and helped explain what was happening in the substantive area. The process was aided by the numbering of each line of data and the addition of a code to identify the data source and analysis outcome. This drew memos and raw data together and provided an audit trail to check and validate data sources, contributing to the emergent theory, which was checked by the supervision team. The process of analysis and generation of substantive theory is illustrated in Figure 1. The model depicts the levels of coding and processes inherent in each phase of substantive theory development used in this research.13,49

Figure 1. Model used for generating a substantive theory. 49

We facilitated the emergence of a substantive theory in accordance with Andersen et al.'s 49 five stages, in which data collection and analysis were undertaken concurrently:

Stage 1: Facilitated by the use of CCA, interviews and other sources of data (including literature) were open-coded every time theoretical sampling (data collection) occurred.

Stage 2: Initial codes were used to form questions and to direct theoretical sampling for subsequent data collection. The initial data were integrated with new data. Sorting codes resulted in an emergent set of substantive codes.

Stages 3 & 4: Coding and re-sorting of data continued using CCA. This led to the development of theoretical codes and eventually confirmation of codes, categories and concepts from which the basic social process emerged.

Stage 5: The ‘6 Cs’ coding family 18 was applied to determine the relationships between categories and concepts and explain what was happening in the substantive area.

In keeping with GGT, member checking was employed to ensure the rigour and validity of the theory.44,50 The validation of a constructed substantive theory is the bedrock of high-quality qualitative research. The hallmarks of this are that it can be applied in the real world, where it ‘fits, works, is relevant and modifiable’. 39 (p236)

Three participants previously interviewed who were representative of education and on-road practice were involved in appraising the PAP substantive theory. They confirmed categories and concepts within the model explaining the assessment of students and reported that this aligned with their perception of the assessment process. “…we do move backwards and forwards as the model shows because there needs to be a gateway to come back to if something is not clear…[it]is what we do” MC1:3. “I am a driving instructor and paramedic and I use this type of process to see if students are competent…This model could work in a lot of different industries that have a one-on-one instruction” MC1:5.

Results

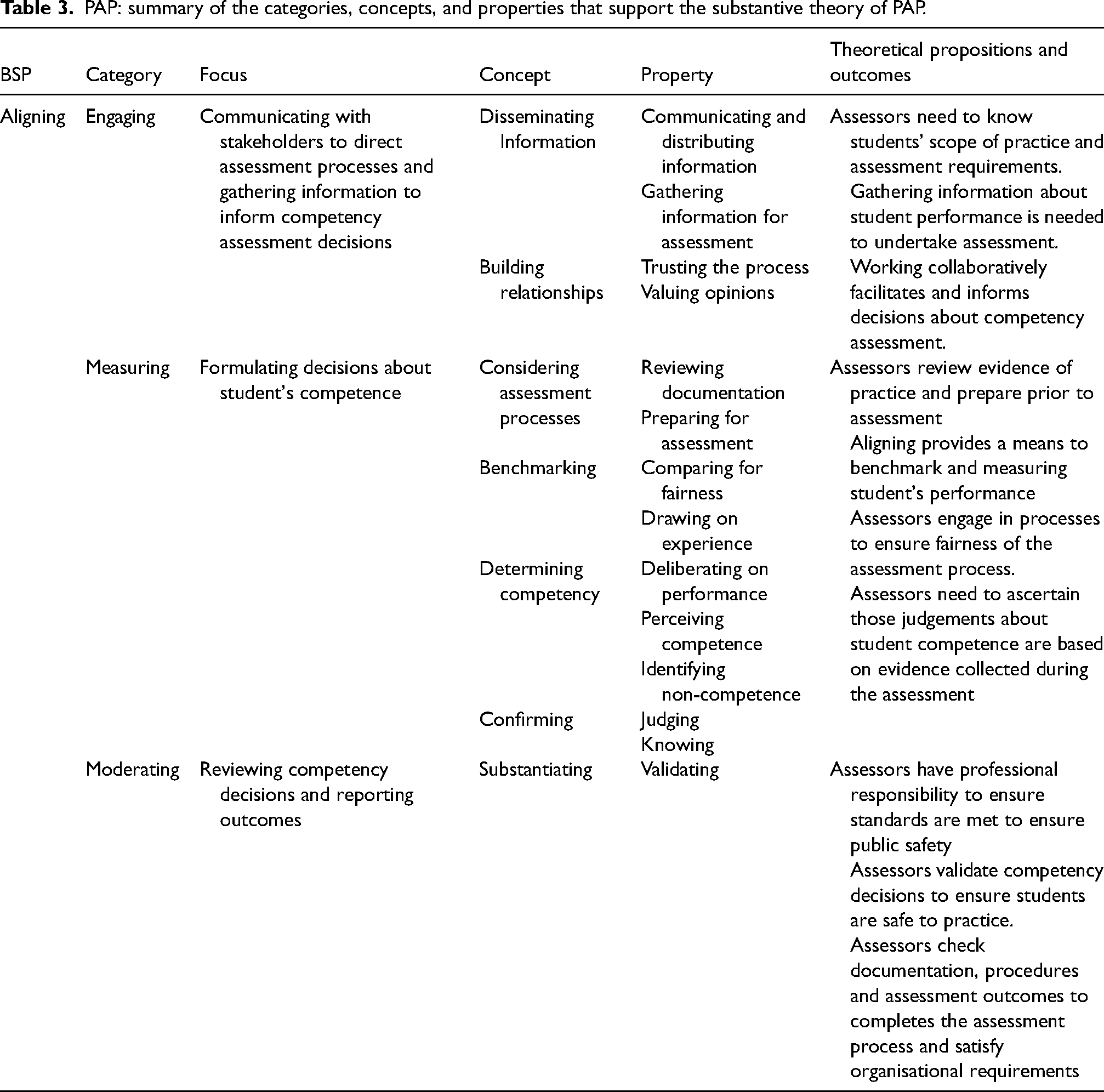

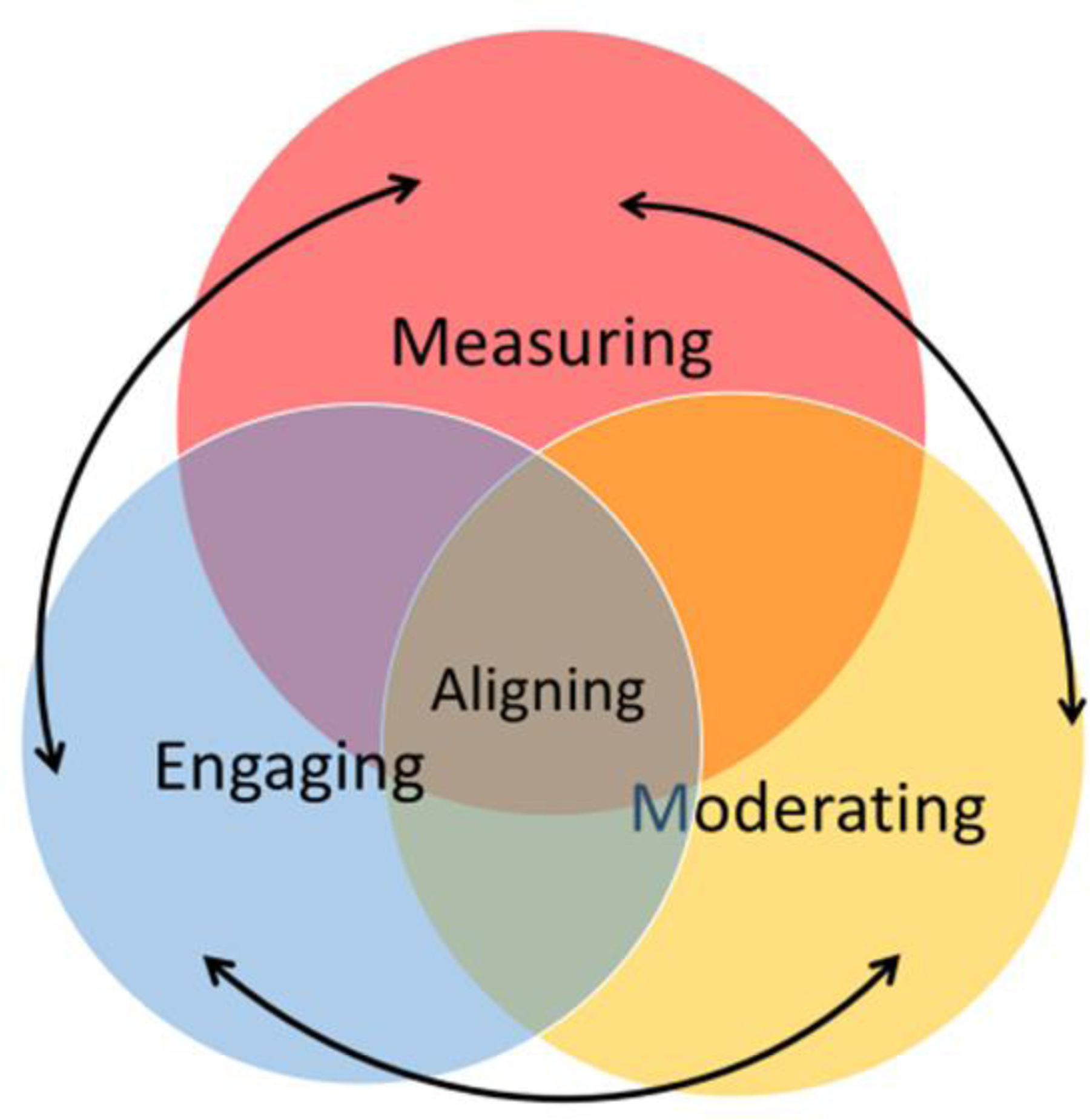

Three categories emerged from the analysis. These were engaging, measuring, and moderating. Interaction between these confirmed the presence of the BSP ‘aligning’ and associated concepts and properties inherent in the substantive theory. This was named the Paramedic Assessment Process (PAP). Table 3 summarises the concepts and properties embedded within the categories and the relationship of these to the BSP and theoretical propositions. This is used as a framework to present the PAP substantive theory.

Table 3. PAP: summary of the categories, concepts, and properties that support the substantive theory of PAP.

Engaging

Engaging is the process assessors undertake when gathering information in preparation for assessment. ‘Disseminating information’ and ‘building relationships’ are the concepts that drive engaging. Disseminating information assists assessors to obtain knowledge about how students are to be assessed. Assessors disclosed that they had little knowledge of curriculum, student scope of practice, and assessment processes, tools, and criteria. Difficulties were compounded by assessors supervising students from different universities. “It's hard to know where the individual [is] at when we don't even know what they're allowed to do at a particular time in their university degree” 6:185:1.

The concept of disseminating information involves publishing and circulating documentation, resources, and information to ensure assessors understand what is required. Our study found that there were conflicting opinions about the adequacy of dissemination processes. Some participants were impressed with the level of dissemination of information they received from the university. “…the university provides ample documentation to assess their students as they come through” 3:47:1.

Other assessors were critical, claiming further education was required about how the handbook was to be used, and on professional expectations of student practice, as some paramedics were unsure about the procedure of assessing students. “I believe that the documents for student assessment have always been foggy, and hard to use” 7:33:3.

The concept of building relationships instils trust and contributes to having opinions valued. Generating a collaborative assessment process is believed to inform and strengthen decisions about competency assessment. 51 Assessors expressed a need to have trust in the assessment process and feel that their opinions are valued. Some university staff found it challenging to build relationships with ambulance services. “…managing industry [ambulance managers] and dealing with industry [ambulance services] when trying to maintain a working relationship is a challenge” 1:132:1.

While some university staff found relationships with the ambulance service difficult to navigate, relationship concerns were also expressed by on-road paramedics who questioned the application of the principles of a fair and reliable assessment process. This occurred when assessment expectations did not align with industry values. 52 In these circumstances, confidence in the university education system was undermined, leading to a loss of faith and trust in the assessment process: “…the assessment criteria, it's not fair, it's not valid and it's not reliable… they're failing all the principles of assessment” 7:148:1.

Participants from both ambulance services and universities claimed that they should value each other's opinions in relation to the assessment of students. This, however, was not always practised, as evidenced in criticism of the grading practice and situations where students’ grades were changed. “…I've heard from the university saying we get these reports back from on-road paramedics and they are not accurate and do not add value” 3:102:1.

The devaluing of the on-road paramedic's ability to assess was reported to have impacted morale and motivation in relation to the assessment of students on clinical placement, resulting in some paramedics stating that they were not taken seriously and as a result were not interested in supervising and assessing students. “On his mentoring report … I recommended he not carry through… they still put him through. So, what's the point?” 3:233:1.

On-road assessors reported that the undervaluing of their professional judgement resulted in staff avoiding taking responsibility for and engaging in assessment of students. This disengagement was noticed by some educators, who expressed it as: “… assessors don't give a rat's and don’t care about the student's assessment…” 6:120:1.

These issues highlight the tension between industry and education and give rise to questions generally about assessment practice, reliability of assessment results and implications for patient safety.

Measuring

The second category in the PAP is measuring. This is a process that assessors engage in during the assessment of students. Measuring describes the practical components of ‘considering the assessment process’, the cognitive process of ‘benchmarking’, and ‘determining competency’. Here assessors review evidence of student practice and prepare for the assessment. In doing so, they familiarise themselves with marking guides, assessment guidelines, and the intended learning outcomes.

Not knowing the competency standards and the level of expected knowledge, skills and scope of student practice was considered to adversely impact the ability to successfully engage in considering performance and the assessment process. The length of students’ placement and the amount of time available to accurately assess students also influenced assessment. The acute and unpredictable nature of paramedic practice was a primary issue. Depending on the severity of call outs, students’ engagement may be minimal, with students allocated observing roles rather than being actively involved in case management. Inability to observe students’ practice had a significant impact on gathering evidence and on assessors being able to formulate perceptions of competence.1,34 Situations like this were common and often resulted in assessors not knowing whether students were competent or not. “Sometimes you just don’t know what they can and can’t do” 6:46:1.

These circumstances impacted the amount of time needed to undertake the assessment process and had the potential to influence the assessment outcome if assessors were unclear.

Benchmarking is central to measuring competence. 13 A benchmark is a point of reference that provides paramedic assessors with a position from which they can align and measure student practice53,54 and is akin to ‘frames of reference’ described in medical assessment. 55 Participants in our study explained their process of benchmarking as: “…essentially as a paramedic you need to develop your own mental checklist…[they] will be slightly different in everybody's brain” 4:96:7.

“Intuitively I know when someone is exhibiting leadership or someone is exhibiting good communication, critical communication, or when someone has made good decisions. This is how I benchmark competency” 4:21:1.

The assessors benchmarked by engaging with cognitive cues, reflecting on students’ practice, and aligning outcomes with benchmarks that they believed were evidence of competent practice.54,56 These may be evidence-based or a reflection of how they thought students should practise. The issue here is that when the assessors drew on their own beliefs and values and ‘my way’ became the ‘prescribed way’, there was a risk that incorrect measures would result in inaccurate assessment outcomes if assessors’ individual benchmarks and beliefs about competent practice did not conform with the competency standards.

When determining competency and analysing assessment data that reflects performance, the assessors considered the finer points of practice to make decisions about competence. The assessors’ perceptions of what constitutes competence, or non-competence, influenced this aspect of the assessment and confirmed the award of a pass or fail result. To do this, they searched for specific examples that provided evidence to support their decision.35,36,57 If a student's performance resulted in harming the patient, themselves, or others, this was deemed a critical error. Critical error is defined as an action that may increase the morbidity or mortality of the patient. 58 When determining competency, patient safety was a primary benchmark used by assessors: “We tell them it's a critical fail if you do that because it's safety, not only for you but for your patients” 2:213:1.

If the assessors identify critical errors during the assessment, the result is a fail or non-competent grade. The absence of critical errors confirms safety and a pass.58,59

The process of ‘confirming’ in the PAP involves considering outcomes arising from ‘benchmarking’ and ‘determining competency’. Evidence is interpreted and a professional judgement is made as to whether the student meets competency expectations or not. Participants expressed concerns that when they assessed students who were on clinical placement for short periods, it was impossible to assimilate all knowledge, skills and attitudes that were required to be competent in the little time that they had working alongside them: “… in that narrow timeframe you sort of think through the job and make a confirmation on well, yeah, did the student make or not make competency” 4:19:1.

Participants deemed that ultimately, the assessors needed to know that the student was safe to practice: “Obviously you know … you just want to know they are safe” 5:45:3.

They revealed that there were times when they did not know if students were safe or not. In these circumstances, they reported that they discounted criteria 60 and chose to overlook competencies and/or behaviours they did not feel comfortable addressing, or when they had little or no evidence to support the award of a pass. In these situations students were given the benefit of the doubt, with the assessor ticking a box indicating achievement. Feeling guilty about failing a student or worrying about making the right decision also led to this practice.13,49 This raises questions about reliability and validity of the results, and dishonest assessment practices. “The issues that I have with any of the assessments, with any of the uni students or uni grads is the dishonesty in the marking of their assessments” 6:16:1.

In situations where students did not meet performance requirements, some assessors revealed that they found it difficult to fail a student whom they had befriended as a mentor. Similar situations are reported in other health professions where assessor and student relationships were found to be an influencing factor in student assessment outcomes.61–63 “And if you know them already, like some of the students we work with its hard to make decisions on competency” 3:24:1.

“…you are nice because you don’t want to break him…” 7:142:1.

Assessment concerns like this are reported in other disciplines.13,49 Participants reported that while it made no sense to fail a student who had little control of the practice environment, or what might present on-road, fabricating assessment resulted in some students passing when they should not. The concept of failure to fail61,64 was a serious concern, which participants considered a risk to professional standing and the public.

Moderating

Moderating is the third phase of the competency assessment process. Moderating describes the post-assessment procedure of checking and validating the decisions made about student competence to ensure that results are fair, equitable, align with the educational and professional expectations of paramedicine, and that students are safe to practice. Participants acknowledged the unpredictability of the work environment and the impact this could have on assessment outcomes. Another influence was personal opinions and workplace gossip about students. Participants thought prejudice could arise as a result of personality differences, knowledge of a student's personal circumstances and assessors discussing students’ performance.13,59,65 The outcome may influence judgements regarding fitness for practice. “Some people get treated differently than others in the assessment, and it's not fair” 7:246:4.

The participants believed assessment should be an objective and fair process,64,65 and were acutely aware of issues surrounding the adverse impact a fail would have on student progression.61,64 This was a driver for assessor actions in the process of moderating. Another confounding issue was errors. It was not uncommon for moderators to detect errors or mistakes in competency assessment documentation. 13 “… you are finding there are some discrepancies in results” 5:47:1.

These circumstances highlight the need for inter-rater reliability.57,66,67 Reflecting this, moderator activity shifts to validating results by ‘substantiating’ and ‘finalising’. Substantiating is the first step in moderating. Here, assessors often discussed results with colleagues and checked that their perceptions of competence and resulting professional judgement were accurate. 54 “…I think the discussion on results is an essential part of it [moderating]” 2:61:2.

If there were concerns surrounding students’ performance, discussions were generally held with other members of the team who had worked with the student over the period of their clinical experience to gather further evidence. According to Saul, 68 people need to justify their opinions and actions; ‘substantiating’ assists assessors to arrive at a point of rational deduction that instils confidence in decision making.

The concluding stage of moderating is ‘finalising’. Finalising explains the activities employed by assessors to complete the assessment and confirm the results. The properties of finalising are ‘completing’ and ‘satisfying’. In completing, the assessors from universities and ambulance services expressed a feeling of success and accomplishment as they finalised the assessment of the final year undergraduate paramedic student. Participants expressed the emotional journey of satisfying and finalising the students’ competency. “I had him for four blocks I think, and it was a pleasure to watch him develop” 3:103:1.

Another assessor supported this notion by stating: “In some students you really see a lot of growth; it was a pleasure and I used to ring them all up and praise them” 2:304:6.

Participants expressed that it was satisfying to witness the development of students, and they felt proud of their involvement in the teaching and assessment of students and being a part of their professional development.

Basic social process (BSP) – aligning

The conceptual categories in the PAP are inextricably linked, allowing the assessor to move back and forth between processes using ‘aligning’ to resolve assessment decision challenges.

Figure 2 depicts the interconnectedness of the components of the PAP substantive theory and the BSP of aligning which is central. The arrows illustrate how an assessor moves from process to process as they navigate competency decision-making. This may require assessors to backtrack. For example, in order to measure practice and make a competency decision, an assessor may find they need to return to engaging to gather further evidence.

Figure 2. Interconnectedness of components of the PAP substantive theory.

Aligning was found to be the BSP that accounted for the most variation in the data and explained how, in the absence of knowledge and understanding of the professional competency standards, paramedic assessors determined practice competence. Aligning is associated with processes inherent in comparative analysis13,69 and in this study, it is defined by assessors matching and comparing actions (feelings, behaviours, beliefs, attitudes). Aligning provided a means to compare student practice with standards and professionally accepted ways of doing, to match similarities and identify differences to determine sameness and confirm perceptions of competence or non-competence.1,34,70 Aligning was found to facilitate robust assessment processes; however, in some cases, misalignment occurred that threatened the integrity of the assessment process. Misalignment can result when students who have not achieved competency are given a pass result by assessors. 69 Assessors who do not align to the assessment processes can neglect to fail students who do not meet competency requirements.

Discussion

As a substantive theory, the PAP has implications for the assessment of competence generally as the profession moves to apply practice standards across the service. The PAP highlights situations that raise concern about assessment practices that may compromise the rigour and validity of assessment outcomes,53,54,59 and exposes the delicate balance between the participants’ views and expectations surrounding safe practice, competency assessment practices, the environment in which assessment takes place, and the impact that the realities of practice have on assessment outcomes.1,34 The PAP contributes to paramedic professional knowledge by providing insights into current practices and increasing understanding of how students are assessed, which may provide direction and inform policy and procedure development.

While many paramedics are competent assessors, the substantive theory challenges general assumptions that all paramedics understand the professional competency standards and are able to conduct trustworthy, reliable assessment. In doing so, it exposes workforce issues71,72 which impact on the assessor's ability to complete fair, accurate and trustworthy competency assessment. It gives a voice to paramedics who acknowledge the need for professional development in the area of competency assessment and calls for careful selection and preparation of competency assessors. Further, the theory identifies tension points which, if addressed, could streamline assessment processes and facilitate consistent outcomes. Importantly, it highlights the need for closer working relationships between university and industry. Its contribution is important not only for reliable assessment of students, 19 but also for the assessment of competency generally as the profession moves to apply practice standards across the service.

Reflecting on the outcome of this enquiry, the authors believe that the assessment approaches utilised in the field conflict with one another. This is reflective of the varying types of assessment and grading systems in use. Some are underpinned by positivist principles where competency assessment is purely summative. Here assessors aim to be objective, unbiased and value neutral, and take the position that personal feelings about student performance should not intrude on the interpretation of data and assessment outcomes. 73 Others employ post-positivist ideals, accepting that the assessors’ individual ideas about professional practice influence what they observe and will impact what they conclude is, or is not, competent practice. 74 Opposing views may contribute to confusion surrounding competency assessment expectations and process. While the aim in summative assessment is to be objective, the authors acknowledge that professional judgement is complex, and ‘while tools identify sources of evidence that may be useful in informing decisions, they do not address issues related to how educators use these to inform decisions, and how they know that practice is competent’. 13 (p39), 75 Further, confusion and misinterpretation of the terminology surrounding the notion of competence in medical education arise if competence is assumed to be a descriptive concept rather than a normative concept, and to refer to a thing or an activity rather than a quality or state of being. 76

To address these tensions, the authors hold the view that there is a place for middle ground where a combination of philosophical positions could be considered. This includes recognising the contribution of a constructivist approach77,78 where formative assessment is incorporated to improve the quality of student learning. Formative assessment provides students with feedback on performance and the opportunity to apply knowledge, address learning needs and ultimately improve performance; its inclusion is a crucial aspect of the assessment process.

It is acknowledged that the dissemination of information and preparation for the use of the competency assessment framework have been problematic in some areas of paramedic practice, and that on-road paramedic staff have been confused and have demonstrated a lack of understanding about the intent of the standards and expectations regarding implementation of competency in practice. 79 Specifically, there is a lack of education and guidance for universities in Australia and New Zealand.80,81

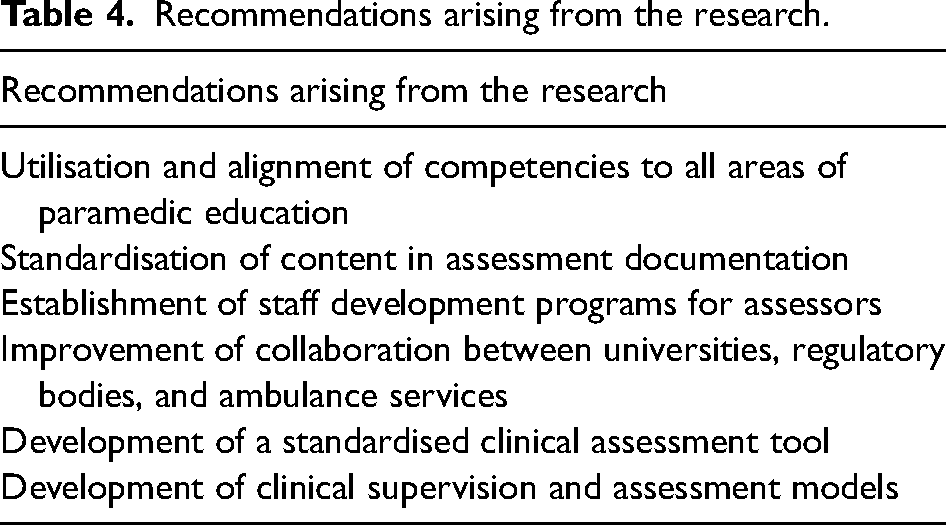

While the PAP brings understanding to what is happening in the field in relation to assessment of paramedic students’ practice, significant work is still needed to address the inconsistencies and issues identified in this publication, and there is a broader need for cooperation in research and in practice to ensure the validity and authenticity of competence-based education and assessment internationally. Consistency in assessment is of particular importance in paramedicine, where the competence of graduates and public safety are primary concerns. 79 To address this, the recommendations arising from this enquiry are detailed in Table 4.

Table 4. Recommendations arising from the research.

Limitations of the study

Since data was collected for this enquiry, new competency standards with paramedic capabilities have been released in conjunction with new accreditation requirements from AHPRA. 2 While it could be argued that these circumstances undermine the relevance of the PAP substantive theory, the focus of this enquiry was not specifically on the standards, but rather on how paramedics assessed student competence in the absence of understanding of the competency framework and assessment requirements. We acknowledge that this theory is representative of the experience, knowledge, beliefs, and values of paramedic assessors who chose to participate in this research at that time and, as a substantive theory, holds relevance to Australia and New Zealand only. Further, our beliefs about, and experience in, education have, through the processes inherent in conceptualisation, been incorporated in the development of this substantive theory. It is important to note that while the PAP substantive theory may provide direction and inform policy and procedure development, it is untested, and further research is required to determine whether it holds relevance in the broader context in Australia and New Zealand and internationally.

Conclusion

The substantive theory of PAP emerged through the application of a Glaserian grounded theory approach. The PAP describes and explains the process that academics and on-road paramedics undertake to assess the practice competence of paramedic students. The categories of engaging, measuring and moderating explain the concepts and properties inherent in the assessment process. The BSP of aligning accounts for the most variation in the data by explaining the central behaviour or actions used by assessors to manage the competency assessment of the final year paramedic student in the absence of knowing and/or understanding the competency framework and expectations of student practice. The limited research on paramedic education was a key driver for this research. Extending the understanding of how students’ practice competency is assessed has benefits for universities, ambulance services and ultimately patient safety.

Footnotes

Acknowledgements

The authors wish to thank Professor Stephen Gough ASM, Chair of the Paramedicine Board of Australia.

Authors’ contributions

PA and MC wrote the manuscript; ACS designed and conducted the research and provided the data. All authors reviewed the final manuscript.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by KJ McPherson Education and Research Foundation.