Abstract

Background

Mindfulness research and clinical programs are widespread, and it is important that mindfulness-based interventions are delivered with fidelity, or as intended, across settings. The Mindfulness-Based Intervention Teacher Assessment Criteria (MBI:TAC) is a comprehensive system for assessing teacher competence, yet it can be complex to implement. A standardized, simple fidelity/engagement tool to assess treatment delivery is needed.

Objective

We describe the development, evaluation, and outcomes of a brief, practical tool for assessing fidelity and engagement in online mindfulness-based programs. The tool contains questions about session elements such as meditation guidance and group discussion, and questions about participant engagement and technology-based barriers to engagement.

Methods

The fidelity rating tool was developed and tested in OPTIMUM (Optimizing Pain Treatment in Medical Settings Using Mindfulness), a 3-site pragmatic randomized trial of group medical visits and adapted mindfulness-based stress reduction, delivered online, for primary care patients with chronic low back pain. Two trained study personnel independently rated 26 recorded OPTIMUM sessions to determine the inter-rater reliability of the Concise Fidelity for Mindfulness-Based Interventions (CoFi-MBI) tool. Trained raters also completed the CoFi-MBI for 105 sessions. Raters provided qualitative data via optional open-text fields within the tool.

Results

Inter-rater agreement was 77-100% for the presence of key session components and 69-88% for Likert ratings of participant engagement and technology-related challenges; discrepancies occurred only between the 2 adjacent categories ‘very much’ and ‘quite a bit’. Key session components occurred as intended in 94-100% of the 105 sessions, and participant engagement was rated as ‘very much’ or ‘quite a bit’ in 95% of sessions. Qualitative analysis of rater comments revealed themes related to engagement challenges and technology failures.

Conclusion

The CoFi-MBI provides a practical way to assess basic adherence to the online delivery of mindfulness session elements, participant engagement, and the extent of technology obstacles. Optional text comments can guide strategies to improve engagement and reduce technology barriers.

Keywords

Introduction

There is a growing evidence base for Mindfulness-Based Interventions (MBIs) across a wide variety of conditions, including depression,1 neuropathic pain,2 and headache,3 and contexts such as sports performance4 and job burnout.5 Mindfulness has shown particular effectiveness in patients with chronic low back pain (cLBP), with reductions in both pain intensity and frequency.6-9 Mindfulness has a low rate of adverse events compared with pharmacological pain treatments10 and can be delivered in a variety of ways (in person, online, or via telephone). Mindfulness is now recommended in clinical guidelines11 as a first-line nonpharmacologic treatment for chronic low back pain.

As the research evidence base for MBIs has expanded, there is growing interest in evaluating whether MBIs can be successfully delivered in clinical contexts. When interventions are delivered to patients, both the quality of the intervention and adherence to the protocol can vary greatly across settings (e.g., clinical trial vs. busy medical practice). Differences in implementation can affect both research and clinical outcomes and make it difficult to compare results across studies and programs. The extent to which an intervention is delivered and implemented as intended or per protocol is known as fidelity.12 Fidelity assessment is an important quality control method for ensuring that the data collected reflect intervention delivery that was true to the treatment protocol and consistent across sites. Fidelity data may also help explain differences in outcome measures across sites.

Assessing fidelity in MBI trials and programs can be challenging, particularly for multi-site MBIs delivered online. MBIs are generally multi-component programs that include meditation, informal mindfulness experiences, discussion, exploration of one’s stress and habit patterns, and encouragement of home meditation practice. Considerable teacher experience and skill are required, and group process is important for growth and learning to take place. MBIs typically follow an adaptable curriculum that provides structure but is flexible and responsive to the needs of the group.

The Mindfulness-Based Intervention Teacher Assessment Criteria (MBI:TAC) is a comprehensive training and evaluation system for assessing MBI teacher competence and intervention fidelity.13-16 The MBI:TAC can be used to assess key features of MBI teaching that allow group learning to take place in a safe and accepting environment. MBI:TAC key features include important teacher capabilities such as the teacher’s own embodiment of mindfulness and their ability to flexibly adapt the curriculum and to bring forth important course themes through discussion. Thus, the MBI:TAC system can assess instructor skills within the multi-component, group learning environment of an MBI.

Research and clinical programs that use the MBI:TAC for fidelity assessment can have strong confidence in intervention integrity and teacher expertise. However, assessing teacher expertise on key features of MBIs is challenging. The MBI:TAC requires that the rater/observer have significant experience with MBIs (for example, as an experienced MBI teacher or as a supervisor or trainer of MBI teachers) and be trained to conduct MBI:TAC ratings. While the MBI:TAC is a comprehensive, carefully constructed, and validated system for evaluating MBI teacher competence, practical considerations may be barriers to its widespread use. For example, budget constraints may preclude hiring trained MBI:TAC assessors, and the time required to complete MBI:TAC ratings may be burdensome in some settings.

Recently, guidelines based on behavior change theory have recommended a comprehensive approach to MBI fidelity and quality assurance that extends beyond assessment of teaching to include documentation of interventionist training, delivery, receipt by participants, and enactment of skills in daily life settings.17 However, comprehensive assessment is not always feasible, particularly in pragmatic trials or programs focused on implementation in clinical settings. A fidelity tool that can succinctly document basic elements of MBI delivery and participant engagement is needed.

This paper describes a novel, brief MBI fidelity assessment tool developed and used in a multi-site research study called OPTIMUM (Optimizing Pain Treatment in Medical Settings Using Mindfulness).18 The OPTIMUM study is an ongoing 3-site pragmatic randomized controlled trial that will test the effectiveness of an MBI program that combines mindfulness with group medical visits for chronic low back pain in primary care. Pragmatic randomized controlled trials test interventions under real-world conditions, allowing more variability than efficacy trials. In preparation for wide-scale dissemination and implementation of mindfulness interventions like OPTIMUM in real-world clinical contexts, ensuring intervention fidelity is critical to maintaining the effectiveness of the program. A practical, easy-to-use, and cost-effective tool to rate intervention fidelity was therefore needed for OPTIMUM. The investigators and research staff recognized that a brief rating tool would not provide a comprehensive assessment of teacher competence such as that obtained when rating fidelity with the MBI:TAC system. Supporting teaching quality and integrity was a valued intention of the trial, but assessment of teacher competence was not included directly in the brief fidelity tool.

In the OPTIMUM study, several methods are used to assure the quality of teaching and participant uptake of the intervention, in addition to ratings on our newly developed fidelity tool. Regarding instructor expertise, the teachers have 13 to 17 years of experience teaching Mindfulness-Based Stress Reduction (MBSR), the program on which the OPTIMUM mindfulness intervention is based. Teachers met weekly with investigators during the initial phases of the study and quarterly thereafter. The teachers complete self-reflections after sessions using an MBI:TAC summary of key features of teaching,13,19 noting teaching strengths and learning edges (room for improvement) in areas such as embodiment of mindfulness, relational skills, and holding the group learning environment. These reflections are discussed during teacher meetings but are not evaluated by research study staff. In OPTIMUM, participants’ perspectives on intervention quality and uptake are highly valued. Participants are invited to complete a post-course semi-structured interview that covers feedback, perceived helpfulness of the program, overall satisfaction, and reasons for maintaining or discontinuing their participation. The teacher reflections and participant post-intervention interviews provide ongoing quality assurance in OPTIMUM. However, a more structured and quantifiable approach to fidelity assessment was also needed. Therefore, the research team developed and evaluated the Concise Fidelity for Mindfulness-Based Interventions (CoFi-MBI).

The main purpose of this paper is to describe the development, evaluation, and outcomes of a pragmatic MBI fidelity instrument, the CoFi-MBI. The CoFi-MBI was developed as a cost-effective, short, accessible tool to rate the presence or absence of important elements of an MBSR session as well as participant engagement. Unlike the MBI:TAC system, the CoFi-MBI does not require the rater to have extensive knowledge of MBIs or extensive training in MBI assessment. The CoFi-MBI captures basic fidelity of delivery of intervention elements, participant engagement, and the extent to which technology challenges (eg, in online delivery) interfered with engagement. We describe the methods used to develop the CoFi-MBI, rater training, and the inter-rater reliability of the CoFi-MBI. We report quantitative and qualitative CoFi-MBI results from 105 sessions of the ongoing OPTIMUM pragmatic randomized controlled trial.

Methods

Overview

Mindfulness intervention fidelity, including adherence to intervention elements and participant engagement, is evaluated in the OPTIMUM study, a 3-site pragmatic trial that compares a group MBI with usual care for primary care patients with cLBP. The intervention (group mindfulness + medical visits) is delivered entirely online via a HIPAA-compliant version of the Zoom (https://zoom.us) platform. The OPTIMUM study is being conducted in a diverse set of health care settings in Boston, MA; Pittsburgh, PA; and Chapel Hill, NC. The OPTIMUM intervention includes eight 2-hour sessions that generally follow the 2017 MBSR Authorized Curriculum Guide©, adapted to include brief (approximately 5-minute) private appointments with the primary care provider (PCP) via the Zoom breakout room function during 1 of the check-in/discussion periods at each session. Typically, the individual breakout room sessions with the PCP occur during the initial 30 minutes of each session, when group members connect about their week and their mindfulness practice. Any new content and meditation instruction take place during the following 90-minute portion of the session. For OPTIMUM, we adapted the mindful movement to use chair yoga stretches rather than lying and standing yoga sequences, and we did not include a retreat day.7,18 OPTIMUM (NCT04129450) was approved by the University of Pittsburgh single Institutional Review Board, and all participants completed informed consent procedures and provided verbal consent for participation.

Approach to Fidelity in OPTIMUM

Concise Fidelity for Mindfulness-Based Interventions (CoFi-MBI) rating form.

Concise Fidelity-MBI Development

In developing the CoFi-MBI, research team members with expertise in MBIs and medical group visits aimed to balance comprehensiveness and practicality. Informed by literature on key MBI features,20 and using an iterative, discussion-based, consensus decision-making approach, the team created a fidelity tool that was (1) simple enough to minimize burden on fidelity raters, (2) practical for use by raters without extensive background in MBIs, and (3) sufficiently nuanced to assess participant engagement and technological barriers as well as basic session elements.

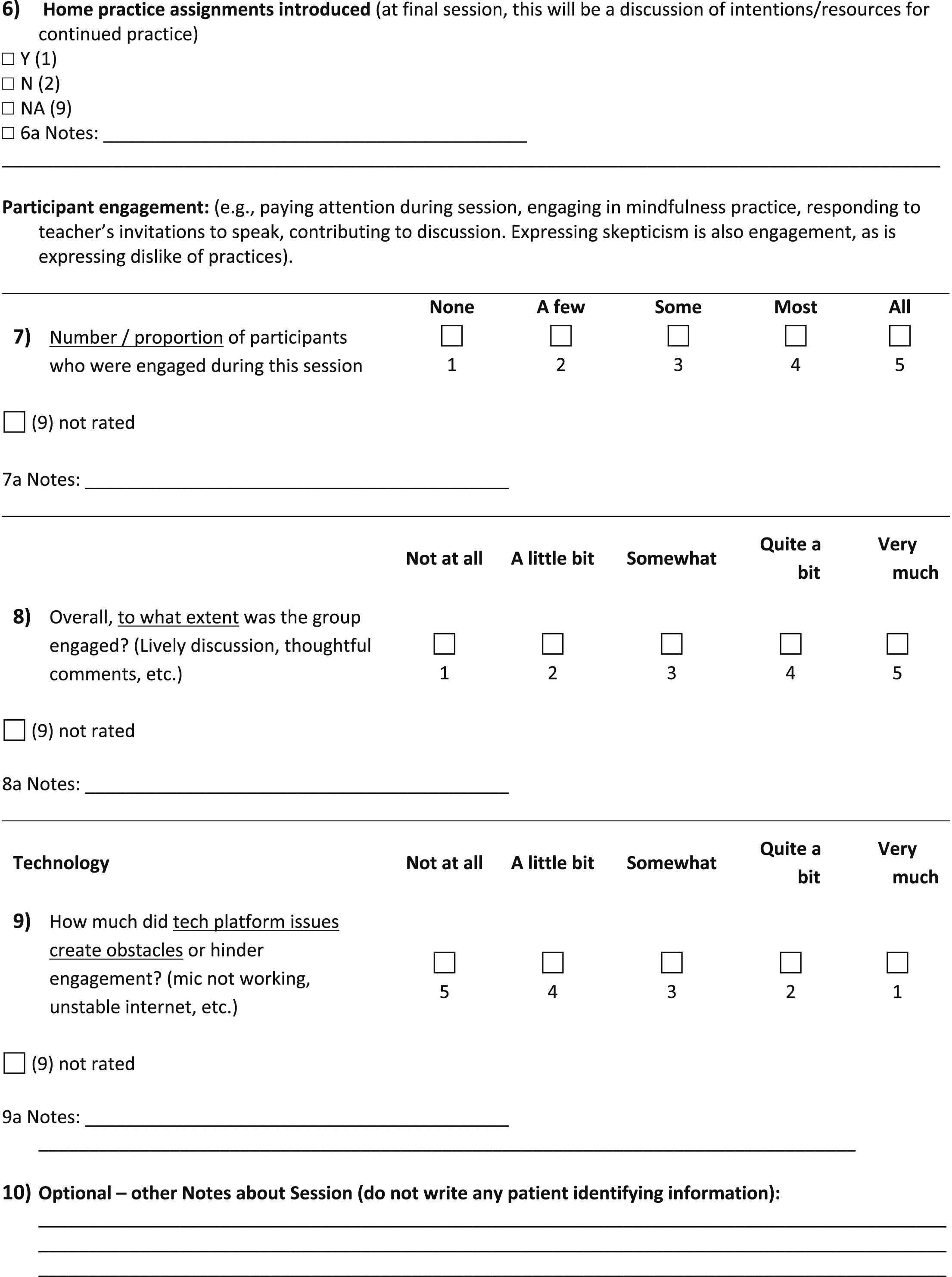

The CoFi-MBI includes 9 rated items and 10 optional comment fields. In addition to rater and session logistics information, the tool covers 3 rating domains: Session Content Elements, Participant Engagement, and Technology Obstacles. Session logistics include the date of the session, site, rater, instructor, cohort number, and session number. Session Content Elements, each rated for presence (yes, no, not applicable/not rated), include welcoming participants, meditation guidance and practice, discussion, breakout sessions with the PCP, including the PCP in discussions, and instructions for home practice. ‘Not applicable/not rated’ is used, for example, when the rater was not present to observe part of a session or when the PCP was unable to be present for discussions. Participant engagement is rated with 2 questions, on the proportion of participants who were engaged and on the overall extent of participant engagement, each on a 5-point Likert scale ranging from none/not at all to all/very much. Technology obstacles are assessed with a single Likert-scale question about the extent to which technology obstacles hindered participants’ engagement. An optional ‘comments’ field follows each CoFi-MBI item so that the rater can note their observations. Raters may comment on a range of subjects, such as technical difficulties, attendance numbers, participant engagement, and qualifiers or rationale for the rating.
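To make the item structure concrete, the following is a minimal sketch of how a single CoFi-MBI rating could be stored electronically. All class, field, and key names are hypothetical; the middle Likert anchors are not listed here, so Likert responses are coded as integers 1-5 with only the endpoints named. Treating rated items as required and comments as optional, and assigning the tenth comment field to a general comment, are assumptions of this sketch rather than features of the published form.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Dict


class Presence(Enum):
    """Response options for a Session Content Element."""
    YES = "yes"
    NO = "no"
    NOT_APPLICABLE = "not applicable/not rated"


# Hypothetical keys for the six Session Content Elements named in the text.
CONTENT_ELEMENTS = (
    "welcome",
    "meditation_guidance_and_practice",
    "discussion",
    "pcp_breakout",
    "pcp_included_in_discussion",
    "home_practice_instructions",
)

# Hypothetical keys for the three Likert items, coded 1-5
# (1 = none/not at all ... 5 = all/very much).
LIKERT_ITEMS = (
    "proportion_engaged",
    "extent_of_engagement",
    "technology_hindered_engagement",
)

# One optional comment per rated item plus, by assumption, one general comment.
COMMENT_KEYS = CONTENT_ELEMENTS + LIKERT_ITEMS + ("general",)


@dataclass
class CoFiRecord:
    # Session logistics
    date: str
    site: str
    rater: str
    instructor: str
    cohort: int
    session: int
    # Rated items
    content: Dict[str, Presence]
    likert: Dict[str, int]
    # Optional free-text comments keyed by item
    comments: Dict[str, str] = field(default_factory=dict)

    def __post_init__(self) -> None:
        # In this sketch every rated item must be answered; comments stay optional.
        if set(self.content) != set(CONTENT_ELEMENTS):
            raise ValueError("every Session Content Element must be rated")
        if set(self.likert) != set(LIKERT_ITEMS):
            raise ValueError("every Likert item must be rated")
        for name, value in self.likert.items():
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5")
        unknown = set(self.comments) - set(COMMENT_KEYS)
        if unknown:
            raise ValueError(f"unknown comment fields: {sorted(unknown)}")

    def rated_item_count(self) -> int:
        """Number of rated (non-comment) items on the form."""
        return len(self.content) + len(self.likert)
```

Storing one record per session in a flat structure like this is what makes the per-session percentages and two-rater comparisons described later straightforward to compute.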

Procedures

Fidelity tool training and inter-rater reliability

Research associates and coordinators at each of the 3 OPTIMUM study sites attended two 1-hour online training meetings to learn how to accurately complete the CoFi-MBI. The meetings were recorded for future use by new team members, and ongoing supervision and consultation were provided as needed by the CoFi-MBI developers (GAD, CMG, SL). All CoFi-MBI raters were familiar with MBIs, having either participated in OPTIMUM courses as research staff or previously attended MBSR courses.

Because the CoFi-MBI is a new instrument for assessing MBI fidelity, it is important to determine whether different raters can complete it reliably. To assess the inter-rater reliability of the CoFi-MBI, 2 trained research assistant raters (RR, MM) independently viewed and rated a set of recorded OPTIMUM sessions from the 3 sites. The 2 raters were familiar with the program but were not mindfulness instructors. The raters first completed training with the CoFi-MBI developers (GAD, CMG, SL); each then rated the same recorded session, keeping a notebook of questions and comments, and the 2 raters discussed their ratings to resolve any discrepancies. Following this first session rating, a second review and training session with the CoFi-MBI developers took place to discuss decision-making about rating participant engagement and the extent of technological challenges. This additional training session was recorded for future use, and additional written guidelines were created for raters. Next, the 2 raters independently rated the remaining recorded sessions, and their ratings were provided to the study statistician (JMW) for inter-rater reliability analysis.

General CoFi-MBI training

A larger pool of fidelity raters, consisting of student research assistants, research staff, and study coordinators at the 3 sites, was also trained to complete the CoFi-MBI. These raters also completed two 1-hour trainings and received ongoing supervision and consultation as needed. They were integral members of the research team who regularly participated in OPTIMUM sessions as participant-observers and technology troubleshooters. In OPTIMUM, at least 1 research staff member typically attends the online sessions to provide any needed administrative support to the mindfulness instructor and the PCP, so it is feasible to collect fidelity ratings during or immediately after sessions.

CoFi-MBI electronic data collection procedures

Once CoFi-MBI development and training were complete, research staff at each of the 3 sites aimed to complete CoFi-MBI ratings for every intervention session. The CoFi-MBI can be completed on paper; however, in the OPTIMUM study it is generally completed using Qualtrics XM survey software (https://www.qualtrics.com). The survey is formatted so that sessions can be rated on any device with an internet connection (eg, mobile phones, tablets, desktop computers). Electronic data collection conveniently consolidates data for centralized access and use by team members. Although no protected health information about OPTIMUM participants is collected on the CoFi-MBI, the Qualtrics survey is password-protected, and the link and password are provided only to OPTIMUM staff at the 3 sites to restrict data entry to study personnel.

CoFi-MBI inter-rater reliability data analysis plan

When mathematically possible, inter-rater reliability was estimated with the kappa coefficient for binary responses and the weighted kappa for Likert-type responses, each with 95% confidence limits. A sample of no fewer than 20, and preferably 25-50, rated cases is needed to estimate confidence intervals around the kappa estimate.21 Percent agreement is also presented. McNemar’s test was used to test for systematic disagreement between raters. All analyses were performed in SAS v9.4, with α = .05 as the threshold for statistical significance.
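As a minimal sketch of how the agreement statistics named above are computed for 2 raters, the pure-Python functions below implement percent agreement, unweighted and weighted kappa, and McNemar's test. The study's analyses were run in SAS v9.4, and this is not the authors' code. Assumptions: ‘not applicable/not rated’ pairs are dropped before analysis; the weighted kappa uses linear weights, which to our understanding correspond to the Cicchetti-Allison weights that SAS PROC FREQ applies by default; McNemar's statistic is computed without continuity correction; and confidence limits are omitted.

```python
import math
from collections import Counter
from typing import Hashable, Optional, Sequence, Tuple


def percent_agreement(a: Sequence[Hashable], b: Sequence[Hashable]) -> float:
    """Percentage of paired cases on which both raters gave the same response."""
    if not a or len(a) != len(b):
        raise ValueError("ratings must be non-empty and paired")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def kappa(a: Sequence[Hashable], b: Sequence[Hashable],
          categories: Sequence[Hashable], weighted: bool = False) -> Optional[float]:
    """Two-rater kappa.

    weighted=False: unweighted kappa (only exact matches count as agreement).
    weighted=True: linear weights 1 - |i - j| / (k - 1) over the ordered
    `categories`, so adjacent Likert responses earn partial credit.
    Returns None when kappa is undefined, i.e. chance-expected agreement is 1
    (for example, both raters answered "yes" for every session).
    """
    n, k = len(a), len(categories)
    if n == 0 or len(b) != n:
        raise ValueError("ratings must be non-empty and paired")
    pos = {c: i for i, c in enumerate(categories)}

    def weight(i: int, j: int) -> float:
        if not weighted or k == 1:
            return 1.0 if i == j else 0.0
        return 1.0 - abs(i - j) / (k - 1)

    rows = Counter(pos[x] for x in a)  # rater A marginal counts
    cols = Counter(pos[y] for y in b)  # rater B marginal counts
    observed = sum(weight(pos[x], pos[y]) for x, y in zip(a, b)) / n
    expected = sum(weight(i, j) * rows[i] * cols[j]
                   for i in range(k) for j in range(k)) / n ** 2
    if math.isclose(expected, 1.0):
        return None
    return (observed - expected) / (1.0 - expected)


def mcnemar(a: Sequence[Hashable], b: Sequence[Hashable],
            positive: Hashable = "yes",
            negative: Hashable = "no") -> Optional[Tuple[float, float]]:
    """Uncorrected McNemar chi-square (1 df) and p-value for a binary item.

    Only discordant pairs enter the statistic. Returns None when there are
    no discordant pairs, because the statistic is then undefined.
    """
    n_pn = sum(x == positive and y == negative for x, y in zip(a, b))
    n_np = sum(x == negative and y == positive for x, y in zip(a, b))
    if n_pn + n_np == 0:
        return None
    stat = (n_pn - n_np) ** 2 / (n_pn + n_np)
    p_value = math.erfc(math.sqrt(stat / 2))  # upper tail of chi-square, 1 df
    return stat, p_value
```

For example, if both raters answered ‘yes’ for a content element in every session, expected agreement is 1 and `kappa` returns `None` even though percent agreement is 100%; this is one situation in which agreement can be reported but kappa cannot be calculated.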

CoFi-MBI quantitative reporting and qualitative data analysis plan

For the CoFi-MBI ratings collected across OPTIMUM sessions in general, we report the percentage of sessions in which each Session Content Element was present and the percentage of sessions receiving each Participant Engagement and Technology Obstacles rating.
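The session-level percentages described above involve one decision that is easy to leave implicit: how sessions marked ‘not applicable/not rated’ are counted. The sketch below, which uses hypothetical field names and invented records rather than OPTIMUM data, assumes such sessions are excluded from the denominator and assumes a coding of 4 = ‘quite a bit’ and 5 = ‘very much’ for the top two Likert categories.

```python
from typing import Mapping, Optional, Sequence

NOT_RATED = "not applicable/not rated"


def percent_present(sessions: Sequence[Mapping], element: str) -> Optional[float]:
    """Percentage of sessions in which a content element was marked 'yes'.

    Assumption: sessions marked 'not applicable/not rated' for this element
    are left out of the denominator. Returns None if no session was rated.
    """
    rated = [s[element] for s in sessions if s[element] != NOT_RATED]
    if not rated:
        return None
    return 100.0 * sum(r == "yes" for r in rated) / len(rated)


def percent_at_or_above(sessions: Sequence[Mapping], item: str,
                        threshold: int = 4) -> float:
    """Percentage of sessions whose 1-5 rating on `item` is >= threshold.

    With the assumed coding 4 = 'quite a bit' and 5 = 'very much', the
    default threshold counts the top two categories.
    """
    if not sessions:
        raise ValueError("no sessions to summarize")
    return 100.0 * sum(s[item] >= threshold for s in sessions) / len(sessions)


# Invented example records with hypothetical keys; these are not OPTIMUM data.
sessions = [
    {"pcp_breakout": "yes", "extent_of_engagement": 5},
    {"pcp_breakout": "yes", "extent_of_engagement": 4},
    {"pcp_breakout": "no", "extent_of_engagement": 3},
    {"pcp_breakout": NOT_RATED, "extent_of_engagement": 5},
]
print(percent_present(sessions, "pcp_breakout"))
print(percent_at_or_above(sessions, "extent_of_engagement"))
```

If the ‘not applicable/not rated’ session were instead kept in the denominator, the example element would come out at 50% rather than about 67%, so a report should state which convention it uses.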

The optional comment fields of the CoFi-MBI provide qualitative data that explain ratings and may inform strategies for improving engagement or addressing technology issues. These data were coded using thematic content analysis by a research assistant (GAD), and the codes and quotes were reviewed by an experienced qualitative researcher (IR). Codes were then revised and grouped into themes. Data were managed using Microsoft Excel.

Results

Participants

To date, 199 people with chronic low back pain have enrolled in the OPTIMUM study. Of those enrolled, 140 (70%) are female; 87 (44%) are Black, 91 (46%) are White, and 8 (4%) are more than 1 race; and 8 (4%) are Hispanic or Latino. The mean age of participants is 53.3 years (SD 14.0, range 18-84). Participants were randomly assigned to receive either the 8-week OPTIMUM MBI (group mindfulness sessions + PCP visit) or their usual primary care treatment. The OPTIMUM intervention has been delivered to a total of 14 cohorts across the 3 study sites, each including between 4 and 13 intervention participants.

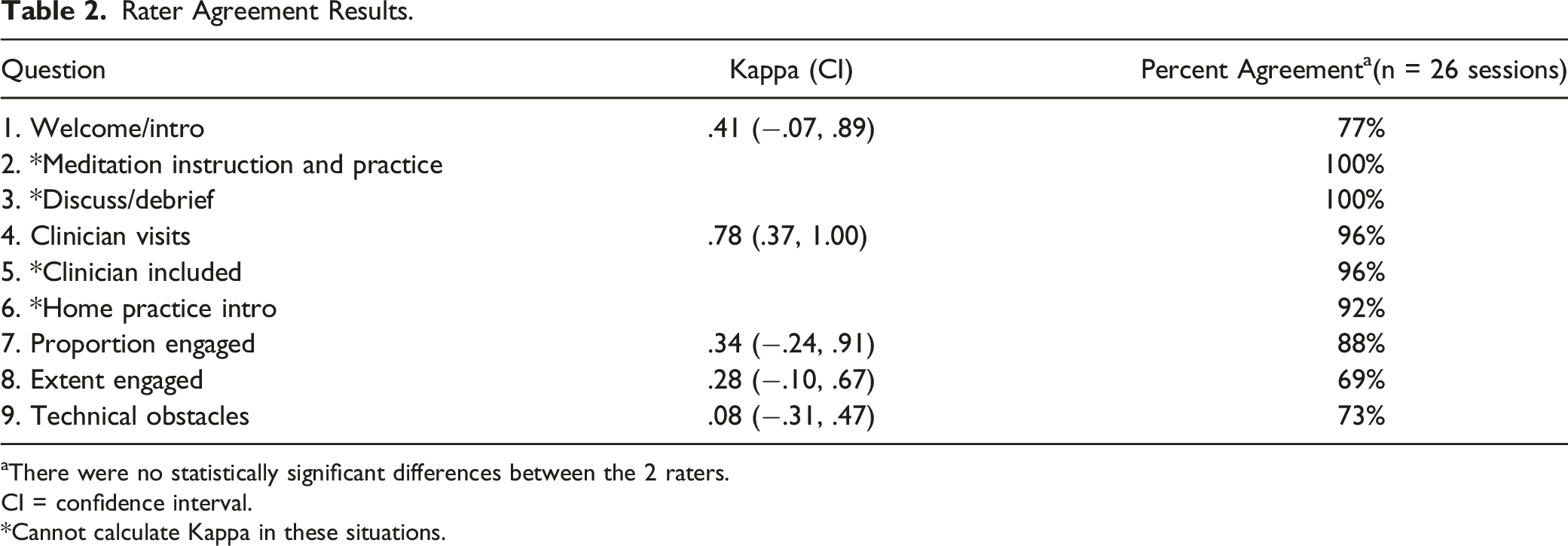

CoFi-MBI Inter-Rater Agreement

Rater Agreement Results.

a There were no statistically significant differences between the 2 raters.

CI = confidence interval.

*Cannot calculate kappa in these situations.

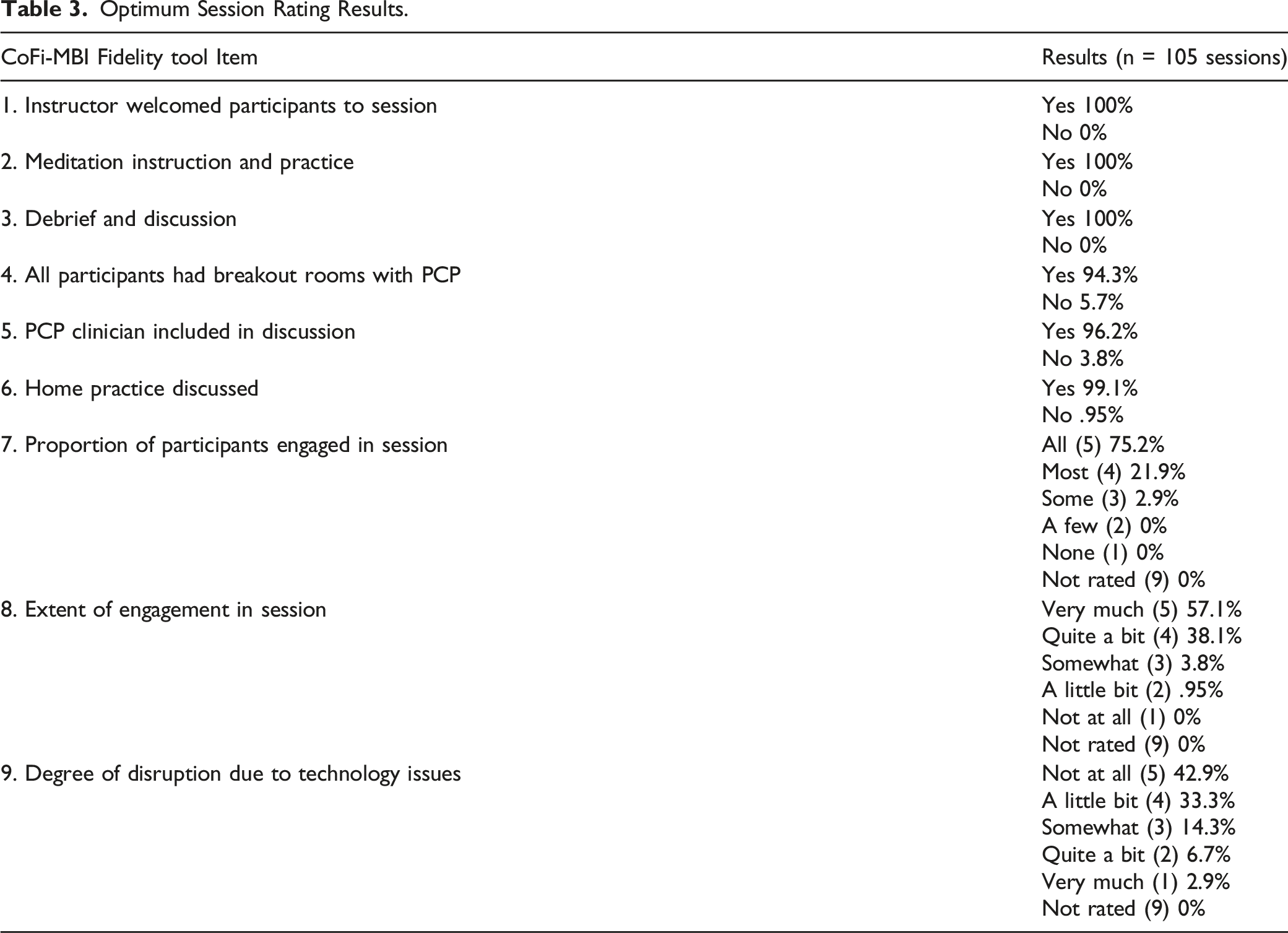

CoFi-MBI Overall Session Rating Results

OPTIMUM Session Rating Results.

CoFi-MBI Comment Field Results

Qualitative Results.

Discussion

The CoFi-MBI was developed as a brief and practical MBI fidelity tool to document session elements and participant engagement in pragmatic MBI research studies, especially those delivered online rather than in person. The CoFi-MBI is potentially scalable for use in busy primary care clinical settings, as it can be completed in 1-2 minutes by a rater who is present at the session or views a recording. We found that raters with moderate familiarity with MBIs could be trained on the CoFi-MBI in approximately 2 hours, and inter-rater agreement on the individual CoFi-MBI questions about session elements and participant engagement was generally good to excellent. Across the 105 rated OPTIMUM sessions, important elements were present in nearly all sessions (94-100%), and participant engagement was high. The CoFi-MBI also rates technology-based challenges in online MBI delivery; such challenges interfered with participant engagement in approximately one-quarter of the rated OPTIMUM sessions. Because the OPTIMUM study is ongoing, we are not yet able to link CoFi-MBI fidelity ratings with study outcomes.

The CoFi-MBI and the MBI:TAC are 2 fidelity assessment tools that each offer different strengths to the field of MBIs. In comparison to the MBI:TAC, the CoFi-MBI has strengths in the areas of (1) brevity and simplicity, (2) minimal training time for raters, (3) minimal time and effort required for completion, (4) inclusion of participant engagement ratings, and (5) quantitative and qualitative evaluation of technology challenges influencing participant engagement. However, it is important to acknowledge that the CoFi-MBI is not a comprehensive system for evaluating teacher competence, and teacher expertise is essential for any MBI. A major strength of the MBI:TAC as an assessment system is its focus on these essential areas of teacher expertise and competence. Rather than simply rating the presence or absence of MBI session elements, the MBI:TAC evaluates profound and complex teacher characteristics such as embodiment of mindfulness and the ability to foster a group learning environment safely and successfully. This richness and depth are MBI:TAC strengths that are offset to some extent by the requirement that raters have significant expertise in MBIs and extensive training in how to use the MBI:TAC. In choosing a fidelity assessment tool, MBI program developers and researchers will need to balance the potentially competing needs for depth of teacher competence evaluation and ease of use.

The fidelity rating tool developed for OPTIMUM is practical, simple to use, and minimally burdensome for research staff raters with limited meditation and mindfulness experience. The CoFi-MBI can be used to document elements of an MBI, assess participant engagement in sessions, and address the novel and important area of technology challenges during online MBI delivery. The shared online rating system allows raters to provide systematic, practical feedback. When applied in a multi-site trial, this simple fidelity tool has the potential to inform the research team about barriers to and facilitators of engagement and about any site differences in MBI implementation.

There are several limitations of this MBI fidelity investigation. First, although the CoFi-MBI is easy to use, its short completion time assumes that the rater is present at the session. In the OPTIMUM study, a coordinator or research assistant participates in each session or assists with technology challenges, so fidelity ratings are completed with minimal additional time and effort. However, in a setting in which the meditation instructor and PCP have no administrative help, a rater would need time to view a recording in addition to the 1-2 minutes required to complete the CoFi-MBI.

Regarding the reliability of the CoFi-MBI, we found that despite careful training and re-training, inter-rater agreement between our 2 raters was suboptimal for some questions, particularly those requiring subjective judgments. For example, despite training and additional written guidance and examples, inter-rater reliability was low for the questions about the extent to which technology challenges hindered engagement and the extent of participant engagement, because 1 rater would choose ‘very much’ and the other ‘quite a bit.’ We acknowledge that the distinction between ratings such as ‘very much’ and ‘quite a bit’ (eg, for engagement) is subjective and perhaps of limited importance. One strategy going forward is to provide ongoing training and oversight, particularly for new raters. Alternatively, adjacent categories could potentially be combined without loss of crucial information. In future studies, a 3-category rating with clear distinctions could be used rather than a 5-point rating scale.
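To illustrate why combining adjacent categories raises agreement, the sketch below collapses a 5-point scale into 3 bands and compares percent agreement before and after. The 1-5 coding (4 = ‘quite a bit’, 5 = ‘very much’), the band boundaries, and the ratings are all invented for illustration; in the invented data, every disagreement is between the top 2 categories, mirroring the pattern described above.

```python
from typing import Dict, List, Sequence

# Hypothetical 5-to-3 collapse of a 1-5 scale (1 = not at all ... 5 = very much).
# The band boundaries are an illustrative assumption, not a validated scheme.
COLLAPSE: Dict[int, str] = {1: "low", 2: "low", 3: "moderate", 4: "high", 5: "high"}


def percent_agreement(a: Sequence, b: Sequence) -> float:
    """Percentage of paired ratings that match exactly."""
    if not a or len(a) != len(b):
        raise ValueError("ratings must be non-empty and paired")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def collapse(ratings: Sequence[int]) -> List[str]:
    """Map each 5-point rating onto its 3-category band."""
    return [COLLAPSE[r] for r in ratings]


# Invented ratings for 8 sessions; every disagreement is 4 vs 5.
rater_1 = [5, 5, 4, 5, 4, 3, 5, 4]
rater_2 = [4, 5, 5, 5, 4, 3, 4, 4]

five_point = percent_agreement(rater_1, rater_2)
three_band = percent_agreement(collapse(rater_1), collapse(rater_2))
print(f"5-point agreement: {five_point:.1f}%")
print(f"3-category agreement: {three_band:.1f}%")
```

The gain comes entirely from discarding the 4-versus-5 distinction, and fewer categories also raise chance agreement, so chance-corrected statistics such as kappa should be recomputed on the collapsed scale rather than compared directly with 5-point values.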

An additional limitation of the CoFi-MBI is that it does not include a nuanced assessment of teacher competency in delivering the elements and the essence of the MBI. The CoFi-MBI documents the presence of basic elements of an MBI; however, such elements may be delivered unskillfully (eg, poorly guided meditations), and this would likely not be reflected on the CoFi-MBI. Thus, the CoFi-MBI is best suited for use in programs with certified and experienced instructors rather than new teachers. Research or clinical programs with sufficient budget and time resources may decide to use the MBI:TAC, perhaps alongside the CoFi-MBI, to evaluate teacher competency and fidelity, particularly if they employ MBI instructors with limited experience. The MBI:TAC provides a comprehensive and rigorous assessment of teaching competency; however, it may not be feasible for most research studies, particularly pragmatic trials, or for implementation in busy clinical settings that lack funding for MBI:TAC-trained staff. In OPTIMUM, the instructors are experienced MBSR teachers who regularly participate in peer meetings. The OPTIMUM instructors employ the MBI:TAC in a novel way: completing self-reflection assessments using an MBI:TAC summary sheet. This innovative use of the MBI:TAC keeps instructors aware of MBI best practices for maintaining teaching integrity throughout the intervention without adding assessment burden to other research personnel.

Next steps for evaluating the reliability and validity of the CoFi-MBI include collecting individual participants’ ratings of their engagement in each session and having instructors assess group engagement and the proportion of participants engaged each week, using the same or similar rating scales as those in the CoFi-MBI. In the OPTIMUM pragmatic trial, participants complete post-intervention semi-structured interviews, and instructors complete self-reflections on their teaching, but these evaluations are not in a format that allows comparison with CoFi-MBI ratings. Adding weekly assessments of engagement would place undue burden on OPTIMUM and could detract from the felt experience of participants. However, in future investigations, integrating session-by-session ratings from participants and instructors would provide further evidence on the validity and reliability of the CoFi-MBI or similar fidelity rating tools, particularly in the understudied area of participant engagement in MBIs.

The CoFi-MBI is a useful tool for assessing and recording intervention fidelity, measuring participant engagement, logging technical difficulties with the intervention’s online platform (HIPAA-compliant Zoom), and gathering information about protocol adherence and implementation differences across sites, instructors, and cohorts. It is easily shared via a centralized online platform, allowing entries to be tracked and data to be consolidated across sites. The rater notes are particularly useful for identifying barriers to participants’ full engagement and reporting specific details about technology challenges. Through this shared resource, the 3 OPTIMUM sites have been able to come together to address challenges that might have remained hidden without the CoFi-MBI assessments, such as issues with breakout rooms and strategies for optimally including the PCP in sessions.

The CoFi-MBI is highly suitable for use by other research teams, particularly those conducting online, multi-site MBIs, because of its brevity, simplicity, and potential for centralized electronic data collection. The CoFi-MBI is provided in Table 1 and is also available upon request from the corresponding author. An advantage of the general format of the CoFi-MBI is that it can be easily adapted for other MBIs and other group interventions. For example, questions concerning PCP visits can be removed if they are not relevant, and other questions may be added as needed to meet the intervention delivery needs of a study. Because it was developed in the context of a pragmatic trial, the CoFi-MBI format and questions may be relevant and scalable for broad implementation in primary care or other clinical settings in which MBI fidelity assessments are needed.

Footnotes

Acknowledgments

The authors gratefully acknowledge the OPTIMUM participants, and OPTIMUM study research team members, mindfulness instructors, and primary care providers.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institutes of Health (NIH) through the NIH HEAL Initiative under award number UH3AT010621 from the National Center for Complementary and Integrative Health. This work also received logistical and technical support from the PRISM Resource Coordinating Center under award number U24AT010961 from the NIH through the NIH HEAL Initiative. The content is solely the responsibility of the authors and does not necessarily represent the official views of the NIH or its HEAL Initiative.